A Review of Hyperscanning and Its Use in Virtual Environments

Abstract

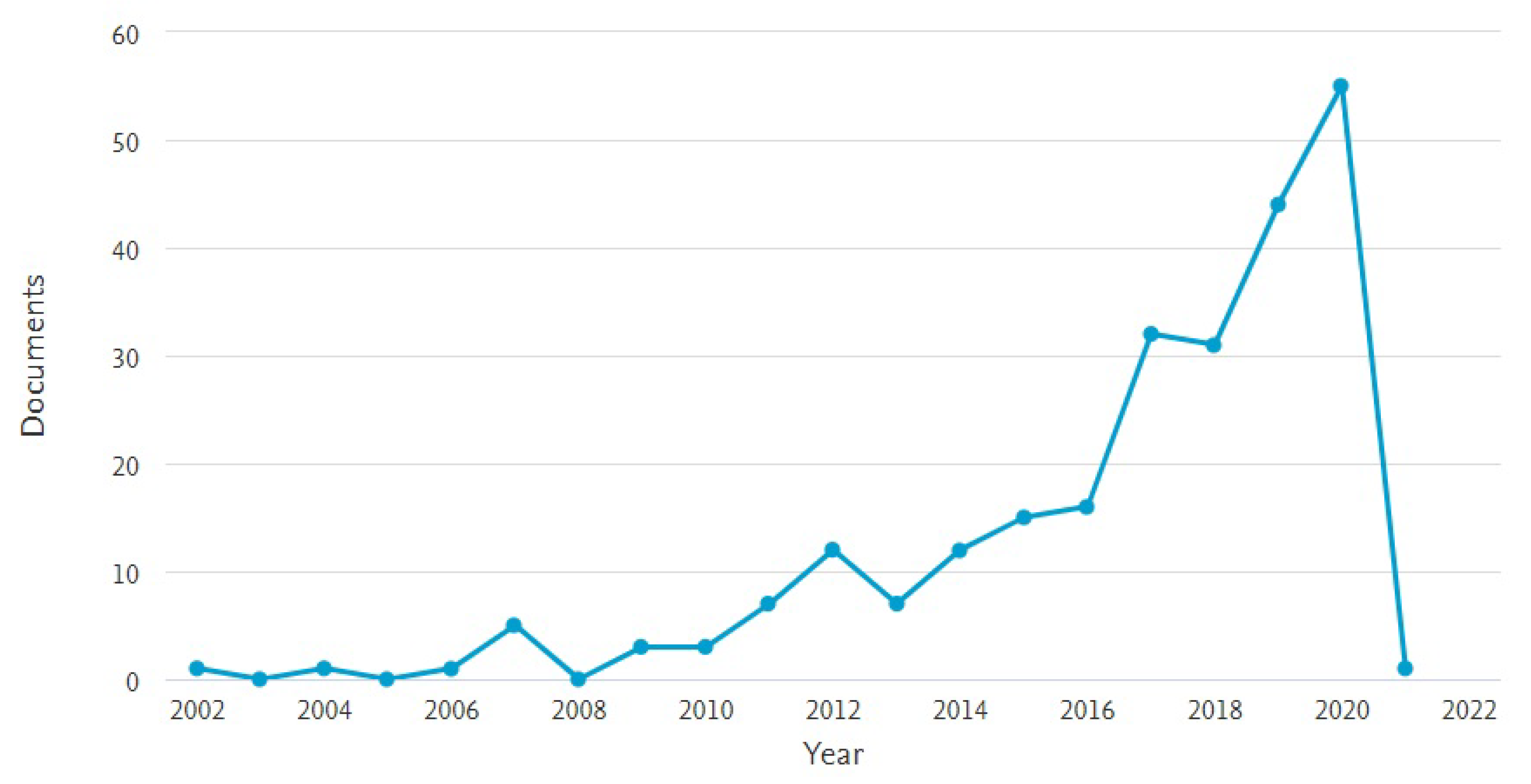

1. Introduction

2. Methodology

- Neuro-imaging methods: Electroencephalography (EEG), functional Near-Infrared Spectroscopy (fNIRS), and functional Magnetic Resonance Imaging (fMRI).

- Study Paradigms: Imitation tasks, co-ordination tasks, eye contact/gaze-based tasks, cooperation and competition tasks, and ecologically valid/natural scenarios. Each of these study paradigms is described in detail in Section 3.1.

3. Background

3.1. Study Paradigms

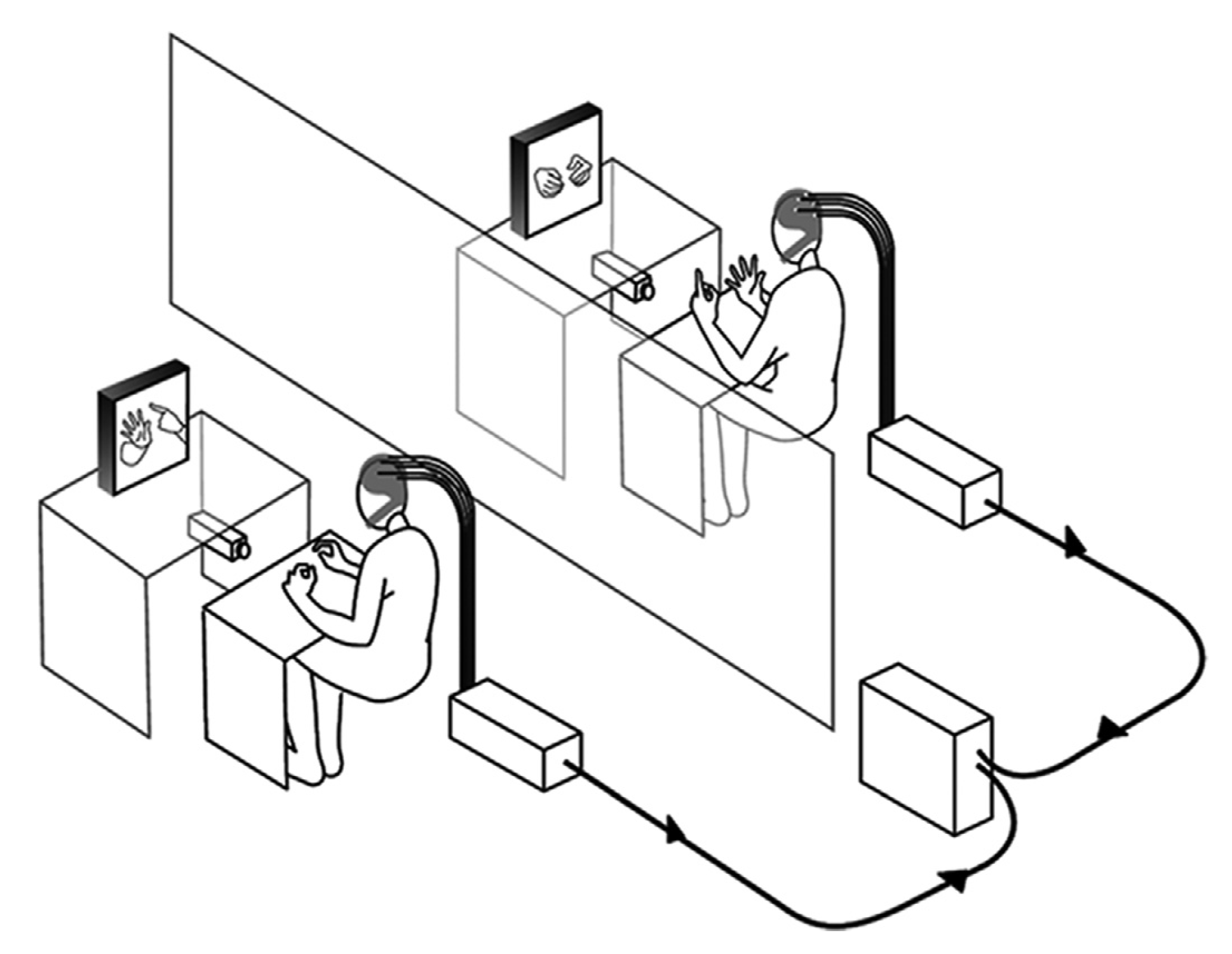

- Imitation tasks: These tasks require one participant to imitate the other’s movement or behaviour. They are designed to assess how movements or behaviours are “taken on board” by the participant attempting to imitate them. Results from studies using this paradigm suggest a clear correlation in neural activity, or inter-brain synchrony, between the person performing the task and the one imitating it [34], especially in cases where the imitation appears to be “mirrored” [33,34]. Participant pairs that do not perform well on such a task do not appear to exhibit the same level of inter-brain synchrony. Figure 2 shows an imitation task used by Delaherche et al. [34] to study inter-brain synchrony using the hyperscanning method.

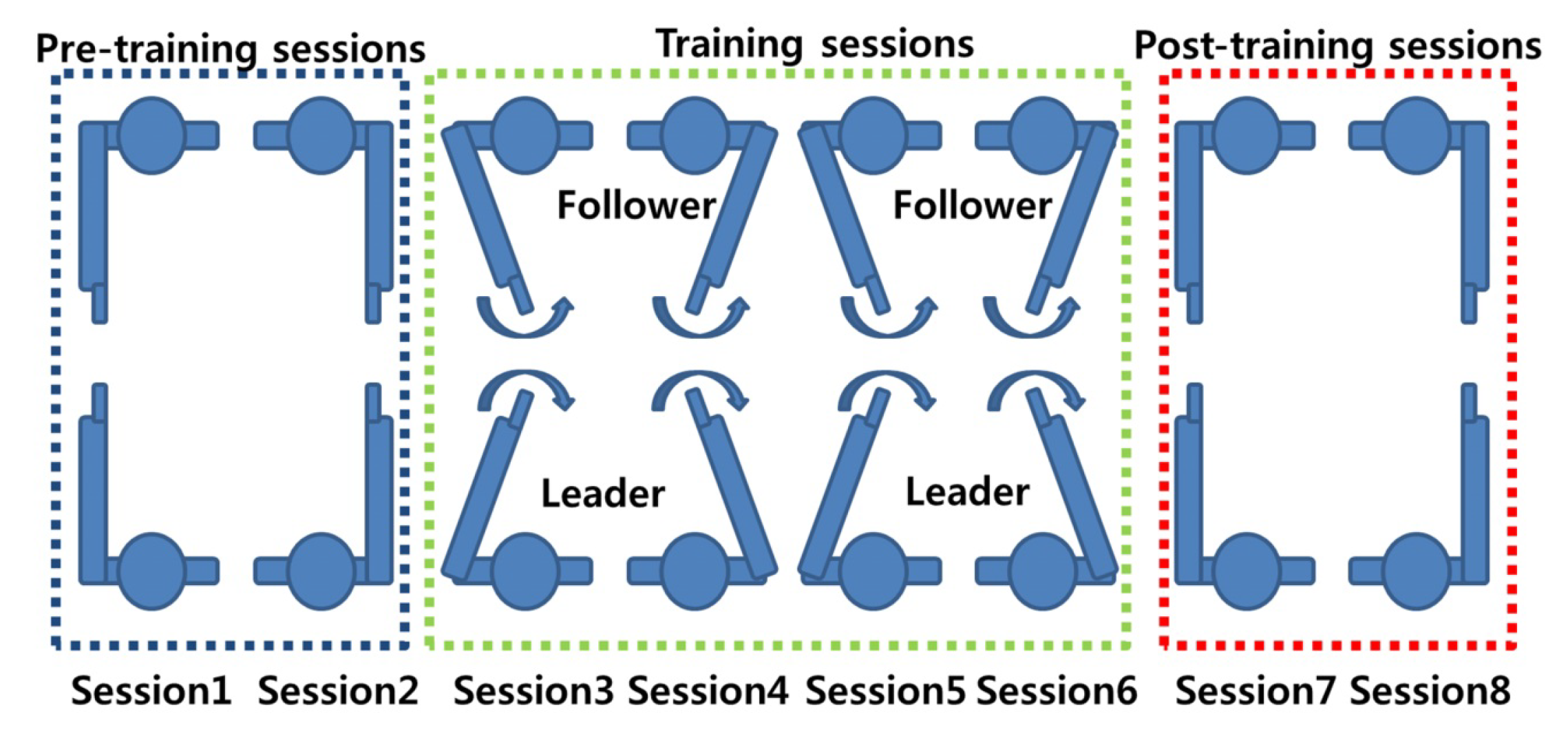

- Co-ordination tasks: Co-ordination tasks require participants to act in a synchronised manner. These tasks attempt to mimic the behavioural synchronisation that is commonly seen in daily life. For example, the footsteps of two people walking together may unconsciously fall into step, even though their intrinsic cycles are different [33]. It must be noted that in some of the studies cited in this paper, there is little difference between imitation and co-ordination tasks. For example, in the experiment of Yun et al. [33], both co-ordination and imitation are intrinsic parts of the experimental design (Figure 3). While only the results from the co-ordination tasks (Sessions 1, 2, 7, and 8) were analysed for inter-brain synchrony, it is the imitation task (training sessions/social interaction) that is said to help induce synchrony between the two brains.

- Eye contact/gaze-based tasks: Studies that have employed this experimental paradigm require participants to look at each other and/or follow the other participant’s gaze. Mutual gaze, or eye contact, offers critical cues for social interaction and communication. The information exchanged through eye contact offers an ideal basis for studying the neural mechanisms that underlie this behaviour via hyperscanning. Several studies have demonstrated that the extent of inter-brain synchrony between people can be gauged by studying mutual eye gaze exchanges [27,43,46]. Figure 4 shows a gaze-based task used by Saito et al. [43] to study the neural correlates of joint attention using linked fMRI scanners.

- Economic exchange tasks: Economic exchange tasks have also been used to study social interaction using the hyperscanning technique. These tasks generally revolve around one participant offering the other a portion of a known amount of “money”, which the other participant is free to accept or reject. Studies have demonstrated that offers considered “fair” or equitable are generally accompanied by a correlation in neural activity within participating dyads. This is especially true when the members of a dyad are able to view each other’s faces [48]. An interesting variation of the game has been demonstrated by Ciaramidaro et al. [42], who empirically demonstrate the existence of empathy between a “punisher” and a player in an economic exchange game involving a triad.

- Cooperation and competition tasks: Cooperation and competition form an intrinsic part of human life. Oftentimes, people need to work together to achieve a common goal, across all spheres of life. Similarly, we sometimes find ourselves competing against another person or several people in order to achieve a goal. Both these behaviours have been studied using hyperscanning in a bid to unearth the workings of social interactions. Studies using this paradigm have demonstrated that inter-brain synchrony is more likely to occur when participants are cooperating towards a common goal than when they are competing against each other [35]. Interestingly, the same work [35] also reports that cooperation in a “virtual environment” evokes a greater level of inter-brain synchrony than cooperation in the real world.

- Ecologically valid/natural scenarios: Of all the hyperscanning experimental paradigms, this is arguably the most interesting, as it places participants in real-world scenarios to study social interaction via neuroimaging techniques. EEG appears to be the neuroimaging technique most commonly used in these studies, possibly owing to advances in the quality and portability of EEG headsets and their relatively low cost. Decreasing costs have also allowed relatively large-scale studies to be conducted while still obtaining good-quality data, as demonstrated by Dikker et al. [37], who captured data from 12 EEG systems. Other researchers have employed this experimental paradigm in scenarios ranging from card game play [31] and music performance [50] to piloting an aircraft [36], as shown in Figure 5.

3.2. Data Acquisition and Analysis

- Objective Data: This refers to the neural recordings of participants made with any of the neuroimaging techniques described earlier.

- Subjective Data: This includes questionnaires, such as the Positive and Negative Affect Schedule (PANAS) [51] and the Self-Assessment Manikin (SAM) [52], that are administered as part of the study. These questionnaires provide the researcher with “emotion” and other subjective measures, as well as important information about the participant’s mental state during the activity.

3.2.1. Objective Data

- Partial Directed Coherence (PDC): PDC was introduced by [54] as a means to describe the relationship between multivariate time series data. The PDC from y to x is defined as:

  $$\mathrm{PDC}_{y \to x}(f) = \frac{A_{xy}(f)}{\sqrt{a_y^{*}(f)\, a_y(f)}}$$

  where $A_{xy}(f)$ is an element in $A(f)$, which is the Fourier Transform of the multivariate auto-regressive model coefficients, $A(t)$, of the time series; $a_y(f)$ is the $y$th column of $A(f)$, and $a_y^{*}(f)$ is its conjugate transpose. With this method, the relationship between the data sets is expressed as the direction of information flow between the brains. PDC is based on multivariate auto-regressive modelling and Granger Causality. The analysis technique appears to lend itself to studies where one person’s behaviour drives another’s.

- Phase Locking Value (PLV): PLV, as defined by [55], is:

  $$\mathrm{PLV}_{i,j}(t) = \frac{1}{N}\left|\sum_{n=1}^{N} e^{\mathrm{i}\left(\phi_i(t,n) - \phi_j(t,n)\right)}\right|$$

  where N represents the total number of epochs (a specified time window based on which data are segregated into equal parts for analysis), and $\phi_i(t,n)$ and $\phi_j(t,n)$ represent the phase of the signals for the electrodes i and j at time t in epoch n (the upright $\mathrm{i}$ in the exponent is the imaginary unit, not the electrode index). The phase difference between the electrodes is given by $\phi_i(t,n) - \phi_j(t,n)$. For the inter-brain synchrony analysis, the PLV value for each pair of electrodes i and j, where i belongs to one subject and j to the other, is computed. The value of the PLV measure varies between 0 and 1, with 1 indicating perfect inter-brain synchrony and 0 indicating no synchrony or phase-locking between the two signals.


- Amplitude and Power Relation: The most frequently used approach to studying inter-brain synchrony between socially interacting individuals has been to examine changes in EEG amplitude or power. The changes in amplitude and/or power are estimated from event-related changes, and a demonstrated co-variance of these markers is taken as a display of inter-brain synchrony. This is, however, a weak form of evidence for neural coupling among socially interacting individuals: while such co-variance is suggestive of inter-brain synchrony, it is by no means conclusive [53].
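To make the PLV and PDC measures described above more concrete, the following NumPy sketch shows one way they might be computed. It is an illustration under stated assumptions rather than the procedure of any reviewed study: the function names and array layouts are hypothetical, the phases are assumed to have been extracted already (for example, by band-pass filtering followed by a Hilbert transform), the auto-regressive coefficients are assumed to have been fitted elsewhere, and the PDC function uses the common convention of normalising the columns of I − A(f), which is an assumption about how the definition in [54] is usually stated.

```python
import numpy as np


def phase_locking_value(phase_i, phase_j):
    """PLV between two electrodes from per-epoch phases (in radians).

    phase_i, phase_j: arrays of shape (n_epochs,) or (n_epochs, n_times),
    one electrode from each participant. The average is taken over epochs.
    """
    diff = np.asarray(phase_i, dtype=float) - np.asarray(phase_j, dtype=float)
    return np.abs(np.mean(np.exp(1j * diff), axis=0))


def partial_directed_coherence(ar_coeffs, freqs):
    """Magnitude of PDC from already-fitted MVAR coefficients.

    ar_coeffs: array of shape (p, n, n); ar_coeffs[r - 1][x, y] is the
        influence of channel y at lag r on channel x (hypothetical layout).
    freqs: normalised frequencies in cycles per sample (0 to 0.5).
    Returns an array of shape (n_freqs, n, n); out[k, x, y] is |PDC y -> x|.
    """
    ar_coeffs = np.asarray(ar_coeffs, dtype=float)
    p, n, _ = ar_coeffs.shape
    lags = np.arange(1, p + 1)
    out = np.empty((len(freqs), n, n))
    for k, f in enumerate(freqs):
        # A(f): Fourier transform of the lagged coefficient matrices.
        a_f = np.tensordot(np.exp(-2j * np.pi * f * lags), ar_coeffs, axes=(0, 0))
        a_bar = np.eye(n) - a_f  # assumed convention: I - A(f)
        # Normalise each column y by its Euclidean norm.
        col_norm = np.sqrt(np.sum(np.abs(a_bar) ** 2, axis=0))
        out[k] = np.abs(a_bar) / col_norm
    return out


# Synthetic phases: a constant lag gives PLV close to 1,
# independent random phases give PLV close to 0.
rng = np.random.default_rng(0)
n_epochs = 2000
phase_a = rng.uniform(-np.pi, np.pi, n_epochs)
plv_locked = phase_locking_value(phase_a, phase_a + 0.5)
plv_random = phase_locking_value(phase_a, rng.uniform(-np.pi, np.pi, n_epochs))
print(plv_locked, plv_random)

# Toy one-lag model: x(t) = 0.5 x(t-1) + 0.4 y(t-1), y(t) = 0.5 y(t-1),
# so information flows from y to x but not from x to y.
coeffs = np.array([[[0.5, 0.4], [0.0, 0.5]]])
pdc = partial_directed_coherence(coeffs, np.linspace(0.0, 0.5, 6))
print(pdc[:, 0, 1])  # y -> x: non-zero
print(pdc[:, 1, 0])  # x -> y: zero
```

In a hyperscanning analysis, `phase_i` would come from an electrode on one participant and `phase_j` from an electrode on the other, and the function would be evaluated for every such inter-brain electrode pair. The toy PDC model illustrates the directional reading of the measure: only the driving channel produces a non-zero value.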

3.2.2. Subjective Data

4. The Case for Using Hyperscanning in Virtual Environments

5. Practical Implications for Using Hyperscanning in Virtual Environments

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

Glossary of Terms

| Abbreviation | Term |
| --- | --- |
| AR | Augmented Reality |
| BCI | Brain Computer Interface |
| CCorr | Circular Correlation Coefficient |
| CSCW | Computer Supported Collaborative Work |
| EEG | Electroencephalography |
| fMRI | Functional Magnetic Resonance Imaging |
| fNIRS | Functional Near-Infrared Spectroscopy |
| GSR | Galvanic Skin Response |
| HCI | Human-Computer Interaction |
| HMD | Head Mounted Display |
| HR | Heart Rate |
| KMI | Kraskov Mutual Information |
| MI | Motor Imagery |
| PANAS | Positive and Negative Affect Schedule |
| PDC | Partial Directed Coherence |
| PLI | Phase Locking Index |
| PLV | Phase Locking Value |
| SAM | Self-Assessment Manikin |
| SI | Synchronization Index |
| TF | Time-Frequency Analysis |
| VR | Virtual Reality |
| VE | Virtual Environment |
| WTC | Wavelet Transform Coherence |

References

- Brandão, T.; Matias, M.; Ferreira, T.; Vieira, J.; Schulz, M.S.; Matos, P.M. Attachment, emotion regulation, and well-being in couples: Intrapersonal and interpersonal associations. J. Personal. 2020, 88, 748–761. [Google Scholar] [CrossRef] [PubMed]

- Karreman, A.; Vingerhoets, A.J. Attachment and well-being: The mediating role of emotion regulation and resilience. Personal. Individ. Differ. 2012, 53, 821–826. [Google Scholar] [CrossRef]

- Caruana, N.; Mcarthur, G.; Woolgar, A.; Brock, J. Simulating social interactions for the experimental investigation of joint attention. Neurosci. Biobehav. Rev. 2017, 74, 115–125. [Google Scholar] [CrossRef] [PubMed]

- Mojzisch, A.; Schilbach, L.; Helmert, J.R.; Pannasch, S.; Velichkovsky, B.M.; Vogeley, K. The effects of self-involvement on attention, arousal, and facial expression during social interaction with virtual others: A psychophysiological study. Soc. Neurosci. 2006, 1, 184–195. [Google Scholar] [CrossRef] [PubMed]

- Innocenti, A.; De Stefani, E.; Bernardi, N.F.; Campione, G.C.; Gentilucci, M. Gaze direction and request gesture in social interactions. PLoS ONE 2012, 7, e36390. [Google Scholar] [CrossRef]

- Treger, S.; Sprecher, S.; Erber, R. Laughing and liking: Exploring the interpersonal effects of humor use in initial social interactions. Eur. J. Soc. Psychol. 2013, 43, 532–543. [Google Scholar] [CrossRef]

- Holm Kvist, M. Children’s crying in play conflicts: A locus for moral and emotional socialization. In Research on Children and Social Interaction; Equinox Publishing Ltd.: Sheffield, UK, 2018; Volume 2. [Google Scholar]

- Graham, R.; Labar, K.S. Neurocognitive mechanisms of gaze-expression interactions in face processing and social attention. Neuropsychologia 2012, 50, 553–566. [Google Scholar] [CrossRef][Green Version]

- Ishii, R.; Nakano, Y.I.; Nishida, T. Gaze awareness in conversational agents: Estimating a user’s conversational engagement from eye gaze. ACM Trans. Interact. Intell. Syst. 2013, 3, 1–25. [Google Scholar] [CrossRef]

- Cacioppo, J.T.; Berntson, G.G.; Adolphs, R.; Carter, C.S.; McClintock, M.K.; Meaney, M.J.; Schacter, D.L.; Sternberg, E.M.; Suomi, S.; Taylor, S.E. Foundations in Social Neuroscience; MIT Press: Cambridge, MA, USA, 2002. [Google Scholar]

- Pönkänen, L.M.; Peltola, M.J.; Hietanen, J.K. The observer observed: Frontal EEG asymmetry and autonomic responses differentiate between another person’s direct and averted gaze when the face is seen live. Int. J. Psychophysiol. 2011, 82, 180–187. [Google Scholar] [CrossRef]

- Pönkänen, L.M.; Hietanen, J.K. Eye contact with neutral and smiling faces: Effects on autonomic responses and frontal EEG asymmetry. Front. Hum. Neurosci. 2012, 6, 122. [Google Scholar] [CrossRef]

- Hasson, U.; Ghazanfar, A.A.; Galantucci, B.; Garrod, S.; Keysers, C. Brain-to-brain coupling: A mechanism for creating and sharing a social world. Trends Cogn. Sci. 2012, 16, 114–121. [Google Scholar] [CrossRef] [PubMed]

- Hari, R.; Kujala, M.V. Brain basis of human social interaction: From concepts to brain imaging. Physiol. Rev. 2009, 89, 453–479. [Google Scholar] [CrossRef] [PubMed]

- Duane, T.D.; Behrendt, T. Extrasensory electroencephalographic induction between identical twins. Science 1965, 150, 367. [Google Scholar] [CrossRef]

- Billinghurst, M.; Kato, H. Collaborative augmented reality. Commun. ACM 2002, 45, 64–70. [Google Scholar] [CrossRef]

- Kim, S.; Billinghurst, M.; Lee, G. The effect of collaboration styles and view independence on video-mediated remote collaboration. Comput. Support. Coop. Work CSCW 2018, 27, 569–607. [Google Scholar] [CrossRef]

- Yang, J.; Sasikumar, P.; Bai, H.; Barde, A.; Sörös, G.; Billinghurst, M. The effects of spatial auditory and visual cues on mixed reality remote collaboration. J. Multimodal User Interfaces 2020, 14, 337–352. [Google Scholar] [CrossRef]

- Bai, H.; Sasikumar, P.; Yang, J.; Billinghurst, M. A User Study on Mixed Reality Remote Collaboration with Eye Gaze and Hand Gesture Sharing. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems, Honolulu, HI, USA, 25–30 April 2020; pp. 1–13. [Google Scholar]

- Piumsomboon, T.; Day, A.; Ens, B.; Lee, Y.; Lee, G.; Billinghurst, M. Exploring enhancements for remote mixed reality collaboration. In SIGGRAPH Asia 2017 Mobile Graphics & Interactive Applications; Association for Computing Machinery: New York, NY, USA, 2017; pp. 1–5. [Google Scholar]

- Frith, C.D.; Frith, U. Implicit and explicit processes in social cognition. Neuron 2008, 60, 503–510. [Google Scholar] [CrossRef] [PubMed]

- Wang, M.Y.; Luan, P.; Zhang, J.; Xiang, Y.T.; Niu, H.; Yuan, Z. Concurrent mapping of brain activation from multiple subjects during social interaction by hyperscanning: A mini-review. Quant. Imaging Med. Surg. 2018, 8, 819. [Google Scholar] [CrossRef]

- Liu, D.; Liu, S.; Liu, X.; Zhang, C.; Li, A.; Jin, C.; Chen, Y.; Wang, H.; Zhang, X. Interactive brain activity: Review and progress on EEG-based hyperscanning in social interactions. Front. Psychol. 2018, 9, 1862. [Google Scholar] [CrossRef]

- Montague, P.R.; Berns, G.S.; Cohen, J.D.; McClure, S.M.; Pagnoni, G.; Dhamala, M.; Wiest, M.C.; Karpov, I.; King, R.D.; Apple, N.; et al. Hyperscanning: Simultaneous fMRI during Linked Social Interactions. NeuroImage 2002, 16, 1159–1164. [Google Scholar] [CrossRef]

- Scholkmann, F.; Holper, L.; Wolf, U.; Wolf, M. A new methodical approach in neuroscience: Assessing inter-personal brain coupling using functional near-infrared imaging (fNIRI) hyperscanning. Front. Hum. Neurosci. 2013, 7, 813. [Google Scholar] [CrossRef] [PubMed]

- Koike, T.; Tanabe, H.C.; Sadato, N. hyperscanning neuroimaging technique to reveal the “two-in-one” system in social interactions. Neurosci. Res. 2015, 90, 25–32. [Google Scholar] [CrossRef]

- Bilek, E.; Ruf, M.; Schäfer, A.; Akdeniz, C.; Calhoun, V.D.; Schmahl, C.; Demanuele, C.; Tost, H.; Kirsch, P.; Meyer-Lindenberg, A. Information flow between interacting human brains: Identification, validation, and relationship to social expertise. Proc. Natl. Acad. Sci. USA 2015, 112, 5207–5212. [Google Scholar] [CrossRef] [PubMed]

- Babiloni, F.; Astolfi, L. Social neuroscience and hyperscanning techniques: Past, present and future. Neurosci. Biobehav. Rev. 2014, 44, 76–93. [Google Scholar] [CrossRef] [PubMed]

- Rapoport, A.; Chammah, A.M.; Orwant, C.J. Prisoner’s Dilemma: A Study in Conflict and Cooperation; University of Michigan Press: Ann Arbor, MI, USA, 1965; Volume 165. [Google Scholar]

- Axelrod, R. Effective choice in the prisoner’s dilemma. J. Confl. Resolut. 1980, 24, 3–25. [Google Scholar] [CrossRef]

- Babiloni, F.; Cincotti, F.; Mattia, D.; Mattiocco, M.; Fallani, F.D.V.; Tocci, A.; Bianchi, L.; Marciani, M.G.; Astolfi, L. Hypermethods for EEG hyperscanning. In Proceedings of the 2006 International Conference of the IEEE Engineering in Medicine and Biology Society, New York, NY, USA, 31 August–3 September 2006; IEEE: Piscataway, NJ, USA, 2006; pp. 3666–3669. [Google Scholar]

- Yun, K.; Chung, D.; Jeong, J. Emotional interactions in human decision making using EEG hyperscanning. In Proceedings of the International Conference of Cognitive Science, Seoul, Korea, 27–29 July 2008; p. 4. [Google Scholar]

- Yun, K.; Watanabe, K.; Shimojo, S. Interpersonal body and neural synchronization as a marker of implicit social interaction. Sci. Rep. 2012, 2, 959. [Google Scholar] [CrossRef] [PubMed]

- Delaherche, E.; Dumas, G.; Nadel, J.; Chetouani, M. Automatic measure of imitation during social interaction: A behavioral and hyperscanning-EEG benchmark. Pattern Recognit. Lett. 2015, 66, 118–126. [Google Scholar] [CrossRef]

- Sinha, N.; Maszczyk, T.; Wanxuan, Z.; Tan, J.; Dauwels, J. EEG hyperscanning study of inter-brain synchrony during cooperative and competitive interaction. In Proceedings of the 2016 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Budapest, Hungary, 9–12 October 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 4813–4818. [Google Scholar]

- Toppi, J.; Borghini, G.; Petti, M.; He, E.J.; De Giusti, V.; He, B.; Astolfi, L.; Babiloni, F. Investigating cooperative behavior in ecological settings: An EEG hyperscanning study. PLoS ONE 2016, 11, e0154236. [Google Scholar] [CrossRef]

- Dikker, S.; Wan, L.; Davidesco, I.; Kaggen, L.; Oostrik, M.; McClintock, J.; Rowland, J.; Michalareas, G.; Van Bavel, J.J.; Ding, M.; et al. Brain-to-brain synchrony tracks real-world dynamic group interactions in the classroom. Curr. Biol. 2017, 27, 1375–1380. [Google Scholar] [CrossRef]

- Pérez, A.; Carreiras, M.; Duñabeitia, J.A. Brain-to-brain entrainment: EEG interbrain synchronization while speaking and listening. Sci. Rep. 2017, 7, 1–12. [Google Scholar] [CrossRef]

- Sciaraffa, N.; Borghini, G.; Aricò, P.; Di Flumeri, G.; Colosimo, A.; Bezerianos, A.; Thakor, N.V.; Babiloni, F. Brain interaction during cooperation: Evaluating local properties of multiple-brain network. Brain Sci. 2017, 7, 90. [Google Scholar] [CrossRef] [PubMed]

- Szymanski, C.; Pesquita, A.; Brennan, A.A.; Perdikis, D.; Enns, J.T.; Brick, T.R.; Müller, V.; Lindenberger, U. Teams on the same wavelength perform better: Inter-brain phase synchronization constitutes a neural substrate for social facilitation. Neuroimage 2017, 152, 425–436. [Google Scholar] [CrossRef] [PubMed]

- Zhang, J.; Zhou, Z. Multiple Human EEG Synchronous Analysis in Group Interaction-Prediction Model for Group Involvement and Individual Leadership. In International Conference on Augmented Cognition; Springer: Cham, Switzerland, 2017; pp. 99–108. [Google Scholar]

- Ciaramidaro, A.; Toppi, J.; Casper, C.; Freitag, C.; Siniatchkin, M.; Astolfi, L. Multiple-brain connectivity during third party punishment: An EEG hyperscanning study. Sci. Rep. 2018, 8, 1–13. [Google Scholar] [CrossRef] [PubMed]

- Saito, D.N.; Tanabe, H.C.; Izuma, K.; Hayashi, M.J.; Morito, Y.; Komeda, H.; Uchiyama, H.; Kosaka, H.; Okazawa, H.; Fujibayashi, Y.; et al. “Stay tuned”: Inter-individual neural synchronization during mutual gaze and joint attention. Front. Integr. Neurosci. 2010, 4, 127. [Google Scholar] [CrossRef] [PubMed]

- Stephens, G.J.; Silbert, L.J.; Hasson, U. Speaker–listener neural coupling underlies successful communication. Proc. Natl. Acad. Sci. USA 2010, 107, 14425–14430. [Google Scholar] [CrossRef]

- Dikker, S.; Silbert, L.J.; Hasson, U.; Zevin, J.D. On the same wavelength: Predictable language enhances speaker–listener brain-to-brain synchrony in posterior superior temporal gyrus. J. Neurosci. 2014, 34, 6267–6272. [Google Scholar] [CrossRef]

- Koike, T.; Tanabe, H.C.; Okazaki, S.; Nakagawa, E.; Sasaki, A.T.; Shimada, K.; Sugawara, S.K.; Takahashi, H.K.; Yoshihara, K.; Bosch-Bayard, J.; et al. Neural substrates of shared attention as social memory: A hyperscanning functional magnetic resonance imaging study. Neuroimage 2016, 125, 401–412. [Google Scholar] [CrossRef]

- Nozawa, T.; Sasaki, Y.; Sakaki, K.; Yokoyama, R.; Kawashima, R. Interpersonal frontopolar neural synchronization in group communication: An exploration toward fNIRS hyperscanning of natural interactions. Neuroimage 2016, 133, 484–497. [Google Scholar] [CrossRef]

- Tang, H.; Mai, X.; Wang, S.; Zhu, C.; Krueger, F.; Liu, C. Interpersonal brain synchronization in the right temporo-parietal junction during face-to-face economic exchange. Soc. Cogn. Affect. Neurosci. 2016, 11, 23–32. [Google Scholar] [CrossRef]

- Liu, T.; Pelowski, M. Clarifying the interaction types in two-person neuroscience research. Front. Hum. Neurosci. 2014, 8, 276. [Google Scholar] [CrossRef]

- Acquadro, M.A.; Congedo, M.; De Riddeer, D. Music performance as an experimental approach to hyperscanning studies. Front. Hum. Neurosci. 2016, 10, 242. [Google Scholar] [CrossRef] [PubMed]

- Watson, D.; Clark, L.A. The PANAS-X: Manual for the Positive and Negative Affect Schedule-Expanded Form. Available online: https://ir.uiowa.edu/cgi/viewcontent.cgi?article=1011&context=psychology_pubs (accessed on 23 November 2020).

- Bradley, M.M.; Lang, P.J. Measuring emotion: The self-assessment manikin and the semantic differential. J. Behav. Ther. Exp. Psychiatry 1994, 25, 49–59. [Google Scholar] [CrossRef]

- Burgess, A.P. On the interpretation of synchronization in EEG hyperscanning studies: A cautionary note. Front. Hum. Neurosci. 2013, 7, 881. [Google Scholar] [CrossRef] [PubMed]

- Baccalá, L.A.; Sameshima, K. Partial directed coherence: A new concept in neural structure determination. Biol. Cybern. 2001, 84, 463–474. [Google Scholar] [CrossRef] [PubMed]

- Lachaux, J.P.; Rodriguez, E.; Martinerie, J.; Varela, F.J. Measuring phase synchrony in brain signals. Hum. Brain Mapp. 1999, 8, 194–208. [Google Scholar] [CrossRef]

- Jammalamadaka, S.R.; Sengupta, A. Topics in Circular Statistics; World Scientific: Singapore, 2001; Volume 5. [Google Scholar]

- Kraskov, A.; Stögbauer, H.; Grassberger, P. Estimating mutual information. Phys. Rev. E 2004, 69, 066138. [Google Scholar] [CrossRef]

- Tognoli, E.; Lagarde, J.; DeGuzman, G.C.; Kelso, J.S. The phi complex as a neuromarker of human social coordination. Proc. Natl. Acad. Sci. USA 2007, 104, 8190–8195. [Google Scholar] [CrossRef]

- Mu, Y.; Han, S.; Gelfand, M.J. The role of gamma interbrain synchrony in social coordination when humans face territorial threats. Soc. Cogn. Affect. Neurosci. 2017, 12, 1614–1623. [Google Scholar] [CrossRef]

- Kawasaki, M.; Kitajo, K.; Yamaguchi, Y. Sensory-motor synchronization in the brain corresponds to behavioral synchronization between individuals. Neuropsychologia 2018, 119, 59–67. [Google Scholar] [CrossRef]

- Konvalinka, I.; Bauer, M.; Stahlhut, C.; Hansen, L.K.; Roepstorff, A.; Frith, C.D. Frontal alpha oscillations distinguish leaders from followers: Multivariate decoding of mutually interacting brains. Neuroimage 2014, 94, 79–88. [Google Scholar] [CrossRef]

- Ménoret, M.; Varnet, L.; Fargier, R.; Cheylus, A.; Curie, A.; des Portes, V.; Nazir, T.A.; Paulignan, Y. Neural correlates of non-verbal social interactions: A dual-EEG study. Neuropsychologia 2014, 55, 85–97. [Google Scholar] [CrossRef] [PubMed]

- Reindl, V.; Gerloff, C.; Scharke, W.; Konrad, K. Brain-to-brain synchrony in parent-child dyads and the relationship with emotion regulation revealed by fNIRS-based hyperscanning. NeuroImage 2018, 178, 493–502. [Google Scholar] [CrossRef] [PubMed]

- Cui, X.; Bryant, D.M.; Reiss, A.L. NIRS-based hyperscanning reveals increased interpersonal coherence in superior frontal cortex during cooperation. NeuroImage 2012, 59, 2430–2437. [Google Scholar] [CrossRef] [PubMed]

- Pan, Y.; Cheng, X.; Zhang, Z.; Li, X.; Hu, Y. Cooperation in lovers: An fNIRS-based hyperscanning study. Hum. Brain Mapp. 2017, 38, 831–841. [Google Scholar] [CrossRef]

- Holper, L.; Scholkmann, F.; Wolf, M. Between-brain connectivity during imitation measured by fNIRS. NeuroImage 2012, 63, 212–222. [Google Scholar] [CrossRef]

- Hirsch, J.; Zhang, X.; Noah, J.A.; Ono, Y. Frontal temporal and parietal systems synchronize within and across brains during live eye-to-eye contact. NeuroImage 2017, 157, 314–330. [Google Scholar] [CrossRef]

- Witmer, B.G.; Singer, M.J. Measuring presence in virtual environments: A presence questionnaire. Presence 1998, 7, 225–240. [Google Scholar] [CrossRef]

- De Kort, Y.A.; IJsselsteijn, W.A.; Poels, K. Digital games as social presence technology: Development of the Social Presence in Gaming Questionnaire (SPGQ). Proc. Presence 2007, 195203, 1–9. [Google Scholar]

- Gupta, K.; Lee, G.A.; Billinghurst, M. Do you see what i see? the effect of gaze tracking on task space remote collaboration. IEEE Trans. Vis. Comput. Graph. 2016, 22, 2413–2422. [Google Scholar] [CrossRef]

- Kim, S.; Lee, G.; Sakata, N.; Billinghurst, M. Improving co-presence with augmented visual communication cues for sharing experience through video conference. In Proceedings of the 2014 IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Munich, Germany, 10–12 September 2014; IEEE: Piscataway, NJ, USA, 2014; pp. 83–92. [Google Scholar]

- Singer, T.; Lamm, C. The social neuroscience of empathy. Ann. N. Y. Acad. Sci. 2009, 1156, 81–96. [Google Scholar] [CrossRef]

- Hu, Y.; Pan, Y.; Shi, X.; Cai, Q.; Li, X.; Cheng, X. Inter-brain synchrony and cooperation context in interactive decision making. Biol. Psychol. 2018, 133, 54–62. [Google Scholar] [CrossRef] [PubMed]

- Govern, J.M.; Marsch, L.A. Development and validation of the situational self-awareness scale. Conscious. Cogn. 2001, 10, 366–378. [Google Scholar] [CrossRef] [PubMed]

- Van Bel, D.T.; Smolders, K.; IJsselsteijn, W.A.; de Kort, Y. Social connectedness: Concept and measurement. Intell. Environ. 2009, 2, 67–74. [Google Scholar]

- Yarosh, S.; Markopoulos, P.; Abowd, G.D. Towards a questionnaire for measuring affective benefits and costs of communication technologies. In Proceedings of the 17th ACM Conference on Computer Supported Cooperative Work & Social Computing, Baltimore, MD, USA, 15–19 February 2014; pp. 84–96. [Google Scholar]

- Ens, B.; Lanir, J.; Tang, A.; Bateman, S.; Lee, G.; Piumsomboon, T.; Billinghurst, M. Revisiting collaboration through mixed reality: The evolution of groupware. Int. J. Hum. Comput. Stud. 2019, 131, 81–98. [Google Scholar] [CrossRef]

- Klinger, E.; Bouchard, S.; Légeron, P.; Roy, S.; Lauer, F.; Chemin, I.; Nugues, P. Virtual reality therapy versus cognitive behavior therapy for social phobia: A preliminary controlled study. Cyberpsychol. Behav. 2005, 8, 76–88. [Google Scholar] [CrossRef]

- Matamala-Gomez, M.; Donegan, T.; Bottiroli, S.; Sandrini, G.; Sanchez-Vives, M.V.; Tassorelli, C. Immersive virtual reality and virtual embodiment for pain relief. Front. Hum. Neurosci. 2019, 13, 279. [Google Scholar] [CrossRef]

- Joda, T.; Gallucci, G.; Wismeijer, D.; Zitzmann, N. Augmented and virtual reality in dental medicine: A systematic review. Comput. Biol. Med. 2019, 108, 93–100. [Google Scholar] [CrossRef]

- Alizadehsalehi, S.; Hadavi, A.; Huang, J.C. From BIM to extended reality in AEC industry. Autom. Constr. 2020, 116, 103254. [Google Scholar] [CrossRef]

- Alizadehsalehi, S.; Hadavi, A.; Huang, J.C. BIM/MR-Lean Construction Project Delivery Management System. In Proceedings of the 2019 IEEE Technology & Engineering Management Conference (TEMSCON), Atlanta, GA, USA, 12–14 June 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 1–6. [Google Scholar]

- Alizadehsalehi, S.; Hadavi, A.; Huang, J.C. Virtual reality for design and construction education environment. In AEI 2019: Integrated Building Solutions—The National Agenda; American Society of Civil Engineers: Reston, VA, USA, 2019; pp. 193–203. [Google Scholar]

- Masai, K.; Kunze, K.; Sugimoto, M.; Billinghurst, M. Empathy Glasses. In Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems, CHI EA’16, San Jose, CA, USA, 7–12 May 2016; Association for Computing Machinery: New York, NY, USA, 2016; pp. 1257–1263. [Google Scholar] [CrossRef]

- Dey, A.; Piumsomboon, T.; Lee, Y.; Billinghurst, M. Effects of Sharing Physiological States of Players in a Collaborative Virtual Reality Gameplay. In Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems, CHI’17, Denver, CO, USA, 6–11 May 2017; Association for Computing Machinery: New York, NY, USA, 2017; pp. 4045–4056. [Google Scholar] [CrossRef]

- Dey, A.; Chen, H.; Zhuang, C.; Billinghurst, M.; Lindeman, R.W. Effects of Sharing Real-Time Multi-Sensory Heart Rate Feedback in Different Immersive Collaborative Virtual Environments. In Proceedings of the 2018 IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Munich, Germany, 16–20 October 2018; pp. 165–173. [Google Scholar] [CrossRef]

- Dey, A.; Chen, H.; Hayati, A.; Billinghurst, M.; Lindeman, R.W. Sharing Manipulated Heart Rate Feedback in Collaborative Virtual Environments. In Proceedings of the 2019 IEEE International Symposium on Mixed and Augmented Reality (ISMAR), Beijing, China, 14–18 October 2019; pp. 248–257. [Google Scholar] [CrossRef]

- Dey, A.; Chatburn, A.; Billinghurst, M. Exploration of an EEG-Based Cognitively Adaptive Training System in Virtual Reality. In Proceedings of the 2019 IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Osaka, Japan, 23–27 March 2019; pp. 220–226. [Google Scholar] [CrossRef]

- Salminen, M.; Järvelä, S.; Ruonala, A.; Timonen, J.; Mannermaa, K.; Ravaja, N.; Jacucci, G. Bio-adaptive social VR to evoke affective interdependence: DYNECOM. In Proceedings of the 23rd International Conference on Intelligent User Interfaces, Tokyo, Japan, 7–11 March 2018; pp. 73–77. [Google Scholar]

- Marín-Morales, J.; Higuera-Trujillo, J.L.; Greco, A.; Guixeres, J.; Llinares, C.; Scilingo, E.P.; Alcañiz, M.; Valenza, G. Affective computing in virtual reality: Emotion recognition from brain and heartbeat dynamics using wearable sensors. Sci. Rep. 2018, 8, 1–15. [Google Scholar] [CrossRef]

- Jantz, J.; Molnar, A.; Alcaide, R. A brain-computer interface for extended reality interfaces. In ACM Siggraph 2017 VR Village; Association for Computing Machinery: New York, NY, USA, 2017; pp. 1–2. [Google Scholar]

- Csikszentmihalyi, M.; Csikzentmihaly, M. Flow: The Psychology of Optimal Experience; Harper & Row New York: New York, NY, USA, 1990; Volume 1990. [Google Scholar]

- Shehata, M.; Cheng, M.; Leung, A.; Tsuchiya, N.; Wu, D.A.; Tseng, C.H.; Nakauchi, S.; Shimojo, S. Team Flow Is a Unique Brain State Associated with Enhanced Information Integration and Neural Synchrony. 2020. Available online: https://authors.library.caltech.edu/104079/ (accessed on 23 November 2020).

- Nakamura, J.; Csikszentmihalyi, M. The Concept of Flow; Flow and the Foundations of Positive Psychology; Springer: Dordrecht, The Netherlands, 2014; pp. 239–263. [Google Scholar]

- Bohil, C.J.; Alicea, B.; Biocca, F.A. Virtual reality in neuroscience research and therapy. Nat. Rev. Neurosci. 2011, 12, 752–762. [Google Scholar] [CrossRef]

- Lotte, F.; Faller, J.; Guger, C.; Renard, Y.; Pfurtscheller, G.; Lécuyer, A.; Leeb, R. Combining BCI with virtual reality: Towards new applications and improved BCI. In Towards Practical Brain-Computer Interfaces; Springer: Berlin/Heidelberg, Germany, 2012; pp. 197–220. [Google Scholar]

- Lenhardt, A.; Ritter, H. An augmented-reality based brain-computer interface for robot control. In International Conference on Neural Information Processing; Springer: Berlin/Heidelberg, Germany, 2010; pp. 58–65. [Google Scholar]

- Kerous, B.; Liarokapis, F. BrainChat-A Collaborative Augmented Reality Brain Interface for Message Communication. In Proceedings of the 2017 IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct), Nantes, France, 9–13 October 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 279–283. [Google Scholar]

- Vourvopoulos, A.; Liarokapis, F. Evaluation of commercial brain–computer interfaces in real and virtual world environment: A pilot study. Comput. Electr. Eng. 2014, 40, 714–729. [Google Scholar] [CrossRef]

- Chin, Z.Y.; Ang, K.K.; Wang, C.; Guan, C. Online performance evaluation of motor imagery BCI with augmented-reality virtual hand feedback. In Proceedings of the 2010 Annual International Conference of the IEEE Engineering in Medicine and Biology, Buenos Aires, Argentina, 31 August–4 September 2010; IEEE: Piscataway, NJ, USA, 2010; pp. 3341–3344.

- Naves, E.L.; Bastos, T.F.; Bourhis, G.; Silva, Y.M.L.R.; Silva, V.J.; Lucena, V.F. Virtual and augmented reality environment for remote training of wheelchairs users: Social, mobile, and wearable technologies applied to rehabilitation. In Proceedings of the 2016 IEEE 18th International Conference on e-Health Networking, Applications and Services (Healthcom), Munich, Germany, 14–16 September 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 1–4.

- Wolpaw, J.R.; Birbaumer, N.; McFarland, D.J.; Pfurtscheller, G.; Vaughan, T.M. Brain–computer interfaces for communication and control. Clin. Neurophysiol. 2002, 113, 767–791.

- Nijboer, F.; Sellers, E.; Mellinger, J.; Jordan, M.A.; Matuz, T.; Furdea, A.; Halder, S.; Mochty, U.; Krusienski, D.; Vaughan, T.; et al. A P300-based brain–computer interface for people with amyotrophic lateral sclerosis. Clin. Neurophysiol. 2008, 119, 1909–1916.

- Farwell, L.A.; Donchin, E. Talking off the top of your head: Toward a mental prosthesis utilizing event-related brain potentials. Electroencephalogr. Clin. Neurophysiol. 1988, 70, 510–523.

- Pritchard, W.S. Psychophysiology of P300. Psychol. Bull. 1981, 89, 506.

- Fabiani, M.; Gratton, G.; Karis, D.; Donchin, E. Definition, identification, and reliability of measurement of the P300 component of the event-related brain potential. Adv. Psychophysiol. 1987, 2, 78.

- Ryan, D.B.; Frye, G.; Townsend, G.; Berry, D.; Mesa-G, S.; Gates, N.A.; Sellers, E.W. Predictive spelling with a P300-based brain–computer interface: Increasing the rate of communication. Intl. J. Hum. Comput. Interact. 2010, 27, 69–84.

- Horlings, R.; Datcu, D.; Rothkrantz, L.J. Emotion recognition using brain activity. In Proceedings of the 9th International Conference on Computer Systems and Technologies and Workshop for PhD Students in Computing, Ruse, Bulgaria, 12 June 2008; p. II-1.

- Schupp, H.T.; Flaisch, T.; Stockburger, J.; Junghöfer, M. Emotion and attention: Event-related brain potential studies. Prog. Brain Res. 2006, 156, 31–51.

- Bernal, G.; Yang, T.; Jain, A.; Maes, P. PhysioHMD: A conformable, modular toolkit for collecting physiological data from head-mounted displays. In Proceedings of the 2018 ACM International Symposium on Wearable Computers, Singapore, 8–12 October 2018; pp. 160–167.

- Gumilar, I.; Sareen, E.; Bell, R.; Stone, A.; Hayati, A.; Mao, J.; Barde, A.; Gupta, A.; Dey, A.; Lee, G.; et al. A comparative study on inter-brain synchrony in real and virtual environments using hyperscanning. Comput. Graph. 2020, 94, 62–75.

| Authors | Study | Neuro-imaging Method |
|---|---|---|
| Duane and Behrendt [15] | Extrasensory Electroencephalographic Induction Between Identical Twins | EEG |
| Babiloni et al. [31] | Hypermethods for EEG Hyperscanning | EEG |
| Yun et al. [32] | Emotional Interactions in Human Decision Making Using EEG Hyperscanning | EEG |
| Yun et al. [33] | Interpersonal Body and Neural Synchronization as a Marker of Implicit Social Interaction | EEG |
| Delaherche et al. [34] | Automatic Measure of Imitation During Social Interaction: A Behavioral and Hyperscanning-EEG Benchmark | EEG |
| Sinha et al. [35] | EEG Hyperscanning Study of Inter-Brain Synchrony During Cooperative and Competitive Interaction | EEG |
| Toppi et al. [36] | Investigating Cooperative Behavior in Ecological Settings: An EEG Hyperscanning Study | EEG |
| Dikker et al. [37] | Brain-to-Brain Synchrony Tracks Real-World Dynamic Group Interactions in the Classroom | EEG |
| Pérez et al. [38] | Brain-to-Brain Entrainment: EEG Interbrain Synchronization While Speaking and Listening | EEG |
| Sciaraffa et al. [39] | Brain Interaction During Cooperation: Evaluating Local Properties of Multiple-Brain Network | EEG |
| Szymanski et al. [40] | Teams on The Same Wavelength Perform Better: Inter-Brain Phase Synchronization Constitutes a Neural Substrate for Social Facilitation | EEG |
| Zhang and Zhou [41] | Multiple Human EEG Synchronous Analysis in Group Interaction-Prediction Model for Group Involvement and Individual Leadership | EEG |
| Ciaramidaro et al. [42] | Multiple-Brain Connectivity During Third Party Punishment: An EEG Hyperscanning Study | EEG |
| Montague et al. [1] | Hyperscanning: Simultaneous fMRI during Linked Social Interactions | fMRI |
| Saito et al. [43] | “Stay Tuned”: Inter-Individual Neural Synchronization During Mutual Gaze and Joint Attention | fMRI |
| Stephens et al. [44] | Speaker–Listener Neural Coupling Underlies Successful Communication | fMRI |
| Dikker et al. [45] | On the Same Wavelength: Predictable Language Enhances Speaker–Listener Brain-to-Brain Synchrony in Posterior Superior Temporal Gyrus | fMRI |
| Bilek et al. [27] | Information Flow Between Interacting Human Brains: Identification, Validation and Relationship to Social Expertise | fMRI |
| Koike et al. [46] | Neural Substrates of Shared Attention as Social Memory: A Hyperscanning Functional Magnetic Resonance Imaging Study | fMRI |
| Nozawa et al. [47] | Interpersonal Frontopolar Neural Synchronization in Group Communication: An Exploration Toward fNIRS Hyperscanning of Natural Interactions | fNIRS |
| Tang et al. [48] | Interpersonal Brain Synchronization In The Right Temporo-Parietal Junction During Face-To-Face Economic Exchange | fNIRS |

| Authors | Study | Paradigm |
|---|---|---|
| Delaherche et al. [34] | Automatic Measure of Imitation During Social Interaction: A Behavioral and Hyperscanning-EEG Benchmark | Imitation Task |
| Yun et al. [33] | Interpersonal Body and Neural Synchronization as a Marker of Implicit Social Interaction | Co-ordination Task |
| Saito et al. [43] | “Stay Tuned”: Inter-Individual Neural Synchronization During Mutual Gaze and Joint Attention | Eye Contact/Gaze-based Task |
| Bilek et al. [27] | Information Flow Between Interacting Human Brains: Identification, Validation and Relationship to Social Expertise | Eye Contact/Gaze-based Task |
| Koike et al. [46] | Neural Substrates of Shared Attention as Social Memory: A Hyperscanning Functional Magnetic Resonance Imaging Study | Eye Contact/Gaze-based Task |
| Tang et al. [48] | Interpersonal Brain Synchronization In The Right Temporo-Parietal Junction During Face-To-Face Economic Exchange | Economic Exchange |
| Ciaramidaro et al. [42] | Multiple-Brain Connectivity During Third Party Punishment: An EEG Hyperscanning Study | Economic Exchange |
| Sinha et al. [35] | EEG Hyperscanning Study of Inter-Brain Synchrony During Cooperative and Competitive Interaction | Co-operation and Competition Task |
| Babiloni et al. [31] | Hypermethods for EEG Hyperscanning | Real World/Ecologically Valid/Natural Scenarios |
| Toppi et al. [36] | Investigating Cooperative Behavior in Ecological Settings: An EEG Hyperscanning Study | Real World/Ecologically Valid/Natural Scenarios |
| Dikker et al. [37] | Brain-to-Brain Synchrony Tracks Real-World Dynamic Group Interactions in the Classroom | Real World/Ecologically Valid/Natural Scenarios |

| Authors | Study | Analysis Methods |
|---|---|---|
| Yun et al. [32] | Emotional Interactions in Human Decision Making Using EEG Hyperscanning | Correlation (Signal-based correlation) |
| Sinha et al. [35] | EEG hyperscanning study of inter-brain synchrony during cooperative and competitive interaction | Correlation (Signal-based correlation) |
| Dikker et al. [37] | Brain-to-Brain Synchrony Tracks Real-World Dynamic Group Interactions in the Classroom | Correlation (Signal-based correlation) |
| Dikker et al. [45] | On the Same Wavelength: Predictable Language Enhances Speaker–Listener Brain-to-Brain Synchrony in Posterior Superior Temporal Gyrus | Correlation (Voxel-based correlation) |
| Koike et al. [46] | Neural substrates of shared attention as social memory: A hyperscanning functional magnetic resonance imaging study | Correlation (Voxel-based correlation) |
| Bilek et al. [27] | Information flow between interacting human brains: Identification, validation, and relationship to social expertise | Correlation (Voxel-based correlation) |
| Saito et al. [43] | “Stay Tuned”: Inter-Individual Neural Synchronization During Mutual Gaze and Joint Attention | Correlation (Voxel-based correlation) |
| Toppi et al. [36] | Investigating Cooperative Behavior in Ecological Settings: An EEG Hyperscanning Study | Partial Directed Coherence |
| Tognoli et al. [58] | The phi complex as a neuromarker of human social coordination | Phase-based method (SI) |
| Szymanski et al. [40] | Teams on the same wavelength perform better: Inter-brain phase synchronization constitutes a neural substrate for social facilitation | Phase-based method (PLI) |
| Yun et al. [33] | Interpersonal body and neural synchronization as a marker of implicit social interaction | Phase-based method (PLV) |
| Mu et al. [59] | The role of gamma interbrain synchrony in social coordination when humans face territorial threats | Phase-based method (PLV) |
| Kawasaki et al. [60] | Sensory-motor synchronization in the brain corresponds to behavioral synchronization between individuals | Phase-based method (PLV) |
| Konvalinka et al. [61] | Frontal alpha oscillations distinguish leaders from followers: Multivariate decoding of mutually interacting brains | Phase-based method (SI) |
| Ménoret et al. [62] | Neural correlates of non-verbal social interactions: A dual-EEG study | Wavelet-based method (TF analysis) |
| Reindl et al. [63] | Brain-to-brain synchrony in parent-child dyads and the relationship with emotion regulation revealed by fNIRS-based hyperscanning | Wavelet-based method (WTC) |
| Cui et al. [64] | NIRS-based hyperscanning reveals increased interpersonal coherence in superior frontal cortex during cooperation | Wavelet-based method (WTC) |
| Nozawa et al. [47] | Interpersonal frontopolar neural synchronization in group communication: An exploration toward fNIRS hyperscanning of natural interactions | Wavelet-based method (WTC) |
| Pan et al. [65] | Cooperation in lovers: An fNIRS-based hyperscanning study | Wavelet-based method (WTC), Granger-causality |
| Holper et al. [66] | Between-brain connectivity during imitation measured by fNIRS | Wavelet-based method (WTC), Granger-causality |
| Hirsch et al. [67] | Frontal temporal and parietal systems synchronize within and across brains during live eye-to-eye contact | Wavelet-based method, Correlation |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Barde, A.; Gumilar, I.; Hayati, A.F.; Dey, A.; Lee, G.; Billinghurst, M. A Review of Hyperscanning and Its Use in Virtual Environments. Informatics 2020, 7, 55. https://doi.org/10.3390/informatics7040055