A Conceptual Probabilistic Framework for Annotation Aggregation of Citizen Science Data

Abstract

1. Introduction

2. Related Work

3. Modeling the Domain

3.1. Participating Concepts: Tasks, Workers and Annotations

- Worker

- A worker is any of the participants in the annotation process. In our example, each of the volunteer citizen scientists involved in labeling images is a worker.

- Task

- A task can be understood as the minimal piece of work that can be assigned to a worker. In our example, labeling each of the images obtained from Twitter is a task.

- Annotation

- An annotation is the result of a worker processing a task. In our disaster management example, an annotation conveys information such as the following: task 22 has been labeled by worker 12 as moderate.
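The three concepts above map naturally onto a small data structure. The following is a minimal sketch, not the authors' implementation: the class name `Annotation`, its field names, the helper `annotations_by_task`, and every record other than the "task 22, worker 12, moderate" example from the text are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotation:
    """One worker's label for one task (field names are illustrative)."""
    task_id: int
    worker_id: int
    label: str


def annotations_by_task(annotations):
    """Group the labels received by each task, preserving input order."""
    grouped = {}
    for a in annotations:
        grouped.setdefault(a.task_id, []).append(a.label)
    return grouped


# The annotation quoted in the text: task 22 labeled by worker 12 as moderate.
example = Annotation(task_id=22, worker_id=12, label="moderate")

# The two extra records below are hypothetical, added only to show grouping.
batch = [
    example,
    Annotation(task_id=22, worker_id=7, label="severe"),
    Annotation(task_id=23, worker_id=12, label="moderate"),
]

print(annotations_by_task(batch))
```

Grouping annotations by task is a common first step before any aggregation, since consensus is computed per task.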

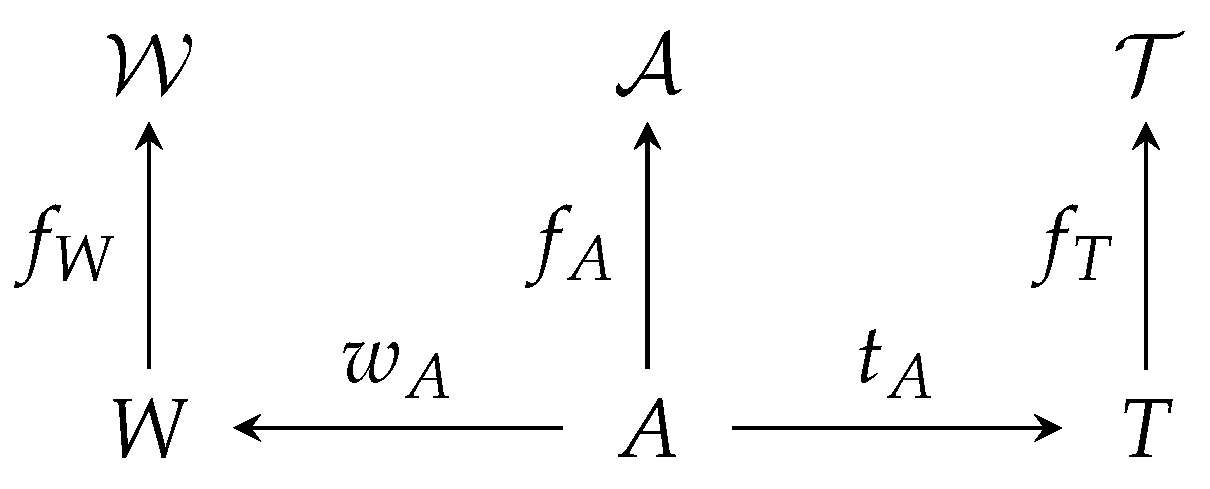

3.2. Abstract Mathematical Model

3.3. The Consensus Problem

- The number of workers, tasks and annotations

- For each worker, its observable characteristics

- For each task, its observable characteristics

- For each annotation, the task being annotated, the worker that made the annotation and the annotated characteristics

- A probabilistic model of annotation, consisting of the following:

- An emission model, returning the probability that, in a domain with given characteristics, a worker with given characteristics annotates a task with given characteristics with a given label

- A joint prior over every unobservable characteristic

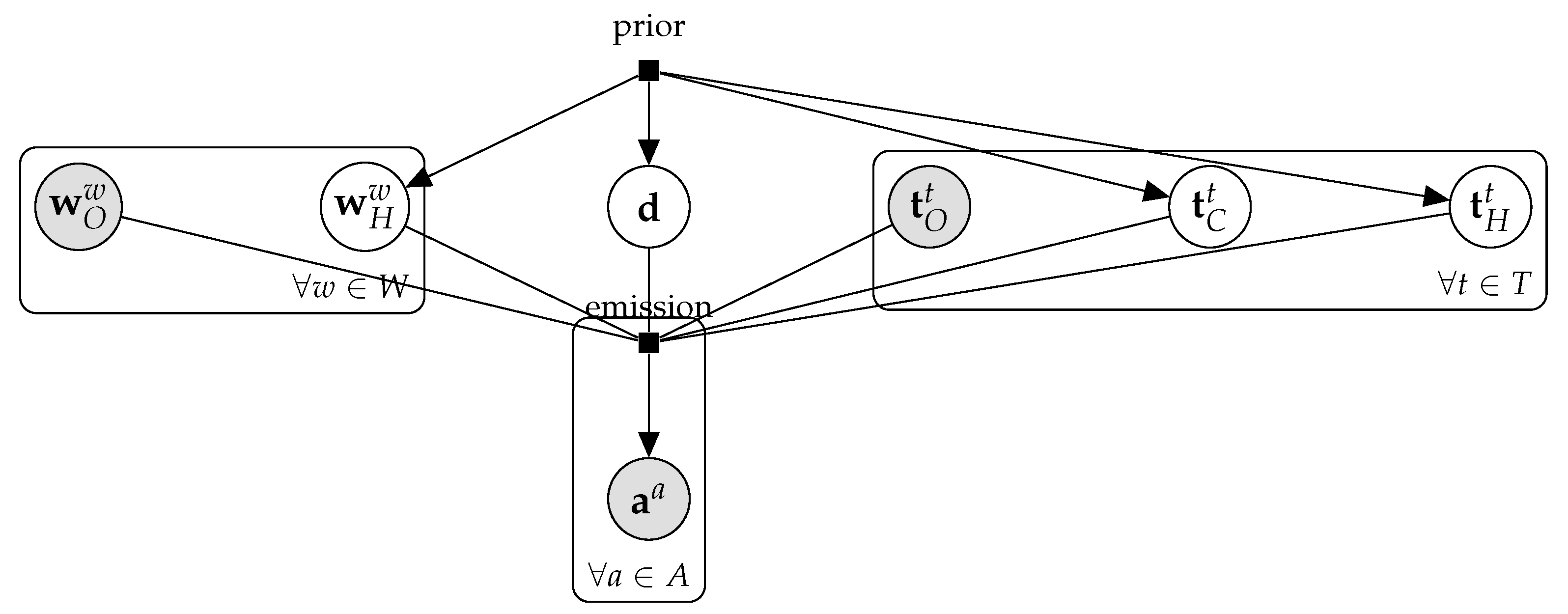

4. Discrete Annotation Models

4.1. The Multinomial Model

- Tasks are indistinguishable other than by their real classes. That is, a task has no observable characteristics, and its only unobservable characteristic is its real class.

- The general domain characteristics store the following:

- The probability that the “real” label of a task comes from each of the classes. Thus, the domain space is the set of probability distributions over the classes. This domain can be encoded as a stochastic vector p with one entry per class, where the i-th element of p can be understood as the probability of a task being of class i.

- The noisy labeling model for this simple model is the same for each worker. It has as characteristic an unobservable stochastic matrix with one row and one column per class. Intuitively, the element in row i and column j of the matrix can be understood as “the probability that a worker labels an image of class i with label j.” Thus, when the matrix is the identity matrix, our workers are perfect reporters of the real label. The further the matrix is from the identity, the greater the confusion.

- Workers are indistinguishable from one another. Hence, workers have no characteristics of their own.

- The emission model in this case is given by the entries of this matrix: the probability that a task of real class i is annotated with label j is the element in row i and column j.

- The prior is assumed to be a Dirichlet both for p and for each of the rows of the matrix, and also encodes that p is the prior for the real label of the tasks.
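To make the multinomial model concrete, the sketch below computes the posterior over the real class of a single task from its labels. It rests on assumptions not stated in the list above: the class-probability vector and the shared labeling matrix are treated as known (rather than inferred under the Dirichlet prior), annotations are taken to be conditionally independent given the real class, and the names `class_posterior`, `p` and `confusion` as well as all numeric values are illustrative.

```python
def class_posterior(p, confusion, labels):
    """Posterior over the real class of one task given its observed labels.

    p          -- stochastic vector: p[c] is the prior probability of class c
    confusion  -- shared stochastic matrix: confusion[c][l] is the probability
                  that any worker gives label l to a task of real class c
    labels     -- list of integer labels received by the task
    """
    scores = []
    for c, prior_c in enumerate(p):
        score = prior_c
        for label in labels:
            # Emission model: one matrix entry per annotation.
            score *= confusion[c][label]
        scores.append(score)
    total = sum(scores)
    if total == 0:
        raise ValueError("the observed labels have zero probability under the model")
    return [s / total for s in scores]


# Illustrative two-class setting (values are assumptions, not from the paper).
p = [0.5, 0.5]
confusion = [
    [0.8, 0.2],  # real class 0: labeled 0 with prob. 0.8, labeled 1 with prob. 0.2
    [0.3, 0.7],  # real class 1: labeled 0 with prob. 0.3, labeled 1 with prob. 0.7
]

posterior = class_posterior(p, confusion, labels=[0, 0, 1])
print(posterior)
```

With an identity matrix, any disagreement among the labels has probability zero under the model, which is why the function raises an error instead of dividing by zero.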

4.2. The DS Model

- Tasks are indistinguishable other than by their real classes.

- The general domain characteristics store only the stochastic vector p with the probability that the “real” label of a task comes from each of the classes.

- Each worker w has as characteristic an unobservable stochastic matrix of its own. Intuitively, the element in row i and column j of this matrix can be understood as “the probability of worker w labeling an image of real class i with label j.”

- The emission model in this case is given by the entries of the annotating worker’s matrix: the probability that worker w annotates a task of real class i with label j is the element in row i and column j of the matrix of worker w.

- The prior is assumed to be a Dirichlet both for p and for each of the rows of each worker’s matrix. (The DS model, as presented in [13], used maximum likelihood to estimate its parameters and thus no prior was presented. Later, Paun et al. [14] presented the prior provided here.)
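As an illustration of how the DS model can be fitted, here is a compact expectation-maximization sketch in the spirit of the maximum likelihood approach attributed to [13]. It is a simplified illustration under stated assumptions, not the procedure used in this paper: the function name `dawid_skene_em`, the `(task, worker, label)` tuple layout, the additive `smoothing` constant (a numerical guard against zero probabilities), the initialization from label frequencies, and the toy data are all assumptions.

```python
def dawid_skene_em(annotations, n_classes, n_iter=50, smoothing=0.01):
    """Fit class prevalence, per-worker matrices and task posteriors by EM.

    annotations -- list of (task, worker, label) tuples with integer labels
    Returns (p, conf, post): prevalence vector, dict worker -> matrix,
    dict task -> posterior over the real class.
    """
    tasks = sorted({t for t, _, _ in annotations})
    workers = sorted({w for _, w, _ in annotations})

    # Initialise each task's posterior from its (smoothed) label frequencies.
    post = {t: [smoothing] * n_classes for t in tasks}
    for t, _, label in annotations:
        post[t][label] += 1.0
    for t in tasks:
        total = sum(post[t])
        post[t] = [x / total for x in post[t]]

    for _ in range(n_iter):
        # M-step: prevalence p and one row-stochastic matrix per worker.
        p = [sum(post[t][c] for t in tasks) / len(tasks) for c in range(n_classes)]
        conf = {w: [[smoothing] * n_classes for _ in range(n_classes)] for w in workers}
        for t, w, label in annotations:
            for c in range(n_classes):
                conf[w][c][label] += post[t][c]
        for w in workers:
            for c in range(n_classes):
                row_total = sum(conf[w][c])
                conf[w][c] = [x / row_total for x in conf[w][c]]

        # E-step: posterior over each task's real class given all its labels.
        new_post = {t: list(p) for t in tasks}
        for t, w, label in annotations:
            for c in range(n_classes):
                new_post[t][c] *= conf[w][c][label]
        for t in tasks:
            total = sum(new_post[t])
            new_post[t] = [x / total for x in new_post[t]]
        post = new_post

    return p, conf, post


# Toy data (hypothetical): workers 0 and 1 agree, worker 2 is noisy.
toy = [
    (0, 0, 0), (0, 1, 0), (0, 2, 1),
    (1, 0, 0), (1, 1, 0), (1, 2, 0),
    (2, 0, 1), (2, 1, 1), (2, 2, 1),
    (3, 0, 1), (3, 1, 1), (3, 2, 0),
]
p_hat, conf_hat, post_hat = dawid_skene_em(toy, n_classes=2)
consensus = [max(range(2), key=lambda c: post_hat[t][c]) for t in sorted(post_hat)]
print(consensus)
```

Because each worker gets an individual matrix, a noisy worker is down-weighted automatically: the estimated rows for that worker drift toward uniform and contribute little to the task posteriors.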

5. Evaluating Data Quality in Highly Uncertain Scenarios

- Gold sets measure accuracy by comparing annotations to a ground truth;

- Auditing measures both accuracy and consistency by having an expert review the labels;

- Consensus, or overlap, measures consistency and agreement amongst a group.
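Two of the measures listed above are simple enough to sketch directly. The example below computes accuracy against a gold set and raw pairwise agreement within a group; it is an illustrative sketch in which the function names, the label values and the gold labels are hypothetical, and the agreement measure is not corrected for chance agreement.

```python
from collections import Counter
from itertools import combinations


def majority_label(task_labels):
    """Most frequent label for one task (ties resolved by first occurrence)."""
    return Counter(task_labels).most_common(1)[0][0]


def gold_accuracy(labels, gold):
    """Fraction of tasks present in the gold set whose label matches it."""
    scored = [t for t in labels if t in gold]
    return sum(labels[t] == gold[t] for t in scored) / len(scored)


def pairwise_agreement(task_labels):
    """Share of annotator pairs that gave the same label to one task."""
    pairs = list(combinations(task_labels, 2))
    return sum(a == b for a, b in pairs) / len(pairs)


# Hypothetical labels from three annotators on two tasks.
crowd = {
    1: ["moderate", "moderate", "severe"],
    2: ["severe", "severe", "severe"],
}
# Hypothetical gold labels for the same tasks.
gold = {1: "moderate", 2: "moderate"}

consensus = {t: majority_label(ls) for t, ls in crowd.items()}
print(gold_accuracy(consensus, gold))
print({t: pairwise_agreement(ls) for t, ls in crowd.items()})
```

Task 2 shows why consistency alone does not establish accuracy: the annotators agree perfectly, yet their shared label differs from the gold label.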

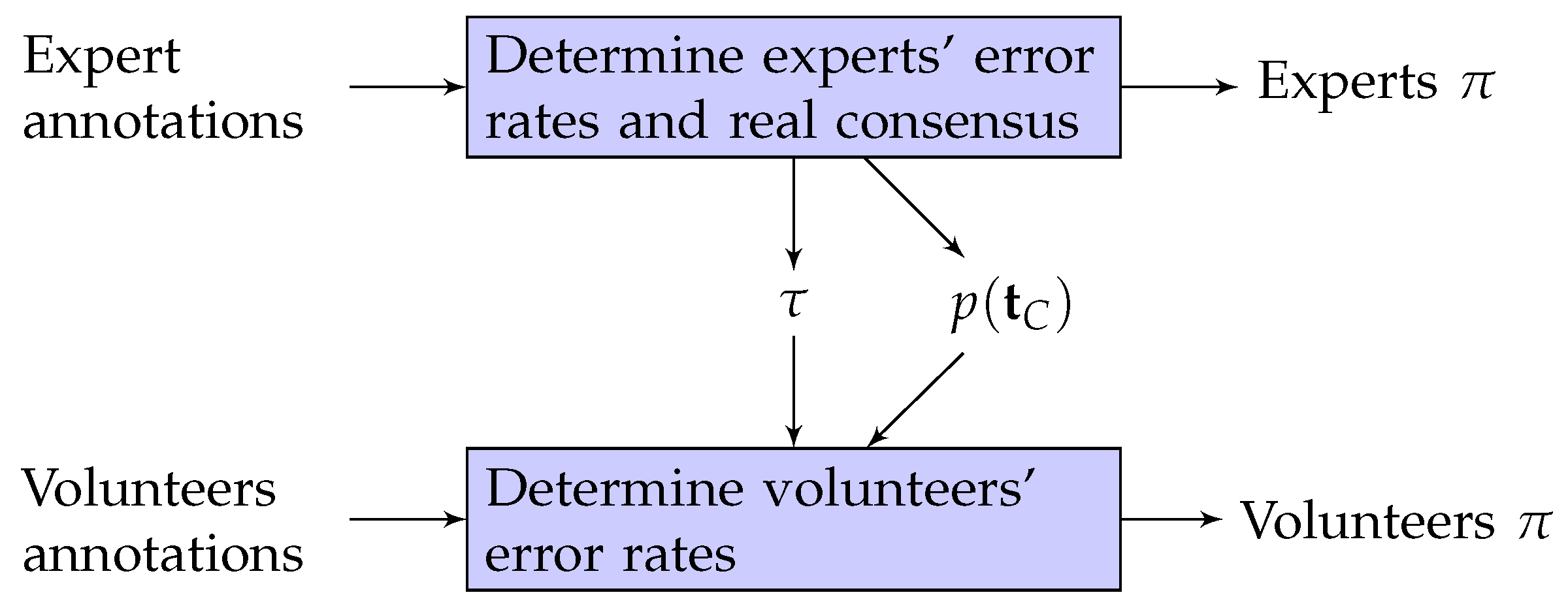

5.1. Data and Methodology

5.2. Evaluating Expert Infallibility

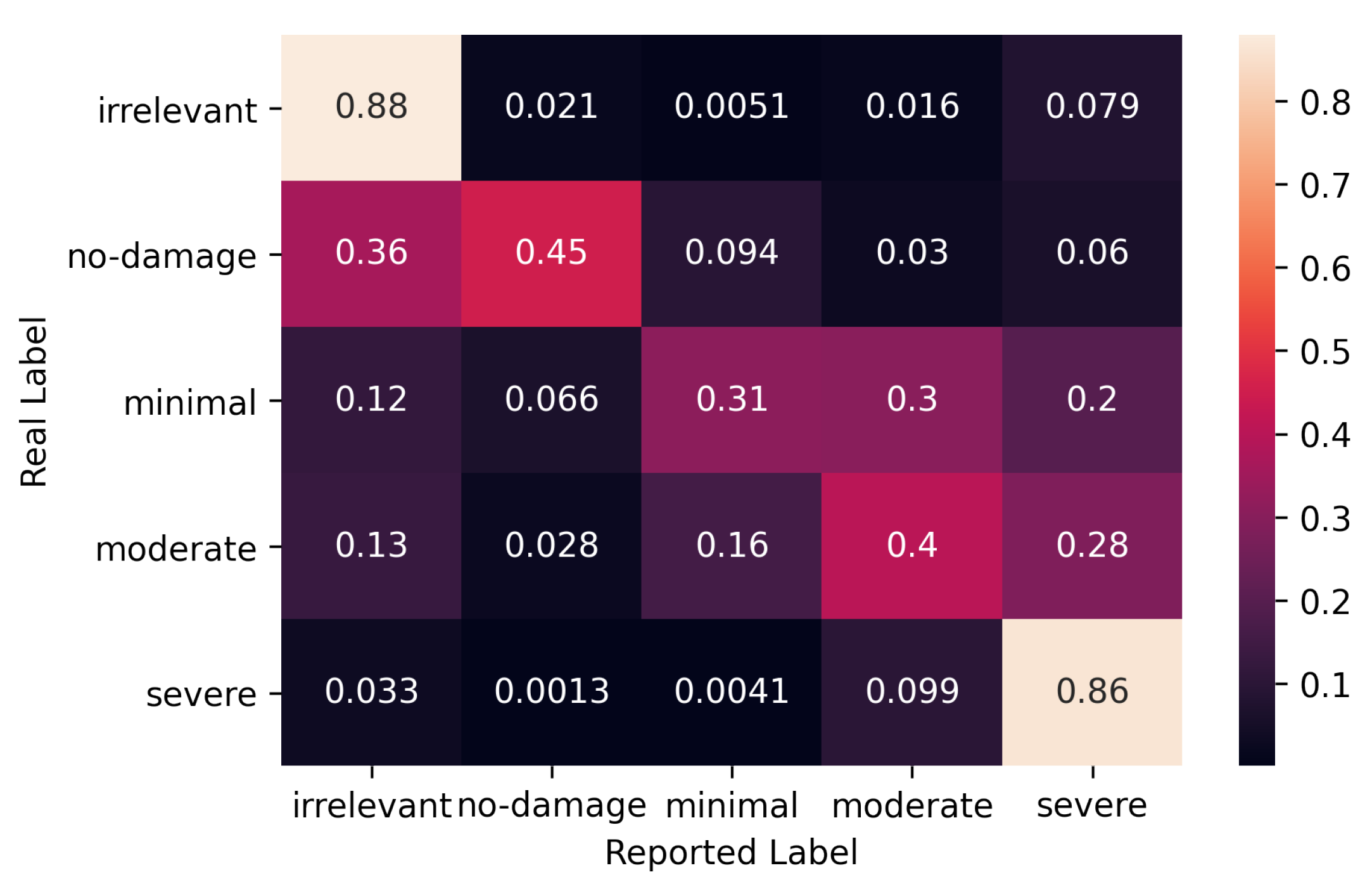

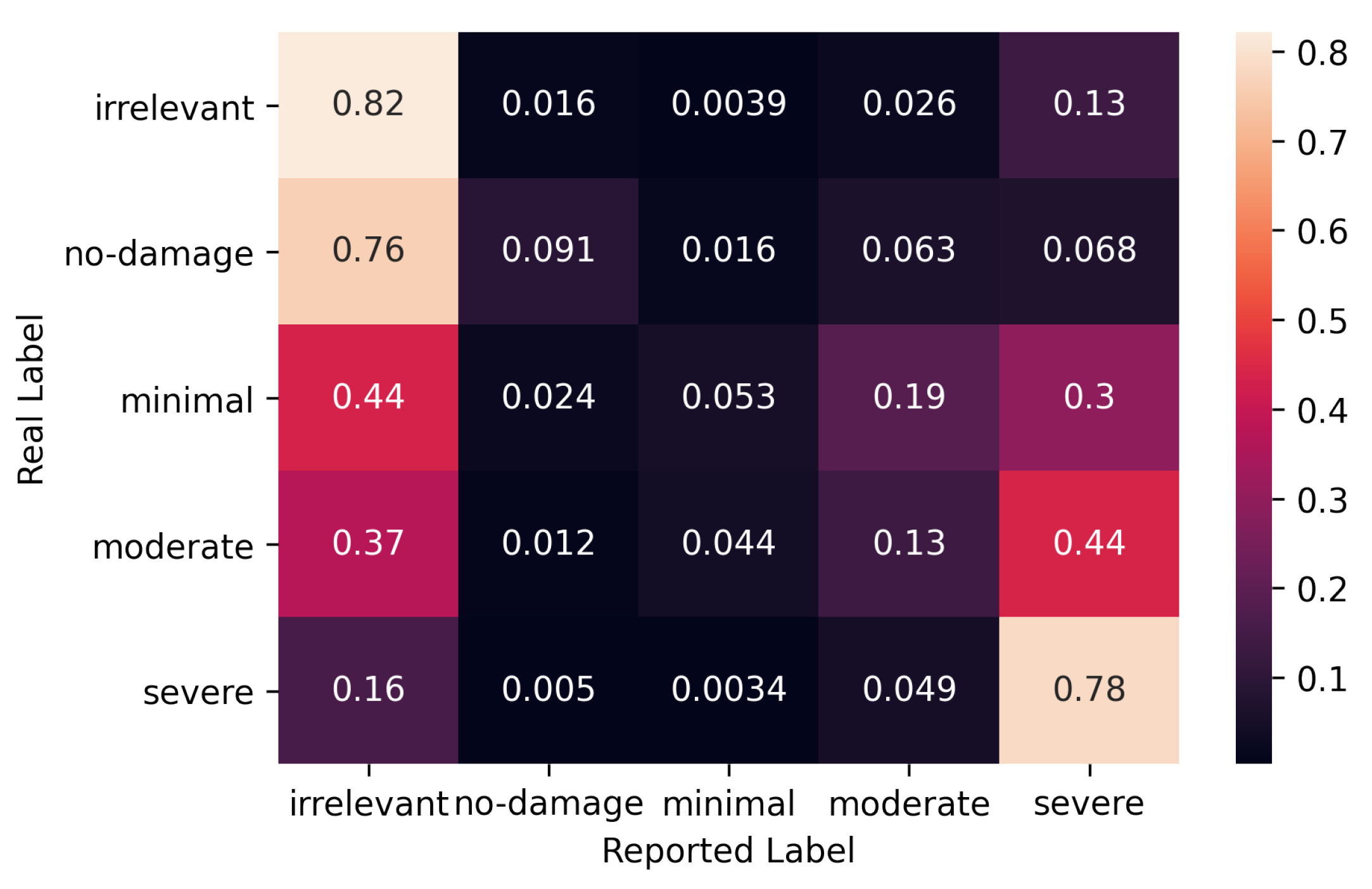

5.3. Error Rates for Experts

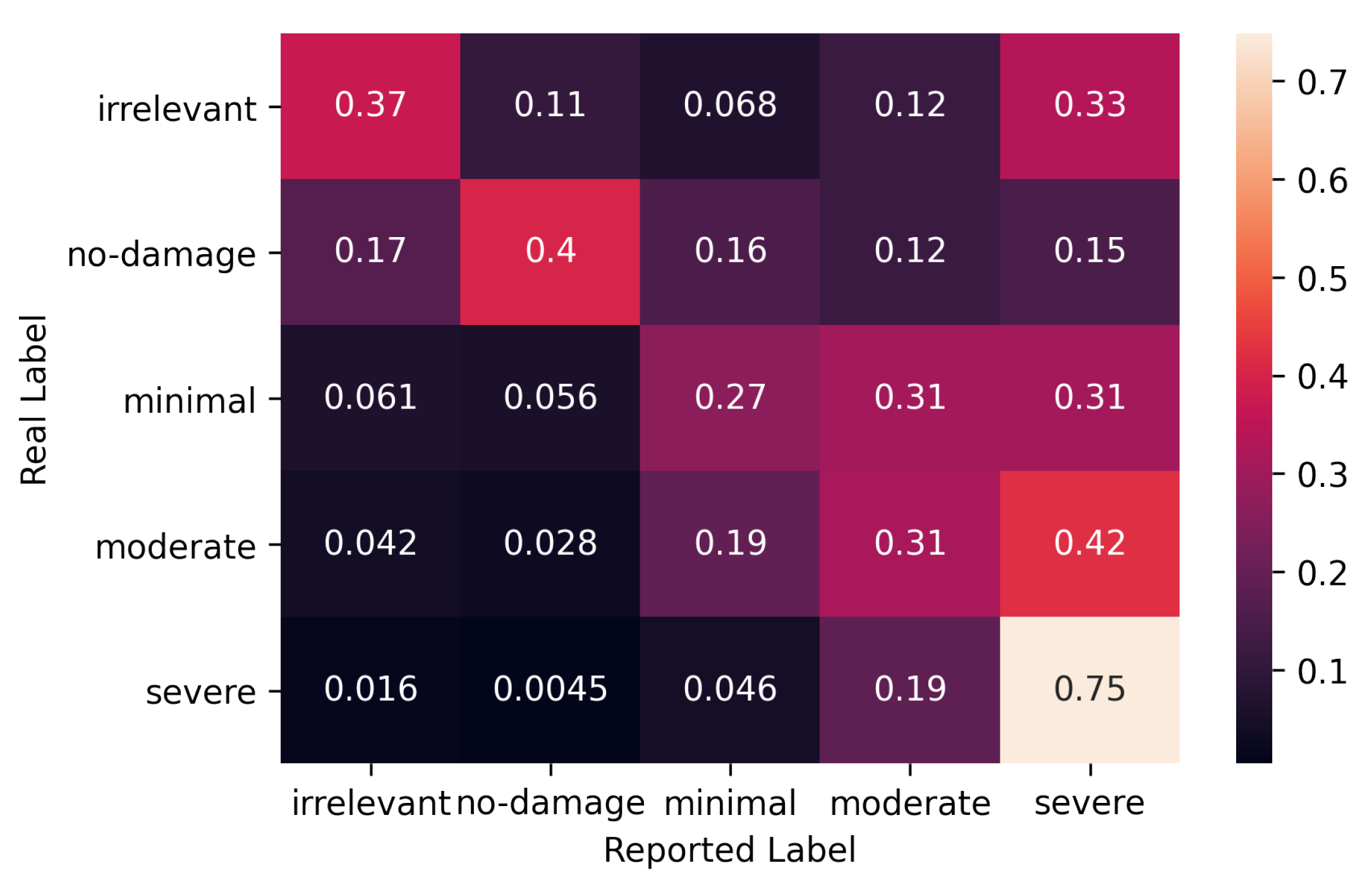

5.4. Evaluation of Volunteer Crowd

5.5. Evaluation of Paid Crowd

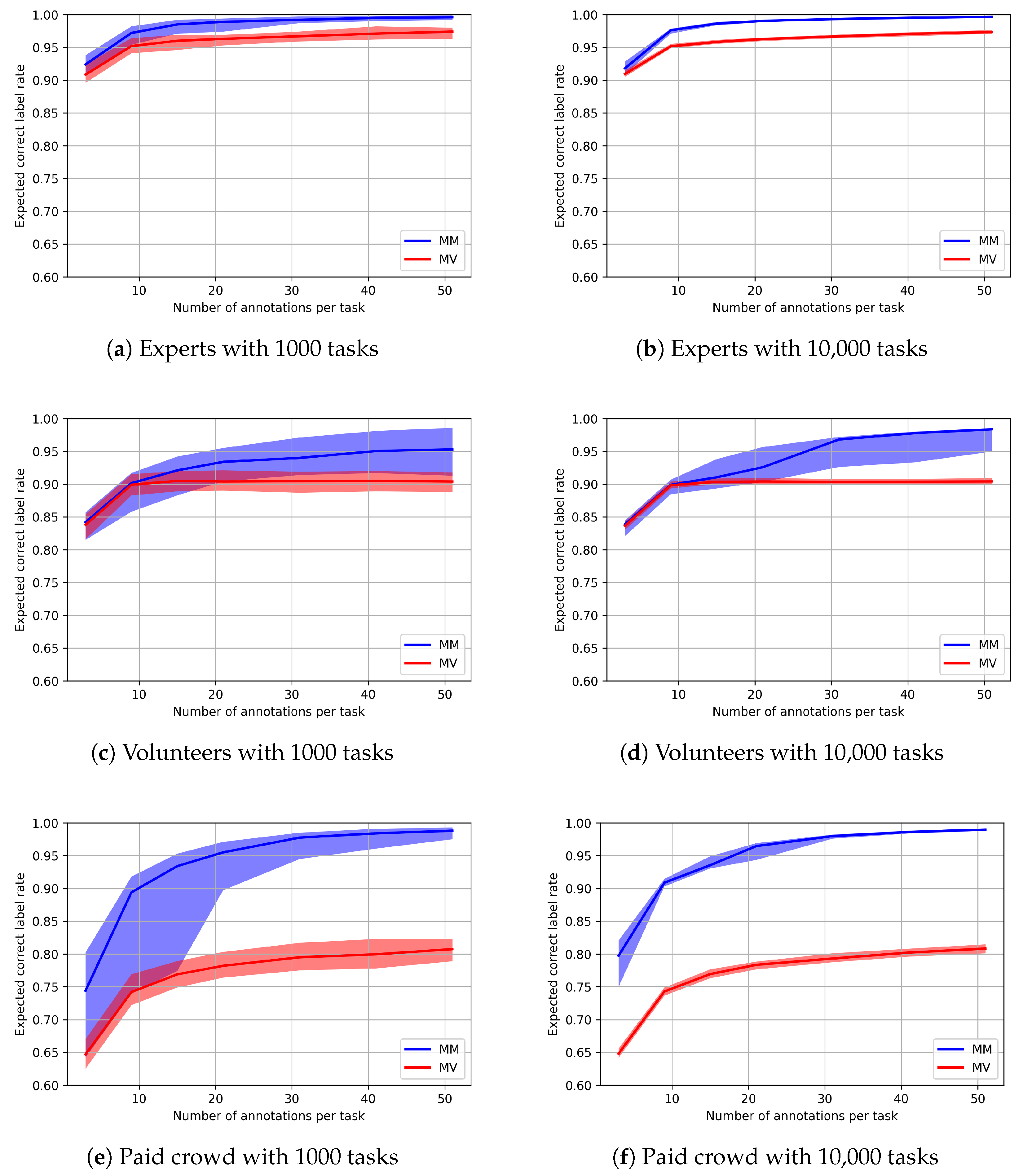

5.6. Prospective Comparison

6. Conclusions and Future Work

- can be applied in scenarios where the hypothesis of infallible experts does not hold;

- can be used to characterize and study the different behaviors of different communities (in our case, experts, volunteers and paid workers);

- can be used to perform prospective analysis, allowing the manager of a citizen science experiment to make informed decisions on aspects such as the number of annotations required for each task to reach a specific level of accuracy.
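The kind of question that prospective analysis answers can be illustrated with a deliberately simplified calculation. The sketch below is not the framework's procedure: it swaps in plain majority voting over binary labels, assumes independent workers who all share the same accuracy, and uses hypothetical function names and numbers.

```python
from math import comb


def majority_vote_accuracy(n_annotations, worker_accuracy):
    """Probability that the majority of n independent binary labels is correct."""
    if n_annotations % 2 == 0:
        raise ValueError("use an odd number of annotations to avoid ties")
    needed = n_annotations // 2 + 1  # smallest winning number of correct labels
    return sum(
        comb(n_annotations, k)
        * worker_accuracy ** k
        * (1 - worker_accuracy) ** (n_annotations - k)
        for k in range(needed, n_annotations + 1)
    )


def annotations_needed(target, worker_accuracy, max_n=99):
    """Smallest odd number of annotations per task reaching the target accuracy."""
    for n in range(1, max_n + 1, 2):
        if majority_vote_accuracy(n, worker_accuracy) >= target:
            return n
    return None  # target not reachable within max_n annotations


# Hypothetical setting: each worker is right 70% of the time.
for n in (1, 3, 5, 7, 9):
    print(n, round(majority_vote_accuracy(n, 0.7), 4))
print(annotations_needed(0.9, 0.7))
```

Under these assumptions, accuracy grows with redundancy but with diminishing returns, which is the trade-off a manager weighs when budgeting annotations per task.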

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Gura, T. Citizen science: Amateur experts. Nature 2013, 496, 259–261.

- Haklay, M. Citizen Science and Volunteered Geographic Information: Overview and Typology of Participation. In Crowdsourcing Geographic Knowledge: Volunteered Geographic Information (VGI) in Theory and Practice; Sui, D., Elwood, S., Goodchild, M., Eds.; Springer: Dordrecht, The Netherlands, 2013; pp. 105–122.

- González, D.L.; Alejandrodob; Therealmarv; Keegan, M.; Mendes, A.; Pollock, R.; Babu, N.; Fiordalisi, F.; Oliveira, N.A.; Andersson, K.; et al. Scifabric/Pybossa: v3.1.3. 2020. Available online: https://zenodo.org/record/3882334 (accessed on 16 August 2020).

- Lau, B.P.L.; Marakkalage, S.H.; Zhou, Y.; Hassan, N.U.; Yuen, C.; Zhang, M.; Tan, U.X. A survey of data fusion in smart city applications. Inf. Fusion 2019, 52, 357–374.

- Fehri, R.; Bogaert, P.; Khlifi, S.; Vanclooster, M. Data fusion of citizen-generated smartphone discharge measurements in Tunisia. J. Hydrol. 2020, 590, 125518.

- Kosmidis, E.; Syropoulou, P.; Tekes, S.; Schneider, P.; Spyromitros-Xioufis, E.; Riga, M.; Charitidis, P.; Moumtzidou, A.; Papadopoulos, S.; Vrochidis, S.; et al. hackAIR: Towards Raising Awareness about Air Quality in Europe by Developing a Collective Online Platform. ISPRS Int. J. Geo-Inf. 2018, 7, 187.

- Feldman, A.M. Majority Voting. In Welfare Economics and Social Choice Theory; Feldman, A.M., Ed.; Springer: Boston, MA, USA, 1980; pp. 161–177.

- Moss, S. Probabilistic Knowledge; Oxford University Press: Oxford, UK; New York, NY, USA, 2018.

- Shannon, C.E. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 379–423.

- Cover, T.M.; Thomas, J.A. Elements of Information Theory, 2nd ed.; John Wiley & Sons: Hoboken, NJ, USA, 2006.

- Collins, L.M.; Lanza, S.T. Latent Class and Latent Transition Analysis: With Applications in the Social, Behavioral, and Health Sciences; Wiley Series in Probability and Statistics; Wiley: New York, NY, USA, 2009.

- He, J.; Fan, X. Latent class analysis. Encycl. Personal. Individ. Differ. 2018, 1, 1–4.

- Dawid, A.P.; Skene, A.M. Maximum Likelihood Estimation of Observer Error-Rates Using the EM Algorithm. J. R. Stat. Soc. Ser. C (Appl. Stat.) 1979, 28, 20–28.

- Paun, S.; Carpenter, B.; Chamberlain, J.; Hovy, D.; Kruschwitz, U.; Poesio, M. Comparing Bayesian Models of Annotation. Trans. Assoc. Comput. Linguist. 2018, 6, 571–585.

- Passonneau, R.J.; Carpenter, B. The Benefits of a Model of Annotation. Trans. Assoc. Comput. Linguist. 2014, 2, 311–326.

- Inel, O.; Khamkham, K.; Cristea, T.; Dumitrache, A.; Rutjes, A.; van der Ploeg, J.; Romaszko, L.; Aroyo, L.; Sips, R.J. CrowdTruth: Machine-Human Computation Framework for Harnessing Disagreement in Gathering Annotated Data. In The Semantic Web—ISWC 2014; Mika, P., Tudorache, T., Bernstein, A., Welty, C., Knoblock, C., Vrandečić, D., Groth, P., Noy, N., Janowicz, K., Goble, C., Eds.; Lecture Notes in Computer Science; Springer: Cham, Switzerland, 2014; pp. 486–504.

- Dumitrache, A.; Inel, O.; Timmermans, B.; Ortiz, C.; Sips, R.J.; Aroyo, L.; Welty, C. Empirical methodology for crowdsourcing ground truth. Semant. Web 2020, 1–19.

- Aroyo, L.; Welty, C. Truth Is a Lie: Crowd Truth and the Seven Myths of Human Annotation. AI Mag. 2015, 36, 15–24.

- Dumitrache, A.; Inel, O.; Aroyo, L.; Timmermans, B.; Welty, C. CrowdTruth 2.0: Quality Metrics for Crowdsourcing with Disagreement. arXiv 2018, arXiv:1808.06080.

- Bu, Q.; Simperl, E.; Chapman, A.; Maddalena, E. Quality assessment in crowdsourced classification tasks. Int. J. Crowd Sci. 2019, 3, 222–248.

- Dempster, A.P.; Laird, N.M.; Rubin, D.B. Maximum Likelihood from Incomplete Data via the EM Algorithm. J. R. Stat. Soc. Ser. B (Methodol.) 1977, 39, 1–38.

- Karger, D.R.; Oh, S.; Shah, D. Iterative Learning for Reliable Crowdsourcing Systems. In Advances in Neural Information Processing Systems 24; Shawe-Taylor, J., Zemel, R.S., Bartlett, P.L., Pereira, F., Weinberger, K.Q., Eds.; Curran Associates, Inc.: New York, NY, USA, 2011; pp. 1953–1961.

- Nguyen, V.A.; Shi, P.; Ramakrishnan, J.; Weinsberg, U.; Lin, H.C.; Metz, S.; Chandra, N.; Jing, J.; Kalimeris, D. CLARA: Confidence of Labels and Raters. In Proceedings of the 26th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, KDD ’20, New York, NY, USA, 23–27 August 2020; Association for Computing Machinery: New York, NY, USA, 2020; pp. 2542–2552.

- Pipino, L.L.; Lee, Y.W.; Wang, R.Y. Data Quality Assessment. Commun. ACM 2002, 45, 211–218.

- Freitag, A.; Meyer, R.; Whiteman, L. Strategies Employed by Citizen Science Programs to Increase the Credibility of Their Data. Citiz. Sci. Theory Pract. 2016, 1, 2.

- Wiggins, A.; Newman, G.; Stevenson, R.D.; Crowston, K. Mechanisms for Data Quality and Validation in Citizen Science. In Proceedings of the IEEE Seventh International Conference on e-Science Workshops, Stockholm, Sweden, 5–8 December 2011; pp. 14–19.

- Ho, C.J.; Vaughan, J. Online Task Assignment in Crowdsourcing Markets. In Proceedings of the AAAI Conference on Artificial Intelligence, Toronto, ON, Canada, 22–26 July 2012; Volume 26.

- Imran, M.; Castillo, C.; Lucas, J.; Meier, P.; Vieweg, S. AIDR: Artificial intelligence for disaster response. In Proceedings of the 23rd International Conference on World Wide Web, Seoul, Korea, 7–11 April 2014; pp. 159–162.

- van Smeden, M.; Naaktgeboren, C.A.; Reitsma, J.B.; Moons, K.G.M.; de Groot, J.A.H. Latent Class Models in Diagnostic Studies When There is No Reference Standard—A Systematic Review. Am. J. Epidemiol. 2014, 179, 423–431.

- Imran, M.; Alam, F.; Qazi, U.; Peterson, S.; Ofli, F. Rapid Damage Assessment Using Social Media Images by Combining Human and Machine Intelligence. arXiv 2020, arXiv:2004.06675.

- Kirilenko, A.P.; Desell, T.; Kim, H.; Stepchenkova, S. Crowdsourcing analysis of Twitter data on climate change: Paid workers vs. volunteers. Sustainability 2017, 9, 2019.

- Ravi Shankar, A.; Fernandez-Marquez, J.L.; Pernici, B.; Scalia, G.; Mondardini, M.R.; Di Marzo Serugendo, G. Crowd4Ems: A crowdsourcing platform for gathering and geolocating social media content in disaster response. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2019, 42, 331–340.

- Gwet, K.L. Handbook of Inter-Rater Reliability: The Definitive Guide to Measuring the Extent of Agreement Among Raters, 4th ed.; Advanced Analytics, LLC: Gaithersburg, MD, USA, 2014.

- Landis, J.R.; Koch, G.G. The Measurement of Observer Agreement for Categorical Data. Biometrics 1977, 33, 159–174.

- Sheng, V.S.; Provost, F.; Ipeirotis, P.G. Get another label? Improving data quality and data mining using multiple, noisy labelers. In Proceedings of the 14th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining—KDD 08, Las Vegas, NV, USA, 24–27 August 2008; ACM Press: New York, NY, USA, 2008; p. 614.

- Carpenter, B.; Gelman, A.; Hoffman, M.D.; Lee, D.; Goodrich, B.; Betancourt, M.; Brubaker, M.; Guo, J.; Li, P.; Riddell, A. Stan: A Probabilistic Programming Language. J. Stat. Softw. 2017, 76, 1–32.

- Rodríguez, C.E.; Walker, S.G. Label Switching in Bayesian Mixture Models: Deterministic Relabeling Strategies. J. Comput. Graph. Stat. 2014, 23, 25–45.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Cerquides, J.; Mülâyim, M.O.; Hernández-González, J.; Ravi Shankar, A.; Fernandez-Marquez, J.L. A Conceptual Probabilistic Framework for Annotation Aggregation of Citizen Science Data. Mathematics 2021, 9, 875. https://doi.org/10.3390/math9080875
