A Functional Interpolation Approach to Compute Periodic Orbits in the Circular-Restricted Three-Body Problem

Abstract

1. Introduction

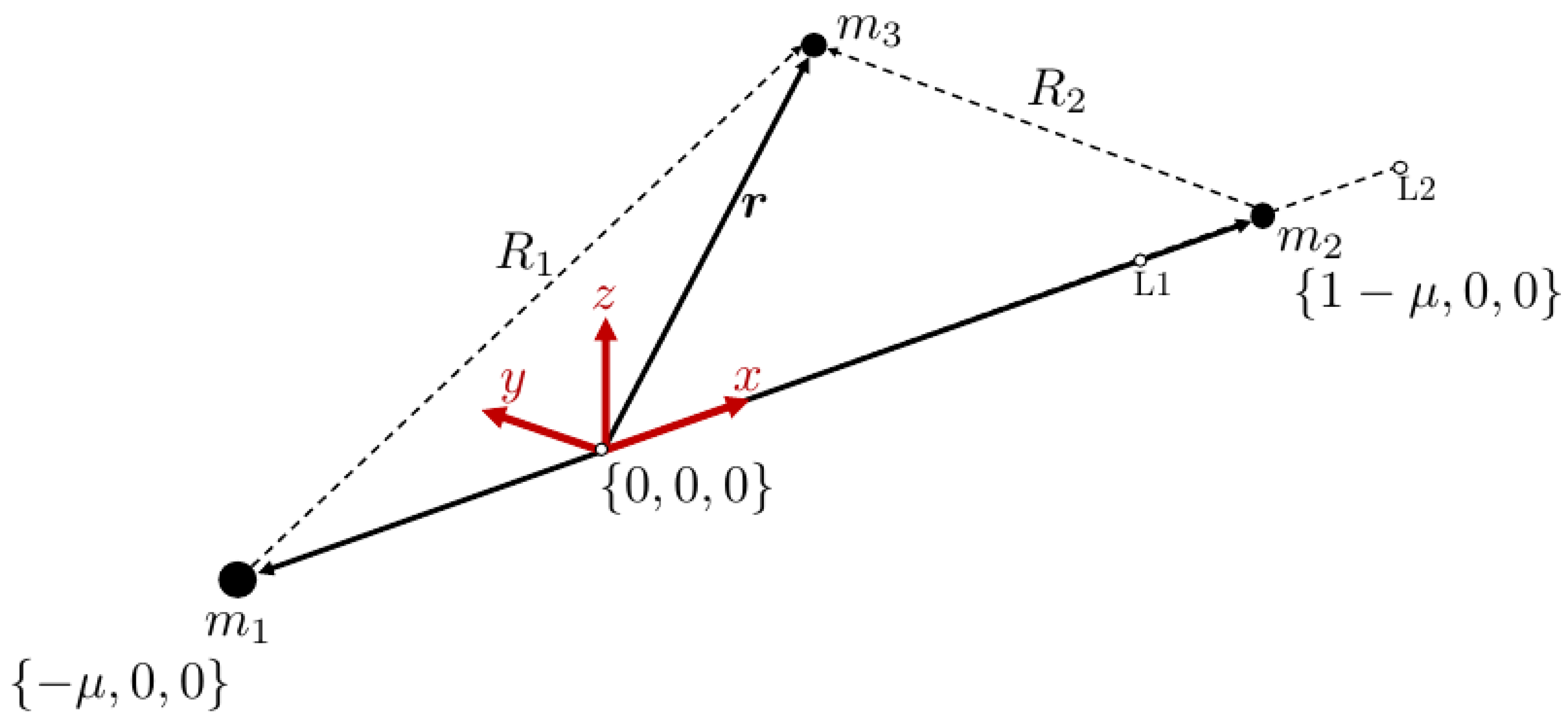

2. Circular-Restricted Three-Body Problem

3. Solving the Problem Using the Theory of Functional Connections Framework

1. Choose k linearly independent support functions.

2. Write each switching function as a linear combination of the support functions with k unknown coefficients.

3. Based on the switching function definition, write a system of equations to solve for the unknown coefficients.
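The three steps above can be sketched numerically for a deliberately simple case. The example below assumes two point constraints, at hypothetical locations t0 and tf, and the support functions 1 and t; these choices are illustrative only and are not necessarily the constraints or support functions used in this paper. The switching function definition (each switching function equals one at its own constraint and zero at the others) gives the linear system S α = I for the unknown coefficients.

```python
import numpy as np

# Step 1: choose k = 2 linearly independent support functions.
# Assumption for illustration: s_1(t) = 1 and s_2(t) = t.
def support(t):
    t = np.atleast_1d(np.asarray(t, dtype=float))
    return np.stack([np.ones_like(t), t], axis=-1)  # shape (len(t), 2)

# Hypothetical constraint locations (not taken from the paper).
t0, tf = 0.0, 2.0

# Step 3: S[j, i] is support function i evaluated at constraint j.
# Requiring phi_j = 1 at constraint j and 0 elsewhere gives S @ alpha = I.
S = support([t0, tf])
alpha = np.linalg.solve(S, np.eye(2))

# Step 2: each switching function is a linear combination of the
# support functions, phi_j(t) = sum_i s_i(t) * alpha[i, j].
def switching(t):
    return support(t) @ alpha  # column j holds phi_j(t)

# Each switching function is 1 at its own constraint and 0 at the other.
print(switching([t0, tf]))
```

For this choice the switching functions reduce to the linear interpolants (tf - t)/(tf - t0) and (t - t0)/(tf - t0); other constraint types change only how the rows of S are built.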

3.1. Derivation of Constrained Expression

3.2. Definition of the Free Function

3.3. Discretization of the Domain

3.4. Solution of the Resulting Algebraic Equation

4. Numerical Test

4.1. Initialization

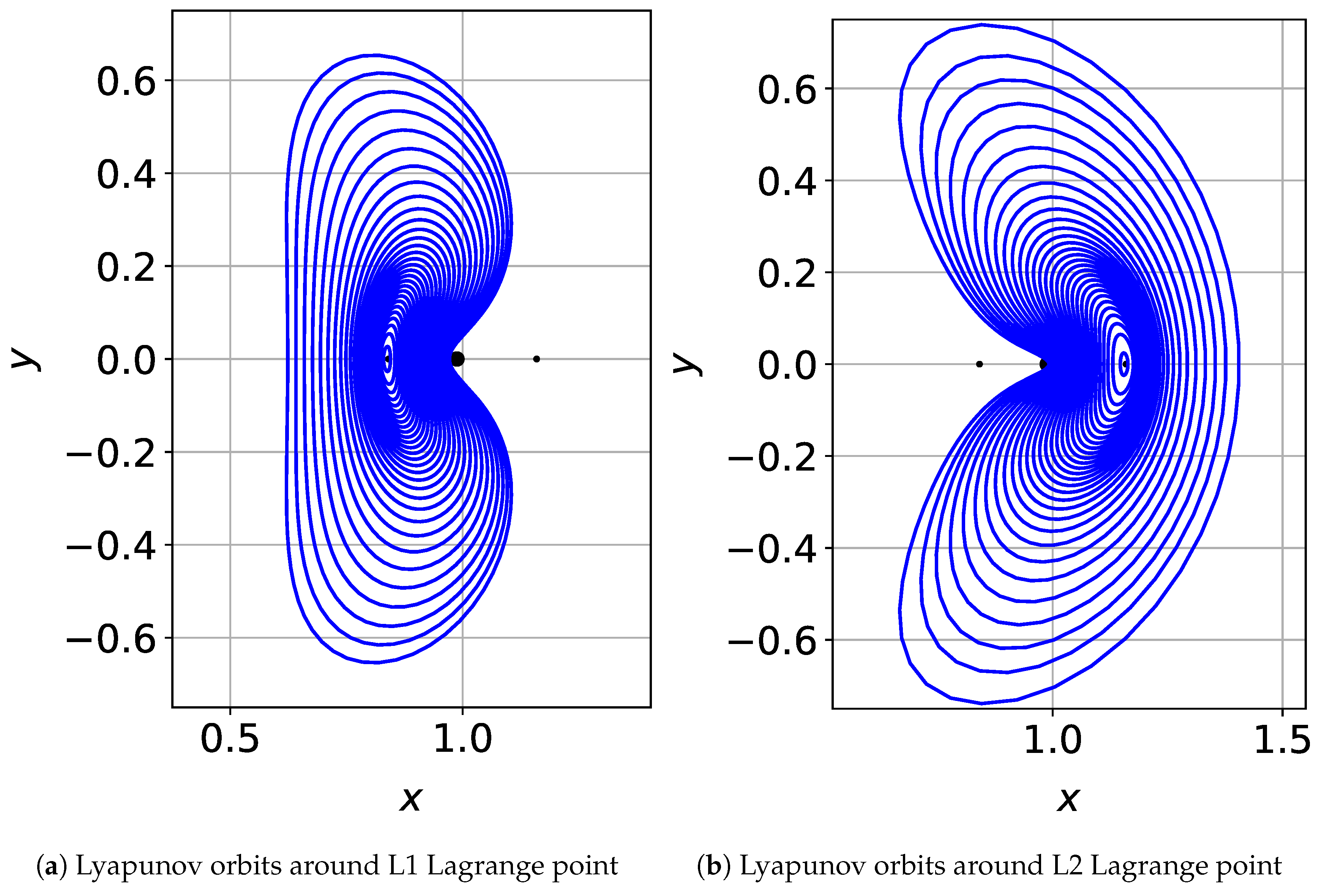

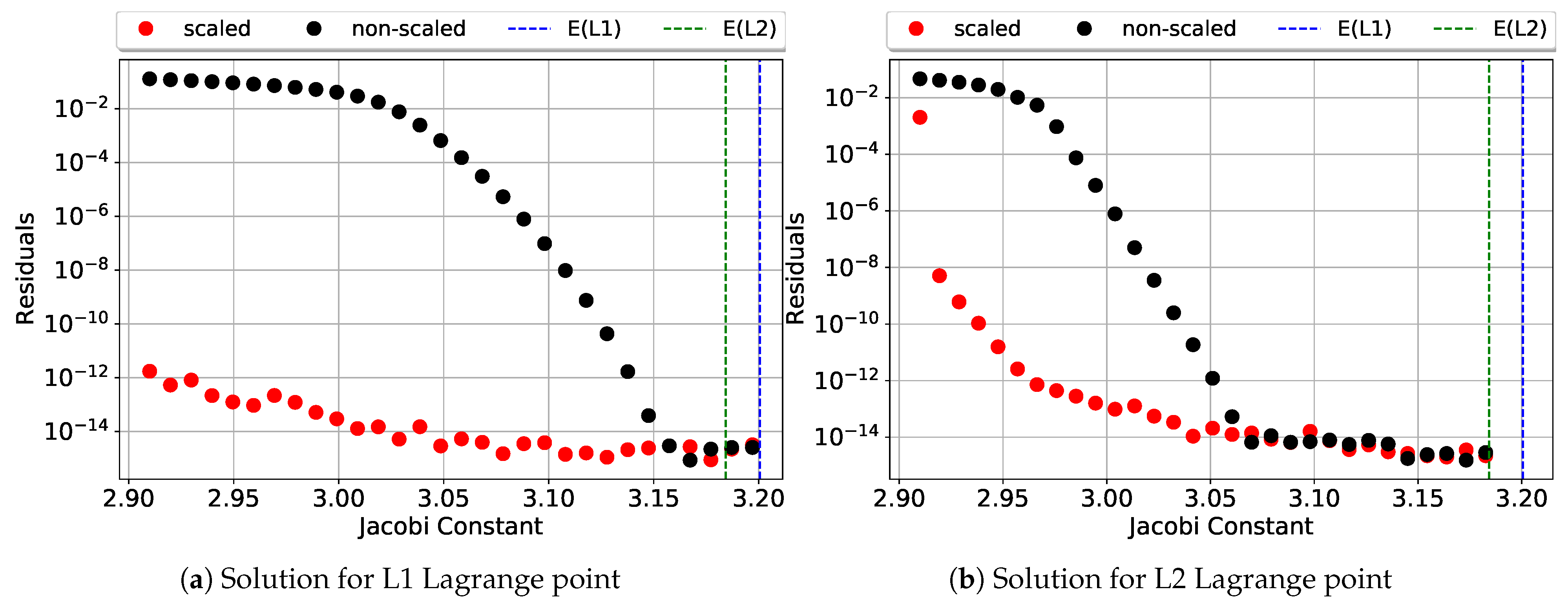

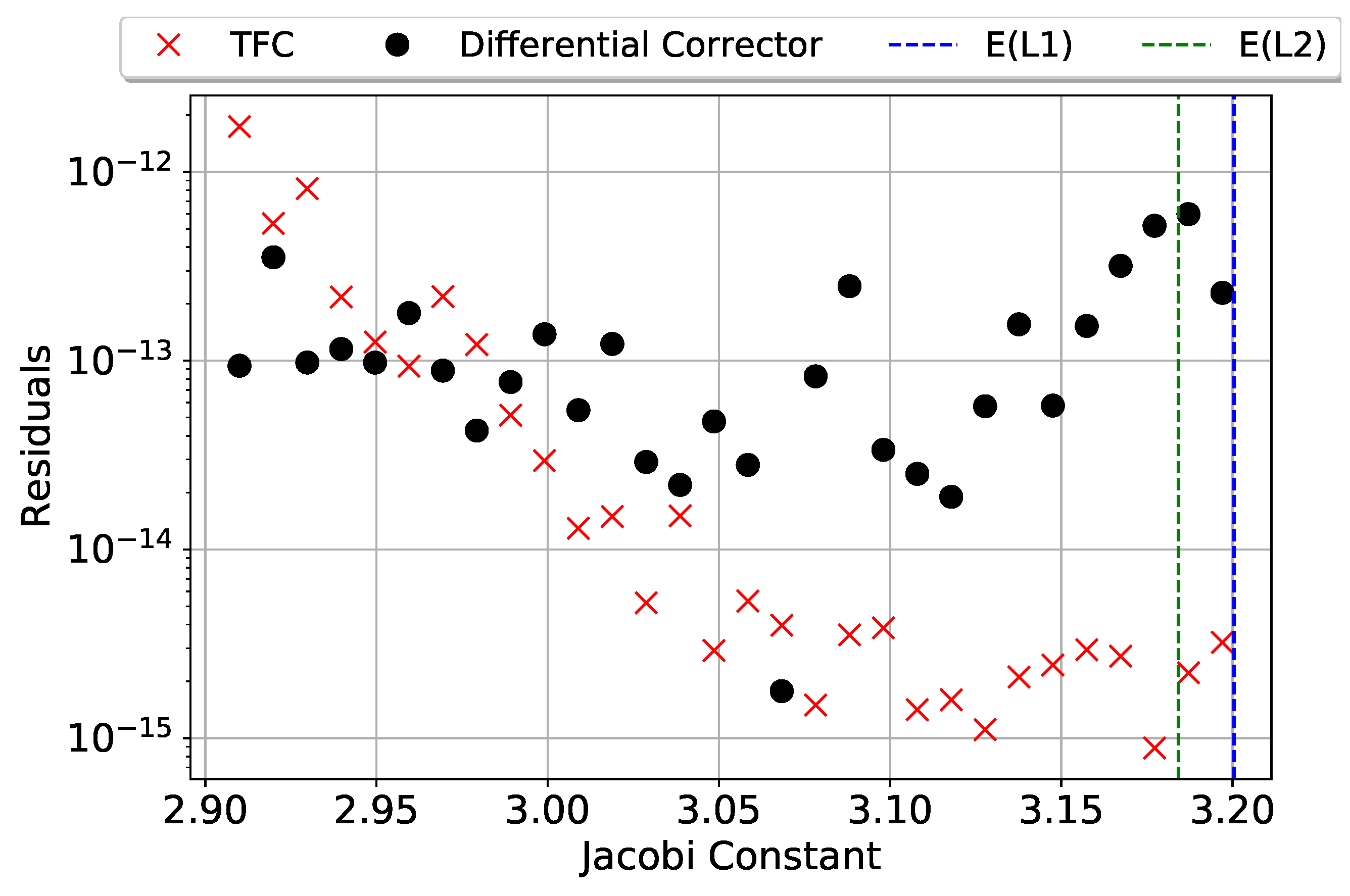

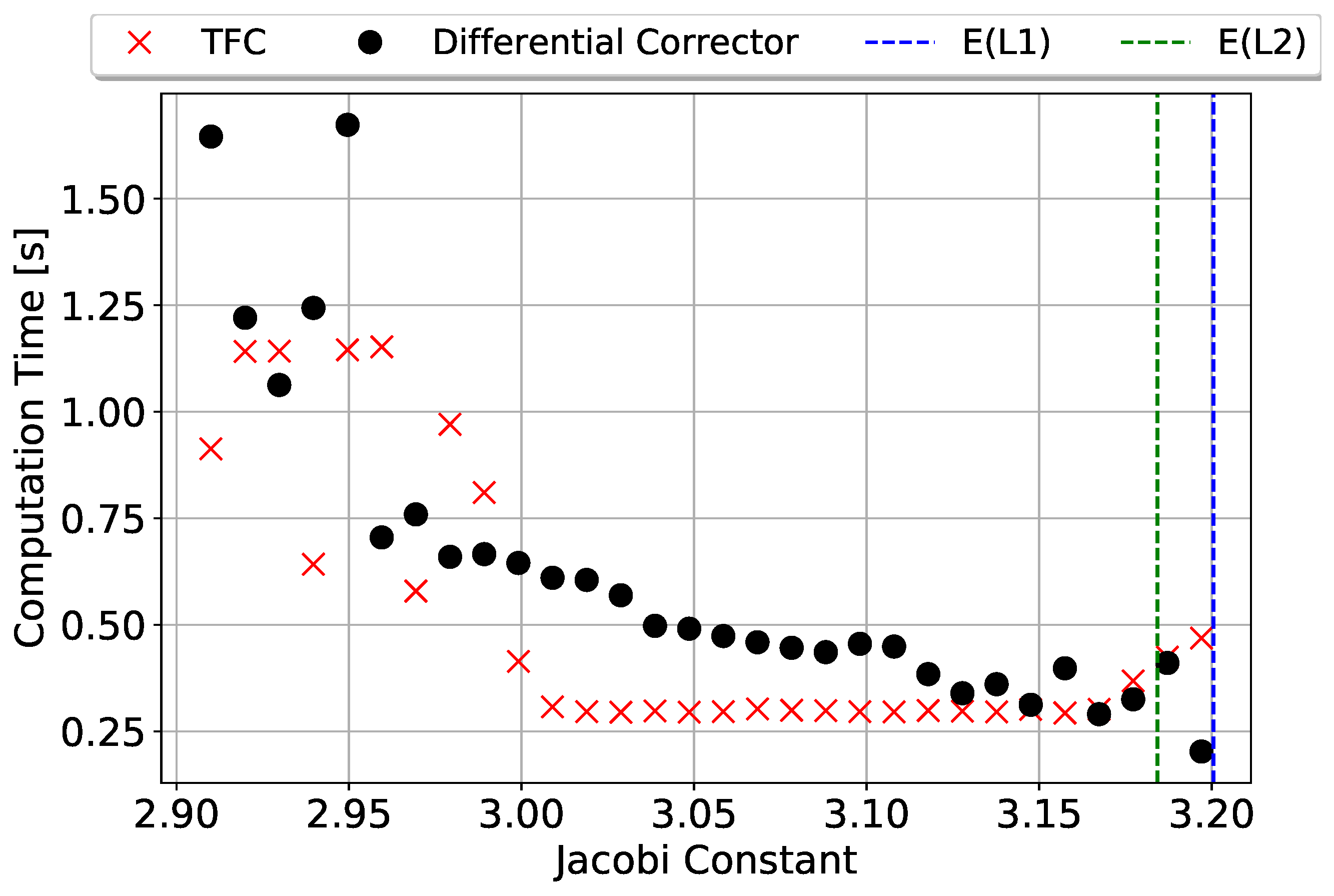

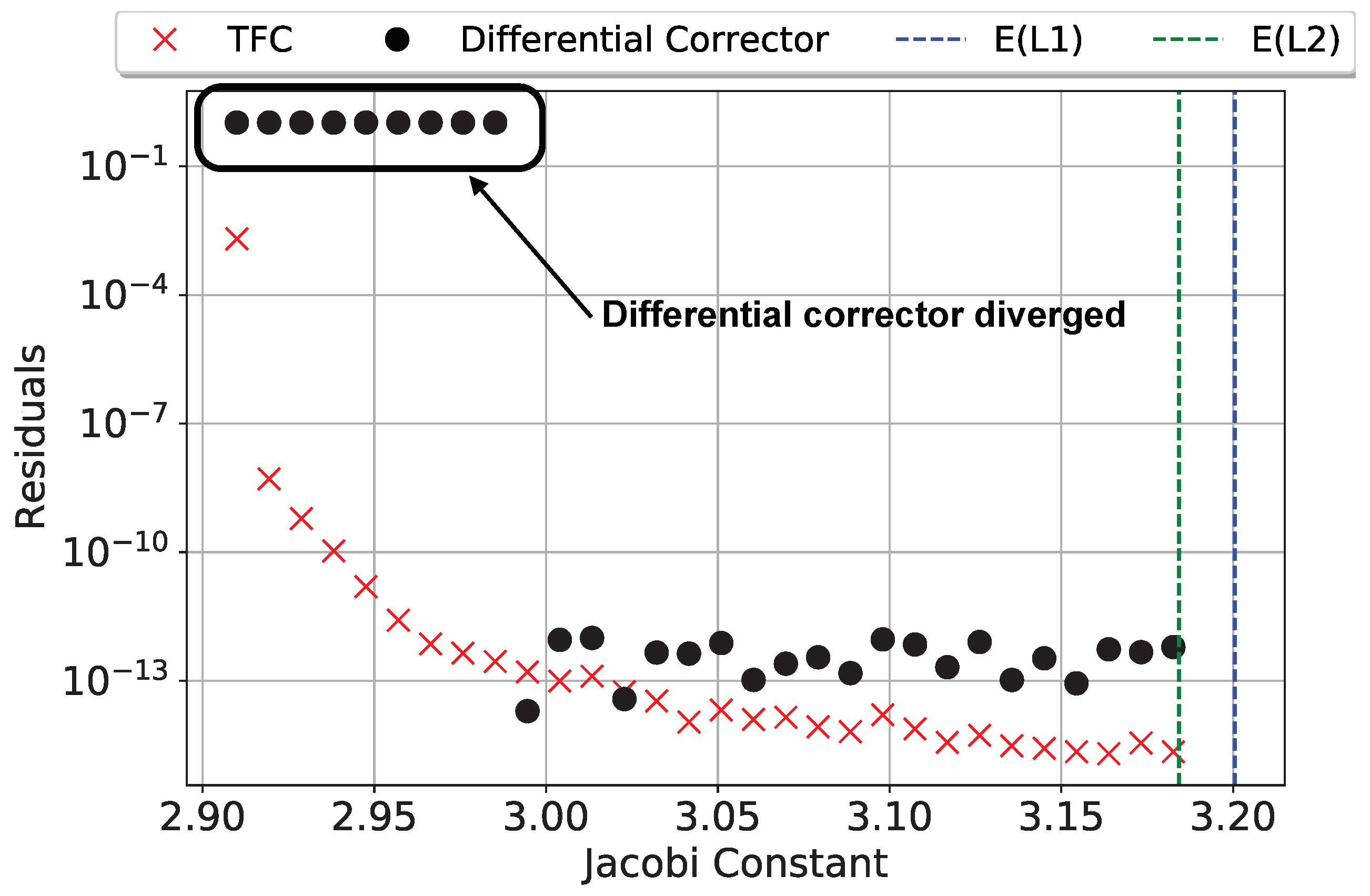

4.2. Lyapunov Orbits

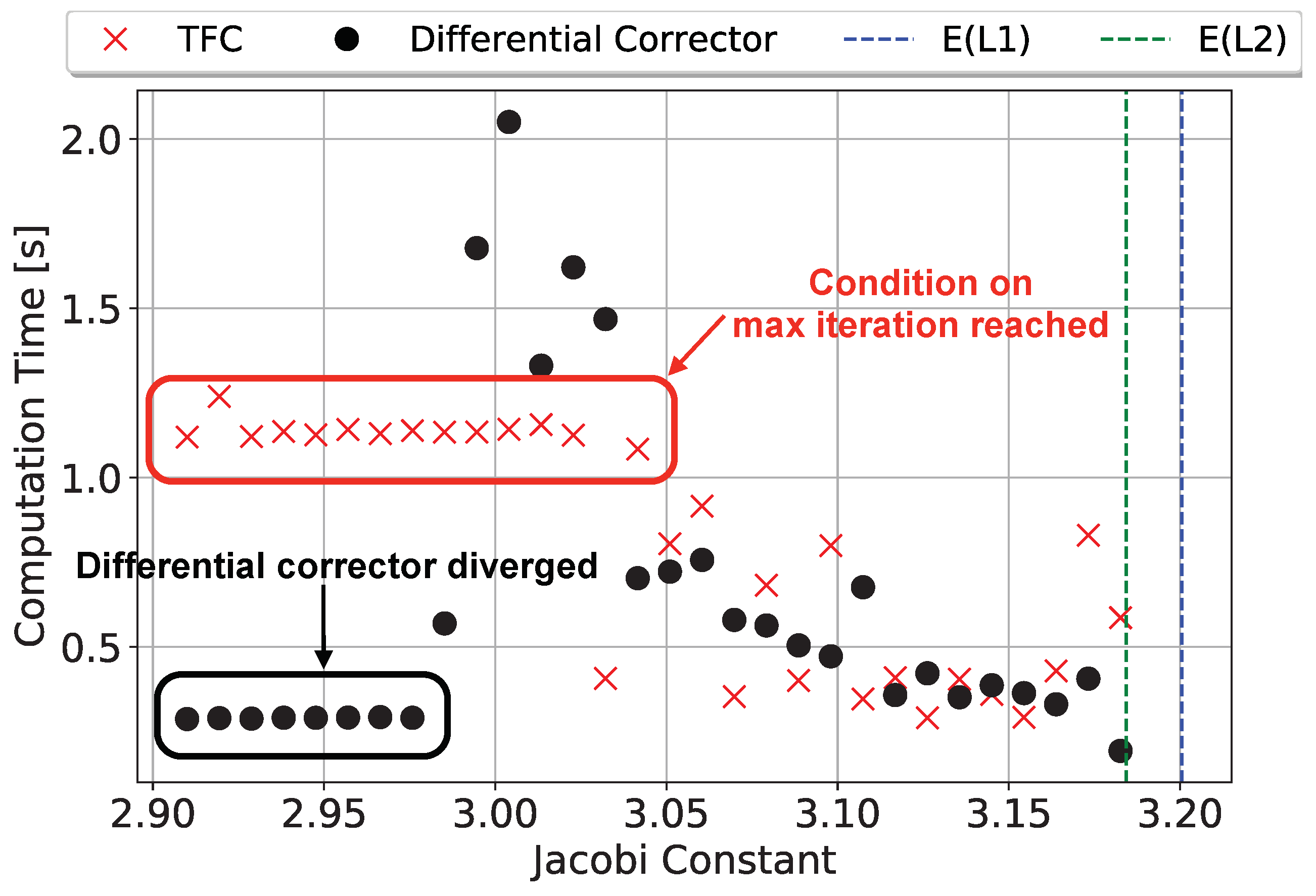

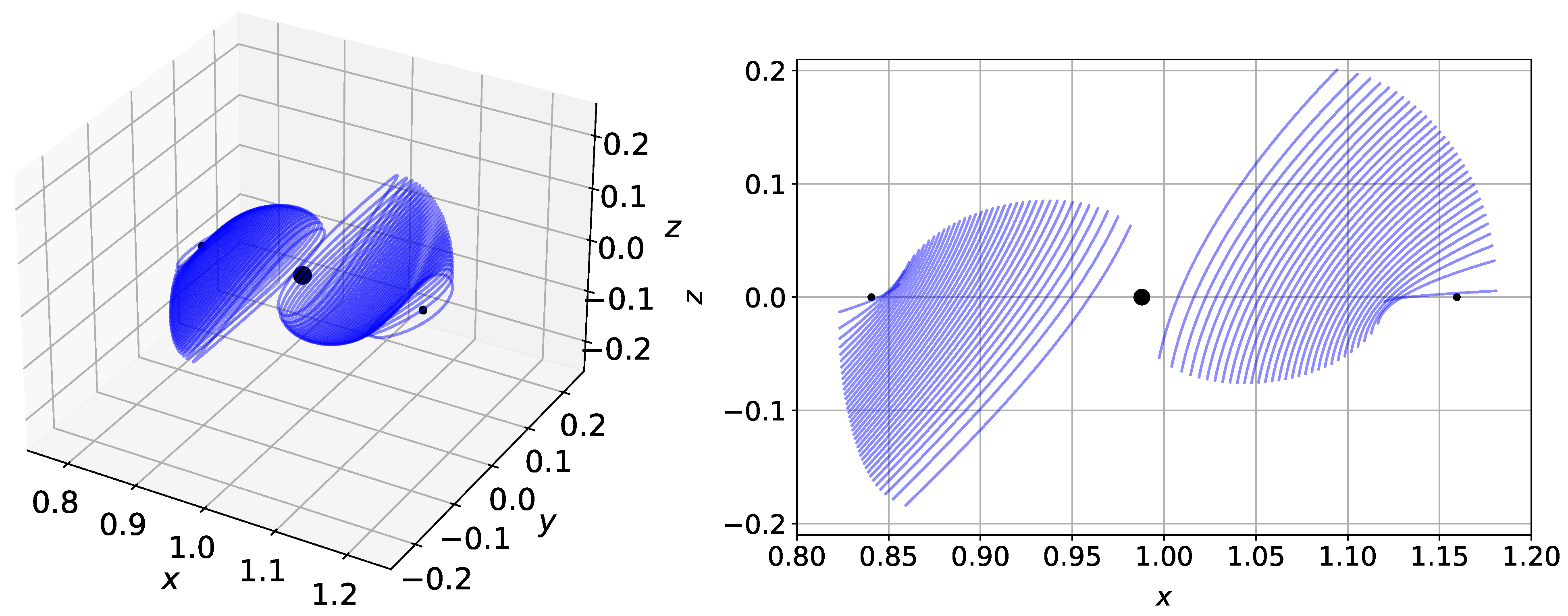

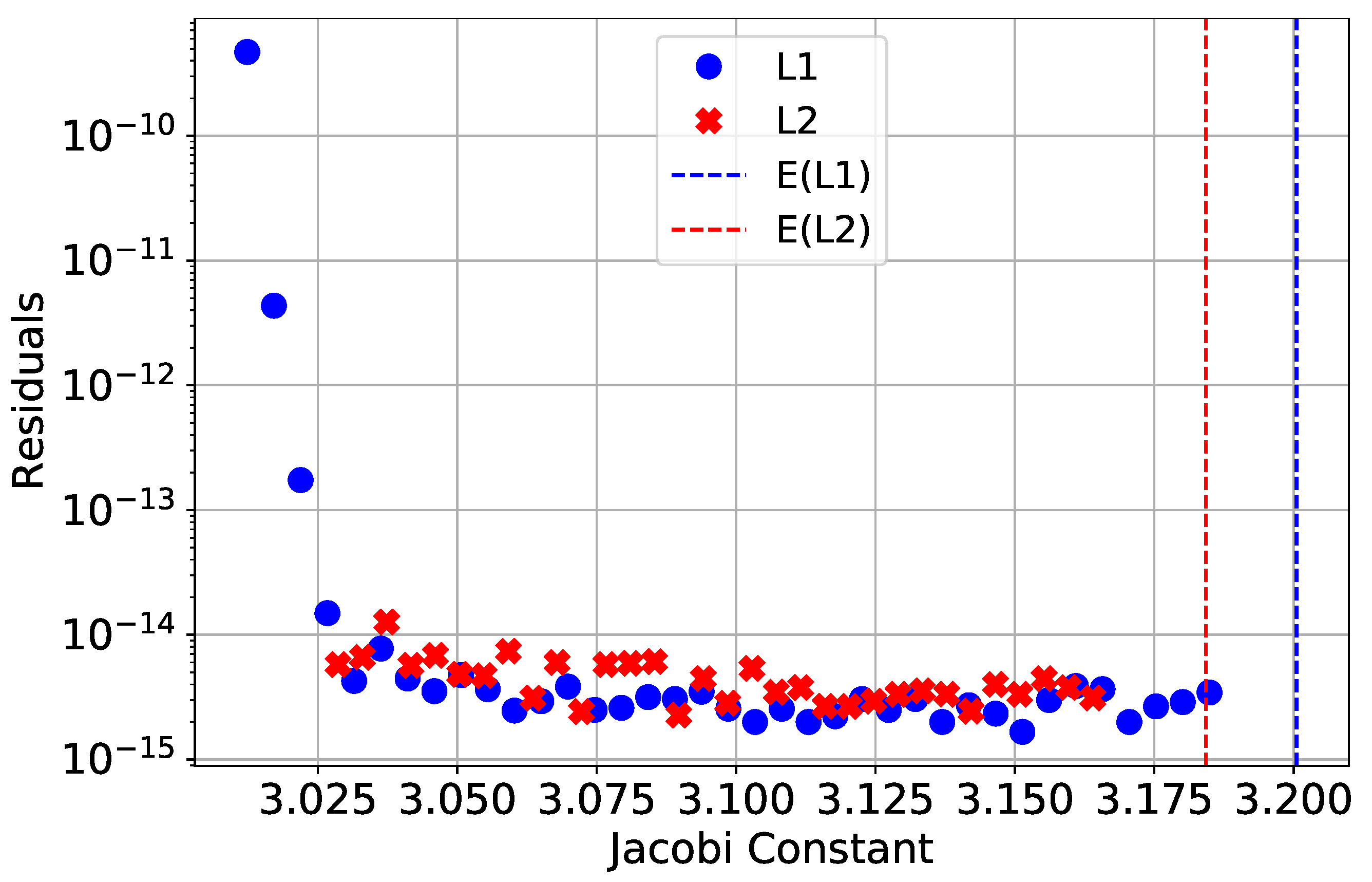

4.3. Halo Orbits

5. Conclusions

- Definition of the free function. In this study, Chebyshev orthogonal polynomials were used to define the free function; however, a large number of basis terms were needed to accurately describe the trajectories for both Lyapunov and Halo orbits. One possible remedy is a hybrid basis whose terms capture the periodic and non-periodic portions of the trajectory separately.

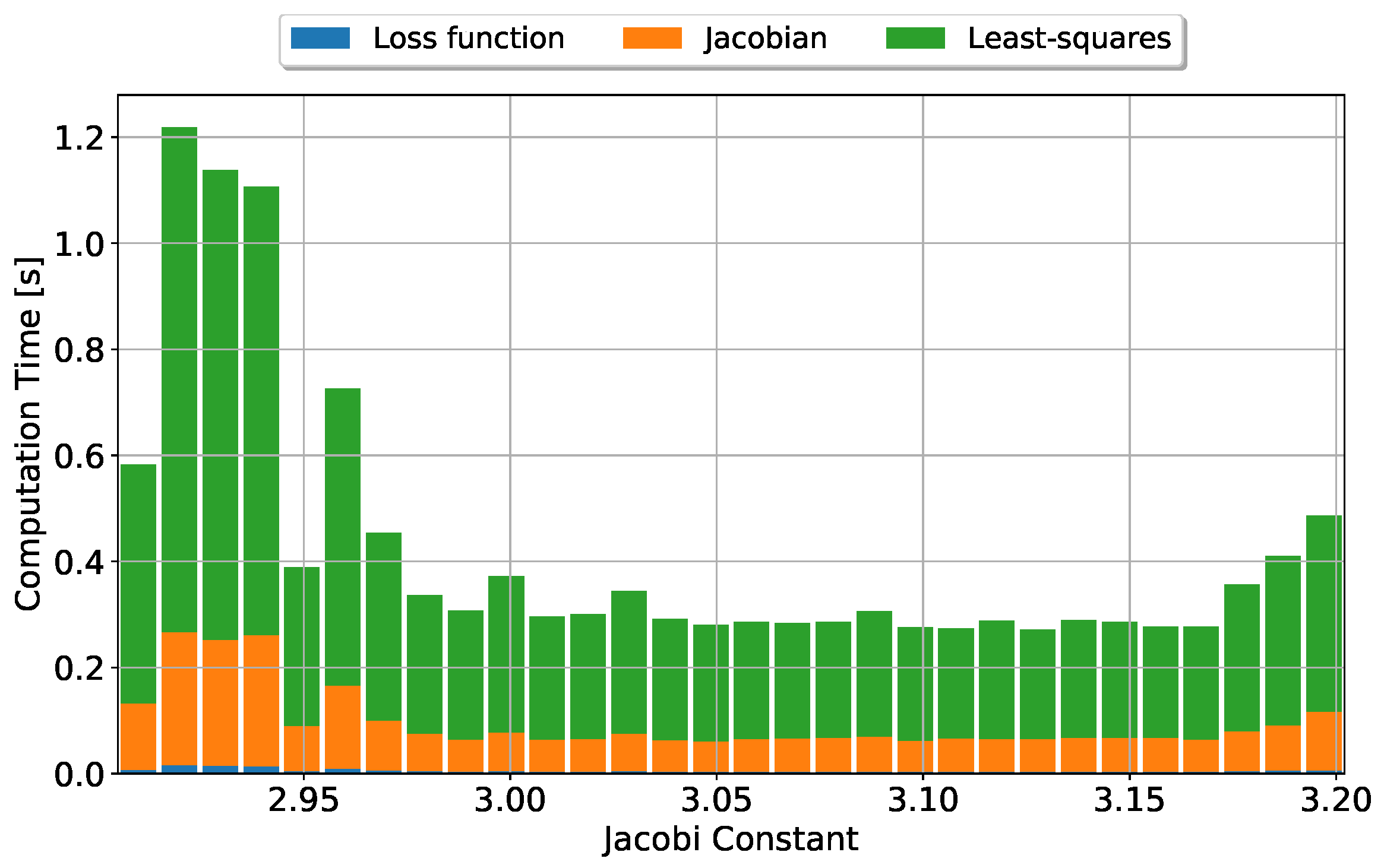

- Faster least-squares implementation. As seen in Figure 6, the major bottleneck in computation time is the least-squares step: its reported average of 329.8 ms is more than three times the combined averages for evaluating the loss vector (5.69 ms) and the Jacobian (91.1 ms). The authors acknowledge that a more efficient algorithm than NumPy's SVD-based numpy.linalg.pinv() could be implemented.
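As a minimal sketch of the last point, the snippet below solves one overdetermined linear system three ways: with the explicit pseudoinverse, with numpy.linalg.lstsq(), and with a reduced QR factorization. The matrix J, the vector b, and their sizes are random stand-ins chosen for illustration, not the paper's Jacobian or loss vector, and no timing claim is made here; whether either alternative is faster for the actual problem would need to be measured.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: more equations than unknowns, as in a
# collocation-type least-squares problem.
n_rows, n_cols = 400, 120
J = rng.standard_normal((n_rows, n_cols))  # stand-in for a Jacobian
b = rng.standard_normal(n_rows)            # stand-in for a loss vector

# Baseline: form the pseudoinverse explicitly, then multiply.
x_pinv = np.linalg.pinv(J) @ b

# Alternative 1: solve the least-squares problem directly,
# without forming the pseudoinverse.
x_lstsq, *_ = np.linalg.lstsq(J, b, rcond=None)

# Alternative 2: reduced QR factorization, J = Q R, then solve R x = Q^T b.
# This assumes J has full column rank.
Q, R = np.linalg.qr(J)
x_qr = np.linalg.solve(R, Q.T @ b)

print(np.max(np.abs(x_pinv - x_lstsq)), np.max(np.abs(x_pinv - x_qr)))
```

The QR route gives up the rank-revealing robustness of the SVD, so it is only a reasonable substitute when the system is known to be well conditioned.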

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CR3BP | circular-restricted three-body problem |
| ELM | Extreme Learning Machine |
| ISEE3 | International Sun-Earth Explorer 3 |
| JPL | Jet Propulsion Laboratory |
| NASA | National Aeronautics and Space Administration |
| NN | neural networks |
| NSTRF | NASA Space Technology Research Fellowship |
| ODE | ordinary differential equation |
| PDE | partial differential equation |
| SVD | singular-value decomposition |
| TFC | Theory of Functional Connections |


| Variable | Value |
|---|---|
| Earth mass (kg) | |
| Moon mass (kg) | |

| Parameter | Value |
|---|---|
| N [number of points] | 140 [Lyapunov] or 200 [Halo] |
| m [basis terms] | 130 [Lyapunov] or 190 [Halo] |
| [tolerance] | |
| Maximum iterations | 20 |
| Least-squares method | numpy.linalg.pinv() |
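To make the roles of N and m concrete, the following sketch builds a Chebyshev basis matrix with the Lyapunov values from the table above. The use of Chebyshev-Gauss-Lobatto nodes and the variable names x, H, and xi are assumptions made for illustration; the sketch also keeps every polynomial from degree 0 to m - 1, which may differ from the set of terms actually retained in the method.

```python
import numpy as np
from numpy.polynomial import chebyshev as cheb

# Values from the parameter table (Lyapunov case).
N, m = 140, 130

# Assumed discretization: Chebyshev-Gauss-Lobatto nodes on [-1, 1],
# ordered from -1 to 1.
i = np.arange(N)
x = -np.cos(np.pi * i / (N - 1))

# Basis matrix: column j holds the Chebyshev polynomial T_j at the nodes.
H = cheb.chebvander(x, m - 1)  # shape (N, m)

# A free function is a linear combination of the basis terms, g = H @ xi.
# Hypothetical coefficients that select T_2(x) = 2 x^2 - 1 only.
xi = np.zeros(m)
xi[2] = 1.0
g = H @ xi

print(H.shape)
```

With N greater than m, the resulting linear system for the coefficients is overdetermined, which is why a least-squares solve appears in the table.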

| Computation step | Average (ms) | Standard Deviation (ms) |
|---|---|---|
| Loss vector | 5.69 | 3.46 |
| Jacobian | 91.1 | 55.8 |
| Least-squares | 329.8 | 203.8 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Johnston, H.; Lo, M.W.; Mortari, D. A Functional Interpolation Approach to Compute Periodic Orbits in the Circular-Restricted Three-Body Problem. Mathematics 2021, 9, 1210. https://doi.org/10.3390/math9111210
