Robust Aggregation in Over-the-Air Computation with Federated Learning: A Semantic Anti-Interference Approach

Abstract

1. Introduction

- The proposed SAIA uses a semantic autoencoder to compress model parameters into low-dimensional representations, which reduces noise and communication overhead while preserving accuracy. It also adopts server-side median aggregation to filter outliers caused by noise and data heterogeneity, improving robustness and convergence relative to mean-based methods and supporting scalable training in volatile environments.

- To handle data heterogeneity and small local datasets, the SAIA applies a dual strategy of adaptive weighting and data augmentation. Accuracy-based adaptive weighting aligns local model updates to ensure consistent convergence, while targeted augmentation expands the effective dataset size for data-limited devices, mitigating overfitting on resource-constrained IoT devices.

- The SAIA integrates privacy protection with computational efficiency: it transmits compressed semantic representations, which lowers the risk of model reconstruction in sensitive IoT applications, and its lightweight design reduces the communication and computational load on resource-limited devices.

2. System Model

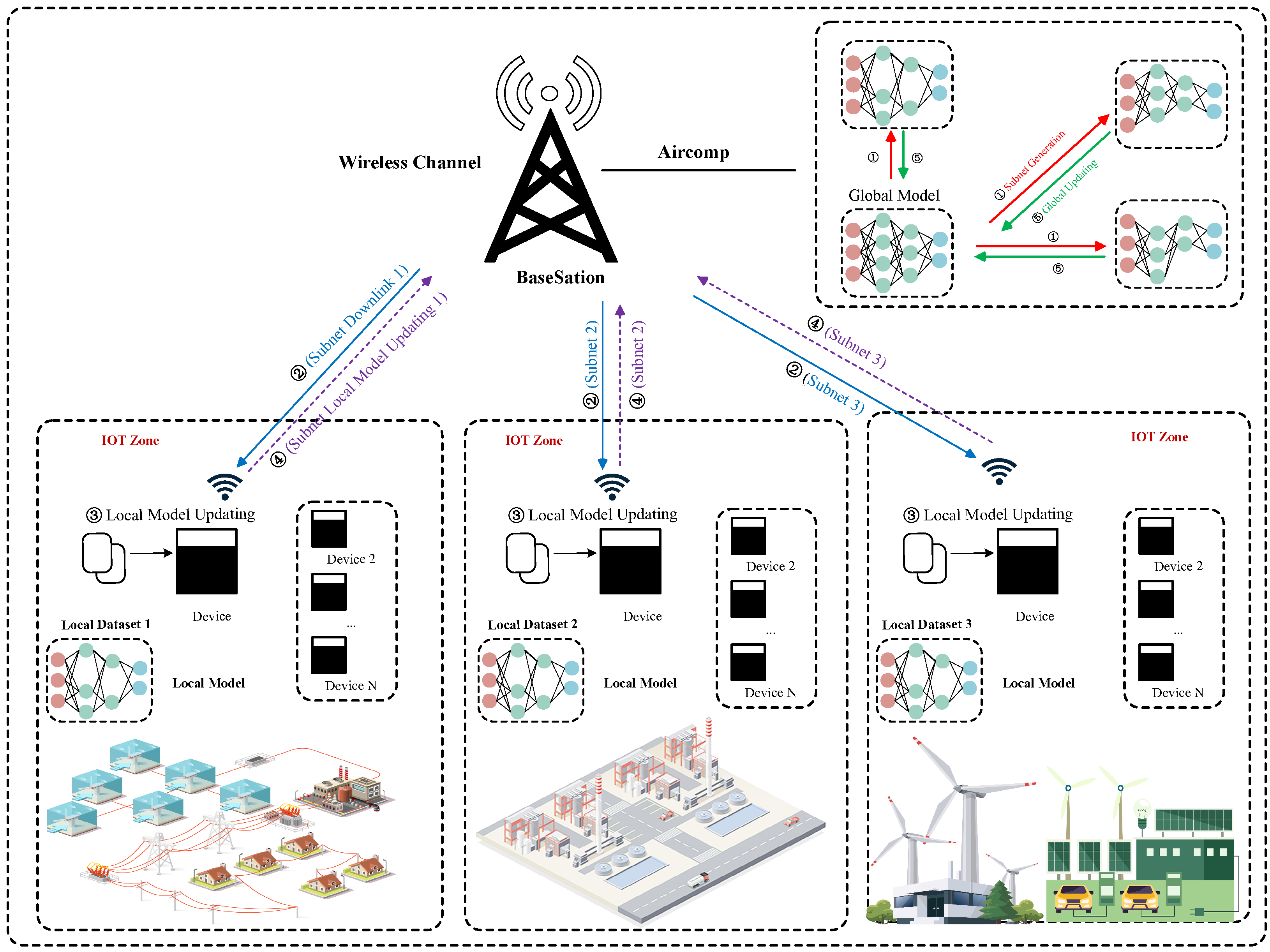

2.1. Communication Network Architecture

- Central Server: A robust ground station manages distributed training. It initializes the global model parameters using a method such as Xavier initialization to support steady convergence. During each communication round t, the server broadcasts the current global parameters to all clients over a downlink channel, gathers their updates via OTAC on the uplink, and computes the new global parameters.

- Clients: Each client k, typically an edge device such as an IoT sensor with limited computing and storage, holds a local dataset. It trains a local CNN using stochastic gradient descent with momentum (SGDM) with a fixed learning rate, momentum, batch size, and number of epochs, then sends its updated parameters, or an encoded version of them, to the server via OTAC.

- Wireless Channel: The network uses a shared wireless medium for uplink (client to server) and downlink (server to client) communications. OTAC lets clients transmit their updates simultaneously, so the signals combine through analog superposition and the received signal includes noise. Channel gains are fixed at 1 through power control so that all clients contribute equally, and interference between clients is assumed to be minimal thanks to synchronized OTAC transmissions [5]. The uplink is subject to additive white Gaussian noise (AWGN), modeled as zero-mean Gaussian with covariance $\sigma^2 \mathbf{I}$, where $\sigma^2$ is the noise variance and $\mathbf{I}$ is the identity matrix. The downlink is assumed to be noise-free, which is achieved with high transmit power and reliable modulation such as quadrature amplitude modulation (QAM). This setup isolates the effect of AWGN and facilitates testing of SAIA’s semantic encoding and aggregation methods. In real IoT environments, non-orthogonal signals or channel fading can introduce distortion. Our work centers on algorithms; interference mitigation using techniques such as beamforming or interference cancellation is left to future work, as noted in Section 6.

- Communication Protocol: The system runs synchronously across T rounds, with all K clients joining each round. The AirFL process, shown in Figure 1, proceeds as follows for each round t:

- Broadcast: The server sends the global model parameters to all clients over a noise-free downlink, ensuring each client starts round t with the same model.

- Local Training: Each client trains a local CNN on its dataset using SGDM to reduce the local loss (Equation (9)), generating updated local parameters.
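The uplink superposition described under Wireless Channel can be illustrated with a minimal sketch. It assumes unit channel gains and perfectly synchronized transmissions, as in the idealized model; the function name `otac_uplink`, the toy update vectors, and the chosen noise variance of 0.3 are illustrative assumptions, not the authors' implementation.

```python
import random


def otac_uplink(client_vectors, noise_std, rng):
    """Superpose client vectors element-wise and add zero-mean Gaussian noise.

    Assumes unit channel gains (perfect power control) and synchronized
    transmissions, so the channel simply sums the transmitted values.
    """
    dim = len(client_vectors[0])
    received = []
    for i in range(dim):
        superposed = sum(vec[i] for vec in client_vectors)  # analog sum over the air
        received.append(superposed + rng.gauss(0.0, noise_std))
    return received


rng = random.Random(0)  # fixed seed so the sketch is reproducible

# Hypothetical 3-dimensional updates from three clients.
updates = [[0.1, -0.2, 0.3], [0.0, 0.4, -0.1], [0.2, 0.1, 0.1]]

noiseless = otac_uplink(updates, noise_std=0.0, rng=rng)
noisy = otac_uplink(updates, noise_std=0.3 ** 0.5, rng=rng)  # variance 0.3

# The server can divide by the number of clients to estimate the mean update.
mean_estimate = [v / len(updates) for v in noisy]
print(noiseless, mean_estimate)
```

Because the channel performs the summation itself, the server receives one aggregate vector per round regardless of the number of clients, at the cost of noise being added directly to the aggregate.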

2.2. Mathematical Model

2.3. Training Objective

3. Problem Formulation

3.1. Assumptions and Practical Considerations

- Ideal Channel: We assume perfect power control (unit channel gains), a noise-free downlink, and an uplink affected only by additive white Gaussian noise (AWGN).

- Full Synchronous Participation: We assume all K clients participate in every communication round without failure or dropout.

- Non-Ideal Channel Model: Our simulations incorporate a more realistic channel with random client dropouts (simulating connection failure) and a simplified inter-user interference model to test the framework’s robustness.

- Asynchronous Participation: The dropout model simulates an asynchronous environment where a subset of clients contributes to the global model in any given round.
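The dropout model above can be sketched as an independent Bernoulli draw per client and per round. The dropout probability of 0.2 and the helper name `sample_participants` are assumptions made for illustration; K = 5 clients and T = 10 rounds follow the typical values listed in the symbol table.

```python
import random


def sample_participants(num_clients, dropout_prob, rng):
    """Return the indices of clients that stay connected in one round.

    Each client drops out independently with probability dropout_prob.
    """
    return [k for k in range(num_clients) if rng.random() >= dropout_prob]


rng = random.Random(42)  # fixed seed so the sketch is reproducible
K, T = 5, 10

schedule = [sample_participants(K, dropout_prob=0.2, rng=rng) for _ in range(T)]
for t, active in enumerate(schedule, start=1):
    print(f"round {t}: active clients {active}")
```

A round in which every client drops out yields an empty list, so a simulation built on this sketch would need to decide whether to skip aggregation or reuse the previous global model in that case.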

3.2. Problem 1: Channel Noise

3.3. Problem 2: Data Heterogeneity

3.4. Problem 3: Resource Constraints

3.5. Problem 4: Small Local Datasets

4. Proposed Solution

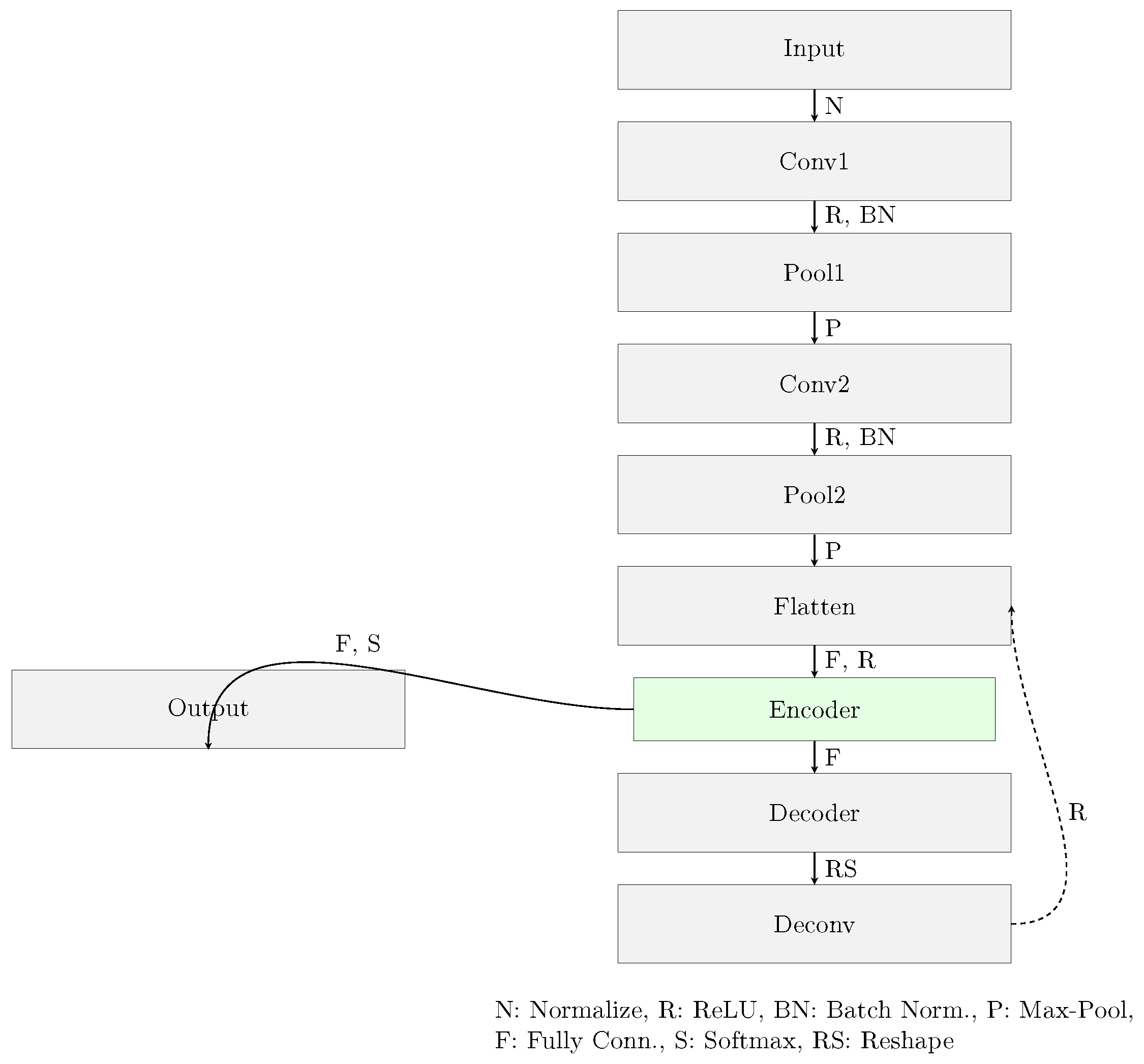

4.1. Neural Network Optimization Architecture

- Input Layer: Normalizes input to [0, 1].

- Convolutional Layer 1 (Conv1): Applies 32 filters with stride 1, same padding, batch normalization, and ReLU.

- Pooling Layer 1 (Pool1): 2 × 2 max-pooling, stride 2, reducing spatial dimensions. No parameters.

- Convolutional Layer 2 (Conv2): Applies 64 filters with stride 1, same padding, batch normalization, and ReLU.

- Pooling Layer 2 (Pool2): 2 × 2 max-pooling, stride 2.

- Semantic AutoEncoder Module: First, the encoder flattens the Pool2 output and maps it to a low-dimensional bottleneck via a fully connected layer with ReLU, yielding the semantic vector; second, the decoder maps the semantic vector back to the flattened feature space, followed by reshaping and symmetric deconvolutional layers.

- Output Layer: Maps the bottleneck vector to C units with softmax.
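To make the layer stack above concrete, the following sketch traces tensor shapes through the network. The 28 × 28 single-channel input and the 10 output classes are assumptions chosen for illustration (the input resolution is not stated in this section); the filter counts, pooling settings, and the 256-dimensional bottleneck come from the text and the parameter table.

```python
def conv_same(height, width, out_channels):
    # "Same" padding with stride 1 keeps the spatial size and sets the channel count.
    return height, width, out_channels


def max_pool_2x2(height, width, channels):
    # 2 x 2 max-pooling with stride 2 halves each spatial dimension.
    return height // 2, width // 2, channels


def trace_shapes(height, width, bottleneck_dim, num_classes):
    """Return the output shape of each stage as a dict, in layer order."""
    shapes = {}
    shape = conv_same(height, width, 32)
    shapes["conv1"] = shape
    shape = max_pool_2x2(*shape)
    shapes["pool1"] = shape
    shape = conv_same(shape[0], shape[1], 64)
    shapes["conv2"] = shape
    shape = max_pool_2x2(*shape)
    shapes["pool2"] = shape
    shapes["flatten"] = (shape[0] * shape[1] * shape[2],)
    shapes["bottleneck"] = (bottleneck_dim,)  # semantic vector
    shapes["output"] = (num_classes,)         # softmax over classes
    return shapes


# Assumed input size and class count; bottleneck size from the parameter table.
shapes = trace_shapes(height=28, width=28, bottleneck_dim=256, num_classes=10)
for name, shape in shapes.items():
    print(f"{name:>10}: {shape}")
```

Under these assumptions the flattened Pool2 output has 3136 entries, so the fully connected encoder layer that maps it to the 256-dimensional bottleneck would hold most of the model's parameters.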

4.2. Optimization Methods

- Semantic Encoding and Decoding: The encoder maps each client’s model parameters to a low-dimensional semantic representation, and the decoder reconstructs the parameters from that representation.

- Data Augmentation: Clients apply transformations to increase the effective dataset size, reducing variance and addressing Problem 4, assuming the transformations (e.g., rotations and translations) preserve the data distribution.

- Robust Aggregation: The SAIA uses iterative decoding, median aggregation, and adaptive weighting, formalized in Algorithm 1. Recovering individual vectors from the superimposed signal, as described in Equation (4), is a well-studied problem in compressed sensing and modern multi-user communication [28,29]. Our approach builds on these principles under the assumption that the semantic vectors are sufficiently sparse. Sparsity is a common and desirable property of compressed latent representations generated by autoencoders, as it encourages disentangled and efficient features [30]. Under this assumption, Equation (5) becomes a standard L1-regularized least squares problem, which algorithms such as the Iterative Shrinkage-Thresholding Algorithm (ISTA) are designed to solve efficiently. We therefore employ an ISTA-based method as the iterative decoder. This choice lets us rely on established signal processing techniques for physical-layer recovery and focus on the end-to-end performance and robustness of the semantic-aware federated learning framework. Median aggregation minimizes the L1-error (Equation (18)), and adaptive weighting reduces divergence (Equation (24)), addressing Problems 1 and 2, assuming reliable client accuracy metrics.
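For readers unfamiliar with ISTA, the sketch below solves a generic L1-regularized least squares problem. It is not the authors' decoder: the measurement matrix `A`, the problem sizes, and the synthetic sparse signal are hypothetical, and the exact form of Equation (5) is not reproduced here. The regularization weight of 0.01 and the 50 iterations follow the parameter table.

```python
import numpy as np


def soft_threshold(v, tau):
    """Proximal operator of tau * ||.||_1 (element-wise shrinkage)."""
    return np.sign(v) * np.maximum(np.abs(v) - tau, 0.0)


def objective(A, y, x, lam):
    """Value of 0.5 * ||A x - y||^2 + lam * ||x||_1."""
    return 0.5 * np.sum((A @ x - y) ** 2) + lam * np.sum(np.abs(x))


def ista(A, y, lam=0.01, num_iters=50):
    """Approximately minimize the objective above with a fixed step size."""
    # Step size 1/L, where L is the squared spectral norm of A
    # (the Lipschitz constant of the gradient of the smooth term).
    step = 1.0 / np.linalg.norm(A, 2) ** 2
    x = np.zeros(A.shape[1])
    for _ in range(num_iters):
        grad = A.T @ (A @ x - y)                          # gradient step
        x = soft_threshold(x - step * grad, step * lam)   # shrinkage step
    return x


rng = np.random.default_rng(0)
A = rng.standard_normal((64, 128)) / np.sqrt(64)   # hypothetical measurement matrix
x_true = np.zeros(128)
x_true[rng.choice(128, size=5, replace=False)] = rng.standard_normal(5)
y = A @ x_true + 0.01 * rng.standard_normal(64)    # noisy observation

x_hat = ista(A, y)
print("objective at zero :", objective(A, y, np.zeros(128), 0.01))
print("objective at x_hat:", objective(A, y, x_hat, 0.01))
```

With the step size set to the reciprocal of the Lipschitz constant, each ISTA iteration does not increase the objective, which is why a fixed, small iteration budget is a reasonable choice for a lightweight decoder.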

Algorithm 1. Semantic Anti-Interference Aggregation (SAIA).
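The aggregation step of the robust aggregation strategy can be illustrated with a small sketch that contrasts a coordinate-wise median with a plain mean when one decoded vector is corrupted. The decoded vectors are made up for illustration, and normalizing validation accuracies into weights is an assumed form of adaptive weighting (the exact rule in Equation (24) is not reproduced here); the accuracy values are the per-client SAIA accuracies from the results table.

```python
from statistics import median


def coordinate_median(vectors):
    """Coordinate-wise median of equally sized vectors."""
    return [median(column) for column in zip(*vectors)]


def coordinate_mean(vectors):
    """Coordinate-wise mean, shown for comparison with mean-based aggregation."""
    return [sum(column) / len(column) for column in zip(*vectors)]


def accuracy_weights(accuracies):
    """Assumed weighting rule: normalize accuracies so the weights sum to 1."""
    total = sum(accuracies)
    return [a / total for a in accuracies]


# Hypothetical decoded representations from five clients.
decoded = [
    [0.10, 0.20],
    [0.12, 0.18],
    [0.11, 0.21],
    [0.09, 0.19],
    [5.00, -4.00],  # one client heavily corrupted by noise
]

robust = coordinate_median(decoded)
naive = coordinate_mean(decoded)
weights = accuracy_weights([0.995, 0.985, 0.995, 0.990, 0.995])

print("median:", robust)
print("mean  :", naive)
print("weights:", weights)
```

The single corrupted vector pulls the mean far from the other four clients, while the median stays within the range of the uncorrupted values, which is the behavior that motivates median aggregation under noisy uplinks.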

4.3. SAIA Algorithm

4.4. Complexity Analysis

5. Experimental Results and Discussion

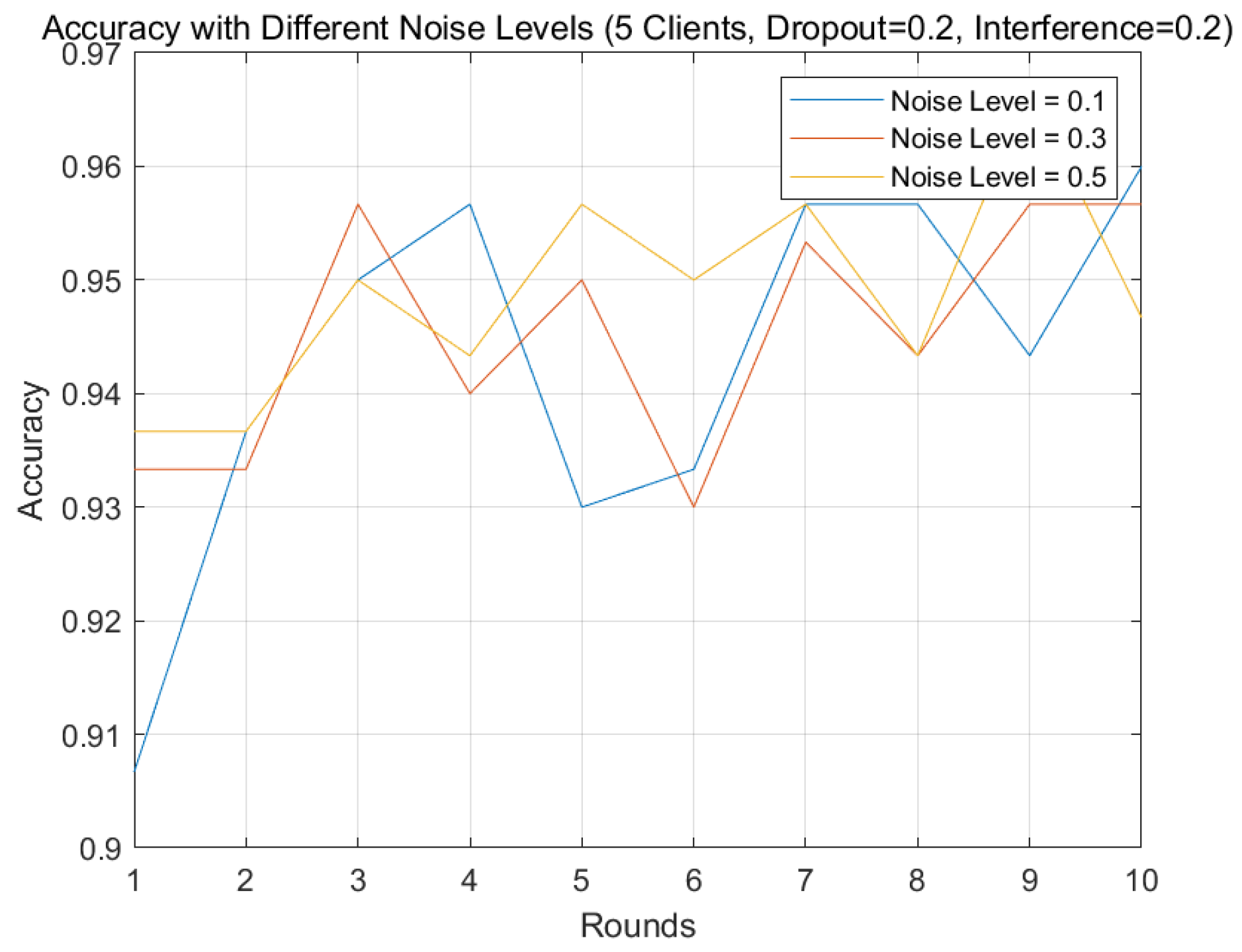

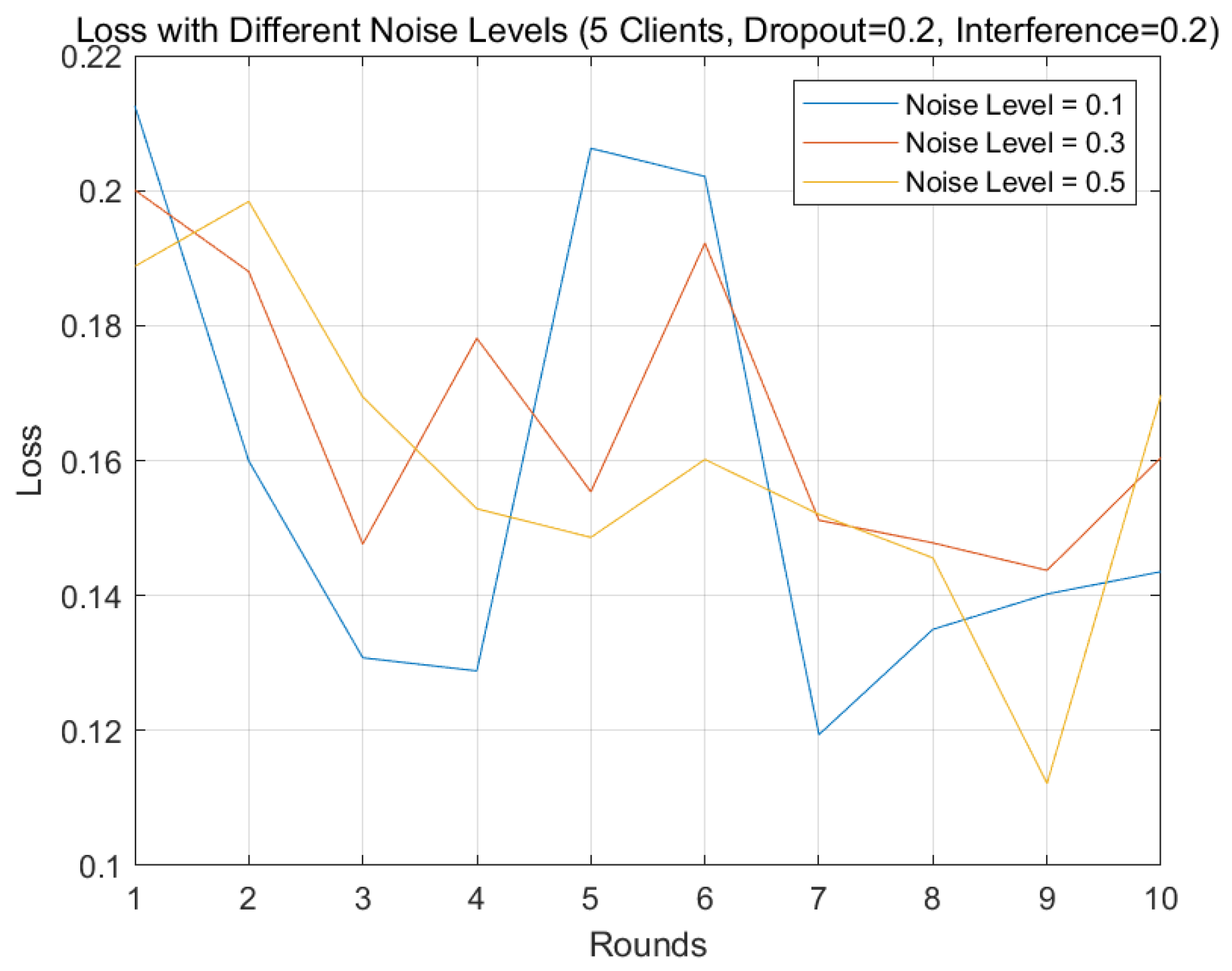

5.1. Noise Sensitivity Analysis

5.2. Alignment with Problem Objectives

- Problem 2 (Data Heterogeneity): Client consistency across skewed datasets tests the SAIA’s alignment of non-i.i.d. updates, validated by adaptive weighting (Equation (24)).

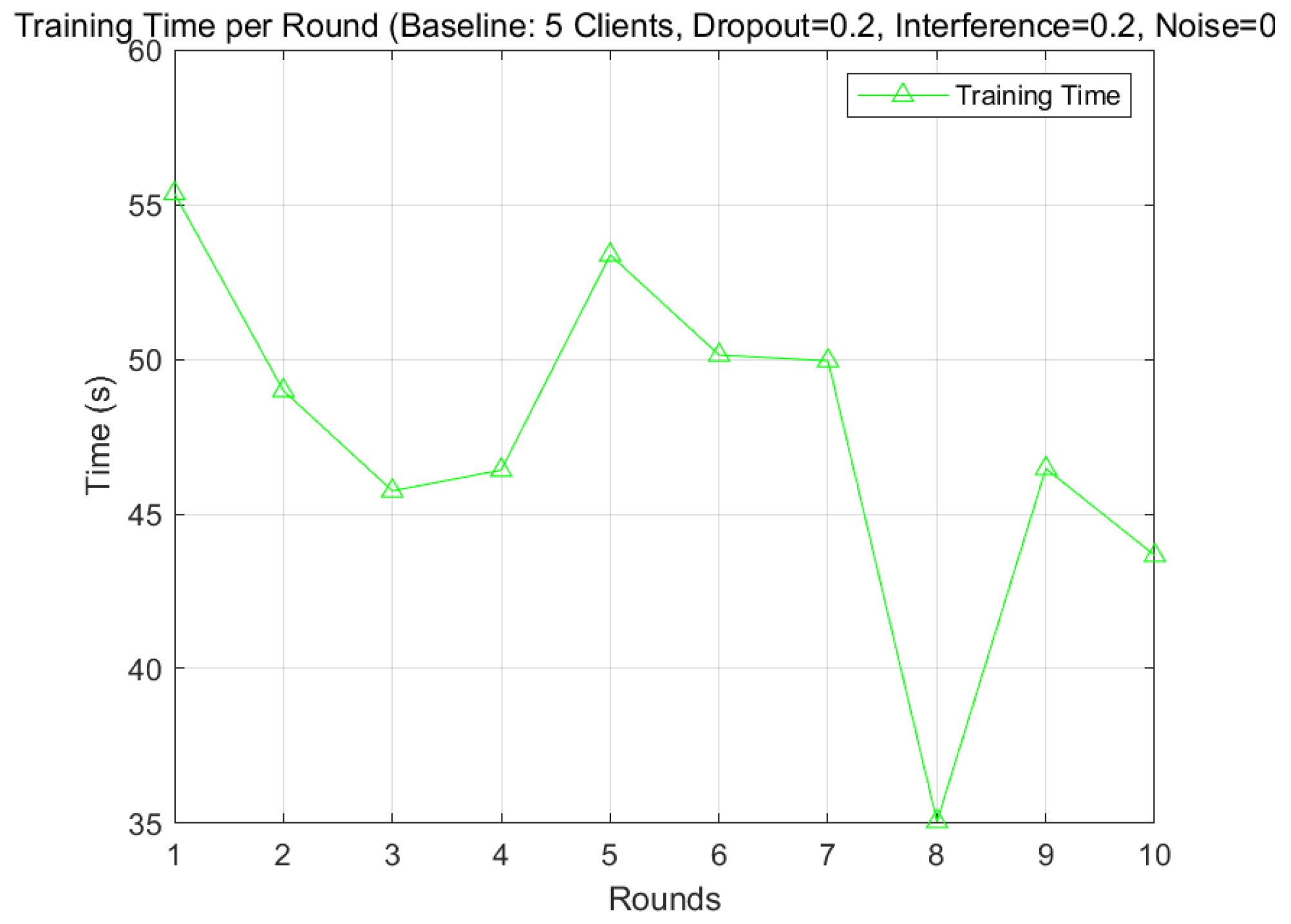

- Problem 3 (Resource Constraints): Training time and communication overhead assess the SAIA’s scalability, validated by low-dimensional encoding (Equation (25)).

- Problem 4 (Small Datasets): Classification performance and generalization errors test the SAIA’s mitigation of overfitting, validated by data augmentation (Equation (27)).

5.3. Performance Metrics

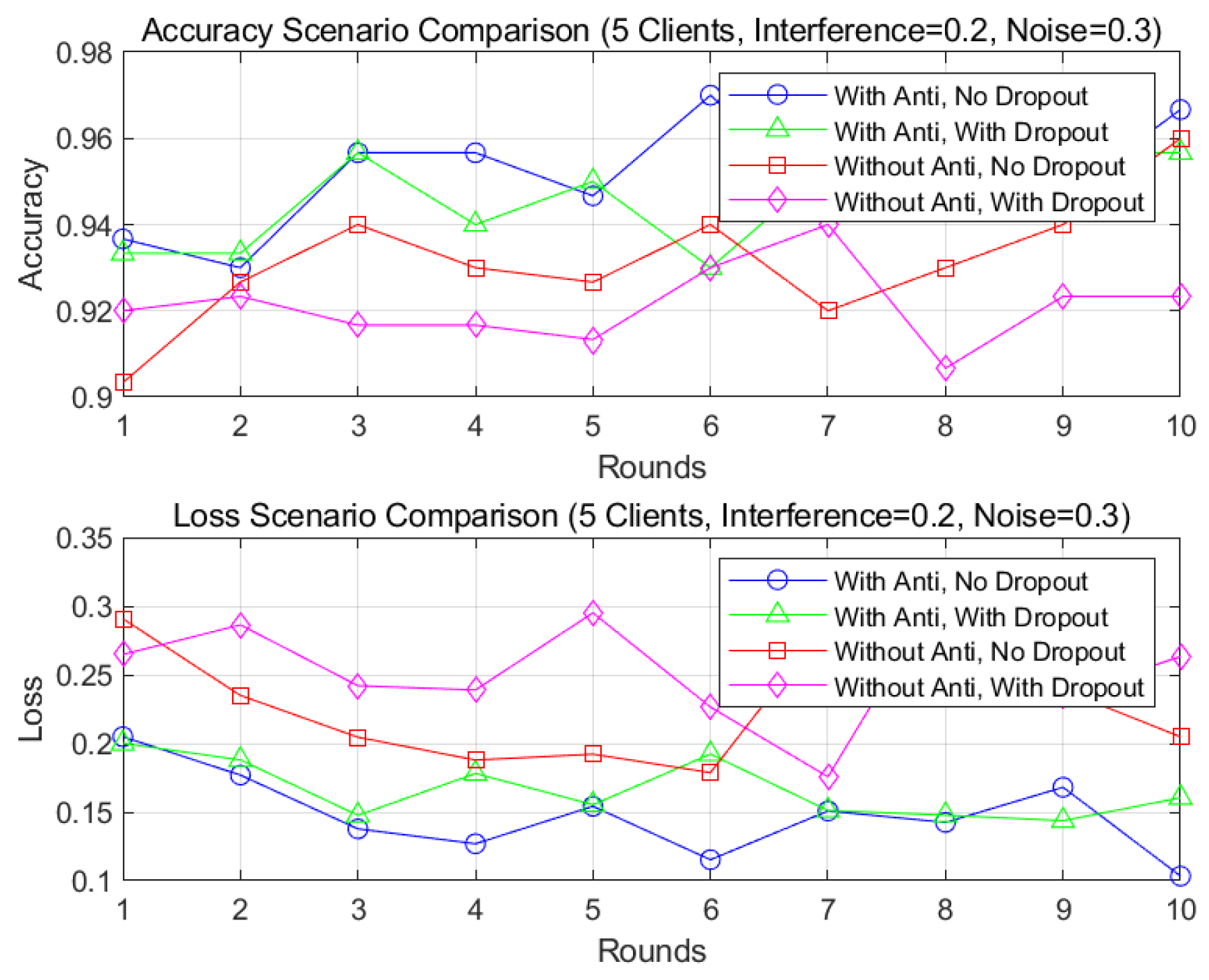

5.4. Convergence Behavior

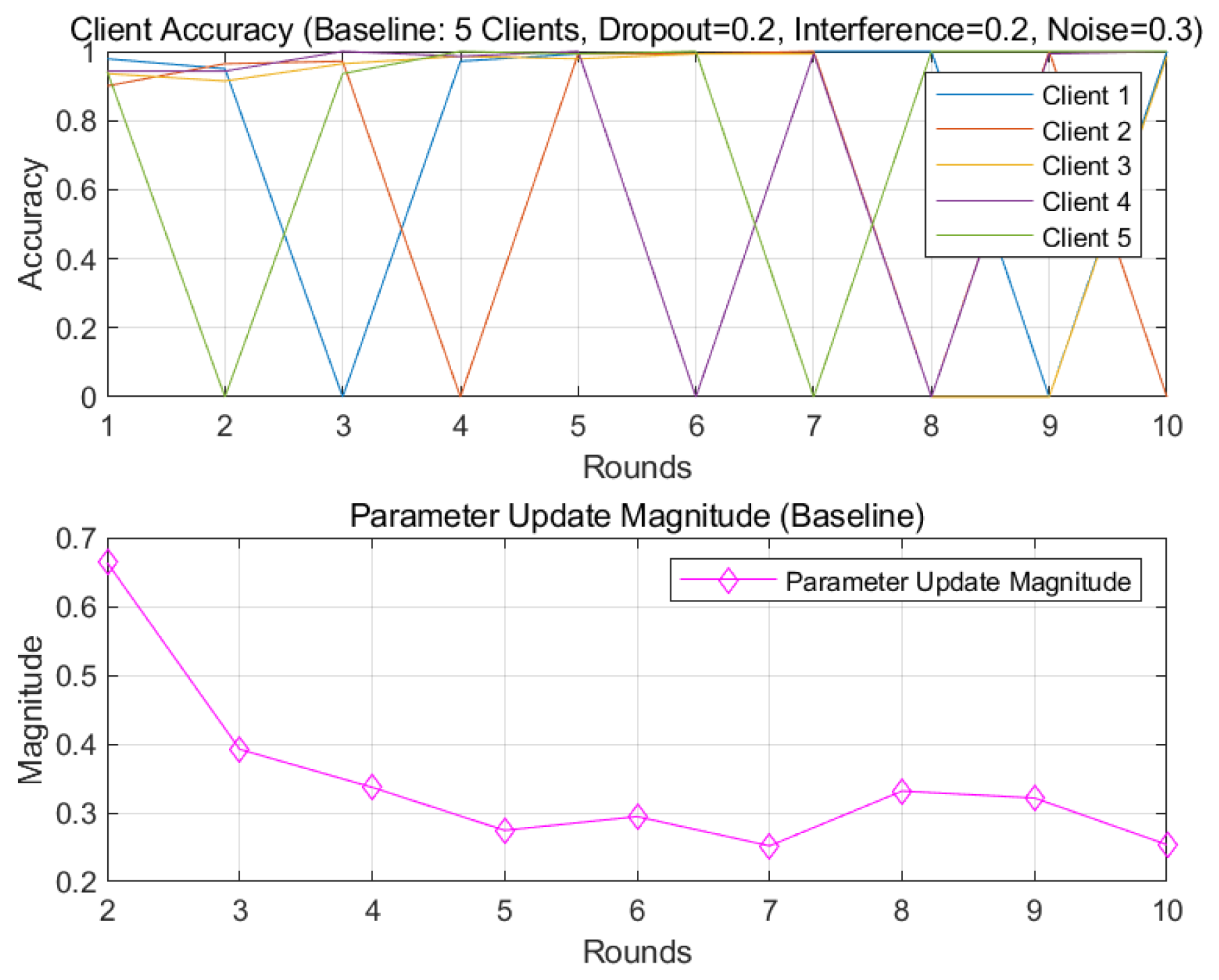

5.5. Client Consistency and Parameter Updates

5.6. Computational Overhead

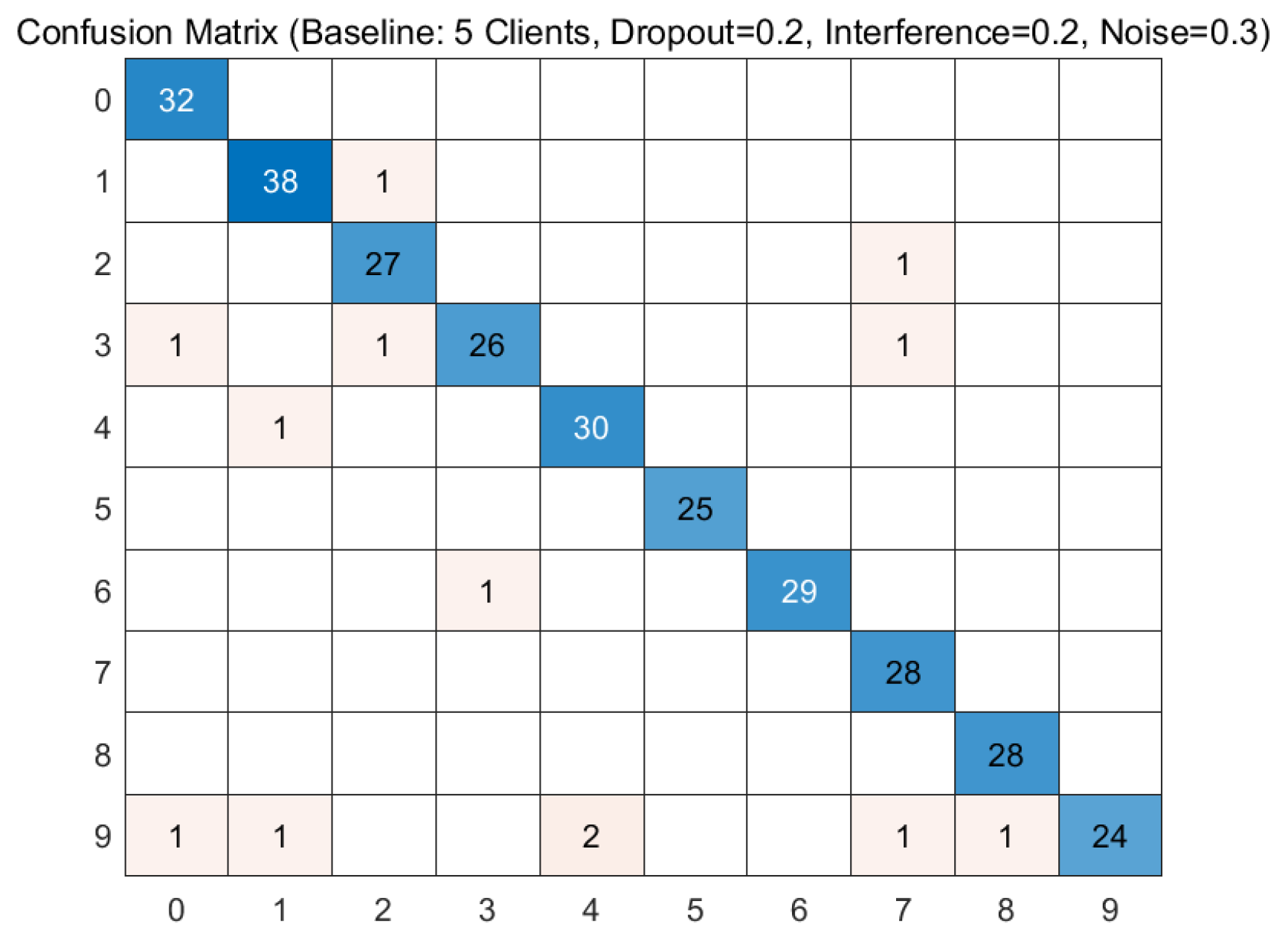

5.7. Classification Performance

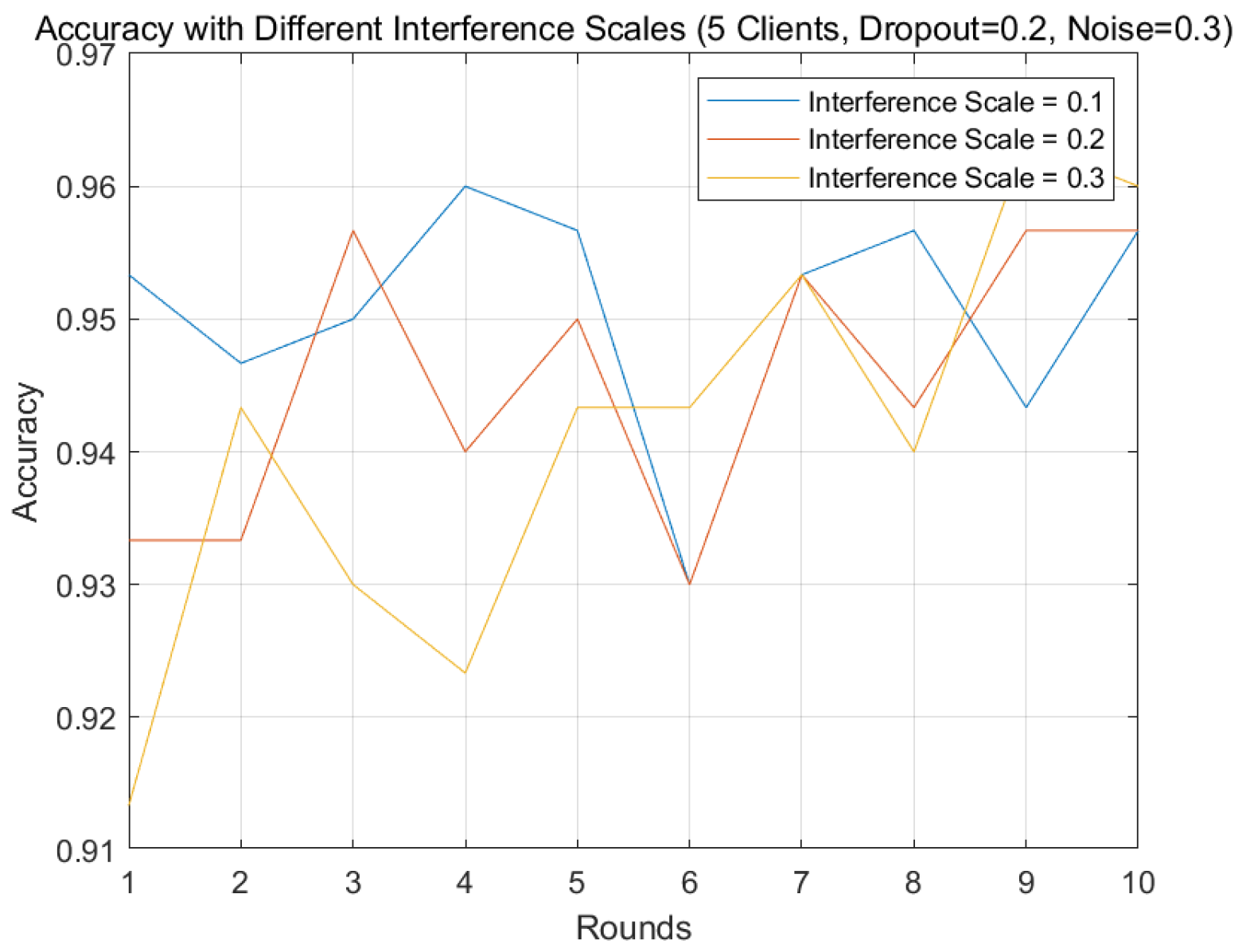

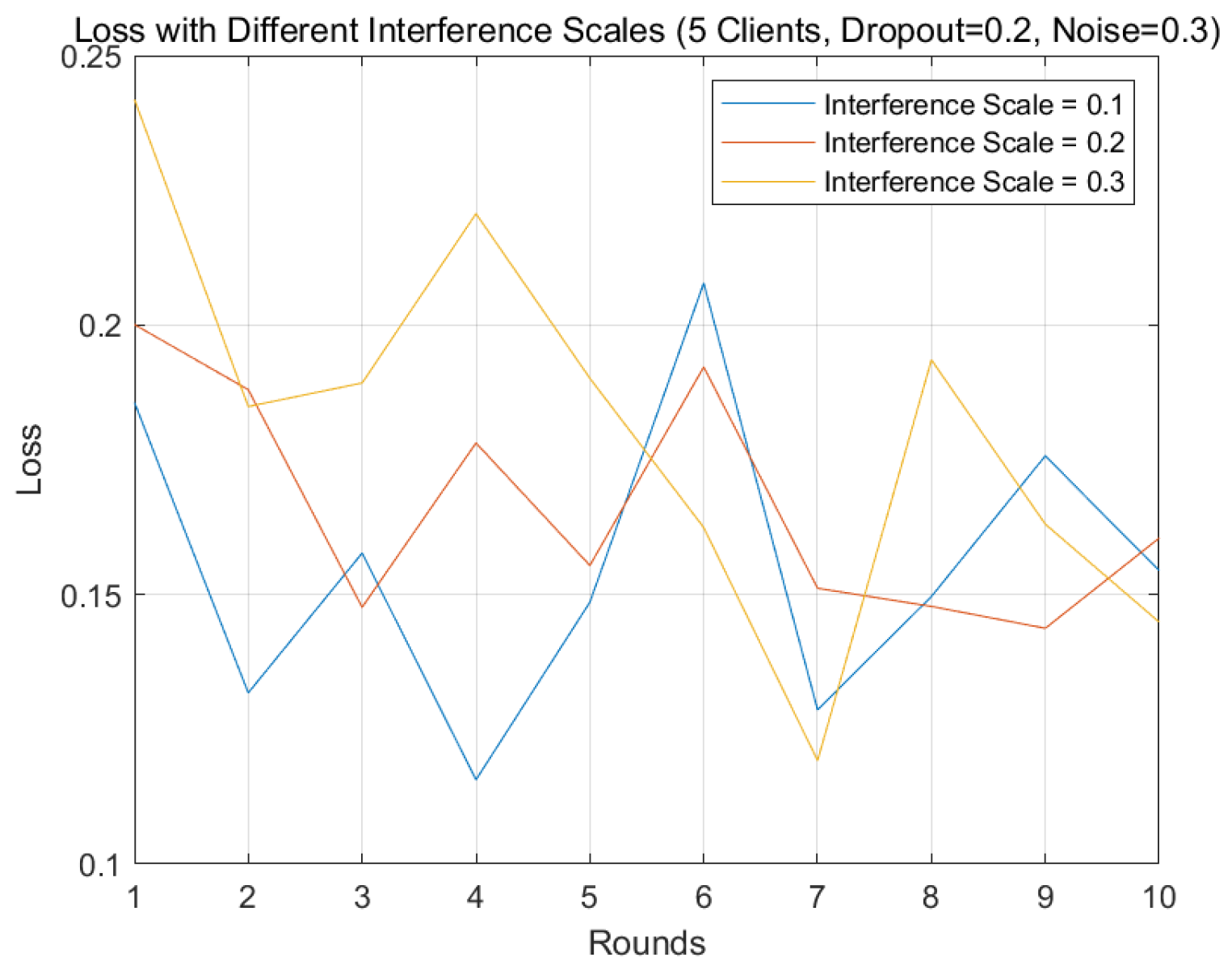

5.8. Interference Sensitivity Analysis

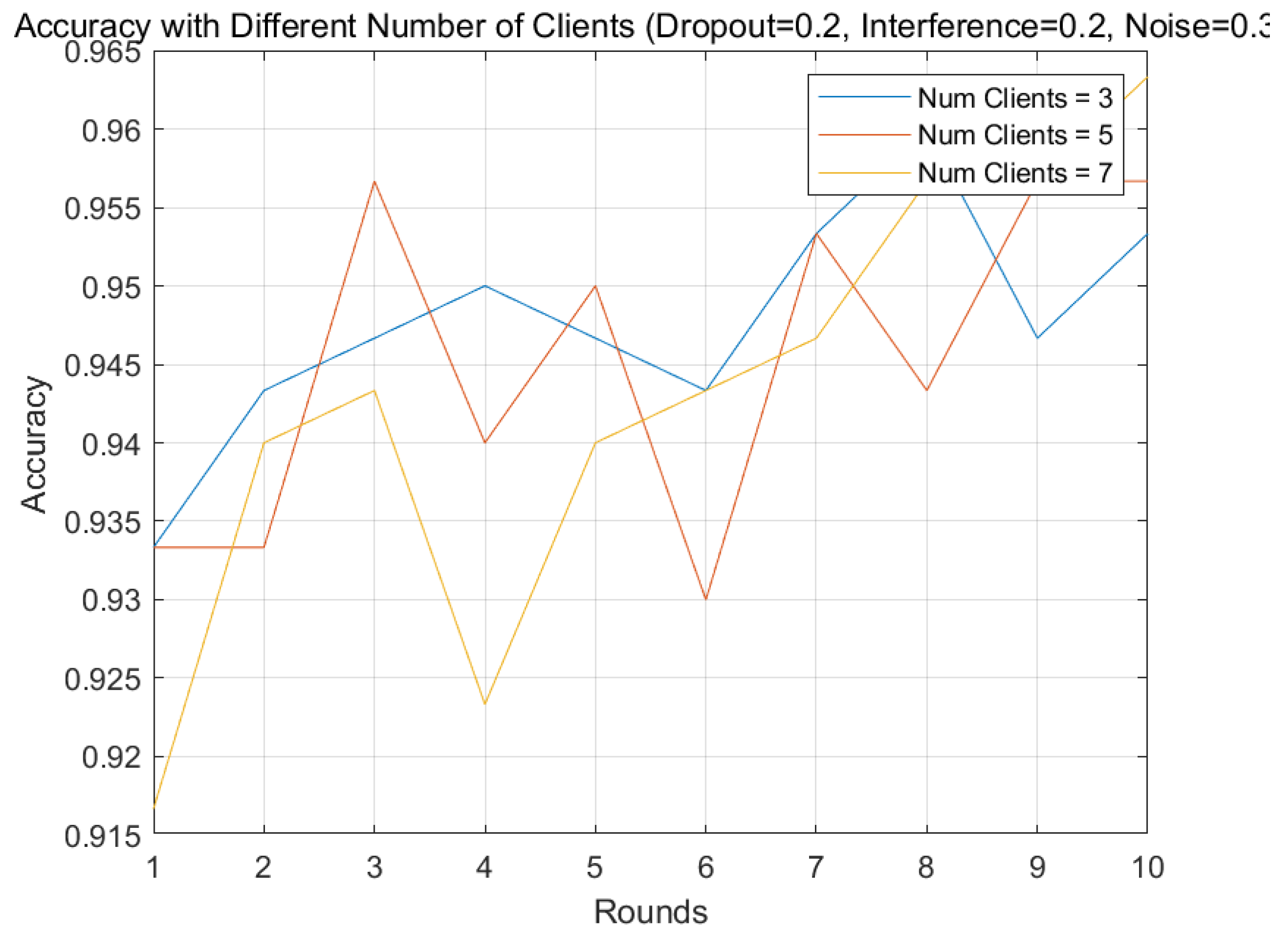

5.9. Effect of Number of Clients

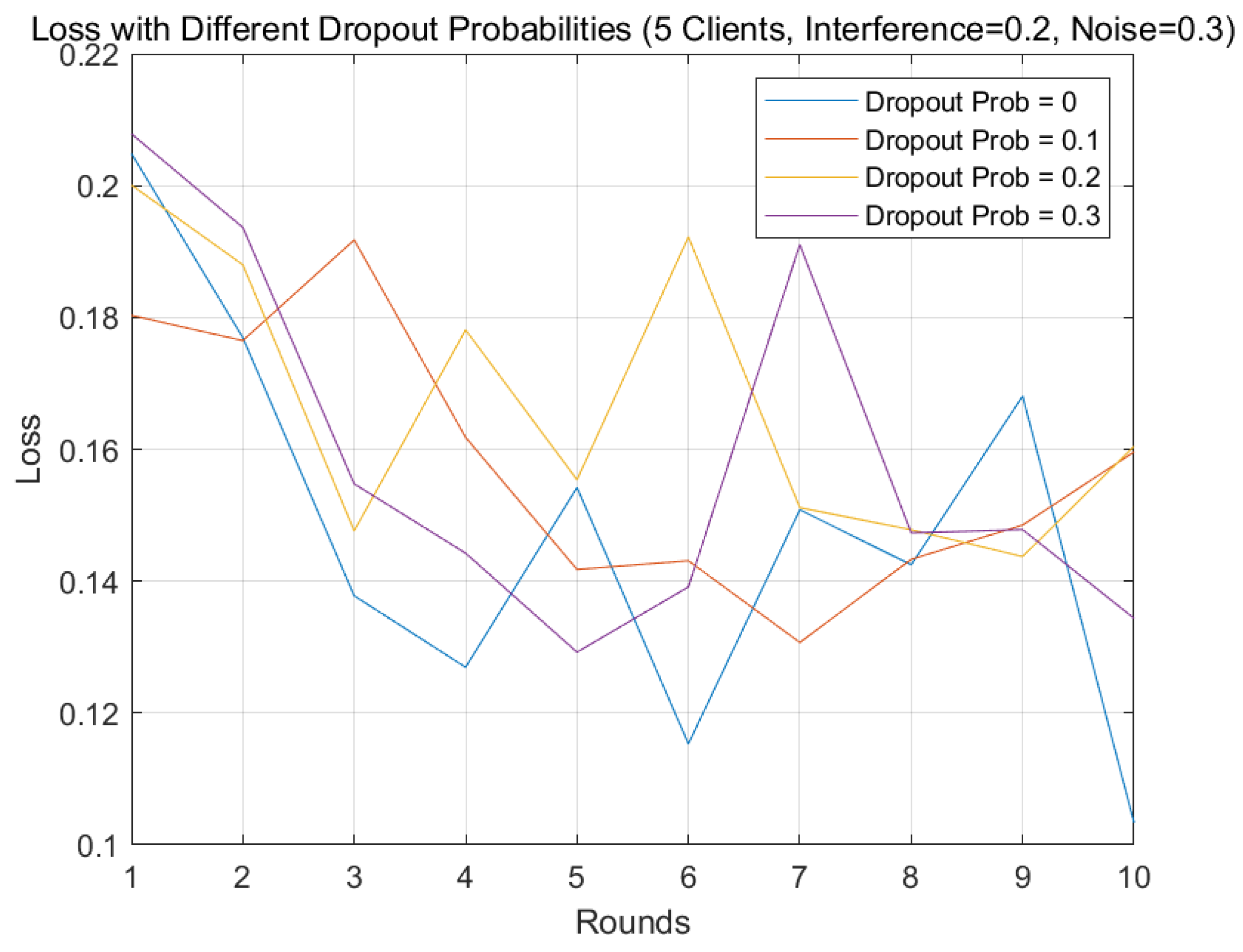

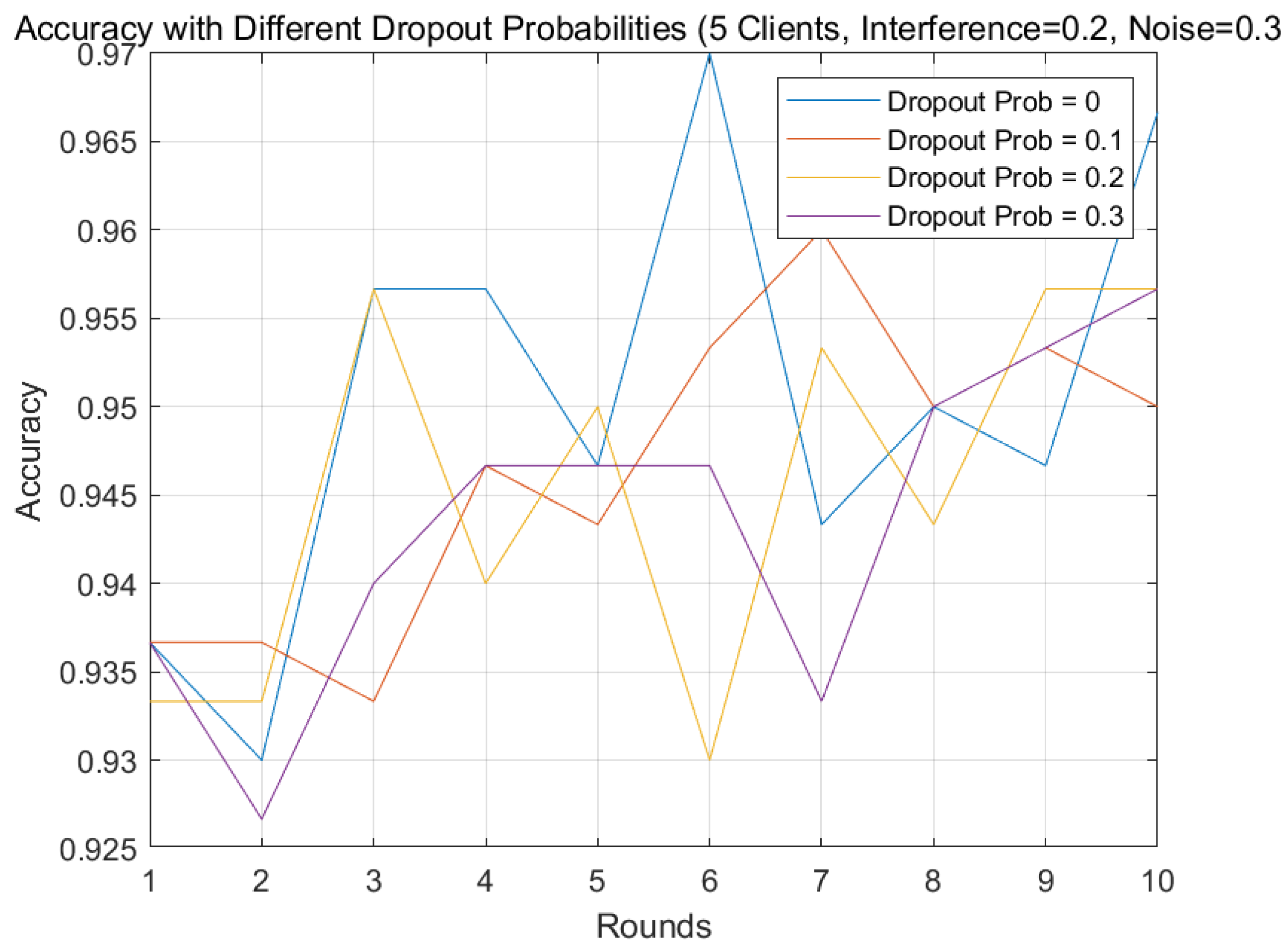

5.10. Dropout Probability Analysis

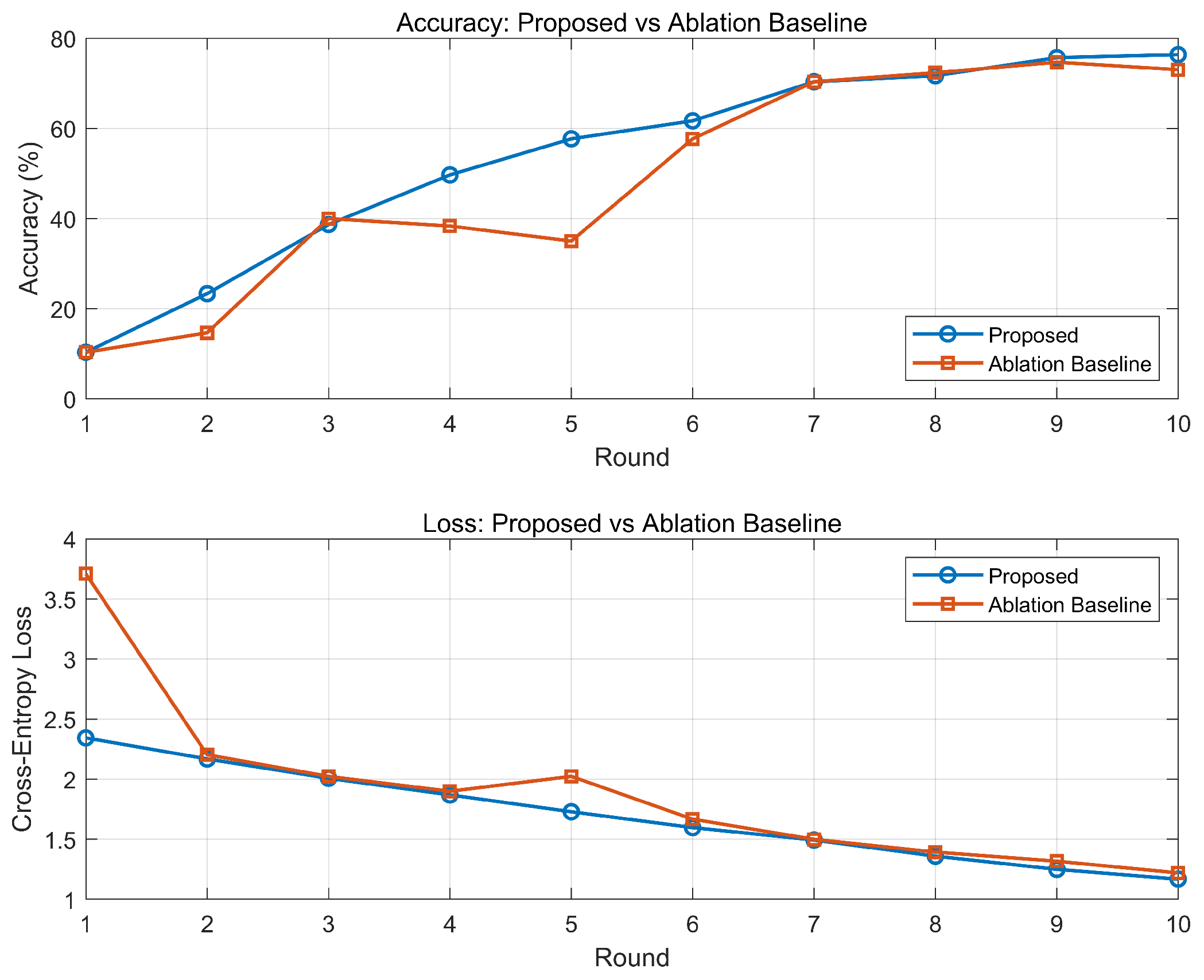

5.11. Ablation Study

5.12. Analysis of Privacy Enhancement

5.13. Evaluation and Insights

6. Conclusions and Future Works

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AirFL | Over-the-Air Federated Learning |
| AWGN | Additive White Gaussian Noise |
| CNN | Convolutional Neural Network |
| FL | Federated Learning |
| IoT | Internet of Things |
| KL | Kullback-Leibler (Divergence) |
| OTAC | Over-the-Air Computation |
| SAIA | Semantic Anti-Interference Aggregation |
| SGDM | Stochastic Gradient Descent with Momentum |
| SNR | Signal-to-Noise Ratio |

References

1. Zikria, Y.B.; Ali, R.; Afzal, M.K.; Kim, S.W. Next-generation Internet of Things (IoT): Opportunities, challenges, and solutions. Sensors 2021, 21, 1174.
2. Rathore, M.M.; Paul, A.; Hong, W.H.; Seo, H.; Awan, I.; Saeed, S. Exploiting IoT and big data analytics: Defining smart digital city using real-time urban data. Sustain. Cities Soc. 2018, 40, 600–610.
3. Parikh, D.; Radadia, S.; Eranna, R.K. Privacy-Preserving Machine Learning Techniques, Challenges and Research Directions. Int. Res. J. Eng. Technol. 2024, 11, 499.
4. Wen, J.; Zhang, Z.; Lan, Y.; Cui, Z.; Cai, J.; Zhang, W. A survey on federated learning: Challenges and applications. Int. J. Mach. Learn. Cybern. 2023, 14, 513–535.
5. Yang, K.; Jiang, T.; Shi, Y.; Ding, Z. Federated Learning via Over-the-Air Computation. IEEE Trans. Wirel. Commun. 2020, 19, 2022–2035.
6. Ji, J.; Lam, C.T.; Wang, K.; Ng, B.K. AMNED: An Efficient Framework for Spiking Neuron Coding in AirComp Federated Learning. IEEE Access 2025, 13, 138970–138985.
7. Naseh, D.; Bozorgchenani, A.; Shinde, S.S.; Tarchi, D. Unified Distributed Machine Learning for 6G Intelligent Transportation Systems: A Hierarchical Approach for Terrestrial and Non-Terrestrial Networks. Network 2025, 5, 41.
8. Abdullahi, M.; Cao, A.; Zafar, A.; Xiao, P.; Hemadeh, I.A. A generalized bit error rate evaluation for index modulation based OFDM system. IEEE Access 2020, 8, 70082–70094.
9. Jin, C.; Chang, Z.; Hu, F.; Luan, M.; Hämäläinen, T. Enhanced Physical Layer Security for Full-Duplex Facultative Symbiotic Radio: A Pattern Switching and Multi-Device Scheduling Strategy. In Proceedings of the 2025 IEEE Wireless Communications and Networking Conference (WCNC), Milan, Italy, 24–27 March 2025; pp. 1–6.
10. Liu, Y.; Qu, Z.; Wang, J. Compressed Hierarchical Federated Learning for Edge-Level Imbalanced Wireless Networks. IEEE Trans. Comput. Soc. Syst. 2025, 12, 3131–3142.
11. Singh, S.; Kumar, M.; Kumar, R. Powering the future: A survey of ambient RF-based communication systems for next-gen wireless networks. IET Wirel. Sens. Syst. 2024, 14, 265–292.
12. Cui, Y.; Guo, J.; Wen, C.K.; Jin, S. Communication-efficient personalized federated edge learning for massive MIMO CSI feedback. IEEE Trans. Wirel. Commun. 2023, 23, 7362–7375.
13. Lan, M.; Ling, Q.; Xiao, S.; Zhang, W. Quantization bits allocation for wireless federated learning. IEEE Trans. Wirel. Commun. 2023, 22, 8336–8351.
14. Shi, W.; Yao, J.; Xu, W.; Xu, J.; You, X.; Eldar, Y.C.; Zhao, C. Combating interference for over-the-air federated learning: A statistical approach via RIS. IEEE Trans. Signal Process. 2025, 73, 936–953.
15. Cao, X.; Lyu, Z.; Zhu, G.; Xu, J.; Xu, L.; Cui, S. An overview on over-the-air federated edge learning. IEEE Wirel. Commun. 2024, 31, 202–210.
16. Phong, N.H.; Santos, A.; Ribeiro, B. PSO-convolutional neural networks with heterogeneous learning rate. IEEE Access 2022, 10, 89970–89988.
17. Pakina, A.K.; Pujari, M. Differential privacy at the edge: A federated learning framework for GDPR-compliant TinyML deployments. IOSR J. Comput. Eng. 2024, 26, 52–64.
18. Lee, H.S.; Lee, J.W. Adaptive transmission scheduling in wireless networks for asynchronous federated learning. IEEE J. Sel. Areas Commun. 2021, 39, 3673–3687.
19. Qiao, Y.; Adhikary, A.; Kim, K.T.; Zhang, C.; Hong, C.S. Knowledge distillation assisted robust federated learning: Towards edge intelligence. In Proceedings of ICC 2024, the IEEE International Conference on Communications, Denver, CO, USA, 9–13 June 2024; IEEE: New York, NY, USA, 2024; pp. 843–848.
20. Ma, B.; Yin, X.; Tan, J.; Chen, Y.; Huang, H.; Wang, H.; Xue, W.; Ban, X. FedST: Federated style transfer learning for non-IID image segmentation. In Proceedings of the AAAI Conference on Artificial Intelligence, Philadelphia, PA, USA, 25 February–4 March 2024; Volume 38, pp. 4053–4061.
21. Houssein, E.H.; Sayed, A. Boosted federated learning based on improved Particle Swarm Optimization for healthcare IoT devices. Comput. Biol. Med. 2023, 163, 107195.
22. Baqer, M. Lightweight Federated Learning Approach for Resource-Constrained Internet of Things. Sensors 2025, 25, 5633.
23. Ridolfi, L.; Naseh, D.; Shinde, S.S.; Tarchi, D. Implementation and evaluation of a federated learning framework on Raspberry Pi platforms for IoT 6G applications. Future Internet 2023, 15, 358.
24. Zhou, X.; Liang, W.; Kawai, A.; Fueda, K.; She, J.; Wang, K.I.K. Adaptive segmentation enhanced asynchronous federated learning for sustainable intelligent transportation systems. IEEE Trans. Intell. Transp. Syst. 2024, 25, 6658–6666.
25. Wang, Z.; Zhou, Y.; Shi, Y.; Zhuang, W. Interference management for over-the-air federated learning in multi-cell wireless networks. IEEE J. Sel. Areas Commun. 2022, 40, 2361–2377.
26. Wang, S.; Chen, M.; Brinton, C.G.; Yin, C.; Saad, W.; Cui, S. Performance optimization for variable bitwidth federated learning in wireless networks. IEEE Trans. Wirel. Commun. 2023, 23, 2340–2356.
27. Zhu, G.; Liu, D.; Du, Y.; You, C.; Zhang, J.; Huang, K. Toward an Intelligent Edge: Wireless Communication Meets Machine Learning. IEEE Commun. Mag. 2020, 58, 19–25.
28. Donoho, D.L. Compressed sensing. IEEE Trans. Inf. Theory 2006, 52, 1289–1306.
29. Bockelmann, C.; Pratas, N.K.; Güllü, H.; Nikopour, H.; Au, K.; Stefanovic, C.; Popovski, P. Massive MIMO for machine-to-machine communication. IEEE Commun. Mag. 2016, 54, 162–169.
30. Bengio, Y.; Courville, A.; Vincent, P. Representation learning: A review and new perspectives. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 1798–1828.
31. Wang, K.; Lam, C.T.; Ng, B.K. RIS-Assisted High-Speed Communications with Time-Varying Distance-Dependent Rician Channels. Appl. Sci. 2022, 12, 11857.
32. Wang, K.; Lam, C.T.; Ng, B.K. Positioning Information Based High-Speed Communications with Multiple RISs: Doppler Mitigation and Hardware Impairments. Appl. Sci. 2022, 12, 7076.
33. Wang, K.; Lam, C.T.; Ng, B.K. Hardware aging analysis for reconfigurable intelligent surfaces. IET Electron. Lett. 2023, 59, e12714.

| Symbol | Description |
|---|---|
| | Global CNN parameters optimized across clients. |
| K | Number of edge devices, typically 5. |
| | Client k’s non-i.i.d. dataset. |
| | Local dataset size, affects overfitting. |
| | Input data for local training. |
| | Sample label, C classes. |
| | Uplink AWGN, variance . |
| | AWGN intensity, tested 0.1–0.5. |
| | Identity matrix for noise independence. |
| | Channel gain, normalized to 1. |
| | Client k’s local CNN parameters. |
| | Encoded parameters, reduces noise. |
| d | CNN parameter count, large. |
| | Encoded representation size, typically 256. |
| | Superposed updates with noise. |
| | Median of decoded representations, robust. |
| | Adaptive weights by client accuracy. |
| | Client k’s validation accuracy. |
| | Encoder maps to semantic representation. |
| | Decoder reconstructs from semantic encoding. |
| | Global weighted average loss. |
| | Client k’s non-i.i.d. loss. |
| | Cross-entropy loss for predictions. |
| | CNN softmax output, C classes. |
| | SGDM learning rate, typically 0.01. |
| L | Lipschitz constant, bounds smoothness. |
| | Client parameter variance, non-i.i.d. |
| | KL divergence, measures data heterogeneity. |
| | Scales to . |
| SNR | Signal-to-noise ratio, ≥20 dB. |
| | CNN weight variance, typically 0.1. |
| | Client k’s training cost. |
| E | Epochs per dataset, typically 10. |
| C | Per-sample computational cost. |
| | Augmented dataset size, reduces overfitting. |
| | Dataset augmentation factor, typically 2. |
| | Autoencoder reconstruction error bound. |
| | Mutual information in semantic encoding. |
| T | Total AirFL rounds, typically 10. |

| Parameter | Value |
|---|---|
| Model Parameter Dimension (d) | ≈8.2 |
| Semantic Vector Dimension | 256 |
| Regularization Weight (Equation (5)) | 0.01 |
| ISTA Iterations (Decoding) | 50 |
| Total Rounds (T) | 10 |
| Local Epochs (E) | 10 |
| Learning Rate | 0.01 |

| Noise Variance | SAIA Accuracy | FedAvg Accuracy |
|---|---|---|
| 0.1 | 98.33% ± 0.21% | 95.67% ± 0.45% |
| 0.3 | 97.00% ± 0.35% | 94.33% ± 0.58% |
| 0.5 | 95.67% ± 0.41% | 92.00% ± 0.72% |

| Client | Data Skew | Accuracy (SAIA) | Accuracy (FedAvg) | Loss (SAIA) |
|---|---|---|---|---|
| 1 | 0–2 (60%) | 99.50% | 95.71% | 0.0294 |
| 2 | 3–5 (70%) | 98.50% | 88.57% | 0.0563 |
| 3 | 6–8 (50%) | 99.50% | 94.29% | 0.0512 |
| 4 | 1–4 (40%) | 99.00% | 96.43% | 0.0301 |
| 5 | Balanced | 99.50% | 97.14% | 0.0018 |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Ji, J.-C.; Lam, C.-T.; Wang, K.; Ng, B.K. Robust Aggregation in Over-the-Air Computation with Federated Learning: A Semantic Anti-Interference Approach. Mathematics 2026, 14, 124. https://doi.org/10.3390/math14010124
