An Optimization Method of Large-Scale Video Stream Concurrent Transmission for Edge Computing

Abstract

1. Introduction

- (1) In this work, we propose an edge data stream access and forwarding scheme based on DPDK and link aggregation to meet the communication performance requirements within edge clusters. The scheme is important for large-scale, data-intensive computing jobs arising from artificial intelligence applications.

- (2) The scheme makes full use of DPDK's parallel packet processing support for multiple cores and multiple NICs. It adopts Q-learning-based data stream scheduling, which not only aggregates the bandwidth of multiple NICs but also adapts to the high-speed, high-concurrency, high-traffic, and strongly real-time characteristics of communication systems, achieving higher throughput at lower cost [5,6,7,8].

2. Research Background

2.1. Streaming Media and Related Concepts

2.2. Edge Live Broadcast and Recorded Broadcast Cluster

3. Related Work

4. Formalized Description of the Problem

4.1. Formal Description

4.2. Symbol Descriptions

5. System Model

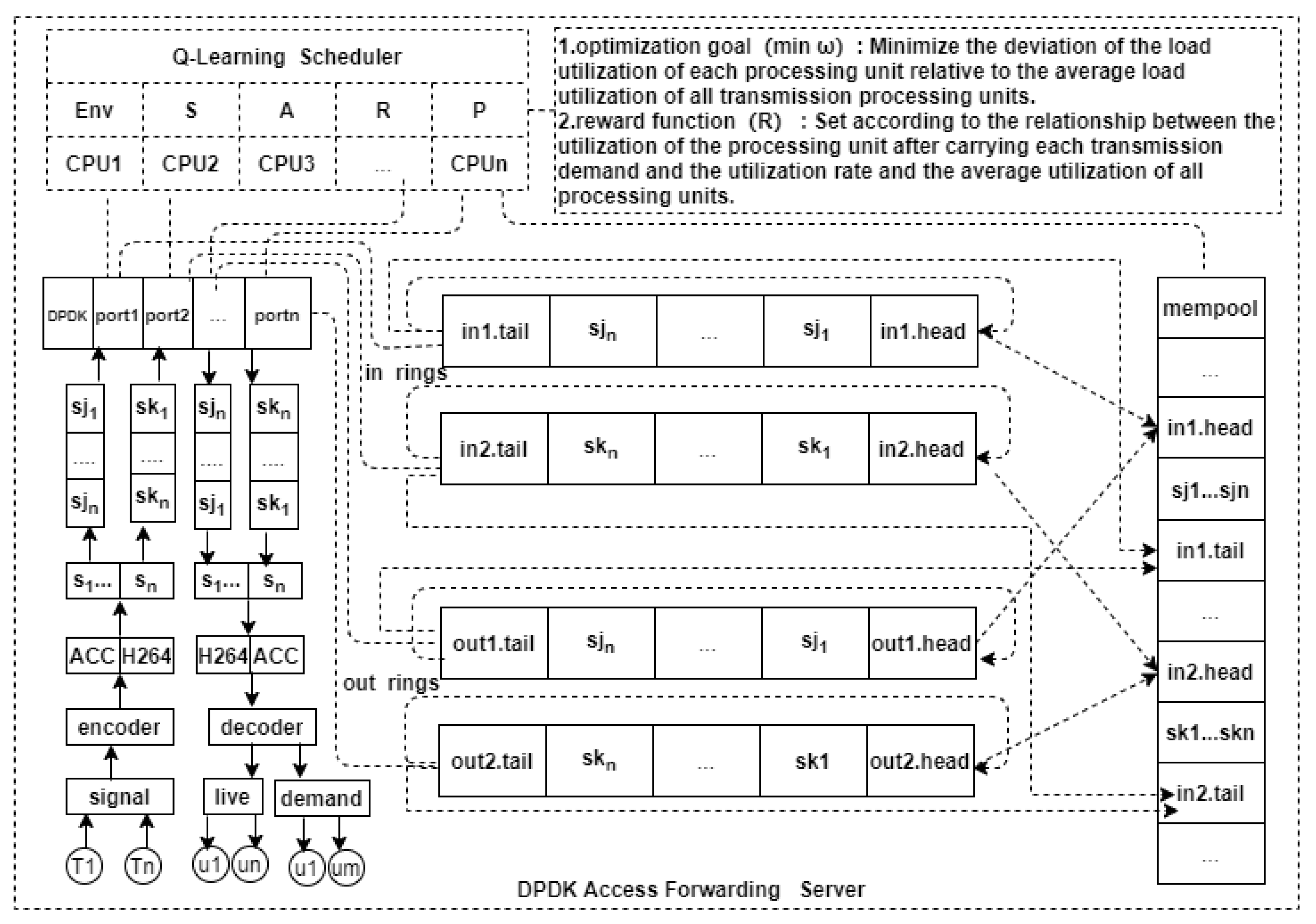

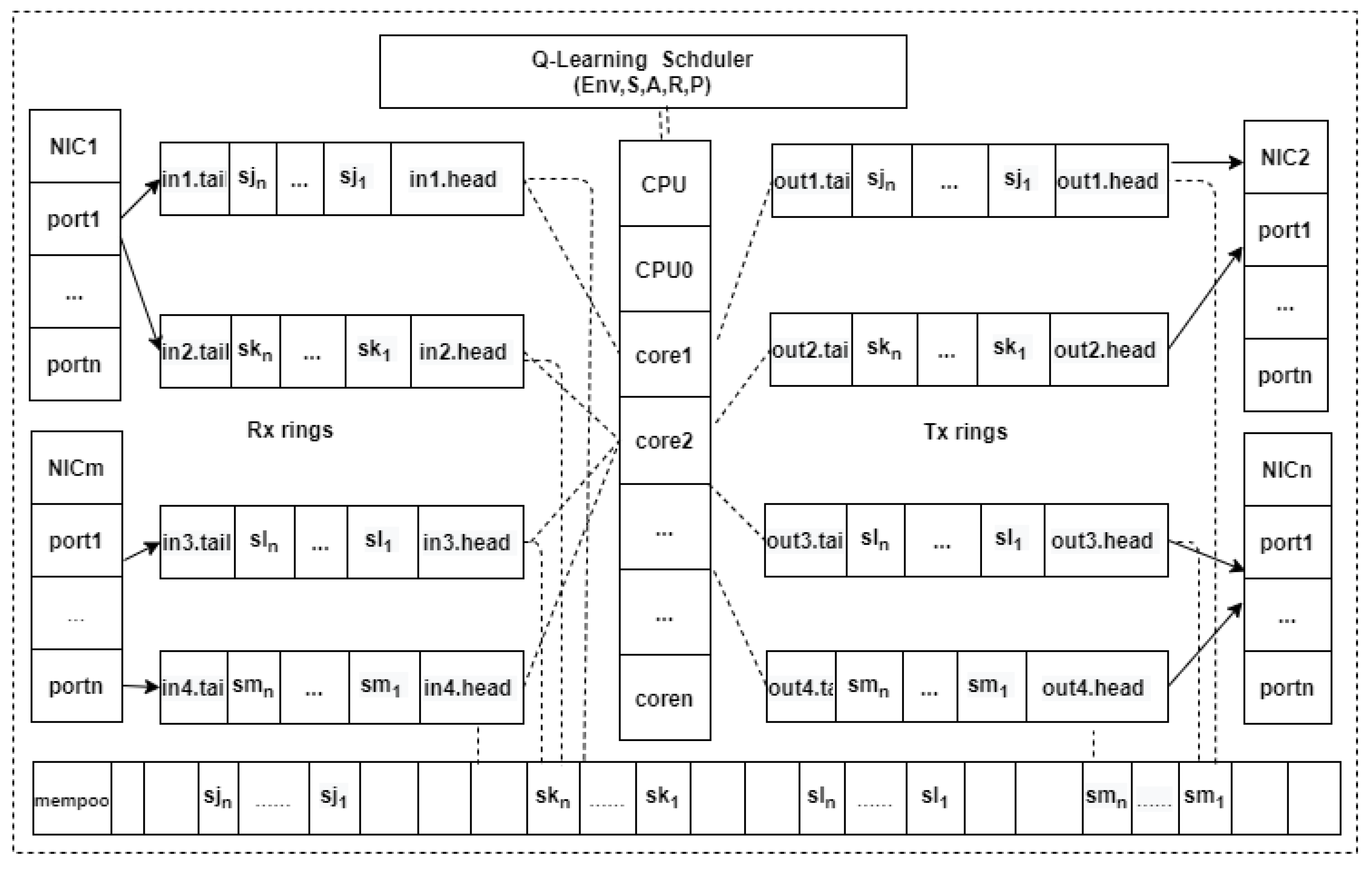

5.1. System Framework Design

- (1) Q-learning scheduler

- (2) DPDK multi-NIC multi-port transmission module

- (3) Memory pool and multiple ring queues for receiving and forwarding (Mempool and I/O rings): this component manages the system's memory pool, the multiple ring queues on the receiving and forwarding sides, the number of buffers, and the table of forwarding core–buffer relationships, and it handles data flow overflow [18,19].
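As a rough illustration of the queue bookkeeping this component performs, the sketch below models a bounded receive ring that counts dropped packets on overflow, together with a table mapping forwarding cores to rings. The names `RxRing` and `core_to_ring`, the ring size of 512, and the drop-on-overflow policy are illustrative assumptions; this is plain Python and not DPDK's `rte_ring` or `rte_mempool` API.

```python
from collections import deque


class RxRing:
    """Bounded FIFO standing in for one receive/forward ring (hypothetical)."""

    def __init__(self, size):
        self.size = size          # maximum number of queued packets
        self.buf = deque()
        self.dropped = 0          # packets rejected because the ring was full

    def enqueue(self, pkt):
        """Queue a packet; on overflow, count the drop and return False."""
        if len(self.buf) >= self.size:
            self.dropped += 1
            return False
        self.buf.append(pkt)
        return True

    def dequeue_burst(self, n):
        """Remove and return up to n packets in arrival order."""
        burst = []
        while self.buf and len(burst) < n:
            burst.append(self.buf.popleft())
        return burst


# Assumed layout: one ring per forwarding core (core id -> ring).
core_to_ring = {core: RxRing(size=512) for core in range(4)}

ring = core_to_ring[0]
for seq in range(600):            # offer more packets than the ring can hold
    ring.enqueue(seq)
print(len(ring.buf), ring.dropped)
```

Counting drops per ring, rather than silently discarding, is one simple way to expose overflow to a scheduler that wants to steer traffic away from a saturated core.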

5.2. System Working Principle

6. The Q-Learning-Based Scheduling for Video Stream Transmission

6.1. Model Description

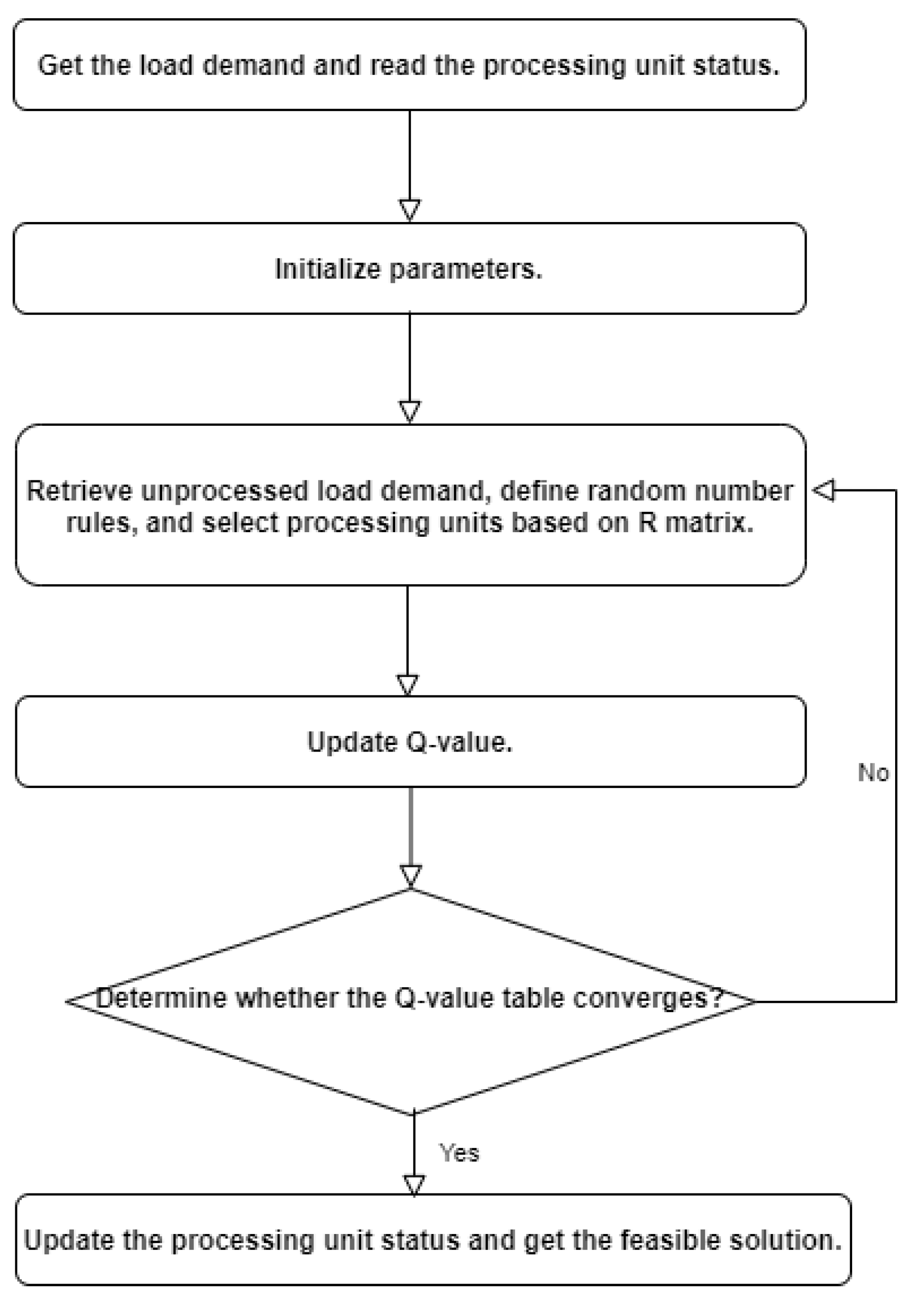

6.2. Scheduling Algorithm Design

6.2.1. Algorithm Principle

6.2.2. Algorithm Implementation

- (1) When the utilization rate of the processing unit after taking on the transfer demand would exceed the upper utilization limit, the reward value of this processing unit is set to 0, i.e., this processing unit cannot meet the current transfer demand.

- (2) When the remaining available load capacity of the processing unit is greater than the load of the transfer demand, and the utilization rate of the processing unit after taking on the transfer demand is not greater than the average utilization rate of all processing units, this processing unit is the optimal choice. This rule serves to balance the load.

- (3) When the remaining available load capacity of the processing unit is greater than the load of the transfer demand, and the utilization rate of the processing unit after taking on the transfer demand is greater than the average utilization rate of all processing units but does not exceed the upper utilization limit, the processing unit is the second-best choice. A discount term is therefore applied to the reward from (2) to reduce the R-value of this processing unit. The R-value is not set directly to 0 so that a feasible solution can be found more quickly.
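The three rules above can be sketched as a small reward function. The text does not give the exact reward formula, so the base score `1 - util_after`, the multiplicative `penalty` used as the discount term, and the default `max_util=0.9` are all assumptions made for illustration only.

```python
def reward(load, capacity, demand, avg_util, max_util=0.9, penalty=0.5):
    """Reward for placing `demand` on a unit that carries `load` of `capacity`.

    Assumed scoring (not from the paper): 1 - utilization after placement,
    multiplied by `penalty` when the unit ends up above the average.
    """
    util_after = (load + demand) / capacity
    if util_after > max_util:
        return 0.0                      # rule (1): unit cannot take the demand
    # With max_util < 1, passing rule (1) implies remaining capacity > demand.
    base = 1.0 - util_after
    if util_after <= avg_util:
        return base                     # rule (2): optimal choice
    return penalty * base               # rule (3): second-best choice


# Three units with capacity 100 and a demand of 20, average utilization 0.5.
for load in (80, 10, 50):
    print(load, reward(load, 100, 20, avg_util=0.5))
```

Keeping a small positive reward in the second-best case means such units remain selectable, which matches the stated reason for not zeroing their R-value.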

7. System Performance Optimization Methods

7.1. Memory Buffer Optimization Strategy

7.2. Data Flow Distribution Optimization Strategy

7.3. Link Aggregation

8. Experimental Design and Analysis

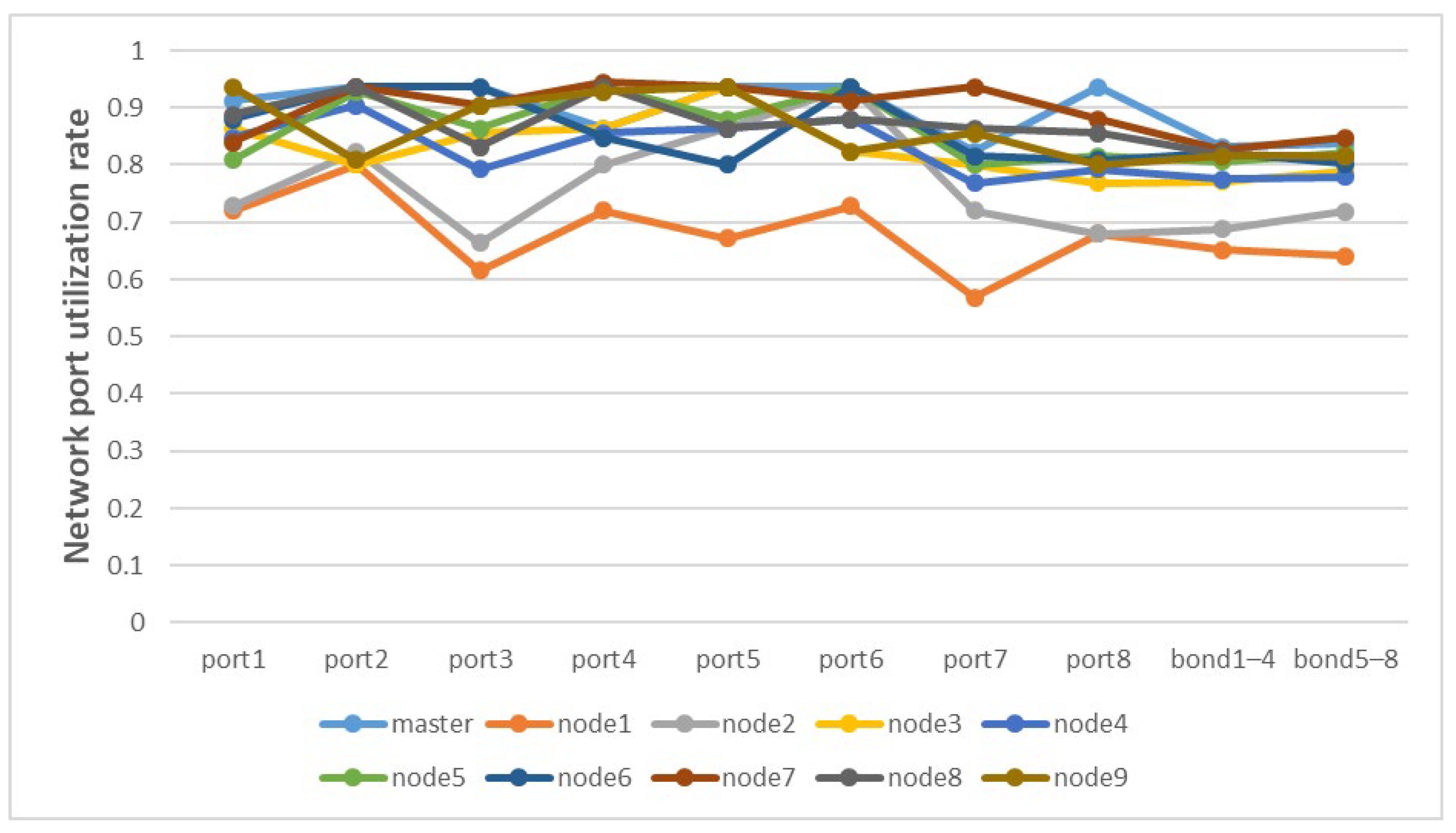

8.1. Port Rate

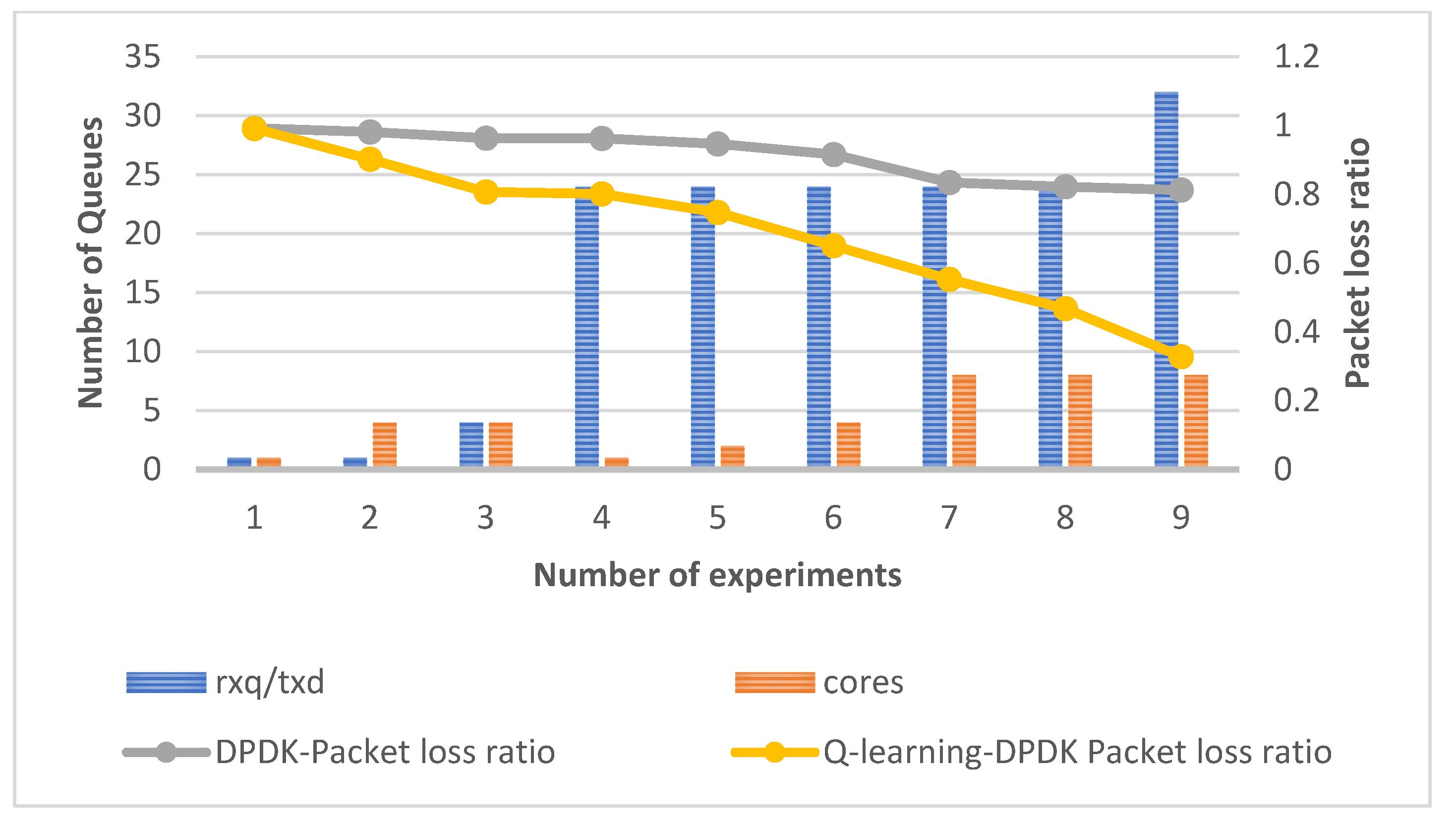

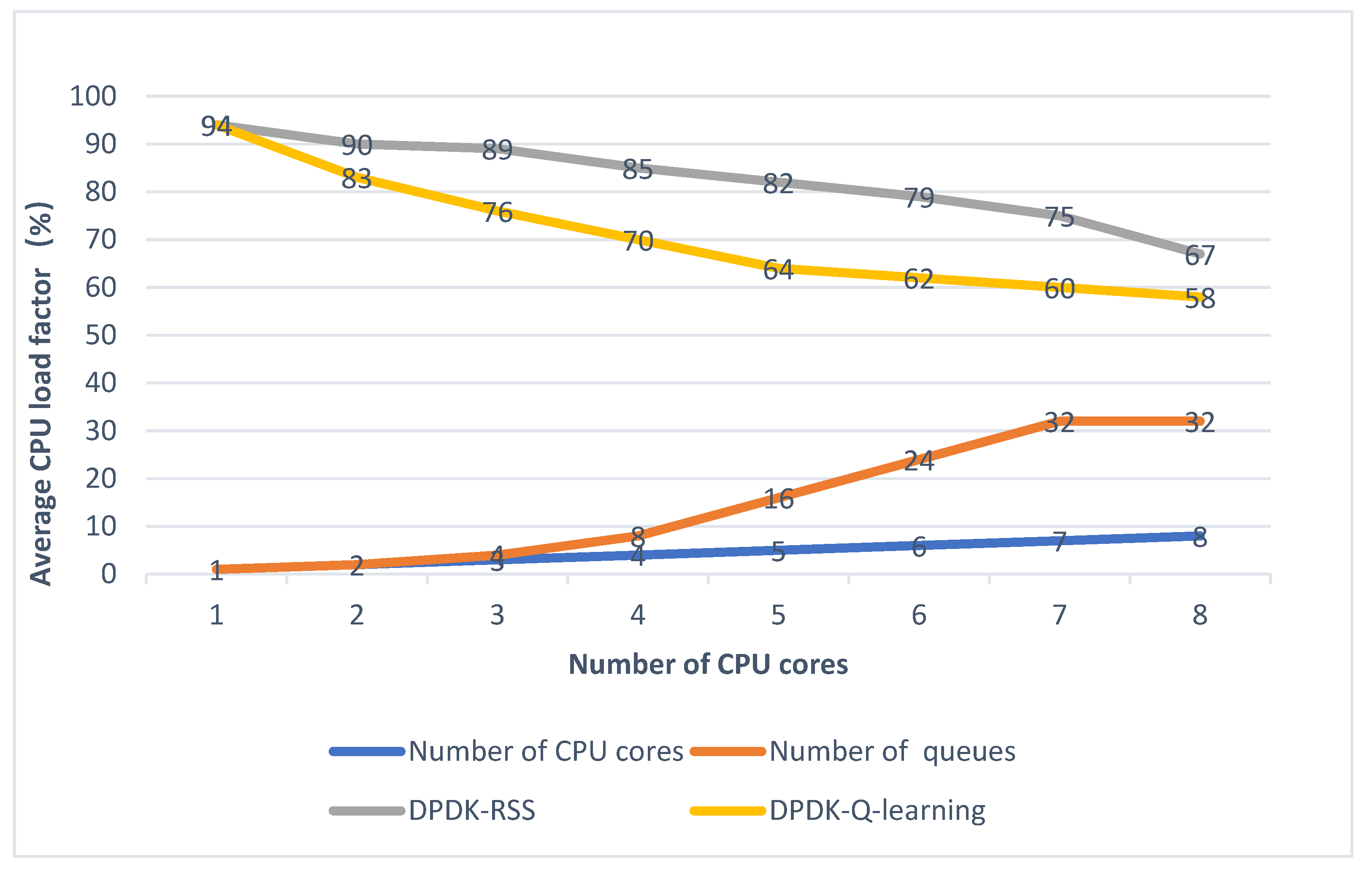

8.2. Kernel Counts, Queue Counts, and Queue Length Tests

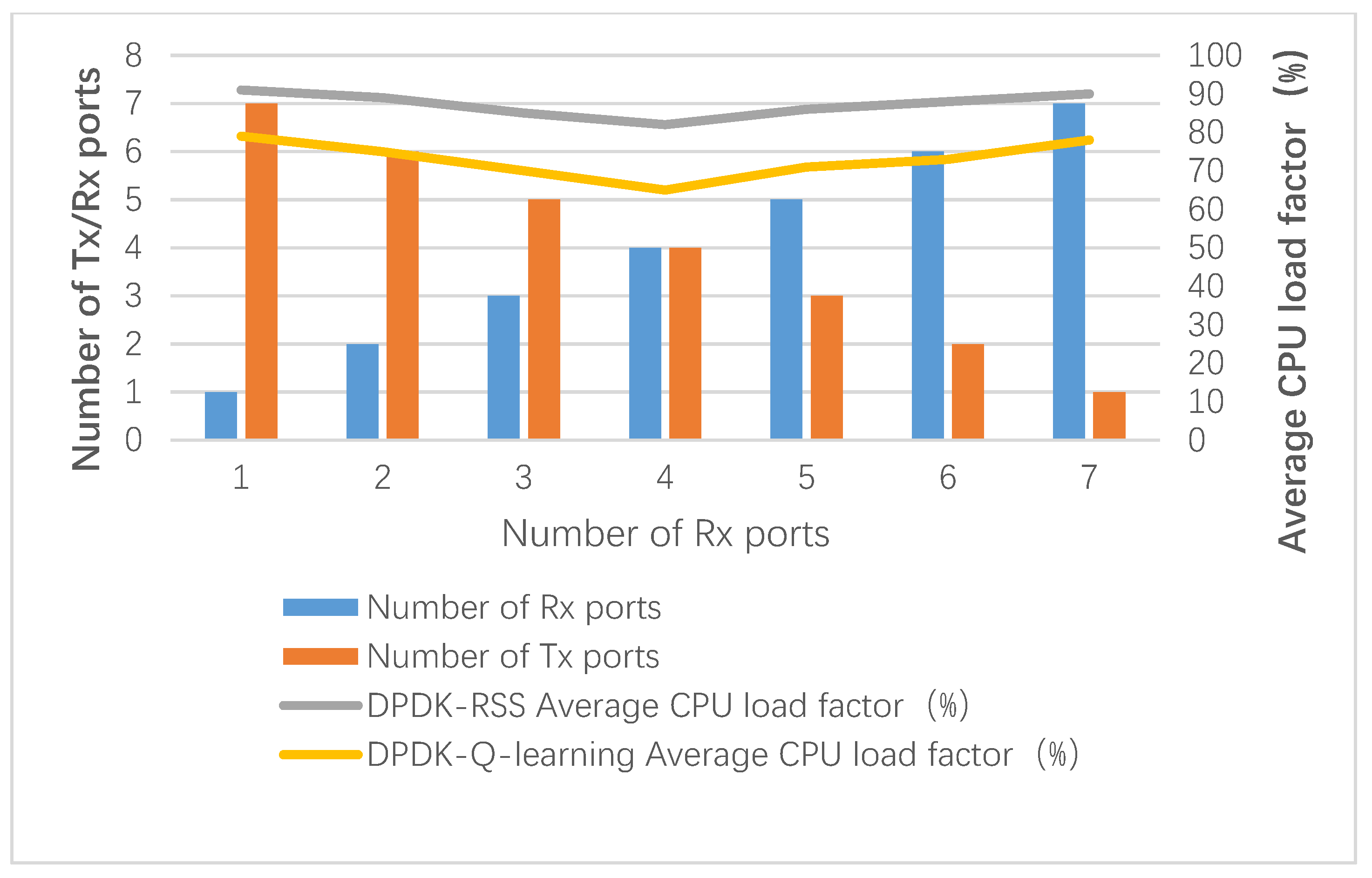

8.3. Adjustment in the Number of Ports

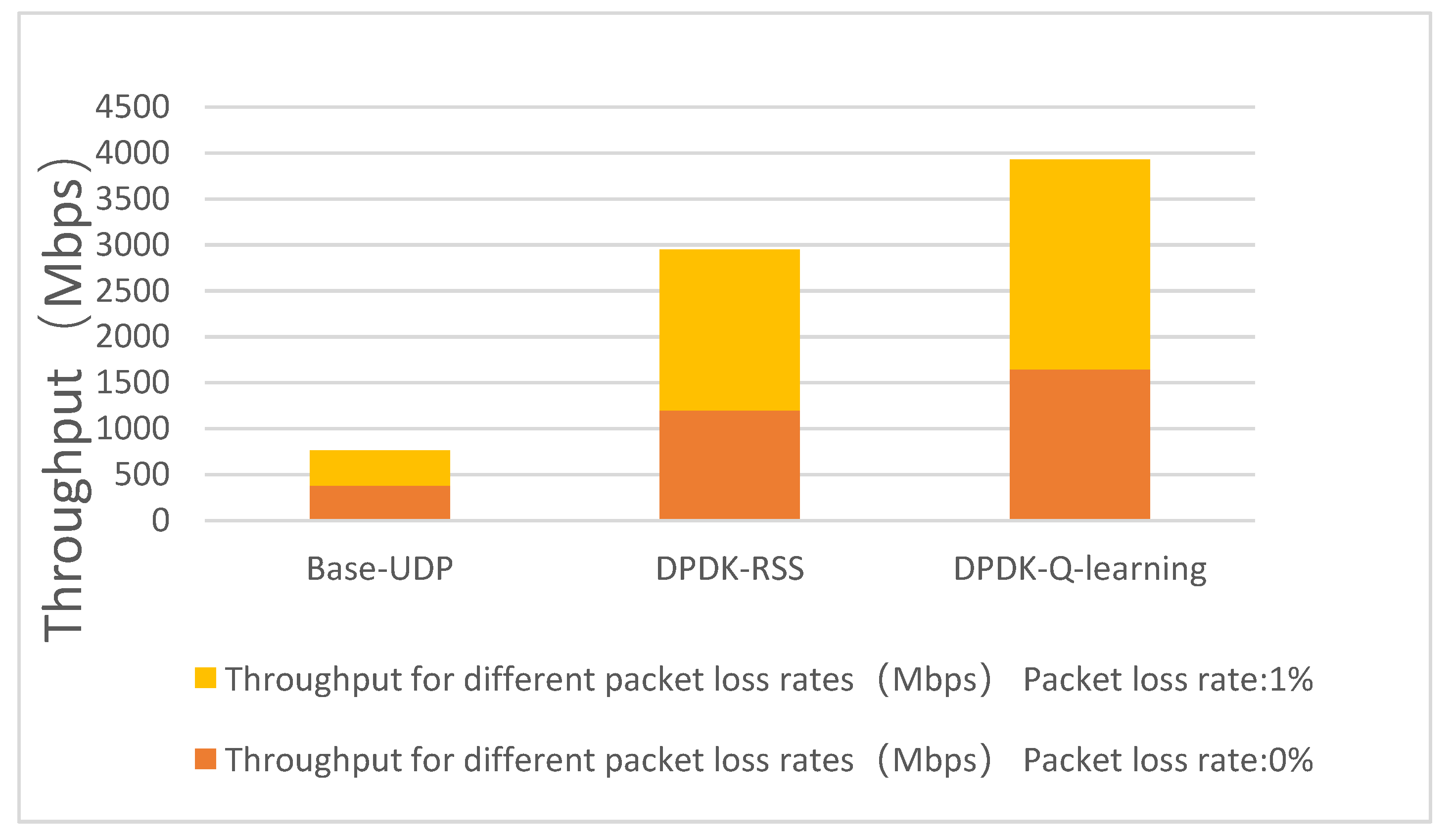

8.4. Algorithm Comparison

9. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Cisco. Cisco Annual Internet Report (2018–2023) White Paper. 2020. Available online: https://www.cisco.com/c/en/us/solutions/collateral/executive-perspectives/annual-internet-report/white-paper-c11-741490.html (accessed on 2 May 2023).

- Zheng, Y.; Xiaowu, H.; Jiaxing, W.; Jing, W.; Yi, Z. Edge computing Technology for Real time Video Stream Analysis. China Sci. Inf. Sci. 2022, 52, 1–53.

- Jedari, B.; Premsankar, G.; Illahi, G.; Di Francesco, M.; Mehrabi, A.; Ylä-Jääski, A. Video caching, analytics, and delivery at the wireless edge: A survey and future directions. IEEE Commun. Surv. Tutor. 2021, 23, 431–471.

- Altamimi, S.D.; Shirmohammadi, S. QoE-Fair DASH Video Streaming Using Server-side Reinforcement Learning. ACM Trans. Multimed. Comput. Commun. Appl. 2020, 16, 1–21.

- Ueno, Y.; Nakamura, R.; Kuga, Y.; Esaki, H. P2PNIC: High-Speed Packet Forwarding by Direct Communication between NICs. In Proceedings of the IEEE INFOCOM 2021 - IEEE Conference on Computer Communications Workshops (INFOCOM WKSHPS), Vancouver, BC, Canada, 10–13 May 2021; pp. 1–6.

- Li, C.; Chen, Q. Dynamic monitoring model based on DPDK parallel communication. J. Comput. Appl. 2020, 40, 335–341.

- Wu, M.; Chen, Q.; Wang, J. A Flexible High-Speed Bypass Parallel Communication Mechanism for GPU Cluster. IEEE Access 2020, 8, 103256–103272.

- Zhou, D.; Fan, B.; Lim, H.; Kaminsky, M.; Andersen, D.G. Scalable, high performance ethernet forwarding with CuckooSwitch. In Proceedings of the Ninth ACM Conference on Emerging Networking Experiments and Technologies (CoNEXT ’13), New York, NY, USA, 9–12 December 2013; pp. 97–108.

- Hu, N.; Cen, X.; Luan, F.; Sun, L.; Wu, C. A Novel Video Transmission Optimization Mechanism Based on Reinforcement Learning and Edge Computing. Mob. Inf. Syst. 2021, 2021, 6258200.

- Qi, Z. A novel video delivery mechanism for caching-enabled networks. Multimed. Tools Appl. 2020, 79, 25535–25549.

- Gao, Y.; Wei, X.; Kang, B.; Chen, J. Edge Intelligence Empowered Cross-Modal Streaming Transmission. IEEE Netw. 2020, 35, 236–243.

- Xu, T.; Chen, X.; Wu, C.; Wang, J.; Zheng, R.; Liu, D.; Tan, Y.; Ren, A.; Li, J. 3DS: An Efficient DPDK-based Data Distribution Service for Distributed Real-time Applications. HPCC/DSS/SmartCity/DependSys. In Proceedings of the 8th DependSys 2022, Hainan, China, 18–20 December 2022; pp. 1283–1290.

- Tashtarian, F.; Falanji, R.; Bentaleb, A.; Erfanian, A.; Mashhadi, P.S.; Timmerer, C.; Hellwagner, H.; Zimmermann, R. Quality Optimization of Live Streaming Services over HTTP with Reinforcement Learning. In Proceedings of the 2021 IEEE Global Communications Conference (GLOBECOM), Madrid, Spain, 7–11 December 2021; pp. 1–6.

- Park, P.K.; Moon, S.; Hong, S.; Kim, T. Experimental Study of Zero-Copy Performance for Immersive Streaming Service in Linux. In Proceedings of the 2022 13th International Conference on Information and Communication Technology Convergence (ICTC), Jeju Island, Republic of Korea, 19–21 October 2022; pp. 2284–2288.

- Zeng, Z.; Monis, L.; Qi, S.; Ramakrishnan, K.K. DEMO: MiddleNet: A High-Performance, Lightweight, Unified NFV & Middlebox Framework. In Proceedings of the 2022 IEEE 8th International Conference on Network Softwarization (NetSoft), Milan, Italy, 27 June–1 July 2022; pp. 246–248.

- Wu, M.; Chen, Q.; Wang, J. Toward low CPU usage and efficient DPDK communication in a cluster. J. Supercomput. 2022, 78, 1852–1884.

- Shi, J.; Pesavento, D.; Benmohamed, L. NDN-DPDK: NDN Forwarding at 100 Gbps on Commodity Hardware. In Proceedings of the 7th ACM Conference on Information-Centric Networking (ICN ’20), Virtual Event, 29 September–1 October 2020.

- Haecki, R.; Humbel, L.; Achermann, R.; Cock, D.; Schwyn, D.; Roscoe, T. CleanQ: A lightweight, uniform, formally specified interface for intra-machine data transfer. arXiv 2019, arXiv:1911.08773.

- Schramm, N.; Runge, T.M.; Wolfinger, B.E. The Impact of Cache Partitioning on Software-Based Packet Processing. In Proceedings of the 2019 International Conference on Networked Systems (NetSys), Munich, Germany, 18–21 March 2019.

- Zou, P.; Ozel, O.; Subramaniam, S. Optimizing Information Freshness Through Computation-Transmission Tradeoff and Queue Management in Edge Computing. IEEE/ACM Trans. Netw. 2021, 29, 949–963.

- Intel. Intel DPDK: Programmers Guide [OL]. Available online: https://doc.DPDK.org/guides/index.html (accessed on 1 July 2022).

- Kai, L.; Lin, Y.; Xiangzhan, Y.; Yang, H. Dynamic load balancing method for traffic based on DPDK. Intell. Comput. Appl. 2017, 7, 85–89, 91.

- Li, C.; Song, L.; Zeng, X. An Adaptive Throughput-First Packet Scheduling Algorithm for DPDK-Based Packet Processing Systems. Futur. Internet 2021, 13, 78.

- Pandey, A.; Bargaje, G.; Krishnam, S.; Anand, T.; Monis, L.; Tahiliani, M.P. DPDK-FQM: Framework for Queue Management Algorithms in DPDK. In Proceedings of the 2020 IEEE Conference on Network Function Virtualization and Software Defined Networks (NFV-SDN), Leganes, Spain, 10–12 November 2020.

- Xi, S.; Li, F.; Wang, X. FlowValve: Packet Scheduling Offloaded on NP-based Smart NICs. In Proceedings of the 2022 IEEE 42nd International Conference on Distributed Computing Systems (ICDCS), Bologna, Italy, 10–13 July 2022; pp. 347–358.

- Heping, F.; Shuguang, L.; Yongyi, R.; Kunhua, Z. Integrated scheduling optimization of multiple data centers based on deep reinforcement learning. J. Comput. Appl. 2023, 1, 1–11.

- Li, X. An efficient data evacuation strategy using multi-objective reinforcement learning. Appl. Intell. 2022, 52, 7498–7512.

- Yi, S.; Li, X.; Wang, H.; Qin, Y.; Yan, J. Energy-aware disaster backup among cloud datacenters using multi-objective reinforcement learning in software defined network. Concurr. Comput. Pract. Exp. 2022, 34, e6588.

- Qin, Y.; Wang, H.; Yi, S.; Li, X.; Zhai, L. An energy-aware scheduling algorithm for budget-constrained scientific workflows based on multi-objective reinforcement learning. J. Supercomput. 2019, 76, 455–480.

- Qin, Y.; Wang, H.; Yi, S.; Li, X.; Zhai, L. A multi-objective reinforcement learning algorithm for time constrained scientific workflow scheduling in clouds. Front. Comput. Sci. 2021, 15, 24–35.

- Xie, J.; Miao, M.; Ren, F.; Cheng, W.; Shu, R.; Zhang, T. Overload Detecting in High Performance Network I/O Frameworks. In Proceedings of the IEEE 2nd International Conference on Data Science and Systems (HPCC/SmartCity/DSS), Sydney, NSW, Australia, 12–14 December 2016.

- Wiles, K. Pktgen-DPDK [EB/OL]. Available online: https://github.com/pktgen/Pktgen-DPDK (accessed on 3 July 2022).

| Symbol | Description |
|---|---|
| UN | the set of transfer processing units |
| $unit_{ij}$ | the transfer CPU processing thread (core) corresponding to the jth interface of the ith NIC |
| data | the set of data blocks to be transferred for processing |
| $data_k$ | the load demanded by the kth data block |
|  | the original load of $unit_{ij}$ before scheduling starts |
|  | the existing load of $unit_{ij}$ at a given point in the scheduling process |
|  | the total load capacity of $unit_{ij}$ |
| $avg\_load_{cur}$ | the average load utilization rate of all units in the current round |
|  | a 0–1 variable, set to 1 if $data_k$ is assigned to $unit_{ij}$ for processing and to 0 otherwise |
|  | the deviation of the load utilization of $unit_{ij}$ in the current round relative to the average load utilization of all transfer processing units |
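To make the utilization-related entries concrete, the following sketch computes per-unit utilization, the current-round average, and each unit's deviation from that average. Treating the deviation as a signed difference (utilization minus average) is an assumption, as are the function name `utilization_stats` and the sample loads; units are keyed by hypothetical `(NIC i, port j)` pairs.

```python
def utilization_stats(cur_load, capacity):
    """Return per-unit utilization, its average, and each unit's deviation."""
    util = {u: cur_load[u] / capacity[u] for u in cur_load}
    avg = sum(util.values()) / len(util)
    # Assumed definition: signed difference from the average utilization.
    dev = {u: util[u] - avg for u in util}
    return util, avg, dev


# Hypothetical loads for 2 NICs x 2 ports, each unit with capacity 100.
cur_load = {(0, 0): 30.0, (0, 1): 50.0, (1, 0): 10.0, (1, 1): 70.0}
capacity = {unit: 100.0 for unit in cur_load}

util, avg_load_cur, deviation = utilization_stats(cur_load, capacity)
print(avg_load_cur)
print(deviation)
```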

1: Initialize the load allocation array LOAD_DIST and read in the load demand of each data block to be transferred.

2: Initialize all elements of the Q-value table old_Q to 0.

3: Initialize the discount factor $\gamma$ ($0 < \gamma < 0.5$; the default is 0.3) used in the Q-value table update equation, and the reward value matrix R. Each element of R is the reward value obtained by assigning the processing unit for port j of NIC i. Each NIC port is mapped to a receiving queue.

4: If no processing unit has been assigned for the current load demand:

4.1: Draw a random number rand. If rand < ε, randomly select an available processing unit in the current state to reach the next state; otherwise, select the processing unit with the largest R value according to the R matrix.

4.2: Update the Q-value table:

$$Q(s,a) = R(s,a) + \gamma \max_{a'} Q(s',a') \tag{7}$$

Here $Q(s,a)$ is the value corresponding to the selected action in state $s$, $R(s,a)$ is the reward value obtained after selecting a processing unit, $\gamma$ is the discount factor ($0 < \gamma < 0.5$), and $\max_{a'} Q(s',a')$ is the expected value obtained after selecting the processing unit with the largest reward value in state $s'$.

4.3: Select the next load demand, take the next state as the current state, and go back to step 4.

5: If the Q-value table has not converged, add 1 to the number of iterations, reinitialize the current state as the starting point, set old_Q to the current Q-value table, and go back to step 4 to continue learning. If the Q-value table has converged, obtain the load allocation scheme from the Q-value table and add the selected set of processing units in the allocation scheme to the load allocation array LOAD_DIST.

6: Exit the loop, return the LOAD_DIST array, and update the processing unit status.

1: Initialize the available unit set and the selection operation set.

2: For each demand:

3: Start Q-learning;

4: If the Q table has converged:

5: Break;

6: Use the Q table to obtain the unit selection decision.

7: Add the selection decision to the selection operation set.

8: Display the load distribution result.
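The two listings above can be sketched end to end as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the state is taken to be the index of the current demand, the action is the chosen unit, the update is assumed to be Q(s,a) = R(s,a) + γ·max Q(s',a') as the step descriptions suggest, and the reward formula, `max_util`, `penalty`, `epsilon`, `tol`, and all function names are hypothetical.

```python
import random


def reward(load, capacity, demand, avg_util, max_util=0.9, penalty=0.5):
    """Assumed reward: 0 above the limit, else 1 - utilization (penalized above average)."""
    util_after = (load + demand) / capacity
    if util_after > max_util:
        return 0.0
    base = 1.0 - util_after
    return base if util_after <= avg_util else penalty * base


def rewards_for(demand, loads, capacities):
    """Reward of every unit for one demand, given the current loads."""
    avg_util = sum(l / c for l, c in zip(loads, capacities)) / len(loads)
    return [reward(l, c, demand, avg_util) for l, c in zip(loads, capacities)]


def q_schedule(demands, capacities, gamma=0.3, epsilon=0.1,
               max_iters=200, tol=1e-6, seed=0):
    rng = random.Random(seed)
    n_units = len(capacities)
    # One row per demand (state) plus a terminal row that stays at 0.
    q = [[0.0] * n_units for _ in range(len(demands) + 1)]

    for _ in range(max_iters):                       # repeat until convergence
        old_q = [row[:] for row in q]
        loads = [0.0] * n_units                      # restart from the initial state
        for s, demand in enumerate(demands):         # one demand at a time
            r = rewards_for(demand, loads, capacities)
            feasible = [u for u in range(n_units) if r[u] > 0.0]
            if not feasible:
                break
            if rng.random() < epsilon:               # explore a random feasible unit
                a = rng.choice(feasible)
            else:                                    # otherwise take the largest R value
                a = max(feasible, key=lambda u: r[u])
            q[s][a] = r[a] + gamma * max(q[s + 1])   # assumed Q-value update
            loads[a] += demand                       # move on to the next demand
        delta = max(abs(x - y) for row, old in zip(q, old_q)
                    for x, y in zip(row, old))
        if delta < tol:
            break

    # Read the allocation out of the Q table, skipping infeasible units.
    load_dist, loads = [], [0.0] * n_units
    for s, demand in enumerate(demands):
        r = rewards_for(demand, loads, capacities)
        feasible = [u for u in range(n_units) if r[u] > 0.0]
        if not feasible:
            load_dist.append(None)                   # no unit can carry this demand
            continue
        a = max(feasible, key=lambda u: q[s][u])
        load_dist.append(a)
        loads[a] += demand
    return load_dist, loads


load_dist, final_loads = q_schedule([30, 30, 30, 30], [100, 100])
print(load_dist, final_loads)
```

Because the reward depends on the loads accumulated earlier in an episode, the Q table is not guaranteed to settle under exploration; the sketch therefore also bounds the number of iterations with `max_iters`.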

| Name | Model/Version | Description |
|---|---|---|
| CPU | Intel(R) Xeon(R) E3-1230 V2 @ 3.3 GHz | 2 × 4 cores with 8 threads |
| Memory | DDR3 1600 MHz | 32 GB |
| OS | Debian 9.0/CentOS 7.0 | - |
| Kernel | Linux version 3.10.0-862.14.4.el7.x86_64 | - |
| NIC | I350-T4, 4 ports, 1 Gb/s per port, PCIe x4 | - |
| DPDK | DPDK 17.11.3 | 8 ports (2 × 4) |
| OVS | 2.9.0 | - |

| Packet Length (Bytes) | pps | Mbps | CPU Cores | Rx/Tx Queue Count | Rx/Tx Queue Length | DPDK Packets Received | DPDK Delay (s) | Q-Learning-DPDK Packets Received | Q-Learning-DPDK Delay (s) |
|---|---|---|---|---|---|---|---|---|---|
| 512 | 230,000 | 960 | 1 | 24 | 512 | 100,000,000 | 36.53 | 100,000,000 | 31.35 |
| 384 | 295,600 | 950 | 1 | 24 | 512 | 100,000,000 | 32.12 | 100,000,000 | 26.17 |
| 256 | 512,000 | 952 | 1 | 24 | 512 | 100,000,000 | 18.74 | 100,000,000 | 12.54 |
| 128 | 1,019,000 | 997 | 1 | 24 | 512 | 100,000,000 | 12.35 | 100,000,000 | 8.67 |
| 64 | 1,483,000 | 996 | 1 | 1 | 512 | 99,271,456 | 9.27 | 99,482,355 | 6.54 |
| 64 | 1,483,000 | 996 | 1 | 24 | 512 | 99,907,154 | 9.14 | 99,950,126 | 6.32 |
| 64 | 1,483,000 | 996 | 1 | 24 | 512 | 99,147,518 | 78.56 | 99,467,518 | 61.16 |
| 64 | 1,483,000 | 996 | 2 | 24 | 512 | 99,192,432 | 74.44 | 99,252,432 | 58.47 |
| 64 | 1,483,000 | 996 | 4 | 24 | 512 | 99,084,322 | 68.35 | 99,784,322 | 60.41 |
| 64 | 1,483,000 | 996 | 8 | 24 | 512 | 99,160,316 | 69.42 | 99,862,157 | 63.22 |
| 64 | 1,483,000 | 996 | 8 | 24 | 4096 | 99,117,416 | 75.33 | 99,999,366 | 65.36 |
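One way to read the two delay columns is as a relative reduction, computed as (DPDK delay − Q-Learning-DPDK delay) / DPDK delay. The short sketch below applies this to three rows copied from the table; the helper name `delay_reduction_pct` and the choice of rows are illustrative, and the percentages are derived here rather than reported by the source.

```python
# (packet length in bytes, CPU cores, queue length, DPDK delay s, Q-Learning-DPDK delay s)
rows = [
    (512, 1, 512, 36.53, 31.35),
    (256, 1, 512, 18.74, 12.54),
    (64, 8, 4096, 75.33, 65.36),
]


def delay_reduction_pct(baseline, improved):
    """Relative delay reduction of `improved` versus `baseline`, in percent."""
    return 100.0 * (baseline - improved) / baseline


for length, cores, qlen, dpdk, qdpdk in rows:
    print(f"{length:>4} B, {cores} core(s), queue length {qlen}: "
          f"{delay_reduction_pct(dpdk, qdpdk):.1f}% lower delay")
```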


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Liu, H.; Chen, Q.; Liu, P. An Optimization Method of Large-Scale Video Stream Concurrent Transmission for Edge Computing. Mathematics 2023, 11, 2622. https://doi.org/10.3390/math11122622
