1. Introduction

Currently, a significant multidisciplinary effort is devoted to developing technologies that can be applied for training in simulated environments. Such training can be used in different scenarios, from studying drivers’ behavior and improving road safety to pilot training, the latter of which has been one of the leading forces behind the development of these systems since their early years. Studying these technologies effectively requires understanding human sensory systems and their influence, together with proper instrumentation and modeling tools.

During simulator training, body movements cannot precisely match what is shown on screen, causing a mismatch in sensory information and leading to simulator sickness, as described in [1,2]. This discrepancy is caused by several factors, such as delays due to tracking and rendering of the output image and physical limitations of the movement range of training systems. Consequently, attempts to overcome this problem cover several research directions, including but not limited to dynamic motion systems, movement forecasting, and galvanic vestibular stimulation [3]. However, the problem can also be reversed, so that the body’s reaction is used to estimate the accuracy of simulated motion. One such indicator is the ocular response to accelerations enforced by an external system or device, such as a flight simulator.

Due to the size and position of the fovea, which is the part of the human retina with a high density of light-sensitive photoreceptors, clear vision is achieved when the object of interest is moving slower than 4°/s. A dedicated mechanism exists so that the region of interest on the acquired image stays on the retina as the body moves. It is called the vestibular–ocular reflex (VOR), and it is one of the interaction processes between the human body and the surrounding environment. It operates via a neural path between the vestibular and oculomotor systems: the eyes compensate for head rotations by rotating in the opposite direction [4].
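The compensation described above can be illustrated with a minimal sketch. It assumes an idealized reflex in which the eye counter-rotates at a constant fraction (`vor_gain`, a hypothetical parameter introduced here) of the head velocity; only the 4°/s limit comes from the text.

```python
CLEAR_VISION_LIMIT_DPS = 4.0  # image velocity limit for clear vision quoted in the text


def retinal_slip(head_velocity_dps, vor_gain=1.0):
    """Residual image velocity (deg/s) of a stationary target.

    Assumption: the eye rotates at -vor_gain * head velocity, so the
    residual slip is head_velocity * (1 - vor_gain).
    """
    eye_velocity_dps = -vor_gain * head_velocity_dps
    return head_velocity_dps + eye_velocity_dps


def vision_is_clear(head_velocity_dps, vor_gain=1.0):
    """True when the residual slip stays below the clear-vision limit."""
    return abs(retinal_slip(head_velocity_dps, vor_gain)) < CLEAR_VISION_LIMIT_DPS


if __name__ == "__main__":
    for gain in (1.0, 0.95, 0.5):
        slip = retinal_slip(60.0, gain)
        print(f"gain={gain:.2f} slip={slip:.1f} deg/s clear={vision_is_clear(60.0, gain)}")
```

Under this simplification, a head rotation of 60°/s remains compatible with clear vision only while the reflex compensates for nearly all of the motion.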

Incorrect functioning of VOR disrupts clear vision, for example through the inability to compensate for micromovements of the head. However, because the connection between external accelerations and angular velocities and the vestibular response is not entirely understood, VOR cannot be estimated directly. A natural way to study VOR is to observe it using immersive technologies (such as virtual or mixed reality) and to produce reliable and accurate mathematical models of VOR with human motion as input and an electrophysiological response as output. This response could be electroencephalographic signals, oculographic information, or eye motion data, among others. Despite the importance of designing such mathematical models, the number and complexity of the physiological aspects involved make it difficult to generate specific models for given motion cues that use a reasonably small number of parameters [5].

An alternative way to represent VOR dynamics is to use nonparametric models that reproduce the aforementioned input–output relationship while maintaining a tractable numerical complexity. Several methodologies propose nonparametric models, including adaptive autoregressive systems, polynomial approximations, swarm optimization techniques, and artificial neural networks. Nevertheless, the dynamic nature of VOR limits the applicability of these models across a wide variety of working scenarios. Dynamic approximate models can also be considered as modeling options for systems describing VOR dynamics. In particular, differential neural networks (DNNs) have long been used as efficient modeling strategies for dynamic systems with uncertain mathematical models that are affected by perturbations and modeling inaccuracies. DNN-based models could therefore be well suited to represent the VOR dynamics [6,7]. Still, the selection of activation functions is open to discussion, considering that sigmoidal or other monotonic functions may not capture the complex electrophysiological VOR response.

The Izhikevich model of neuron activity [8] is a bioinspired, electrophysiology-based approximate mathematical model. Izhikevich artificial neurons have been shown to be an efficient model of diverse neuron responses [9]. Accordingly, an aggregation of several Izhikevich artificial neurons is referred to as an electrophysiology-inspired approximated DNN, or spiking DNN [10,11].

Because of the modeling abilities of DNN using Izhikevich neuron dynamics, this paper proposes a method to approximate oculomotor response using the described spiking DNN model. The main contributions of this study can be summarized as follows:

- a novel modeling strategy is proposed for the ocular response to head movements, based on a nonparametric spiking DNN;

- a new aggregated system is used to confirm the validity of the proposed model. It consists of an experimental system with a motion platform, inertial sensors, an eye-tracking device for acquiring data, and a neural network for processing it.

This manuscript is organized as follows. In Section 2, we provide a general description of the vestibular–ocular response. In Section 3, we introduce the uncertain model of ocular response, which is then formulated as a spiking-differential-neural-network-based nonparametric identifier in Section 4. In Section 5, we describe the general modeling strategy and the process of collecting experimental data. In Section 6, we cover the processing of the obtained data and assess the performance of the proposed model. The conclusions and final remarks in Section 7 close the study.

2. Description of Vestibular–Ocular Connection

As jet aviation and then crewed spaceflight progressed, they brought attention to several physiological phenomena, among them the vestibular–ocular reflex. Its disruption was stated to lead to a deterioration of a person’s condition in the pioneering work by A.L. Yarbus [12]. Possible causes of the disorder include biological prerequisites such as vestibular neuronitis [13] or congenital predisposition [14], as well as environmental change. Crewed spaceflight provided an essential context for studying the activity of the vestibular system and its connection to the rest of the body. The papers by I. Kozlovskaya and L. Kornilova (Institute of Biomedical Problems, Moscow, Russia) [15,16] examine vestibular–sensory disorders in a weightless environment and a methodology for diagnosing VOR functioning.

A general approach for detecting dysfunctions is to compare actual data with a reference. For vestibular–sensory disorders, the reference takes the form of a VOR model. The most common method of creating such models is to describe the system as a dynamic one formed by differential and difference equations. One such example is [17], which uses a bilateral model of an eye. It describes ocular dynamics based on the activity of the extraocular muscles connected to the right and left sides of an eye. These muscles are more sensitive to positive difference, so they are more active when the difference is negative [18]. The downside of this model is that muscle behavior is described using a large number of parameters, which requires the application of genetic algorithms to improve the model accuracy [19].

An alternative method was proposed in [20]. It uses statistical methods to approximate the actual dynamics of optokinetic–vestibule–cervical and vestibular nystagmus. The typical dynamics of the slow phase of nystagmus drive the values of the five parameters of the model. With known dynamics of head rotations and depending on supporting visual information, this model generates both phases of nystagmus. However, such modeling approaches do not provide enough flexibility and require vast processing power to solve the underlying optimization problem.

3. Modeling Ocular Response to Enforced Acceleration

This study focuses on developing a nonparametric model based on a single-layer DNN able to characterize the ocular response. The network uses artificial neurons implemented as Izhikevich models, so it operates as a spiking DNN, or SDNN for short. The proposed model produces a vector of two angular coordinates of ocular rotation from the linear accelerations and angular velocities sensed by the vestibular system, which serve as the input. Training input data come from a tracking system, and the ground truth output comes from a bidimensional eye tracker. The two signals were resampled so that both provide samples at the same time instants.

Let the system state be the vector of coordinates of the eye movement. Its evolution over time is forced by information from the vestibular system: linear acceleration and angular velocity. These values are obtained with respect to the body motion.

The electrophysiological system relating inertial information to ocular movement operates through the physiological process of VOR. The continuous dynamics of the system state vector, coupled with the input vector u, justifies modeling this relation with the uncertain dynamics defined by the differential equation (1).

In (1), the state vector is driven by the input vector u through the uncertain dynamics described by the vector function f, which is Lipschitz with respect to its first argument with a positive constant. The model also includes a vector of external perturbations to the system that are not involved in the modeling process. These perturbations belong to a class that is admissible considering the nature of the inputs and signals that affect the VOR dynamics.
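As a reading aid, a generic form consistent with this description can be sketched as follows. Only f and u are named in the text; the symbols x, ξ, L_f and Υ, the additive placement of the perturbation, and its boundedness are assumptions introduced here for illustration.

```latex
% Assumed generic form of the uncertain model (x: two eye coordinates, u: inertial input)
\dot{x}(t) = f\bigl(x(t), u(t)\bigr) + \xi(t), \qquad x(t) \in \mathbb{R}^{2},

% Lipschitz property of f in its first argument, with constant L_f > 0
\bigl\| f(x_1, u) - f(x_2, u) \bigr\| \le L_f \, \| x_1 - x_2 \|,

% Assumed class of admissible perturbations, with \Upsilon > 0
\| \xi(t) \|^{2} \le \Upsilon \quad \text{for all } t \ge 0 .
```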

4. Formulation of Spiking-Differential-Neural-Network-Based Model

For the vestibular–ocular system with the uncertain mathematical model (1), the SDNN formulation assumes the form (2), whose components are as follows. A vector defines the SDNN state. A matrix describes the linear component of the network dynamics; this matrix is selected to be Hurwitz to provide boundedness of the state. The two following components form the approximation of the uncertain system with a traditional SDNN: they combine two weight matrices with a vector and a matrix of activation functions, respectively. The choice of the exact size of these activation terms is left to the SDNN designer, depending on the expected approximation error and on the methodologies for selecting the size of each layer of general artificial neural networks.
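The Hurwitz requirement on the linear-component matrix can be checked before an identifier is run. The sketch below is restricted to the 2×2 case, which matches the two eye coordinates, and relies on the Routh–Hurwitz condition for a second-order characteristic polynomial; the function name and the example matrices are hypothetical.

```python
def is_hurwitz_2x2(a):
    """True when both eigenvalues of a real 2x2 matrix have negative real part.

    The characteristic polynomial is s^2 - trace(a) * s + det(a), so the
    Routh-Hurwitz condition reduces to trace(a) < 0 and det(a) > 0.
    """
    (a11, a12), (a21, a22) = a
    trace = a11 + a22
    det = a11 * a22 - a12 * a21
    return trace < 0.0 and det > 0.0


if __name__ == "__main__":
    candidates = {
        "stable node": [[-2.0, 1.0], [0.0, -3.0]],
        "saddle": [[0.5, 0.0], [0.0, -1.0]],
        "undamped oscillator": [[0.0, 1.0], [-1.0, 0.0]],
    }
    for name, matrix in candidates.items():
        print(name, is_hurwitz_2x2(matrix))
```

For larger state dimensions, the same check is usually done by computing the eigenvalues numerically and testing the sign of their real parts.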

The dynamic nature of real biological neural networks inspired the proposal in this study to use activation functions based on neuron evolution. Thus, each component of the vector and the matrix of activation functions is described as the output of the Izhikevich neuron model [8]. The model is governed by three scalar parameters. Its response variable characterizes the artificial neuron response and is used as the model output in (2), and its input is scaled by a vector of input weights.
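To make the behavior of such a dynamic activation function concrete, the sketch below integrates the widely used form of the Izhikevich neuron from [8] with a forward Euler scheme. The parameter names `a`, `b`, `c`, `d`, the regular-spiking values, the step size, and the function name are assumptions taken from the common presentation of that model; they are not necessarily the parameterization used in this study.

```python
def simulate_izhikevich(current, a=0.02, b=0.2, c=-65.0, d=8.0,
                        t_end_ms=1000.0, dt_ms=0.5):
    """Simulate one Izhikevich neuron driven by a constant input current.

    Returns the spike times (ms) and the membrane potential trace (mV),
    with the potential clipped at the 30 mV spike peak.
    """
    v = c          # membrane potential
    u = b * v      # recovery variable
    spike_times = []
    trace = []
    for k in range(int(t_end_ms / dt_ms)):
        if v >= 30.0:
            # Spike detected: record it and apply the after-spike reset.
            spike_times.append(k * dt_ms)
            trace.append(30.0)
            v = c
            u += d
        else:
            trace.append(v)
        dv = 0.04 * v * v + 5.0 * v + 140.0 - u + current
        du = a * (b * v - u)
        v += dt_ms * dv
        u += dt_ms * du
    return spike_times, trace


if __name__ == "__main__":
    spikes, _ = simulate_izhikevich(10.0)
    print(f"{len(spikes)} spikes in 1 s for a constant input of 10")
```

Unlike a sigmoid, the output of this unit depends on its own internal state and history, which is the property the SDNN exploits.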

The approximation error function in (2) results from the selection of a finite number of Izhikevich neurons in the proposed SDNN design. Based on SDNN modeling characteristics, this error belongs to a set that is determined by the dynamics of the Izhikevich artificial neuron.

The perturbation term in (2) characterizes external perturbations, that is, elements affecting the VOR system dynamics while being independent of the state values. This term can be said to belong to a set defined by a positive scalar. Together, the approximation error and the perturbation term represent the degree of vagueness of the underlying electrophysiological system when describing the dynamic activation functions of the SDNN representation.

Based on the described approximate dynamical model, this study considers a model for the uncertain dynamics of the VOR based on the design of an adaptive SDNN, given in (5). The state vector of this adaptive model defines the approximated dynamics of the two eye coordinates. The right-hand side of the VOR dynamics consists of spiking neurons and satisfies the model structure described in (2). The weight parameters in (5) must be adjusted by a set of learning laws. These laws must be derived so that the proposed identifier, operating under them and with identical input, can reproduce the state trajectories of (1). This leads to the following problem formulation for the modeling process based on Izhikevich artificial neurons.

Problem statement for the nonparametric modeling with SDNN.

The problem considered in this study is to design the nonlinear algorithm that adjusts the weights in a way that ensures the identification error Δ has a stable equilibrium point at the origin, in the sense of (6). In (6), one quantity defines the quality of approximation of the proposed SDNN, and a positive definite matrix adjusts the influence of the different components of the modeling error vector on the overall approximation quality.

This problem can be solved using Lyapunov stability theory by deriving the dynamics of the two weight matrices from the identification error Δ. To develop the stability study, note that the dynamics of Δ admits the ordinary differential equation (7).

Applying Lyapunov-based stability analysis confirms that the identification error has an ultimate upper bound [21,22]. The suggested Lyapunov function has a quadratic form that depends on the identification error and the SDNN weights. The dynamics of these weights must be selected in such a way that the identification error has an ultimate bound. The following theorem demonstrates that such a bound exists.

Theorem 1. If there exist positive definite matrices and a positive and bounded scalar such that the matrix inequality (8) has at least one positive definite solution, then the learning laws (9), with their scalar gains and with any matrix satisfying the accompanying condition, guarantee that the identification error Δ converges to a ball centered at the origin with a finite ultimate bound.

Proof of Theorem 1. Taking into consideration the dynamics of the identification error Δ presented in (7), one may propose an energetic function depending on the deviation between the system state and the identifier state, as well as the deviation between the weights estimated with the identifier and the actual values of the approximation.

For the particular case of the SDNN considered in this study, the aforementioned energetic function E is built from three terms. The first uses the tracking error Δ, whose dynamics has been defined in (7), measured in a weighted norm of finite-dimensional vectors with a positive definite and symmetric matrix. The other two terms are matrix norms of the weight deviations. For this study, the trace operator is selected to define the matrix norms of the weight deviations.

Notice that the function E is Lyapunov-like: it is positive definite, takes a null value when its three arguments vanish, and is radially unbounded. Its full time derivative contains a term that admits the upper bound (14), in which the constants involved have been presented in the learning laws for the proposed identifier.

The transition in (14) was obtained by applying Young’s inequality [21] a number of times; the inequality is valid for any two matrices of compatible dimensions and any positive definite and symmetric matrix. Substituting the result in (14) into the right-hand side of the time derivative of E, and then adding and subtracting suitable terms, yields a new expression for the right-hand side of this derivative.

Using the learning laws (9) and the matrix inequality (8) presented in the theorem statement transforms the right-hand side of the derivative of E further. Using the definition of the Lyapunov function then yields a differential inequality for E.

Integrating this last inequality and following the convergence-to-an-invariant-set scheme presented in [21] proves the ultimate boundedness of the identification error as well as of the weights. □
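Two standard facts behind the steps of this proof can be stated explicitly. The symbols X, Y, Λ, α and β are generic placeholders introduced here for illustration and are not the specific matrices and constants of Theorem 1; the second fact additionally assumes that the final differential inequality is linear in E.

```latex
% Matrix form of Young's inequality, for X, Y \in \mathbb{R}^{r \times s}
% and any symmetric positive definite \Lambda \in \mathbb{R}^{r \times r}:
X^{\top} Y + Y^{\top} X \;\le\; X^{\top} \Lambda X + Y^{\top} \Lambda^{-1} Y .

% Ultimate bound from a linear differential inequality:
% if E(t) \ge 0 and \dot{E}(t) \le -\alpha E(t) + \beta with \alpha > 0, \beta \ge 0, then
E(t) \;\le\; e^{-\alpha t} E(0) + \frac{\beta}{\alpha}\bigl(1 - e^{-\alpha t}\bigr),
\qquad
\limsup_{t \to \infty} E(t) \;\le\; \frac{\beta}{\alpha} .
```

The first inequality follows from expanding the positive semidefinite product of Λ^{1/2}X − Λ^{−1/2}Y with its own transpose. The second shows why a residual constant β in the derivative of the energetic function leads to convergence to a ball rather than to the origin itself.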

The obtained values of

and

that minimize the expression (

6) may be fixed and used further for solving the prediction problem. The scheme of the whole process (identification and prediction) is shown in

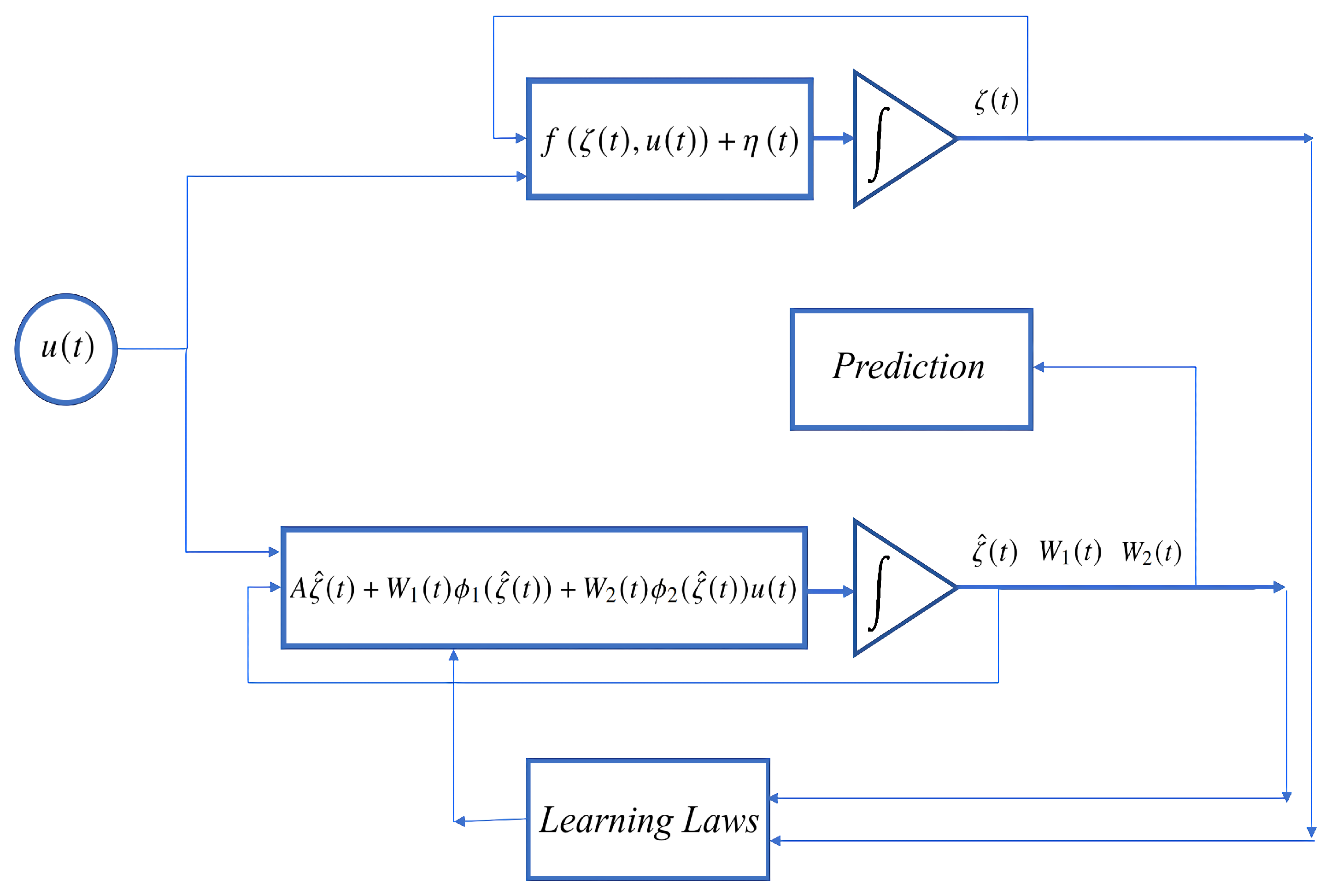

Figure 1.

5. Modeling Process and Experimental Validation

The proposed approximate model was tested in an experiment that collected data from a volunteer using an instrumented motion device with controlled accelerations. The data were recorded at a predefined frequency and then fed offline to the proposed SDNN-based identifier. This section details all aspects of the experiment.

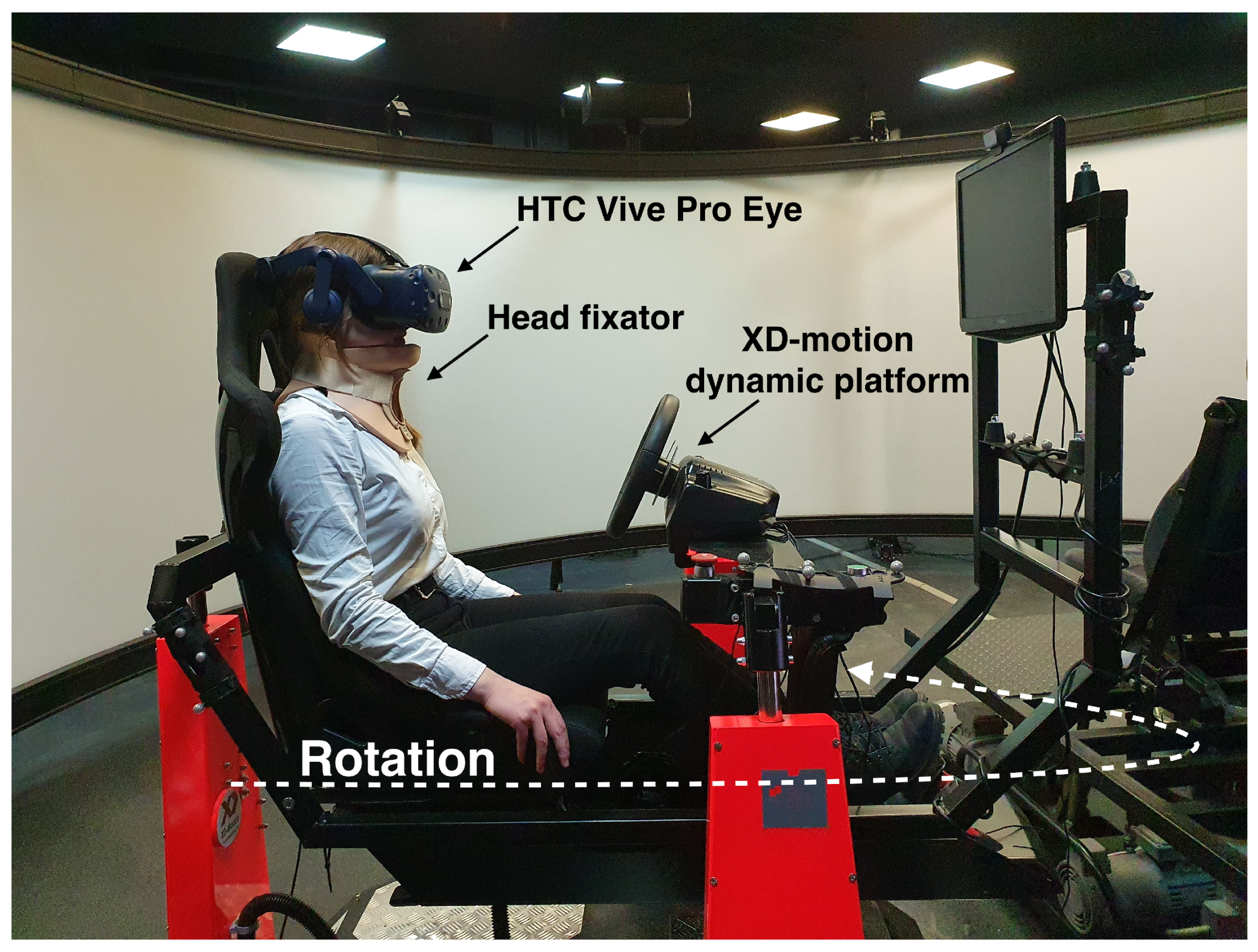

A rotating dynamic platform was used to enforce controlled rotational movements on a test subject. This experiment used an XD-motion platform with 4 degrees of freedom produced by Vympel corporation. The data collecting system is based on the HTC Vive Pro Eye virtual reality headset. The headset’s position and orientation quaternion in a fixed coordinate system were obtained from the SteamVR tracking system. SRanipal software gathered data provided by a built-in eye-tracking system and produced view origin and direction vectors for each eye as the output at a maximum frequency of 120 Hz. The whole experimental setup is shown in Figure 2. The resulting ocular movements and head dynamics were recorded and later processed to be modeled by the proposed SDNN.

The experimental process is as follows. First, the test subject puts on the headset and adjusts its belts so that it stays firmly fixed on the head throughout the whole experiment. Then, the eye tracker is calibrated according to the SRanipal documentation and guidelines. After the calibration procedure is finished, any adjustment of the headset by the test subject leads to resetting the experiment, according to the SRanipal guidelines. The test subject is then seated upright on the dynamic platform. The platform performs rotational movements around the vertical axis, alternating clockwise and counterclockwise. Movement frequency and amplitude remain constant for 30 s, after which a 20-s break takes place and new movement parameters are loaded. The order of these parameter sets is randomized. The test subject is not given any indication of these parameters. Visual and audio cues of motion are further reduced, with the headset screen showing solid black and the headphones playing static during the experiment.

The choice of movement pattern is based on several factors. First, the horizontal semicircular canals are stimulated more than the other two for this kind of movement, so the ocular response is also primarily horizontal, which allows the analysis to focus on a single axis. Second, the platform has the largest range of motion on this rotational axis, which allows for more diverse movement patterns. Additionally, pitch and roll rotations on this platform are performed by adjusting the length of the legs. However, this adjustment happens even in an idle state when no rotation is being performed, leading to additional platform vibrations that introduce a parasitic ocular response.

During the processing phase, each movement pattern is handled individually. The leading and trailing 3 s of each recording are trimmed. The view direction vector is converted from a headset coordinate system into angles of eye rotation in horizontal and vertical planes. The head coordinates data were sampled at a lower frequency than eye-tracking data, so the former were smoothed using a Gaussian filter. Head orientation quaternion was converted into Euler angles. After leaving only data corresponding to horizontal angles, angular velocity and linear acceleration were calculated.
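The processing steps above can be sketched with the standard library alone. The axis conventions (forward axis +z, vertical axis +y), the edge handling of the filter, and all function names are assumptions made for illustration; the actual coordinate conventions of the headset and the filter settings used in the study are not stated. Linear acceleration is not computed here because the text does not specify how it was derived.

```python
import math


def trim(samples, rate_hz, seconds=3.0):
    """Drop the leading and trailing `seconds` of a recording."""
    cut = int(round(seconds * rate_hz))
    return samples[cut:len(samples) - cut]


def direction_to_angles(dx, dy, dz):
    """Horizontal and vertical gaze angles in degrees (forward axis assumed +z)."""
    horizontal = math.degrees(math.atan2(dx, dz))
    vertical = math.degrees(math.atan2(dy, math.hypot(dx, dz)))
    return horizontal, vertical


def quaternion_to_yaw(w, x, y, z):
    """Rotation about the vertical axis in degrees (vertical axis assumed +y)."""
    return math.degrees(math.atan2(2.0 * (w * y + x * z),
                                   1.0 - 2.0 * (x * x + y * y)))


def gaussian_smooth(samples, sigma):
    """Gaussian filter with a 3-sigma kernel, renormalized near the edges."""
    radius = max(1, int(3 * sigma))
    offsets = range(-radius, radius + 1)
    kernel = [math.exp(-0.5 * (k / sigma) ** 2) for k in offsets]
    smoothed = []
    for i in range(len(samples)):
        acc = 0.0
        norm = 0.0
        for k, weight in zip(offsets, kernel):
            j = i + k
            if 0 <= j < len(samples):
                acc += weight * samples[j]
                norm += weight
        smoothed.append(acc / norm)
    return smoothed


def derivative(values, dt):
    """Central differences in the interior, one-sided differences at the ends."""
    n = len(values)
    if n < 2:
        raise ValueError("at least two samples are required")
    out = []
    for i in range(n):
        lo, hi = max(i - 1, 0), min(i + 1, n - 1)
        out.append((values[hi] - values[lo]) / ((hi - lo) * dt))
    return out
```

A typical use would convert each orientation sample with `quaternion_to_yaw`, smooth the resulting angle series with `gaussian_smooth`, and pass it to `derivative` to obtain the angular velocity.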

6. Numerical Simulation

The data collected for two motion patterns were used to test the proposed SDNN model. These patterns are 25-degree rotations at a rate of 18 cycles per minute and 50-degree rotations at a rate of 4.8 cycles per minute. They are later referred to as high- and low-frequency movements, respectively. As described earlier, linear accelerations and angular velocities formed the system input u, while eye rotation angles were used as the reference state.
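For orientation, the two patterns can be converted into frequencies and peak angular velocities. The sketch assumes a sinusoidal rotation profile and interprets the stated angle as the amplitude of the oscillation; both are assumptions, since the exact platform trajectory is not given. The 30 s duration and 120 Hz rate are taken from the experimental description.

```python
import math


def peak_velocity(amplitude_deg, cycles_per_minute):
    """Peak angular velocity (deg/s) of a sinusoidal rotation."""
    return amplitude_deg * 2.0 * math.pi * cycles_per_minute / 60.0


def yaw_profile(amplitude_deg, cycles_per_minute, duration_s=30.0, rate_hz=120.0):
    """Sampled yaw angle (deg) and angular velocity (deg/s) of the assumed profile."""
    omega = 2.0 * math.pi * cycles_per_minute / 60.0
    times = [k / rate_hz for k in range(int(duration_s * rate_hz))]
    angle = [amplitude_deg * math.sin(omega * t) for t in times]
    velocity = [amplitude_deg * omega * math.cos(omega * t) for t in times]
    return times, angle, velocity


if __name__ == "__main__":
    for label, amplitude, rate in (("high", 25.0, 18.0), ("low", 50.0, 4.8)):
        print(f"{label}-frequency: {rate / 60.0:.2f} Hz, "
              f"peak velocity {peak_velocity(amplitude, rate):.1f} deg/s")
```

Under these assumptions the high-frequency pattern corresponds to 0.3 Hz and the low-frequency pattern to 0.08 Hz.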

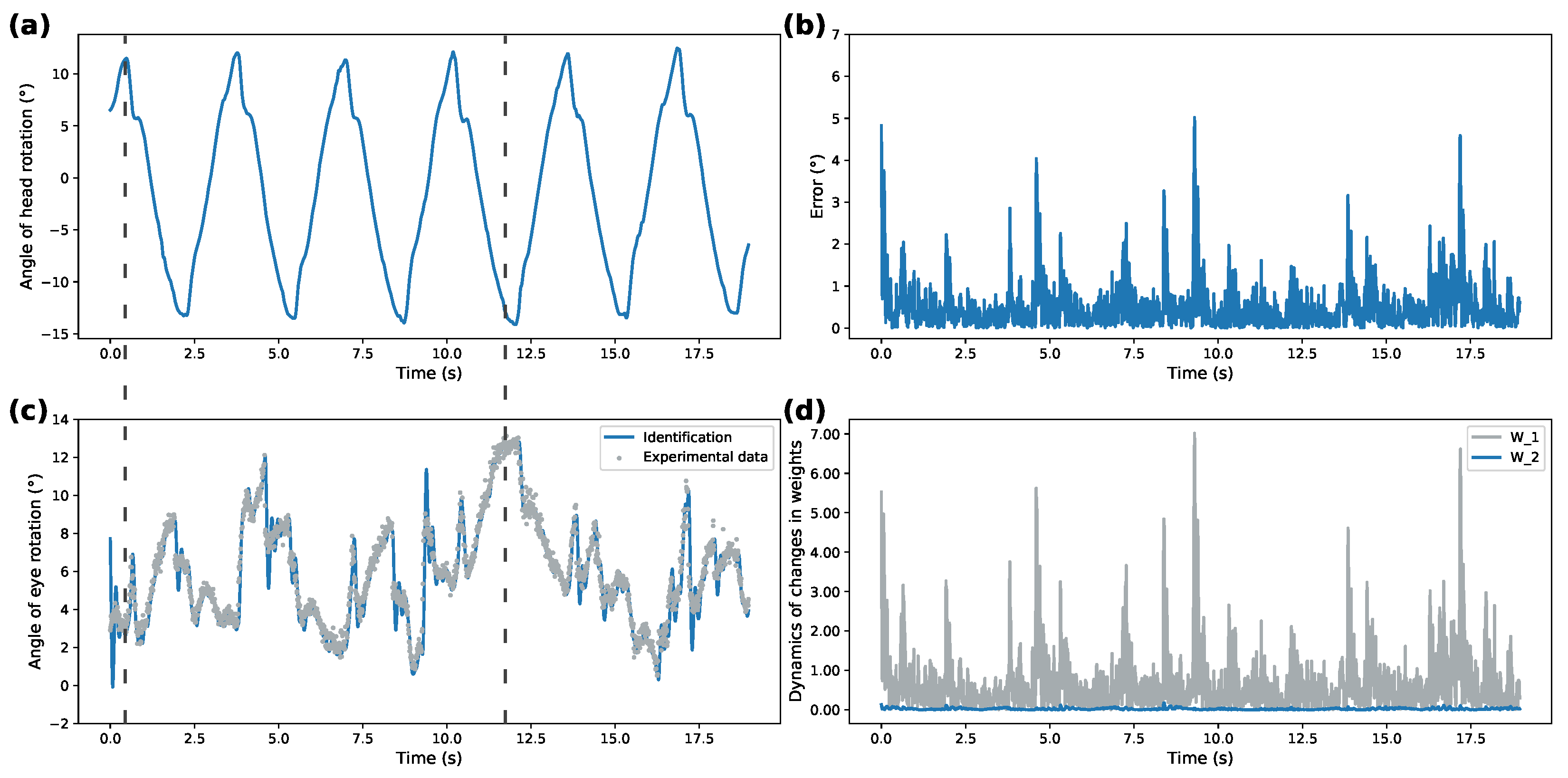

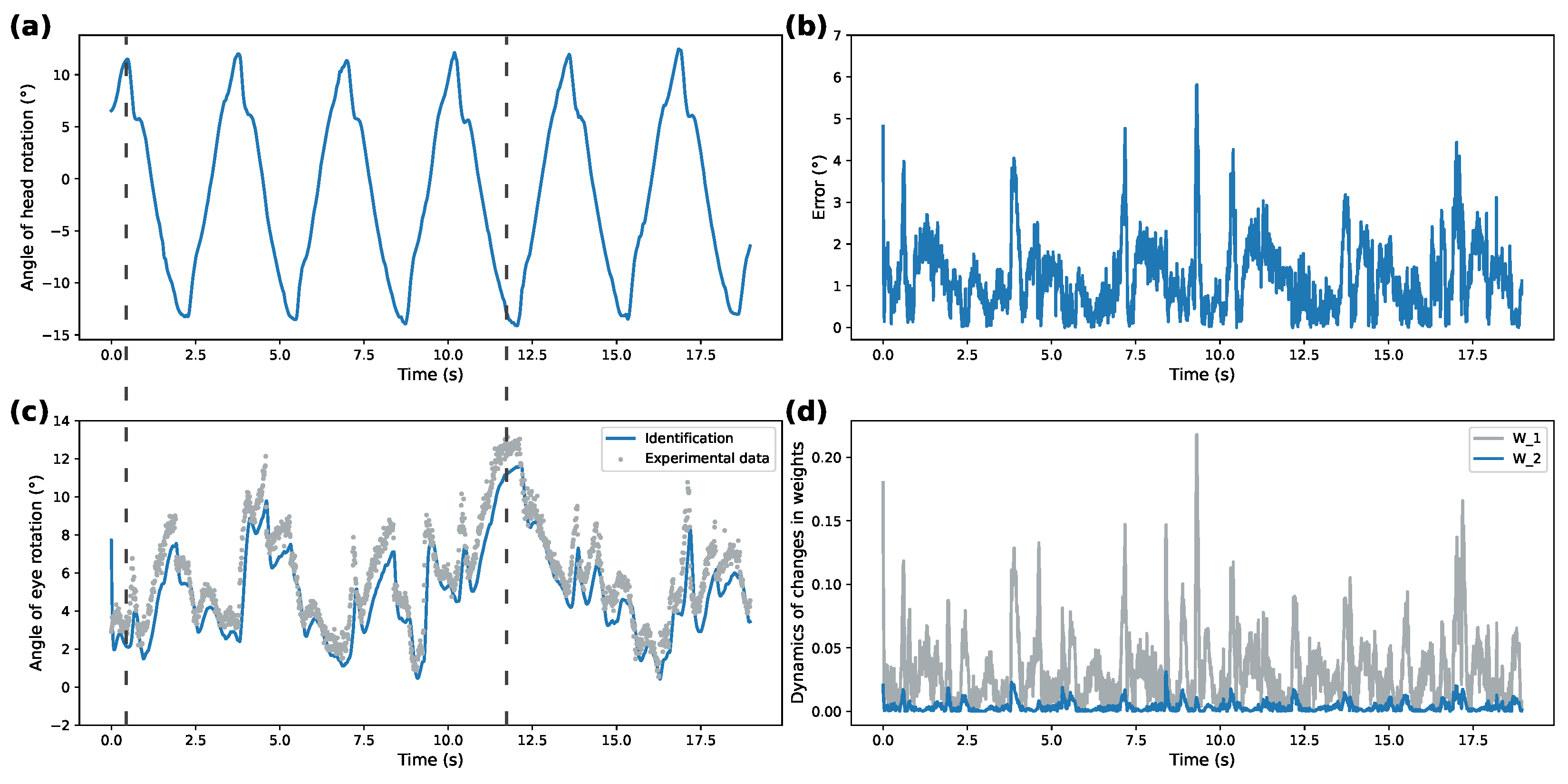

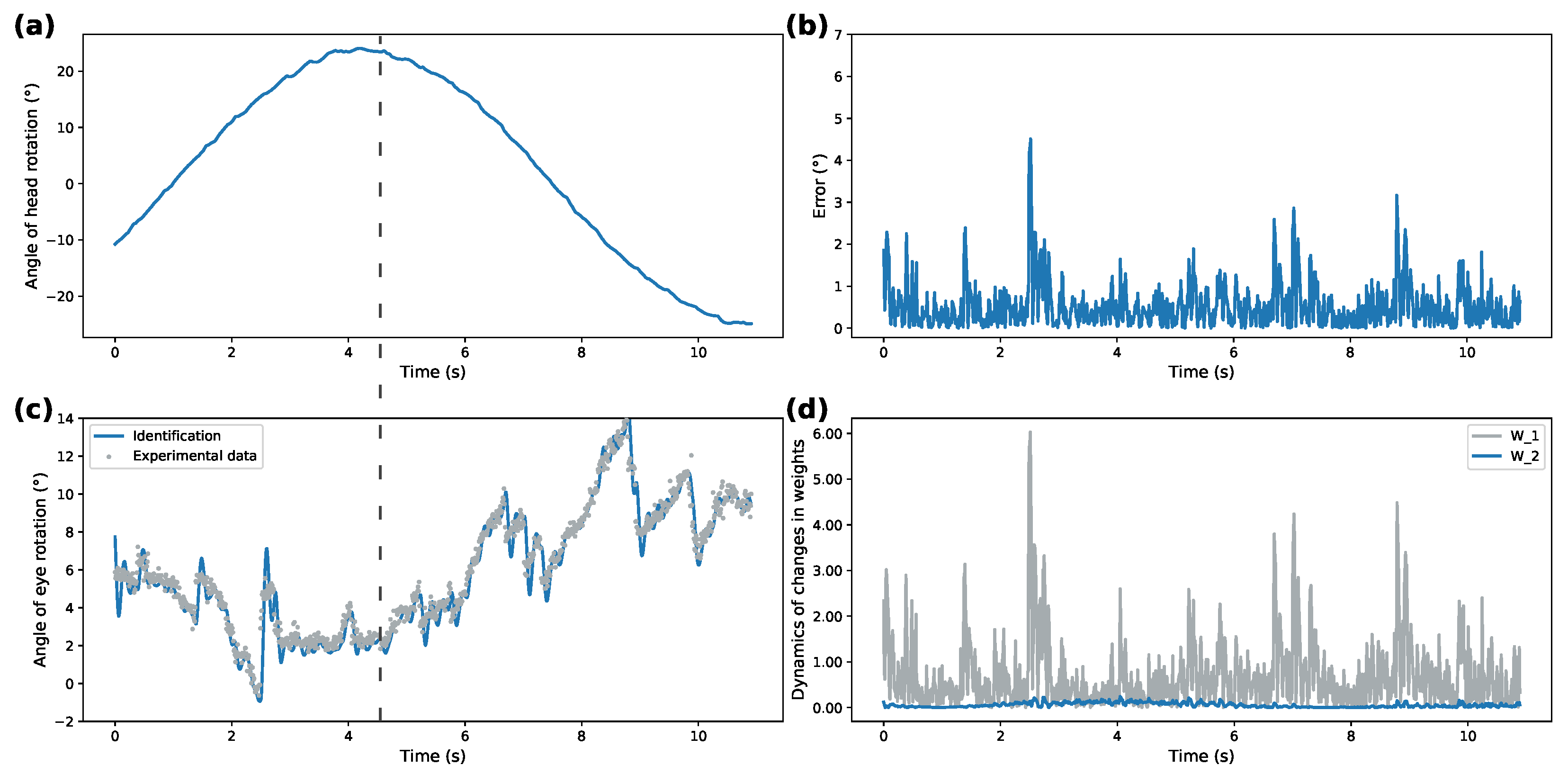

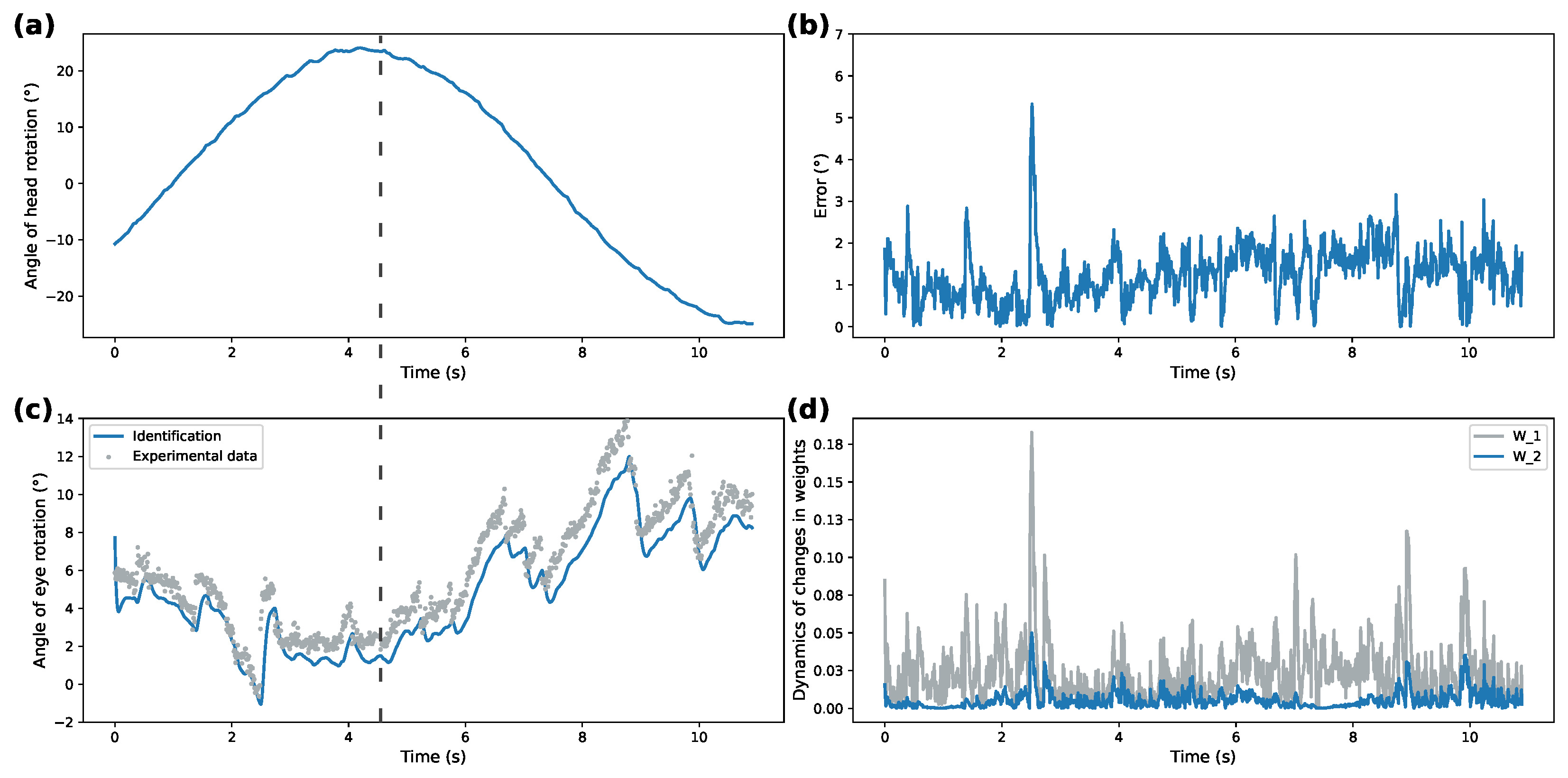

Figure 3, Figure 4, Figure 5 and Figure 6 compare the dynamics of the proposed SDNN identifier with Izhikevich and sigmoidal activation functions on the obtained data. Figure 3a and Figure 5a show the recorded head rotation profile. Figure 3b and Figure 5b show the evolution of the identification error (shown as mean square error) of the proposed identifier. In both cases, the origin is shown to be a practically stable equilibrium point for the analyzed modeling error. A direct comparison between recorded and modeled data is shown in Figure 3c and Figure 5c. Finally, Figure 3d and Figure 5d show the evolution of the weights from their initial conditions. The highlighted dashed line on both figures illustrates the work of VOR. The correspondence between the ground truth eye-tracking data and the identifier state shows the validity of the proposed identifier.

The identification performance of the proposed spiking identifier was compared against the traditional sigmoidal DNN-based identifier, shown in Figure 4 and Figure 6. These figures are structured identically to Figure 3 and Figure 5. Note the different y-axis scales between all figures on the weights dynamics plot. Parameter values for both identifiers are presented in Table 1. The performance of the two approaches is compared numerically in Table 2 using mean square error (MSE), mean absolute error (MAE), and standardized mean absolute error (sMAE).
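The three metrics can be computed as in the following sketch. MSE and MAE follow their usual definitions. The text does not define sMAE, so the version below, MAE divided by the standard deviation of the reference signal, is an assumption, as are the function names and the helper for relative improvement.

```python
import math


def mse(reference, estimate):
    """Mean square error between two equally long sequences."""
    return sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)


def mae(reference, estimate):
    """Mean absolute error between two equally long sequences."""
    return sum(abs(r - e) for r, e in zip(reference, estimate)) / len(reference)


def smae(reference, estimate):
    """MAE scaled by the standard deviation of the reference (assumed definition)."""
    mean = sum(reference) / len(reference)
    std = math.sqrt(sum((r - mean) ** 2 for r in reference) / len(reference))
    return mae(reference, estimate) / std


def relative_improvement(baseline_error, proposed_error):
    """Error reduction of the proposed model with respect to a baseline, in percent."""
    return 100.0 * (baseline_error - proposed_error) / baseline_error


if __name__ == "__main__":
    recorded = [0.0, 1.0, 2.0, 3.0]   # illustrative values, not experimental data
    modeled = [0.0, 1.0, 2.0, 5.0]
    print(mse(recorded, modeled), mae(recorded, modeled), smae(recorded, modeled))
```

With such a helper, a statement of the kind "over 50% better" corresponds to `relative_improvement(baseline, proposed) > 50` for the chosen metric.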

Overall, correspondence between modeled behavior and ground truth data shows the applicability of the proposed system under different patterns of rotational movements. Additionally, Izhikevich activation functions for both patterns demonstrate over 50% better performance for modeling ocular response than the DNN implementing sigmoidal activation functions. This shows that SDNN can be used as a generalized approximation class for ocular response dynamics.

7. Conclusions

This study examines the modeling of physiological VOR systems using SDNN. The proposed nonparametric model implements an arrangement of artificial neurons described by Izhikevich dynamics with fixed parameters to follow eye movements caused by known head accelerations. Learning laws have been derived for the proposed SDNN to ensure that the identification error converges to a neighborhood of the origin. An experimental setup is proposed and used to obtain data and confirm the validity of the proposed SDNN-based nonparametric model. The proposed modeling strategy was compared with a traditional identifier with sigmoidal activation functions under different experimental conditions, and the comparison demonstrated the efficacy of the proposed approach. One potential use of this study is estimating the accuracy of motion cue simulation: if the ground truth of the ocular motion is obtained from a model of the vestibular–ocular response, it can be compared with experimental data from a dynamic platform to assess how accurate the movement was in terms of the vestibular system reaction. Despite the additional computational complexity introduced by the Izhikevich models, the identification quality improves significantly compared to the traditional sigmoidal (algebraic) activation functions. This fact justifies the approximated model proposed in this study and opens novel options for creating representations of complex biological systems with multirate dynamics.

8. Patents

A derivative of this work is currently undergoing the software registration process.

Author Contributions

Conceptualization, I.C., O.A. and V.C.; methodology, I.C. and O.A.; software, V.P. and A.M.; validation, A.M. and V.P.; formal analysis, O.A. and I.C.; investigation, I.C.; resources, V.C.; data curation, A.M.; writing—original draft preparation, A.M. and V.P.; writing—review and editing, I.C., O.A. and V.C.; visualization, V.P.; supervision, I.C.; project administration, V.C. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by Ministry of Science and Higher Education of the Russian Federation grant number 075-15-2020-923 “Supersonic”.

Institutional Review Board Statement

Ethical review and approval were waived for this study because it only involved the evaluation of motion cues with volunteers in their normal conditions.

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

Acknowledgments

The authors thank Alexander Poznyak and Vladimir Alexandrov for fruitful discussions and helpful suggestions and Ernest Sleptsov for valuable advice concerning the literature review.

Conflicts of Interest

The authors declare no conflict of interest. The funders had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript, or in the decision to publish the results.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Meaning |
| --- | --- |
| VOR | Vestibular–Ocular Reflex |
| DNN | Differential Neural Network |
| SDNN | Spiking Differential Neural Network |
| MSE | Mean Square Error |
| MAE | Mean Absolute Error |
| sMAE | Standardized Mean Absolute Error |

References

1. Stoffregen, T.; Hettinger, L.; Haas, M.; Roe, M.; Smart, J. Postural instability and motion sickness in a fixed-base flight simulator. Hum. Factors 2000, 42, 458–469.
2. Johnson, D.M. Introduction to and Review of Simulator Sickness Research; U.S. Army Research Institute for the Behavioral and Social Sciences: Fort Belvoir, VA, USA, 2005; Volume 1832.
3. Sadovnichii, V.A.; Aleksandrov, V.V.; Aleksandrova, O.V.; Vega, R.; Konovalenko, I.S.; Soto, E.; Tikhonova, K.V.; Gordillo Domingez, J.L.; Gonzalez Petlacalco, O. Galvanic Correction of Pilot’s Vestibular Activity during Visual Flight Control. Mosc. Univ. Mech. Bull. 2019, 74, 1–8.
4. Goldberg, J.M.; Cullen, K.E. Vestibular control of the head: Possible functions of the vestibulocollic reflex. Exp. Brain Res. 2011, 210, 331–345.
5. Nagayama, M.; Aritake, T.; Hino, H.; Kanda, T.; Miyazaki, T.; Yanagisawa, M.; Akaho, S.; Murata, N. Detecting cell assemblies by NMF-based clustering from calcium imaging data. Neural Netw. 2022, 149, 29–39.
6. Kumar, A.; Das, S.; Yadav, V.K. Global exponential synchronization of complex-valued recurrent neural networks in presence of uncertainty along with time-varying bounded and unbounded delay terms. Int. J. Dyn. Control 2021.
7. Kahloul, A.A.; Sakly, A. Constrained parameterized optimal control of switched systems based on continuous Hopfield neural networks. Int. J. Dyn. Control 2018, 6, 262–269.
8. Izhikevich, E.M. Simple model of spiking neurons. IEEE Trans. Neural Netw. 2003, 14, 1569–1572.
9. Liu, C.; Shen, W.; Zhang, L.; Du, Y.; Yuan, Z. Spike Neural Network Learning Algorithm Based on an Evolutionary Membrane Algorithm. IEEE Access 2021, 9, 17071–17082.
10. Dar, M.R.; Kant, N.A.; Khanday, F.A. Dynamics and implementation techniques of fractional-order neuron models: A survey. In Fractional Order Systems; Elsevier: Amsterdam, The Netherlands, 2022; pp. 483–511.
11. Schumm, S.N.; Gabrieli, D.; Meaney, D.F. Plasticity impairment exposes CA3 vulnerability in a hippocampal network model of mild traumatic brain injury. Hippocampus 2022, 32, 231–250.
12. Yarbus, A.L. Eye Movements and Vision; Plenum Press: New York, NY, USA, 1967.
13. Pal’chun, V.; Guseva, A.; Baybakova, E.; Makoeva, A. Recovery of vestibulo-ocular reflex in vestibular neuronitis depending on severity of vestibulo-ocular reflex damage. Vestn. Otorinolaringol. 2019, 84, 33.
14. Gordon, C.; Spitzer, O.; Doweck, I.; Shupak, A.; Gadoth, N. The vestibulo-ocular reflex and seasickness susceptibility. J. Vestib. Res. Equilib. Orientat. 1996, 6, 229–233.
15. Kornilova, L.N.; Kozlovskaya, I.B. Neurosensory Mechanisms of Space Adaptation Syndrome. Hum. Physiol. 2003, 29, 527–538.
16. Naumov, I.; Kornilova, L.; Glukhikh, D.; Pavlova, A.; Khabarova, E.; Ekimovsky, G.; Vasin, A. Vestibular Function after Repeated Space Flights. Hum. Physiol. 2015, 49, 33–40.
17. Broomhead, D.; Akman, O.; Abadi, R. Eye movement instabilities and nystagmus can be predicted by a nonlinear dynamics model of the saccadic system. J. Math. Biol. 2005, 51, 661–694.
18. van Opstal, A.; van Gisbergen, J. Scatter in the metrics of saccades and properties of the collicular motor map. Vis. Res. 1989, 29, 1183–1196.
19. Akman, O.E.; Avramidis, E. Optimisation of an exemplar oculomotor model using multi-objective genetic algorithms executed on a GPU-CPU combination. BMC Syst. Biol. 2017, 11, 40.
20. Bokov, T.Y.; Suchalkina, A.; Yakusheva, E.; Yakushev, A. Mathematical modelling of vestibular nystagmus. Part I. The statistical model. Russ. J. Biomech. 2014, 18, 40–57.
21. Poznyak, A.; Sanchez, E.; Yu, W. Differential Neural Networks for Robust Nonlinear Control (Identification, State Estimation and Trajectory Tracking); World Scientific: Singapore, 2001.
22. Fuentes-Aguilar, R.Q.; Chairez, I. Adaptive Tracking Control of State Constraint Systems Based on Differential Neural Networks: A Barrier Lyapunov Function Approach. IEEE Trans. Neural Netw. Learn. Syst. 2020, 31, 5390–5401.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).