Zeroing Neural Networks Combined with Gradient for Solving Time-Varying Linear Matrix Equations in Finite Time with Noise Resistance

Abstract

1. Introduction

- (1) Specifically, in designing the IEAZNN model, the gradient of the energy function is used as the first error function to obtain superior convergence. To resist noise, a new second error function is designed based on the IEZNN model. The IEAZNN model is then developed to achieve both noise resistance and finite-time convergence.

- (2) Unlike other ZNN models, which achieve either noise resistance or finite-time convergence, the presented IEAZNN model is noise-tolerant and converges in finite time.

- (3) Theoretical analyses and experimental comparisons show that the proposed IEAZNN model performs well in both convergence speed and solution accuracy under various noise disturbances.

2. Problem Formulation and Related Models

2.1. Conventional ZNN Model

2.2. Integration-Enhanced ZNN Model

3. Noise-Enduring IEAZNN Model

3.1. Model Design

- (1)

- (2) By the property of the trace, we can obtain the derivative of the energy function with respect to X.

- (3) Define the gradient-based error function to replace the error function in (2).
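The trace step in (2) can be sketched as follows. The displayed equations are not reproduced in this section, so the notation here is an assumption: the LME (1) is taken to be A(t)X(t) = B(t), and the energy function is taken to be the usual half squared Frobenius norm of the residual. Under those assumptions the gradient that would serve as the first error function is:

```latex
\begin{aligned}
\varepsilon(X)
  &= \tfrac{1}{2}\,\lVert A X - B \rVert_F^{2}
   = \tfrac{1}{2}\,\operatorname{tr}\!\bigl((A X - B)^{\mathsf T}(A X - B)\bigr) \\
  &= \tfrac{1}{2}\,\operatorname{tr}\!\bigl(X^{\mathsf T} A^{\mathsf T} A X\bigr)
     - \operatorname{tr}\!\bigl(B^{\mathsf T} A X\bigr)
     + \tfrac{1}{2}\,\operatorname{tr}\!\bigl(B^{\mathsf T} B\bigr), \\[4pt]
\frac{\partial \varepsilon}{\partial X}
  &= A^{\mathsf T} A X - A^{\mathsf T} B
   = A^{\mathsf T}\,(A X - B).
\end{aligned}
```

The second line uses the standard identities for the derivative of tr(XᵀMX) with symmetric M and of tr(CX) with respect to X. If A(t) has full column rank, this gradient vanishes exactly when the residual A(t)X(t) − B(t) vanishes, which is why it is a plausible replacement for the residual as an error function.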

3.2. Theoretical Analyses

4. Comparative Verifications

| Algorithm 1: Matlab program core ideas. |
|---|
| ← 1, repeat IEAZNN model (10) until t = 10 |
| for i ← 1 to 6 do figure(1); plot(t,) |
| error ←, theo ← |
| for j ← 1 to 6 do figure(1); plot(t,) |
| figure(2); plot(t, norm(error)) |
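The loop structure of Algorithm 1 (integrate a model until t = 10, record the state, then evaluate the residual norm for plotting) can be sketched in Python as below. This is not the IEAZNN model (10), whose equation is not reproduced in this section; the sketch substitutes the conventional linear ZNN design formula dE/dt = −γE with E = A(t)X(t) − B. The test matrices `A(t)` and `B`, the gain `gamma`, the step size `h`, and the forward-Euler integrator are all illustrative assumptions.

```python
import math

def matmul(P, Q):
    """Product of two small matrices stored as nested lists."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def axpy(P, Q, s):
    """Return P + s * Q elementwise."""
    return [[p + s * q for p, q in zip(rp, rq)] for rp, rq in zip(P, Q)]

def inv2(M):
    """Inverse of a 2x2 matrix."""
    (a, b), (c, d) = M
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def fro(M):
    """Frobenius norm."""
    return math.sqrt(sum(v * v for row in M for v in row))

# Hypothetical test problem (not from the paper): A(t) X(t) = B with B = I.
def A(t):
    return [[math.sin(t), math.cos(t)], [-math.cos(t), math.sin(t)]]

def dA(t):
    return [[math.cos(t), -math.sin(t)], [math.sin(t), math.cos(t)]]

B = [[1.0, 0.0], [0.0, 1.0]]

def residual(t, X):
    """Error matrix E = A(t) X - B."""
    return axpy(matmul(A(t), X), B, -1.0)

def simulate(gamma=10.0, h=1e-3, t_end=10.0):
    """Forward-Euler integration of the linear ZNN dynamics from X(0) = 0."""
    X = [[0.0, 0.0], [0.0, 0.0]]
    ts, errors = [0.0], [fro(residual(0.0, X))]
    for k in range(int(round(t_end / h))):
        t = k * h
        E = residual(t, X)
        # dE/dt = -gamma * E with dB/dt = 0 gives
        # A dX/dt = -(dA/dt X + gamma * E).
        rhs = axpy(matmul(dA(t), X), E, gamma)
        dX = [[-v for v in row] for row in matmul(inv2(A(t)), rhs)]
        X = axpy(X, dX, h)
        ts.append(t + h)
        errors.append(fro(residual(t + h, X)))
    return ts, errors, X

ts, errors, X_final = simulate()
```

The lists `ts` and `errors` play the role of the arguments to `plot(t, norm(error))` in Algorithm 1; for this test problem the theoretical solution is the transpose of A(t), so the entries of `X_final` can be compared against it in the same way the algorithm compares the state with `theo`.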

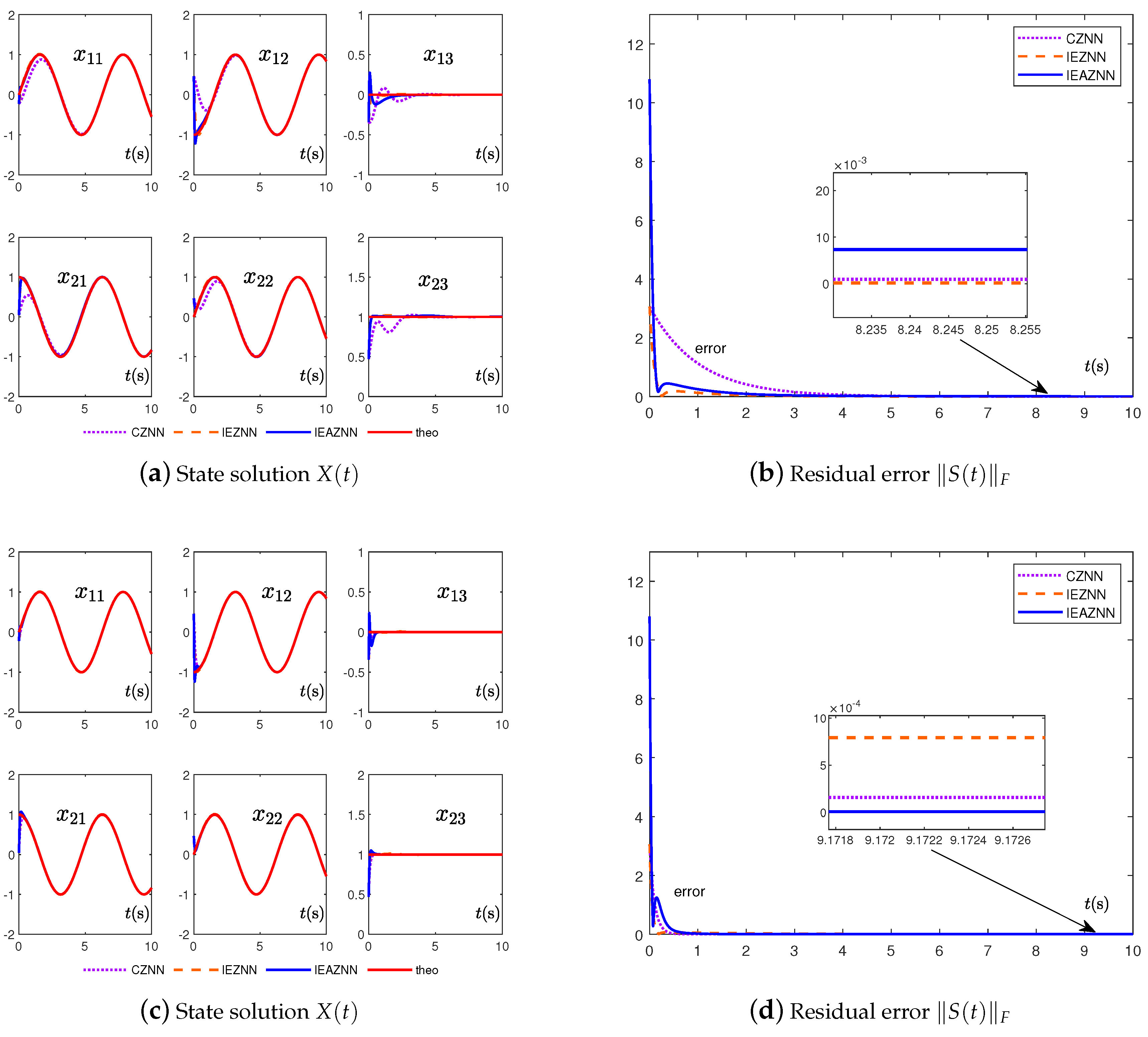

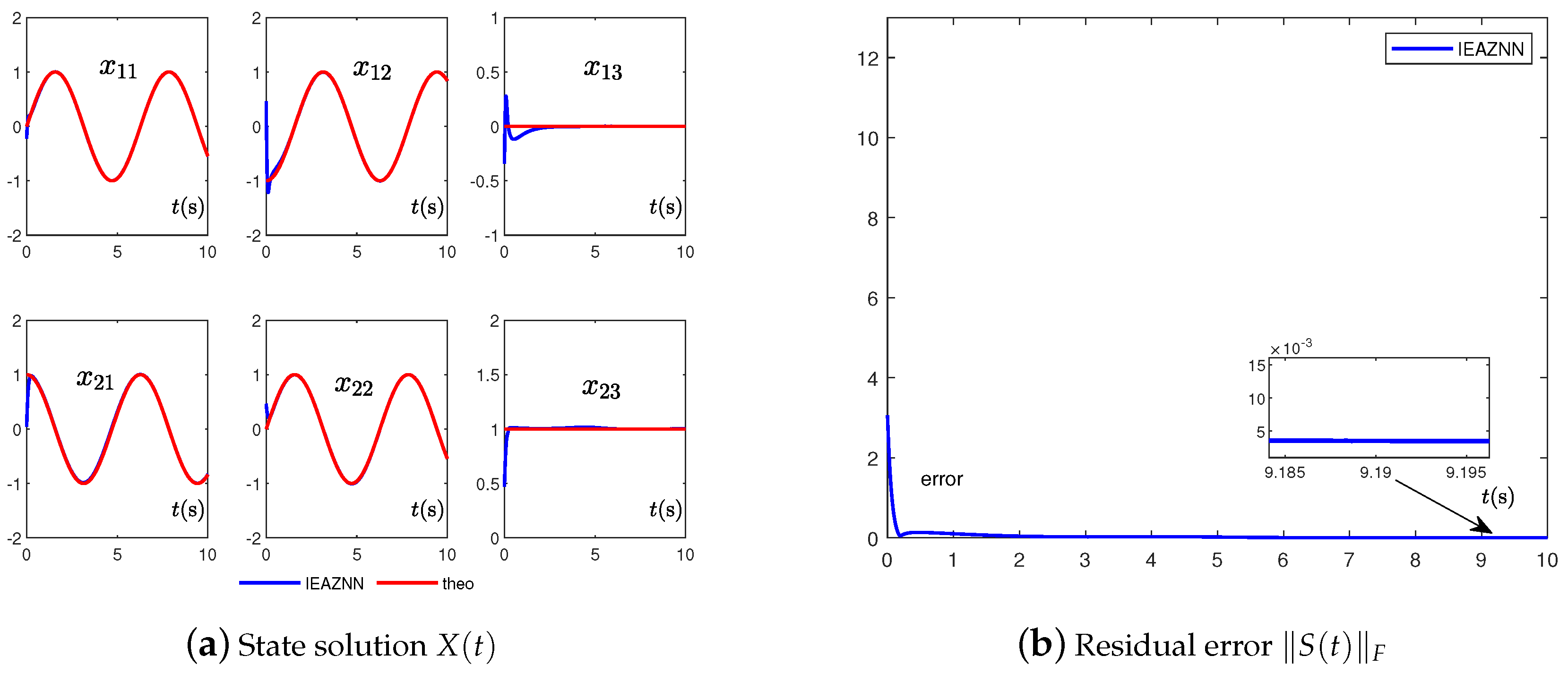

- (1) With zero noise, the CZNN model (3) converges stably and with high accuracy in both tested cases. Once noise is present, the CZNN model (3) can no longer solve the time-varying LME (1) accurately. By contrast, the IEZNN model (5) and the IEAZNN model (10) solve the LME problem (1) accurately whether or not noise is present.

- (2) Because the design formulas differ, the presented IEAZNN model (10) achieves higher convergence accuracy and better convergence performance than the other two ZNN models when dealing with dynamic linear noise, in both tested cases.

- (3)
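Observation (1) can be illustrated with a scalar sketch of the error dynamics under constant additive noise. The two formulas below are the conventional linear ZNN design formula and the integration-enhanced design formula as they are commonly written in the ZNN literature; it is an assumption that models (3) and (5) reduce to these forms, and the gains `gamma` and `lam`, the noise level, and the forward-Euler integrator are illustrative choices. The IEAZNN model (10) is not simulated here.

```python
def final_errors(noise=1.0, gamma=10.0, lam=25.0, h=1e-3, t_end=10.0):
    """Integrate two scalar error dynamics with forward Euler.

    Conventional form:          de/dt = -gamma * e + noise
    Integration-enhanced form:  de/dt = -gamma * e - lam * integral(e) + noise
    Returns the two errors at t = t_end, both started from e(0) = 1.
    """
    e_cznn = 1.0
    e_ieznn = 1.0
    integral = 0.0  # running integral of e_ieznn
    for _ in range(int(round(t_end / h))):
        de_cznn = -gamma * e_cznn + noise
        de_ieznn = -gamma * e_ieznn - lam * integral + noise
        integral += h * e_ieznn
        e_cznn += h * de_cznn
        e_ieznn += h * de_ieznn
    return e_cznn, e_ieznn

e_cznn, e_ieznn = final_errors()
print(f"conventional: {e_cznn:.6f}, integration-enhanced: {e_ieznn:.2e}")
```

With these settings the conventional error settles at noise/gamma = 0.1 rather than at zero, while the integral term absorbs the constant offset and drives the integration-enhanced error to zero. This matches the qualitative behaviour reported for the CZNN and IEZNN models under constant noise.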

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| LME | linear matrix equation |
| RNN | recurrent neural network |
| GNN | gradient-based neural network |
| ZNN | zeroing neural network |
| CZNN | conventional zeroing neural network |
| FTZNN | finite-time zeroing neural network |
| NTZNN | noise-tolerant zeroing neural network |
| IEZNN | integration-enhanced zeroing neural network |
| NAF | novel activation function |
| DMI | dynamic matrix inversion |
| IEAZNN | integration-enhanced combined accelerating zeroing neural network |

Appendix A


| | Model | No Noise | Constant Noise | Dynamic Bounded Noise |
|---|---|---|---|---|
| | CZNN | | Cannot reach convergence | Cannot reach convergence |
| | IEZNN | | | |
| | IEAZNN | | | |
| | CZNN | | Cannot reach convergence | Cannot reach convergence |
| | IEZNN | | | |
| | IEAZNN | | | |


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Cai, J.; Dai, W.; Chen, J.; Yi, C. Zeroing Neural Networks Combined with Gradient for Solving Time-Varying Linear Matrix Equations in Finite Time with Noise Resistance. Mathematics 2022, 10, 4828. https://doi.org/10.3390/math10244828
