1. Introduction

Undoubtedly, the most popular iteration methods in the literature are Newton’s method, Halley’s method [1] and Chebyshev’s method [2]. A thorough historical survey of these methods can be found in the papers of Ypma [3], Scavo and Thoo [4] and Ezquerro et al. [5]. It is well known that Newton’s method converges quadratically, while Halley’s and Chebyshev’s methods converge cubically, to simple zeros. However, all these methods converge only linearly if the zeros are multiple.

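In 1870, Schröder [6] presented the following modification of Newton’s method:

$$x_{k+1} = x_k - m\,\frac{f(x_k)}{f'(x_k)}, \tag{1}$$

which restores the quadratic convergence when the multiplicity m of the zero is known. Driven by the same reasons, in 1963 Obreshkov [7] developed the following modifications of Halley’s and Chebyshev’s methods:

$$x_{k+1} = x_k - \frac{f(x_k)}{\dfrac{m+1}{2m}\,f'(x_k) - \dfrac{f(x_k)\,f''(x_k)}{2\,f'(x_k)}} \tag{2}$$

and

$$x_{k+1} = x_k - \frac{m(3-m)}{2}\,\frac{f(x_k)}{f'(x_k)} - \frac{m^2}{2}\,\frac{f(x_k)^2\,f''(x_k)}{f'(x_k)^3}. \tag{3}$$

The methods (2) and (3) are known as Halley’s method for multiple zeros and Chebyshev’s method for multiple zeros, and their convergence order is known to be three if the multiplicity m of the zero is known.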
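As a rough numerical illustration of the convergence behaviour described above, the following Python sketch applies plain Newton steps and the multiplicity-aware iterations to a polynomial with a triple zero. It assumes the standard textbook forms of Schröder’s, Halley’s and Chebyshev’s methods for multiple zeros; the test polynomial f(x) = (x - 1)^3 (x + 2), the starting point 1.3 and all function names are illustrative choices, not taken from the paper.

```python
# Sketch: plain Newton vs. multiplicity-aware iterations on a triple zero.
# Assumptions (not from the paper): test polynomial, starting point, names.

XI = 1.0   # the multiple zero of the test polynomial
M = 3      # its (known) multiplicity


def derivatives(x):
    """Return f, f', f'' for f(x) = (x - 1)^3 (x + 2), in factored form."""
    e = x - XI
    f = e * e * e * (x + 2.0)
    df = e * e * (4.0 * x + 5.0)
    d2f = e * (12.0 * x + 6.0)
    return f, df, d2f


def newton(f, df, d2f, m):
    """Correction of plain Newton (ignores the multiplicity)."""
    return f / df


def schroder(f, df, d2f, m):
    """Correction of Schroder's multiplicity-weighted Newton step."""
    return m * f / df


def halley_multiple(f, df, d2f, m):
    """Correction of Halley's method for multiple zeros (standard form)."""
    return f / ((m + 1) / (2 * m) * df - f * d2f / (2 * df))


def chebyshev_multiple(f, df, d2f, m):
    """Correction of Chebyshev's method for multiple zeros (standard form)."""
    return m * (3 - m) / 2 * f / df + m**2 / 2 * f**2 * d2f / df**3


def errors(correction, x0, m=M, steps=4):
    """Iterate x <- x - correction(...) and record |x - XI| after each step."""
    x = x0
    history = [abs(x - XI)]
    for _ in range(steps):
        f, df, d2f = derivatives(x)
        if f == 0.0:  # landed exactly on a zero: nothing left to do
            break
        x -= correction(f, df, d2f, m)
        history.append(abs(x - XI))
    return history


if __name__ == "__main__":
    for method in (newton, schroder, halley_multiple, chebyshev_multiple):
        print(method.__name__, ["%.1e" % e for e in errors(method, 1.3)])
```

In this experiment the Newton errors shrink by a roughly constant factor per step, whereas the other three iterations reach rounding level within a few steps, which is consistent with the linear versus quadratic and cubic rates mentioned in the text.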

In 1994, Osada [8] defined the following third-order modification of Newton’s method:

$$x_{k+1} = x_k - \frac{m(m+1)}{2}\,\frac{f(x_k)}{f'(x_k)} + \frac{(m-1)^2}{2}\,\frac{f'(x_k)}{f''(x_k)}, \tag{4}$$

known as Osada’s method for multiple zeros. In 2008, he [9] used an arbitrary real parameter to construct an iteration family for multiple zeros that includes the methods (1)–(4) as special cases. Another member of Osada’s iteration family is the Super-Halley method for multiple zeros, which can be defined by (see [10] and references therein):

Let

and

. We define the following extension of Osada’s iteration family:

where the iteration function

is defined by:

with

and

defined as follows:

Evidently, the domain of the iteration function (7) is the set:

It is easy to see that the iteration (6) includes Halley’s method (2) for

, Chebyshev’s method (3) for

, the Super-Halley method (5) for

and Osada’s method (4) for

and

. Hereafter, the iteration (6) shall be called the Chebyshev–Halley family for multiple zeros. Note that the Chebyshev–Halley family for simple zeros (m = 1) was first introduced and studied in its explicit form by Hernández and Salanova [11].

In 2009, Proinov [12] established two types of local convergence theorems for Newton’s method (1) (applied to polynomials) under two different types of initial conditions. In 2015 and 2016, Proinov and Ivanov [13] and Ivanov [14] used the same two types of initial conditions to establish local convergence theorems for Halley’s method (2) and Chebyshev’s method (3) for multiple polynomial zeros. Very recently, Ivanov [10] proved two general theorems (Theorems 3 and 4) that provide sets of initial approximations ensuring the Q-convergence ([15] (Definition 2.1)) of the Picard iteration

and applied them to investigate the local convergence of the Super-Halley method (5) for multiple polynomial zeros.
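To make the notion of Q-convergence of a Picard iteration more concrete, the sketch below iterates a map T and estimates the Q-order from three consecutive errors via log(e_{k+1}/e_k) / log(e_k/e_{k-1}). It is only an empirical check under stated assumptions: T is taken to be the standard Halley-type step for multiple zeros applied to the illustrative polynomial f(x) = (x - 1)^2 (x + 1) with m = 2, and high-precision `decimal` arithmetic is used so that the errors do not bottom out at rounding level. All names are hypothetical.

```python
from decimal import Decimal, getcontext

getcontext().prec = 400  # enough digits to follow five cubically convergent steps

XI = Decimal(1)  # double zero of the illustrative polynomial
M = Decimal(2)   # its multiplicity


def T(x):
    """Halley-type step for multiple zeros on f(x) = (x - 1)^2 (x + 1)."""
    e = x - XI
    f = e * e * (x + 1)
    df = e * (3 * x + 1)
    d2f = 6 * x - 2
    return x - f / ((M + 1) / (2 * M) * df - f * d2f / (2 * df))


def picard(iteration_map, x0, steps):
    """Return the Picard sequence x0, T(x0), T(T(x0)), ..."""
    xs = [x0]
    for _ in range(steps):
        xs.append(iteration_map(xs[-1]))
    return xs


def q_order_estimates(errors):
    """Empirical order from each triple of consecutive errors."""
    return [
        float((errors[k + 1] / errors[k]).ln() / (errors[k] / errors[k - 1]).ln())
        for k in range(1, len(errors) - 1)
    ]


def demo():
    xs = picard(T, Decimal("1.5"), 5)
    errors = [abs(x - XI) for x in xs]
    orders = q_order_estimates(errors)
    # e_{k+1} / e_k^3 serves as an empirical proxy for the asymptotic error constant
    ratios = [float(errors[k + 1] / errors[k] ** 3) for k in range(len(errors) - 1)]
    return errors, orders, ratios


if __name__ == "__main__":
    errors, orders, ratios = demo()
    print("order estimates:", orders)
    print("e_{k+1} / e_k^3:", ratios)
```

The order estimates approach 3 for this example, and the ratios e_{k+1}/e_k^3 stabilise, which is the numerical counterpart of cubic Q-convergence with a finite asymptotic error constant.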

In this paper, we use the approach of [10] to investigate the Q-convergence of the Chebyshev–Halley family (6). Thus, we obtain two kinds of local convergence theorems (Theorems 1 and 2) that supply exact bounds of the sets of initial approximations, accompanied by a priori and a posteriori error estimates, that guarantee the Q-convergence of the iteration (6). An estimate of the asymptotic error constant of the family (6) is also established. Our results unify and complement the results of Proinov and Ivanov [13] and Ivanov [10,14] about the methods (2), (3) and (5). On the other hand, the results about Osada’s method (4) (Corollaries 4 and 5) are the first such results in the literature. At the end of our study, we use Theorem 1 to compare the convergence domains and error estimates of the methods (2)–(5) and several new randomly generated members of (6).
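Since Osada’s method (4) plays a special role among the compared methods, a small sketch of it may be useful. The code assumes the standard form of Osada’s iteration, z - (m(m+1)/2) f/f' + ((m-1)^2/2) f'/f'', and applies it to a complex example; the polynomial f(z) = (z - i)^2 (z + 1), the starting point and the function names are illustrative assumptions rather than anything taken from the paper.

```python
# Sketch of Osada's iteration for a zero of known multiplicity (standard form).
# Assumptions (not from the paper): test polynomial, starting point, names.

XI = 1j  # double zero of the illustrative polynomial
M = 2    # its (known) multiplicity


def derivatives(z):
    """Return f, f', f'' for f(z) = (z - i)^2 (z + 1), in factored form."""
    e = z - XI
    f = e * e * (z + 1)
    df = e * (3 * z + 2 - 1j)
    d2f = 6 * z + 2 - 4j
    return f, df, d2f


def osada_step(z, m=M):
    """One Osada step: z - m(m+1)/2 * f/f' + (m-1)^2/2 * f'/f''."""
    f, df, d2f = derivatives(z)
    return z - 0.5 * m * (m + 1) * f / df + 0.5 * (m - 1) ** 2 * df / d2f


def osada_errors(z0, steps=4):
    """Record |z_k - XI| along the iteration started at z0."""
    z = z0
    history = [abs(z - XI)]
    for _ in range(steps):
        if z == XI:  # exact hit: f' vanishes there, so stop before dividing
            break
        z = osada_step(z)
        history.append(abs(z - XI))
    return history


if __name__ == "__main__":
    print(["%.1e" % e for e in osada_errors(0.2 + 1.1j)])
```

Note that the step needs f''(z) to be nonzero, in addition to f'(z), so its natural domain is smaller than that of the Schröder step.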

2. Main Results

From now on,

shall denote the ring of univariate polynomials over

. Let

be a polynomial of degree

and

be a zero of

f. We define the functions

and

by

where

d denotes the distance from

to the nearest among the other zeros of

f and

denotes the distance from

x to the nearest zero of

f not equal to

. Note that, if

is a unique zero of

f, then we set

. Also, we note that the domain of

is the set:

In this section, we present two local convergence theorems about the Chebyshev–Halley family (7) under two different kinds of initial conditions regarding the functions of initial conditions defined by (10).
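The two distances described above are easy to compute once the distinct zeros of f are known. The following sketch does this and, as an assumption, forms the two functions of initial conditions as the ratios |x - ξ| / d and |x - ξ| / ρ(x), which is the usual choice in this line of work; since the displayed definition (10) is not reproduced here, these ratios, the names `E_first` and `E_second`, and the treatment of the single-zero case are all hypothetical.

```python
import math


def distance_to_nearest_other_zero(point, xi, zeros):
    """Distance from `point` to the nearest zero in `zeros` different from xi."""
    others = [z for z in zeros if z != xi]
    if not others:
        # xi is the only zero; using an infinite distance is a convention
        # chosen for this sketch, not necessarily the paper's.
        return math.inf
    return min(abs(point - z) for z in others)


def E_first(x, xi, zeros):
    """Assumed first-kind function: |x - xi| / d, with d measured from xi."""
    d = distance_to_nearest_other_zero(xi, xi, zeros)
    return abs(x - xi) / d


def E_second(x, xi, zeros):
    """Assumed second-kind function: |x - xi| / rho(x), rho measured from x."""
    rho = distance_to_nearest_other_zero(x, xi, zeros)
    if rho == 0:
        raise ValueError("x is a zero of f different from xi; outside the domain")
    return abs(x - xi) / rho


if __name__ == "__main__":
    zeros = [1.0, -2.0]  # distinct zeros of the illustrative f(x) = (x - 1)^3 (x + 2)
    print(E_first(1.3, 1.0, zeros), E_second(1.3, 1.0, zeros))
```

The first quantity depends only on the zeros and the error |x - ξ|, while the second also depends on where x lies relative to the remaining zeros, which is why its domain excludes those zeros.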

2.1. Local Convergence Theorem of the First Kind

Furthermore, for the integers

n and

m (

) and the number

, we define the real function

by:

where the function

is defined by:

and the function

is defined by:

with

. It is worth mentioning that

is positive and increasing while

is decreasing on the interval

.

The following is the first main theorem of this paper:

Theorem 1. Let f be a polynomial of degree n and ξ be a zero of f with known multiplicity m. Suppose the initial guess satisfies the conditions:

where E is defined by (10) and the function

is defined by:

with

and

defined by (13) and (12). Then the iteration (6) is well defined and converges Q-cubically to ξ with the following error estimates, valid for all iterations:

where

and the function

is defined by (11). Besides, the following estimate of the asymptotic error constant holds:

In the cases

,

and

, we get the following consequences of Theorem 1 about the Chebyshev, Halley and Super-Halley methods, which were proven in [10,13,14], but without the estimates of the asymptotic error constants:

Corollary 1 ([14] (Theorem 2)). Let f be a polynomial of degree n and ξ be a zero of f with multiplicity m. Suppose the initial guess satisfies the following initial conditions:

where the function E is defined by (10) and the function

is defined by:

Then Chebyshev’s iteration (3) is well defined and converges Q-cubically to ξ with error estimates (15), where

, and with the following estimate of the asymptotic error constant:

Corollary 2 ([13] (Theorem 4.5)). Let f be a polynomial of degree n and ξ be a zero of f with multiplicity m. Suppose the initial guess satisfies the following initial condition:

where E is defined by (10). Then Halley’s iteration (2) is well defined and converges Q-cubically to ξ with error estimates (15), where

and the function

is defined by:

Besides, the following estimate of the asymptotic error constant holds:

Corollary 3 ([10] (Theorem 3)). Let f be a polynomial of degree n and ξ be a zero of f with multiplicity m. Suppose the initial guess satisfies the following initial condition:

where E is defined by (10). Then the Super-Halley iteration (5) is well defined and converges Q-cubically to ξ with error estimates (15), where

and the function

is defined by:

The estimate (18) of the asymptotic error constant holds.

In the case

and

, we get the following consequence of Theorem 1 about Osada’s method (4):

Corollary 4. Let f be a polynomial of degree n and ξ be a zero of f with multiplicity m. Suppose the initial guess satisfies the following initial conditions:

where E is defined by (10) and the function

is defined by:

Then Osada’s iteration (4) is well defined and converges Q-cubically to ξ with error estimates (15), where

. Besides, the following estimate of the asymptotic error constant holds:

2.2. Local Convergence Theorem of the Second Kind

Before stating our second main theorem, for the integers

n and

m (

) and the number

, we define the real function

by:

where the function

is defined by:

and the function

is defined by:

with

. Obviously, the function

is positive and increasing while the function

is decreasing on the interval

.

The next theorem is our second main result of this paper.

Theorem 2. Let f be a polynomial of degree n and ξ be a zero of f with known multiplicity m. Suppose the initial guess satisfies the conditions:

where the function

is defined by (10) and the function

is defined by

with

defined by (21). Then the iteration sequence (6) is well defined and converges Q-cubically to ξ with the following error estimates, valid for all iterations:

where

and

with

.

In the cases

,

and

, from Theorem 2 we immediately get [14] (Theorem 3), [13] (Theorem 5.7) and [10] (Theorem 4) about the Chebyshev, Halley and Super-Halley methods, respectively. In the case

and

, we get the following consequence of Theorem 2 about Osada’s method.

Corollary 5. Let f be a polynomial of degree n and ξ be a zero of f with multiplicity m. Suppose the initial guess satisfies the following initial conditions:

where

is defined by (10) and the function

is defined by (25) with

Then Osada’s iteration (4) is well defined and converges Q-cubically to ξ with error estimates (26).

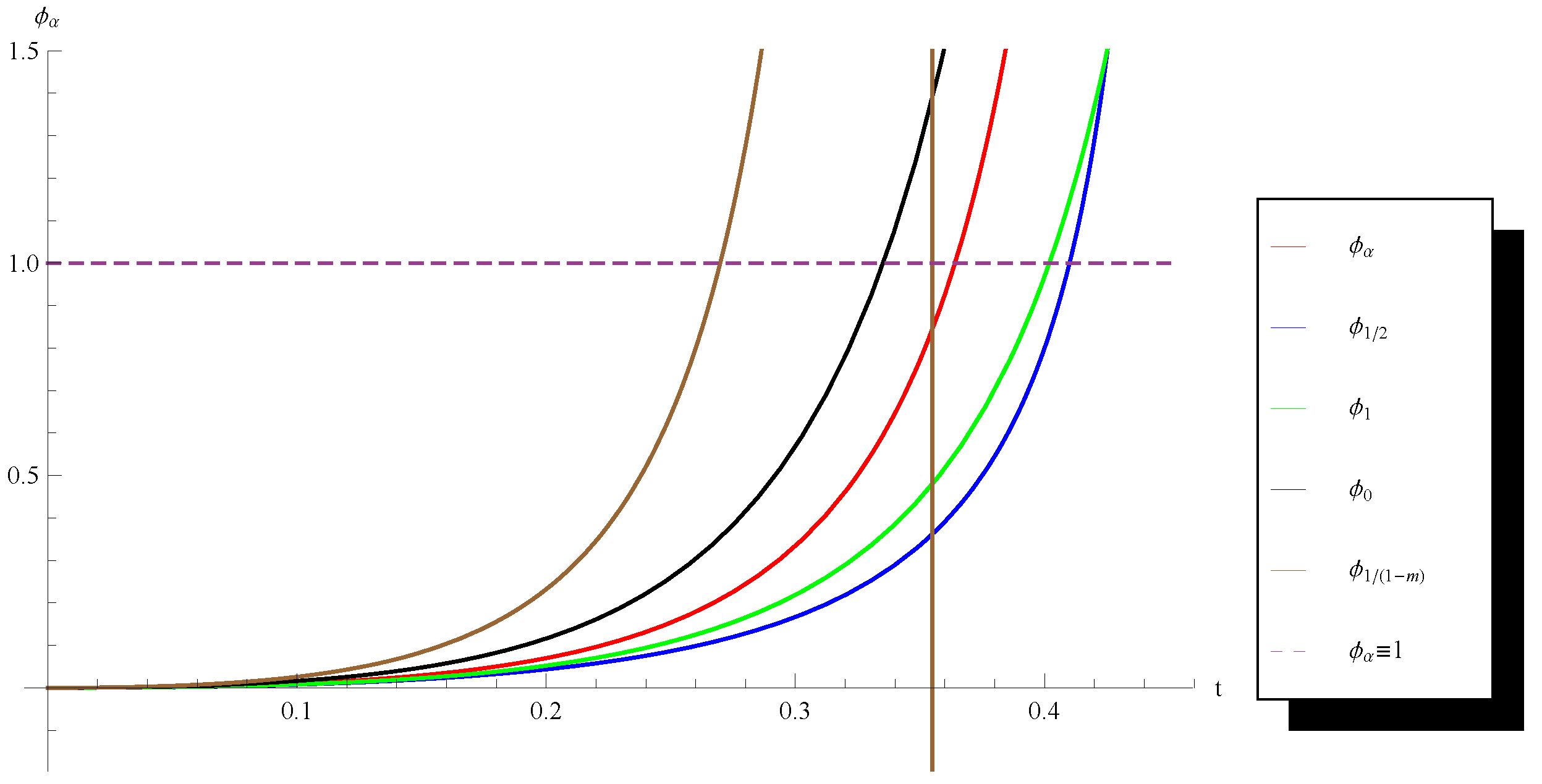

4. Comparative Analysis

In this section, we use Theorem 1 to compare the convergence domains and the error estimates of several particular members of the Chebyshev–Halley iteration family (6). Define the function

by (11). It is easy to see that the initial condition (14) can be presented in the form

where

is the unique solution of the equation

in the interval

(see, e.g., Corollaries 2 and 3). Observe that the larger

is, the larger the convergence domain of the respective method and the better its error estimates. In order to compare the convergence domains of Halley’s method (2), Chebyshev’s method (3), the Super-Halley method (5) and Osada’s method (4) with each other and with some other members of the family (6), in the following figures we depict the functions

obtained for the couples

and

with

,

,

,

and with four complex numbers

randomly chosen from the rectangle

Note that these two couples

have been chosen to highlight the cases

and

since we know (see [10] (Remark 1)) that in the second case Corollary 3 provides a larger convergence domain with better error estimates for the Super-Halley method than Corollary 2 does for Halley’s method, and vice versa in the first case.

One can see from the graphs (Figure 1, Figure 2, Figure 3 and Figure 4) that in all considered cases except the first one (Figure 1), the randomly chosen method has a larger convergence domain and better error estimates than Osada’s method. In the second case (Figure 2), the random method is better than Chebyshev’s method, while in the last case (Figure 4) the random method is better even than Halley’s method.