Comparing the Use of Two Different Model Approaches on Students’ Understanding of DNA Models

Abstract

1. Introduction

1.1. Teaching Genetics: The Role of Outreach Laboratories and Model-Support

1.2. Empirical Findings on Students’ Understanding of Scientific Models

1.3. Objectives of the Study

2. Materials and Methods

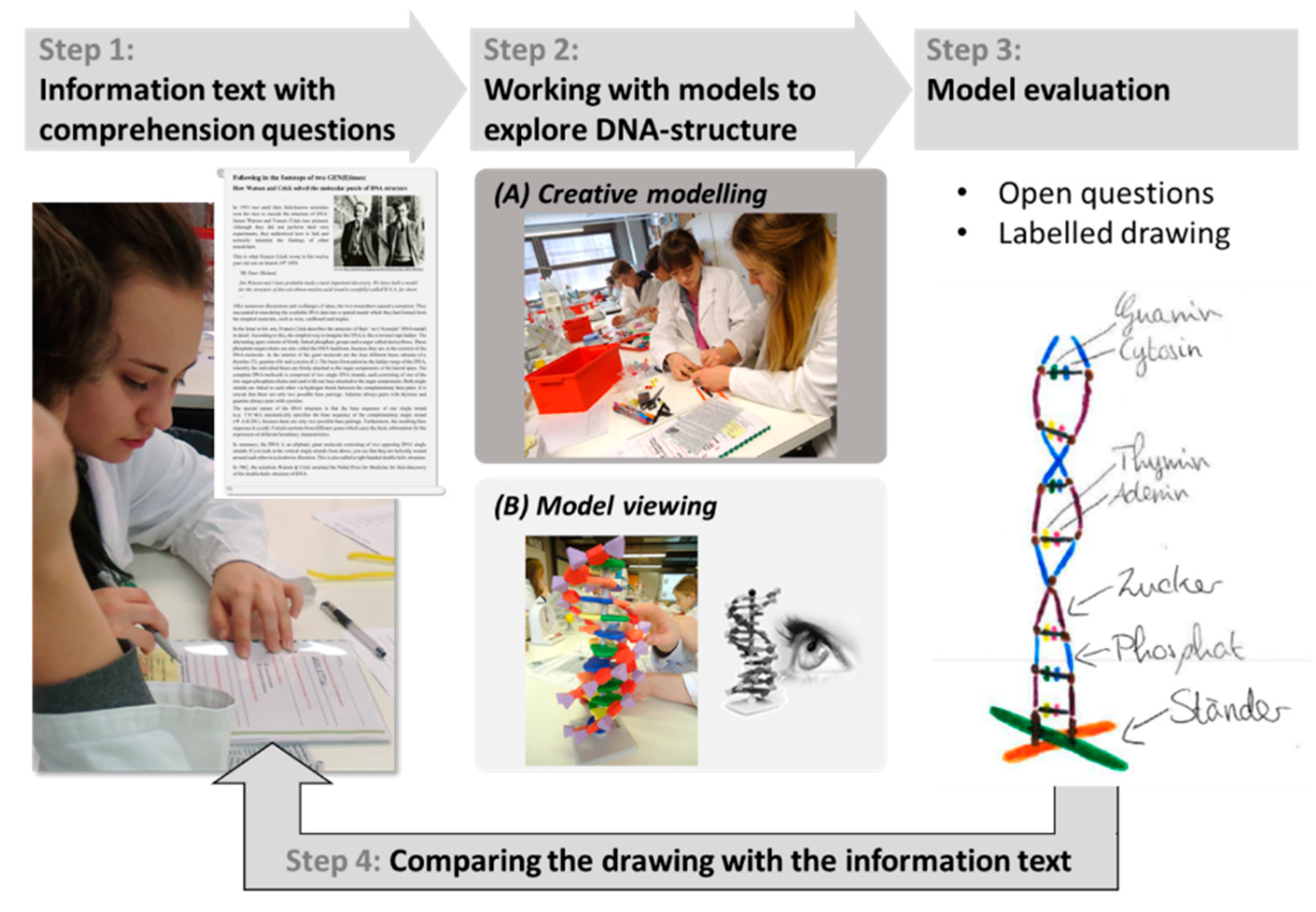

2.1. Educational Intervention

2.2. Participants

2.3. Test Design and Instruments

2.4. Statistical Analysis

3. Results

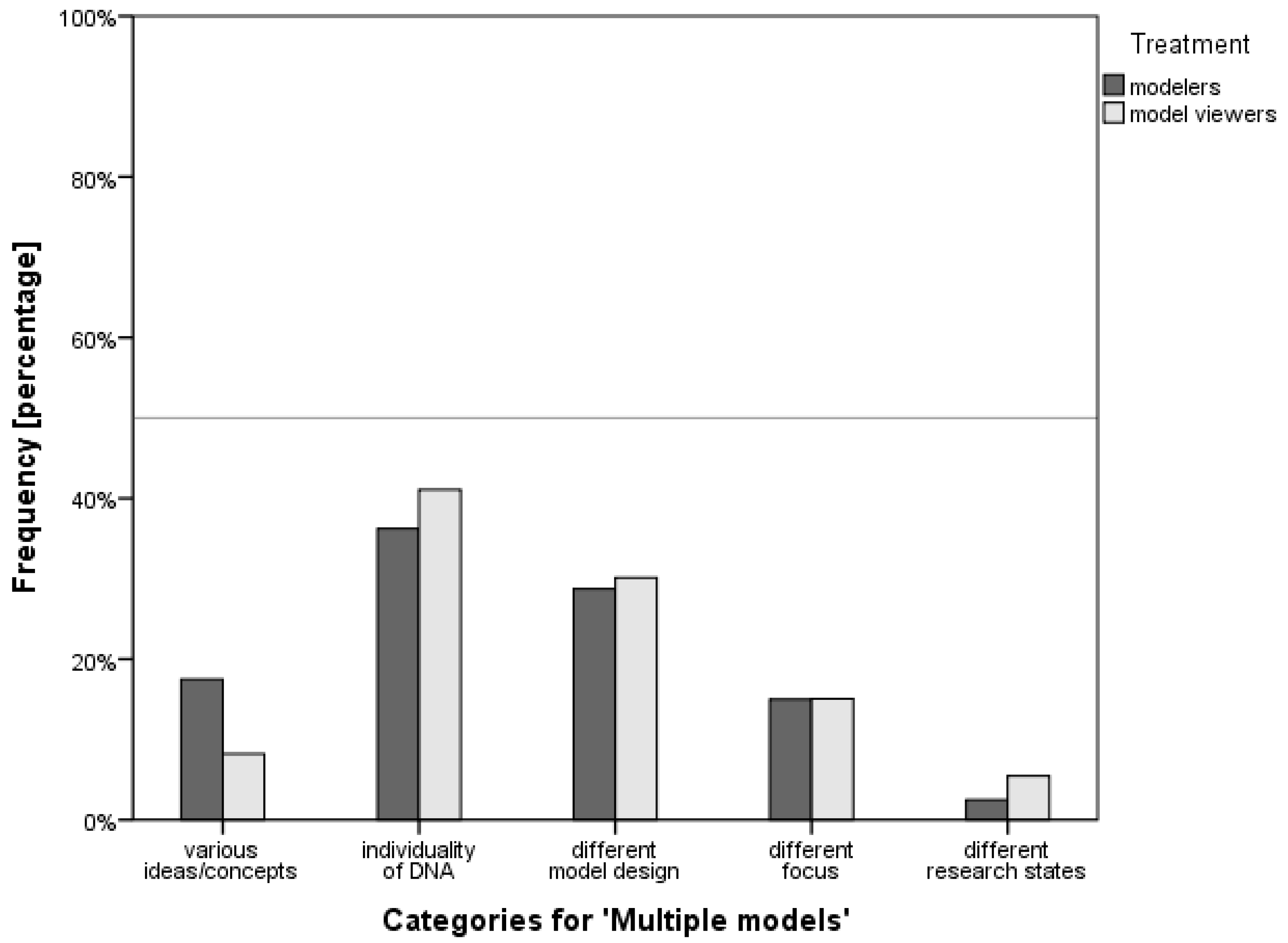

3.1. Qualitative Assessment

3.2. Quantitative Assessment

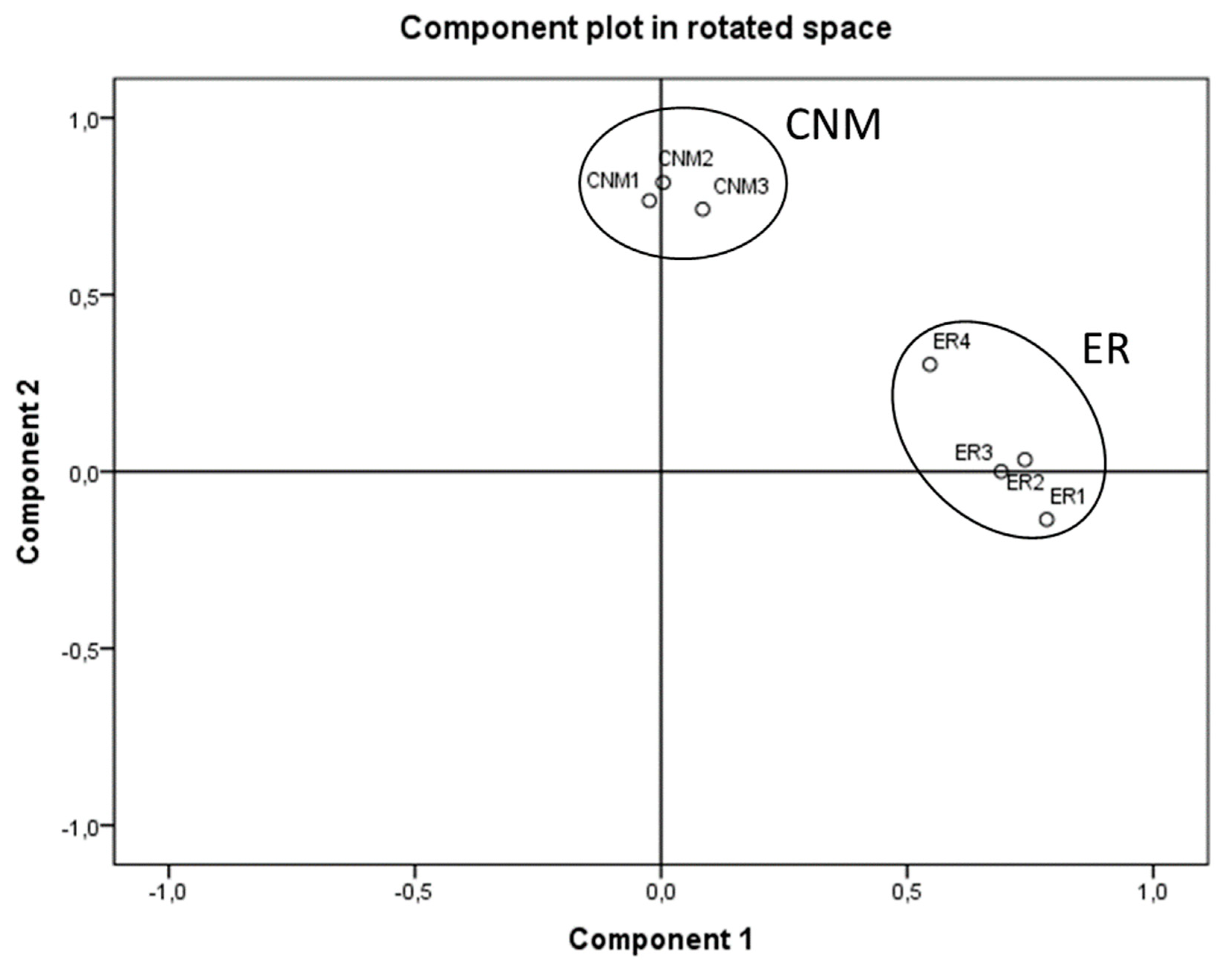

3.2.1. Factor Analysis

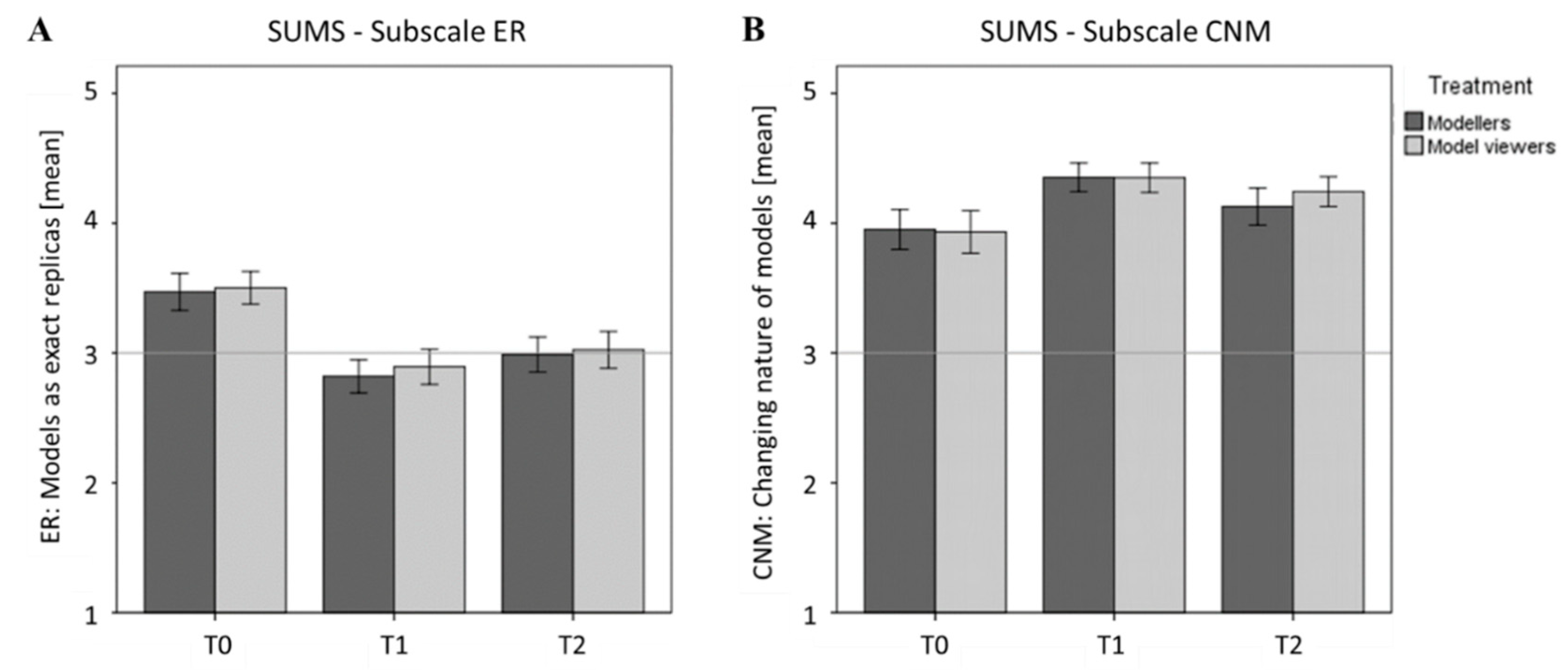

3.2.2. Influences of the Model-Based Approaches on Two Subscales of the SUMS

4. Discussion

4.1. Influences of the Model-Based Approaches on Students’ Understanding of Multiple Models

4.2. Influences of the Model-Based Approaches on Two Subscales of the SUMS

4.3. Limitations of the Study

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Code | Category | Description | Example(s) from the Students’ Answers |
|---|---|---|---|
| MM0 | missings | no or inadequate answer | - |
| MM1 | various ideas/concepts | There can be various ideas about the original and different models are valid at the same time. Differing concepts lead to different interpretations of the data. | ‘Because everyone has different interpretations of a representation, e.g., everyone presents things/components etc. differently.’ |
| MM2 | individuality of DNA | The complexity and the individuality of the original DNA structure result in diverse model versions, especially regarding the representation of possible base sequences. | ‘Every human being is different, so the bases in each person are also arranged differently.’ |
| MM3 | different model design | Differing methods of presentation (e.g., 2D or 3D, different colors, large or small, separated elements or one piece). | ‘Because it can be displayed in different sizes and proportions.’ ‘Each one represents the individual components differently, e.g., in different colors.’ |
| MM4 | different focus | The complexity of the original allows different perspectives or variations of focusing on the original (interior or exterior, different sections or states of the original, etc.) | ‘To explain various ‘properties’, there are for example models where you only see the base pairings, and others where you can see the right-handed double helical structure, etc.’ |
| MM5 | different research states | Integrating new findings about the original into the model and improved technology leads to new findings about the original. | ‘There are more and more new research findings.’ |

| Code | Subscale | Number of Items | | | |
|---|---|---|---|---|---|
| ER | Models as exact replicas | 4 | 0.609 | 0.633 | 0.663 |
| CNM | The changing nature of models | 3 | 0.699 | 0.682 | 0.791 |

| Item | Statement | Component: Factor 1 (ER) | Component: Factor 2 (CNM) |
|---|---|---|---|
| ER1 | A model should be an exact replica. | 0.783 | |
| ER2 | A model needs to be close to the real thing. | 0.739 | |
| ER3 | A model needs to be close to the real thing by being very exact, so nobody can disprove it. | 0.691 | |
| ER4 | Everything about a model should be able to tell what it represents. | 0.546 | |
| CNM2 | A model can change if there are new findings. | | 0.817 |
| CNM1 | A model can change if there are new theories or evidence prove otherwise. | | 0.766 |
| CNM3 | A model can change if there are changes in data or belief. | | 0.742 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Mierdel, J.; Bogner, F.X. Comparing the Use of Two Different Model Approaches on Students’ Understanding of DNA Models. Educ. Sci. 2019, 9, 115. https://doi.org/10.3390/educsci9020115