Interactive Learning with Student Response System to Encourage Students to Provide Peer Feedback

Abstract

1. Introduction

1.1. Peer Review Approach

1.2. Peer Feedback Type

1.3. Student Response Systems Support Interactive Learning

2. Research Questions

- How do the Architecture Education Student (AES) and Engineering Education Student (EES) groups compare as regards the type of feedback students provide to their peers?

- Is there any relationship between the percentage of Opinion-type feedback (critical thinking) and the subsequent assignment scores when compared between the AES and EES groups?

- How do the AES and EES groups compare based on student opinions as to what the good points of being an anonymous assessor are?

- How do the AES and EES groups compare based on the impact of peer feedback activities on the students’ performances as measured by final exam scores?

3. Method

3.1. Context of the Study and Participants

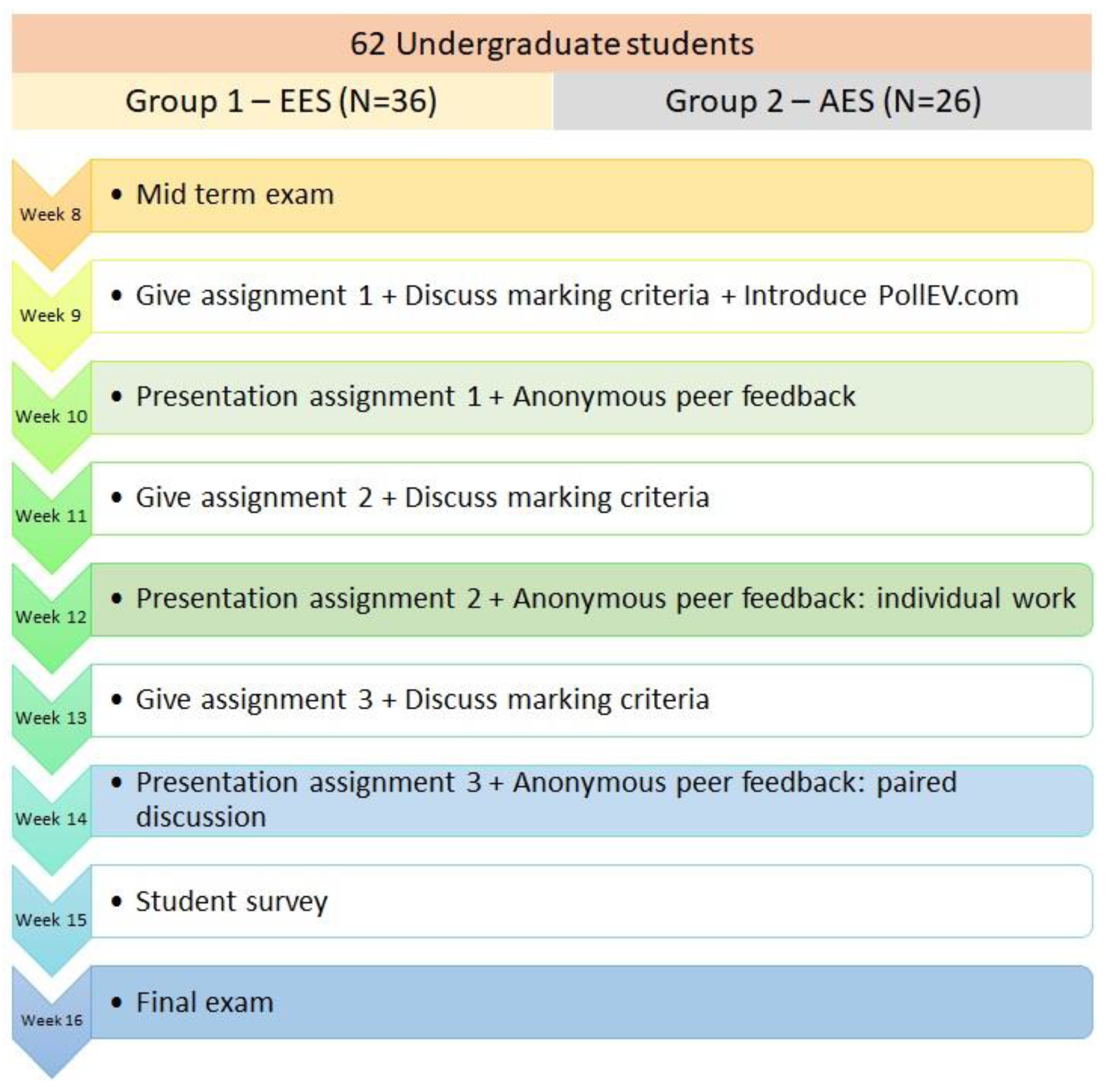

3.2. Experimental Procedure

3.3. Instruments

4. Results and Discussion

4.1. Research Question 1: How Do the AES and EES Groups Compare as Regards the Type of Feedback Students Provide to Their Peers?

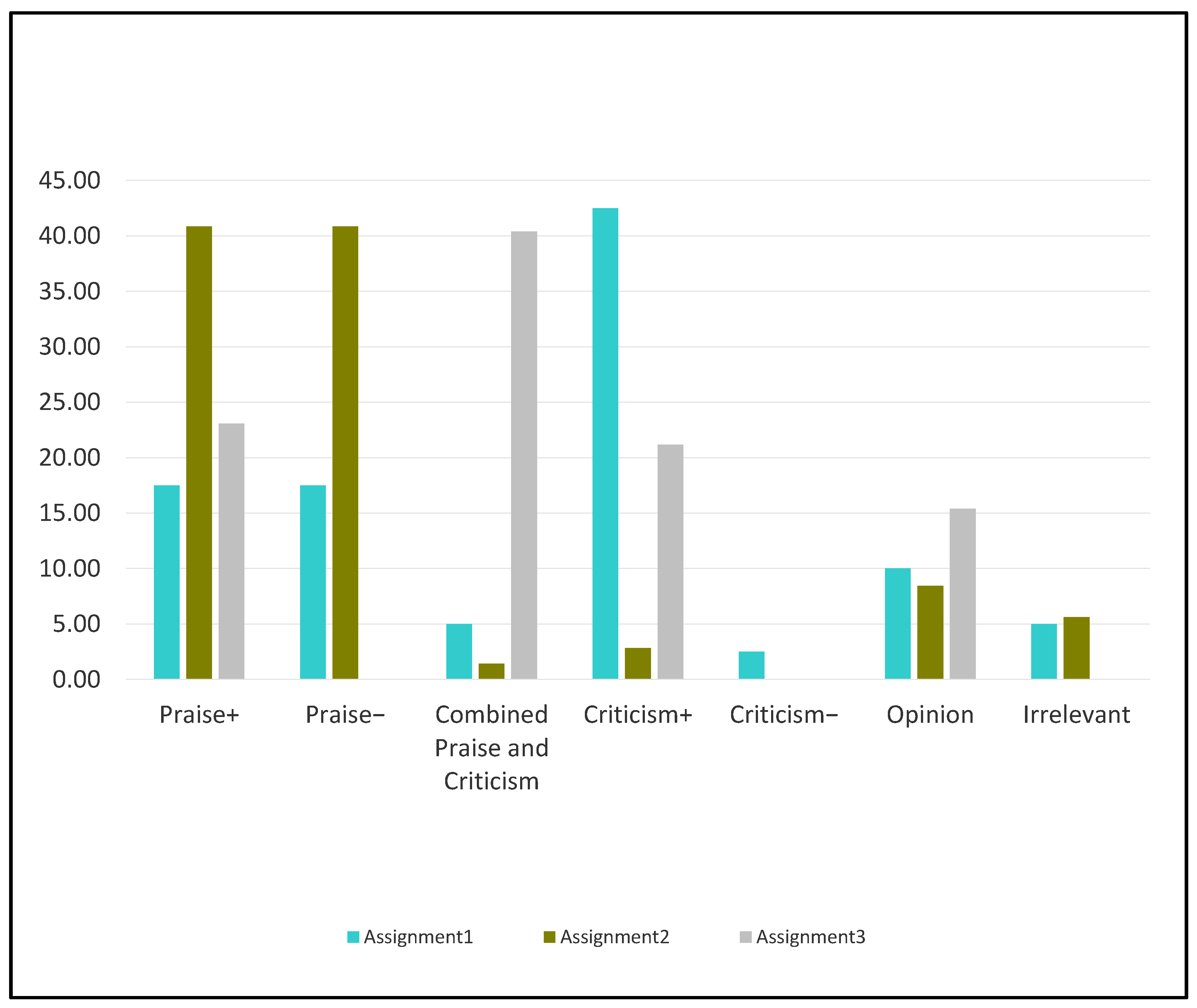

4.1.1. The Analysis of Peer Feedback from Engineering Education Students

“There is no perfect work. Most work always gets a negative comment. I can point out the mistakes of my friend’s work anyway.” [EES30]

“After the first round of peer feedback, I saw many negative feedbacks, and some of them are rude and irrelevant. So for the next round, I tried to point out the good things in order to encourage my classmates and not upset them. I guess most people prefer compliments and polite feedback.” [EES05]

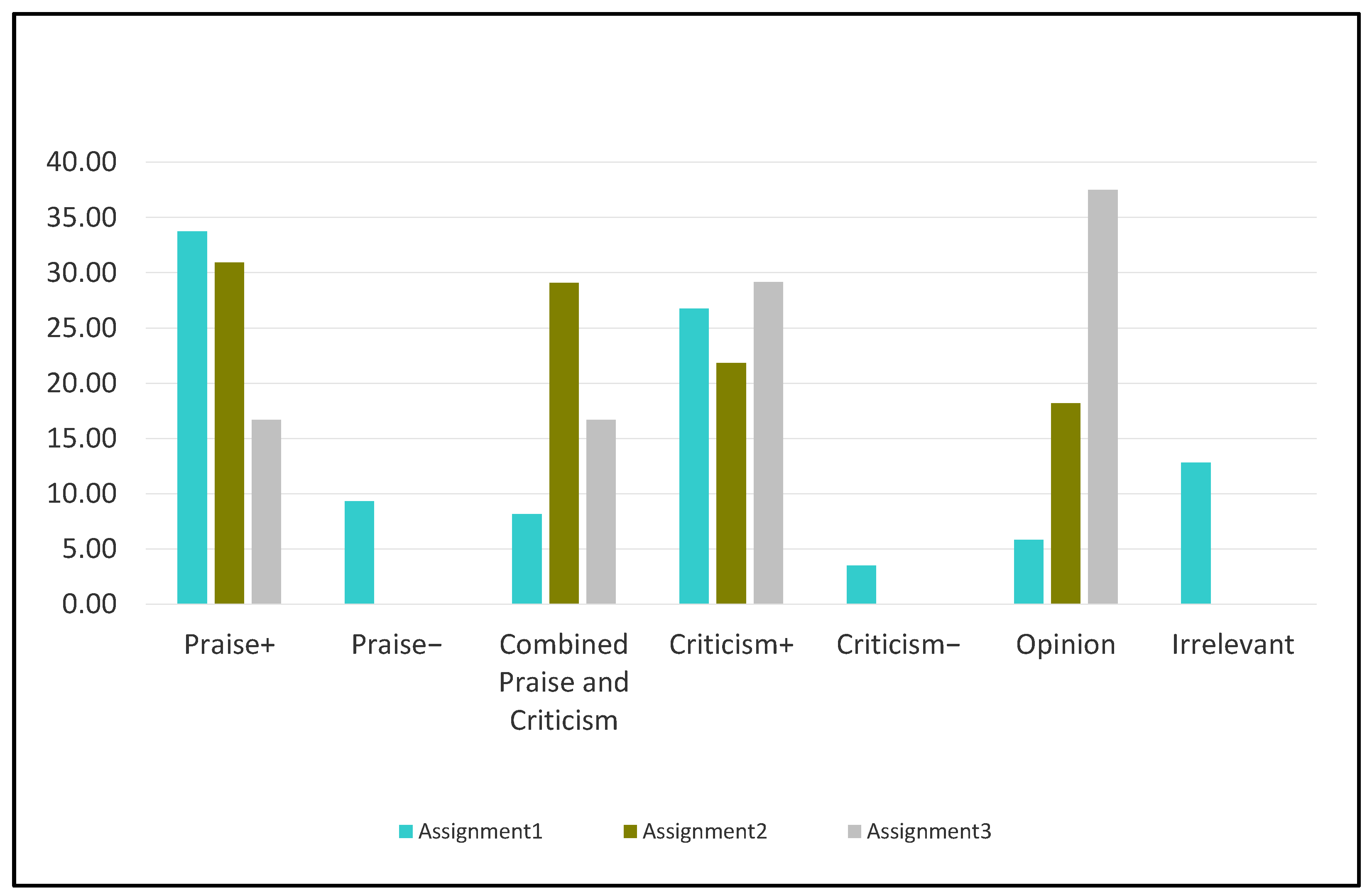

4.1.2. The Analysis of Peer Feedback from Architecture Education Students

“For assignment 3, we have a chance to discuss before giving a paired comment. So we were brainstorming. My partner had an opinion that I didn’t think of. Then we discussed about our different opinions. Finally, we came up with agreement, conclusion. So it’s a high quality of feedback.” [AES18]

However, one student reported: “Paired discussion is a waste of time. We will have different opinions anyway.” [AES06]

“I prefer to give feedback anonymously to my classmates because I feel braver to express my opinions without embarrassment and criticize my friends’ works without hesitating.” [AES10]

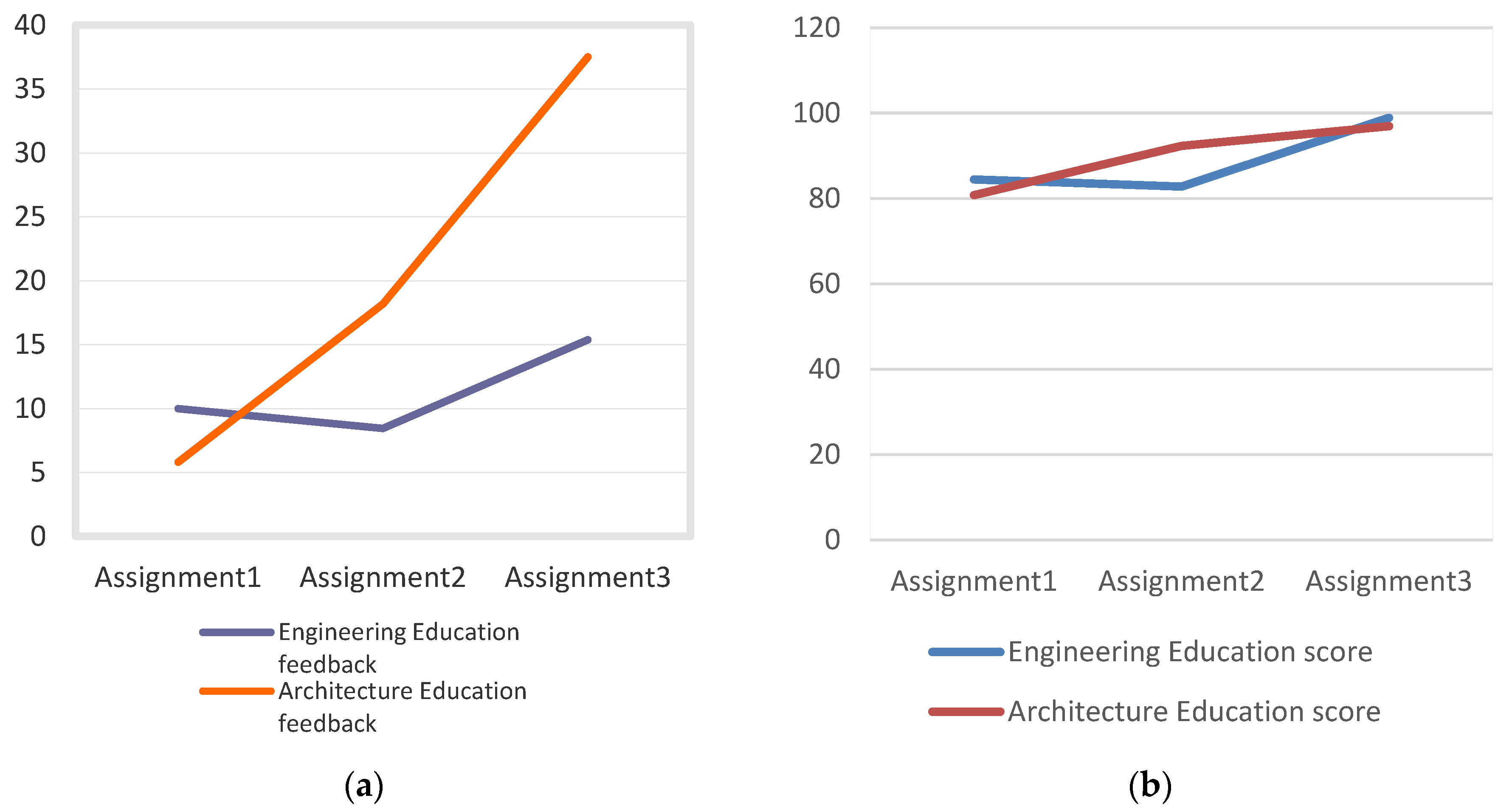

4.2. Research Question 2: Is There Any Relationship between the Percentage of Opinion-Type Feedback (Critical Thinking) and the Subsequent Assignment Scores When Compared between the AES and EES Groups?

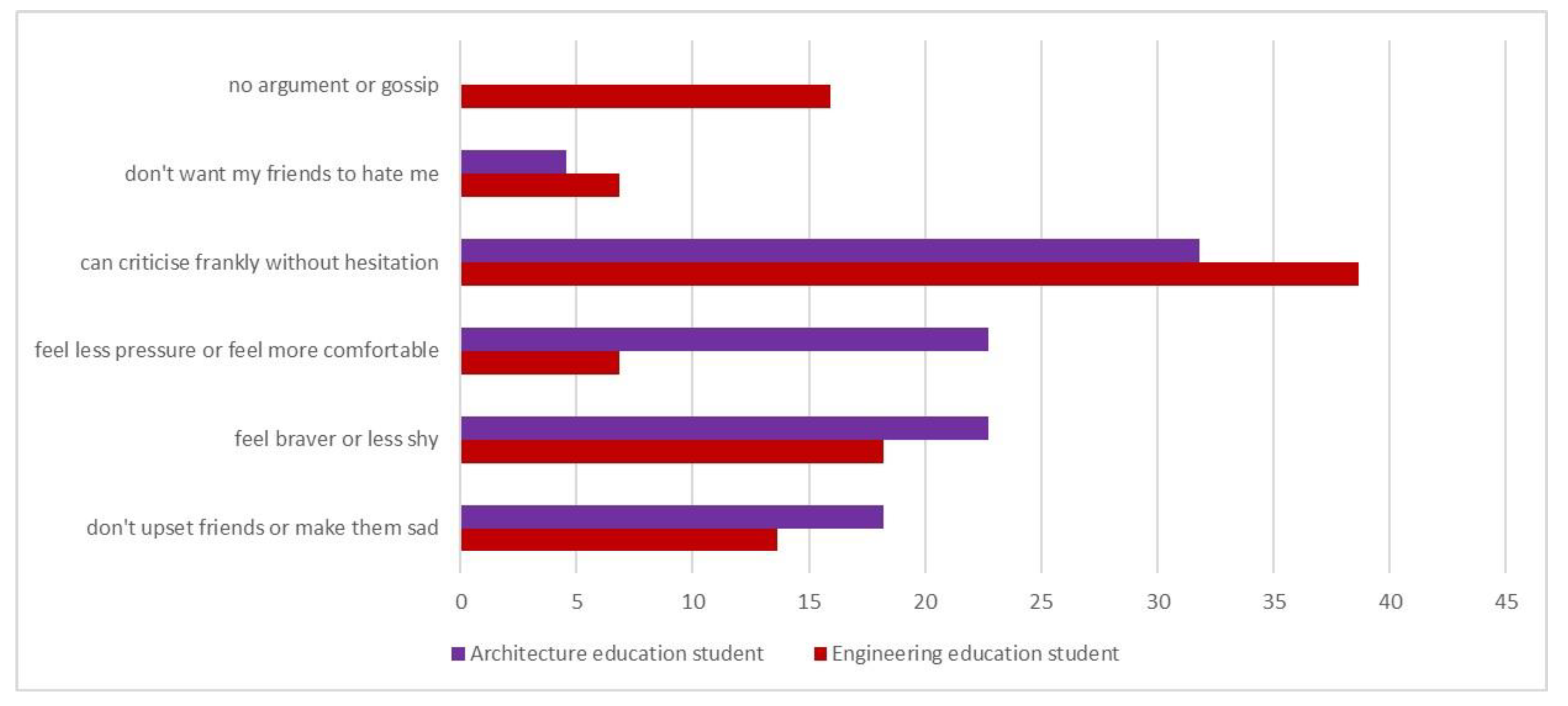

4.3. Research Question 3: How Do the AES and EES Groups Compare Based on Student Opinions as to What the Good Points of Being an Anonymous Assessor Are?

“It’s good that I can provide anonymous feedback or else I will be reluctant to criticize my friends’ work. I couldn’t say what I think exactly. So it’s not my real feedback.” [AES21]

“I prefer to provide feedback anonymously to avoid argument because my friend might be angry and disagree with my comment as he knew it’s my comment.” [EES04]

- Using rude words in feedback: Some students were concerned that assessors might use rude or inappropriate words because their identity is not revealed. Anonymity might therefore encourage impolite or overly frank feedback, with strong wording that could upset the receiver. Further study is required to understand how to reduce rude words in feedback.

- Unreliable feedback: Many studies have compared the quality of peer feedback and teacher feedback in terms of the assessors’ level of knowledge [30,31]. In this study, several students mentioned that friends should help friends. Although the peer feedback process is anonymous, some students do not want to upset their friends, so the quality of feedback does not always reflect the student’s ability and may instead reflect a lenient evaluation among friends. Double marking of the quality of peer feedback is required to make the students take assessor roles more seriously [31]. One of the students made the following comment during our study:

“We are close friends. So I couldn’t upset her with my frank feedback.” [AES01]

4.4. Research Question 4: How Do the AES and EES Groups Compare Based on the Impact of Peer Feedback Activities on the Students’ Performances as Measured by Final Exam Scores?

- Develop new ideas: “I get new ideas from evaluating peer work and from seeing a variety of feedback. Many students point out different issues with some good suggestions that I’ve never thought of. I like to listen to the different opinions.” [AES12]

- Encourage to work better: “Many positive and negative feedbacks from my classmates encourage me to work better. I like my work to be accepted. It’s good to see many feedbacks and this evaluation process makes me pay more attention to marking criteria. So I understand how to gain good scores.” [EES20]

- Realize my own mistakes: “When I evaluate my peer work, I compare it with my own work. So I realize my own mistakes. This peer feedback process makes me think more and learn more from other people’s mistakes and my own mistakes.” [AES15]

- Share knowledge and exchange ideas: “Peer feedback process provides an opportunity to share knowledge and exchange ideas between classmates. So we can learn from each other. It’s good. We don’t learn only how to do the assignment, we also learn how to provide the feedback and see how to improve it from many classmates’ ideas.” [AES09]

- Compare evaluation ability: “Some of my classmates’ comments are similar to my comments. Seeing many peer feedbacks make me aware of my and my classmates’ evaluation abilities. We learn in the same class, same lesson, sometimes we have the same comment, sometime we have different opinions of how to criticize work and how to improve work.” [EES25]

5. Limitations

“My friend who sat next to me read my comment. So this is not a real anonymous tool. We better use this tool in our free time (not in face-to-face classroom) and without the time limit.” [EES14]

“It’s a good website to express anonymous feedback. So I can criticize frankly. I recommend using it in other subjects as well and it will be great if PoolEV.com became more well known for peer feedback activities.” [AES07]

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Anderson, L.W.; Krathwohl, D.R. A Taxonomy for Learning, Teaching and Assessing. A Revision of Bloom’s Taxonomy of Educational Objectives (Abridged ed.); Addison Wesley Longman, Inc.: New York, NY, USA, 2001.

- Kay, R.; MacDonald, T.; DiGiuseppe, M. A comparison of lecture-based, active, and flipped classroom teaching approaches in higher education. J. Comput. High. Educ. 2019, 31, 449–471.

- Carless, D.; Boud, D. The development of student feedback literacy: Enabling uptake of feedback. Assess. Eval. High. Educ. 2018, 43, 1315–1325.

- Nicol, D.; Thomson, A.; Breslin, C. Rethinking Feedback Practices in Higher Education: A Peer Review Perspective. Assess. Eval. High. Educ. 2014, 39, 102–122.

- Chien, S.Y.; Hwang, G.J.; Jong, M.S.Y. Effects of peer assessment within the context of spherical video-based virtual reality on EFL students’ English-Speaking performance and learning perceptions. Comput. Educ. 2020, 146, 103751.

- Rotsaert, T.; Panadero, E.; Schellens, T.; Raes, A. “Now you know what you’re doing right and wrong!” Peer feedback quality in synchronous peer assessment in secondary education. Eur. J. Psychol. Educ. 2017, 33, 1–21.

- Topping, K. Peer assessment between students in colleges and universities. Rev. Educ. Res. 1998, 68, 249–276.

- Falchikov, N.; Goldfinch, J. Student PA in higher education: A meta-analysis comparing peer and teacher marks. Rev. Educ. Res. 2000, 70, 287–322.

- Li, L. The role of anonymity in peer assessment. Assess. Eval. High. Educ. 2017, 42, 645–656.

- Stracke, E.; Kumar, V. Feedback and self-regulated learning: Insights from supervisors’ and PhD examiners’ reports. Reflective Pract. 2010, 11, 19–32.

- Chen, I.-C.; Hwang, G.-J.; Lai, C.-L.; Wang, W.-C. From design to reflection: Effects of peer-scoring and comments on students’ behavioral patterns and learning outcomes in musical theater performance. Comput. Educ. 2020, 150, 103856.

- Tai, J.; Ajjawi, R.; Boud, D.; Dawson, P.; Panadero, E. Developing Evaluative Judgement: Enabling Students to Make Decisions about the Quality of Work. High. Educ. 2018, 76, 467–481.

- Masikunis, G.; Panayiotidis, A.; Burke, L. Changing the nature of lectures using a personal response system. Innov. Educ. Teach. Int. 2009, 46, 199–212.

- Dunn, P.K.; Richardson, A.; Oprescu, F.; McDonald, C. Mobile-phone-based classroom response systems: Students’ perceptions of engagement and learning in a large undergraduate course. Int. J. Math. Educ. Sci. Technol. 2013, 44, 1160–1174.

- Sheng, R.; Goldie, C.L.; Pulling, C.; Luctkar-Flude, M. Evaluating student perceptions of a multi-platform classroom response system in undergraduate nursing. Nurse Educ. Today 2019, 78, 25–31.

- Jones, M.E.; Antonenko, P.D.; Greenwood, C.M. The impact of collaborative and individualized student response system strategies on learner motivation, metacognition, and knowledge transfer. J. Comput. Assist. Learn. 2012, 28, 477–487.

- Wang, Y.-H. Interactive response system (IRS) for college students: Individual versus cooperative learning. Interact. Learn. Environ. 2018, 26, 943–957.

- De Gagne, J.C. The impact of clickers in nursing education: A review of literature. Nurse Educ. Today 2011, 31, e34–e40.

- Boud, D. Sustainable assessment: Rethinking assessment for the learning society. Stud. Contin. Educ. 2000, 22, 151–167.

- Vanderhoven, E.; Raes, A.; Montrieux, H.; Rotsaert, T. What if pupils can assess their peers anonymously? A quasi-experiment study. Comput. Educ. 2015, 81, 123–132.

- Ge, Z.G. Exploring e-learners’ perceptions of net-based peer-reviewed English writing. Int. J. Comput. Support. Collab. Learn. 2011, 6, 75–91.

- Richard, B. Life in a Thai School. 2020. Available online: thaischoollife.comin-a-thai-school/ (accessed on 1 November 2022).

- Camacho-Miñano, M.-d.-M.; del Campo, C. Useful interactive teaching tool for learning: Clickers in higher education. Interact. Learn. Environ. 2016, 24, 706–723.

- Lantz, M.E.; Stawiski, A. Effectiveness of clickers: Effect of feedback and the timing of questions on learning. Comput. Hum. Behav. 2014, 31, 280–286.

- Lu, J.; Law, N. Online peer assessment: Effects of cognitive and affective feedback. Instr. Sci. 2012, 40, 257–275.

- Lundstrom, K.; Baker, W. To give is better than to receive: The benefits of peer review to the reviewer’s own writing. J. Second Lang. Writ. 2009, 18, 30–43.

- Jones, A.G. Audience response systems in a Korean cultural context: Poll everywhere’s effects on student engagement in English courses. J. Asia TEFL 2019, 16, 624–643.

- Li, L.; Steckelberg, A.L.; Srinivasan, S. Utilizing Peer Interactions to Promote Learning through a Web-based Peer Assessment System. Can. J. Learn. Technol./Rev. Can. L’apprentissage Technol. 2009, 34.

- van den Bos, A.H.; Tan, E. Effects of anonymity on online peer review in second-language writing. Comput. Educ. 2019, 142, 103638.

- Sridharan, B.; Tai, J.; Boud, D. Does the use of summative peer assessment in collaborative group work inhibit good judgement? High. Educ. 2019, 77, 853–870.

- Sitthiworachart, J.; Joy, M. Computer support of effective peer assessment in an undergraduate programming class. J. Comput. Assist. Learn. 2008, 24, 217–231.

- Gielen, S.; Tops, L.; Dochy, F.; Onghena, P.; Smeets, S. A comparative study of peer and teacher feedback and of various peer feedback forms in a secondary school writing curriculum. Br. Educ. Res. J. 2010, 36, 143–162.

- Gielen, S.; Peeters, E.; Dochy, F.; Onghena, P.; Struyven, K. Improving the effectiveness of peer feedback for learning. Learn. Instr. 2010, 20, 304–315.

- Cartney, P. Exploring the use of peer assessment as a vehicle for closing the gap between feedback given and feedback used. Assess. Eval. High. Educ. 2010, 35, 551–564.

| Type | Definition | Examples of Comments on Students’ Brochure Designs |
|---|---|---|
| Praise+ | Feedback that is positive or supporting with detailed explanation. | The brochure is excellent. The design is good, with the frame and different font size making the reader understand the content easier. |
| Praise− | Simple feedback that is positive or supporting. | Good job! |
| Combined Praise and Criticism | Feedback that is both positive and negative with detailed explanation. | Text color and background color contrast well, but it is not a good choice of color, as it is too dark. |
| Criticism+ | Feedback that is negative or unfavorable with detailed explanation. | Font and color are not attractive. The fancy font is too small and difficult to read. There is not enough information for the reader. |
| Criticism− | Simple feedback that is negative or unfavorable. | It’s not great! |
| Opinion | Feedback or suggestion that is constructive. | This brochure uses white space well. The title font should be bigger than this. I could not read it. Bullets or arrows should be applied for important information and to attract reader attention. |
| Irrelevant | Feedback that is unrelated to the content or is nonsensical. | I don’t want to go. Good bye! |
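The categorization scheme above lends itself to a simple tally. The following is a minimal sketch, not the authors' analysis procedure: it assumes each peer comment has already been hand-coded with one of the seven types, and it computes the share of each type, including the Opinion-type percentage referred to in Research Question 2. The function name `type_percentages` and the `sample` list are hypothetical and are not data from the study.

```python
from collections import Counter

# The seven feedback types from the table above (ASCII "-" stands in for the minus sign).
FEEDBACK_TYPES = [
    "Praise+",
    "Praise-",
    "Combined Praise and Criticism",
    "Criticism+",
    "Criticism-",
    "Opinion",
    "Irrelevant",
]


def type_percentages(coded_comments):
    """Return the percentage of comments falling into each feedback type."""
    counts = Counter(coded_comments)
    unknown = set(counts) - set(FEEDBACK_TYPES)
    if unknown:
        raise ValueError(f"Unknown feedback types: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        return {t: 0.0 for t in FEEDBACK_TYPES}
    return {t: 100.0 * counts[t] / total for t in FEEDBACK_TYPES}


# Hypothetical coded comments for one assignment (illustrative only, not study data).
sample = [
    "Opinion", "Praise-", "Opinion", "Criticism+",
    "Irrelevant", "Praise+", "Opinion", "Criticism-",
]
percentages = type_percentages(sample)
print(f"Opinion-type feedback: {percentages['Opinion']:.1f}%")
```

Rejecting unknown labels keeps coding typos from silently distorting the percentages.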

| Research Question | Data Source | Data Analysis |
|---|---|---|
| 1 | Peer feedback on the three assignments from the two groups of students | Categorizing the types of peer feedback into seven types |
| 2 | Peer Opinion-type feedback and assignment scores from the three assignments | Descriptive analysis |
| 3 | Students’ perceptions of the good points of being an anonymous assessor | Descriptive and content analysis |
| 4 | Scores on essay writing (final exam) | Independent samples t-test |
| 4 | Students’ agreement (Likert scale) on the sentences regarding peer feedback process motivating them to improve their subsequent assignment and feedback | 10-point scale analysis summing the value of each selected option |
| 4 | Students’ views on the peer feedback process from the open-ended question (e.g., encourage to work better, realize their own mistakes, etc.) | Content analysis |

| | Group | N | Mean | SD |
|---|---|---|---|---|
| Final exam scores | AES | 26 | 7.345 | 1.266 |
| | EES | 36 | 7.059 | 1.473 |
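As an illustration of the independent samples t-test named in the data analysis plan, the sketch below recomputes a t statistic from the summary statistics in the table above. It assumes the equal-variance (pooled) form of the test; the source does not say which variant was used, and the function name `pooled_t_from_summary` is hypothetical. Because it works from rounded means and standard deviations, the result may differ slightly from a test run on the raw scores.

```python
import math


def pooled_t_from_summary(n1, mean1, sd1, n2, mean2, sd2):
    """Student's t statistic and degrees of freedom from group summary statistics.

    Assumes equal population variances (pooled-variance form).
    """
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    std_err = math.sqrt(pooled_var * (1.0 / n1 + 1.0 / n2))
    return (mean1 - mean2) / std_err, df


# Summary statistics from the final exam table: AES first, then EES.
t_stat, df = pooled_t_from_summary(26, 7.345, 1.266, 36, 7.059, 1.473)
print(f"t({df}) = {t_stat:.3f}")
```

The statistic would then be compared against the t distribution with the printed degrees of freedom to obtain a p-value, for example with a statistics package.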

| Strongly Disagree----------Strongly Agree | |||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | ||

| 1 | Evaluating my peers’ work makes me compare it with my own work and thus want to improve it | 3 | 9 | 21 | 9 | 12 | |||||

| 2 | Seeing peer feedback encourages me to improve my own work | 9 | 9 | 12 | 12 | 12 | |||||

| 3 | Seeing peer feedback encourages me to improve my ability to provide feedback | 3 | 6 | 9 | 12 | 12 | 12 | ||||

| 4 | Evaluating my peers’ work inspires my creative thinking | 3 | 9 | 18 | 12 | 12 | |||||
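The data analysis plan describes the Likert items as a "10-point scale analysis summing the value of each selected option". One possible reading, sketched below as an assumption rather than the authors' exact procedure, is a weighted sum: each rating from 1 to 10 is multiplied by the number of students who selected it. The function name `likert_summary` and the counts in `example_counts` are hypothetical and are not taken from the table above.

```python
def likert_summary(counts_by_rating, scale_max=10):
    """Return (weighted sum, number of responses, mean rating) for one item.

    counts_by_rating maps a rating (1..scale_max) to how many students chose it.
    """
    for rating in counts_by_rating:
        if not 1 <= rating <= scale_max:
            raise ValueError(f"Rating {rating} is outside 1..{scale_max}")
    n = sum(counts_by_rating.values())
    if n == 0:
        raise ValueError("No responses recorded")
    total = sum(rating * count for rating, count in counts_by_rating.items())
    return total, n, total / n


# Hypothetical response counts for a single statement (illustrative only).
example_counts = {6: 2, 7: 3, 8: 5, 9: 4, 10: 6}
total, n, mean = likert_summary(example_counts)
print(f"sum = {total}, n = {n}, mean = {mean:.2f}")
```

Reporting the mean alongside the sum makes items comparable when the number of respondents differs between statements.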

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Sitthiworachart, J.; Joy, M.; Ponce, H.R. Interactive Learning with Student Response System to Encourage Students to Provide Peer Feedback. Educ. Sci. 2023, 13, 310. https://doi.org/10.3390/educsci13030310
