Student Learning Approaches: Beyond Assessment Type to Feedback and Student Choice

Abstract

1. Introduction

1.1. Student Approaches to Learning and Assessment

1.2. Student Feedback Preferences

1.3. The Present Study

2. Materials and Methods

2.1. Participants

2.2. Study Design

2.3. Procedure

2.4. Data Processing

3. Results

3.1. Sample Characterisation and Feedback Preferences

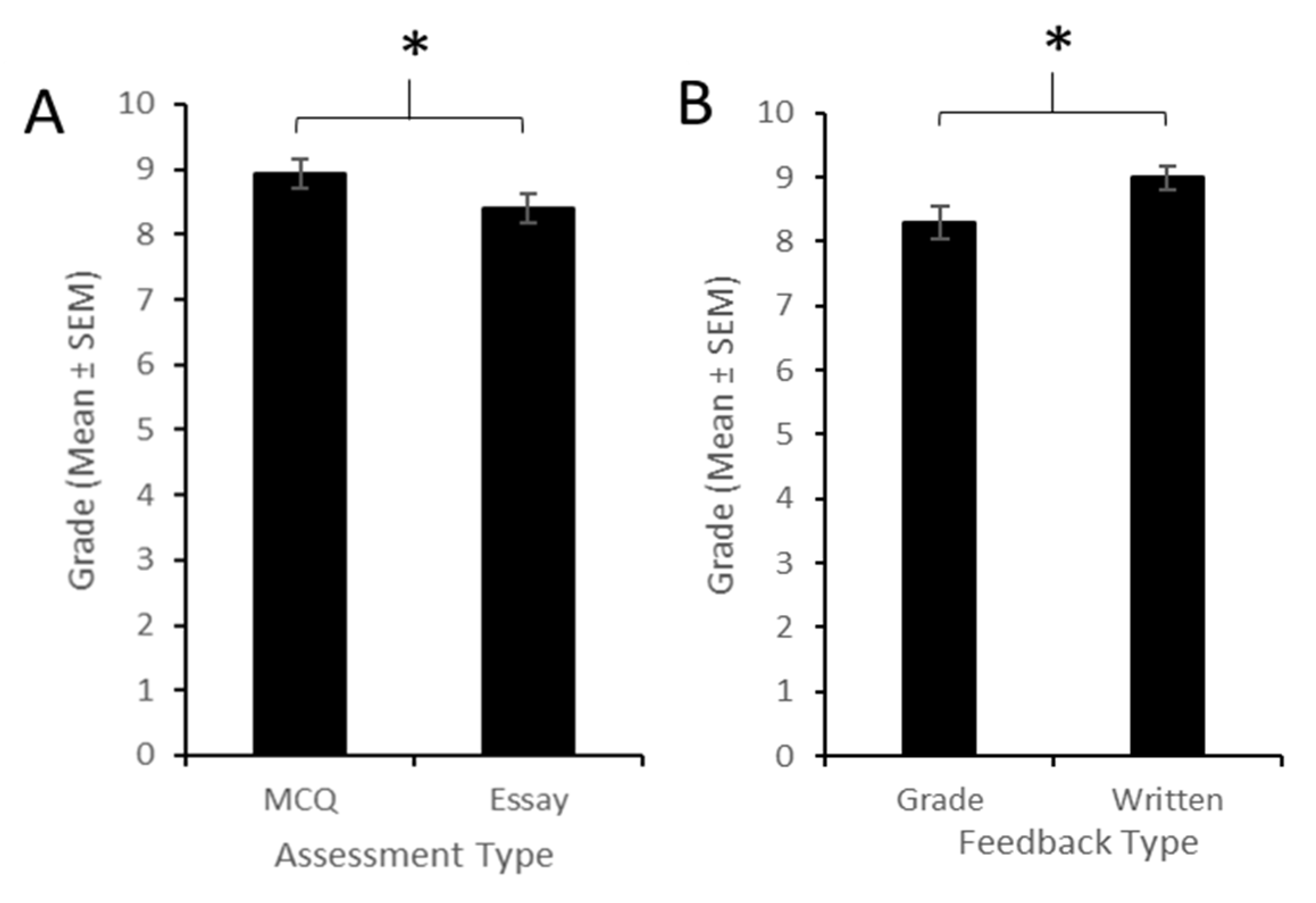

3.2. Outcome Measures

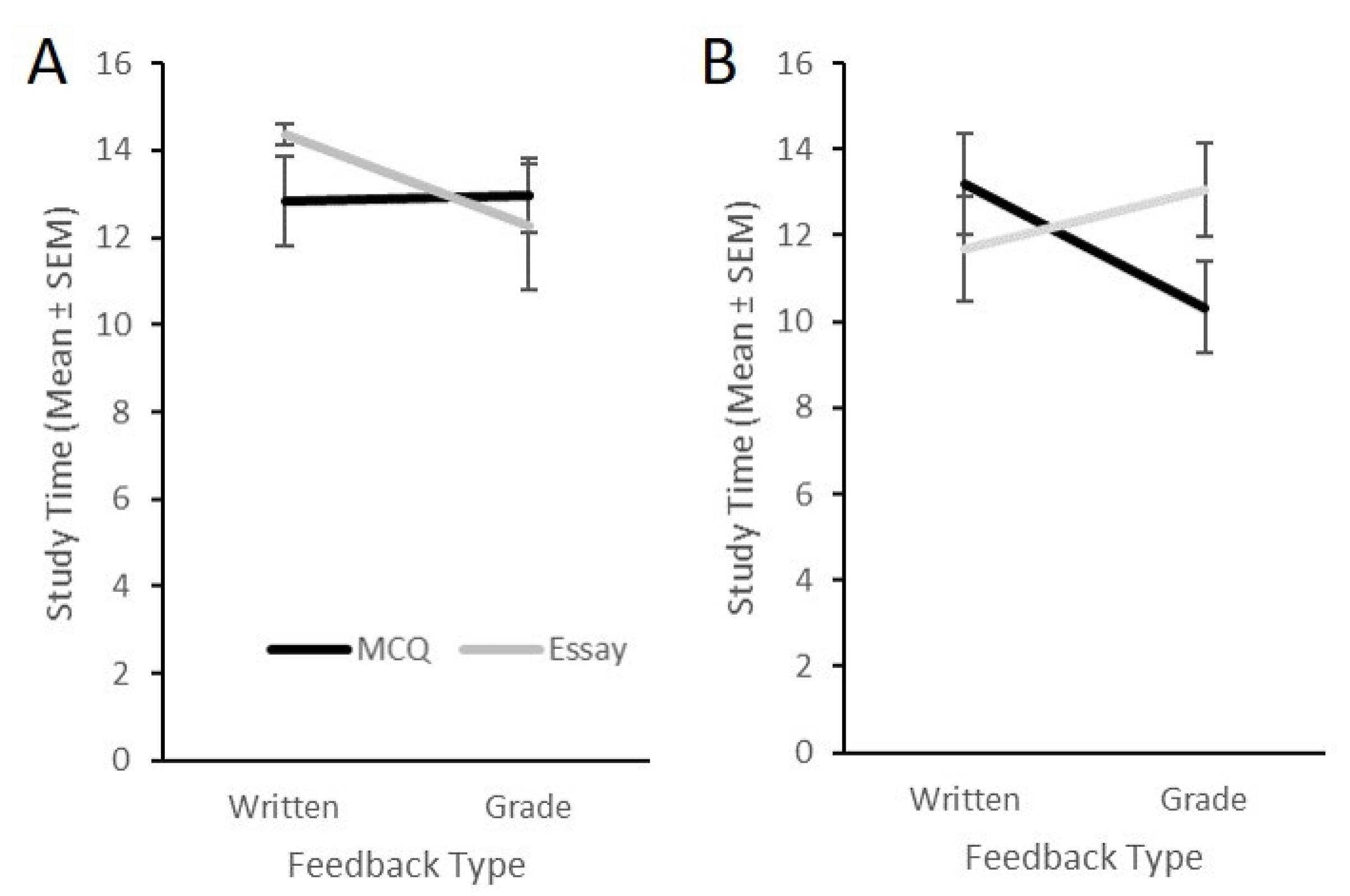

3.3. Student Learning Approach: Assessment, Feedback and Choice

4. Discussion

4.1. The Relationship between Assessment Type and Learning Approach

4.2. Feedback Preferences and the Impact of Expected Feedback on Learning Approach

4.3. Evaluation of the Present Study and Future Research

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Assessment Type (N) | Feedback Choice (N) | Feedback Type (N) |
|---|---|---|
| MCQ (31) | Yes (15) | Written (7) |
| | | Grade (8) |
| | No (16) | Written (8) |
| | | Grade (8) |
| Short essay (32) | Yes (16) | Written (11) |
| | | Grade (5) |
| | No (16) | Written (8) |
| | | Grade (8) |

| | Low | Moderate | High |
|---|---|---|---|
| Volume of notes | 31 | 26 | 6 |
| Use of diagrams | 57 | 6 | 0 |
| Level of detail | 35 | 24 | 4 |

| Variable | Scale Range | Mean (SD) | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|---|---|
| 1. Grade | 1–10 | 8.67 (1.28) | | | | | | | | |
| 2. Study Time | 0–15 | 12.67 (2.84) | 0.296 * | | | | | | | |
| 3. Assessment Time | 0–15 | 5.96 (4.37) | −0.162 | 0.160 | | | | | | |
| 4. Deep Learning | 10–50 | 29.87 (5.80) | 0.025 | 0.050 | −0.141 | | | | | |
| 5. Surface Learning | 10–50 | 25.25 (7.22) | −0.061 | −0.126 | −0.054 | −0.603 ** | | | | |
| 6. Deep Motive | 5–25 | 15.25 (3.47) | 0.045 | 0.037 | −0.106 | 0.832 ** | −0.583 ** | | | |
| 7. Deep Strategy | 5–25 | 14.62 (3.49) | 0.004 | 0.045 | −0.129 | 0.835 ** | −0.567 ** | 0.390 ** | | |
| 8. Surface Motive | 5–25 | 12.05 (4.58) | −0.019 | −0.154 | −0.041 | −0.555 ** | 0.828 ** | 0.578 ** | −0.348 ** | |
| 9. Surface Strategy | 5–25 | 13.84 (4.29) | −0.124 | 0.049 | −0.047 | −0.423 ** | 0.801 ** | −0.415 ** | −0.415 ** | 0.327 ** |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Clack, A.; Dommett, E.J. Student Learning Approaches: Beyond Assessment Type to Feedback and Student Choice. Educ. Sci. 2021, 11, 468. https://doi.org/10.3390/educsci11090468
