1. Introduction

More than 740 million people (10%) depend on catching, measuring, producing, and selling fish and seafood [1]. This dependence on fishing-related livelihoods is steadily increasing. In developing maritime countries, which account for the largest portion of the world’s fish catch and production, fish are the principal source of income. These countries also represent 97% of the global fishing workforce [2]. The same holds for the overwhelming majority of small-scale fishermen, for whom fishing not only forms the basis of their earnings but also constitutes an essential part of their daily nourishment.

The oceans are home to more than 20,000 species of fish [3], some of which are consumable, while others are not. Continuous overfishing of sea resources not only endangers many species of fish but also threatens the balance of the entire ecosystem. An intelligent classification system for fishing will help fishermen distinguish protected fish species in their catches, helping to prevent illegal activities and protect these species. The existing state-of-the-art fish classification models considered a limited number of fish species [4] and did not assess fish consumability status despite its importance [5]. Consumability could be a strong indicator for distinguishing protected and dangerous fish species from their commercial and consumable counterparts.

This work considers fish classification over a remote fishing environment in Indonesia and develops an edge intelligence (EI) strategy to overcome unstable network connections in the sea’s remote areas. The framework consists of a state-of-the-art lightweight machine learning model based on MobileNet and low-communication-overhead ML libraries for resource-constrained edge devices (e.g., smartphones). Compared to the existing approaches, we propose a practical solution that can accommodate massive-scale and heterogeneous Internet of Things (IoT) deployments.

Unlike simple batch-based learning approaches, we aim to develop portable ML libraries for local computation with limited dependency on remote computing libraries. The proposed method is designed to run on a compact, low-resource machine in remote areas. In particular, we use an NVIDIA GeForce GTX 860M GPU (NVIDIA Corp., Santa Clara, CA, USA), a low-specification mobile graphics chip manufactured in 2014 on a 28 nm process, together with 16 GB of DDR3 RAM. The resource efficiency of the strategy also comes from the possibility of customizing it according to the characteristics of the target EI application.

Although identifying fish species can be time-consuming and laborious, it is a mandatory procedure for both industrial and research fishing boats. Aboard research fishing boats, fish species are often assessed manually. For instance, the length of the fish is estimated manually, with one person measuring the length using a measuring board while another person records the data on a personal computer [6]. Automatic fish-length measurement in the laboratory using computer vision methods has been explored in [6], demonstrating results with errors of less than 1 cm.

There has been a growing interest in fish classification in the recent literature [7,8,9,10]. In particular, several approaches to fish classification have used deep learning models [11,12,13,14,15,16,17,18,19,20]. Various MobileNet-based approaches have been explored, including MobileNet [21] and MobileNetV2 [22], as well as other related architectures such as VGG16 [23], ResNet50 [24], EffNet [25], CapsNet [26], ShuffleNet [27], MnasNet [28], and Xception [29]. MobileNets emerge as promising candidates for the next wave of deep learning methods in object detection and classification, and are particularly well suited to handling large datasets. MobileNets [21,22] rely on a streamlined architecture that applies depth-wise separable convolutions to build lightweight deep neural networks. MobileNets have been implemented in many applications such as traffic density estimation [30], redundancy reduction [31], skin classification [32], FPGA implementation [33], vehicle counting [34], multi-fruit detection [35], fish species classification [36], and object detection on non-GPU architectures [37].

In this work, we propose an enhanced MobileNet model called M-MobileNet (Modified-MobileNet) that focuses on halving the number of parameters in the top layer of the CNN and improving the accuracy of deployment in low-specification devices (i.e., GeForce GTX 860M). Moreover, in our M-MobileNet the total number of parameters was reduced by 494,056 parameters, representing 12% of the total (4,253,864 parameters) used in the conventional MobileNet architecture. This reduction represents, among others, a contribution that addresses the need for computational efficiency in resource-constrained settings.

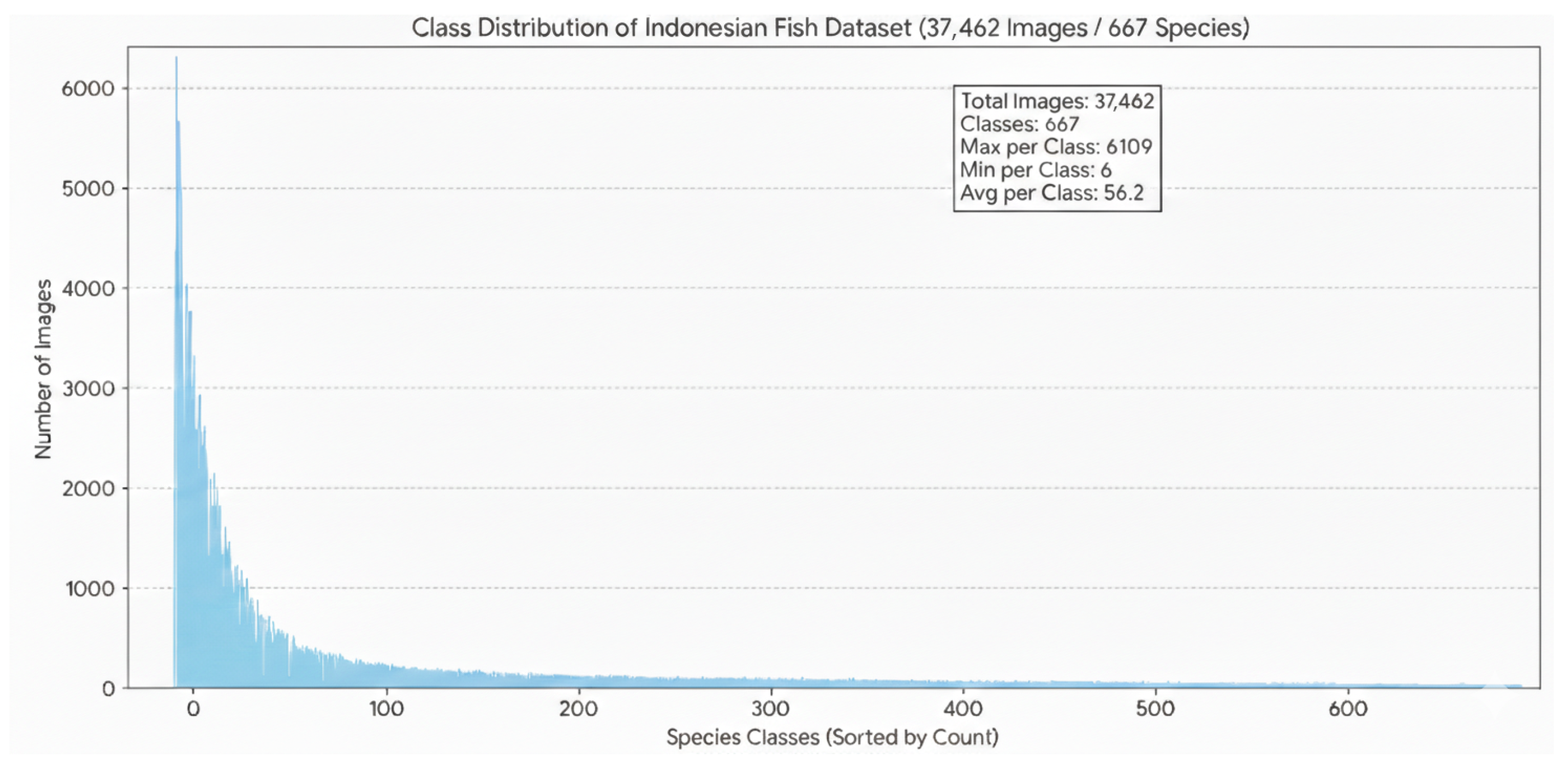

Furthermore, given the distinctiveness of Indonesian fish species, we curated a dedicated fish dataset comprising images and species labels for 37,462 specimens, along with a consumability index. We determined the fish species’ status by considering their poisonous, traumatogenic, and venomous characteristics, relying on insights from the fisherfolk. The dataset encompasses a total of 667 distinct fish species and serves as the training set for the proposed M-MobileNet model. The results of our study indicate that M-MobileNet outperforms the existing benchmark methods.

The summary of the key contributions of this study is given below:

1. Lightweight Model Design: We introduce M-MobileNet, a streamlined modification of the MobileNet architecture. By reducing the total parameter count by 12% (approx. 0.5 million fewer parameters), the model significantly lowers the computational burden. Practically, this translates to a GPU utility of 43% and memory utility of 14.6% on a standard GTX 860M, compared to 98% and 65%, respectively, for heavier models like VGG16. This efficiency ensures the system does not saturate the hardware, preserving computational capacity for other critical vessel operations.

2. Custom Fish Dataset: To enhance the specificity of our study, we curate an original and dedicated Indonesian fish dataset, comprising 37,462 images representing 667 distinct fish species. This dataset serves as the training foundation for our proposed M-MobileNet model.

3. Implementation and Validation: We implement and numerically validate the M-MobileNet model on low-resource hardware (GTX 860M). The results demonstrate that the 12% reduction in parameters effectively lowers the memory footprint without compromising accuracy (97%), validating the model’s suitability for real-time deployment on energy-constrained maritime edge devices.

4. Extension to Consumability Classifier: Building on our species classifier, we extend its functionality to serve as a consumability classifier. This enhancement involves integrating it with an existing consumability fish database.


The remainder of this paper is organized as follows. In Section 2, we review recent literature pertinent to the problem of deploying deep learning technology in remote fishing areas, including the issues related to remote communications, limited hardware resources, and consumability. Section 3 provides details of the modified lightweight deep learning model M-MobileNet for fish classification. We also describe the custom fish dataset created for our study. Section 4 presents and analyzes the results of comparing M-MobileNet with the benchmark models. Section 5 concludes the paper by providing a summary of the study and offering recommendations for future research.

3. Proposed M-MobileNet

In this section, we discuss the main components of the proposed approach for efficient fish classification, including (i) data collection, (ii) data augmentation, (iii) modification of the MobileNet architecture to obtain an efficient lightweight deep learning model, and (iv) transfer learning. Figure 1 shows the proposed M-MobileNet in detail.

3.1. Data Acquisition and Ethics

Capture Protocol: The dataset was constructed through a collaborative partnership with the Indonesian Traditional Fishermen’s Association (KNTI). Images were collected directly onboard operational fishing vessels using the crews’ personal devices, resulting in a heterogeneous mix of resolutions and sensor qualities (ranging from entry-level smartphones to digital pocket cameras). This variability is intentional, designed to train the model on the varying lighting conditions, angles, and backgrounds (e.g., wet decks, plastic trays) typical of real-world maritime environments.

Annotation and Quality Control: The annotation process followed a two-stage protocol. First, specimens were labeled by the fishermen using local vernacular names. Second, these labels were mapped to their scientific genus/species equivalents and cross-referenced with the FishBase database [52] to ensure taxonomic validity. This verification step corrected regional naming inconsistencies, serving as a robust alternative to statistical inter-rater reliability measures (e.g., Cohen’s $\kappa$).

Ethical Statement: This study relies exclusively on observational data (images) of fish harvested during standard commercial fishing activities. No live animals were experimented upon, injured, or sacrificed for the specific purpose of this research. Consequently, formal Institutional Animal Care and Use Committee (IACUC) approval is not applicable.

The original images taken onboard the fishing boats had different dimensions because the crews used different photographic equipment. During the processing stage, the images were homogenized and scaled to 224 × 224 pixels. The original RGB values, in the range 0–255, were rescaled to the range 0–1, since unscaled values would be too large for the model to process efficiently under low-resource computation. Because some diversity in the data helps the model generalize to unseen images, the fish were photographed without a fixed protocol: some images were taken underwater and others out of the water, at various unspecified angles and distances.

The images are categorized based on their species, genus, family, and order. The proposed model is trained on the final dataset to classify the species of fish. The data is split into training and test sets containing 29,970 and 7,492 images, respectively. The images were categorized into 283 genera of fish from 667 species. Furthermore, the data was synced with FishBase [52], a provider of fish information around the world, to determine whether each specimen is consumable or not.

The resulting dataset comprises 37,462 images across 667 classes. The class distribution exhibits a long-tail characteristic typical of biodiversity datasets, where commercial species are over-represented. A detailed histogram of the class distribution and the MD5 checksums for dataset integrity verification are provided in Figure 2.

3.2. Data Augmentation

To improve classifier performance on unseen data, we augment the original training set with various modified images. The goal of data augmentation is to expose the classifier to a larger variety of images, thereby improving robustness to image distortions. The augmentation techniques alter the array data significantly while often remaining imperceptible to humans. The data was augmented using the following transformations with specific hyperparameter settings:

Rescale: The original image RGB coefficients (0–255) are rescaled to a range between 0 and 1 by multiplying by a factor of 1/255.

Width Shifting: Images are shifted horizontally with a floating-point range of 0.2, representing the fraction of the total width.

Height Shifting: Images are shifted vertically with a floating-point range of 0.2, representing the fraction of the total height.

Shear: A shear intensity of 0.2 (shear angle in degrees) is applied to transform images by stretching them.

Zoom: A zoom range of 0.2 is applied. This randomly zooms the image in or out by a factor within the range [0.8, 1.2].

Flip: Images are randomly flipped horizontally to account for orientation variability.

Fill: The “nearest” fill mode is utilized, repeating the closest pixel values to fill empty areas created by the transformations.

Rotation: Images are rotated randomly by up to 40 degrees.

RGB Channel Shifting: To simulate the highly variable lighting conditions of maritime environments (e.g., direct sunlight, overcast, dawn), we applied random RGB channel shifting. This was configured with a channel_shift_range of 20.0, which adds a random value sampled from $[-20, 20]$ to the pixel intensity of each color channel independently, so that the model remains robust to color temperature variations.

Implementation-wise, the augmentation pipeline was executed using the ImageDataGenerator class of the TensorFlow 2.10 Keras API.
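The augmentation settings listed in this subsection can be collected into a single configuration. The following is a minimal sketch, assuming the standard keyword arguments of the Keras `ImageDataGenerator`; the names `AUGMENTATION_KWARGS`, `build_generator`, and `zoom_bounds` are illustrative and are not taken from the original implementation. TensorFlow is imported lazily so that the configuration can be inspected on a machine without it.

```python
# Augmentation settings from Section 3.2, written as keyword arguments for
# tf.keras.preprocessing.image.ImageDataGenerator (illustrative sketch).
AUGMENTATION_KWARGS = {
    "rescale": 1.0 / 255,         # map RGB values from 0-255 to 0-1
    "width_shift_range": 0.2,     # fraction of total width
    "height_shift_range": 0.2,    # fraction of total height
    "shear_range": 0.2,
    "zoom_range": 0.2,            # zoom factor drawn from [0.8, 1.2]
    "horizontal_flip": True,
    "fill_mode": "nearest",
    "rotation_range": 40,         # degrees
    "channel_shift_range": 20.0,  # random per-channel intensity shift
}


def build_generator():
    """Create the generator; requires TensorFlow to be installed."""
    from tensorflow.keras.preprocessing.image import ImageDataGenerator

    return ImageDataGenerator(**AUGMENTATION_KWARGS)


def zoom_bounds(zoom_range):
    """A scalar zoom_range z is interpreted by Keras as [1 - z, 1 + z]."""
    return (1.0 - zoom_range, 1.0 + zoom_range)
```

Keeping the settings in one dictionary makes it straightforward to log the exact augmentation configuration alongside each training run.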

3.3. MobileNet

MobileNet was originally developed by Google. It is based on a streamlined architecture that uses depth-wise separable convolutions to build lightweight deep neural networks. It was introduced as an efficient deep-learning model for mobile and embedded vision applications. The efficiency and low resource usage of MobileNet have led to its adoption in mobile devices such as smartphones. Given the limited computing capacity onboard remote fishing boats, MobileNet provides an attractive base model to implement our fish classification model.

3.4. Modified MobileNet

We modify the original MobileNet architecture to fit our purposes in the context of resource-constrained optimization. To this end, several changes to the original model are implemented, including reducing the number of top-layer parameters, using a new activation function (swish), and introducing batch normalization within the model. The final architecture of the proposed classification model is shown in Figure 3.

To construct our proposed modified MobileNet model (M-MobileNet), we reduce the total number of parameters while keeping the CNN layers unchanged. Concretely, the CNN layers of M-MobileNet are kept exactly the same as the original MobileNet, while the number of parameters in the fully connected top layers of M-MobileNet is reduced to around 531,000 compared to around 1,025,000 in the original MobileNet model. Thus, we obtain a lighter version of the original model that is faster and smaller. Due to its reduced size, M-MobileNet can be employed on edge computing devices.
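The parameter counts quoted in the paper can be cross-checked with a few lines of arithmetic. The sketch below assumes that the original top layer maps 1024 features to 1000 outputs, which reproduces the figure of about 1,025,000; this layer shape is an assumption made for illustration, and the layer sizes of the reduced head are not reconstructed here, only its total.

```python
def dense_params(inputs, outputs):
    """Weights plus biases of a fully connected (or 1x1 convolution) layer."""
    return inputs * outputs + outputs


MOBILENET_TOTAL = 4_253_864  # total parameters quoted for conventional MobileNet
REMOVED = 494_056            # parameters removed in M-MobileNet

# Assumption: the original head maps 1024 features to 1000 outputs.
original_head = dense_params(1024, 1000)

# Only the top layers change, so the reduction comes entirely from the head.
reduced_head = original_head - REMOVED
m_mobilenet_total = MOBILENET_TOTAL - REMOVED
reduction_share = REMOVED / MOBILENET_TOTAL

print(f"original head: {original_head:,}")
print(f"reduced head:  {reduced_head:,}")
print(f"M-MobileNet:   {m_mobilenet_total:,}")
print(f"share removed: {reduction_share:.1%}")
```

Under this assumption the head shrinks from 1,025,000 to 530,944 parameters (roughly half), and the whole model from 4,253,864 to 3,759,808 parameters, a reduction of about 11.6%, which the paper rounds to 12%.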

The second key modification involves the adoption of the swish activation function, denoted as $S(x)$, originally introduced by Google. This function has demonstrated superior performance compared to ReLU in various scenarios. The selection of the activation function within the network significantly influences training dynamics and can enhance classification performance. The swish activation is closely related to the traditional sigmoid activation function, denoted by $\sigma(x)$:

$$S(x) = x\,\sigma(x) = \frac{x}{1 + e^{-x}},$$

where $x$ denotes the input. The utility of the swish activation can be optimized when used in conjunction with batch normalization, which has a gradient squishing property. Batch normalization allows faster and more stable training of the neural network by normalizing the layers’ inputs through re-centering and rescaling. Batch normalization is performed when $x$ goes through a mini-batch $B$ of size $m$ with mean $\mu_B$ and variance $\sigma_B^2$. The inputs of each layer are separately normalized and denoted by

$$\hat{x}_i^{(k)} = \frac{x_i^{(k)} - \mu_B^{(k)}}{\sqrt{\left(\sigma_B^{(k)}\right)^2 + \epsilon}},$$

where $k \in [1, d]$ and $i \in [1, m]$ ($d$ is the dimension and $m$ is the mini-batch size). $\mu_B^{(k)}$ and $\left(\sigma_B^{(k)}\right)^2$ are, respectively, the mean and variance for each layer. The constant $\epsilon$ is a small parameter to prevent numerical instability.
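To make the two operations in this subsection concrete, the following is a minimal pure-Python sketch, assuming the usual definitions: swish as the input multiplied by its sigmoid, and batch normalization as re-centering by the mini-batch mean and rescaling by the square root of the variance plus a small constant. The function names and the default `epsilon` are illustrative choices, and the learnable scale and shift parameters of a full batch normalization layer are omitted.

```python
import math


def sigmoid(x):
    """Numerically stable logistic sigmoid."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def swish(x):
    """Swish activation: the input scaled by its own sigmoid."""
    return x * sigmoid(x)


def batch_norm(values, epsilon=1e-5):
    """Normalize one feature over a mini-batch (no learnable scale or shift)."""
    m = len(values)
    mean = sum(values) / m
    variance = sum((v - mean) ** 2 for v in values) / m
    return [(v - mean) / math.sqrt(variance + epsilon) for v in values]


# Normalize a small mini-batch, then apply swish to the normalized values.
activations = [swish(v) for v in batch_norm([1.0, 2.0, 3.0])]
```

Normalizing before the activation keeps the inputs near zero, which is the region where swish differs most from ReLU.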

3.5. Hierarchical Categorization Strategy

The system operates using a two-stage inference logic. The primary computational task is species classification, where the M-MobileNet model predicts the specific taxonomic identity of the input image from 667 possible classes. Once the species is identified, the system performs a secondary consumability determination. This is not a learned binary classification task but a deterministic lookup operation. The predicted species ID is queried against our synchronized FishBase dictionary to retrieve its safety status (consumable vs. non-consumable/poisonous). Consequently, the system’s ability to correctly determine edibility is directly dependent on the accuracy of the fine-grained species classification.
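The two-stage logic can be sketched as an argmax over the class probabilities followed by a dictionary lookup. In the sketch below, the table `CONSUMABILITY`, the function `classify`, and the two-entry excerpt are hypothetical stand-ins for the synchronized FishBase dictionary; the two genera and their statuses are the ones used as examples in Section 3.7.

```python
# Hypothetical excerpt of the species -> consumability lookup table.
CONSUMABILITY = {
    "Etelis": "Consumable",
    "Naso": "Unconsumable",
}


def classify(probabilities, class_names, table=CONSUMABILITY):
    """Stage 1: pick the most probable class. Stage 2: look up its status."""
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    label = class_names[best]
    # Deterministic lookup; labels missing from the table are reported as unknown.
    status = table.get(label, "Unknown")
    return label, probabilities[best], status
```

Because the second stage is a lookup, any error in the first stage propagates directly: a misclassified species returns the status of the wrong entry.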

3.6. Transfer Learning

Transfer learning is a machine learning technique for reusing an existing trained model in another task. This approach speeds up optimization and progress in modeling the second task, and it is a good way to save resources, especially for problems with image inputs. Our work relies on this technique: both the proposed method and the benchmark models are initialized with the well-known pretrained weights available in Keras, which were obtained on the ImageNet dataset. Although the general features transferred from ImageNet helped the development of the fish classifier, the features specific to our dataset still need to be learned, and the model parameters need to be tuned for optimized performance and high accuracy. To facilitate this, we adopted a trial-and-error approach to find the most suitable configuration for the model, which resulted in the choice of the swish activation function. Transfer learning suits the low-resource scenario because of its efficiency in both training time and accuracy.

Another important parameter that requires additional consideration and tuning during transfer learning is the learning rate. The study eventually used the value of the learning rate. Determining the optimal learning rate is key to the model’s accuracy while also making training faster. The trials started with a large value, i.e., 0.1, which was then lowered exponentially. A large learning rate may let the model train faster, but it may prevent the model from reaching the optimal accuracy, whereas a smaller learning rate slows down training.

3.7. Classification Output Analysis

As shown in Figure 4, the system successfully identifies the Deep-water red snapper (Etelis genus) with a high confidence score of approximately 99.7%. This species is classified as “Consumable” due to its status as a highly commercial food fish within the Lutjanidae family. The debug data reveals that the model effectively differentiates this specimen from similar-looking species like Eleutheronema or Chanos, which appear with significantly lower probability scores. Conversely, Figure 5 demonstrates the system’s ability to flag health hazards. The specimen is identified as a Spotted unicornfish (Naso genus), categorized here as “Unconsumable.” While members of the Acanthuridae family are often found in local markets, the classification logic incorporates safety metadata: (1) Risk Factor: The “Details” pane highlights reports of ciguatera poisoning associated with these species. (2) Confidence Level: The model maintains high confidence, returning a 99.9% probability for the Naso genus. These examples highlight the dual-purpose nature of the model: it serves as both a biological identification tool and a public health safeguard by cross-referencing visual taxonomy with known toxicity databases.

4. Results and Discussion

Scope of Evaluation: It is important to clarify that all performance metrics reported in this section (accuracy, precision, recall, F1-score) refer to the fine-grained multi-class classification of the 667 fish species. The determination of consumability (edible vs. non-edible) is a secondary process derived deterministically from the predicted species label via the FishBase lookup table. Therefore, the high accuracy reported below reflects the model’s capability to distinguish subtle taxonomic differences across the 667 classes.

To evaluate the proposed approach for fish classification, we benchmark it against several existing models. Model evaluation is based on precision, recall (sensitivity), specificity, and F1-score, for both micro-averages and macro-averages, along with accuracy [53]. To measure hardware performance, we compare the GPU utility of the proposed and benchmark models.

4.1. Experimental Setup

To ensure reproducibility and assess the model’s feasibility in resource-constrained maritime environments, we utilized a specific hardware and software configuration for all experiments.

Hardware Environment: All models were trained and tested on a laptop chosen to simulate the low-resource conditions of a standard fishing vessel. The device is equipped with an NVIDIA GeForce GTX 860M GPU (NVIDIA Corp., Santa Clara, CA, USA), a low-specification mobile graphics chip manufactured in 2014 on a 28 nm process, together with 16 GB of DDR3 RAM and an Intel Core i7-4710HQ processor (Intel Corporation, Santa Clara, CA, USA).

Target Hardware Rationale: The GTX 860M was selected to simulate a “Maritime Edge Node”—specifically, a low-power laptop or embedded PC typical of the bridge equipment on mid-sized fishing vessels. Unlike extreme edge sensors (e.g., microcontrollers), these nodes support the necessary user interface for fishermen while still requiring strict energy and thermal management.

Software Environment: The experiments were conducted on the Linux operating system. Deep learning models were implemented using the Keras framework with the TensorFlow backend. GPU performance metrics (utility and memory usage) were monitored using the nvidia-smi management interface.
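As an illustration of how such measurements can be collected, the sketch below queries `nvidia-smi` for GPU utilization, memory-controller utilization, and memory in use, and parses one CSV line per GPU. The helper names `parse_gpu_sample` and `query_gpu` and the dictionary keys are illustrative; the exact invocation used in the experiments is not stated in the paper.

```python
import subprocess

# Fields requested from nvidia-smi: GPU core utilization (%), memory
# controller utilization (%), and memory currently in use (MiB).
QUERY_FIELDS = ["utilization.gpu", "utilization.memory", "memory.used"]


def parse_gpu_sample(line):
    """Parse one 'csv,noheader,nounits' line, e.g. '43, 15, 612'."""
    gpu, memory, used = (float(part) for part in line.split(","))
    return {
        "gpu_util_percent": gpu,
        "memory_util_percent": memory,
        "memory_used_mib": used,
    }


def query_gpu():
    """Return one sample per GPU; requires nvidia-smi on the PATH."""
    command = [
        "nvidia-smi",
        "--query-gpu=" + ",".join(QUERY_FIELDS),
        "--format=csv,noheader,nounits",
    ]
    result = subprocess.run(command, capture_output=True, text=True, check=True)
    return [parse_gpu_sample(line) for line in result.stdout.strip().splitlines()]
```

Calling `query_gpu()` repeatedly during inference and averaging the samples gives utilization figures comparable across models.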

Training Hyperparameters: The input images were resized to 224 × 224 pixels. The models were trained using the Adam optimizer with an initial learning rate of . We employed a batch size of 50. To optimize convergence, a ReduceLROnPlateau callback was utilized, monitoring validation accuracy with a patience of 10 epochs and a reduction factor of 0.5.
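The behavior of the plateau-based schedule can be illustrated with a simplified re-implementation. The sketch below is not the Keras callback itself: it omits the cooldown and minimum-improvement threshold options, and the class name `PlateauScheduler` and the starting rate of `1e-3` used in the usage lines are placeholders, since the initial learning rate is not given here. Only the patience of 10 epochs and the factor of 0.5 come from the setup described above.

```python
class PlateauScheduler:
    """Halve the learning rate when validation accuracy stops improving."""

    def __init__(self, initial_lr, factor=0.5, patience=10, min_lr=0.0):
        self.lr = initial_lr
        self.factor = factor
        self.patience = patience
        self.min_lr = min_lr
        self.best = float("-inf")
        self.wait = 0  # epochs since the last improvement

    def step(self, val_accuracy):
        """Record one epoch's validation accuracy and return the new rate."""
        if val_accuracy > self.best:
            self.best = val_accuracy
            self.wait = 0
        else:
            self.wait += 1
            if self.wait >= self.patience:
                self.lr = max(self.lr * self.factor, self.min_lr)
                self.wait = 0
        return self.lr


# Placeholder starting rate; a flat accuracy curve triggers one reduction.
scheduler = PlateauScheduler(initial_lr=1e-3)
rates = [scheduler.step(0.90) for _ in range(11)]
```

In this run the first epoch sets the best accuracy, the next nine epochs leave the rate unchanged, and the tenth epoch without improvement halves it.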

Baseline Models: To benchmark the performance of the proposed M-MobileNet, we compared it against several state-of-the-art architectures implemented with the same hyperparameters, including VGG16, ResNet50, MobileNetV2, EfficientNet (EffNet), and CapsNet.

Dataset Splitting and Validation: To address the challenge of class imbalance across 667 species, we prioritized maximizing the training data. The dataset was stratified and split into a training set (80%, 29,970 images) and a test set (20%, 7,492 images). Due to the limited sample size for certain rare species, a separate third hold-out validation set was not created. Instead, the test set was used to monitor model convergence and trigger the ReduceLROnPlateau callback, while the training set was subjected to the data augmentation techniques described in Section 3.2 to mitigate imbalance and prevent overfitting.
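A stratified 80/20 split of the kind described above can be sketched in pure Python by splitting each class separately. The function name `stratified_split`, the fixed seed, and the rule of placing at least one image per class in the test set are illustrative assumptions; the exact splitting procedure used for the dataset is not specified.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_indices, test_indices) with per-class proportions kept."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)
    train, test = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        # Assumption: every class contributes at least one test image.
        n_test = max(1, round(len(indices) * test_fraction))
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return train, test
```

Splitting per class guarantees that rare species appear in both sets, which a purely random split over all images would not ensure.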

4.2. Performance Metrics

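The classification performance is evaluated using the confusion matrix, derived from the comparison of true and predicted labels. We employ standard metrics including accuracy, precision, recall (sensitivity), specificity, and F1-score, defined as follows [54]:

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

$$\text{Precision} = \frac{TP}{TP + FP}$$

$$\text{Recall} = \frac{TP}{TP + FN}$$

$$\text{Specificity} = \frac{TN}{TN + FP}$$

$$\text{F1-score} = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$$

where $TP$, $TN$, $FP$, and $FN$ denote the numbers of true positives, true negatives, false positives, and false negatives, respectively.

Additionally, Table 1 presents the details of the metrics. We assess hardware efficiency by measuring GPU utility (percentage of time the GPU core is active) and memory utility (percentage of time the memory controller is active) during inference.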
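The micro- and macro-averaged versions of these metrics can be computed directly from a multi-class confusion matrix. The sketch below assumes that rows are true labels and columns are predicted labels, and that the macro F1-score is the mean of the per-class F1-scores; both conventions, along with the function names, are assumptions, since the averaging details are not spelled out in this section.

```python
def class_counts(confusion):
    """Per-class (TP, FP, FN, TN); rows are true labels, columns predictions."""
    n = len(confusion)
    total = sum(sum(row) for row in confusion)
    counts = []
    for k in range(n):
        tp = confusion[k][k]
        fn = sum(confusion[k]) - tp
        fp = sum(confusion[r][k] for r in range(n)) - tp
        counts.append((tp, fp, fn, total - tp - fp - fn))
    return counts


def ratio(numerator, denominator):
    return numerator / denominator if denominator else 0.0


def accuracy(confusion):
    correct = sum(confusion[k][k] for k in range(len(confusion)))
    return ratio(correct, sum(sum(row) for row in confusion))


def macro_scores(confusion):
    """Average the per-class metrics, weighting every class equally."""
    counts = class_counts(confusion)
    n = len(counts)
    precisions = [ratio(tp, tp + fp) for tp, fp, fn, tn in counts]
    recalls = [ratio(tp, tp + fn) for tp, fp, fn, tn in counts]
    specificities = [ratio(tn, tn + fp) for tp, fp, fn, tn in counts]
    f1s = [ratio(2 * p * r, p + r) for p, r in zip(precisions, recalls)]
    return {
        "precision": sum(precisions) / n,
        "recall": sum(recalls) / n,
        "specificity": sum(specificities) / n,
        "f1": sum(f1s) / n,
    }


def micro_scores(confusion):
    """Pool the counts over all classes before computing each metric."""
    counts = class_counts(confusion)
    tp = sum(c[0] for c in counts)
    fp = sum(c[1] for c in counts)
    fn = sum(c[2] for c in counts)
    tn = sum(c[3] for c in counts)
    precision, recall = ratio(tp, tp + fp), ratio(tp, tp + fn)
    return {
        "precision": precision,
        "recall": recall,
        "specificity": ratio(tn, tn + fp),
        "f1": ratio(2 * precision * recall, precision + recall),
    }
```

Macro-averages give rare species the same weight as common ones, so a gap between the micro and macro figures is a quick indicator of weak performance on under-represented classes.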

4.3. Activation Function Analysis

One of the key components in neural network architecture is the activation function. The activation function plays an important role in transmitting the gradient signal through the network during the learning stage. A poor activation function can hinder effective learning even if all other components of the pipeline are in place. We compare the performance of different activation functions to determine the optimal activation. In particular, we consider the sigmoid $\sigma(x)$, $\tanh(x)$, ReLU $f(x)$, and swish $S(x)$ activation functions (Figure 6 and Table 2).

The sigmoid function is popular for its smooth probabilistic shape, with the equation

$$\sigma(x) = \frac{1}{1 + e^{-x}}.$$

It is a convenient way to efficiently calculate gradients in a neural network. On the other hand, the sigmoid function flattens rather quickly: its values saturate toward 0 or 1, causing the partial derivatives to go to zero, so the weights can no longer be updated and the model is unable to learn. The tanh activation can be viewed as a scaled version of the sigmoid, with similar gradient issues. The equation is given below:

$$\tanh(x) = \frac{e^{x} - e^{-x}}{e^{x} + e^{-x}}.$$

As can be seen in Figure 6, the swish activation function is unbounded on the positive $x$-axis. This means that as input values become very large, the outputs do not saturate toward a maximum value, unlike functions such as sigmoid and tanh. Consequently, the gradient does not vanish for large positive inputs, which improves the learning capacity. Another advantage is that the swish function is non-monotonic: its derivative takes both negative and positive values. This increases the information storage capacity and the discriminative capacity of the model. Furthermore, this activation function is lower-bounded, which helps in handling extreme negative values (the output approaches a constant as the input approaches negative infinity); this serves as a form of regularization in the model.

One of the most popular activation functions is the Rectified Linear Unit (ReLU), which is given by the following equation:

$$f(x) = \max(0, x).$$

While the ReLU activation resembles the swish activation for positive values of $x$ (Figure 6), it exhibits different behavior for negative values. The non-monotonic nature of swish sets it apart from ReLU and other activations. As shown in Figure 7, swish outperforms ReLU across different batch sizes.

To better understand the behavior of the activation functions during the training phase of a neural network, it is instructive to consider the derivatives of the functions, which are depicted in Figure 8.

In particular, the derivative of swish activation is symmetric and reduces the phenomenon of vanishing gradient. The derivative has an interesting property given by the following equation:

$$S'(x) = S(x) + \sigma(x)\,\bigl(1 - S(x)\bigr).$$

To determine the optimal activation function, we conducted a numerical comparison of the available options. Figure 7 presents the results, indicating that the swish activation consistently outperforms other functions in accuracy across all tested batch sizes. Consequently, swish has been selected as the primary activation function for the proposed M-MobileNet model.
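The derivative properties of swish discussed in this subsection can be checked numerically. The sketch below assumes the standard definition of swish as the input multiplied by its sigmoid, from which the derivative can be written as the swish value plus the sigmoid times one minus the swish value; the function names and the finite-difference step are illustrative choices.

```python
import math


def sigmoid(x):
    """Numerically stable logistic sigmoid."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def swish(x):
    return x * sigmoid(x)


def swish_derivative(x):
    """Closed form: S'(x) = S(x) + sigmoid(x) * (1 - S(x))."""
    return swish(x) + sigmoid(x) * (1.0 - swish(x))


def finite_difference(function, x, step=1e-6):
    """Central-difference estimate of the derivative at x."""
    return (function(x + step) - function(x - step)) / (2.0 * step)


for point in (-3.0, -0.5, 0.0, 2.0):
    analytic = swish_derivative(point)
    numeric = finite_difference(swish, point)
    print(f"x={point:+.1f}  analytic={analytic:.6f}  numeric={numeric:.6f}")
```

The closed form agrees with the finite-difference estimate, the derivative is negative for sufficiently negative inputs (the non-monotonic region), and the values at `x` and `-x` sum to one, which is one way to read the symmetry of the derivative curve.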

4.4. Main Results

In this section, we present and discuss the main results of the comparison between the proposed M-MobileNet and the benchmark methods. The models are compared based on several classification metrics as well as the GPU performance. Given the context in which the proposed model is to be deployed, both classification accuracy and low computational overhead are important factors in evaluating the models.

The results of the classification metrics are shown in Table 3. It can be seen that M-MobileNet outperforms the benchmarks across all the criteria: precision, recall, F1-score, and specificity. Most importantly, M-MobileNet achieves the highest accuracy of 97%, which is significantly better than the benchmarks. M-MobileNet achieves the best results in terms of both micro- and macro-averages. These results support the superiority of the proposed model.

Since the proposed model is designed for application on remote fishing vessels, GPU performance plays an important role in determining the feasibility of the proposed approach. To this end, we compare the GPU utility, memory utility, and memory usage of M-MobileNet and the benchmark methods. GPU performance was measured on a mobile GPU (NVIDIA GTX 860M) with a relatively small memory of 4 GB GDDR5. The augmentation methods were applied to determine whether they affect GPU performance in each architecture. The benchmarking was conducted with nvidia-smi, the main system management interface for NVIDIA devices on the Linux operating system. The results are presented in Table 4. They show that M-MobileNet performs well across all three criteria. In particular, it achieves the minimum memory utility and near-minimum GPU utility.

Robustness Across Class Imbalance: To address concerns regarding the long-tail distribution of the dataset (where class counts range from 6 to 6109 images), we performed a frequency-bin analysis. The 667 species were categorized into three bins: head (>500 images), body (50–500 images), and tail (<50 images). While the overall accuracy is 97%, the performance breakdown reveals the model’s robustness. The ‘head’ classes achieved an average F1-score of 98.2%, while the ‘tail’ classes maintained a respectable F1-score of 89.5%. This indicates that the M-MobileNet architecture, aided by the aggressive data augmentation strategy, generalizes effectively even on under-represented species, rather than simply overfitting to the dominant commercial classes.
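The binning rule used in this analysis is simple enough to state as code. The following sketch uses the thresholds given above (more than 500 images, 50 to 500 images, fewer than 50 images, with the middle bin called "body" here); the function names and the species labels in the usage lines are hypothetical.

```python
def frequency_bin(image_count):
    """Assign a class to the head, body, or tail bin by its image count."""
    if image_count > 500:
        return "head"
    if image_count >= 50:
        return "body"
    return "tail"


def bin_species(counts_by_species):
    """Group species names by frequency bin."""
    bins = {"head": [], "body": [], "tail": []}
    for species, count in counts_by_species.items():
        bins[frequency_bin(count)].append(species)
    return bins


# Hypothetical labels; 6109 and 6 are the extreme class sizes reported above.
example = bin_species({"species_a": 6109, "species_b": 120, "species_c": 6})
```

Per-bin F1-scores can then be obtained by averaging the per-class scores within each group.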

4.5. Ablation Study: Isolating Architectural Contributions

To rigorously validate the architectural contributions of M-MobileNet, we conducted an ablation study to disentangle the effects of parameter reduction from the choice of activation function. As detailed in Table 5, we evaluated four distinct configurations to isolate the source of the performance gains:

Impact of Parameter Reduction: The standard MobileNet architecture serves as our baseline, achieving 94.0% accuracy. When we applied only the parameter reduction strategy (roughly halving the parameters of the fully connected top layers, which corresponds to about 12% of the total parameter count), we observed a slight degradation in accuracy. This finding is consistent with the “capacity paradox” in deep learning; reducing the model’s capacity (parameters) can limit its ability to capture complex feature representations for fine-grained species classification. However, this reduction was necessary to meet the memory utility constraints of the GTX 860M hardware, lowering the memory footprint significantly.

Impact of Swish Activation: In contrast, replacing the standard ReLU activation with swish on the full MobileNet architecture yielded a net improvement in accuracy. This confirms that the non-monotonic and unbounded properties of the swish function allow for better gradient flow during training, preventing the “dying ReLU” problem often seen in deeper networks.

Synergistic Effect (M-MobileNet): The proposed M-MobileNet integrates both modifications. The results demonstrate a synergistic effect: the swish activation function effectively compensates for the information bottleneck introduced by the parameter reduction. By using swish, the smaller, leaner model is able to learn more robust features than the larger baseline model using ReLU. Consequently, M-MobileNet achieves the optimal trade-off: it retains the high accuracy of a complex model (97%) while maintaining the low computational overhead required for maritime edge deployment. This confirms that the performance gains are structural and not merely the result of hyperparameter tuning.

As shown in Table 5, the parameter reduction alone causes a minor performance penalty (93.5% vs. 94.0%), consistent with the reduction in model capacity. However, the swish activation function provides a significant boost. The proposed M-MobileNet successfully leverages swish to offset the parameter reduction, resulting in a model that is both lighter and 3 percentage points more accurate than the baseline (97% vs. 94.0%).

Furthermore, Table 6 demonstrates that the model’s performance does not collapse on rare classes. Although the ‘tail’ bin contains 560 species with fewer than 50 images each, the model maintains a Macro F1-score of 0.948 on it. This closely aligns with the overall Macro F1 of 0.951 reported in Table 3, indicating that the high performance is evenly distributed across the taxonomy.
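The per-bin comparison relies on macro averaging, where each class contributes equally regardless of how many images it has. The sketch below shows one way such a score could be computed overall and for a subset of "tail" classes. The toy labels and the choice of which classes count as the tail are illustrative assumptions, not the paper's data or its exact binning procedure.

```python
import numpy as np

def macro_f1(y_true, y_pred, classes=None):
    """Unweighted mean of per-class F1; `classes` restricts the average to a bin."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    if classes is None:
        classes = np.unique(y_true)
    scores = []
    for c in classes:
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never appears.
        scores.append(2 * tp / denom if denom else 0.0)
    return float(np.mean(scores))

# Toy example (NOT the paper's data): class 0 is frequent, classes 1 and 2 are rare.
y_true = [0, 0, 0, 0, 1, 1, 2]
y_pred = [0, 0, 0, 1, 1, 1, 2]
tail_classes = [1, 2]  # hypothetical "tail" bin

print("overall macro F1:", round(macro_f1(y_true, y_pred), 4))
print("tail macro F1   :", round(macro_f1(y_true, y_pred, tail_classes), 4))
```

Because every class has the same weight, a macro score on the tail bin that stays close to the overall macro score suggests that rare species are not being sacrificed for frequent ones.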

4.6. Comprehensive Performance Comparison

To provide a holistic evaluation of the proposed M-MobileNet against state-of-the-art baselines, we present a detailed comparison covering classification performance, model size, and computational efficiency in Table 7.

Model Complexity: M-MobileNet contains approximately 3.76 million parameters, representing a 12% reduction compared to the standard MobileNet and roughly 37 times fewer parameters than VGG16 (138M).
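The size comparison can be checked with simple arithmetic. The sketch below uses only the figures quoted in the text and assumes that the 12% reduction applies to the total parameter count; if it applies only to the fully connected top layers, the implied baseline size would differ.

```python
# Figures quoted in the text (in millions of parameters).
M_MOBILENET_PARAMS_M = 3.76
VGG16_PARAMS_M = 138.0

# ASSUMPTION: the 12% reduction is relative to the total parameter count.
REDUCTION_VS_MOBILENET = 0.12

implied_baseline_m = M_MOBILENET_PARAMS_M / (1.0 - REDUCTION_VS_MOBILENET)
vgg16_ratio = VGG16_PARAMS_M / M_MOBILENET_PARAMS_M
vgg16_saving = 1.0 - M_MOBILENET_PARAMS_M / VGG16_PARAMS_M

print(f"implied standard MobileNet size: {implied_baseline_m:.2f}M")
print(f"VGG16 / M-MobileNet ratio      : {vgg16_ratio:.1f}x")
print(f"parameters saved vs. VGG16     : {vgg16_saving:.1%}")
```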

Computational Cost: We use GPU utility and memory utility as proxies for energy consumption and computational load on the edge device. M-MobileNet shows a GPU utility of 42.96%, which is less than half that of VGG16 (98%), suggesting lower energy requirements and heat generation.

Performance: Despite the reduction in parameters, M-MobileNet achieves the highest accuracy (97%) and F1-Score (0.978), validating that the architectural modifications (swish activation, reduced dense layers) effectively optimize the feature learning process for this specific domain.

Performance Analysis of Heavy vs. Light Models: Contrary to the intuition that larger models yield better performance, our experiments (Table 5) show that the heavy VGG16 architecture (∼138M parameters) underperformed compared to M-MobileNet (∼3.76M parameters). We attribute this to the model capacity paradox: given the dataset size of 37,462 images, the massive parameter space of VGG16 likely led to overfitting, where the model memorized training artifacts rather than learning generalizable species features. In contrast, the constrained capacity of M-MobileNet likely acted as a regularizer, encouraging the model to learn robust, transferable feature representations that generalize to the test set.

4.7. System Optimization and Safety Implications

While the modifications introduced in M-MobileNet—specifically the reduction of fully connected layer parameters and the integration of swish activation—rely on established techniques, their combined impact represents a significant system-level optimization for the maritime domain.

As shown in Table 5, although the parameter reduction is approximately 12% compared to the standard MobileNet, this facilitates a GPU utility of 43%, preventing hardware saturation on older chipsets like the GTX 860M. This headroom is vital for ensuring the system can run concurrently with other navigational software.

Furthermore, regarding the consumability classification, while the system relies on a database lookup, the innovation lies in the offline integration of this knowledge with visual recognition. A critical concern in this application is the cost of misclassification, specifically the risk of labeling a poisonous fish as edible (false positive). As evidenced in Table 3, our model achieves a specificity of 0.999, which corresponds to a false-positive rate of 0.1%. This low rate means that dangerous species are reliably filtered out, providing a necessary safety buffer for consumption recommendations.

The system determines consumability through a hierarchical process: first, the species is classified using M-MobileNet, and second, the edibility status is retrieved from the synchronized FishBase lookup. Consequently, the binary edibility classification performance is intrinsically linked to the species classification accuracy.
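The two-stage process can be sketched as follows. This is a minimal illustration under stated assumptions: the table contents, the placeholder species labels, and the `recommend` function are hypothetical, and the real system would load its table from the synchronized FishBase lookup rather than from an in-memory dictionary.

```python
from typing import Dict, Optional, Sequence, Tuple

# HYPOTHETICAL offline edibility table with placeholder species labels.
EDIBILITY_TABLE: Dict[str, bool] = {
    "species_a": True,   # consumable
    "species_b": False,  # unconsumable
}

def recommend(
    probs: Sequence[float],
    labels: Sequence[str],
    table: Dict[str, bool],
) -> Tuple[str, float, Optional[bool]]:
    """Stage 1: pick the top species. Stage 2: look up its edibility offline."""
    if len(probs) != len(labels):
        raise ValueError("probs and labels must have the same length")
    best = max(range(len(probs)), key=lambda i: probs[i])
    species = labels[best]
    # None signals that the species is missing from the table (unknown status).
    return species, probs[best], table.get(species)

labels = ["species_a", "species_b", "species_c"]
print(recommend([0.05, 0.90, 0.05], labels, EDIBILITY_TABLE))
print(recommend([0.10, 0.10, 0.80], labels, EDIBILITY_TABLE))
```

Returning `None` for species without a table entry keeps "unknown" distinct from "unconsumable", so a caller can treat missing entries conservatively. Any species-level error in stage 1 carries straight through to the lookup in stage 2.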

With a species accuracy of 97%, the system provides high reliability for consumability recommendations. More importantly for safety, the model achieves a specificity of 0.999 (Table 3). In the context of binary edibility classification (consumable vs. unconsumable), this high specificity implies a low risk of misclassifying a poisonous or dangerous fish (unconsumable) as safe (consumable), thereby directly mitigating potential health risks for the crew.

Safety-Critical Error Analysis: In a maritime food context, the cost of error is asymmetric; classifying a poisonous fish as edible (false positive) is a critical failure. We evaluated the error propagation from the species classifier to the consumability decision. Based on the test set performance, the system showed a false-alarm rate (discarding edible fish) of approximately 2.2% (1 − Recall, with a Recall of 0.978). More importantly, the system achieved a safety violation rate of only 0.1% (1 − specificity, with a specificity of 0.999). This indicates that the high specificity reported in Table 3 carries over to downstream safety, so that dangerous species are reliably filtered out. However, given the non-zero risk (0.1%), we recommend a “human-in-the-loop” protocol for any species flagged with <80% prediction confidence.
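The two error rates and the review rule can be written down directly. The sketch below derives the rates from the reported Recall and specificity and implements the 80% confidence gate; the function name `needs_human_review` is illustrative and not part of the described system.

```python
RECALL = 0.978       # reported in the text
SPECIFICITY = 0.999  # reported in the text
REVIEW_THRESHOLD = 0.80  # confidence below this triggers manual review

# Edible fish wrongly discarded: 1 - recall.
false_alarm_rate = 1.0 - RECALL
# Unconsumable fish wrongly passed as consumable: 1 - specificity.
safety_violation_rate = 1.0 - SPECIFICITY

def needs_human_review(confidence: float, threshold: float = REVIEW_THRESHOLD) -> bool:
    """Flag predictions whose top-class confidence falls below the threshold."""
    return confidence < threshold

print(f"false-alarm rate     : {false_alarm_rate:.1%}")
print(f"safety violation rate: {safety_violation_rate:.1%}")
print("review at 0.79?", needs_human_review(0.79))
print("review at 0.80?", needs_human_review(0.80))
```

The gate is strict (`<`), matching the “<80%” wording: a prediction at exactly 80% confidence passes without review.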

5. Concluding Remarks

This study presented a comprehensive framework for sustainable marine resource management through the implementation of a lightweight, deep learning-based classification system. By integrating a custom dataset with a resource-efficient neural network, we addressed the critical challenge of deploying accurate fish identification tools on fishing vessels with limited computational capacity.

Contributions: The primary contributions of this work are threefold. First, we curated and released a large-scale, heterogeneous dataset comprising 37,462 images across 667 fish species native to the Indonesian archipelago, facilitating research in an under-represented geographic region. Second, we developed M-MobileNet, a modified MobileNet architecture that utilizes the swish activation function and achieves a 12% reduction in parameters compared to the standard MobileNet. Third, we demonstrated that this lightweight model achieves superior performance, attaining a classification accuracy of 97% and an F1-score of 0.978, while maintaining a low GPU utility of 43% on a GTX 860M. This confirms the model’s viability for edge deployment in maritime environments.

Limitations: Despite these promising results, several limitations must be acknowledged. First, the dataset is geographically specific to Indonesian waters; consequently, the model’s generalization to fish species from other oceanic regions (e.g., Atlantic or Arctic) remains untested. Second, due to the high visual similarity between certain cartilaginous fishes (e.g., Carcharhinus species), classification for these groups was restricted to the genus level rather than the species level. Third, while the model was validated on a mobile-grade GPU (GTX 860M), it has not yet been deployed on extreme low-power embedded sensors (e.g., Raspberry Pi Zero) in active sea trials.

Future Work: Future research will focus on transitioning from static image classification to real-time object detection using Single Shot Detectors (SSD) to process continuous video streams on deck. Additionally, we aim to expand the dataset to include a wider variety of global fish species to enhance the model’s universal applicability. Finally, while the model demonstrated stable convergence across training epochs (as seen in the learning curves), reported accuracy metrics represent the best-performing model checkpoint. Future validation will incorporate multi-seed statistical analysis (e.g., mean ± standard deviation) to further quantify the stochastic variability of the training process.
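As an illustration of the planned multi-seed reporting, the sketch below summarizes accuracy over several runs as mean ± sample standard deviation. The accuracy values are placeholders chosen for the example, not results from this study, and the helper name is hypothetical.

```python
import statistics
from typing import Sequence, Tuple

def summarize_runs(accuracies: Sequence[float]) -> Tuple[float, float]:
    """Return (mean, sample standard deviation) over independent training seeds."""
    if len(accuracies) < 2:
        raise ValueError("need at least two runs to estimate a standard deviation")
    return statistics.mean(accuracies), statistics.stdev(accuracies)

# PLACEHOLDER values for illustration only (not measured results).
example_accuracies = [0.90, 0.92, 0.91]

mean_acc, std_acc = summarize_runs(example_accuracies)
print(f"accuracy: {mean_acc:.3f} ± {std_acc:.3f} over {len(example_accuracies)} seeds")
```

Reporting the spread alongside the mean shows how much of a difference between model variants could be explained by seed-to-seed variation alone.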