1. Introduction

The rapid expansion of online and blended education has intensified research interest in personalized learning systems capable of adapting instructional pathways to the needs of individual learners. Advances in artificial intelligence (AI), learning analytics, and educational data mining have enabled learning management systems (LMS) and intelligent tutoring systems (ITS) to deliver adaptive feedback, content recommendations, and assessment at scale [1, 2]. Despite this progress, most deployed adaptive learning platforms remain limited in their capacity to design effective learning trajectories for heterogeneous learners over extended time horizons.

A core limitation of current personalization approaches lies in learning pathway design, that is, the problem of determining which learning activities should be presented, in which order, and at what level of granularity, given a learner’s prior knowledge, objectives, time constraints, and contextual conditions. This problem is inherently complex, involving large combinatorial search spaces, prerequisite constraints, uncertain learner states, and competing pedagogical objectives [3, 4]. In practice, many systems rely on heuristic rules, instructor-defined sequences, or single-objective optimization criteria, such as short-term performance or course completion, which fail to capture the multifaceted nature of learning.

Existing approaches address this challenge only partially. Rule-based adaptive systems offer transparency and pedagogical control but require extensive manual authoring and scale poorly to diverse learner populations. Reinforcement-learning-based tutors model instructional decisions as sequential control problems, yet they often depend on carefully engineered reward functions and tend to optimize short-term outcomes rather than long-term mastery or retention [5, 6]. Recommendation systems improve resource relevance using collaborative or content-based filtering but frequently overlook curricular structure, prerequisite dependencies, and causal learning effects [7, 8]. As a result, there remains a gap between localized adaptive interventions and the broader goal of coherent, personalized learning pathway optimization.

More recently, research in artificial intelligence has demonstrated that complex design problems can be addressed through population-based, iterative optimization paradigms, in which candidate solutions are generated, evaluated using explicit criteria, and progressively refined through selection and variation. Such approaches have proven effective in domains characterized by large search spaces and multiple competing objectives. While these paradigms have primarily been explored outside education, they offer a useful conceptual foundation for rethinking how learning pathways can be designed, evaluated, and adapted in a principled and autonomous manner.

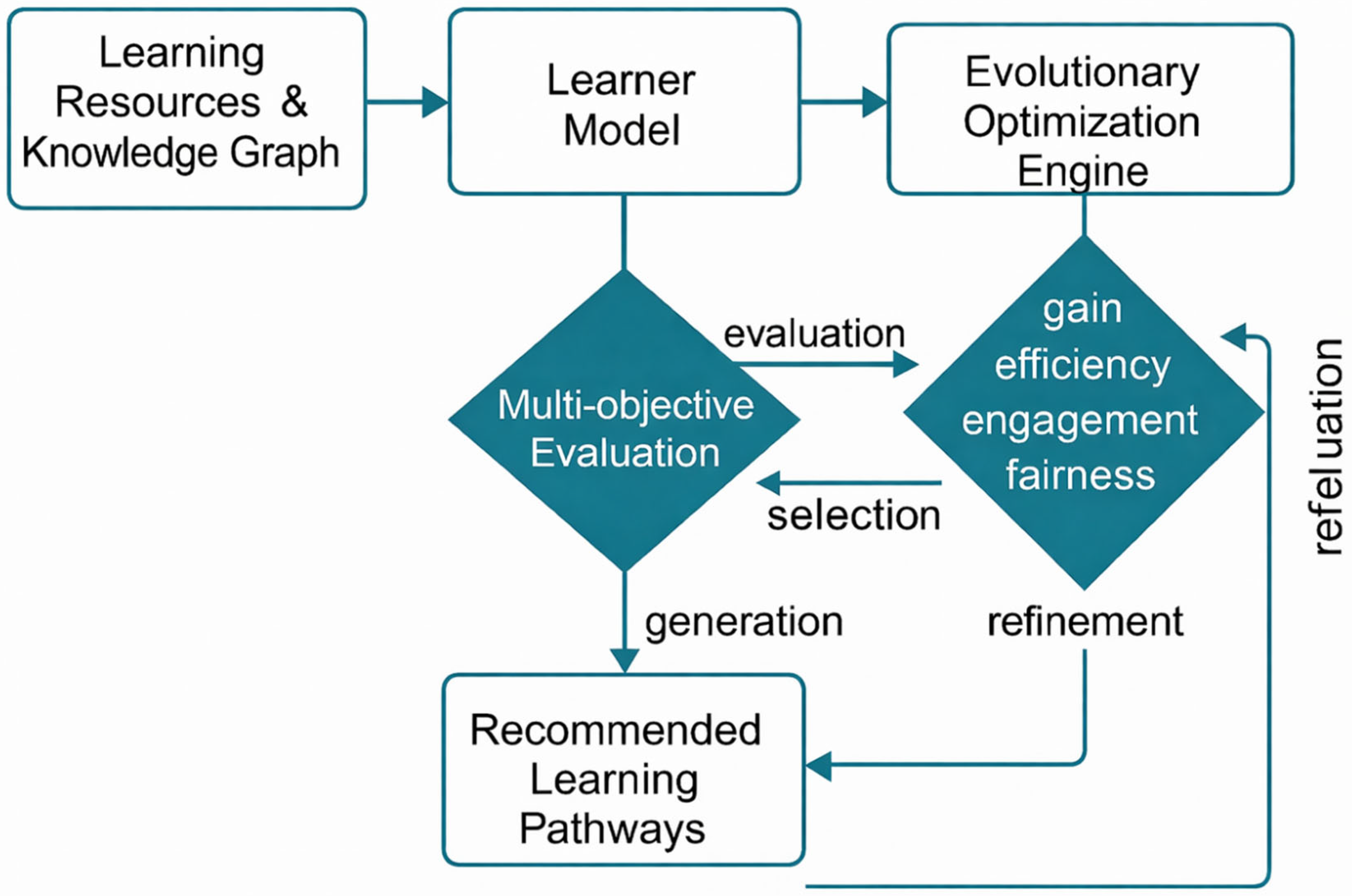

Building on this perspective, this article proposes AlphaLearn, a conceptual and methodological framework that formulates personalized learning pathway design as a constrained, multi-objective evolutionary optimization problem. The article does not present an implemented system, nor does it claim empirical performance gains. Instead, it provides a structured framework that integrates learner modelling, curricular knowledge representation, and evolutionary search mechanisms to explore the space of possible learning pathways in a systematic way.

AlphaLearn brings together three components that are often treated independently in the literature. First, learner modelling is employed to estimate learner state, expected mastery gain, engagement likelihood, and risk of failure or dropout, drawing on techniques from knowledge tracing and learning analytics [2, 9]. Second, knowledge graphs and curricular constraints encode prerequisite relations and domain structure, ensuring that candidate pathways remain pedagogically valid and interpretable [10]. Third, an evolutionary optimization process maintains a population of candidate pathways, evaluates them using multiple pedagogical criteria, and refines them through selection, variation, and diversity-preserving mechanisms [11, 12]. This design explicitly supports trade-offs between objectives such as learning gain, time efficiency, engagement, and robustness.

Fairness and equity constitute an additional motivation for this work. Recent studies in educational AI have shown that adaptive systems may inadvertently reinforce existing inequalities when trained on biased data or optimized solely for aggregate performance metrics [13]. Personalized pathway recommendation raises concerns about differential treatment of learner subgroups, potentially leading to unequal learning opportunities. AlphaLearn therefore treats fairness not as an external constraint but as an explicit dimension of pathway evaluation, allowing equity-related indicators to be incorporated into the optimization process.

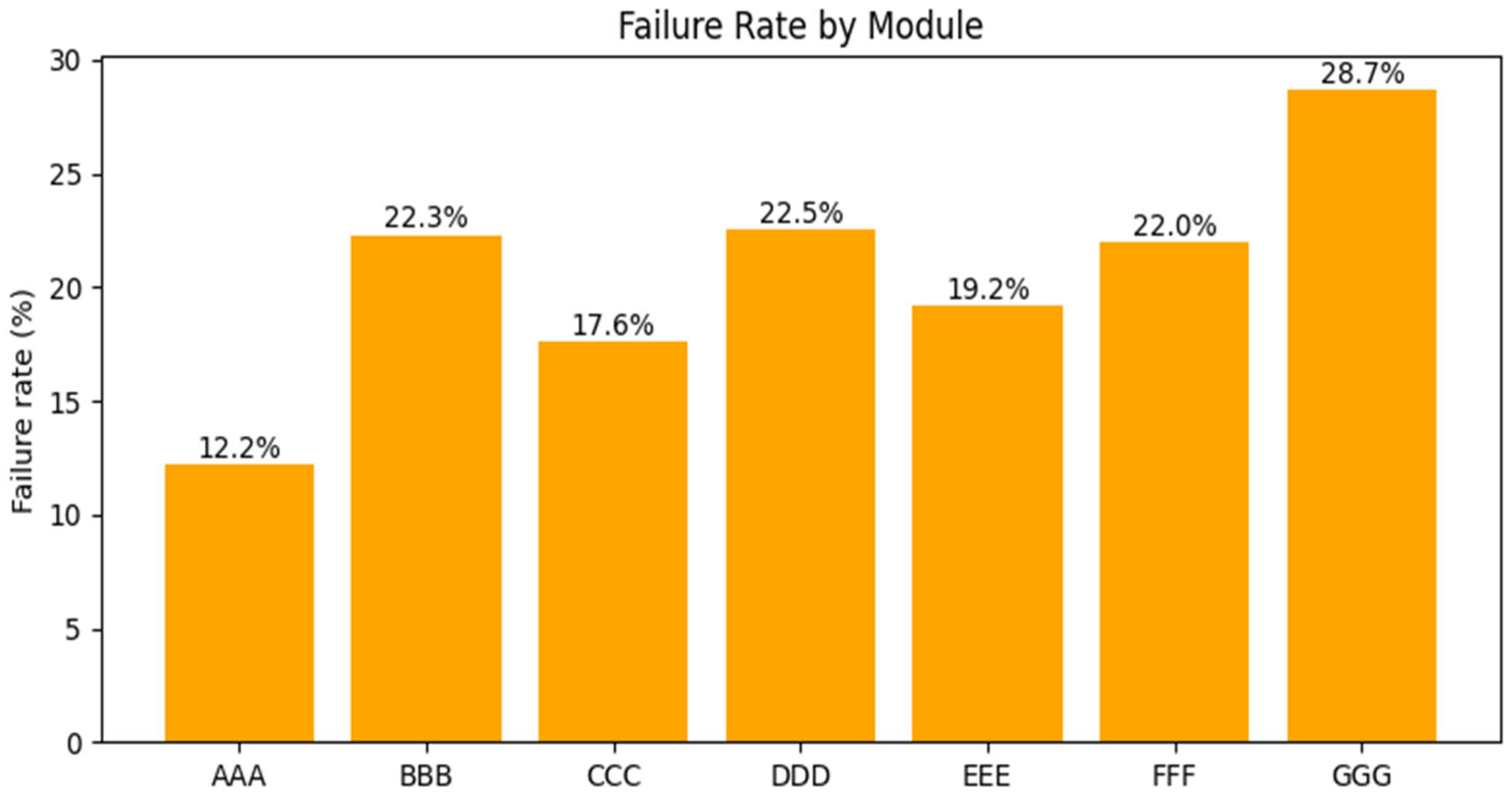

The contribution of this article is threefold. First, it introduces AlphaLearn, a coherent conceptual framework that formalizes personalized learning pathway design as a multi-objective evolutionary optimization problem. Second, it provides a detailed architectural and algorithmic description of the proposed framework, including pathway representation, fitness components, and evolutionary search dynamics, serving as a foundation for future implementations and empirical studies. Third, it complements the conceptual framework with a descriptive analysis of learning analytics data from the Open University Learning Analytics Dataset (OULAD), illustrating substantial heterogeneity in learner outcomes and motivating the need for adaptive and fairness-aware sequencing strategies.

This work is positioned as a conceptual and methodological contribution rather than a fully validated system. Its objective is to establish a rigorous foundation for future simulations, implementations, and controlled experiments that investigate evolutionary approaches to personalized learning. By reframing learning pathway design as an evolutionary optimization problem, AlphaLearn aims to advance current research on adaptive e-learning toward more autonomous, scalable, and ethically grounded personalization.

The remainder of this paper is organized as follows.

Section 2 reviews related work on adaptive learning systems, evolutionary optimization, and fairness in educational AI.

Section 3 presents the AlphaLearn framework and its evolutionary search process.

Section 4 reports a descriptive analysis of the OULAD dataset.

Section 5 discusses implications, limitations, and ethical considerations.

2. Related Work

2.1. Adaptive Learning Systems and Personalized Instruction

Adaptive learning systems aim to tailor instructional content, sequencing, and feedback to individual learners based on their evolving knowledge, preferences, and performance. Early work in this area was dominated by intelligent tutoring systems (ITS), which rely on explicit domain models, learner models, and pedagogical rules to guide instruction [3]. While ITS have demonstrated strong effectiveness in well-defined domains such as mathematics and programming, they require extensive manual authoring and are difficult to scale across heterogeneous learners and domains.

More recent adaptive systems embedded in learning management systems (LMS) adopt lighter-weight personalization mechanisms, including conditional content release, mastery paths, and heuristic rules based on assessment outcomes. Although these approaches are easier to deploy, they typically provide limited adaptivity and rely on static instructional sequences defined by instructors [14]. As a result, personalization remains local and reactive, rather than proactive and globally optimized across learning trajectories.

Learning analytics and educational data mining have further expanded the capabilities of adaptive systems by enabling data-driven insights into learner behavior and performance [1, 4]. However, many analytics-driven interventions focus on prediction (e.g., performance or dropout risk) rather than on optimization of learning pathways, leaving the design of instructional sequences largely unchanged.

2.2. Learner Modelling and Knowledge Tracing

Accurate learner modelling is a foundational component of personalized learning. A substantial body of research has focused on knowledge tracing, which seeks to estimate a learner’s mastery of underlying skills or concepts based on interaction data. Classical approaches such as Bayesian Knowledge Tracing (BKT) provide interpretable probabilistic models of skill acquisition but rely on simplifying assumptions about learning and forgetting [15].
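To make the BKT idea concrete, the following is a minimal sketch of the standard single-skill update, in which the mastery estimate is first conditioned on the observed response and then advanced by a learning transition. The parameter values and the function name `bkt_update` are illustrative assumptions, not values taken from this article. The sketch uses the classical formulation with no forgetting term, which is one of the simplifying assumptions mentioned above.

```python
def bkt_update(p_known: float, correct: bool,
               p_slip: float = 0.1, p_guess: float = 0.2,
               p_learn: float = 0.15) -> float:
    """Return the updated mastery probability after one observed response.

    Default parameter values are illustrative assumptions only.
    """
    if correct:
        # Correct answer: either the skill is known and no slip occurred,
        # or the skill is unknown and the learner guessed.
        num = p_known * (1.0 - p_slip)
        den = num + (1.0 - p_known) * p_guess
    else:
        # Incorrect answer: either a slip, or unknown skill without a lucky guess.
        num = p_known * p_slip
        den = num + (1.0 - p_known) * (1.0 - p_guess)
    posterior = num / den
    # Learning transition: an unmastered skill becomes mastered with p_learn.
    return posterior + (1.0 - posterior) * p_learn


if __name__ == "__main__":
    p = 0.5
    for observed in (True, True, False):
        p = bkt_update(p, observed)
        print(round(p, 3))
```

A correct response raises the estimate and an incorrect one lowers it. Because there is no forgetting, the learning transition can only move the estimate upward after conditioning.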

More recent advances leverage neural and hybrid models, including Deep Knowledge Tracing (DKT) and memory-augmented architectures, which capture complex temporal patterns in learner behavior [9, 16]. These models have improved predictive accuracy and are increasingly used to inform adaptive feedback and content recommendation. Nevertheless, learner modelling alone does not determine how learning activities should be sequenced over time, particularly when multiple objectives such as efficiency, engagement, and robustness must be balanced.

In practice, learner models are often used as inputs to rule-based or greedy decision policies, limiting their potential to support holistic pathway optimization. This gap highlights the need for mechanisms that can systematically explore alternative instructional sequences while leveraging learner state estimates.

2.3. Recommendation Systems and Learning Pathways

Recommendation systems have been widely applied in educational contexts to suggest learning resources based on learner preferences, behavior, or similarity to other users. Collaborative filtering and content-based approaches have been shown to improve resource relevance and learner satisfaction [7]. Hybrid recommenders further incorporate contextual features and learning objectives to refine recommendations [8].

Despite these advances, most educational recommender systems focus on item-level recommendations rather than on the construction of coherent learning pathways. They often ignore prerequisite structures, curricular constraints, and long-term learning goals, which can result in fragmented or pedagogically suboptimal sequences. Moreover, recommendation accuracy does not necessarily translate into improved learning outcomes, particularly when causal learning effects are not explicitly modeled [17].

Therefore, recommender-based approaches alone are insufficient to address the broader challenge of personalized pathway design across courses or programs.

2.4. Reinforcement Learning for Instructional Sequencing

Reinforcement learning (RL) has been explored as a framework for instructional decision-making, modeling the interaction between learner and system as a sequential decision process. RL-based tutors aim to select actions (e.g., tasks, hints, feedback) that maximize expected rewards, such as learning gain or engagement [6].

While RL provides a principled approach to sequential optimization, its application in education faces several challenges. Reward design is non-trivial, as short-term performance improvements may not align with long-term mastery or retention. RL methods also require substantial interaction data or high-fidelity simulators, which limits their applicability in real-world educational settings. Furthermore, most RL-based systems learn a single policy, reducing their ability to explore diverse instructional strategies or trade-offs among competing objectives.

These limitations suggest that complementary approaches capable of maintaining and evaluating multiple candidate pathways simultaneously may offer greater flexibility and robustness.

2.5. Evolutionary Optimization and Multi-Objective Approaches

Evolutionary algorithms (EAs) provide population-based optimization methods inspired by natural selection and have been successfully applied to problems characterized by large search spaces and multiple competing objectives. Multi-objective evolutionary algorithms, such as NSGA-II, explicitly maintain a set of non-dominated solutions, enabling exploration of trade-offs among objectives [11].

In educational research, evolutionary methods have been applied to curriculum sequencing, learning object selection, and scheduling, demonstrating potential advantages over greedy or rule-based approaches [12]. Variable-length representations further allow pathways of differing duration and depth to be optimized within a unified framework. However, most existing studies remain narrow in scope, focus on isolated components, or lack integration with modern learner modelling and learning analytics.

Moreover, fairness and equity considerations are rarely incorporated into evolutionary optimization in educational contexts, despite growing evidence that adaptive systems may produce disparate outcomes across learner groups.

2.6. Fairness and Ethical Considerations in Educational AI

Fairness has emerged as a critical concern in AI-driven educational systems. Research shows that predictive and adaptive models trained on historical data can reflect and amplify existing biases related to gender, socio-economic status, or prior educational opportunity [13]. In adaptive learning systems, biased personalization may lead to systematically different learning opportunities for different groups.

Recent work proposes fairness-aware approaches that incorporate equity constraints or regularization terms into optimization objectives, or that monitor group-level outcomes to detect disparate impact [18, 19]. However, these ideas are only beginning to be explored in the context of learning pathway design and sequencing.

This gap motivates the integration of fairness considerations directly into the design and evaluation of adaptive learning pathways, rather than treating them as post-hoc adjustments.

2.7. Research Gap and Positioning of AlphaLearn

In summary, prior research has made substantial progress in learner modelling, recommendation systems, reinforcement learning, and evolutionary optimization for education. However, existing approaches tend to address isolated aspects of personalization and rarely provide an integrated framework for multi-objective, constraint-aware, and fairness-conscious learning pathway optimization.

AlphaLearn is positioned to address this gap by combining learner modelling, curricular knowledge representation, and evolutionary search within a unified conceptual framework. Unlike rule-based, greedy, or single-policy approaches, AlphaLearn explicitly maintains and evaluates multiple candidate pathways, supports trade-offs among pedagogical objectives, and incorporates fairness as an optimization dimension. In doing so, it advances the methodological foundations for adaptive learning systems capable of autonomous, scalable, and ethically grounded personalization.

4. The AlphaLearn Framework

This section provides a conceptual and architectural specification of the AlphaLearn framework rather than a description of a deployed or empirically evaluated system. The purpose is to formalize the structure and optimization logic of the framework, independent of any specific implementation.

AlphaLearn is conceived as a modular agent composed of five interacting layers, as illustrated in Figure 5.

4.1. Overview and Design Rationale

AlphaLearn is proposed as a conceptual and methodological framework for personalized learning pathway design, grounded in the formulation of instructional sequencing as a constrained, multi-objective optimization problem. The framework is designed to address three recurrent limitations in existing adaptive learning systems: (1) reliance on static or greedy sequencing strategies, (2) limited capacity to balance multiple pedagogical objectives, and (3) insufficient integration of fairness considerations.

Rather than selecting a single “best” next activity, AlphaLearn maintains and evaluates a population of candidate learning pathways, enabling systematic exploration of alternative instructional trajectories. Each pathway represents a coherent sequence of learning resources that respects curricular constraints and is evaluated using learner-specific predictive signals. This population-based perspective allows AlphaLearn to model personalization as an iterative refinement process, in which pathways are progressively improved according to explicit pedagogical criteria.

AlphaLearn is not presented as an implemented system, but as a formal framework intended to guide future implementations and empirical studies. Its design emphasizes modularity, interpretability, and extensibility.

4.2. Architectural Components

AlphaLearn is organized into five interacting layers, each corresponding to a distinct functional role in the personalization process.

- ➢ Data and Resource Layer

The Data and Resource Layer stores and organizes the instructional content and curricular structure. It includes:

- Learning resources (e.g., videos, readings, exercises, assessments),
- Metadata describing resource attributes such as estimated difficulty, duration, learning objectives, and modality,
- Knowledge graphs encoding prerequisite relations and conceptual dependencies.

This layer defines the feasible search space for pathway generation. By encoding curricular constraints explicitly, AlphaLearn ensures that candidate pathways remain pedagogically valid and interpretable.
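As an illustration of how this layer might be encoded, the sketch below pairs a small resource record with a prerequisite graph over concepts. All field names, the example concepts, and the helper `all_prerequisites` are hypothetical. The framework itself does not prescribe a schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    """One learning resource with metadata (field names are assumptions)."""
    rid: str
    kind: str          # e.g. "video", "reading", "exercise", "assessment"
    difficulty: float  # estimated difficulty on an assumed 0..1 scale
    minutes: int       # estimated duration
    concept: str       # concept the resource addresses


# Prerequisite graph: concept -> concepts that must be covered first.
PREREQS: dict[str, set[str]] = {
    "variables": set(),
    "conditionals": {"variables"},
    "loops": {"conditionals"},
}


def all_prerequisites(concept: str, prereqs: dict[str, set[str]]) -> set[str]:
    """Collect direct and indirect prerequisites of a concept."""
    seen: set[str] = set()
    stack = list(prereqs.get(concept, ()))
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(prereqs.get(current, ()))
    return seen


if __name__ == "__main__":
    video = Resource("r1", "video", 0.4, 12, "loops")
    print(video.concept, sorted(all_prerequisites(video.concept, PREREQS)))
```

Keeping prerequisites at the concept level, with resources pointing to concepts, is one possible design. It lets several resources share the same constraint without duplicating edges.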

- ➢ Learner Model Layer

The Learner Model Layer estimates the learner’s current and prospective learning state based on interaction data. It provides predictive signals used to evaluate candidate pathways, including:

- Estimated mastery of skills or concepts,
- Predicted learning gain associated with specific resources,
- Engagement likelihood and persistence indicators,
- Risk estimates for failure or withdrawal.

These estimates may be derived from knowledge tracing models, learning analytics features, or hybrid approaches. Importantly, AlphaLearn treats the learner model as a black-box predictor: the framework does not depend on a specific modelling technique, allowing different models to be substituted without altering the optimization logic.
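The black-box treatment of the learner model can be sketched as a small interface that the optimizer depends on, with any concrete model supplied behind it. The method names, the toy `TableModel`, and the risk heuristic below are assumptions made for illustration only.

```python
from typing import Protocol, Sequence


class LearnerModel(Protocol):
    """Hypothetical interface; any object with these methods can be substituted."""
    def mastery(self, concept: str) -> float: ...
    def predicted_gain(self, resource: str) -> float: ...
    def engagement(self, resource: str) -> float: ...
    def withdrawal_risk(self, pathway: Sequence[str]) -> float: ...


class TableModel:
    """Toy stand-in backed by lookup tables; a knowledge-tracing model could replace it."""

    def __init__(self, mastery: dict, gain: dict, engagement: dict) -> None:
        self._mastery, self._gain, self._engagement = mastery, gain, engagement

    def mastery(self, concept: str) -> float:
        return self._mastery.get(concept, 0.0)

    def predicted_gain(self, resource: str) -> float:
        return self._gain.get(resource, 0.0)

    def engagement(self, resource: str) -> float:
        return self._engagement.get(resource, 0.5)

    def withdrawal_risk(self, pathway: Sequence[str]) -> float:
        # Assumed heuristic: risk rises as mean predicted engagement falls.
        if not pathway:
            return 0.0
        return 1.0 - sum(self.engagement(r) for r in pathway) / len(pathway)


def pathway_signals(model: LearnerModel, pathway: Sequence[str]) -> dict[str, float]:
    """Aggregate per-resource predictions into pathway-level signals."""
    return {
        "gain": sum(model.predicted_gain(r) for r in pathway),
        "risk": model.withdrawal_risk(pathway),
    }


if __name__ == "__main__":
    model = TableModel({"loops": 0.2},
                       {"video_loops": 0.3, "quiz_loops": 0.1},
                       {"video_loops": 0.8, "quiz_loops": 0.6})
    print(pathway_signals(model, ["video_loops", "quiz_loops"]))
```

Because `pathway_signals` only calls the interface methods, swapping `TableModel` for another predictor would not require changes to the evaluation code.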

- ➢ Pathway Representation

A learning pathway is represented as an ordered, variable-length sequence of learning resources:

$$P = (r_1, r_2, \ldots, r_n)$$

where each $r_i \in R$ is a learning resource drawn from the resource layer, and the length $n$ may vary across pathways. Variable-length representation allows AlphaLearn to accommodate learners with different prior knowledge, time availability, and learning goals.

Pathways must satisfy feasibility constraints, including prerequisite satisfaction and curriculum rules. These constraints are enforced during pathway generation and variation to prevent invalid sequences.
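A prerequisite check of this kind can be sketched as a single pass over the sequence. The example graph, the function name `is_feasible`, and the choice to represent a pathway by the concepts its resources address are simplifying assumptions. Only direct prerequisites are checked at each step, which is sufficient when every prerequisite must itself appear earlier or already be mastered.

```python
from typing import Iterable, Mapping, Sequence, Set

# Illustrative prerequisite graph: concept -> concepts required beforehand.
PREREQS: dict[str, set[str]] = {
    "variables": set(),
    "conditionals": {"variables"},
    "loops": {"conditionals"},
}


def is_feasible(pathway: Sequence[str],
                prereqs: Mapping[str, Set[str]],
                already_mastered: Iterable[str] = ()) -> bool:
    """Return True if every step's direct prerequisites were covered earlier."""
    covered = set(already_mastered)
    for concept in pathway:
        if not prereqs.get(concept, set()) <= covered:
            return False  # a prerequisite has not been covered yet
        covered.add(concept)
    return True


if __name__ == "__main__":
    print(is_feasible(["variables", "conditionals", "loops"], PREREQS))
    print(is_feasible(["loops"], PREREQS))
    print(is_feasible(["loops"], PREREQS, already_mastered={"conditionals"}))
```

The `already_mastered` argument is what lets shorter pathways be valid for learners with prior knowledge, which is the motivation given for variable-length sequences.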

- ✓ Illustrative Example

Consider a learner enrolled in an introductory programming module who has demonstrated partial mastery of basic variables but limited understanding of control structures such as conditionals and loops. Based on the learner model, AlphaLearn generates an initial population of feasible learning pathways composed of video lectures, practice exercises, and formative assessments that respect curricular prerequisite constraints.

For example, one candidate pathway may prioritize rapid progression by introducing loop constructs early, aiming to maximize time efficiency. Another pathway may include additional exercises on conditional statements and formative quizzes to increase engagement and reduce the risk of withdrawal. A third pathway may balance both strategies by interleaving short instructional videos with adaptive practice tasks.

Each candidate pathway is evaluated using the multi-objective fitness function, which estimates expected learning gain, time efficiency, engagement likelihood, and fairness-related indicators. Through the evolutionary optimization process, pathways that perform poorly across these criteria are discarded, while promising pathways are refined through variation and selection. The outcome is not a single prescribed sequence, but a set of pedagogically valid learning pathways that reflect different trade-offs and can be selected according to instructional priorities or learner needs.

- ➢ Evolutionary Optimization Engine

The Evolutionary Optimization Engine is responsible for generating, evaluating, and refining candidate pathways. It maintains a population of pathways and iteratively applies evolutionary operators:

- Selection: choosing promising pathways based on multi-objective fitness,
- Variation: generating new pathways via mutation and recombination,
- Diversity preservation: maintaining heterogeneity to avoid premature convergence.

Unlike single-policy optimization approaches, this engine supports parallel exploration of multiple instructional strategies, enabling trade-offs between competing objectives.
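The variation step can be illustrated with two simple operators for variable-length sequences: a swap mutation and a one-point recombination that joins a prefix of one parent with a suffix of the other. These particular operators and their names are assumptions chosen for brevity. The framework does not fix a specific operator set, and offspring would still need to pass the feasibility constraints before entering the population.

```python
import random
from typing import List, Sequence


def swap_mutation(pathway: Sequence[str], rng: random.Random) -> List[str]:
    """Exchange two randomly chosen positions; the set of resources is unchanged."""
    child = list(pathway)
    if len(child) >= 2:
        i, j = rng.sample(range(len(child)), 2)
        child[i], child[j] = child[j], child[i]
    return child


def one_point_crossover(a: Sequence[str], b: Sequence[str],
                        rng: random.Random) -> List[str]:
    """Join a prefix of `a` with a suffix of `b`, skipping resources already used.

    The cut points are drawn independently, so the child's length can differ
    from both parents (and may be empty in the extreme case).
    """
    cut_a = rng.randint(0, len(a))
    cut_b = rng.randint(0, len(b))
    head = list(a[:cut_a])
    tail = [r for r in b[cut_b:] if r not in head]
    return head + tail


if __name__ == "__main__":
    rng = random.Random(42)
    parent_a = ["variables", "conditionals", "loops"]
    parent_b = ["variables", "functions", "conditionals", "loops"]
    print(swap_mutation(parent_a, rng))
    print(one_point_crossover(parent_a, parent_b, rng))
```

Both operators can produce orderings that violate prerequisites, so in practice they would be combined with either a repair step or rejection of infeasible offspring.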

- ➢ Evaluation and Orchestration Layer

The Evaluation and Orchestration Layer computes fitness values for each pathway and interfaces with the learning environment. It aggregates learner model predictions, constraint checks, and fairness indicators into structured evaluation outputs. In a deployed system, this layer would also handle pathway recommendation, instructor oversight, and feedback collection; in this article, it serves as the conceptual integration point.

4.3. Multi-Objective Fitness Formulation

Each pathway $P$ is evaluated using a vector-valued fitness function:

$$F(P) = \big(f_1(P),\ f_2(P),\ f_3(P),\ f_4(P)\big)$$

where:

- $f_1(P)$ estimates expected learning gain or mastery improvement,
- $f_2(P)$ captures time efficiency or cost-effectiveness,
- $f_3(P)$ reflects predicted engagement or persistence,
- $f_4(P)$ represents fairness-related indicators (e.g., disparity-sensitive penalties).

These objectives may be combined using weighted aggregation or handled explicitly through Pareto-based selection. The framework does not prescribe a single aggregation strategy, allowing adaptation to different pedagogical priorities and institutional contexts.
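The two handling strategies can be contrasted in a few lines. The sketch assumes every objective is expressed so that larger values are better, and the function names and example vectors are illustrative only.

```python
from typing import List, Sequence, Tuple

Fitness = Tuple[float, ...]


def weighted_sum(f: Sequence[float], weights: Sequence[float]) -> float:
    """Collapse a fitness vector into one score using fixed weights."""
    return sum(w * x for w, x in zip(weights, f))


def dominates(u: Sequence[float], v: Sequence[float]) -> bool:
    """True if u is no worse than v on every objective and better on at least one."""
    return all(a >= b for a, b in zip(u, v)) and any(a > b for a, b in zip(u, v))


def non_dominated(points: Sequence[Fitness]) -> List[Fitness]:
    """Keep the fitness vectors that no other vector dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


if __name__ == "__main__":
    # (learning gain, time efficiency) for three hypothetical pathways
    candidates = [(0.8, 0.3), (0.5, 0.9), (0.4, 0.2)]
    print(non_dominated(candidates))
    print(max(candidates, key=lambda f: weighted_sum(f, (0.7, 0.3))))
```

Weighted aggregation returns one pathway and requires the weights to be chosen in advance. Pareto-based selection returns the whole set of trade-offs and defers that choice to the instructor or institution.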

4.4. Evolutionary Search Process

AlphaLearn employs a population-based evolutionary loop to refine learning pathways over successive iterations. The process is summarized in Algorithm 1.

Algorithm 1. AlphaLearn evolutionary pathway optimization

    Input:
        R    Set of learning resources
        L    Learner model
        C    Curricular constraints
        N    Population size
        T    Maximum number of iterations
    Output:
        P*   Set of non-dominated (Pareto-optimal) learning pathways

    Initialize population P_0 with N feasible pathways sampled from R subject to C
    for t = 1 to T do
        for each pathway P in P_{t-1} do
            Evaluate fitness vector F(P) using learner model L
        end for
        Select a subset S from P_{t-1} based on the multi-objective fitness function F(P)
        Generate offspring O by applying variation operators to S
        Enforce feasibility constraints C on all offspring
        Combine P_{t-1} and O into an intermediate population
        Apply elitism and diversity preservation to form P_t
    end for
    Return P*, the set of non-dominated pathways in P_T

This process yields a set of candidate pathways rather than a single solution, enabling informed selection among alternative trade-offs.
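The loop in Algorithm 1 can be exercised end to end on a toy problem. The sketch below is a deliberately simplified stand-in and not an NSGA-II implementation. The concept graph, the `GAIN` and `MINUTES` tables (standing in for learner-model predictions), the two objectives, the append-or-drop mutation, and duplicate removal as the diversity mechanism are all assumptions made for illustration.

```python
import random

# Toy curriculum; GAIN stands in for learner-model predictions (assumed values).
PREREQS = {"variables": set(), "conditionals": {"variables"},
           "loops": {"conditionals"}, "functions": {"variables"}}
MINUTES = {"variables": 10, "conditionals": 15, "loops": 20, "functions": 25}
GAIN = {"variables": 0.1, "conditionals": 0.3, "loops": 0.4, "functions": 0.3}


def feasible(path):
    """No repeats, and each concept's prerequisites appear earlier."""
    seen = set()
    for c in path:
        if c in seen or not PREREQS[c] <= seen:
            return False
        seen.add(c)
    return True


def ready(path):
    """Concepts not yet in the pathway whose prerequisites are all covered."""
    return [c for c in PREREQS if c not in path and PREREQS[c] <= set(path)]


def random_feasible(rng):
    path = []
    for _ in range(rng.randint(1, len(PREREQS))):
        path.append(rng.choice(ready(path)))
    return path


def fitness(path):
    # Two objectives, both maximized: total predicted gain, negative total time.
    return (sum(GAIN[c] for c in path), -sum(MINUTES[c] for c in path))


def dominates(u, v):
    return all(a >= b for a, b in zip(u, v)) and any(a > b for a, b in zip(u, v))


def pareto_front(pop):
    scored = [(p, fitness(p)) for p in pop]
    return [p for p, f in scored if not any(dominates(g, f) for _, g in scored)]


def mutate(path, rng):
    """Append a ready concept or drop the last one."""
    child, options = list(path), ready(path)
    if options and (len(child) <= 1 or rng.random() < 0.5):
        child.append(rng.choice(options))
    elif len(child) > 1:
        child.pop()
    return child


def evolve(n=12, t_max=20, seed=0):
    rng = random.Random(seed)
    pop = [random_feasible(rng) for _ in range(n)]
    for _ in range(t_max):
        parents = pareto_front(pop)                                # selection
        offspring = [mutate(rng.choice(parents), rng) for _ in range(n)]
        offspring = [o for o in offspring if feasible(o)]          # enforce C
        merged = list({tuple(p): p for p in pop + offspring}.values())  # drop duplicates
        front = pareto_front(merged)                               # elitism
        rest = [p for p in merged if p not in front]
        rng.shuffle(rest)
        pop = (front + rest)[:n]
    return pareto_front(pop)


if __name__ == "__main__":
    for pathway in evolve():
        print(pathway, fitness(pathway))
```

Here the mutation is constructed so that it never breaks feasibility, which makes the explicit check redundant but keeps the correspondence with the constraint-enforcement step of Algorithm 1 visible.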

While the evolutionary optimization process follows established principles of multi-objective evolutionary algorithms, its contribution lies in the formulation of learning pathway design as a constrained optimization problem and in the integration of learner modelling, knowledge graph constraints, and fairness-aware objectives within a unified framework.

4.5. Fairness-Aware Optimization

Fairness is incorporated as a first-class consideration in AlphaLearn. Rather than optimizing solely for individual-level performance, the framework allows group-level indicators to influence pathway evaluation. Examples include:

Penalizing pathways predicted to widen performance gaps across learner subgroups,

Constraining optimization to satisfy equity thresholds,

Monitoring disparity-sensitive metrics during selection.

By integrating fairness into the fitness formulation, AlphaLearn supports equity-aware personalization without requiring post-hoc correction.
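One simple way to realize the indicators listed above is to measure the gap between subgroup means of a predicted outcome and use it either as a penalty or as a threshold test. The gap measure, the function names, and the example values below are assumptions. Other disparity metrics could be substituted without changing the structure.

```python
from statistics import mean
from typing import Mapping, Sequence


def group_gap(predicted_by_group: Mapping[str, Sequence[float]]) -> float:
    """Largest difference between subgroup means of a predicted outcome."""
    means = [mean(values) for values in predicted_by_group.values() if values]
    return max(means) - min(means) if means else 0.0


def fairness_objective(predicted_by_group: Mapping[str, Sequence[float]]) -> float:
    """Disparity-sensitive penalty expressed as a quantity to maximize (0 is best)."""
    return -group_gap(predicted_by_group)


def satisfies_equity_threshold(predicted_by_group: Mapping[str, Sequence[float]],
                               max_gap: float) -> bool:
    """Constraint form: accept a pathway only if the gap stays within max_gap."""
    return group_gap(predicted_by_group) <= max_gap


if __name__ == "__main__":
    # Hypothetical predicted learning gains for one pathway, split by subgroup.
    predicted = {"group_a": [0.5, 0.7], "group_b": [0.4]}
    print(group_gap(predicted))
    print(satisfies_equity_threshold(predicted, max_gap=0.25))
```

Used as an objective, the penalty lets the search trade a small loss in aggregate gain for a smaller gap. Used as a threshold, it removes pathways from consideration regardless of their other scores.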

5. Conclusions

This paper introduced AlphaLearn, a conceptual and methodological framework that reframes personalized learning pathway design as a constrained multi-objective optimization problem. Motivated by the limitations of heuristic and single-objective adaptive systems, AlphaLearn integrates learner modelling, knowledge graph representations, and evolutionary optimization to explore and refine alternative learning pathways under multiple pedagogical criteria.

The primary contribution of this work lies not in the validation of a deployed system, but in the formalization of an evolutionary perspective on adaptive sequencing. By maintaining a population of candidate pathways and evaluating them across dimensions such as learning effectiveness, efficiency, engagement, and fairness, AlphaLearn provides a principled foundation for moving beyond greedy or myopic instructional decisions. The proposed five-layer architecture clarifies the functional roles of data representation, learner modelling, optimization, evaluation, and orchestration, offering a modular blueprint for future implementations.

To ground the framework in empirical reality, this study complemented the conceptual contribution with a descriptive analysis of large-scale learning analytics data from the Open University Learning Analytics Dataset. The analysis revealed substantial heterogeneity in learner outcomes, failure rates, and withdrawal patterns across modules, underscoring the inadequacy of one-size-fits-all sequencing strategies. These findings reinforce the central motivation of AlphaLearn: adaptive learning systems must account for contextual variability and competing objectives when designing personalized learning trajectories.

Fairness and equity were treated as integral design considerations rather than secondary concerns. The framework explicitly accommodates fairness-aware evaluation, enabling adaptive systems to balance individual optimization with group-level equity objectives. In doing so, AlphaLearn aligns with emerging research that emphasizes ethical responsibility, transparency, and accountability in educational artificial intelligence.

This work has several limitations. AlphaLearn remains a conceptual and methodological proposal, and no empirical evaluation of learning gains or engagement improvements has been conducted. The descriptive analysis is based on a single dataset from a specific institutional context, and the effectiveness of evolutionary pathway optimization depends on the quality of underlying learner models and curricular representations. Additionally, population-based optimization introduces computational considerations that must be addressed in large-scale deployments.

Despite these limitations, AlphaLearn establishes a clear research agenda for future work. Immediate next steps include implementing a prototype using state-of-the-art evolutionary algorithms, conducting simulation studies and controlled experiments on public datasets, and comparing performance against established adaptive sequencing baselines. Further research should also investigate hybrid approaches that combine evolutionary optimization with reinforcement learning, as well as systematic evaluations of fairness-aware optimization criteria. Finally, extending pathway optimization beyond single courses toward program-level and lifelong learning scenarios represents a promising direction for future exploration.

In summary, AlphaLearn contributes a structured and ethically grounded framework for adaptive learning pathway optimization. By bridging evolutionary optimization, learning analytics, and fairness-aware design, this work provides a foundation for advancing autonomous, scalable, and responsible personalized e-learning systems.