Cross-Validation Model Averaging for Generalized Functional Linear Model

Abstract

1. Introduction

2. Model Averaging for Generalized Functional Linear Model

2.1. The Generalized Functional Linear Model

2.2. Model Averaging Estimation

3. Asymptotic Property for Model Averaging Estimator

Notations and Conditions

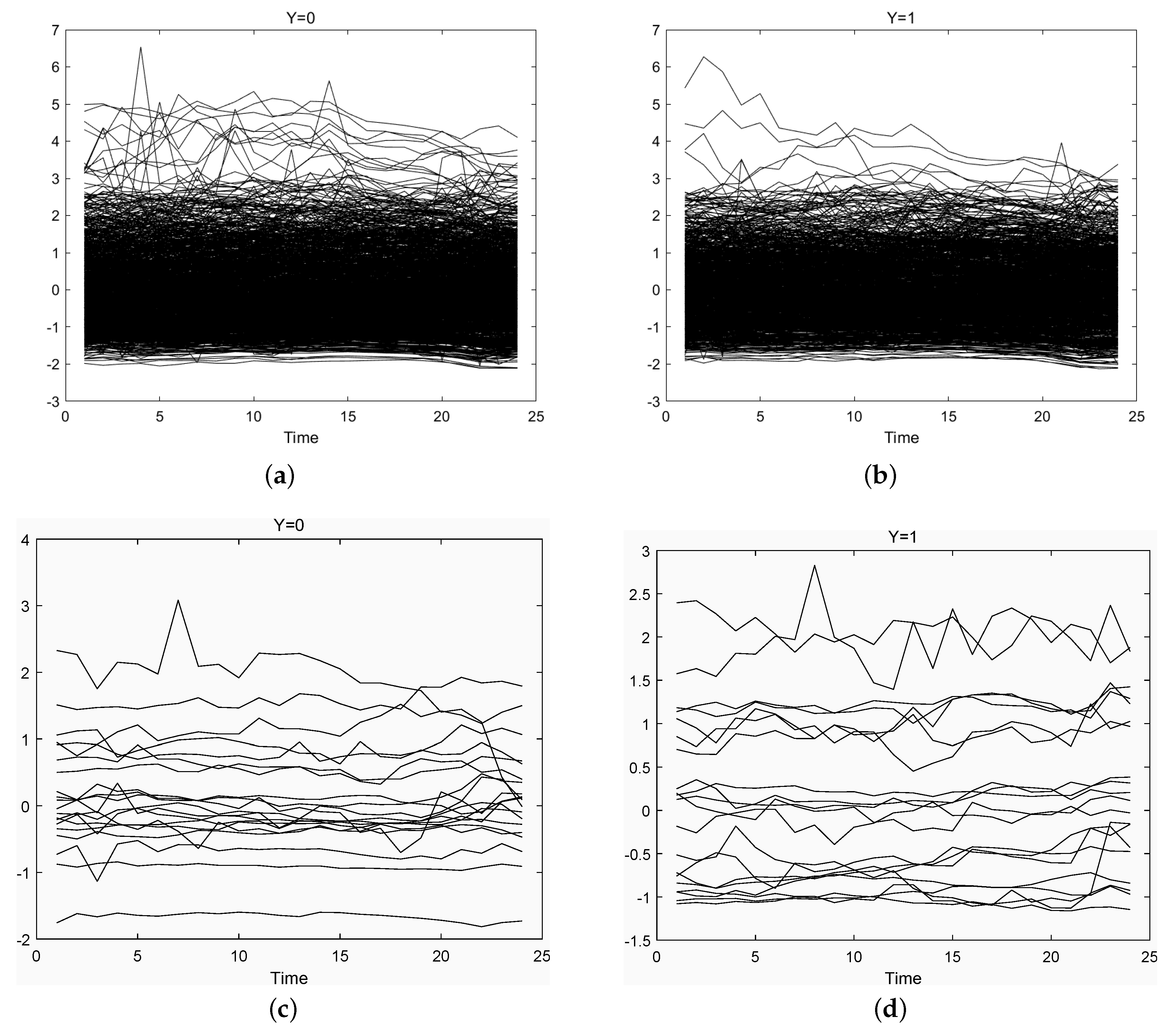

4. Numerical Examples

4.1. Simulation I: Fixed Number of Candidate Models

- Case 1

- For , are generated from the standard normal distribution ; for , . The basis functions are B-spline functions with parameters as mentioned above.

- Case 2

- For , . The basis functions are B-spline functions with parameters as mentioned above.

- Case 3

- For , are generated from the standard normal distribution ; for , . The basis functions are Fourier functions with parameters as mentioned above.

- Case 4

- For , . The basis functions are Fourier functions with parameters as mentioned above.
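The cases above pair basis functions (B-spline or Fourier) with quantities drawn from the standard normal distribution. As a minimal sketch only, the following shows how a curve could be built from a Fourier basis with N(0, 1) coefficients. The number of basis functions, the interval [0, 1], the grid, and the names `fourier_basis` and `simulate_curve` are hypothetical choices for illustration; the parameters actually used in the simulations are not recoverable from this text.

```python
import math
import random


def fourier_basis(t, k):
    """Value at t of the k-th orthonormal Fourier basis function on [0, 1].

    k = 0 is the constant function; odd k are sines and even k are cosines.
    """
    if k == 0:
        return 1.0
    freq = (k + 1) // 2
    if k % 2 == 1:
        return math.sqrt(2.0) * math.sin(2.0 * math.pi * freq * t)
    return math.sqrt(2.0) * math.cos(2.0 * math.pi * freq * t)


def simulate_curve(n_basis, grid, rng):
    """Draw N(0, 1) coefficients and evaluate the resulting curve on the grid."""
    coefs = [rng.gauss(0.0, 1.0) for _ in range(n_basis)]
    values = [
        sum(c * fourier_basis(t, k) for k, c in enumerate(coefs))
        for t in grid
    ]
    return coefs, values


# Hypothetical settings: 5 basis functions, 101 equally spaced grid points.
rng = random.Random(0)
grid = [i / 100 for i in range(101)]
coefs, curve = simulate_curve(5, grid, rng)
```

Swapping `fourier_basis` for a B-spline evaluator would give the analogous construction for the B-spline cases, again under assumed knots and degree.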

4.2. Simulation II: Divergent Number of Candidate Models

4.3. Application: Beijing Second-Hand House Price Data

5. Concluding Remarks

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A. Lemmas and Proofs

Appendix B. Proof of Theorem 1

Appendix B.1. Proof of (A11)

Appendix B.2. Proof of (A12)

Appendix C. Simulation Results in Section 4.1

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.432 | 0.408 | 0.404 | 0.433 | 0.408 | 0.394 | 0.393 |
| | Median | 0.417 | 0.417 | 0.417 | 0.417 | 0.417 | 0.375 | 0.417 |
| | Var | 0.023 | 0.023 | 0.020 | 0.023 | 0.024 | 0.023 | 0.021 |
| 2 | Mean | 0.312 | 0.294 | 0.249 | 0.311 | 0.292 | 0.225 | 0.226 |
| | Median | 0.333 | 0.333 | 0.250 | 0.333 | 0.333 | 0.250 | 0.250 |
| | Var | 0.013 | 0.013 | 0.016 | 0.013 | 0.013 | 0.013 | 0.013 |
| 3 | Mean | 0.273 | 0.262 | 0.226 | 0.273 | 0.260 | 0.188 | 0.189 |
| | Median | 0.250 | 0.250 | 0.250 | 0.250 | 0.250 | 0.167 | 0.167 |
| | Var | 0.017 | 0.017 | 0.015 | 0.017 | 0.017 | 0.016 | 0.015 |
| 4 | Mean | 0.256 | 0.243 | 0.183 | 0.256 | 0.247 | 0.162 | 0.163 |
| | Median | 0.250 | 0.250 | 0.167 | 0.250 | 0.250 | 0.167 | 0.167 |
| | Var | 0.018 | 0.017 | 0.011 | 0.018 | 0.017 | 0.013 | 0.013 |
| 5 | Mean | 0.203 | 0.196 | 0.148 | 0.203 | 0.193 | 0.133 | 0.134 |
| | Median | 0.167 | 0.167 | 0.167 | 0.167 | 0.167 | 0.083 | 0.083 |
| | Var | 0.014 | 0.014 | 0.011 | 0.014 | 0.013 | 0.009 | 0.009 |
| 6 | Mean | 0.234 | 0.233 | 0.135 | 0.234 | 0.233 | 0.117 | 0.115 |
| | Median | 0.250 | 0.250 | 0.125 | 0.250 | 0.250 | 0.083 | 0.083 |
| | Var | 0.016 | 0.016 | 0.010 | 0.016 | 0.016 | 0.010 | 0.010 |
| 7 | Mean | 0.214 | 0.213 | 0.149 | 0.214 | 0.214 | 0.118 | 0.117 |
| | Median | 0.208 | 0.208 | 0.167 | 0.208 | 0.250 | 0.083 | 0.083 |
| | Var | 0.014 | 0.015 | 0.010 | 0.014 | 0.015 | 0.009 | 0.008 |
| 8 | Mean | 0.213 | 0.209 | 0.134 | 0.213 | 0.210 | 0.104 | 0.103 |
| | Median | 0.250 | 0.167 | 0.125 | 0.250 | 0.167 | 0.083 | 0.083 |
| | Var | 0.012 | 0.012 | 0.009 | 0.012 | 0.012 | 0.008 | 0.008 |
| 9 | Mean | 0.196 | 0.196 | 0.128 | 0.196 | 0.196 | 0.096 | 0.099 |
| | Median | 0.167 | 0.167 | 0.083 | 0.167 | 0.167 | 0.083 | 0.083 |
| | Var | 0.014 | 0.014 | 0.012 | 0.014 | 0.015 | 0.008 | 0.008 |
| 10 | Mean | 0.209 | 0.208 | 0.126 | 0.209 | 0.206 | 0.088 | 0.087 |
| | Median | 0.167 | 0.167 | 0.083 | 0.167 | 0.167 | 0.083 | 0.083 |
| | Var | 0.016 | 0.016 | 0.009 | 0.016 | 0.016 | 0.006 | 0.006 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.355 | 0.350 | 0.329 | 0.355 | 0.349 | 0.322 | 0.322 |
| | Median | 0.350 | 0.350 | 0.325 | 0.350 | 0.350 | 0.325 | 0.313 |
| | Var | 0.006 | 0.007 | 0.007 | 0.006 | 0.007 | 0.006 | 0.006 |
| 2 | Mean | 0.262 | 0.262 | 0.234 | 0.262 | 0.262 | 0.227 | 0.227 |
| | Median | 0.275 | 0.275 | 0.225 | 0.275 | 0.275 | 0.225 | 0.225 |
| | Var | 0.005 | 0.005 | 0.004 | 0.005 | 0.005 | 0.004 | 0.004 |
| 3 | Mean | 0.205 | 0.205 | 0.184 | 0.205 | 0.205 | 0.174 | 0.174 |
| | Median | 0.200 | 0.200 | 0.175 | 0.200 | 0.200 | 0.175 | 0.175 |
| | Var | 0.005 | 0.005 | 0.004 | 0.005 | 0.005 | 0.003 | 0.003 |
| 4 | Mean | 0.163 | 0.163 | 0.134 | 0.163 | 0.163 | 0.128 | 0.128 |
| | Median | 0.150 | 0.150 | 0.125 | 0.150 | 0.150 | 0.125 | 0.125 |
| | Var | 0.004 | 0.004 | 0.003 | 0.004 | 0.004 | 0.003 | 0.003 |
| 5 | Mean | 0.139 | 0.139 | 0.113 | 0.139 | 0.139 | 0.110 | 0.110 |
| | Median | 0.125 | 0.125 | 0.113 | 0.125 | 0.125 | 0.100 | 0.100 |
| | Var | 0.003 | 0.003 | 0.003 | 0.003 | 0.003 | 0.002 | 0.002 |
| 6 | Mean | 0.136 | 0.136 | 0.101 | 0.136 | 0.136 | 0.094 | 0.094 |
| | Median | 0.125 | 0.125 | 0.100 | 0.125 | 0.125 | 0.100 | 0.100 |
| | Var | 0.003 | 0.003 | 0.002 | 0.003 | 0.003 | 0.002 | 0.002 |
| 7 | Mean | 0.129 | 0.129 | 0.099 | 0.129 | 0.129 | 0.086 | 0.086 |
| | Median | 0.125 | 0.125 | 0.100 | 0.125 | 0.125 | 0.075 | 0.075 |
| | Var | 0.003 | 0.003 | 0.003 | 0.003 | 0.003 | 0.002 | 0.002 |
| 8 | Mean | 0.121 | 0.121 | 0.091 | 0.121 | 0.121 | 0.083 | 0.082 |
| | Median | 0.113 | 0.113 | 0.075 | 0.113 | 0.113 | 0.075 | 0.075 |
| | Var | 0.003 | 0.003 | 0.002 | 0.003 | 0.003 | 0.002 | 0.002 |
| 9 | Mean | 0.127 | 0.127 | 0.090 | 0.127 | 0.127 | 0.084 | 0.083 |
| | Median | 0.125 | 0.125 | 0.100 | 0.125 | 0.125 | 0.075 | 0.075 |
| | Var | 0.003 | 0.003 | 0.002 | 0.003 | 0.003 | 0.002 | 0.002 |
| 10 | Mean | 0.121 | 0.121 | 0.088 | 0.121 | 0.121 | 0.069 | 0.069 |
| | Median | 0.125 | 0.125 | 0.075 | 0.125 | 0.125 | 0.075 | 0.075 |
| | Var | 0.003 | 0.003 | 0.002 | 0.003 | 0.003 | 0.002 | 0.002 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.349 | 0.349 | 0.332 | 0.349 | 0.349 | 0.330 | 0.330 |
| | Median | 0.345 | 0.345 | 0.330 | 0.345 | 0.345 | 0.330 | 0.330 |
| | Var | 0.002 | 0.002 | 0.002 | 0.002 | 0.002 | 0.002 | 0.002 |
| 2 | Mean | 0.240 | 0.240 | 0.232 | 0.240 | 0.240 | 0.228 | 0.228 |
| | Median | 0.240 | 0.240 | 0.230 | 0.240 | 0.240 | 0.230 | 0.230 |
| | Var | 0.001 | 0.001 | 0.002 | 0.001 | 0.001 | 0.002 | 0.002 |
| 3 | Mean | 0.176 | 0.176 | 0.174 | 0.176 | 0.176 | 0.168 | 0.168 |
| | Median | 0.170 | 0.170 | 0.170 | 0.170 | 0.170 | 0.160 | 0.160 |
| | Var | 0.002 | 0.002 | 0.001 | 0.002 | 0.002 | 0.001 | 0.001 |
| 4 | Mean | 0.143 | 0.143 | 0.133 | 0.143 | 0.143 | 0.135 | 0.134 |
| | Median | 0.140 | 0.140 | 0.130 | 0.140 | 0.140 | 0.130 | 0.130 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 5 | Mean | 0.126 | 0.126 | 0.114 | 0.126 | 0.126 | 0.115 | 0.115 |
| | Median | 0.120 | 0.120 | 0.110 | 0.120 | 0.120 | 0.110 | 0.110 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 6 | Mean | 0.109 | 0.109 | 0.097 | 0.109 | 0.109 | 0.095 | 0.096 |
| | Median | 0.110 | 0.110 | 0.090 | 0.110 | 0.110 | 0.090 | 0.090 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 7 | Mean | 0.106 | 0.106 | 0.090 | 0.106 | 0.106 | 0.089 | 0.089 |
| | Median | 0.110 | 0.110 | 0.090 | 0.110 | 0.110 | 0.090 | 0.090 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 8 | Mean | 0.096 | 0.096 | 0.081 | 0.096 | 0.096 | 0.084 | 0.084 |
| | Median | 0.090 | 0.090 | 0.080 | 0.090 | 0.090 | 0.080 | 0.080 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 9 | Mean | 0.090 | 0.090 | 0.075 | 0.090 | 0.090 | 0.070 | 0.070 |
| | Median | 0.085 | 0.085 | 0.070 | 0.085 | 0.085 | 0.065 | 0.065 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 10 | Mean | 0.091 | 0.091 | 0.075 | 0.091 | 0.091 | 0.069 | 0.068 |
| | Median | 0.090 | 0.090 | 0.070 | 0.090 | 0.090 | 0.065 | 0.065 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.362 | 0.346 | 0.359 | 0.359 | 0.342 | 0.351 | 0.354 |
| | Median | 0.333 | 0.333 | 0.333 | 0.333 | 0.333 | 0.333 | 0.333 |
| | Var | 0.021 | 0.021 | 0.021 | 0.021 | 0.021 | 0.021 | 0.022 |
| 2 | Mean | 0.315 | 0.251 | 0.262 | 0.300 | 0.245 | 0.245 | 0.248 |
| | Median | 0.333 | 0.250 | 0.250 | 0.250 | 0.250 | 0.250 | 0.250 |
| | Var | 0.020 | 0.016 | 0.016 | 0.019 | 0.015 | 0.015 | 0.016 |
| 3 | Mean | 0.269 | 0.193 | 0.208 | 0.257 | 0.188 | 0.185 | 0.184 |
| | Median | 0.250 | 0.167 | 0.167 | 0.250 | 0.167 | 0.167 | 0.167 |
| | Var | 0.016 | 0.014 | 0.014 | 0.015 | 0.013 | 0.012 | 0.013 |
| 4 | Mean | 0.258 | 0.174 | 0.176 | 0.252 | 0.167 | 0.163 | 0.164 |
| | Median | 0.250 | 0.167 | 0.167 | 0.250 | 0.167 | 0.167 | 0.167 |
| | Var | 0.018 | 0.013 | 0.012 | 0.017 | 0.013 | 0.012 | 0.012 |
| 5 | Mean | 0.244 | 0.145 | 0.169 | 0.239 | 0.137 | 0.138 | 0.135 |
| | Median | 0.250 | 0.167 | 0.167 | 0.250 | 0.167 | 0.083 | 0.083 |
| | Var | 0.017 | 0.010 | 0.013 | 0.017 | 0.010 | 0.011 | 0.011 |
| 6 | Mean | 0.234 | 0.142 | 0.150 | 0.227 | 0.131 | 0.122 | 0.119 |
| | Median | 0.250 | 0.167 | 0.167 | 0.250 | 0.083 | 0.083 | 0.083 |
| | Var | 0.018 | 0.010 | 0.012 | 0.017 | 0.010 | 0.009 | 0.009 |
| 7 | Mean | 0.214 | 0.127 | 0.142 | 0.205 | 0.118 | 0.113 | 0.110 |
| | Median | 0.167 | 0.083 | 0.167 | 0.167 | 0.083 | 0.083 | 0.083 |
| | Var | 0.016 | 0.011 | 0.012 | 0.016 | 0.010 | 0.009 | 0.009 |
| 8 | Mean | 0.230 | 0.120 | 0.156 | 0.223 | 0.110 | 0.105 | 0.107 |
| | Median | 0.250 | 0.083 | 0.167 | 0.167 | 0.083 | 0.083 | 0.083 |
| | Var | 0.018 | 0.010 | 0.014 | 0.017 | 0.009 | 0.009 | 0.010 |
| 9 | Mean | 0.204 | 0.121 | 0.160 | 0.192 | 0.108 | 0.100 | 0.099 |
| | Median | 0.167 | 0.083 | 0.167 | 0.167 | 0.083 | 0.083 | 0.083 |
| | Var | 0.017 | 0.009 | 0.016 | 0.016 | 0.009 | 0.008 | 0.008 |
| 10 | Mean | 0.201 | 0.114 | 0.178 | 0.182 | 0.101 | 0.096 | 0.096 |
| | Median | 0.167 | 0.083 | 0.167 | 0.167 | 0.083 | 0.083 | 0.083 |
| | Var | 0.019 | 0.010 | 0.017 | 0.019 | 0.009 | 0.008 | 0.008 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.369 | 0.336 | 0.349 | 0.369 | 0.336 | 0.342 | 0.341 |
| | Median | 0.375 | 0.325 | 0.350 | 0.375 | 0.325 | 0.350 | 0.338 |
| | Var | 0.007 | 0.007 | 0.006 | 0.006 | 0.007 | 0.006 | 0.006 |
| 2 | Mean | 0.265 | 0.253 | 0.239 | 0.265 | 0.248 | 0.233 | 0.233 |
| | Median | 0.275 | 0.250 | 0.250 | 0.275 | 0.250 | 0.225 | 0.225 |
| | Var | 0.006 | 0.005 | 0.005 | 0.006 | 0.005 | 0.005 | 0.005 |
| 3 | Mean | 0.204 | 0.204 | 0.184 | 0.204 | 0.203 | 0.175 | 0.175 |
| | Median | 0.200 | 0.200 | 0.175 | 0.200 | 0.200 | 0.175 | 0.175 |
| | Var | 0.004 | 0.004 | 0.003 | 0.004 | 0.004 | 0.003 | 0.003 |
| 4 | Mean | 0.175 | 0.175 | 0.147 | 0.175 | 0.175 | 0.143 | 0.142 |
| | Median | 0.175 | 0.175 | 0.150 | 0.175 | 0.175 | 0.150 | 0.125 |
| | Var | 0.004 | 0.004 | 0.004 | 0.004 | 0.004 | 0.004 | 0.003 |
| 5 | Mean | 0.157 | 0.157 | 0.130 | 0.157 | 0.157 | 0.118 | 0.118 |
| | Median | 0.150 | 0.150 | 0.125 | 0.150 | 0.150 | 0.125 | 0.125 |
| | Var | 0.004 | 0.004 | 0.003 | 0.004 | 0.004 | 0.003 | 0.003 |
| 6 | Mean | 0.148 | 0.148 | 0.120 | 0.148 | 0.148 | 0.108 | 0.107 |
| | Median | 0.150 | 0.150 | 0.125 | 0.150 | 0.150 | 0.100 | 0.100 |
| | Var | 0.004 | 0.004 | 0.003 | 0.004 | 0.004 | 0.002 | 0.002 |
| 7 | Mean | 0.150 | 0.150 | 0.116 | 0.150 | 0.150 | 0.092 | 0.091 |
| | Median | 0.150 | 0.150 | 0.113 | 0.150 | 0.150 | 0.100 | 0.100 |
| | Var | 0.003 | 0.003 | 0.003 | 0.003 | 0.004 | 0.002 | 0.002 |
| 8 | Mean | 0.162 | 0.161 | 0.125 | 0.162 | 0.161 | 0.091 | 0.092 |
| | Median | 0.150 | 0.150 | 0.125 | 0.150 | 0.150 | 0.088 | 0.100 |
| | Var | 0.005 | 0.005 | 0.004 | 0.005 | 0.005 | 0.002 | 0.002 |
| 9 | Mean | 0.173 | 0.167 | 0.130 | 0.173 | 0.165 | 0.086 | 0.087 |
| | Median | 0.175 | 0.175 | 0.125 | 0.175 | 0.150 | 0.075 | 0.075 |
| | Var | 0.004 | 0.004 | 0.004 | 0.004 | 0.004 | 0.002 | 0.002 |
| 10 | Mean | 0.192 | 0.172 | 0.147 | 0.192 | 0.167 | 0.088 | 0.090 |
| | Median | 0.200 | 0.175 | 0.150 | 0.200 | 0.150 | 0.075 | 0.075 |
| | Var | 0.006 | 0.005 | 0.005 | 0.006 | 0.005 | 0.002 | 0.002 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.345 | 0.338 | 0.332 | 0.345 | 0.336 | 0.330 | 0.330 |
| | Median | 0.350 | 0.340 | 0.330 | 0.350 | 0.340 | 0.330 | 0.330 |
| | Var | 0.003 | 0.002 | 0.002 | 0.003 | 0.002 | 0.002 | 0.002 |
| 2 | Mean | 0.239 | 0.239 | 0.227 | 0.239 | 0.239 | 0.225 | 0.225 |
| | Median | 0.240 | 0.240 | 0.230 | 0.240 | 0.240 | 0.225 | 0.220 |
| | Var | 0.002 | 0.002 | 0.002 | 0.002 | 0.002 | 0.002 | 0.002 |
| 3 | Mean | 0.182 | 0.182 | 0.170 | 0.182 | 0.182 | 0.168 | 0.168 |
| | Median | 0.180 | 0.180 | 0.170 | 0.180 | 0.180 | 0.170 | 0.170 |
| | Var | 0.002 | 0.002 | 0.001 | 0.002 | 0.002 | 0.001 | 0.001 |
| 4 | Mean | 0.152 | 0.152 | 0.141 | 0.152 | 0.152 | 0.136 | 0.136 |
| | Median | 0.150 | 0.150 | 0.140 | 0.150 | 0.150 | 0.140 | 0.140 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 5 | Mean | 0.135 | 0.135 | 0.120 | 0.135 | 0.135 | 0.114 | 0.114 |
| | Median | 0.130 | 0.130 | 0.120 | 0.130 | 0.130 | 0.110 | 0.110 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 6 | Mean | 0.129 | 0.129 | 0.110 | 0.129 | 0.129 | 0.100 | 0.101 |
| | Median | 0.130 | 0.130 | 0.110 | 0.130 | 0.130 | 0.100 | 0.100 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 7 | Mean | 0.128 | 0.128 | 0.107 | 0.128 | 0.128 | 0.092 | 0.093 |
| | Median | 0.130 | 0.130 | 0.100 | 0.130 | 0.130 | 0.090 | 0.090 |
| | Var | 0.002 | 0.002 | 0.001 | 0.002 | 0.002 | 0.001 | 0.001 |
| 8 | Mean | 0.134 | 0.134 | 0.109 | 0.134 | 0.134 | 0.086 | 0.087 |
| | Median | 0.130 | 0.130 | 0.110 | 0.130 | 0.130 | 0.080 | 0.080 |
| | Var | 0.002 | 0.002 | 0.001 | 0.002 | 0.002 | 0.001 | 0.001 |
| 9 | Mean | 0.147 | 0.147 | 0.117 | 0.147 | 0.147 | 0.086 | 0.088 |
| | Median | 0.140 | 0.140 | 0.110 | 0.140 | 0.140 | 0.090 | 0.090 |
| | Var | 0.002 | 0.002 | 0.002 | 0.002 | 0.002 | 0.001 | 0.001 |
| 10 | Mean | 0.171 | 0.171 | 0.135 | 0.171 | 0.171 | 0.093 | 0.096 |
| | Median | 0.170 | 0.170 | 0.135 | 0.170 | 0.170 | 0.090 | 0.090 |
| | Var | 0.003 | 0.003 | 0.002 | 0.003 | 0.003 | 0.001 | 0.001 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.494 | 0.482 | 0.430 | 0.490 | 0.483 | 0.405 | 0.413 |
| | Median | 0.500 | 0.500 | 0.417 | 0.500 | 0.500 | 0.417 | 0.417 |
| | Var | 0.026 | 0.029 | 0.026 | 0.027 | 0.029 | 0.022 | 0.022 |
| 2 | Mean | 0.428 | 0.412 | 0.317 | 0.427 | 0.412 | 0.318 | 0.303 |
| | Median | 0.417 | 0.417 | 0.333 | 0.417 | 0.417 | 0.333 | 0.333 |
| | Var | 0.021 | 0.023 | 0.028 | 0.021 | 0.023 | 0.018 | 0.018 |
| 3 | Mean | 0.416 | 0.401 | 0.317 | 0.419 | 0.403 | 0.313 | 0.302 |
| | Median | 0.417 | 0.417 | 0.292 | 0.417 | 0.417 | 0.292 | 0.250 |
| | Var | 0.028 | 0.031 | 0.037 | 0.027 | 0.030 | 0.032 | 0.031 |
| 4 | Mean | 0.424 | 0.387 | 0.393 | 0.420 | 0.382 | 0.357 | 0.344 |
| | Median | 0.500 | 0.417 | 0.417 | 0.458 | 0.417 | 0.333 | 0.333 |
| | Var | 0.047 | 0.048 | 0.056 | 0.044 | 0.046 | 0.046 | 0.043 |
| 5 | Mean | 0.372 | 0.362 | 0.493 | 0.398 | 0.365 | 0.380 | 0.355 |
| | Median | 0.333 | 0.333 | 0.583 | 0.417 | 0.333 | 0.417 | 0.333 |
| | Var | 0.052 | 0.054 | 0.067 | 0.049 | 0.052 | 0.053 | 0.048 |
| 6 | Mean | 0.400 | 0.383 | 0.608 | 0.427 | 0.390 | 0.446 | 0.430 |
| | Median | 0.417 | 0.375 | 0.667 | 0.417 | 0.375 | 0.500 | 0.417 |
| | Var | 0.072 | 0.075 | 0.060 | 0.066 | 0.075 | 0.067 | 0.066 |
| 7 | Mean | 0.374 | 0.378 | 0.628 | 0.428 | 0.388 | 0.481 | 0.468 |
| | Median | 0.333 | 0.333 | 0.667 | 0.417 | 0.417 | 0.500 | 0.500 |
| | Var | 0.072 | 0.075 | 0.052 | 0.063 | 0.072 | 0.067 | 0.070 |
| 8 | Mean | 0.457 | 0.457 | 0.673 | 0.527 | 0.474 | 0.615 | 0.593 |
| | Median | 0.417 | 0.417 | 0.750 | 0.583 | 0.500 | 0.667 | 0.667 |
| | Var | 0.098 | 0.098 | 0.053 | 0.071 | 0.091 | 0.073 | 0.075 |
| 9 | Mean | 0.565 | 0.565 | 0.738 | 0.642 | 0.583 | 0.652 | 0.659 |
| | Median | 0.583 | 0.583 | 0.750 | 0.750 | 0.667 | 0.750 | 0.750 |
| | Var | 0.099 | 0.099 | 0.040 | 0.079 | 0.087 | 0.072 | 0.074 |
| 10 | Mean | 0.565 | 0.565 | 0.744 | 0.662 | 0.613 | 0.698 | 0.694 |
| | Median | 0.583 | 0.583 | 0.750 | 0.667 | 0.667 | 0.750 | 0.750 |
| | Var | 0.096 | 0.096 | 0.037 | 0.063 | 0.080 | 0.057 | 0.065 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.406 | 0.403 | 0.366 | 0.406 | 0.401 | 0.341 | 0.342 |
| | Median | 0.400 | 0.400 | 0.350 | 0.400 | 0.400 | 0.325 | 0.325 |
| | Var | 0.006 | 0.007 | 0.007 | 0.006 | 0.007 | 0.007 | 0.007 |
| 2 | Mean | 0.378 | 0.377 | 0.310 | 0.378 | 0.378 | 0.272 | 0.271 |
| | Median | 0.375 | 0.375 | 0.300 | 0.375 | 0.375 | 0.250 | 0.250 |
| | Var | 0.010 | 0.010 | 0.010 | 0.010 | 0.010 | 0.008 | 0.007 |
| 3 | Mean | 0.428 | 0.428 | 0.324 | 0.428 | 0.427 | 0.253 | 0.251 |
| | Median | 0.463 | 0.463 | 0.300 | 0.463 | 0.450 | 0.225 | 0.225 |
| | Var | 0.018 | 0.018 | 0.016 | 0.018 | 0.018 | 0.010 | 0.009 |
| 4 | Mean | 0.465 | 0.427 | 0.370 | 0.470 | 0.430 | 0.259 | 0.254 |
| | Median | 0.500 | 0.475 | 0.350 | 0.500 | 0.475 | 0.225 | 0.225 |
| | Var | 0.031 | 0.035 | 0.029 | 0.030 | 0.034 | 0.021 | 0.020 |
| 5 | Mean | 0.281 | 0.231 | 0.507 | 0.310 | 0.228 | 0.282 | 0.276 |
| | Median | 0.200 | 0.175 | 0.500 | 0.225 | 0.175 | 0.238 | 0.225 |
| | Var | 0.035 | 0.021 | 0.041 | 0.034 | 0.020 | 0.030 | 0.029 |
| 6 | Mean | 0.242 | 0.242 | 0.612 | 0.289 | 0.242 | 0.325 | 0.321 |
| | Median | 0.175 | 0.175 | 0.675 | 0.225 | 0.175 | 0.238 | 0.238 |
| | Var | 0.040 | 0.040 | 0.036 | 0.039 | 0.037 | 0.050 | 0.049 |
| 7 | Mean | 0.298 | 0.298 | 0.712 | 0.363 | 0.294 | 0.368 | 0.362 |
| | Median | 0.200 | 0.200 | 0.725 | 0.313 | 0.200 | 0.300 | 0.288 |
| | Var | 0.059 | 0.059 | 0.014 | 0.056 | 0.056 | 0.064 | 0.063 |
| 8 | Mean | 0.476 | 0.476 | 0.749 | 0.553 | 0.473 | 0.498 | 0.495 |
| | Median | 0.513 | 0.513 | 0.763 | 0.588 | 0.500 | 0.588 | 0.575 |
| | Var | 0.086 | 0.086 | 0.009 | 0.068 | 0.084 | 0.076 | 0.076 |
| 9 | Mean | 0.497 | 0.497 | 0.785 | 0.625 | 0.500 | 0.592 | 0.586 |
| | Median | 0.525 | 0.525 | 0.800 | 0.700 | 0.538 | 0.663 | 0.650 |
| | Var | 0.104 | 0.104 | 0.005 | 0.057 | 0.099 | 0.062 | 0.064 |
| 10 | Mean | 0.606 | 0.606 | 0.807 | 0.746 | 0.627 | 0.662 | 0.661 |
| | Median | 0.750 | 0.750 | 0.825 | 0.825 | 0.800 | 0.763 | 0.750 |
| | Var | 0.105 | 0.105 | 0.004 | 0.042 | 0.101 | 0.053 | 0.054 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.394 | 0.394 | 0.360 | 0.394 | 0.394 | 0.338 | 0.338 |
| | Median | 0.390 | 0.390 | 0.355 | 0.390 | 0.390 | 0.340 | 0.340 |
| | Var | 0.004 | 0.004 | 0.003 | 0.004 | 0.004 | 0.003 | 0.003 |
| 2 | Mean | 0.345 | 0.345 | 0.280 | 0.345 | 0.345 | 0.241 | 0.244 |
| | Median | 0.340 | 0.340 | 0.275 | 0.340 | 0.340 | 0.240 | 0.240 |
| | Var | 0.005 | 0.005 | 0.003 | 0.005 | 0.005 | 0.002 | 0.002 |
| 3 | Mean | 0.426 | 0.426 | 0.286 | 0.426 | 0.426 | 0.190 | 0.200 |
| | Median | 0.430 | 0.430 | 0.270 | 0.430 | 0.430 | 0.190 | 0.200 |
| | Var | 0.008 | 0.008 | 0.008 | 0.008 | 0.008 | 0.002 | 0.002 |
| 4 | Mean | 0.524 | 0.490 | 0.390 | 0.526 | 0.490 | 0.170 | 0.190 |
| | Median | 0.550 | 0.540 | 0.400 | 0.550 | 0.540 | 0.160 | 0.180 |
| | Var | 0.017 | 0.025 | 0.018 | 0.015 | 0.025 | 0.002 | 0.003 |
| 5 | Mean | 0.225 | 0.199 | 0.535 | 0.241 | 0.198 | 0.168 | 0.170 |
| | Median | 0.160 | 0.160 | 0.560 | 0.180 | 0.160 | 0.150 | 0.160 |
| | Var | 0.028 | 0.018 | 0.017 | 0.027 | 0.018 | 0.006 | 0.006 |
| 6 | Mean | 0.186 | 0.183 | 0.665 | 0.225 | 0.184 | 0.183 | 0.183 |
| | Median | 0.140 | 0.140 | 0.680 | 0.180 | 0.140 | 0.140 | 0.150 |
| | Var | 0.014 | 0.013 | 0.009 | 0.014 | 0.012 | 0.013 | 0.011 |
| 7 | Mean | 0.251 | 0.251 | 0.735 | 0.322 | 0.252 | 0.251 | 0.253 |
| | Median | 0.170 | 0.170 | 0.740 | 0.260 | 0.170 | 0.170 | 0.190 |
| | Var | 0.033 | 0.033 | 0.004 | 0.028 | 0.031 | 0.033 | 0.028 |
| 8 | Mean | 0.376 | 0.376 | 0.776 | 0.511 | 0.379 | 0.376 | 0.383 |
| | Median | 0.335 | 0.335 | 0.780 | 0.520 | 0.335 | 0.335 | 0.385 |
| | Var | 0.065 | 0.065 | 0.002 | 0.048 | 0.062 | 0.065 | 0.057 |
| 9 | Mean | 0.467 | 0.467 | 0.797 | 0.650 | 0.476 | 0.467 | 0.491 |
| | Median | 0.475 | 0.475 | 0.800 | 0.700 | 0.480 | 0.475 | 0.510 |
| | Var | 0.087 | 0.087 | 0.002 | 0.039 | 0.082 | 0.087 | 0.076 |
| 10 | Mean | 0.652 | 0.652 | 0.822 | 0.820 | 0.675 | 0.652 | 0.713 |
| | Median | 0.780 | 0.780 | 0.820 | 0.840 | 0.790 | 0.780 | 0.800 |
| | Var | 0.071 | 0.071 | 0.002 | 0.012 | 0.062 | 0.071 | 0.048 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.389 | 0.378 | 0.417 | 0.396 | 0.381 | 0.381 | 0.387 |
| | Median | 0.417 | 0.333 | 0.417 | 0.417 | 0.417 | 0.417 | 0.417 |
| | Var | 0.024 | 0.024 | 0.023 | 0.023 | 0.023 | 0.022 | 0.024 |
| 2 | Mean | 0.286 | 0.268 | 0.363 | 0.299 | 0.269 | 0.268 | 0.268 |
| | Median | 0.250 | 0.250 | 0.333 | 0.250 | 0.250 | 0.250 | 0.250 |
| | Var | 0.022 | 0.021 | 0.029 | 0.022 | 0.022 | 0.021 | 0.021 |
| 3 | Mean | 0.230 | 0.219 | 0.382 | 0.259 | 0.228 | 0.219 | 0.219 |
| | Median | 0.167 | 0.167 | 0.333 | 0.250 | 0.167 | 0.167 | 0.167 |
| | Var | 0.024 | 0.023 | 0.040 | 0.024 | 0.023 | 0.023 | 0.023 |
| 4 | Mean | 0.186 | 0.181 | 0.460 | 0.242 | 0.199 | 0.181 | 0.181 |
| | Median | 0.167 | 0.167 | 0.417 | 0.167 | 0.167 | 0.167 | 0.167 |
| | Var | 0.022 | 0.022 | 0.048 | 0.031 | 0.024 | 0.022 | 0.022 |
| 5 | Mean | 0.195 | 0.194 | 0.545 | 0.284 | 0.216 | 0.194 | 0.194 |
| | Median | 0.167 | 0.167 | 0.583 | 0.250 | 0.167 | 0.167 | 0.167 |
| | Var | 0.029 | 0.030 | 0.054 | 0.046 | 0.034 | 0.030 | 0.030 |
| 6 | Mean | 0.213 | 0.211 | 0.642 | 0.374 | 0.256 | 0.211 | 0.211 |
| | Median | 0.167 | 0.167 | 0.667 | 0.333 | 0.167 | 0.167 | 0.167 |
| | Var | 0.042 | 0.042 | 0.045 | 0.062 | 0.049 | 0.042 | 0.042 |
| 7 | Mean | 0.208 | 0.210 | 0.680 | 0.424 | 0.268 | 0.210 | 0.210 |
| | Median | 0.167 | 0.167 | 0.750 | 0.417 | 0.167 | 0.167 | 0.167 |
| | Var | 0.052 | 0.053 | 0.037 | 0.068 | 0.060 | 0.053 | 0.053 |
| 8 | Mean | 0.228 | 0.228 | 0.727 | 0.513 | 0.310 | 0.228 | 0.228 |
| | Median | 0.167 | 0.167 | 0.750 | 0.500 | 0.250 | 0.167 | 0.167 |
| | Var | 0.059 | 0.059 | 0.025 | 0.067 | 0.071 | 0.059 | 0.059 |
| 9 | Mean | 0.259 | 0.258 | 0.730 | 0.572 | 0.366 | 0.258 | 0.258 |
| | Median | 0.167 | 0.167 | 0.750 | 0.583 | 0.250 | 0.167 | 0.167 |
| | Var | 0.084 | 0.084 | 0.030 | 0.069 | 0.091 | 0.084 | 0.084 |
| 10 | Mean | 0.303 | 0.303 | 0.761 | 0.665 | 0.455 | 0.303 | 0.303 |
| | Median | 0.167 | 0.167 | 0.750 | 0.750 | 0.417 | 0.167 | 0.167 |
| | Var | 0.099 | 0.099 | 0.020 | 0.047 | 0.096 | 0.099 | 0.099 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.378 | 0.348 | 0.387 | 0.380 | 0.350 | 0.354 | 0.353 |
| | Median | 0.375 | 0.350 | 0.375 | 0.375 | 0.350 | 0.350 | 0.350 |
| | Var | 0.008 | 0.008 | 0.008 | 0.008 | 0.007 | 0.007 | 0.007 |
| 2 | Mean | 0.277 | 0.251 | 0.330 | 0.287 | 0.253 | 0.258 | 0.258 |
| | Median | 0.275 | 0.250 | 0.325 | 0.275 | 0.250 | 0.250 | 0.250 |
| | Var | 0.007 | 0.006 | 0.010 | 0.007 | 0.006 | 0.007 | 0.006 |
| 3 | Mean | 0.193 | 0.183 | 0.374 | 0.216 | 0.186 | 0.205 | 0.205 |
| | Median | 0.175 | 0.175 | 0.375 | 0.200 | 0.175 | 0.200 | 0.200 |
| | Var | 0.006 | 0.005 | 0.020 | 0.007 | 0.005 | 0.006 | 0.006 |
| 4 | Mean | 0.168 | 0.167 | 0.512 | 0.219 | 0.171 | 0.217 | 0.216 |
| | Median | 0.150 | 0.150 | 0.550 | 0.200 | 0.150 | 0.200 | 0.200 |
| | Var | 0.008 | 0.008 | 0.022 | 0.012 | 0.008 | 0.011 | 0.011 |
| 5 | Mean | 0.141 | 0.141 | 0.613 | 0.237 | 0.152 | 0.237 | 0.237 |
| | Median | 0.125 | 0.125 | 0.650 | 0.200 | 0.125 | 0.200 | 0.200 |
| | Var | 0.008 | 0.008 | 0.020 | 0.019 | 0.009 | 0.018 | 0.018 |
| 6 | Mean | 0.132 | 0.132 | 0.700 | 0.294 | 0.146 | 0.292 | 0.291 |
| | Median | 0.100 | 0.100 | 0.700 | 0.250 | 0.125 | 0.250 | 0.250 |
| | Var | 0.011 | 0.011 | 0.010 | 0.030 | 0.013 | 0.029 | 0.029 |
| 7 | Mean | 0.138 | 0.138 | 0.742 | 0.392 | 0.161 | 0.381 | 0.377 |
| | Median | 0.100 | 0.100 | 0.750 | 0.375 | 0.125 | 0.375 | 0.375 |
| | Var | 0.014 | 0.014 | 0.007 | 0.039 | 0.017 | 0.033 | 0.033 |
| 8 | Mean | 0.154 | 0.154 | 0.769 | 0.512 | 0.193 | 0.490 | 0.487 |
| | Median | 0.100 | 0.100 | 0.775 | 0.550 | 0.125 | 0.500 | 0.500 |
| | Var | 0.023 | 0.023 | 0.004 | 0.042 | 0.028 | 0.039 | 0.039 |
| 9 | Mean | 0.175 | 0.175 | 0.788 | 0.624 | 0.232 | 0.583 | 0.580 |
| | Median | 0.100 | 0.100 | 0.800 | 0.675 | 0.125 | 0.625 | 0.625 |
| | Var | 0.038 | 0.038 | 0.005 | 0.032 | 0.046 | 0.035 | 0.035 |
| 10 | Mean | 0.192 | 0.192 | 0.800 | 0.695 | 0.282 | 0.654 | 0.653 |
| | Median | 0.100 | 0.100 | 0.800 | 0.725 | 0.175 | 0.688 | 0.675 |
| | Var | 0.049 | 0.049 | 0.004 | 0.024 | 0.063 | 0.029 | 0.029 |

| R | Statistic | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 1 | Mean | 0.380 | 0.339 | 0.367 | 0.380 | 0.340 | 0.338 | 0.339 |
| | Median | 0.380 | 0.340 | 0.360 | 0.380 | 0.340 | 0.340 | 0.340 |
| | Var | 0.003 | 0.003 | 0.004 | 0.003 | 0.003 | 0.003 | 0.003 |
| 2 | Mean | 0.278 | 0.242 | 0.310 | 0.284 | 0.242 | 0.228 | 0.229 |
| | Median | 0.270 | 0.240 | 0.300 | 0.280 | 0.240 | 0.230 | 0.230 |
| | Var | 0.005 | 0.003 | 0.005 | 0.004 | 0.003 | 0.002 | 0.002 |
| 3 | Mean | 0.180 | 0.177 | 0.385 | 0.198 | 0.179 | 0.176 | 0.179 |
| | Median | 0.170 | 0.170 | 0.380 | 0.190 | 0.170 | 0.170 | 0.180 |
| | Var | 0.002 | 0.002 | 0.009 | 0.003 | 0.002 | 0.002 | 0.002 |
| 4 | Mean | 0.141 | 0.141 | 0.527 | 0.184 | 0.143 | 0.141 | 0.146 |
| | Median | 0.140 | 0.140 | 0.540 | 0.170 | 0.140 | 0.140 | 0.140 |
| | Var | 0.001 | 0.001 | 0.010 | 0.003 | 0.001 | 0.001 | 0.001 |
| 5 | Mean | 0.122 | 0.122 | 0.649 | 0.203 | 0.126 | 0.122 | 0.130 |
| | Median | 0.120 | 0.120 | 0.660 | 0.185 | 0.120 | 0.120 | 0.120 |
| | Var | 0.002 | 0.002 | 0.004 | 0.007 | 0.002 | 0.002 | 0.002 |
| 6 | Mean | 0.109 | 0.109 | 0.716 | 0.266 | 0.116 | 0.109 | 0.125 |
| | Median | 0.100 | 0.100 | 0.720 | 0.240 | 0.110 | 0.100 | 0.110 |
| | Var | 0.002 | 0.002 | 0.003 | 0.013 | 0.002 | 0.002 | 0.003 |
| 7 | Mean | 0.103 | 0.103 | 0.754 | 0.371 | 0.115 | 0.103 | 0.129 |
| | Median | 0.090 | 0.090 | 0.750 | 0.360 | 0.100 | 0.090 | 0.120 |
| | Var | 0.003 | 0.003 | 0.002 | 0.020 | 0.004 | 0.003 | 0.005 |
| 8 | Mean | 0.102 | 0.102 | 0.775 | 0.490 | 0.119 | 0.102 | 0.141 |
| | Median | 0.090 | 0.090 | 0.780 | 0.500 | 0.100 | 0.090 | 0.120 |
| | Var | 0.005 | 0.005 | 0.002 | 0.023 | 0.006 | 0.005 | 0.007 |
| 9 | Mean | 0.112 | 0.112 | 0.791 | 0.629 | 0.143 | 0.112 | 0.184 |
| | Median | 0.090 | 0.090 | 0.790 | 0.650 | 0.110 | 0.090 | 0.140 |
| | Var | 0.009 | 0.009 | 0.002 | 0.015 | 0.012 | 0.009 | 0.017 |
| 10 | Mean | 0.114 | 0.114 | 0.802 | 0.707 | 0.155 | 0.114 | 0.211 |
| | Median | 0.080 | 0.080 | 0.800 | 0.720 | 0.110 | 0.080 | 0.160 |
| | Var | 0.014 | 0.014 | 0.002 | 0.007 | 0.019 | 0.014 | 0.025 |
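Each group of three rows in the tables above reports a mean, median and variance per method for one value of R, presumably taken over simulation replications. As a minimal sketch of that kind of summary, the snippet below computes the three statistics with Python's standard `statistics` module. The per-replication values and the name `summarise` are hypothetical and not taken from the paper, and the use of the sample variance (divisor n - 1) is an assumption, since the text does not say which variance is reported.

```python
import statistics


def summarise(values):
    """Return the (mean, median, variance) of per-replication values.

    Assumption: the sample variance (divisor n - 1) is used.
    """
    return (
        statistics.mean(values),
        statistics.median(values),
        statistics.variance(values),
    )


# Hypothetical per-replication values for a single method (illustration only).
replications = [0.25, 0.5, 0.25, 0.0]
mean_value, median_value, var_value = summarise(replications)
print(f"Mean {mean_value:.3f} | Median {median_value:.3f} | Var {var_value:.3f}")
```

Applying `summarise` to each method's replication results in turn would yield one Mean, Median and Var row triple of the kind tabulated here.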

Appendix D. Simulation Results in Section 4.2

| N | R = 1 | AIC | BIC | PCA | SAIC | SBIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 200 | Mean | 0.329 | 0.325 | 0.312 | 0.323 | 0.322 | 0.313 | 0.313 |
| | Median | 0.325 | 0.325 | 0.300 | 0.325 | 0.325 | 0.325 | 0.325 |
| | Var | 0.006 | 0.006 | 0.005 | 0.006 | 0.006 | 0.006 | 0.006 |
| 400 | Mean | 0.330 | 0.319 | 0.305 | 0.327 | 0.314 | 0.304 | 0.304 |
| | Median | 0.325 | 0.313 | 0.300 | 0.325 | 0.313 | 0.300 | 0.300 |
| | Var | 0.003 | 0.003 | 0.003 | 0.003 | 0.003 | 0.003 | 0.003 |
| 1000 | Mean | 0.332 | 0.326 | 0.304 | 0.330 | 0.326 | 0.305 | 0.304 |
| | Median | 0.330 | 0.320 | 0.303 | 0.330 | 0.320 | 0.303 | 0.300 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |

| N | R = 3 | AIC | BIC | PCA | SAIC | SBIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 200 | Mean | 0.173 | 0.173 | 0.168 | 0.173 | 0.173 | 0.162 | 0.162 |
| | Median | 0.175 | 0.175 | 0.175 | 0.175 | 0.175 | 0.175 | 0.163 |
| | Var | 0.003 | 0.003 | 0.002 | 0.003 | 0.003 | 0.002 | 0.002 |
| 400 | Mean | 0.172 | 0.172 | 0.163 | 0.172 | 0.171 | 0.167 | 0.169 |
| | Median | 0.175 | 0.175 | 0.163 | 0.175 | 0.175 | 0.175 | 0.175 |
| | Var | 0.001 | 0.001 | 0.002 | 0.001 | 0.001 | 0.001 | 0.002 |
| 1000 | Mean | 0.175 | 0.193 | 0.156 | 0.175 | 0.189 | 0.149 | 0.148 |
| | Median | 0.180 | 0.198 | 0.160 | 0.180 | 0.190 | 0.145 | 0.145 |
| | Var | 0.001 | 0.001 | 0.000 | 0.001 | 0.001 | 0.001 | 0.001 |

| N | R = 7 | AIC | BIC | PCA | SAIC | SBIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|---|
| 200 | Mean | 0.104 | 0.104 | 0.109 | 0.104 | 0.104 | 0.095 | 0.097 |
| | Median | 0.100 | 0.100 | 0.100 | 0.100 | 0.100 | 0.100 | 0.088 |
| | Var | 0.003 | 0.003 | 0.003 | 0.003 | 0.003 | 0.003 | 0.002 |
| 400 | Mean | 0.106 | 0.106 | 0.101 | 0.106 | 0.106 | 0.087 | 0.087 |
| | Median | 0.100 | 0.100 | 0.100 | 0.100 | 0.100 | 0.088 | 0.088 |
| | Var | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 | 0.001 |
| 1000 | Mean | 0.109 | 0.109 | 0.103 | 0.109 | 0.109 | 0.084 | 0.083 |
| | Median | 0.110 | 0.110 | 0.105 | 0.110 | 0.110 | 0.085 | 0.080 |
| | Var | 0.001 | 0.001 | 0.000 | 0.001 | 0.001 | 0.000 | 0.000 |

References

- Ando, Tomohiro, and Ker Chau Li. 2014. A model-averaging approach for high-dimensional regression. Journal of the American Statistical Association 109: 254–65. [Google Scholar] [CrossRef]

- Ando, Tomohiro, and Ker Chau Li. 2017. A weight-relaxed model averaging approach for high-dimensional generalized linear models. The Annals of Statistics 45: 2654–79. [Google Scholar] [CrossRef]

- Andrews, Donald W. K. 1991. Asymptotic optimality of generalized CL, cross-validation, and generalized cross-validation in regression with heteroskedastic errors. Journal of Econometrics 47: 359–77. [Google Scholar] [CrossRef]

- Balan, Raluca M., and Ioana Schiopu-Kratina. 2005. Asymptotic results with generalized estimating equations for longitudinal data. The Annals of Statistics 33: 522–41. [Google Scholar] [CrossRef]

- Buckland, Steven T., Kenneth P. Burnham, and Nicole H. Augustin. 1997. Model selection: An integral part of inference. Biometrics 53: 603–18. [Google Scholar] [CrossRef]

- Chen, Kani, Inchi Hu, and Zhiliang Ying. 1999. Strong consistency of maximum quasi-likelihood estimators in generalized linear models with fixed and adaptive designs. The Annals of Statistics 27: 1155–63. [Google Scholar] [CrossRef]

- Claeskens, Gerda, and Raymond J. Carroll. 2007. An asymptotic theory for model selection inference in general semiparametric problems. Biometrika 94: 249–65. [Google Scholar] [CrossRef]

- Flynn, Cheryl J., Clifford M. Hurvich, and Jeffrey S. Simonoff. 2013. Efficiency for regularization parameter selection in penalized likelihood estimation of misspecified models. Journal of the American Statistical Association 108: 1031–43. [Google Scholar] [CrossRef]

- Gao, Yan, Xinyu Zhang, Shouyang Wang, and Guohua Zou. 2016. Model averaging based on leave-subject-out cross-validation. Journal of Econometrics 192: 139–51. [Google Scholar] [CrossRef]

- Hansen, Bruce E. 2007. Least squares model averaging. Econometrica 75: 1175–89. [Google Scholar] [CrossRef]

- Hansen, Bruce E., and Jeffrey S. Racine. 2012. Jacknife model averaging. Journal of Econometrics 167: 38–46. [Google Scholar] [CrossRef]

- Hoffmann-Jørgensen, Jørgen. 1974. Sums of independent Banach space valued random variables. Studia Mathematica 52: 159–86.

- Hoffmann-Jørgensen, Jørgen, and Gilles Pisier. 1976. The law of large numbers and the central limit theorem in Banach spaces. The Annals of Probability 4: 587–99.

- Hjort, Nils L., and Gerda Claeskens. 2003. Frequentist model average estimators. Journal of the American Statistical Association 98: 879–99.

- James, Gareth M. 2002. Generalized linear models with functional predictors. Journal of the Royal Statistical Society: Series B (Statistical Methodology) 64: 411–32.

- Kahane, Jean-Pierre. 1968. Some Random Series of Functions. Lexington: D. C. Heath.

- Li, Ker Chau. 1987. Asymptotic optimality for Cp, CL, cross-validation and generalized cross-validation: Discrete index set. The Annals of Statistics 15: 958–75.

- Liang, Hua, Guohua Zou, Alan T. K. Wan, and Xinyu Zhang. 2011. Optimal weight choice for frequentist model average estimators. Journal of the American Statistical Association 106: 1053–66.

- Lv, Jinchi, and Jun S. Liu. 2014. Model selection principles in misspecified models. Journal of the Royal Statistical Society: Series B (Statistical Methodology) 76: 141–67.

- Müller, Hans-Georg, and Ulrich Stadtmüller. 2005. Generalized functional linear models. The Annals of Statistics 33: 774–805.

- Wan, Alan T. K., Xinyu Zhang, and Guohua Zou. 2010. Least squares model averaging by Mallows criterion. Journal of Econometrics 156: 277–83.

- Wu, Chien-Fu. 1981. Asymptotic theory of nonlinear least squares estimation. The Annals of Statistics 9: 501–13.

- Xu, Ganggang, Suojin Wang, and Jianhua Z. Huang. 2014. Focused information criterion and model averaging based on weighted composite quantile regression. Scandinavian Journal of Statistics 41: 365–81.

- Yang, Yuhong. 2001. Adaptive regression by mixing. Journal of the American Statistical Association 96: 574–88.

- Yao, Fang, Hans-Georg Müller, and Jane-Ling Wang. 2005. Functional data analysis for sparse longitudinal data. Journal of the American Statistical Association 100: 577–90.

- Zhang, Xinyu, and Hua Liang. 2011. Focused information criterion and model averaging for generalized additive partial linear models. The Annals of Statistics 39: 174–200.

- Zhang, Xinyu, Alan T. K. Wan, and Sherry Z. Zhou. 2012. Focused information criteria model selection and model averaging in a Tobit model with a non-zero threshold. Journal of Business and Economic Statistics 30: 132–42.

- Zhang, Xinyu, Alan T. K. Wan, and Guohua Zou. 2013. Model averaging by jackknife criterion in models with dependent data. Journal of Econometrics 174: 82–94.

- Zhang, Xinyu. 2015. Consistency of model averaging estimators. Economics Letters 130: 120–23.

- Zhang, Xinyu, Dalei Yu, Guohua Zou, and Hua Liang. 2016. Optimal model averaging estimation for generalized linear models and generalized linear mixed-effects models. Journal of the American Statistical Association 111: 1775–90.

- Zhang, Xinyu, Jeng-Min Chiou, and Yanyuan Ma. 2018. Functional prediction through averaging estimated functional linear regression models. Biometrika 105: 945–62.

- Zhao, Shangwei, Jun Liao, and Dalei Yu. 2018. Model averaging estimator in ridge regression and its large sample properties. Statistical Papers.

- Zhu, Rong, Guohua Zou, and Xinyu Zhang. 2018. Optimal model averaging estimation for partial functional linear models. Journal of Systems Science and Mathematical Sciences 38: 777–800.

- Zinn, Joel. 1977. A note on the central limit theorem in Banach spaces. The Annals of Probability 5: 283–86.

| Rounds | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|
| 1 | 0.301 | 0.301 | 0.275 | 0.301 | 0.301 | 0.221 | 0.221 |
| 2 | 0.292 | 0.292 | 0.247 | 0.292 | 0.292 | 0.178 | 0.176 |
| 3 | 0.290 | 0.290 | 0.242 | 0.290 | 0.290 | 0.187 | 0.187 |
| 4 | 0.280 | 0.280 | 0.233 | 0.280 | 0.280 | 0.176 | 0.174 |
| 5 | 0.276 | 0.276 | 0.233 | 0.276 | 0.276 | 0.147 | 0.149 |
| 6 | 0.316 | 0.316 | 0.233 | 0.316 | 0.316 | 0.188 | 0.188 |
| 7 | 0.269 | 0.269 | 0.244 | 0.269 | 0.269 | 0.164 | 0.164 |
| 8 | 0.294 | 0.294 | 0.225 | 0.294 | 0.294 | 0.174 | 0.174 |
| 9 | 0.316 | 0.316 | 0.235 | 0.316 | 0.316 | 0.187 | 0.187 |
| 10 | 0.282 | 0.282 | 0.242 | 0.282 | 0.282 | 0.174 | 0.173 |
| 11 | 0.292 | 0.292 | 0.240 | 0.292 | 0.292 | 0.162 | 0.162 |
| 12 | 0.285 | 0.285 | 0.261 | 0.285 | 0.285 | 0.188 | 0.188 |
| 13 | 0.282 | 0.282 | 0.219 | 0.282 | 0.282 | 0.150 | 0.149 |
| 14 | 0.264 | 0.264 | 0.280 | 0.264 | 0.264 | 0.188 | 0.188 |
| 15 | 0.282 | 0.282 | 0.247 | 0.282 | 0.282 | 0.187 | 0.187 |
| 16 | 0.295 | 0.295 | 0.269 | 0.295 | 0.295 | 0.185 | 0.185 |
| 17 | 0.328 | 0.328 | 0.252 | 0.328 | 0.328 | 0.204 | 0.202 |
| 18 | 0.301 | 0.301 | 0.245 | 0.301 | 0.301 | 0.187 | 0.187 |
| 19 | 0.278 | 0.278 | 0.209 | 0.278 | 0.278 | 0.150 | 0.150 |
| 20 | 0.311 | 0.311 | 0.249 | 0.311 | 0.311 | 0.183 | 0.183 |

| Rounds | AIC | BIC | FPCA | S-AIC | S-BIC | CV1 | CV2 |
|---|---|---|---|---|---|---|---|
| 1 | 0.287 | 0.287 | 0.235 | 0.287 | 0.287 | 0.166 | 0.165 |
| 2 | 0.289 | 0.289 | 0.244 | 0.289 | 0.289 | 0.181 | 0.180 |
| 3 | 0.290 | 0.290 | 0.246 | 0.290 | 0.290 | 0.174 | 0.173 |
| 4 | 0.293 | 0.293 | 0.249 | 0.293 | 0.293 | 0.182 | 0.182 |
| 5 | 0.296 | 0.296 | 0.249 | 0.296 | 0.296 | 0.190 | 0.190 |
| 6 | 0.285 | 0.285 | 0.249 | 0.285 | 0.285 | 0.175 | 0.175 |
| 7 | 0.297 | 0.297 | 0.246 | 0.297 | 0.297 | 0.184 | 0.183 |
| 8 | 0.292 | 0.292 | 0.252 | 0.292 | 0.292 | 0.179 | 0.179 |
| 9 | 0.283 | 0.283 | 0.248 | 0.283 | 0.283 | 0.174 | 0.173 |
| 10 | 0.291 | 0.291 | 0.246 | 0.291 | 0.291 | 0.182 | 0.181 |
| 11 | 0.291 | 0.291 | 0.247 | 0.291 | 0.291 | 0.184 | 0.186 |
| 12 | 0.294 | 0.294 | 0.240 | 0.294 | 0.294 | 0.175 | 0.175 |
| 13 | 0.293 | 0.293 | 0.254 | 0.293 | 0.293 | 0.190 | 0.187 |
| 14 | 0.295 | 0.295 | 0.233 | 0.295 | 0.295 | 0.175 | 0.175 |
| 15 | 0.293 | 0.293 | 0.244 | 0.293 | 0.293 | 0.176 | 0.177 |
| 16 | 0.288 | 0.288 | 0.237 | 0.288 | 0.288 | 0.179 | 0.178 |
| 17 | 0.282 | 0.282 | 0.243 | 0.282 | 0.282 | 0.173 | 0.173 |
| 18 | 0.290 | 0.290 | 0.245 | 0.290 | 0.290 | 0.178 | 0.177 |
| 19 | 0.294 | 0.294 | 0.257 | 0.294 | 0.294 | 0.186 | 0.187 |
| 20 | 0.285 | 0.285 | 0.244 | 0.285 | 0.285 | 0.179 | 0.179 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Zhang, H.; Zou, G. Cross-Validation Model Averaging for Generalized Functional Linear Model. Econometrics 2020, 8, 7. https://doi.org/10.3390/econometrics8010007