Comparison of Management and Orchestration Solutions for the 5G Era

Abstract

1. Introduction

- Providing a comprehensive survey of three representative, widely accepted, and well-established open-source MANO frameworks.

- Comparing the functionalities provided by each of the major components comprising the MANO frameworks under discussion.

- Defining the appropriate test procedures based on well-known functional and operational KPIs defined in the literature.

- Defining a sandbox environment supporting automatic test execution, common to all MANO frameworks to ensure fairness among them.

- Providing an in-depth comparison of MANO frameworks, based on sandbox environment testing, highlighting advantages and disadvantages of each solution, to guide further development.

2. Related Work

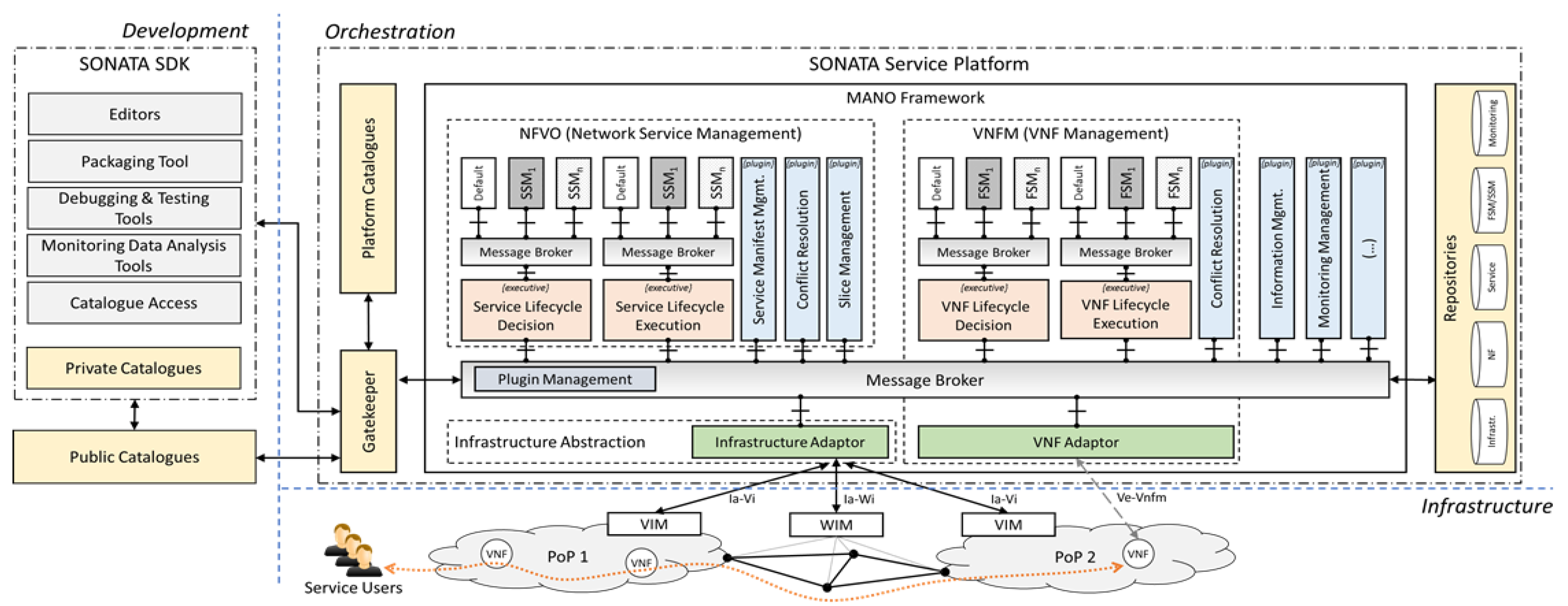

2.1. Open-Source MANO Frameworks Overview

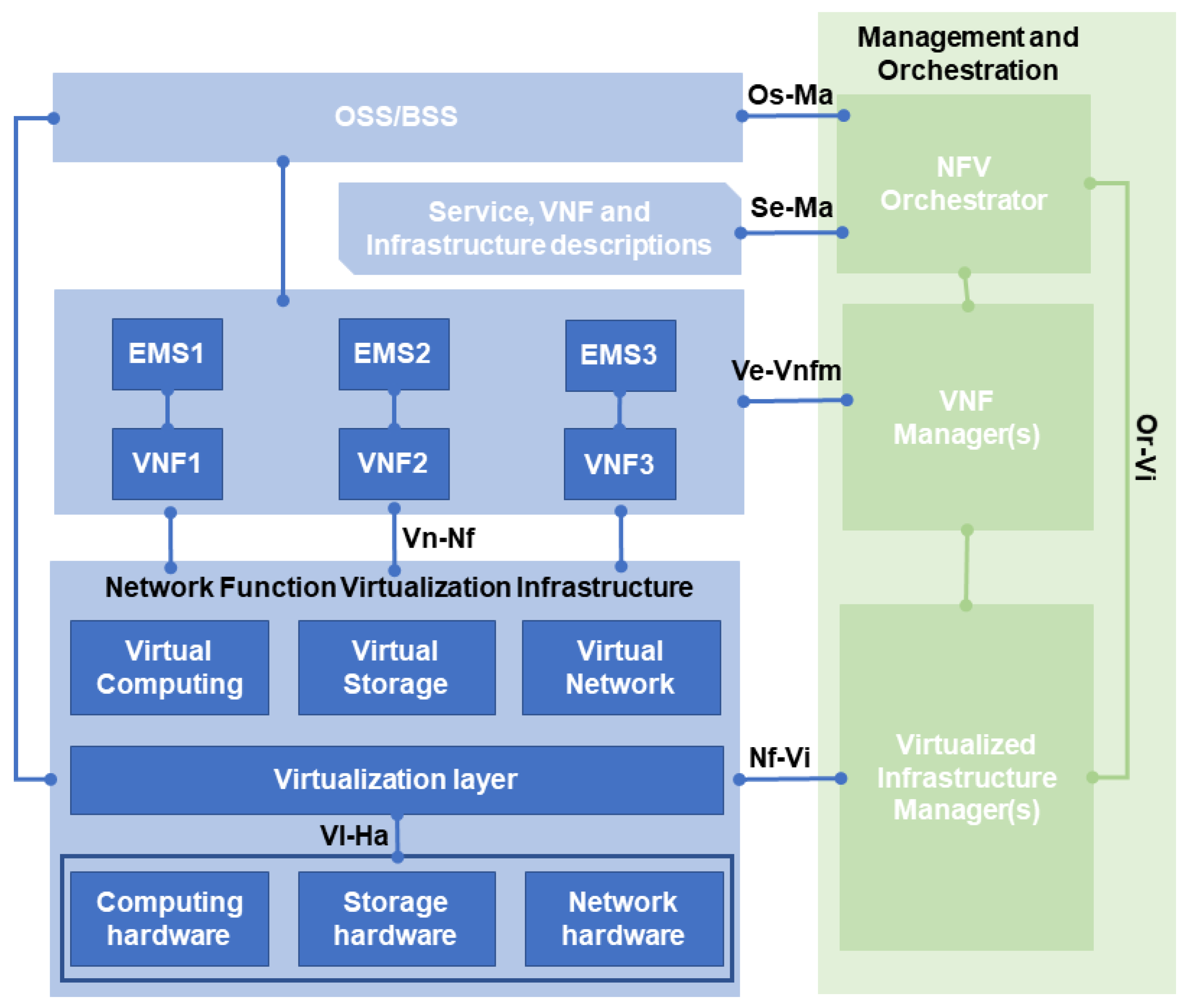

- The Virtualized Infrastructure Manager (VIM): Responsible for coordinating the functionalities that control and manage the interaction of a VNF with computing, storage and network resources, as well as their virtualization. Multiple VIM instances may be deployed, one per type of NFVI technology.

- The VNF Manager: Responsible for the Life-Cycle Management (LCM) of one or multiple VNFs. Several VNF Managers may be deployed.

- The NFV Orchestrator (NFVO): Responsible for the orchestration and management of the NS deployed on the NFVIs.
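The division of responsibilities among the three components listed above can be illustrated with a small model. This is only a sketch under stated assumptions: the class names, method names, state strings, and the `placement` mapping are hypothetical and do not correspond to the API of SONATA, OSM, Cloudify, or the ETSI specification. It shows one VIM per NFVI technology, a VNF Manager tracking the life-cycle state of several VNFs, and an NFVO that deploys a network service across the registered VIMs.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class VIM:
    """Manages the resources of one type of NFVI technology (hypothetical model)."""
    name: str
    nfvi_type: str  # illustrative values such as "openstack" or "kubernetes"
    allocated: List[str] = field(default_factory=list)

    def allocate(self, vnf_name: str) -> None:
        # Record that compute/storage/network resources were reserved for this VNF.
        self.allocated.append(vnf_name)


@dataclass
class VNFManager:
    """Tracks the life-cycle state of one or more VNFs (hypothetical model)."""
    name: str
    states: Dict[str, str] = field(default_factory=dict)

    def instantiate(self, vnf_name: str, vim: VIM) -> None:
        vim.allocate(vnf_name)
        self.states[vnf_name] = "INSTANTIATED"

    def terminate(self, vnf_name: str) -> None:
        self.states[vnf_name] = "TERMINATED"


@dataclass
class NFVO:
    """Orchestrates a network service (NS) across registered VIMs (hypothetical model)."""
    vims: Dict[str, VIM] = field(default_factory=dict)

    def register_vim(self, vim: VIM) -> None:
        # One VIM per NFVI technology type, keyed by that type.
        self.vims[vim.nfvi_type] = vim

    def deploy_ns(self, placement: Dict[str, str], vnfm: VNFManager) -> List[str]:
        """Deploy an NS described as a mapping of VNF name to NFVI type."""
        deployed = []
        for vnf_name, nfvi_type in placement.items():
            vnfm.instantiate(vnf_name, self.vims[nfvi_type])
            deployed.append(vnf_name)
        return deployed


if __name__ == "__main__":
    nfvo = NFVO()
    nfvo.register_vim(VIM("vim-a", "openstack"))
    nfvo.register_vim(VIM("vim-b", "kubernetes"))
    vnfm = VNFManager("vnfm-1")
    print(nfvo.deploy_ns({"firewall": "openstack", "cache": "kubernetes"}, vnfm))
    print(vnfm.states)
```

Keeping the VIM keyed by NFVI type mirrors the "one per type of NFVI technology" deployment described above; a real framework would add descriptors, error handling, and asynchronous operations.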

2.2. Motivation and State of the Art

3. Comparison of the MANO Components

3.1. External API

3.2. NS Life-Cycle Management

3.3. Infrastructure Abstraction

3.4. Monitoring Framework

3.5. Slice Manager

3.6. SLA Manager

3.7. Policy Manager

4. Performance Evaluation

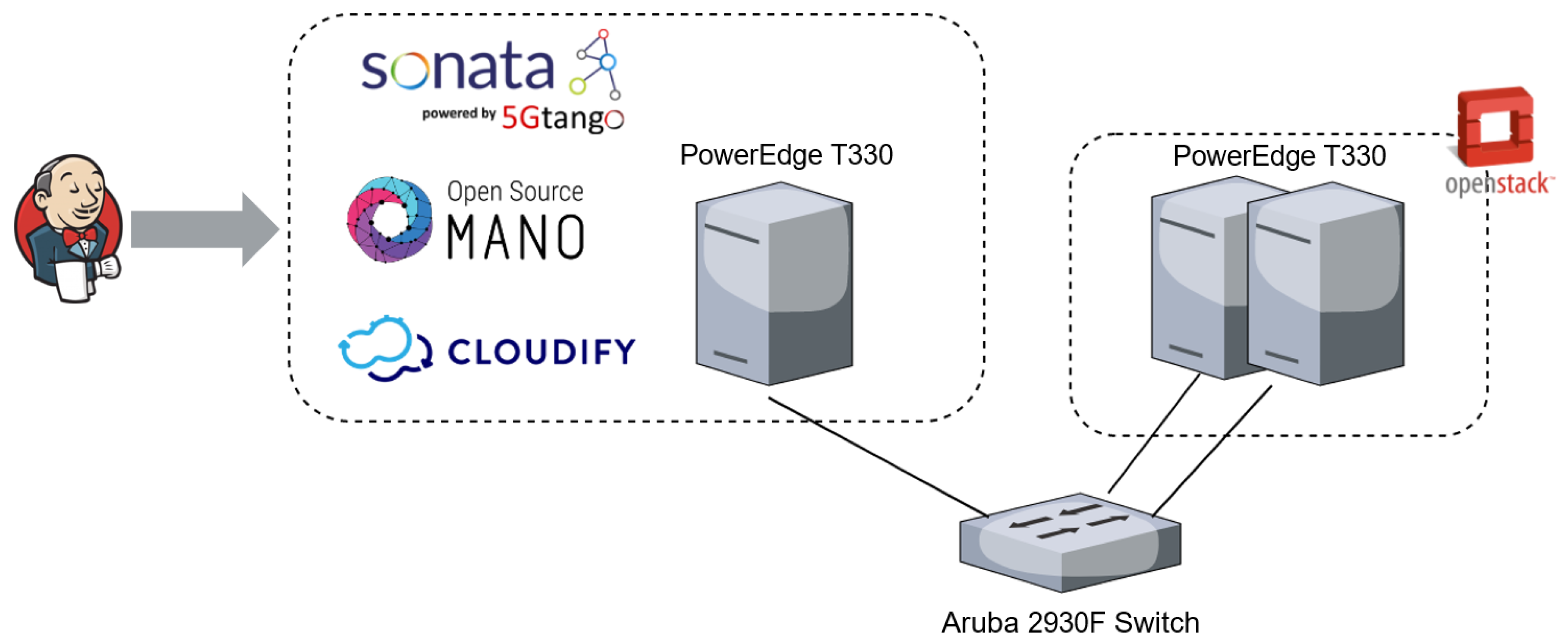

4.1. Testbed Setup

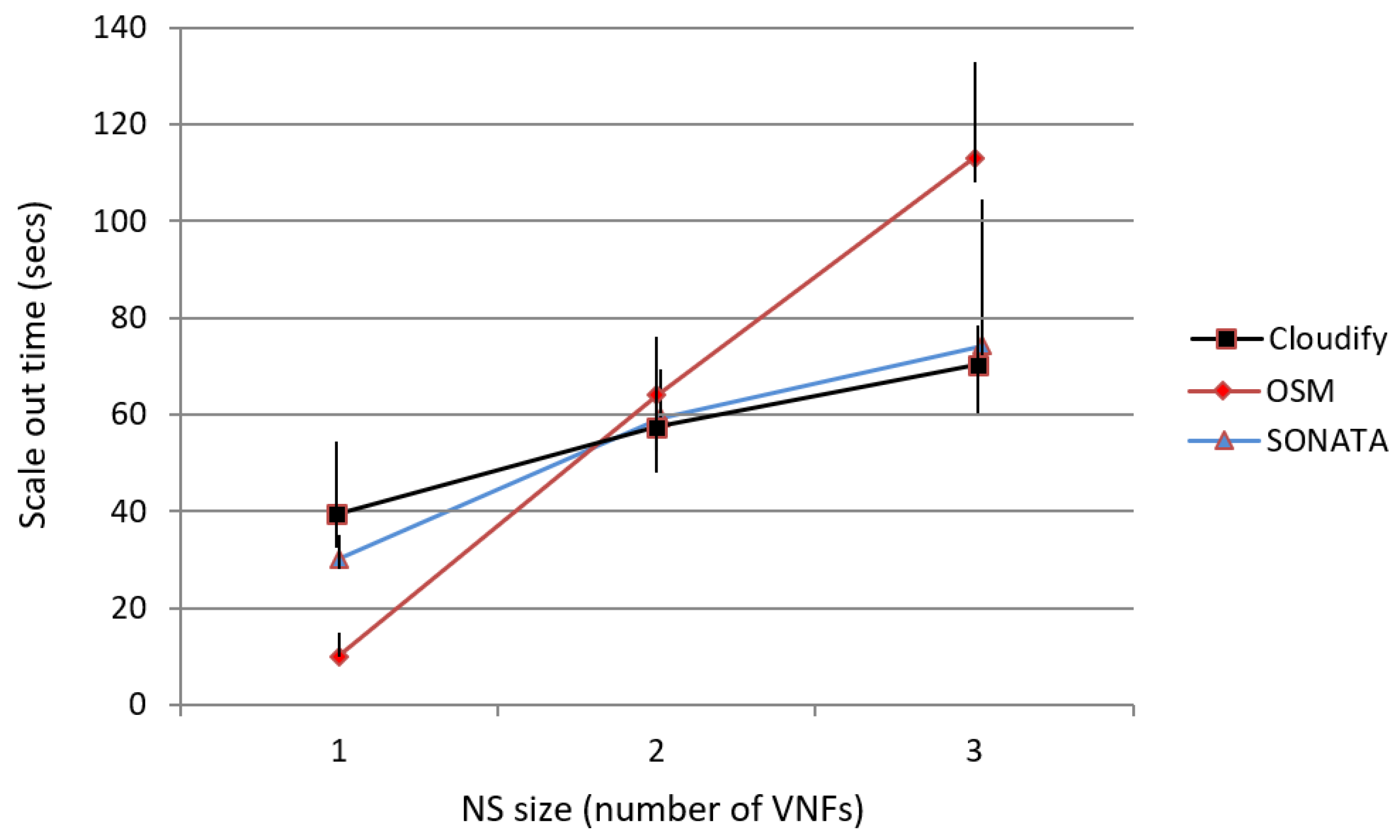

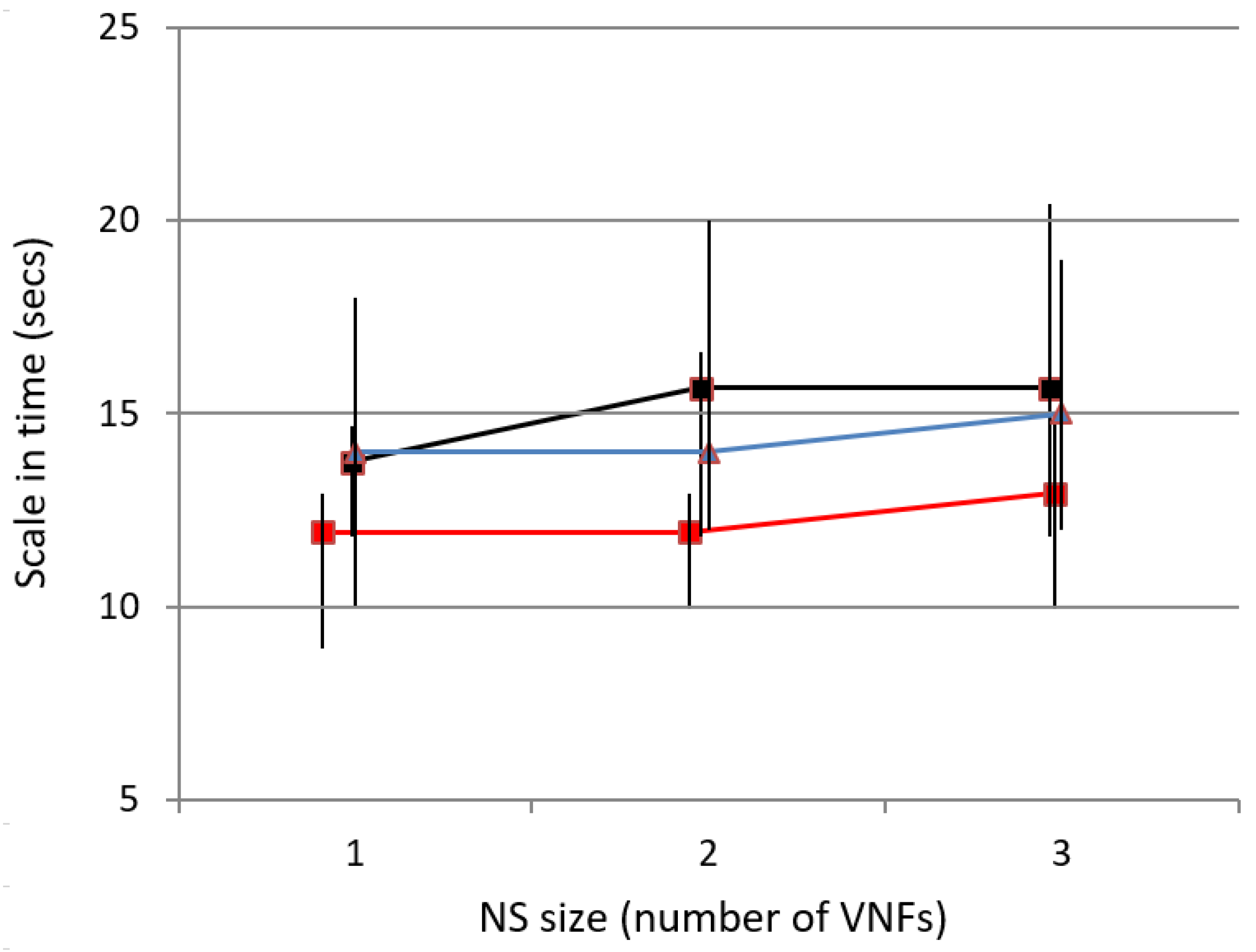

4.2. Comparison of Functional KPIs

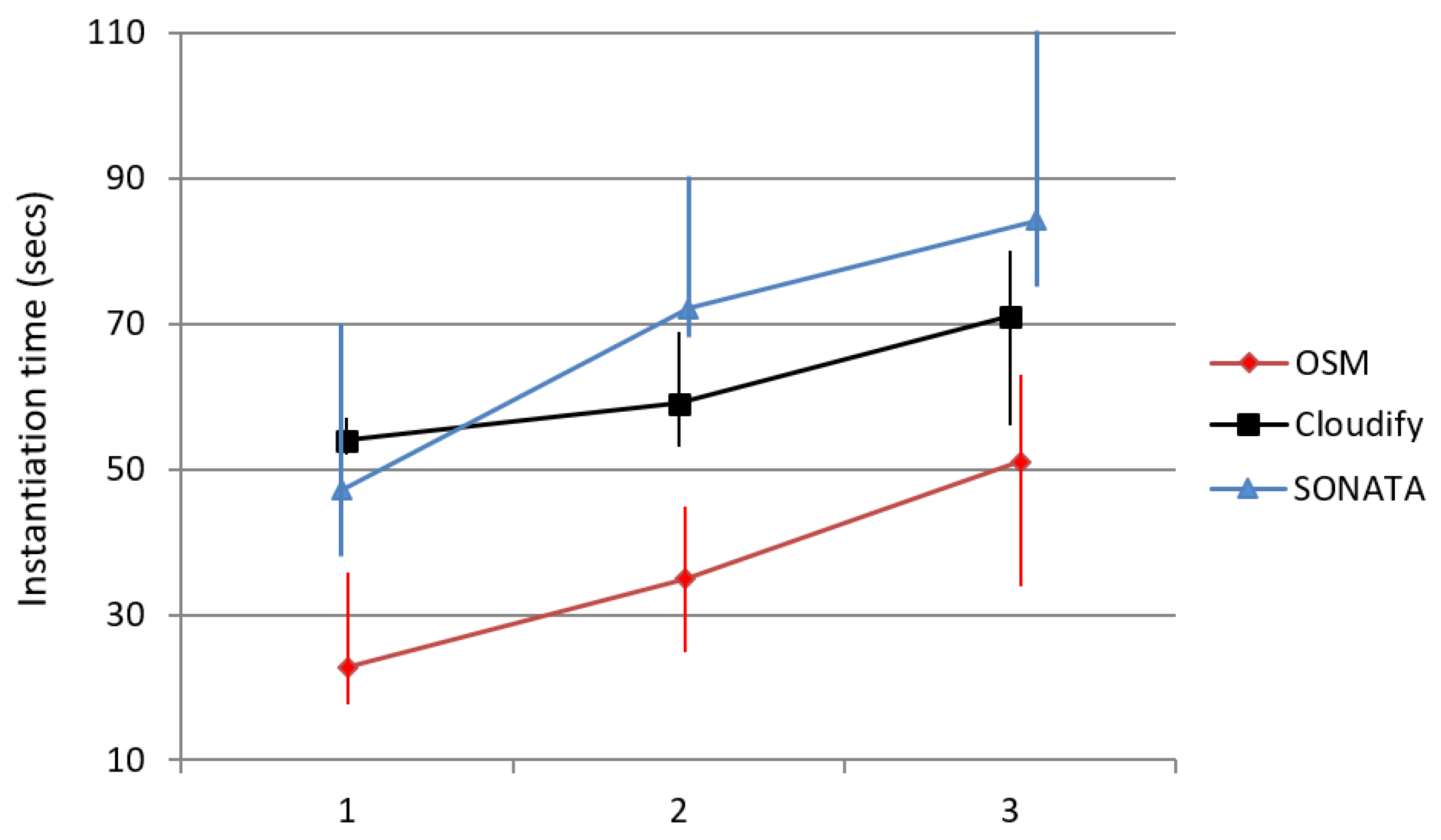

4.3. Testing Scenarios for Operational KPIs

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AWS | Amazon Web Services |
| BSS | Business Support System |
| CNCF | Cloud Native Computing Foundation |
| CNFs | Cloud-native Network Functions |
| CLI | Command Line Interpreter |
| DPD | Deployment Process Delay |
| EMS | Element Management System |
| EOE | Embedded Orchestration Engine |
| FG | Forwarding Graph |
| FSM | Function Specific Manager |
| GSMA | Global System for Mobile Communications Association |
| HA | High Availability |
| IoT | Internet of Things |
| KPI | Key Performance Indicator |
| LCM | Life-Cycle Management |
| LTE | Long-Term Evolution |
| NFV | Network Function Virtualization |
| NSD | Network Service Descriptor |
| NS | Network Service |
| NSI | Network Slice Instance |
| NST | Network Slice Template |
| NFVI | NFV Infrastructure |
| NFVO | NFV Orchestrator |
| NBI | Northbound Interface |
| OPD | On-boarding Process Delay |
| ONAP | Open Network Automation Platform |
| MANO | Management and Orchestration |
| OSS | Operations Support System |
| OSM | Open-Source MANO |
| QoD | Quality of Decision |
| QoE | Quality of Experience |
| QoS | Quality of Service |
| RO | Resource Orchestration |
| ROD | Run-time Orchestration Delay |
| SLA | Service-Level Agreement |
| SO | Service Orchestration |
| SSM | Service-Specific Manager |
| SLA-I | SLA Instance |
| SLA-T | SLA Template |
| SDN | Software Defined Networking |
| SP | Service Platform |
| T-API | Transport API |
| VDU | Virtual Deployment Unit |
| VM | Virtual Machine |
| VNF | Virtual Network Function |
| VTN | Virtual Tenant Network |
| VIM | Virtualized Infrastructure Manager |
| VNFFG | VNF Forwarding Graph |
| VNFM | VNF Manager |
| WIM | WAN Infrastructure Manager |

| Component | SONATA | OSM | Cloudify |
|---|---|---|---|
| External API | Asynchronous RESTful API/Users and Services authorization | | |
| NS Life-Cycle Management | VNF and NS level (SSM and FSM) | VNF level (Juju charms) | VNF level (EOE) |
| Infrastructure Abstraction | VMs/Containers/Emulator | VMs | VMs/Containers |
| Monitoring Framework | Run-time rules reconfiguration | | |
| | VM/container/service metrics | VM metrics | System resources/custom metrics |
| Slice Manager | Based on 3GPP specs | Based on 3GPP specs | N/A |
| SLA Manager | NS level SLAs | NS Level SLAs | |
| | Easy user interface | N/A | Definition in NSD |
| | licensing support | | |
| Policy Manager | Easy user interface | Self-Healing Actions | |
| | Multiple Policies per NS | Single Policy per NS | Single Policy per NS |
| | Security Actions | Inference Engine | |

| PowerEdge T330 | |
|---|---|
| CPU | Intel Xeon E3-1240 v6 3.7 GHz, 8 MB cache, 4 Cores/8 Threads, TDP 72 W |
| RAM | 16 GB (1 × 16 GB) 2400 MT/s DDR4 ECC UDIMM |
| HDD | 300 GB 10 K RPM SAS 12 Gbps 2.5 in Hot-plug Hard Drive, 3.5 in HYB CARR |
| NET | Intel Ethernet I350 QP 1 Gb Server Adapter |

| Operating Systems | |
|---|---|
| SONATA | Ubuntu 18.04.3 LTS |
| OSM | Ubuntu 18.04.3 LTS |
| Cloudify | CentOS 7 |
| OpenStack | Ubuntu 18.04.3 LTS |

| Functional Characteristic | SONATA | OSM | Cloudify |
|---|---|---|---|
| Resource footprint | Low (2 vCPU, 8 GB, 40 GB HDD) | | |
| Platform installation time | 30 min | 25 min | 25 min |
| Supported VIM types | OpenStack | OpenStack | OpenStack |
| OpenVIM | VMware | | |
| Kubernetes | OpenVIM | | |
| AWS | | | |
| CLI support | Yes | Yes | Yes |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Trakadas, P.; Karkazis, P.; Leligou, H.C.; Zahariadis, T.; Vicens, F.; Zurita, A.; Alemany, P.; Soenen, T.; Parada, C.; Bonnet, J.; Fotopoulou, E.; Zafeiropoulos, A.; Kapassa, E.; Touloupou, M.; Kyriazis, D. Comparison of Management and Orchestration Solutions for the 5G Era. J. Sens. Actuator Netw. 2020, 9, 4. https://doi.org/10.3390/jsan9010004