1. Introduction

Over the last few years, indoor navigation has become a subject of research interest because people spend a considerable amount of their time in indoor spaces such as houses, office buildings, commercial centers, and transportation facilities. According to the Environmental Protection Agency, people spend on average 90% of their time indoors [1]. Furthermore, in 2008, the share of people living in cities surpassed that of people living in rural areas, and this trend is expected to continue; the United Nations estimates that 70% of the world’s population will be living in cities and towns by 2050 [2], where buildings and their large variety of associated spaces such as underground passages, sky bridges, garages, and yards are becoming increasingly complex conglomerates of enclosed spaces [3].

Indoor navigation consists of finding the most suitable path connecting two positions within an indoor environment while avoiding collision with obstacles. Indoor navigation is related to the specific tasks of pathfinding and route-planning; while pathfinding deals with the detection of possible routes between two locations, route-planning is directed to optimization of the route given certain constraints [4].

One direct application of indoor pathfinding is navigational assistance for blind people or wheelchair users, where algorithms generate efficient paths according to their mobility restrictions [5]. Local authorities are increasingly being required to make accessibility diagnoses and to take corrective actions in public spaces to enable navigation for disabled people [6]. Building crisis management, such as fire protection or planned terrorist attacks, is another application where planned paths can provide safe and efficient evacuation routes under different emergency conditions [7]. Other applications are related to augmented reality, which is very important for gaming, tourism, or the training of emergency assistance units such as fire brigades or military and police corps. Moreover, the guidance of robots and drones inside buildings constitutes an application of interest for the future, which is also of special interest in emergency situations.

Navigational information is extracted from geometric models representing as-built environments. Traditionally, two-dimensional (2D) drawings or building layouts were used as input. However, they usually contain only 2D geometric information, while three-dimensional (3D) and semantic data are missing. Successful pathfinding for a 3D indoor environment depends on the accurate and updated geometry, semantics, and topology of building components and spaces [8]. Openings in buildings such as doors and windows, transition spaces such as staircases or corridors, and structural elements such as beams and columns are relevant for indoor planning, especially in crisis management applications. The position and size of indoor obstacles are also of great interest because they usually disturb the navigation process.

Building information models (BIMs) stand as a valuable source for facilitating navigation [9]. However, they are usually not up to date, thereby not representing the current state of buildings and their content.

Laser scanners are well established in the robotics and remote-sensing communities for collecting and analyzing three-dimensional data of the as-built status of large-scale infrastructures. Acquisition is fast and point clouds depict reality with high quality. The raw data from the acquisition process need to be processed in order to extract the information that is useful for the intended purpose. Although intense efforts were made in the last few years to extract geometric, semantic, and topological features from point clouds [10,11,12], as-built modeling is still an active research topic.

Efforts are mainly aimed at reconstructing building components, spaces, and openings, while less attention is paid to obstacle detection. Indoor environments are often busy and cluttered with objects such as pieces of furniture that can act as obstacles in indoor pathfinding, affecting the safety of pedestrians. Although obstacle detection is fundamental for obstacle-aware indoor pathfinding, state-of-the-art research on indoor navigation does not usually deal with the obstacle issue, and routing algorithms mostly consider empty spaces [13].

The focus of this work is to develop an obstacle-aware indoor path-planning methodology based on 3D point clouds. Most indoor models in the literature ignore real architectural characteristics such as the number of doors, openings, and windows, as well as obstacles [14]. The contribution of this work is related to the perception and understanding of the role of as-built models of indoor environments in enabling pathfinding according to the spatial restrictions of pedestrians. Point clouds are first used to reconstruct semantically rich 3D indoor models, which constitute the basis for network generation. Next, point clouds are directly used to update the network. The navigable space is extracted from the surfaces representing floors and doors, and initial paths are updated when obstacles are detected in the point cloud and are considered to interrupt the navigation process.

This paper is organized as follows: Section 2 reviews related works and establishes their differences with respect to our work. Section 3 describes the proposed methodology. Section 4 reports the results obtained from applying the methodology to different case studies. Finally, Section 5 is devoted to concluding this work.

2. Related Work

Several reviews were presented in the last few years with regard to as-built modeling from point clouds [11,12,15]. Most of the works addressed, with great success, the reconstruction of structural building components [16,17,18] and openings [19,20,21,22], while less attention was paid to the accurate modeling of floor elements, to the modeling of the free space, and to the modeling of obstacles, although they are essential for indoor pathfinding.

The detailed modeling of floor elements was recently addressed by several works. Point clouds were combined with mobile laser scanner trajectories to segment and classify floors into stairs, ramps, and flat surfaces in indoor environments [23,24]. The procedure is based on the angle formed by the trajectory with regard to a horizontal plane, followed by a projection onto the point cloud discretized into a voxel-based model and a region growing. The trajectory was also used outdoors to detect road regions and thereby classify ground elements into curbs, sidewalks, ramps, and stairs from geometrical and topological features [25,26].

Trajectory is a valuable source of information also in terms of modeling the free space. It can be assumed that the trajectory depicts the path followed by the system during the acquisition process. This fact is exploited in indoor modeling for detecting open doors, which are the transition elements between two adjacent spaces [26,27]. In Reference [26], after door detection, the timestamp correspondence between trajectory and point cloud was used to subdivide the raw point cloud into connected rooms by implementing an energy-minimization function. Subdivided spaces were next submitted to a surface-based modeling approach. Trajectory was also used by Reference [27] to label doors, floors, walls, and ceilings in indoor spaces. This work was extended in Reference [28], in which the indoor space was subdivided by implementing morphological operations and connected components.

In terms of indoor navigation, a methodology extracting topological relationships between the spaces of a 3D indoor environment modeled from point clouds was recently presented by References [18,29]. Although these works solved, with great success, the modeling of indoor structures and the extraction of topological relationships between them, obstacles were not taken into account. Another very interesting work is the one presented by Reference [30], in which indoor geographic information system (GIS) maps were used to create a topological navigation graph to perform path-planning for wheelchair users. In that paper, obstacles were not considered either.

Most previous works on indoor navigation considering obstacles were 2D approaches. A skeleton-abstraction algorithm which generates a graph of intervisible locations was proposed by Reference [31]. Each node in the graph represents a point location, and each edge represents a visible connection between them. The proposal considered the presence of obstacles but as a set of 2D points, for example, from computer-aided design (CAD) files or 2D floor plans. Also considering the 2D representation of indoor environments, a formal definition of an indoor routing graph was presented by Reference [32], in which the presence of obstacles inside rooms was manually represented in the indoor network by adding nodes around the obstacles. A data model to support pathfinding for vehicles among moving obstacles in forest fires was implemented in Reference [33]. Static obstacles such as trees and buildings, and dynamic simulated obstacles such as the spread of fire were considered in the pathfinding. The GIS-based simulation was tested in a case study, in which the geometric model, composed of the terrain, road network, trees, and buildings, was obtained from OpenStreetMap, and the fire spread was the obstacle represented by moving polygons crossing the network. Mortari et al. [14] presented a network-generation strategy taking into account obstacles in indoor scenes. The approach was based on predefined models, where obstacles were represented as 2D geometry in the floor plane. The result was a 3D network because 2D floor plans were abstracted at different height levels. Xiong et al. [8] introduced a method that supports 3D indoor path-planning from semantic 3D models represented in LoD4 CityGML. Although the method considered obstacles, the experiments for testing it were carried out with models that contained no obstacles. Lui et al. [34] developed a methodology for real indoor navigation based on grid models, which were obtained from 2D floor plans where obstacles were predefined. Rodenberg [35] proposed a methodology for indoor pathfinding based on an octree representation of indoor point clouds. The A* pathfinding algorithm, which uses heuristics to guide the search, was conducted through empty nodes and, consequently, obstacles were avoided. Li et al. [36] also recently presented a path-planning method for drones indoors based on occupancy voxel maps, in which the navigable space was composed of the empty voxels.

In this paper, the methodology is based on the use of point clouds, which have the capability to depict the real state of the indoor scene. Point clouds are not only the basis for reconstructing permanent building elements such as the envelope or openings; obstacles are also detected directly from them, enabling obstacle-aware indoor pathfinding. Preliminary experiments on indoor pathfinding from point clouds considering obstacles are presented in Reference [37].

3. Methodology

The methodology starts with the reconstruction of a simple surface-based indoor model including openings. Point cloud regions that do not belong to permanent building elements, and consequently belong to elements such as furniture, are used for obstacle detection. Indoor paths are initially generated from the 3D building model, and the original point cloud is used to check whether obstacles intersect with the indoor path. If obstacles exist, the indoor path is readapted until no obstacles are detected, enabling accurate pathfinding. The methodology is organized in terms of building reconstruction (Section 3.1), obstacle detection (Section 3.2), and indoor path-planning (Section 3.3).

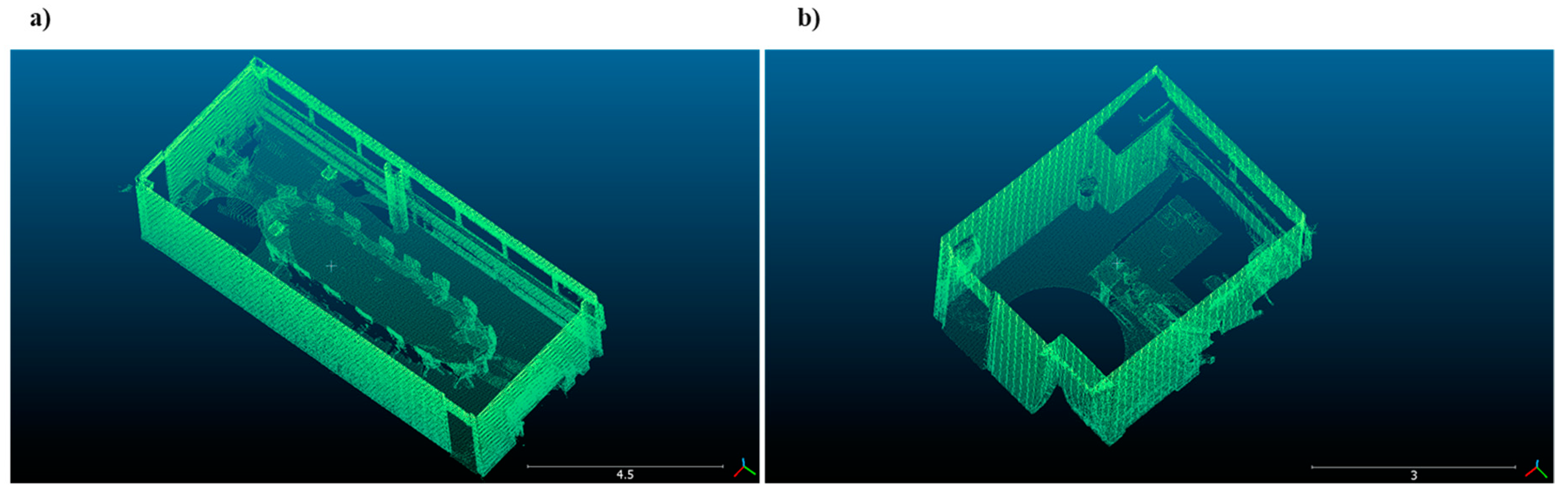

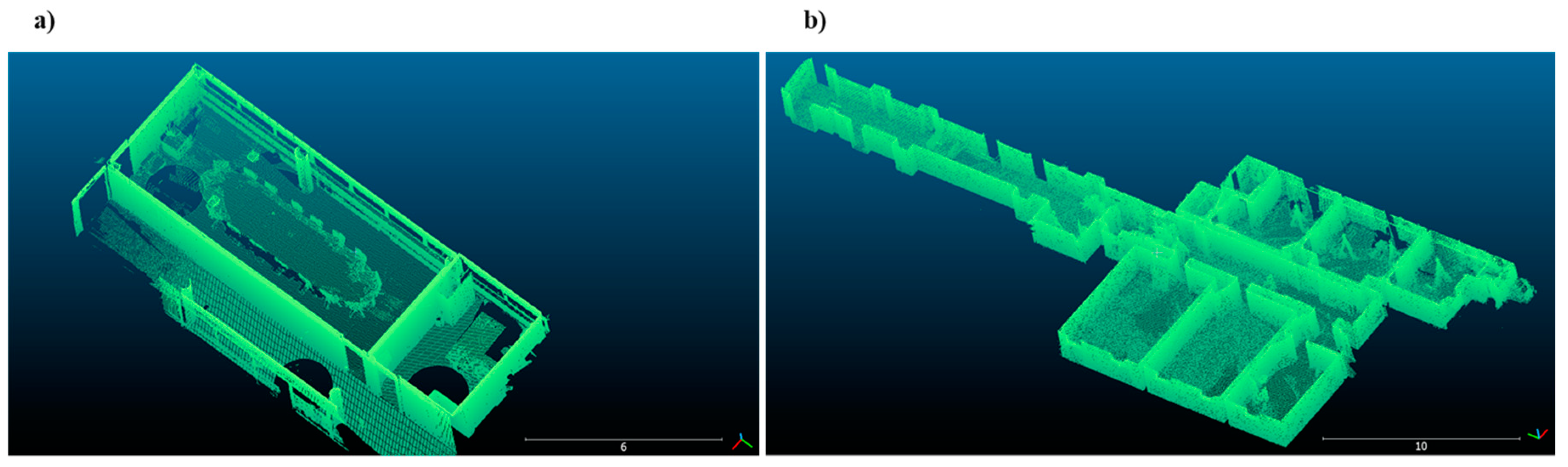

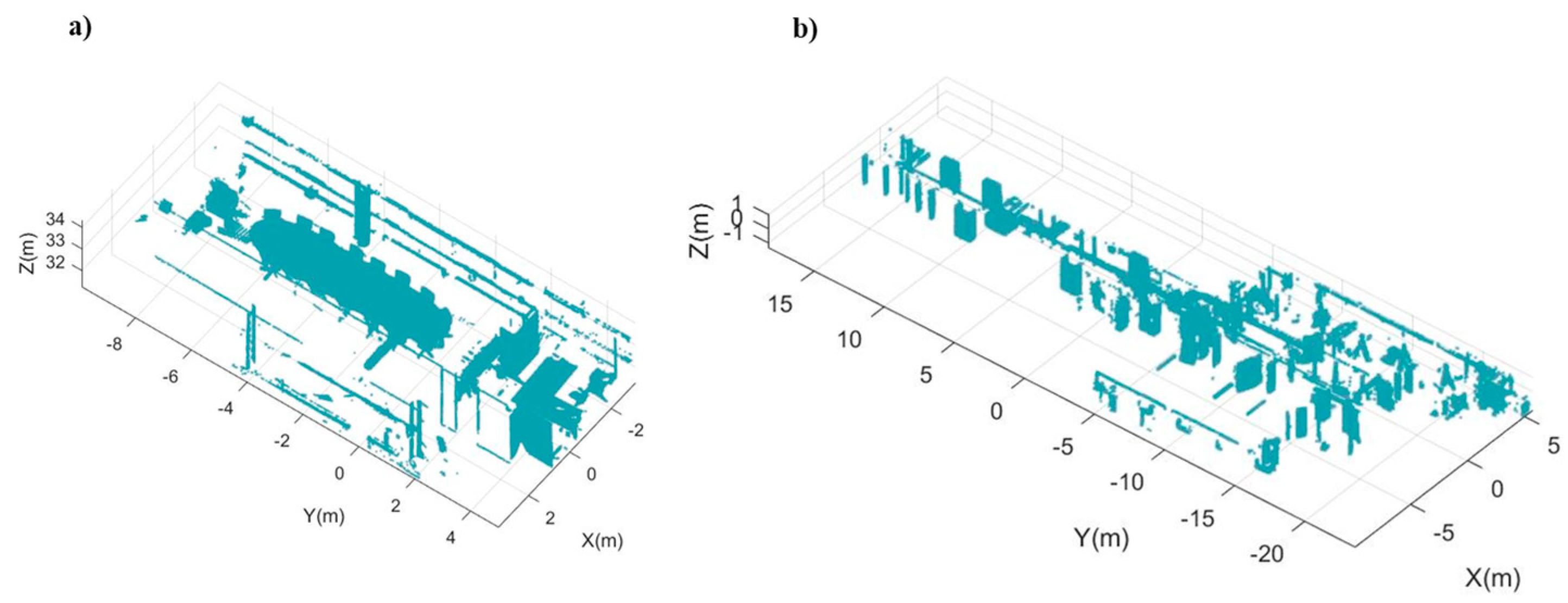

3.1. Building Reconstruction

This section includes the steps implemented to generate simple 3D models, enriched with semantics and topology, which are the basis of the indoor pathfinding algorithm. Building envelope elements, including floors, ceilings, and walls, are modeled using a data-driven approach (Section 3.1.1), while openings, i.e., windows and doors, are extracted with a model-driven approach based on the generalized Hough transform (Section 3.1.2). The geometry of these building elements is represented according to a gbXML schema (Section 3.1.3), because it can be directly used for extracting the initial navigable network.

3.1.1. Envelope Reconstruction

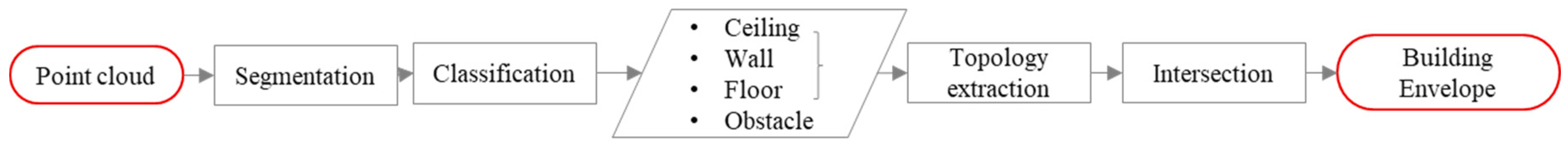

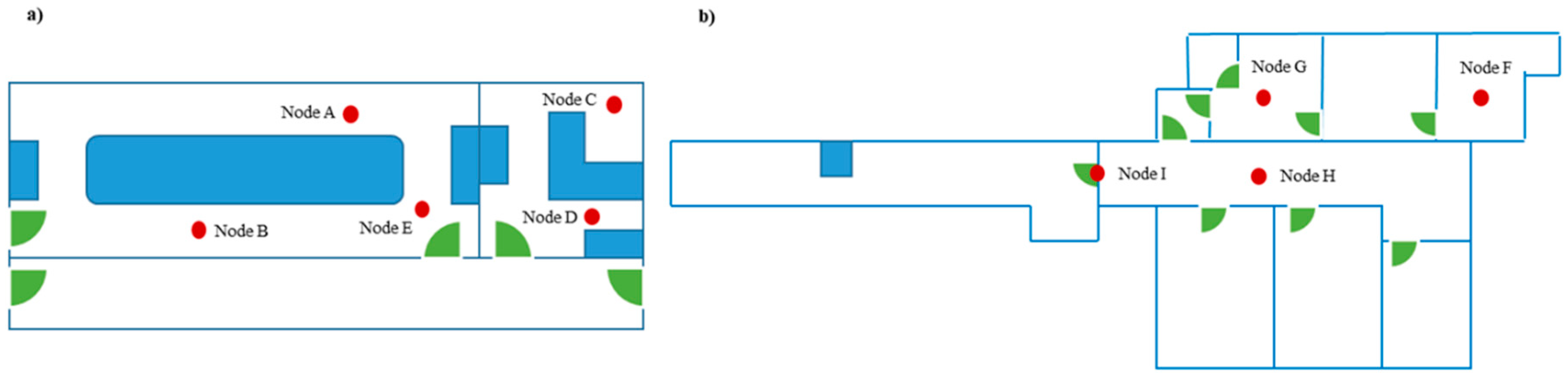

The first step of the methodology aims to parameterize the building envelope including ceilings, floors, and walls, as well as to isolate points belonging to indoor elements such as furniture, columns, plants, and other objects that can behave as obstacles to navigation (Figure 1).

The point cloud is segmented into planar regions by implementing a region-growing algorithm. The algorithm includes in the region all those points satisfying two geometric conditions: planarity and surface smoothness. In this process, thresholds are coarse enough to ensure that window and door elements are included in the region of the wall where they are contained.

Next, regions are labeled by a simple geometric and topological hierarchical classification into four classes: ceilings, floors, walls, and obstacles. Floors and ceilings are the lowest and the highest horizontal regions, respectively. Walls are those vertical regions adjacent and perpendicular to floors and ceilings. Obstacles are the remaining regions, that is, all regions not satisfying the conditions to be floors, ceilings, or walls. The first three are intersected with one another in order to obtain the boundary points defining envelope elements. The last class consists of the remaining regions of an indoor scene such as those belonging to furniture. Although columns are structural elements, they are also considered as obstacles for navigation purposes if they are inside the space enclosed by envelope elements. Points belonging to this class are considered as obstacle candidates and used in the following steps for obstacle detection and routing correction (Section 3.2 and Section 3.3).
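As an illustration of the hierarchical labeling, the following minimal Python sketch classifies planar regions from their normal and height range. It is not the implementation used in this work: the `Region` summary, the function name `classify_regions`, and the tolerances `angle_tol` and `gap_tol` are hypothetical, and the adjacency test for walls is approximated by requiring a vertical region to span from floor to ceiling. Under that simplification, a full-height column would be labeled as a wall, so an additional test on its position inside the envelope would be needed.

```python
from dataclasses import dataclass


@dataclass
class Region:
    normal: tuple  # unit normal (nx, ny, nz) of the planar region
    z_min: float   # lowest point height of the region
    z_max: float   # highest point height of the region


def classify_regions(regions, angle_tol=0.1, gap_tol=0.2):
    """Label each region as 'floor', 'ceiling', 'wall', or 'obstacle'.

    angle_tol and gap_tol are illustrative values, not taken from the paper.
    """
    labels = ["obstacle"] * len(regions)
    # Horizontal regions have a normal that is close to the Z-axis.
    horizontal = [i for i, r in enumerate(regions)
                  if abs(r.normal[2]) > 1.0 - angle_tol]
    if not horizontal:
        return labels
    floor = min(horizontal, key=lambda i: regions[i].z_min)
    ceiling = max(horizontal, key=lambda i: regions[i].z_max)
    labels[floor] = "floor"
    if ceiling != floor:
        labels[ceiling] = "ceiling"
    z_floor, z_ceil = regions[floor].z_min, regions[ceiling].z_max
    for i, r in enumerate(regions):
        if labels[i] != "obstacle":
            continue
        vertical = abs(r.normal[2]) < angle_tol
        touches_floor = r.z_min - z_floor <= gap_tol
        touches_ceiling = z_ceil - r.z_max <= gap_tol
        # Simplified adjacency: a wall is vertical and spans floor to ceiling.
        if vertical and touches_floor and touches_ceiling:
            labels[i] = "wall"
    return labels
```

Every region left with the label `obstacle` would then feed the obstacle-candidate set used in the later steps.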

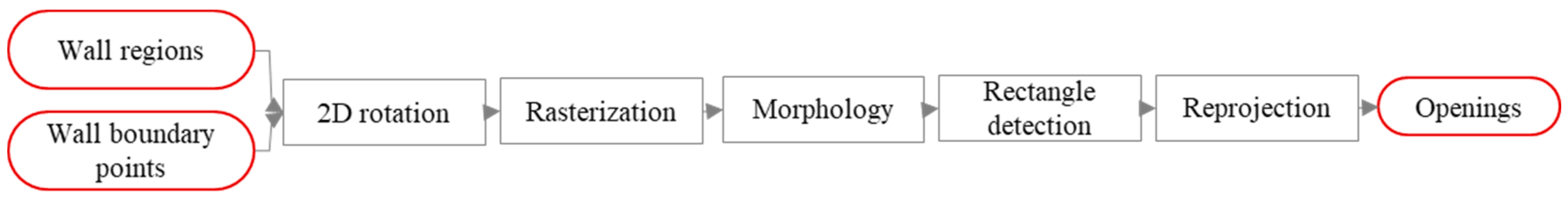

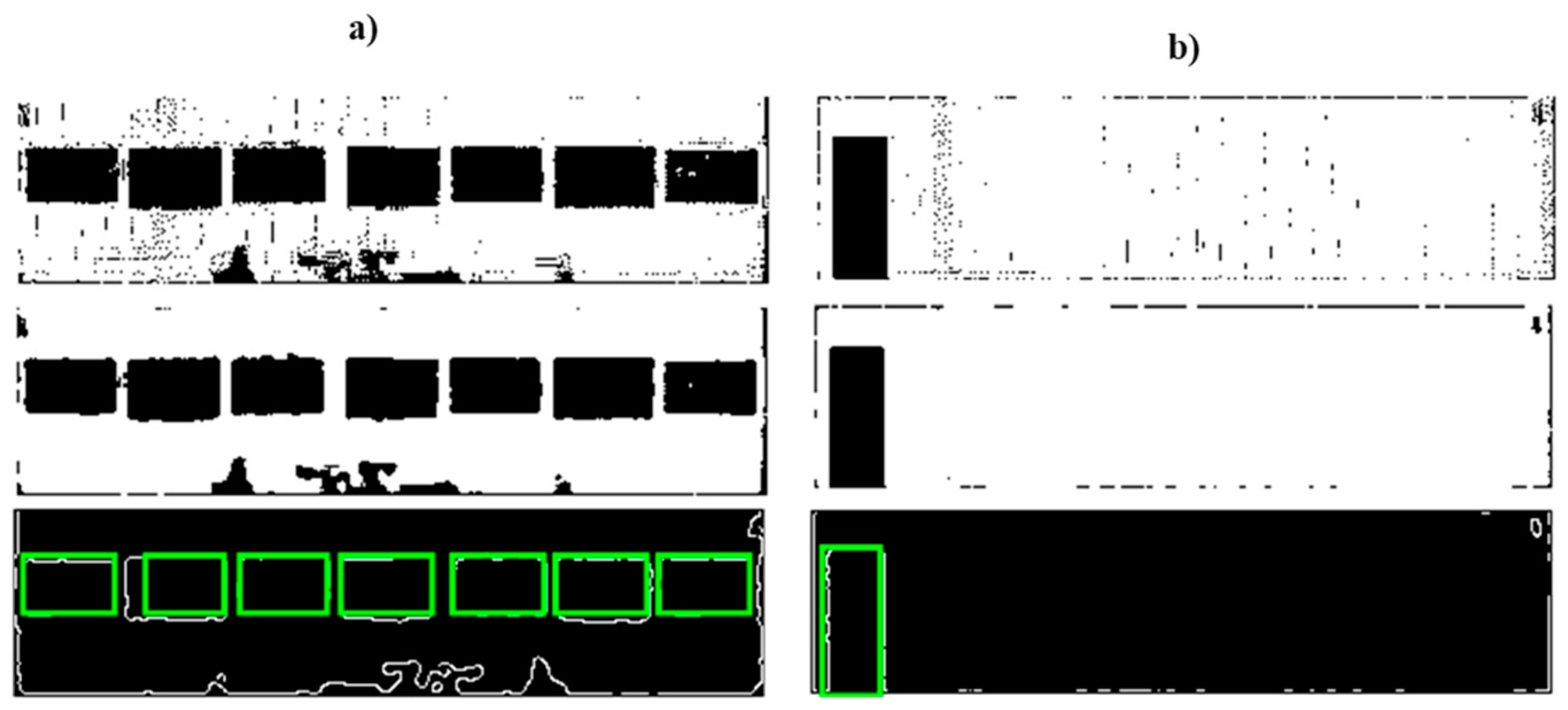

3.1.2. Detection of Openings

As in Reference [20], windows and doors are modeled by finding parametrized rectangular shapes in images based on the generalized Hough transform (GHT) (Figure 2). In comparison with Reference [37], in which closed doors were extracted from color images, in this work, no color information is surveyed, and the reconstruction of openings is based on the detection of holes in wall regions.

To ensure a successful rasterization, wall regions are rotated around the Z-axis so that they become parallel to the XZ or YZ plane, as appropriate [38].

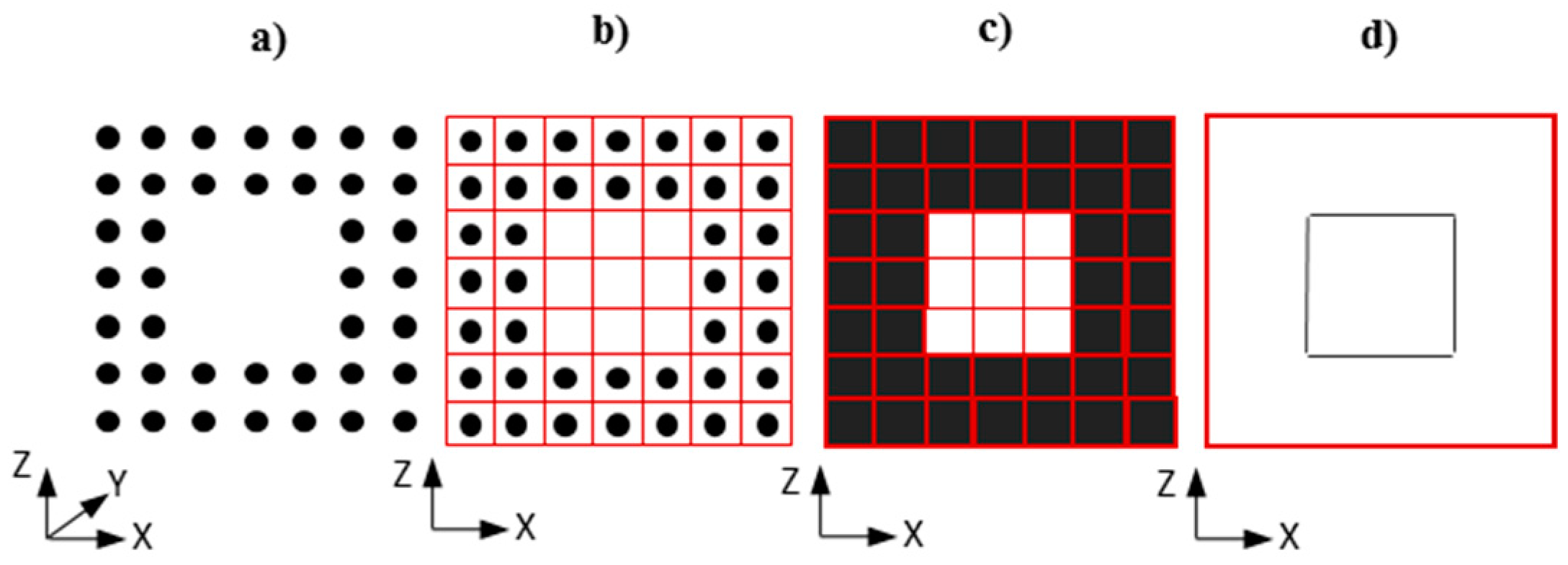

For each wall, the associated planar region (Figure 3a) is converted to a raster by projecting the points on the wall plane defined by its boundary points (Figure 3b). For this purpose, a rectangular matrix is created, and pixels are assigned a value of either one or zero depending on whether or not any points fall inside the pixel (Figure 3c). The binarized raster is submitted to a median filter to reduce salt-and-pepper noise. Finally, edge detection is performed using the Canny method, which finds edges by looking for local maxima of the gradient of the image (Figure 3d).
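The rasterization and filtering steps can be sketched as follows. This is a minimal illustration under stated assumptions rather than the implementation used in this work: wall points are assumed to be already expressed in 2D wall-plane coordinates (u, v) after the rotation, the function names `rasterize` and `median_filter3` and the 3 × 3 window are hypothetical choices, and the Canny edge detection that follows is not reproduced here.

```python
import math


def rasterize(points_uv, pixel, width, height):
    """Binary occupancy raster: 1 if any point falls inside the pixel, else 0."""
    cols = int(math.ceil(width / pixel))
    rows = int(math.ceil(height / pixel))
    grid = [[0] * cols for _ in range(rows)]
    for u, v in points_uv:
        c, r = int(u // pixel), int(v // pixel)
        if 0 <= r < rows and 0 <= c < cols:
            grid[r][c] = 1
    return grid


def median_filter3(grid):
    """3x3 median filter on a binary raster.

    For binary values the median equals the majority vote of the window;
    ties in the truncated windows at the border resolve to 0.
    """
    rows, cols = len(grid), len(grid[0])
    out = [row[:] for row in grid]
    for r in range(rows):
        for c in range(cols):
            window = [grid[rr][cc]
                      for rr in range(max(0, r - 1), min(rows, r + 2))
                      for cc in range(max(0, c - 1), min(cols, c + 2))]
            out[r][c] = 1 if 2 * sum(window) > len(window) else 0
    return out
```

In this sketch, an isolated empty pixel surrounded by occupied pixels is filled by the filter, whereas a hole several pixels wide, such as a door or window candidate, is preserved for the subsequent edge detection.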

Edge images are the input of the GHT. Rectangles are found, and detection is constrained by a minimum and maximum width and length. As openings are assumed to be holes in the wall region, they can be confused with holes caused by the presence of other objects with similar size and shape such as cupboards or bookshelves. In this case, candidates could be pruned by analyzing the original 3D point cloud through point-to-plane histograms as in Reference [39], in which the number and position of the peaks with regard to the wall plane made it possible to classify candidates into closed doors, open doors, or furniture. Alternatively, door candidates can be verified as doors if they are detected from two adjacent indoor spaces. If the trajectory followed by the system during acquisition is available, it can also be used for door verification.

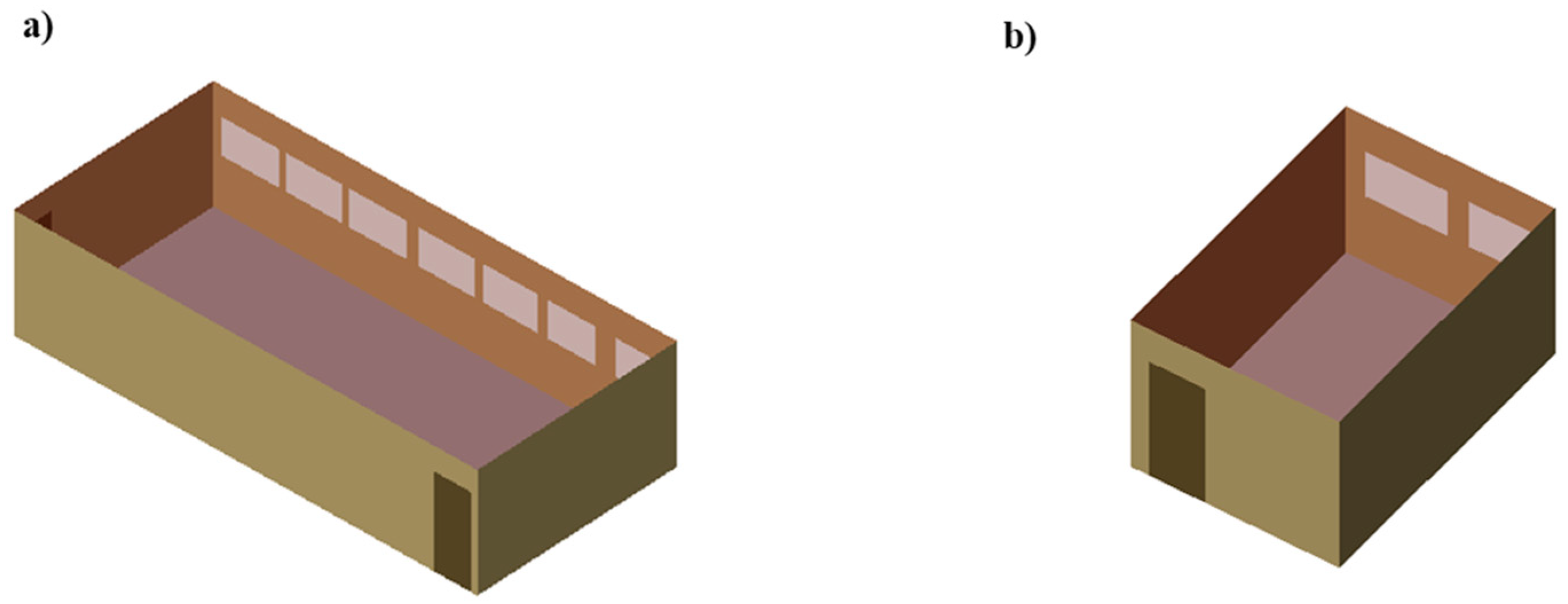

3.1.3. Model Generation

Features extracted from the previous steps are organized into an explicit format. Although other schemas such as CityGML, IndoorGML, and IFC are more complete, we consider gbXML a suitable model since detailed geometry is simplified while the essential relationships between rooms and indoor passages are preserved. The gbXML model represents buildings with their geometry, semantics, topology, and appearance. Even though the schema was developed to support energy analysis, semantic contents such as construction materials make it appropriate for other applications such as emergency routing in a fire crisis. Spaces are represented as enclosed units defined by building envelope components, and their geometry is simplified, since building components are represented as surfaces delimited by their boundary points, which are defined by Cartesian coordinates (x, y, z). Consequently, building elements such as walls have no thickness. Openings are included in the walls where they are contained, and they are also represented by their boundary points. Several works already support the use of gbXML for route-planning in emergency response applications [7,40].

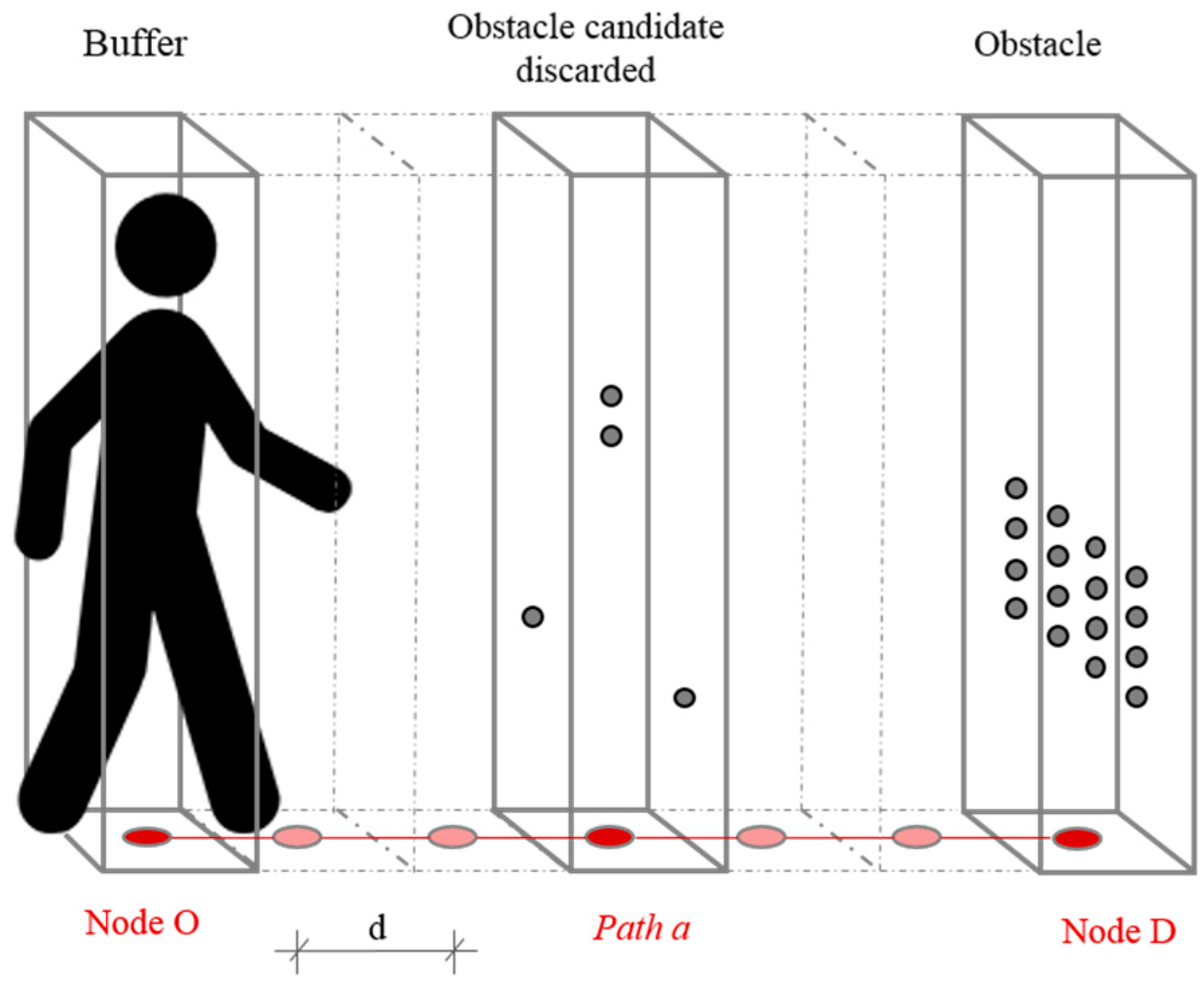

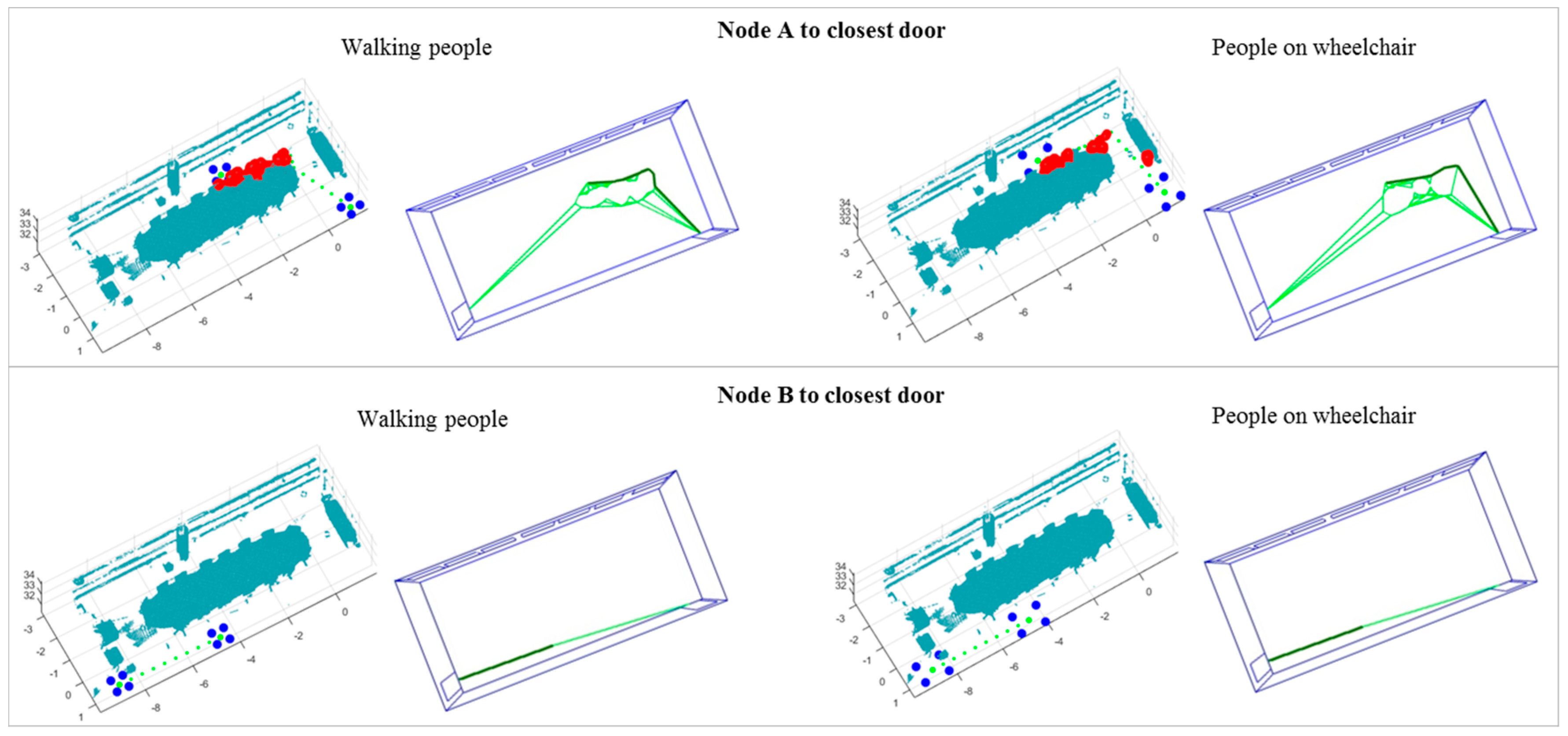

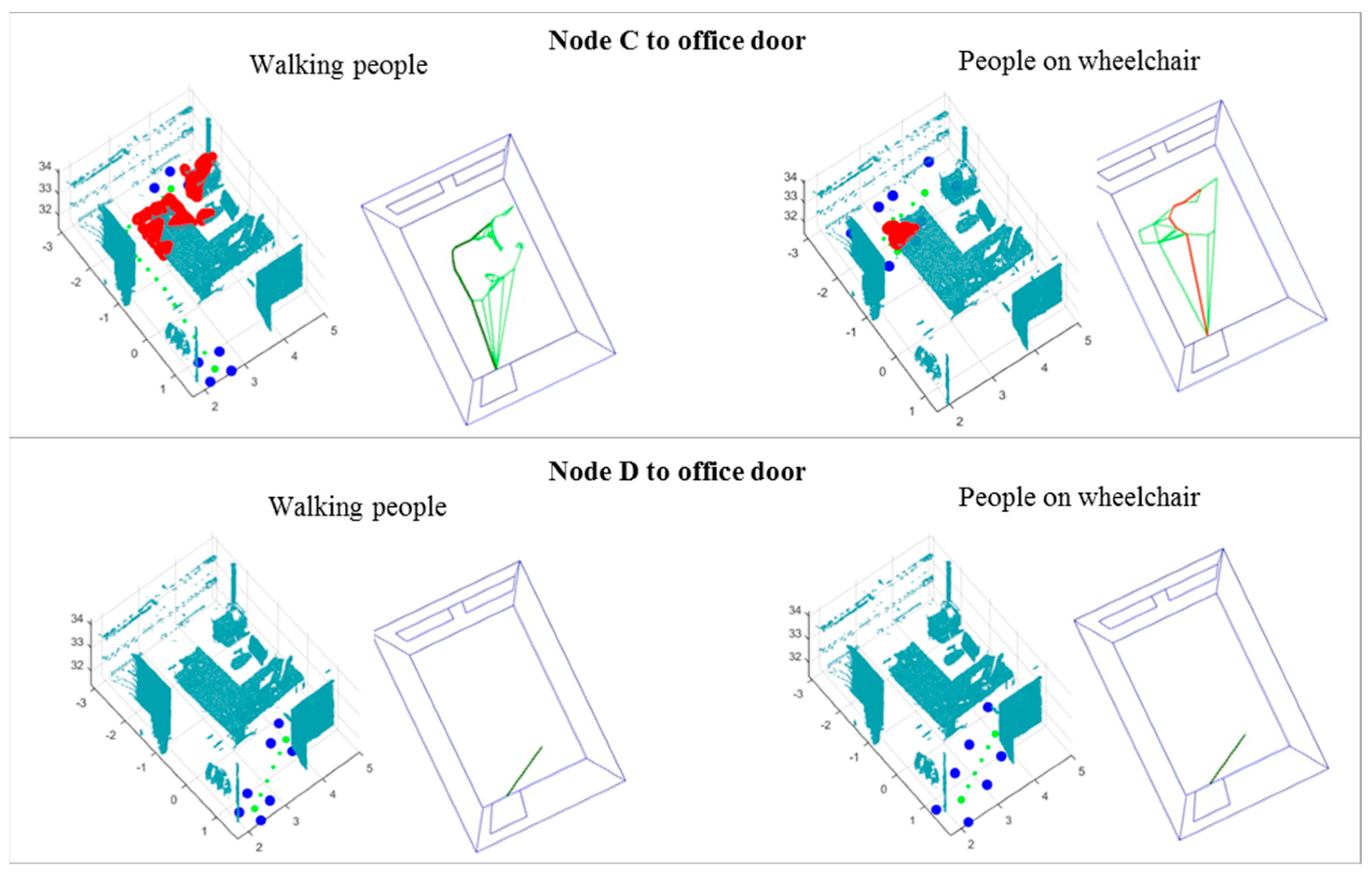

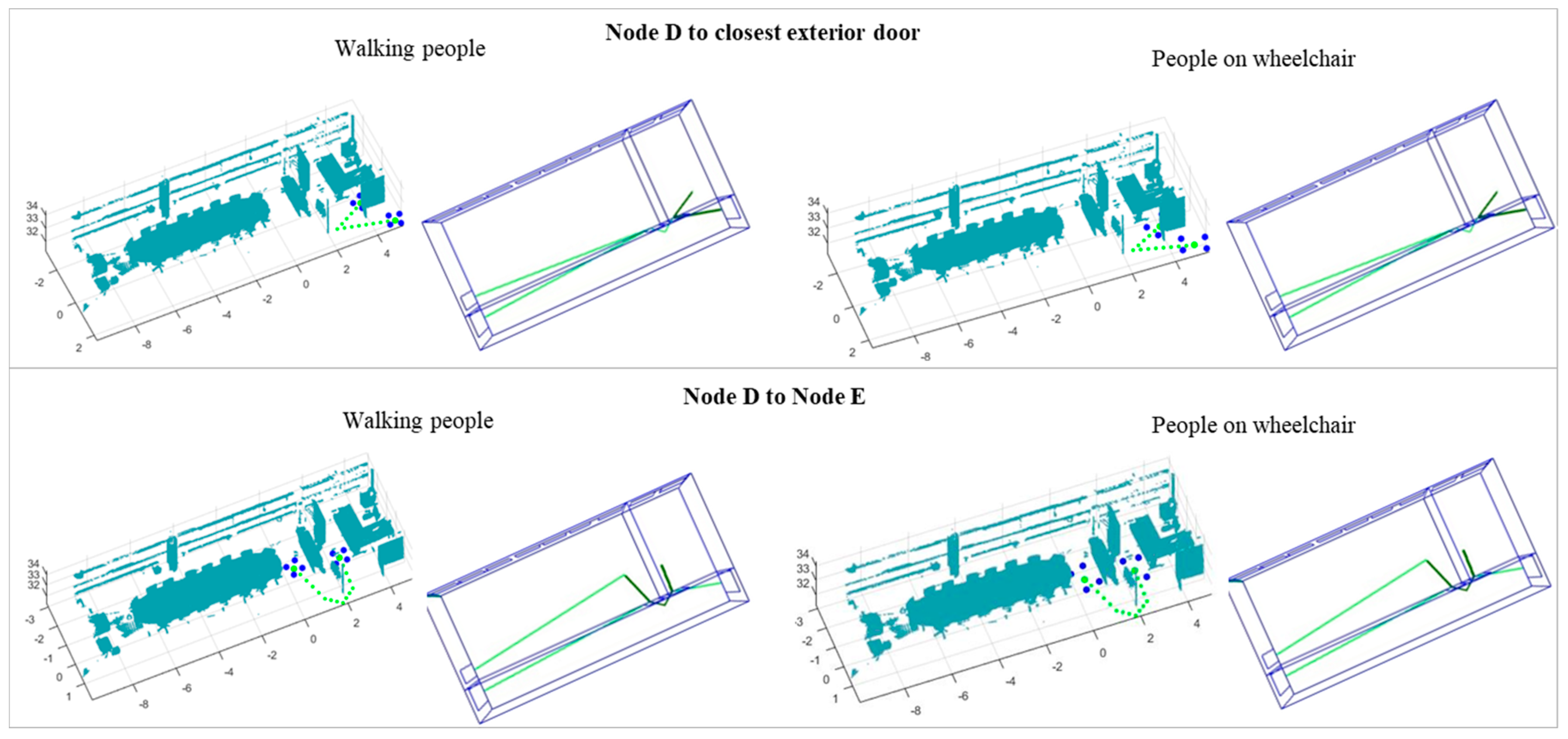

3.2. Obstacle Detection

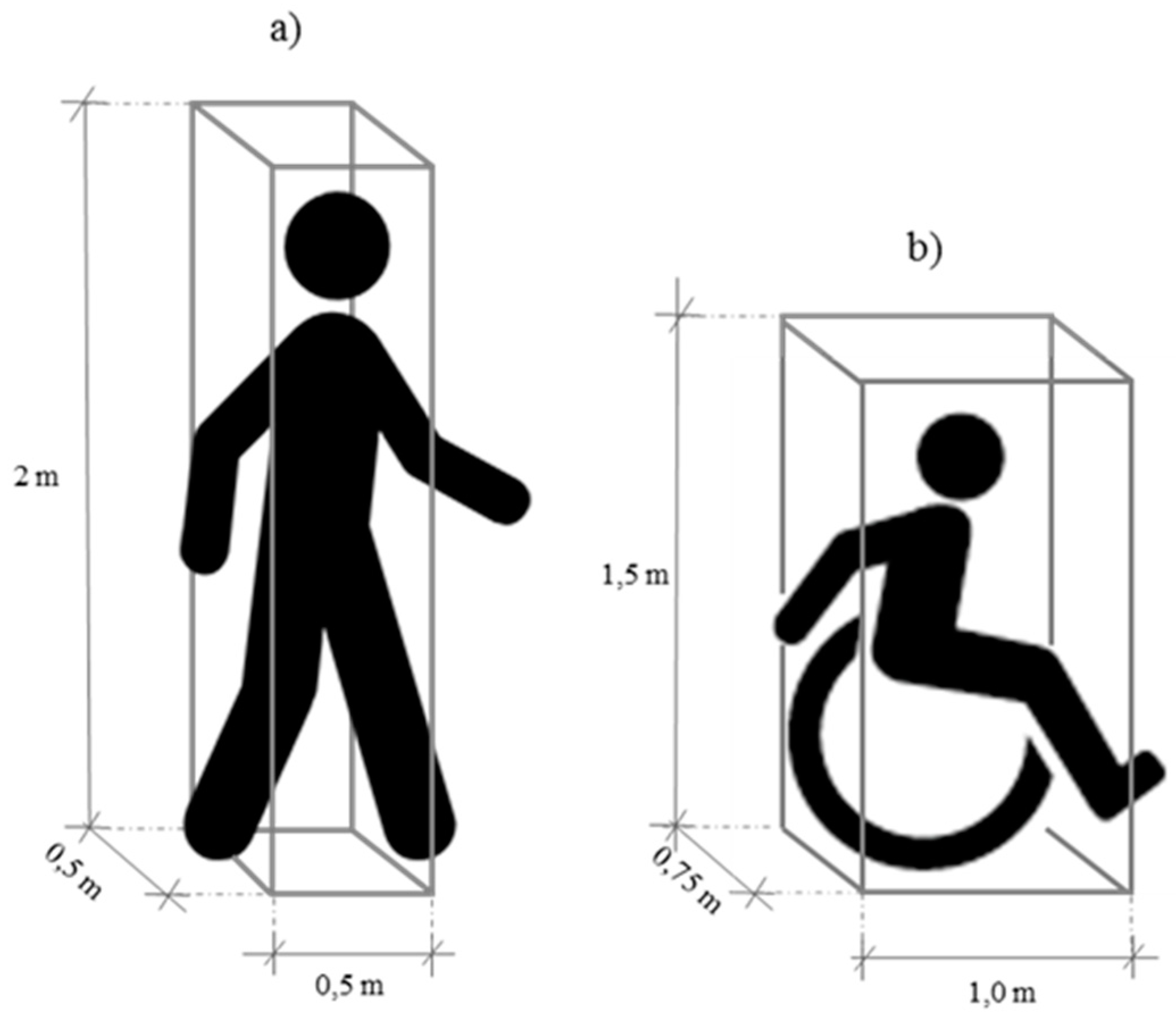

In this approach, an obstacle is defined as the presence of a set of 3D points interrupting the navigation between two nodes. The existence of obstacles is analyzed by checking if there are points inside the volume of a buffer representing a person (

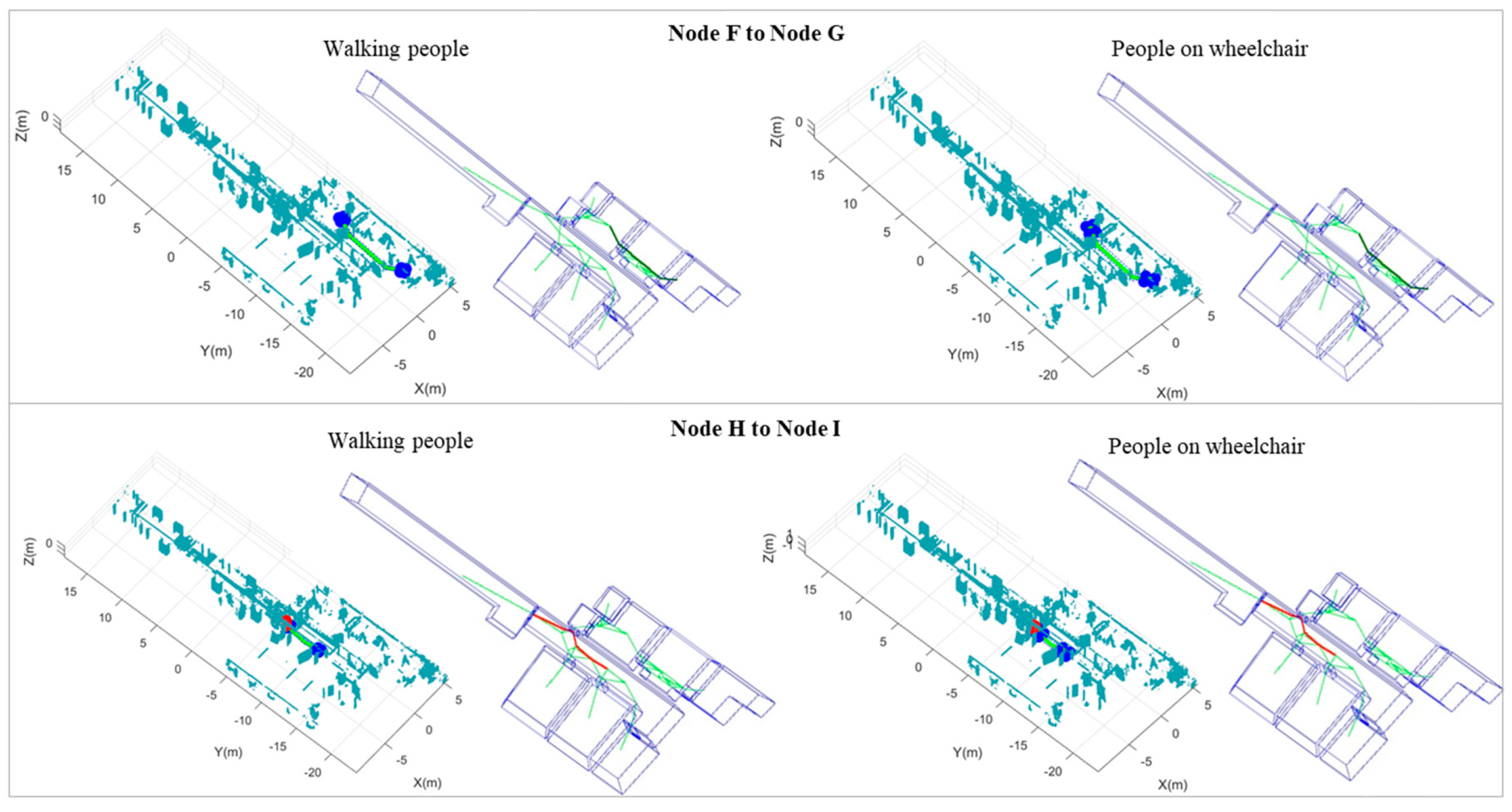

Figure 4). A rectangular prism is selected as the buffer shape. The buffer size varies upon considering the user as a walking person or a person on wheelchair.

The algorithm moves the buffer along the path (from Node O (origin) to Node D (destination)) with a step (d) less than or equal to the buffer depth to ensure that the whole path is analyzed. For each step, the algorithm checks whether there are points of the obstacle class inside the buffer. If a set of points is detected, it is analyzed to determine whether the obstacle candidate is a true or false obstacle for indoor navigation. For instance, we consider a piece of furniture a real obstacle, in contrast to plant leaves or spurious points.
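The buffer sweep can be illustrated with the following minimal Python sketch. It is written under assumptions that are not part of the original methodology: the path segment is taken as a straight line in the floor plane, the buffer is an axis-aligned prism in the local frame of the segment, and the names `sweep_buffer`, `width`, `depth`, `height`, and `floor_z` are hypothetical. The step is chosen as the segment length divided by the smallest number of steps for which the step does not exceed the buffer depth, so that the whole segment is covered.

```python
import math


def sweep_buffer(origin, dest, points, width, depth, height, floor_z=0.0):
    """Return, for each buffer position along O -> D, the obstacle points inside.

    origin, dest: (x, y) nodes; points: iterable of (x, y, z) obstacle-class points.
    """
    ox, oy = origin
    dx, dy = dest[0] - ox, dest[1] - oy
    length = math.hypot(dx, dy)
    if length == 0.0:
        return []
    ux, uy = dx / length, dy / length          # unit vector along the path
    n_steps = max(1, math.ceil(length / depth))
    step = length / n_steps                    # step <= depth by construction
    hits = []
    for k in range(n_steps):
        start = k * step
        end = min(start + depth, length)       # do not look beyond node D
        inside = []
        for x, y, z in points:
            along = (x - ox) * ux + (y - oy) * uy
            across = -(x - ox) * uy + (y - oy) * ux
            if (start <= along <= end
                    and abs(across) <= width / 2.0
                    and floor_z <= z <= floor_z + height):
                inside.append((x, y, z))
        hits.append(inside)
    return hits
```

Each non-empty entry of the returned list is an obstacle candidate that would then be evaluated with the size and aggregation criteria.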

Size and aggregation are the features considered for evaluating obstacle candidates. Size is measured as the number of points, and it is related to the obstacle size. Aggregation is measured as the median of the Euclidean distance from each point to its closest point, and it is related to obstacle consistency. An obstacle candidate is considered a true positive if its size is greater than a size threshold and its aggregation is less than an aggregation threshold.

Since both features depend on the point cloud density, the thresholds are also a function of it. In this way, the average point spacing of the entire dataset plus a 10% safety margin, and the number of points in an area equal to 10% of the buffer section are the thresholds for aggregation and size, respectively. In both cases, the parameters are selected with respect to empirical knowledge. They are tested to be appropriate for distinguishing real obstacles from spurious points or other small objects not impeding the navigation.
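A minimal sketch of this decision rule is given below. The two thresholds follow the description above, but the conversion of "the number of points in an area equal to 10% of the buffer section" into a point count is an assumption of this sketch: it supposes one point per square cell of side equal to the average point spacing. The function names and the brute-force nearest-neighbor search are illustrative only; a spatial index would be preferable for large candidates.

```python
import math
import statistics


def nearest_neighbor_distances(points):
    """Distance from each point to its closest other point (brute force, O(n^2))."""
    return [min(math.dist(p, q) for j, q in enumerate(points) if j != i)
            for i, p in enumerate(points)]


def is_true_obstacle(points, mean_spacing, buffer_section_area):
    """Apply the size and aggregation criteria to one obstacle candidate.

    mean_spacing: average point spacing of the whole dataset (same units as points).
    buffer_section_area: area of the buffer cross-section.
    """
    if len(points) < 2:
        return False
    # Assumed density model: one point per (mean_spacing x mean_spacing) cell.
    size_threshold = 0.10 * buffer_section_area / mean_spacing ** 2
    # Average point spacing plus a 10% safety margin.
    aggregation_threshold = 1.10 * mean_spacing
    aggregation = statistics.median(nearest_neighbor_distances(points))
    return len(points) > size_threshold and aggregation < aggregation_threshold
```

With this rule, a dense and compact cluster passes both tests, while a handful of points or a widely scattered set fails at least one of them.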

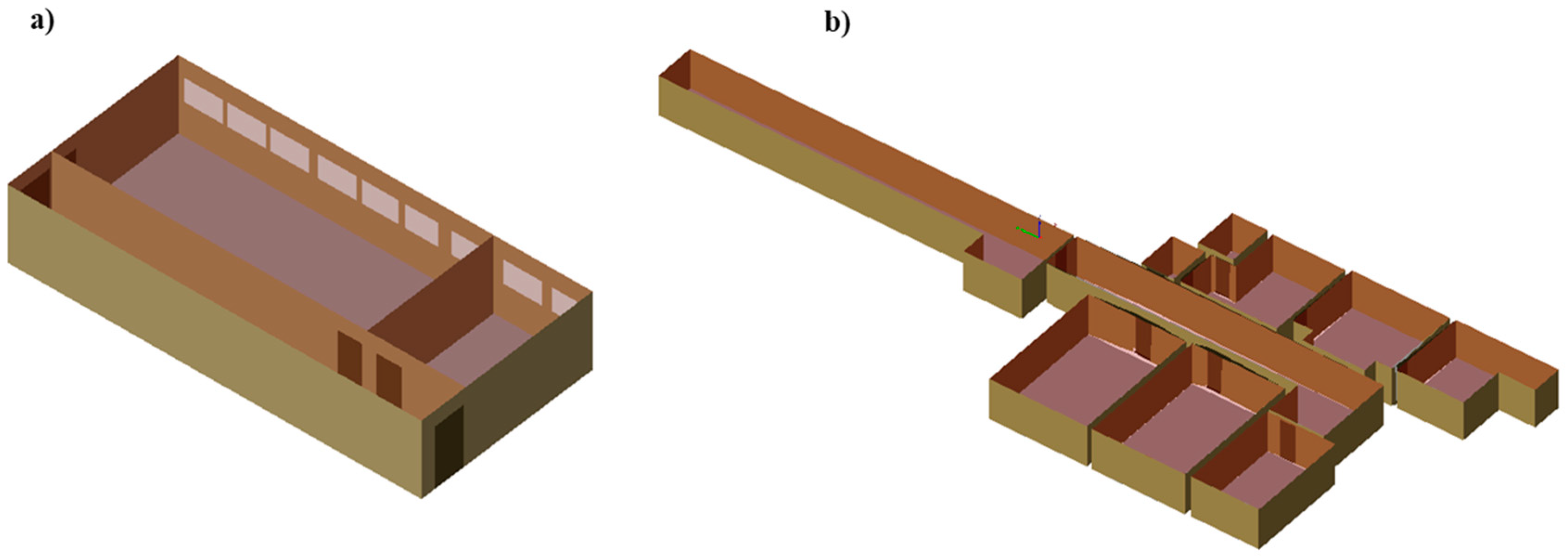

3.3. Indoor Pathfinding

The gbXML model introduced in Section 3.1.3 is used for the construction of a navigable model, which consists of a logical model including all indoor spaces and navigable networks for individual spaces [20]. The logical model is a graph reflecting the building topology, where graph nodes represent individual spaces, and links represent spatial relations among adjacent spaces. The navigable network is generated based on the Voronoi diagram with initial nucleation nodes located at the doors and concave corners, while additional nodes are added later in order to densify the network. Links allowed for navigation have a distance attribute used for the shortest pathfinding. The gbXML model conceives building elements as surfaces and, consequently, in a correct representation according to the schema, walls would have no thickness. In case of a building representation in which doors are represented by two surfaces or by a prism, the condition for the initial network generation would be a one-to-one representation for doors.

Dijkstra’s algorithm is used in this research to find the shortest path [41]. It computes a path between start and goal nodes by searching for the minimal travel cost based on distance attributes. Because the algorithm expands by analyzing all neighbors, its speed depends on the size of the network and the distance between the start and goal nodes. It can therefore be replaced with another pathfinding algorithm, such as the widely used A* [42], to improve the computational efficiency.
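For reference, a compact Python sketch of Dijkstra's algorithm on a network with distance attributes is shown below. The adjacency-dictionary representation and the node names are hypothetical and serve only to illustrate the search; they do not reflect the data structures of the navigable model used in this work.

```python
import heapq


def dijkstra(graph, start, goal):
    """Shortest path on a weighted graph.

    graph: dict mapping node -> list of (neighbor, distance) links.
    Returns (path, cost), or (None, inf) if the goal is unreachable.
    """
    dist = {start: 0.0}
    prev = {}
    queue = [(0.0, start)]
    visited = set()
    while queue:
        d, node = heapq.heappop(queue)
        if node in visited:
            continue
        visited.add(node)
        if node == goal:
            break
        for neighbor, weight in graph.get(node, []):
            new_dist = d + weight
            if new_dist < dist.get(neighbor, float("inf")):
                dist[neighbor] = new_dist
                prev[neighbor] = node
                heapq.heappush(queue, (new_dist, neighbor))
    if goal not in dist:
        return None, float("inf")
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    return path[::-1], dist[goal]


# Hypothetical network between two doors with two intermediate nodes.
network = {
    "door_A": [("n1", 2.0), ("n2", 1.0)],
    "n1": [("door_B", 1.0)],
    "n2": [("n1", 0.5), ("door_B", 3.0)],
}
path, cost = dijkstra(network, "door_A", "door_B")
```

Excluding a link from navigation, as done when an obstacle area is added, amounts to removing it from the adjacency list before the search is repeated.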

Because the initial model does not include information about indoor objects, such as furniture or installations, the resulting paths are only a rough approximation of the real situation and may not be precise enough for applications like robot navigation. However, the paths are updated locally when obstacle objects are added to the navigable model. A polygon identifying an area of obstacle points detected by the buffer described in Section 3.2 triggers modification of the navigable network. A new obstacle area is calculated and added to the network. In order to reduce the number of points added to the network, the obstacle polygon is processed as follows: (a) a convex hull is calculated; (b) consecutive collinear points and edges shorter than a minimum edge length threshold are removed, as they do not significantly change the shape of the polygon; and (c) in order to avoid detecting a collision with the same obstacle points in the next iteration of the algorithm, the polygon is expanded by half of the buffer depth (d/2). The obtained polygon is added to the obstacle area defined in the previous iteration. Points belonging to the final polygon are then used to update the navigable network. Links between network nodes that are within the obstacle area are excluded from navigation.
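Steps (a) to (c) and the exclusion of links can be sketched in Python as follows. This is an illustrative sketch, not the implementation used in this work: the convex hull is computed with Andrew's monotone chain, the expansion uses mitre joins on the convex polygon, and a link is treated as blocked when an endpoint lies inside the polygon or the link properly crosses one of its edges. These choices, the function names, and the omission of degenerate touching cases are assumptions of the sketch.

```python
import math


def cross(o, a, b):
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """(a) Counter-clockwise hull; collinear points are dropped (monotone chain)."""
    pts = sorted(set(points))
    if len(pts) < 3:
        return pts

    def half(seq):
        chain = []
        for p in seq:
            while len(chain) >= 2 and cross(chain[-2], chain[-1], p) <= 0:
                chain.pop()
            chain.append(p)
        return chain[:-1]

    return half(pts) + half(reversed(pts))


def drop_short_edges(poly, min_edge):
    """(b) Remove vertices that would create edges shorter than min_edge."""
    kept = [poly[0]]
    for p in poly[1:]:
        if math.dist(kept[-1], p) >= min_edge:
            kept.append(p)
    if len(kept) > 1 and math.dist(kept[-1], kept[0]) < min_edge:
        kept.pop()
    return kept


def unit_normal(a, b):
    """Outward unit normal of edge a -> b of a counter-clockwise polygon."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    length = math.hypot(dx, dy)
    return (dy / length, -dx / length)


def expand(poly, offset):
    """(c) Offset a counter-clockwise convex polygon outward by `offset`."""
    out = []
    n = len(poly)
    for i in range(n):
        n1 = unit_normal(poly[i - 1], poly[i])
        n2 = unit_normal(poly[i], poly[(i + 1) % n])
        scale = offset / (1.0 + n1[0] * n2[0] + n1[1] * n2[1])
        out.append((poly[i][0] + scale * (n1[0] + n2[0]),
                    poly[i][1] + scale * (n1[1] + n2[1])))
    return out


def link_blocked(poly, a, b):
    """True if link a-b has an endpoint inside poly or properly crosses an edge."""
    def inside(p):
        return all(cross(poly[i - 1], poly[i], p) >= 0 for i in range(len(poly)))

    def crosses(c, d):
        return (cross(a, b, c) * cross(a, b, d) < 0
                and cross(c, d, a) * cross(c, d, b) < 0)

    return inside(a) or inside(b) or any(
        crosses(poly[i - 1], poly[i]) for i in range(len(poly)))
```

In use, the obstacle points found by the buffer would be projected onto the floor plane, passed through `convex_hull`, `drop_short_edges`, and `expand` with an offset of d/2, and every network link for which `link_blocked` returns true would be excluded before the shortest path is recomputed.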

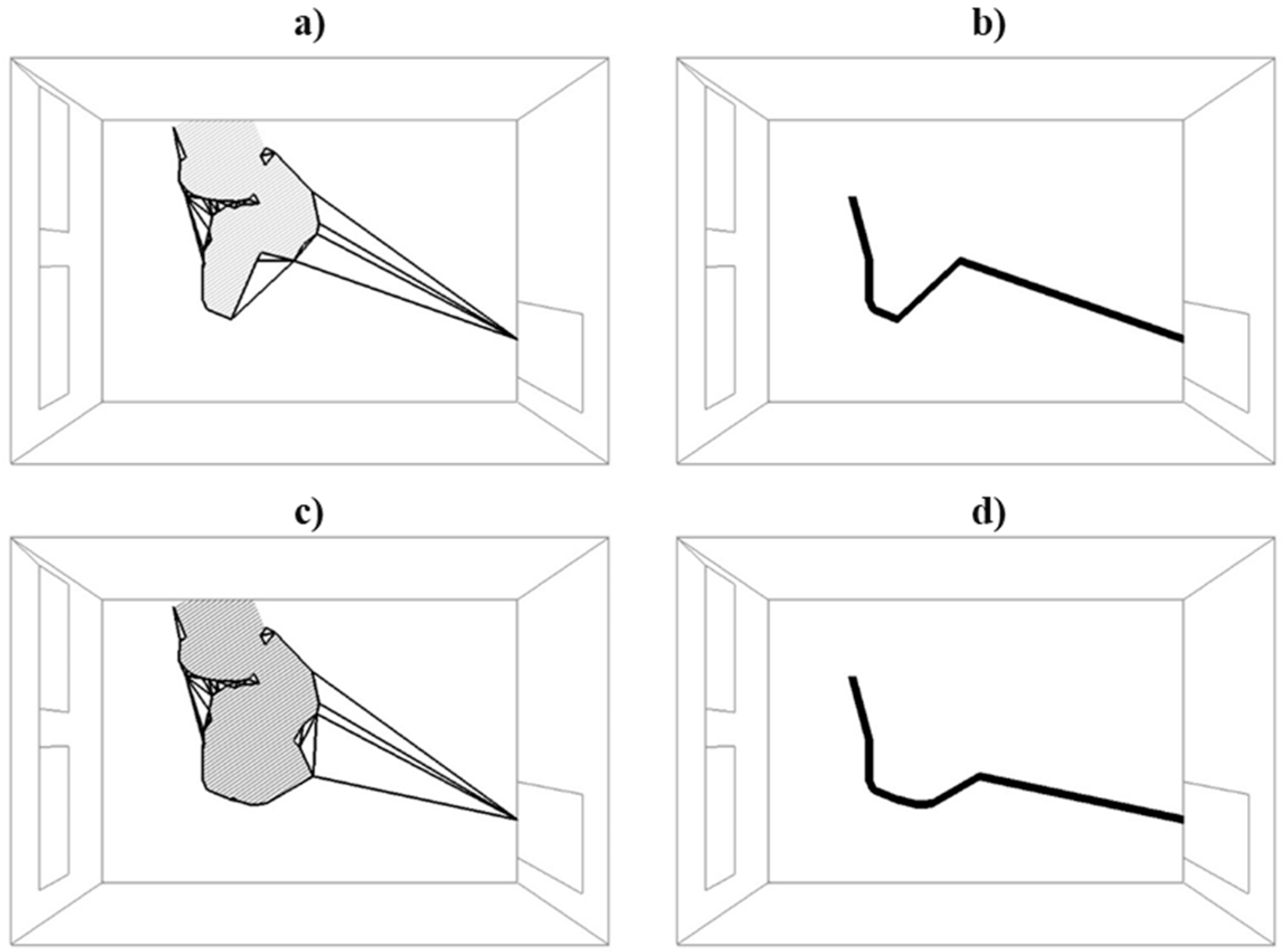

In theory, the introduction of a new obstacle area to the network (see Figure 5a,c) should result in paths that are longer than those obtained in a previous iteration, as it is necessary to go around the detected obstacle. However, in some cases, consecutive paths get shorter. For instance, the length of the shortest path shown in Figure 5b is 5.42 m, while the path length obtained after adding a new obstacle polygon to the network is 5.14 m (see Figure 5d). This is related to the location of the network nodes and to the navigable network generation method: adding the polygon points to the network creates nodes and links that were not available in the previous iteration. The method may be improved by introducing additional nodes in areas not covered by the network; a random or regular distribution may be considered. However, this is out of the scope of this research.

The presented network is a discrete model and, thus, calculated paths are an approximation of real paths. It was shown that the method used in this research produces better accuracy of the route length in comparison to prevailing approaches [40].

5. Conclusions

This paper proposes an automatic methodology for obstacle-aware indoor pathfinding. Point clouds were firstly processed to parameterize and reconstruct 3D indoor maps which constituted the basis for the network generation. Next, classified point clouds were directly used to update the network by obstacle detection and path correction.

Similar to the update of highly autonomous driving maps for autonomous vehicles, the methodology is based on the concept of updating the network from point clouds. Obstacle detection is dependent on data completeness: the better the scene is depicted, the more accurate the resulting paths. However, the methodology does not depend on the complete acquisition of the entire building, since the correction of paths is considered just for the immediate area of the path instead of for the entire scene. In this way, although the methodology is not conceived for real-time application, it could be used for pathfinding with mobile laser scanning data as the input, for example in the case of autonomous wheelchairs. The distinction between dynamic and static obstacles is not considered in the methodology; however, this is an important topic for the generation of highly detailed 3D indoor maps. Consequently, this topic will be considered in future work.

From the results, the following main conclusions could be drawn:

Results show a robust methodology for indoor pathfinding under the presence of obstacles;

Buffer size can be changed for simulating different user conditions, such as pedestrians or people with reduced mobility;

Although obstacles are searched in the 3D space, the network is created for each room in the 2.5D space. For instance, if a table is detected as an obstacle, the route cannot continue above or under it;

The methodology is quality-dependent since obstacle detection depends on the input data completeness. The presence of occlusions from an incomplete survey can generate false negatives.

Future work will aim to extend the methodology to more complex scenarios including different floor elements such as stairs and ramps. Extending the network creation to the 3D space, which is especially important for emergency applications, will also be part of future work.