Location Privacy in the Wake of the GDPR

Abstract

1. Introduction

“A high-privacy position assigns primary value to privacy claims, has high organizational distrust, and advocates comprehensive privacy interventions through legal rules and enforcement. A limited-privacy position views privacy claims as usually less worthy than business efficiency and societal-protection interests, is generally trustful of organizations, and opposes most new regulatory interventions as unnecessary and costly”. ([16], p. 434)

2. Privacy at the Individual Level

2.1. The Individual Data Subject or Consumer

2.2. A Typology of Privacy at the Individual Level

Another strategy for Alice is collective, for example, participating in popular resistance to unpopular government action. When the government of the Federal Republic of Germany announced a national Census on April 27, 1983, German citizens protested so strongly that a dismayed German government had to comply with the Federal Constitutional Court’s order to stop the process and take into account several restrictions imposed by the Court in future censuses. Apparently, asking the public for personal information in 1983, the fiftieth anniversary of the National Socialists’ ascent to power, was bad timing, to say the least [41]. When the Census was finally conducted in 1987, thousands of citizens either boycotted (overt resistance) or sabotaged (covert resistance) what they perceived as Orwellian state-surveillance [42]. We should bear in mind that these remarkable events took place in an era where the government was the only legitimate collector of data at such a massive, nationwide scale and at a great cost (approx. a billion German marks). Nowadays, state and corporate surveillance are deeply entangled. In response, technologically savvy digital rights activists have been influential in several venues, including the Internet Engineering Task Force (IETF) and the Internet Corporation for Assigned Names and Numbers (ICANN), through the Non-commercial User Constituency (NCUC) caucus. Yet their efforts have largely remained within a community of technical experts (‘tech justice’) with little integration so far with ‘social justice’ activists [43].

“in spite of doomsday scenarios about the death of privacy, in societies with liberal democratic, economic and political systems, the initial advantages offered by technological developments may be weakened by their own ironic vulnerabilities and [by] ‘human ingenuity’”. ([40], p. 388)

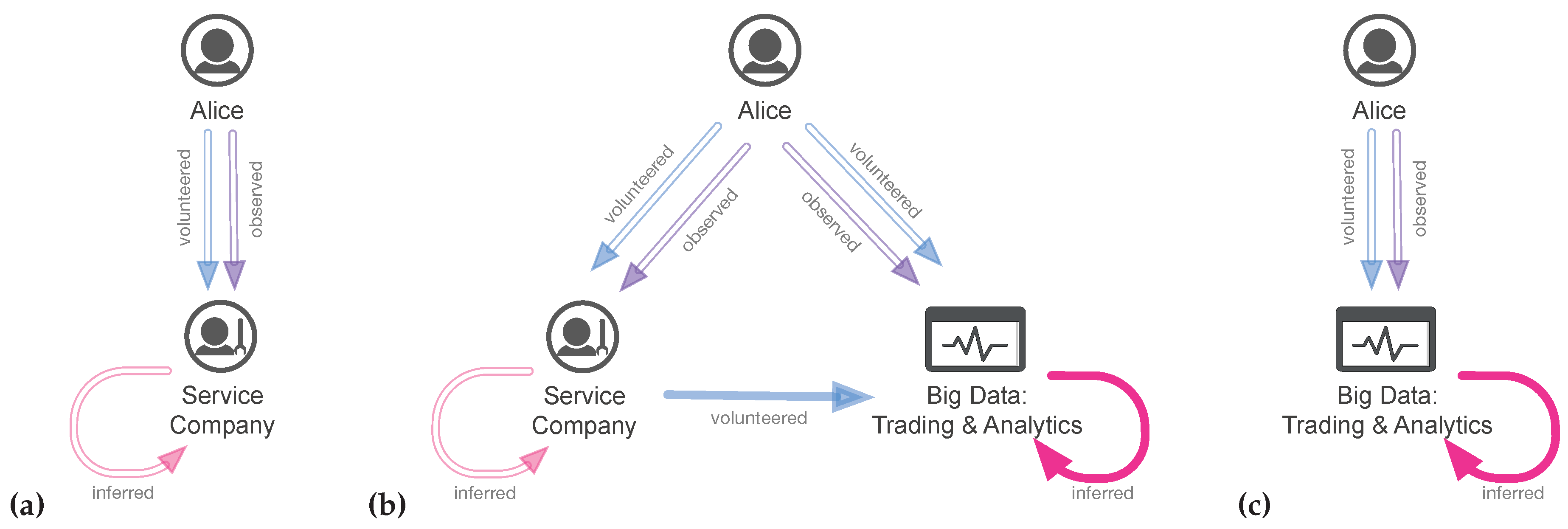

3. The Transformation Processes of Our Data in the Context of Location Privacy

3.1. Data Types in Play

- unique identifier: A data element that is associated with just one entity of interest; that entity may be a data subject or something else. This is a wide class of identifiers;

- key identifier: A data element that can be exploited with minimal effort to identify a (privacy-sensitive) data subject;

- quasi-identifier: A data element that almost discloses the identity of a data subject, due to its semi-unique value, and that will allow full disclosure when combined with other quasi-identifiers;

- private attribute: The remainder class of privacy-relevant but non-identifying data elements.
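The effect of combining quasi-identifiers can be illustrated with a minimal Python sketch. It is not taken from the article: the records, the field names (`postcode`, `birth_year`, `gender`) and both helper functions are hypothetical and serve only to show how each added quasi-identifier shrinks the group of records a data subject can hide in.

```python
from collections import Counter

# Hypothetical records: every value below is invented for illustration.
RECORDS = [
    {"postcode": "7511", "birth_year": 1980, "gender": "F"},
    {"postcode": "7511", "birth_year": 1980, "gender": "M"},
    {"postcode": "7511", "birth_year": 1975, "gender": "F"},
    {"postcode": "7522", "birth_year": 1980, "gender": "F"},
    {"postcode": "7522", "birth_year": 1980, "gender": "F"},
]


def group_sizes(records, quasi_identifiers):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(tuple(r[q] for q in quasi_identifiers) for r in records)


def smallest_group(records, quasi_identifiers):
    """Size of the smallest group of indistinguishable records."""
    return min(group_sizes(records, quasi_identifiers).values())


def unique_records(records, quasi_identifiers):
    """Number of records singled out by the given combination."""
    sizes = group_sizes(records, quasi_identifiers)
    return sum(1 for size in sizes.values() if size == 1)


if __name__ == "__main__":
    for combo in (["postcode"],
                  ["postcode", "birth_year"],
                  ["postcode", "birth_year", "gender"]):
        print(combo, smallest_group(RECORDS, combo), unique_records(RECORDS, combo))
```

In this toy data set no record is unique on `postcode` alone, while the combination of all three fields singles out three of the five records.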

- location: This is the base case; it provides the whereabouts of an entity, a data subject, or a data subject’s activity;

- location with co-variates: A slight extension of the first case, in which the provided location is augmented with quasi-identifiers or private data elements. Such augmentation allows contextual inference about the function of the location for the data subjects;

- timed location: The next step up the ladder, which associates some form of time stamp with any provided location. The combination of these data elements allows inferences about what we can generically call trajectories. Presence and absence information also falls in this category;

- timed location with co-variates: The final case, which allows inference over entity or data subject activity trails and life cycles.
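The four location data types above differ only in whether a time stamp and co-variates accompany the location. A minimal Python sketch, assuming a hypothetical `LocationRecord` structure that does not appear in the article, makes that distinction explicit:

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class LocationRecord:
    """Hypothetical record layout; field names are illustrative assumptions."""
    lat: float
    lon: float
    timestamp: Optional[datetime] = None           # absent for untimed locations
    covariates: dict = field(default_factory=dict)  # quasi-identifiers or private attributes


def location_data_type(record: LocationRecord) -> str:
    """Map a record to one of the four location data types listed above."""
    timed = record.timestamp is not None
    with_covariates = bool(record.covariates)
    if timed and with_covariates:
        return "timed location with co-variates"
    if timed:
        return "timed location"
    if with_covariates:
        return "location with co-variates"
    return "location"


if __name__ == "__main__":
    visit = LocationRecord(52.22, 6.89, datetime(2019, 3, 1, 14, 37), {"age_group": "30-39"})
    print(location_data_type(visit))
```

A sequence of records of the third or fourth type, ordered by time stamp, is what permits the trajectory inferences mentioned in the list.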

3.2. Inference over Personal and Location Data

4. Privacy at the Cultural Level

4.1. Cultural Theory: A Typology of Cultures

The mix of individualism and hierarchy is an improvement over the opt-out choice, which infers consent from inaction.

“requires affirmative consent, which must be freely given, specific, informed, and unambiguous. Consent can’t be assumed from inaction. Pre-ticked boxes aren’t sufficient to constitute consent”. [6]

4.2. A Typology of Privacy Cultures

- “The possibility for e-citizens to negotiate and conclude contracts with the platforms (possibly via intermediaries) regarding the use of their personal data, so that they can decide for themselves which use they wish to make of them;

- The ability to monetize these data (or not) according to the terms of the contract (which could include licensing, leasing, etc.);

- The ability, conversely, to pay the price of the service provided by the platforms without giving away our data (the price of privacy?)” (p. 7).

“Empires like Google and Amazon cannot be beaten from below. No start-up can compete with their data power and cash. If you are lucky, one of the big Internet whales will swallow your company. If you are unlucky, your ideas will be copied.”

“The dividends of the digital economy must benefit the whole society. An important step in this direction: we [the state] must set limits to the internet giants if they violate the principles of our social market economy. […] A new data-for-all law could offer decisive leverage: As soon as an Internet Company achieves a market share above a fixed threshold for a certain time period, it will be required to share a representative, anonymized part of their data sets with the public. With this data other companies or start-ups can develop their own ideas and bring their own products to the market place. In this setting the data are not ‘owned’ exclusively by e.g., Google, but belong to the general public.”

“We should not balk at proposing ambitious political reforms to go along with their new data ownership regime. These must openly acknowledge that the most meaningful scale at which a radical change in democratic political culture can occur today is not the nation state, as some on the left and the right are prone to believe, but, rather the city. The city is a symbol of outward-looking cosmopolitanism—a potent answer to the homogeneity and insularity of the nation state. Today it is the only place where the idea of exerting meaningful democratic control over one’s life, however trivial the problem, is still viable.”

- as a tradeable private good in return for another private good,

- as something that constitutes who we are, and therefore is unalienable,

- as something to be delegated to a trusted father-state and traded with a public good, and

- as something that does not exist anymore and that we should get over.

5. Personal Data Protection in the African Union: An Outlook

“transfer of personal data to a third country or an international organization may take place where the Commission has decided that the third country, a territory or one or more specified sectors within that third country, or the international organization in question ensures an adequate level of protection.”

“the major legal systems in Africa namely common and civil law legal systems which are Western in origin, create fertile grounds for adaptability of European law. While these systems were forcibly imposed on Africa by European countries during colonial rule as part of the colonial superstructure and an instrument of coercing Africans to participate in the colonial economy, they were inherited by African countries on independence. [...] Thus, the attitude to view these systems as colonial has diminished significantly as more customisation continues to take place. It is arguable that African countries are no strangers to the adaptation of ‘foreign law’.” (p. 451)

“debates over privacy are never-ending, for they are tied to changes in the norms of society as to what kinds of personal conduct are regarded as beneficial, neutral, or harmful to the public good. In short, privacy is an arena of democratic politics. It involves the proper roles of government, the degree of privacy to afford sectors such as business, science, education, and the professions, and the role of privacy claims in struggles over rights, such as equality, due process, and consumerism.”

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Powles, J. The G.D.P.R., Europe’s New Privacy Law, and the Future of the Global Data Economy. The New Yorker, 25 May 2018.

- Hoofnagle, C.J.; van der Sloot, B.; Zuiderveen Borgesius, F. The European Union General Data Protection Regulation: What It Is And What It Means. SSRN Electron. J. 2018.

- Hearn, A. Facebook moves 1.5bn users out of reach of new European privacy law. The Guardian, 19 April 2018.

- Schwartz, P.M.; Peifer, K.N. Transatlantic Data Privacy Law. Georget. Law J. 2017, 106, 115–179.

- United Nations Conference on Trade and Development (UNCTAD). Data Protection Regulations and International Data Flows: Implications for Trade and Development; United Nations Publication: New York, NY, USA; Geneva, Switzerland, 2016.

- Solove, D. Why I Love the GDPR: 10 Reasons. Available online: https://teachprivacy.com/why-i-love-the-gdpr/ (accessed on 6 February 2019).

- Houser, K.A.; Voss, W.G. GDPR: The End of Google and Facebook or a New Paradigm in Data Privacy? Richmond J. Law Technol. 2018.

- Tiku, N. Europe’s New Privacy Law Will Change the Web and More. Wired Magazine, 30 March 2018.

- Zuboff, S. The secrets of surveillance capitalism. FAZ.net, 5 March 2016. Available online: https://www.faz.net/aktuell/feuilleton/debatten/the-digital-debate/shoshana-zuboff-secrets-of-surveillance-capitalism-14103616.html (accessed on 5 March 2016).

- Searls, D. Brands Need to Fire Adtech. Available online: https://blogs.harvard.edu/doc/2017/03/23/brands-need-to-fire-adtech/ (accessed on 6 February 2019).

- Layton, R.; McLendon, J. The GDPR: What It Really Does and How the U.S. Can Chart a Better Course. Fed. Soc. Rev. 2018, 19, 234–248.

- Buttarelli, G. The EU GDPR as a clarion call for a new global digital gold standard. Int. Data Priv. Law 2016, 6, 77–78.

- Albrecht, J.P. Hands Off Our Data! Available online: https://www.janalbrecht.eu/wp-content/uploads/2018/02/JP_Albrecht_hands-off_final_WEB.pdf (accessed on 6 February 2019).

- Whitman, J.Q. The two western cultures of privacy: Dignity versus liberty. Yale Law J. 2003, 113, 1151.

- Chakravorti, B. Why the Rest of the World Can’t Free Ride on Europe’s GDPR Rules; Harvard Business Review: Boston, MA, USA, 2018.

- Westin, A.F. Social and political dimensions of privacy. J. Soc. Issues 2003, 59, 431–453.

- Reed, P.J.; Spiro, E.S.; Butts, C.T. Thumbs up for privacy?: Differences in online self-disclosure behavior across national cultures. Soc. Sci. Res. 2016, 59, 155–170.

- Westin, A.F.; Rübhausen, O.M. Privacy and Freedom; Atheneum: New York, NY, USA, 1967; Volume 1.

- Keßler, C.; McKenzie, G. A geoprivacy manifesto. Trans. GIS 2018, 22, 3–19.

- Floridi, L. The 4th Revolution; Oxford University Press: Oxford, UK, 2014.

- Warren, S.D.; Brandeis, L.D. The Right to Privacy. Harv. Law Rev. 1890, 4, 193.

- Mulligan, D.K.; Koopman, C.; Doty, N. Privacy is an essentially contested concept: A multi-dimensional analytic for mapping privacy. Philos. Trans. R. Soc. A Math. Phys. Eng. Sci. 2016, 374, 20160118.

- Zook, M.; Barocas, S.; Crawford, K.; Keller, E.; Gangadharan, S.P.; Goodman, A.; Hollander, R.; Koenig, B.A.; Metcalf, J.; Narayanan, A.; et al. Ten simple rules for responsible big data research. PLoS Comput. Biol. 2017.

- Masser, I.; Wegener, M. Brave New GIS Worlds Revisited. Environ. Plan. B Plan. Des. 2016.

- Taylor, L.; Broeders, D. In the name of Development: Power, profit and the datafication of the global South. Geoforum 2015, 64, 229–237.

- Mann, L. Left to other peoples’ devices? A political economy perspective on the big data revolution in development. Dev. Chang. 2018, 49, 3–36.

- Fairfield, J.A.T.; Engel, C. Privacy as a public good. Duke Law J. 2015, 65, 385–457.

- Agre, P.E.; Rotenberg, M. Technology and Privacy: The New Landscape; MIT Press: Cambridge, MA, USA, 1997.

- Altman, I. Privacy regulation: Culturally universal or culturally specific? J. Soc. Issues 1977, 33, 66–84.

- Ouchi, W.G. A Conceptual Framework for the Design of Organizational Control Mechanisms. Manag. Sci. 1979, 25, 833–848.

- Ciborra, C.U. Reframing the Role of Computers in Organizations—The Transactions Cost Approach. Off. Technol. People 1987, 3, 17–38.

- Herrmann, M. Privacy in Location-Based Services; Privacy in Locatie-Gebaseerde Diensten. Ph.D. Thesis, Katholieke Universiteit Leuven, Leuven, Belgium, 2016.

- Greenwald, G.; MacAskill, E. NSA Prism program taps in to user data of Apple, Google and others. The Guardian, 7 June 2013.

- Goffman, E. The Presentation of Self in Everyday Life; Anchor Books: New York, NY, USA, 1959; Volume 5.

- Fried, C. Privacy. Yale Law J. 1968, 77, 475–493.

- Bowker, G.C.; Baker, K.; Millerand, F.; Ribes, D. Toward Information Infrastructure Studies: Ways of Knowing in a Networked Environment. In International Handbook of Internet Research; Springer Netherlands: Dordrecht, The Netherlands, 2009; pp. 97–117.

- Kounadi, O.; Resch, B. A Geoprivacy by Design Guideline for Research Campaigns That Use Participatory Sensing Data. J. Empir. Res. Hum. Res. Ethics 2018, 13, 203–222.

- Newman, J. Google’s Schmidt Roasted for Privacy Comments. PC World, 11 December 2009.

- The Guardian. The Cambridge Analytica Files. Available online: https://www.theguardian.com/news/series/cambridge-analytica-files (accessed on 2 February 2019).

- Marx, G.T. A Tack in the Shoe: Neutralizing and Resisting the New Surveillance. J. Soc. Issues 2003, 59, 369–390.

- Anonymous. Datenschrott für eine Milliarde? DER SPIEGEL, 16 March 1987.

- Anonymous. Volkszählung: “Laßt 1000 Fragebogen glühen”. DER SPIEGEL, 28 March 1983.

- Dencik, L.; Hintz, A.; Cable, J. Towards data justice? The ambiguity of anti-surveillance resistance in political activism. Big Data Soc. 2016.

- European Union. Regulation (EU) 2016/679 of the European Parliament and of The Council of 27 April 2016 on the Protection of Natural Persons With Regard To the Processing of Personal Data and on the Free Movement of Such Data, And Repealing Directive 95/46/EC (General Data Protection Regulation); European Union: Brussels, Belgium, 2016.

- De Graaff, V.; de By, R.A.; van Keulen, M. Automated Semantic Trajectory Annotation with Indoor Point-of-interest Visits in Urban Areas. In Proceedings of the 31st Annual ACM Symposium on Applied Computing, Pisa, Italy, 4–8 April 2016; pp. 552–559.

- Haklay, M.; Weber, P. OpenStreetMap: User-generated street maps. IEEE Pervasive Comput. 2008, 7, 12–18.

- Cetl, V.; Tomas, R.; Kotsev, A.; de Lima, V.N.; Smith, R.S.; Jobst, M. Establishing Common Ground Through INSPIRE: The Legally-Driven European Spatial Data Infrastructure. In Service-Oriented Mapping; Springer International Publishing AG: Dordrecht, The Netherlands, 2019; pp. 63–84.

- Matthews, K. The Current State of IoT Cybersecurity. Available online: https://www.iotforall.com/current-state-iot-cybersecurity/ (accessed on 6 February 2019).

- Barnes, B. The Elements of Social Theory; Princeton University Press: Princeton, NJ, USA, 2014.

- Pepperday, M.E. Way of Life Theory: The Underlying Structure of Worldviews, Social Relations and Lifestyles. Ph.D. Thesis, Australian National University, Canberra, Australia, 2009.

- Douglas, M. Cultural Bias. In The Active Voice; Routledge and Kegan Paul: London, UK, 1982.

- Regan, P.M. Response to Privacy as a Public Good. Duke Law J. 2016, 65, 51–65.

- Thompson, M. Organising and Disorganising. A Dynamic and Non-Linear Theory of Institutional Emergence and Its Implication; Triarchy Press: Devon, UK, 2008.

- Sprenger, P. Sun on Privacy: ‘Get Over It’. Wired News, 26 January 1999.

- Johnson, B. Privacy no longer a social norm, says Facebook founder. The Guardian, 11 January 2010.

- Couldry, N.; Mejias, U.A. Data colonialism: Rethinking big data’s relation to the contemporary subject. Telev. New Media 2018.

- Landreau, I.; Peliks, G.; Binctin, N.; Pez-Pérard, V. My Data Are Mine. Available online: https://www.generationlibre.eu/wp-content/uploads/2018/01/Rapport-Data-2018-EN-v2.pdf (accessed on 3 February 2019).

- Nahles, A. Die Tech-Riesen des Silicon Valleys gefährden den fairen Wettbewerb. Handelsblatt, 14 August 2018.

- Morozov, E. There is a leftwing way to challenge big tech for our data. Here it is. The Guardian, 19 August 2018.

- Shaw, J.; Graham, M. An Informational Right to the City? Code, Content, Control, and the Urbanization of Information. Antipode 2017, 49, 907–927.

- Greenleaf, G.; Cottier, B. Data Privacy Laws and Bills: Growth in Africa, GDPR Influence. In 152 Privacy Laws & Business International Report; Number 18–52 in UNSW Law Research Paper; University of New South Wales: Sydney, Australia, 2018; pp. 11–13.

- African Union. African Union Convention on Cyber Security and Personal Data Protection; African Union: Addis Ababa, Ethiopia, 2014.

- Makulilo, A.B. A Person Is a Person through Other Persons—A Critical Analysis of Privacy and Culture in Africa. Beijing Law Rev. 2016, 7, 192–204.

- Makulilo, A.B. “One size fits all”: Does Europe impose its data protection regime on Africa? Datenschutz Und Datensicherheit 2013, 37, 447–451.

- Olinger, H.N.; Britz, J.J.; Olivier, M.S. Western privacy and/or Ubuntu? Some critical comments on the influences in the forthcoming data privacy bill in South Africa. Int. Inf. Libr. Rev. 2007, 39, 31–43.

- Donovan, K.P.; Frowd, P.M.; Martin, A.K. ASR Forum on surveillance in Africa: Politics, histories, techniques. Afr. Stud. Rev. 2016, 59, 31–37.

| (Alice’s) ability to control human behavior, machine behavior, outputs | Goal incongruity: Low(er) | Goal incongruity: High(er) |
|---|---|---|
| Low(er) | Cell (4): Alice – Government institution. Privacy strategy: Compliance; lodge complaint to DPA in case of violation of GDPR; anti-surveillance resistance | Cell (3): Alice – Private corporation. Privacy strategy: Control behavior of corporation (via GDPR); lodge complaint to DPA in case of violation of GDPR |
| High(er) | Cell (1): Alice – Bob. Privacy strategy: Right and duty of partial display | Cell (2): Alice – (Bob – Carol – Dan – etc.). Privacy strategy: Geoprivacy by design |

| | Measures Controlling Human/Machine Behavior and Outputs |
|---|---|
| Prior to start of campaign | human behavior (participation agreement, informed consent, institutional approval); outputs (define criteria of access to restricted data) |
| Security and safe settings | human behavior (assign privacy manager, train data collectors); machine behavior (ensure secure sensing devices, ensure secure IT system) |
| Processing and analysis | outputs (delete data from sensing devices, remove identifiers from data set) |
| Safe disclosure | outputs (reduce spatial and temporal precision, consider alternatives to point maps); human behavior (provide contact information, use disclaimers, avoid the release of multiple versions of anonymized data, avoid the disclosure of anonymization metadata, plan a mandatory licensing agreement, authenticate data requestors) |
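One of the safe-disclosure measures listed in the table, reducing spatial and temporal precision, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: rounding coordinates and truncating time stamps is one simple way to coarsen data, not necessarily the technique the guideline prescribes, and the function names and default precision are hypothetical.

```python
from datetime import datetime


def coarsen_point(lat: float, lon: float, decimals: int = 2) -> tuple:
    """Round coordinates to fewer decimal places (assumed coarsening method).

    Two decimals of a degree correspond to roughly one kilometre in latitude.
    """
    return round(lat, decimals), round(lon, decimals)


def coarsen_time(ts: datetime) -> datetime:
    """Truncate a time stamp to the start of its hour."""
    return ts.replace(minute=0, second=0, microsecond=0)


if __name__ == "__main__":
    # Invented observation, for illustration only.
    lat, lon, ts = 52.22345, 6.88567, datetime(2019, 3, 1, 14, 37, 12)
    print(coarsen_point(lat, lon), coarsen_time(ts))
```

Coarser values place more data subjects in the same cell and time slot, at the cost of analytical detail; the appropriate precision depends on the campaign and is not fixed here.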

| | Grid: Weak | Grid: Strong |
|---|---|---|
| Group: Strong | Egalitarianism | Hierarchy |
| Group: Weak | Individualism | Fatalism |

| | Grid: Weak | Grid: Strong |
|---|---|---|
| Group: Strong | Data distributism (egalitarianism). Slogan: We produce and manage our personal data. Privacy: Personal data as unalienable, constituting the self | Data distributism (hierarchy). Slogan: Data-for-all law. Privacy: Personal data as a good that may be traded with a public good |
| Group: Weak | Data distributism (individualism). Slogan: My data are mine, but I sell them for a fair price. Privacy: Personal data as tradeable product | Data extractivism (fatalism). Slogan: You have zero privacy, get over it. Privacy: Zero |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Georgiadou, Y.; de By, R.A.; Kounadi, O. Location Privacy in the Wake of the GDPR. ISPRS Int. J. Geo-Inf. 2019, 8, 157. https://doi.org/10.3390/ijgi8030157
