1. Introduction

Different types of three-dimensional (3D) representations of the environment have been a topic of research in the field of geo-information and surveying since the 1960s. They have often been called “3D maps” (e.g., [1,2]). In this sense, their components include a textured 3D model of objects (terrain, buildings, etc.) [1]. In an urban context, the term “3D city model” has perhaps been used more often (e.g., [3]). Both 3D city models and 3D maps can contain other information in addition to the geometry of an environment, but neither term is precisely defined.

3D maps have some advantages over two-dimensional (2D) maps, especially in navigation use. These advantages include the faster orientation of the viewer when compared to navigating by street names with a traditional map, as real world objects can be represented in a more recognizable way [

4]. Additionally, the identification of visual cues and landmarks has been considered to be more intuitive with 3D maps than with simplified 2D maps [

4]. Results have been similar in cases where a 2D map is combined with a 3D view [

5]. 3D maps of urban areas have been considered a potential tool for urban planning, decision making, and information visualization [

6]. As the technology has advanced, 3D maps used for navigation and online purposes have become mainstream, with one of the examples being Google Earth [

7]. The entire 3D mapping and 3D modeling market is by some estimates expected to grow from $1.1 billion in 2013 to $7.7 billion by 2018, an annual growth rate of 48% [

8].

Currently, there are two widely used technologies for creating 3D maps of the existing urban environment accurately and cost effectively: photogrammetry and laser scanning. In the future, other sensor techniques, such as depth cameras, may also be utilized. In photogrammetry, 3D data is derived from 2D images by mono-plotting (single-ray back projection), stereo-imagery interpretation, or multi-imagery block adjustment. Several platforms for optical sensors can be used. The second technique is Airborne Laser Scanning (ALS). It is a method based on Light Detection and Ranging (LIDAR) measurements from an aircraft, where the precise position and orientation of the sensor are known, and therefore the position (x,y,z) of the reflecting objects can be determined [

9]. In addition to ALS, there is increasing interest in Terrestrial Laser Scanning (TLS), where the laser scanner is mounted on a tripod or even on a moving platform, as in Mobile Laser Scanning (MLS). The output of the laser scanner is then a georeferenced point cloud of LIDAR measurements. The point density of the laser data greatly influences the methodological development and quality of the produced 3D models. Methodologies for 3D modeling based on the data can be found in several studies [

10,

11,

12,

13,

14]. MLS has gained attention as a method for generating accurate, very detailed city models [

15,

16,

17]. With some limitations, 2D GIS data can also be used to create simple three-dimensional city models for computer-based systems [

18,

19]. For different application scenarios, photorealistic or thematic visualizations can be made from 3D city models [

20]. More stylized, sketch-like renderings can also be generated [

21]. The aforementioned methods allow for 3D reconstruction, the process of determining the geometry and the appearance of existing objects for making geometrical replicas of, for example, the natural environment, old towns, and archaeological elements.

Originally, research on textured 3D models was initiated in the development of virtual reality (VR). VR refers to computer-simulated environments that can be either replicas of the real world or imaginary, built with geometric models and surface textures, so it is close to the textured 3D models used in surveying and city modelling. As early as 1965, Ivan Sutherland envisioned an “Ultimate Display” that would allow a person to look into a virtual world that would appear as real as the physical world [22]. Systems that visualize GIS data in 3D VR were developed in laboratories as early as the 1990s. One example combining GIS with VR was introduced by Verbree et al. [18]. With this system, different views (map, aerial view, and street-level view) and functionalities are used to support urban planning [18]. Technologies offering an immersive presentation, like virtual glasses, have also been tested for GIS use [23]. In computer game terminology, modeling with simple, untextured models is called “whiteboxing”, as textured models are commonly used in games. In the field of geo-information and surveying, 3D reconstruction increasingly includes textures as well. The challenge when working with virtual reality and 3D reconstruction is to keep the amount of data low while retaining a sufficient level of detail in the textures. This is especially important in mobile applications.

A game engine is an extendable software system on which a computer game or a similar application can be built. Applications developed with game engines for purposes other than entertainment are often called “serious games”. Game engines, such as Unreal or Unity 3D [24], have also been used for GIS visualization, with the aim of increasing the level of interactivity and engagement [25]. Stock et al. [26] have presented a project for developing a collaborative virtual environment using GIS data and a game engine. Manyoko et al. [27] have used CryENGINE 3 for landscape visualization, using it to visualize wind turbine projects. High-quality visualizations are useful for public impact assessments of large projects, and game engines together with suitable models can be used to create them.

Research combining virtual reality, GIS, and urban planning can be found in the existing literature since the 1990s, when computer graphics first made the concept feasible (e.g., [28,29,30]). In 1999, researchers envisioned participatory GIS systems based on virtual reality (e.g., [31]). The adoption of those ideas in practice, however, has been quite slow [32].

1.1. Open Data for 3D City Modeling

3D city models can also be based on open data, and even released as open data. Open data refers to information that has been made freely available for anyone to use. In most cases, the data is produced by a state-funded actor, but open data can also be published by other organizations, companies, or citizens. Open data usually satisfy the following criteria: public domain, technical accessibility, free access, licensing permitting reuse, easy to access and use, and understandability [33]. Over et al. [34] have presented a case where OpenStreetMap (OSM) data were combined with public domain terrain data to produce web-based 3D city models. Another application based on OSM, ViziCities, has been developed by Hawkes and Smart [35].

In 2010, the UK government allowed a significant number of data sets to be freely accessible via a program called ShareGeo Open [

36]. Since early 2012, all government geo data in the Netherlands has been available to the public, including The Netherlands topographic database [

37]. The discussion of whether or not to open the data reserves controlled by public organizations in Finland began at an institutional level in 2009 at the latest [

38]. Several benefits have been seen in opening these data reserves, including increased transparency and efficiency of governance and the creation of innovations and economic growth [

38]. The opening of public data reserves can also be seen as a component and an enabler in a larger shift towards open innovation throughout Europe [

39]. In Finland, software developers and individual citizens have been encouraged to utilize these public data reserves with various campaigns. For example, the Apps4Finland competition was organized for the fifth time in 2013 [

40]. Many public organizations have already opened their data reserves as a part of this movement. A list of available data sources can be found at the opendata.fi service [

41]. The National Land Survey of Finland (NLS) opened several data sources in May 2012, with a license permitting commercial utilization of the data [

42]. The data included the topographic database and a large amount of classified aerial laser scanning data [

43]. As a result, a large amount of GIS data became freely available.

1.2. 3D City Models for Smart Cities

3D city models can also be seen as a component of the smart city concept. Even though the term has been criticized as being poorly defined, inaccurate, and quite technology centric [

44], it is often used in the context of discussing the development of urban regions. In the definition commonly used by, for example, the IBM corporation, the concept of a smart city refers to networking all the systems of an urban infrastructure [

45], thus enabling a more efficient use of resources, the more accurate prediction of potential problems, and a faster response to them. Geo-information systems play a central role in this process. Additionally, some authors have connected aspects of citizen interaction, governance, and innovation ecosystems to the smart city concept [

46]. Regardless of how the term is defined, it seems apparent that urban information systems require large and sufficiently accurate city models that can easily be updated. These models can then be used to create different types of applications that are required in a smart city. If the models are used to visualize accurate information about a city’s infrastructure, it seems natural to use 3D models instead of 2D maps, as suggested by Döllner

et al. [

3]. Prandi

et al. [

47] have presented a framework in which a CityGML model is used as a starting point to build a web-based information system to support the processes of a smart city.

Many studies have presented the use of Information and Communications Technologies (ICTs) in urban planning and smart cities, such as broadband deployment, e-services, and open data (e.g., [

32,

38,

39,

48,

49]). For application development, it is essential that the model has an organized structure and that other information can be connected to the model’s components.

1.3. Virtual Worlds

3D virtual worlds, where the user is present as an avatar, based on computer games and social media, have been used for many different purposes [

50]. Many virtualization projects pertaining to urban plans started in Second Life but later on moved to OpenSimulator [

32,

51]. Virtual worlds have been tested frequently in educational applications. In this context, their benefits include immersive visualization, social contacts, and rich simulated experiences. The main problems have been the hardware requirements, the need to type when communicating, and the learning curve of the software being used [

52]. In a GIS context, networked 3D systems accessed over an Internet connection offer the simultaneous presence of several users as avatars and can enhance cooperation by increasing the interaction between users compared to static 3D web pages [

19]. In an urban planning context, systems accessed over an Internet connection that enable the study of a 3D city model as an avatar have been developed, for instance the Tapiola 3D system [

53].

1.4. Combining 3D Maps, Virtual Worlds, and Open Data into Collaborative Virtual Worlds

Interactive online systems that can be used to study a city model, and query data for individual objects have been built by, for example, Rodriques

et al. [

54]. However, for most application scenarios involving 3D maps, such as urban planning, tools for interacting with the 3D maps are needed. Just visualizing the virtual model is not sufficient. For example, according to Wu

et al. [

55], a virtual 3D environment for urban planning should meet the following criteria: (1) be accessible using a regular computer and software; (2) offer 3D visualization; (3) support interaction with the city model, for example by adding a building model; and (4) enable commenting via traditional channels, like forums. Virtual worlds are a potential platform for 3D environments because they offer interaction and visualization tools, as noted by Batty

et al. [

2]. Unlike most professional planning systems, they are collaborative multi-user systems that emphasize an immersive experience. In addition, they can easily be deployed to a large number of users.

To utilize virtual worlds in various applications, such as Building Information Modeling (BIM), games, smart cities, and location-based services, there is a considerable need to use 3D maps as “dummy” platforms upon which to build applications. These 3D maps must be available with open licensing terms and preferably in a format that permits easy application development on top of the map data. Open data is an increasingly attractive data source for generating such 3D maps. The applications developed utilizing the map data can be intended for the general public, or they can be niche applications built by small companies. In addition to the map data, virtual worlds need tools that enable interaction with map-type data and support large data sets. Eventually, open data combined with virtual world platforms that permit open software development may be the route towards creating an open 3D Earth.

To enable the targeted updating of map entities in a virtual world, it is not sufficient to only convert the 2D geometry commonly used in GIS into a 3D format. The object structure of the geographic data must be preserved in the virtual world. If the objects include, for example, buildings, then the same object division has to be present in the virtual world. After this step, an individual building can be located and updated as necessary without reconstructing the entire data set for the building objects. In addition, the non-geometric data, such as attribute information associated with the objects, must be transferred to the virtual world. Without the object structure and associated data, it is very difficult to apply the data for any purposes other than visualization.

For centuries, mapping has been performed by state organizations and has therefore been extremely centralized. The work has been done by trained staff, typically with a background in surveying. Since the early 2000s, it has been possible for ordinary, untrained citizens to map their surroundings using GNSS receivers, cameras, and smartphones. The collection of user-created geospatial information is known by many different terms, such as crowdsourcing, collaboratively contributed geographic information, web-based public participation geographic information systems, collaborative mapping, web mapping 2.0, neogeography, wikimapping, and volunteered geographic information. Most commonly, crowdsourcing is understood as geospatial data collection by voluntary citizens who are untrained in the disciplines of geography, cartography, or related fields. Short reviews of crowdsourcing can be found in Heipke [56] and Fritz et al. [57].

The goal of our work is to develop a method that makes it possible to create 3D maps that can be viewed in a collaborative virtual world, based on open data and open source technology, while maintaining the attribute data related to map objects. Unlike in professional applications, we aim to use data and tools that are available to anyone. In this work, Meshmoon [58] is used as an example of a collaborative virtual world platform based on open source code. Instead of using a model viewer application and transferring the models, we use the client-server architecture offered by the virtual world platform. In this way, the virtual world scene can be automatically kept up to date for all clients. Unlike in most CAD applications, the users of a multi-user virtual world can also communicate with each other through the system and observe what other users are doing. We aim to develop a process where a virtual environment running in the Meshmoon system is generated as automatically as possible from the open data sets provided by the NLS, using ALS data, orthophotos, and data from the topographic database.

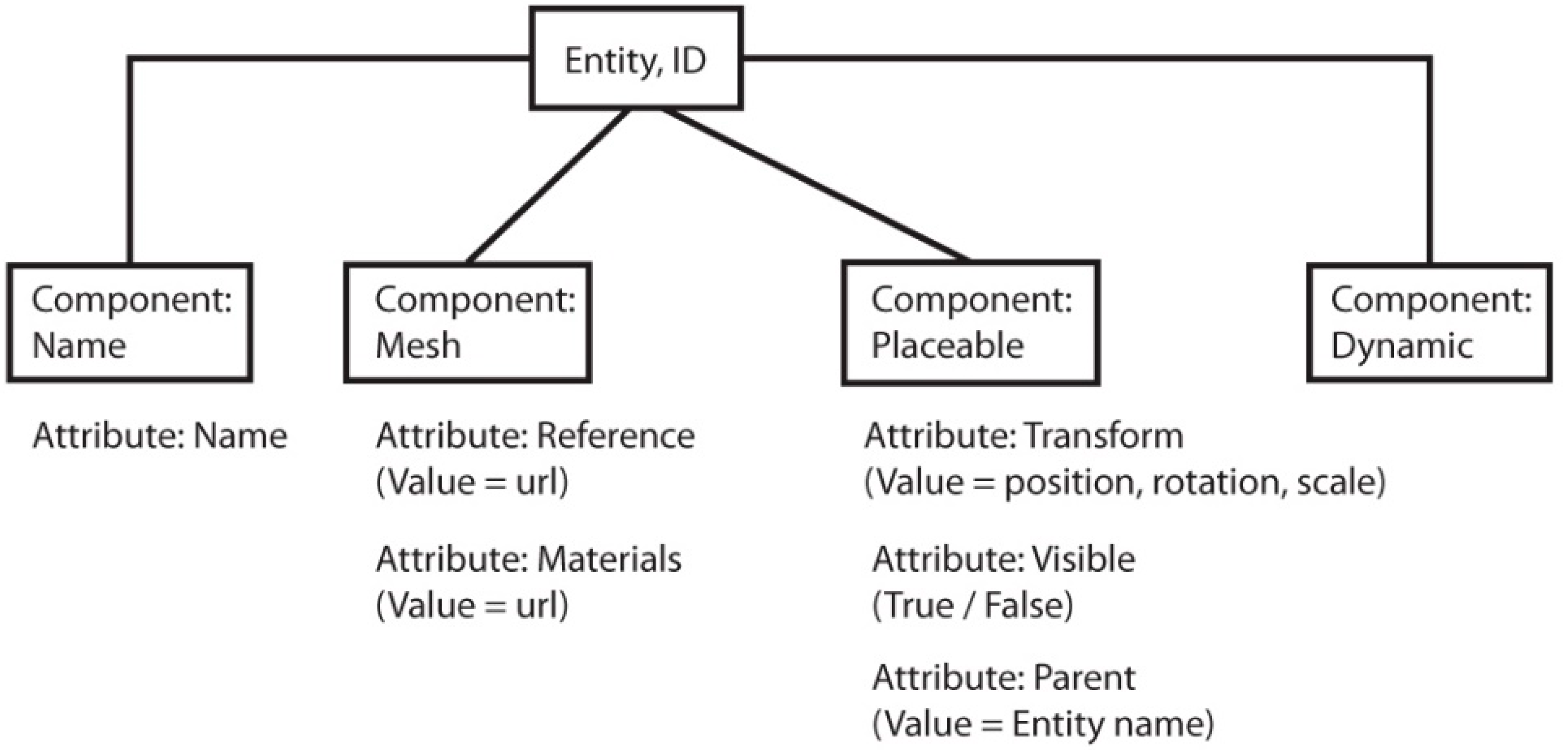

3. Generating Virtual World Scenes Based on the National Land Survey Data

The process of creating virtual world scenes from NLS open data was developed iteratively. To program the processing tools, we used the Python programming language [65]. As we wanted to create a virtual environment where additional data could be accurately added later on, we chose to use a coordinate system based on TM35FIN in the virtual world. Thus, all of the objects in the virtual world can be returned to a real-world coordinate system, and vice versa. To resolve some of the difficulties of using long coordinates, we defined a shifted coordinate system for the virtual world based on TM35FIN, with the specified shift being −6,672,000 for the north axis and −378,500 for the east axis.
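The coordinate shift described above can be sketched as a pair of small helper functions. This is a minimal illustration only: the function and constant names are hypothetical, and the sketch assumes that coordinates are handled as plain (east, north) pairs in metres.

```python
# Shift values quoted in the text; the names below are illustrative assumptions.
NORTH_SHIFT = -6_672_000.0
EAST_SHIFT = -378_500.0


def to_scene(east, north):
    """Convert TM35FIN (east, north) in metres to shifted scene coordinates."""
    return east + EAST_SHIFT, north + NORTH_SHIFT


def to_tm35fin(scene_east, scene_north):
    """Reverse the shift: scene coordinates back to TM35FIN (east, north)."""
    return scene_east - EAST_SHIFT, scene_north - NORTH_SHIFT
```

Keeping the shift in one place makes it straightforward to return any scene object to real-world coordinates, or to adapt the scripts to another region by changing the two constants.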

Existing 3D modeling software can be used when creating city models [66]. As a proof of concept, we created the first versions of 3D virtual environments from open data by combining a 3D modeling suite, Blender 3D [67], and some automated processing. After this, the automated processing methods were developed. The development goal was to minimize manual processing and to retain the object division of the original data. By using the same unique identifier numbers as in the topographic database, individual objects could be located based on their id. Thus, it would also be possible to replace individual objects with updated versions. In addition, we wanted to associate more data with the Meshmoon objects: road pavement information (paved or unpaved), building classifications, and so forth. This information was written out to the virtual world scene file as additional data components that were added to object entities.
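The idea of locating and replacing individual objects by their identifier can be illustrated with a simple in-memory index. The sketch below is not the scene file format of the platform; the record layout and the keys `id`, `kind`, and `attributes` are assumptions made for illustration.

```python
def _entity(feature):
    """Build a scene entity record from a source feature (hypothetical layout)."""
    return {"kind": feature["kind"], "attributes": dict(feature["attributes"])}


def build_scene_index(features):
    """Index scene entities by the unique id carried over from the source data."""
    return {feature["id"]: _entity(feature) for feature in features}


def replace_object(index, feature):
    """Replace one entity with an updated version, leaving all others untouched."""
    if feature["id"] not in index:
        raise KeyError(f"unknown object id: {feature['id']}")
    index[feature["id"]] = _entity(feature)
```

Because every entity is keyed by the identifier of the source feature, an update touches only one record rather than the whole data set.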

3.1. Buildings

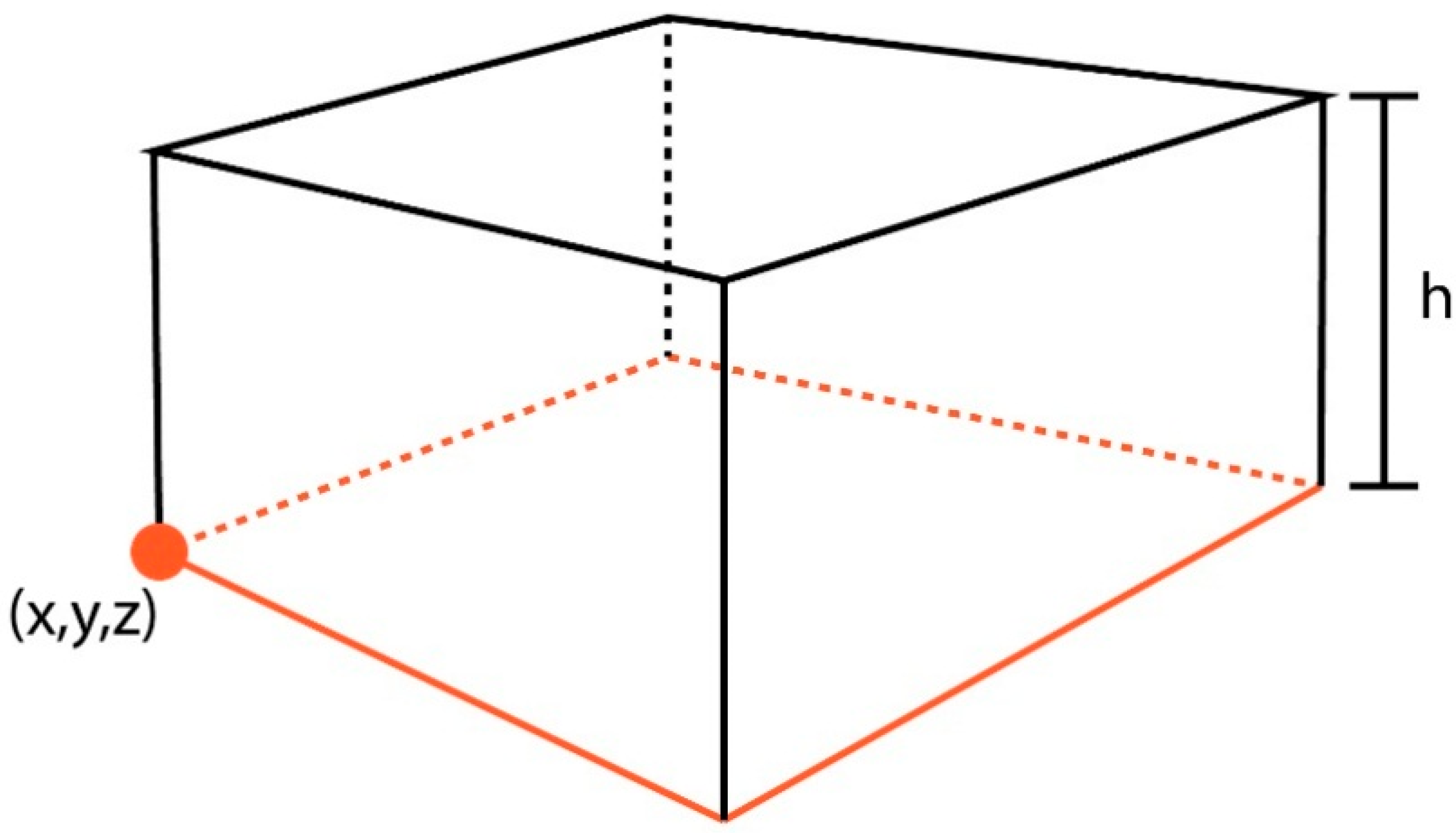

To represent buildings, simple block models (Figure 2) were created using the building outlines of the topographic database. To make editing and updating the models simple, the building objects were separated: an individual building was represented as an individual mesh model and an independent entity in the virtual world. In the original data, the building geometry was defined in a global coordinate system, TM35FIN. Since we did not want the mesh models to use global coordinates, each mesh model was generated in its own coordinate system, which used the first point of the building outline as the origin. The position of this origin in the virtual world scene was then used to place the mesh model. The building models were created by extruding the wall segments from the building outline. The height of the building was defined based on its height classification in the topographic database (1–3, with 3 reserved for chimneys, towers, etc.), resulting in two different building heights in the scene. The rendering material was chosen from two alternatives based on the height classification.
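The extrusion step can be sketched as follows. This is a simplified illustration under stated assumptions: the outline is given as a list of (x, y) points without a repeated closing point, z is taken as the vertical axis, and the height is passed in as a parameter because the heights assigned to the height classes are not given in the text. The function name is hypothetical.

```python
def extrude_outline(outline, height):
    """Create a block model from a building outline.

    Returns (origin, vertices, faces). Vertices are expressed in a local
    coordinate system whose origin is the first point of the outline.
    """
    origin_x, origin_y = outline[0]
    local = [(x - origin_x, y - origin_y) for x, y in outline]
    n = len(local)

    # Bottom ring first (indices 0..n-1), then top ring (indices n..2n-1).
    vertices = [(x, y, 0.0) for x, y in local] + [(x, y, height) for x, y in local]

    faces = []
    for i in range(n):
        j = (i + 1) % n
        faces.append((i, j, n + j, n + i))  # one wall quad per outline segment
    faces.append(tuple(range(n, 2 * n)))    # roof polygon closing the block
    return (origin_x, origin_y), vertices, faces
```

The returned origin is what would be written to the scene as the position of the entity, while the mesh itself only contains small local coordinates.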

Figure 2.

Single building model, with the original outline marked in orange, showing the height (h) and the origin (x,y,z) of the mesh model coordinate system.


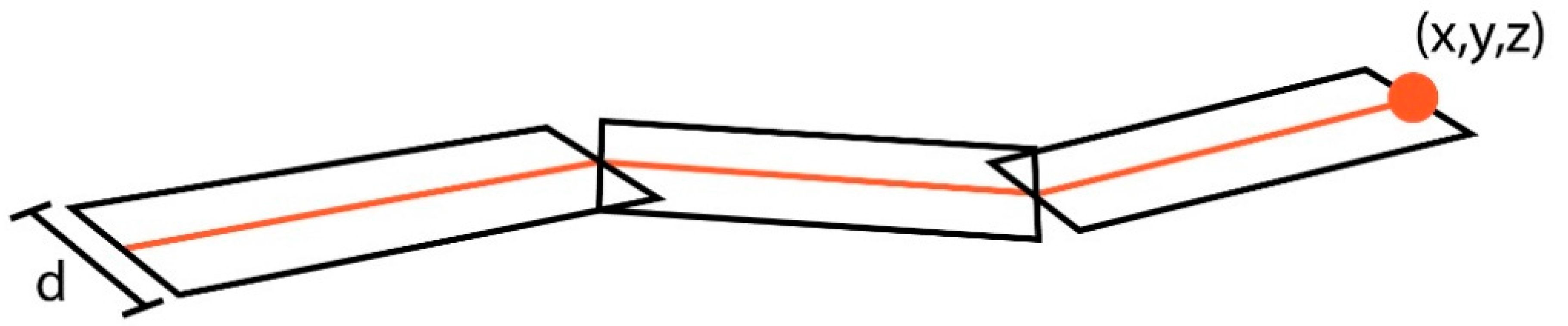

3.2. Roads

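The road objects were only described as polylines in the source data. Planar surfaces were created from them. Beginning with the starting and end points of an individual line segment (l):

$$l = \{(x_1, y_1),\ (x_2, y_2)\}$$

The line segment can also be described as a vector (v), with a perpendicular vector (vn) being:

$$v = (x_2 - x_1,\ y_2 - y_1), \qquad v_n = (-(y_2 - y_1),\ x_2 - x_1)$$

The length of the perpendicular vector (vn) is then ln:

$$l_n = \sqrt{(y_2 - y_1)^2 + (x_2 - x_1)^2}$$

Based on the length (ln), we can then define the unit vector of the normal vector and multiply it by the desired width of the offset surface (d), forming the corner points (si, i = 1, ..., 4) of the plane, based on the original line segment (l):

$$s_1 = (x_1, y_1) + \frac{d}{l_n} v_n, \quad s_2 = (x_2, y_2) + \frac{d}{l_n} v_n, \quad s_3 = (x_2, y_2) - \frac{d}{l_n} v_n, \quad s_4 = (x_1, y_1) - \frac{d}{l_n} v_n$$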

The object structure of the original data was preserved. The coordinates of the mesh model were defined using the same system as with the building models, taking the first point of each road object as its origin. The width and material of the road objects were defined based on the pavement class of the road objects in the topographic database (Figure 3).
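The construction of a planar surface from a single road segment can be sketched in a few lines. The sketch follows the prose description of the perpendicular vector and its normalization; it assumes that d is the offset applied to each side of the centre line (the text does not state whether d is the full or the half width), and the function name is hypothetical.

```python
import math


def segment_quad(p1, p2, d):
    """Return the four corner points of the plane built around segment p1-p2.

    p1, p2: (x, y) end points of the line segment.
    d: offset distance applied to both sides of the segment (assumption).
    """
    vx, vy = p2[0] - p1[0], p2[1] - p1[1]   # segment vector v
    nx, ny = -vy, vx                        # perpendicular vector vn
    ln = math.hypot(nx, ny)                 # length of vn
    if ln == 0:
        raise ValueError("degenerate segment: start and end points coincide")
    ox, oy = d * nx / ln, d * ny / ln       # unit normal scaled by d
    return [
        (p1[0] + ox, p1[1] + oy),
        (p2[0] + ox, p2[1] + oy),
        (p2[0] - ox, p2[1] - oy),
        (p1[0] - ox, p1[1] - oy),
    ]
```

Applying the function to each consecutive pair of points of a polyline yields the chain of planes that forms one road object.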

Figure 3.

Single road model containing several segments, with the original road line marked in orange, showing the width (d) and the origin of the mesh model coordinate system (x,y,z).


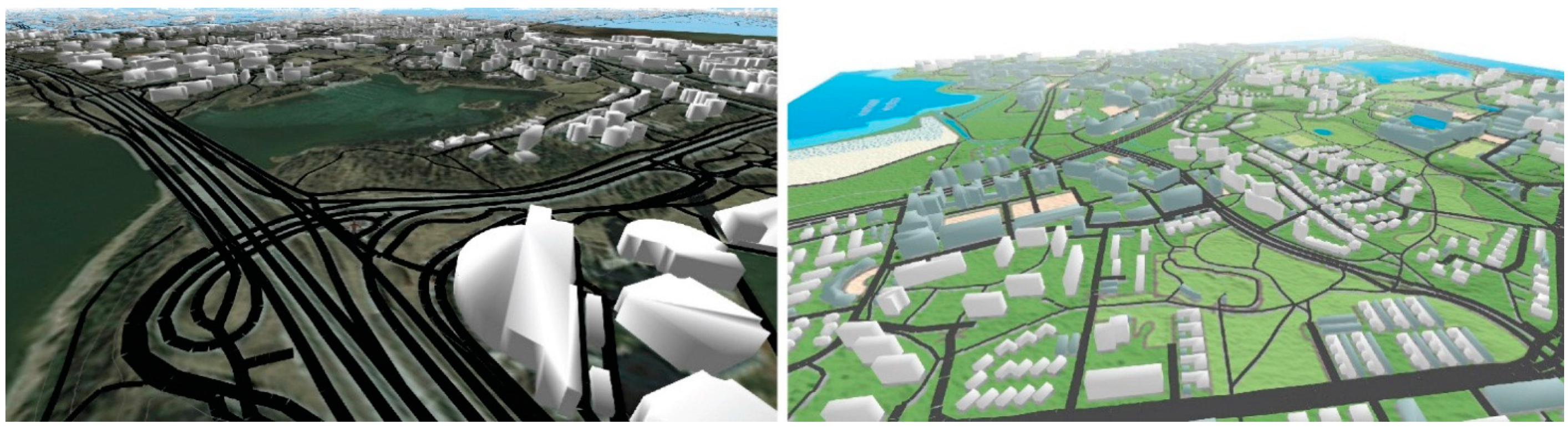

3.3. Terrain

The terrain was represented as a 3D surface, using the terrain component of the Meshmoon platform. As the terrain component required a height map image as the source data, the height map was created by triangulating the classified ALS points to a Digital Elevation Model (DEM), and then rasterizing the DEM. The terrain object in Meshmoon was adjusted to match the scale of the other objects, using the elevation contours as a reference. The size of the terrain was limited to 1.5 km by 1.5 km to ensure the performance of the resulting scene. To enhance the visual appearance of the terrain, two alternative textures were created for it using NLS open data: an aerial image and a map raster (Figure 4). The colors of the map raster were edited so that they suited the scene lighting better. In both cases, the position and scale of the images were defined based on the known dimensions of the image areas. The images were cropped to accurately fit the terrain. In addition, 2D textured planar surfaces were added to the borders of the terrain, with a lighter colored map raster as texture.
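The last step of the height map creation, converting a rasterized DEM into image values, can be illustrated as below. This is a sketch under assumptions: the DEM is taken as a list of rows of elevations in metres, the image is assumed to be 8-bit grayscale, and the function name is hypothetical. The triangulation and rasterization of the ALS points are not shown.

```python
def dem_to_heightmap(dem, levels=256):
    """Quantize a rasterized DEM into integer pixel values.

    Returns (pixels, z_min, z_step). The elevation of a pixel value p can be
    recovered as z_min + p * z_step, which gives the vertical scale needed
    when matching the terrain object to the other objects in the scene.
    """
    elevations = [z for row in dem for z in row]
    z_min, z_max = min(elevations), max(elevations)
    span = z_max - z_min
    # A completely flat DEM maps to pixel value 0 everywhere.
    z_step = span / (levels - 1) if span > 0 else 1.0
    pixels = [[round((z - z_min) / z_step) for z in row] for row in dem]
    return pixels, z_min, z_step
```

Storing the minimum elevation and the step alongside the image avoids having to estimate the vertical scale afterwards from reference data.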

Figure 4.

The terrain with the building and road models textured using an aerial image (left) and an edited map raster (right).


4. Results

By using a combination of 3D modeling and automated processing, three-dimensional, map-like virtual environments could be created for the Meshmoon platform. The method utilized as a proof of concept (Figure 5) still contained a number of problems. First, there were many manual steps involved in processing the data, especially with the building models. These manual steps were further complicated by long coordinates in the data, which were not supported by Blender’s user interface. The coordinate shift had to be applied manually before and after the editing phase, which made the process quite demanding and prone to error. In addition, the files that contained all of the building and road geometries were large and therefore slow to open and save. Since all objects of the same type were in the same file, editing, for example, an individual building required opening the entire file, manually editing the individual building, and then republishing the entire data set each time. Visually, there was also an issue with the extent of the objects, as the objects in the topographic database spanned a 12 km by 12 km area, whereas the terrain object (generated from ALS data) only spanned a 3 km by 3 km area. The Meshmoon software was unable to reliably display more than one terrain tile at a time with satisfactory performance, so it would not have been possible to expand the terrain by adding more ALS tiles.

Figure 5.

Buildings, roads, terrain, and contours in Meshmoon in an early, manually created version.


By developing the processing methods further, it was possible to solve some of the issues faced when using a combination of automated processing and 3D modeling. The issue caused by the different tile sizes of the terrain and other objects was resolved by cropping everything to a 1.5 km by 1.5 km area. With the developed automated processing, the number of steps required to create a scene was considerably reduced: the scripts produce an output that can directly be uploaded to the server. Using the scripts, a virtual world scene can be created from any area in Finland for which ALS data are available. The generation and publishing of objects as well as setting up the scene was accomplished in a few hours, starting with downloading the data sets. The process of scene creation is presented in Figure 6. Since the buildings and roads are modeled as individual objects, the object count is quite high. The 1.5 km by 1.5 km sample area contains approximately 770 mesh objects, but less than 2 MB of mesh data, as the meshes are quite simple.

Figure 6.

The process of scene creation. Automated steps are marked with green. The software implementations we have developed have been marked with an asterisk.


Utilizing a 3D Map in a Virtual World

In the virtual world scene, the user can move with a human-sized avatar or using a camera. Both a movable, downward-looking aerial camera (similar to most 3D map systems) and a free-flying camera (Figure 7) are available. The compass application for Meshmoon can be used to make it easier to navigate in the scene, as it always shows the direction in which the camera is pointing.

Figure 7.

The Meshmoon scene can be navigated using an avatar, an aerial camera, or a free-flying camera.


The building and road objects retain the object ids and object division of the original GML data. Object data from the GML are also included: for example, building use and height classes, which are presented visually, and street names, which are included as additional data in the road objects. The data can be viewed on an object-by-object basis or used to search for objects (Figure 8) using the application developed on the platform. For example, the distribution of residential buildings in the given area, or the location of a road with a specified name, can be visualized by using the developed tool.
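The two kinds of search described above, by a string contained in a road name and by an attribute value such as building use, can be sketched as simple filters over the object data. The record keys `id`, `name`, and `use` are hypothetical, and the sketch does not reproduce the application built on the platform.

```python
def find_by_name(objects, text):
    """Return ids of objects whose name contains the given string (case-insensitive)."""
    needle = text.lower()
    return [obj["id"] for obj in objects if needle in obj.get("name", "").lower()]


def find_by_attribute(objects, key, value):
    """Return ids of objects whose attribute `key` equals `value`."""
    return [obj["id"] for obj in objects if obj.get(key) == value]
```

The returned ids could then be used to highlight the matching entities in the scene, since each entity keeps the identifier of its source object.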

Figure 8.

On left: Searching all road objects where the name contains a given string. On right: Searching all buildings marked in the data as commercial-use buildings. The objects that were located are highlighted in orange.


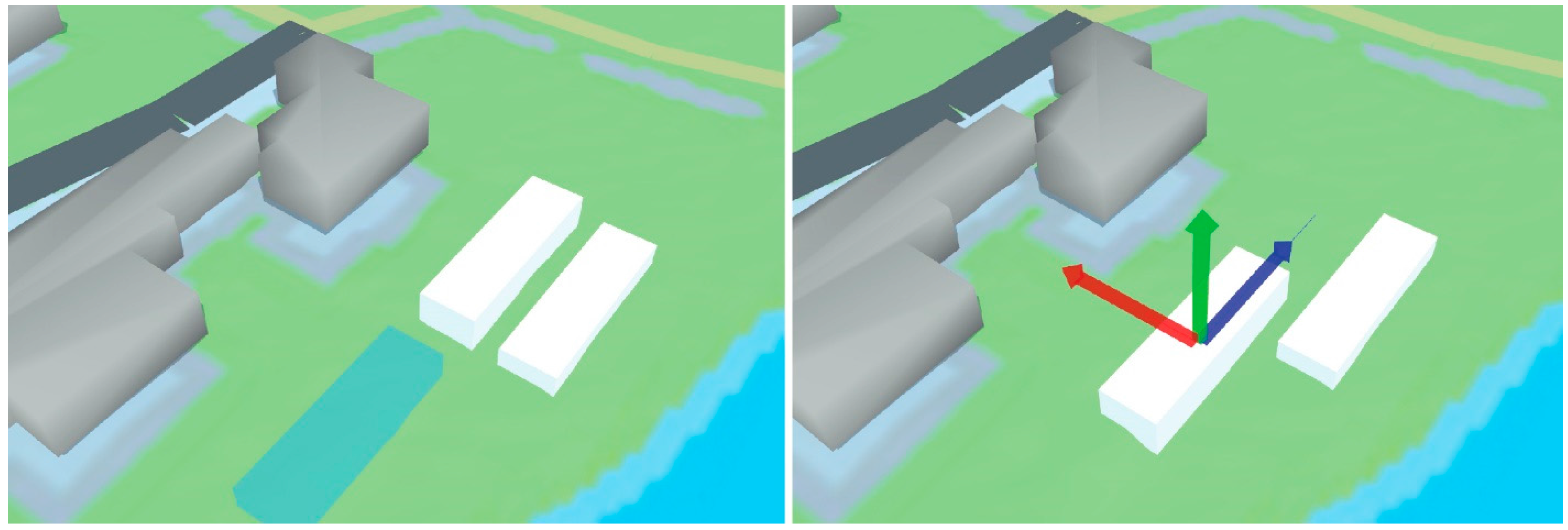

The content-creation tools of the Meshmoon platform can be used with the created scene. It is possible for users to move, scale, and delete existing building objects, to edit their data, or to create new objects using the existing mesh library (Figure 9). The mesh library contains a set of geometric primitives that can be added to the scene without using any external content production tools. By using these geometric primitives, proposed building masses can be added to the 3D map, and their location and size can be refined iteratively. All of this work is carried out directly in the 3D environment. For example, these tools could be utilized to carry out the early stages of an urban planning process. Since all data are on the server, changes made to the scene are immediately transferred to all other users online in the same scene, enabling collaboration.

Figure 9.

User adding a block element, and moving a block element.


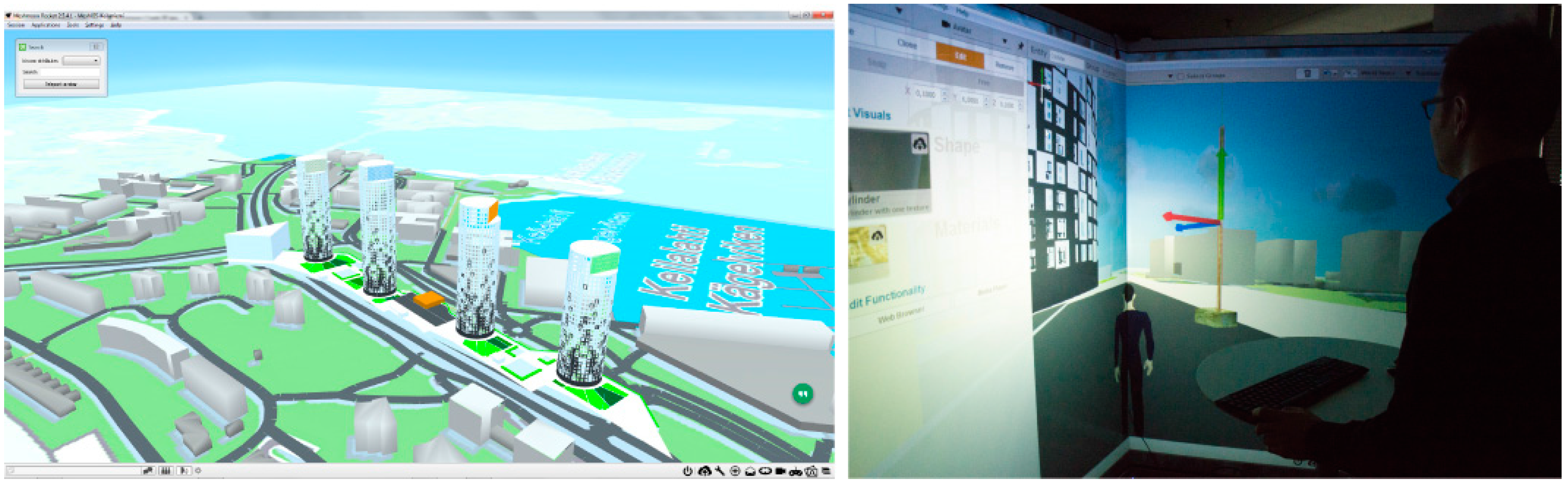

Other 3D models can also be imported to the same environment. Figure 10 (left) shows an early design stage building model, which has been imported to the scene and placed at its proposed location. The models can be explored with or without an avatar. Figure 10 (right) shows a user in a two-display CAVE, adding a set of simple mesh objects to the scene. When placing mesh objects, the users preferred to move in the scene as an avatar. For studying the model as a whole, an aerial camera was seen as more efficient, as moving long distances with the avatar was too time consuming. Inputting object coordinates directly was also possible, but required more effort from the user, as the scene operates in a shifted coordinate system, and no tools to automatically perform a reverse shift are provided by the platform.

Figure 10.

Left: A building model imported into the scene. Right: User interacting with the model in a CAVE.


5. Discussion

The creation of a virtual world scene was partially automated using the developed method. Building and road models were automatically created from the source data. In addition, the files containing their ids, positions, and any additional data were automatically written. The processing was performed separately for both object types.

The resulting scene files had to be manually uploaded to the server, after which uploading the mesh models and assembling the final scene was done automatically. Automating the remaining manual steps would require a higher degree of integration with the platform’s back end. Currently, the conversion from GML is performed on a local machine by running a series of Python scripts, and the scene is published using existing content production tools that are part of the Meshmoon system.

The creation of the terrain object consisted of processing the ALS point cloud to a height map image, and then using a terrain component of the virtual world to create a 3D surface from the height map image. This processing chain should clearly be improved in the future. Triangulating a set of ALS points to be used as the 3D mesh representing the terrain would be the most direct solution.

In conclusion, while some manual steps remain, it was possible to write automated scripts for the most labor-intensive tasks. Looking at the end result, the representation of buildings as simple block models is an acceptable solution in limited cases only. For more complex built entities, like churches, larger building complexes, and water towers, the block models fail to sufficiently represent the original building. The use of ALS data to obtain roof geometries for the buildings would help enhance their visual quality and increase the level of detail. The limitation of only two different height classes for buildings also reduces the visual quality of the scene. Currently, a 20-storey office tower cannot be distinguished from a five-storey apartment building by the mesh models alone. This could be resolved by using a data set containing more detailed height information, for example, by calculating the correct building heights from ALS data. In addition, having only a single height definition for a building footprint is problematic if the building is located on a sloped surface. In these cases, the building outline should also be extruded downwards to prevent a gap from appearing between the building and the terrain. Ideally, the data set used should have a terrain intersection curve for building objects. With this information, it would be possible to create the building models without this issue.
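One possible way of estimating building heights from ALS data, as suggested above, is sketched below. It is not part of the presented method. The sketch assumes that the ALS points are available as (x, y, z) tuples, that the ground elevation at the building is known (for example from the DEM), and that the median elevation of the points inside the footprint is an acceptable estimate of the roof level. All names are hypothetical.

```python
import statistics


def point_in_polygon(x, y, polygon):
    """Ray-casting test: is the point (x, y) inside the polygon?"""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Count only edges that cross the horizontal line through the point.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def building_height(outline, als_points, ground_z):
    """Estimate building height as the median roof elevation minus the ground level."""
    roof = [z for x, y, z in als_points if point_in_polygon(x, y, outline)]
    if not roof:
        return None  # no ALS points fall inside this footprint
    return statistics.median(roof) - ground_z
```

On sloped terrain, the lowest terrain elevation along the outline could be used as `ground_z`, so that the extruded walls reach the ground on every side.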

The representation of roads as chains of simple planes is not problem free, either. Currently, the resulting road meshes have obvious gaps where consecutive segments meet at an angle. For best results, a data set containing the geometry of road edges should be used.
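If road edge geometry is not available, the gaps between consecutive segments could alternatively be closed with a mitre join, in which both segments share the offset points at their common vertex. This is a different technique from the one used in the presented method and is shown only as a sketch; the function names are hypothetical, and d is assumed to be the offset on each side of the centre line.

```python
import math


def _unit_normal(p, q):
    """Left-hand unit normal of the segment from p to q."""
    vx, vy = q[0] - p[0], q[1] - p[1]
    length = math.hypot(vx, vy)
    return -vy / length, vx / length


def miter_offsets(a, b, c, d):
    """Return the (left, right) offset points at vertex b shared by segments a-b and b-c."""
    n1 = _unit_normal(a, b)
    n2 = _unit_normal(b, c)
    mx, my = n1[0] + n2[0], n1[1] + n2[1]
    m_len = math.hypot(mx, my)
    if m_len < 1e-9:
        raise ValueError("segments double back on each other; mitre is undefined")
    mx, my = mx / m_len, my / m_len
    # Stretch the mitre so that its projection onto each segment normal equals d.
    scale = d / (mx * n1[0] + my * n1[1])
    left = (b[0] + mx * scale, b[1] + my * scale)
    right = (b[0] - mx * scale, b[1] - my * scale)
    return left, right
```

For sharp turns the mitre point moves far from the vertex, so a practical implementation would probably limit the mitre length or fall back to a bevel.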

The presented system utilizes a shifted, orthogonal coordinate system in the virtual world. As such, it can be used for any region for which suitable source data is available, by using a different coordinate shift. While this simplifies the processing, it also creates a set of problems. If the size of the virtual world scene is expanded, a geographic coordinate system using latitudes and longitudes should eventually be utilized. Currently, our method does not include a solution for handling a scene with two differing coordinate systems.
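The coordinate shift itself amounts to subtracting a fixed origin from the projected map coordinates. A minimal sketch is given below; the function `make_shift` and the origin values are hypothetical and do not correspond to the shift actually used in this work.

```python
def make_shift(origin_easting, origin_northing):
    """Return functions converting between map and scene coordinates.

    The origin is the map coordinate that becomes (0, 0) in the scene.
    """

    def to_scene(easting, northing, height=0.0):
        # Map (projected) coordinates -> local scene coordinates.
        return (easting - origin_easting, northing - origin_northing, height)

    def to_map(x, y, z=0.0):
        # Local scene coordinates -> map (projected) coordinates.
        return (x + origin_easting, y + origin_northing, z)

    return to_scene, to_map


# Hypothetical origin and point, in metres of a projected coordinate system.
to_scene, to_map = make_shift(380000.0, 6670000.0)
demo_scene_point = to_scene(380125.5, 6670250.25, 12.0)
demo_map_point = to_map(*demo_scene_point)
```

Adapting the workflow to another region would then only require a different origin, provided the source data uses an orthogonal, metric coordinate system.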

The performance of the platform limits the size of the area displayed. As a visualization tool, Meshmoon is inferior to existing visualization applications (e.g., see [

68]). Additionally, the simplistic, untextured road and building models do not fully utilize the rendering potential offered by the platform. The road objects and roofs could be textured using an orthophoto, but texturing the building facades would require either oblique aerial images or terrestrial images. If a more complex model of the environment were used, such as that illustrated by [

16], the sense of immersion offered by the platform would be stronger. Having photo textures on buildings would also help to identify them. However, as the experience of developing small applications utilizing the data and the potential for collaborative interaction with the model illustrate, there are some benefits to using an existing virtual world system, especially as it permits application development. In addition, display devices such as a CAVE or the Oculus Rift can easily be used, as they are readily supported by the platform.

In the presented case, all processing methods were specifically developed for the data sets offered by NLS as open data, using a shifted coordinate system adapted for Southern Finland. As such, they are not directly transferable to other countries. However, a different coordinate shift and set of GML tags could be used to create similar virtual world scenes from other regions, if suitable source data is available. The emergence of a standardized city model format, CityGML [

69], reduces the difficulties of developing area-specific processing workflows, and paves the way for more widely applicable virtual world generation methods.

For building virtual worlds, there are other platforms besides Meshmoon, for example [

70,

71]. Commercial hosting is available at least for [

71]. The automated generation of simple 3D city models containing building and road objects from OpenStreetMap data has been implemented by ViziCities [

72], and can also be found in older GIS literature [

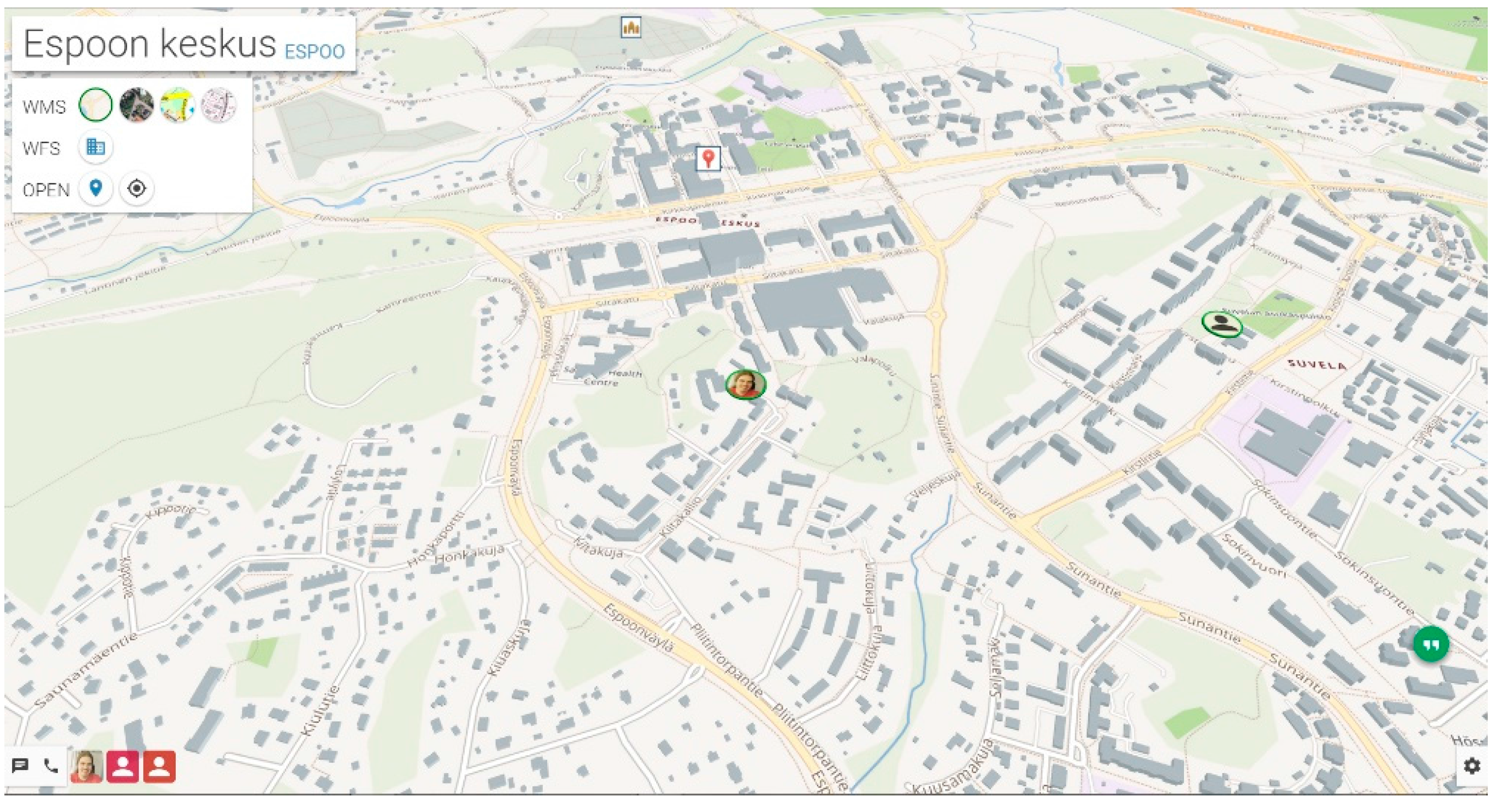

18]. However, the combination of multi-user virtual worlds and large, map-like scenes is less common. Meshmoon Geo [

73], a commercial tool based on realXtend technology and the Meshmoon platform, was released by Adminotech in February 2015, employing data from OSM and the city of Espoo. The scene (

Figure 11) is generated automatically from GIS data. In this case, a dataset containing accurate building heights is used. However, a flat terrain is used instead of a DEM. The data from the city is obtained directly from the GIS server via a WFS/WMS interface. Video conferencing tools can be used in the scene. So far, we have been unable to verify the ability of other existing virtual world platforms to support large, map-like scenes.
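To illustrate what obtaining data over a WFS interface typically involves, the sketch below assembles a standard WFS 2.0 `GetFeature` request as a URL. It does not describe how Meshmoon Geo is implemented: the endpoint, the feature type name, the bounding box, and the coordinate reference system are hypothetical placeholders, and no request is actually sent.

```python
from urllib.parse import urlencode, urlsplit, parse_qs


def wfs_getfeature_url(base_url, type_name, bbox, srs_name):
    """Build a WFS 2.0 GetFeature URL using key-value parameters.

    base_url: address of the WFS endpoint (hypothetical in this example).
    type_name: name of the feature type to request (hypothetical).
    bbox: (min_x, min_y, max_x, max_y); interpreted by the server in its
          default coordinate system unless specified otherwise.
    srs_name: coordinate reference system requested for the output.
    """
    params = {
        "service": "WFS",
        "version": "2.0.0",
        "request": "GetFeature",
        "typeNames": type_name,
        "bbox": ",".join(str(v) for v in bbox),
        "srsName": srs_name,
    }
    return base_url + "?" + urlencode(params)


# Placeholder endpoint and layer name; replace with values from a real service.
demo_url = wfs_getfeature_url(
    "https://example.org/wfs",
    "example:buildings",
    (380000, 6670000, 381000, 6671000),
    "EPSG:3067",
)
demo_query = parse_qs(urlsplit(demo_url).query)
```

The response to such a request is normally a GML document, which could then be passed to the same kind of tag-based processing as the file-based data sets.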

Figure 11.

A Meshmoon Geo scene.

6. Conclusions

This paper demonstrated methods for creating 3D maps from open data for a collaborative, up-to-date virtual world based on open source technology, while maintaining the attribute data related to the objects. The generation of a virtual world scene from NLS data was partially automated. The work was implemented in the Meshmoon virtual environment. Its ability to support existing 3D mesh formats was a significant enabling factor when developing the automated processing methods. As the client-server architecture of Meshmoon permits synchronized editing of world entities by users, it is possible to edit the map data in the scene collaboratively and thus to keep the maps updated through crowdsourcing. Improving the open data maps with collaborative mapping is one of the potential strengths of the platform. For more efficient editing and content creation in the scene, the content creation tools of the platform should be improved. Currently, the tools do not function fluently with an aerial camera, but are best suited for working with an avatar.

The possibility of developing applications in virtual worlds opens a significant new direction for the use of geographic data. It has great potential in areas like 3D data visualization [

30], urban planning [

74,

75], gaming [

76], or disaster management [

77]. As more GIS data are released as open data, we can expect that more applications utilizing such data will be developed, both by GIS professionals and by developers coming from other disciplines. To stimulate development, the data should be released in a format that easily permits the utilization of data for a variety of applications. The development of automatic processing methods that permit the use of open data-based 3D maps in virtual environments is a prerequisite for such applications.