Improved Dominance Soft Set Based Decision Rules with Pruning for Leukemia Image Classification

Abstract

1. Introduction

1.1. Research Motivation

1.2. Research Contribution

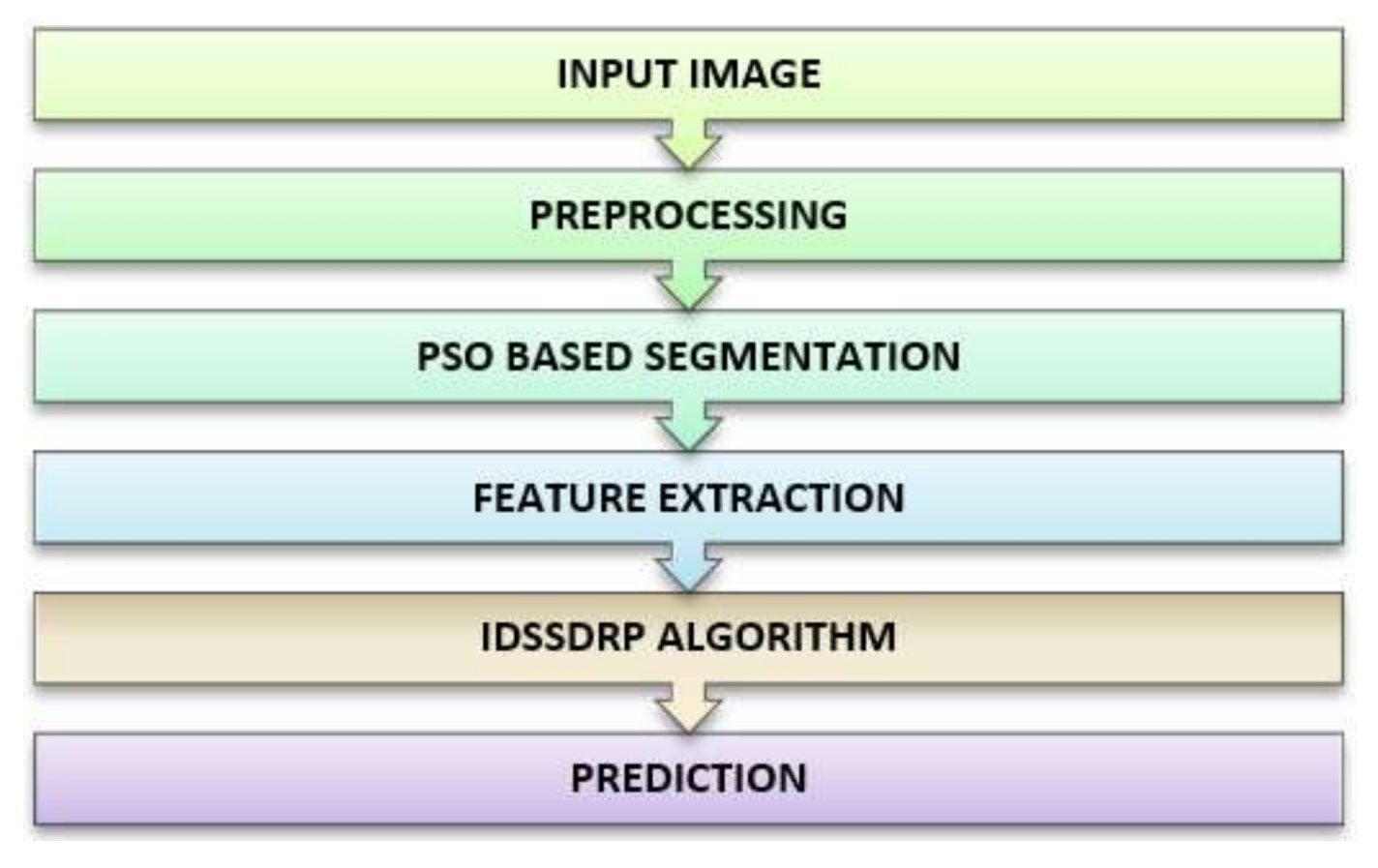

- A new algorithm based on Particle Swarm Optimization (PSO), a widely used search and optimization algorithm, is applied to segment the leukemia nucleus.

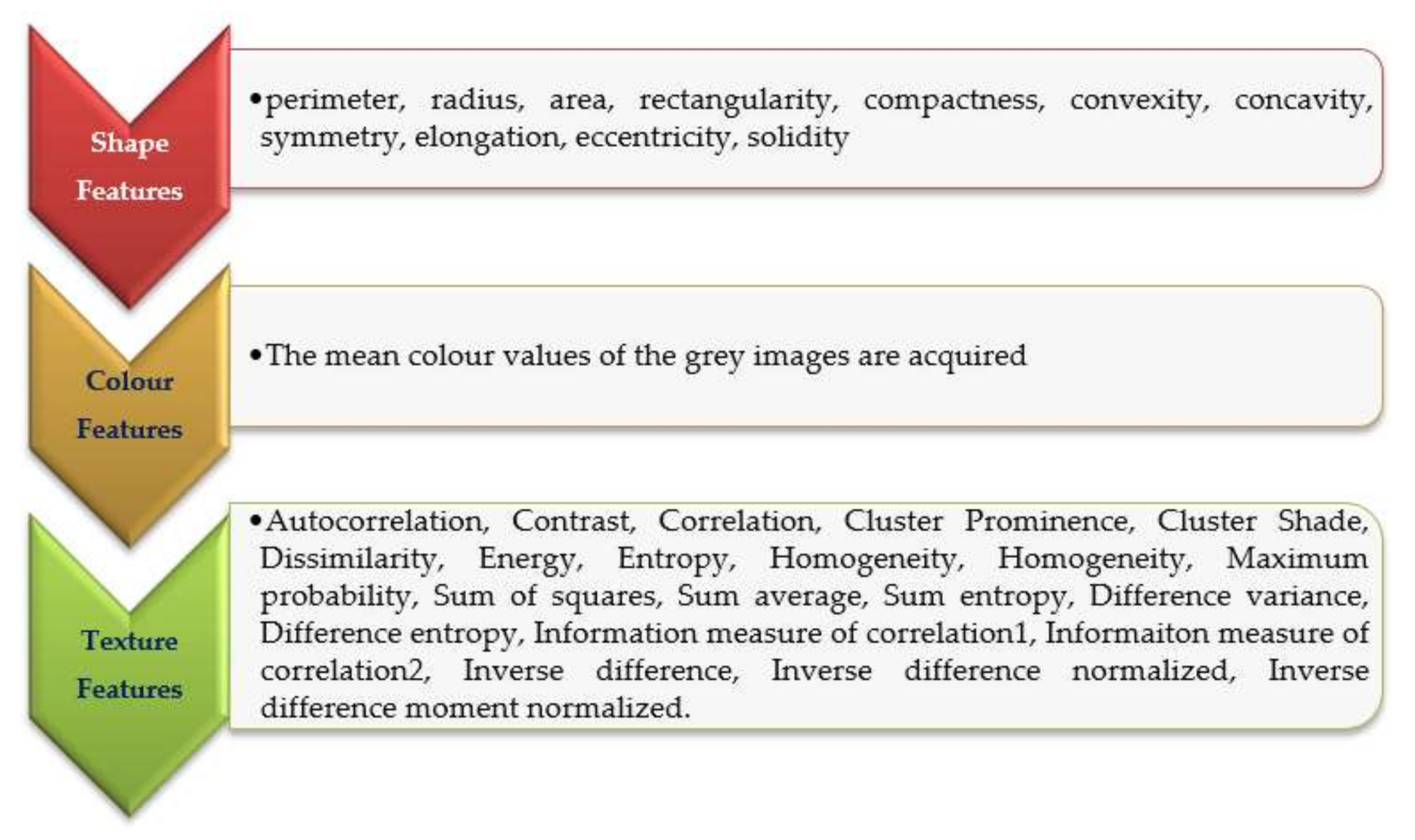

- Haralick texture features are extracted from the gray-level co-occurrence matrix (GLCM) in four directions (0°, 45°, 90°, and 135°), together with shape- and color-based features, from the segmented image.

- The improved dominance soft set-based decision rules with pruning (IDSSDRP) algorithm is applied to classify the leukemia images. Classification is carried out in three phases:

- In the first phase, an improved dominance soft set-based reduction technique, which uses the AND operation on multi-soft sets, is applied to find the reduct set.

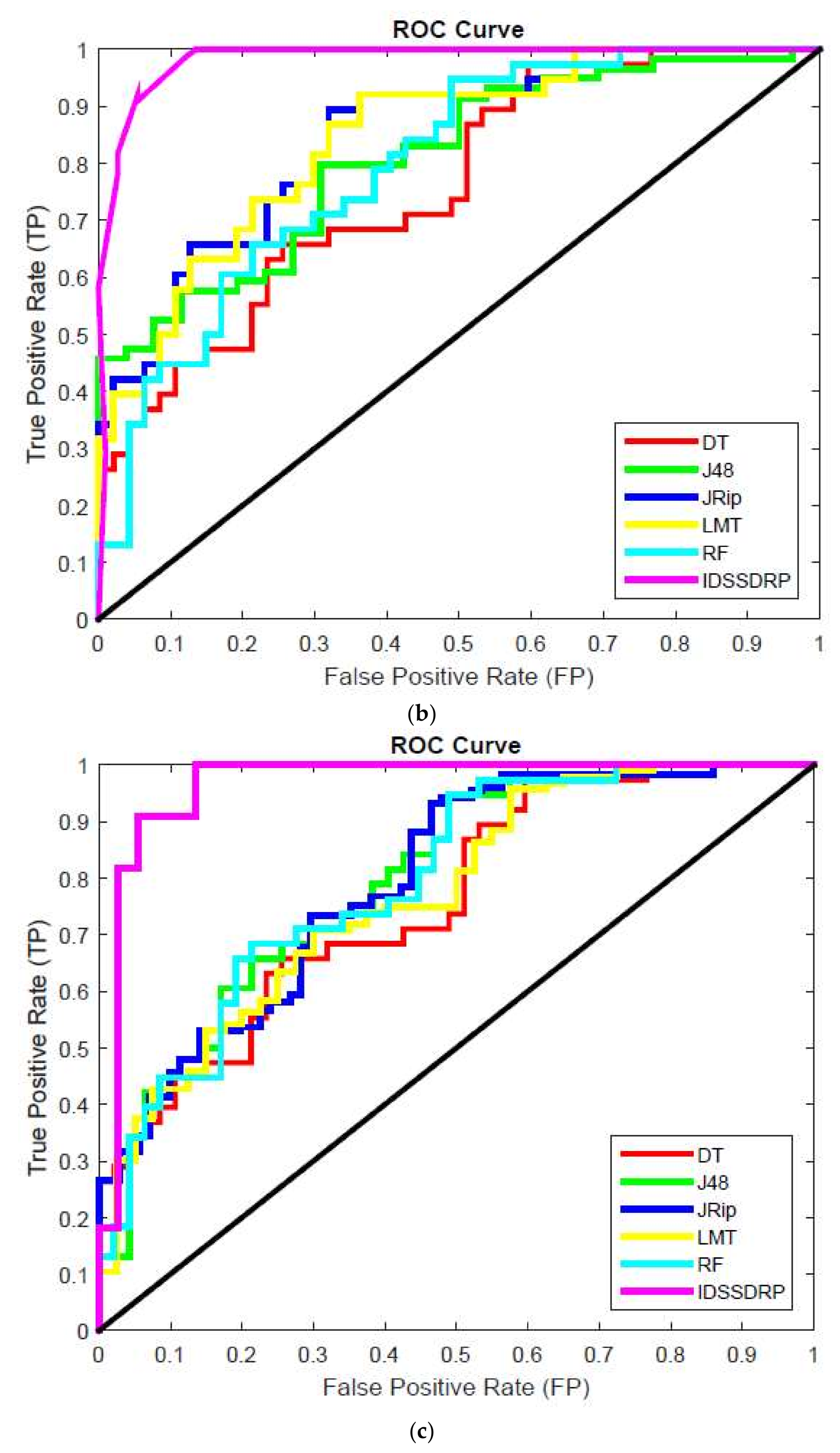

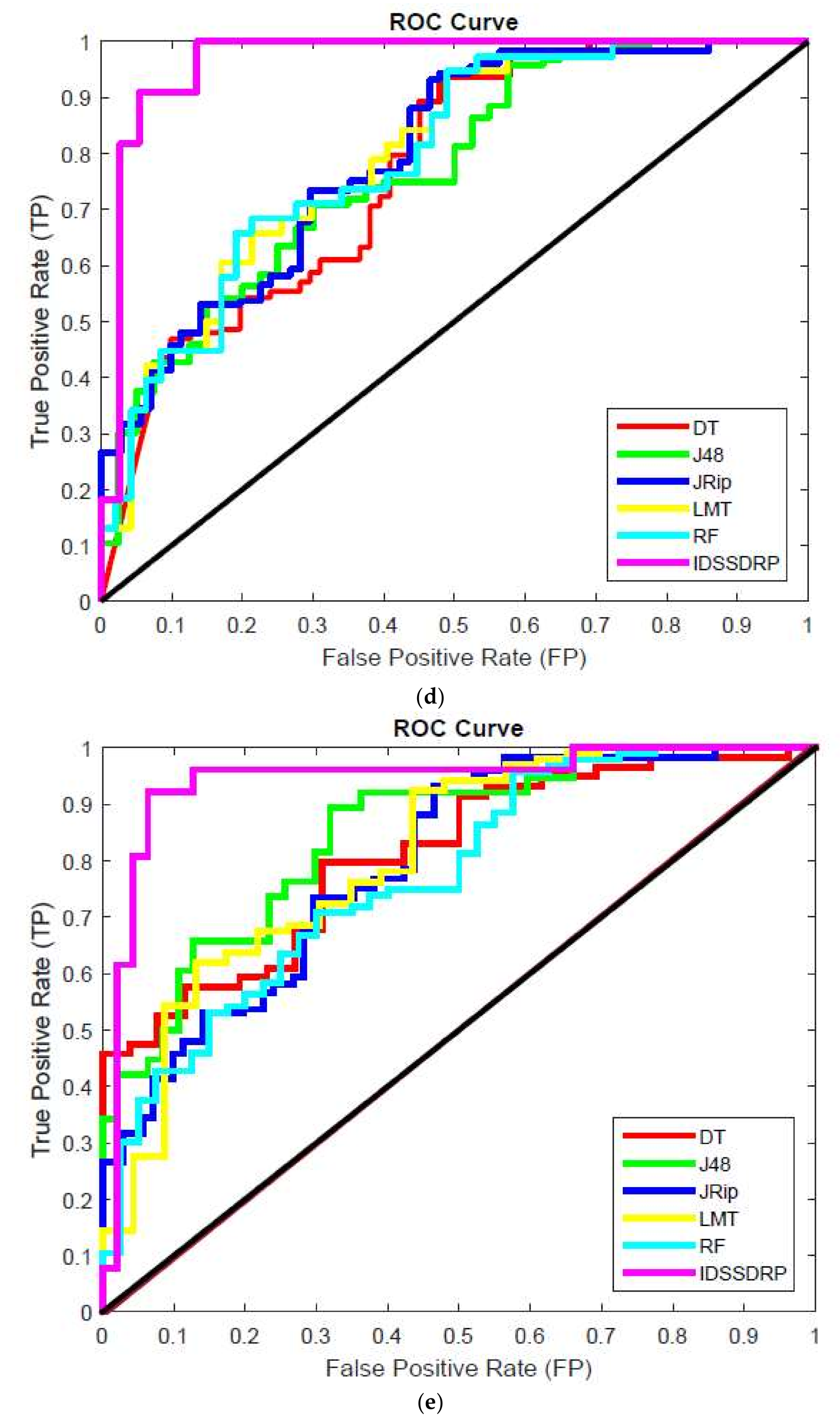

- In the second phase, the dominance soft set-based approach is applied to generate decision rules. Receiver operating characteristic (ROC) curve analysis is used to evaluate the efficiency of the proposed decision rules.

- In the third phase, a rule pruning method simplifies the rules to reduce the processing time needed to predict the disease from a tumor image.

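- The proposed method is compared with the Decision Tree, J48, JRip, LMT, and Random Forest classifiers using measures such as accuracy, sensitivity, specificity, precision, F1 score, and the Matthews correlation coefficient (MCC).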
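
The texture step in the list above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it builds a symmetric, normalised GLCM at pixel distance 1 for each of the four directions and computes only three of the Haralick descriptors (the results tables list 22 extracted features per dataset). The function names, the distance of 1, and the toy image are assumptions made for the example.

```python
import numpy as np

# Pixel offsets (row, col) for the four GLCM directions at distance 1 (assumed).
OFFSETS = {0: (0, 1), 45: (-1, 1), 90: (-1, 0), 135: (-1, -1)}

def glcm(image, angle, levels):
    """Return the symmetric, normalised co-occurrence matrix for one direction."""
    dr, dc = OFFSETS[angle]
    rows, cols = image.shape
    counts = np.zeros((levels, levels), dtype=np.float64)
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dr, c + dc
            if 0 <= r2 < rows and 0 <= c2 < cols:
                counts[image[r, c], image[r2, c2]] += 1
    counts += counts.T  # count every pixel pair in both orders
    return counts / counts.sum()

def haralick_subset(p):
    """Compute three Haralick-style descriptors from a normalised GLCM."""
    i, j = np.indices(p.shape)
    return {
        "contrast": float(np.sum(p * (i - j) ** 2)),
        "energy": float(np.sum(p ** 2)),
        "homogeneity": float(np.sum(p / (1.0 + (i - j) ** 2))),
    }

# Hypothetical 4x4 image quantised to 4 gray levels.
toy = np.array([[0, 0, 1, 1],
                [0, 0, 1, 1],
                [0, 2, 2, 2],
                [2, 2, 3, 3]])

# One feature dictionary per direction, mirroring the GLCM_0 ... GLCM_135 datasets.
features = {angle: haralick_subset(glcm(toy, angle, levels=4)) for angle in OFFSETS}
```

Computing one feature vector per direction, rather than averaging over directions, matches how the results are reported per GLCM dataset.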

2. Related Work

3. Methods and Materials

3.1. Input Image

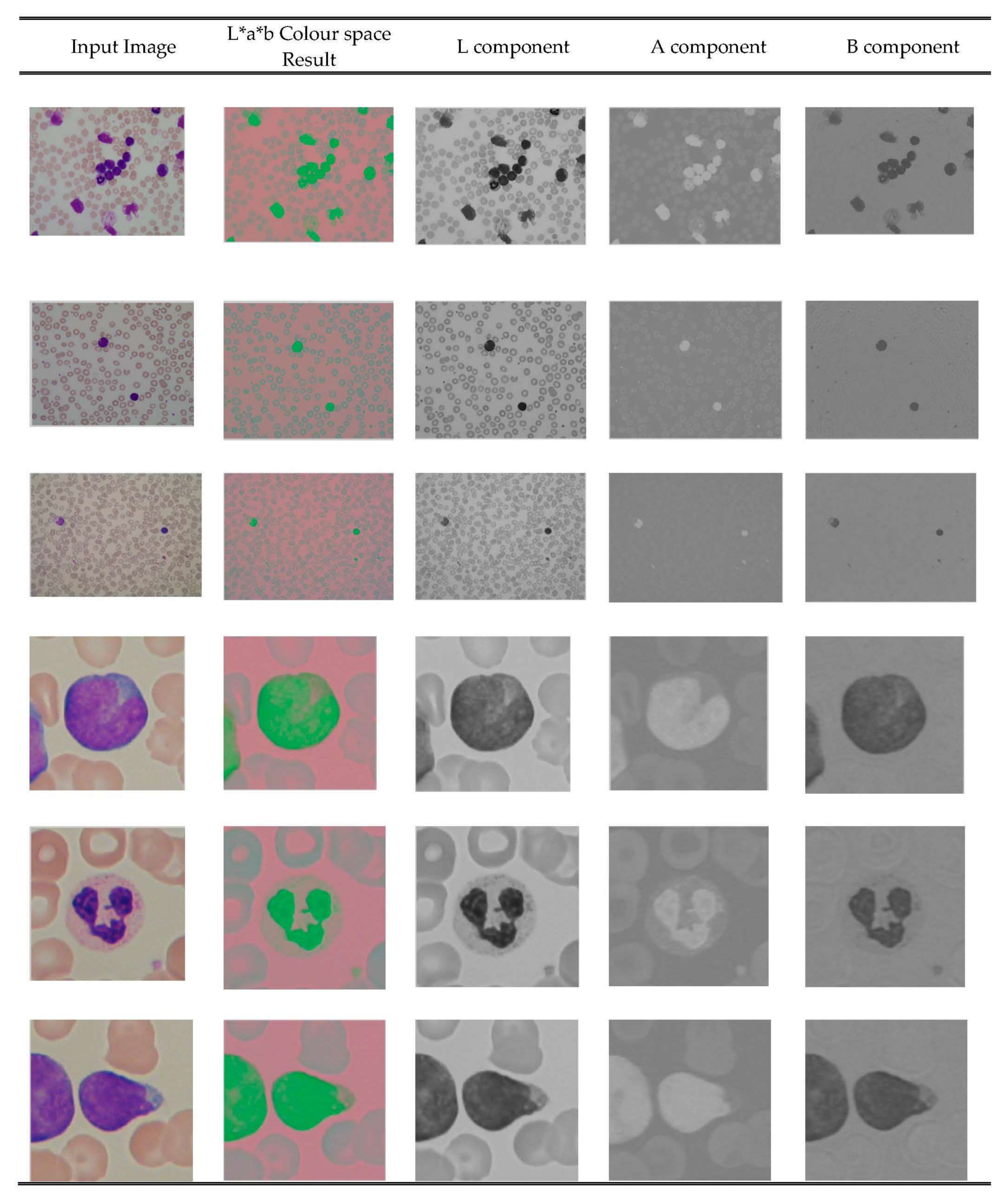

3.2. Preprocessing

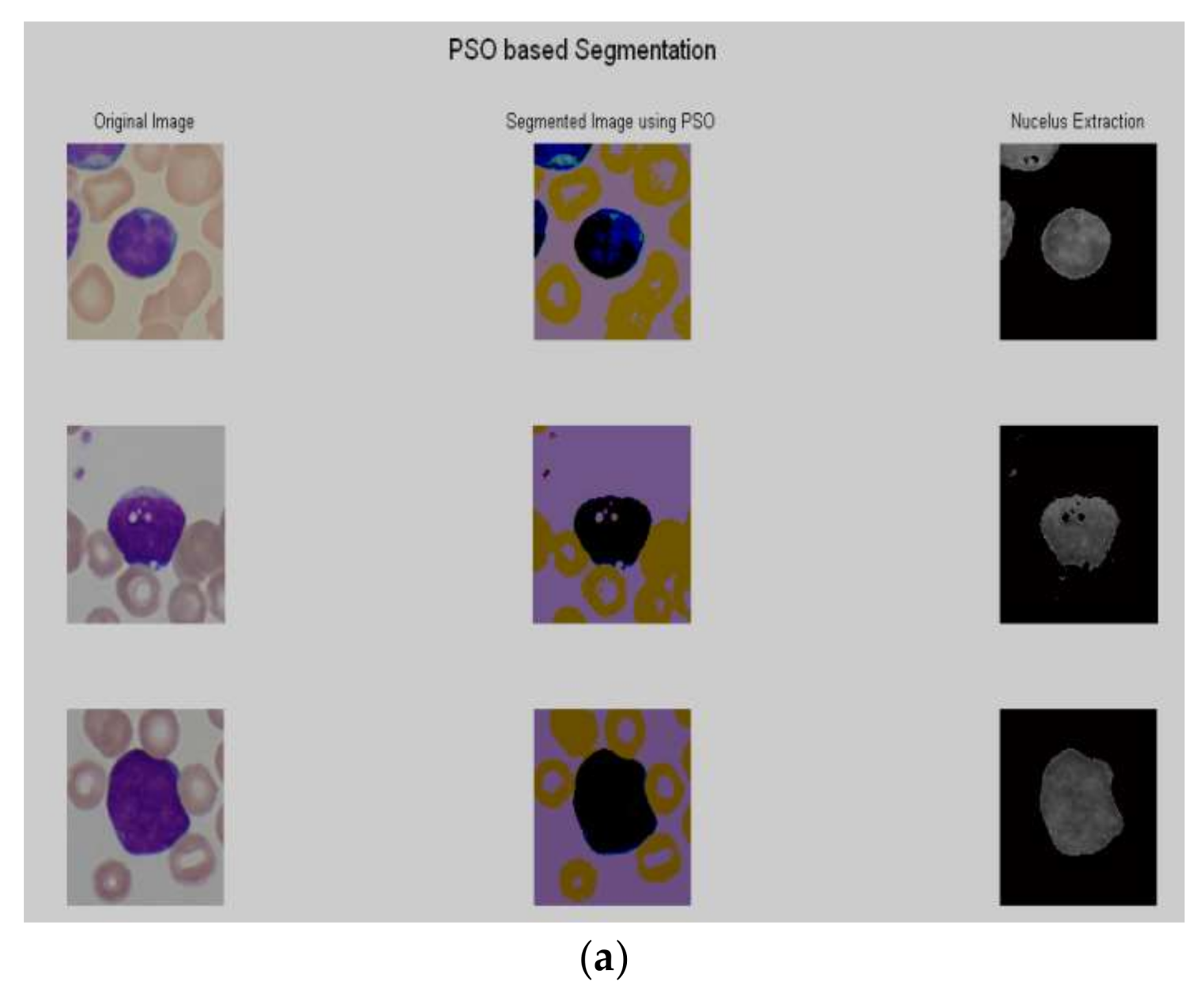

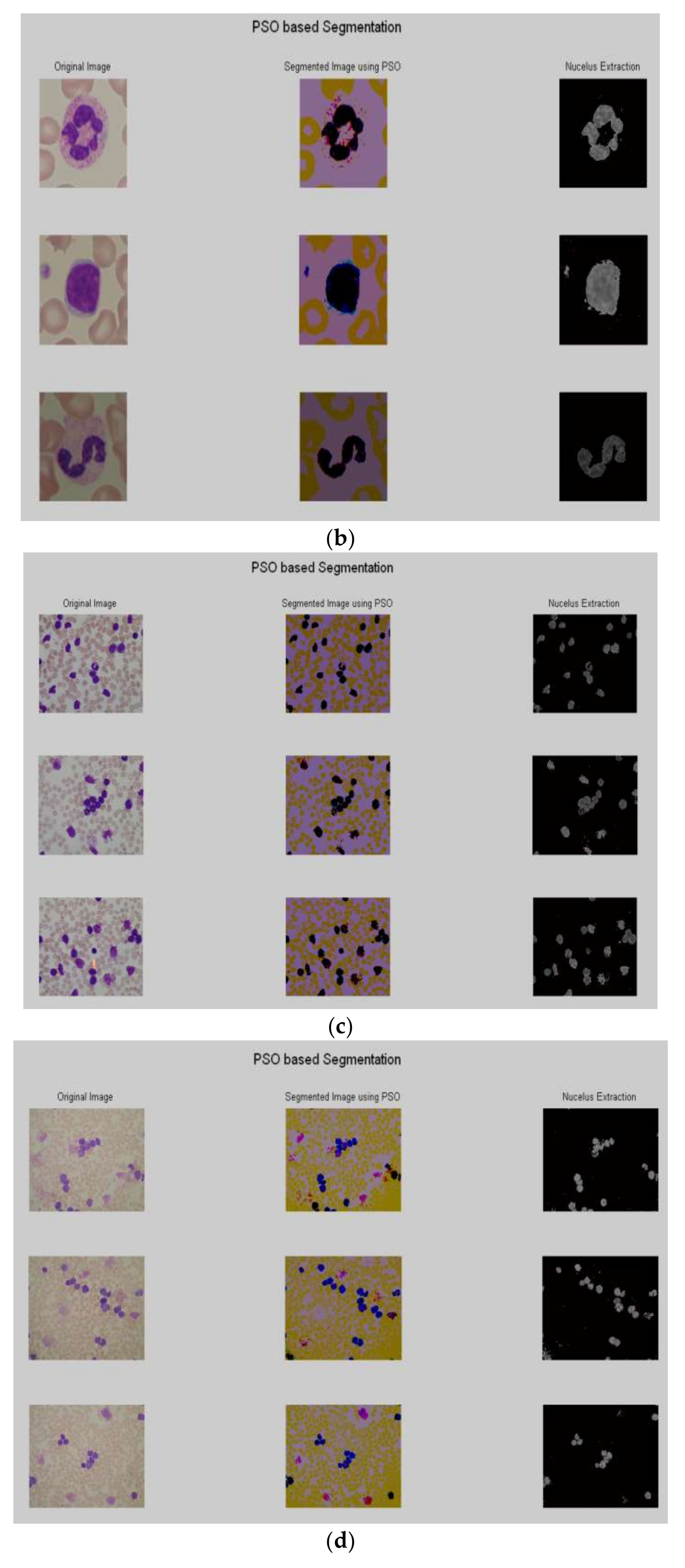

3.3. Segmentation

Algorithm 1. Pseudocode for the PSO algorithm
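
Because the body of Algorithm 1 is not reproduced here, the following is a generic global-best PSO sketch and not the authors' segmentation procedure. It assumes, purely for illustration, that the swarm searches for a single gray-level threshold that maximises the between-class variance of the pixel intensities; the inertia weight `w`, the acceleration coefficients `c1` and `c2`, the swarm size, and the synthetic pixel data are all hypothetical choices.

```python
import numpy as np

def pso_minimize(fitness, low, high, n_particles=20, n_iters=50,
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    """Minimise a 1-D fitness function with a basic global-best PSO."""
    rng = np.random.default_rng(seed)
    pos = rng.uniform(low, high, n_particles)   # particle positions
    vel = np.zeros(n_particles)                 # particle velocities
    pbest = pos.copy()                          # personal best positions
    pbest_fit = np.array([fitness(x) for x in pos])
    gbest = pbest[np.argmin(pbest_fit)]         # global best position
    for _ in range(n_iters):
        r1 = rng.random(n_particles)
        r2 = rng.random(n_particles)
        # Velocity update: inertia + cognitive pull + social pull.
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = np.clip(pos + vel, low, high)
        fit = np.array([fitness(x) for x in pos])
        improved = fit < pbest_fit
        pbest[improved] = pos[improved]
        pbest_fit[improved] = fit[improved]
        gbest = pbest[np.argmin(pbest_fit)]
    return float(gbest)

def negative_between_class_variance(pixels, threshold):
    """Fitness to minimise: negated between-class variance of a two-way split."""
    dark = pixels[pixels <= threshold]
    bright = pixels[pixels > threshold]
    if dark.size == 0 or bright.size == 0:
        return 0.0
    w_dark = dark.size / pixels.size
    w_bright = bright.size / pixels.size
    return -w_dark * w_bright * (dark.mean() - bright.mean()) ** 2

# Synthetic intensities: a dark cluster (nucleus-like) and a bright background.
pixels = np.concatenate([np.linspace(40, 80, 200), np.linspace(160, 200, 800)])
threshold = pso_minimize(
    lambda t: negative_between_class_variance(pixels, t), 0.0, 255.0)
nucleus_mask = pixels <= threshold
```

On this synthetic data any threshold between the two clusters gives the same split, so the search only needs one particle to land in that gap; real stained-smear images would need a fitness function suited to their colour channels.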

3.4. Feature Extraction

3.5. Dominance Based Soft Set Theory

3.6. Dominance Soft Set Based Decision Rules

4. The Proposed Method: Improved Dominance Soft Set Based Decision Rules with Rule Pruning (IDSSDRP)

Algorithm 2. Improved dominance soft set-based attribute reduction using AND operation

Phase 1: Improved dominance soft set-based attribute reduction using AND operation

- Construct the multi-valued information table (F, S).

Algorithm 3. Decision rule generation

Phase 2: Decision rule (DR) generation

Algorithm 4. Decision rule pruning

Phase 3: Rule pruning (RP)

4.1. Case Study

4.1.1. Phase-1 (Attribute Reduction)

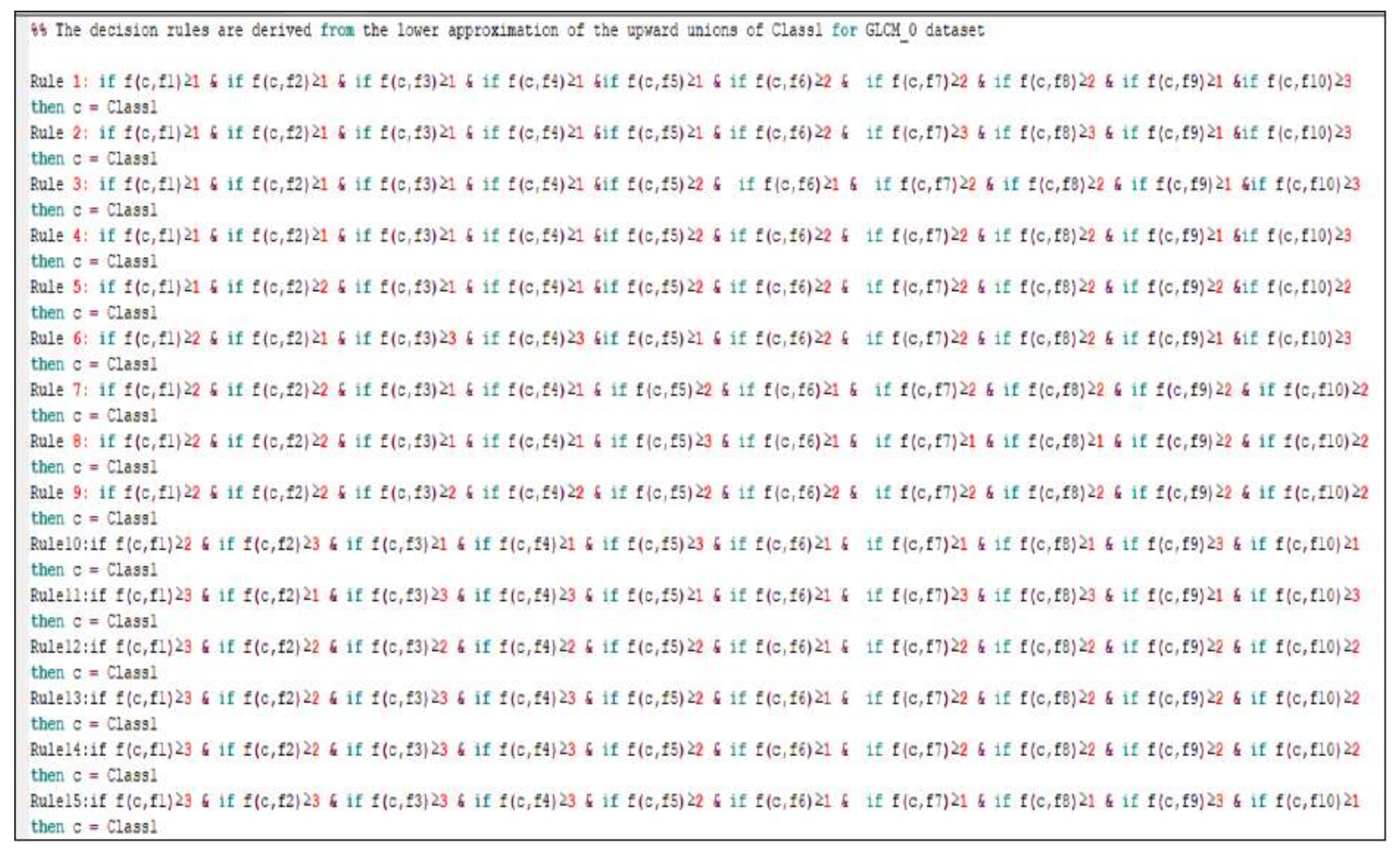

4.1.2. Phase-2 (Decision Rules Generation)

- Rule 1:

- Rule 2:

- Rule 3:

- Rule 4:

- Rule 5:

- Rule 6:

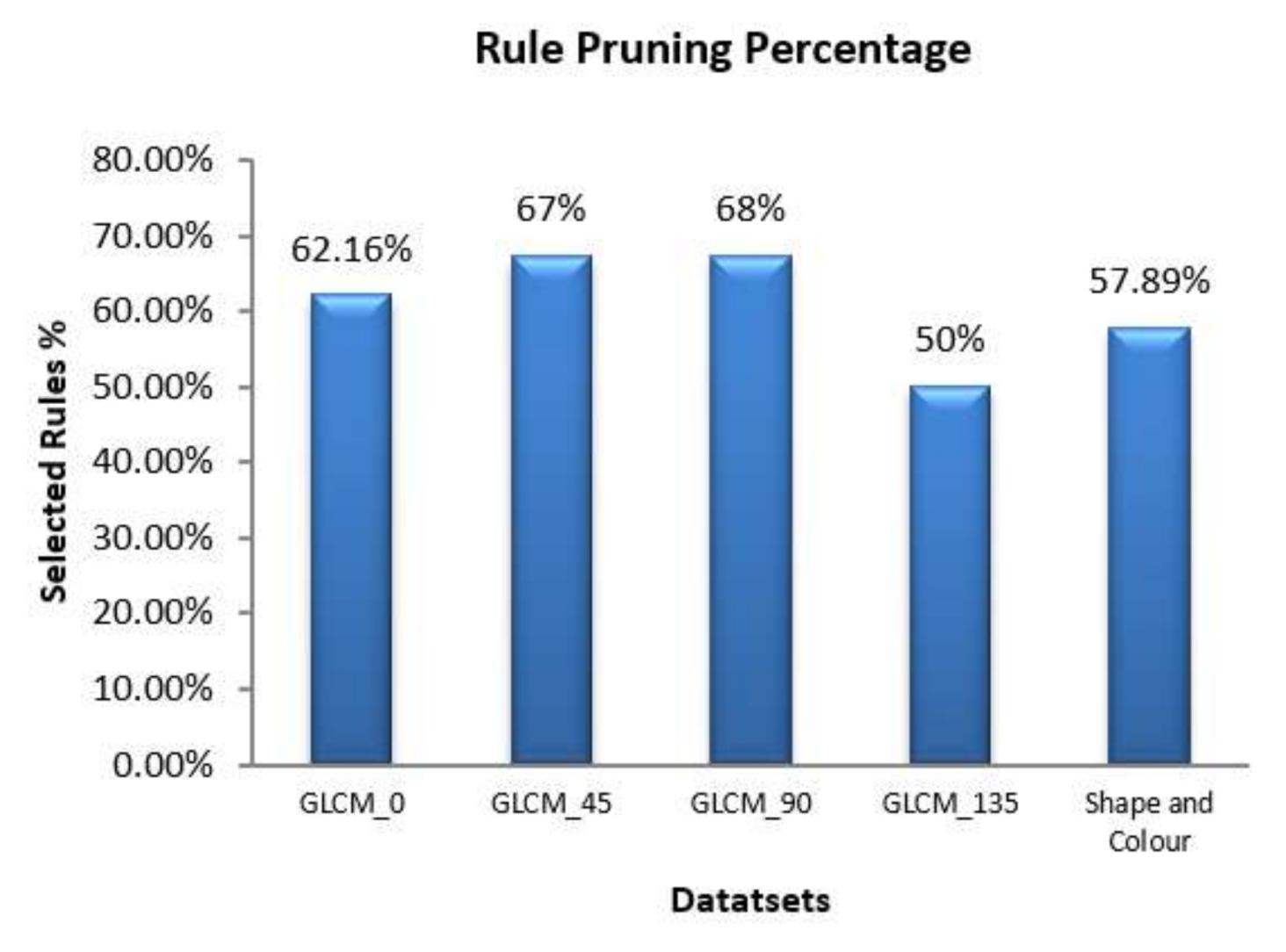

4.1.3. Phase-3 (Decision Rule Pruning)

- Rule 1:

- Rule 2:

- Rule 3:
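
The idea of Phase 3 can be illustrated with a generic condition-dropping sketch. It is not Algorithm 4 itself, whose steps are not reproduced here: it assumes that a condition may be removed from a rule whenever the shortened rule still covers only objects of the same decision class. The data are the eight candidates from the candidate table in this document; the helper names and the greedy left-to-right order are hypothetical.

```python
ATTRS = ("a1", "a2", "a3", "a4")

# (a1 Degree, a2 Work_Experience, a3 German_Lang, a4 Personality, decision)
TABLE = [
    ("MBA", "Medium", "Known", "Excellent", "Accept"),
    ("MBA", "Low", "Known", "Normal", "Reject"),
    ("M.Sc", "Low", "Known", "Good", "Reject"),
    ("MCA", "High", "Known", "Normal", "Accept"),
    ("MCA", "Medium", "Known", "Normal", "Reject"),
    ("MCA", "High", "Known", "Excellent", "Accept"),
    ("MBA", "High", "Unknown", "Good", "Accept"),
    ("M.Sc", "Low", "Unknown", "Excellent", "Reject"),
]

def covers(rule, row):
    """True if the row satisfies every attribute = value condition of the rule."""
    return all(row[ATTRS.index(attr)] == value for attr, value in rule.items())

def is_consistent(rule, decision):
    """True if the rule covers at least one row and only rows of this decision."""
    covered = [row for row in TABLE if covers(rule, row)]
    return bool(covered) and all(row[-1] == decision for row in covered)

def prune(rule, decision):
    """Greedily drop conditions while the rule stays consistent with the table."""
    pruned = dict(rule)
    for attr in list(rule):
        trial = {a: v for a, v in pruned.items() if a != attr}
        if trial and is_consistent(trial, decision):
            pruned = trial
    return pruned

# Start from the fully specified rule induced by candidate 4.
full_rule = dict(zip(ATTRS, TABLE[3][:4]))
pruned_rule = prune(full_rule, TABLE[3][4])
```

For candidate 4 the four-condition rule shrinks to the single condition a2 = High, which covers candidates 4, 6, and 7, all of class Accept. Shorter rules need fewer comparisons per image, which is the processing-time benefit the pruning phase targets.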

5. Results and Discussions

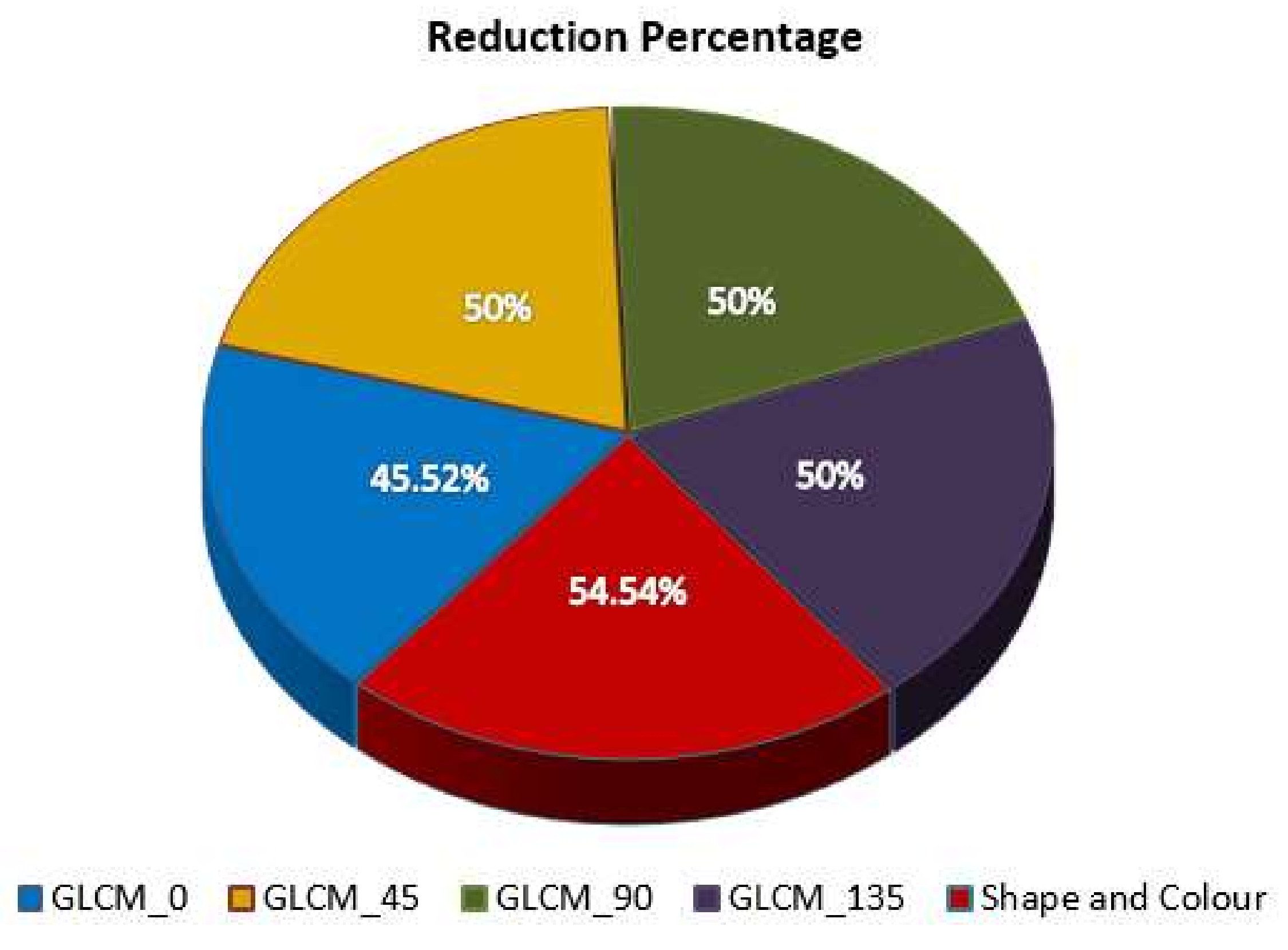

5.1. Performance Analysis of Attribute Reduction Algorithm

5.2. Evaluation of Proposed IDSSDRP Algorithm

5.3. Graphical Performance Assessment for IDSSDRP

6. Conclusions and Future Scope

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

Appendix B


| Candidate | a1 (Degree) | a2 (Work_Experience) | a3 (German_Lang) | a4 (Personality) | d (Decision_Class) |
|---|---|---|---|---|---|
| 1 | MBA | Medium | Known | Excellent | Accept |
| 2 | MBA | Low | Known | Normal | Reject |
| 3 | M.Sc | Low | Known | Good | Reject |
| 4 | MCA | High | Known | Normal | Accept |
| 5 | MCA | Medium | Known | Normal | Reject |
| 6 | MCA | High | Known | Excellent | Accept |
| 7 | MBA | High | Unknown | Good | Accept |
| 8 | M.Sc | Low | Unknown | Excellent | Reject |

| a1: MBA | a1: M.Sc | a1: MCA | a2: Medium | a2: Low | a2: High | a3: Known | a3: Unknown | a4: Excellent | a4: Normal | a4: Good | d: Accept | d: Reject |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 0 | 0 | 1 | 0 | 0 | 1 | 0 | 1 | 0 | 0 | 1 | 0 |
| 1 | 0 | 0 | 0 | 1 | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 1 |
| 0 | 1 | 0 | 0 | 1 | 0 | 1 | 0 | 0 | 0 | 1 | 0 | 1 |
| 0 | 0 | 1 | 0 | 0 | 1 | 1 | 0 | 0 | 1 | 0 | 1 | 0 |
| 0 | 0 | 1 | 1 | 0 | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 1 |
| 0 | 0 | 1 | 0 | 0 | 1 | 1 | 0 | 1 | 0 | 0 | 1 | 0 |
| 1 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 0 | 0 | 1 | 1 | 0 |
| 0 | 1 | 0 | 0 | 1 | 0 | 0 | 1 | 1 | 0 | 0 | 0 | 1 |
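
The Boolean-valued table above can be derived mechanically from the candidate table. The sketch below is illustrative only: it maps every (attribute, value) pair to the set of candidates that take that value, which is one way to represent a multi-soft set, and then reads off the 0/1 rows. The function names are hypothetical; `soft_and` uses the standard soft set AND operation, in which a pair of parameters is mapped to the intersection of their object sets.

```python
ATTRS = ("a1", "a2", "a3", "a4", "d")

# Candidate table: object id -> (Degree, Work_Experience, German_Lang, Personality, Decision)
ROWS = {
    1: ("MBA", "Medium", "Known", "Excellent", "Accept"),
    2: ("MBA", "Low", "Known", "Normal", "Reject"),
    3: ("M.Sc", "Low", "Known", "Good", "Reject"),
    4: ("MCA", "High", "Known", "Normal", "Accept"),
    5: ("MCA", "Medium", "Known", "Normal", "Reject"),
    6: ("MCA", "High", "Known", "Excellent", "Accept"),
    7: ("MBA", "High", "Unknown", "Good", "Accept"),
    8: ("M.Sc", "Low", "Unknown", "Excellent", "Reject"),
}

def multi_soft_set(rows):
    """Map each (attribute, value) pair to the set of objects having that value."""
    soft = {}
    for idx, attr in enumerate(ATTRS):          # attribute by attribute
        for obj, values in rows.items():        # values in order of first appearance
            soft.setdefault((attr, values[idx]), set()).add(obj)
    return soft

def boolean_rows(rows, soft):
    """Return one 0/1 row per object, with one column per (attribute, value) pair."""
    return {obj: [int(obj in members) for members in soft.values()] for obj in rows}

def soft_and(soft, key_a, key_b):
    """AND of two parameters: objects that satisfy both (set intersection)."""
    return soft[key_a] & soft[key_b]

soft = multi_soft_set(ROWS)
table = boolean_rows(ROWS, soft)
high_and_known = soft_and(soft, ("a2", "High"), ("a3", "Known"))
```

Iterating attribute by attribute keeps the 13 columns in the same order as the table, so each generated row can be compared with it directly.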

| Dataset | No. of Features Extracted | No. of Features Selected by IDSSA |
|---|---|---|
| GLCM_0 | 22 | 10 |
| GLCM_45 | 22 | 11 |
| GLCM_90 | 22 | 11 |
| GLCM_135 | 22 | 11 |
| Shape and Colour | 22 | 12 |
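
As a quick reading aid for the table above, the share of features removed per dataset can be computed directly from the two count columns. The variable names are illustrative, and the percentages are simple arithmetic on the tabulated counts, not additional results from the study.

```python
extracted = 22  # features extracted per dataset, from the table

# Features kept after attribute reduction, from the table.
selected = {
    "GLCM_0": 10,
    "GLCM_45": 11,
    "GLCM_90": 11,
    "GLCM_135": 11,
    "Shape and Colour": 12,
}

# Percentage of features removed by the reduction step, rounded to one decimal.
reduction_pct = {
    name: round(100 * (extracted - kept) / extracted, 1)
    for name, kept in selected.items()
}
```

Roughly half of the features are removed in every dataset, with GLCM_0 reduced the most (12 of 22 features dropped).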

Results obtained for the confusion matrix (HI = healthy image, UI = unhealthy image; columns give the predicted output of each classifier). For an actual healthy image, a prediction of HI is correct (TP) and a prediction of UI is incorrect (FN). For an actual unhealthy image, a prediction of HI is incorrect (FP) and a prediction of UI is correct (TN).

| Actual Output | DT: HI | DT: UI | J48: HI | J48: UI | JRip: HI | JRip: UI | LMT: HI | LMT: UI | RF: HI | RF: UI | Proposed: HI | Proposed: UI |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Healthy Image | 119 | 56 | 122 | 53 | 114 | 61 | 122 | 53 | 106 | 68 | 162 | 13 |
| Unhealthy Image | 13 | 180 | 14 | 179 | 14 | 179 | 14 | 179 | 2 | 191 | 3 | 190 |

| Metrics | Explanation | Equation |
|---|---|---|
| Sensitivity (or Recall) (in %) | Measures the true positive rate | |
| Specificity (in %) | Measures the true negative rate | |
| Accuracy (in %) | Estimates the probability that the class attribute is predicted correctly | |
| Precision (in %) | Degree of exactness | |
| F1 score | The harmonic mean of precision and recall | |
| Error Rate (= 1 − accuracy) | An approximation of the misclassification probability | |
| Matthews Correlation Coefficient (MCC) | The correlation between the actual and predicted classes | |
| Lift | The ratio of the outcomes obtained with and without the model | |
| G-mean | The geometric mean of the prediction accuracies for both classes | |
| Youden's index | Sensitivity plus specificity minus one | |
| Balanced Classification Rate (BCR) | The mean of sensitivity and specificity | |
| Balanced Error Rate (BER) | The mean of the errors in each class; also called the Half Total Error Rate (HTER) | |
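
The Equation column of the table above is empty in this copy, so the sketch below uses the standard textbook definitions of these measures rather than the authors' exact formulas. Two entries are explicit assumptions: lift is taken as precision divided by the prevalence of the positive class, and G-mean is taken as the square root of precision times sensitivity, because that form matches the reported values (for example, sqrt(0.8023 × 0.9452) ≈ 0.8708 for the Decision Tree column of the first metrics table). The function name is hypothetical; the example counts are the Proposed column of the confusion matrix table.

```python
import math

def classification_metrics(tp, fn, fp, tn):
    """Compute the listed measures (as fractions, not %) from confusion counts."""
    total = tp + fn + fp + tn
    sensitivity = tp / (tp + fn)          # true positive rate (recall)
    specificity = tn / (tn + fp)          # true negative rate
    precision = tp / (tp + fp)
    accuracy = (tp + tn) / total
    mcc_den = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": accuracy,
        "precision": precision,
        "f1": 2 * precision * sensitivity / (precision + sensitivity),
        "error_rate": 1 - accuracy,
        "mcc": (tp * tn - fp * fn) / mcc_den,
        # Assumption: lift = precision / prevalence of the positive class.
        "lift": precision / ((tp + fn) / total),
        # Assumption: G-mean of precision and sensitivity (matches reported values).
        "g_mean": math.sqrt(precision * sensitivity),
        "youden": sensitivity + specificity - 1,
        "bcr": (sensitivity + specificity) / 2,
        "ber": 1 - (sensitivity + specificity) / 2,
    }

# Proposed method, counts taken from the confusion matrix table.
proposed = classification_metrics(tp=162, fn=13, fp=3, tn=190)
```

BCR and BER always sum to one, and Youden's index equals 2 × BCR − 1, so these three measures rank classifiers identically.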

| Prediction Metrics (GLCM_0 dataset) | Decision Tree | J48 | JRip | LMT | Random Forest | Proposed |
|---|---|---|---|---|---|---|
| Accuracy | 79.81 | 79.81 | 78.37 | 78.85 | 78.37 | 98.08 |
| Sensitivity | 94.52 | 94.52 | 97.26 | 93.84 | 93.84 | 98.63 |
| Specificity | 45.16 | 45.16 | 33.87 | 43.55 | 41.94 | 96.77 |
| Precision | 80.23 | 80.23 | 77.60 | 79.65 | 79.19 | 98.63 |
| Error Rate | 0.20 | 0.20 | 0.22 | 0.21 | 0.22 | 0.02 |
| MCC | 0.48 | 0.48 | 0.44 | 0.45 | 0.44 | 0.95 |
| F1 measure | 86.79 | 86.79 | 86.32 | 86.16 | 85.89 | 98.63 |
| G-mean | 87.08 | 87.08 | 86.87 | 86.45 | 86.20 | 98.63 |
| Lift value | 1.14 | 1.14 | 1.11 | 1.13 | 1.13 | 1.41 |
| Youden's index | 0.40 | 0.40 | 0.31 | 0.37 | 0.36 | 0.95 |
| BCR | 69.84 | 69.84 | 65.57 | 68.69 | 67.89 | 97.70 |
| BER | 0.30 | 0.30 | 0.34 | 0.31 | 0.32 | 0.02 |

| Prediction Metrics (GLCM_45 dataset) | Decision Tree | J48 | JRip | LMT | Random Forest | Proposed |
|---|---|---|---|---|---|---|
| Accuracy | 77.88 | 78.85 | 79.33 | 79.81 | 78.85 | 97.12 |
| Sensitivity | 92.47 | 97.26 | 92.47 | 92.47 | 93.15 | 97.26 |
| Specificity | 43.55 | 35.48 | 48.39 | 50.00 | 45.16 | 96.77 |
| Precision | 79.41 | 78.02 | 80.84 | 81.33 | 80.00 | 98.61 |
| Error Rate | 0.22 | 0.21 | 0.21 | 0.20 | 0.21 | 0.03 |
| MCC | 0.43 | 0.45 | 0.47 | 0.48 | 0.45 | 0.93 |
| F1 measure | 85.44 | 86.59 | 86.26 | 86.54 | 86.08 | 97.93 |
| G-mean | 85.69 | 87.11 | 86.46 | 86.72 | 86.33 | 97.93 |
| Lift value | 1.13 | 1.11 | 1.15 | 1.16 | 1.14 | 1.40 |
| Youden's index | 0.36 | 0.33 | 0.41 | 0.42 | 0.38 | 0.94 |
| BCR | 68.01 | 66.37 | 70.43 | 71.23 | 69.16 | 97.02 |
| BER | 0.32 | 0.34 | 0.30 | 0.29 | 0.31 | 0.03 |

| Prediction Metrics (GLCM_90 dataset) | Decision Tree | J48 | JRip | LMT | Random Forest | Proposed |
|---|---|---|---|---|---|---|
| Accuracy | 81.25 | 81.25 | 81.25 | 82.21 | 82.21 | 99.04 |
| Sensitivity | 96.58 | 96.58 | 96.58 | 97.26 | 97.26 | 99.32 |
| Specificity | 45.16 | 45.16 | 45.16 | 46.77 | 46.77 | 98.39 |
| Precision | 80.57 | 80.57 | 80.57 | 81.14 | 81.14 | 99.32 |
| Error Rate | 0.19 | 0.19 | 0.19 | 0.18 | 0.18 | 0.01 |
| MCC | 0.52 | 0.52 | 0.52 | 0.55 | 0.55 | 0.98 |
| F1 measure | 87.85 | 87.85 | 87.85 | 88.47 | 88.47 | 99.32 |
| G-mean | 88.21 | 88.21 | 88.21 | 88.84 | 88.84 | 99.32 |
| Lift value | 1.15 | 1.15 | 1.15 | 1.16 | 1.16 | 1.41 |
| Youden's index | 0.42 | 0.42 | 0.42 | 0.44 | 0.44 | 0.98 |
| BCR | 70.87 | 70.87 | 70.87 | 72.02 | 72.02 | 98.85 |
| BER | 0.29 | 0.29 | 0.29 | 0.28 | 0.28 | 0.01 |

| Prediction Metrics (GLCM_135 dataset) | Decision Tree | J48 | JRip | LMT | Random Forest | Proposed |
|---|---|---|---|---|---|---|
| Accuracy | 79.81 | 79.81 | 79.81 | 78.85 | 76.92 | 97.60 |
| Sensitivity | 94.52 | 94.52 | 92.47 | 91.10 | 89.04 | 98.63 |
| Specificity | 45.16 | 45.16 | 50.00 | 50.00 | 48.39 | 95.16 |
| Precision | 80.23 | 80.23 | 81.33 | 81.10 | 80.25 | 97.96 |
| Error Rate | 0.20 | 0.20 | 0.20 | 0.21 | 0.23 | 0.02 |
| MCC | 0.48 | 0.48 | 0.48 | 0.46 | 0.41 | 0.94 |
| F1 measure | 86.79 | 86.79 | 86.54 | 85.81 | 84.42 | 98.29 |
| G-mean | 87.08 | 87.08 | 86.72 | 85.95 | 84.53 | 98.29 |
| Lift value | 1.14 | 1.14 | 1.16 | 1.16 | 1.14 | 1.40 |
| Youden's index | 0.40 | 0.40 | 0.42 | 0.41 | 0.37 | 0.94 |
| BCR | 69.84 | 69.84 | 71.23 | 70.55 | 68.71 | 96.90 |
| BER | 0.30 | 0.30 | 0.29 | 0.29 | 0.31 | 0.03 |

| Prediction Metrics (Shape and Colour dataset) | Decision Tree | J48 | JRip | LMT | Random Forest | Proposed |
|---|---|---|---|---|---|---|
| Accuracy | 81.25 | 81.73 | 79.81 | 81.73 | 80.29 | 95.67 |
| Sensitivity | 96.58 | 95.89 | 92.47 | 95.21 | 92.47 | 97.26 |
| Specificity | 45.16 | 48.39 | 50.00 | 50.00 | 51.61 | 91.94 |
| Precision | 80.57 | 81.40 | 81.33 | 81.76 | 81.82 | 96.60 |
| Error Rate | 0.19 | 0.18 | 0.20 | 0.18 | 0.20 | 0.04 |
| MCC | 0.52 | 0.54 | 0.48 | 0.54 | 0.50 | 0.90 |
| F1 measure | 87.85 | 88.05 | 86.54 | 87.97 | 86.82 | 96.93 |
| G-mean | 88.21 | 88.35 | 86.72 | 88.23 | 86.98 | 96.93 |
| Lift value | 1.15 | 1.16 | 1.16 | 1.16 | 1.17 | 1.38 |
| Youden's index | 0.42 | 0.44 | 0.42 | 0.45 | 0.44 | 0.89 |
| BCR | 70.87 | 72.14 | 71.23 | 72.60 | 72.04 | 94.60 |
| BER | 0.29 | 0.28 | 0.29 | 0.27 | 0.28 | 0.05 |

| Classification Algorithms | Accuracy | Sensitivity | Specificity |
|---|---|---|---|
| Existing Approach | | | |
| NB | 80.95 | 69.49 | 88.40 |
| KNN | 78.57 | 79.59 | 78.43 |
| MLP | 78.57 | 85.90 | 75.53 |
| RBFN | 79.05 | 64.12 | 81.05 |
| SVM | 91.43 | 75.13 | 98.70 |
| GLCM_0 Dataset | | | |
| Decision Tree | 79.81 | 94.52 | 45.16 |
| J48 | 79.81 | 94.52 | 45.16 |
| JRip | 78.37 | 97.26 | 33.87 |
| LMT | 78.85 | 93.84 | 43.55 |
| Random Forest | 78.37 | 93.84 | 41.94 |
| Proposed IDSSDRP | 98.08 | 98.63 | 96.77 |
| GLCM_45 Dataset | | | |
| Decision Tree | 77.88 | 92.47 | 43.55 |
| J48 | 78.85 | 97.26 | 35.48 |
| JRip | 79.33 | 92.47 | 48.39 |
| LMT | 79.81 | 92.47 | 50.00 |
| Random Forest | 78.85 | 93.15 | 45.16 |
| Proposed IDSSDRP | 97.12 | 97.26 | 96.77 |
| GLCM_90 Dataset | | | |
| Decision Tree | 81.25 | 96.58 | 45.16 |
| J48 | 81.25 | 96.58 | 45.16 |
| JRip | 81.25 | 96.58 | 45.16 |
| LMT | 82.21 | 97.26 | 46.77 |
| Random Forest | 82.21 | 97.26 | 46.77 |
| Proposed IDSSDRP | 99.04 | 99.32 | 98.39 |
| GLCM_135 Dataset | | | |
| Decision Tree | 79.81 | 94.52 | 45.16 |
| J48 | 79.81 | 94.52 | 45.16 |
| JRip | 79.81 | 92.47 | 50.00 |
| LMT | 78.85 | 91.10 | 50.00 |
| Random Forest | 76.92 | 89.04 | 48.39 |
| Proposed IDSSDRP | 97.60 | 98.63 | 95.16 |
| Shape and Colour Dataset | | | |
| Decision Tree | 81.25 | 96.58 | 45.16 |
| J48 | 81.73 | 95.89 | 48.39 |
| JRip | 79.81 | 92.47 | 50.00 |
| LMT | 81.73 | 95.21 | 50.00 |
| Random Forest | 80.29 | 92.47 | 51.61 |
| Proposed IDSSDRP | 95.67 | 97.26 | 91.94 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Jothi, G.; Inbarani, H.H.; Azar, A.T.; Koubaa, A.; Kamal, N.A.; Fouad, K.M. Improved Dominance Soft Set Based Decision Rules with Pruning for Leukemia Image Classification. Electronics 2020, 9, 794. https://doi.org/10.3390/electronics9050794
