1. Introduction

Telemedicine is an emerging mode of modern medical service that not only supports real-time monitoring and management of people’s daily health at home, but also greatly relieves the pressure of outpatient visits on hospitals. Two current global demographic trends have made health a concern of society as a whole. The first is population aging, which is becoming increasingly serious; in the future, many elderly people will find it difficult to receive medical treatment in hospitals. The second is that sub-health among young people is becoming more common as the pace and pressure of modern life increase. The in-depth integration of the healthcare industry with the Internet of Things (IoT), 5G, Artificial Intelligence (AI), big data, cloud computing, and other advanced technologies is expected to help address these health problems. In particular, the 5G-enabled IoT and AI are continuously driving innovative applications in the telemedicine industry.

In the fields of medical health and medical sciences, the application scenarios of telemedicine mainly include remote monitoring, remote ultrasound, remote consultation, remote surgery, mobile medicine, as well as intelligent control of medical drugs and equipment to achieve personal medical management and health data management.

At present, wearable biomedical devices for vital signs monitoring are developing rapidly. Low cost, low power consumption, small size, and intelligence are the keys to developing such devices. Wearable health devices offer advantages such as continuity of medical services and real-time perception of health data. They can monitor vital signs and health conditions in real time, helping people understand their physical condition and detect symptoms early. Advanced semiconductor technology has greatly reduced the cost and power consumption of wearable devices while greatly improving their performance. The combination of AI and the IoT has made wearable devices more intelligent. The research and development of wearable biomedical devices has become an important direction in the field of telemedicine and is important for sustaining its rapid development. It is expected that wearable devices related to medicine and health will occupy the majority of the entire wearable device market.

In recent years, 5G networks have attracted great interest in various vertical industries because of their high speed, massive connectivity, and low latency, among other features. The high speed of 5G supports 4K/8K remote high-definition consultation and the high-speed transmission and sharing of medical image data; furthermore, it allows experts to conduct consultations anytime and anywhere, improves diagnostic accuracy and guidance efficiency, and extends high-quality medical resources to patients’ homes. The massive connectivity of 5G makes it possible to connect a large number of medical sensor terminals, related video devices, and wearable biomedical devices to achieve real-time perception and measurement, to capture and transfer patient health information, and to realize continuous and accurate monitoring of health information without time and space restrictions. In addition, in special wards such as radiology and infectious disease wards, medical staff can remotely control a medical assistant robot to move to the designated bed and complete nursing services while the staff themselves remain protected.

Various medical sensors and wearable biomedical devices can collect real-time patient health indicators, including body temperature, heart rate, blood pressure, blood glucose, and electrocardiogram, among others. These devices can be connected to the cloud; the latter performs AI analysis of patient health-related data, records the patient’s health status, provides disease analysis for doctors and patients, and provides auxiliary decision-making for patient treatment. The doctor remotely evaluates the patient’s condition and provides immediate feedback on the patient’s health. Some wearable health monitors can perform local data analysis without connecting to the cloud. For example, a heart rate monitor that can independently analyze health data can provide the necessary response immediately to alert caregivers when patients need help.
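The local-analysis case mentioned above, a heart rate monitor that alerts caregivers without contacting the cloud, can be illustrated with a minimal sketch. The class name, window length, and thresholds below are hypothetical values chosen for illustration; they are not taken from this paper and are not clinical guidance.

```python
from collections import deque
from statistics import mean

# Hypothetical thresholds for illustration only; not clinical guidance
# and not taken from the paper.
LOW_BPM = 40
HIGH_BPM = 130
WINDOW = 5  # number of recent readings to average


class HeartRateMonitor:
    """Keeps a short window of readings and flags out-of-range averages locally."""

    def __init__(self, low=LOW_BPM, high=HIGH_BPM, window=WINDOW):
        self.low = low
        self.high = high
        self.readings = deque(maxlen=window)

    def add_reading(self, bpm):
        """Store a reading and return True if caregivers should be alerted."""
        self.readings.append(bpm)
        if len(self.readings) < self.readings.maxlen:
            return False  # not enough data yet for a stable decision
        avg = mean(self.readings)
        return avg < self.low or avg > self.high
```

Averaging over a short window rather than reacting to a single reading is one simple way to avoid alerting on an isolated noisy sample; a real device would likely use a more robust rule.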

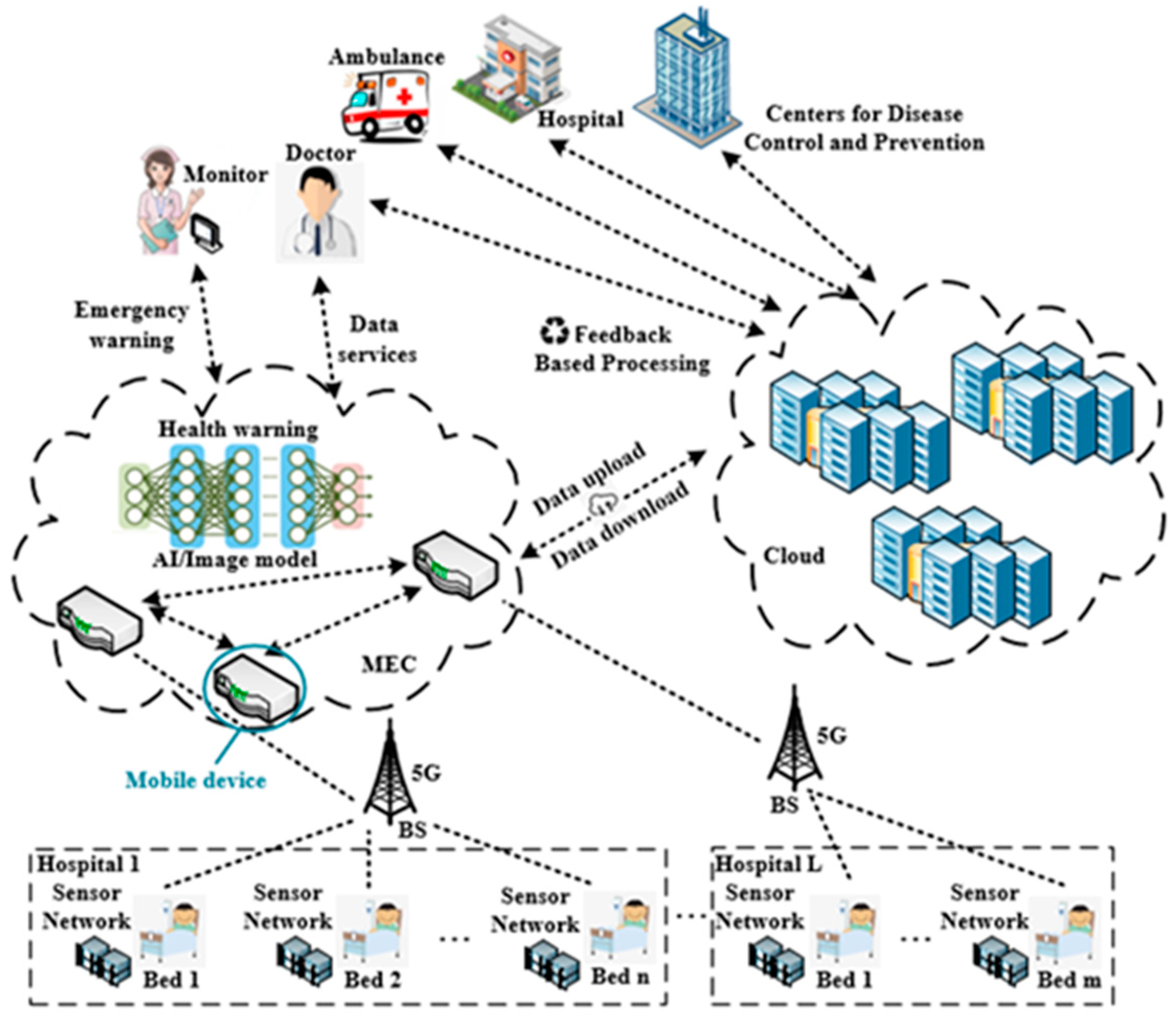

IoT devices in the medical health field generate massive amounts of data, some of which are highly sensitive and some of which require quick analysis and an immediate decision. IoT-based telemedicine applications cannot be executed locally on the terminals because of the limited computing and storage capabilities and the restricted energy supply of IoT devices. However, uploading all health data to the cloud not only puts tremendous pressure on the cloud, but also poses huge challenges for network bandwidth and end-to-end delay. Moreover, the cloud computing center cannot provide location-aware applications. 5G-based mobile edge computing (MEC) provides a better network infrastructure for telemedicine. MEC provides computing, storage, and other infrastructure close to the end users or data sources. In essence, MEC extends traditional cloud computing to the edge aggregation nodes (e.g., at base stations). It integrates computing, storage, network, and application capabilities at the edge of the network. Unlike in traditional cloud computing, network capabilities are one of the core capabilities of MEC. MEC is characterized by decentralization, data localization, and low latency. The combination of MEC and telemedicine is therefore of great significance.

However, MEC and cloud computing need to work closely together to better meet the needs of various scenarios, thereby maximizing the value of cloud computing and edge computing services. The data collected by the medical health-related terminal devices are uploaded to the MEC servers, where they are processed and stored locally. In addition, emergency data are processed and analyzed in real time, and feedback is provided immediately. As most of the data are not uploaded to the remote cloud computing center, the end-to-end delay is greatly reduced, and the bandwidth pressure on the backhaul link is also reduced. However, this does not mean that the cloud computing center does not participate in the telemedicine process. Some data still need to be transferred from the edge nodes to the cloud computing center. The cloud computing center performs big data analysis and mining and data sharing, and it also trains and upgrades the AI algorithm model. The trained or upgraded AI model is pushed to the edge nodes, making real-time intelligent decision-making on health data possible at the edge nodes. Moreover, the medical health data also need to be stored and backed up in the cloud computing center to ensure storage reliability and facilitate data sharing among different medical institutions.
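The division of labor described above can be sketched as a toy edge node that stores every record locally, reacts to emergencies immediately, and forwards only batched analysis results to the cloud. This is a minimal sketch under stated assumptions: `Record`, `EdgeNode`, the batch size, and `flush_to_cloud` are hypothetical names introduced for illustration, and the cloud upload is simulated by appending to a list rather than making a network call.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    patient_id: str
    label: str     # result of the edge-side model, e.g. "normal"
    urgent: bool   # True when the edge-side model flags an emergency


@dataclass
class EdgeNode:
    """Hypothetical edge node: acts locally, uploads only batched results."""
    batch_size: int = 3
    local_store: list = field(default_factory=list)
    pending_upload: list = field(default_factory=list)
    alerts: list = field(default_factory=list)
    uploaded_batches: list = field(default_factory=list)

    def handle(self, record):
        self.local_store.append(record)            # process and store locally
        if record.urgent:
            self.alerts.append(record.patient_id)  # immediate feedback at the edge
        self.pending_upload.append(record)
        if len(self.pending_upload) >= self.batch_size:
            self.flush_to_cloud()

    def flush_to_cloud(self):
        # Stand-in for a network call to the cloud; here the batch is only recorded.
        if self.pending_upload:
            self.uploaded_batches.append(list(self.pending_upload))
            self.pending_upload.clear()
```

Because the urgent path never waits for the cloud, the alert latency in this sketch depends only on the edge node, which is the property the paragraph attributes to MEC.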

Stimulated by commercial profits, some technology giants have attached importance to the layout and application of smart healthcare. IBM invested $1 billion in 2014 to establish the Watson business group. IBM Watson is a technology platform that uses AI technology to gain insight into the regularity of the unstructured data [1]. At present, the system has been applied to the diagnosis and treatment of tumors, cardiovascular diseases, and diabetes, and has been used in other fields, as well. Tencent launched its first AI product, Tencent Miying [2], in the medical field in August 2017. It integrates image recognition and deep learning (DL) with medical science to assist doctors in screening for esophageal cancer, effectively improving screening accuracy and promoting accurate treatment. It also supports early screening for lung cancer, diabetic retinopathy, breast cancer, and other diseases.

In 2019, Ghulam et al. [3] proposed a new intelligent pathology detection system using DL, cloud computing, and edge computing. A sensor captures the human electroencephalogram (EEG) signal and sends it to a nearby edge computing server. The server sets up the preprocessing steps and assigns them to available edge devices. The preprocessed signals are then sent to the cloud computing server, where the proposed tree-based deep model takes the EEG signal as input to extract deep features. The classification decision, normal or pathological, is then sent to the stakeholders. Li et al. [4] proposed an Edge Learning as a Service (EdgeLaaS) framework to process health supervision data locally. Under this framework, edge learning nodes can help patients obtain suitable suggestions from the right guardians in real time when certain emergencies occur. Prabal et al. [5] proposed a remote patient health monitoring system based on fog computing in smart homes. It uses IoT devices to monitor patients in smart home environments. It implements event classification based on fog computing for real-time response and provides real-time decision-making information to doctors and caregivers in various situations.

The proposed work obtains the physiological indicators of users from electrocardiogram (ECG) data. Existing algorithms for the automated ECG analysis of cardiac arrhythmia are based on the assessment of morphological features. Traditionally, after feature extraction, the analysis is performed by K-Nearest Neighbor (KNN) [6], support vector machine (SVM) [7], or Random Forest (RF) [8] classifiers. The state-of-the-art performance recently achieved by deep learning methods in pattern recognition problems has motivated researchers to apply these techniques to biomedical signal processing. Deep learning is based on neural networks for feature extraction and decision-making, of which the convolutional neural network (CNN) and the recurrent neural network (RNN) are the two main paradigms. For example, [9] and [10] use CNNs to localize the origin of premature ventricular contractions and to identify individuals from their ECGs, respectively. An attention-based recurrent neural network (MLDA-RNN) is used for the automated diagnosis of myocardial infarction severity stage [11].

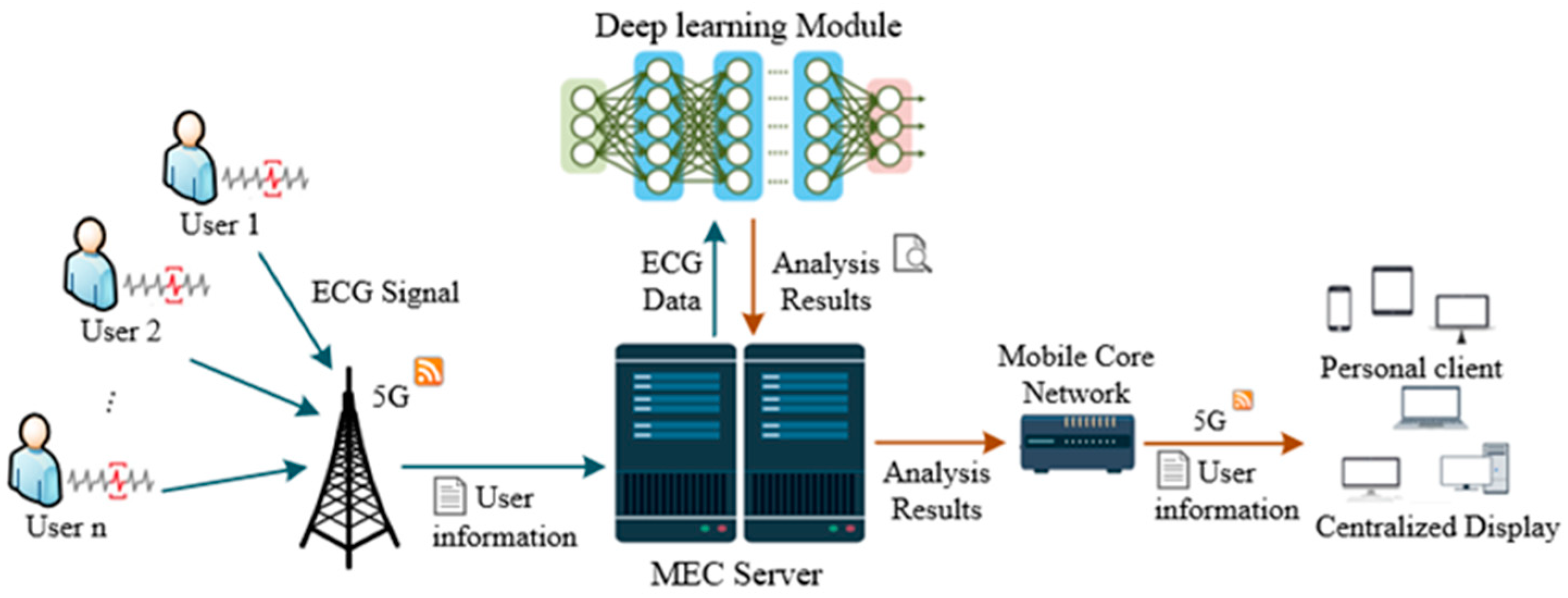

Unlike previous work, our proposed system provides users with remote medical services through IoT, MEC, and machine learning technologies by monitoring the user’s physical indicators in real time and predicting the patient’s health. The system effectively obtains health-related statistical information and user environment data, including electrocardiogram (ECG) data, global positioning system (GPS) data, and weather and temperature data. In the MEC layer, the collected data are first pre-processed, and AI technology is then used for data analysis to obtain indicators for health monitoring. Most of the physiological indicators monitored by the system come from the ECG, so fast and accurate automatic ECG diagnosis and property recognition is of great significance to the entire system. Therefore, an AI-based ECG diagnosis model is deployed in the MEC layer to improve the whole system. After data analysis, the analysis results and health information are transferred to the cloud server for storage and management. Compared with cloud-only computing, edge computing platforms perform sensor data analysis tasks at the edge of the network, which shortens the data transmission distance and makes the system run faster. As the nodes of data analysis are closer to the nodes of data generation, it is more difficult for hackers to tamper with the data. The system can reduce or avoid communication delays with the cloud so that key decisions can be implemented immediately at the edge through the deployed machine learning model. The machine learning algorithm proposed in this paper is a one-dimensional convolutional neural network (1D-CNN) model, which can diagnose and predict the type of heart disease. Experimental results demonstrate the effectiveness of the algorithm. Placing the proposed 1D-CNN model on the MEC layer makes full use of the advantages of the proposed system and provides users with real-time heart disease detection.

The proposed work created a multi-layer system to provide telemedicine for users, which can reduce the medical burden and provide better medical services. However, three problems need to be solved to build the system: first, the continuous transmission of massive sensor data; second, the processing of large amounts of medical data with low latency so that emergency medical situations can be handled effectively; third, an intelligent algorithm for ECG diagnosis with high accuracy. The proposed system combines 5G, MEC, and AI to provide telemedicine services and solve the above problems. The AI model for automatic ECG analysis tackles the problem of data analysis. 5G and MEC guarantee the transmission of massive data with high quality of service (QoS) and allow computation near the data source to avoid unnecessary data movement, which accelerates service delivery and improves the practicality of the system. Besides being close to the data sources, MEC can process the collected data with the AI model deployed on it and can handle data storage. Many works use MEC to tackle the problems that exist in telemedicine. For example, one study proposed a three-tier network architecture for mobile-health applications based on MEC [12]. Another discussed the benefits of healthcare systems with MEC-based architecture [13].

The rest of this article is organized as follows. Section 2 introduces the system model with details on the network framework for telemedicine and machine learning-based 5G MEC. Section 3 develops our ECG diagnosis model, including wavelet-transformed data preprocessing and 1D-CNN automata. Section 4 presents the experimental simulation results to justify the performance of our scheme, followed by Section 5 to conclude the paper.

4. Experimental Simulation Results and Analysis

To train the proposed model and perform tests on it, the ECG dataset used in the present study contains a total of 160,948 records. The data distribution of the dataset in four categories is shown in Table 1. It can be seen that the category of normal occupies a large part of the dataset, while the quantity of other categories is relatively small. The least among them is the premature beat, which only occupies 6% of all records in the training set. It should be noted that some kinds of abnormal heartbeats in the dataset are not included in the existing labels. These records are retained, so the total number of labels in Table 1 will be slightly less than the number of records in the dataset.

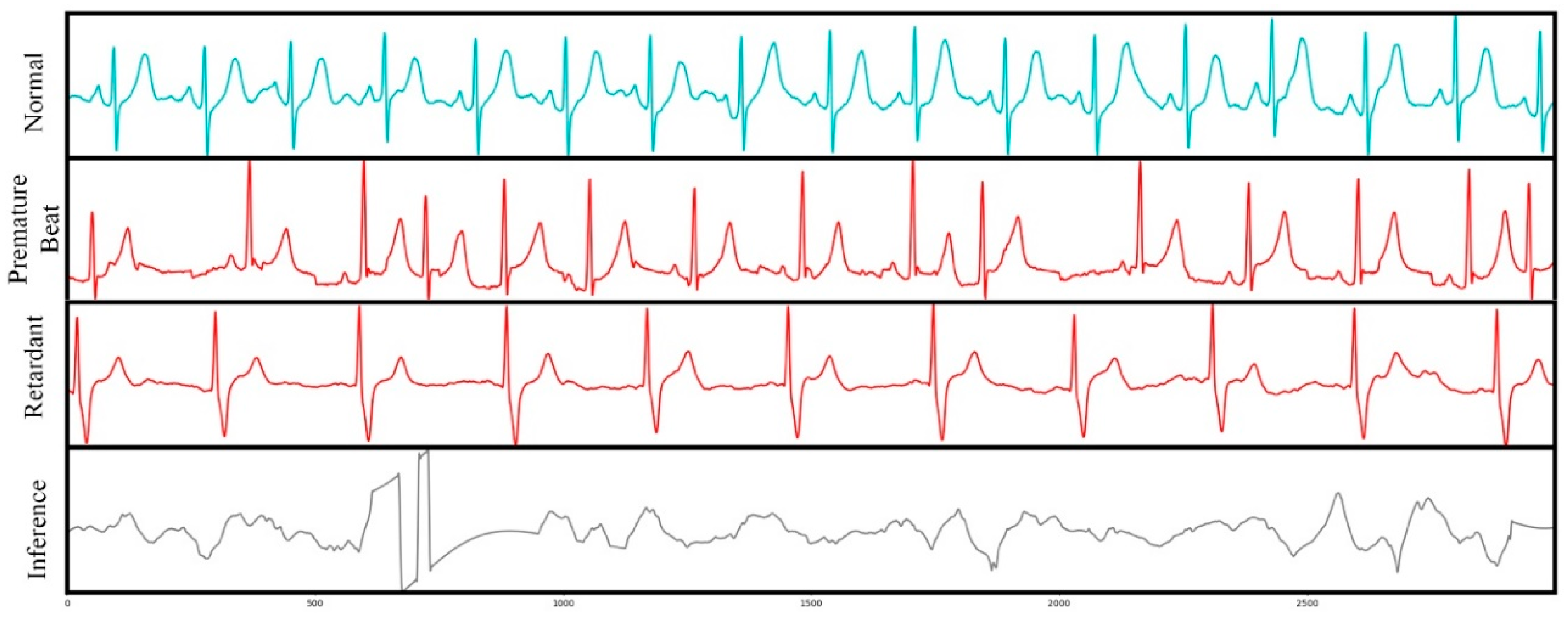

Figure 5 plots ECG segments from the four categories in the dataset. Each row shows 12 s of one ECG record, comprising 3000 data points (i.e., 250 samples per second). From top to bottom, the rows show the normal, premature beat, heart block, and interference categories, respectively. Interference means that the corresponding record cannot be used in the ECG analysis because of a bad waveform or excessive noise.

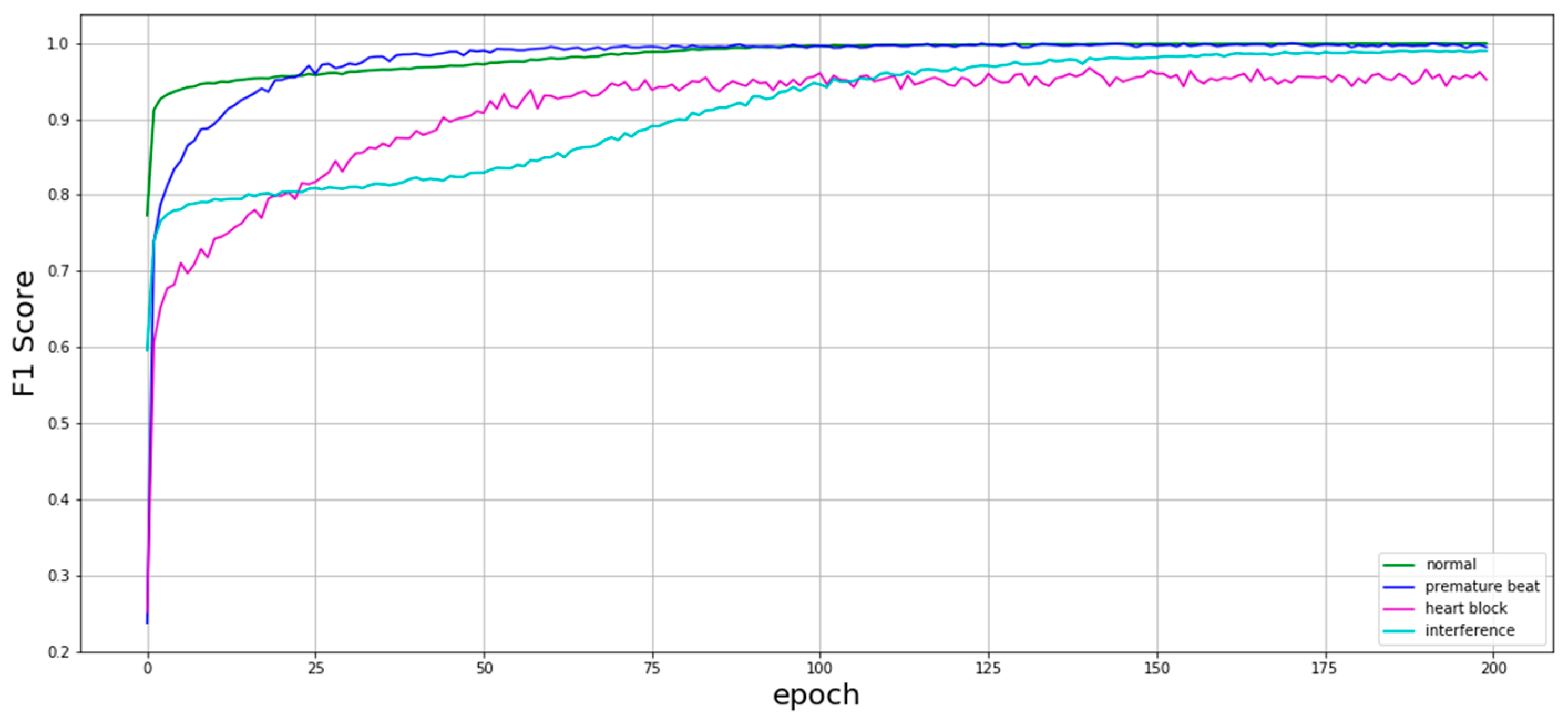

Figure 6 shows the training curve of the proposed model. The model was trained on the training set with an initial learning rate of 0.001 and exponential learning rate decay. After 200 epochs of training, the model performance tends to be stable. As illustrated in Figure 6, the fluctuation of the training curve of the heart block category is relatively strong. It is difficult for the model to converge in this category because of the small number of records it contains.
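The learning-rate schedule described above can be sketched as follows. The initial rate (0.001) and the number of epochs (200) come from the text; the decay factor of 0.98 per epoch is an assumption made for illustration, since the decay rate is not stated here.

```python
INITIAL_LR = 0.001  # from the text
DECAY_RATE = 0.98   # assumed; the decay factor is not stated in the text
EPOCHS = 200        # from the text


def exponential_lr(epoch, initial_lr=INITIAL_LR, decay_rate=DECAY_RATE):
    """Learning rate at a given epoch under per-epoch exponential decay."""
    return initial_lr * decay_rate ** epoch


# One learning rate per training epoch, starting at the initial rate.
schedule = [exponential_lr(epoch) for epoch in range(EPOCHS)]
```

Under this form, the learning rate shrinks by a constant factor each epoch, which is consistent with a training curve that stabilizes late in training.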

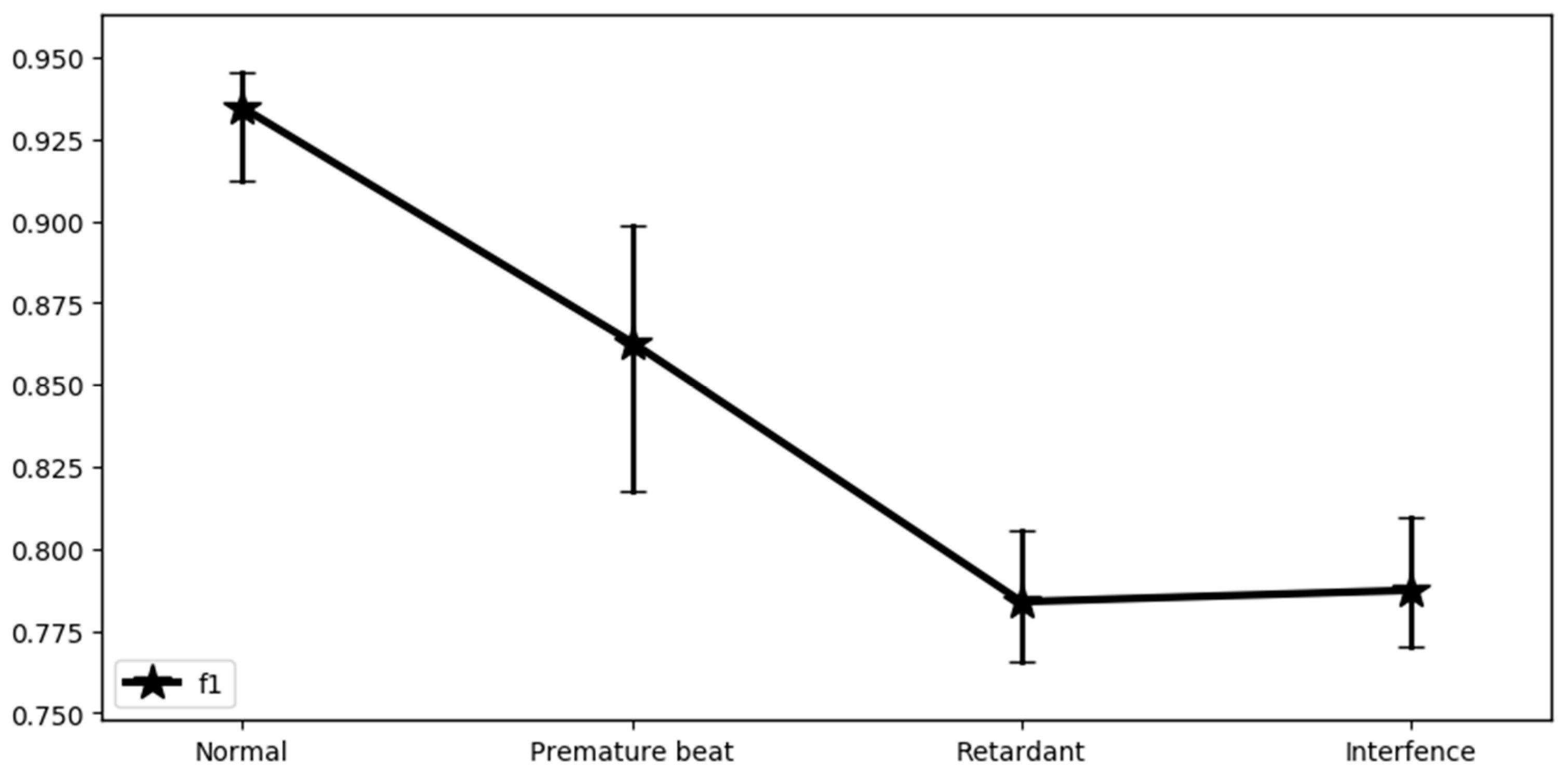

Fivefold cross-validation was performed on the whole dataset. In Figure 7, the stars denote the average value of the F1 score in every category, while the vertical lines show the variation in the value of the F1 score. As can be observed, the difference between the maximum and minimum F1 score of the category of premature beat is the largest. This may be because the waveform of the premature beat is intermittent and does not always follow a certain period, which brings uncertainty to the model.
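A fivefold split of an imbalanced label set can be sketched in plain Python as below. Stratifying by class, so that each fold keeps roughly the same category proportions, is an assumption made here because of the class imbalance; the text only states that fivefold cross-validation was used. The function name and seed are hypothetical.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs with class proportions roughly preserved."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class's indices round-robin so every fold gets a share.
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)

    for i in range(k):
        test_idx = sorted(folds[i])
        train_idx = sorted(
            idx for j, fold in enumerate(folds) if j != i for idx in fold
        )
        yield train_idx, test_idx
```

Each record appears in exactly one test fold, so the per-category F1 score can be computed five times and summarized by its mean and range, as Figure 7 does.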

Table 2 details the performance of the proposed model in the four categories. These results are calculated on the test set and include five metrics that measure model performance from multiple perspectives: acc, spe, sen, ppr, and F1 score. Among the four categories, the model performs best in the normal category. It reaches or exceeds 0.90 on all five metrics, and the ppr reaches 0.961, indicating that the positive predictions made by the model are highly accurate. Apart from the normal category, the other three categories show higher accuracy in distinguishing negative samples from positive ones. This is because, in each of these three categories, negative samples make up the majority, so the model can learn more features for decision making on negative samples. In the ECG dataset used in this study, the heart block category has the smallest number of records, and the ratio of positive samples (samples with heart block) to negative samples (without heart block) is about 1:20. This causes the model’s decision on the heart block category to tilt toward the negative sample during the training process, making the score on the two metrics of spe and ppr relatively low. The high value of acc shows that the model has achieved very good performance in terms of classification accuracy. In ECG datasets, records without disease often make up the majority, while records with disease are relatively few, so the high classification accuracy of the model comes mostly from the healthy records. However, in actual application scenarios, it is particularly important to make accurate predictions on diseased records.
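The five metrics can be computed per category from a one-vs-rest confusion matrix, as in the sketch below. The abbreviations are not defined in this section, so the code assumes their standard meanings: acc is accuracy, spe is specificity, sen is sensitivity (recall), and ppr is the positive predictive rate (precision). The function name is hypothetical.

```python
def one_vs_rest_metrics(y_true, y_pred, positive):
    """Compute acc, spe, sen, ppr, and F1, treating `positive` as the positive class."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth == positive and pred == positive:
            tp += 1
        elif truth != positive and pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1

    def ratio(num, den):
        # Return 0.0 instead of raising when a denominator is empty.
        return num / den if den else 0.0

    sen = ratio(tp, tp + fn)
    ppr = ratio(tp, tp + fp)
    return {
        "acc": ratio(tp + tn, tp + fp + tn + fn),
        "spe": ratio(tn, tn + fp),
        "sen": sen,
        "ppr": ppr,
        "f1": ratio(2 * ppr * sen, ppr + sen),
    }
```

With a positive-to-negative ratio of 1:20, a classifier that labels every record negative already reaches an accuracy of 20/21 (about 0.95) while its sensitivity and F1 score are zero, which is why accuracy alone says little about performance on diseased records.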

Figure 8 illustrates the simulation results of the model. The small colored markers plot the variation in the metrics used in the simulation across the four categories. The F1 score decreases gradually as the number of positive samples in a category decreases, except for the interference category. The interference records obtain a low F1 score despite a relatively large number of positive samples because there are too many different patterns of interference for the model to learn.

In the proposed system, the IoT layer continuously generates new data that need to be processed and analyzed every second. These data come from dozens of entities or thousands of sensors and are distributed across different regions. However, sending all these data to the cloud poses several serious problems. First, the volume of data creates capacity issues. Second, it is costly in terms of energy, bandwidth, and compute power to transmit that much data from its point of origin to centralized data centers, which raises operational efficiency issues that need to be addressed. Third, the power consumed in transmitting and analyzing the data is enormous, so an effective way to reduce that cost and waste is clearly needed.

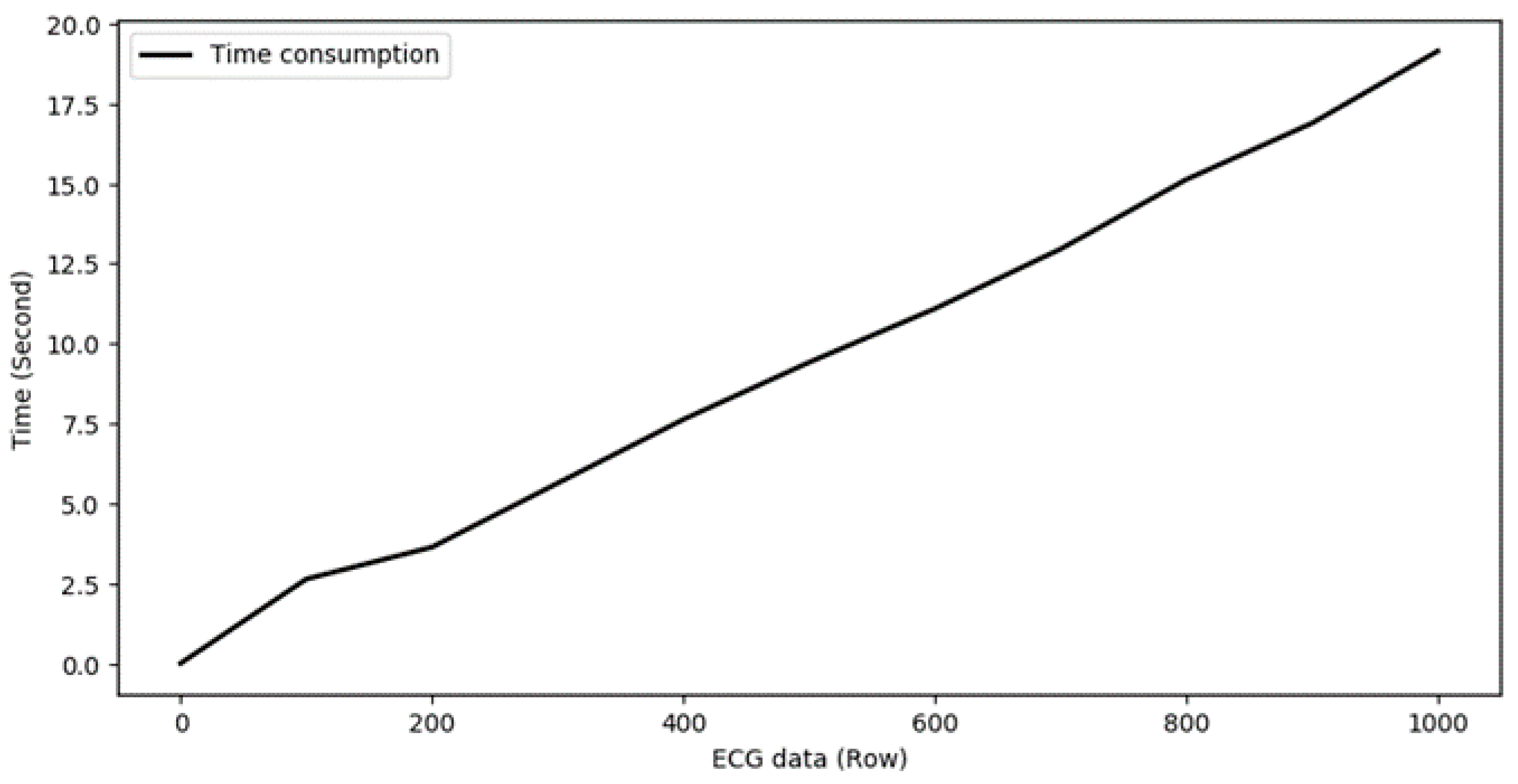

Figure 9 shows the time consumption of the ECG model as the data volume increases. The simulation experiment is performed on an Intel Xeon Platinum 8163 CPU, manufactured by Intel Corporation, CA, USA. It takes 2.63 s to process 100 ECG records with 2.77 mega floating-point operations (MFLOPs). However, the time consumption rises to 19.14 s when processing 1000 ECG records, which calls for an MEC layer with multiple machines to improve the computational efficiency of the model.
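The two timings reported above can be turned into per-record figures with a few lines of arithmetic. The sketch below also estimates the processing time when the 1000-record workload is split evenly across several MEC servers; that estimate assumes ideal parallelism with no coordination or transfer overhead, which is an illustrative simplification and not a measured result.

```python
# Measured timings from the text: number of records -> seconds.
MEASURED = {100: 2.63, 1000: 19.14}


def per_record_ms(records, seconds):
    """Average processing time per ECG record, in milliseconds."""
    return 1000 * seconds / records


def ideal_parallel_time(seconds, machines):
    """Processing time if the workload splits evenly with zero overhead (assumed)."""
    return seconds / machines


# Per-record cost for each measured workload size.
per_record = {n: per_record_ms(n, t) for n, t in MEASURED.items()}
```

The measured values correspond to about 26.3 ms per record at 100 records and about 19.1 ms per record at 1000 records. Under the ideal-parallelism assumption, four servers would process the 1000 records in roughly 4.8 s.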

In addition to improving computational efficiency, introducing an MEC layer to process data locally reduces transmission costs. However, automated data analysis techniques are also required for ECG data analysis, and one of the most effective methods is to utilize the capabilities of AI. Therefore, all servers in the MEC layer are equipped with the ECG diagnosis model based on AI.