greenMAC Protocol: A Q-Learning-Based Mechanism to Enhance Channel Reliability for WLAN Energy Savings

Abstract

1. Introduction

2. Research

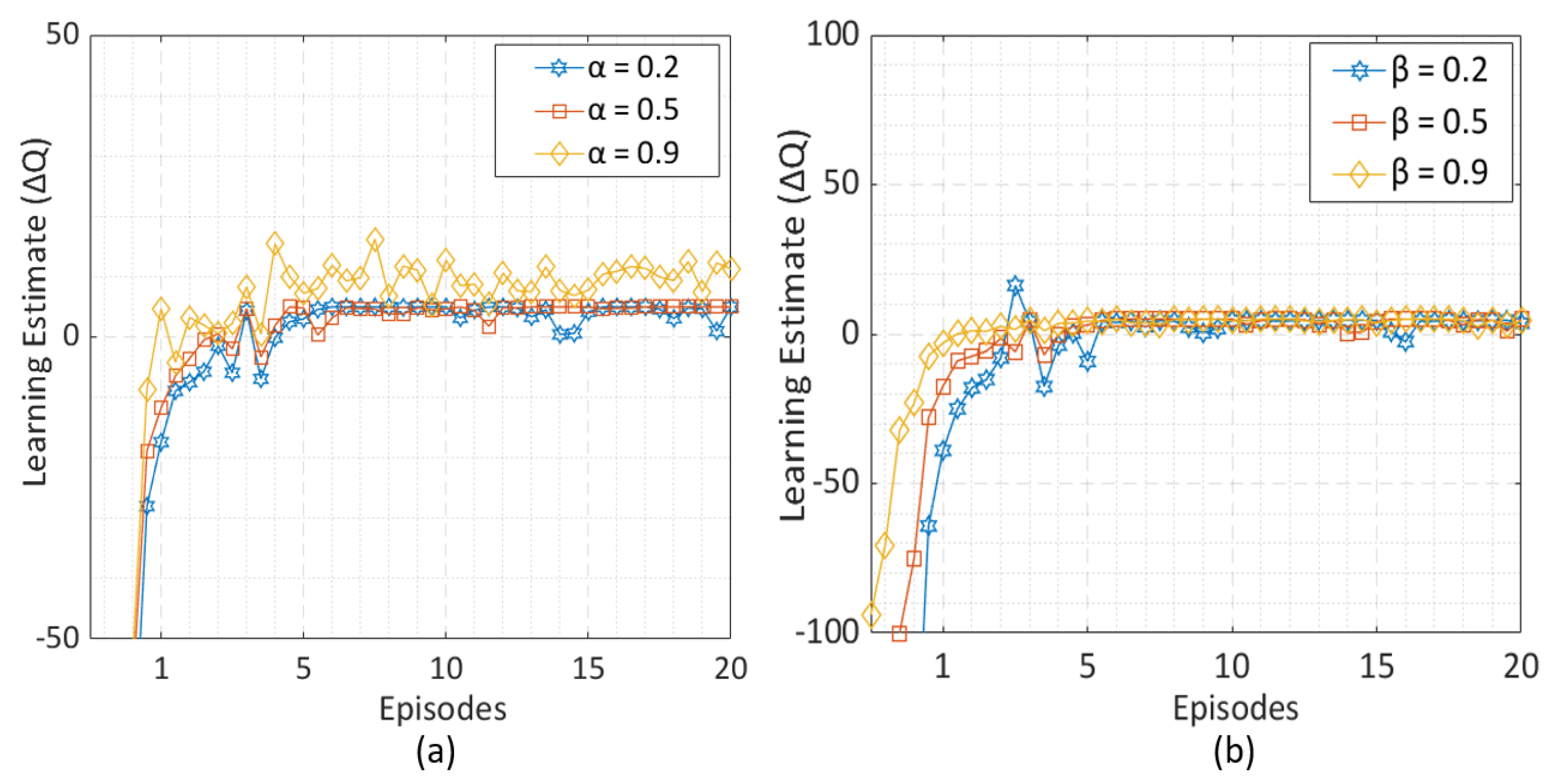

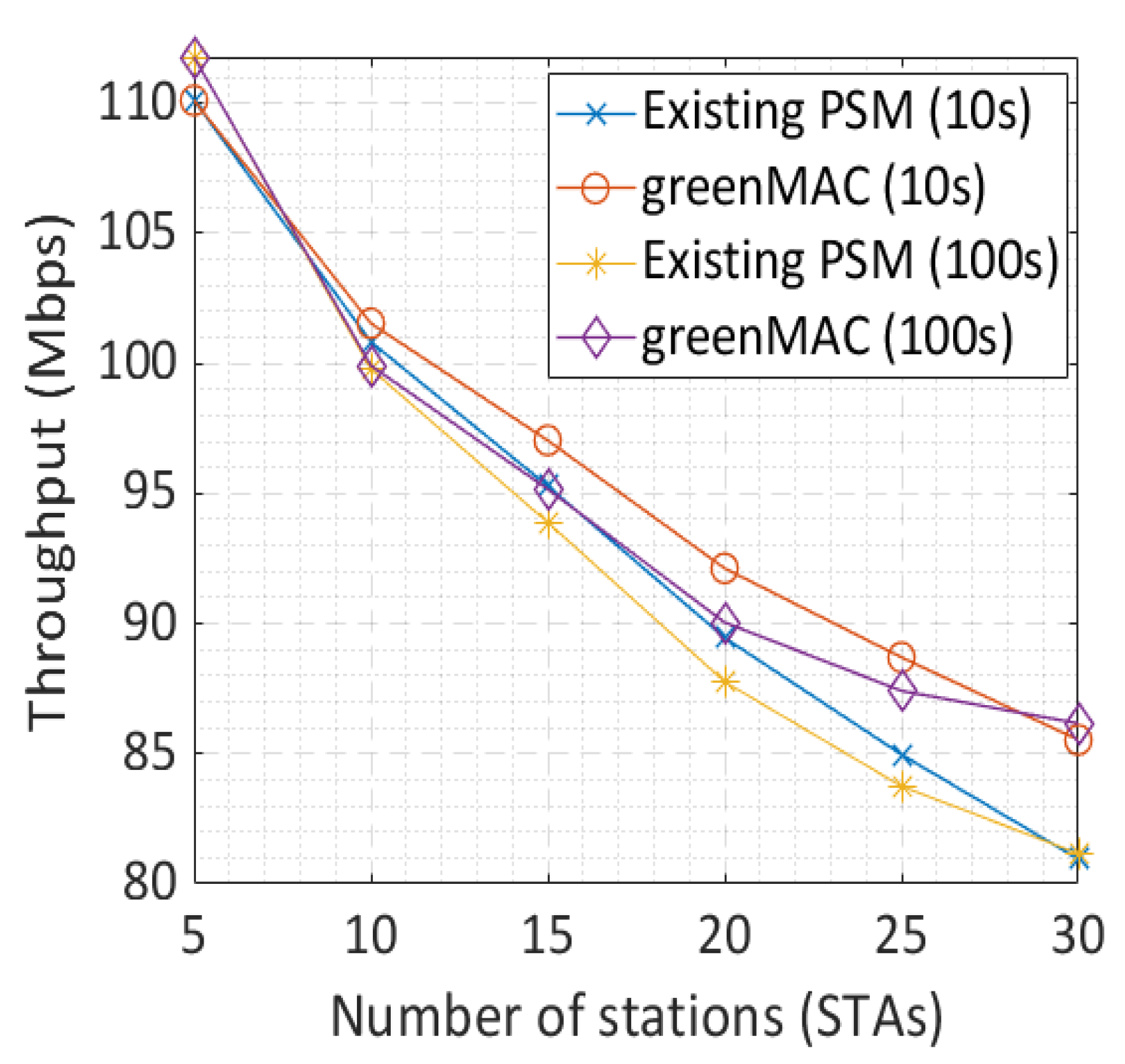

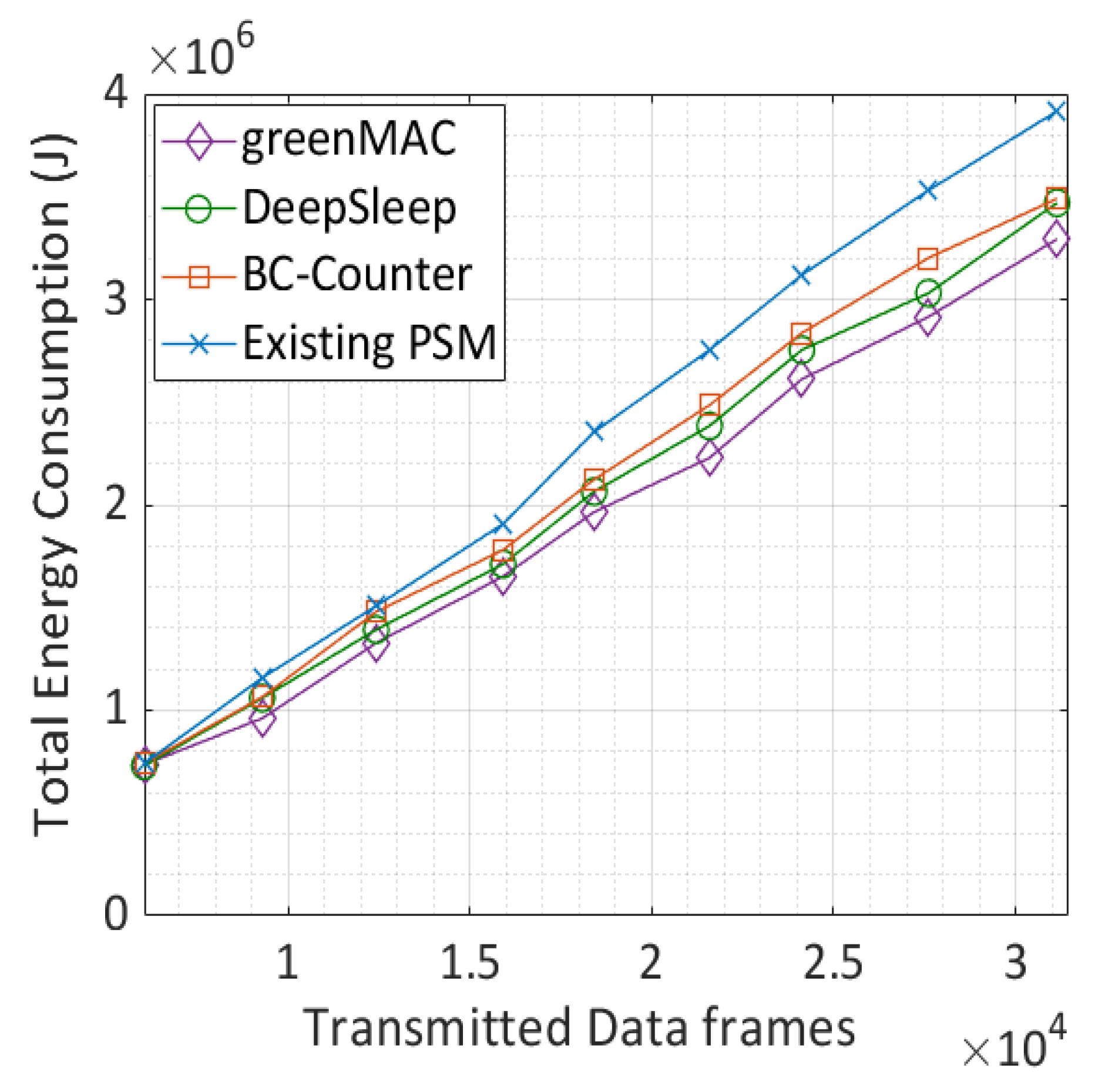

3. Proposed QL-Based PSM Mechanism

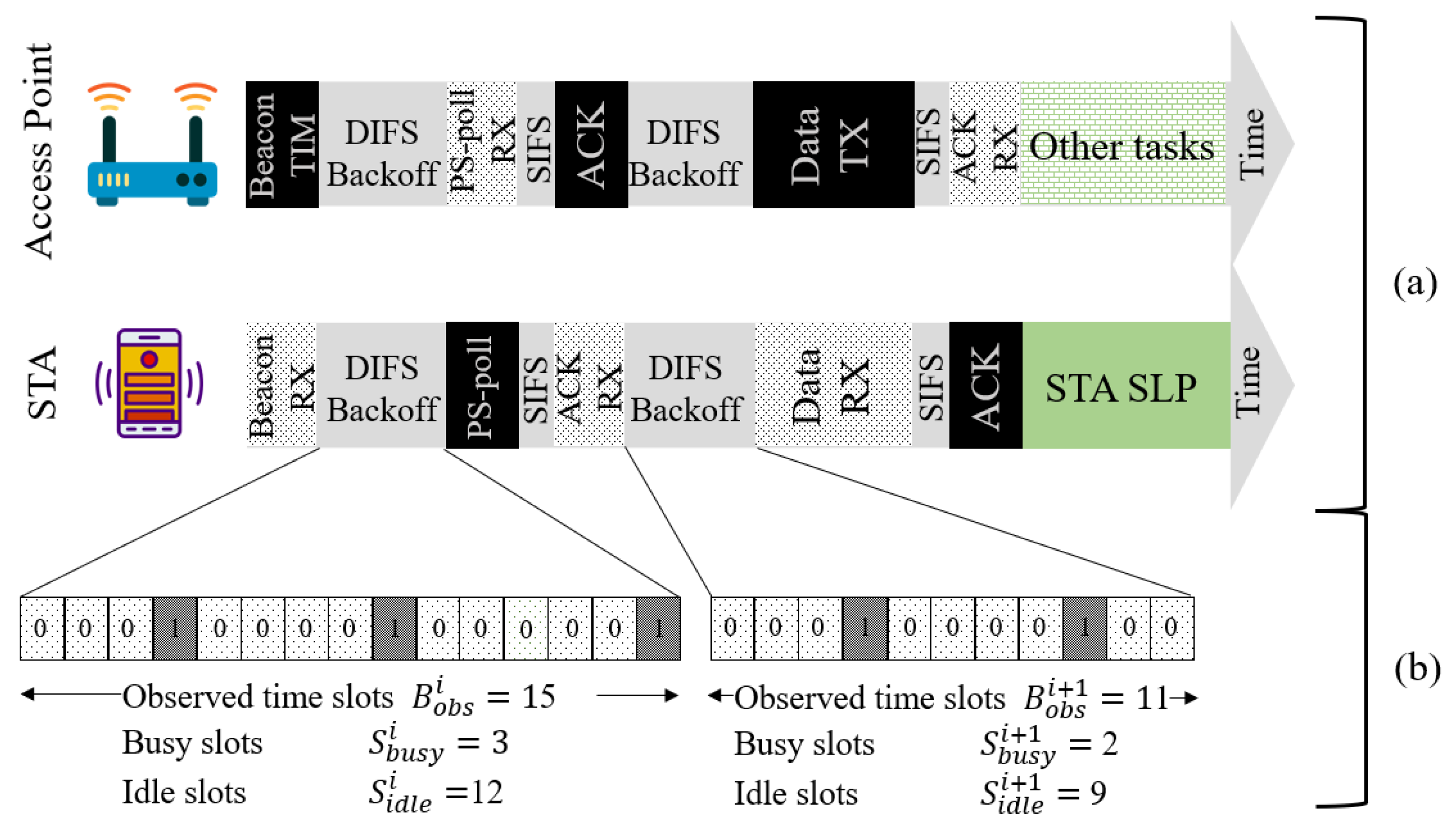

3.1. Existing PSM

3.2. Q Learning Model: Environment and Elements

3.2.1. Strategy/Policy

3.2.2. Reward

3.2.3. Q-Value Function

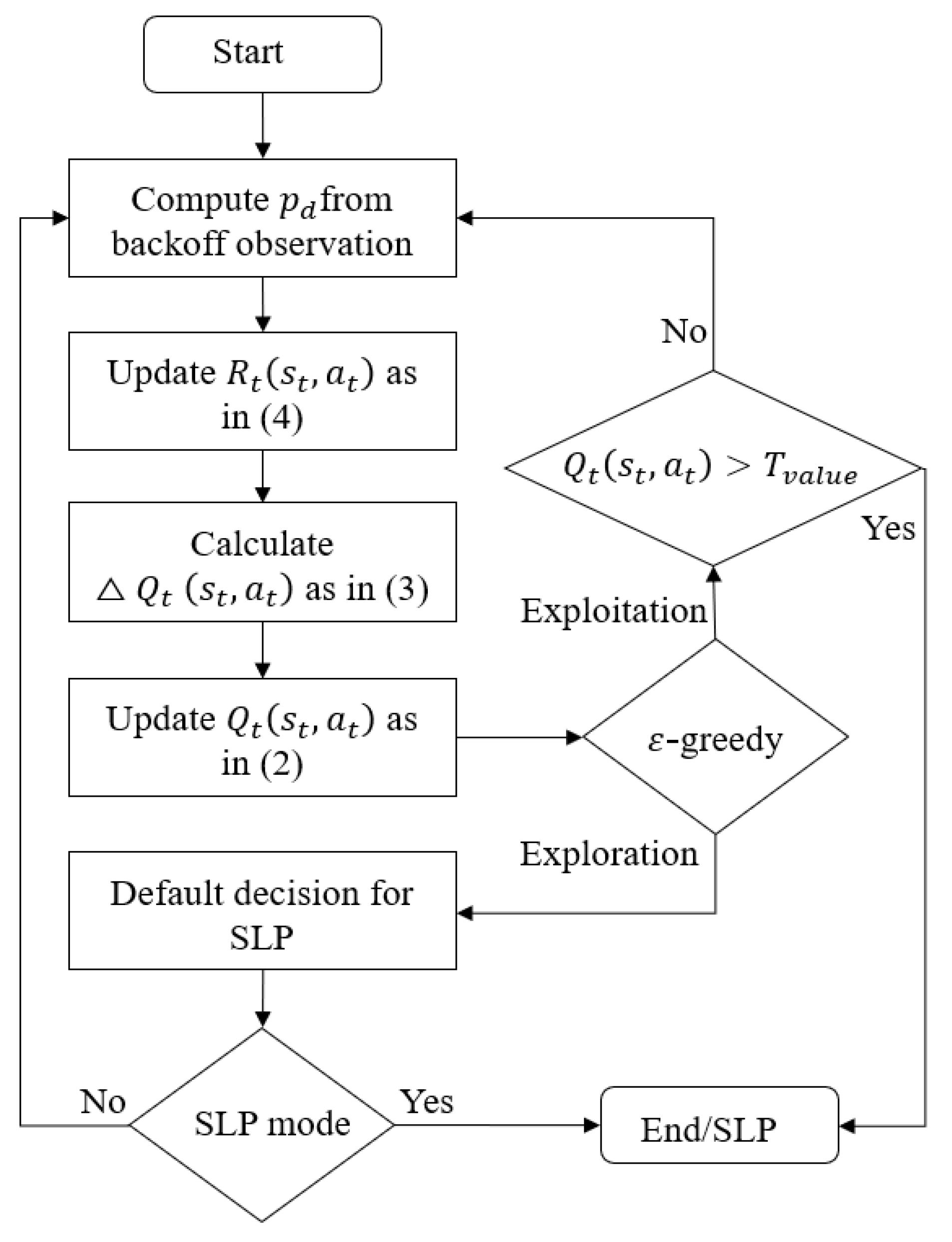

3.3. GreenMAC Protocol

4. Performance Evaluation

5. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| QL | Q-learning |

| WLAN | Wireless Local Area Networks |

| STA | Station |

| MAC | Medium Access Control |

| PSM | Power Saving Mode |

| TX | Transmit |

| RX | Receive |

| IDL | Idle |

| SLP | Sleep |

| CSMA/CA | Carrier Sense Multiple Access with Collision Avoidance |

| DCF | Distributed Coordination Function |

| CF | Contention-Free |

| CB | Contention-Based |

| ACK | Acknowledgment |

| RL | Reinforcement Learning |

| MDP | Markov Decision Process |

References

- Ren, J.; Yue, S.; Zhang, D.; Zhang, Y.; Cao, J. Joint channel assignment and stochastic energy management for rf-powered ofdma wsns. IEEE Trans. Veh. Technol. 2019, 68, 1578–1592. [Google Scholar] [CrossRef]

- IEEE 802.11 WG. Part 11: Wireless LAN Medium Access Control (MAC) and Physical Layer (PHY) Specifications; IEEE Std. 802.11n; The Institute of Electrical and Electronics Engineers: New York, NY, USA, 2012; pp. 1003–1005, 1432–1441. [Google Scholar]

- Pering, T.; Agarwal, Y.; Gupta, R.; Want, R. CoolSpots: Reducing the power consumption of wireless mobile devices with multiple radio interfaces. In Proceedings of the 4th International Conference on Mobile Systems, Applications and Services, Uppsala, Sweden, 19–22 June 2006; pp. 220–232. [Google Scholar]

- Malekshan, K.R.; Zhuang, W.; Lostanlen, Y. An Energy Efficient MAC Protocol for Fully Connected Wireless Ad Hoc Networks. IEEE Trans. Wirel. Commun. 2014, 13, 5729–5740. [Google Scholar] [CrossRef]

- Chen, B.; Jamieson, K.; Balakrishnan, H.; Morris, R. Span: An energyefficient coordination algorithm for topology maintenance in Ad Hoc wireless networks. ACM Trans. Wirel. Netw. 2002, 8, 481–494. [Google Scholar] [CrossRef]

- Rodoplu, V.; Meng, T.H. Minimum energy mobile wireless networks. IEEE J. Sel. Areas Commun. 1999, 17, 1333–1344. [Google Scholar] [CrossRef]

- Jung, E.-S.; Vaidya, N.H. An energy efficient MAC protocol for wireless LANs. In Proceedings of the Twenty-First Annual Joint Conference of the IEEE Computer and Communications Societies, New York, NY, USA, 23–27 June 2002; pp. 1756–1764. [Google Scholar]

- Ali, R.; Kim, S.W.; Kim, B.; Park, Y. Design of MAC layer resource allocation schemes for IEEE 802.11ax: Future directions. IETE Tech. Rev. 2018, 35, 28–52. [Google Scholar] [CrossRef]

- Ali, R.; Shahin, N.; Bajracharya, R.; Kim, B.; Kim, S.W. A self-scrutinized back-off mechanism for IEEE 802.11ax in 5G unlicensed networks. Sustainability 2018, 10, 1201. [Google Scholar] [CrossRef]

- Sutton, R.S.; Barto, A.G. Reinforcement Learning: An Introduction, 2nd ed.; MIT Press: Cambridge, MA, USA, 1998. [Google Scholar]

- Dogar, F.R.; Steenkiste, P.; Papagiannaki, K. Catnap: Exploiting high bandwidth wireless interfaces to save energy for mobile devices. In Proceedings of the 8th International Conference on Mobile Systems, Applications, and Services, Seoul, Korea, 17–21 June 2010; pp. 107–122. [Google Scholar]

- Rozner, E.; Navda, V.; Ramjee, R.; Rayanchu, S. NAPman: Network-assisted power management for Wi-Fi devices. In Proceedings of the 8th International Conference on Mobile Systems, Applications, and Services, San Francisco, CA, USA, 15–18 June 2010; pp. 91–106. [Google Scholar]

- Anand, M.; Nightingale, E.B.; Flinn, J. Self-tuning wireless network power management. Wirel. Netw. 2005, 11, 451–469. [Google Scholar] [CrossRef]

- Qiao, D.; Shin, K.G. Smart power-saving mode for IEEE 802.11 wireless LANs. In Proceedings of the IEEE 24th Annual Joint Conference of the IEEE Computer and Communications Societies, Miami, FL, USA, 13–17 March 2005; pp. 1573–1583. [Google Scholar]

- Namboodiri, V.; Gao, L. Energy-efficient VoIP over wireless LANs. IEEE Trans. Mob. Comput. 2010, 9, 566–581. [Google Scholar] [CrossRef]

- Krashinsky, R.; Balakrishnan, H. Minimizing energy for wireless web access with bounded slowdown. Wirel. Netw. 2005, 11, 135–148. [Google Scholar] [CrossRef]

- Vukadinovic, V.; Glaropoulos, I.; Mangold, S. Enhanced power saving mode for low-latency communication in multi-hop 802.11 networks. Ad Hoc Netw. 2014, 23, 18–33. [Google Scholar] [CrossRef]

- Radwan, A.; Rodriguez, J. Energy saving in multi-standard mobile terminals through short-range cooperation. EURASIP J. Wirel. Commun. Netw. 2012, 2012, 159. [Google Scholar] [CrossRef]

- Tang, S.; Yomo, H.; Kondo, Y.; Obana, S. Wake-up receiver for radio-on-demand wireless LANs. EURASIP J. Wirel. Commun. Netw. 2012, 2012, 42. [Google Scholar] [CrossRef]

- Lin, H.H.; Shih, M.J.; Wei, H.Y.; Vannithamby, R. DeepSleep: IEEE 802.11 enhancement for energy-harvesting machine-to-machine communications. In Proceedings of the IEEE Global Communications Conference (GLOBECOM), Anaheim, CA, USA, 3–7 December 2012. [Google Scholar]

- He, Y.; Yuan, R. A novel scheduled power saving mechanism for 802.11 wireless LANs. IEEE Trans. Mob. Comput. 2009, 8, 1368–1383. [Google Scholar]

- Jung, E.-S. Improving IEEE 802.11 power saving mechanism. Wirel. Netw. 2007, 14, 375–391. [Google Scholar] [CrossRef]

- Lei, X.; Rhee, S.H. Improving the IEEE 802.11 power-saving mechanism in the presence of hidden terminals. EURASIP J. Wirel. Commun. Netw. 2016, 2016, 26. [Google Scholar] [CrossRef]

- The Network Simulator ns-3. Available online: https://www.nsnam.org/ (accessed on 5 March 2018).

| Parameters | Value |

|---|---|

| WLAN | IEEE 802.11ax |

| Frequency | 5 GHz |

| Modulation and Coding Scheme (MCS) number | 6 |

| Channel Bandwidth | 40 MHz |

| Data rate | 154.9 Mbps |

| Simulation Time | 10/100 s |

| Guard Interval (GI) | 800 ns |

| Data Payload | 1472 Bytes |

| Distance Between AP and STA | 1.0 m |

| Learning rate () | 0.2, 0.5, 0.9 |

| Discount factor () | 0.2, 0.5, 0.9 |

| Exploration/Exploitation () | 0.5 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Ali, R.; Sohail, M.; Almagrabi, A.O.; Musaddiq, A.; Kim, B.-S. greenMAC Protocol: A Q-Learning-Based Mechanism to Enhance Channel Reliability for WLAN Energy Savings. Electronics 2020, 9, 1720. https://doi.org/10.3390/electronics9101720

Ali R, Sohail M, Almagrabi AO, Musaddiq A, Kim B-S. greenMAC Protocol: A Q-Learning-Based Mechanism to Enhance Channel Reliability for WLAN Energy Savings. Electronics. 2020; 9(10):1720. https://doi.org/10.3390/electronics9101720

Chicago/Turabian StyleAli, Rashid, Muhammad Sohail, Alaa Omran Almagrabi, Arslan Musaddiq, and Byung-Seo Kim. 2020. "greenMAC Protocol: A Q-Learning-Based Mechanism to Enhance Channel Reliability for WLAN Energy Savings" Electronics 9, no. 10: 1720. https://doi.org/10.3390/electronics9101720

APA StyleAli, R., Sohail, M., Almagrabi, A. O., Musaddiq, A., & Kim, B.-S. (2020). greenMAC Protocol: A Q-Learning-Based Mechanism to Enhance Channel Reliability for WLAN Energy Savings. Electronics, 9(10), 1720. https://doi.org/10.3390/electronics9101720