Communications and Driver Monitoring Aids for Fostering SAE Level-4 Road Vehicles Automation

Abstract

1. Introduction

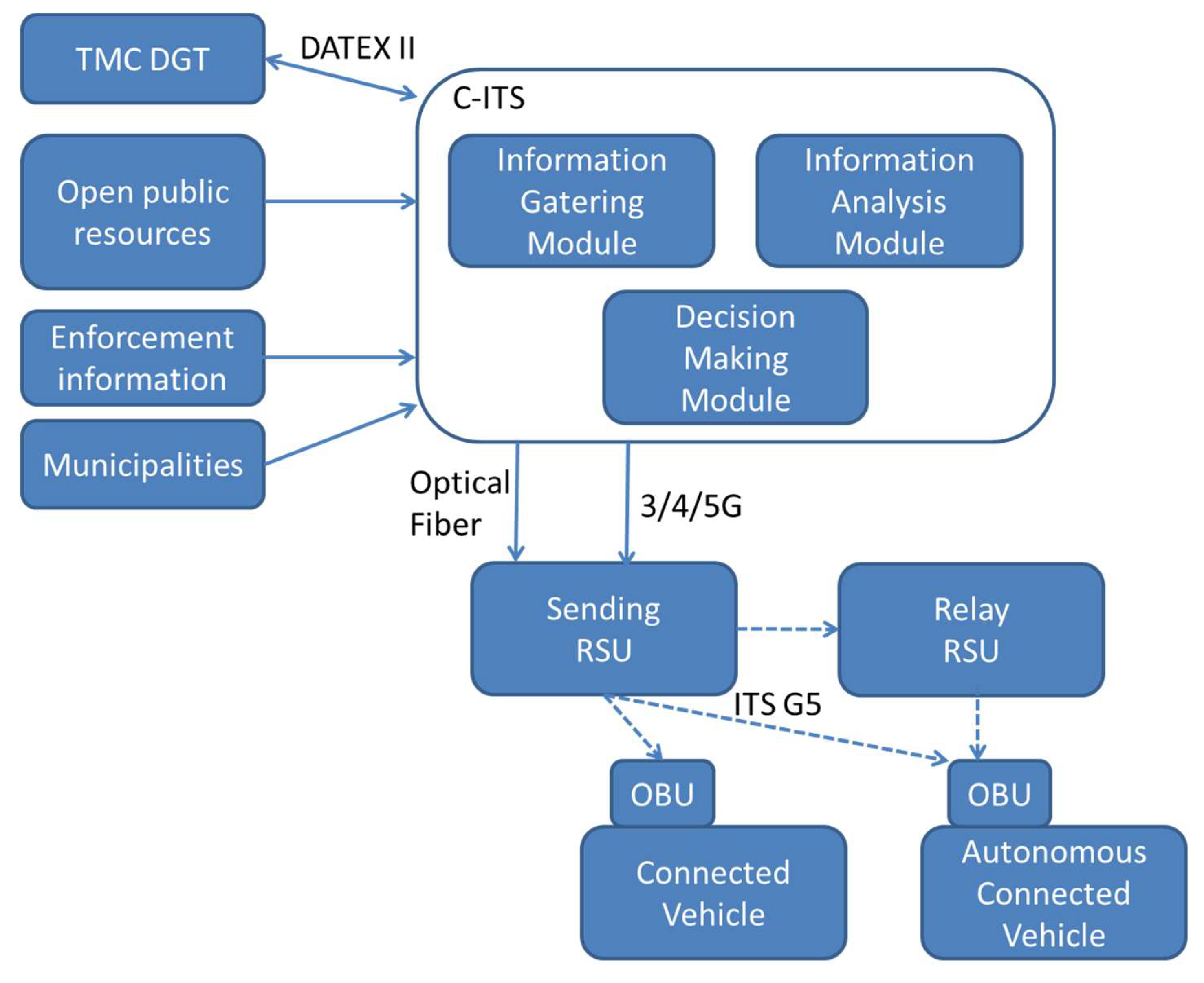

2. C-ITS Development

2.1. C-ITS Architecture

2.2. Deployment at the Test Site

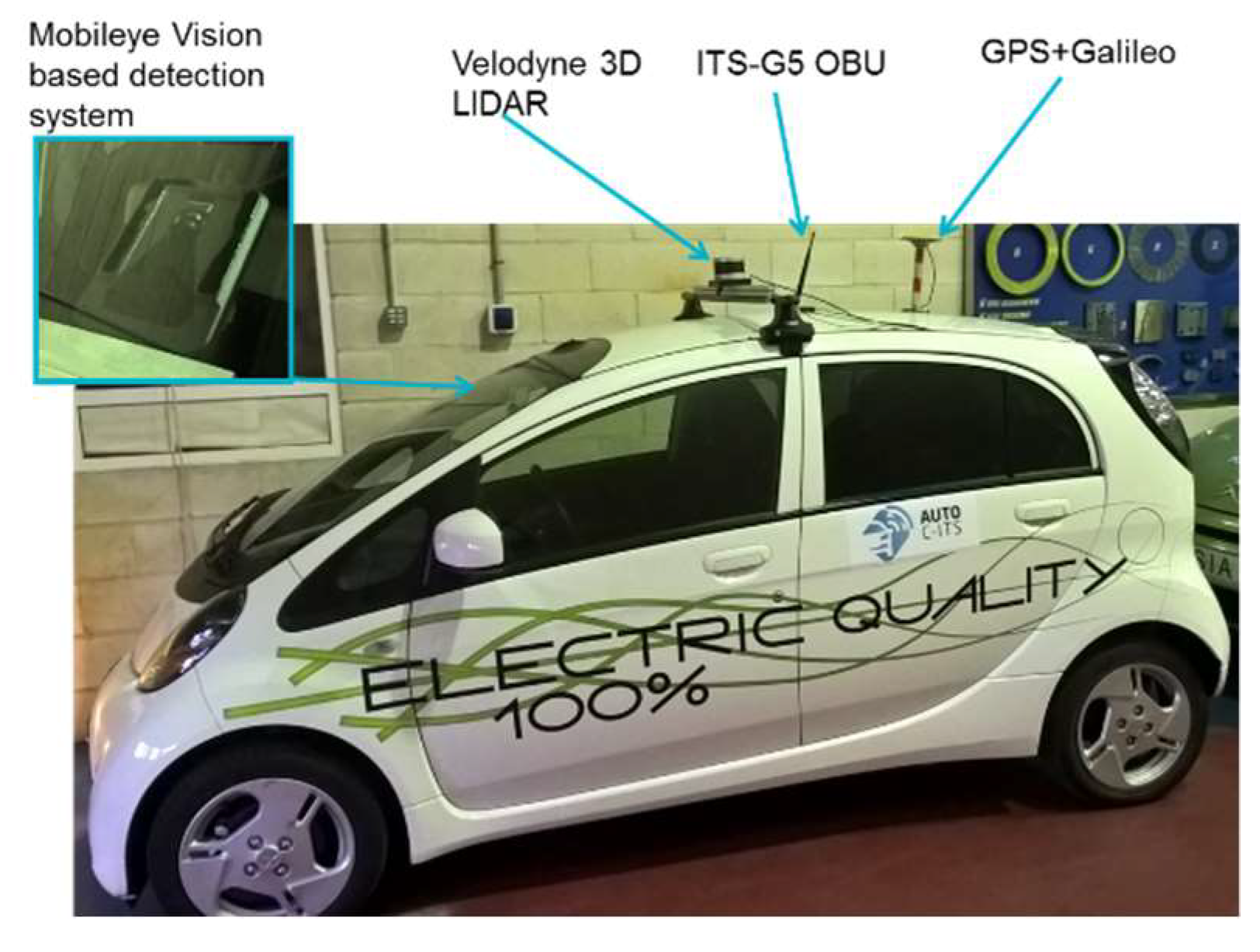

2.3. Automated and Connected Vehicles

3. Transition between Automated and Manual Driving Modes

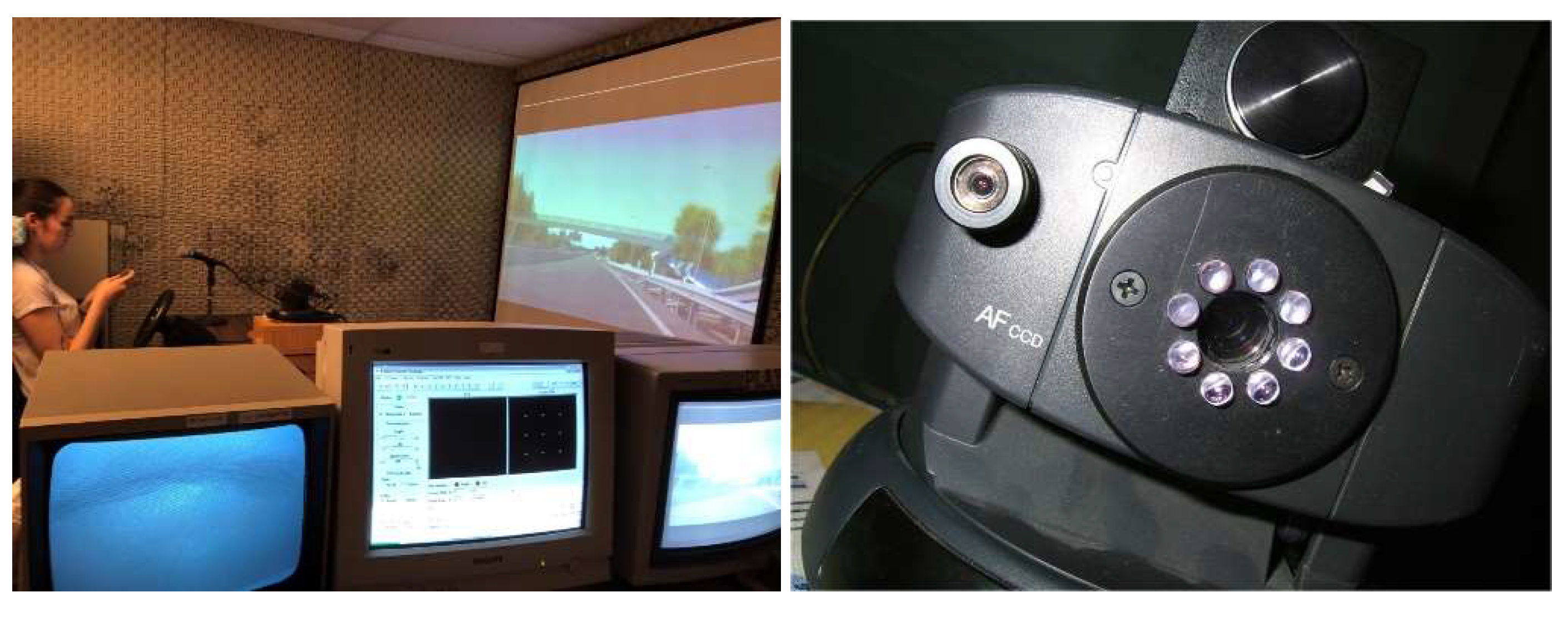

3.1. Study Approach

3.2. Results

- For the time taken to fix the gaze on the area of interest for driving, a difference-of-means contrast was performed. The activation situation has an effect: participants take longer to look when they are relaxed with their eyes closed (1071 ms) than when they are distracted by the mobile phone (881 ms), t(16) = 2.602, p = 0.019, d = 0.48.

- For pupil size, a 2 × 2 × 2 repeated-measures ANOVA was performed (activation situation × type of cognitive task × experimental moment). An effect of the experimental moment was found, F(1, 8) = 27.09, p = 0.001, partial η² = 0.77: pupil diameter was greater while the participant was solving the cognitive task than during driving (average values of 35.3 and 31.1 pixels, respectively). No differences were found by activation situation or by type of task, which suggests that both cognitive tasks (verbal and arithmetical) entailed the same cognitive load for the participant. The results can be seen in Table 2.

- For the reaction time to press the button, a comparison of related means was also made, and statistically significant differences were found for the activation situation factor, t(20) = −2.066, p = 0.05, d = 0.4. When participants are in an activation situation (reading or distracted with their smartphone), their reaction time is greater than when they are relaxed (1492 ms vs. 1306 ms), that is, they respond on average almost 200 ms later. One possible explanation is that the participant has the mobile phone in hand and must deal with it before answering.

- For the reaction time for the cognitive task and the reaction time to the stop signal, a 2 × 2 repeated-measures ANOVA was performed. For the cognitive task, no effects of the activation situation or of the type of cognitive task on reaction time were found. However, when the situation is reading, there are indications that responses to the arithmetical task are slower than responses to the verbal task (2197 vs. 1599 ms, respectively, d = 0.7).

- There are also no statistically significant differences for the two effects studied on the reaction time to the stop signal. However, in the same direction, participants who had been in an activation situation and had performed an arithmetical task took on average 198 ms longer to brake before the stop signal than those who had also been in an activation situation but had read a word (d = 0.38).
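The two kinds of statistic reported in these results can be illustrated with a minimal Python sketch. It is not the authors' analysis code: the function names are hypothetical, and only the standard relations for a paired-samples t statistic and for partial η² computed from an F ratio are assumed.

```python
import math
import statistics


def paired_t(first, second):
    """Return (t, degrees of freedom) for a paired-samples comparison.

    `first` and `second` hold one measurement per participant under each
    condition, in the same participant order.
    """
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need two equally long samples with at least 2 pairs")
    diffs = [a - b for a, b in zip(first, second)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1)
    if sd_diff == 0:
        raise ValueError("all paired differences are identical; t is undefined")
    n = len(diffs)
    return mean_diff / (sd_diff / math.sqrt(n)), n - 1


def partial_eta_squared(f_value, df_effect, df_error):
    """Recover partial eta squared from a reported F ratio and its dfs."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)
```

As a consistency check, `partial_eta_squared(27.09, 1, 8)` evaluates to about 0.77, which agrees with the effect size reported for the experimental moment.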

3.3. Discussion

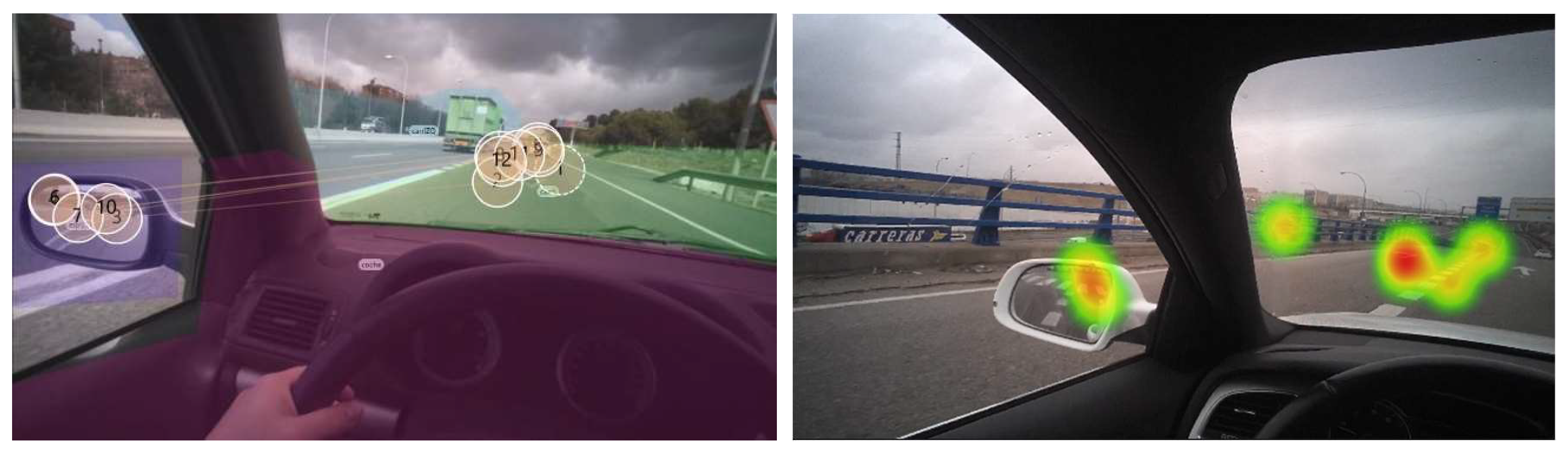

4. Driver Behaviour during a Safe-Critical Manoeuvre When Regaining Vehicle Control

4.1. Study Approach

4.2. Results

4.3. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Task | Time Interval (min) |
|---|---|
| Reading or relaxing (eyes closed) | 1–9 |
| Beep sound | |
| Press button | |
| Cognitive task | |
| Driving task | 0.1–0.5 |
| Stop signal | |

| Initial driver situation | Cognitive task: Verbal | Cognitive task: Arithmetic |
|---|---|---|
| Reading | 34.8 | 37.1 |
| Relaxing | 34.1 | 35.3 |

| Variables | Description | Units |
|---|---|---|
| Gaze direction | Gazing direction for both eyes. | millimetre |
| Gaze position | Gaze position within the boundaries of the recording frame. | Up-left [0, 0] to bottom-right [1, 1] |
| Gaze position 3D | Where the pupil is located in 3D. | millimetre |
| Pupil diameter (Left and right) | Two variables with the diameter of each left and right pupil. | millimetre |
| Pupil centre (Left and right) | Two variables with the centre position for each left and right pupil. | millimetre |
| Gyroscope | The angular speed on each of the three axes. | degrees per second |
| Video | The video recorded. | fps |
| Sampling rate | | 50 Hz |
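The gaze position in the table above is normalized to the recording frame, so it has to be scaled before it can be compared with an area of interest drawn on the video. The following is a minimal sketch of that step, assuming a frame size passed in by the caller and a rectangular area of interest. Both function names and the rectangle representation are hypothetical and are not part of the eye tracker's API.

```python
def gaze_to_pixels(gaze_x, gaze_y, frame_width, frame_height):
    """Scale a normalized gaze position to pixel coordinates.

    (0, 0) is the upper-left corner of the frame and (1, 1) the
    bottom-right corner. Returns None when the sample lies outside
    the frame.
    """
    if not (0.0 <= gaze_x <= 1.0 and 0.0 <= gaze_y <= 1.0):
        return None
    return gaze_x * frame_width, gaze_y * frame_height


def in_area_of_interest(point, area):
    """Check whether a pixel point falls inside a rectangular area.

    `area` is (left, top, right, bottom) in pixels; this rectangle
    format is an assumption made for illustration.
    """
    if point is None:
        return False
    x, y = point
    left, top, right, bottom = area
    return left <= x <= right and top <= y <= bottom
```

For example, `gaze_to_pixels(0.5, 0.5, 1920, 1080)` maps the centre of the normalized range to the centre of a 1920 × 1080 frame. The frame size here is only an example value.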

| Area of interest | Significant difference in total duration? | Significant difference in number of fixations? | Significant difference in duration of first fixation? |
|---|---|---|---|
| Lane | Yes (baseline higher) | No | Yes (baseline lower) |
| Left lane | No | No | Yes (baseline lower) |
| Left rear screen | Yes (baseline lower) | Yes (baseline lower) | Yes (baseline lower) |
| Vehicle | No | No | No |

| Areas of interest compared | Significant difference in total duration? | Significant difference in number of fixations? |
|---|---|---|
| Lane vs. Left lane | Yes (Lane > Left lane) | No |
| Lane vs. Left rear screen | No | No |
| Lane vs. Vehicle | Yes (Lane > Vehicle) | Yes (Lane > Vehicle) |
| Left lane vs. Left rear screen | No | Yes (Left lane < Left rear screen) |
| Left lane vs. Vehicle | No | Yes (Left lane > Vehicle) |
| Left rear screen vs. Vehicle | Yes (Left rear screen > Vehicle) | Yes (Left rear screen > Vehicle) |

| Drivers | Average Pupil Diameter (mm) | |||

|---|---|---|---|---|

| Merging Scenario | Baseline Scenario | |||

| Left Eye | Right Eye | Left Eye | Right Eye | |

| A | 2.7116 | 2.7000 | 2.6261 | 2.5709 |

| B | 1.8747 | 1.8254 | 1.8866 | 1.8759 |

| C | 2.4157 | 2.3367 | 2.3192 | 2.2646 |

| D | 2.4898 | 2.5596 | 2.4603 | 2.4523 |

| E | 3.4948 | 3.4692 | 2.4089 | 2.4337 |

| F | 2.4801 | 2.4552 | 2.2878 | 2.2364 |

| G | 2.0994 | 2.0931 | 1.9586 | 1.9615 |

| H | 1.8746 | 1.8499 | 1.8318 | 1.7699 |
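The per-driver averages above can be summarized with a short descriptive calculation. The sketch below averages the two eyes for each driver and subtracts the baseline value from the merging value. Averaging the eyes is an assumption made here for illustration, the variable and function names are hypothetical, and the result is a descriptive comparison, not a significance test.

```python
# Average pupil diameters in mm, copied from the table:
# (merging left, merging right, baseline left, baseline right)
PUPIL_MM = {
    "A": (2.7116, 2.7000, 2.6261, 2.5709),
    "B": (1.8747, 1.8254, 1.8866, 1.8759),
    "C": (2.4157, 2.3367, 2.3192, 2.2646),
    "D": (2.4898, 2.5596, 2.4603, 2.4523),
    "E": (3.4948, 3.4692, 2.4089, 2.4337),
    "F": (2.4801, 2.4552, 2.2878, 2.2364),
    "G": (2.0994, 2.0931, 1.9586, 1.9615),
    "H": (1.8746, 1.8499, 1.8318, 1.7699),
}


def merging_minus_baseline(row):
    """Difference between the two-eye means of the two scenarios (mm)."""
    merging_left, merging_right, baseline_left, baseline_right = row
    merging_mean = (merging_left + merging_right) / 2
    baseline_mean = (baseline_left + baseline_right) / 2
    return merging_mean - baseline_mean


differences = {driver: merging_minus_baseline(row)
               for driver, row in PUPIL_MM.items()}
# Drivers whose mean pupil diameter is larger in the merging scenario.
larger_in_merging = sorted(driver for driver, diff in differences.items()
                           if diff > 0)
```

With the values in the table, seven of the eight drivers (all except B) have a larger two-eye mean in the merging scenario than in the baseline scenario.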

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Jiménez, F.; Naranjo, J.E.; Sánchez, S.; Serradilla, F.; Pérez, E.; Hernández, M.J.; Ruiz, T. Communications and Driver Monitoring Aids for Fostering SAE Level-4 Road Vehicles Automation. Electronics 2018, 7, 228. https://doi.org/10.3390/electronics7100228