A Miniature Data Repository on a Raspberry Pi

Abstract

1. Introduction

2. Related Work

3. The Airchive System

3.1. Objectives

3.2. Requirements

- (a) Web users access the system over the Internet through a public webpage. They explore current or historical Airchive data and are mainly interested in graphical representations of the content. Typically, a Web user queries the data stored in Airchive, and the system responds with a graph of the requested data. Web users may also download data in common formats, such as JSON (JavaScript Object Notation), CSV (comma-separated values), GeoJSON [24] and GeoRSS [25].

- (b) Software agents interact with Airchive to retrieve data or harvest metadata. They may use different protocols and vocabularies to submit their requests: one agent may follow the SOS protocol to retrieve raw time-series data, while another may use OAI-PMH to obtain metadata about the stored digital resources. Software agents interact with the system through RESTful (Representational State Transfer) Web services [26] over HTTP.

- (c) The system owner has full access, both locally and from the Internet via Secure Shell (SSH). She administers the system by updating system software or restarting the device.
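As an illustration of the download formats mentioned for Web users, the following minimal sketch serializes a few observations to JSON, CSV and GeoJSON using only the Python standard library. The sample observations, the field names (`time`, `property`, `value`) and the station coordinates are hypothetical and are not taken from the Airchive code base; only the GeoJSON layout (a `FeatureCollection` of `Point` features with longitude first) follows RFC 7946 [24].

```python
import csv
import io
import json

# Hypothetical sample observations; field names are illustrative only.
OBSERVATIONS = [
    {"time": "2016-12-12T10:00:00Z", "property": "temperature", "value": 21.5},
    {"time": "2016-12-12T10:05:00Z", "property": "temperature", "value": 21.7},
]

# Hypothetical station position as (longitude, latitude), the order GeoJSON expects.
STATION_LON_LAT = (5.66, 51.97)


def to_json(observations):
    """Serialize the observations as a plain JSON array."""
    return json.dumps(observations, indent=2)


def to_csv(observations):
    """Serialize the observations as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["time", "property", "value"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(observations)
    return buffer.getvalue()


def to_geojson(observations, lon_lat):
    """Wrap each observation in a GeoJSON Point feature at the station position."""
    features = [
        {
            "type": "Feature",
            "geometry": {"type": "Point", "coordinates": list(lon_lat)},
            "properties": dict(obs),
        }
        for obs in observations
    ]
    return json.dumps({"type": "FeatureCollection", "features": features}, indent=2)


if __name__ == "__main__":
    print(to_csv(OBSERVATIONS))
    print(to_geojson(OBSERVATIONS, STATION_LON_LAT))
```

In a sketch like this, all formats are derived from the same in-memory records, so supporting a further format such as GeoRSS [25] would only require one more serializer function.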

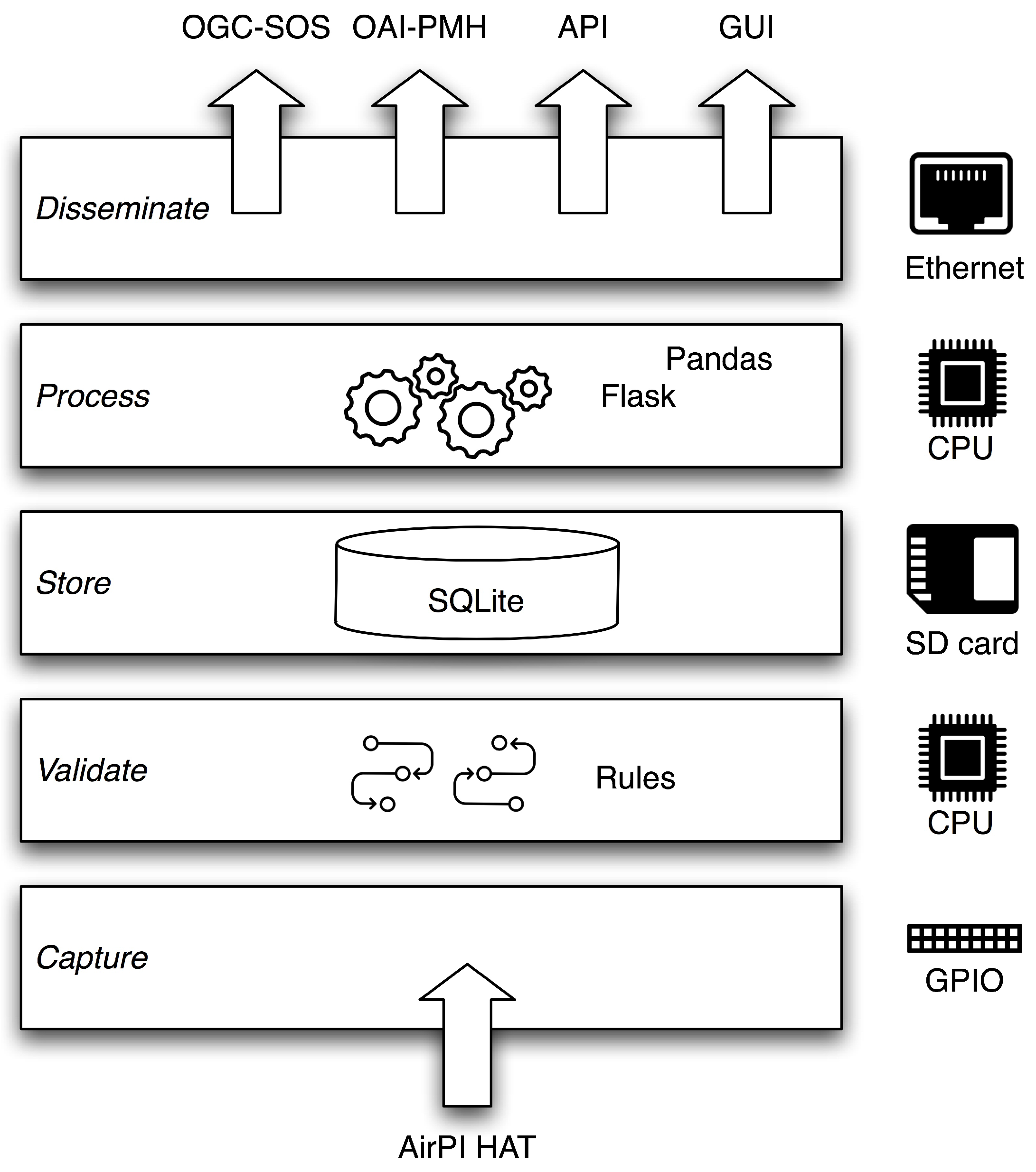
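The requests issued by software agents can be sketched as plain HTTP GET URLs. The example below builds one request in the key-value-pair style of SOS 2.0 (`GetObservation`) and one OAI-PMH request (`ListRecords` with the `oai_dc` metadata prefix). The host name and the `/sos` and `/oai` paths are assumptions made for illustration; the actual Airchive endpoints may differ.

```python
from urllib.parse import urlencode

# Hypothetical base URL; not the address of a real Airchive deployment.
BASE_URL = "http://airchive.example.org"


def sos_get_observation_url(base_url, observed_property):
    """Build an SOS 2.0 GetObservation request in key-value-pair form.

    The '/sos' path is an assumption for this sketch.
    """
    params = {
        "service": "SOS",
        "version": "2.0.0",
        "request": "GetObservation",
        "observedProperty": observed_property,
    }
    return f"{base_url}/sos?{urlencode(params)}"


def oai_pmh_list_records_url(base_url, metadata_prefix="oai_dc"):
    """Build an OAI-PMH ListRecords request for metadata harvesting.

    The '/oai' path is an assumption for this sketch.
    """
    params = {"verb": "ListRecords", "metadataPrefix": metadata_prefix}
    return f"{base_url}/oai?{urlencode(params)}"


if __name__ == "__main__":
    print(sos_get_observation_url(BASE_URL, "temperature"))
    print(oai_pmh_list_records_url(BASE_URL))
```

An agent would fetch these URLs with any HTTP client and parse the XML responses according to the respective protocol.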

3.3. Abstract Architectural Design

4. Implementation

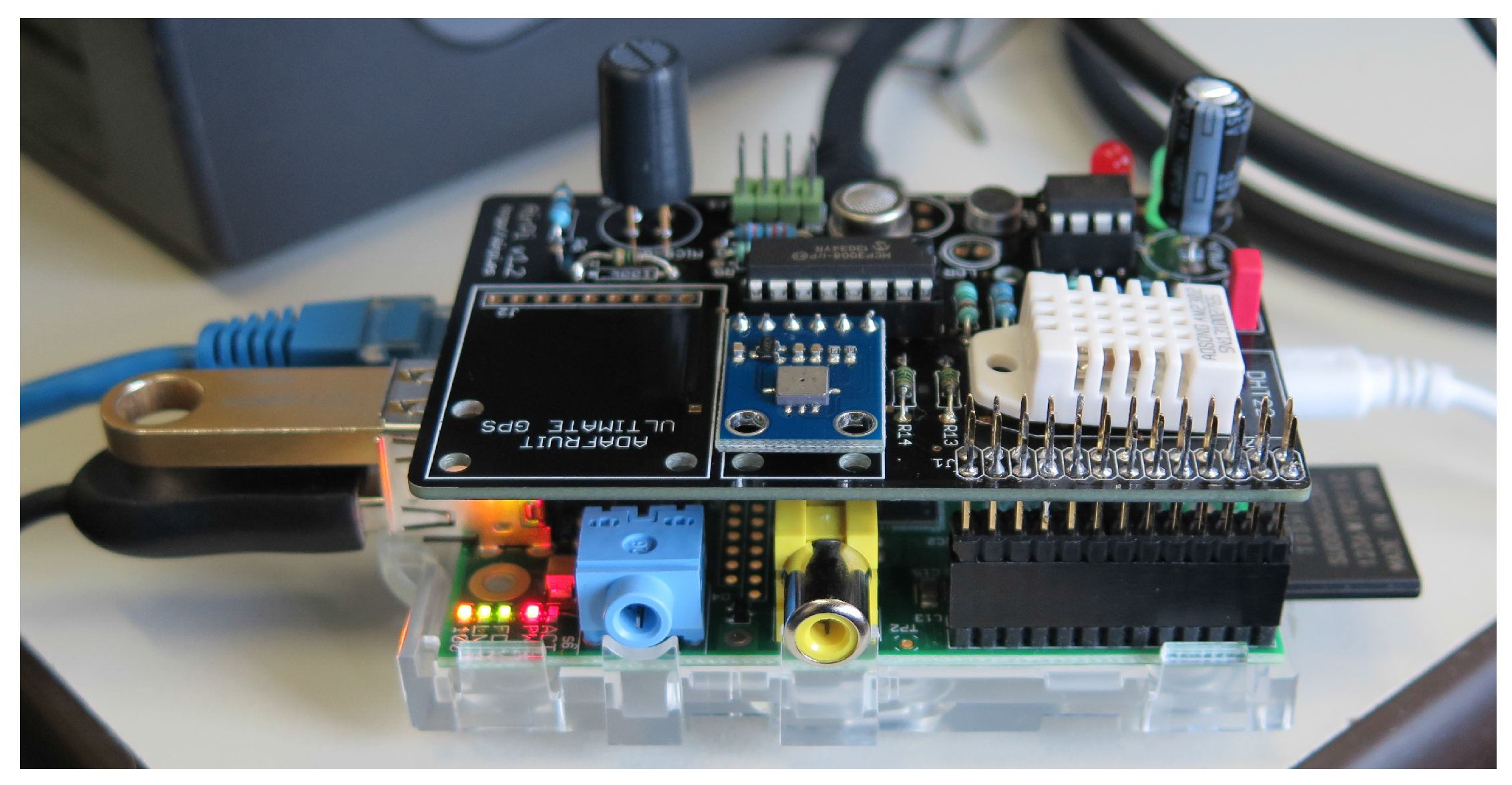

4.1. Hardware

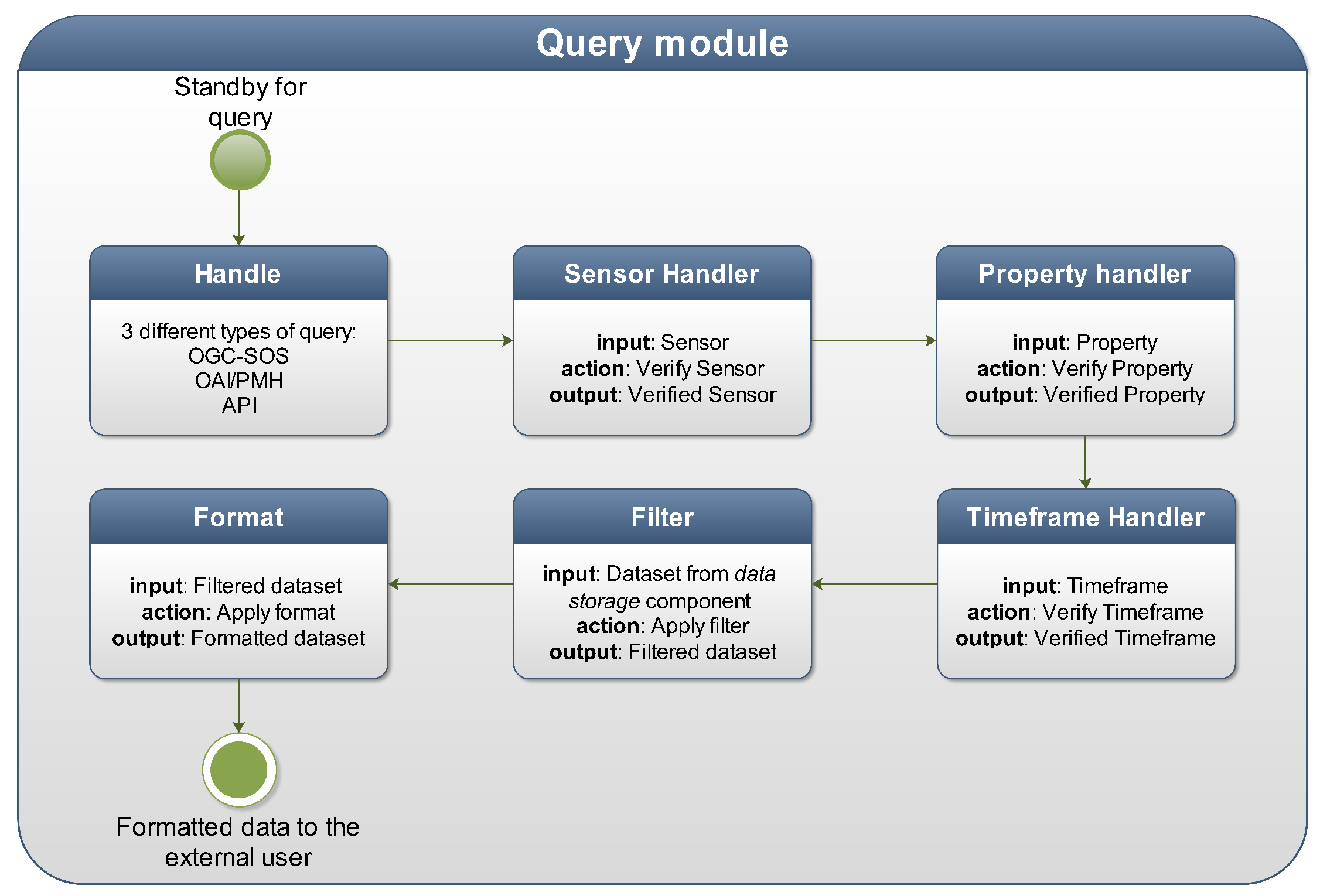

4.2. Software Development

5. Demonstration

5.1. Experimental Design

5.2. Experiment 1: Autonomy and Robustness

5.3. Experiment 2: Stress Testing

5.4. Incidents and Lessons Learned

6. Discussion

7. Conclusions

Supplementary Materials

Acknowledgments

Author Contributions

Conflicts of Interest

References

- Upton, E.; Halfacree, G. Raspberry Pi User Guide; John Wiley & Sons: Hoboken, NJ, USA, 2014.

- Nuttall, B. Top 10 Raspberry Pi Add-on Boards. 2016. Available online: https://opensource.com/life/16/7/top-10-Raspberry-Pi-boards (accessed on 12 December 2016).

- Vujović, V.; Maksimović, M. Raspberry Pi as a Sensor Web node for home automation. Comput. Electr. Eng. 2015, 44, 153–171.

- Bahrudin, B.; Saifudaullah, M.; Abu Kassim, R.; Buniyamin, N. Development of Fire Alarm System using Raspberry Pi and Arduino Uno. In Proceedings of the International Conference on Electrical, Electronics and System Engineering (ICEESE), Kuala Lumpur, Malaysia, 4–5 December 2013; pp. 43–48.

- Sapes, J.; Solsona, F. FingerScanner: Embedding a Fingerprint Scanner in a Raspberry Pi. Sensors 2016, 16, 220.

- Chowdhury, M.N.; Nooman, M.S.; Sarker, S. Access Control of Door and Home Security by Raspberry Pi Through Internet. Int. J. Sci. Eng. Res. 2013, 4, 550–558.

- Schön, A.; Streit-Juotsa, L.; Schumann-Bölsche, D. Raspberry Pi and Sensor Networking for African Health Supply Chains. In Proceedings of the 6th International Conference on Operations and Supply Chain Management, Bali, Indonesia, 10–12 December 2014.

- Jung, M.; Weidinger, J.; Kastner, W.; Olivieri, A. Building automation and smart cities: An integration approach based on a service-oriented architecture. In Proceedings of the 27th International Conference on Advanced Information Networking and Applications Workshops (WAINA), Barcelona, Spain, 25–28 March 2013; pp. 1361–1367.

- Leccese, F.; Cagnetti, M.; Trinca, D. A Smart City Application: A Fully Controlled Street Lighting Isle Based on Raspberry-Pi Card, a ZigBee Sensor Network and WiMAX. Sensors 2014, 14, 24408–24424.

- Cagnetti, M.; Leccese, F.; Trinca, D. A New Remote and Automated Control System for the Vineyard Hail Protection Based on ZigBee Sensors, Raspberry-Pi Electronic Card and WiMAX. J. Agric. Sci. Technol. B 2013, 3, 853.

- Nikhade, S.G. Wireless sensor network system using Raspberry Pi and ZigBee for environmental monitoring applications. In Proceedings of the International Conference on Smart Technologies and Management for Computing, Communication, Controls, Energy and Materials (ICSTM), Chennai, India, 6–8 May 2015; pp. 376–381.

- Tanenbaum, J.; Williams, A.; Desjardins, A.; Tanenbaum, K. Democratizing technology: Pleasure, utility and expressiveness in DIY and maker practice. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Paris, France, 27 April–2 May 2013; pp. 2603–2612.

- Lanfranchi, V.; Ireson, N.; Wehn, U.; Wrigley, S.; Ciravegna, F. Citizens’ observatories for situation awareness in flooding. In Proceedings of the 11th International Conference on Information Systems for Crisis Response and Management (ISCRAM), Pennsylvania State University, University Park, PA, USA, 17 May 2014; pp. 145–154.

- Muller, C.; Chapman, L.; Johnston, S.; Kidd, C.; Illingworth, S.; Foody, G.; Overeem, A.; Leigh, R. Crowdsourcing for climate and atmospheric sciences: Current status and future potential. Int. J. Climatol. 2015, 35, 3185–3203.

- Chang, F.C.; Huang, H.C. A survey on intelligent sensor network and its application. J. Netw. Intell. 2016, 1, 1–15.

- Dargie, W.; Poellabauer, C. Fundamentals of Wireless Sensor Networks: Theory and Practice; John Wiley & Sons: Hoboken, NJ, USA, 2010.

- Ferdoush, S.; Li, X. Wireless sensor network system design using Raspberry Pi and Arduino for environmental monitoring applications. Procedia Comput. Sci. 2014, 34, 103–110.

- Lewis, A.; Campbell, M.; Stavroulakis, P. Performance evaluation of a cheap, open source, digital environmental monitor based on the Raspberry Pi. Measurement 2016, 87, 228–235.

- Oetiker, T. RRDtool. 2014. Available online: http://oss.oetiker.ch/rrdtool/ (accessed on 12 December 2016).

- Moure, D.; Torres, P.; Casas, B.; Toma, D.; Blanco, M.J.; Del Río, J.; Manuel, A. Use of Low-Cost Acquisition Systems with an Embedded Linux Device for Volcanic Monitoring. Sensors 2015, 15, 20436–20462.

- Samourkasidis, A.; Athanasiadis, I.N. Airchive Software. 2016. Available online: https://github.com/ecologismico/airchive (accessed on 12 December 2016).

- Open Geospatial Consortium. OGC Sensor Observation Service 2.0; Implementation Standard 12-006; Open Geospatial Consortium: Wayland, MA, USA, 2012.

- Lagoze, C.; Van de Sompel, H. The Open Archives Initiative: Building a low-barrier interoperability framework. In Proceedings of the 1st ACM/IEEE-CS Joint Conference on Digital Libraries, Roanoke, VA, USA, 24–28 June 2001; ACM: New York, NY, USA, 2001; pp. 54–62.

- Butler, H.; Daly, M.; Doyle, A.; Gillies, S.; Hagen, S.; Schaub, T. The GeoJSON Format; RFC 7946; The Internet Engineering Task Force: 2016. Available online: http://www.rfc-editor.org/info/rfc7946 (accessed on 12 December 2016).

- GeoRSS: Geographically Encoded Objects for RSS Feeds. 2014. Available online: http://www.georss.org (accessed on 12 December 2016).

- Richardson, L.; Ruby, S. RESTful Web Services; O’Reilly Media, Inc.: Sebastopol, CA, USA, 2008.

- Open Geospatial Consortium. Observations and Measurements - XML Implementation; Implementation Standard 10-025r1; Open Geospatial Consortium: Wayland, MA, USA, 2011.

- Open Geospatial Consortium. OGC SensorML: Model and XML; Encoding Standard 12-000; Open Geospatial Consortium: Wayland, MA, USA, 2014.

- Dublin Core Metadata Initiative (DCMI) Metadata Terms. Available online: http://dublincore.org/documents/dcmi-terms/ (accessed on 12 December 2016).

- Samourkasidis, A.; Athanasiadis, I.N. Towards a low-cost, full-service air quality data archival system. In Proceedings of the 7th International Congress on Environmental Modelling and Software, International Environmental Modelling and Software Society (iEMSs), San Diego, CA, USA, 15–19 June 2014.

- Perera, C.; Zaslavsky, A.; Christen, P.; Georgakopoulos, D. Sensing as a service model for smart cities supported by Internet of Things. Trans. Emerg. Telecommun. Technol. 2014, 25, 81–93.

- Athanasiadis, I.N.; Mitkas, P.A. Knowledge discovery for operational decision support in air quality management. J. Environ. Inform. 2007, 9, 100–107.

- Athanasiadis, I.N.; Milis, M.; Mitkas, P.A.; Michaelides, S.C. A multi-agent system for meteorological radar data management and decision support. Environ. Model. Softw. 2009, 24, 1264–1273.

- Athanasiadis, I.; Rizzoli, A.; Beard, D. Data Mining Methods for Quality Assurance in an Environmental Monitoring Network. In Proceedings of the 20th International Conference on Artificial Neural Networks (ICANN 2010), Thessaloniki, Greece, 15–18 September 2010; Lecture Notes in Computer Science; Springer: Thessaloniki, Greece, 2010; Volume 6354, pp. 451–456.

- Athanasiadis, I.N.; Mitkas, P.A. An agent-based intelligent environmental monitoring system. Manag. Environ. Qual. 2004, 15, 238–249.

- Dayan, A.; Hartley, T. AirPi. 2013. Available online: http://airpi.es (accessed on 12 December 2016).

- Free Software Foundation. GNU Affero General Public License. 2007. Available online: https://www.gnu.org/licenses/agpl.html (accessed on 12 December 2016).

- Hartley, T. AirPi Software. 2013. Available online: https://github.com/tomhartley/AirPi (accessed on 12 December 2016).

- sqlite3—DB-API 2.0 Interface for SQLite Databases. 2016. Available online: https://docs.python.org/2/library/sqlite3.html (accessed on 12 December 2016).

- Bayer, M. SQLAlchemy: The Python SQL Toolkit and Object Relational Mapper. 2016. Available online: http://www.sqlalchemy.org (accessed on 12 December 2016).

- McKinney, W. pandas: A Foundational Python Library for Data Analysis and Statistics. In Proceedings of the Workshop Python for High Performance and Scientific Computing (SC11), Seattle, WA, USA, 18 November 2011.

- Ronacher, A. Jinja2. 2008. Available online: http://jinja.pocoo.org (accessed on 12 December 2016).

- Ronacher, A. Flask. 2010. Available online: http://flask.pocoo.org (accessed on 12 December 2016).

- Laursen, O.; Schnur, D. Flot: Attractive JavaScript Plotting for jQuery. 2007. Available online: http://www.flotcharts.org (accessed on 12 December 2016).

- jQuery. 2016. Available online: https://jquery.com (accessed on 12 December 2016).

- Raskin, R.G.; Pan, M.J. Knowledge representation in the semantic web for Earth and environmental terminology (SWEET). Comput. Geosci. 2005, 31, 1119–1125.

- UptimeRobot. 2016. Available online: https://uptimerobot.com (accessed on 12 December 2016).

- Heyman, J.; Hamrén, J.; Byström, C.; Heyman, H. Locust: An Open Source Load Testing Tool. 2011. Available online: http://locust.io (accessed on 12 December 2016).

- Sumsal, F.; Brester, S.G.; Szépe, V.; Halchenko, Y. Fail2ban. 2005. Available online: http://www.fail2ban.org/ (accessed on 12 December 2016).

- SD Association. 2016. Available online: https://www.sdcard.org (accessed on 12 December 2016).

| Sensor Name | Observed Property | Type | Interface |
|---|---|---|---|
| DHT22 | Relative humidity, Temperature | Digital | SPI |
| BMP085 | Atmospheric pressure, Temperature | Digital | SPI |
| MICS-2710 | Nitrogen dioxide | Analog | I2C |
| MICS-5525 | Carbon monoxide | Analog | I2C |

| Metric | Duration |
|---|---|
| Total downtime | 10 days |
| Median downtime | 7 min |
| Average downtime | 543.6 min |
| St. dev. downtime | 2572.4 min |

| Concurrent Clients | Test 1: Static HTML, ART (std) | Test 1: Static HTML, RPS (std) | Test 2: API/JSON, ART (std) | Test 2: API/JSON, RPS (std) | Test 3: SOS/XML, ART (std) | Test 3: SOS/XML, RPS (std) |
|---|---|---|---|---|---|---|
| 1 | 66.4 (0.5) | 16.2 (0.7) | 1830 (27) | 0.55 (0.01) | 2171 (64) | 0.46 (0.01) |
| 5 | 344 (11) | 15.4 (0.6) | 9467 (178) | 0.52 (0.01) | 11,125 (90) | 0.44 (0.00) |
| 10 | 662 (7) | 15.4 (0.3) | 18,874 (397) | 0.51 (0.01) | 21,651 (281) | 0.44 (0.01) |
| 25 | 1576 (25) | 16.6 (0.7) | 45,607 (410) | 0.49 (0.01) | 49,427 (935) | 0.45 (0.01) |
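The two metrics in the stress-test table can be cross-checked against each other. The sketch below assumes that ART is an average response time in milliseconds, that RPS is requests per second, and that each simulated client issues its next request as soon as the previous one returns; none of these assumptions is stated in the table itself. Under them, Little's law gives an expected throughput of clients divided by response time. The static HTML values are copied from the table above.

```python
# Static HTML results copied from the table: clients -> (ART, measured RPS).
# ART is assumed to be in milliseconds.
STATIC_HTML = {
    1: (66.4, 16.2),
    5: (344.0, 15.4),
    10: (662.0, 15.4),
    25: (1576.0, 16.6),
}


def estimated_rps(clients, art_ms):
    """Little's law for clients without wait time: throughput = clients / response time."""
    return clients / (art_ms / 1000.0)


def relative_error(estimate, measured):
    """Relative deviation of the estimate from the measured value."""
    return abs(estimate - measured) / measured


if __name__ == "__main__":
    for clients, (art_ms, measured) in STATIC_HTML.items():
        estimate = estimated_rps(clients, art_ms)
        print(
            f"{clients:>2} clients: estimated {estimate:.1f} RPS, "
            f"measured {measured} RPS "
            f"({relative_error(estimate, measured):.0%} off)"
        )
```

If the assumptions hold, the estimate stays close to the measured throughput at every load level, which is consistent with a server that saturates at a roughly constant request rate while response time grows with the number of clients.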

| User Types | Test 1: Static HTML | Test 2: API/JSON | Test 3: SOS/XML |
|---|---|---|---|
| Human Web users (response in less than 6 s) | 82 | 3 | 2 |
| Software agents (response guaranteed) | 254 | 141 | 138 |

© 2016 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Samourkasidis, A.; Athanasiadis, I.N. A Miniature Data Repository on a Raspberry Pi. Electronics 2017, 6, 1. https://doi.org/10.3390/electronics6010001