1. Introduction

Channel coding plays a fundamental role in modern digital communication systems by improving transmission reliability through the introduction of structured redundancy without significantly increasing bandwidth requirements. In wireless communication environments, transmitted signals are inevitably affected by various impairments such as additive noise, multipath fading, interference, and hardware non-idealities. These impairments directly degrade bit error rate (BER) performance and may severely limit system throughput and quality of service. Consequently, efficient channel coding and decoding techniques are essential for ensuring reliable data transmission in high-speed communication systems.

Over the past several decades, a variety of forward error correction (FEC) techniques have been proposed and adopted in communication standards, including convolutional codes, Reed–Solomon codes, low-density parity-check (LDPC) codes, and Turbo codes. Among these schemes, Turbo codes have attracted considerable attention due to their near-Shannon-limit error-correction capability [1]. Since their introduction in the early 1990s, Turbo codes have been widely applied in numerous wireless communication standards, including 3G, LTE, and satellite communication systems. Their excellent error-correction performance enables reliable transmission even under relatively low signal-to-noise ratio (SNR) conditions, making them a cornerstone technology for modern digital communications.

Turbo coding is a high-performance error correction scheme based on the parallel concatenation of two recursive systematic convolutional (RSC) encoders connected through an interleaver. The interleaver randomizes the input data sequence, effectively reducing correlation between encoded symbols and enabling improved decoding performance [2]. At the receiver side, decoding is performed through an iterative process in which two component decoders exchange soft reliability information. During each iteration, the reliability estimates of information bits are progressively refined through the exchange of extrinsic information between the component decoders. This iterative soft-information exchange mechanism significantly improves the probability of correct decoding and allows Turbo codes to approach the theoretical channel capacity limit [1].
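To make the encoder structure concrete, the following Python sketch builds a rate-1/3 parallel concatenated encoder from two identical RSC encoders and an interleaver, plus a simple rate-1/2 puncturing pattern. The generator polynomials (feedback 7, parity 5 in octal), the absence of trellis termination, the caller-supplied permutation, and the alternating puncturing pattern are illustrative assumptions, not parameters taken from this design.

```python
# Illustrative PCCC (Turbo) encoder. Assumed, not taken from this design:
# memory-2 RSC with feedback 1 + D + D^2 (octal 7) and parity 1 + D^2 (octal 5),
# no trellis termination, a caller-supplied interleaver permutation, and an
# alternating rate-1/2 puncturing pattern.

def rsc_encode(bits):
    """Parity sequence of a memory-2 recursive systematic convolutional encoder."""
    s1 = s2 = 0                      # shift-register contents
    parity = []
    for u in bits:
        a = u ^ s1 ^ s2              # recursive feedback bit
        parity.append(a ^ s2)        # feedforward taps 1 + D^2
        s1, s2 = a, s1
    return parity


def turbo_encode(bits, perm):
    """Rate-1/3 output: systematic bits, RSC1 parity, RSC2 parity on interleaved bits."""
    interleaved = [bits[i] for i in perm]
    return list(bits), rsc_encode(bits), rsc_encode(interleaved)


def puncture(sys_bits, p1, p2):
    """Rate-1/2: keep every systematic bit and alternate between p1 and p2 parity."""
    out = []
    for k, u in enumerate(sys_bits):
        out += [u, p1[k] if k % 2 == 0 else p2[k]]
    return out


sys_bits, p1, p2 = turbo_encode([1, 0, 0, 0], perm=[3, 2, 1, 0])
print(p1, p2)                         # [1, 1, 1, 0] [0, 0, 0, 1]
print(puncture(sys_bits, p1, p2))     # [1, 1, 0, 0, 0, 1, 0, 1]
```

The impulse response [1, 1, 1, 0] of the first encoder matches the expansion of (1 + D^2)/(1 + D + D^2), which is a quick sanity check on the feedback wiring.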

Although Turbo codes provide excellent theoretical performance, their practical implementation presents several challenges, particularly in hardware systems. The iterative decoding process requires repeated computations of path metrics, branch metrics, and soft reliability values, which introduces significant computational complexity. In addition, the iterative exchange of extrinsic information requires large amounts of memory access and data movement between component decoders. These factors increase hardware resource consumption and power dissipation, especially in real-time communication systems where high throughput is required.

In practical communication devices such as baseband processors, channel coding and decoding modules must operate under strict constraints in terms of latency, power consumption, and hardware resources. Baseband chips used in modern communication systems integrate multiple signal processing modules, including modulation, channel estimation, equalization, and error-correction decoding. Among these modules, the Turbo decoder often represents one of the most computationally intensive components. Therefore, the efficiency of the Turbo codec architecture directly influences overall system performance, silicon area, and energy efficiency. In addition to algorithmic design, accurate circuit modeling and simulation techniques are also essential for evaluating high-speed digital systems and ensuring reliable hardware behavior under various operating conditions [3].

In hardware implementations, Turbo decoding algorithms such as MAP, Log-MAP, Max-Log-MAP, and SOVA (Soft Output Viterbi Algorithm) have been extensively studied. MAP-based algorithms generally provide excellent decoding performance but require complex logarithmic and exponential computations, which are expensive to implement in hardware. Simplified variants such as Max-Log-MAP reduce computational complexity but still require significant arithmetic operations and memory resources. In contrast, the SOVA algorithm offers a favorable trade-off between computational complexity and decoding performance. By extending the conventional Viterbi algorithm to produce soft reliability outputs, SOVA enables iterative decoding while maintaining relatively low hardware complexity, making it particularly suitable for FPGA and ASIC implementations [4,5].

Despite these advantages, the efficient hardware realization of SOVA-based Turbo decoders remains challenging. Traditional implementations often suffer from long critical paths, inefficient memory access patterns, and excessive arithmetic complexity. Furthermore, floating-point representations used in algorithm simulations are impractical for hardware implementations due to their large resource consumption. As a result, practical designs must adopt carefully optimized fixed-point quantization schemes to balance decoding accuracy and hardware efficiency. Achieving this balance is a key challenge in the design of hardware-oriented Turbo codec IP cores.

Field-Programmable Gate Arrays (FPGAs) and Application-Specific Integrated Circuits (ASICs) are commonly used platforms for implementing Turbo decoding architectures. FPGAs provide flexibility, rapid prototyping capability, and reconfigurable logic resources, making them suitable for research and system evaluation. However, FPGA resources are still limited when implementing highly parallel iterative decoding architectures. Consequently, architectural optimizations such as pipeline processing, modular decomposition, and memory scheduling are required to achieve high throughput while maintaining resource efficiency.

Motivated by these challenges, this work focuses on the hardware-oriented design of a Turbo codec IP core suitable for FPGA-based communication systems. Rather than pursuing purely algorithmic improvements, this work emphasizes a structured hardware design methodology for SOVA-based Turbo decoding under practical resource constraints.

The main contributions of this work can be summarized as follows:

- (1) A co-design methodology combining pipelined architecture and fixed-point quantization is proposed, providing a systematic approach for mapping SOVA-based Turbo decoding onto hardware platforms.
- (2) A modular decoder architecture is developed, where path metric computation, survivor path selection, and soft-output generation are explicitly separated, improving structural clarity and facilitating scalable hardware implementation.
- (3) A hardware-oriented fixed-point representation strategy is introduced, which preserves the relative ordering of soft information while enabling efficient arithmetic implementation without floating-point operations.
- (4) An iterative soft-information processing framework is constructed to support reliability propagation across decoding iterations and decoder-side soft-output evaluation.
- (5) A wrapper-level AXI-compatible interface is incorporated to support system-level data exchange and FPGA/SoC-oriented IP-core integration.

The remainder of this paper is organized as follows. Section 2 reviews related work on Turbo decoding algorithms and hardware architectures. Section 3 discusses the current state of the art and existing challenges in Turbo decoder implementations. Section 4 presents the proposed method and its theoretical advantages. Section 5 evaluates the experimental results and performance of the proposed Turbo codec IP core. Finally, Section 6 concludes the paper.

The novelty of this work lies not in proposing a new Turbo decoding algorithm, but in developing an implementation-oriented co-design methodology for a short-frame SOVA-Turbo decoder prototype. Specifically, the contribution is reflected in the joint organization of algorithm restructuring, explicit modular partitioning of the decoder datapath, pipelined execution scheduling, fixed-point reliability-preserving mapping, and wrapper-level AXI-based IP-core integration. Supported by post-route implementation results and quantitative decoder-side performance assessment under controlled perturbation, the proposed design provides a practically deployable FPGA/SoC-oriented prototype for hardware verification and system integration. The work thus shows that these implementation choices are not isolated engineering details, but jointly form a reproducible design methodology for short-frame SOVA-Turbo FPGA/SoC prototyping under practical resource constraints. More importantly, it addresses a distinct short-frame hardware-design niche in which structural clarity, predictable timing behavior, controllable implementation cost, and FPGA/SoC integration capability are prioritized over standardized long-frame throughput leadership, reflecting a targeted implementation trade-off for resource-constrained short-frame decoding scenarios.

2. Related Works

Turbo codes have been extensively studied since their introduction due to their near-Shannon-limit error-correction capability and their practical importance in wireless communication systems. Early research mainly focused on the coding theory, iterative decoding principles, and the performance advantages of parallel concatenated convolutional code (PCCC) structures. In these studies, the interleaver and the soft-information exchange mechanism between component decoders were identified as the key factors contributing to the excellent decoding performance of Turbo codes [1].

Hardware-oriented research has also been conducted in the broader field of electronic circuit design, where structural optimization and robustness improvement are important objectives. For example, improved circuit architectures based on active components have been proposed to enhance circuit robustness and parameter flexibility [6]. Recent studies have also investigated low-power Turbo encoder and decoder architectures for communication systems such as NB-IoT, where power efficiency and hardware modularity are important design considerations [7]. A large body of work has concentrated on MAP-, Log-MAP-, and Max-Log-MAP-based decoder architectures, aiming to improve decoding accuracy while reducing computational complexity. Although these algorithms provide strong error-correction performance, their hardware realization often requires complicated arithmetic operations, large memory bandwidth, and significant resource consumption. This challenge becomes more pronounced in FPGA and ASIC implementations, where throughput, power consumption, and silicon area must be jointly optimized.

To address these issues, researchers have explored simplified soft-output decoding algorithms suitable for hardware deployment. Among them, the Soft Output Viterbi Algorithm (SOVA) has attracted attention because it extends the conventional Viterbi algorithm with reliability output while maintaining relatively low implementation complexity. Existing studies have evaluated the application of SOVA in Turbo decoding and have shown that SOVA-based architectures can achieve an effective balance between decoding performance and hardware cost [4,8]. In addition, some improved SOVA variants, such as bidirectional or reliability-enhanced schemes, have been proposed to strengthen soft-output quality and iterative decoding effectiveness [9].

On the hardware side, prior studies have also investigated parallel decoding architectures, pipelined processing, memory access optimization, and fixed-point quantization strategies. Parallel and pipelined architectures can significantly improve throughput, but they often introduce additional control complexity and interleaving memory conflicts [10,11,12,13]. Similarly, fixed-point implementations can greatly reduce arithmetic cost compared with floating-point realizations, yet their quantization precision must be carefully designed to avoid noticeable decoding performance degradation. Recent studies have also examined complexity-control and stopping-criterion strategies in iterative receivers. For example, Ding et al. proposed an improved stopping criterion for a BILCM-ID system and showed that adaptive iteration control can significantly reduce ineffective iteration delay with negligible BER degradation [14].

Overall, existing work has provided valuable foundations for both Turbo decoding algorithms and hardware implementation strategies. However, there remains a need for a structurally clear and hardware-efficient SOVA-based Turbo codec IP core that jointly considers pipeline organization, modular decoder partitioning, and fixed-point quantization co-design for practical FPGA/SoC integration.

3. Current State of the Art

In hardware implementations of Turbo decoding for communication systems, a central challenge arises from the inherent complexity of the iterative decoding algorithms required to achieve near-capacity performance. Conventional component decoders, such as those based on Log-MAP or MAP algorithms, involve extensive path metric computations and soft-information exchanges, which demand significant logic resources and memory access operations when directly mapped to hardware. Moreover, the serial dependencies in conventional iterative decoders result in limited throughput for real-time applications unless careful parallelization and pipeline architectures are employed.

To address throughput limitations, existing research has extensively explored parallel and pipeline decoding structures. For example, fully parallel turbo decoder architectures have been proposed, which unroll multiple processing units to support high-speed decoding, achieving throughputs on the order of Gbps on FPGA platforms [10,15]. However, such highly parallel architectures face complex memory scheduling and conflict problems due to concurrent access to interleaved data, which can significantly constrain achievable clock frequencies and resource efficiency.

Another prominent direction focuses on reducing arithmetic complexity in component decoder implementations. Simplified decoding algorithms, such as Max-Log-MAP and approximate reliability-based schemes, have been adopted to trade off slight performance loss for reduced logic usage and lower power consumption [16]. These algorithmic simplifications facilitate hardware realization with improved energy efficiency and reduced iteration counts, which is beneficial for resource-constrained platforms.

Field-Programmable Gate Arrays (FPGAs) and Application-Specific Integrated Circuits (ASICs) remain the dominant platforms for realizing Turbo codec IP cores due to their flexibility and customizability [17]. FPGAs, in particular, offer rapid design cycles and reconfigurable logic resources that are well-suited for prototyping and evaluating hardware decoders. However, their logic and memory resources still present constraints for highly parallel Turbo decoder designs at very high data rates. Custom ASIC implementations, while offering superior performance and lower per-bit energy consumption, typically require more upfront design effort and longer development cycles.

In addition to architectural and algorithmic optimization, fixed-point arithmetic and quantization strategies also play an important role in practical hardware implementations. Fixed-point representations reduce the complexity of arithmetic units compared to floating-point implementations and can significantly decrease area and power consumption while maintaining comparable decoding performance when quantization parameters are carefully chosen [16,17].

Overall, the technical landscape for Turbo decoding hardware comprises a spectrum of architectural strategies—from high-throughput parallel designs to complexity-reduced algorithmic variants—each balancing performance, resource utilization, and implementation cost. The demand for real-time, high-efficiency decoding continues to drive interest in architectural refinements that maintain near-optimal error correction performance with practical hardware footprints.

4. Proposed Method

In this work, the design of the SOVA-based Turbo codec IP core follows a hardware-oriented co-design methodology, where algorithmic structure and hardware architecture are jointly considered.

The overall design flow consists of three main stages:

- (1) Algorithm restructuring: The conventional SOVA-based Turbo decoding algorithm is reformulated to explicitly separate path metric computation, survivor path selection, and soft-output generation. This restructuring enables independent processing of computational components and facilitates hardware mapping.
- (2) Architecture mapping: Based on the restructured algorithm, a modular hardware architecture is constructed. Each functional block is implemented as an independent processing unit, allowing parallel execution and reducing critical path dependency. Pipeline stages are inserted between major computation units to improve throughput and timing performance.
- (3) Numerical representation design: To support efficient hardware implementation, a fixed-point quantization strategy is adopted. Instead of floating-point operations, reliability values are scaled and represented using signed integer formats. This design preserves the relative ordering of soft information while significantly reducing arithmetic complexity.

These three aspects are jointly optimized to achieve a balance between decoding reliability and hardware efficiency. Unlike conventional approaches that separately optimize algorithm and hardware, the proposed method integrates both aspects into a unified design framework.
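The benefit of inserting pipeline registers between the restructured units can be illustrated with a small cycle-level model. In this sketch the three stage functions are hypothetical placeholders for the branch-metric, add-compare-select, and soft-output units; it only shows that, without stalls, N items pass through S register-separated stages in N + S - 1 cycles, with one result leaving per cycle once the pipeline is full.

```python
def run_pipeline(stages, inputs):
    """Cycle-level model of a linear pipeline with a register after every stage.

    Each item advances one stage per cycle and no stalls occur, so N items
    through S stages need N + S - 1 cycles. None marks an empty (bubble)
    register, so stage functions must not return None.
    """
    regs = [None] * len(stages)
    pending = list(inputs)
    outputs, cycles = [], 0
    # Stop once no input is waiting and only the last register (already output) may be full.
    while pending or any(r is not None for r in regs[:-1]):
        cycles += 1
        # Shift from the last stage backwards so each item moves exactly one stage.
        for i in range(len(stages) - 1, 0, -1):
            regs[i] = stages[i](regs[i - 1]) if regs[i - 1] is not None else None
        regs[0] = stages[0](pending.pop(0)) if pending else None
        if regs[-1] is not None:
            outputs.append(regs[-1])
    return outputs, cycles


# Hypothetical stand-ins for the branch-metric, ACS, and soft-output units.
stages = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3]
outputs, cycles = run_pipeline(stages, [1, 2, 3])
print(outputs, cycles)  # [1, 3, 5] 5
```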

4.1. Architectural Modularity and Parallel Processing Optimization

The proposed Turbo codec IP core adopts a modular architecture derived from the PCCC structure and SOVA-based iterative decoding principle. In the encoder design, interleaving, convolutional encoding, puncturing, and control logic are implemented as independent yet coordinated modules. In the decoder architecture, Euclidean distance computation, survivor path selection, soft-output computation, and control logic are explicitly separated into functional blocks.

This structural decomposition provides two theoretical benefits. First, by isolating path metric computation from soft-output update logic, critical path delay is reduced, allowing improved timing closure in FPGA implementations. Second, the parallel organization of component decoders and interleaving modules enables simultaneous processing of iterative data streams, which enhances throughput without fundamentally increasing algorithmic complexity.

In addition, the survivor path module and the soft-output module are separated within each component decoder. The softout module calculates the competing path metrics, while the survive module determines the optimal (survivor) paths. This separation enables concurrent computation of both the best and the competing paths required for reliability evaluation, thereby improving computational efficiency and structural clarity.
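A single add-compare-select (ACS) step shows where the survivor decision and the competing-path metric difference come from. The sketch below assumes a 4-state RSC trellis with octal generators (7, 5), BPSK mapping 0 -> +1 and 1 -> -1, squared Euclidean branch metrics without an a priori term, and smaller-is-better metrics. The returned `delta` is the quantity a softout-style unit would use as its reliability seed. These choices are assumptions for illustration and do not reproduce the actual survive/softout RTL.

```python
def trellis():
    """(state, u, next_state, parity) for the 4-state RSC with octal generators (7, 5).

    The state (s1, s2) is packed as s1 * 2 + s2; feedback is 1 + D + D^2 and
    the parity (feedforward) polynomial is 1 + D^2.
    """
    edges = []
    for s in range(4):
        s1, s2 = s >> 1, s & 1
        for u in (0, 1):
            a = u ^ s1 ^ s2                          # feedback bit entering the register
            edges.append((s, u, (a << 1) | s1, a ^ s2))
    return edges


def acs_step(path_metrics, ys, yp):
    """One add-compare-select stage over the trellis (smaller metric is better).

    Returns (new metrics, survivor (prev_state, u) per state, metric difference
    between competitor and survivor per state).
    """
    incoming = {ns: [] for ns in range(4)}
    for s, u, ns, p in trellis():
        xs, xp = 1 - 2 * u, 1 - 2 * p                # BPSK: 0 -> +1, 1 -> -1
        bm = (ys - xs) ** 2 + (yp - xp) ** 2         # squared Euclidean branch metric
        incoming[ns].append((path_metrics[s] + bm, s, u))
    new_pm, survivor, delta = [0.0] * 4, [None] * 4, [0.0] * 4
    for ns, (c0, c1) in incoming.items():            # exactly two branches per state
        best, other = (c0, c1) if c0[0] <= c1[0] else (c1, c0)
        new_pm[ns] = best[0]                         # survive-style: keep the best path
        survivor[ns] = (best[1], best[2])
        delta[ns] = other[0] - best[0]               # softout-style: reliability seed
    return new_pm, survivor, delta


BIG = 1e9  # stands in for "unreachable" so that metric differences stay finite
pm, surv, delta = acs_step([0.0, BIG, BIG, BIG], ys=-1.0, yp=-1.0)
print(surv[2], pm[2], pm[0])  # (0, 1) 0.0 8.0
```

In this toy step the survivor decision and `delta` come out of the same comparison; in a hardware datapath they can be routed to separate units, which is the kind of split the survive/softout partitioning describes.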

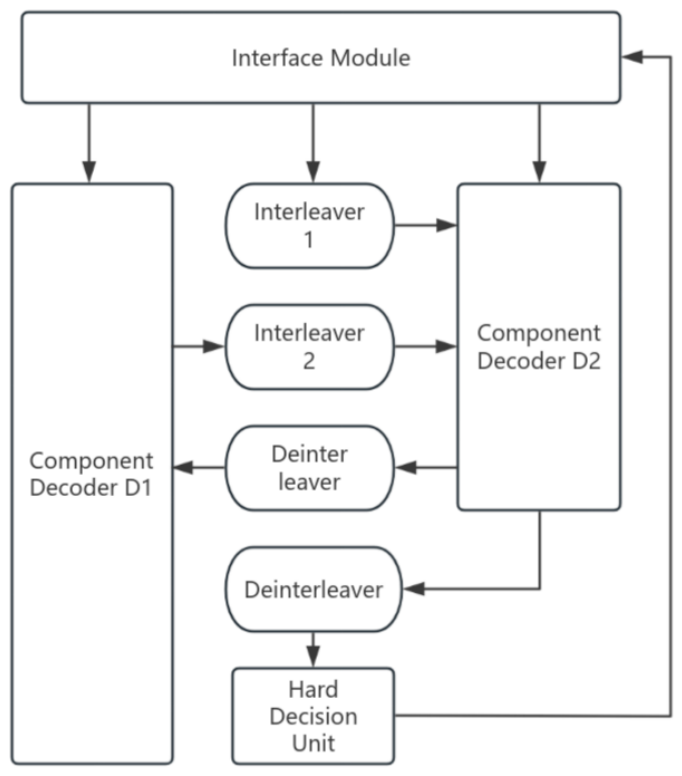

Figure 1 shows the overall architecture of the proposed SOVA-Turbo decoder. The separation of functional modules enables parallel processing and reduces critical path delay, improving hardware efficiency.

4.2. Fixed-Point Quantization Strategy for Hardware-Efficient SOVA Implementation

The proposed implementation introduces a customized fixed-point quantization strategy tailored to the SOVA decoding process. In SOVA-based Turbo decoding, the reliability of candidate paths is evaluated through the comparison of path metrics derived from received symbols and encoder outputs. The path metric of a candidate state transition is computed from three quantities: the received channel observation, the corresponding encoded symbol, and the a priori reliability information associated with the candidate path. This metric formulation allows the decoder to evaluate the relative likelihood of competing paths during the iterative decoding process.
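One common way to write such a metric, shown here only as a hedged sketch, is the negative logarithm of the channel likelihood multiplied by the a priori probability. Assuming AWGN with noise variance `sigma2`, BPSK mapping 0 -> +1 and 1 -> -1, and an a priori LLR `la = ln P(u=0)/P(u=1)`, the metric of a transition with systematic bit `u` and parity bit `p` is ||y - x||^2 / (2 * sigma2) - x_s * la / 2, up to constants shared by all branches. The exact form used in this design may differ.

```python
def branch_metric(ys, yp, u, p, la, sigma2):
    """Negative log-likelihood branch metric with an a priori term (smaller is better).

    Assumptions: AWGN with noise variance sigma2, BPSK mapping 0 -> +1 and 1 -> -1,
    and an a priori LLR la = ln(P(u=0) / P(u=1)). Constants common to all branches
    are dropped, since only metric differences matter to the decoder.
    """
    xs, xp = 1 - 2 * u, 1 - 2 * p                    # encoded symbols for this branch
    distance = ((ys - xs) ** 2 + (yp - xp) ** 2) / (2 * sigma2)
    return distance - xs * la / 2                    # a prior favouring u lowers the metric


# Noise-free observation of (u, p) = (0, 0) with a prior that also favours u = 0:
print(branch_metric(1.0, 1.0, 0, 0, la=2.0, sigma2=1.0))  # -1.0
print(branch_metric(1.0, 1.0, 1, 1, la=2.0, sigma2=1.0))  # 5.0
```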

From a hardware implementation perspective, the computation of these reliability metrics does not require high floating-point precision. Instead, SOVA-based decoding primarily depends on preserving the relative magnitude relationships among path metrics and soft information values. Therefore, floating-point operations are avoided in the proposed design by scaling soft information values (e.g., multiplying by a factor of 100) and storing them in signed fixed-point format.
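The scaling step can be sketched as follows. The factor of 100 comes from the text; the 16-bit signed word width and the saturation behavior are assumptions. Because scaling by a positive constant, rounding, and clipping are all monotone non-decreasing, the ordering of soft values is preserved, although values closer than 1/100 can collapse to the same integer.

```python
SCALE = 100                   # scaling factor given in the text
WIDTH = 16                    # assumed signed word width (not specified in the text)
QMIN, QMAX = -(1 << (WIDTH - 1)), (1 << (WIDTH - 1)) - 1


def quantize(value):
    """Scale a real soft value, round to the nearest integer (ties to even), saturate."""
    q = round(value * SCALE)
    return max(QMIN, min(QMAX, q))


soft = [0.734, -2.51, 0.02, 1.9]
print([quantize(v) for v in soft])  # [73, -251, 2, 190]
```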

Furthermore, complement-based arithmetic is employed to simplify signed operations in the hardware datapath. By replacing floating-point multipliers and complex arithmetic units with integer-based operations, the hardware design significantly reduces logic utilization and power consumption while maintaining sufficient dynamic range for reliable soft-decision decoding. This quantization-aware implementation enables efficient FPGA realization of the Turbo codec while preserving decoding reliability.
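Interpreting the complement-based arithmetic as two's-complement arithmetic (an assumption, since the exact format is not specified), the following sketch shows how subtraction reduces to a single adder with an inverted operand and a carry-in of 1, and how a metric comparison reduces to inspecting the sign bit of the difference. The 16-bit width is hypothetical, and the sign-bit comparison is only valid when the difference does not overflow.

```python
WIDTH = 16                    # hypothetical datapath width
MASK = (1 << WIDTH) - 1
SIGN = 1 << (WIDTH - 1)


def to_word(x):
    """Encode a signed integer as a WIDTH-bit two's-complement word."""
    return x & MASK


def from_word(w):
    """Decode a WIDTH-bit two's-complement word to a signed integer."""
    return w - (1 << WIDTH) if w & SIGN else w


def sub_words(a, b):
    """Compute a - b with an adder only: a + (bitwise NOT b) + 1, modulo 2**WIDTH."""
    return (a + (~b & MASK) + 1) & MASK


def is_negative(w):
    """Sign bit of a word; for a difference a - b this answers 'a < b' if no overflow."""
    return bool(w & SIGN)


print(from_word(sub_words(to_word(73), to_word(-251))))   # 324
print(from_word(sub_words(to_word(-251), to_word(73))))   # -324
print(is_negative(sub_words(to_word(5), to_word(9))))     # True
```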

4.3. Iterative Soft-Information Enhancement and Reliability Improvement

The theoretical strength of Turbo decoding lies in the iterative exchange of extrinsic information between component decoders. In the proposed design, soft-output values (LLR-related quantities) are explicitly computed and updated during each decoding iteration. The extrinsic information generated by one component decoder is interleaved and forwarded to the other decoder, forming a closed-loop reliability refinement mechanism.

In SOVA-based decoding, the generation and iterative refinement of extrinsic information are essential for improving decoding reliability. At each iteration r, the extrinsic information produced by the first and second component decoders is updated. These values represent the reliability difference between competing paths and are iteratively exchanged through the interleaver to progressively refine the bit-level decision reliability.
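For reference, the widely used textbook form of this exchange computes each decoder's extrinsic output by subtracting the channel systematic term and the a priori input from its output LLR, then interleaves (or de-interleaves) it to form the other decoder's a priori input. The sketch below follows that standard formulation as an assumption; `siso1` and `siso2` are hypothetical soft-in/soft-out decoder callables, not modules of this design, and the exact update used here may differ.

```python
# Standard textbook extrinsic exchange (an assumption, not this design's exact update).

def interleave(seq, perm):
    """Reorder seq so that position k holds seq[perm[k]]."""
    return [seq[i] for i in perm]


def deinterleave(seq, perm):
    """Inverse of interleave: put seq[k] back at position perm[k]."""
    out = [0.0] * len(seq)
    for k, i in enumerate(perm):
        out[i] = seq[k]
    return out


def extrinsic(llr_out, lc_ys, la):
    """Extrinsic LLR = decoder output - channel systematic term - a priori input."""
    return [o - c - a for o, c, a in zip(llr_out, lc_ys, la)]


def turbo_iterate(siso1, siso2, lc_ys, perm, n_iter):
    """Run n_iter iterations; each hypothetical siso maps an a priori list to output LLRs.

    siso1 works in natural order, siso2 in interleaved order.
    Returns the a posteriori LLRs lc_ys + le1 + le2 in natural order.
    """
    n = len(lc_ys)
    lc_ys_int = interleave(lc_ys, perm)
    la1, le1 = [0.0] * n, [0.0] * n
    for _ in range(n_iter):
        le1 = extrinsic(siso1(la1), lc_ys, la1)         # decoder 1 extrinsic
        la2 = interleave(le1, perm)                     # becomes decoder 2 a priori
        le2 = extrinsic(siso2(la2), lc_ys_int, la2)     # decoder 2 extrinsic
        la1 = deinterleave(le2, perm)                   # back to natural order
    return [c + e1 + e2 for c, e1, e2 in zip(lc_ys, le1, la1)]
```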

To support this iterative reliability refinement, the proposed architecture explicitly outputs bit-level soft reliability values through the softout module. This module evaluates competing path metrics and derives reliability information based on the metric differences between survivor and competing paths, thereby improving the distinguishability between candidate decoding paths during survivor selection.
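Reliability derivation from metric differences is commonly implemented with Hagenauer's update rule: when a survivor and its competitor merge into the same state with metric difference delta, every earlier bit position on which the two paths disagree has its reliability capped at delta. The function below is a minimal sketch of that rule, assuming the two decision sequences have already been obtained by traceback; it is not taken from the softout module itself.

```python
def hr_update(reliability, survivor_bits, competitor_bits, delta, start):
    """Hagenauer-rule update for one merge: cap reliability at delta where paths differ.

    survivor_bits[j] and competitor_bits[j] are the traced-back decisions for bit
    position start + j; reliability is updated in place and also returned.
    """
    for j, (a, b) in enumerate(zip(survivor_bits, competitor_bits)):
        if a != b:
            k = start + j
            reliability[k] = min(reliability[k], delta)
    return reliability


rel = [float("inf")] * 5
hr_update(rel, [1, 0, 1, 1], [1, 1, 1, 0], 3.0, start=1)  # paths differ at 2 and 4
hr_update(rel, [0, 1], [1, 1], 1.5, start=2)              # smaller delta wins at 2
print(rel)  # [inf, inf, 1.5, inf, 3.0]
```

A signed soft output can then be formed by attaching the sign of each hard decision to its reliability magnitude.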

From a theoretical perspective, the iterative reinforcement of soft reliability information gradually increases the confidence of bit-level decisions under noisy channel conditions. By continuously refining reliability metrics instead of relying solely on hard decisions, the decoder can achieve lower error probability and improved robustness. This reliability-driven iterative mechanism constitutes a key theoretical advantage of the proposed architecture.

4.4. AXI-Based Bus Interface for System-Level Integration

Beyond the internal encoder and decoder architecture, practical deployment of a Turbo codec IP core in FPGA/SoC systems requires an efficient and standardized on-chip communication interface. To address this requirement, the proposed design incorporates an AXI-based bus interface to connect the Turbo codec engine with an external host processor or upper-level control system [18]. This interface enables the IP core to operate not merely as an isolated functional module, but as an integrable subsystem within a larger communication baseband platform.

Figure 2 shows the AXI-based interface architecture, which enables standardized communication between the Turbo codec IP core and external systems.

The adopted interface follows the AXI-FULL protocol, which provides independent read and write channels and supports burst-based data transmission [19]. In the proposed design, the AXI host acts as the upper-level interface module that connects the external processor with the Turbo codec IP core, enabling efficient data exchange between the host system and the encoder/decoder modules. Similar AXI-compatible SoC integration strategies have also been adopted in high-performance communication systems to support efficient data exchange between processing cores, interface modules, and communication peripherals [20].

Therefore, the AXI-based wrapper provides a practical host-to-codec interface and supports FPGA/SoC-oriented integration of the proposed prototype.
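As an illustration of burst-based transfers, the sketch below computes the beat addresses of an AXI INCR burst from the AxADDR, AxLEN, and AxSIZE fields: a burst carries AxLEN + 1 beats of 2^AxSIZE bytes, beats after the first are aligned to the beat size, and a burst may not cross a 4 KB boundary. The function name and the use of INCR bursts are assumptions; the burst configuration of the actual wrapper is not specified here.

```python
def incr_burst_addresses(start_addr, axlen, axsize):
    """Beat addresses of an AXI4 INCR burst (hypothetical helper).

    axlen:  AxLEN field, burst length = axlen + 1 beats (1 to 256 in AXI4 INCR).
    axsize: AxSIZE field, bytes per beat = 2 ** axsize.
    """
    if not 0 <= axlen <= 255:
        raise ValueError("AXI4 INCR bursts carry 1 to 256 beats")
    nbytes = 1 << axsize
    aligned = start_addr & ~(nbytes - 1)            # later beats are size-aligned
    addrs = [start_addr] + [aligned + n * nbytes for n in range(1, axlen + 1)]
    last_byte = aligned + (axlen + 1) * nbytes - 1
    if last_byte >> 12 != start_addr >> 12:
        raise ValueError("an AXI burst must not cross a 4 KB address boundary")
    return addrs


print([hex(a) for a in incr_burst_addresses(0x1000, 3, 2)])
# ['0x1000', '0x1004', '0x1008', '0x100c']
```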

5. Experimental Results and Comparison

It should be emphasized that the present design is a 4-bit short-frame prototype intended for hardware-oriented architectural verification and FPGA/SoC integration. Therefore, the reported throughput and FER/robustness results are not directly comparable to long-frame LTE-class Turbo decoders optimized for standardized communication benchmarks.

To place the present prototype in context, representative FPGA Turbo decoder implementations are listed in Table 1. Because of major differences in block length, parallelism, and evaluation conditions, these entries are used only as implementation-level references.

The evaluation in this work focuses on structural execution behavior, module interaction, and hardware-oriented design characteristics, rather than purely numerical performance benchmarking. This perspective allows a clearer analysis of the trade-offs between decoding complexity, implementation efficiency, and architectural organization.

To better position the proposed prototype with respect to the state of the art, a comparison is provided primarily from the hardware-implementation perspective, while the algorithmic context is included to clarify the rationale for adopting an SOVA-based design. Representative Turbo decoder implementations are considered to show the differences in target scenario, frame length, implementation platform, throughput objective, and architectural emphasis.

Log-MAP-family decoders generally provide strong decoding performance but require more complex arithmetic support, whereas SOVA-based decoders offer a more hardware-friendly trade-off between implementation cost and soft-output capability. Within this context, the proposed work is positioned as a short-frame, implementation-oriented SOVA-Turbo prototype that emphasizes structural clarity, practical hardware mapping, and FPGA/SoC-oriented integration.

Compared with representative long-frame or throughput-oriented SoA implementations, the proposed architecture does not aim to maximize standardized communication-level throughput. Instead, it focuses on the joint design of modular datapath decomposition, pipelined execution, fixed-point reliability-preserving computation, and wrapper-level AXI integration. This positioning is summarized in Table 1.

It should be noted that the representative works listed above mainly target long-frame, standards-oriented, or high-throughput Turbo decoding scenarios, whereas the present study focuses on a 4-bit short-frame hardware prototype for architectural verification and FPGA/SoC-oriented IP-core integration. Therefore, the comparison in Table 1 is intended to clarify design positioning, implementation scope, and hardware trade-offs, rather than to claim direct superiority in communication-level benchmarking.

Compared with representative SoA implementations, the present work does not target long-frame standardized Turbo decoding or multi-Gbps throughput optimization. Instead, it emphasizes a short-frame, implementation-oriented SOVA-Turbo prototype intended for architectural verification, fixed-point mapping, and wrapper-level system integration. Therefore, the role of Table 1 is not to establish a head-to-head benchmark claim, but to position the proposed design relative to existing implementation directions, including fully parallel throughput-oriented decoding, low-latency parallel decoding, and Log-MAP-family implementation trade-off design.

Throughput is estimated from the measured cycle count and latency of a 4-bit prototype frame, and is therefore not directly comparable to long-frame LTE-class Turbo decoders. The entries in Table 1 are intended as implementation-level references only, since block length, degree of parallelism, and decoding conditions differ significantly across reported designs.

Taken together, Table 1 makes explicit the design positioning of the proposed prototype relative to representative SoA directions, including fully parallel throughput-oriented decoding, low-latency parallel decoding, and Log-MAP-family implementation trade-off design.

5.1. Throughput and Structural Execution Efficiency

The performance of the proposed Turbo codec IP core was evaluated through functional simulation of the encoder, decoder, and AXI interface modules. The experimental validation included waveform analysis of interleaver modules, convolutional encoders, puncturing units, Euclidean distance computation blocks, survivor path modules, and soft-output computation modules.

The modular architecture enables concurrent operation of interleaving, component decoding, and soft-information update processes. In particular, the separation of Euclidean distance computation and survivor path selection allows these operations to proceed without mutual structural interference. Furthermore, the parallel organization of the two component decoders supports synchronized iterative processing.

Simulation waveforms demonstrate that data flow between modules is coordinated and that iterative processing proceeds without pipeline stalls. The hardware-oriented modular decomposition reduces sequential dependency across major computational blocks, thereby improving effective throughput compared with conventional monolithic decoder structures.

In addition to qualitative waveform verification, quantitative timing-related metrics were extracted from post-implementation analysis and cycle-level simulation. Under a 10 ns clock constraint, corresponding to a target frequency of 100 MHz, the proposed decoder requires approximately 52,000 clock cycles to complete one 4-bit prototype frame. Based on this measured execution length, the latency per frame is approximately 520 µs. Accordingly, the estimated throughput at 100 MHz is about 7.69 kbps. This result is consistent with the short-frame prototype nature of the present design and is sufficient for validating the architectural feasibility of the proposed wrapper-based decoder IP core.

These results indicate that the proposed architecture can sustain stable iterative decoding execution under a hardware-oriented implementation flow. More importantly, the measured cycle count and timing closure provide quantitative evidence that the introduced modular decomposition and pipelined organization support predictable execution behavior and practical FPGA deployment.
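The timing figures reported above follow from simple arithmetic, reproduced here as a check under the same assumption the text makes: one 4-bit frame is decoded at a time, with no overlap between frames.

```python
def frame_timing(cycles, clock_period_s, frame_bits):
    """Return (latency in seconds, throughput in bit/s) for one frame decoded in isolation."""
    latency_s = cycles * clock_period_s
    return latency_s, frame_bits / latency_s


latency, throughput = frame_timing(52_000, 10e-9, 4)   # values quoted in the text
print(f"latency = {latency * 1e6:.0f} us, throughput = {throughput / 1e3:.2f} kbps")
# latency = 520 us, throughput = 7.69 kbps
```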

Figure 3 and Figure 4 show the AXI read and write simulation waveforms. The correct VALID/READY handshake signals confirm reliable communication behavior.

In addition to the internal codec datapath, the proposed design was validated through AXI-based read and write simulations. The observed VALID/READY handshake behavior confirms correct host-to-codec communication and supports wrapper-level system integration of the proposed IP core.
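The handshake property checked in these waveforms can be expressed compactly: on any single AXI channel, a beat transfers in a clock cycle where both VALID and READY are high, and once a source asserts VALID it must hold it until the handshake completes. The sketch below checks these two rules on sampled per-cycle signal traces; the trace values are hypothetical, and other protocol rules (such as payload stability while VALID is high) are not modeled.

```python
def transfer_cycles(valid, ready):
    """Clock cycles in which a beat transfers on one AXI channel (VALID and READY high)."""
    return [t for t, (v, r) in enumerate(zip(valid, ready)) if v and r]


def valid_held_until_handshake(valid, ready):
    """Check that VALID, once asserted, is not dropped before READY is seen."""
    for t in range(len(valid) - 1):
        if valid[t] and not ready[t] and not valid[t + 1]:
            return False
    return True


# Hypothetical per-cycle samples of a write-data channel (1 = high, 0 = low).
wvalid = [0, 1, 1, 1, 0]
wready = [1, 0, 1, 1, 1]
print(transfer_cycles(wvalid, wready))             # [2, 3]
print(valid_held_until_handshake(wvalid, wready))  # True
```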

5.2. Resource Utilization and Hardware Efficiency

Resource efficiency was validated through structural analysis of the implemented modules. The proposed design adopts fixed-point arithmetic by scaling soft information values and representing them in signed integer format. This approach eliminates floating-point operators and simplifies arithmetic logic.

In addition, complement-based signed operations reduce the complexity of arithmetic units required for path metric computation. The separation of functional modules—such as survive (path metric selection) and softout (soft information generation)—further reduces unnecessary logic coupling and simplifies control pathways.
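To illustrate how path-metric selection can be built from signed two's complement arithmetic alone, the sketch below models one add-compare-select (ACS) step on 16-bit metrics. It assumes a larger-is-better (correlation) metric convention and compares the two candidates by the sign of their wrapped difference. This modulo-comparison technique remains correct as long as the spread between competing metrics stays below half of the 16-bit range. The function names, word length, and metric convention are assumptions, not details taken from the `survive` module. The magnitude of the returned difference is the quantity a SOVA decoder uses to update bit reliabilities.

```python
from typing import Tuple

WIDTH = 16  # assumed path-metric word length


def wrap(x: int, width: int = WIDTH) -> int:
    """Interpret x modulo 2**width as a signed two's complement value."""
    half = 1 << (width - 1)
    return ((x + half) & ((1 << width) - 1)) - half


def acs(pm_a: int, bm_a: int, pm_b: int, bm_b: int) -> Tuple[int, int, int]:
    """One add-compare-select step for a state with two incoming branches.

    Returns (survivor metric, decision bit, |metric difference|); the
    decision bit is 0 if branch a survives and 1 if branch b survives.
    """
    cand_a = wrap(pm_a + bm_a)
    cand_b = wrap(pm_b + bm_b)
    diff = wrap(cand_a - cand_b)  # sign of the wrapped difference decides
    if diff >= 0:
        return cand_a, 0, diff
    return cand_b, 1, -diff


print(acs(100, 20, 90, 10))       # (120, 0, 20)
print(acs(32760, 20, 32760, 10))  # (-32756, 0, 10): sums overflow, choice still correct
```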

To further provide quantitative implementation evidence, the proposed wrapper-based decoder was implemented and routed on the Xilinx Zynq UltraScale+ XCZU7EV-FFVC1156-2-E device (Xilinx, Inc., now part of AMD, San Jose, CA, USA). Post-route results show that the implemented design occupies 11,208 LUTs, 7008 flip-flops, 50 DSP blocks, and no BRAM resources. Under the imposed 10 ns clock constraint, all user-specified timing constraints are met. The worst negative slack (WNS) is 5.482 ns and the total negative slack (TNS) is 0.000 ns, indicating successful timing closure at 100 MHz. Moreover, the positive timing margin suggests an estimated maximum clock frequency of approximately 221 MHz.
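The approximately 221 MHz figure follows from treating the critical-path delay as the constrained period minus the WNS, that is, 1/(10 ns − 5.482 ns) ≈ 221 MHz. The sketch below encodes that first-order estimate; the helper name is hypothetical. Because re-running implementation at a tighter constraint changes placement and routing, the achievable frequency should still be confirmed by closing timing at that target.

```python
def estimated_fmax_mhz(period_ns: float, wns_ns: float) -> float:
    """First-order Fmax estimate from a met timing constraint.

    The critical-path delay is approximated as the constrained clock
    period minus the worst negative slack (WNS).
    """
    critical_path_ns = period_ns - wns_ns
    if critical_path_ns <= 0:
        raise ValueError("WNS must be smaller than the clock period")
    return 1e3 / critical_path_ns  # 1/ns is GHz, so 1e3/ns is MHz


print(f"{estimated_fmax_mhz(10.0, 5.482):.1f} MHz")  # 221.3 MHz
```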

Table 2, Table 3, and Table 4 collectively provide quantitative post-route evidence for the proposed decoder implementation. Specifically, Table 2 summarizes the implementation status and key hardware metrics, Table 3 shows the corresponding resource utilization levels, and Table 4 confirms successful timing closure under the target clock constraint. Together, these results demonstrate that the proposed wrapper-based SOVA decoder can be implemented with modest hardware cost while maintaining predictable timing behavior on the target FPGA platform.

Combined with these hardware statistics, the execution measurements reported above (about 52,000 cycles per frame, a latency of approximately 520 µs, and an estimated throughput of about 7.69 kbps at 100 MHz) complete the implementation profile of the prototype.

Figure 5 presents the calculated path metrics before iteration, demonstrating the correctness of metric computation.

Compared with generic Turbo decoder implementations that rely on more complex arithmetic structures, the proposed architecture reduces logic redundancy and is therefore better suited to FPGA-based deployment. The design is structurally appropriate for embedded baseband processing environments where logic and memory resources are constrained.

From a system-level perspective, the AXI interface supports reliable host-to-codec data transfer without tightly coupling bus logic to the internal coding and decoding datapath. The observed read/write behavior confirms that the proposed IP core can operate as a reusable subsystem in FPGA/SoC environments while preserving the modularity of the internal processing architecture.

5.3. Error Correction Performance Under Iterative Soft-Decision Mechanism

The present study evaluates a short-frame, hardware-oriented decoder prototype and its FPGA/SoC integration. Accordingly, this subsection does not aim to reproduce a standardized long-frame communication-chain BER benchmark. Instead, decoder-side performance is quantitatively assessed using repeated AWGN perturbation applied to a verified decoder-compatible soft-input template. The resulting exact-match correct decode rate and frame error rate are used as quantitative indicators of decision-recovery capability and robustness under controlled noisy conditions.
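The trial procedure described in this section can be outlined as a small Monte Carlo harness. This is a hedged sketch under several assumptions not stated in the text: SNR is taken as the ratio of mean template power to per-sample noise variance, the template values are illustrative rather than those in Table 6, bits are mapped as 0 to positive and 1 to negative soft values, and `hard_decision_stub` is a hypothetical stand-in for the hardware SOVA decoder that only slices the systematic (first) element of each symbol group. Only the counting logic, an exact match against the expected sequence 0110 in each trial with FER taken as the complement of the correct decode rate, mirrors the described procedure.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

SymbolGroup = List[float]  # [systematic, parity observations...]

# Illustrative soft-input template (NOT the values in Table 6). Systematic
# signs encode 0110 under the assumed mapping 0 -> positive, 1 -> negative.
TEMPLATE: List[SymbolGroup] = [
    [0.9, 0.7, -0.6],
    [-0.8, 0.5, 0.4],
    [-1.1, -0.6, 0.8],
    [1.0, -0.9, -0.5],
]


def hard_decision_stub(groups: Sequence[SymbolGroup]) -> str:
    """Hypothetical stand-in for the SOVA decoder: slices the systematic
    (first) element of each group and ignores the parity observations."""
    return "".join("1" if g[0] < 0 else "0" for g in groups)


def decode_rates(template: Sequence[SymbolGroup], expected: str,
                 snr_db: float,
                 decoder: Callable[[Sequence[SymbolGroup]], str],
                 trials: int = 2000, seed: int = 0) -> Tuple[float, float]:
    """Return (correct decode rate, FER) over repeated AWGN perturbations."""
    rng = random.Random(seed)
    flat = [v for g in template for v in g]
    power = sum(v * v for v in flat) / len(flat)    # mean template power
    sigma = math.sqrt(power / 10 ** (snr_db / 10))  # assumed SNR definition
    correct = 0
    for _ in range(trials):
        noisy = [[v + rng.gauss(0.0, sigma) for v in g] for g in template]
        if decoder(noisy) == expected:  # exact 4-bit match
            correct += 1
    cdr = correct / trials
    return cdr, 1.0 - cdr


for snr in (-5.0, 0.0, 5.0, 10.0):
    cdr, fer = decode_rates(TEMPLATE, "0110", snr, hard_decision_stub)
    print(f"SNR {snr:5.1f} dB: CDR = {cdr:.3f}, FER = {fer:.3f}")
```

Because the stub ignores the parity observations, its curves show no iterative coding gain; substituting a call into the actual decoder model is what would make the numbers comparable to Figure 7 and Figure 8.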

The original received symbol groups were configured as

where the first element corresponds to the systematic bit observation and the remaining elements correspond to parity-related observations generated by the recursive convolutional encoders. To simplify the hardware implementation and avoid floating-point operations, the received samples were scaled by a factor of 100 and stored in 16-bit signed fixed-point representation. This quantization preserves the relative reliability relationships among the received symbols while significantly reducing arithmetic complexity in hardware. The corresponding fixed-point input data and the representative soft-input template used for decoder-side robustness evaluation are summarized in Table 5 and Table 6, respectively.
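The scale-round-saturate step described above can be sketched as follows. The scale factor of 100 and the 16-bit signed word come from the text; the round-half-up rule and saturation at the word limits are assumptions about behavior the text does not specify, and the sample values are illustrative rather than those in Table 5. Scaling by a positive constant, rounding, and clamping are all non-decreasing operations, so the quantized values keep the ordering of the original samples, although samples closer together than 1/100 may map to the same integer.

```python
import math
from typing import List, Sequence

SCALE = 100     # scale factor stated in the text
WORD_BITS = 16  # signed word length stated in the text
Q_MIN = -(1 << (WORD_BITS - 1))     # -32768
Q_MAX = (1 << (WORD_BITS - 1)) - 1  # 32767


def quantize(x: float) -> int:
    """Scale by SCALE, round half up, and saturate to the signed 16-bit range."""
    q = math.floor(x * SCALE + 0.5)
    return max(Q_MIN, min(Q_MAX, q))


def quantize_all(samples: Sequence[float]) -> List[int]:
    return [quantize(s) for s in samples]


# Illustrative soft samples only (not the values in Table 5).
samples = [-1.2, -0.3, 0.47, 0.85]
print(quantize_all(samples))  # [-120, -30, 47, 85]
```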

As shown in the simulation input data, the scaled integer representations correspond to the hardware-oriented fixed-point values used by the decoder modules. In particular, the second parity observations of the first and fourth symbol groups were intentionally assigned values that differ significantly from the other groups. This configuration was designed to test the iterative error-correction capability of the decoder when encountering inconsistent reliability information among the received symbols.

Figure 6 shows the relationship between the input observations and the generated soft-output values, illustrating the correspondence between decoder input and soft-reliability output.

In addition to the above waveform-based verification, decoder-side robustness was further evaluated using the correct decode rate and frame error rate under controlled noisy perturbation. Specifically, the percentage of correctly recovered output frames and the frame error rate were measured under different SNR conditions to reflect the stability of the proposed decoder against soft-input degradation.

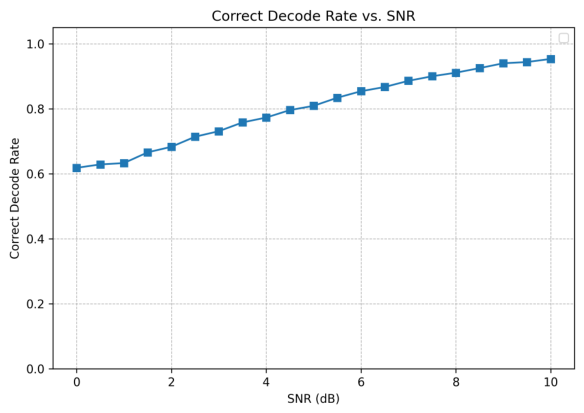

Figure 7 presents the correct decode rate under repeated AWGN perturbation of the verified soft-input template. As the SNR increases, the probability of recovering the expected 4-bit decision shows a consistent upward trend, providing direct quantitative evidence of decoder-side decision-recovery capability under noisy conditions.

Figure 8 shows the corresponding frame error rate under the same repeated AWGN perturbation setting. As the SNR increases, the FER decreases accordingly, which is consistent with the correct-decode-rate trend and further supports the decoder-side robustness of the proposed prototype under noisy conditions.

For visual clarity, the plotted curves are smoothed by a moving-average window, while the underlying statistical results are obtained from repeated decoding trials at each SNR point.
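A centered moving average of the kind mentioned above can be sketched as below. The window length used for the figures is not stated, so the odd window size here is an assumption, as is the choice to shrink the window at the two ends of the SNR range instead of padding. As the text notes, the smoothing affects only the plotted series; the per-SNR statistics themselves come from the raw trial counts.

```python
from typing import List, Sequence


def moving_average(values: Sequence[float], window: int = 3) -> List[float]:
    """Centered moving average; the window shrinks at both ends of the series."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    out: List[float] = []
    for i in range(len(values)):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        segment = values[lo:hi]
        out.append(sum(segment) / len(segment))
    return out


print(moving_average([1, 2, 3, 4, 5], window=3))  # [1.5, 2.0, 3.0, 4.0, 4.5]
```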

Table 7 reports representative quantitative decoder-side assessment results at selected SNR points. These numerical results are consistent with the trends observed in Figure 7 and Figure 8, and provide additional evidence that the proposed prototype maintains meaningful decision-recovery capability under controlled noisy perturbation.

Waveform analysis confirms that soft-output values correspond to relative reliability levels derived from competitive path metrics in the SOVA decoding process. During iterative decoding, the extrinsic information generated by one component decoder is interleaved and fed into the second decoder, forming a closed-loop reliability refinement mechanism. As iterations proceed, the reliability estimates of individual bits are progressively updated, which improves the consistency between soft-decision values and the final hard-decision outputs.
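The closed-loop exchange described above can be outlined in code. This is a structural sketch, not the proposed hardware: the component decoder is passed in as a function, LLRs follow the assumed convention that negative values favor bit 1, and the extrinsic term uses the common textbook relation (a-posteriori LLR minus a-priori LLR minus systematic channel LLR) without the scaling factors some SOVA implementations apply. All names, including the toy component decoder used to exercise the data flow, are hypothetical.

```python
from typing import Callable, List, Sequence, Tuple

# Component decoder: (systematic LLRs, parity LLRs, a-priori LLRs) -> a-posteriori LLRs
ComponentDecoder = Callable[[Sequence[float], Sequence[float], Sequence[float]],
                            List[float]]


def interleave(x: Sequence[float], perm: Sequence[int]) -> List[float]:
    return [x[p] for p in perm]


def deinterleave(x: Sequence[float], perm: Sequence[int]) -> List[float]:
    out = [0.0] * len(x)
    for i, p in enumerate(perm):
        out[p] = x[i]
    return out


def extrinsic(post: Sequence[float], apriori: Sequence[float],
              systematic: Sequence[float]) -> List[float]:
    # Remove what the decoder was given, keeping only the information it added.
    return [p - a - s for p, a, s in zip(post, apriori, systematic)]


def turbo_decode(systematic: Sequence[float], parity1: Sequence[float],
                 parity2: Sequence[float], perm: Sequence[int],
                 decode: ComponentDecoder,
                 iterations: int = 4) -> Tuple[List[int], List[float]]:
    if iterations < 1:
        raise ValueError("at least one iteration is required")
    sys_i = interleave(systematic, perm)  # decoder 2 works in the interleaved domain
    apriori1 = [0.0] * len(systematic)
    for _ in range(iterations):
        post1 = decode(systematic, parity1, apriori1)
        apriori2 = interleave(extrinsic(post1, apriori1, systematic), perm)
        post2 = decode(sys_i, parity2, apriori2)
        apriori1 = deinterleave(extrinsic(post2, apriori2, sys_i), perm)
    llr = deinterleave(post2, perm)
    bits = [1 if v < 0 else 0 for v in llr]
    return bits, llr


def toy_decoder(s: Sequence[float], p: Sequence[float],
                a: Sequence[float]) -> List[float]:
    # Hypothetical placeholder that treats the parity LLR as its extrinsic output.
    return [si + pi + ai for si, pi, ai in zip(s, p, a)]


bits, llr = turbo_decode([2.0, -2.0, -2.0, 2.0], [0.5] * 4, [0.5] * 4,
                         [2, 0, 3, 1], toy_decoder)
print(bits)  # [0, 1, 1, 0]
```

Subtracting the a-priori and systematic terms before passing information on is what keeps each decoder from receiving its own earlier output back as new evidence, which is the purpose of exchanging extrinsic rather than full a-posteriori values.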

Figure 9 illustrates the timing behavior of the soft-output module, confirming synchronization between data and control signals.

To quantitatively evaluate the robustness of the proposed decoder, a verified decoder-compatible soft-input template was selected from the above functional simulation case. Additive Gaussian noise was then imposed under different SNR settings, and repeated decoding trials were performed. The correct decode rate (CDR) was defined as the ratio of trials whose decoded 4-bit output exactly matched the expected target sequence 0110. Correspondingly, the frame error rate (FER) was calculated as

$$
\mathrm{FER} = 1 - \mathrm{CDR}.
$$

The corresponding decimal and hexadecimal representations of the calculated path metrics are summarized in Table 8.

Figure 10 shows the distribution of path metrics, verifying that the fixed-point implementation preserves relative magnitude relationships.

Furthermore, the experimental observations verify that the proposed fixed-point quantization strategy does not destroy the relative magnitude relationships of reliability metrics, which are essential for soft-decision decoding. This confirms that the fixed-point SOVA implementation can maintain decoding effectiveness while significantly reducing hardware complexity.

At the interface-validation level, the correctness of the AXI-assisted codec workflow also supports the reliability of the overall system operation. In the simulation combining the encoder top module with the AXI interface, the data returned over the bus matched the internally generated encoded results, indicating that the external interface layer introduced no functional mismatch.

Figure 11 shows the decoding write-data transmission waveform, verifying that the processed decoder data can be correctly transferred through the AXI-based system interface.

Therefore, the proposed design not only achieves reliable iterative decoding behavior internally, but also supports consistent result delivery under a host-controlled AXI-based transmission framework. This property is important for practical FPGA/SoC deployment, where decoding correctness must be maintained across both algorithmic processing and system-level communication interfaces.

5.4. Discussion of Comparison Scope and Limitations

The comparison results presented in this work should be interpreted within the scope of the proposed prototype. Unlike many representative SoA Turbo decoder implementations, which target standardized long-frame communication scenarios and prioritize multi-Gbps throughput or communication-level BER/BLER optimization, the present design is a 4-bit short-frame prototype developed primarily for hardware-oriented architectural verification and FPGA/SoC-oriented IP-core integration.

Accordingly, direct quantitative comparison with LTE-class or broadcasting-oriented Turbo decoders is inherently limited by substantial differences in frame length, decoding objective, degree of parallelism, iteration configuration, and evaluation methodology. For this reason, Table 1 is intended to clarify the positioning of the proposed work relative to existing implementation directions, rather than to claim direct superiority over high-throughput long-frame decoders.

Within this scope, the main value of the present work lies in four aspects. First, the decoder adopts a structurally explicit modular partitioning strategy, separating path metric computation, survivor selection, and soft-output generation for hardware mapping clarity. Second, the design combines pipelined execution with fixed-point quantization to support practical implementation under FPGA resource constraints. Third, wrapper-level AXI-based integration is incorporated to facilitate host-to-codec communication in FPGA/SoC deployment. Fourth, in addition to post-route implementation metrics, decoder-side robustness is quantitatively examined through the correct decode rate under controlled noisy perturbation.

At the same time, the present study has several limitations. The evaluated prototype uses a very short frame length and therefore does not yet represent a standards-oriented Turbo decoder implementation. In addition, the current experimental results emphasize hardware feasibility, execution behavior, and decoder-side robustness, rather than full communication-chain BER/BLER benchmarking under standardized settings. Future work will extend the frame length, refine the evaluation setup under more conventional channel models and code configurations, and establish broader comparisons with representative hardware Turbo decoder architectures.

6. Conclusions

This paper presents a hardware-oriented design of a SOVA-based Turbo codec IP core targeting FPGA and SoC communication systems. The proposed architecture focuses on improving structural efficiency and implementation practicality through the co-design of pipeline organization and fixed-point numerical representation.

The design introduces a modular decomposition of the Turbo encoding and decoding datapath, where key functional modules such as path metric computation, survivor path selection, and soft-information generation are separated to improve architectural clarity and reduce critical path delay. In addition, a customized fixed-point quantization scheme is adopted to replace floating-point operations, significantly reducing hardware complexity while preserving the relative reliability relationships required for soft-decision decoding.

An AXI-based bus interface is further incorporated to enable standardized communication between the codec IP core and external host systems. This interface-level design improves the reusability and system integration capability of the proposed architecture, allowing the codec to operate as a practical subsystem in FPGA/SoC-based communication platforms.

Experimental results based on functional simulation verify that the proposed design can correctly perform encoding, iterative SOVA-based decoding, and soft-information generation while maintaining efficient data exchange through the AXI interface.

Post-route implementation on the Xilinx Zynq UltraScale+ XCZU7EV-FFVC1156-2-E device further shows that the proposed decoder occupies 11,208 LUTs, 7008 flip-flops, 50 DSP blocks, and no BRAM resources, while meeting a 100 MHz timing constraint with positive slack. In addition, robustness evaluation based on decoder-compatible soft-input perturbation shows that the correct decode rate increases and the FER decreases as the SNR increases, providing supplementary quantitative evidence for the prototype-level feasibility of the proposed hardware-oriented SOVA decoder architecture.

Rather than claiming a new decoding algorithm, this work demonstrates that a short-frame SOVA-Turbo prototype can be systematically realized through the co-design of modular datapath partitioning, pipelined execution, fixed-point reliability-preserving representation, and wrapper-level AXI integration. In this sense, the contribution of the present study lies in an implementation-oriented architectural methodology together with prototype-level validation under practical FPGA deployment constraints.

Future work will focus on further architectural optimization and large-scale FPGA/ASIC implementation to evaluate the performance of the proposed codec in high-throughput communication scenarios.