On Memorization and Generalization in Compact Transformers

Abstract

1. Introduction

1.1. Research Gap

1.2. Contributions

1.3. Novelty

2. Methods and Materials

2.1. Memorization of Random Sequences

2.1.1. Capacity in Networks

2.1.2. Transformer Models

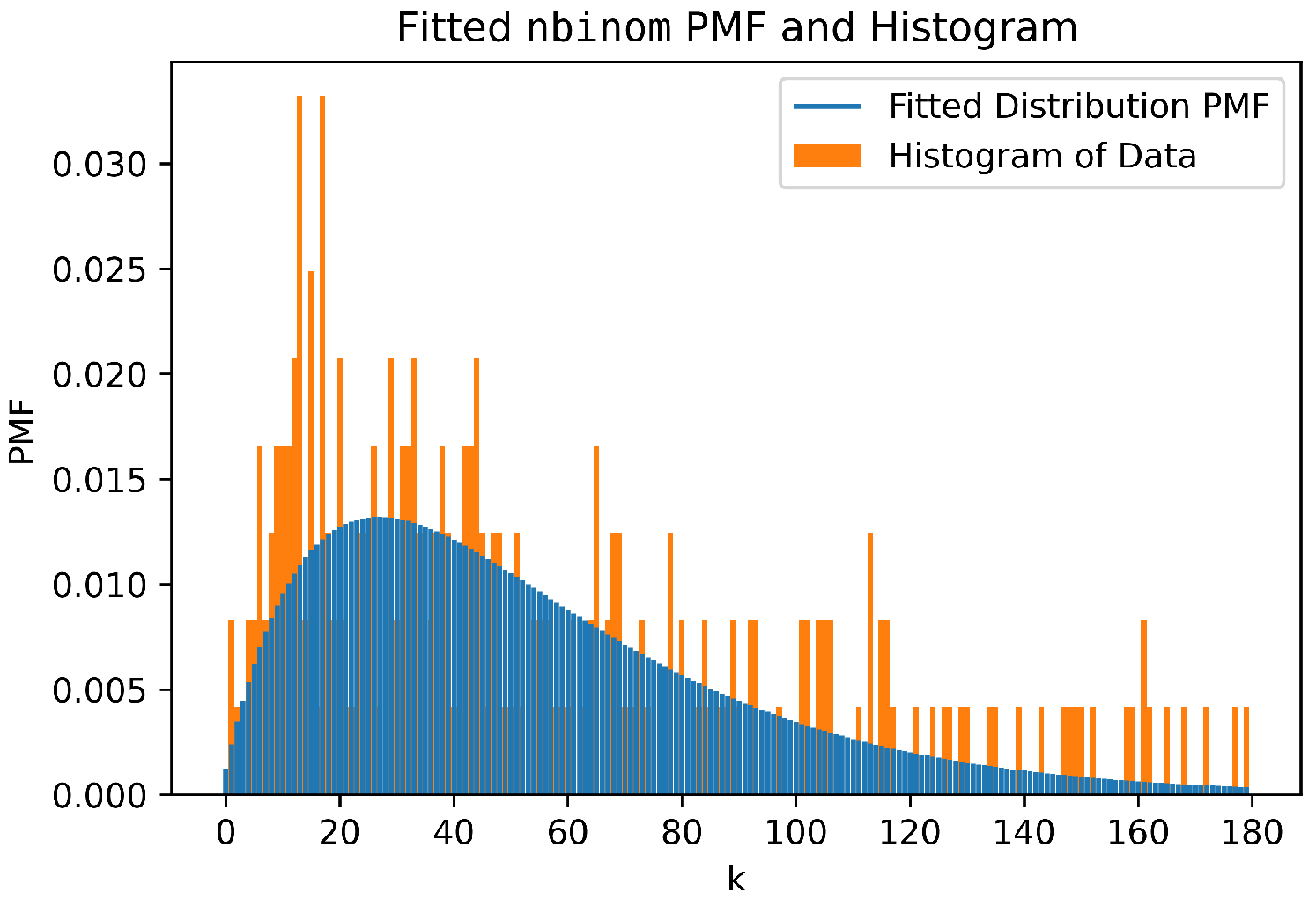

2.1.3. Data Generation

2.2. Memorization of Synthetic Sentences

2.2.1. Data Generation

2.2.2. Triplets Generation

2.2.3. Sequences Generation

2.2.4. Transformer Training

2.2.5. Triplets and Sequences Memorization

2.3. Generalization and Abstraction

2.3.1. Data Generation

2.3.2. Evaluation

2.3.3. Models

2.4. Generalization in Algorithmic Tasks

- Length generalization reflects algorithmic internalization: the model applies a fixed procedure to arbitrary input lengths.

- Compositional generalization reflects algorithmic reasoning: the model dynamically assembles subroutines into novel workflows.

- Simplicity and Interpretability: Addition is a well-defined deterministic task with minimal combinatorial complexity compared to operations like polynomial evaluation or sorting. Its stepwise nature (e.g., digit-wise processing with carry propagation) allows for granular analysis of how transformers encode sequential dependencies and positional reasoning.

- Controlled Scalability: In algorithmic tasks such as addition, the input length can be systematically extended (e.g., from 5-digit to 10-digit numbers) without changing the underlying algorithm. This enables a precise evaluation of generalization beyond the lengths seen during training.
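The following Python sketch illustrates the digit-wise procedure and the length-controlled setup described above. It implements schoolbook addition with carry propagation and builds a train/test split by operand length. The helper names (`add_digitwise`, `sample_problem`) are hypothetical. The split, with 1–5 digit operands for training and 6–10 digit operands for testing, is an illustrative assumption and not the exact configuration used in the experiments.

```python
import random


def add_digitwise(a: str, b: str) -> str:
    """Add two non-negative decimal strings digit by digit with a carry."""
    width = max(len(a), len(b))
    a, b = a.zfill(width), b.zfill(width)  # align digits of equal significance
    carry = 0
    digits = []
    # Walk from the least significant digit to the most significant one.
    for da, db in zip(reversed(a), reversed(b)):
        total = int(da) + int(db) + carry
        digits.append(str(total % 10))
        carry = total // 10
    if carry:
        digits.append(str(carry))
    return "".join(reversed(digits))


def sample_problem(n_digits: int, rng: random.Random) -> tuple[str, str, str]:
    """Hypothetical helper: draw two n-digit operands and their sum."""
    lo, hi = 10 ** (n_digits - 1), 10 ** n_digits - 1
    a, b = str(rng.randint(lo, hi)), str(rng.randint(lo, hi))
    return a, b, add_digitwise(a, b)


rng = random.Random(0)
# Assumed split: train on 1-5 digit operands, test on 6-10 digit operands.
train = [sample_problem(rng.randint(1, 5), rng) for _ in range(1000)]
test = [sample_problem(rng.randint(6, 10), rng) for _ in range(200)]

print(add_digitwise("53726", "19177"))  # 72903, the addition example in the task table
```

The same `add_digitwise` routine solves every problem in both splits. A model that has internalized the procedure, rather than memorizing length-specific patterns, should in principle perform equally well on the two lists.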

2.4.1. Preliminaries

2.4.2. Data Generation
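The four input formats listed in this subsection can be illustrated on a single addition problem. The sketch below is a simplified rendering under stated assumptions:

- The function names are hypothetical.
- The index-hint variant tags each digit by its significance with a lowercase letter.
- Random spaces are inserted independently after each character with probability `p`.

The exact formats in the cited studies may differ in detail.

```python
import random

LETTERS = "abcdefghijklmnopqrstuvwxyz"  # assumes at most 26 digits per number


def reversed_format(a: str, b: str, c: str) -> str:
    # The answer is written least-significant digit first, i.e., in the
    # order in which digit-wise addition produces it.
    return f"{a}+{b}={c[::-1]}"


def zero_padded(a: str, b: str, c: str, width: int) -> str:
    # Operands padded to `width` digits and the answer to width + 1 digits,
    # so that digits of equal significance sit at equal offsets.
    return f"{a.zfill(width)}+{b.zfill(width)}={c.zfill(width + 1)}"


def index_hints(a: str, b: str, c: str) -> str:
    # Each digit is preceded by a letter encoding its significance
    # ('a' = units, 'b' = tens, ...), shared across operands and answer.
    def tag(x: str) -> str:
        n = len(x)
        return "".join(LETTERS[n - 1 - i] + d for i, d in enumerate(x))
    return f"{tag(a)}+{tag(b)}={tag(c)}"


def random_spaces(s: str, rng: random.Random, p: float = 0.1) -> str:
    # Insert a space after each character with probability p; this perturbs
    # absolute token positions without changing the arithmetic content.
    return "".join(ch + (" " if rng.random() < p else "") for ch in s)


a, b, c = "42", "39", "81"
print(reversed_format(a, b, c))   # 42+39=18
print(zero_padded(a, b, c, 4))    # 0042+0039=00081
print(index_hints(a, b, c))       # b4a2+b3a9=b8a1
print(random_spaces(f"{a}+{b}={c}", random.Random(0), p=0.3))
```

These formats are not mutually exclusive. For example, a reversed answer is often combined with zero-padding or index hints.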

Reversed Format

Index Hints

Random Space Augmentation

Zero-Padding

2.4.3. Positional Embeddings/Encodings (PE)

Absolute Positional Encoding (APE)

Additive Relative Positional Encoding (RPE)

Position Coupling

- Token Partitioning: The input sequence is divided into groups of consecutive tokens, where each token within a group carries a unique semantic meaning. This grouping establishes a one-to-one correspondence between task-relevant tokens in different groups.

- Position ID Assignment: For each group, a sequence of consecutive numbers (typically positive integers) is assigned as position IDs, beginning from a random number during training or a fixed number during evaluation. Tokens that represent the same significance across different groups are given the same position ID (i.e., their positions are coupled).
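The two steps above can be sketched for an addition query `a+b=c`. This is a simplified illustration, not the exact scheme of Cho et al. It rests on the following assumptions:

- The operands are zero-padded to a common width n.
- The answer is padded to n + 1 digits and written least-significant digit first.
- The separators receive the starting ID.
- The random start used during training is drawn from a hypothetical range of 0 to 100.

The function names are likewise hypothetical.

```python
import random


def position_coupling_ids(a: str, b: str, c: str, start: int) -> list[tuple[str, int]]:
    """Return (token, position_id) pairs for the sequence a+b=c.

    Simplified sketch: a digit of significance k (k = 0 for units) gets
    ID start + 1 + k in every group, so digits of equal significance are
    coupled; the separators '+' and '=' get ID `start`.
    """
    n = max(len(a), len(b))
    a, b, c = a.zfill(n), b.zfill(n), c.zfill(n + 1)
    pairs: list[tuple[str, int]] = []
    # Operand groups: most significant digit first, IDs count down to start + 1.
    for operand, sep in ((a, "+"), (b, "=")):
        for i, digit in enumerate(operand):
            pairs.append((digit, start + 1 + (n - 1 - i)))
        pairs.append((sep, start))
    # Answer group: written least significant digit first, IDs count up.
    for k, digit in enumerate(reversed(c)):
        pairs.append((digit, start + 1 + k))
    return pairs


def sample_start(training: bool, rng: random.Random, max_start: int = 100) -> int:
    # Random starting ID during training, fixed starting ID (0) at evaluation.
    return rng.randint(0, max_start) if training else 0


rng = random.Random(0)
print(position_coupling_ids("653", "49", "702", start=sample_start(False, rng)))
```

In this example the units digits `3` and `9` of the operands and the first answer digit `2` all receive ID 1. Attention can therefore align digits of equal significance regardless of operand length. Randomizing `start` during training exposes the model to ID values beyond those implied by the training lengths.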

Randomized Position Encoding

No Positional Encoding (NoPE)

Rotary Positional Encoding (RoPE)

3. Results

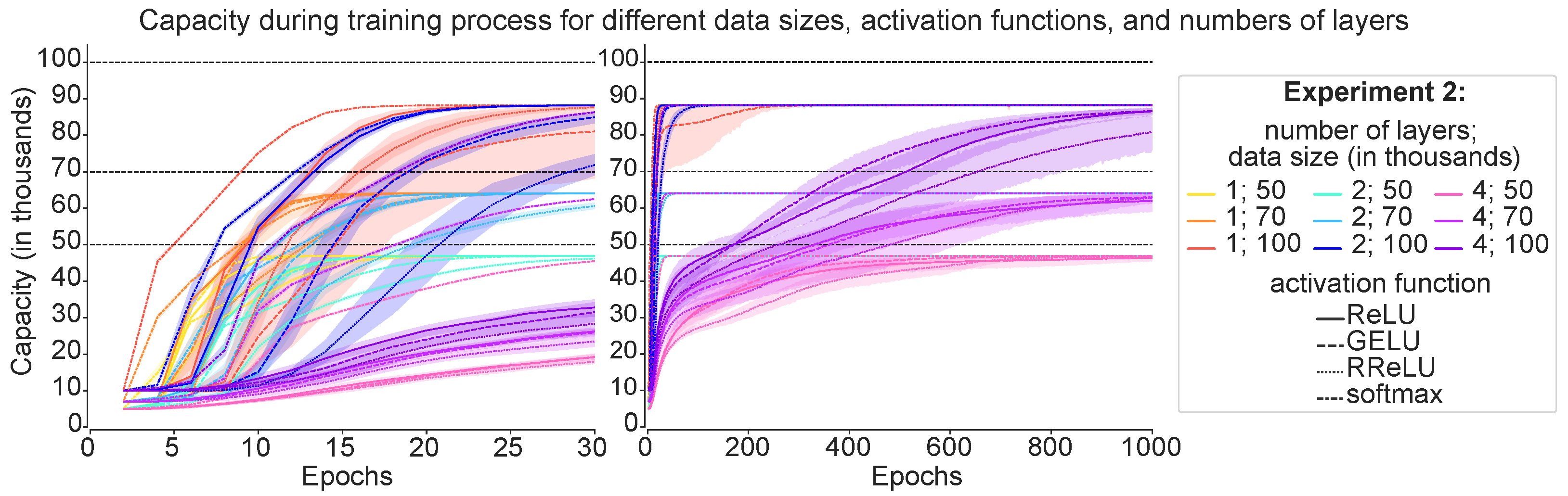

3.1. Memorization of Random Sequences

3.1.1. Capacity in the Two Formulations

3.1.2. Impact of Batch Size

3.1.3. Empirical Capacity Model

3.2. Memorization of Synthetic Sentences

3.2.1. Dataset Size Influence

3.2.2. Architectural Variations

3.2.3. Embedding-Size Influence Under a Fixed Budget

3.2.4. Insights from Sequence Datasets

3.3. Generalization and Abstraction

3.3.1. Results for the One Layer and Eight Heads

3.3.2. Results for the Two Layers and Four Heads

3.3.3. Results for the Three Layers and Two Heads

3.4. Generalization in Algorithmic Tasks

3.4.1. Enhancing Generalization via Positional Encoding and Data Formatting

3.4.2. Leveraging Task Structure: Position Coupling and Structural Symmetry

4. Discussion

4.1. Memorization of Random Sequences

4.2. Memorization of Synthetic Sentences

4.2.1. Effect of Dataset Structure

4.2.2. Architectural Influence

4.3. Generalization and Abstraction

4.3.1. Interpreting the One Layer and Eight Heads

4.3.2. Interpreting the Two Layers and Four Heads

4.3.3. Interpreting the Three Layers and Two Heads

4.3.4. Abstraction Heads

4.4. Generalization in Algorithmic Tasks

- Task Scope: This section primarily focuses on controlled algorithmic tasks—such as integer addition—to illustrate the challenges of transformer generalization. Although these tasks provide clear insights into the mechanisms of length and compositional generalization, they may not fully capture the complexities encountered in real-world applications.

- Emphasis on Length Generalization: Although our taxonomy differentiates between length and compositional generalization, the discussion and empirical focus have largely centered on length generalization. The dynamics of compositional generalization, particularly in the context of large-scale reasoning models, require further in-depth exploration.

- Sensitivity to Experimental Settings: Many of the techniques reviewed, including specific data formatting strategies and positional encoding modifications, are sensitive to factors such as random initialization, training order, and hyperparameter choices. This sensitivity may limit the reproducibility and robustness of the improvements reported in diverse settings.

- Evolving Landscape: The field of transformer research is rapidly evolving. New architectural innovations and training methodologies continue to emerge, which may not be fully captured in our current analysis. Future work will need to continuously update the survey framework to integrate these advances.

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language Models are Few-Shot Learners. In Proceedings of the Advances in Neural Information Processing Systems; Curran Associates, Inc.: Red Hook, NY, USA, 2020; Volume 33, pp. 1877–1901.

- Liang, P.; Bommasani, R.; Lee, T.; Tsipras, D.; Soylu, D.; Yasunaga, M.; Zhang, Y.; Narayanan, D.; Wu, Y.; Kumar, A.; et al. Holistic Evaluation of Language Models. Trans. Mach. Learn. Res. 2023. Available online: https://collaborate.princeton.edu/en/publications/holistic-evaluation-of-language-models/ (accessed on 1 March 2026).

- Radford, A.; Kim, J.W.; Xu, T.; Brockman, G.; McLeavey, C.; Sutskever, I. Robust Speech Recognition via Large-Scale Weak Supervision; Technical Report. 2023. Available online: https://proceedings.mlr.press/v202/radford23a.html (accessed on 1 March 2026).

- Liu, Y.; Zhang, Y.; Wang, Y.; Hou, F.; Yuan, J.; Tian, J.; Zhang, Y.; Shi, Z.; Fan, J.; He, Z. A Survey of Visual Transformers. IEEE Trans. Neural Netw. Learn. Syst. 2023, 35, 7478–7498.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Proceedings of the 31st International Conference on Neural Information Processing Systems, Red Hook, NY, USA, 4–9 December 2017; pp. 6000–6010.

- Bahdanau, D.; Cho, K.H.; Bengio, Y. Neural machine translation by jointly learning to align and translate. In Proceedings of the International Conference on Learning Representations, ICLR’15, San Diego, CA, USA, 7–9 May 2015.

- Kingma, D.P.; Ba, J. Adam: A Method for Stochastic Optimization. In Proceedings of the International Conference on Learning Representations, ICLR’15, San Diego, CA, USA, 7–9 May 2015.

- Gupta, B.; Ta, P.; Ram, K.; Sivaprakasam, M. Comprehensive Modeling and Question Answering of Cancer Clinical Practice Guidelines using LLMs. In Proceedings of the 2024 IEEE Conference on Computational Intelligence in Bioinformatics and Computational Biology (CIBCB), Natal, Brazil, 27–29 August 2024; pp. 1–8.

- Wu, J.; Liang, X.; Bai, X.; Chen, Z. SurgBox: Agent-Driven Operating Room Sandbox with Surgery Copilot. In Proceedings of the 2024 IEEE International Conference on Big Data (Big Data), Washington, DC, USA, 15–18 December 2024.

- Balloccu, S.; Reiter, E.; Li, K.J.H.; Sargsyan, R.; Kumar, V.; Reforgiato Recupero, D.; Riboni, D.; Dusek, O. Ask the experts: Sourcing a high-quality nutrition counseling dataset through Human-AI collaboration. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2024, Miami, FL, USA, 12–16 November 2024; pp. 11519–11545.

- Kang, B.J.; Lee, H.I.; Yoon, S.K.; Kim, Y.C.; Jeong, S.B.; O, S.J.; Kim, H. A survey of FPGA and ASIC designs for transformer inference acceleration and optimization. J. Syst. Archit. 2024, 155, 103247.

- Wang, Y. Artificial-Intelligence integrated circuits: Comparison of GPU, FPGA and ASIC. Appl. Comput. Eng. 2023, 4, 99–104.

- Kuon, I.; Rose, J. Measuring the Gap Between FPGAs and ASICs. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2007, 26, 203–215.

- Fasi, M.; Mikaitis, M. Algorithms for Stochastically Rounded Elementary Arithmetic Operations in IEEE 754 Floating-Point Arithmetic. IEEE Trans. Emerg. Top. Comput. 2021, 9, 1451–1466.

- Arar, E.M.E.; Sohier, D.; de Oliveira Castro, P.; Petit, E. The Positive Effects of Stochastic Rounding in Numerical Algorithms. In Proceedings of the 2022 IEEE 29th Symposium on Computer Arithmetic (ARITH), Virtual, 12–14 September 2022; pp. 58–65.

- Liu, F.; Chao, W.; Tan, N.; Liu, H. Bag of Tricks for Inference-time Computation of LLM Reasoning. In Proceedings of the Advances in Neural Information Processing Systems, Sydney, Australia, 6–12 December 2025; Volume 38.

- Kim, J.; Kim, M.; Mozafari, B. Provable Memorization Capacity of Transformers. In Proceedings of the Eleventh International Conference on Learning Representations, Kigali, Rwanda, 1–5 May 2023.

- Kajitsuka, T.; Sato, I. On the Optimal Memorization Capacity of Transformers. In Proceedings of the Thirteenth International Conference on Learning Representations, Singapore, 24–28 April 2025.

- Mahdavi, S.; Liao, R.; Thrampoulidis, C. Memorization Capacity of Multi-Head Attention in Transformers. In Proceedings of the Twelfth International Conference on Learning Representations, Vienna, Austria, 7–11 May 2024.

- Härmä, A.; Pietrasik, M.; Wilbik, A. Empirical Capacity Model for Self-Attention Neural Networks. arXiv 2024, arXiv:2407.15425.

- Changalidis, A.; Härmä, A. Capacity Matters: A Proof-of-Concept for Transformer Memorization on Real-World Data. In Proceedings of the First Workshop on Large Language Model Memorization (L2M2); Jia, R., Wallace, E., Huang, Y., Pimentel, T., Maini, P., Dankers, V., Wei, J., Lesci, P., Eds.; Association for Computational Linguistics: Vienna, Austria, 2025; pp. 227–238.

- Al-Saeedi, A.; Härmä, A. Emergence of symbolic abstraction heads for in-context learning in large language models. In Proceedings of Bridging Neurons and Symbols for Natural Language Processing and Knowledge Graphs Reasoning @ COLING 2025; Liu, K., Song, Y., Han, Z., Sifa, R., He, S., Long, Y., Eds.; Association for Computational Linguistics: Abu Dhabi, United Arab Emirates, 2025; pp. 86–96.

- Xie, S.M.; Raghunathan, A.; Liang, P.; Ma, T. An Explanation of In-context Learning as Implicit Bayesian Inference. In Proceedings of the International Conference on Learning Representations, Virtual, 25–29 April 2022.

- Nichani, E.; Damian, A.; Lee, J.D. How Transformers Learn Causal Structure with Gradient Descent. In Proceedings of the 41st International Conference on Machine Learning, Vienna, Austria, 21–27 July 2024.

- Vapnik, V.N.; Chervonenkis, A.Y. On the Uniform Convergence of Relative Frequencies of Events to Their Probabilities. Theory Probab. Its Appl. 1971, 16, 264–280.

- Ramsauer, H.; Schäfl, B.; Lehner, J.; Seidl, P.; Widrich, M.; Adler, T.; Gruber, L.; Holzleitner, M.; Pavlović, M.; Sandve, G.K.; et al. Hopfield Networks is All You Need. In Proceedings of the International Conference on Learning Representations, Vienna, Austria, 3–7 May 2021.

- Radhakrishnan, A.; Belkin, M.; Uhler, C. Overparameterized neural networks implement associative memory. Proc. Natl. Acad. Sci. USA 2020, 117, 27162–27170.

- Bietti, A.; Cabannes, V.; Bouchacourt, D.; Jegou, H.; Bottou, L. Birth of a Transformer: A Memory Viewpoint. In Proceedings of the Advances in Neural Information Processing Systems; Oh, A., Naumann, T., Globerson, A., Saenko, K., Hardt, M., Levine, S., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2023; Volume 36, pp. 1560–1588.

- Schaeffer, R.; Zahedi, N.; Khona, M.; Pai, D.; Truong, S.; Du, Y.; Ostrow, M.; Chandra, S.; Carranza, A.; Fiete, I.R.; et al. Bridging Associative Memory and Probabilistic Modeling. arXiv 2024, arXiv:2402.10202.

- Allen-Zhu, Z.; Li, Y. Physics of Language Models: Part 3.3, Knowledge Capacity Scaling Laws. In Proceedings of the Thirteenth International Conference on Learning Representations, Singapore, 24–28 April 2025.

- Allen-Zhu, Z.; Li, Y. Physics of Language Models: Part 3.2, Knowledge Manipulation. In Proceedings of the Thirteenth International Conference on Learning Representations, Singapore, 24–28 April 2025.

- Allen-Zhu, Z.; Li, Y. Physics of Language Models: Part 3.1, Knowledge Storage and Extraction. In Proceedings of the 41st International Conference on Machine Learning, Vienna, Austria, 21–27 July 2024.

- Kaplan, J.; McCandlish, S.; Henighan, T.; Brown, T.B.; Chess, B.; Child, R.; Gray, S.; Radford, A.; Wu, J.; Amodei, D. Scaling Laws for Neural Language Models. arXiv 2020, arXiv:2001.08361.

- McEliece, R.; Posner, E.; Rodemich, E.; Venkatesh, S. The capacity of the Hopfield associative memory. IEEE Trans. Inf. Theory 1987, 33, 461–482.

- Tang, M.; Salvatori, T.; Millidge, B.; Song, Y.; Lukasiewicz, T.; Bogacz, R. Recurrent predictive coding models for associative memory employing covariance learning. PLoS Comput. Biol. 2023, 19, e1010719.

- Zhong, C.; Pedrycz, W.; Li, Z.; Wang, D.; Li, L. Fuzzy associative memories: A design through fuzzy clustering. Neurocomputing 2016, 173, 1154–1162.

- Steinberg, J.; Sompolinsky, H. Associative memory of structured knowledge. Sci. Rep. 2022, 12, 21808.

- Tavan, P.; Grubmüller, H.; Kühnel, H. Self-organization of associative memory and pattern classification: Recurrent signal processing on topological feature maps. Biol. Cybern. 1990, 64, 95–105.

- Krauth, W.; Nadal, J.P.; Mezard, M. The roles of stability and symmetry in the dynamics of neural networks. J. Phys. A Math. Gen. 1988, 21, 2995.

- Baum, E.B. On the capabilities of multilayer perceptrons. J. Complex. 1988, 4, 193–215.

- Vardi, G.; Yehudai, G.; Shamir, O. On the Optimal Memorization Power of ReLU Neural Networks. In Proceedings of the International Conference on Learning Representations, ICLR 2022, Virtual, 25–29 April 2022.

- Geva, M.; Schuster, R.; Berant, J.; Levy, O. Transformer Feed-Forward Layers Are Key-Value Memories. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing; Moens, M.F., Huang, X., Specia, L., Yih, S.W.t., Eds.; Association for Computational Linguistics: Punta Cana, Dominican Republic, 2021; pp. 5484–5495.

- El-Sappagh, S.; Franda, F.; Ali, F.; Kwak, K.S. SNOMED CT standard ontology based on the ontology for general medical science. BMC Med. Inform. Decis. Mak. 2018, 18, 76.

- Lamy, J.B. Owlready: Ontology-oriented programming in Python with automatic classification and high level constructs for biomedical ontologies. Artif. Intell. Med. 2017, 80, 11–28.

- Shehzad, A.; Xia, F.; Abid, S.; Peng, C.; Yu, S.; Zhang, D.; Verspoor, K. Graph Transformers: A Survey. IEEE Trans. Neural Netw. Learn. Syst. 2026.

- Paszke, A.; Gross, S.; Chintala, S.; Chanan, G.; Yang, E.; DeVito, Z.; Lin, Z.; Desmaison, A.; Antiga, L.; Lerer, A. Automatic differentiation in PyTorch. In Proceedings of the NeurIPS 2017 Workshop on Autodiff, Long Beach, CA, USA, 8 December 2017.

- Wolf, T.; Debut, L.; Sanh, V.; Chaumond, J.; Delangue, C.; Moi, A.; Cistac, P.; Rault, T.; Louf, R.; Funtowicz, M.; et al. HuggingFace’s Transformers: State-of-the-art Natural Language Processing. arXiv 2019, arXiv:1910.03771.

- Agarap, A.F. Deep Learning using Rectified Linear Units (ReLU). arXiv 2018, arXiv:1803.08375.

- Hendrycks, D.; Gimpel, K. Gaussian Error Linear Units (GELUs). arXiv 2016, arXiv:1606.08415.

- Xu, B.; Wang, N.; Chen, T.; Li, M. Empirical Evaluation of Rectified Activations in Convolutional Network. arXiv 2015, arXiv:1505.00853.

- Bridle, J.S. Probabilistic Interpretation of Feedforward Classification Network Outputs, with Relationships to Statistical Pattern Recognition. In Proceedings of the NATO Neurocomputing; Springer: Berlin/Heidelberg, Germany, 1989.

- Zhou, H.; Bradley, A.; Littwin, E.; Razin, N.; Saremi, O.; Susskind, J.M.; Bengio, S.; Nakkiran, P. What Algorithms can Transformers Learn? A Study in Length Generalization. In Proceedings of the Twelfth International Conference on Learning Representations, Vienna, Austria, 7–11 May 2024.

- Zhou, Y.; Alon, U.; Chen, X.; Wang, X.; Agarwal, R.; Zhou, D. Transformers Can Achieve Length Generalization But Not Robustly. In Proceedings of the Workshop on Mathematical and Empirical Understanding of Foundation Models (ME-FoMo) at ICLR 2024, Vienna, Austria, 7–11 May 2024.

- Cho, H.; Cha, J.; Awasthi, P.; Bhojanapalli, S.; Gupta, A.; Yun, C. Position Coupling: Improving Length Generalization of Arithmetic Transformers Using Task Structure. In Proceedings of the Advances in Neural Information Processing Systems, Vancouver, BC, Canada, 10–15 December 2024; Volume 37.

- Guan, X.; Zhang, L.L.; Liu, Y.; Shang, N.; Sun, Y.; Zhu, Y.; Yang, F.; Yang, M. rStar-Math: Small LLMs Can Master Math Reasoning with Self-Evolved Deep Thinking. In Proceedings of the 42nd International Conference on Machine Learning, Vancouver, BC, Canada, 13–19 July 2025; Volume 267, pp. 20640–20661.

- Shao, Z.; Wang, P.; Zhu, Q.; Xu, R.; Song, J.; Bi, X.; Zhang, H.; Zhang, M.; Li, Y.K.; Wu, Y.; et al. DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models. arXiv 2024, arXiv:2402.03300.

- Zhang, Z.; Zheng, C.; Wu, Y.; Zhang, B.; Lin, R.; Yu, B.; Liu, D.; Zhou, J.; Lin, J. The Lessons of Developing Process Reward Models in Mathematical Reasoning. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2025, Vienna, Austria, 27 July–1 August 2025; pp. 10495–10516.

- Nye, M.; Andreassen, A.J.; Gur-Ari, G.; Michalewski, H.; Austin, J.; Bieber, D.; Dohan, D.; Lewkowycz, A.; Bosma, M.; Luan, D.; et al. Show Your Work: Scratchpads for Intermediate Computation with Language Models. In Proceedings of the Workshop on Deep Learning for Code (DL4C) at ICLR 2022, Virtual, 29 April 2022.

- Saxton, D.; Grefenstette, E.; Hill, F.; Kohli, P. Analysing Mathematical Reasoning Abilities of Neural Models. In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 6–9 May 2019.

- Anil, C.; Wu, Y.; Andreassen, A.; Lewkowycz, A.; Misra, V.; Ramasesh, V.; Slone, A.; Gur-Ari, G.; Dyer, E.; Neyshabur, B. Exploring Length Generalization in Large Language Models. In Proceedings of the Advances in Neural Information Processing Systems, New Orleans, LA, USA, 28 November–9 December 2022; Volume 35.

- Zhang, Y.; Backurs, A.; Bubeck, S.; Eldan, R.; Gunasekar, S.; Wagner, T. Unveiling Transformers with LEGO: A Synthetic Reasoning Task. arXiv 2022, arXiv:2206.04301.

- Kazemnejad, A.; Padhi, I.; Ramamurthy, K.N.; Das, P.; Reddy, S. The Impact of Positional Encoding on Length Generalization in Transformers. In Proceedings of the Advances in Neural Information Processing Systems, New Orleans, LA, USA, 10–16 December 2023; Volume 36.

- Ruoss, A.; Delétang, G.; Genewein, T.; Grau-Moya, J.; Csordás, R.; Bennani, M.; Legg, S.; Veness, J. Randomized Positional Encodings Boost Length Generalization of Transformers. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics (Volume 2: Short Papers); Rogers, A., Boyd-Graber, J., Okazaki, N., Eds.; Association for Computational Linguistics: Toronto, ON, Canada, 2023; pp. 1889–1903.

- Wang, J.; Ji, T.; Wu, Y.; Yan, H.; Gui, T.; Zhang, Q.; Huang, X.; Wang, X. Length Generalization of Causal Transformers without Position Encoding. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2024; Ku, L.W., Martins, A., Srikumar, V., Eds.; Association for Computational Linguistics: Bangkok, Thailand, 2024; pp. 14024–14040.

- Duan, S.; Shi, Y.; Xu, W. From Interpolation to Extrapolation: Complete Length Generalization for Arithmetic Transformers. arXiv 2024, arXiv:2310.11984.

- Dubois, Y.; Dagan, G.; Hupkes, D.; Bruni, E. Location Attention for Extrapolation to Longer Sequences. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics; Jurafsky, D., Chai, J., Schluter, N., Tetreault, J., Eds.; Association for Computational Linguistics: Stroudsburg, PA, USA, 2020; pp. 403–413.

- Kumar, T.; Ankner, Z.; Spector, B.F.; Bordelon, B.; Muennighoff, N.; Paul, M.; Pehlevan, C.; Ré, C.; Raghunathan, A. Scaling Laws for Precision. In Proceedings of the Thirteenth International Conference on Learning Representations, Singapore, 24–28 April 2025.

- Motamedi, M.; Sakharnykh, N.; Kaldewey, T. A Data-Centric Approach for Training Deep Neural Networks with Less Data. In Proceedings of the NeurIPS 2021 Workshop on Data-Centric AI, Virtual, 14 December 2021.

- Lee, N.; Sreenivasan, K.; Lee, J.D.; Lee, K.; Papailiopoulos, D. Teaching Arithmetic to Small Transformers. In Proceedings of the Twelfth International Conference on Learning Representations, Vienna, Austria, 7–11 May 2024.

- Shen, R.; Bubeck, S.; Eldan, R.; Lee, Y.T.; Li, Y.; Zhang, Y. Positional Description Matters for Transformers Arithmetic. arXiv 2023, arXiv:2311.14737.

- Olsson, C.; Elhage, N.; Nanda, N.; Joseph, N.; DasSarma, N.; Henighan, T.; Mann, B.; Askell, A.; Bai, Y.; Chen, A.; et al. In-context Learning and Induction Heads. arXiv 2022, arXiv:2209.11895.

- Shaw, P.; Uszkoreit, J.; Vaswani, A. Self-Attention with Relative Position Representations. In Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 2 (Short Papers), New Orleans, LA, USA, 1–6 June 2018; pp. 464–468.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), Minneapolis, MN, USA, 2–7 June 2019; pp. 4171–4186.

- Zhang, S.; Roller, S.; Goyal, N.; Artetxe, M.; Chen, M.; Chen, S.; Dewan, C.; Diab, M.; Li, X.; Lin, X.V.; et al. OPT: Open Pre-trained Transformer Language Models. arXiv 2022, arXiv:2205.01068.

- Press, O.; Smith, N.A.; Lewis, M. Train Short, Test Long: Attention with Linear Biases Enables Input Length Extrapolation. In Proceedings of the International Conference on Learning Representations, Virtual, 25–29 April 2022.

- Raffel, C.; Shazeer, N.; Roberts, A.; Lee, K.; Narang, S.; Matena, M.; Zhou, Y.; Li, W.; Liu, P.J. Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer. J. Mach. Learn. Res. 2020, 21, 1–67.

- Chi, T.C.; Fan, T.H.; Ramadge, P.J.; Rudnicky, A.I. KERPLE: Kernelized Relative Positional Embedding for Length Extrapolation. In Proceedings of the Advances in Neural Information Processing Systems, New Orleans, LA, USA, 28 November–9 December 2022; Volume 35.

- Li, S.; You, C.; Guruganesh, G.; Ainslie, J.; Ontanon, S.; Zaheer, M.; Sanghai, S.; Yang, Y.; Kumar, S.; Bhojanapalli, S. Functional Interpolation for Relative Positions Improves Long Context Transformers. In Proceedings of the International Conference on Learning Representations, ICLR’24, Vienna, Austria, 7–11 May 2024.

- Haviv, A.; Ram, O.; Press, O.; Izsak, P.; Levy, O. Transformer Language Models without Positional Encodings Still Learn Positional Information. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2022; Goldberg, Y., Kozareva, Z., Zhang, Y., Eds.; Association for Computational Linguistics: Abu Dhabi, United Arab Emirates, 2022; pp. 1382–1390.

- Su, J.; Lu, Y.; Pan, S.; Murtadha, A.; Wen, B.; Liu, Y. RoFormer: Enhanced Transformer with Rotary Position Embedding. Neurocomputing 2024, 568, 127063.

- Chen, S.; Wong, S.; Chen, L.; Tian, Y. Extending Context Window of Large Language Models via Positional Interpolation. arXiv 2023, arXiv:2306.15595.

- Peng, B.; Quesnelle, J.; Fan, H.; Shippole, E. YaRN: Efficient Context Window Extension of Large Language Models. In Proceedings of the Twelfth International Conference on Learning Representations, Vienna, Austria, 7–11 May 2024.

- Wang, P. lucidrains/x-transformers. GitHub repository, 2024. Available online: https://github.com/lucidrains/x-transformers (accessed on 1 March 2026).

- McCandlish, S.; Kaplan, J.; Amodei, D.; OpenAI Dota Team. An Empirical Model of Large-Batch Training. arXiv 2018, arXiv:1812.06162.

- Shen, K.; Guo, J.; Tan, X.; Tang, S.; Wang, R.; Bian, J. A Study on ReLU and Softmax in Transformer. arXiv 2023, arXiv:2302.06461.

- Jelassi, S.; d’Ascoli, S.; Domingo-Enrich, C.; Wu, Y.; Li, Y.; Charton, F. Length Generalization in Arithmetic Transformers. arXiv 2023, arXiv:2306.15400.

- Sabbaghi, M.; Pappas, G.; Hassani, H.; Goel, S. Explicitly Encoding Structural Symmetry is Key to Length Generalization in Arithmetic Tasks. arXiv 2024, arXiv:2406.01895.

- Ju, Y.; Isac, A.; Nie, Y. ChunkFormer: Learning Long Time Series with Multi-stage Chunked Transformer. arXiv 2021, arXiv:2112.15087.

- He, S.; Sun, G.; Shen, Z.; Li, A. Uncovering the Redundancy in Transformers via a Unified Study of Layer Dropping. Trans. Mach. Learn. Res. 2026. Available online: https://openreview.net/forum?id=1I7PCbOPfe (accessed on 1 March 2026).

- Paik, I.; Choi, J. The Disharmony between BN and ReLU Causes Gradient Explosion, but is Offset by the Correlation between Activations. arXiv 2023, arXiv:2304.11692.

- Chen, W.; Ge, H. Neural Characteristic Activation Analysis and Geometric Parameterization for ReLU Networks. In Proceedings of the NeurIPS’24, Vancouver, BC, Canada, 10–15 December 2024.

- Fu, J.; Yang, T.; Wang, Y.; Lu, Y.; Zheng, N. Breaking through the Learning Plateaus of In-context Learning in Transformer. In Proceedings of the 41st International Conference on Machine Learning, Vienna, Austria, 21–27 July 2024; Volume 235, pp. 14207–14227.

- Elhage, N.; Nanda, N.; Olsson, C.; Henighan, T.; Joseph, N.; Mann, B.; Askell, A.; Bai, Y.; Chen, A.; Conerly, T.; et al. A Mathematical Framework for Transformer Circuits. Transform. Circuits Thread 2021, 1, 12. Available online: https://transformer-circuits.pub/2021/framework/index.html (accessed on 1 March 2026).

- Nanda, N.; Chan, L.; Lieberum, T.; Smith, J.; Steinhardt, J. Progress Measures for Grokking via Mechanistic Interpretability. In Proceedings of the Eleventh International Conference on Learning Representations, Kigali, Rwanda, 1–5 May 2023.

- Hochreiter, S.; Schmidhuber, J. Long Short-Term Memory. Neural Comput. 1997, 9, 1735–1780.

- Gu, A.; Dao, T. Mamba: Linear-Time Sequence Modeling with Selective State Spaces. In Proceedings of the First Conference on Language Modeling, Philadelphia, PA, USA, 7–9 October 2024.

- Beck, M.; Pöppel, K.; Lippe, P.; Kurle, R.; Blies, P.M.; Klambauer, G.; Böck, S.; Hochreiter, S. xLSTM 7B: A Recurrent LLM for Fast and Efficient Inference. In Proceedings of the 42nd International Conference on Machine Learning, Vancouver, BC, Canada, 13–19 July 2025; pp. 3335–3357.

| Task Type | Question | Answer |
|---|---|---|
| Addition | Compute: 53,726 + 19,177 | 72,903 |
| Polynomial Eval. | Evaluate in | 5 |
| Sorting | Sort the numbers: | |
| Summation | Compute | 7 |
| Parity | Is the number of 1’s even in ? | No |
| LEGO | ; ; ; . Find c? | |

| Layers | a | b | c | d | e | RMSE | MAPE | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 145.27 | −0.13 | 1.29 | 0.13 | 0.20 | 3762.70 | 8741.00 | 0.95 | 820.30 | 0.50 |
| 2 | 2.45 | −0.002 | 0.02 | −0.99 | −29.08 | 4413.10 | 14,787.00 | 0.88 | 2293.89 | 0.83 |

| Data Size | Accuracy, % | Capacity |
|---|---|---|

| Approach | Training Range (digits) | Generalizes to (digits) | Extension Factor |
|---|---|---|---|
| Standard APE (Baseline) | Up to 40 | ∼40 | 1–1.125× |
| FIRE + Reversed + Index Hints | Up to 40 | ∼100 | 2.5× |
| Relative Positional Encoding | Up to 5 | ∼15 | 3× |
| Position Coupling | 1–30 | ∼200 | 6.67× |
| Explicit Structural Symmetry | Up to 5 | ∼50 | 10× |
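The Extension Factor column can be read as the ratio of the longest length a method generalizes to over the longest training length. The short check below recomputes this ratio from the table, assuming lengths are measured in digits. The names `ROWS` and `extension_factor` are illustrative. The baseline row is omitted because its generalization length is given only approximately.

```python
# (longest training length, longest generalized length) from the table,
# assumed to be measured in digits.
ROWS = {
    "FIRE + Reversed + Index Hints": (40, 100),
    "Relative Positional Encoding": (5, 15),
    "Position Coupling": (30, 200),
    "Explicit Structural Symmetry": (5, 50),
}


def extension_factor(train_max: int, test_max: int) -> float:
    # Ratio of the longest generalized length to the longest training length.
    return round(test_max / train_max, 2)


for name, (train_max, test_max) in ROWS.items():
    print(f"{name}: {extension_factor(train_max, test_max)}x")
```

By the same ratio, the upper end of the baseline's 1–1.125× range corresponds to roughly 45 digits for a 40-digit training range.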

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Härmä, A.; Al-Saeedi, A.; Changalidis, A.; Verşebeniuc, D.; Pietrasik, M.; Wilbik, A. On Memorization and Generalization in Compact Transformers. Electronics 2026, 15, 1847. https://doi.org/10.3390/electronics15091847
