1. Introduction

Foreign exchange rate (Forex) forecasting remains a challenging and essential task in financial markets due to the inherently volatile and nonlinear nature of currency prices. Accurate forecasting models are crucial for investors, policymakers, and businesses to make informed decisions that reduce risk and increase returns. Traditional statistical methods often struggle with the complexity and temporal dependencies present in Forex data, prompting researchers to explore advanced machine learning and deep learning techniques. Recurrent neural networks, especially Long Short-Term Memory (LSTM) networks, have achieved significant success in capturing sequential patterns and long-term dependencies in financial time series, providing a robust foundation for effective Forex forecasting systems.

Building on the strengths of the LSTM architecture, Bidirectional LSTM (Bi-LSTM) models extend this capability by processing input sequences in both forward and backward directions, thereby drawing on both past and future context to enhance forecasting accuracy.

Such architectures have shown promising results in stock price prediction and commodity forecasting, as documented by Purnomo et al. [1], who contrasted the Bi-LSTM and random forest regressor (RFR) methods for stock prices [2]. Combining attention mechanisms with Bi-LSTM methods has proven to be a powerful improvement, enabling the model to focus on the time steps that contribute most to the predicted outcomes. This has been confirmed in financial forecasting by authors [3] and Buche and Chandak [4], who integrated attention mechanisms with Bi-LSTM to enhance stock market trend prediction.

Although these advances have been made, integrating deep learning feature extraction with robust ensemble methods such as random forest remains an underexplored area for Forex forecasting. The random forest regressor is widely known for its ability to reduce overfitting and improve generalization by combining multiple decision trees. Geng et al. [5] demonstrated the success of hybrid models that integrated Bi-LSTM and ensemble methods for energy forecasting, highlighting the benefits of such fusion approaches. Based on these findings, the current study proposes a hybrid framework that leverages the temporal modeling strengths of Bi-LSTM, the selective focus of attention mechanisms, and the generalization power of the random forest regressor to forecast foreign exchange rates more precisely than traditional or single-model methods.

This research aims to introduce advanced deep learning and ensemble models for complex, unstable financial data. By integrating Bi-LSTM with attention and random forests within a single framework, this approach seeks to capture sophisticated temporal relationships while addressing overfitting, a prevalent challenge in financial time-series forecasting. It aims to improve the clarity and reliability of the forecasting process for stakeholders in critical currency markets. Next year’s exchange rates will generally follow whatever levels were established in the previous year, yet relying on that year’s rates alone fails to account for the intricate nonlinear dynamics and broader multi-year rhythms that characterize currency markets during turbulence. Movements are shaped not only by macroeconomic fundamentals and policy changes but also by shifts in market psychology and the fallout from unexpected global events, all of which can trigger abrupt structural breaks that a purely autoregressive architecture struggles to absorb. Our Bi-LSTM + Attention + Random Forest hybrid architecture addresses this gap by simultaneously learning long-range bidirectional dependencies and complex nonlinear feature interactions, yielding a forecasting engine that remains credible even when markets are roiled by volatility spikes or fundamental regime changes.

Unlike prior works that relied solely on either sequential deep learning models (e.g., LSTM, Bi-LSTM) or classical ensemble methods (e.g., random forest), the proposed framework fuses bidirectional temporal modeling with attention-guided feature weighting and ensemble regression. This combination enables the model to capture long-term dependencies, focus on critical historical periods, and generalize across nonlinear relationships in highly volatile foreign exchange markets. This represents a distinct contribution beyond the existing literature on hybrid financial forecasting models.

The contributions of this research include:

We develop a new hybrid framework that combines Bidirectional LSTM (Bi-LSTM), additive attention, and random forest regression (RFR). In contrast to most deep learning models, which predict directly from neural outputs, the proposed framework uses Bi-LSTM with attention for temporal feature extraction and RFR for nonlinear ensemble feature refinement, thereby increasing the robustness and generalization of the model for long-horizon exchange rate forecasting. The research thus focuses on a single unified framework that fuses deep sequential learning with classical machine learning for improved performance.

This research frames next year’s exchange rate forecasting as a supervised learning problem in a cross-country context. The model leverages each country’s long-term annual historical sequence (1960–2023) to predict the country’s value for the next year.

Such a configuration allows the model to learn structural parallels and nonlinear temporal intricacies across about 200 countries. This is a global scale rarely captured in annual foreign exchange forecasting.

The remainder of this manuscript is organized as follows:

Section 2 presents the literature survey,

Section 3 describes the methodology,

Section 4 presents the results and discussion, and

Section 5 gives the conclusions and future scope.

2. Literature Survey

Abedin et al. [1] used a deep learning-based framework to forecast exchange rate movements during the COVID-19 pandemic, focusing on the impact of economic uncertainty on volatile exchange rates. The authors produced more precise forecasts, indicating the capability of DNN techniques in unstable financial circumstances. The research showed that crisis-period data can be incorporated to increase the sensitivity of models to unstable or unexpected events; however, such a model is likely to be effective only in a pandemic situation. Bagherabad et al. [2] applied various machine learning algorithms to examine the influence of different economic factors on business performance. Their article emphasized the need to include macroeconomic variables to improve financial predictive models. The methodology can therefore contribute significantly to financial analytics, but mainly for business economics rather than Forex markets.

Adesina and Obokoh [3] proposed a hybrid prediction model using deep learning and conventional time-series models to enhance the accuracy of exchange rate prediction. By combining statistical and nonlinear learning patterns, the model reduced prediction error and thereby increased stability. This study showed that a hybrid architecture can better capture complex financial dynamics; however, it adds significant computational complexity to real-time deployment. Ding et al. [4] proposed an information fusion method that combined large language models and deep learning algorithms for EUR/USD exchange rate prediction. The authors combined textual and numerical financial information, demonstrating the significance of multimodal information in financial predictions; however, there was a risk that extraneous information from large language models might compromise the reliability of the hybrid model.

Iqbal et al. [5] proposed a new hybrid deep learning model to improve exchange rate prediction accuracy. The architecture effectively modeled nonlinear dependencies in Forex time series and outperformed several competing baseline models. Their findings reinforced the value of hybrid neural networks in financial forecasting. One drawback was that large volumes of high-quality training data may be needed, which can limit the approach’s application to less-traded currencies. Perla et al. [6] proposed a hybrid neural network model coupled with an optimization algorithm for Forex market index forecasting and trend identification. The optimization algorithm enhanced the stability of the forecasts and reduced the forecast errors, which showed the strength of combining neural learning with algorithmic optimization. Nevertheless, the optimization process can significantly increase the training time of the network model.

Caroline et al. [7] explored hybrid TCN-LSTM and LSTM-TCN architectures for Forex price prediction and demonstrated that integrating convolutional temporal features with recurrent learning improved sequence modeling. Their approach improved prediction accuracy by capturing both the short-term and long-term dependencies of time-series data, contributing to the growing use of hybrid deep learning structures in finance; however, limited cross-currency validation has raised concerns about the generalization capability of the model. Gu et al. [8] introduced the AB-LSTM-GRU ensemble model, which improved the robustness of exchange rate forecasts. The ensemble combined several recurrent units, improving feature learning. The research showed that the model achieved better results, proving its ability to address nonlinear financial dynamics through ensemble deep learning; however, the model was not interpretable, as it was difficult to explain its decisions.

Zhou and Zhu [9] proposed a new approach called ICEEMDAN-CNN-LSTM for predicting the USD/RMB exchange rate. Signal decomposition identified the intrinsic components before deep learning was applied, which improved prediction accuracy. The approach handles noise well, especially in unstable financial settings; however, the signal preprocessing makes it cumbersome. Poernomo [10] used an LSTM network to predict how the exchange rate of the Indonesian Rupiah varied over time. This indicated how well the network could learn temporal relationships in time-series data for this kind of forecasting problem. However, the study was limited to one specific architecture, whereas better results could be obtained through the use of hybrids.

In a related study, Dash et al. [11] integrated a bidirectional LSTM with a transformer attention-based mechanism. The aim of the technique was to support arbitrage and improve the effectiveness of Forex trend analysis. According to the authors, the effectiveness of attention mechanisms offered a bright prospect for applying advanced deep learning technologies; however, a critical disadvantage of the transformer component was its high computational cost. Nsengiyumva et al. [12] compared CNN, Bi-LSTM, and the hybrid CNN-Bi-LSTM for foreign exchange forecasting. The results showed that the hybrid architecture outperformed the single networks in capturing nonlinear relationships. This research gave useful recommendations for choosing a deep learning model for Forex prediction and indicated that architecture design is important for obtaining good forecasts; however, the findings may depend on the characteristics of the dataset used, which may limit their general applicability.

Varastehpour et al. [13] proposed new high-performance CNN-based hybrid models for predicting AUD/USD trends. The models enhanced feature extraction from complex financial data and improved the reliability of AUD/USD predictions. The paper demonstrated the success of convolutional networks for pattern recognition and advanced hybrid deep learning techniques for Forex; however, CNN-based techniques may not capture long-term dependencies well without recurrent networks. Liu [14] analyzed an improved CNN architecture to enhance financial forecasting, with an emphasis on stock volatility prediction. Although this paper provided valuable methods for financial modeling and indirectly supported the use of deep learning, it was of limited use in this field because it focused on stocks rather than foreign exchange rates.

Luo et al. [15] proposed an attention-based LSTM network to predict foreign currency exchange rates, in which the attention mechanism allowed the model to focus more on the influential time steps, resulting in higher accuracy. The results showed the advantage of selective weighting of features in time-series analysis, and the network worked exceptionally well on nonlinear financial datasets. However, attention models are sensitive to hyperparameter tuning, which can be a drawback. Hong et al. [16] proposed a deep learning framework that merged adaptive signal decomposition with dynamic weight optimization for exchange rate prediction. The model performed effectively on non-stationary data, enhancing its predictive stability. The proposed method relied on advanced preprocessing, which helped improve learning outcomes, and showed that Forex prediction benefits from feature refinement; however, such a layered architecture complicates the system.

Hao et al. [17] evaluated AI-based exchange rate forecasting methods with a focus on macroeconomic sensitivity and predictive performance. The study offered a comprehensive assessment of artificial intelligence techniques in financial modeling and emphasized the growing reliance on AI for currency prediction. Their analysis favored shifting away from traditional models toward intelligent systems; however, the work was mostly evaluative, with no experimentation with new architectures. Li et al. [18] investigated machine learning and AI for improving the accuracy of exchange rate forecasts in global markets. This study demonstrated that new predictive algorithms were increasingly effective at capturing currency dynamics. Its results advocated the wider adoption of AI-powered financial forecasting and provided conceptual contributions regarding model performance; however, since it was an arXiv preprint, no formal peer review had taken place.

Gao et al. [19] applied interpretable machine learning to explain exchange rate forecasts using macroeconomic fundamentals. This work improved transparency, supported informed financial decision-making, and narrowed the gap between predictive accuracy and model explainability. The study underscored the key role of interpretable AI in finance; however, enhanced interpretability can come at the cost of reduced predictive performance. Similarly, Tian et al. [20] proposed DALG, a model based on a dual attention mechanism and an LSTM-GRU network architecture, for forecasting exchange rate volatility in the Chinese Forex sector. The experimental results showed the effectiveness of combining recurrent networks with attention mechanisms, and the proposed model was appropriate for unstable exchange rate environments, but it may be region-specific.

Das et al. [21] presented an analysis of exchange rate forecasting using machine learning, deep learning approaches, and time-series methods with alternative datasets. Although this paper focused on comparing different models for exchange rate forecasting, it offered valuable insights into choosing an appropriate forecasting method. Nonetheless, its experimental analysis was limited because it was a conference paper rather than a journal article. Jin et al. [22] proposed the concept of Deep Financial, which predicted the impact on exchange rates using political and financial statements. The inclusion of qualitative information broadened the scope of the predictions beyond limited numerical data, highlighting the viability of text-based financial analytics. The approach was applicable to developing multidisciplinary prediction strategies; however, there was room for subjectivity in retrieving political feature information.

Althobaiti et al. [23] proposed a shift from static to temporal machine learning using lagged features and feature significance, as seen in their EUR/USD prediction model. The model provided a framework with better temporal awareness and prediction accuracy. The article further underscored the role of feature engineering in improving the accuracy of time-series prediction models. The downside was that feature engineering can complicate model design. Tong [24] proposed Forex-Net, an enhanced hybrid LSTM model that leveraged transfer learning for exchange rate forecasting. Transfer learning reduced the training effort while preserving strong forecasting capability, which is beneficial when knowledge can be reused across datasets from a related financial portfolio. The approach supported efficient deep learning deployment; however, the performance can depend heavily on the relevance of the source domain.

3. Materials and Methods

In this research, we forecast the foreign exchange rate with advanced deep learning techniques using Bidirectional Long Short-Term Memory (Bi-LSTM) networks and other regression models. The process starts with preprocessing the raw dataset, which involves removing non-numeric columns, applying a log transformation to stabilize the variance, and addressing missing data through iterative imputation. After preprocessing, the data undergo min–max normalization, which scales the features to a defined range, ensuring consistency and enhancing the model’s performance.

Once the data are normalized, they are passed to the machine learning models. This research uses a Bidirectional Long Short-Term Memory (Bi-LSTM) network, which excels at handling time-series data by capturing dependencies both forward and backward in time. The Bi-LSTM is combined with a random forest regressor, and attention mechanisms are applied. This hybrid approach improves prediction accuracy by integrating feature importance and accounting for temporal dependencies. The result of this process is the forecasted foreign exchange rate. The design illustrates how combining deep learning with traditional machine learning can address complex challenges in financial forecasting.

Figure 1 shows the workflow of the proposed hybrid model.

Bidirectional context is highly relevant for foreign exchange time series, because past events and anticipated developments (such as policy announcements and economic indicators) have shaped and will continue to shape these patterns. Bi-LSTM reads each sequence in both directions, which helps to address many of the challenges that unidirectional models encounter.

Random forest regressors are used for nonlinear feature learning and ensemble stability. Although Bi-LSTM excels at sequential dependencies, it does not fully capture exchange rate dynamics that rely primarily on nonlinear relationships among isolated features. Random forests can capture such patterns, beyond those fitted by the Bi-LSTM, through the ensemble learning they incorporate. They are more resilient to overfitting than single decision trees and can handle high-dimensional data effectively. Combining Bi-LSTM with random forest regression improves prediction accuracy and stability by pairing temporal sequence learning with robust nonlinear regression.

Adding attention to the Bi-LSTM and random forest combination extends the temporal model and facilitates feature extraction by selectively concentrating on the time steps and features most relevant to the task. Exchange rates, like other time series, contain irrelevant data points that raise noise levels and make them challenging to work with.

Selecting important factors can improve model explainability by emphasizing the periods considered most significant for model predictions. Employing attention mechanisms with Bi-LSTM and random forest integrates complementary strengths to improve prediction performance. Given the unstable and multifactor-driven nature of exchange markets, this hybrid approach captures both short-term and long-term temporal dependencies. It focuses on critical signals while adapting to nonlinear relationships. By mixing diverse modeling approaches, these features can enhance model robustness and generalizability, thereby reducing bias.

3.1. Dataset Details

The dataset titled “Official exchange rate (LCU per US$, period average)” is provided by the World Bank under the indicator code PA.NUS.FCRF. It offers annual data on the official exchange rate of 200 countries, expressed as local currency units (LCU) per US dollar and averaged over each year. The data are sourced from the International Monetary Fund’s International Financial Statistics (IFS) and span 1960 to 2024. The dataset provides a comprehensive view of how exchange rates have evolved over several decades, making it a valuable tool for studying historical trends in global currency movements. It was last updated on 15 April 2025, so it is both historical and up to date.

The dataset is accessible through the World Bank’s platform at https://data.worldbank.org/indicator/PA.NUS.FCRF (accessed on 1 January 2025). It is available in formats such as CSV, XML, and Excel, facilitating both ease of access and use for further analysis. Sample data are shown in Table 1.

3.2. Data Preprocessing

The raw dataset is processed using a structured pipeline to maintain the quality of the numerical data and ensure compatibility with deep learning systems. The dataset contains the annual official exchange rates (local currency units per US dollar) for more than 250 countries and areas covering the years 1960 to 2024; each row contains information for one country, while each column contains information for one year. To prevent unreliable imputations, countries with an excessive amount of missing values (>40% of observations) are eliminated. For the remaining records, missing data are predicted using an iterative imputer, which treats each feature as a function of the remaining features and refines its estimates until they converge. Such an approach preserves more complex cross-feature relationships than simpler estimates based on the mean or median.
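As a minimal sketch of the exclusion rule described above, the following standard-library Python snippet drops any country whose share of missing years exceeds 40%. The country codes, the toy values, and the function names `missing_fraction` and `drop_sparse_countries` are hypothetical and only illustrate the filter; the iterative imputation step itself is not shown.

```python
from typing import Dict, List, Optional

# One annual series per country; None marks a missing year (toy data).
Series = List[Optional[float]]


def missing_fraction(series: Series) -> float:
    """Share of years with no recorded exchange rate."""
    return sum(v is None for v in series) / len(series)


def drop_sparse_countries(panel: Dict[str, Series],
                          max_missing: float = 0.40) -> Dict[str, Series]:
    """Keep only countries whose missing share does not exceed max_missing."""
    return {code: s for code, s in panel.items()
            if missing_fraction(s) <= max_missing}


panel = {
    "AAA": [1.0, 1.1, None, 1.3, 1.4],    # 20% missing, kept
    "BBB": [None, None, None, 2.0, 2.1],  # 60% missing, dropped
}
kept = drop_sparse_countries(panel)
print(sorted(kept))
```

Filtering before imputation keeps the imputer from estimating most of a country’s history from other countries’ values.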

For the country name and country code, one-hot encoding is used to allow the model to differentiate country-specific patterns and to avoid imposing ordinal relationships on the Bi-LSTM model, which would otherwise treat categorical features as ordered numerical values. To stabilize variance and address the common skewness in financial time series, a logarithmic transformation is applied to all numerical features. This transformation mitigates the impact of extreme values, reduces heteroscedasticity, and improves the model’s learning stability. The 1960–2023 time series is used to create the input features for the 2024 exchange rate prediction targets, and min–max scaling is applied. To avoid data leakage when splitting the time series, the scaler is fitted only on the training period, and the same fitted scaler is then applied to the validation and test sets. The dataset is split chronologically, i.e., observations from 1960 to 2018 are used for training, 2019 to 2023 for validation, and 2024 for testing. In this forward-chaining approach, the model is evaluated on data it has not seen before, ensuring an accurate assessment of its predictive capability. To capture relevant temporal dependencies of the historical exchange rate sequences, the input data are organized as three-dimensional tensors of shape (samples, time steps, features) to suit the format required by the Bidirectional Long Short-Term Memory (Bi-LSTM) network.
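The windowing and chronological split can be sketched as follows. This is an illustration under stated assumptions, not the authors’ pipeline: the plain natural logarithm, the window length of 5, the synthetic series, and the function names are all hypothetical choices, since the text does not specify them.

```python
import math
from typing import List, Tuple


def log_transform(values: List[float]) -> List[float]:
    """Natural log of each rate (assumed form; requires positive values)."""
    return [math.log(v) for v in values]


def make_windows(years: List[int], values: List[float], window: int
                 ) -> Tuple[List[List[List[float]]], List[float], List[int]]:
    """Build samples of shape (time steps, 1 feature) with next-year targets."""
    X, y, target_years = [], [], []
    for i in range(len(values) - window):
        X.append([[v] for v in values[i:i + window]])  # one feature per step
        y.append(values[i + window])                   # value to predict
        target_years.append(years[i + window])
    return X, y, target_years


def split_by_target_year(target_years: List[int],
                         train_end: int = 2018, val_end: int = 2023):
    """Chronological split on the year of the prediction target."""
    train = [i for i, yr in enumerate(target_years) if yr <= train_end]
    val = [i for i, yr in enumerate(target_years) if train_end < yr <= val_end]
    test = [i for i, yr in enumerate(target_years) if yr > val_end]
    return train, val, test


years = list(range(1960, 2025))
rates = [1.0 + 0.05 * (yr - 1960) for yr in years]  # synthetic series
logged = log_transform(rates)
X, y, target_years = make_windows(years, logged, window=5)
train_idx, val_idx, test_idx = split_by_target_year(target_years)
print(len(X), len(X[0]), len(X[0][0]))
print(len(train_idx), len(val_idx), len(test_idx))
```

With 65 annual observations and a window of 5, this yields 60 samples of shape (5, 1), of which 54 have targets in the training period, 5 in the validation period, and 1 in the test year.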

Figure 2 shows the effect of log transformation.

3.3. Feature Scaling

After imputation, the dataset is normalized using MinMaxScaler. This scaling method transforms the features to a fixed range [0, 1], ensuring that all features contribute equally and preventing the model from being biased toward features with large numeric ranges. MinMaxScaler uses the formula:

$$X_{scaled} = \frac{X - X_{min}}{X_{max} - X_{min}}$$

where $X$ is the original value, while $X_{min}$ and $X_{max}$ are the minimum and maximum values of the feature.

After feature scaling, the data lie in a common range, typically 0 to 1. This normalizes the scale of all features so that each contributes equally to the model, regardless of its original range. The common range improves the stability of the learning process by preventing any feature from overwhelming the others.

Figure 3 shows the data distribution both before and after normalization. To prevent data leakage, the min–max scaler is fitted only on the training set, and the fitted scaler is then applied to the test set. This ensures that future information from the test data does not influence the model during training, maintaining fair evaluation. The min–max scaling formula for a feature value $x$ is:

$$x' = \frac{x - x_{\min}}{x_{\max} - x_{\min}}$$

where $x_{\min}$ and $x_{\max}$ are the minimum and maximum values of the feature in the training dataset, while $x'$ is the scaled value in the range [0, 1].
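The leakage-free scaling described above can be sketched in a few lines. The `MinMax` class below is a hypothetical stand-in written with the standard library only; it mirrors the fit-on-train, transform-everything pattern rather than reproducing any particular library implementation, and the numbers are toy values.

```python
from typing import List


class MinMax:
    """Min-max scaler whose statistics come from the training data only."""

    def fit(self, values: List[float]) -> "MinMax":
        self.lo, self.hi = min(values), max(values)
        return self

    def transform(self, values: List[float]) -> List[float]:
        span = self.hi - self.lo
        if span == 0:  # constant feature: map everything to 0
            return [0.0 for _ in values]
        return [(v - self.lo) / span for v in values]


train = [2.0, 4.0, 6.0]
test = [8.0]

scaler = MinMax().fit(train)            # statistics from training data only
train_scaled = scaler.transform(train)
test_scaled = scaler.transform(test)    # reuses the training min and max
print(train_scaled, test_scaled)
```

Note that a held-out value beyond the training maximum maps above 1, which is the expected consequence of not peeking at future data.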

3.4. Hybrid Deep Learning Model

The new forecasting method combines sequential deep learning and ensemble regression to model complex, long-range dependencies in exchange rate data. The methodology is made up of three sequential parts: a Bi-LSTM (Bidirectional Long Short-Term Memory) for extracting temporal features from the input data; an additive attention mechanism for assigning weights to different time points based on their relevance to the current output; and an RFR (random forest regressor) for producing the final predictions from the weighted inputs.

This model takes historical exchange rate sequences as its input and produces contextually relevant representations by processing each sequence both forward and backward. A key advantage of using a Bi-LSTM compared to a single-directional recurrent neural network is that it can learn to represent relationships among all input data points—regardless of whether they are early or late in the sequence—rather than being limited to relationships at the beginning or end of the sequence. This provides better representation quality for use in longer horizon forecasting tasks.

Table 2 lists the best hyperparameters of the random forest regressor found with GridSearchCV.

3.4.1. Bi-LSTM Architecture

The Bidirectional LSTM (Long Short-Term Memory) model processes sequential data both forward and backward. The network is made up of LSTM layers that receive the input x(t) and regulate it through input, forget, and output gates. The gates of each layer control the flow of information; temporal dependencies are captured as the inputs are fed sequentially; and the forward and backward layers jointly improve context-aware learning. Bi-LSTM’s architecture is illustrated in Figure 4.

3.4.2. Hybrid Architecture

In this architecture, the Bi-LSTM serves as a feature extractor rather than as a predictor. It builds enriched representations of each sequence by encoding past and future context bidirectionally. The encoded temporal features are defined as

$$H = \{h_1, h_2, \ldots, h_T\}$$

where $h_t$ denotes the hidden state at time $t$ and $H$ provides context-aware embeddings that capture the long-term dependencies within the time series. To focus the model on the most relevant time steps, an additive attention mechanism is applied to the Bi-LSTM outputs. This mechanism computes attention weights $\alpha_t$ dynamically, so that the model can assess the importance of every hidden state. The attention score for time step $t$ is given by:

$$e_t = v^{\top} \tanh(W h_t + b)$$

where $W$, $b$, and $v$ are learnable parameters. These scores are normalized through softmax:

$$\alpha_t = \frac{\exp(e_t)}{\sum_{k=1}^{T} \exp(e_k)}$$

The final attention context vector $c$ is the weighted sum of the hidden states:

$$c = \sum_{t=1}^{T} \alpha_t h_t$$
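The attention step can be illustrated with a small standard-library sketch. It assumes the common additive (tanh-based) scoring form with a projection matrix `W`, a bias `b`, and a scoring vector `v`; these symbol names, the toy hidden states, and the fixed parameter values are assumptions for illustration, whereas in the trained model the parameters would be learned.

```python
import math
from typing import List, Tuple

Vector = List[float]


def additive_attention(hidden: List[Vector], W: List[Vector],
                       b: Vector, v: Vector) -> Tuple[Vector, Vector]:
    """Return (attention weights, context vector) for a list of hidden states."""
    scores = []
    for h in hidden:
        # tanh(W h + b), computed row by row
        proj = [math.tanh(sum(w * x for w, x in zip(row, h)) + bias)
                for row, bias in zip(W, b)]
        scores.append(sum(vi * pi for vi, pi in zip(v, proj)))

    # Softmax over time steps (shifted by the max for numerical stability)
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    # Context vector: attention-weighted sum of the hidden states
    dim = len(hidden[0])
    context = [sum(a * h[j] for a, h in zip(weights, hidden))
               for j in range(dim)]
    return weights, context


hidden = [[0.1, 0.2], [0.4, 0.1], [0.9, 0.7]]  # toy states, T = 3
W = [[1.0, 0.0], [0.0, 1.0]]                   # illustrative, not learned
b = [0.0, 0.0]
v = [1.0, 1.0]
weights, context = additive_attention(hidden, W, b, v)
print(weights, context)
```

The weights sum to one, so the context vector is a convex combination of the hidden states and has the same dimensionality regardless of sequence length.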

This computation emphasizes the portions of the sequence that are most relevant for effective prediction. The attention-enhanced temporal features c are then passed to the random forest regressor. By supplying the RFR with context-aware inputs, the Bi-LSTM-attention module acts as a powerful preprocessor that improves the RFR’s predictive capacity. The random forest models the nonlinear relationships and interactions among these features, reducing overfitting and enhancing generalization. To improve RFR performance, its hyperparameters are optimized through a GridSearchCV procedure, which evaluates combinations of parameters such as the number of trees n_estimators, maximum tree depth d_max, and minimum samples per leaf s_min. This exhaustive tuning helps the ensemble make effective use of the contextual Bi-LSTM outputs. To address challenges with data quality, the architecture applies iterative imputation to handle missing values. This matters in time-series scenarios, where absent data points can disrupt sequential order and compromise the robustness of the model.

The ReduceLROnPlateau callback is used to dynamically adjust the learning rate during training. This method reduces the learning rate η by a factor γ when the validation performance metric plateaus:

$$\eta \leftarrow \begin{cases} \gamma\,\eta, & \text{if the validation metric has not improved for } p \text{ consecutive epochs} \\ \eta, & \text{otherwise} \end{cases}$$

where p is the patience parameter. This adaptive approach improves convergence, as it allows the model to make coarse adjustments to its parameters when it is far from the optimum and finer adjustments as it approaches optimal performance. This helps with faster training while avoiding overfitting.
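The plateau rule can be sketched as a small scheduler. This is a simplified, hypothetical re-implementation of the idea for illustration, not the Keras callback itself (which also supports options such as a cooldown and a minimum improvement threshold); the class name, the factor of 0.5, the patience of 2, and the loss values are assumptions.

```python
from typing import List


class PlateauScheduler:
    """Multiply the learning rate by `factor` after `patience` stale epochs."""

    def __init__(self, lr: float, factor: float = 0.5,
                 patience: int = 2, min_lr: float = 1e-6) -> None:
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_lr = min_lr
        self.best = float("inf")  # best validation loss seen so far
        self.wait = 0             # epochs since the last improvement

    def step(self, val_loss: float) -> float:
        if val_loss < self.best:
            self.best = val_loss
            self.wait = 0
        else:
            self.wait += 1
            if self.wait >= self.patience:
                self.lr = max(self.lr * self.factor, self.min_lr)
                self.wait = 0
        return self.lr


sched = PlateauScheduler(lr=0.01, factor=0.5, patience=2)
val_losses: List[float] = [1.0, 0.8, 0.8, 0.81, 0.7, 0.7, 0.7]
history = [sched.step(loss) for loss in val_losses]
print(history)
```

In this toy run the rate is halved twice, each time after two consecutive epochs without improvement in the validation loss.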

To reduce the complexity of the model and mitigate overfitting, Global Average Pooling (GAP) is applied after the Bi-LSTM layers. GAP computes the average of each feature map over all time steps, essentially mapping the sequence of hidden states into a fixed-size vector:

$$g = \frac{1}{T} \sum_{t=1}^{T} h_t$$

where $h_t$ is the hidden state at time step $t$ and $T$ is the number of time steps.
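The averaging step can be sketched in a couple of lines of standard-library Python. The function name `global_average_pool` and the toy hidden states are hypothetical.

```python
from typing import List


def global_average_pool(hidden: List[List[float]]) -> List[float]:
    """Average each feature over all time steps, giving one fixed-size vector."""
    steps = len(hidden)
    return [sum(column) / steps for column in zip(*hidden)]


hidden = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # 3 time steps, 2 features
pooled = global_average_pool(hidden)
print(pooled)
```

The output length equals the number of features, so sequences of different lengths map to vectors of the same size.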

This method extracts important global temporal information while reducing the dimensionality of the representation fed into the subsequent layers. Such a reduction not only simplifies the learning process but also improves the generalization of the model. Bi-LSTM, attention, and the random forest regressor (RFR) together create a hybrid framework that exploits the advantages of sequential learning in deep networks as well as the robust generalization of ensemble methods. Through cross-model feature fusion, this architecture can flexibly capture intricate sequential dependencies, emphasize important temporal features, and resist overfitting, providing accurate predictions for time-series data. The hyperparameter summary is shown in Table 3. The architecture of the Bi-LSTM + Attention + RFR hybrid model is illustrated in Figure 5. Algorithm 1 presents the exchange rate forecasting algorithm using Bi-LSTM + Attention + RFR.

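| Algorithm 1: Exchange rate forecasting algorithm using Bi-LSTM + Attention + RFR |
| --- |
| Input: historical annual exchange rate sequence $x_1, \dots, x_T$ for each country |
| Output: predicted exchange rate $\hat{y}$ for year $t + 1$ |
| Data: training and validation sets |
| Params: learning rate $\eta$, reduction factor $\gamma$, patience $p$, number of trees n_estimators, maximum depth $d_{max}$, minimum samples per leaf $s_{min}$ |
| For each time step $t$: compute the forward and backward hidden states and concatenate them, $h_t = [\overrightarrow{h}_t ; \overleftarrow{h}_t]$ |
| Compute the attention weights $\alpha_t$ and the context vector $c = \sum_t \alpha_t h_t$ |
| For each tree $b$: Sample a bootstrap set, Train tree $b$ on the context features |
| Predict: $\hat{y}$ as the average of the tree outputs |
| Update η: $\eta \leftarrow \gamma \eta$ when the validation metric has not improved for $p$ epochs |
| Evaluate: $R^2$, MSE $= \frac{1}{n}\sum_{i=1}^{n}(y_i - \hat{y}_i)^2$ |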
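The two ways of collapsing a sequence of hidden states into a fixed-size vector, global average pooling and attention-weighted pooling, can be compared in a few lines of plain Python. This is a sketch under stated assumptions: the dot-product scoring with `score_weights` is a hypothetical stand-in for the learned attention scoring function, and the hidden-state values are made up for illustration.

```python
import math


def global_average_pooling(hidden_states):
    """Average each feature over all time steps: T x d list of lists -> length-d list."""
    steps = len(hidden_states)
    return [sum(column) / steps for column in zip(*hidden_states)]


def attention_context(hidden_states, score_weights):
    """Softmax-weighted sum of hidden states; returns (context vector, attention weights)."""
    scores = [sum(w * h for w, h in zip(score_weights, h_t)) for h_t in hidden_states]
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    alphas = [e / total for e in exps]
    width = len(hidden_states[0])
    context = [sum(a * h_t[j] for a, h_t in zip(alphas, hidden_states)) for j in range(width)]
    return context, alphas


# Three time steps with two features each (illustrative values only).
H = [[0.1, 0.4], [0.3, 0.2], [0.8, 0.6]]
gap = global_average_pooling(H)
context, alphas = attention_context(H, score_weights=[1.0, 1.0])
```

Average pooling weights every time step equally, whereas the attention weights shift the context vector toward the steps with the highest scores, here the last one.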

4. Results and Discussion

The foreign exchange dataset from 1960 to 2024 is used to predict the official exchange rate for the next year (t + 1) for each country, based on historical annual exchange rate trends. The dataset contains exchange rates in local currency units per US dollar for most countries globally. The models are trained on this dataset.
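The forecasting setup, predicting year t + 1 from the history up to year t, can be sketched as a supervised-pair construction. The fixed `window` length and the sample rates below are assumptions made for illustration; the text does not state how many past years each input contains.

```python
def make_supervised_pairs(series, window):
    """Turn one country's annual series into (history, next-year target) pairs."""
    pairs = []
    for end in range(window, len(series)):
        history = series[end - window:end]  # values for years t-window+1 .. t
        target = series[end]                # value for year t+1
        pairs.append((history, target))
    return pairs


# Illustrative annual exchange rates (LCU per USD) for a single country.
rates = [7.1, 7.3, 7.2, 7.6, 7.9, 8.0]
pairs = make_supervised_pairs(rates, window=3)
```

Each target is strictly later than every value in its history, so no future information enters the inputs.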

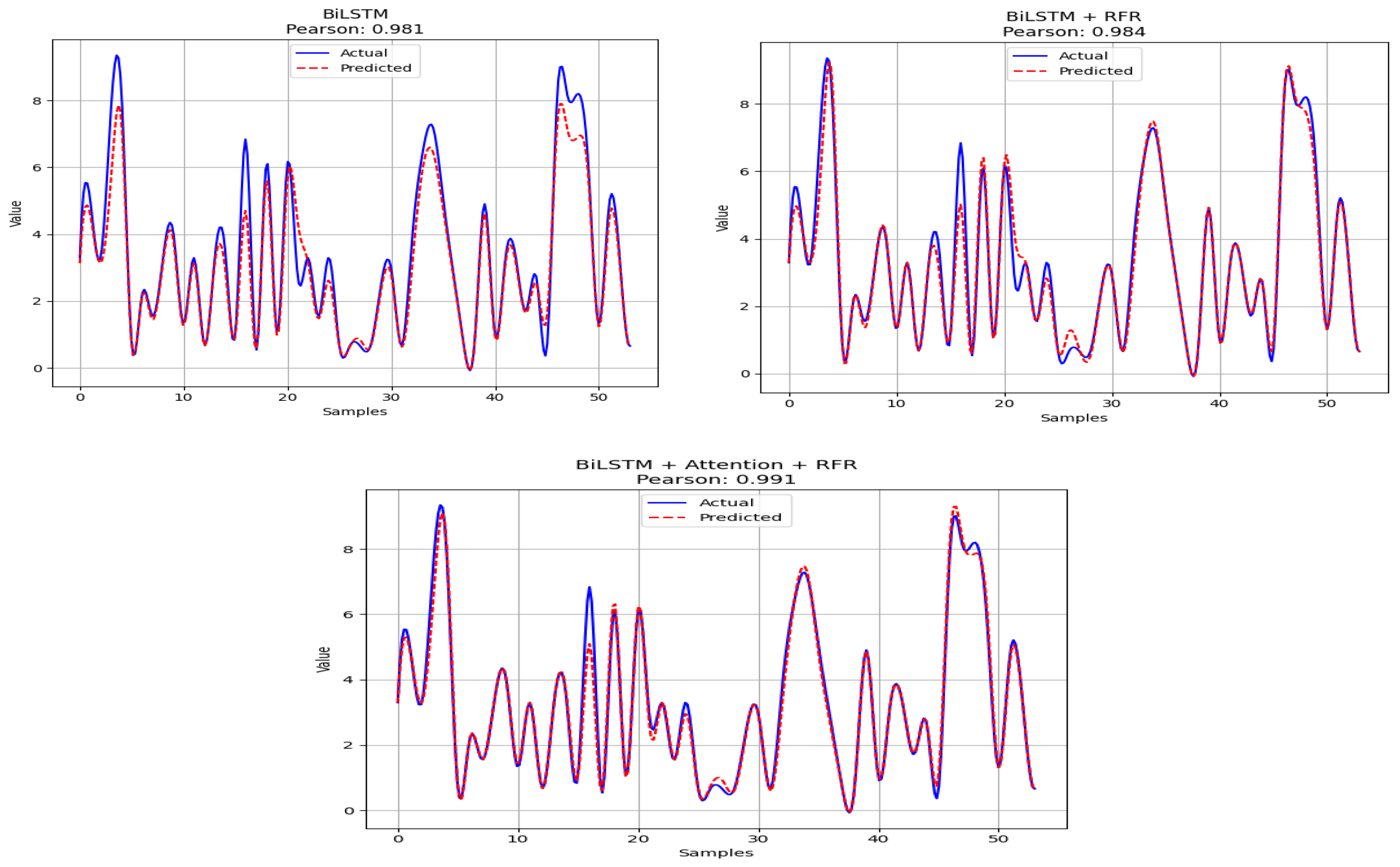

Figure 6 shows the predicted versus actual exchange rates of many countries’ currencies against the USD. The proposed Bi-LSTM with attention mechanism and random forest regression processes temporal features efficiently, and its evaluation is presented in Table 4. The R², RMSE, MAE, and loss curves are also presented for the regression evaluation task.

The experiment was repeated using a leakage-free scaling approach, confirming that the reported results are stable and not affected by potential data leakage. The experiments were conducted in the Google Colab environment using Python 3.10, on a system with 128 GB RAM and an Intel i7 processor. The performance of the various models is shown in Table 4.
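Leakage-free scaling means that the scaling parameters are estimated on the training portion only and then reused unchanged on the held-out portion. The sketch below uses min-max scaling as an assumed example, since the text does not name the scaler, and the series and split point are illustrative.

```python
def fit_min_max(train_values):
    """Estimate scaling parameters from the training data only."""
    low, high = min(train_values), max(train_values)
    span = (high - low) or 1.0  # guard against a constant series
    return low, span


def transform(values, low, span):
    """Apply previously fitted parameters; never refit on held-out data."""
    return [(v - low) / span for v in values]


series = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0]
split = 4  # chronological split: first four points train, last two test
train, test = series[:split], series[split:]

low, span = fit_min_max(train)
train_scaled = transform(train, low, span)
test_scaled = transform(test, low, span)
```

Scaled test values may fall outside [0, 1]; that is expected, because the test range was deliberately not used when fitting.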

The simulation study in Table 4 compares the performance of various regression models for exchange rate prediction. The comparison reveals significant differences in the predictive capabilities of the four model families (baseline, classical machine learning, deep learning, and hybrid). The naive benchmarks show that annual exchange rates are highly persistent: the “Last Observed Value” method achieves a high level of fit (R² = 0.887) and a high correlation (Pearson correlation coefficient = 0.931), which indicates strong temporal continuity in exchange rate dynamics. Traditional statistical machine learning methods perform significantly worse than the naive methods and leave a considerable share of the variation in exchange rates unexplained; for example, linear regression has very low explanatory power (R² = 0.206) and the largest errors (MSE = 4.134, MAE = 1.085), indicating that it cannot represent the complex nonlinear relationships found in financial systems. The tree-based and kernel-based methods do better but still fall short of the hybrid models. The random forest model does considerably better than either, representing a major improvement over the preceding models (R² = 0.978, RMSE = 0.326), which indicates that ensemble learning can be particularly effective at representing the complex nonlinear relationships in financial time series data.

Deep learning models build on this baseline by exploiting sequential dependency information. The standard LSTM architecture reaches only moderate predictive accuracy (R² = 0.632), but accuracy increases substantially when the sequence is processed in both directions with a Bidirectional LSTM (R² = 0.911), suggesting that bidirectional processing extracts more information than unidirectional processing for longer-term forecasting. Adding a random forest regressor to the Bi-LSTM architecture yields a further improvement (R² = 0.967, MAE = 0.201), which indicates that combining learned temporal representations with ensemble regression improves generalization. The proposed hybrid Bi-LSTM + Attention + RFR model performs best overall, with the highest coefficient of determination (R² = 0.984), the lowest MAE (0.108), the lowest RMSE (0.196), and the highest correlation (0.991). These results suggest that the attention mechanism allows the model to selectively weight different features at different points in time, while the random forest regressor allows it to learn the interactions between the input features. Overall, the steady improvement from single models to hybrid models indicates that combining sequential deep learning, attention mechanisms, and ensemble regression provides a robust and highly accurate framework for forecasting global exchange rates.
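The metrics used in this comparison, together with the “Last Observed Value” baseline, can be computed as in the following sketch. The short series is illustrative and is not taken from the dataset; the function name `regression_metrics` is hypothetical.

```python
import math


def regression_metrics(actual, predicted):
    """Return MSE, RMSE, MAE, R^2 and Pearson correlation for two equal-length lists."""
    n = len(actual)
    errors = [a - p for a, p in zip(actual, predicted)]
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    mean_a = sum(actual) / n
    mean_p = sum(predicted) / n
    ss_tot = sum((a - mean_a) ** 2 for a in actual)
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    cov = sum((a - mean_a) * (p - mean_p) for a, p in zip(actual, predicted))
    return {
        "mse": mse,
        "rmse": math.sqrt(mse),
        "mae": mae,
        "r2": 1.0 - (mse * n) / ss_tot,
        "pearson": cov / math.sqrt(ss_tot * var_p),
    }


series = [1.0, 1.2, 1.1, 1.4, 1.5, 1.7]  # illustrative annual rates
actual = series[1:]
persistence = series[:-1]  # naive forecast: next year's rate equals this year's
baseline = regression_metrics(actual, persistence)
perfect = regression_metrics(actual, actual)
```

R² and the Pearson correlation measure different things: a forecast can correlate strongly with the actual values and still have a low R² if it is systematically offset, which is why both are reported alongside the error metrics.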

Table 5 shows that the hybrid model’s residuals are on average 0.121 lower than those of the naive persistence baseline, with a t-statistic of 3.46 and a p value of 0.001, indicating that the hybrid model is significantly better than the naive persistence model. It should be noted that naive models perform strongly in annual exchange rate forecasting because of the high temporal autocorrelation in exchange rates. The advantage is larger against the naive average model: the hybrid model’s residuals are on average 0.390 lower, with a t-statistic of 4.12 and a p value of less than 0.001, indicating that the hybrid model is much more accurate than historical average-based forecasting. The hybrid model therefore captures important nonlinear patterns and temporal trends that simple average-based forecasting approaches cannot. The hybrid model also shows a statistically significant improvement over the random forest model, with residuals on average 0.043 lower, a t-statistic of 2.81, and a p value of 0.003, indicating that the combination of Bi-LSTM sequential learning, attention-based feature weighting, and ensemble regression provides additional predictive power beyond that of a stand-alone machine learning model.
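A comparison of this kind can be sketched as a paired t-test on per-sample absolute errors. That the reported statistics come from a paired test is an assumption, since the exact procedure is not given in the text, and the error values below are invented for illustration. Only the t-statistic is computed; obtaining a p value would additionally require the t distribution with n − 1 degrees of freedom.

```python
import math


def paired_t_statistic(errors_a, errors_b):
    """Return (mean difference, t-statistic) for paired error samples a - b."""
    diffs = [a - b for a, b in zip(errors_a, errors_b)]
    n = len(diffs)
    mean_diff = sum(diffs) / n
    variance = sum((d - mean_diff) ** 2 for d in diffs) / (n - 1)  # sample variance
    return mean_diff, mean_diff / math.sqrt(variance / n)


# Illustrative absolute errors on the same five test samples (not from the paper).
baseline_abs_err = [0.30, 0.25, 0.40, 0.35, 0.20]
hybrid_abs_err = [0.10, 0.15, 0.20, 0.05, 0.10]
mean_diff, t_stat = paired_t_statistic(baseline_abs_err, hybrid_abs_err)
```

A positive mean difference here means the baseline’s errors are larger on average, and a large t-statistic indicates that the gap is consistent across samples rather than driven by a few of them.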

The scatter plots in Figure 6 show the predicted official exchange rate (LCU per USD) for the next year versus the actual exchange rate for that year, for different models. Each point corresponds to a country’s exchange rate for year t + 1, predicted from historical data up to year t. The Bi-LSTM + Attention + RFR model performs best, with predictions closely following the ideal line and fewer outliers. The Bi-LSTM + RFR model outperforms the standard Bi-LSTM but is slightly less effective than the Bi-LSTM + Attention + RFR model, highlighting that the addition of attention and random forest techniques can enhance prediction accuracy and generalization.

Figure 7 shows that the Bi-LSTM model has a wider spread in predictions, indicating less accuracy. The Bi-LSTM + RFR model reduces this spread, reflecting better accuracy due to the random forest regressor. The Bi-LSTM + Attention + RFR model shows the tightest spread, indicating the best performance as the attention mechanism improves prediction accuracy by focusing on relevant time steps. The boxplots visualize the error distribution, showing how each model improves prediction consistency.

The Bi-LSTM model exhibits large positive and negative residuals in Figure 8, indicating imprecise predictions. The Bi-LSTM + RFR model shows fewer large residuals and a better distribution around zero, reflecting improved accuracy. The Bi-LSTM + Attention + RFR model displays the most balanced residuals, tightly clustered around zero, which shows that it captures the data patterns and generalizes effectively, thus minimizing prediction errors.

Figure 9 illustrates that the Bi-LSTM + RFR model improves predictive accuracy compared to the Bi-LSTM model, while the Bi-LSTM + Attention + RFR model achieves the best performance, with predicted values closely aligning with the actual values. The Pearson correlation measures the linear relationship between actual and predicted values, indicating that the inclusion of attention and random forest techniques can enhance model accuracy.

The Bi-LSTM model exhibits a wide distribution, which indicates inconsistent predictions. In contrast, the Bi-LSTM + RFR model, as shown in Figure 10, demonstrates more concentrated predictions that reflect improved consistency due to the random forest regressor. The Bi-LSTM + Attention + RFR model displays the most concentrated distribution, suggesting the best accuracy and stability, with the attention mechanism aiding in focusing on key data patterns. The histograms visualize the model’s prediction consistency.

The Bi-LSTM model exhibits a rapid decrease in training loss, but there is a significant gap between the training and validation losses, indicating overfitting, as shown in Figure 11a–c. The Bi-LSTM + RFR model performs better, with the validation loss closer to the training loss, suggesting enhanced generalization due to the random forest regressor. The Bi-LSTM + Attention + RFR model demonstrates the best performance, with both training and validation losses decreasing and stabilizing at similar levels. The attention mechanism enables the model to concentrate on relevant time steps, which can lead to better generalization and reduced overfitting, and therefore to better performance on unseen data.

As shown in Figure 12a–c, the Bi-LSTM model demonstrates a wide error distribution with significant errors, indicating room for improvement. The Bi-LSTM + RFR model exhibits fewer extreme errors, suggesting that random forest reduces large prediction errors. Meanwhile, the Bi-LSTM + Attention + RFR model shows the most concentrated error distribution around zero, indicating better generalization and accuracy, as the attention mechanism helps focus on key time steps and diminishes overfitting. This model displays the best performance in handling the data.

Figure 13 plots the actual and predicted values for two sample countries to aid visual understanding. The prediction curves show that each country’s predicted values track the actual data closely.

Bi-LSTM Model: It has the highest Mean Squared Error (MSE) and Mean Absolute Error (MAE) in Figure 14a,b, indicating relatively larger prediction errors than the other models. Its R-squared (R²) value is similar to those of the other models, suggesting that it explains a reasonable proportion of the variance, but its predictions are less accurate overall. Its Pearson correlation is good, though not as strong as that of the combined models, indicating a weaker linear relationship between the actual and predicted values.

Bi-LSTM + RFR Model: This improves upon the Bi-LSTM model, showing a reduction in both MSE and MAE, indicating better prediction accuracy. The R² is slightly higher, showing that the random forest regressor (RFR) helps the model generalize better and explain the variance in the data. The Pearson correlation is also higher than that of the Bi-LSTM model, reflecting a stronger linear relationship between the actual and predicted values.

Bi-LSTM + Attention + RFR Model: It achieves the lowest MSE and MAE, indicating the most accurate predictions. It also has the highest R² and Pearson correlation, showing the best overall performance in explaining the variance in the data and the strongest linear relationship between the actual and predicted values. The attention mechanism combined with Bi-LSTM and RFR provides better generalization, reduces overfitting, and ensures more consistent predictions.

In the LIME visualization shown in Figure 15, the attributes designated as “Feature k” are the historical yearly exchange rate inputs in chronological order from the oldest to the most recent (Feature 63 is 2023, Feature 62 is 2022, Feature 61 is 2021, and so on). The explanation therefore shows that the most recent historical exchange rate values have the greatest influence on the model’s prediction. This is consistent with the view in financial time series analysis that recent market states are more informative than older historical data for predictive purposes.
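The mapping from feature index to calendar year implied by this labelling (Feature 63 is 2023, so Feature 0 is 1960) can be made explicit when reading LIME output. The helper names and the weights below are hypothetical and serve only to illustrate the lookup and ranking.

```python
START_YEAR = 1960  # first year of the dataset; Feature 63 -> 2023 implies Feature 0 -> 1960


def feature_to_year(index, start_year=START_YEAR):
    """Translate a 'Feature k' index into the calendar year it represents."""
    return start_year + index


def rank_by_influence(weights):
    """Sort {feature index: weight} into (year, weight) pairs, largest |weight| first."""
    labelled = [(feature_to_year(k), w) for k, w in weights.items()]
    return sorted(labelled, key=lambda item: abs(item[1]), reverse=True)


# Illustrative LIME weights, not values from the paper.
example_weights = {61: 0.05, 63: 0.42, 62: -0.17}
ranked = rank_by_influence(example_weights)
```

Ranking by absolute weight keeps strongly negative contributions near the top, since a feature that pushes the prediction down is as influential as one that pushes it up.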

The results in Table 6 show that while recent models performed well, the R² and MAE statistics reveal differences among them. Each of the hybrid deep learning models demonstrates strong forecasting capability, but with varying accuracy and error levels. The best performing of these is the MVO-BiGRU model, which achieves R² values of 0.984 and 0.962 for the Saudi–Euro and China–Euro currency pairs, respectively, as well as notably low MAE values, indicating that it is robust across a variety of currency pairs. Although the TCN-QV model exhibits the largest R² at 0.997 for the US–China pair, it also exhibits the largest MAE at 1.556, which limits its potential for reliable use. Lastly, the SARIMA-LSTM model achieves a small MAE (0.006) when forecasting African currencies against the USD, EUR, and CNY, but no R² statistic was reported for it, which limits the ability to assess its performance relative to the other models examined. While the Bi-LSTM + Attention + RFR model reports a large R² (0.985), its MAE (0.118) is larger than that of several compared models, indicating that although the model has good explanatory power, the precision of its forecasts is likely lower than that of some of the hybrid models in the comparison. The existing models address only specific countries’ data, whereas the proposed model forecasts foreign exchange rates globally because data from all countries were used to train it. The proposed model achieves better accuracy, as highlighted in bold.

4.1. Discussion

Although the yearly exchange rate series exhibits considerable temporal dependence, the incremental accuracy gains from the proposed hybrid Bi-LSTM + Attention + RFR (random forest regressor) model still carry value for both the economy and decision-making. Even minor reductions in forecast error can have significant financial consequences in international financial systems, where exchange rates strongly influence trade flows, investment decisions, and risk management practices.

An important example where improved forecasting accuracy can provide value is currency hedging in international trade. Companies with cross-border transactions frequently use forward contracts, options, or currency swaps to hedge their foreign exchange exposure. When forecast errors are reduced, companies can anticipate exchange rate movements more accurately and choose more appropriate hedging strategies. Even relatively minor improvements in predictive accuracy may reduce hedging costs or avoid unforeseen financial losses when large transaction volumes are at stake.

Another practical application of the improved forecasting accuracy is international trade pricing and contract planning. Exporters and importers frequently enter long-term contracts denominated in foreign currencies. More accurate forecasts of exchange rate trends enable companies to estimate future revenues and costs more accurately while negotiating trade agreements, and thus to better manage their exposure to exchange rate risk and make more informed pricing decisions in global markets.

The hybrid model may also provide value for macroeconomic policy monitoring. Central banks and financial regulators frequently monitor the direction of exchange rate movements when assessing the external stability of an economy. Improved forecasts may assist policymakers in identifying potential pressures on currencies, adjusting their monetary policy strategies, or evaluating the possible effects of changes in international economic conditions.

Hybrid forecasting models may also have value during structural breaks or periods of high market volatility. Naive persistence models assume that the most recent observation is the best predictor of future observations. This assumption may perform reasonably well during stable economic times, but it may fail when exchange rate dynamics change suddenly, for example because of a currency devaluation, a financial crisis, a geopolitical event, or a change in the monetary policy regime.

The proposed hybrid architecture has been developed to capture both the temporal dependencies and the nonlinear relationships within the exchange rate data. The Bi-LSTM component captures the sequential patterns over time, the attention mechanism identifies the most informative time steps, and the random forest regression captures the nonlinear relationships among the extracted features. The hybrid model is therefore better suited to identifying changes in exchange rate dynamics than simple persistence-based models.

While the yearly data set utilized in this study smooths out many of the short-term fluctuations in the data, the results indicate that hybrid deep learning models can still utilize additional predictive information beyond simply utilizing persistence. Future studies may build upon this analysis by employing higher-frequency datasets (e.g., monthly or daily exchange rate data) to further examine model performance under extreme volatility or structural economic changes.

4.2. Limitations

The hybrid Bi-LSTM + Attention + RFR framework shows strong predictive capabilities, but it has limitations. The naive persistence baseline has a high R-squared value (R² = 0.891), indicating strong temporal autocorrelation in the annual exchange rate series. The proposed model improves that value to R² = 0.985, but the gain must be interpreted with care: exchange rates tend to change slowly from year to year, which makes the naive persistence baseline very difficult to beat. Nevertheless, the proposed model achieves much lower prediction errors (lower MSE and MAE), which has practical value in settings where a small improvement in forecast accuracy translates into financial value.

Another limitation is that the model used in the present study is based solely on historical exchange rate data. While this design deliberately excludes other macroeconomic fundamentals, it also prevents the model from incorporating factors such as interest rate differentials, inflation, GDP growth, trade balances, and monetary policy, all of which can drive exchange rate changes. Without these factors, the predictions remain less interpretable. A third limitation concerns the dataset’s annual observations, which smooth out the effects of short-term volatility and structural breaks. While this can assist long-term forecasting, it may obscure high-frequency dynamics, which are of interest to traders and policy analysts.

Future work will build on the framework proposed here by including macroeconomic and financial variables, as well as higher-frequency and cross-sectional data from multiple sources. The addition of such exogenous variables is expected to improve interpretability, responsiveness to structural breaks, and the practical usability of the forecasting system.