4.1. Implementation Details

Architecture. Our framework is built upon the official implementation of Mask2Former [9]. Unless otherwise specified, we employ a Swin-Base (Swin-B) [37] backbone initialized with weights pre-trained on the ADE20K [30] semantic segmentation dataset. The vocabulary of standard semantic categories forms the in-distribution manifold. Input images are resized so that the shorter side is 640 pixels, preserving the aspect ratio.
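The resizing rule above can be sketched as a small helper. This is a minimal illustration only: the function name and the rounding convention for the longer side are assumptions, not details taken from the Mask2Former code base.

```python
from typing import Tuple


def resize_shorter_side(width: int, height: int, target: int = 640) -> Tuple[int, int]:
    """Return (new_width, new_height) so that the shorter side equals `target`.

    The aspect ratio is preserved up to rounding of the longer side.
    The rounding convention (Python's built-in round) is an assumption.
    """
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    scale = target / min(width, height)
    if width <= height:
        # Width is the shorter side: pin it to `target`, scale the height.
        return target, max(target, round(height * scale))
    # Height is the shorter side: pin it to `target`, scale the width.
    return max(target, round(width * scale)), target


print(resize_shorter_side(1280, 960))  # landscape input
print(resize_shorter_side(500, 1000))  # portrait input
```

The shorter side is set exactly to 640 rather than being rounded, so only the longer side carries any rounding error.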

Inference. For the zero-shot experiments, we freeze the entire Mask2Former network. Artifact localization is performed purely via the proposed MSR mechanism (Section 3.2), which aggregates rejection signals from the pre-trained object queries. The anomaly maps are binarized using a fixed threshold.

Training. In the adaptation stage, we freeze the backbone and the pixel decoder to prevent catastrophic forgetting of general semantic features, and update only the parameters of the object queries and the classification head. The model is fine-tuned using the proposed Semantic Suppression Margin Loss, with the rejection margin m and the balancing weight set empirically. Training uses the AdamW optimizer with weight decay. The model is fine-tuned for 200 iterations with a batch size of 4 on a single NVIDIA A100 GPU.

4.2. Experimental Setups

Dataset. We conduct our experiments on the Perceptual Artifacts Localization (PAL) benchmark [8], utilizing the official train/validation/test split (80%/10%/10%). The dataset contains 10,168 synthesized images fully annotated with pixel-level artifact masks. To ensure a comprehensive evaluation, these images cover ten diverse synthesis tasks spanning three major generative paradigms: unconditional generation (e.g., StyleGAN2 [38]), text-to-image synthesis, and image restoration (e.g., inpainting and super-resolution).

Evaluation Metrics. Following the standard protocol [8], we adopt mean Intersection over Union (mIoU) as the primary evaluation metric to quantify the pixel-level localization accuracy of perceptual artifacts.
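For concreteness, the metric can be sketched as below. This is a minimal illustration assuming that IoU is computed per image on binary artifact masks and then averaged, and that two empty masks count as a perfect match; the exact averaging convention of the benchmark protocol [8] is not restated in this section, and the function names are illustrative.

```python
from typing import Sequence

Mask = Sequence[Sequence[int]]  # rows of 0/1 values


def binary_iou(pred: Mask, target: Mask) -> float:
    """Intersection over Union between two binary masks of equal shape."""
    inter = 0
    union = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            inter += 1 if (p and t) else 0
            union += 1 if (p or t) else 0
    # Assumed convention: two empty masks agree perfectly.
    return 1.0 if union == 0 else inter / union


def mean_iou(preds: Sequence[Mask], targets: Sequence[Mask]) -> float:
    """Average the per-image IoU over a set of images (assumed protocol)."""
    if not preds or len(preds) != len(targets):
        raise ValueError("need equally sized, non-empty lists of masks")
    return sum(binary_iou(p, t) for p, t in zip(preds, targets)) / len(preds)


preds = [[[1, 1], [0, 0]], [[0, 1], [1, 1]]]
targets = [[[1, 0], [0, 0]], [[0, 1], [1, 1]]]
print(mean_iou(preds, targets))  # per-image IoUs are 0.5 and 1.0
```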

Comparison Methods. To demonstrate the effectiveness of our framework, we compare against the following baseline methods, covering both generic forgery detection and task-specific localization:

CNNgenerates [14] + Grad-CAM [39]: This method uses a classifier specifically trained to distinguish between generated and real images. Following [8], we employ Grad-CAM [39] to visualize the gradient activations of the specific “fake” class, serving as a proxy for artifact localization maps.

Patch Forensics [20]: This is a patch-based classifier designed to detect local anomalies in generated images. We utilize its truncation-based variant to predict “fake” regions based on local patch scores.

PAL4Inpaint [7]: This is a segmentation model designed specifically for localizing perceptual artifacts in inpainting tasks. It serves as a strong baseline for task-specific performance comparison.

PAL [8]: The current state-of-the-art unified segmentation model trained on the full PAL dataset, serving as the direct competitor to our approach.

4.3. Results on Image Synthesis Tasks

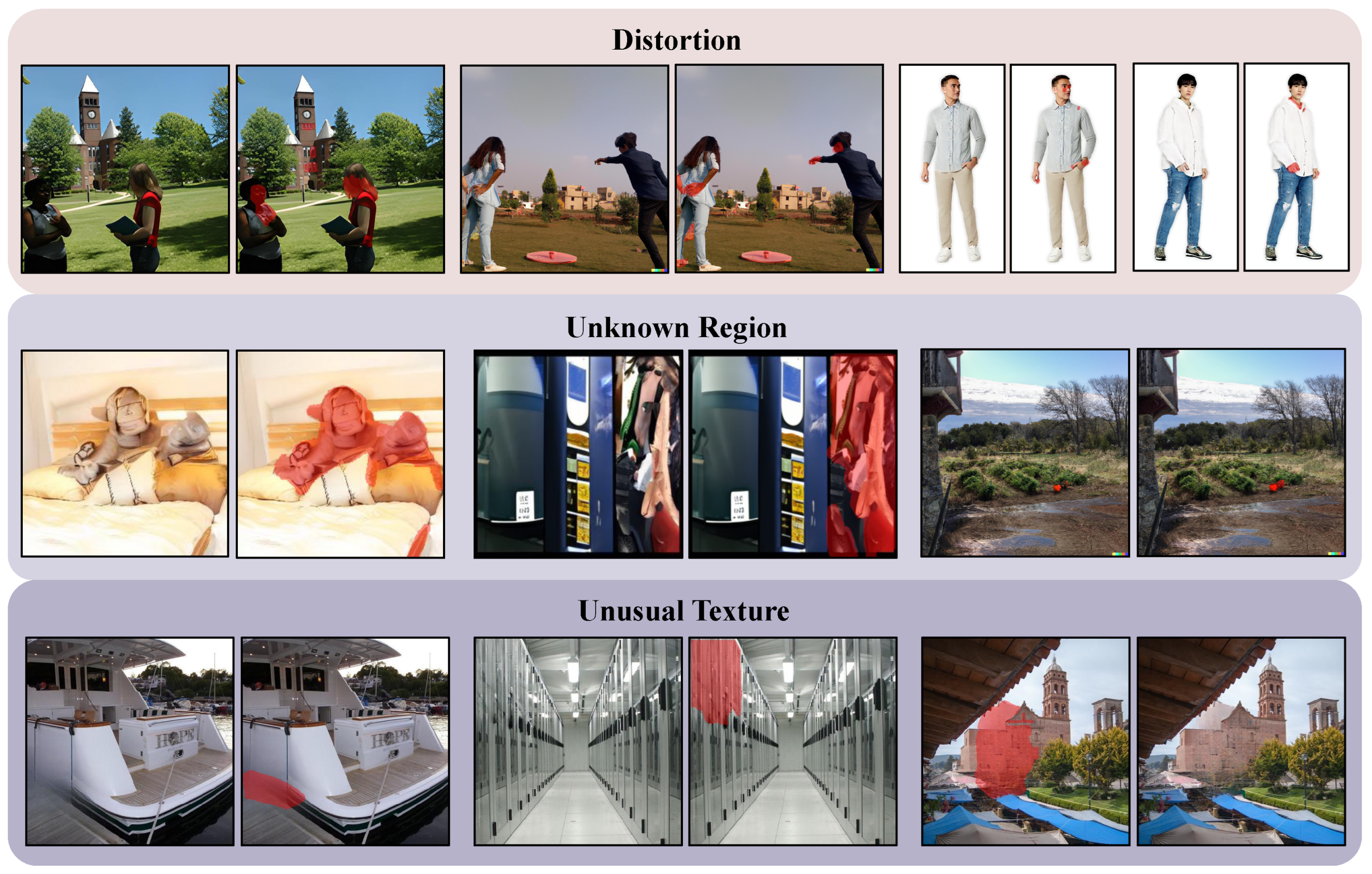

Table 1 and Figure 3 present the quantitative comparison of artifact localization performance across ten synthesis tasks. Existing forensic baselines, including CNNgenerates [14] and Patch Forensics [20], localize artifacts poorly, with mIoU scores generally remaining below 10%. This deficit suggests that methods relying on low-level frequency anomalies or local texture statistics are insufficient for capturing high-level semantic inconsistencies. In comparison, the proposed MSR framework achieves state-of-the-art performance under both the Unified and Specialist settings. Specifically, the Unified MSR model generalizes well, surpassing the previous best method, PAL (Unified) [8], particularly on semantically complex tasks. For instance, on the text-to-image (T2I) and mask-to-image (M2I) datasets, MSR improves mIoU by 3.85 percentage points (from 19.65% to 23.50%) and 1.73 percentage points (from 39.37% to 41.10%), respectively. By operating on object masks instead of individual pixels, our method filters out background noise in complex scenes and identifies artifacts as unrecognized instances. Regarding the training settings, the Specialist models generally function as a performance upper bound; for the inpainting task, the Specialist MSR achieves 44.20% mIoU, outperforming the task-specific baseline PAL4Inpaint [7] by over 2 percentage points. A notable exception is observed in the AnyRes task, where the Unified model slightly outperforms its Specialist counterpart (36.80% vs. 36.00%), suggesting that the diversity of the unified training data may offer robustness benefits for tasks with high structural variance.

4.4. Results on Unseen Methods

Rapid advancements in generative models necessitate artifact detectors that can generalize to novel, unseen architectures. To evaluate this capability, we follow the protocol in [8] and test on five held-out models: StyleGAN3 [45], BlobGAN [46], Stable Diffusion v2.0 (SD2) [5], Versatile Diffusion (VD) [47], and Diffusion Transformer (DiT) [48].

As shown in Table 2, conventional forensic methods (CNNgenerates and Patch Forensics) fail to transfer to these new domains, yielding near-random performance. While the previous state-of-the-art, PAL, shows reasonable zero-shot performance on GAN-based models (e.g., StyleGAN3), it struggles significantly with modern diffusion models (e.g., 6.75% on SD2). In contrast, our MSR method demonstrates superior cross-model generalization. Even in the zero-shot setting, MSR outperforms PAL on DiT by nearly 5 percentage points (21.30% vs. 16.46%), suggesting that the proposed semantic rejection mechanism is less dependent on domain-specific texture artifacts. The advantage is most pronounced in the fine-tuning setting (ten-shot adaptation), where the geometric constraints of the proposed margin loss allow MSR to adapt rapidly to the target distribution. Notably, on the challenging Stable Diffusion v2.0, MSR achieves an improvement of 13.46 percentage points over PAL (24.50% vs. 11.04%), narrowing the domain gap with minimal supervision.

4.5. Results on Real Images

Since our MSR framework is trained exclusively on synthetic data to reject semantic inconsistencies, a natural question arises: how does the model behave when presented with real, natural images? To investigate this, we conduct inference on real images sampled from the COCO-Stuff-164k [29] dataset.

As illustrated in the bottom row of Figure 4, for the vast majority of natural scenes (ranging from food and aircraft to animals), the MSR detector outputs empty masks. This indicates that the model maintains a low false positive rate and does not blindly overfit to low-level texture statistics common in natural images. Intriguingly, in the rare cases where the model does flag regions in real images (top row, Figure 4), the detections are not random noise. Instead, the model tends to localize semantically dense, high-frequency details, specifically small text (e.g., bus schedules), complex signage (e.g., neon street signs), and fine-grained interface elements (e.g., microwave buttons).

We attribute this phenomenon to the nature of the Mask-based Semantic Rejection mechanism. These regions—characterized by sharp edges and complex geometries—are successfully isolated as candidate objects by the mask proposal network. However, because they often lack a canonical semantic category in the pre-trained vocabulary or resemble the high-frequency structural distortions often found in generated artifacts (e.g., garbled text in T2I models), the semantic scoring function rejects them, effectively classifying them as perceptual anomalies.

We also conduct a quantitative analysis using two metrics: (1) the false positive rate (FPR), defined as the percentage of real images for which the model predicts a non-empty artifact mask, and (2) the average predicted artifact area (APAA), defined as the ratio of the predicted artifact area to the total image area, averaged across all images. As reported in Table 3, the MSR framework is selective: the overall FPR is 3.82%, with an APAA of only 0.14%. When broken down by scene category, the model is most robust in “Nature” and “Animal” scenes (FPR < 2%), where semantic structures are well-defined in the pre-trained vocabulary. Even in complex “Indoor” and “Urban” scenes, the FPR remains well-controlled. These quantitative findings complement our qualitative observations and indicate that the MSR mechanism does not suffer from high false positive rates when encountering diverse natural distributions.
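The two metrics defined above can be computed directly from the predicted binary masks on real images. The sketch below follows those definitions; the function name and the list-of-lists mask representation are illustrative assumptions.

```python
from typing import Sequence, Tuple

Mask = Sequence[Sequence[int]]  # rows of 0/1 values


def false_positive_stats(pred_masks: Sequence[Mask]) -> Tuple[float, float]:
    """Return (FPR, APAA), both in percent, for masks predicted on real images.

    FPR:  share of images whose predicted artifact mask is non-empty.
    APAA: predicted artifact area over image area, averaged across images.
    """
    if not pred_masks:
        raise ValueError("need at least one predicted mask")
    flagged = 0
    area_ratio_sum = 0.0
    for mask in pred_masks:
        total = sum(len(row) for row in mask)
        positive = sum(1 for row in mask for value in row if value)
        if positive > 0:
            flagged += 1
        area_ratio_sum += positive / total
    count = len(pred_masks)
    return 100.0 * flagged / count, 100.0 * area_ratio_sum / count


# Four toy 2x2 "real" images; only the last one has a flagged pixel.
masks = [[[0, 0], [0, 0]]] * 3 + [[[0, 1], [0, 0]]]
fpr, apaa = false_positive_stats(masks)
print(fpr, apaa)
```

Because every real image has an empty ground-truth mask, any predicted pixel counts as a false positive, which is why no target masks are needed here.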

4.6. Ablation Study

Mask-level vs. Pixel-level Rejection. To validate the proposed mask-based formulation, we compare MSR against standard pixel-wise segmentation baselines. As reported in Table 4, pixel-level approaches, including UPerNet and the standard Mask2Former pixel decoder head, achieve suboptimal performance (38.42% and 40.25% mIoU, respectively). We attribute this gap to the inherent noise in generated images: pixel-wise classifiers often overfit to high-frequency local artifacts, resulting in fragmented predictions. In contrast, our mask-level rejection leverages object-level priors to propose coherent regions, suppressing background noise and improving mIoU by approximately 8 percentage points over the strongest pixel-wise baseline.

Anomaly Scoring Functions. We further investigate the design of the anomaly scoring function defined in Equation (5). Table 4 presents the comparison between our proposed metric and conventional uncertainty measures. Standard entropy and max probability inversion yield mIoU scores of 42.50% and 44.12%, respectively. While effective for out-of-distribution detection, these metrics do not explicitly account for the geometric quality of the predicted regions. By incorporating the binary mask prediction M as a weighting factor (“Ours w/o Mask Weighting” vs. “Full Equation (5)”), we observe a performance gain of 2.4 percentage points (45.80% → 48.20%). This confirms that modulating the semantic rejection score with the mask confidence filters out low-quality proposals where the model is uncertain about the object boundaries.
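To make the comparison above concrete, the sketch below computes the two conventional uncertainty measures for one query's class distribution, together with one possible way of weighting per-query scores by their masks. The function names and the max-over-queries aggregation are assumptions made for illustration; Equation (5) is not reproduced in this section, so this should not be read as its exact form.

```python
import math
from typing import List, Sequence


def entropy_score(probs: Sequence[float]) -> float:
    """Shannon entropy of a class distribution (natural log)."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def max_prob_inversion(probs: Sequence[float]) -> float:
    """One minus the largest class probability."""
    return 1.0 - max(probs)


def mask_weighted_map(
    query_scores: Sequence[float],
    query_masks: Sequence[Sequence[Sequence[float]]],
) -> List[List[float]]:
    """Hypothetical aggregation: per pixel, take the max over queries of
    (rejection score of the query) * (mask value of the query at that pixel)."""
    height = len(query_masks[0])
    width = len(query_masks[0][0])
    out = [[0.0] * width for _ in range(height)]
    for score, mask in zip(query_scores, query_masks):
        for y in range(height):
            for x in range(width):
                out[y][x] = max(out[y][x], score * mask[y][x])
    return out


# Two queries over a 1x2 image: the first covers the left pixel only.
anomaly = mask_weighted_map([0.9, 0.2], [[[1, 0]], [[0, 1]]])
print(anomaly)
```

In this toy case the strongly rejected query contributes only where its mask is active, which is the intuition behind using the mask as a weighting factor.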

Margin m in Semantic Suppression Margin Loss. We analyze the sensitivity of the proposed framework to the margin hyperparameter m within the Semantic Suppression Margin Loss. This parameter controls the minimum distance enforced between the artifact embeddings and the semantic queries. As shown in Table 5, the model performance exhibits an inverted-U trend with respect to m. When the margin is set too low, the regularization effect is insufficient, resulting in a suboptimal mIoU of 45.54% due to the limited discriminability between artifacts and background content. Conversely, an excessively large margin imposes overly stringent constraints on the latent space, making the optimization objective difficult to satisfy and degrading the performance to 44.80%. The model achieves optimal segmentation accuracy (48.20% mIoU) at an intermediate margin, indicating a balanced trade-off between feature compactness and inter-class separability.
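The role of the margin can be illustrated with a generic hinge-style penalty. This is only a sketch of the general idea of enforcing a minimum distance: the cosine distance, the averaging over queries, and the function names are assumptions, and the code is not the Semantic Suppression Margin Loss as defined in the method section.

```python
import math
from typing import Sequence


def cosine_distance(u: Sequence[float], v: Sequence[float]) -> float:
    """One minus cosine similarity (assumed distance for this sketch)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def margin_hinge(
    artifact_embedding: Sequence[float],
    semantic_queries: Sequence[Sequence[float]],
    margin: float,
) -> float:
    """Penalize every semantic query that lies closer than `margin`."""
    penalties = [
        max(0.0, margin - cosine_distance(artifact_embedding, query))
        for query in semantic_queries
    ]
    return sum(penalties) / len(penalties)


embedding = [1.0, 0.0]
queries = [[1.0, 0.0], [0.0, 1.0]]  # one identical, one orthogonal query
print(margin_hinge(embedding, queries, margin=0.5))
print(margin_hinge(embedding, queries, margin=1.5))
```

With the larger margin, even the orthogonal query is still penalized, which gives some intuition for why an excessively large margin makes the objective hard to satisfy.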

Anomaly map binarization threshold. We investigate the sensitivity of the final localization performance to the binarization threshold, which is applied to the continuous anomaly map to generate binary artifact masks. As illustrated in Table 6, the mIoU follows an inverted-U trajectory relative to the threshold value. When a low threshold is employed, the MSR framework adopts a highly sensitive detection strategy. While this ensures the inclusion of most potential artifact regions, it also admits a broader range of weak semantic query responses, lowering the mIoU to 22.16%. Conversely, at the high end of the spectrum, the model retains only the most salient structural distortions with the highest rejection confidence and misses subtler artifacts, achieving an mIoU of 28.51%. The optimal localization accuracy of 48.20% is reached at the default threshold. This result demonstrates that the default threshold provides an effective balance between capturing diverse, subtle artifact patterns and maintaining precise localization boundaries. The stable performance observed within the intermediate range (0.3 to 0.7) further supports the reliability of our semantic rejection signals.
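A threshold sweep of the kind described above can be sketched as follows. The toy anomaly map, the threshold values, and the use of `>=` for binarization are illustrative assumptions and do not correspond to the settings in Table 6.

```python
from typing import Dict, List, Sequence


def binarize(anomaly_map: Sequence[Sequence[float]], threshold: float) -> List[List[int]]:
    """Mark a pixel as artifact when its anomaly score reaches the threshold."""
    return [[1 if value >= threshold else 0 for value in row] for row in anomaly_map]


def iou(pred: Sequence[Sequence[int]], target: Sequence[Sequence[int]]) -> float:
    """IoU on flattened binary masks; two empty masks count as 1.0 (assumed)."""
    flat_pred = [p for row in pred for p in row]
    flat_target = [t for row in target for t in row]
    inter = sum(1 for p, t in zip(flat_pred, flat_target) if p and t)
    union = sum(1 for p, t in zip(flat_pred, flat_target) if p or t)
    return 1.0 if union == 0 else inter / union


def sweep_thresholds(
    anomaly_map: Sequence[Sequence[float]],
    target: Sequence[Sequence[int]],
    thresholds: Sequence[float],
) -> Dict[float, float]:
    """Map each candidate threshold to the IoU it yields on one image."""
    return {t: iou(binarize(anomaly_map, t), target) for t in thresholds}


anomaly_map = [[0.9, 0.6], [0.4, 0.1]]
target = [[1, 1], [0, 0]]
results = sweep_thresholds(anomaly_map, target, [0.3, 0.5, 0.8])
print(results)
```

On this toy input the lowest threshold adds a false-positive pixel and the highest one drops a true artifact pixel, so the middle value scores best, mirroring the inverted-U pattern.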

Training data size. Figure 5 illustrates the relationship between segmentation performance and the volume of training data. We observe a consistent improvement across all tasks as the data ratio increases from 0% (zero-shot) to 100% (full data fine-tuning). The gain is particularly pronounced for semantically complex synthesis tasks, which rely heavily on supervision to resolve ambiguities. For instance, the text-to-image (T2I) task rises from 10.00% to 26.80%, while the mask-to-image (M2I) task more than doubles its accuracy, climbing from 20.05% to 43.50% and nearly matching the performance of easier tasks. Conversely, tasks with more structural constraints, such as inpainting and StyleGAN2, show steadier, roughly logarithmic growth from higher zero-shot starting points of 32.51% and 28.12%, respectively. Crucially, despite the significant improvements yielded by fine-tuning, the model already achieves non-trivial zero-shot performance.

Efficiency analysis. To assess the scalability and potential for real-time deployment, we evaluate our MSR framework using backbones with varying computational costs. As shown in Table 7, we compare Swin-B (Transformer) against the lighter ResNet-101 (CNN). Replacing Swin-B with ResNet-101 significantly reduces the computational burden, decreasing the GFLOPs from 268 to 160 (approximately a 40% reduction) and the model parameters from 107 M to 63 M. Despite this substantial reduction in model capacity, the MSR framework equipped with ResNet-101 still achieves an mIoU of 44.57%. This result supports two findings: first, our Semantic Rejection mechanism is a generic paradigm that functions across both CNN and Transformer architectures; second, the framework offers a flexible trade-off between maximum precision and inference efficiency, making it suitable for diverse resource constraints.
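The trade-off quoted above can be checked with simple arithmetic. The sketch below uses only the figures stated in the text and assumes that the Swin-B accuracy in this comparison is the 48.20% mIoU reported for the full model in the ablations; the helper name is illustrative.

```python
def relative_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before


gflops_cut = relative_reduction(268, 160)  # compute cost, Swin-B -> ResNet-101
params_cut = relative_reduction(107, 63)   # parameters in millions
miou_drop = 48.20 - 44.57                  # assumed Swin-B mIoU minus ResNet-101 mIoU

print(f"GFLOPs reduction: {gflops_cut:.1f}%")      # 40.3%
print(f"Parameter reduction: {params_cut:.1f}%")   # 41.1%
print(f"mIoU drop: {miou_drop:.2f} points")        # 3.63 points
```

Under that assumption, roughly 40% less compute and about 41% fewer parameters cost under four mIoU points.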