1. Introduction

6D pose estimation refers to determining the pose of an object in three-dimensional space, including its location (3D translation) and orientation (3D rotation), and is one of the core research directions in computer vision. This technology plays a critical role in applications such as robotic grasping in industrial settings [1,2,3], autonomous driving [4,5], and augmented reality [6,7]. Many accurate pose estimation methods rely on depth data; however, depth data is susceptible to environmental noise, occlusion, and hardware cost constraints, and it is also unreliable for reflective or transparent objects. These challenges motivate the exploration of 6D object pose estimation solutions based solely on monocular RGB data.

With the advances in deep learning, a growing number of novel 6D pose estimation methods based on monocular RGB data have emerged, gradually narrowing the gap with depth-based approaches and, in some cases, even surpassing methods that rely on depth data [8,9]. Current mainstream approaches can be categorized into three paradigms: (1) keypoint-based methods that estimate the 6D pose from 2D–3D correspondences established through precise 2D keypoint extraction [10,11,12]; (2) template-based methods that compare input images against multi-view object templates [13,14]; and (3) end-to-end frameworks that directly regress 6D pose parameters [15,16]. Although these monocular RGB-based methods have achieved notable progress, their practical deployment remains constrained by the heavy reliance of deep learning models on large-scale annotated datasets.

To address this challenge, we propose a decoupled pose estimation network tailored for few-shot scenarios, which estimates the rotation and translation components of the 6D pose independently. Here, “few-shot” is defined relative to standard 6D pose estimation benchmarks: while fully supervised methods typically require thousands or even tens of thousands of real annotated images, our method achieves competitive performance using only a small number of real images (e.g., approximately 600 images per object, corresponding to only about 5–20% or less of the full training sets of benchmarks such as YCB-Video). We acknowledge that this usage of “few-shot” differs from its conventional meaning in classification tasks; here we adopt it in the relative sense commonly used in the 6D pose estimation literature. Specifically, for rotation estimation, we introduce an approach based on object viewpoint encoding that is trained solely on synthetic data, thereby eliminating the need for large-scale real-world annotations. For translation estimation, our method builds upon GDR-Net [17], employing intermediate geometric features based on dense correspondences for direct regression. This decoupled design allows the translation component to be trained on real data alone without having to learn rotation, thereby significantly reducing the overall requirement for real-world samples. Experimental results demonstrate that, under few-shot conditions, our method outperforms current state-of-the-art approaches on the LINEMOD [18], LM-O [19], and YCB-V [20] datasets. The implementation is publicly available at https://github.com/cp-0510/fs6d.git (accessed on 26 January 2026).

3. Related Works

Existing monocular RGB-based 6D pose estimation methods fall into three categories: keypoint-based, template-based, and regression-based approaches.

Keypoint-based methods typically begin by employing convolutional neural networks to detect 2D keypoints of target objects in images and establish 2D–3D correspondences, followed by 6D pose estimation using the PnP algorithm. Early work by Pavlakos et al. [21] introduced semantic keypoint detection to avoid the computational overhead of point-wise matching; however, its performance degrades when dealing with small objects or severe occlusions. To improve robustness under such conditions, PVNet [22] proposes a vector voting mechanism that enables the inference of occluded keypoints, leading to more reliable pose estimation in cluttered scenes. Instead of directly detecting semantic keypoints, BB8 [23] regresses the 2D projections of the eight corners of a 3D bounding box, allowing for efficient pose estimation and improved generalization to unseen object categories. More recently, OK-POSE [24] learns cross-view consistent 3D keypoints from image pairs with relative pose supervision, thereby reducing the dependence on explicit 3D annotations or CAD models while maintaining competitive pose accuracy. Beyond pose accuracy, recent studies have also started to examine the robustness of keypoint-based pose estimation pipelines. In particular, Luo et al. [25] propose a system-level robustness certification framework for two-stage keypoint–PnP methods, which bounds pose estimation errors by certifying keypoint perturbations under semantic input disturbances.

Template-based methods estimate object poses by pre-constructing 3D models or feature templates and matching observed image features to these templates. For example, LatentFusion [26] significantly improves generalization to unseen objects by incorporating 3D shape learning and multi-view modeling. DPOD [27] combines object detection with dense correspondence matching, enabling pose estimation without requiring precise instance segmentation and demonstrating strong robustness under occlusion and illumination variations, although its performance degrades on textureless objects. PoseRBPF [28] performs pose tracking within a particle filtering framework and maintains stable and continuous pose estimates even in the presence of motion blur and occlusion. RNNPose [29] estimates correspondences between rendered and observed images and integrates a differentiable pose optimization process to iteratively refine object poses, achieving robust and accurate refinement even under large initialization errors or severe occlusions. Overall, while template-based methods exhibit advantages in texture-sparse or occluded scenarios, they often incur high computational cost and rely on high-quality template libraries or strong model priors, which limits their practicality in highly cluttered real-world scenes. Beyond pose estimation, recent work on causal modeling for image restoration, such as CausalSR [30], demonstrates that incorporating structural causal models and counterfactual reasoning can lead to more robust and interpretable representations, suggesting promising research directions for 6D pose estimation under complex and data-scarce conditions.

Regression-based methods formulate 6D object pose estimation as a direct regression problem, predicting object poses from RGB images without relying on intermediate keypoint representations. Early approaches such as PoseNet [31] adopt end-to-end convolutional neural networks for pose regression but suffer from limited generalization. To improve estimation accuracy, PoseCNN [20] incorporates 2D centroid prediction with a symmetry-aware loss, while still relying on ICP-based refinement, which introduces considerable computational overhead. To improve inference efficiency, Deep-6DPose [32] leverages Mask R-CNN to perform end-to-end pose regression, achieving improved inference speed. Recent research has increasingly shifted toward geometry-guided direct regression to alleviate the implicit and highly non-linear mapping between image features and pose parameters. Geo6D [33] explicitly embeds geometric constraints into the regression process through a residual pose formulation, improving training stability and reducing dependence on large-scale training data. Building upon this idea, GDRNPP [34] proposes a fully learning-based, geometry-guided pose estimation framework with optional differentiable pose refinement, achieving state-of-the-art accuracy and efficiency on the BOP benchmark without relying on traditional optimization pipelines. Despite their efficiency and end-to-end nature, direct regression methods remain sensitive to rotation representation ambiguities and unseen pose distributions.

In recent years, research on 6D object pose estimation has increasingly focused on improving model generalization to unseen objects. These approaches typically target zero-shot pose estimation, aiming to infer object poses without relying on object-specific training, using only image observations combined with external priors. Representative methods include ZeroPose [35], which leverages CAD models as priors during inference for rapid deployment on novel objects; CLIP-6D [36], which exploits vision–language foundation models to learn generalizable object representations and enhance geometric reasoning; and PoseDiffusion [37], which introduces diffusion models within a coarse-to-fine generative framework to progressively estimate and refine poses of unseen objects. Collectively, these works provide effective solutions for 6D pose estimation in scenarios involving previously unseen objects. Although the above-mentioned methods continue to be optimized and improved at the algorithmic level, their performance gains are severely constrained by the scarcity of real-world annotated data, which serves as the starting point for our research.

4. Methodology

4.1. Overview

In the field of 6D pose estimation, existing methods typically adopt a unified framework to jointly optimize rotation and translation. With sufficient training data, such joint modeling can effectively share feature representations and leverage multi-task information to achieve high performance. However, in few-shot scenarios, the differences between rotation and translation in terms of information sources, learning difficulty, and supervision requirements become pronounced, making joint optimization more sample-intensive and prone to inter-task interference. Specifically, rotation estimation primarily relies on discriminative visual cues provided by object appearance and viewpoint variations, whereas translation estimation heavily depends on real-world geometric structure, object scale, and camera intrinsics, making it more sensitive to high-quality annotated data.

Based on this observation, we propose a decoupled network architecture for few-shot 6D pose estimation, as illustrated in Figure 1. The pipeline begins with an object detector extracting a 128 × 128 ROI from the RGB image, followed by independent estimation of rotation and translation. Rotation is estimated via a viewpoint encoder trained on synthetic data, while translation leverages geometry-aware features for direct regression, minimizing real data dependency.

In the rotation estimation network (Section 4.2), the viewpoint encoder is first employed to construct an object-specific viewpoint codebook, from which multiple candidate viewpoints are retrieved. For each retrieved candidate, an in-plane 2D rotation regression is performed to obtain a complete 3D rotation hypothesis. Finally, a consistency score is computed for each rotation hypothesis, and the one with the highest score is selected as the final 3D rotation estimate (see Figure 1B).

In the translation estimation network (Section 4.3), several intermediate geometric features based on dense correspondences are predicted by the network and used to directly regress the full 3D translation (see Figure 1C).

4.2. Viewpoint-Encoded Rotation Matrix Estimation

The rotation estimation network employs a viewpoint encoder to construct an object-specific codebook from synthetic data.

The core of the network is an object viewpoint encoder based on RGB images, which robustly encodes the object viewpoint into a feature vector. In this paper, the object viewpoint is defined as the out-of-plane rotation $R_{\mathrm{op}}$, which determines the viewing direction of the object in 3D space. The encoded representation is sensitive to changes in viewpoint while remaining invariant to in-plane rotations around the camera optical axis. Specifically, we adopt a rotation decomposition strategy that factorizes the complete 3D rotation $R$ into the out-of-plane rotation $R_{\mathrm{op}}$ and the in-plane rotation $R_{\mathrm{ip}}$, where $R_{\mathrm{ip}}$ represents the residual axial rotation about the camera optical axis:

$$R = R_{\mathrm{ip}}\,R_{\mathrm{op}}.$$

As shown in Figure 2, this decomposition allows the network to first extract stable viewpoint information using the object viewpoint encoder and then compensate for the axial rotation through an in-plane rotation regression module, thereby achieving complete 3D rotation estimation. The decomposition thus ensures robustness to in-plane rotations while accurately capturing the full 3D orientation.
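The decomposition can be illustrated with a short NumPy sketch, assuming the camera optical axis is +z and the rotation maps object coordinates to camera coordinates. The canonical "look-at" construction of the out-of-plane part and its fixed up-vector are our own illustrative conventions, and the helper names are hypothetical; the paper does not specify this parameterization. Any fixed convention yields a valid split in which the remaining factor is a pure rotation about the optical axis.

```python
import numpy as np


def rot_z(gamma):
    """In-plane rotation by gamma (radians) about the camera optical axis (+z)."""
    c, s = np.cos(gamma), np.sin(gamma)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def out_of_plane_from_direction(d, up=(0.0, 1.0, 0.0)):
    """Canonical out-of-plane rotation R_op satisfying R_op^T e_z = d.

    d is the camera viewing direction expressed in the object frame. The
    up-vector convention is an assumption made for illustration.
    """
    z = np.asarray(d, dtype=float)
    z = z / np.linalg.norm(z)
    u = np.asarray(up, dtype=float)
    if abs(np.dot(u, z)) > 0.99:  # up vector nearly parallel to d: use another axis
        u = np.array([1.0, 0.0, 0.0])
    x = np.cross(u, z)
    x /= np.linalg.norm(x)
    y = np.cross(z, x)
    return np.stack([x, y, z])  # rows form a right-handed orthonormal basis


def decompose(R):
    """Split R into (gamma, R_op) such that R = rot_z(gamma) @ R_op."""
    d = R.T @ np.array([0.0, 0.0, 1.0])  # optical axis seen from the object frame
    R_op = out_of_plane_from_direction(d)
    R_ip = R @ R_op.T  # maps e_z to e_z, hence a pure rotation about z
    gamma = np.arctan2(R_ip[1, 0], R_ip[0, 0])
    return gamma, R_op
```

Pre-multiplying a rotation by any in-plane rotation changes only the returned angle and leaves the out-of-plane factor untouched, which is exactly the invariance the viewpoint encoder is trained to exhibit.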

The entire network consists of three core functional modules: the object viewpoint encoder, in-plane rotation regression, and 3D orientation verification. The following sections describe the design and implementation of each module in detail.

4.2.1. Object Viewpoint Encoder

The viewpoint encoder comprises a backbone network and an encoding head. The backbone consists of eight convolutional layers (Conv2D) with batch normalization (BN), while the encoding head is composed of a single convolutional layer (Conv2D), a pooling layer, and a fully connected layer (FC). Taking a detected ROI image as input, the encoder outputs a 64-dimensional feature vector that encodes the camera viewpoint.

To achieve invariance to in-plane rotations around the camera’s optical axis while maintaining sensitivity to changes in camera viewpoint, we employ a similarity ranking-based loss function. During training, a triplet training sample $(V, V^{+}, V^{-})$ is constructed using a synthetic RGB dataset rendered from ShapeNet [38], where $V$ is the original RGB image from a canonical viewpoint, $V$ and $V^{+}$ differ only in in-plane rotation, and $V^{-}$ is obtained from a different camera viewpoint. The corresponding viewpoint feature representations $(v, v^{+}, v^{-})$ are then extracted using the viewpoint encoder, as shown in Figure 3A. The viewpoint encoding loss $\mathcal{L}_{vp}$ ranks the two pairs by cosine similarity, requiring $v$ to be more similar to $v^{+}$ than to $v^{-}$. This encourages the encoder to learn more robust viewpoint representations and ensures good generalization across different objects.
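A minimal NumPy sketch of such a similarity ranking loss follows. Because the exact formula is not reproduced in this text, the hinge form and the margin value are assumptions made for illustration, and the function names are hypothetical. The loss is zero once the anchor feature is more similar, in cosine terms, to the positive (same viewpoint, different in-plane rotation) than to the negative (different viewpoint) by at least the margin.

```python
import numpy as np


def cosine(a, b, eps=1e-8):
    """Row-wise cosine similarity between two batches of feature vectors."""
    a = np.atleast_2d(a)
    b = np.atleast_2d(b)
    num = np.sum(a * b, axis=1)
    den = np.linalg.norm(a, axis=1) * np.linalg.norm(b, axis=1) + eps
    return num / den


def viewpoint_ranking_loss(v, v_pos, v_neg, margin=0.5):
    """Hinge ranking loss averaged over the batch (margin is a hypothetical value).

    v:     anchor features, shape (B, D) or (D,)
    v_pos: same viewpoint, different in-plane rotation
    v_neg: different camera viewpoint
    """
    s_pos = cosine(v, v_pos)
    s_neg = cosine(v, v_neg)
    return float(np.mean(np.maximum(0.0, margin + s_neg - s_pos)))
```

With 64-dimensional features, an anchor identical to its positive and orthogonal to its negative gives zero loss, while swapping the roles of positive and negative gives a loss of about 1.5 for this margin.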

During inference, the trained viewpoint encoder is first used to construct a viewpoint codebook for the target object, which is then used for subsequent viewpoint retrieval and in-plane rotation estimation. The procedure is as follows. A 3D bounding sphere is constructed centered on the target object, with its radius defined in terms of diameter, the geometric diameter of the object’s 3D mesh model; the setting used in this work is justified and analyzed experimentally in Section 5.4. A total of N viewpoints are uniformly sampled on the surface of the sphere. Each sampled viewpoint is then combined with the 3D mesh model of the target object to render a set of synthetic RGB images. Finally, the trained viewpoint encoder extracts the corresponding viewpoint feature representations from these images (the backbone network first extracts feature maps from the images, and the encoding head then encodes them into viewpoint feature vectors). These representations are stored in a codebook database together with the object mesh model and the object ID, as shown in Figure 4.
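The text states only that the N viewpoints are sampled uniformly on the sphere. One common way to do this, shown here as an assumption rather than the authors' exact scheme, is a Fibonacci (golden-angle) lattice. The hypothetical helper below returns camera positions on a sphere centered at the object; the `radius` argument stands in for the diameter-based radius described above, and its value in the usage is arbitrary. Each position would then be paired with a look-at rotation and rendered.

```python
import numpy as np


def fibonacci_sphere(n, radius=1.0):
    """Return an (n, 3) array of near-uniformly spaced points on a sphere.

    Heights are evenly spaced in z, and consecutive points are rotated by the
    golden angle in azimuth, which spreads them almost evenly over the sphere.
    """
    i = np.arange(n) + 0.5
    z = 1.0 - 2.0 * i / n  # evenly spaced heights in (-1, 1)
    r_xy = np.sqrt(1.0 - z ** 2)
    golden_angle = np.pi * (3.0 - np.sqrt(5.0))
    phi = golden_angle * i
    pts = np.stack([r_xy * np.cos(phi), r_xy * np.sin(phi), z], axis=1)
    return radius * pts
```

For example, `fibonacci_sphere(4000, radius=2.0)` yields 4000 camera centers at distance 2.0 from the object center.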

After constructing the viewpoint codebook for the target object, the viewpoint encoder is used to extract the target viewpoint representation v from the ROI image V. Subsequently, the cosine similarity between v and every entry in the corresponding viewpoint codebook (indexed by the known object ID) is computed, and the entry with the highest similarity score is selected as the closest viewpoint. Alternatively, the entries can be ranked in descending order of cosine similarity, and the top K candidate viewpoints can be selected from the codebook.
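The retrieval step amounts to a cosine-similarity nearest-neighbor search. The NumPy sketch below uses a hypothetical function name, and `k` defaults to an arbitrary value because the setting of K is not stated here; it returns the indices and scores of the top K codebook entries in descending order of similarity.

```python
import numpy as np


def retrieve_top_k(query, codebook, k=5):
    """Return indices and cosine scores of the k codebook entries closest to query.

    query:    (D,) viewpoint feature of the ROI image
    codebook: (N, D) viewpoint features of the rendered views of one object
    """
    q = query / np.linalg.norm(query)
    cb = codebook / np.linalg.norm(codebook, axis=1, keepdims=True)
    scores = cb @ q  # cosine similarity with every entry
    order = np.argsort(-scores)[:k]  # sort by descending similarity
    return order, scores[order]
```

With N codebook entries of dimension D this is a single N × D matrix-vector product per ROI, so its cost grows linearly with the number of sampled viewpoints.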

4.2.2. In-Plane Rotation Regression

After obtaining the viewpoint information, the network regresses the in-plane rotation around the camera optical axis, thereby completing the full rotation prediction. To achieve this, we construct the regression network by attaching a regression head to the backbone network shared with the viewpoint encoder. The regression head consists of a convolutional layer (Conv2D) followed by two fully connected layers (FC). This module takes as input a pair of feature maps corresponding to the same viewpoint but with different in-plane orientations, and regresses the relative in-plane rotation angle.

During inference, we first use the shared backbone network to extract a pair of feature maps from an RGB image pair consisting of the ROI image V and a synthetic RGB image rendered from the retrieved viewpoint. Next, the regression module estimates the in-plane 2D rotation matrix from this feature map pair, and the complete 3D orientation estimate is obtained by composing the estimated in-plane rotation with the out-of-plane rotation of the retrieved viewpoint. In the same way, in-plane rotation regression can be performed for each of the top K retrieved candidate viewpoints to obtain K complete 3D orientation hypotheses.
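Composing the hypotheses is a small matrix operation. The sketch below assumes the convention R = Rz(γ) R_op with the optical axis along +z; the function names are hypothetical. Because an in-plane rotation leaves the optical axis fixed, each composed hypothesis keeps the viewing direction of its retrieved viewpoint.

```python
import numpy as np


def rot_z(gamma):
    """In-plane rotation about the camera optical axis (+z), gamma in radians."""
    c, s = np.cos(gamma), np.sin(gamma)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def compose_hypotheses(out_of_plane_rots, in_plane_angles):
    """Combine each retrieved out-of-plane rotation with its regressed in-plane angle.

    Returns a (K, 3, 3) array of full 3D rotation hypotheses Rz(gamma_k) @ R_op_k.
    """
    return np.stack(
        [rot_z(g) @ R_op for R_op, g in zip(out_of_plane_rots, in_plane_angles)]
    )
```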

We train this module by minimizing the discrepancy between the ground-truth in-plane rotation matrix and the predicted one (see Figure 3B). Specifically, the discrepancy is measured by the negative logarithm of the cosine similarity between flattened feature maps, where F denotes the flattening operation and the 2D spatial transformation associated with each in-plane rotation follows [39]. By optimizing this loss, the regression network is encouraged to accurately predict the in-plane rotation and avoid large rotational errors.
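To make this training signal concrete, the sketch below warps a feature map by the predicted and by the ground-truth in-plane angle, flattens both, and takes the negative log of their cosine similarity. Several details are assumptions of this illustration rather than the paper's specification: nearest-neighbor NumPy warping stands in for a differentiable spatial transformer [39], the same feature map is warped twice, and the cosine is rescaled to (1 + cos) / 2 so that the logarithm stays defined. Function names are hypothetical.

```python
import numpy as np


def rotate_feature_map(z, angle):
    """Rotate a (C, H, W) feature map by `angle` radians about its center.

    Uses inverse mapping with nearest-neighbor sampling; output pixels whose
    source falls outside the map are set to zero.
    """
    C, H, W = z.shape
    cy, cx = (H - 1) / 2.0, (W - 1) / 2.0
    ys, xs = np.meshgrid(np.arange(H), np.arange(W), indexing="ij")
    c, s = np.cos(angle), np.sin(angle)
    # Source location of each output pixel (rotate the output grid by -angle).
    src_x = c * (xs - cx) + s * (ys - cy) + cx
    src_y = -s * (xs - cx) + c * (ys - cy) + cy
    xi = np.rint(src_x).astype(int)
    yi = np.rint(src_y).astype(int)
    valid = (xi >= 0) & (xi < W) & (yi >= 0) & (yi < H)
    out = np.zeros_like(z)
    out[:, valid] = z[:, yi[valid], xi[valid]]
    return out


def in_plane_loss(z, angle_pred, angle_gt, eps=1e-8):
    """Negative log of the rescaled cosine similarity of the two warped maps."""
    a = rotate_feature_map(z, angle_pred).ravel()  # F(.): flattening
    b = rotate_feature_map(z, angle_gt).ravel()
    cos = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b) + eps)
    cos = np.clip(cos, -1.0, 1.0)
    return float(-np.log((1.0 + cos) / 2.0 + eps))
```

The loss is close to zero when the predicted and ground-truth angles agree and grows as the two warped maps diverge.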

4.2.3. 3D Orientation Validation

Based on the previous two modules, multiple complete 3D orientation hypotheses can be derived. To select the one closest to the ground-truth orientation, we employ an orientation verification module that estimates the consistency between each candidate orientation and the actual object orientation in the ROI image V; the hypotheses are then ranked by these consistency scores. Similar to the regression module, the verification module is constructed by attaching a verification head to the shared backbone. The verification head consists of two convolutional layers (Conv2D), a pooling layer, and a fully connected layer (FC).

During training, we optimize this module using a ranking-based loss function. As shown in Figure 3C, the estimated in-plane rotation is first applied to a feature map via the corresponding 2D spatial transformation [33]. The transformed feature map is then concatenated along the feature channel dimension with each of two feature maps in turn, and each concatenation is fed into the verification head to produce a pair of consistency scores, on which the ranking loss is computed. This ensures that the correct orientation hypothesis ranks higher in the consistency scoring, gradually guiding the model to learn accurate orientation verification during training.

During inference, the estimated in-plane rotation matrix is first used to transform the feature map from the retrieved viewpoint. The result is then combined with the feature map z from the ROI image and fed into the verification head, yielding a consistency score for each 3D orientation hypothesis. All hypotheses are sorted in descending order of score. In this work, we use a fixed number of retrieved candidate viewpoints during inference and retain only the hypothesis with the highest consistency score as the final rotation estimate. All quantitative results reported in this paper are based on this setting.

4.3. Translation Vector Estimation

Translation estimation builds on GDR-Net: it predicts intermediate geometric features (e.g., a 64 × 64 dense correspondence map computed from ResNet-34 features) and regresses the 3D translation using a lightweight network with three convolutional layers and three fully connected layers. This design ensures stable performance with minimal real data.

The core of this network consists of two modules: the geometric feature regression module and the translation regression module. The following sections will introduce each of them in detail.

Geometric Feature Regression Module: As a prior knowledge extractor for translation regression, this module follows the design of GDR-Net. During inference, features are extracted from the detected ROI image using a ResNet-34 network. The network then predicts three intermediate geometric feature maps, each of size 64 × 64: the dense correspondence map $M_{2D\text{-}3D}$, the surface region attention map $M_{SRA}$, and the visible object mask $M_{vis}$, where:

The dense correspondence map $M_{2D\text{-}3D}$ is obtained by first estimating the underlying dense 3D coordinate map $M_{XYZ}$ (supervised with coordinates rendered from the 3D object model), which is then stacked with the corresponding 2D pixel coordinates to form the final map.

The surface region attention map $M_{SRA}$ is derived from $M_{XYZ}$, with the surface regions defined by farthest point sampling on the object model. By explicitly encoding the object’s geometric information, these feature maps support the subsequent 3D translation regression.

Translation Regression Module: This lightweight network, consisting of three Conv2D layers with group normalization [40] and three fully connected (FC) layers, is the core component of the translation sub-network. During inference, the scale-invariant translation parameters [41] are regressed directly from the dense correspondence map and the surface region attention map, from which the object’s 3D translation is recovered.
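The recovery step can be sketched under the scale-invariant translation convention of CDPN [41] as adopted by GDR-Net, which we assume here rather than restating the paper's exact formulation: the network predicts the offset of the projected object center relative to the ROI center (normalized by the ROI size) and a depth normalized by the zoom ratio between the original ROI and the resized network input. The hypothetical helpers below encode a translation into these parameters and decode it back with the camera intrinsics K; `zoom_size` (the resized ROI side length) and all numbers in the usage are illustrative.

```python
import numpy as np


def encode_site(t, K, roi_center, roi_size, zoom_size):
    """Translation t = (tx, ty, tz) -> scale-invariant parameters (dx, dy, dz)."""
    o = K @ t
    ox, oy = o[0] / o[2], o[1] / o[2]  # projected 3D center in pixels
    cx, cy = roi_center
    w, h = roi_size
    r = zoom_size / max(w, h)  # zoom ratio from the original ROI to the network input
    return np.array([(ox - cx) / w, (oy - cy) / h, t[2] / r])


def decode_site(site, K, roi_center, roi_size, zoom_size):
    """Scale-invariant parameters (dx, dy, dz) -> camera-frame translation."""
    dx, dy, dz = site
    cx, cy = roi_center
    w, h = roi_size
    r = zoom_size / max(w, h)
    ox, oy = cx + dx * w, cy + dy * h  # recovered 2D center in pixels
    tz = dz * r  # recovered depth
    # Back-project the 2D center at depth tz using the intrinsics.
    return tz * np.linalg.solve(K, np.array([ox, oy, 1.0]))
```

Because the center offset is normalized by the ROI size and the depth by the zoom ratio, the regression targets do not depend on how large the object appears in the original image.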

4.4. Network Loss Function

The rotation and translation estimation networks are trained end-to-end with independent loss functions to more precisely constrain their respective learning objectives, ensuring that the optimization processes of the two do not interfere with each other.

The rotation estimation network comprises three sub-networks to be trained, whose optimization was described in Section 4.2; their losses are the viewpoint encoding loss, the in-plane rotation regression loss, and the orientation verification loss. The overall rotation loss is a weighted combination of these three terms averaged over a batch of N samples, where the weighting factors were chosen empirically via validation.

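For optimizing the translation estimation network, we adopt the loss function design of GDR-Net. Specifically, the overall translation loss $\mathcal{L}_{T}$ consists of two components, the geometric consistency loss $\mathcal{L}_{geo}$ and the translation parameter regression loss $\mathcal{L}_{trans}$:

$$\mathcal{L}_{T} = \mathcal{L}_{geo} + \mathcal{L}_{trans}.$$

The geometric consistency loss $\mathcal{L}_{geo}$ is composed of the $\ell_1$ loss computed on the normalized dense coordinates $M_{XYZ}$ and on the visible object mask $M_{vis}$, along with the cross-entropy (CE) loss applied to the surface region attention map $M_{SRA}$:

$$\mathcal{L}_{geo} = \big\| \bar{M}_{vis} \odot (\hat{M}_{XYZ} - \bar{M}_{XYZ}) \big\|_1 + \big\| \hat{M}_{vis} - \bar{M}_{vis} \big\|_1 + \mathrm{CE}\big(\bar{M}_{vis} \odot \hat{M}_{SRA},\ \bar{M}_{SRA}\big).$$

On this basis, the scale-invariant 2D object center $(\delta_x, \delta_y)$ and depth $\delta_z$ are further supervised individually, forming the translation parameter regression loss $\mathcal{L}_{trans}$:

$$\mathcal{L}_{trans} = \big\| (\hat{\delta}_x - \bar{\delta}_x,\ \hat{\delta}_y - \bar{\delta}_y) \big\|_1 + \big| \hat{\delta}_z - \bar{\delta}_z \big|.$$

In the above equations, $\hat{\cdot}$ and $\bar{\cdot}$ denote the predicted and ground-truth values, respectively, while ⊙ indicates element-wise multiplication.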
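A NumPy sketch of a GDR-Net-style geometric loss with this structure (masked L1 on the coordinate map, L1 on the visible mask, cross-entropy on the surface-region probabilities) is given below. Normalizing by the number of visible pixels and masking the cross-entropy with the ground-truth visible mask are assumptions of this sketch, and the function name is hypothetical; GDR-Net's reference implementation may normalize differently.

```python
import numpy as np


def geometric_loss(xyz_pred, xyz_gt, vis_pred, vis_gt, sra_prob, sra_gt, eps=1e-12):
    """GDR-Net-style geometric loss (illustrative sketch).

    xyz_pred, xyz_gt: (3, H, W) normalized dense coordinate maps
    vis_pred, vis_gt: (H, W) visible-mask prediction / ground truth in [0, 1]
    sra_prob:         (C, H, W) softmax probabilities over C surface regions
    sra_gt:           (H, W) integer surface-region labels
    """
    n = vis_gt.sum() + eps
    # Masked L1 on coordinates: only visible pixels contribute.
    l_xyz = np.abs(vis_gt * (xyz_pred - xyz_gt)).sum() / n
    # Plain L1 on the visible mask.
    l_mask = np.abs(vis_pred - vis_gt).mean()
    # Cross-entropy on region probabilities, counted on visible pixels only.
    p_true = np.take_along_axis(sra_prob, sra_gt[None], axis=0)[0]
    l_sra = -(vis_gt * np.log(p_true + eps)).sum() / n
    return l_xyz + l_mask + l_sra
```

Errors on pixels outside the ground-truth visible mask do not affect the coordinate or region terms, which keeps occluded parts of the object from being penalized.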

5. Experiment

We evaluate our method on LINEMOD (13 objects in cluttered scenes), LM-O (8 occluded objects), and YCB-V (21 objects with severe occlusions). The ADD(-S) metric, with a 10% diameter threshold, assesses pose accuracy.

5.1. Datasets and Metrics

Dataset: ShapeNet [38] is primarily used to provide training data for rotation estimation. To ensure efficient data loading, we excluded large-scale objects from the dataset, reducing the original 52,274 models to a set of 19K shapes. For each object, 16 anchor viewpoints are randomly sampled on a sphere centered at the object. Then, for each anchor viewpoint, a random in-plane rotation and a random out-of-plane rotation are applied. The corresponding RGB images are synthesized using Pyrender, resulting in a set of 128 viewpoint triplets per object.

LINEMOD [18] is a widely adopted benchmark for single-object 6D pose estimation, comprising RGB-D images and corresponding 3D models for 13 objects captured in cluttered environments. However, as recent works have reported recall rates exceeding 90%, the dataset has become increasingly saturated and less discriminative for evaluating advanced methods. Consequently, we also employ the more challenging LM-O [19] and YCB-V [20] datasets to better assess the robustness and generalization capability of our approach.

The LM-O dataset consists of 1214 test images and serves exclusively for evaluation purposes. It provides annotated 6D poses for eight objects under partial occlusion, thereby introducing increased challenges for pose estimation. In contrast, the YCB-V dataset comprises a significantly larger set of real-world images containing 21 objects. While YCB-V offers more extensive annotated training data, it poses additional difficulties due to severe object occlusions and the presence of multiple geometrically symmetric objects within cluttered scenes.

During training, approximately 1.4k real images per object from the LM dataset are used as the real training data for LM-O. Although YCB-V offers a larger number of annotated real images, the amount of real data remains limited for most deep learning-based methods. Therefore, a large number of physically based rendered (PBR) training images [42] are employed to augment the training process and enhance generalization. In our experimental setup, the rotation estimation network is trained solely on synthetic images, while translation estimation requires only 600 real images per object for training, significantly reducing the reliance on real-world data. These 600 real images per object are sampled directly from the training splits of the public benchmark datasets, without imposing additional constraints on background complexity, illumination conditions, or imaging noise. For a single object, the model contains approximately 4.8 M parameters, corresponding to an apparent training-samples-to-parameters ratio of about $600 / (4.8 \times 10^{6}) = 1.25 \times 10^{-4}$, i.e., roughly one real training sample per 8000 parameters. Note, however, that the rotation branch is trained solely on synthetic data and kept fixed, while only the translation branch is trained on real images. As a result, the number of parameters actually trained on real data is much smaller than the total parameter count.

Metrics: We adopt the widely used ADD(-S) metric to evaluate our method. ADD measures the average distance between the 3D model points transformed by the predicted pose and the same points transformed by the ground-truth pose in the camera coordinate frame; for symmetric objects, ADD-S instead averages the distance from each point to the closest point of the other transformed model. In all experiments presented in this paper, a predicted pose is considered correct if the ADD(-S) error is less than 10% of the object’s diameter, which is the most commonly used threshold. For the YCB-V dataset, we additionally report the area under the curve (AUC) of the ADD(-S) metric, with a maximum threshold of 10 cm.
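The metrics can be summarized in a few lines of NumPy. This is an illustrative sketch with hypothetical function names, not the official BOP or YCB-V evaluation code: ADD-S uses a brute-force nearest-neighbor search (a KD-tree is preferable for dense models), and the AUC is approximated by averaging accuracy over evenly spaced thresholds up to 10 cm, which assumes poses and models are expressed in meters.

```python
import numpy as np


def add_error(R_pred, t_pred, R_gt, t_gt, pts):
    """ADD: mean distance between corresponding model points under the two poses."""
    p = pts @ R_pred.T + t_pred
    g = pts @ R_gt.T + t_gt
    return float(np.linalg.norm(p - g, axis=1).mean())


def adds_error(R_pred, t_pred, R_gt, t_gt, pts):
    """ADD-S: mean distance from each ground-truth point to the closest predicted point."""
    p = pts @ R_pred.T + t_pred
    g = pts @ R_gt.T + t_gt
    d = np.linalg.norm(g[:, None, :] - p[None, :, :], axis=2)  # (M, M) brute force
    return float(d.min(axis=1).mean())


def is_correct(err, diameter, ratio=0.1):
    """A pose is accepted if its error is below 10% of the object diameter."""
    return err < ratio * diameter


def auc(errors, max_threshold=0.1, steps=1000):
    """Approximate area under the accuracy-vs-threshold curve on [0, max_threshold], in [0, 1]."""
    errors = np.asarray(errors)
    thresholds = np.linspace(0.0, max_threshold, steps)
    acc = np.array([(errors < th).mean() for th in thresholds])
    return float(acc.mean())
```

For a model that is symmetric under a 90° rotation about the optical axis, such a rotation gives an ADD-S of zero while ADD stays large, which is why ADD-S is reserved for symmetric objects.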

Network Training: The experiments were conducted using the PyTorch deep learning framework (version 1.10). The Adam optimizer was employed for weight updates, with a cosine annealing learning rate schedule ranging from to and a weight decay of . Training was performed on a single Nvidia RTX 4060 GPU, and the overall training process required approximately 4 days. During inference, the model requires approximately 4.3 GB of system memory and takes on average 1.03 s to process each image.

5.2. Experiments on LINEMOD

Ablation Study on the Number of Viewpoints: To systematically evaluate the impact of the number of viewpoints on rotation retrieval accuracy and overall pose estimation performance, we conduct an ablation study on the LINEMOD (LM) dataset. In this experiment, the rotation estimation module directly takes the predictions of the object detector as input, while all other components in the rotation estimation pipeline are kept unchanged, including the pre-trained viewpoint encoder, the in-plane rotation regression module, and the 3D verification module. This design ensures that any performance variation can be solely attributed to differences in the number of viewpoints N.

Table 1 presents the ablation results on the LINEMOD (LM) dataset with respect to the number of viewpoints N. Viewpoint retrieval accuracy is measured as the percentage of predictions whose rotation error falls below a fixed angular threshold. The table also reports the codebook construction time (s), the ADD(-S) score, and the per-frame retrieval time (ms/frame) for each value of N. Because the codebook is constructed once in the offline stage, its construction time is excluded from the per-frame inference cost.

As shown in Table 1, when viewpoint sampling is sparse (the sparsest settings in the table), the rotation space is insufficiently covered and the angular gaps between neighboring viewpoints are large, resulting in low viewpoint retrieval accuracies of only 42.7% and 51.6%, respectively, with correspondingly limited ADD(-S) scores. As the number of viewpoints increases, the rotation space is discretized more densely, leading to significant improvements in both viewpoint retrieval accuracy and ADD(-S). This indicates that denser viewpoint sampling effectively alleviates the missing-viewpoint problem and provides a more reliable initialization for the subsequent in-plane rotation regression and orientation verification. Further increasing N to 4000 continues to improve performance, with ADD(-S) reaching 94.8%; however, the gain is marginal compared with the previous step. When N is further increased to 8000, the performance saturates while the per-frame retrieval time grows almost linearly, revealing a clear diminishing-returns effect.
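A rough estimate helps explain these diminishing returns. Assuming, for illustration only, that the N viewpoints split the sphere into cells of roughly equal solid angle 4π/N, the typical angular spacing between neighboring viewpoints scales as 1/√N:

```latex
\Delta\theta \approx \sqrt{\frac{4\pi}{N}}, \qquad
\Delta\theta_{N=4000} \approx 0.056~\text{rad} \approx 3.2^\circ, \qquad
\Delta\theta_{N=8000} \approx 0.040~\text{rad} \approx 2.3^\circ
```

Doubling N from 4000 to 8000 therefore shrinks the spacing by only a factor of √2 (about 0.9°), while the cost of a linear codebook search doubles.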

Overall, overly coarse viewpoint sampling severely degrades rotation retrieval and pose estimation accuracy, whereas excessively dense sampling introduces unnecessary computational overhead. Therefore, a trade-off between rotation resolution and efficiency is required. Based on the above experimental results, we finally select N = 4000 as the default number of viewpoints, achieving a favorable balance between accuracy and computational cost.

Evaluation of the Core Modules in the Rotation Estimation: This study focuses on evaluating the rotation estimation network by independently assessing its core sub-modules. Experiments on the LINEMOD (LM) dataset examine each module’s accuracy and robustness under different input conditions. Specifically, we compare performance using predicted ROIs (Pred-ROI) from object detectors and ground-truth ROIs (GT-ROI) from annotations to understand their influence on overall system effectiveness.

Figure 5 illustrates the performance of the viewpoint retrieval module on the LM dataset across multiple error thresholds, comparing results based on predicted ROIs and ground-truth ROIs. In this evaluation, only the top-scoring pose hypothesis is considered to ensure a fair and focused assessment of retrieval accuracy. The results show that 72% of cases achieve an angular error ≤ 10°, confirming the high reliability of the viewpoint retrieval module. While performance with predicted ROIs is slightly lower than with ground-truth ROIs, the gap is small, suggesting limited sensitivity to ROI quality. Additionally, results on synthetic and real images are comparable, indicating strong cross-domain generalization and robustness to domain shifts.
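Accuracy at several angular-error thresholds, as reported in this evaluation, can be computed from the geodesic angle between rotation matrices. The sketch below uses hypothetical function names and an arbitrary threshold list; measuring viewpoint-only error (for example, the angle between viewing directions) would require a different error function.

```python
import numpy as np


def rotation_error_deg(R_pred, R_gt):
    """Geodesic angle (degrees) between two rotation matrices."""
    cos = (np.trace(R_pred.T @ R_gt) - 1.0) / 2.0
    return float(np.degrees(np.arccos(np.clip(cos, -1.0, 1.0))))


def accuracy_at_thresholds(errors_deg, thresholds=(5, 10, 15, 20, 25, 30)):
    """Fraction of samples whose angular error is <= each threshold (degrees)."""
    e = np.asarray(errors_deg)
    return {th: float((e <= th).mean()) for th in thresholds}
```

Clipping the cosine before `arccos` guards against values slightly outside [-1, 1] caused by floating-point round-off.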

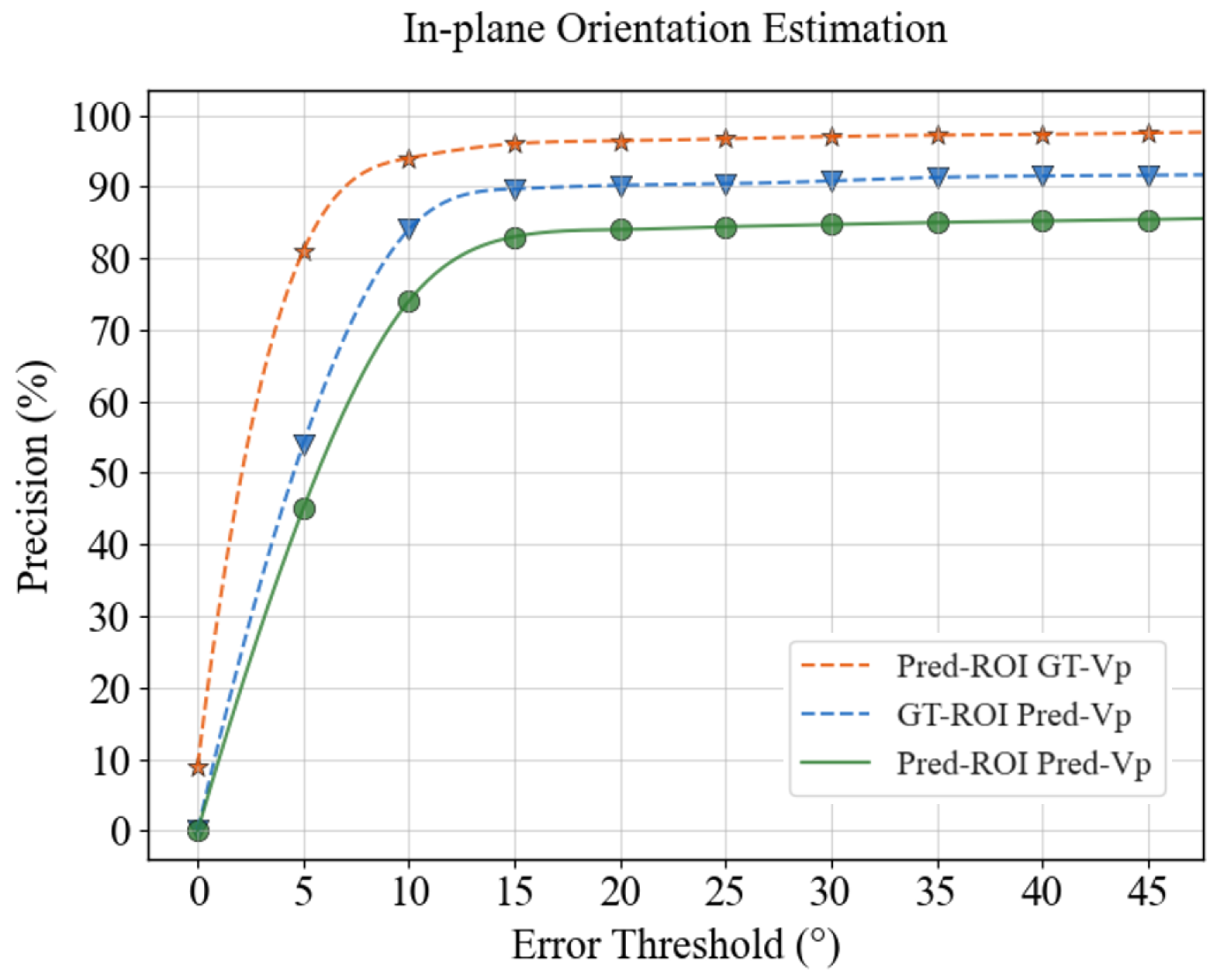

Building on the results of viewpoint retrieval, we proceed to evaluate the impact of viewpoint accuracy on the in-plane rotation estimation module. Specifically, we compare two conditions: using predicted viewpoints retrieved from the codebook and using ground-truth viewpoints derived from annotations. As shown in Figure 6, the proposed in-plane rotation regressor achieves 74% accuracy within a ≤10° error margin when using predicted viewpoints and ROIs, and surpasses 90% accuracy when ground-truth viewpoints are provided. These results confirm that in-plane rotation estimation is highly dependent on the quality of viewpoint retrieval, underscoring its critical role within the decoupled rotation estimation pipeline.

Overall Evaluation of the Proposed Method: To further validate the effectiveness of the proposed method in practical 6D pose estimation tasks, we systematically evaluated the complete model on the LINEMOD (LM) dataset.

Table 2 reports the results. When Fast R-CNN is used as the object detector to provide the ROIs (Pred-ROI), the model achieves an ADD(-S) accuracy of 94.8%, showing that the proposed method works well in combination with an existing detector. When the ground-truth annotated ROIs (GT-ROI) provided by the dataset are used instead, the accuracy rises to 97.6%, which reflects the upper bound of the proposed network under high-quality input.
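For reference, ADD(-S) follows the standard definition: ADD averages the distance between model points transformed by the ground-truth and predicted poses; ADD-S, used for symmetric objects, replaces the point-to-point correspondence with the closest-point distance; and a pose is usually counted as correct when the error is below 10% of the object diameter. The sketch below is a minimal NumPy version with hypothetical function names; its brute-force nearest-neighbor search is a simplification suited only to small point sets (a KD-tree is typical for full CAD models).

```python
import numpy as np

def transform(points, R, t):
    """Apply pose (R, t) to N x 3 model points."""
    return points @ R.T + t

def add_error(points, R_gt, t_gt, R_pred, t_pred):
    """ADD: mean distance between corresponding transformed points."""
    diff = transform(points, R_gt, t_gt) - transform(points, R_pred, t_pred)
    return float(np.mean(np.linalg.norm(diff, axis=1)))

def adds_error(points, R_gt, t_gt, R_pred, t_pred):
    """ADD-S: mean distance from each ground-truth point to its closest predicted point."""
    gt = transform(points, R_gt, t_gt)
    pred = transform(points, R_pred, t_pred)
    # Brute-force N x N distance matrix; acceptable for small point sets only.
    dists = np.linalg.norm(gt[:, None, :] - pred[None, :, :], axis=2)
    return float(np.mean(dists.min(axis=1)))

def is_correct(points, diameter, R_gt, t_gt, R_pred, t_pred, symmetric, frac=0.1):
    """ADD(-S) criterion: ADD-S for symmetric objects, ADD otherwise."""
    err_fn = adds_error if symmetric else add_error
    return err_fn(points, R_gt, t_gt, R_pred, t_pred) < frac * diameter

# Usage: a square rotated by 90 degrees about z matches perfectly under ADD-S only.
pts = np.array([[1.0, 0, 0], [0, 1.0, 0], [-1.0, 0, 0], [0, -1.0, 0]])
R90 = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
I, z = np.eye(3), np.zeros(3)
print(is_correct(pts, 2.0, I, z, R90, z, symmetric=True),
      is_correct(pts, 2.0, I, z, R90, z, symmetric=False))
```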

Although the object detector introduces localization errors, overall accuracy drops by only 2.8 percentage points (from 97.6% to 94.8%). This indicates that the sub-modules, particularly the rotation estimation component, remain stable under varying input quality, and suggests that the method has good potential for real-world applications.

5.3. Comparison Experiments on LM-O and YCB-V

On LM-O, our method achieves 65.3% ADD(-S), surpassing GDR-Net (62.2%), which we attribute to the decoupled design mitigating rotation errors under occlusion. On YCB-V, it achieves 65.9% ADD(-S) and 92.4% AUC of ADD-S, outperforming the baselines in multi-object scenarios. Qualitative results are shown in Figure 7.

In these experiments, the proposed method is compared with several state-of-the-art approaches, primarily those based on monocular RGB data. To ensure a fair comparison, we used Faster R-CNN [43] to detect objects in the RGB images and obtain ROIs for the LM-O tests. For YCB-V, we used the publicly available FCOS [44] detection results as input, to match the detector configuration used for this dataset.

Table 3 presents the quantitative comparison between the proposed method and current state-of-the-art approaches on the LM-O dataset, using ADD(-S) as the unified metric. The best results of GDR-Net are also included as a strong reference. Note that the proposed method can be extended to unseen object instances for which CAD models are available, but it does not address category-level generalization.

The results indicate that the proposed method outperforms the other approaches on most objects. In particular, it shows higher robustness and accuracy on objects with complex shapes or heavy occlusion, such as Ape, Duck, and Holepuncher. For the symmetric objects Eggbox and Glue, where traditional methods have certain advantages in handling symmetry, the proposed method still improves on Glue, indicating that it adapts well to symmetry-related challenges. Overall, the proposed method achieves an average accuracy of 65.3% on LM-O, 3.1 percentage points above GDR-Net. This demonstrates the stability and robustness of the proposed approach under heavy occlusion and clutter.

To further evaluate the few-shot capability of our method, we compare it with several recently proposed state-of-the-art approaches. Although these methods generally achieve higher accuracy when trained on large-scale real data, here all baseline models are retrained (or reported) using 600 real images, consistent with our training setup. We use the official implementations and follow the authors' recommended training protocols as closely as possible, changing only the amount of real training data so that all methods share the same supervision budget.

The quantitative results are shown in Table 4. Under this constrained training regime, all baseline methods suffer significant performance drops, with average accuracies of only 35.9%, 38.2%, 40.5%, 44.7%, and 48.3%. In contrast, our method maintains strong performance across all object categories, achieving an average accuracy of 65.3% on LM-O, 17.0 percentage points above the strongest baseline. This highlights the generalization ability of the proposed design in low-data scenarios with occlusion and symmetry challenges.

Table 5 presents the quantitative comparison results between the proposed method and current state-of-the-art approaches on the YCB-V dataset. The AUC values reported in the table are computed using full-point interpolation.

PVNet and CosyPose did not report complete ADD(-S) or AUC of ADD-S results in their original papers, which limits the comprehensiveness of the comparison, whereas RePose and GDR-Net serve as strong, competitive baselines. The proposed method achieves the best performance on all metrics: 65.9% ADD(-S), 92.4% AUC of ADD-S, and 86.9% AUC of ADD(-S), improvements of 5.8, 0.8, and 2.5 percentage points over GDR-Net, respectively. In summary, the proposed method maintains high accuracy on YCB-V in typical multi-object and occlusion scenarios, indicating good generalization and practical applicability.

Similarly, we extend the YCB-V comparison to the few-shot setting, where all baseline methods are retrained (or reported) using only 600 real images, consistent with our training setup. The results are summarized in Table 6. Under this limited supervision, all baselines suffer noticeable performance drops, with ADD(-S) scores ranging from 33.7% to 43.5%. In contrast, our method achieves 65.9% ADD(-S) and the highest scores in both AUC of ADD-S (92.4%) and AUC of ADD(-S) (86.9%). These results further support the robustness and effectiveness of our approach in real-world scenarios where collecting large-scale annotated real data is costly or impractical.

We further report the AUC of ADD(-S) under a much stricter maximum threshold of 1 cm to evaluate high-precision pose estimation. Compared with the commonly used 10 cm threshold, this setting substantially lowers the absolute scores of all methods. Nevertheless, as shown in Table 7, our method maintains a clear margin over all baselines, indicating that it does not merely benefit from a loose tolerance but produces more accurate and stable poses under stringent conditions.
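The AUC metrics sweep a distance threshold from 0 up to a maximum (commonly 10 cm, or 1 cm in the stricter setting) and integrate the resulting accuracy curve. The sketch below illustrates this with dense threshold sampling and trapezoidal integration; the function name is hypothetical, and the scheme is an approximation that may differ slightly from the interpolation used in the official YCB-Video evaluation code.

```python
import numpy as np

def auc_of_errors(errors_m, max_threshold_m=0.10, num_steps=1000):
    """Normalized area under the accuracy-vs-threshold curve.

    errors_m: per-instance ADD or ADD-S errors in meters.
    max_threshold_m: end of the threshold sweep (0.10 = 10 cm, 0.01 = 1 cm).
    """
    errors = np.asarray(errors_m, dtype=float)
    thresholds = np.linspace(0.0, max_threshold_m, num_steps + 1)
    # Accuracy at each threshold: fraction of errors strictly below it.
    accuracy = (errors[None, :] < thresholds[:, None]).mean(axis=1)
    # Trapezoidal rule, normalized by the sweep range so a perfect method scores 1.
    area = np.sum(0.5 * (accuracy[1:] + accuracy[:-1]) * np.diff(thresholds))
    return float(area / max_threshold_m)

# Usage: the same errors score lower under the stricter 1 cm sweep.
errors = [0.002, 0.005, 0.02]
print(auc_of_errors(errors, 0.10), auc_of_errors(errors, 0.01))
```

Because every error above the maximum threshold contributes nothing, shrinking the sweep from 10 cm to 1 cm penalizes moderately accurate poses heavily, which is why absolute scores drop for all methods.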

5.4. Additional Experiments

Edge Deployment Feasibility and Memory Analysis: To assess whether the proposed framework can be deployed on resource-constrained edge devices, we profiled the memory usage of its main components during inference on an RTX 4060 GPU.

Table 8 summarizes the memory footprint of the rotation branch, translation branch, viewpoint codebook, and intermediate feature maps. The analysis shows that the viewpoint codebook and dense geometric feature extraction dominate memory usage, together accounting for over 63% of the total 4.3 GB consumption.

These two components are therefore the primary bottlenecks for edge deployment. Although the current unoptimized PyTorch implementation is not suitable for direct use on typical edge chips, the results provide guidance for targeted optimization. Potential strategies include backbone pruning, codebook compression, mixed-precision inference, and offline viewpoint precomputation, all of which could substantially reduce memory and power requirements. Direct power measurement on edge hardware remains future work; in the meantime, inference latency and memory consumption serve as practical proxies for expected energy usage. These findings lay the groundwork for an edge-optimized variant of the proposed framework.
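As a rough illustration of why codebook compression and mixed precision help, the sketch below estimates the storage of a dense viewpoint codebook as (number of viewpoints) × (embedding dimension) × (bytes per element). The codebook size in the example (50,000 viewpoints, 256-dimensional embeddings) is a hypothetical placeholder, not the configuration profiled in Table 8, and the estimate ignores the other components listed there.

```python
# Bytes per stored element for common numeric formats.
BYTES_PER_ELEMENT = {"float32": 4, "float16": 2, "int8": 1}

def codebook_bytes(num_views: int, embed_dim: int, dtype: str = "float32") -> int:
    """Storage needed for a dense codebook of view embeddings."""
    return num_views * embed_dim * BYTES_PER_ELEMENT[dtype]

def to_mib(num_bytes: int) -> float:
    """Convert bytes to mebibytes."""
    return num_bytes / 2**20

# Hypothetical codebook: 50,000 rendered viewpoints with 256-dim embeddings.
for dtype in ("float32", "float16", "int8"):
    print(dtype, round(to_mib(codebook_bytes(50_000, 256, dtype)), 1), "MiB")
```

Halving the precision halves the codebook footprint linearly, while reducing the number of stored viewpoints or the embedding dimension shrinks it proportionally as well.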

Impact of Distance Factor on Feature Matching Consistency Across Viewpoints: This experiment analyzes how the distance factor k affects feature matching accuracy and consistency under different viewpoints. For each value of k, images are rendered from fixed viewpoints at the corresponding distance, and feature vectors are extracted from them. These features are then matched against the reference feature vectors of the same viewpoints using cosine similarity, which quantifies how variations in the distance factor affect feature consistency and matching performance.

The experiments are conducted on a viewpoint feature matching benchmark, where the reference feature vector of each viewpoint serves as the matching standard to ensure consistency and reproducibility. For each value of k, multiple samples are evaluated and the results are averaged to improve the reliability of the experimental conclusions.
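The protocol above can be sketched as follows: for each candidate distance factor k, features extracted from images rendered at that distance are compared row by row with the reference features of the same viewpoints via cosine similarity, the similarities are averaged, and the k with the highest mean is selected. The feature extractor is abstracted away and the function names are hypothetical; the toy data is synthetic, and its peak at k = 1.0 is constructed for illustration and does not correspond to the value used in our experiments.

```python
import numpy as np

def cosine_similarity(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Row-wise cosine similarity between two N x D feature matrices."""
    num = np.sum(a * b, axis=1)
    den = np.linalg.norm(a, axis=1) * np.linalg.norm(b, axis=1)
    return num / np.maximum(den, 1e-12)   # avoid division by zero

def select_distance_factor(features_by_k: dict, reference: np.ndarray):
    """Return (best_k, mean similarity per k).

    features_by_k maps each candidate k to an N x D array of features extracted
    from images rendered at that distance, aligned row by row with `reference`.
    """
    scores = {k: float(cosine_similarity(f, reference).mean())
              for k, f in features_by_k.items()}
    best_k = max(scores, key=scores.get)
    return best_k, scores

# Synthetic example: feature noise grows as k moves away from 1.0 (unimodal curve).
rng = np.random.default_rng(0)
ref = rng.normal(size=(100, 64))
feats = {k: ref + abs(k - 1.0) * rng.normal(size=ref.shape)
         for k in (0.6, 0.8, 1.0, 1.2, 1.4)}
best_k, scores = select_distance_factor(feats, ref)
print(best_k, {k: round(s, 3) for k, s in scores.items()})
```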

The experimental results show that the cosine similarity of feature matching reaches its maximum value of 0.99 at an intermediate value of k, indicating that this distance factor yields the best matching performance while maintaining high feature consistency (see Figure 8). When k is either too small or too large, the cosine similarity decreases markedly, exhibiting a clear unimodal trend.

In summary, this experiment confirms that the choice of distance factor plays a critical role in viewpoint feature matching and provides quantitative justification for the parameter settings used in the subsequent experiments.

Model Capacity and Overfitting Analysis: To further assess the potential risk of overfitting under limited real training data and to analyze the contribution of different components in our framework, we conduct a complementary experiment by constructing a lightweight variant of the proposed model. Specifically, we reduce the channel width of the convolution layers and remove redundant fully connected layers in the translation estimation branch, resulting in an approximately 10× reduction in parameters (from 1.5 M to 0.15 M), while keeping the rotation branch unchanged.

We evaluate both models on the LM dataset using the ADD(-S) metric. As shown in Table 9, the lightweight variant achieves an accuracy of 88.6%, compared with 94.8% for the original model. Although absolute performance decreases, the degradation remains limited (6.2 percentage points) despite a 90% reduction in parameters, and the overall performance trend is preserved. These results suggest that the proposed framework does not critically rely on excessive model capacity, and that its robustness stems primarily from the decoupled design and geometry-aware formulation rather than from a large number of parameters.
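The roughly 10× parameter reduction is consistent with how convolution parameters scale with channel width: a k×k convolution has k·k·C_in·C_out weights plus C_out biases, so shrinking both widths by a factor s cuts its parameters by about s². The sketch below illustrates this arithmetic on a hypothetical stack of 3×3 convolutions whose widths are scaled from 160 to 50 channels (a factor of 3.2, close to √10). These widths and function names are placeholders, not the actual configuration of the lightweight variant, whose reduction also comes from removing fully connected layers.

```python
def conv_params(c_in: int, c_out: int, kernel: int = 3, bias: bool = True) -> int:
    """Number of learnable parameters in a 2D convolution layer."""
    return kernel * kernel * c_in * c_out + (c_out if bias else 0)

def stack_params(widths, kernel: int = 3) -> int:
    """Total parameters of consecutive conv layers with the given channel widths."""
    return sum(conv_params(a, b, kernel) for a, b in zip(widths[:-1], widths[1:]))

# Hypothetical head: three 3x3 conv layers, widths scaled uniformly by 1/3.2.
full = stack_params([160, 160, 160, 160])
slim = stack_params([50, 50, 50, 50])
print(full, slim, round(full / slim, 1))
```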

6. Conclusions, Limitations and Future Work

This paper proposes a decoupled 6D pose estimation framework for few-shot scenarios that achieves state-of-the-art performance on the LINEMOD, LM-O, and YCB-V datasets while requiring only about 600 real images per object.

1. Limitations: The method relies on accurate CAD models, which limits its applicability to some extent. The framework is mainly suited to common scenarios of moderate complexity; under heavy occlusion, significant scale ambiguity, or weak texture, the performance of the decoupled rotation and translation estimation strategy may degrade noticeably. In addition, the overall accuracy partially depends on the performance of the object detector, and the framework shows limited generalization to unseen object categories.

2. Future Work: Future research could explore integrating more robust detection methods, developing end-to-end optimization strategies, and reducing the dependency on CAD models, thereby improving the framework's robustness in complex scenarios and its generalization to novel objects.