A Mobile Triage Robot for Natural Disaster Situations

Abstract

1. Introduction

2. Materials and Methods

2.1. Active Methodological Approach

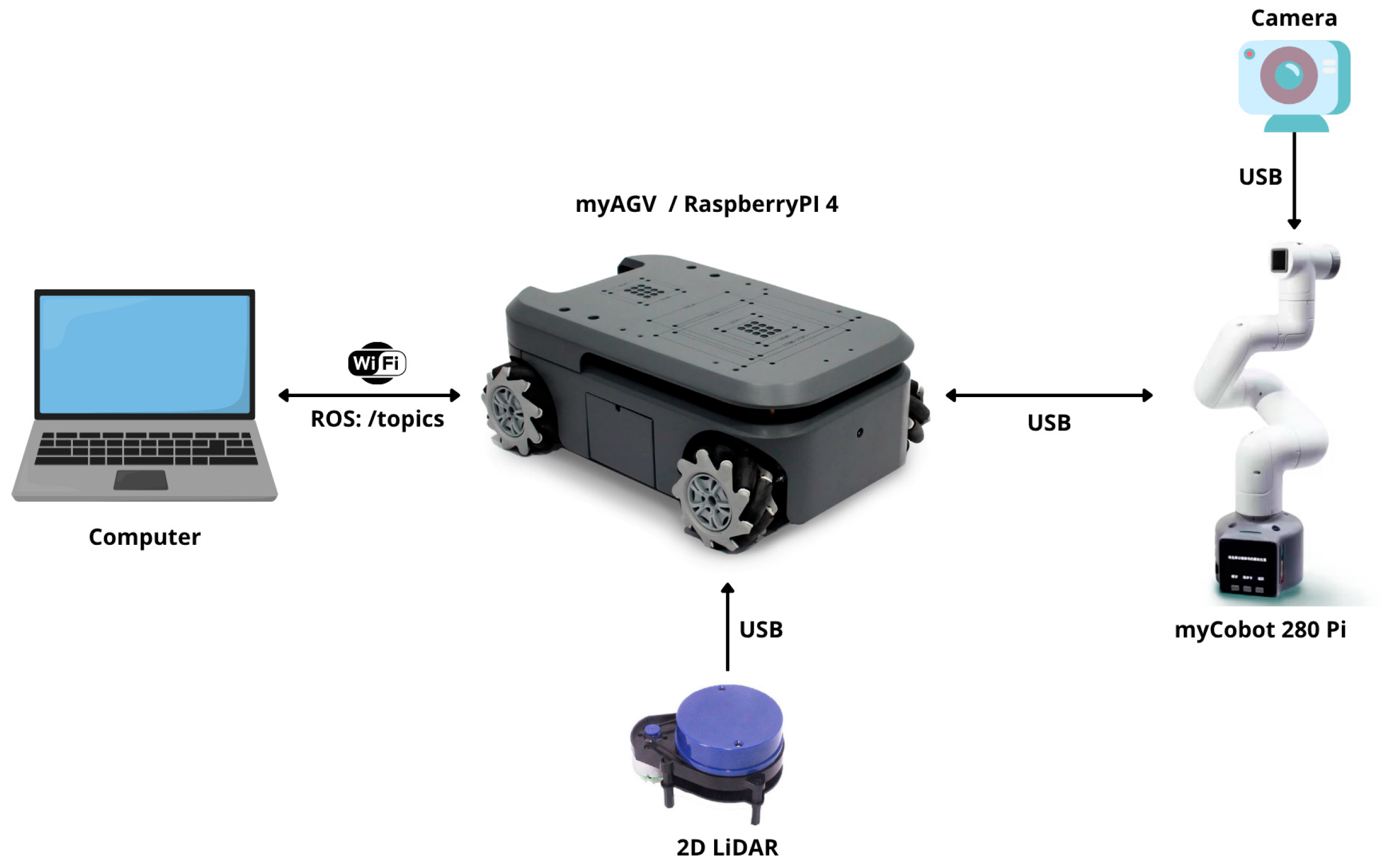

2.2. Overall System Design

- Holonomic mobile robot (myAGV): Designed with SLAM algorithms and autonomous navigation capabilities for exploration of unknown environments and victim detection.

- 6-DoF robotic manipulator (myCobot 280 Pi): Equipped with a panoramic camera as the end-effector, enabling facial recognition and the remote measurement of vital signs through rPPG techniques.

2.3. System Operation

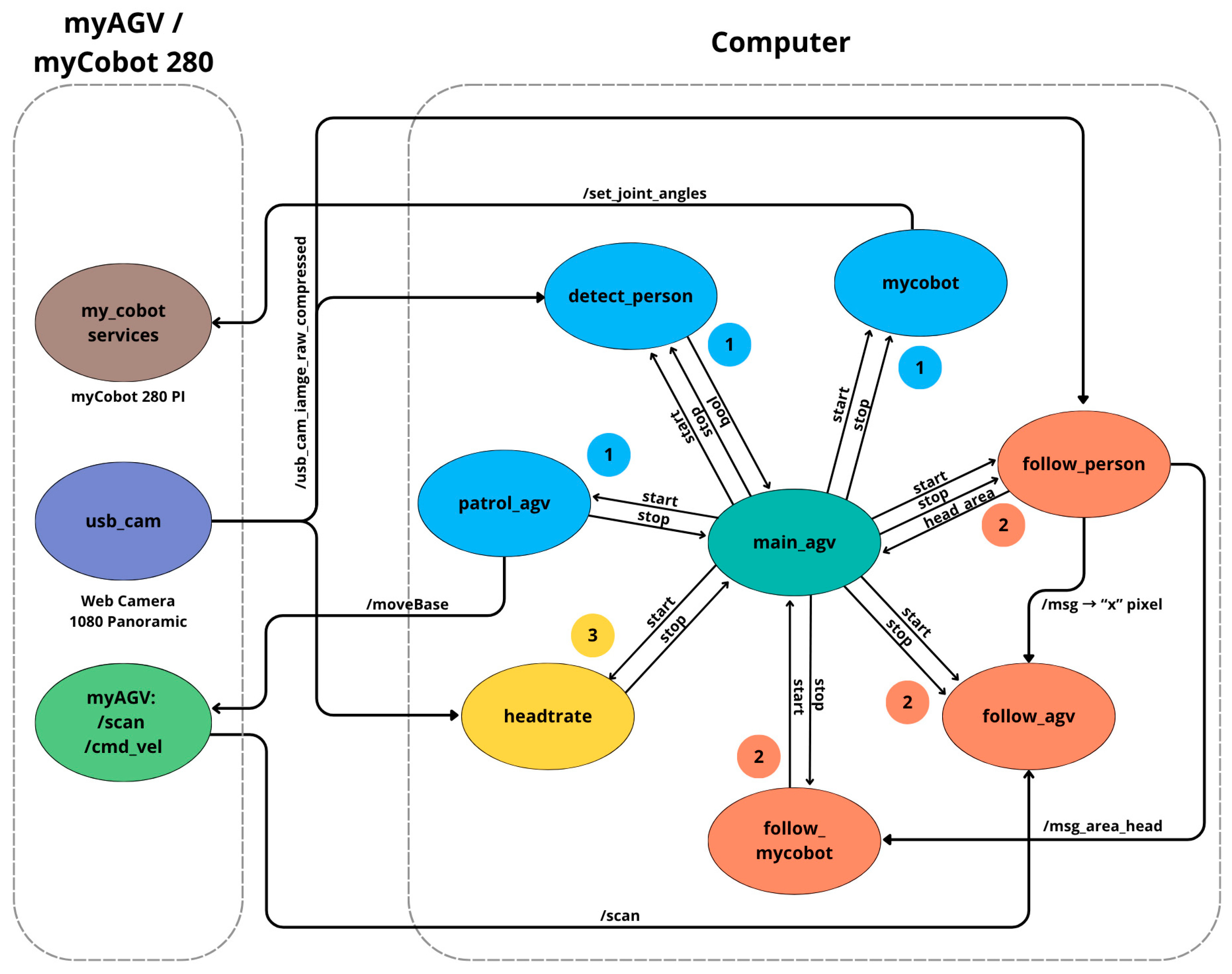

2.4. ROS Architecture Design for Triage

- main_agv: Managed exploration, victim detection, and measurement of vital signs.

- patrol_agv: Implemented SLAM to generate a map of the real environment and plan trajectories.

- mycobot: Executed a set of motion sequences with the robotic manipulator to scan the entire zone using the camera.

- detect_person: Applied computer vision to identify victims.

- follow_person, follow_agv, follow_mycobot: Operated jointly to perform trajectory replanning after victim detection and to position the system for measuring vital signs.

- Heartrate: Measured the vital signs of the victim using rPPG.

- my_cobot_services: Managed service calls for controlling the movement of the robotic manipulator.

- usb_cam: Streamed real-time compressed video to the computer.

- myAGV (/scan, /cmd_vel): Provided LiDAR data and robot control, respectively.
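The way these nodes hand control to one another can be sketched as a small state machine. This is only an illustrative sketch, not the authors' main_agv code: the `Phase` names, the `next_phase` function, and its three boolean flags are hypothetical, and ROS messaging is deliberately left out so the logic stays runnable on its own. The only behavior taken from the article is the sequence exploration, approach, measurement, and the restart of the search when visual contact with the victim is lost.

```python
from enum import Enum, auto


class Phase(Enum):
    """Hypothetical phases of the triage sequence."""
    EXPLORE = auto()   # exploration and victim search
    APPROACH = auto()  # vision-guided replanning toward the victim
    MEASURE = auto()   # contactless vital-sign measurement
    DONE = auto()      # triage of this victim finished


def next_phase(phase, victim_visible, in_position, measured):
    """Return the phase that follows `phase`.

    All three flags are assumed inputs: in a real system they would be
    derived from the detection, navigation, and rPPG nodes.
    """
    if phase is Phase.EXPLORE:
        return Phase.APPROACH if victim_visible else Phase.EXPLORE
    if phase in (Phase.APPROACH, Phase.MEASURE) and not victim_visible:
        # Visual contact lost: restart the search from the first phase.
        return Phase.EXPLORE
    if phase is Phase.APPROACH:
        return Phase.MEASURE if in_position else Phase.APPROACH
    if phase is Phase.MEASURE:
        return Phase.DONE if measured else Phase.MEASURE
    return Phase.DONE
```

Keeping the transition rule in one pure function makes the sequence easy to test without a robot; the function could then be called from whichever node coordinates the others.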

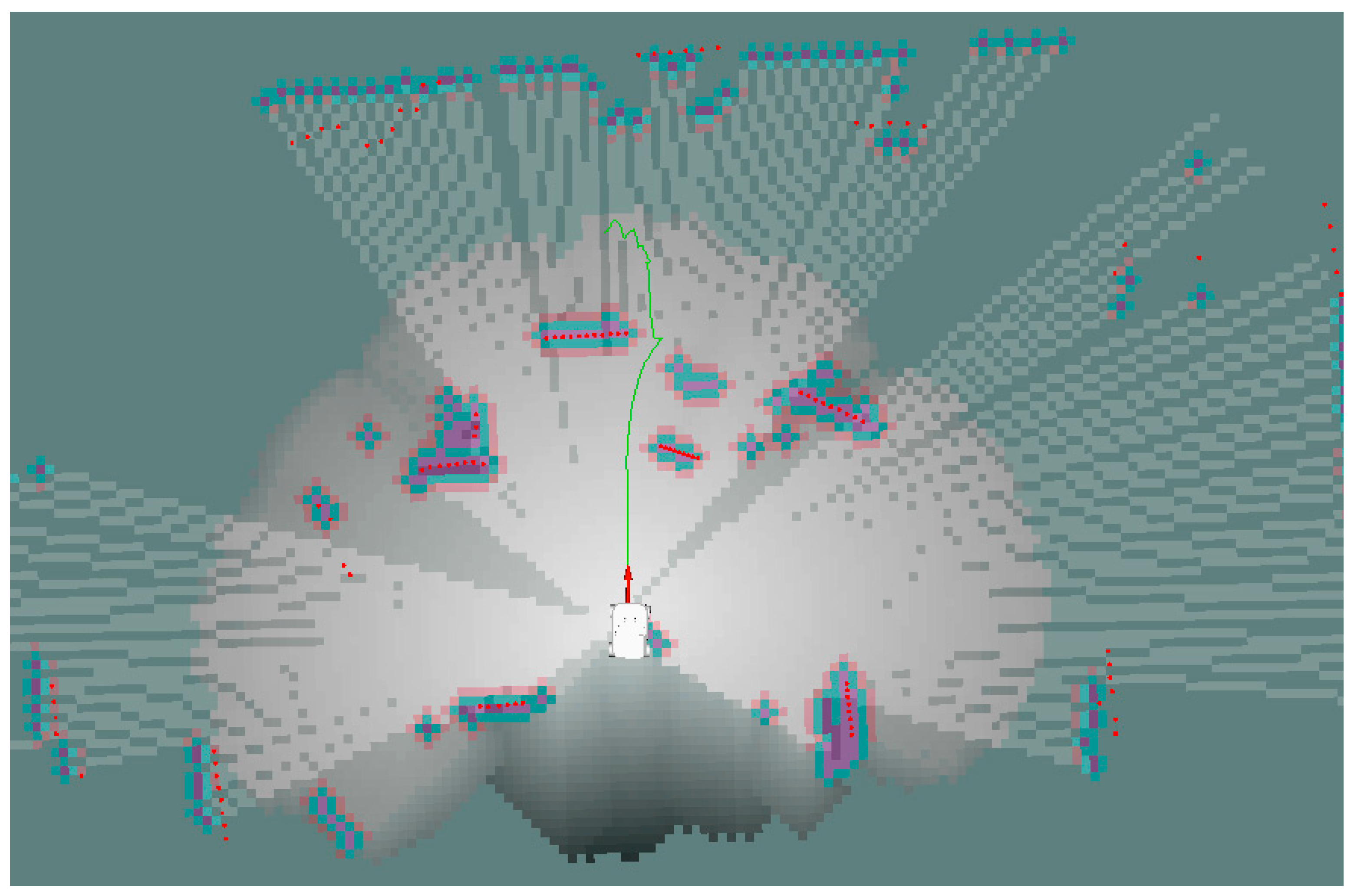

2.4.1. Autonomous Navigation and Victim Detection

2.4.2. Victim Detection and Trajectory Replanning

$$\theta^{*} = \arg\max_{\theta} d(\theta)$$

where

- $\theta^{*}$ is the direction with the greatest available free space,

- $d(\theta)$ represents the distances measured by the LiDAR along the frontal semicircle, and

- $\arg\max$ indicates that the selected angle corresponds to the maximum measured distance.
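The selection rule described by these three definitions can be sketched in a few lines. The function name `best_heading` is hypothetical, and the convention that 0 rad points straight ahead is an assumption; the parameter names `ranges`, `angle_min`, and `angle_increment` mirror the fields of the ROS `sensor_msgs/LaserScan` message, but no ROS import is needed to run the sketch. Readings that are infinite, NaN, or non-positive are skipped, which is a design choice of this example rather than something stated in the article.

```python
import math


def best_heading(ranges, angle_min, angle_increment):
    """Return (angle, distance) of the farthest valid frontal reading.

    Angles are in radians, with 0 assumed to point straight ahead, so the
    frontal semicircle is the interval [-pi/2, +pi/2].
    Returns None when no valid reading falls inside that interval.
    """
    best = None
    for i, dist in enumerate(ranges):
        angle = angle_min + i * angle_increment
        if abs(angle) > math.pi / 2 + 1e-9:
            continue  # beam points behind the robot
        if not math.isfinite(dist) or dist <= 0.0:
            continue  # invalid or out-of-range reading
        if best is None or dist > best[1]:
            best = (angle, dist)
    return best


# Five beams at -pi, -pi/2, 0, +pi/2, +pi; only the middle three are frontal.
scan = [9.0, 1.0, 2.5, 0.8, 7.0]
heading = best_heading(scan, -math.pi, math.pi / 2)
```

In this toy scan the rear beams report the largest distances, but they are ignored, so the robot would head straight ahead toward the 2.5 m reading.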

2.4.3. Trajectory Replanning Through Computer Vision

- Left zone (0–240 px): If the victim was detected in this zone, the robot would rotate to the right until the target was centered in the image.

- Central zone (240–400 px): Within this range, the victim would be correctly aligned, so the robot would move forward toward the target.

- Right zone (400–640 px): If the victim was detected in this zone, the robot would rotate to the left until the target was centered in the image and then move forward.
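The three zones above amount to a lookup on the horizontal position of the detected victim. The sketch below is illustrative only: the function name `steering_command`, the string commands, and the choice to assign the shared boundary pixels (240 and 400) to the central zone are assumptions. The rotation directions are copied from the zone descriptions as written.

```python
IMAGE_WIDTH_PX = 640
LEFT_LIMIT_PX = 240   # upper edge of the left zone
RIGHT_LIMIT_PX = 400  # lower edge of the right zone


def steering_command(x_center_px):
    """Map the victim's horizontal image position to a motion command."""
    if not 0 <= x_center_px <= IMAGE_WIDTH_PX:
        raise ValueError("x_center_px lies outside the image")
    if x_center_px < LEFT_LIMIT_PX:
        return "rotate_right"  # left zone, direction as stated in the text
    if x_center_px <= RIGHT_LIMIT_PX:
        return "forward"       # central zone: target aligned
    return "rotate_left"       # right zone, direction as stated in the text
```

For example, a detection centered at 320 px falls in the central zone and yields a forward command, while one at 600 px triggers a rotation until the target re-enters the central band.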

2.4.4. Coordination Between Navigation and Manipulation

2.5. Vital-Sign Estimation Methodology

2.6. Educational Value of the Project

- integrating hardware and software effectively,

- solving complex and unstructured problems,

- managing time and planning toward specific objectives,

- experimenting under conditions of technical uncertainty, and

- applying engineering thinking and evidence-based decision-making.

3. Results

3.1. Autonomous Frontier-Based Exploration

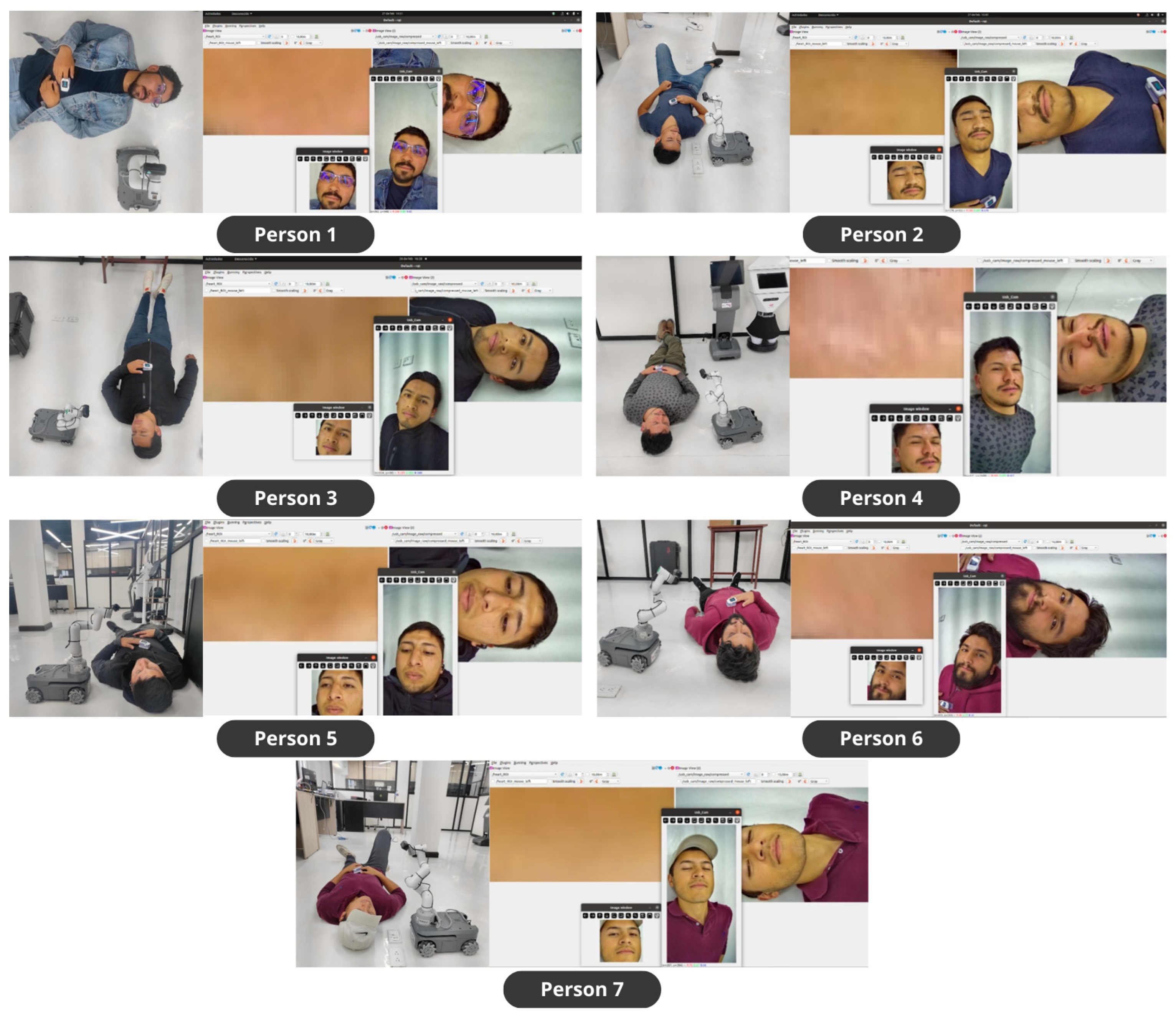

3.2. Person Detection

3.3. Computer Vision-Guided Navigation

3.4. Measurement of Vital Signs

3.5. Technical Analysis

- Integrate theory and practice in real-world scenarios.

- Develop systemic and interdisciplinary thinking.

- Strengthen core technical skills in robotics, vision, and sensing.

- Exercise autonomy, innovation, and real-world problem-solving.

3.6. Educational Analysis

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Stage | Student Role | Pedagogical Purpose | Key Learning Outcome | Evaluation Method |
|---|---|---|---|---|
| 1. Proposed Challenge | Assumes the task of configuring and programming a robot capable of performing autonomous triage of victims in a simulated environment. | Motivate through a real-world problem (challenge-based learning) and connect robotics with social impact. | Understanding the context, defining the problem, formulating objectives and functional requirements. | Review of the problem statement and initial proposal: clarity of the challenge, relevance of the approach, and technical justification. |
| 2. Technical Research | Analyzes hardware, sensors, and software options; compares architectures and vision/control tools in ROS. | Develop autonomy and critical thinking through guided inquiry. | Evidence-based selection of components (sensors, camera, actuators, ROS), understanding principles of detection and triage. | Technical report or research logbook: relevance, rigor, and justification of technological decisions. |
| 3. System Design and Integration | Develops the system architecture; programs navigation, detection, and vital-sign measurement nodes. | Foster active learning through practical, experiential integration. | Competence in hardware–software integration, modular design, technical problem-solving, and collaborative work. | Practical performance assessment and peer review of the functional prototype: architectural coherence and basic operation. |
| 4. Validation and Adjustment | Performs tests, debugs errors, calibrates sensors, and optimizes robot behavior. | Apply the scientific method: hypothesis, testing, and iterative improvement. | Development of analytical thinking, technical autonomy, and continuous-improvement skills. | Experimental validation rubric: robot performance (victim detection, autonomy, vital-sign measurement) and evidence of iteration and refinement. |
| 5. Final Evaluation and Reflection | Evaluates the overall performance of the system and proposes improvements or future versions. | Promote metacognition and authentic assessment of learning. | Critical thinking, technical communication, and self-regulation of learning. | Final presentation and defense of the project, including robot demonstration and technical report delivery. Comprehensive triage-flow assessment, peer review, innovation, and justification of improvements. |

| Metric | SpO2 Value | BPM Value |
|---|---|---|
| Sample size | 210 | 210 |
| Arithmetic mean | −1.2143 | 0.1095 |
| 95% confidence interval (mean) | −1.7152 to −0.7134 | −0.07879 to 0.2978 |
| P (H0: mean = 0) | <0.0001 | 0.2529 |
| Lower limit | −8.4309 | −2.603 |
| 95% confidence interval (lower) | −9.2883 to −7.5735 | −2.9260 to −2.2813 |
| Upper limit | 6.0023 | 2.8227 |
| 95% confidence interval (upper) | 5.1450 to 6.8597 | 2.5003 to 3.1450 |
| Regression equation | y = 22.1236 − 0.2401x | y = 2.6192 − 0.03554x |
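The layout of this table (mean difference, lower and upper limits, each with a confidence interval) appears consistent with a Bland-Altman agreement analysis; that reading is an assumption, since the table itself does not name the method. Under that assumption, the limits are the mean difference plus or minus 1.96 standard deviations of the differences, and the sketch below shows both the forward computation and a consistency check that uses only the values printed in the table. The function names and the constant `Z_95` are hypothetical.

```python
import statistics

Z_95 = 1.96  # normal quantile conventionally used for 95% limits of agreement


def limits_of_agreement(differences):
    """Return (lower, mean, upper) for a list of paired differences."""
    mean_diff = statistics.fmean(differences)
    sd_diff = statistics.stdev(differences)  # sample standard deviation
    return mean_diff - Z_95 * sd_diff, mean_diff, mean_diff + Z_95 * sd_diff


def sd_from_limits(lower, upper):
    """Recover the standard deviation implied by a pair of limits."""
    return (upper - lower) / (2 * Z_95)


# Consistency check with the tabulated limits (no new data introduced).
spo2_sd = sd_from_limits(-8.4309, 6.0023)
bpm_sd = sd_from_limits(-2.603, 2.8227)
spo2_midpoint = (-8.4309 + 6.0023) / 2
bpm_midpoint = (-2.603 + 2.8227) / 2
```

The midpoints of the tabulated limits reproduce the reported means to within rounding, which supports the assumed interpretation; the implied standard deviations are roughly 3.68 for SpO2 and 1.38 for BPM.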

| Phase | Challenge | Solution | Methodology | Node(s)/Technology Used |
|---|---|---|---|---|
| Phase 1: Autonomous navigation based on frontier detection and SLAM, along with victim search | Exploring an unknown environment without human intervention. | Implementing SLAM for autonomous mapping and exploration. | PBL/CBL | patrol_agv, move_base, explore_lite |
| | Detecting people in real time during exploration. | Integrating a model to identify human bodies and estimate posture. | PBL | detect_person, YOLOv8-pose |
| Phase 2: Computer vision-guided navigation with obstacle avoidance | Replanning the trajectory upon detecting a victim. | Coordinating modules for computer vision-based navigation and real-time obstacle avoidance. | PBL | follow_person, follow_agv, follow_mycobot |
| | Avoiding obstacles while keeping the victim within the field of view. | Combining LiDAR data and robotic manipulator control to maintain continuous vision. | PBL | LiDAR, camera + manipulator |
| | Coordinating navigation and computer vision. | Synchronizing movement, visual tracking, and camera positioning. | PBL | main_agv, manipulator, camera |
| Phase 3: Contactless measurement of vital signs using rPPG | Measuring vital signs without placing sensors directly on the victim. | Researching and applying the rPPG technique to estimate heart rate and SpO2 without contact. | CBL/self-directed study | Heartrate, rPPG algorithm |
| | Positioning the camera correctly in front of the victim’s face. | Adjusting the camera to 0.3 m from the victim’s face using the manipulator, with a defined ROI. | PBL | mycobot, usb_cam, frontal ROI |
| | Stabilizing the signal to obtain reliable measurements. | Adjusting lighting, focus, and distance to minimize interference and optical noise. | PBL | Computer vision + signal processing |
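To make the Phase 3 rows more concrete, the sketch below estimates a heart rate from a one-dimensional rPPG trace by locating the dominant spectral peak in a plausible cardiac band. It is not the authors' algorithm: the plain spectral-peak search is a simpler technique swapped in for illustration, and the function name `estimate_bpm`, the 0.7 to 3.0 Hz band, the 0.01 Hz search step, and the idea that each sample is the mean intensity of the facial ROI in one video frame are all assumptions. The demonstration signal is synthetic, not measured data.

```python
import math


def estimate_bpm(samples, fs, low_hz=0.7, high_hz=3.0, step_hz=0.01):
    """Estimate beats per minute from an evenly sampled rPPG trace.

    samples: one value per video frame (assumed: mean ROI intensity)
    fs:      sampling rate in Hz (the camera frame rate)
    """
    mean = sum(samples) / len(samples)
    centered = [s - mean for s in samples]  # remove the constant offset
    best_freq, best_power = low_hz, -1.0
    steps = int(round((high_hz - low_hz) / step_hz))
    for k in range(steps + 1):
        freq = low_hz + k * step_hz
        w = 2.0 * math.pi * freq / fs
        # Spectral power at this candidate frequency (direct Fourier sum).
        re = sum(v * math.cos(w * n) for n, v in enumerate(centered))
        im = sum(v * math.sin(w * n) for n, v in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return 60.0 * best_freq


# Synthetic 10 s trace at 30 frames per second with a 1.2 Hz pulsation.
FS = 30.0
trace = [0.5 + 0.01 * math.sin(2.0 * math.pi * 1.2 * n / FS) for n in range(300)]
bpm = estimate_bpm(trace, FS)
```

A direct Fourier sum is slow compared with an FFT but keeps the example dependency-free. Real traces would also need detrending and motion rejection, which is why the table lists lighting, focus, and distance adjustments as part of signal stabilization.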

| Stage | Delivered Evidence | Results |
|---|---|---|
| 1. Proposed Challenge | Document detailing the analysis and definition of the problem, objectives, scope, and importance of the project, and a functional requirements matrix. | The main problem of the autonomous triage system was established, defining the objectives, scope, and relevance of the project in emergency contexts. The student identified the technical requirements needed for prototype development. |
| 2. Technical Research | Technical logbook with a comparative analysis of sensors, modules, and robotic platforms available in the lab. Their SDKs and compatibility with ROS were considered as the basis for system development and integration. | The student evaluated options for available hardware, sensors, and robotic platforms. The most suitable components were selected, and the general system architecture in ROS was defined. |
| 3. System Design and Integration | Integration diagram of ROS nodes and communication configuration between the manipulator robot (myCobot), the mobile platform (myAGV), and the RGB camera used for visual perception. Fully assembled and operational prototype. | The myCobot manipulator and myAGV mobile platform were integrated and mechanically adapted. ROS control, communication, and perception nodes were configured using an RGB camera, achieving stable and coordinated system operation. |
| 4. Validation and Adjustment | Functional validation records, person-detection tests, autonomous navigation trials, and comparison of rPPG data obtained from the camera against a reference sensor. ROS parameter adjustments were made to improve accuracy and stability. | Person detection, autonomous navigation of the myAGV, and synchronization with the myCobot were validated. rPPG values showed good correlation with the physical sensor after calibration, resulting in stable system performance. |
| 5. Final Evaluation and Reflection | Final technical report, documented source code, system evaluation rubric, and peer-evaluation records. | The overall system performance was evaluated, confirming detection, navigation, and vital-sign estimation. The myAGV–myCobot setup demonstrated stable operation and synchronization within the ROS architecture. Peer evaluation provided feedback on technical execution and collaboration, identifying improvements for rPPG accuracy and motion response. |

| Stage | Difficulty and Student-Expressed Solution |
|---|---|
| 1. Proposed Challenge | “The task did not specify the hardware; I analyzed alternatives and selected the most suitable mobile platform, robotic arm, and camera for the system.” |
| 2. Technical Research | “The reviewed projects focused on basic control; I investigated the capabilities already available in the robot under ROS and adapted them to the proposed challenge to incorporate autonomous navigation.” |
| 3. System Design and Integration | “I had issues with synchronizing the system workflow, so I implemented an orchestrator node to coordinate the modules.” |
| 4. Validation and Adjustment | “The rPPG measurements were unstable; I adjusted lighting/distance until achieving consistent readings.” |
| 5. Final Evaluation and Reflection | “The complete triage sequence was validated, confirming the integration of the system as a functional solution.” |

| Study/System | Technologies Used | Capabilities | Limitations |
|---|---|---|---|
| Proposed system (YOLOv8-Pose + rPPG + SLAM) | YOLOv8-Pose, SLAM, rPPG, RGB camera | Accurate person and posture detection; autonomous navigation; contactless vital-sign measurement | Sensitive to low lighting; it requires a visible, well-lit face; limited performance under severe occlusions |
| YOLOv5 on drones | YOLOv5, aerial RGB camera | Fast aerial person detection; efficient for visual searches | It does not measure vital signs or perform autonomous navigation |
| Thermal Vision + YOLO | YOLOv4, thermal (IR) camera | Detection in smoky or low-visibility environments; contactless operation | It does not measure pulse; low resolution; no posture estimation |
| Dr. Spot (rPPG + RGB and IR camera) | rPPG with fixed RGB and IR camera mounted on robot | Highly accurate remote monitoring of heart rate, temperature, and respiration | Sensitive to low lighting; it requires a visible, well-lit face; limited performance under severe occlusions |
| Contact-based sensors | Pulse oximeters, thermometers, respiratory bands | Clinically accurate measurement of pulse, respiration, temperature, pressure, and SpO2 | It requires physical contact; slow for mass triage applications |

| Aspect | Condition/Limitation Identified | Possible Improvement/Future Research |
|---|---|---|
| Operating environment | Evaluation carried out in simulated and controlled emergency scenarios | Progressive validation in larger, more dynamic environments |
| Environmental dynamics | SLAM and frontier-based exploration assume mostly static environments | Incorporating dynamic mapping and semantic perception |
| Navigation | Sensitive to moving obstacles and changes in the scene | Adaptive planning strategies |
| Visual tracking | Stable operation at moderate speed (0.25 m/s); possible instability on uneven terrain | Dynamic adjustment of camera orientation based on orientation sensors |
| Obstacle avoidance | Use of a fixed safety distance for simplicity and stability | Context-dependent adaptive safety margins |
| Vision (YOLOv8-Pose) | Capable of detecting human poses with partial occlusions; sensitive to unfavorable lighting or severe occlusions | Image pre-processing and controlled lighting; in adverse scenarios, complementary sensors (RGB-D or thermal camera) for greater robustness |
| rPPG estimation | Dependent on good lighting and facial visibility | Controlled lighting and incorporation of thermal sensors |
| Sensors | The impact of noise and calibration of the RGB camera (rPPG/detection) and LiDAR (navigation) was not quantified | Noise modeling, recalibration, and systematic verification of sensors |
| Scalability | Validation in areas of limited size due to mobile platform mobility restrictions | Platforms with greater locomotion capabilities on uneven terrain |
| Victim management | Sequential care for a single victim, without explicit prioritization | Multi-target management and prioritization criteria |
| ROS architecture | Sequential execution using services; delays not formally assessed | Latency optimization and analysis in complex scenarios |
| Computational load | Intensive processing performed outside the robotic platform | On-board execution of SLAM and detection, and on-demand rPPG, to reduce latency and reliance on external compute |
| Sensing | Exclusive use of RGB vision | Integration of thermal or depth sensors |
| Failure cases | If visual contact with the victim is lost, the system restarts the search from Phase 1 | Recovery strategies and perceptual redundancy |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Angamarca-Avendaño, D.-A.; Zhañay-Salto, D.-A.; Cobos-Torres, J.-C. A Mobile Triage Robot for Natural Disaster Situations. Electronics 2026, 15, 559. https://doi.org/10.3390/electronics15030559