1. Introduction

With the expansion of global fruit trade and increasingly stringent export standards, automated fruit inspection has become a critical component of modern agricultural supply chains. Packaging facilities must process large volumes of produce while maintaining efficient, consistent, and objective classification and grading. Among commercial fruits, mangoes pose particular challenges for automated inspection due to their irregular surface textures, non-uniform coloration, wide ripeness variations, and diverse surface defects. These characteristics impose high requirements on the robustness and generalization capability of vision-based inspection systems [1, 2].

Conventional mango grading and defect inspection still rely heavily on manual visual assessment, which is labor-intensive, time-consuming, and inherently subjective. Such approaches are easily affected by inconsistent judgment and environmental factors such as illumination changes, often leading to unstable grading performance and reduced throughput. Consequently, there is a strong demand for automated inspection systems that can operate reliably at an industrial scale while minimizing human intervention [3].

In recent years, deep learning-based computer vision techniques have become the dominant approach for automated fruit grading and surface defect analysis. Chuquimarca et al. [4] provided a comprehensive survey of CNN-based methods for evaluating fruit appearance, size, and defects, along with commonly used real and synthetic datasets. Beyond conventional visual imaging, Chopra et al. [5] integrated spectrophotometry with machine learning to develop an automated apple grading system, achieving 82% accuracy on validation data and 72% accuracy on real-world samples, demonstrating the potential of spectral information for fruit quality assessment.

In mango defect analysis, many studies focus on object detection and segmentation frameworks to localize surface defects. Methods based on YOLO [6], Faster R-CNN, RetinaNet, and Mask R-CNN have been widely applied at the bounding-box or pixel levels. For instance, Matsui et al. [7] enhanced a YOLO-based framework by introducing an improved loss function (SBCE) to better detect small surface defects, highlighting the importance of loss design. Wu et al. [8] conducted five-category mango defect detection on nearly 50,000 images using a Mask R-CNN framework with an X101-FPN backbone, achieving an average precision (AP) of 67.2%.

Although detection and segmentation-based methods provide precise defect localization, they require extensive manual annotation, resulting in high cost and limited scalability. From a practical perspective, precise localization is not always necessary. In many studies [9, 10], the primary objective is to determine whether a fruit should be accepted or rejected based on the presence of defects rather than their exact locations. Motivated by this observation, classification-oriented approaches have been explored as an alternative. Lee et al. [11] proposed a multi-camera apple classification system that reduced missed defect inspections, demonstrating improved annotation efficiency. Similarly, Nithya et al. [12] developed a CNN-based mango defect classification system using image-level labels, showing that effective defect recognition can be achieved without bounding-box supervision. However, these studies typically adopt binary classification (normal vs. defective), which may be insufficient for high-value fruits that require defect-specific pricing, motivating further investigation into multi-class defect classification.

Mango quality grading represents another essential task in automated inspection. Li et al. [13] proposed a CNN-based grading framework combining CGAN and YOLOv4, where CGAN augmented limited training data and YOLOv4 performed grade classification. While data augmentation improved performance, the reliance on detection-based annotation increased labeling costs, suggesting that multi-class classification models may be a more efficient alternative. Wu et al. [14] evaluated several CNN architectures, including AlexNet, VGG, and ResNet, for mango quality grading. Although Mask R-CNN–based background removal improved results, manual annotation was still required for transfer learning, motivating the search for more generalizable background removal methods with smoother segmentation boundaries.

Both defect classification and quality grading are typically deployed in automated production lines, where real-time performance is critical. Naik [15] explored a non-destructive mango grading method using MobileNet combined with an SVM classifier, achieving high accuracy with low inference latency suitable for real-time applications. In addition, class imbalance is a common issue in agricultural inspection due to uncertainties in image acquisition, leading to insufficient representation of certain defect categories and degraded model performance [16].

From a modeling perspective, most existing approaches rely on spatial-domain CNNs to analyze surface color, texture, and overall appearance. However, spatial information alone is often insufficient to distinguish subtle quality differences, particularly under uneven illumination or noisy conditions. Recent studies [17] have shown that frequency-domain representations can effectively capture texture-related information and provide complementary cues that are less sensitive to illumination variations.

Based on these observations, this study proposes a unified deep learning framework that jointly addresses mango surface defect classification and quality grading through two task-specific branches. Surface defect assessment is reformulated as a multi-label classification problem involving five defect categories, eliminating the need for costly bounding-box annotations while enabling the identification of co-occurring defects. To mitigate class imbalance, a copy–paste–based image synthesis strategy is employed to augment scarce samples, and self-attention mechanisms are incorporated to further enhance classification accuracy.

Meanwhile, a dedicated quality grading pipeline integrates spatial-domain and frequency-domain CNNs to exploit complementary appearance and texture representations. Adaptive contrast enhancement is applied to emphasize grade-related surface textures, improving robustness under varying illumination and surface noise. Overall, the proposed framework aims to provide a comprehensive and deployment-friendly solution for large-scale automated mango assessment.

The main contributions of this work are summarized as follows:

Mango surface defect inspection is reformulated as a classification-oriented multi-label problem, demonstrating that effective defect recognition can be achieved without bounding-box or pixel-level annotations and is better aligned with industrial inspection requirements.

A unified inspection framework integrates defect screening and three-level quality grading into a single decision flow, enabling simultaneous rejection of defective mangoes and grading of acceptable products in automated production lines.

The proposed framework is designed with annotation efficiency as a core objective and is validated on large-scale, imbalanced datasets, demonstrating stable grading and improved recognition of rare defect categories under practical conditions.

2. Materials and Methods

This study proposes a dual-pipeline deep learning framework designed to perform both mango quality grading and surface defect classification. For the quality grading task, we adopt two separate neural network architectures: ResNet-18 for the frequency-domain input and ResNet-34 for the spatial-domain input. Additionally, we apply background removal and Adaptive Contrast Enhancement (ACE) [18] to the input mango images to enhance surface texture visibility and improve classification accuracy.

For the five-class defect classification task, we employ ResNet-34 as the backbone network and incorporate self-attention modules to enhance feature representation. In addition, we apply the Copy and Paste data augmentation technique [19] to increase the training data for scarce classes, addressing the class imbalance issue.

The two tasks operate independently, and the system can be deployed either jointly or separately based on the needs of industrial inspection pipelines.

Section 2.1 presents the composition of the dataset, while Section 2.2 outlines the preprocessing procedures applied to the images. Section 2.3 introduces the design of the proposed quality-grading pipeline, and Section 2.4 details the defect-classification pipeline that completes the overall framework. Section 2.5 further describes the end-to-end deployment logic of the proposed system. Finally, Section 2.6 presents the training settings and implementation details used in all experiments.

2.1. Dataset

The datasets used in our experiments are sourced from the Taiwan AI CUP competition, specifically the Irwin mango image dataset [20]. The dataset consists of two major subsets.

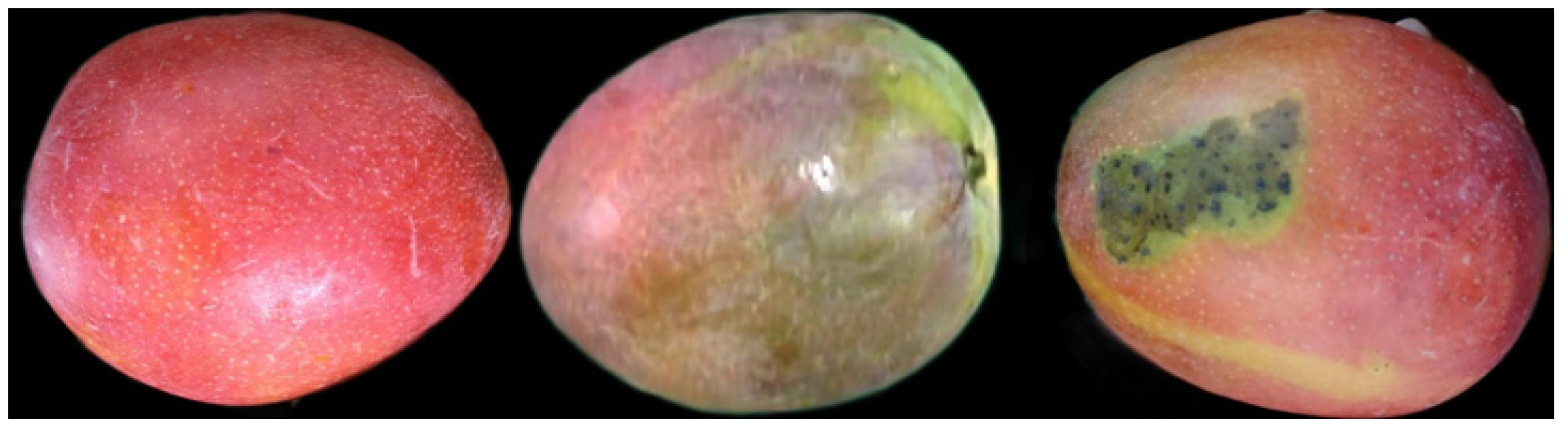

The first subset corresponds to mango appearance quality grading and is divided into three grades: A, B, and C. It consists of 5600 images for training and 800 images for testing. Owing to the limited data availability, the test set was also used as a validation set to monitor training convergence and prevent overfitting; however, it was not used for hyperparameter tuning. Grade A mangoes exhibit uniform coloration without visible black spots or scratches, representing the highest quality. Grade B mangoes show slight color inconsistency or minor surface defects and are regarded as medium quality, whereas Grade C mangoes display pronounced color non-uniformity and frequently contain large dark patches, representing the lowest quality. The sample distributions for the three quality grades are illustrated in Figure 1a.

The second subset contains five types of mango surface defects, with the sample distribution shown in Figure 1b. This subset was originally annotated for object detection tasks and was reconstructed in this study as a multi-label classification dataset, comprising more than 50,000 images in total. From this subset, 6000 images were randomly selected for training, 900 images were used as a test set that was also employed as a validation set to monitor training convergence, and an additional 990 images formed an independent test set for final performance evaluation.

This subsampling strategy was adopted based on two primary considerations. First, the number of samples in the D2 defect category is extremely limited in the original dataset. Under such severe class imbalance, training directly on the full dataset of over 50,000 images would further exacerbate the skewed class distribution and hinder effective model learning. Therefore, the complete dataset was not used; instead, random subsampling was employed for experimental design. Second, to maintain a comparable data scale between the defect classification task and the mango quality grading experiment, the size of the training set was deliberately controlled. This design choice aims to simulate practical application scenarios in which large-scale annotated datasets may not be readily available, thereby enhancing the applicability and robustness of the proposed method in real-world settings.

The five defect categories include:

D1: latex residue

D2: mechanical scratch

D3: anthracnose

D4: color inconsistency

D5: black spot disease

The distribution of samples in each category is shown in Figure 1b. A significant imbalance can be observed, particularly for D2 (mechanical scratches), which contains only 177 images. This scarcity is likely due to scratches being non-intrinsic defects, typically caused by handling or conveyor belt friction, and therefore naturally less frequent.

All images in both subsets have a resolution of 1280 × 720 pixels and were captured under normal indoor lighting conditions. Representative samples of the quality-grading classes and defect types are shown in Figure 2 and Figure 3, respectively.

2.2. Data Preprocessing

2.2.1. Background Removal

Since the mango images in the dataset contain production-line backgrounds, background removal is necessary to prevent irrelevant visual information from affecting classification performance. We adopt the well-known U²-Net [21] model for this task. Beyond its strong reputation in medical image segmentation, U²-Net provides highly generalizable pretrained weights that work effectively even on datasets outside its training domain. Our experiments show that these pretrained weights can remove the background of our mango dataset with minimal configuration, and the model remains effective even when multiple mangoes appear in the same frame. Example results are shown in Figure 4. In terms of computational efficiency, the average processing time for background removal using U²-Net is approximately 88 ms per image, measured on an NVIDIA RTX 3060 GPU. To avoid redundant computation during training and reduce overall training time, background removal is performed as an offline preprocessing step, and the resulting images are stored for subsequent experiments.

2.2.2. Contrast Enhancement

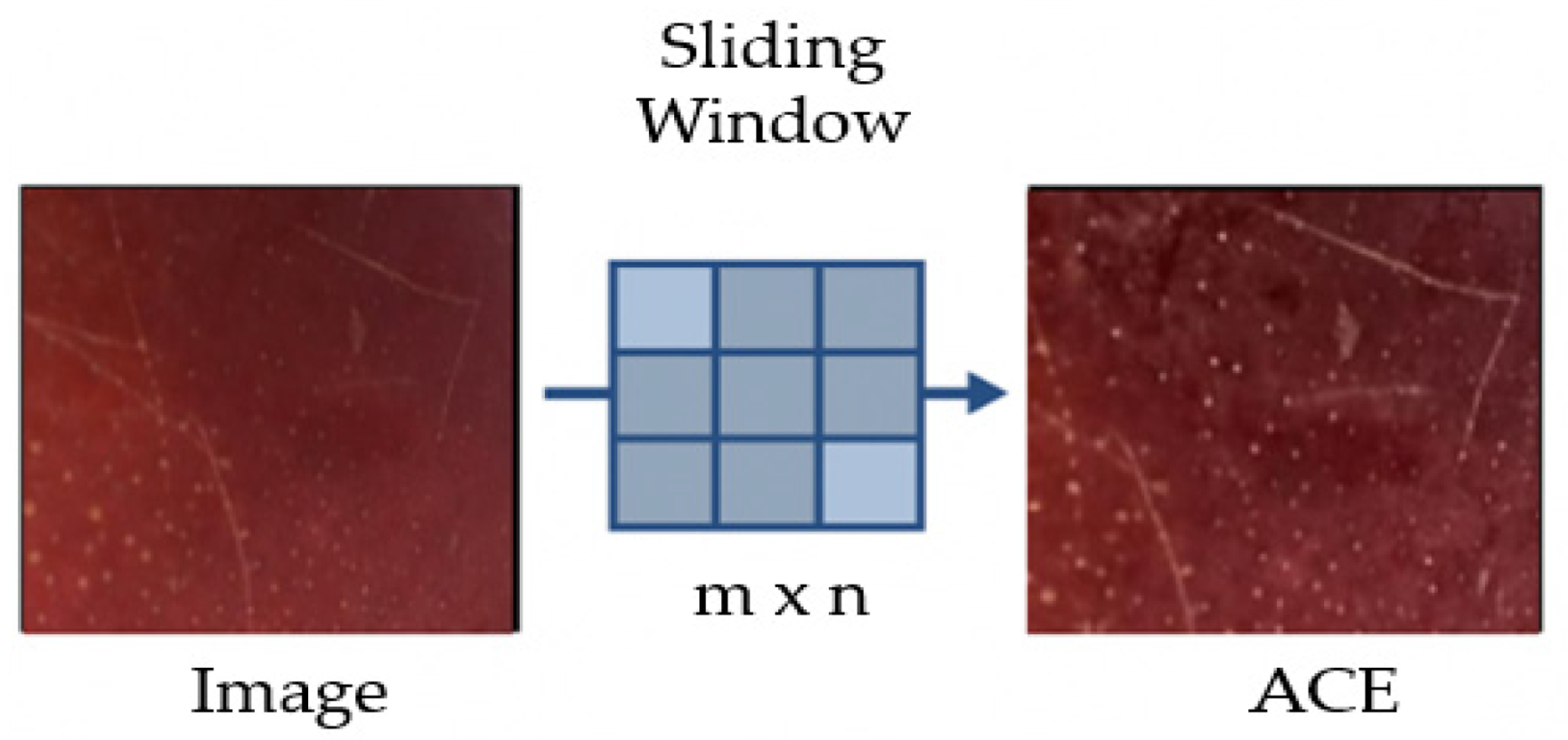

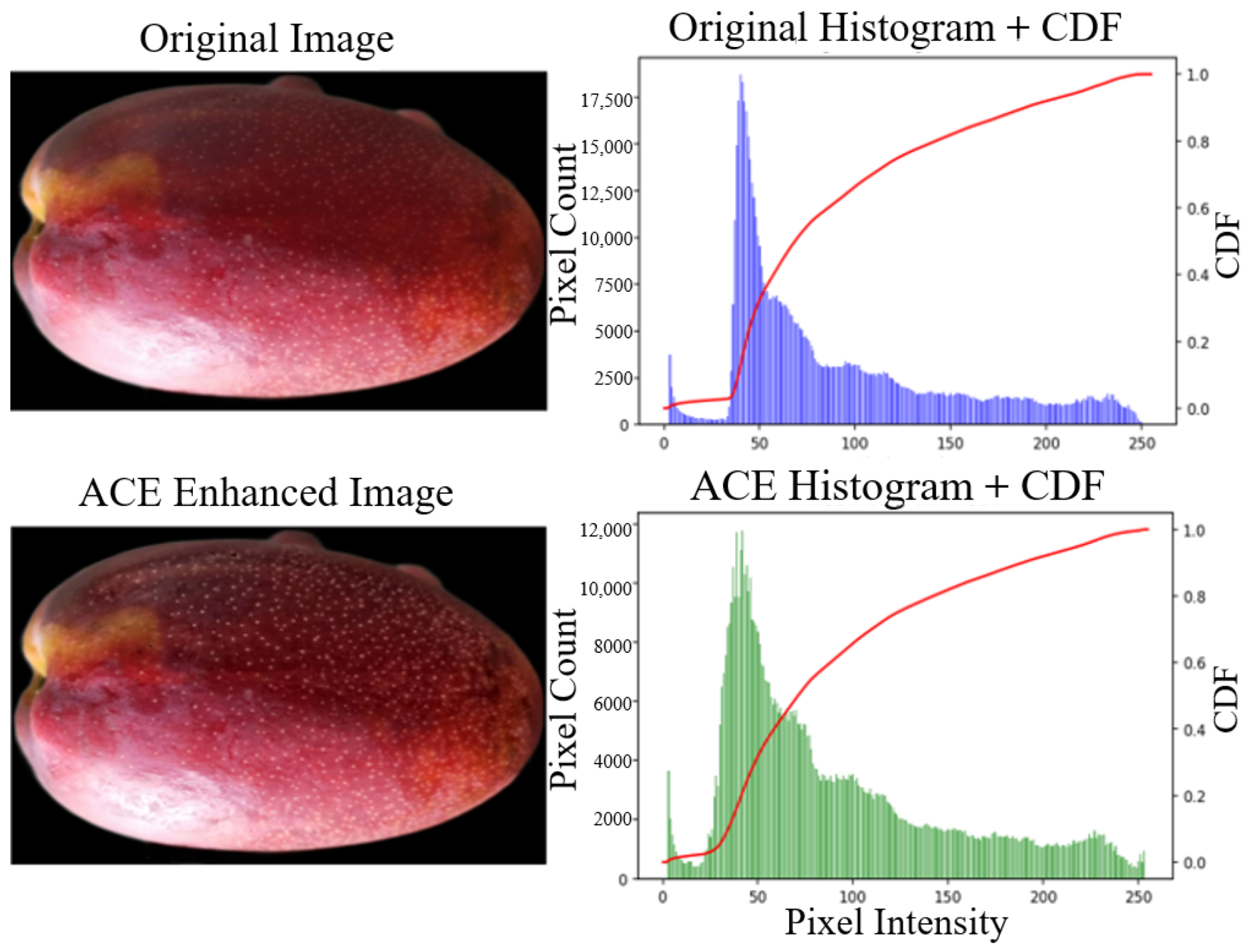

After background removal and resizing, contrast enhancement is applied to emphasize surface details. Specifically, we employ Adaptive Contrast Enhancement (ACE). As illustrated in Figure 5 and Figure 6, the mango surface texture becomes visually more pronounced after enhancement. The pixel value distribution further confirms this improvement: originally, most pixel intensities were concentrated in the range of 40–60, indicating low contrast. For example, around the intensity level of 50, approximately 17,500 pixels were present before enhancement; this number decreases to about 12,000 after stretching, with values in other intensity ranges correspondingly increasing. This redistribution reflects the expansion of mid-range intensities and indicates an overall improvement in image contrast.

The ACE operation is implemented on the CPU, with an average processing time of approximately 48 ms per image. Similarly to background removal, contrast enhancement is also conducted offline, and the preprocessed images are saved in advance to avoid repetitive computational overhead during training.

It is worth noting that excessively strong ACE parameters may amplify noise and degrade image quality. Since illumination conditions vary across images, selecting ACE parameters based on a small number of samples can easily lead to over-enhancement and performance deterioration. To address this issue, we conduct repeated batch-level evaluations on diverse images to identify robust parameter settings. Based on empirical testing, the optimal ACE parameters are determined as a window size (m, n) of 55 and a scaling factor α of 0.2. This configuration effectively enhances surface details while preventing excessive amplification of irrelevant noise in the majority of images.

Specifically, the process begins by computing the local mean value M within a window of size [(2n + 1), (2m + 1)] using Equation (1), where f(x,y) denotes the intensity of the pixel at coordinates (x,y). The local standard deviation σ within the same window is then calculated using Equation (2). In Equation (3), α represents the gain factor, and Mg denotes the global mean intensity of the image. Finally, Equation (4) is applied to amplify the high-frequency components of the image, thereby enhancing image details. The resulting enhanced pixel intensity at location (i,j) is denoted as I(i,j).
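Equations (1)–(4) are available only as an image in this version of the text. As a hedged reconstruction, a commonly used ACE formulation that matches the symbols defined above is sketched below. The symbol G(i,j) for the local gain, the summation limits, and the choice to write Equation (2) in terms of σ² are assumptions rather than details confirmed by the source.

```latex
\begin{align}
M(i,j) &= \frac{1}{(2n+1)(2m+1)} \sum_{x=i-n}^{i+n} \sum_{y=j-m}^{j+m} f(x,y) \tag{1}\\
\sigma^{2}(i,j) &= \frac{1}{(2n+1)(2m+1)} \sum_{x=i-n}^{i+n} \sum_{y=j-m}^{j+m} \bigl[f(x,y) - M(i,j)\bigr]^{2} \tag{2}\\
G(i,j) &= \alpha \, \frac{M_g}{\sigma(i,j)} \tag{3}\\
I(i,j) &= M(i,j) + G(i,j)\,\bigl[f(i,j) - M(i,j)\bigr] \tag{4}
\end{align}
```

In this form, the term f(i,j) − M(i,j) is the local high-frequency component, and the gain G(i,j) is larger in flat regions (small σ) than in regions that already have strong local contrast.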

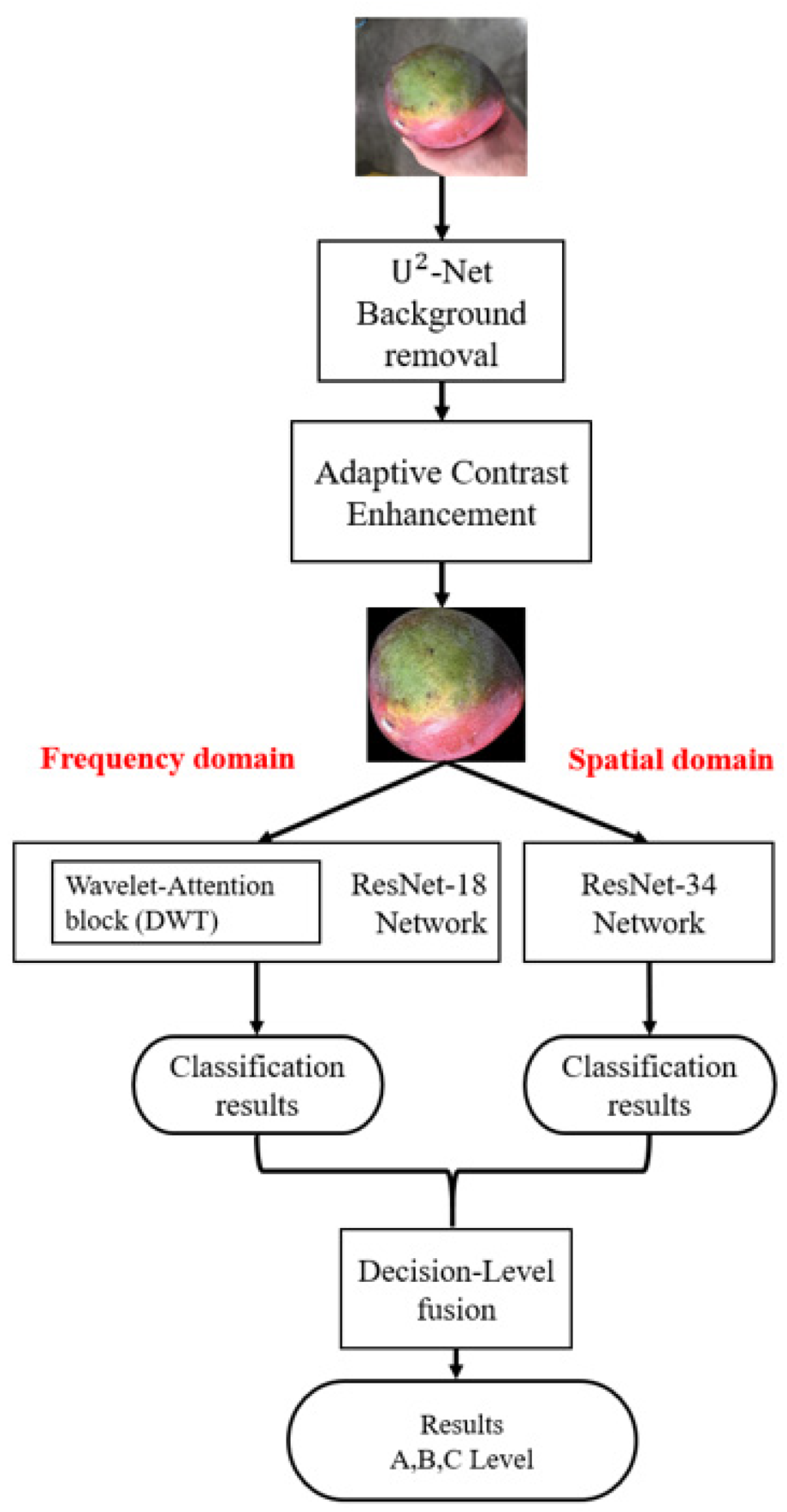
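The ACE steps described above (local mean, local standard deviation, gain, and detail amplification) can be sketched in NumPy as follows. This is a minimal illustration and not the authors' implementation. It assumes a single-channel image, interprets the reported window size of 55 as a 55 × 55 window (half-widths n = m = 27), uses edge padding at the borders, and caps the gain with a hypothetical `max_gain` parameter to limit noise amplification in flat regions.

```python
import numpy as np

def box_mean(img, n, m):
    """Mean over a (2n+1) x (2m+1) window around each pixel (edge-padded)."""
    img = np.asarray(img, dtype=np.float64)
    kh, kw = 2 * n + 1, 2 * m + 1
    h, w = img.shape
    padded = np.pad(img, ((n, n), (m, m)), mode="edge")
    # Integral image with a leading row and column of zeros.
    integral = np.pad(padded, ((1, 0), (1, 0))).cumsum(axis=0).cumsum(axis=1)
    total = (integral[kh:kh + h, kw:kw + w] - integral[:h, kw:kw + w]
             - integral[kh:kh + h, :w] + integral[:h, :w])
    return total / (kh * kw)

def ace(img, n=27, m=27, alpha=0.2, max_gain=3.0):
    """Adaptive contrast enhancement of a grayscale uint8 image.

    n, m     : window half-widths (assumed reading of "window size 55")
    alpha    : scaling factor reported in the text
    max_gain : hypothetical cap on the local gain (not from the source)
    """
    f = np.asarray(img, dtype=np.float64)
    local_mean = box_mean(f, n, m)
    local_var = box_mean(f ** 2, n, m) - local_mean ** 2
    local_std = np.sqrt(np.maximum(local_var, 0.0))
    global_mean = f.mean()
    gain = alpha * global_mean / np.maximum(local_std, 1e-6)
    gain = np.minimum(gain, max_gain)
    enhanced = local_mean + gain * (f - local_mean)
    return np.clip(enhanced, 0, 255).astype(np.uint8)
```

For a color image, one option is to apply `ace` to a luminance channel only; whether the authors process channels jointly or separately is not stated in the text.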

2.3. Quality Grading Pipeline

The goal of the quality grading pipeline is to categorize mangoes into three predefined quality levels based on their surface appearance. To improve prediction robustness under varying imaging conditions, the proposed system integrates both spatial-domain and frequency-domain information.

In the spatial-domain branch, background-removed and contrast-enhanced images are fed into a ResNet-34 network. In the frequency-domain branch, the images undergo a discrete wavelet transform (DWT) [22] before being processed by a ResNet-18 network.
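To make the frequency-domain input more concrete, the following sketch computes a one-level 2D DWT with the Haar wavelet in NumPy. The wavelet family, the number of decomposition levels, and the way the sub-bands are arranged before entering ResNet-18 are not specified in the text, so the Haar choice and the channel stacking shown here are assumptions for illustration only.

```python
import numpy as np

def haar_dwt2(img):
    """One-level orthonormal 2D Haar DWT of a single-channel image.

    Returns (approx, row_detail, col_detail, diag_detail), each at half
    the input resolution. Odd-sized inputs are cropped by one pixel.
    """
    x = np.asarray(img, dtype=np.float64)
    x = x[: x.shape[0] // 2 * 2, : x.shape[1] // 2 * 2]
    a = x[0::2, 0::2]  # top-left pixel of each 2x2 block
    b = x[0::2, 1::2]  # top-right
    c = x[1::2, 0::2]  # bottom-left
    d = x[1::2, 1::2]  # bottom-right
    approx = (a + b + c + d) / 2.0        # low-pass in both directions
    row_detail = (a + b - c - d) / 2.0    # difference between the two rows
    col_detail = (a - b + c - d) / 2.0    # difference between the two columns
    diag_detail = (a - b - c + d) / 2.0   # diagonal difference
    return approx, row_detail, col_detail, diag_detail

def dwt_channels(img):
    """Stack the four sub-bands as channels: shape (4, H/2, W/2)."""
    return np.stack(haar_dwt2(img))
```

Because the transform is orthonormal, the total energy of the four sub-bands equals that of the input, which is a convenient sanity check when swapping in a different wavelet.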

The class probabilities produced by the two branches are then fused at the decision level to generate the final prediction, as illustrated in Figure 7. Specifically, a probability-gap–based fusion strategy is adopted (illustrated in Figure 8). If the difference between the highest and second-highest predicted probabilities from the spatial-domain model is smaller than a confidence threshold TH, the spatial-domain prediction is considered uncertain, and the system defaults to the prediction from the frequency-domain model. Otherwise, the spatial-domain result is used.

To determine an appropriate confidence threshold, we analyze the relationship between the probability margin (i.e., the difference between the top two predicted probabilities) and prediction correctness. We observe that when this margin falls below approximately 0.2, the reliability of the spatial-domain predictions decreases noticeably. The selected confidence threshold is therefore dataset-dependent and may require re-tuning when applied to different datasets or application scenarios.

This decision-level fusion strategy enables the system to exploit the complementary strengths of both domains and improves robustness in ambiguous or low-contrast cases.
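The probability-gap rule described above can be written in a few lines. The sketch below is a plain-Python illustration; the function name and the list-based probability format are assumptions, and the default threshold of 0.2 follows the approximate value reported in the text.

```python
def fuse_predictions(spatial_probs, freq_probs, th=0.2):
    """Return the fused class index for one image.

    spatial_probs, freq_probs: per-class probabilities from the
    spatial-domain and frequency-domain models (same class order).
    th: confidence threshold on the top-1 vs. top-2 probability margin.
    """
    ranked = sorted(spatial_probs, reverse=True)
    margin = ranked[0] - ranked[1]
    # A small margin means the spatial model is uncertain, so defer
    # to the frequency-domain model; otherwise keep the spatial result.
    chosen = freq_probs if margin < th else spatial_probs
    return max(range(len(chosen)), key=chosen.__getitem__)
```

For example, spatial probabilities of (0.45, 0.40, 0.15) give a margin of 0.05, so the frequency-domain prediction is used, whereas (0.80, 0.15, 0.05) gives a margin of 0.65 and the spatial-domain prediction is kept.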

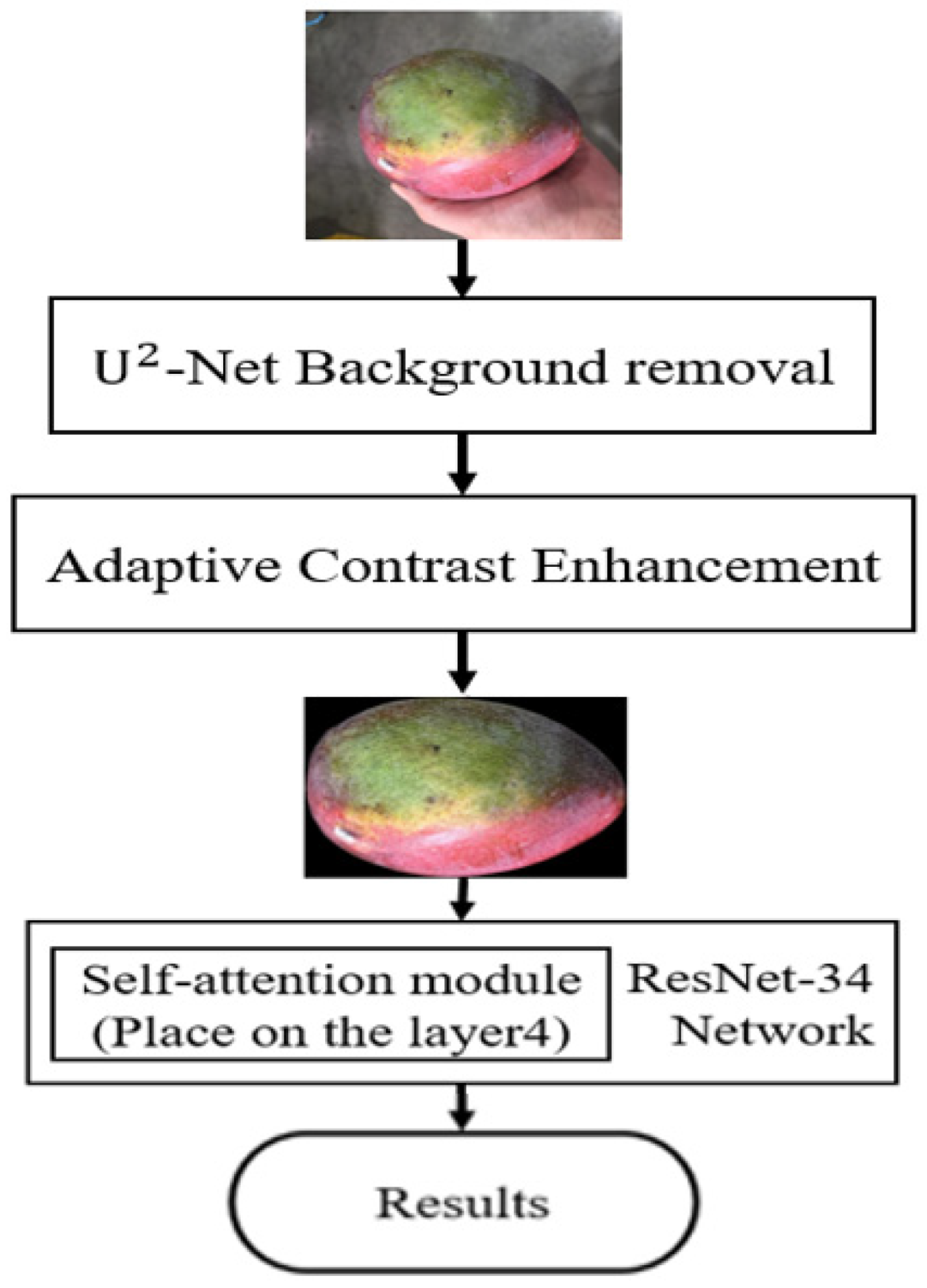

2.4. Defect Classification Pipeline

The defect classification pipeline is designed to identify five different types of mango surface defects. Unlike conventional object detection approaches that require extensive manual annotation of bounding boxes, our method formulates this task as a multi-label classification problem, as illustrated in Figure 9. ResNet-34 is adopted as the backbone network. Inspired by Non-Local Neural Networks [23], which demonstrate performance gains by inserting non-local blocks at different network stages, we incorporate a self-attention module into the layer4 stage of ResNet-34 after considering the trade-off between computational cost and performance improvement.
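As an illustration of what a non-local style self-attention block computes on a feature map, the following NumPy sketch treats every spatial position as a token, forms pairwise attention weights, and adds the attended features back through a residual connection. It is a forward-pass illustration only: the projection sizes, the absence of score scaling, and the function name are assumptions, and the authors' trainable module may differ.

```python
import numpy as np

def self_attention_2d(x, w_q, w_k, w_v, w_o):
    """Self-attention over the spatial positions of one feature map.

    x             : feature map of shape (C, H, W)
    w_q, w_k, w_v : projection matrices of shape (C, D)
    w_o           : output projection of shape (D, C)
    Returns an array of shape (C, H, W).
    """
    c, h, w = x.shape
    tokens = x.reshape(c, h * w).T                 # (N, C), N = H * W
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    scores = q @ k.T                               # (N, N) pairwise similarity
    scores -= scores.max(axis=1, keepdims=True)    # stabilise the softmax
    attn = np.exp(scores)
    attn /= attn.sum(axis=1, keepdims=True)        # each row sums to 1
    out = (attn @ v) @ w_o                         # (N, C)
    return x + out.T.reshape(c, h, w)              # residual connection
```

The attention matrix has N × N entries, so its cost grows quadratically with the number of spatial positions. The last stage of a ResNet has the lowest spatial resolution, which keeps this cost small.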

Although many object detection studies rely on open-source annotation tools such as LabelMe or LabelImg, these tools are primarily designed for bounding-box or polygon-level annotations and do not natively support efficient image-level multi-label annotation.

To facilitate fast and efficient multi-label annotation, we therefore developed a lightweight annotation tool, as illustrated in Figure 10. Each mango image is directly assigned a binary label vector indicating the presence or absence of each defect category, enabling rapid and consistent multi-label annotation without the need for region-level labeling.
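The binary label vector can be illustrated with a short sketch. The class order D1 to D5 follows the defect list in Section 2.1; the function names and the box-annotation format (a list of dictionaries with a `cls` key) are hypothetical and only show how detection-style annotations could be collapsed into image-level labels.

```python
DEFECT_CLASSES = ("D1", "D2", "D3", "D4", "D5")

def encode_labels(present):
    """Map the defect classes present in an image to a 5-element 0/1 vector."""
    present = set(present)
    unknown = present - set(DEFECT_CLASSES)
    if unknown:
        raise ValueError(f"unknown defect classes: {sorted(unknown)}")
    return [int(name in present) for name in DEFECT_CLASSES]

def labels_from_boxes(boxes):
    """Collapse box annotations (hypothetical format) to image-level labels.

    Box coordinates are ignored; only the set of classes matters.
    """
    return encode_labels(box["cls"] for box in boxes)
```

For example, an image annotated with two anthracnose boxes and one black spot box maps to the vector [0, 0, 1, 0, 1], and a defect-free image maps to [0, 0, 0, 0, 0].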

Real-world datasets often exhibit highly imbalanced defect distributions, with minor defect categories occurring infrequently. Such imbalance can adversely affect model learning. To address this challenge, we adopt the Copy and Paste image synthesis technique proposed by Ghiasi et al. [19] to artificially augment the number of samples in scarce defect categories. Specifically, we manually extract D2 defect regions from the original images to create masks, which are then randomly scaled, rotated, and blended onto clean mango surfaces. Synthesized images that exhibit visually implausible artifacts are manually filtered out, including cases where the pasted defect extends beyond the mango region, appears at an unrealistic scale (excessively large or small), or violates natural surface appearance. Through this process, the D2 category is expanded from 177 original samples to 672, significantly improving dataset diversity, as illustrated in Figure 11.
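The blending step of this copy-paste procedure can be sketched as below. This is a simplified illustration, not the authors' code: random scaling and rotation are omitted, the function name and arguments are hypothetical, and the optional `fruit_mask` check only automates one of the filtering criteria mentioned above (a defect extending beyond the mango region), which the authors handle manually.

```python
import numpy as np

def paste_defect(target, patch, mask, top, left, fruit_mask=None):
    """Blend a defect patch onto a clean mango image.

    target     : (H, W, 3) uint8 image of a clean mango
    patch      : (h, w, 3) uint8 crop containing the defect
    mask       : (h, w) float array in [0, 1]; 1 marks defect pixels
    top, left  : paste position of the patch's top-left corner
    fruit_mask : optional (H, W) boolean mask of the mango region
    Returns the synthesized image, or None if the placement is rejected.
    """
    h, w = mask.shape
    if top < 0 or left < 0 or top + h > target.shape[0] or left + w > target.shape[1]:
        return None  # patch would fall outside the image
    if fruit_mask is not None:
        region = np.asarray(fruit_mask, dtype=bool)[top:top + h, left:left + w]
        if np.any((mask > 0) & ~region):
            return None  # defect would extend beyond the mango
    out = target.astype(np.float64)
    alpha = mask[..., None]
    roi = out[top:top + h, left:left + w]
    out[top:top + h, left:left + w] = alpha * patch + (1.0 - alpha) * roi
    return np.rint(out).astype(np.uint8)
```

A soft-edged mask (values between 0 and 1 near the boundary) gives a smoother transition between the pasted defect and the surrounding skin than a hard binary mask.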

2.5. End-to-End Deployment Logic

In a practical packhouse deployment, mango inspection follows a sequential inspection strategy commonly adopted in industrial mango sorting systems [24], where surface defect screening and quality grading are jointly considered. The five-category surface defect classification task is performed as the first screening stage to separate mangoes into pass and fail groups.

Mangoes that fail the defect screening stage are removed from the premium processing line. In addition to disposal, the distribution of defect categories is recorded and reported to the cultivation department for further analysis of potential causes. Mangoes that pass the defect screening are subsequently evaluated by the quality grading pipeline, which produces three-level grade predictions. Mangoes classified as Grade C are designated for low-price markets, while those predicted as Grade A or Grade B are labeled as “Premium” and “Standard”, respectively, for high-value sales.

Through this sequential decision strategy, the proposed framework produces a unified end-to-end inspection outcome with three system-level decisions: Reject, Downgrade, and Accept. This deployment logic reflects real-world industrial inspection requirements and enables simultaneous defect filtering and quality grading within a single operational workflow.
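The sequential decision flow can be summarized in a small routing function. This is a sketch under stated assumptions: the text does not define the pass/fail criterion, so any predicted defect is treated as a failure here, and the mapping of Grade C to the Downgrade decision is inferred from the description above rather than stated explicitly. The function and key names are hypothetical.

```python
DEFECT_CLASSES = ("D1", "D2", "D3", "D4", "D5")

def route_mango(defect_vector, grade):
    """Combine defect screening and grading into one system-level decision.

    defect_vector : five 0/1 predictions in the order D1..D5
    grade         : predicted grade 'A', 'B', or 'C' (used only if the
                    mango passes defect screening)
    """
    found = [name for name, flag in zip(DEFECT_CLASSES, defect_vector) if flag]
    if found:
        # Assumption: any detected defect fails the screening stage.
        # The defect list is kept for reporting to the cultivation department.
        return {"decision": "Reject", "defects": found}
    if grade == "C":
        return {"decision": "Downgrade", "label": "Low-price"}
    if grade == "A":
        return {"decision": "Accept", "label": "Premium"}
    if grade == "B":
        return {"decision": "Accept", "label": "Standard"}
    raise ValueError(f"unexpected grade: {grade!r}")
```

Because grading is only consulted after a mango passes screening, the grading model does not need to run on rejected fruit in a deployment that evaluates the two stages lazily.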

2.6. Training Settings

All experiments in this study were conducted on a custom-built workstation equipped with an AMD Ryzen 5 CPU, 16 GB of RAM, and running the Windows 10 operating system. The deep learning models were implemented using PyTorch [25] version 2.1.0, and all model training was performed on an NVIDIA RTX 3060 GPU.

2.6.1. Training Settings for Quality Grading

For the mango quality grading experiment, a ResNet-based architecture was adopted for both spatial-domain and frequency-domain inputs, sharing the same training configuration. Data augmentation was applied during training to enhance model generalization, including random affine transformations with rotation up to 20°, translation up to 20%, scaling in the range of 0.8–1.2, shear of 0.2, and random horizontal flipping with a probability of 0.5. The batch size was set to 32. The final fully connected layer of the backbone network was replaced by a custom classifier composed of two hidden layers with 512 and 64 neurons, respectively. Dropout with a rate of 0.5 was applied after each fully connected layer to mitigate overfitting, followed by batch normalization and ReLU activation. A softmax activation was used at the output layer to produce normalized prediction scores. The model was optimized using the Adam optimizer with an initial learning rate of 0.001 and a weight decay of . The Cross-Entropy loss function was employed for training. A step-based learning rate scheduler (StepLR) was applied, reducing the learning rate by a factor of 0.5 every three epochs. Each model was trained for a maximum of 50 epochs. In practice, the best validation performance was consistently achieved between 14 and 20 epochs, after which performance saturated or slightly degraded.
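To make the learning rate schedule concrete, the following sketch reproduces the step decay arithmetic in plain Python, using the reported initial rate of 0.001 and a halving every three epochs. Epochs are assumed to be counted from zero, as in PyTorch's StepLR; the function name is hypothetical.

```python
def step_lr(epoch, base_lr=1e-3, gamma=0.5, step_size=3):
    """Learning rate in effect during a given zero-indexed epoch."""
    return base_lr * gamma ** (epoch // step_size)

if __name__ == "__main__":
    for epoch in (0, 3, 6, 14, 20):
        print(f"epoch {epoch:2d}: lr = {step_lr(epoch):.3e}")
```

Under this schedule the rate is 6.25e-5 at epoch 14 (four halvings) and about 1.56e-5 at epoch 20 (six halvings), so the updates in the later epochs are already small.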

2.6.2. Training Settings for Defect Classification

For the mango defect classification experiment, the same ResNet backbone was employed, while the training configuration was adapted to the multi-label nature of defect recognition. During training, only random horizontal flipping with a probability of 0.5 was applied for data augmentation. Rotation, scaling, and affine transformations were intentionally excluded, as preliminary experiments showed that such augmentations consistently degraded classification accuracy. This performance drop is likely caused by partial cropping or distortion of small defect regions, which are critical for accurate defect identification. The batch size was fixed at 32 for all experiments. The network was trained using the Binary Cross-Entropy loss with logits (BCEWithLogitsLoss), which integrates a sigmoid activation internally to improve numerical stability. Optimization was performed using the Adam optimizer with an initial learning rate of 0.001. A StepLR scheduler was adopted to decay the learning rate by a factor of 0.5 every three epochs. Training was conducted for up to 50 epochs, with optimal validation performance typically observed between 15 and 25 epochs.
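The numerical stability benefit of combining the sigmoid with the binary cross-entropy can be shown with a short plain-Python sketch. It uses a standard stable rearrangement of the loss and averages over the five defect outputs. It illustrates the idea and is not PyTorch's internal code; the function names are hypothetical.

```python
import math

def bce_with_logits(logit, target):
    """Binary cross-entropy on a raw logit, without computing sigmoid explicitly.

    Equivalent to -[t * log(s) + (1 - t) * log(1 - s)] with s = sigmoid(logit),
    but rearranged so that exp() never receives a large positive argument.
    """
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))

def multilabel_loss(logits, targets):
    """Mean per-class loss for one image with independent defect labels."""
    losses = [bce_with_logits(x, t) for x, t in zip(logits, targets)]
    return sum(losses) / len(losses)
```

A naive implementation that first computes the sigmoid and then takes its logarithm fails for a confidently wrong prediction such as a logit of -1000 with target 1, because the sigmoid underflows to zero. The rearranged form returns the finite value 1000.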

4. Discussion

In the model selection experiments for the mango quality grading task, the superior performance of ResNet-34 indicates that a moderate network depth can achieve better generalization capability without overfitting the training set. Although MobileNetV2 exhibits slightly lower recognition performance than ResNet-34, its computational cost is significantly lower (0.3 GFLOPs compared to 3.6 GFLOPs for ResNet-34). This highlights the potential of MobileNetV2 for deployment on resource-constrained platforms. Subsequent spatial-domain experiments further demonstrate that higher input resolutions are particularly beneficial for mango quality grading, emphasizing the importance of preserving fine-grained surface details. The effectiveness of the ACE algorithm also suggests that contrast enhancement facilitates the extraction of discriminative features. In contrast, the frequency-domain experiments show relatively lower sensitivity to input resolution. This may be attributed to the fact that frequency-domain representations rely less on precise spatial locations and instead focus more on global texture characteristics and spectral distributions. Furthermore, deeper network architectures in the frequency-domain branch do not yield additional performance gains, implying that excessive network depth may introduce redundant features or hinder optimization when processing transformed inputs. The strong performance of wavelet-based CNNs further supports the advantage of multi-scale decomposition in capturing feature information at different scales [22].

The decision-level fusion strategy (late fusion) effectively exploits the complementary nature of spatial-domain and frequency-domain information. By selecting either the spatial-domain or the frequency-domain prediction based on the margin between the top two class probabilities, the proposed system leverages the strengths of both domains, resulting in improved robustness and overall performance. In future work, we plan to further investigate feature-level fusion (early fusion) by integrating the spatial-domain and frequency-domain CNNs more tightly.

In the defect classification experiments, the results highlight the challenges posed by extreme class imbalance. Conventional data augmentation methods based on generative adversarial networks (GANs) perform poorly when training data are severely limited, whereas the copy-paste strategy effectively enriches samples of minority classes. However, it should be noted that the manual filtering of unrealistic synthesized defect images may introduce a certain degree of subjectivity. For example, determining what constitutes an unrealistic defect size can be ambiguous. In addition, the scalability of this manual filtering process, as well as its applicability to other fruit varieties or defect types, remains an open issue and warrants further investigation.

In addition, ablation studies indicate that introducing a self-attention module into the layer4 stage of ResNet-34 improves classification accuracy without incurring excessive computational overhead. As demonstrated in Table 8, reformulating the original mango defect detection task as a multi-label defect classification problem not only alleviates the substantial annotation burden associated with pixel-level defect masks but also leads to improved classification performance.

In multi-label classification, each class is associated with an independent decision threshold and produces probability outputs in the range of 0–1, rather than enforcing a normalized probability distribution across classes as in multi-class classification. Consequently, threshold optimization for individual classes in multi-label classification [32] represents an important research direction. In future work, we plan to further investigate adaptive threshold optimization strategies to enhance multi-label classification performance.
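As a simple illustration of per-class thresholding, the sketch below applies an independent threshold to each class probability and selects a threshold for one class by a grid search that maximizes F1 on held-out data. This is one basic baseline, shown only to clarify the idea. It is not the adaptive strategy the authors plan to investigate, and the function names and candidate grid are assumptions.

```python
def apply_thresholds(probs, thresholds):
    """Turn per-class probabilities into 0/1 labels with per-class thresholds."""
    return [int(p >= t) for p, t in zip(probs, thresholds)]

def f1_at_threshold(probs, targets, threshold):
    """F1 score of a single class at a given threshold."""
    preds = [int(p >= threshold) for p in probs]
    tp = sum(1 for p, y in zip(preds, targets) if p == 1 and y == 1)
    fp = sum(1 for p, y in zip(preds, targets) if p == 1 and y == 0)
    fn = sum(1 for p, y in zip(preds, targets) if p == 0 and y == 1)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def best_threshold(probs, targets, candidates=None):
    """Grid-search the threshold that maximizes F1 for one class.

    Ties are resolved in favor of the smallest candidate.
    """
    if candidates is None:
        candidates = [i / 20 for i in range(1, 20)]  # 0.05, 0.10, ..., 0.95
    return max(candidates, key=lambda t: f1_at_threshold(probs, targets, t))
```

Thresholds chosen this way should be selected on validation data and then fixed before evaluation on an independent test set, since tuning them on the test set would bias the reported scores.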

5. Conclusions

This study proposed an integrated deep learning framework that simultaneously addresses mango quality grading and surface defect detection. For defect classification, five types of surface imperfections were reformulated into a multi-label classification problem, and a copy-paste–based data augmentation strategy was introduced to alleviate class imbalance, particularly for rare defect categories. Although generative approaches such as Fast-GAN were evaluated, they proved ineffective for extremely scarce classes, whereas the copy-paste augmentation consistently improved performance.

For quality grading, decision-level fusion of the output probability scores from spatial-domain and frequency-domain CNNs, together with adaptive contrast enhancement, effectively captured fine surface textures essential for grade discrimination. Experimental results demonstrate that the proposed system achieves strong performance, with a Micro-F1 score of 84% for defect classification and a weighted average recall (WAR) of 87.2% for quality grading.

Despite these advantages, several limitations remain. The proposed framework was evaluated on a single mango dataset, and its generalization capability to other mango varieties or fruit types requires further validation. In addition, the effectiveness of adaptive contrast enhancement for fruits with less distinctive surface textures, such as apples, remains to be investigated.

At the current stage, the proposed system demonstrates practical applicability for industrial production environments, with the potential to reduce reliance on manual visual inspection and alleviate operator fatigue in conventional mango sorting lines. Looking forward, integrating ripeness estimation and other maturity-related indicators into the dual-pipeline framework represents a promising research direction. Such extensions would further enhance the completeness of automated mango evaluation and contribute to more intelligent, efficient, and scalable smart agriculture systems.