1. Introduction

Image thresholding is a widely applied low-level image processing method owing to its simplicity and effectiveness [1,2]. Its applications span various fields, including but not limited to industrial non-destructive testing [3], remote sensing target detection [4], infrared pedestrian recognition [5], and biomedical image analysis [6,7]. Image thresholding involves comparing each pixel’s gray level with a selected threshold, thereby dividing the image into target and background regions [8]. Threshold selection is crucial for segmentation accuracy. Therefore, one core objective of image thresholding is to robustly determine a reasonable threshold.

The gray-level histograms of images typically exhibit non-modal, unimodal, bimodal, or multimodal patterns. Automatically selecting reasonable thresholds for these four histogram modalities within a unified framework is a challenging issue. The core difficulty lies in deriving a single threshold selection criterion directly from such varying modalities.

Among various thresholding methods, some representative ones primarily include histogram-based methods, entropy-based methods, and methods based on the numerical characteristics of random variables. Histogram-based methods mainly select thresholds by utilizing shape features or statistical information of the gray-level histogram, but they often fail in images with imbalanced or fluctuating histograms [9,10,11,12,13]. Entropy-based methods, such as those using Shannon, Tsallis, Masi, or Kaniadakis entropy, maximize information entropy to select thresholds [14,15,16,17,18,19,20,21]. However, these methods often involve nonextensive parameters that require manual tuning, limiting their adaptability to complex images. Methods based on numerical characteristics, such as the representative Otsu method [22], utilize variance or derivatives to distinguish between classes. While effective for bimodal histograms, their performance degrades significantly for non-modal, unimodal, or multimodal patterns, especially when the target size is imbalanced [23,24,25]. In summary, most existing methods tend to focus on handling images with specific histogram patterns and lack a unified framework for diverse modalities. A detailed review of these related works is presented in Section 2.

To automatically threshold images with non-modal, unimodal, bimodal, or multimodal gray-level histograms within a unified framework, a homologous isomeric similarity thresholding (HIST) method under a unified transformation toward unimodal distribution is proposed. The HIST method first performs a unified transformation on an input image to obtain an edge image. The gray-level histogram of the edge image exhibits a special right-skewed unimodal pattern, with its mode located at the leftmost end of the gray-level histogram. Subsequently, the HIST method extracts contours from binary images obtained by different thresholds to generate corresponding binary contour images. Finally, the appropriate threshold is determined by searching for the binary contour image with the maximum homologous isomeric similarity to the edge image.

The remainder of this manuscript is structured to systematically develop and validate this HIST framework.

Section 2 reviews the related work on image thresholding.

Section 3 introduces the unified transformation method to obtain the gray-level edge images with the right-skewed unimodal patterns. This section is theoretically pivotal as it establishes the prerequisite for a unified criterion by converting diverse histogram patterns into a standardized, right-skewed unimodal distribution.

Section 4 describes a method for extracting binary contour images.

Section 5 analyzes the homologous isomeric similarity and its quantification using normalized Renyi mutual information. This mathematical formulation serves as the robust objective function for the threshold selection problem.

Section 6 outlines the overall framework of the proposed HIST method and provides the corresponding algorithm steps.

Section 7 provides a thorough experimental evaluation and comparative analysis on both synthetic and real-world datasets, validating the adaptability and accuracy of the proposed method. Finally, Section 8 concludes the study by summarizing the contributions and outlining future research directions.

2. Related Work

Song et al. [9] derived the gray-level gradient histogram by calculating the average gradient of an input image, and then determined the optimal threshold by analyzing the shape of this histogram. The method is effective for unimodal histograms but unsuitable for bimodal or multimodal histograms. Additionally, histogram fluctuations can impact threshold selection stability. Christy and Umamakeswari [10] proposed the “percentage split distribution” for image thresholding, efficiently dividing images into groups by pixel distribution percentages. Although suitable for real-time applications, the method is prone to errors in images with imbalanced gray-level histograms, potentially leading to empty segmentation regions. Farshi and Demirci [11] proposed a thresholding method that treats the histogram curve as the objective function. This method utilizes multimodal particle swarm optimization to automatically locate the peaks and valleys of the histogram, and selects the valley between two peaks as the threshold. While offering automation and user independence, the method uses a Gaussian mask with a fixed variance of 3 for histogram smoothing, potentially limiting its adaptability. Manda and Kim [12] introduced a rapid thresholding technique for infrared images using histogram approximation and circuit theory. The approach involves modeling the gray-level histogram as the transient response of a first-order linear circuit, with threshold determination based on circuit theory operators. The method is versatile for various infrared imaging scenarios but is histogram shape-dependent and best suited for images with long-tailed or heavy-tailed distributions. Elen and Dönmez [13] proposed a method that determines alpha and beta regions using the mean and standard deviation of the gray-level histogram, and the final threshold is obtained by calculating the average gray level of these regions. The method is computationally straightforward, making it appropriate for real-time systems. However, it is mainly suited for images with long-tailed or heavy-tailed distributions and may not perform well on images with imbalanced histograms.

The entropy-based methods primarily utilize different entropy models to design the objective functions for threshold selection. Since Kapur et al. [14] first proposed the maximum Shannon entropy method, the idea of selecting thresholds by maximizing the sum of target and background entropies has garnered widespread attention. Portes de Albuquerque et al. [15] introduced Tsallis entropy to image thresholding to address nonextensive information in images. The parameter in Tsallis entropy allows for flexible adjustment within image categories, but selecting the appropriate parameter requires experience and experimentation. Lin and Ou [16] further employed the nonextensive parameter in Tsallis entropy to capture local long-range correlations. However, without manual ground-truth labeling, automatically selecting the appropriate nonextensive parameter is challenging due to the lack of a quantitative relationship between the parameter value and long-range correlations. Additionally, the method is primarily targeted at images with specific long-range correlations, potentially limiting its generalization abilities. Nie et al. [17] introduced a thresholding method using Masi entropy, combining the nonextensivity of Tsallis entropy with the additivity of Renyi entropy. This allows the method to adapt to a broader range of image types. Nonetheless, its thresholding results are sensitive to the Masi entropy parameter, and an effective automatic method for determining the parameter has yet to be developed.

Within the framework of the maximum entropy, Sparavigna [18] employed Kaniadakis entropy to create a threshold selection objective function; however, choosing an appropriate Kaniadakis entropy index requires additional knowledge and experience. Building on Sparavigna’s thresholding technique [18], Lei and Fan [19] adaptively select the parameter in Kaniadakis entropy via particle swarm optimization. However, particle swarm optimization will increase computational complexity. Furthermore, the method is more suitable for images with long-tailed gray-level histograms. Ferreira Junior et al. [20] proposed an image thresholding method that integrates Tsallis and Masi nonextensive entropies to leverage their ability to represent long-range and short-range correlations. By using two nonextensive entropy parameters, the method effectively captures gray-level long-range correlations, improving segmentation for images with local long-range correlations. The added flexibility of two entropy parameters, however, complicates the parameterization process. Deng et al. [21] combined nonextensive Tsallis entropy with within-class variance in gray-level distribution, enhancing small target extraction by introducing the nonextensive parameter to model long-range pixel correlations. The automatic estimation of the parameter adds adaptability to the algorithm. However, the estimated parameter may not accurately represent long-range correlations, especially in complex and varied images. Moreover, the method’s performance relies on image long-range correlations, potentially limiting its consistent performance across different types of images.

Thresholding methods based on the numerical characteristics of random variables primarily utilize first-order, second-order, or higher-order statistics to design the objective functions for threshold selection. The Otsu method, which is effective for bimodal histograms, is a representative example. However, target size significantly impacts the Otsu method, leading to over-segmentation for target sizes of less than 80% and under-segmentation for target sizes of over 80% [22]. Many enhanced methods address the limitations of the Otsu method, with the weighted Otsu improvements being representative. These methods incorporate weight information into Otsu’s objective function to approximate the optimal threshold more closely. For instance, Xing et al. [23] proposed a valley-emphasis weighting method. This method incorporates a second-order derivative as a valley metric into Otsu’s objective function, thereby moving the threshold closer to the histogram’s valley. However, the second-order derivative’s sensitivity to histogram noise necessitates a smooth and well-shaped gray-level histogram. Kang et al. [24] introduced a parameter to modify the inter-class variance calculation in the Otsu method, thereby emphasizing target weight and refining the threshold selection via an adaptive iterative algorithm. The parameter computation relies on the histogram probability gradient, which, in cases with significant noise, may yield larger values, resulting in a smaller parameter value. This can diminish the effect of the parameter, potentially reducing the objective function proposed by Kang et al. to the standard Otsu form. Singh et al. [25] utilized a weight function based on Kapur’s entropy to modify Otsu’s function. The fusion of Otsu’s and Kapur’s methods offers a more comprehensive evaluation of image characteristics, enhancing threshold selection. Although the method reduces user intervention in determining thresholds, it still requires parameter tuning for metaheuristic algorithms, which can affect the performance and may require expertise.
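
For readers unfamiliar with the baseline that the weighted variants above modify, the following is a minimal sketch of the classical Otsu criterion (maximizing the between-class variance of the gray-level histogram). It assumes an 8-bit input image; the function name `otsu_threshold` is illustrative and not taken from any of the cited works, and none of the weighted extensions are implemented here.

```python
import numpy as np

def otsu_threshold(img_u8):
    """Return the gray level t that maximizes the between-class variance,
    with classes {0..t} and {t+1..255} (8-bit input assumed)."""
    hist = np.bincount(np.asarray(img_u8, dtype=np.uint8).ravel(),
                       minlength=256).astype(np.float64)
    p = hist / hist.sum()                 # gray-level probabilities
    levels = np.arange(256)
    w0 = np.cumsum(p)                     # probability of class {0..t}
    w1 = 1.0 - w0                         # probability of class {t+1..255}
    mu = np.cumsum(p * levels)            # cumulative first moment up to t
    mu_total = mu[-1]                     # global mean gray level
    sigma_b = np.zeros(256)
    valid = (w0 > 0) & (w1 > 0)           # skip thresholds with an empty class
    sigma_b[valid] = (mu_total * w0[valid] - mu[valid]) ** 2 / (w0[valid] * w1[valid])
    return int(np.argmax(sigma_b))
```

On a clean two-level image every gray level between the two values yields the same between-class variance, and `np.argmax` returns the first of them.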

3. Unified Transformation Toward Unimodal Distribution

The gray-level histograms of images may exhibit different distribution patterns: non-modal, unimodal, bimodal, or multimodal. A unified transformation method is proposed to convert the gray-level histograms of different patterns into a unified, unimodal, right-skewed gray-level histogram. The unified transformation is applied to an input image to generate a gray-level edge image with this desired distribution (see Figure 1).

For an input image $I$, the unified transformation first applies bilateral filtering [26] to the image $I$:

$$I_{bf}(p) = \frac{1}{W_p} \sum_{q \in \Omega_p} K(p, q)\, I(q), \qquad W_p = \sum_{q \in \Omega_p} K(p, q), \qquad K(p, q) = G_{\sigma_s}\left(\lVert p - q \rVert\right) G_{\sigma_r}\left(\lvert I(p) - I(q) \rvert\right),$$

where $I_{bf}$ denotes the image obtained by bilateral filtering, $W_p$ is a normalization factor, and $K$ represents the filtering kernel. $p$ and $q$ denote the current pixel and the pixel within the $\Omega_p$-neighborhood, respectively, with their gray levels denoted by $I(p)$ and $I(q)$. $\lVert p - q \rVert$ represents the spatial distance between pixels $p$ and $q$, while $\lvert I(p) - I(q) \rvert$ denotes the difference in gray levels between pixels $p$ and $q$. $(x_p, y_p)$ indicates the coordinates of the current pixel $p$, and $(x_q, y_q)$ denotes the coordinates of pixel $q$ within the $\Omega_p$-neighborhood. $G_{\sigma_s}$ and $G_{\sigma_r}$ denote the spatial Gaussian weighting and gray-level Gaussian weighting, respectively. $\sigma_s$ and $\sigma_r$ represent the spatial domain parameter and the gray-level domain parameter, respectively, which adjust the influence of pixel distance and pixel gray-level difference on the weights. The size of the filtering kernel is typically odd, so the paper sets the filtering kernel radius to 5, resulting in a kernel size of $11 \times 11$. Given that about 95% of the mass of a Gaussian function is concentrated within $[-2\sigma, 2\sigma]$, the spatial domain parameter $\sigma_s$ is determined from the kernel radius using the ceiling function $\lceil \cdot \rceil$. The gray-level domain parameter $\sigma_r$ should be neither too small nor too large. When $\sigma_r$ is too small, the smoothing effect of bilateral filtering on the image is insufficient, failing to effectively suppress noise and other interferences. Conversely, when $\sigma_r$ is too large, the bilateral filtering gradually degrades into Gaussian filtering, thereby failing to preserve edge details well. In this paper, $\sigma_r$ is set to 0.3, which enables the bilateral filtering to suppress noise while preserving edge details effectively.
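
The filtering step described above can be sketched as a brute-force bilateral filter in NumPy. This is only an illustration under stated assumptions: the image is taken to be a floating-point array scaled to [0, 1] (which is what makes a gray-level parameter of 0.3 meaningful), the kernel radius of 5 and the gray-level parameter of 0.3 follow the text, and the spatial parameter default `sigma_s=3.0` is a placeholder, since the exact expression used by the authors is not recoverable here. The function name and the reflective border handling are likewise assumptions.

```python
import numpy as np

def bilateral_filter(img, radius=5, sigma_s=3.0, sigma_r=0.3):
    """Brute-force bilateral filter for a 2-D float image scaled to [0, 1].

    radius=5 gives the 11 x 11 kernel mentioned in the text; sigma_s is a
    placeholder value (assumption), sigma_r=0.3 follows the text.
    """
    img = np.asarray(img, dtype=np.float64)
    h, w = img.shape
    padded = np.pad(img, radius, mode="reflect")   # border handling: assumption
    num = np.zeros_like(img)                       # weighted sum of neighbours
    den = np.zeros_like(img)                       # normalization factor
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            # Neighbour image shifted by (dy, dx)
            shifted = padded[radius + dy: radius + dy + h,
                             radius + dx: radius + dx + w]
            w_s = np.exp(-(dx * dx + dy * dy) / (2.0 * sigma_s ** 2))     # spatial weight
            w_r = np.exp(-((shifted - img) ** 2) / (2.0 * sigma_r ** 2))  # gray-level weight
            weight = w_s * w_r
            num += weight * shifted
            den += weight
    return num / den   # den >= 1 because the centre pixel always has weight 1
```

With a small gray-level parameter, pixels on the far side of a strong edge receive almost no weight, which is why a step edge survives the smoothing nearly unchanged.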

The unified transformation method utilizes a Sobel operator to compute the numerical approximations of the first-order partial derivatives of the filtered image in both horizontal and vertical directions, thereby calculating the gradient magnitude image $M$ and the gradient orientation $\theta$ of the filtered image. To refine edge details and remove redundant points, non-maximum suppression [27] is applied to the gradient magnitude image $M$ along the gradient direction $\theta$ to obtain image $I_{nms}$. After the gray levels of the image $I_{nms}$ are normalized, the gray-level histograms of the images $I_{nms}$ corresponding to the four synthetic images in Figure 1 are shown in Figure 2.
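
As an illustration of the gradient and non-maximum suppression steps, the sketch below uses the common 3 × 3 Sobel kernels and a four-direction quantization of the gradient orientation. Both choices are assumptions made for the example (the text does not state the kernel size or the interpolation scheme), and the function names are hypothetical.

```python
import numpy as np

def sobel_gradient(img):
    """Gradient magnitude and orientation with 3 x 3 Sobel kernels (assumed size)."""
    img = np.asarray(img, dtype=np.float64)
    p = np.pad(img, 1, mode="edge")
    # Horizontal derivative: right column minus left column, weights 1-2-1
    gx = (p[:-2, 2:] + 2 * p[1:-1, 2:] + p[2:, 2:]) \
       - (p[:-2, :-2] + 2 * p[1:-1, :-2] + p[2:, :-2])
    # Vertical derivative: bottom row minus top row, weights 1-2-1
    gy = (p[2:, :-2] + 2 * p[2:, 1:-1] + p[2:, 2:]) \
       - (p[:-2, :-2] + 2 * p[:-2, 1:-1] + p[:-2, 2:])
    return np.hypot(gx, gy), np.arctan2(gy, gx)

def non_maximum_suppression(mag, theta):
    """Keep a pixel only if its magnitude is not smaller than both neighbours
    along the (quantized) gradient direction."""
    h, w = mag.shape
    out = np.zeros_like(mag)
    angle = (np.rad2deg(theta) + 180.0) % 180.0          # fold into [0, 180)
    sector = np.round(angle / 45.0).astype(int) % 4      # 0, 45, 90, 135 degrees
    p = np.pad(mag, 1, mode="constant")
    # (row offset, column offset) of the neighbour "ahead" for each sector
    offsets = {0: (0, 1), 1: (1, 1), 2: (1, 0), 3: (1, -1)}
    for s, (dy, dx) in offsets.items():
        ahead = p[1 + dy: 1 + dy + h, 1 + dx: 1 + dx + w]
        behind = p[1 - dy: 1 - dy + h, 1 - dx: 1 - dx + w]
        keep = (sector == s) & (mag >= ahead) & (mag >= behind)
        out[keep] = mag[keep]
    return out
```

For an ideal vertical step edge, the Sobel response is nonzero only in the two columns adjacent to the step, and the suppression leaves everything else at zero.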

From Figure 2a–c, it can be observed that there is a minor peak to the right of the leftmost main peak in the gray-level histogram of the image $I_{nms}$, which corresponds to some spurious edges in the image $I_{nms}$. To obtain a gray-level edge image with a unified right-skewed unimodal distribution, the unified transformation method utilizes the maximum entropy thresholding method [14] to calculate a threshold $t_e$ for the image $I_{nms}$, and then sets the gray levels of pixels in the image $I_{nms}$ with values below the threshold $t_e$ to 0. After the above processing, the final gray-level edge image preserves robust edge features while containing little noise, and exhibits a unified right-skewed unimodal distribution (see Figure 1). Hereinafter, the symbol $E$ is employed to denote the gray-level edge image obtained by the unified transformation method.
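
The last step of the transformation (maximum entropy thresholding followed by zeroing the weak responses) might look as follows. The sketch assumes that the suppressed gradient image has already been rescaled to 8-bit gray levels, which is an assumption made for the example; `kapur_threshold` and `suppress_weak_edges` are illustrative names, and the entropy criterion is the classical maximum Shannon entropy rule of Kapur et al. [14].

```python
import numpy as np

def kapur_threshold(img_u8):
    """Maximum Shannon entropy threshold: maximize the sum of the entropies
    of the classes {0..t} and {t+1..255} (8-bit input assumed)."""
    hist = np.bincount(np.asarray(img_u8, dtype=np.uint8).ravel(),
                       minlength=256).astype(np.float64)
    p = hist / hist.sum()
    best_t, best_h = 0, -np.inf
    for t in range(255):
        low, high = p[:t + 1], p[t + 1:]
        w0, w1 = low.sum(), high.sum()
        if w0 <= 0 or w1 <= 0:
            continue                      # one class would be empty
        p0 = low[low > 0] / w0            # class-conditional distributions
        p1 = high[high > 0] / w1
        h = -(p0 * np.log(p0)).sum() - (p1 * np.log(p1)).sum()
        if h > best_h:
            best_t, best_h = t, h
    return best_t

def suppress_weak_edges(img_u8):
    """Set all gray levels below the maximum entropy threshold to 0."""
    img_u8 = np.asarray(img_u8, dtype=np.uint8)
    t = kapur_threshold(img_u8)
    out = img_u8.copy()
    out[out < t] = 0
    return out, t
```

Only values strictly below the threshold are zeroed, matching the wording "values below the threshold" in the text.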

4. Extraction of Binary Contour Images

Let $I$ denote an 8-bit gray-level image with gray levels in the interval $[0, 255]$. Given a gray level $t$ in the interval $[0, 255]$, we can obtain a corresponding binary image $B_t$ by comparing the gray level of each pixel of the image $I$ with $t$.

For a binary image $B_t$, let $p$ denote a pixel in the image $B_t$. The 4-neighborhood pixels of the pixel $p$ can be denoted as $N_4(p)$. The interior pixel region of the binary image $B_t$, defined via these 4-neighborhoods, is denoted as $R(B_t)$.

Performing a bitwise inversion on the binary image $B_t$, we can obtain a corresponding binary image $\bar{B}_t$. The 4-neighborhood pixels of a pixel of $\bar{B}_t$ are denoted in the same way. The interior pixel region of the binary image $\bar{B}_t$ is denoted as $R(\bar{B}_t)$.

Algorithm 1 is employed to extract the contour image $C_t$ from the image $B_t$: For the binary image $B_t$, the corresponding inner contour image $C_t^{in}$ can be obtained by removing the pixels in its interior pixel region (i.e., setting the pixel values in the $R(B_t)$ of the binary image $B_t$ to 0). Similarly, the outer contour image $C_t^{out}$ of the binary image $B_t$ can be obtained by removing the pixels in the interior pixel region $R(\bar{B}_t)$ of the binary image $\bar{B}_t$. Further, we can obtain the final binary contour image $C_t$ of the binary image $B_t$ by computing the union of the inner and outer contour images, as follows: $C_t = C_t^{in} \cup C_t^{out}$.

| Algorithm 1 | Extract the binary contour image |
| --- | --- |
| Input: | A binary image $B_t$. |
| Output: | A binary contour image $C_t$. |
| Step 1: | Obtain an inner contour image $C_t^{in}$ by setting the pixel values in the $R(B_t)$ of the binary image $B_t$ to 0. |
| Step 2: | Obtain an outer contour image $C_t^{out}$ by setting the pixel values in the $R(\bar{B}_t)$ of the binary image $\bar{B}_t$ to 0. |
| Step 3: | Obtain a binary contour image $C_t$ by computing the union of the inner and outer contour images, as follows: $C_t = C_t^{in} \cup C_t^{out}$. |

By applying the above binary contour extraction method to each gray level $t$ in the gray-level image $I$, we can obtain a set of binary contour images $\{C_t\}$. These binary contour images reflect the contour features of the target in the image $I$ to varying degrees.
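
The contour extraction of Algorithm 1 can be sketched with boolean NumPy arrays. Two points are assumptions made for this example rather than statements from the text: an interior pixel is taken to be a foreground pixel whose four 4-neighbors are all foreground, and pixels outside the image are treated as copies of the nearest border pixel, so that the image frame itself does not create contours. The function names are illustrative.

```python
import numpy as np

def interior(b):
    """Foreground pixels whose 4-neighbours are all foreground (assumed definition)."""
    b = np.asarray(b, dtype=bool)
    p = np.pad(b, 1, mode="edge")         # border handling: assumption
    return b & p[:-2, 1:-1] & p[2:, 1:-1] & p[1:-1, :-2] & p[1:-1, 2:]

def extract_contour(b):
    """Union of the inner contour of b and the inner contour of its inverse."""
    b = np.asarray(b, dtype=bool)
    inner = b & ~interior(b)              # Step 1: remove interior of the binary image
    inv = ~b                              # bitwise inversion
    outer = inv & ~interior(inv)          # Step 2: remove interior of the inverted image
    return inner | outer                  # Step 3: union

def threshold_image(img_u8, t):
    """Binary image for gray level t; the strict '>' is an assumed convention."""
    return np.asarray(img_u8) > t
```

For a 3 × 3 block of foreground in a 5 × 5 image, the result contains the 8 ring pixels of the block plus the 12 background pixels that touch it along an edge, while the block center and the image corners are removed.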

5. Calculation of Homologous Isomeric Similarity

The gray-level edge image $E$ and the binary contour image $C_t$ are homologous, as they derive from the same original gray-level image $I$. The gray-level edge image $E$ and the binary contour image $C_t$ are isomeric: the former describes the target edge features of image $I$ in the discrete gray-level space, while the latter describes the target contour features of image $I$ in the discrete binary space (see Figure 3). Both target edges and target contours objectively describe the spatial location and shape of the target in an image, meaning that there is a specific similarity in terms of planar geometric structure between target edges and target contours. Thus, there is a certain similarity between the homologous and isomeric gray-level edge image $E$ and the binary contour image $C_t$. We adopt the terminology from biology and chemistry to designate this similarity a Homologous Isomeric Similarity (HIS).

The HIS between the gray-level edge image $E$ and the binary contour image $C_t$ varies with different thresholds $t$. For a threshold $t_1$ that is closer to the ideal threshold, the corresponding binary contour image $C_{t_1}$ more accurately represents the target contour features, resulting in a higher HIS. In contrast, for a threshold $t_2$ that is far from the ideal threshold, the corresponding binary contour image $C_{t_2}$ represents the target contour features less accurately, leading to a lower HIS. In other words, if there exists a binary contour image $C_{t^{*}}$ that exhibits the maximum HIS with the gray-level edge image $E$, then the corresponding gray level $t^{*}$ is likely to be a reasonable threshold.

Under the guiding principle of maximizing HIS, the HIST method converts the problem of selecting a reasonable threshold into finding the binary contour image $C_t$ that is most similar to the gray-level edge image $E$. The critical issue after this conversion is how to robustly measure the HIS.

Renyi mutual information can capture higher-order dependencies than Shannon mutual information, rendering it more robust for analyzing asymmetric or non-uniformly distributed data [28]. Since the discrete probability distributions of the gray-level edge image $E$ and the binary contour image $C_t$ are typically asymmetric and non-uniform, Renyi mutual information is a suitable similarity measure. Furthermore, as the normalized mutual information is more robust than the standard mutual information [29,30], this paper adopts the normalized form of Renyi mutual information [31] as the HIS measure, and proposes the following objective function for selecting the final threshold $t^{*}$:

$$t^{*} = \arg\max_{t \in T} \mathrm{NRMI}_{\alpha}(E, C_t) \tag{6}$$

Equation (6) defines the objective of the threshold selection problem. It states that the optimal threshold $t^{*}$ is the value within a feasible range $T$ that maximizes the similarity metric between the edge image $E$ and the binary contour image $C_t$. This transforms the visual task of matching contours into a numerical optimization problem. To quantify the similarity in Equation (6), we employ the normalized Renyi mutual information $\mathrm{NRMI}_{\alpha}$ of Equation (7), which is built from $H_{\alpha}(E)$ and $H_{\alpha}(C_t)$, the Renyi entropies of image $E$ and image $C_t$, respectively, and from $H_{\alpha}(E, C_t)$, the joint Renyi entropy of images $E$ and $C_t$. These entropies are defined as follows:

$$H_{\alpha}(E) = \frac{1}{1-\alpha} \log \sum_{i} p_i^{\alpha} \tag{8}$$

$$H_{\alpha}(C_t) = \frac{1}{1-\alpha} \log \sum_{j} q_j^{\alpha} \tag{9}$$

$$H_{\alpha}(E, C_t) = \frac{1}{1-\alpha} \log \sum_{i} \sum_{j} p_{ij}^{\alpha} \tag{10}$$

where $p_i$ denotes the probability of gray-level $i$ in the gray-level edge image $E$, and $q_j$ denotes the probability of pixel value $j$ in the binary contour image $C_t$. $p_{ij}$ represents the joint probability between the gray-level edge image $E$ and the binary contour image $C_t$.

The Renyi mutual information has a parameter $\alpha$. When $\alpha \to 1$, the Renyi entropy degenerates into Shannon entropy, and the normalized Renyi mutual information also degenerates into the normalized Shannon mutual information. Shannon mutual information treats each gray level proportionally to $p \log p$, so it is equally sensitive to all structural components of the histogram, including small asymmetries and long tails. When $\alpha > 1$, the Renyi entropy assigns higher weights to high-probability events and lower weights to low-probability events in the probability distribution. Because the gray-level distribution of the edge image contains sparse data points, utilizing Renyi mutual information with $\alpha > 1$ can reduce the contribution of these outliers to the HIS, thereby stabilizing the computation of the HIS. Further, when $\alpha = 2$, Equations (8)–(10) will involve only the squaring operation of the probability distribution, which facilitates the simplification of the HIS computation. Accordingly, this paper sets $\alpha = 2$.
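
To make the order-2 case concrete, the sketch below computes Renyi entropies of order 2 from the joint histogram of an 8-bit edge image and a binary contour image, and combines them into a normalized score. The normalization used here, the sum of the two marginal entropies divided by the joint entropy, is one common form of normalized mutual information and is an assumption of this example; it is not confirmed to be the exact expression of Equation (7). The function names and the 8-bit quantization of the edge image are also assumptions.

```python
import numpy as np

def renyi2_entropy(p):
    """Renyi entropy of order 2: -log(sum of squared probabilities)."""
    return -np.log(np.sum(np.asarray(p, dtype=np.float64) ** 2))

def normalized_renyi_mi(edge_u8, contour):
    """Normalized order-2 Renyi mutual information between an 8-bit edge image
    and a binary contour image, using (H(E) + H(C)) / H(E, C) (assumed form)."""
    e = np.asarray(edge_u8, dtype=np.uint8).ravel().astype(np.int64)
    c = np.asarray(contour, dtype=bool).ravel().astype(np.int64)
    # Joint histogram with 256 gray levels x 2 binary values
    joint = np.bincount(e * 2 + c, minlength=512).reshape(256, 2).astype(np.float64)
    joint /= joint.sum()
    h_e = renyi2_entropy(joint.sum(axis=1))    # marginal of the edge image
    h_c = renyi2_entropy(joint.sum(axis=0))    # marginal of the contour image
    h_ec = renyi2_entropy(joint)               # joint entropy
    return float((h_e + h_c) / h_ec) if h_ec > 0 else 0.0
```

Under this assumed normalization, two images that determine each other score 2, and two independent images with uniform marginals score 1, so larger values indicate stronger agreement between the edge image and the contour image.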

6. Algorithm Description of HIST Method

Algorithm 2 describes the key steps for selecting the final threshold in the HIST method, while Figure 3 visually illustrates these key steps.

| Algorithm 2 | HIST |
| --- | --- |
| Input: | A gray-level image $I$. |
| Output: | A threshold $t^{*}$ and a binary image $B_{t^{*}}$. |
| Step 1: | Set the initial values of variables $t^{*}$, $s$, and $s_{\max}$ to 0. |
| Step 2: | Extract the gray-level edge image $E$ from the image $I$ using the unified transformation method described in Section 3. |
| Step 3: | for each candidate gray level $t$ do |
| Step 4: | Threshold the gray-level image $I$ with the gray level $t$ to obtain a binary image $B_t$. Then, extract the binary contour image $C_t$ from the image $B_t$ using Algorithm 1 outlined in Section 4. |
| Step 5: | Calculate the normalized Renyi mutual information between the gray-level edge image $E$ and the binary contour image $C_t$ using Equation (7) and record the result in $s$. |
| Step 6: | if $s > s_{\max}$ then $s_{\max} = s$; $t^{*} = t$; |
| Step 7: | end if |
| Step 8: | end for |
| Step 9: | Threshold the gray-level image $I$ using the final threshold $t^{*}$, and output the thresholding result image $B_{t^{*}}$ and the threshold $t^{*}$. |

7. Experimental Results and Discussions

This section presents the experimental results and a discussion to validate the effectiveness and adaptability of the proposed HIST method. Comprehensive comparative experiments were conducted on both synthetic and real-world images against five state-of-the-art methods. To rigorously assess segmentation accuracy, Misclassification Error (ME) and Matthews Correlation Coefficient (MCC) were adopted as the primary evaluation metrics, while Jaccard, Dice, and Root-Mean-Square Error (RMSE) results are provided in the Supplementary Materials. The experimental section is organized as follows: Section 7.1 details the experimental environment and evaluation metrics to establish the testing framework; Section 7.2 analyzes the results using synthetic images under controlled conditions; Section 7.3 extends the validation to real-world images; and Section 7.4 compares the computational efficiency of the methods.

7.1. Experimental Environment, Comparison Methods, and Quantitative Evaluation Indicators

The parameters of the main software and hardware used in the experiments are as follows: AMD R7-6800H 3.20 GHz CPU, 16 GB DDR5 memory, Windows 11 64-bit operating system, and Matlab 2018b 64-bit development platform. The test image set comprised 8 synthetic images and 100 real-world images. Their gray-level histograms exhibit non-modal, unimodal, bimodal, or multimodal patterns. The test images and their ground truth images can be accessed via this link: https://wwqj.lanzoum.com/i8OMo2y1jkxa (accessed on 22 December 2025).

The proposed HIST method is compared with five recently developed methods, including three thresholding methods and two non-thresholding methods. The five compared methods are as follows: histogram-based global thresholding (HBGT) method [13], thresholding method based on nonextensive entropy and variance of grayscale distribution (NEVGD) [21], second-order derivative valley emphasis (SDVE) thresholding method [23], fuzzy subspace clustering (FSC) method [32], and region-edge-based active contours (RAC) method [33].

ME [5,20,21] and MCC [34,35] were utilized to quantitatively evaluate the segmentation accuracy of each method. Jaccard, Dice, and RMSE [36,37] were also employed. Note that the conclusions regarding the adaptability and accuracy of the six methods are the same whether they are drawn from the Jaccard, Dice, and RMSE metrics or from the MCC and ME metrics. Therefore, the experimental results and analysis of Jaccard, Dice, and RMSE metrics are made available as online resources in the Supplementary Materials section.

ME reflects the proportion of target pixels misclassified as background pixels and vice versa in the segmentation result image. Its calculation is as follows:

$$\mathrm{ME} = 1 - \frac{\lvert B_O \cap B_T \rvert + \lvert F_O \cap F_T \rvert}{\lvert B_O \rvert + \lvert F_O \rvert}$$

where $F_O$ and $B_O$ represent the set of the target and background pixels in the ground truth image, respectively. $F_T$ and $B_T$ represent the set of the target and background pixels in the segmentation result image, respectively. The symbol $\cap$ denotes the intersection operation and the symbol $\lvert \cdot \rvert$ is used to count the number of elements in a set. The ME value varies in the range [0, 1], and a lower ME indicates fewer misclassifications in the segmentation result image. Specifically, ME equals 0 when the segmentation result image is identical to the ground truth image, and ME equals 1 when the segmentation result image is completely opposite to the ground truth image.
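
A minimal sketch of the ME computation on boolean masks is given below, assuming that `True` marks target pixels in both the ground truth and the segmentation result; the function name is illustrative.

```python
import numpy as np

def misclassification_error(gt, seg):
    """ME = 1 - (correctly labelled background + correctly labelled target) / all pixels."""
    gt = np.asarray(gt, dtype=bool)
    seg = np.asarray(seg, dtype=bool)
    agree = np.count_nonzero(gt & seg) + np.count_nonzero(~gt & ~seg)
    return 1.0 - agree / gt.size
```

For example, a 2 × 2 result that differs from the ground truth in exactly one pixel gives an ME of 0.25.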

MCC takes into account all four components of the confusion matrix: true positives, true negatives, false positives, and false negatives, making it a robust quantitative evaluation metric. The formula for calculating MCC is as follows:

$$\mathrm{MCC} = \frac{TP \cdot TN - FP \cdot FN}{\sqrt{(TP + FP)(TP + FN)(TN + FP)(TN + FN)}}$$

where $TP$ and $TN$ represent the number of true-positive and true-negative elements, respectively, and $FP$ and $FN$ represent the number of false-positive and false-negative elements, respectively. The MCC value varies in the range [−1, 1]. Specifically, MCC equals 1 when the segmentation result image is identical to the ground truth image, and MCC equals −1 when the segmentation result image is completely opposite to the ground truth image.
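
The MCC can be computed from the four confusion-matrix counts as sketched below. The masks are assumed to be boolean with `True` marking target pixels, and returning 0 when the denominator vanishes is a common convention adopted here as an assumption; the function name is illustrative.

```python
import numpy as np

def matthews_corrcoef_masks(gt, seg):
    """Matthews correlation coefficient between two boolean masks."""
    gt = np.asarray(gt, dtype=bool)
    seg = np.asarray(seg, dtype=bool)
    tp = float(np.count_nonzero(gt & seg))      # target predicted as target
    tn = float(np.count_nonzero(~gt & ~seg))    # background predicted as background
    fp = float(np.count_nonzero(~gt & seg))     # background predicted as target
    fn = float(np.count_nonzero(gt & ~seg))     # target predicted as background
    den = np.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if den == 0:
        return 0.0                              # convention for degenerate cases (assumption)
    return (tp * tn - fp * fn) / den
```

Counts are converted to floats before multiplication so that large images do not overflow integer arithmetic.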

7.2. Experimental Results and Analysis Using Synthetic Images

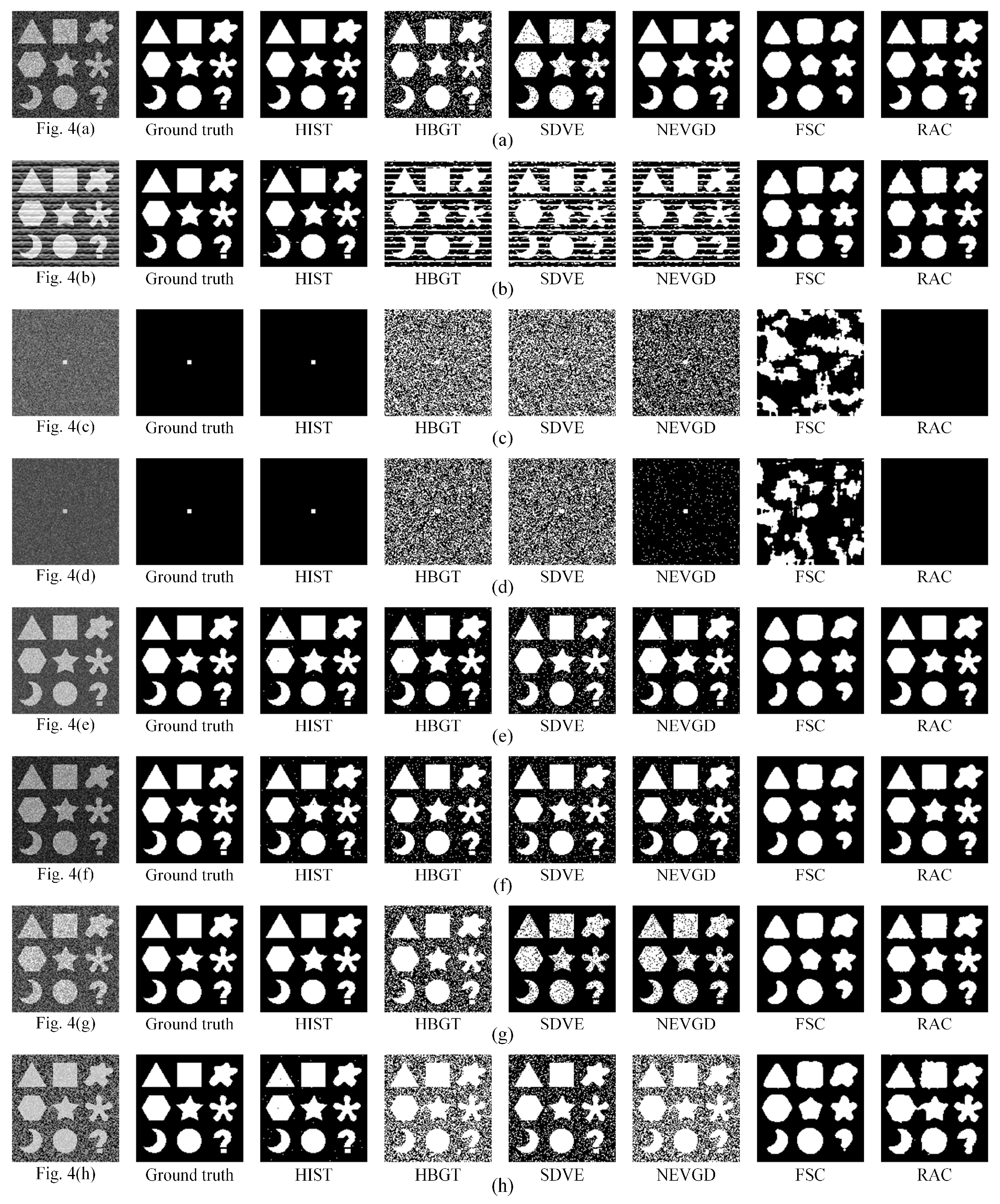

To test the six methods’ adaptability and accuracy for images with non-modal, unimodal, bimodal, or multimodal gray-level histograms, comparative experiments were first performed on eight synthetic images in Figure 4. In Figure 4a,b, the size ratio of the target to the background is relatively balanced, and their gray-level histograms exhibit a non-modal pattern (see Figure 5a,b). In Figure 4c,d, the size ratio of the target to the background is severely imbalanced, and their gray-level histograms exhibit a unimodal pattern (see Figure 5c,d). Figure 4e,f show relatively balanced target-to-background size ratios, and exhibit bimodal gray-level histograms (see Figure 5e,f). Figure 4g,h also show relatively balanced target-to-background size ratios, but exhibit multimodal gray-level histograms (see Figure 5g,h).

Threshold selection is crucial in image thresholding. Therefore, we first compare and analyze the differences in threshold selection when using the proposed HIST method and three comparative thresholding methods. Note that $t_{ME}$ denotes the optimal threshold in the sense of minimizing ME, and $t_{MCC}$ denotes the optimal threshold in the sense of maximizing MCC. Table 1 presents the $t_{ME}$ and $t_{MCC}$ of the eight synthetic images, along with the thresholds selected by the four thresholding methods. Figure 5 illustrates the objective function curves for the proposed HIST method on the eight synthetic images. Figure 5 also presents the differences in threshold selection among the four thresholding methods for these images. In Figure 5, each green area represents the gray-level histogram of a test image, and each black curve denotes the objective function curve of the HIST method on this test image. According to Table 1 and Figure 5, for the eight synthetic images with different histogram patterns, the thresholds obtained by the proposed HIST method are consistently equal to or fall within the range of the optimal thresholds. In contrast, the thresholds selected by the other three thresholding methods deviate from the optimal thresholds to varying degrees. Figure 6 further shows the segmentation results of the eight synthetic images obtained using the six methods.

The HBGT method utilizes the mean and standard deviation of the gray-level histogram to determine alpha and beta regions. Then, it selects the threshold by calculating the average gray levels of the alpha and beta regions. Because the HBGT method relies solely on statistical information, including the mean and standard deviation of the gray-level histogram for threshold selection, it has difficulty in selecting satisfactory thresholds for the eight synthetic images with different gray-level histogram patterns. Specifically, the synthetic images in Figure 4a–d,g,h exhibit non-modal, unimodal, or multimodal patterns, respectively. The thresholds obtained by the HBGT method are 97, 128, 100, 82, 113, and 120, all of which significantly deviate from the optimal thresholds. For Figure 4e,f, their gray-level histograms exhibit obvious bimodal patterns. The thresholds obtained by the HBGT method are 120 and 84, respectively, which are close to the valley between the two peaks in the histograms (see Figure 5e,f). However, they still have a certain degree of deviation from the optimal thresholds at the valley positions. Overall, it can be observed from Figure 6a–h that the HBGT method fails to effectively extract the targets from the background in synthetic images with non-modal, unimodal, or multimodal histograms.

The SDVE method improves the Otsu method by introducing a modified valley metric based on the second-order derivative, aiming to make the threshold more likely to be located at the valley between two peaks or at the bottom of the unimodal histogram. The histograms of

Figure 4a,b exhibit an approximately uniformly distributed non-modal pattern. Due to the absence of obvious peak–valley features in their histograms, the SDVE method tends to select the threshold by maximizing the inter-class variance. The thresholds obtained by the SDVE method for these two images are 121 and 116, which deviate from the optimal thresholds by 6 and 61 gray levels, respectively, resulting in varying degrees of misclassification (see

Figure 6a,b). The Otsu method is suitable for thresholding images with a balanced target-to-background size ratio. Although the SDVE method introduces a second-order derivative weight into the objective function of the Otsu method to enhance its valley emphasis, for the two unimodal synthetic images with small targets in

Figure 4c,d, it still yields thresholds far from the optimal thresholds at the bottom right of the unimodal histograms (see

Figure 5c,d). Consequently, the SDVE method fails to effectively extract the small targets from noisy backgrounds (see

Figure 6c,d).
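The size-imbalance effect described above can be illustrated with a minimal sketch of the plain Otsu criterion (not the SDVE variant, whose second-order derivative weight is omitted here). The two toy histograms are invented for illustration and are not the synthetic images of Figure 4; the function name `otsu_threshold` is likewise hypothetical.

```python
def otsu_threshold(hist):
    """Return the gray level t that maximizes the between-class variance.

    Pixels with gray level <= t form one class, the remaining pixels the other.
    """
    total = sum(hist)
    sum_all = sum(level * count for level, count in enumerate(hist))
    best_t, best_var = 0, -1.0
    w0 = 0    # number of pixels in the lower class
    sum0 = 0  # sum of gray levels in the lower class
    for t, count in enumerate(hist[:-1]):
        w0 += count
        sum0 += t * count
        w1 = total - w0
        if w0 == 0 or w1 == 0:
            continue  # one class is empty, variance undefined
        mu0 = sum0 / w0
        mu1 = (sum_all - sum0) / w1
        var_between = (w0 / total) * (w1 / total) * (mu0 - mu1) ** 2
        if var_between > best_var:
            best_t, best_var = t, var_between
    return best_t


# Toy histogram 1 (assumed): background and target of equal size.
balanced = [0] * 256
for g in range(40, 80):
    balanced[g] = 100
for g in range(160, 200):
    balanced[g] = 100

# Toy histogram 2 (assumed): large background, very small bright target.
small_target = [0] * 256
for g in range(40, 80):
    small_target[g] = 1000
for g in range(200, 210):
    small_target[g] = 10

t_balanced = otsu_threshold(balanced)
t_small = otsu_threshold(small_target)
print("balanced:", t_balanced, "small target:", t_small)
```

In the balanced case the selected threshold lies in the gap between the two lobes, whereas in the small-target case it falls inside the background lobe and splits the background instead of isolating the target.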

Figure 4e,f are two bimodal synthetic images with a relatively balanced size ratio of the target to the background. The thresholds obtained by the SDVE method are close to the valley between two peaks but still deviate to some extent from the optimal thresholds at the valleys, resulting in the misclassification of some background pixels as target pixels in the segmentation results (see

Figure 6e,f). For the two multimodal synthetic images in

Figure 4g,h, the SDVE method still has difficulty in selecting satisfactory thresholds, leading to obvious misclassification (see

Figure 6g,h).

The NEVGD method is an entropy-based method that combines nonextensive entropy with the gray-level variance, which enhances the ability of the maximum Tsallis entropy method to extract targets from the background to some extent. When the gray-level distributions of the targets and the background are uniform and non-overlapping, the maximum entropy method can theoretically obtain the optimal threshold. Thus, for

Figure 4a, the threshold obtained by the NEVGD method is 115, which is identical to the optimal threshold. For the non-modal image in

Figure 4b, as its distribution is approximately uniform across the entire range [0, 255], the NEVGD method yields a threshold of 126. This outcome is to be expected under the maximum Tsallis entropy criterion. However, the threshold of 126 is 51 gray levels away from the optimal threshold. Combined with

Figure 6b, it can be seen that the NEVGD method fails to separate the target from this image. Although the NEVGD method introduces the gray-level variance in images into the objective function of maximum Tsallis entropy, it still produces serious misclassification for both unimodal and multimodal synthetic images (see

Figure 6c,d,g,h). For the two bimodal synthetic images in

Figure 4e,f, while the NEVGD method can roughly extract the targets, it still misclassifies some obvious background pixels as targets (see

Figure 6e,f), and its segmentation accuracy is also much lower than that of the proposed HIST method.

Figure 6 shows that the proposed HIST method overall achieves more accurate results.

Table 2 and

Table 3 present the ME and MCC values, respectively, for the six methods on the eight synthetic images. In these two tables, the minimum ME values and maximum MCC values corresponding to each image are indicated in bold. Statistical analysis indicates that, on the eight synthetic images, the average ME values for the HIST, HBGT, SDVE, NEVGD, FSC, and RAC methods are 0.0013, 0.2265, 0.1797, 0.1220, 0.1168, and 0.0153, respectively; the average MCC values for the six methods are 0.9969, 0.5715, 0.6385, 0.6603, 0.6873, and 0.7164, respectively. Compared to the RAC method, which holds the second-highest segmentation accuracy, the proposed HIST method achieves a 91.50% decrease in the ME mean and a 39.15% increase in the MCC mean. Since lower ME values and higher MCC values indicate superior accuracy, the results in

Table 2 and

Table 3 further confirm that the proposed HIST method possesses higher accuracy and greater adaptability for test images with non-modal, unimodal, bimodal, or multimodal histogram patterns.
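As a quick arithmetic check of the percentages quoted above, the following sketch recomputes the relative changes from the reported averages. It also shows how ME and MCC can be obtained from confusion counts, assuming the standard definitions (ME as the fraction of misclassified pixels, MCC as the Matthews correlation coefficient); the function names are hypothetical and the definitions given earlier in the paper take precedence if they differ.

```python
import math


def me_and_mcc(tp, tn, fp, fn):
    """ME and MCC from pixel-level confusion counts (standard definitions assumed)."""
    total = tp + tn + fp + fn
    me = (fp + fn) / total
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return me, mcc


def relative_change(baseline, proposed):
    """Signed change of `proposed` relative to `baseline`, in percent."""
    return (proposed - baseline) / baseline * 100.0


# Averages on the eight synthetic images, as reported in the text (HIST vs. RAC).
me_hist, me_rac = 0.0013, 0.0153
mcc_hist, mcc_rac = 0.9969, 0.7164

me_drop = -relative_change(me_rac, me_hist)
mcc_gain = relative_change(mcc_rac, mcc_hist)
print(f"ME decrease: {me_drop:.2f}%")    # ME decrease: 91.50%
print(f"MCC increase: {mcc_gain:.2f}%")  # MCC increase: 39.15%
```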

7.3. Experimental Results and Analysis on Real-World Images

To test the six methods’ adaptability and accuracy for real-world images with non-modal, unimodal, bimodal, or multimodal gray-level histograms, comparative experiments are further performed on 100 real-world images. Among these test images, the gray-level histograms of images numbered 1 through 10 are non-modal; those of images numbered 11 through 29 are unimodal; those of images numbered 30 through 69 are bimodal; and those of images numbered 70 through 100 are multimodal, with the number of peaks in the histograms being three or more. These real-world images were collected from various application domains and were captured using diverse imaging methods, primarily including ultrasonic imaging, infrared thermal imaging, and optical CCD imaging.

Figure 7 shows eight representative test images from the 100 real-world images, and the gray-level histograms of the eight images exhibit non-modal, unimodal, bimodal, or multimodal patterns (see

Figure 8).

Figure 8 also shows the objective function curves of the proposed HIST method on the eight images, and illustrates threshold selection differences among the four thresholding methods on the eight images. For the eight images shown in

Figure 7, their

is 195, 27, 198, 70, 188, 214, 173, and 52, respectively, as is their

.

Figure 8 demonstrates that the thresholds obtained via the proposed HIST method consistently approximate the optimal thresholds most closely. In contrast, thresholds derived from the other three methods exhibit varying degrees of deviation from the optimal thresholds. Specifically, for the two real-world images with non-modal gray-level histograms (see

Figure 8a,b), the thresholds obtained by the HBGT, SDVE, and NEVGD methods deviate from the optimal thresholds by 67 and 85 gray levels, 78 and 90 gray levels, and 68 and 84 gray levels, respectively. In contrast, the thresholds selected by the proposed HIST method are completely consistent with the optimal thresholds. For the two real-world images with unimodal gray-level histograms (see

Figure 8c,d), the NEVGD method yields thresholds closer to the optimal thresholds than the HBGT and SDVE methods, with deviations of eight and six gray levels, respectively. However, the thresholds obtained by the proposed HIST method deviate from the optimal thresholds by only one gray level. For the four real-world images with bimodal or multimodal gray-level histograms (see

Figure 8e–h), only the HIST method still obtains thresholds that are the closest to the optimal thresholds.

Figure 9 further shows the result images of the six methods on the eight representative real-world images. From

Figure 9, it can be observed that only the proposed HIST method can successfully separate the targets from the backgrounds. In contrast, the other five methods often exhibit varying degrees of misclassification. Taking the infrared pedestrian image with the multimodal pattern in

Figure 7g as an example, the comparison results of different methods are shown in

Figure 9g. In

Figure 9g, it can be observed that the HBGT, SDVE, FSC, and RAC methods fail to separate the pedestrian from the complex background, with all suffering obvious misclassification. Although the NEVGD method can extract the pedestrian, it mistakenly classifies some bright regions in the background as targets. In contrast, only the proposed HIST method successfully separates the pedestrian target from the background, yielding a result image that most closely resembles the ground truth image.

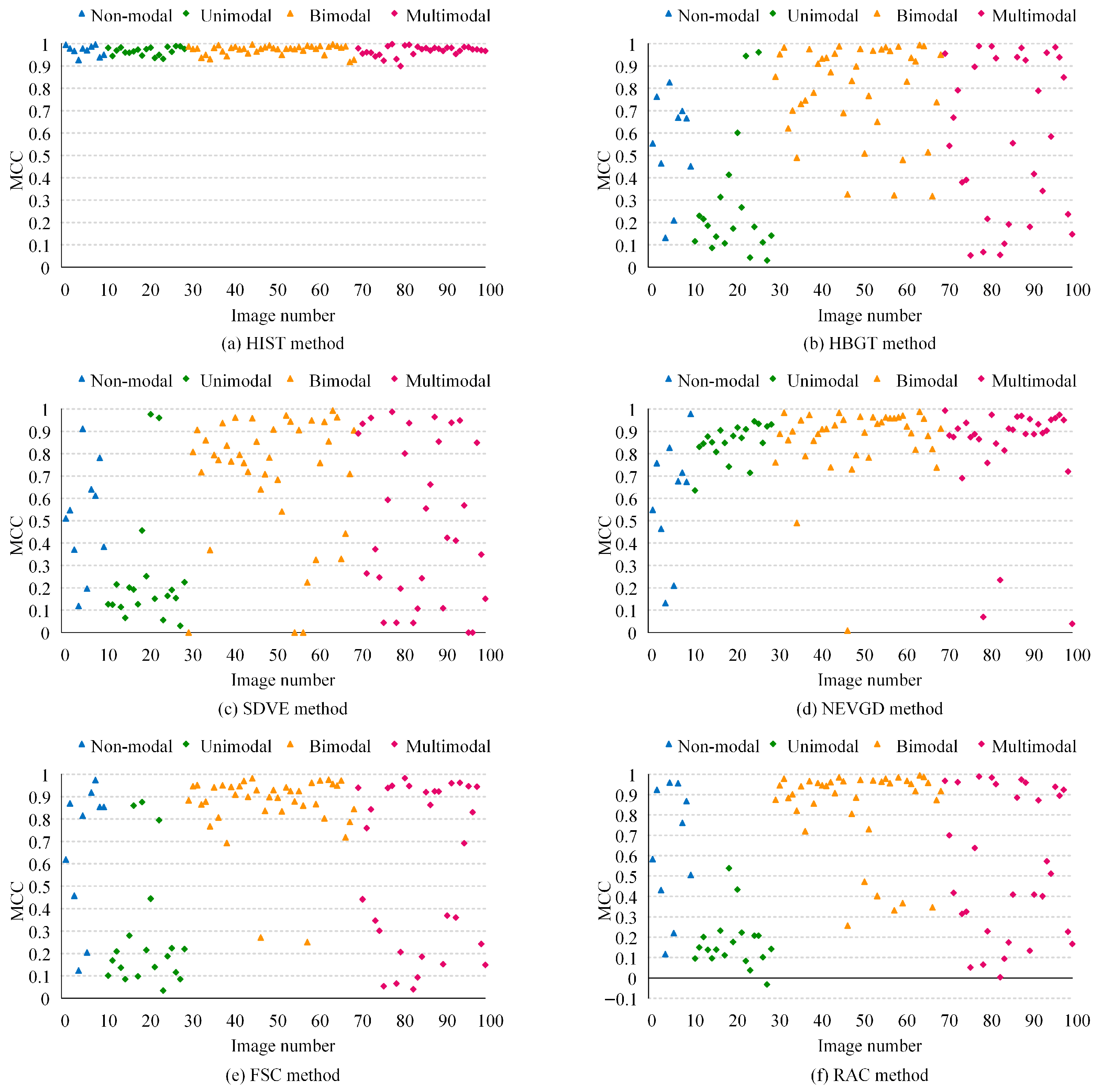

Figure 10 and

Figure 11 further show the ME and MCC values of the six methods on all 100 real-world images. The average ME values of the HIST, HBGT, SDVE, NEVGD, FSC, and RAC methods are 0.0052, 0.1749, 0.2381, 0.0675, 0.1508, and 0.1864, respectively, and the average MCC values of these methods are 0.9699, 0.6071, 0.5361, 0.8215, 0.6484, and 0.6027, respectively. Given that a lower ME and a higher MCC indicate better accuracy, these statistics show that the proposed HIST method has higher overall accuracy and greater adaptability on these 100 real-world test images. From

Figure 10 and

Figure 11, the following can be observed: (i) The ME values of the proposed HIST method are all less than 0.1, and the MCC values are all greater than 0.9, with minimal fluctuations in both metrics. This indicates that the HIST method possesses good accuracy and robust adaptability. (ii) The ME values of the HBGT method are scattered within the range of 0 to 0.7, and the MCC values are scattered between 0 and 1. The ME and MCC values of the SDVE method are both scattered between 0 and 1. These indicate that the HBGT and SDVE methods have relatively lower accuracy and weaker adaptability in general. (iii) Except for a few real-world images with non-modal distributions, the ME values of the NEVGD method are generally below 0.1, with a few values scattered between 0 and 1. The MCC values of the NEVGD method are primarily above 0.6, with a few below 0.6. These indicate that the NEVGD method performs well on unimodal, bimodal, and multimodal test images but is unsuitable for non-modal test images. (iv) FSC and RAC are non-thresholding methods that are more suitable for test images with bimodal distributions. In bimodal test images, their ME values are primarily scattered between 0 and 0.1, and their MCC values are mainly scattered between 0.7 and 1. However, they are not suitable for test images with non-modal, unimodal, or multimodal patterns.

We conducted paired

t-tests and Wilcoxon signed-rank tests on the ME and MCC scores obtained from the 100 real-world images. As shown in

Table 4, both tests showed that the ME and MCC improvements are statistically significant (

p < 0.001).
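The test statistics behind such a paired comparison can be sketched as follows. This is a minimal standard-library illustration, not the procedure actually used for Table 4: the per-image ME scores are invented, the function names are hypothetical, and p-values are not computed. In practice, library routines such as `scipy.stats.ttest_rel` and `scipy.stats.wilcoxon` return both the statistic and the p-value.

```python
import math
import statistics


def paired_t_statistic(a, b):
    """t statistic and degrees of freedom for paired samples a and b."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    mean_d = statistics.mean(diffs)
    sd_d = statistics.stdev(diffs)  # sample standard deviation of the differences
    return mean_d / (sd_d / math.sqrt(n)), n - 1


def wilcoxon_signed_rank(a, b):
    """Positive and negative rank sums (W+, W-); zero differences are dropped."""
    diffs = [x - y for x, y in zip(a, b) if x != y]
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied absolute differences.
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return w_plus, w_minus


# Invented per-image ME scores for a competing method and a proposed method.
me_other = [0.10, 0.20, 0.15, 0.30, 0.25]
me_proposed = [0.01, 0.02, 0.01, 0.05, 0.03]

t_stat, dof = paired_t_statistic(me_other, me_proposed)
w_plus, w_minus = wilcoxon_signed_rank(me_other, me_proposed)
print(f"t = {t_stat:.3f} (dof = {dof}), W+ = {w_plus}, W- = {w_minus}")
```

A large positive t statistic and a negative rank sum of zero both indicate that the second method has the lower ME on every image in this toy sample.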

7.4. Comparison of Computational Efficiency

To compare the computational efficiency of the different methods, we measured the CPU runtime of each method on the 8 synthetic images and the 100 real-world images. Because CPU runtime can fluctuate between runs, we took the average runtime over 10 consecutive runs as the CPU runtime of a method on each test image. Furthermore, the standard deviation of the CPU runtimes was used as an indicator of the stability of computational efficiency.
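A timing protocol of this kind might be sketched as below. The helper `time_method` and the placeholder `mean_threshold` are hypothetical, and taking the standard deviation over the repeated runs of a single image is an assumption; the text does not specify whether the reported deviation is taken over runs or over images.

```python
import statistics
import time


def time_method(method, image, runs=10):
    """Mean and standard deviation of CPU time over `runs` consecutive calls."""
    samples = []
    for _ in range(runs):
        start = time.process_time()  # CPU time of the current process
        method(image)
        samples.append(time.process_time() - start)
    return statistics.mean(samples), statistics.stdev(samples)


def mean_threshold(image):
    """Placeholder method for the demo: threshold at the mean gray level."""
    pixels = [p for row in image for p in row]
    return sum(pixels) / len(pixels)


# Tiny made-up image; a real experiment would pass the actual test images.
toy_image = [[0, 255], [128, 64]]
mean_runtime, std_runtime = time_method(mean_threshold, toy_image, runs=10)
print(f"mean = {mean_runtime:.6f} s, std = {std_runtime:.6f} s")
```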

Table 5 presents the mean and standard deviation of CPU runtimes for each method on 8 synthetic images and 100 real-world images. According to

Table 5, the proposed HIST method is slower only than the simple thresholding methods HBGT and SDVE, and it is faster than the NEVGD, FSC, and RAC methods.

A synthetic image and a real-world image, shown in

Figure 4a and

Figure 7d, respectively, were utilized to further test the changing trend of computational efficiency for different methods as the image size varies. The original dimensions of the two images were 128 × 128 pixels and 256 × 256 pixels, respectively. In the experiments, the two images were enlarged to 1024 × 1024 pixels and 2048 × 2048 pixels, respectively.

Table 6 and

Table 7 indicate that as the image size gradually increases, the CPU runtime for all six methods also progressively increases. Overall, the HBGT, SDVE, and NEVGD methods exhibit a slower increase in CPU runtime. This is primarily because these three methods first extract a one-dimensional gray-level distribution from the input gray-level image, and subsequent threshold calculations are performed in this one-dimensional information space. The slightly higher CPU runtime of the NEVGD method is due to the additional determination of the nonextensive parameter value.

The HIST, FSC, and RAC methods suffer a rapid increase in CPU runtime, primarily because their computations are performed in the two-dimensional image space. For the HIST method, the main computational costs arise from the unified transformation, binary contour image extraction, and the calculation of homologous isomeric similarity. For the FSC method, the primary computational costs are associated with local variance and non-local spatial information, mean membership linking, and subspace weight allocation. For the RAC method, the main computational costs occur during region energy calculation, edge energy calculation, and energy function updating.

8. Conclusions and Future Work

Traditional representative thresholding methods tend to focus on handling images with specific gray-level histogram patterns. To address the challenge of automatically thresholding images with non-modal, unimodal, bimodal, or multimodal histogram patterns within a unified framework, the proposed HIST method applies a unified transformation toward unimodal distribution on the input image. This unified transformation converts gray-level histograms of different patterns into a unified, unimodal, right-skewed gray-level histogram, thereby reducing the prior dependence of the HIST method on the gray-level histogram of the original image. Furthermore, the HIST method converts the problem of selecting a reasonable threshold into a computational problem of HIS, and the normalized Renyi mutual information can robustly measure the HIS between the gray-level edge image and the binary contour image. However, implementing this unified transformation and similarity maximization within a computationally efficient framework presents a challenge.

Despite the challenge of maintaining high computational efficiency, experimental results show that the proposed HIST method outperforms five other methods—HBGT, SDVE, NEVGD, FSC, and RAC—in terms of adaptability and accuracy for test images with non-modal, unimodal, bimodal, or multimodal histogram patterns. On synthetic images and real-world images, compared to the second-best method, the proposed method reduces the average ME by 91.50% and 92.30%, respectively, and increases the average MCC by 39.15% and 18.06%, respectively. While the method demonstrates superior segmentation accuracy across diverse modalities, the trade-off between computational cost and precision remains a consideration for real-time applications.

The computational efficiency of the proposed HIST method is one aspect that could be further improved. Future work will focus on refining the algorithmic process to enhance efficiency, minimizing unnecessary computations, and investigating more sophisticated data structures. Moreover, approximation algorithms are a promising line of inquiry that could reduce computational time without substantially affecting the accuracy and adaptability of the HIST method. The main computational costs of the HIST method arise from image processing operations (e.g., bilateral filtering, edge detection) and the iterative calculation of similarity measures for multiple thresholds. These tasks are inherently suitable for parallel execution and align well with the SIMT (Single Instruction, Multiple Threads) architecture of modern GPUs. Future work will therefore involve developing a GPU-accelerated version of the HIST method to significantly reduce runtime.

The HIST method currently focuses on selecting a single threshold. Future work will also explore extending the HIS-based methodology to multi-level thresholding while maintaining high efficiency and accuracy. This may require new algorithmic frameworks for the concurrent optimization of multiple thresholds.