1. Introduction

In critical fields such as aviation flight testing, nuclear physics detection, and industrial measurement and control, the iterative upgrading of detection technologies and sensing devices has made data acquisition multi-target, multi-system, and multi-data-type, imposing stringent requirements on consistent acquisition and real-time storage. In such scenarios, the various data types generally need to be converted into PCM data streams for unified storage, and sustaining continuous data rates of hundreds of megabytes per second in flight testing has become a core bottleneck restricting system performance.

Current high-speed storage systems are confronted with three core challenges: First, insufficient adaptability to extreme environments—scenarios like aviation flight testing require endurance of harsh conditions with a wide temperature range from −40 °C to 80 °C. Second, poor coordination between high-speed interfaces and storage management—controllers dominated by commercial IP cores in mainstream solutions have weak customizability, making it difficult to match the storage and export requirements of high-rate data streams. Third, difficulty in balancing reliability and transmission efficiency—traditional storage systems either prioritize speed but lack redundancy design, or rely on complex external protection to achieve environmental adaptation, leading to high costs or limited practicality. Therefore, developing a high-capacity storage system with high speed, high reliability, and strong environmental adaptability is of great practical significance for meeting the data storage needs in critical fields.

In recent years, scholars at home and abroad have conducted extensive research on high-speed storage systems, forming various technical solutions:

In terms of storage interface protocols and controller implementation, the development of high-speed serial communication technology has driven the rapid upgrading of storage interfaces. Researchers at Fudan University proposed a 1.25 Gbps Ethernet receiver design scheme, laying a foundation for the high-speed transmission of storage systems [1]. As early as 2013, Gorman et al. developed an open-source SATA core suitable for Virtex-4 series FPGAs, providing an early technical reference for the hardware implementation of SATA protocols on FPGA platforms [2]. The Kumar team carried out in-depth analysis of the application challenges of SAS 4.0 (22.5 Gbps) in server platforms, offering a performance optimization direction for the hardware adaptation of high-bandwidth storage interfaces [3]. However, the above solutions mostly focus on general scenarios or rely on specific FPGA models, making it difficult to adapt to the customized needs in extreme environments such as aviation testing. In addition, some solutions are implemented using commercial IP cores, resulting in limited scalability and scenario adaptability.

In terms of storage medium and system architecture optimization, Meng Yuan proposed an acquisition and storage system based on eMMC storage, with a maximum storage rate of 160 MB/s, featuring high integration and scalability, but the rate still cannot meet the storage requirements of high-rate PCM data streams [4]. Some studies have improved array data security and I/O performance through Solid-State Drive-based (SSD-based) RAID-5 fast online reconstruction schemes and machine learning-assisted RAID scheduling algorithms [5,6]. For extreme environments, scholars have developed aerospace-specific high-speed data recorders and radiation-resistant on-board FPGA schemes, providing reliability support for special scenarios [7,8,9]. Nevertheless, these solutions either focus on storage reliability optimization while ignoring rate improvement, or lead to increased costs due to complex architectures, failing to achieve the coordinated unification of high speed, high reliability, and strong environmental adaptability.

In terms of transmission stability and data security, technologies such as LVDS interfaces, 8b/10b encoding optimization, and power line electromagnetic interference suppression have provided diversified solutions for data transmission [10,11,12,13]. The privacy-preserving multi-user searchable encryption scheme proposed by Bulbul et al., the network attack detection technology developed by the Najafabadi team, and the IoT-oriented AES module designed by Lee et al. have enhanced the access security of stored data and the network-side and terminal-side data security of embedded storage systems, respectively [14,15,16]. However, such studies mostly focus on performance optimization of a single link, making it difficult to solve the end-to-end performance bottleneck in the storage of high-rate data streams.

In summary, existing research still has room for improvement in terms of storage rate, environmental adaptability, and software-hardware coordination, and cannot fully meet the real-time storage requirements of high-rate PCM data streams in scenarios such as flight testing. This paper designs a SATA3.0 high-speed mass storage system with Kintex-7 series FPGA as the core. The system implements a SATA3.0 full-protocol stack controller by independently writing Verilog code, integrates the FAT32 file system, and is equipped with ruggedized mSATA hard disks. It can achieve stable storage in a wide temperature range of −40 °C to 80 °C, meeting the multi-type data acquisition needs in scenarios such as flight testing. Compared with the problems of mainstream storage systems, such as reliance on commercial IP cores, poor customizability, and insufficient adaptability to extreme environments, the innovation points of this system focus on the design of an independently controllable SATA controller and the direct mapping management between the file system and hardware, which effectively break through the technical limitations of traditional solutions and have important engineering application value.

The structure of this paper is as follows:

Section 2 introduces the overall design scheme of the system, clarifying the composition and core functions of each functional module;

Section 3 elaborates on the hardware system design, including the circuit implementation of power management, SATA interface, Double Data Rate 3 Synchronous Dynamic Random-Access Memory (DDR3) cache, and communication interface;

Section 4 expounds on the software system design, focusing on the detailed analysis of the SATA controller logic, module integration, and the construction of the FAT32 file system;

Section 5 verifies the system performance through experimental tests.

4. System Software Design

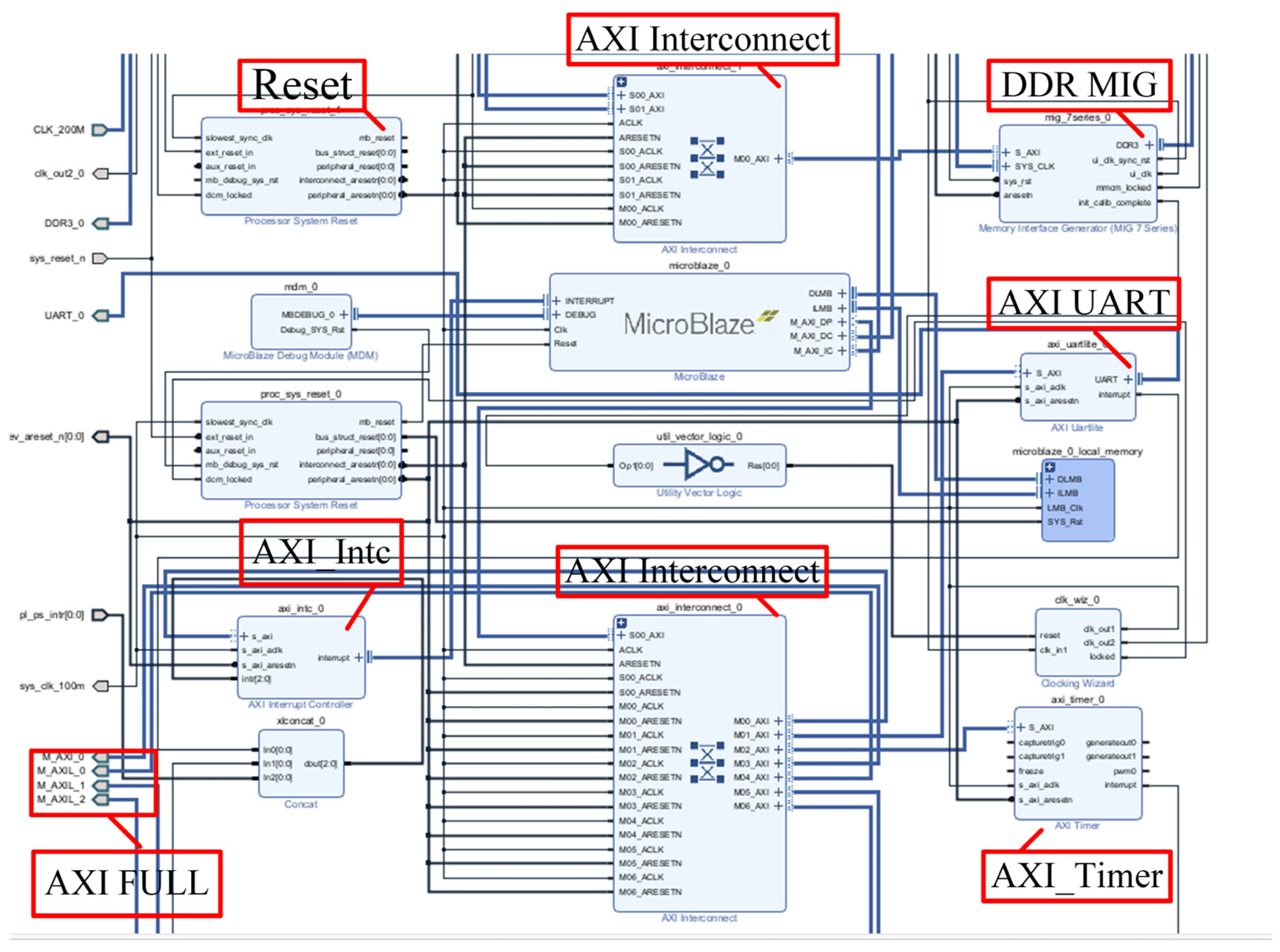

The internal logic modules of the FPGA are shown in Figure 5. They mainly consist of the MicroBlaze control module, SATA controller module, and other components. All internal logic modules are uniformly controlled and coordinated by the MicroBlaze soft core via the Advanced eXtensible Interface (AXI) bus. When the PCM data stream is received through the LVDS communication module and needs to be stored, or when the host computer reads back data, the MicroBlaze sends commands to the SATA controller to control data reading or writing. During data transmission, the DDR3 controller IP is used to control the DDR3 chip for data buffering, and real-time status detection is also performed.

4.1. SATA Controller Logic

The SATA controller serves as the central hub for data transmission and storage in the entire storage system. Its performance directly determines the communication efficiency between the SATA 3.0 bus and the mSATA drive, the stability of data transmission, and the collaborative adaptability with other system modules, making it a critical technical enabler for the real-time storage of high-bit-rate PCM data streams. To clearly present the design logic and implementation approach of the SATA controller, the subsequent sections will first systematically analyze the core functionalities, protocol specifications, and interaction mechanisms of each layer based on the hierarchical architecture of the SATA protocol (Physical Layer, Link Layer, Transport Layer, Application Layer), thereby establishing the theoretical foundation for the controller’s logical design. This will be followed by a detailed explanation of the internal logical architecture of the controller, which is independently implemented using the Verilog language, including the division of functional modules, finite state machine design, and the control flow for data transmission/reception. The collaborative working mechanism between the controller and other modules, such as the MicroBlaze soft-core processor and DDR3 cache, will also be clarified.

4.1.1. SATA Protocol Analysis

SATA (Serial Advanced Technology Attachment) is a high-speed storage interface standard based on serial transmission technology. As a replacement for the parallel ATA interface, SATA uses low-voltage differential signaling, offering a high transmission rate and strong anti-interference capability. The SATA 3.0 revision adopted in this paper has a theoretical transmission rate of up to 6.0 Gb/s.
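As a rough worked example of what the 6.0 Gb/s line rate means for payload, the sketch below applies only the 8b/10b encoding overhead (10 line bits per data byte). The function name is illustrative. The result is an upper bound: primitives, FIS headers, and flow control reduce the achievable throughput further.

```python
def sata_payload_rate_mb_s(line_rate_gbps: float) -> float:
    """Upper bound on payload bandwidth (MB/s) after 8b/10b encoding."""
    bits_per_second = line_rate_gbps * 1e9
    bytes_per_second = bits_per_second / 10  # 10 line bits carry 1 data byte
    return bytes_per_second / 1e6


for name, rate in (("SATA 1.0", 1.5), ("SATA 2.0", 3.0), ("SATA 3.0", 6.0)):
    print(f"{name}: {sata_payload_rate_mb_s(rate):.0f} MB/s")
```

For SATA 3.0 this gives a ceiling of 600 MB/s, which is the budget against which a PCM stream of hundreds of megabytes per second has to be stored.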

With reference to the OSI seven-layer model, the SATA interface protocol can be divided into four layers: the Physical Layer, Link Layer, Transport Layer, and Application Layer [20]. The schematic diagram of its hierarchical structure is shown in Figure 6.

As the lowest layer of the SATA protocol, the Physical Layer transmits and receives serial data streams, extracts data and clock signals from the serial data streams, initializes the SATA interfaces of the host and device, and negotiates transmission speeds. The Physical Layer establishes communication between the host and device via out-of-band (OOB) signals [21]. OOB signals are low-frequency signals composed of bursts of ALIGN primitives separated by idle levels. According to differences in idle interval and function, they can be divided into three types: COMRESET, COMINIT, and COMWAKE. The COMRESET signal is sent from the host to the device to perform a hardware reset of the device; the COMINIT signal is sent from the device to the host to request communication initialization; and COMWAKE, as a bidirectional signal, is used to wake the Physical Layer from a power-saving state.

The Link Layer is mainly responsible for data frame transmission control. During transmission, the Link Layer does not need to identify the content of frames; instead, it controls the frame transmission process by exchanging primitives. When the Transport Layer issues a frame transmission request, the Link Layer first negotiates with its peer Link Layer, with the device gaining priority when both the host and device request transmission at the same time. It then receives data from the Transport Layer in double-word units, adds frame envelope information such as Start of Frame (SOF) and End of Frame (EOF), computes and appends the CRC of the data, scrambles the data, and finally performs 8b/10b encoding before sending the frame to the Physical Layer.
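The framing steps in this paragraph can be sketched as follows. This is a minimal model, not the paper's Verilog: it uses the CRC generator polynomial (0x04C11DB7) and seed (0x52325032) defined by the SATA specification, assumes MSB-first processing of each double word, and omits scrambling and 8b/10b encoding. The function names and the symbolic `"SOF"`/`"EOF"` markers are illustrative.

```python
SATA_CRC_POLY = 0x04C11DB7  # CRC-32 generator polynomial used by the Link Layer
SATA_CRC_SEED = 0x52325032  # initial CRC value defined by the SATA specification


def sata_crc32(dwords):
    """CRC over a sequence of 32-bit words (assumed MSB-first bit order)."""
    crc = SATA_CRC_SEED
    for dw in dwords:
        crc ^= dw & 0xFFFFFFFF
        for _ in range(32):
            if crc & 0x80000000:
                crc = ((crc << 1) ^ SATA_CRC_POLY) & 0xFFFFFFFF
            else:
                crc = (crc << 1) & 0xFFFFFFFF
    return crc


def build_frame(payload_dwords):
    """Wrap a FIS payload as SOF, payload, CRC, EOF."""
    payload = list(payload_dwords)
    return ["SOF", *payload, sata_crc32(payload), "EOF"]


def check_frame(frame):
    """Receiver-side check: envelope present and CRC matches the payload."""
    if frame[0] != "SOF" or frame[-1] != "EOF":
        return False
    *payload, crc = frame[1:-1]
    return sata_crc32(payload) == crc


frame = build_frame([0x00358027, 0x40000000, 0, 8, 0])
print(check_frame(frame))  # True
```

A hardware implementation would normally compute the same CRC one double word per clock with a parallel XOR network rather than bit by bit.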

The Transport Layer is primarily used for processing Frame Information Structures (FISs), and its main operations are constructing FISs for transmission and decomposing received FISs. Common FIS types and their corresponding type numbers are shown in Table 1. When receiving a request to encapsulate an FIS from the Application Layer, the Transport Layer first identifies the type of FIS to be constructed, then acquires the corresponding data from the designated registers, encapsulates the data into an FIS, and sends it to the Link Layer. When receiving an FIS transmitted from the Link Layer, the Transport Layer decapsulates it to obtain the corresponding data and commands, and then notifies the Application Layer to receive the decapsulated information.
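To make the encapsulation step concrete, the sketch below builds a 20-byte Register Host-to-Device FIS (type 27h) for a 48-bit LBA command. The type codes and byte layout follow the SATA specification as commonly documented; the function names and the example values are illustrative and are not taken from the paper's implementation.

```python
import struct

# FIS type codes defined by the SATA specification
FIS_TYPES = {
    0x27: "Register - Host to Device",
    0x34: "Register - Device to Host",
    0x39: "DMA Activate",
    0x41: "DMA Setup",
    0x46: "Data",
    0x58: "BIST Activate",
    0x5F: "PIO Setup",
    0xA1: "Set Device Bits",
}

ATA_READ_DMA_EXT = 0x25   # ATA opcode
ATA_WRITE_DMA_EXT = 0x35  # ATA opcode


def build_reg_h2d_fis(command, lba, sector_count, features=0):
    """Pack a Register H2D FIS (5 double words) for a 48-bit LBA command."""
    lba_bytes = lba.to_bytes(6, "little")
    fis = struct.pack("<BBBB", 0x27, 0x80, command, features & 0xFF)  # 0x80: C bit
    fis += lba_bytes[0:3] + bytes([0x40])                    # LBA[23:0], device (LBA mode)
    fis += lba_bytes[3:6] + bytes([(features >> 8) & 0xFF])  # LBA[47:24], features[15:8]
    fis += struct.pack("<HBB", sector_count & 0xFFFF, 0, 0)  # count, ICC, control
    fis += bytes(4)                                          # reserved
    return fis


def fis_type_name(fis):
    """Decode the type of a received FIS from its first byte."""
    return FIS_TYPES.get(fis[0], "Unknown")


fis = build_reg_h2d_fis(ATA_WRITE_DMA_EXT, lba=0x12345678, sector_count=8)
print(fis.hex(), fis_type_name(fis))
```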

The Application Layer receives and parses ATA commands issued by the operating system and drives hardware operations by directly controlling the device's command block registers. Depending on how data is transferred, Application Layer commands are mainly divided into non-data commands, PIO commands, and DMA commands.

4.1.2. Implementation of SATA Controller Logic

Based on the principles of the Physical Layer, Link Layer, Transport Layer, and Application Layer of the SATA protocol, the controller was implemented in Verilog within the Vivado development environment. The internal logic framework of the SATA controller is shown in Figure 7. The MicroBlaze soft core sends control instructions and data to the SATA controller via the AXI bus.

The Physical Layer of the controller mainly consists of a GTX transceiver and an OOB control module. The physical path is established primarily by the OOB control module, which controls the transmission and reception of OOB signals. The state transition flow chart of the control module is shown in Figure 8. After the system is powered on or reset, the state machine enters the initial state OOB_IDLE. In this state, the OOB controller sends a COMRESET signal and, once transmission is completed, waits for the storage device to send a COMINIT signal to the FPGA. If the waiting time exceeds 10 ms, it returns to the initial state to resend the COMRESET signal; if the COMINIT signal is received, it proceeds to the next state. After the OOB controller sends a COMWAKE signal to the storage device, it detects whether the storage device returns a COMWAKE signal. Upon detecting the COMWAKE signal, it sends the D10.2 character and then waits up to 880 μs to receive the ALIGN primitive sent by the storage device. If the time limit is exceeded, the OOB state machine resets and returns to the initial state; if the ALIGN primitive is received, transmission rate matching is complete, after which the OOB controller sends the ALIGN primitive and starts detecting the SYNC primitive. When three consecutive back-to-back SYNC primitives are detected, it jumps to the LINKUP state. In this state, the link has been fully established, and the OOB controller pulls the PHYRDY flag high to indicate successful data path establishment. When the link has just been established, only ALIGN and SYNC primitives are transmitted alternately, with no data transmission occurring.
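The link-initialization sequence described above can be modelled behaviourally as a small state machine. The sketch below is a software model, not the Verilog design: only OOB_IDLE and LINKUP are state names taken from the text, while the intermediate state names, the `step` interface, and the event strings are assumptions made for illustration. The 10 ms and 880 μs timeouts are those stated in the text.

```python
from enum import Enum, auto


class State(Enum):
    OOB_IDLE = auto()      # named in the text
    WAIT_COMINIT = auto()  # hypothetical name
    WAIT_COMWAKE = auto()  # hypothetical name
    WAIT_ALIGN = auto()    # hypothetical name
    WAIT_SYNC = auto()     # hypothetical name
    LINKUP = auto()        # named in the text


COMINIT_TIMEOUT_US = 10_000  # 10 ms wait for COMINIT
ALIGN_TIMEOUT_US = 880       # 880 us wait for the ALIGN primitive


class OobFsm:
    def __init__(self):
        self.state = State.OOB_IDLE
        self.timer_us = 0
        self.sync_count = 0
        self.phyrdy = False

    def _goto(self, state):
        self.state = state
        self.timer_us = 0
        self.sync_count = 0

    def step(self, rx=None, dt_us=1):
        """Advance by dt_us microseconds; rx is the signal or primitive seen, if any."""
        self.timer_us += dt_us
        if self.state is State.OOB_IDLE:
            self._goto(State.WAIT_COMINIT)       # COMRESET has been sent
        elif self.state is State.WAIT_COMINIT:
            if rx == "COMINIT":
                self._goto(State.WAIT_COMWAKE)   # host sends COMWAKE
            elif self.timer_us > COMINIT_TIMEOUT_US:
                self._goto(State.OOB_IDLE)       # resend COMRESET
        elif self.state is State.WAIT_COMWAKE:
            if rx == "COMWAKE":
                self._goto(State.WAIT_ALIGN)     # host sends D10.2
        elif self.state is State.WAIT_ALIGN:
            if rx == "ALIGN":
                self._goto(State.WAIT_SYNC)      # host sends ALIGN
            elif self.timer_us > ALIGN_TIMEOUT_US:
                self._goto(State.OOB_IDLE)
        elif self.state is State.WAIT_SYNC:
            if rx == "SYNC":
                self.sync_count += 1
                if self.sync_count == 3:         # three back-to-back SYNCs
                    self._goto(State.LINKUP)
                    self.phyrdy = True
            else:
                self.sync_count = 0
        return self.state


fsm = OobFsm()
for event in (None, "COMINIT", "COMWAKE", "ALIGN", "SYNC", "SYNC", "SYNC"):
    fsm.step(event)
print(fsm.state.name, fsm.phyrdy)  # LINKUP True
```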

As shown in Figure 7, when the SATA controller controls data frame transmission, two state machines are mainly used to control each underlying module. Among them, the data frame generation state machine is primarily responsible for encapsulating data frames when receiving a transmission request from the Application Layer. The state machine determines the type of data frame to be sent (e.g., 27h, 34h, 41h, 46h) based on commands from the Application Layer. If the FIS type is 46h (i.e., data transmission), data is read from the write FIFO; for other types, data is read from registers in the Application Layer. Then, after encapsulation according to the corresponding frame structure, the CRC check code and frame envelope information are added. Once the data frame is constructed, the DATA_RDY request bit is asserted.

The data frame transmission control state machine is mainly responsible for controlling the transmission of data frames, as shown in Figure 9. After the physical path is established and the PHYRDY flag bit is detected to be pulled high, this state machine enters the idle state. When the transmission request DATA_RDY is detected to be set to 1, it controls the primitive transmitter to send the X_RDY primitive. After receiving the R_RDY primitive in response from the storage device side, it jumps to the transmission state and controls the frame transmission FIFO to send data frames. If the frame transmission FIFO is empty but the data frame transmission is not completed, it sends a HOLD primitive to control the storage device to pause data reception; when the data volume in the FIFO reaches a certain capacity, it sends the HOLD primitive again to inform the storage device to resume data reception. After data transmission is completed, it waits for feedback from the storage device and then returns to the idle state to wait for the next transmission.

During data reception, only one data reception state machine is used for control, and its flow chart is shown in Figure 10. Its main function is to control the data reception process. After detecting the successful establishment of the Physical Layer path, this state machine detects the X_RDY primitive. Upon detecting this primitive, it enters the data reception state; when the SOF primitive is detected, it starts receiving data frames. While receiving data frames, it judges the type of the received data frame and performs corresponding operations based on different types. If the receive FIFO becomes full before the data frame reception is completed, it sends a HOLD primitive to notify the device side to pause transmission; when sufficient space becomes available in the FIFO, it sends the HOLD primitive again to notify the device side to resume transmission. After completing the reception of one data frame, it performs a CRC check upon detecting the EOF primitive and uploads the check result; finally, it returns to the initial state after receiving the SYNC synchronization primitive.

The Application Layer implements a subset of the ATA command set [22] by means of a finite state machine (FSM), including commands such as ReadDMAExt, WriteDMAExt, FPDMARead, FPDMAWrite, SetFeatures, and IdentifyDevice. The command layer parses read/write requests and issues corresponding sector operation instructions to the Transport Layer. The execution of each command involves the exchange of a series of frame information structures. The MicroBlaze processor embedded in the FPGA writes commands and related parameters to the mirror registers of the Application Layer via the AXI bus, thereby controlling the operation of the underlying modules.

4.2. Block Design

The storage system supports a file system, with the MicroBlaze soft core serving as the control and scheduling module of the large-capacity storage system, through which the construction of the file system and overall control of the storage system are implemented [

23]. The implementation of the MicroBlaze module design is accomplished by invoking the embedded processor built into Xilinx. According to system requirements,

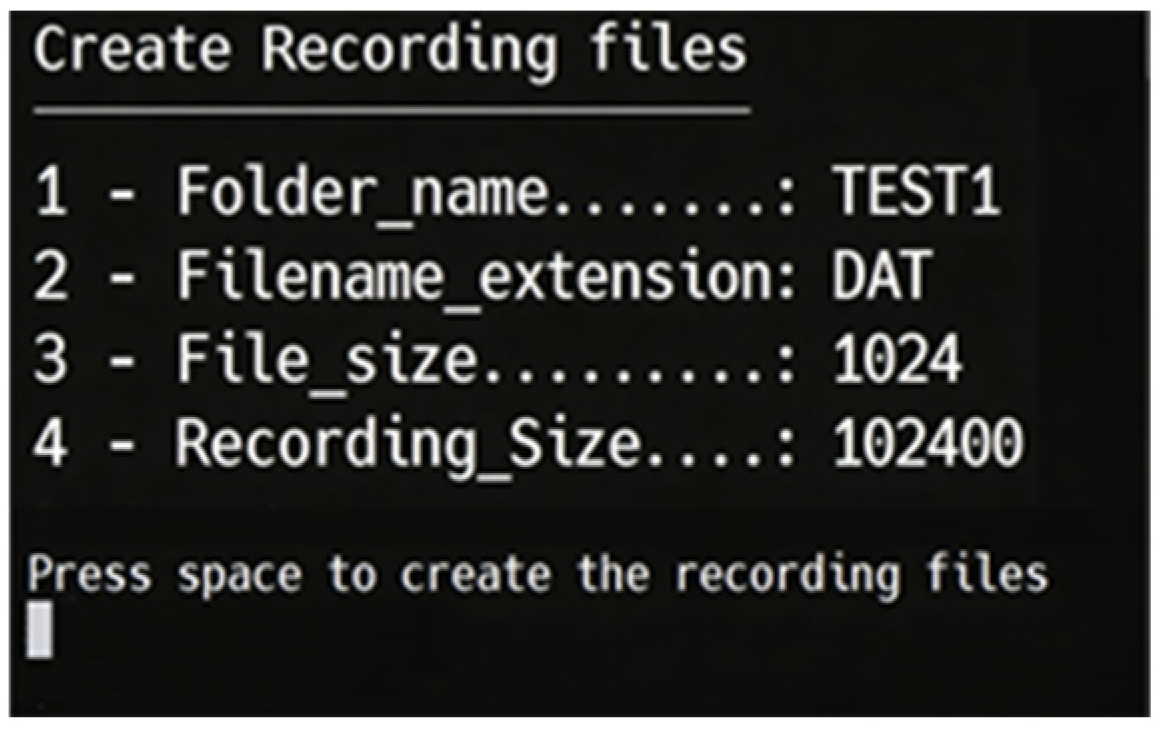

Figure 11 shows the Block Design diagram: interrupt controllers, MIG, UART, and other system-built IP cores are integrated into the MicroBlaze soft core system. During the testing phase, commands are sent via the UART serial port to test the performance of the storage system. To simplify the design of the high-speed interface between the FPGA and DDR3, the MIG core is adopted in this design; it features a standardized AXI4 interface that can be directly controlled by the MicroBlaze soft core. Meanwhile, the MicroBlaze soft core module controls the data input channel module and SATA controller module via the AXI.

4.3. File System Design

The system adopts Vitis software for the development of the MicroBlaze embedded system and implements a FAT32 file system manager capable of directly manipulating disk sectors. The adoption of the FAT32 file system in this design is primarily driven by scenario-specific requirements. Specifically, it offers robust cross-platform compatibility, allowing direct data access on diverse host systems, including Windows and Linux, as well as industrial test equipment without the need for additional drivers, which aligns with the demand for rapid analysis of flight test data. Its core logic, encompassing the management of the File Allocation Table (FAT) and directory entries, can be readily implemented on the MicroBlaze soft core without consuming excessive FPGA hardware resources. Moreover, it supports direct mapping to the Logical Block Addressing (LBA) operations of the SATA controller, thereby guaranteeing real-time storage performance under high data rate conditions. When integrated with a software-based data backup mechanism, the system is able to meet the reliability requirements of airborne applications. In contrast, while the exFAT file system is free from capacity constraints, it exhibits inadequate compatibility with legacy industrial control devices and entails high protocol complexity, which adds to the development overhead of the embedded system. Raw data storage schemes that dispense with a formal file system rely on custom parsing programs, resulting in inflexible data management and difficulties in verification using universal tools, thus limiting their engineering applicability. Meanwhile, the FAT32 file system has inherent limitations: the constraints of a maximum single-file size of 4 GB and a maximum partition size of 2 TB give rise to insufficient scalability for large-scale data storage scenarios.
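The two FAT32 limits mentioned above follow directly from 32-bit fields, and a short calculation shows why the 4 GB file limit matters at high data rates. The 300 MB/s figure below is an assumed example rate, not a measurement from this paper, and 512-byte sectors are assumed.

```python
SECTOR_BYTES = 512                          # assumed sector size
MAX_FILE_BYTES = 2**32 - 1                  # file size is a 32-bit directory-entry field
MAX_PARTITION_BYTES = 2**32 * SECTOR_BYTES  # bound from the 32-bit sector count


def seconds_until_file_full(rate_mb_s: float) -> float:
    """Time to reach the single-file size limit at a sustained write rate."""
    return MAX_FILE_BYTES / (rate_mb_s * 1e6)


print(MAX_PARTITION_BYTES // 2**40, "TiB")          # 2 TiB
print(round(seconds_until_file_full(300), 1), "s")  # 14.3 s
```

At such a rate a single file fills in well under a minute, so a recorder built on FAT32 has to roll over to a new file regularly during long acquisitions.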

The file system consists of two main components: file/directory management and FAT32 structure parsing. Structure parsing is crucial for initialization: it reads the disk's Master Boot Record (MBR) and the FAT32 boot sector, which contains the BIOS Parameter Block (BPB), to extract storage device information such as cluster size, FAT location, and the starting cluster of the root directory.
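A minimal sketch of this parsing step is shown below, using the standard MBR partition-table and FAT32 BPB field offsets. The function and dictionary key names are illustrative; the MicroBlaze firmware itself would do the equivalent on sector buffers read through the SATA controller.

```python
import struct


def first_partition_lba(mbr: bytes) -> int:
    """Return the starting LBA of the first MBR partition entry."""
    if mbr[510:512] != b"\x55\xAA":
        raise ValueError("missing MBR boot signature")
    return struct.unpack_from("<I", mbr, 446 + 8)[0]


def parse_fat32_bpb(sector: bytes, partition_lba: int) -> dict:
    """Extract the layout fields needed to locate the FAT and data area."""
    bytes_per_sector, sectors_per_cluster, reserved, num_fats = struct.unpack_from(
        "<HBHB", sector, 11)
    fat_size = struct.unpack_from("<I", sector, 36)[0]      # sectors per FAT
    root_cluster = struct.unpack_from("<I", sector, 44)[0]
    fat_lba = partition_lba + reserved
    return {
        "bytes_per_sector": bytes_per_sector,
        "sectors_per_cluster": sectors_per_cluster,
        "fat_lba": fat_lba,
        "data_lba": fat_lba + num_fats * fat_size,
        "root_cluster": root_cluster,
    }


def cluster_to_lba(info: dict, cluster: int) -> int:
    """Data clusters are numbered from 2."""
    return info["data_lba"] + (cluster - 2) * info["sectors_per_cluster"]
```

Once `data_lba` and `sectors_per_cluster` are known, every later file operation reduces to LBA arithmetic of the kind in `cluster_to_lba`.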

The file/directory management function finds an empty slot in the directory sector (an entry whose first byte is 0x00 or 0xE5) and writes a 32-byte directory entry containing the file name, attributes, starting cluster number, and file size. This information enables precise positioning of the corresponding file. When data is stored in a file, it is written sequentially to the data area according to the corresponding file information. The content composition of the mSATA drive is shown in Figure 12.
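The directory-entry handling described above can be sketched as follows, using the standard FAT32 short (8.3) directory entry layout. Timestamps are left at zero for brevity, and the function names and the example file name are illustrative.

```python
import struct

ENTRY_SIZE = 32
ATTR_ARCHIVE = 0x20


def find_free_slot(dir_sector: bytes) -> int:
    """Return the byte offset of the first free entry (0x00 or 0xE5), or -1."""
    for offset in range(0, len(dir_sector), ENTRY_SIZE):
        if dir_sector[offset] in (0x00, 0xE5):
            return offset
    return -1


def make_dir_entry(name: str, ext: str, first_cluster: int, size: int,
                   attr: int = ATTR_ARCHIVE) -> bytes:
    """Pack a 32-byte short-name directory entry (timestamps zeroed)."""
    short_name = name.upper().ljust(8)[:8] + ext.upper().ljust(3)[:3]
    return struct.pack(
        "<11sB8xHHHHI",
        short_name.encode("ascii"),
        attr,
        (first_cluster >> 16) & 0xFFFF,  # starting cluster, high word
        0,                               # write time
        0,                               # write date
        first_cluster & 0xFFFF,          # starting cluster, low word
        size,                            # file size in bytes
    )


sector = bytearray(512)
slot = find_free_slot(sector)
sector[slot:slot + ENTRY_SIZE] = make_dir_entry("flight01", "bin", 5, 4096)
print(slot, bytes(sector[:11]))
```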

The operations of the file system are mainly implemented by converting logical requests (reading or writing specific LBAs) into read/write operations on the memory-mapped registers of the SATA controller's Application Layer. By writing the parameters of a file system operation to the memory-mapped addresses of the Application Layer, such as the LBA register and command register, the SATA controller is driven to perform the corresponding operation on the mSATA solid-state drive.
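The mapping from a file system request to register writes can be illustrated with a mock bus. Everything about the register map below is hypothetical: the base address, the register offsets, and the convention that writing the command register starts the operation are assumptions for illustration only, since the paper does not list them. The opcode is the standard ATA WRITE DMA EXT code.

```python
SATA_BASE = 0x44A00000  # hypothetical AXI base address
REG_LBA_LO = 0x00       # hypothetical offsets
REG_LBA_HI = 0x04
REG_COUNT = 0x08
REG_CMD = 0x0C

ATA_WRITE_DMA_EXT = 0x35  # standard ATA opcode


class MockAxiBus:
    """Stands in for memory-mapped register access from the soft core."""

    def __init__(self):
        self.regs = {}
        self.log = []

    def write32(self, addr, value):
        self.regs[addr] = value & 0xFFFFFFFF
        self.log.append((addr, value & 0xFFFFFFFF))

    def read32(self, addr):
        return self.regs.get(addr, 0)


def issue_command(bus, opcode, lba, sector_count):
    """Write parameters first, then the command register (assumed to start the op)."""
    bus.write32(SATA_BASE + REG_LBA_LO, lba & 0xFFFFFFFF)
    bus.write32(SATA_BASE + REG_LBA_HI, (lba >> 32) & 0xFFFF)
    bus.write32(SATA_BASE + REG_COUNT, sector_count)
    bus.write32(SATA_BASE + REG_CMD, opcode)


def write_cluster(bus, data_lba, sectors_per_cluster, cluster):
    """Translate a cluster write into an LBA command."""
    lba = data_lba + (cluster - 2) * sectors_per_cluster
    issue_command(bus, ATA_WRITE_DMA_EXT, lba, sectors_per_cluster)
    return lba


bus = MockAxiBus()
print(write_cluster(bus, data_lba=4080, sectors_per_cluster=8, cluster=3))  # 4088
```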

To prevent irreversible damage to file system data caused by sudden system power failures, this system performs backup processing on the overall file system information, file information descriptor structure table, and FAT stored in the reserved area and FAT area. The backup data is stored immediately after the corresponding original data blocks. Each time the system powers on and mounts the file system, it reads the data from the reserved area and FAT area on the hard disk for verification and initialization. If data corruption or unavailability is detected, the backup data will be read and used to replace the damaged original data. The detailed workflow is illustrated in Figure 13.

When the system mounts the file system, it first reads the information from the reserved area and FAT area on the hard disk into the local storage structure and then performs the following checks. Starting from the first free cluster number recorded in the overall file system information, it traverses the free cluster linked list in the FAT to verify that the number of free clusters matches the recorded value and that each entry in the list is marked as free. It also verifies that the total number of valid files matches the file count recorded in the overall file system information. If all checks pass, the original data on the hard disk is not damaged; the system then overwrites the backup tables with the original data and completes the file system mounting. If any check fails, the original data is deemed corrupted, and the system reads the backup data of the reserved area and FAT area from the hard disk and applies the same verification process to it. If the backup data passes the verification, it overwrites the original data. If the backup data also fails, the file system data on the hard disk is completely damaged, in which case the hard disk can only be formatted and the file system reinitialized.
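The mount-time decision logic can be sketched as below. This is a simplified model under stated assumptions: the metadata is represented as a Python dictionary, and the free-cluster check counts free FAT entries instead of traversing the free cluster linked list described in the text. All names and return strings are illustrative.

```python
import copy


def verify(image: dict) -> bool:
    """Simplified consistency check: free-cluster count and file count match."""
    free_entries = sum(1 for entry in image["fat"] if entry == 0)
    return (free_entries == image["free_count"]
            and len(image["files"]) == image["file_count"])


def mount(disk: dict) -> str:
    """disk holds 'primary' and 'backup' metadata images; returns the action taken."""
    if verify(disk["primary"]):
        disk["backup"] = copy.deepcopy(disk["primary"])   # refresh the backup
        return "mounted"
    if verify(disk["backup"]):
        disk["primary"] = copy.deepcopy(disk["backup"])   # restore from backup
        return "restored_from_backup"
    return "format_required"


good = {"fat": [1, 1, 0, 0], "free_count": 2, "files": ["A.BIN"], "file_count": 1}
corrupt = {"fat": [1, 1, 0, 0], "free_count": 3, "files": ["A.BIN"], "file_count": 1}

disk = {"primary": copy.deepcopy(corrupt), "backup": copy.deepcopy(good)}
print(mount(disk))  # restored_from_backup
```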

4.4. On-Chip Resource Utilization

The on-chip resource utilization of this design, shown in Figure 14, is moderate, with no risk of resource bottlenecks. Among the core logic resources, the utilization rate of look-up tables (LUTs) is 12.82%, and that of registers is 5.55%. Among the storage resources, the utilization rate of LUTRAM is 6.57%, and that of block RAM (BRAM) is 14.61%. Among the interface and clock resources, the utilization rate of input/output (I/O) interfaces is 16.00%, that of high-speed serial transceivers is 12.50%, that of mixed-mode clock managers (MMCMs) is 30.00%, and that of phase-locked loops (PLLs) is 10.00%. The utilization rate of digital signal processors (DSPs) is only 0.48%.