1. Introduction

Cyberbullying and hateful content on social media often exhibit multi-modal and highly context-dependent characteristics, where visual and textual cues interact and semantics are frequently implicit, metaphorical, or encoded, posing significant challenges for automated detection. The Hateful Memes Challenge formally introduced the task of multi-modal hate speech detection, providing a dataset and evaluation protocol that clarify its difficulties and assessment criteria [1]. The subsequent competition report systematically summarized participating systems, data splits, and evaluation practices, offering empirical conclusions on research directions and common pitfalls [2]. On a larger scale Twitter corpus reflecting real platform styles, MMHS150K organizes images, text, and weak labels, offering a reproducible experimental testbed for cross-platform or cross-domain research [3]. Concurrently, work on explainable hate speech corpora highlights practical difficulties arising from annotation disagreements and semantic complexity [4].

Memes and short texts prevalent on major social platforms are characterized by substantial cross-platform stylistic variation, subtle expression, sparse labeling, and high noise, causing models trained on a single dataset to struggle with generalization to other platforms or temporal contexts [1]. This distribution shift manifests at both the visual level (composition, filters, fonts) and the linguistic level (slang, metaphors, cultural context), further complicating cross-domain adaptation [3]. Effective detectors must therefore integrate visual and textual information, cope with noisy and weak supervision, and remain robust as platforms and meme conventions evolve.

In multi-modal representation learning, Radford et al. [5] proposed CLIP, which constructs a transferable cross-modal semantic space through image–text contrastive learning. Jia et al. [6] introduced ALIGN, leveraging large-scale noisy text supervision for vision–language representation learning and emphasizing the importance of scale and robustness. Kim et al. [7] designed ViLT, which removes convolutional or region-based supervision on the visual side and achieves early fusion of image and text directly within a Transformer architecture, significantly simplifying the model structure. Subsequently, Li et al. [8] introduced ALBEF, which incorporates momentum distillation under the align before fuse principle to enhance cross-modal representations, and Li et al. [9] proposed BLIP-2, an efficient cross-modal transfer paradigm that freezes the visual encoder and language model. Zhai et al. [10] proposed SigLIP, replacing the softmax contrastive paradigm with a sigmoid loss to improve optimization stability and performance. In retrieval-augmented vision–language pretraining, Hu et al. [11] integrated multi-source multi-modal knowledge memory with model training, explicitly utilizing external knowledge bases to improve downstream performance. On the text and retrieval side, Karpukhin et al. [12] designed the Dense Passage Retrieval framework, and Lewis et al. [13] integrated retrieval into the downstream inference pipeline with Retrieval-Augmented Generation. In research more closely aligned with meme recognition, retrieval-guided contrastive learning has been shown to yield significant gains on the Hateful Memes dataset [14].

In parameter-efficient fine-tuning, BitFit demonstrated that updating only bias terms can achieve performance close to full fine-tuning [15]. LoRA injects task-specific capabilities via low-rank adaptation matrices [16], while AdapterFusion enables task composition and transfer through parameterized side paths [17]. More generally, systematic surveys on vision–language pretraining summarize recent trends in modeling, training, and adaptation [18].

Despite these advances, state-of-the-art systems for multi-modal hate speech and cross-platform cyberbullying detection often rely on extremely large backbones (e.g., ViT-L/H scale models), full parameter fine-tuning, online retrieval, or model ensembles. This directly leads to high training costs, complex deployment pipelines, and significant online latency or external dependencies [5,6,9,11,14]. Concurrently, label sparsity and noise in meme-style scenarios can exacerbate risks such as overfitting or unstable threshold behavior and can degrade knowledge distillation if teacher predictions are not calibrated or filtered, leading to calibration bias and poor minority class performance [4,19,20]. In practice, platforms need detectors that (i) are robust under cross-domain distribution shifts, (ii) are parameter and data efficient, and (iii) can be deployed as a single, self-contained model without online retrieval or ensembles at inference.

To address these challenges, we propose a lightweight, parameter-efficient transfer learning framework for multi-modal cyberbullying and hateful content detection. First, following the “Don’t Stop Pretraining” principle [21], we perform domain-adaptive pretraining of a compact ViLT backbone [7] on in-domain meme-like image–text corpora such as MMHS150K [3], so that the student model is aligned with platform-specific meme distributions before supervised fine-tuning on Hateful Memes [1]. Second, we employ a BitFit-style parameter-efficient fine-tuning strategy that updates only bias terms, a small subset of normalization parameters, and the classification head, thereby keeping the inference computational graph unchanged and drastically reducing the proportion of trainable parameters and peak GPU memory usage [15]. Third, we construct a stronger teacher model using pretrained text and CLIP-based image–text encoders [5], which generates temperature-scaled soft labels offline for training and validation samples. The student is trained with a combination of supervised loss and noise-aware knowledge distillation, using only high-confidence teacher predictions to mitigate negative transfer caused by noisy labels. Teacher scoring and any retrieval infrastructure are used only to generate offline soft labels; the deployed student requires only a single forward pass at test time.

The main contributions of this paper are summarized as follows:

We propose a parameter-efficient cross-platform multi-modal transfer learning framework for cyberbullying and hateful content detection that combines domain-adaptive pretraining, BitFit-style fine-tuning, and confidence-gated distillation, without altering the inference computation graph and while substantially lowering training and deployment costs compared to full-parameter and retrieval-augmented systems.

We conduct an empirical study on the Hateful Memes benchmark to analyze the impact of domain-adaptive pretraining and noise-aware distillation on dev-set AUROC and F1 under realistic label sparsity and noise; we additionally report an auxiliary text-only experiment on IMDB to demonstrate that the same deployment-aware training recipe remains applicable when non-text modalities are unavailable.

We implement our framework such that teacher prediction and any retrieval components are used entirely offline, and student inference consists of a single forward pass with no online retrieval or ensembles. We report efficiency metrics including the proportion of trainable parameters and peak training memory, and discuss deployment considerations for resource constrained environments.

Section 2 reviews related work on multi-modal toxicity detection, adaptation, retrieval-augmented modeling, and parameter-efficient fine-tuning.

Section 3 details our framework, including domain-adaptive pretraining, BitFit and LayerNorm fine-tuning, and confidence-gated distillation.

Section 4 presents datasets, metrics, implementation details, ablations, and results.

Section 5 discusses limitations, ethics, and deployment.

Section 6 concludes and outlines future directions.

3. Proposed Method

We tackle multi-modal cyberbullying detection as a binary classification task on image–text memes. Given an image $I$, its associated textual content $T$ (caption and OCR text), and a binary label $y \in \{0, 1\}$, the goal is to predict whether the meme contains cyberbullying or hateful content. Our method consists of a single lightweight multi-modal student model and two transfer mechanisms: (i) cross-platform domain-adaptive pretraining on MMHS150K, and (ii) risk-aware retrieval-augmented multi-modal knowledge distillation (RAMM-KD) from a stronger teacher. During deployment, only the student model is used, which keeps the system compact in terms of storage and inference cost.

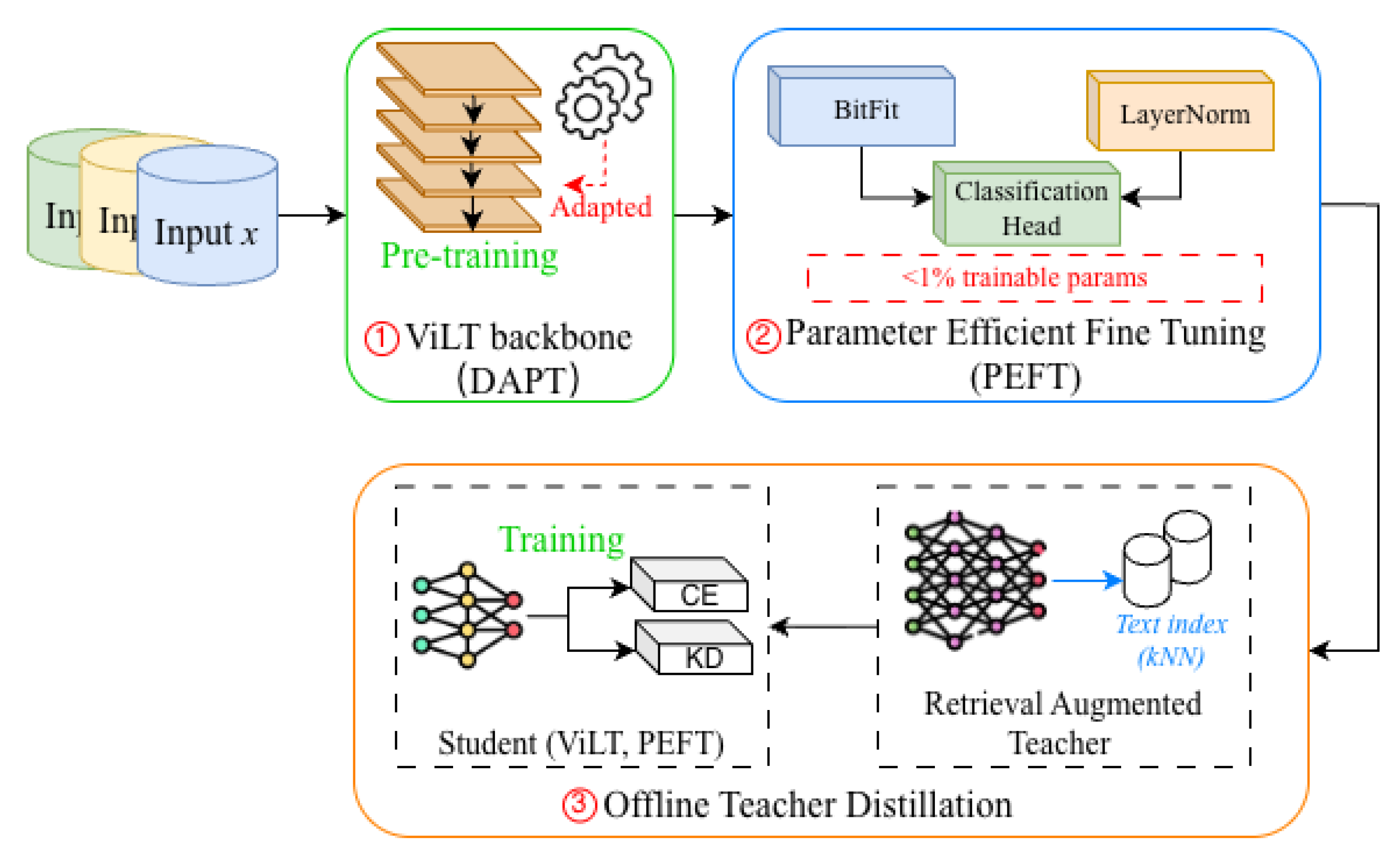

As shown in Figure 1, a compact ViLT backbone is first adapted to meme-like distributions with domain-adaptive pretraining (DAPT). The resulting checkpoint is then fine-tuned on Hateful Memes and text-only tasks using a parameter-efficient scheme that updates only bias terms, a small subset of LayerNorm parameters, and the classification head. Finally, a retrieval-augmented teacher, used only offline, produces temperature-scaled and confidence-gated soft labels for knowledge distillation. At inference time, only the lightweight student is deployed, with no retrieval or teacher dependencies.

3.1. Multimodal Student Model

We adopt ViLT as the student backbone because it provides an early-fusion vision–language Transformer that directly operates on image patches and text tokens, avoiding external region detectors and keeping the inference graph simple. Compared with heavier region-based VLP pipelines or dual-encoder designs that often require extra fusion components, ViLT offers a compact accuracy–efficiency trade-off that aligns with deployment constraints. For each meme, the image $I$ is resized to a fixed resolution and divided into non-overlapping patches, which are linearly projected into visual tokens. The textual content $T$ is constructed by concatenating the meme caption and OCR-extracted overlaid text, and tokenized using a WordPiece tokenizer with a fixed length budget of $N_t$ tokens.

We then form a joint sequence

$$X = [\,x_{\mathrm{cls}};\; t_1, \dots, t_{N_t};\; v_1, \dots, v_{N_v}\,],$$

where $N_v$ denotes the number of visual tokens. Standard token, modality type and positional embeddings are added,

$$H^{(0)} = E_{\mathrm{tok}}(X) + E_{\mathrm{type}}(X) + E_{\mathrm{pos}}(X).$$

The encoder consists of $L$ Transformer blocks with multi-head self-attention and position-wise feed-forward layers. At the $\ell$-th layer, hidden states are updated as

$$\tilde{H}^{(\ell)} = H^{(\ell-1)} + \mathrm{MSA}\big(\mathrm{LN}(H^{(\ell-1)})\big), \qquad H^{(\ell)} = \tilde{H}^{(\ell)} + \mathrm{FFN}\big(\mathrm{LN}(\tilde{H}^{(\ell)})\big),$$

where $\mathrm{MSA}$ denotes multi-head self-attention, $\mathrm{FFN}$ the feed-forward network, and $\mathrm{LN}$ layer normalization.

We use the final hidden state of the special token $x_{\mathrm{cls}}$ as the joint representation

$$h = H^{(L)}_{\mathrm{cls}}.$$

A two-layer MLP classifier produces the logit

$$z = W_2\, \phi(W_1 h + b_1) + b_2$$

and probability

$$p = \sigma(z),$$

where $\phi$ inside the MLP is the GELU activation, and the outer $\sigma$ is the sigmoid function.

To make the student model lightweight and easy to deploy, we follow the BitFit philosophy and only update bias parameters and LayerNorm affine parameters during all task-specific training stages. Let $\Theta$ denote all parameters of the ViLT backbone. The trainable parameter subset is

$$\Theta_{\mathrm{train}} = \{\theta \in \Theta : \theta \text{ is a bias term or a LayerNorm affine parameter}\},$$

which accounts for less than 1% of the full model parameters. The remaining parameters are kept frozen. This design reduces task-specific storage and memory footprint while preserving the representational power of the pretrained ViLT backbone and keeping the inference computation graph unchanged.
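As a rough illustration of the selection rule described above, the following sketch picks trainable parameters by name from a table of parameter sizes. It is not the authors' code: the function `select_trainable`, the `classifier.` prefix, the name patterns, and the toy parameter names are all assumptions made for the example, and a real model would expose its own naming scheme.

```python
from typing import Dict, List, Tuple


def select_trainable(param_sizes: Dict[str, int],
                     head_prefix: str = "classifier.") -> Tuple[List[str], float]:
    """Return the names selected for training and their share of all parameters.

    A parameter is kept trainable if it is a bias term, belongs to a LayerNorm
    module, or belongs to the classification head (assumed naming convention).
    """
    trainable = []
    for name in param_sizes:
        is_bias = name.endswith(".bias")
        is_layernorm = "layernorm" in name.lower()
        is_head = name.startswith(head_prefix)
        if is_bias or is_layernorm or is_head:
            trainable.append(name)
    total = sum(param_sizes.values())
    share = sum(param_sizes[n] for n in trainable) / total
    return trainable, share


# Toy parameter table with hypothetical names and sizes (one Transformer block).
toy_params = {
    "encoder.layer.0.attention.query.weight": 768 * 768,
    "encoder.layer.0.attention.query.bias": 768,
    "encoder.layer.0.layernorm_before.weight": 768,
    "encoder.layer.0.layernorm_before.bias": 768,
    "encoder.layer.0.mlp.weight": 768 * 3072,
    "encoder.layer.0.mlp.bias": 3072,
}

trainable_names, trainable_share = select_trainable(toy_params)
print(f"{len(trainable_names)} trainable tensors, share = {trainable_share:.4%}")
```

In a framework such as PyTorch, the same rule would typically be applied by toggling the gradient flag of each named parameter; the point of the sketch is only that the selection is a simple name-based filter that leaves the forward computation untouched.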

The DAPT stage is also illustrated in Figure 2. Meme-style image–text pairs from MMHS150K are fed into a ViLT masked language modeling (MLM) head: for each example, a subset of text tokens is randomly masked, and the model is trained to predict the masked tokens given the image and the remaining text. Formally, for an input $(I, T)$, let $\tilde{T}$ and $\mathcal{M}$ denote the corrupted text sequence and the set of masked positions, and let $t_m$ be the original token at position $m \in \mathcal{M}$. The MLM loss is

$$\mathcal{L}_{\mathrm{MLM}} = -\frac{1}{|\mathcal{M}|} \sum_{m \in \mathcal{M}} \log p_\theta\big(t_m \mid I, \tilde{T}\big).$$

Unlike image–text matching (ITM) or image–text contrastive (ITC) objectives, our DAPT phase focuses on token-level reconstruction, which is simple to implement and empirically sufficient to adapt the backbone to meme-like language and visual contexts before supervised fine-tuning.

3.2. Cross-Platform Domain-Adaptive Pretraining

The Hateful Memes dataset is limited in both size and stylistic diversity. To expose the student model to a broader range of hateful and non-hateful memes before supervised training, we perform cross-platform domain-adaptive pretraining on the MMHS150K dataset collected from Twitter [3]. MMHS150K provides large-scale image–text pairs with weak, hate-related labels and reflects real-world meme styles on a different platform.

We adopt a masked language modeling (MLM) objective for domain-adaptive pretraining. For each meme $(I, T)$ from MMHS150K, we randomly mask a subset of text tokens in $T$ to obtain a corrupted sequence $\tilde{T}$ and a mask index set $\mathcal{M}$. The ViLT backbone encodes the image and corrupted text jointly, and an MLM head predicts the original tokens at the masked positions. Let $t_m$ be the original token at position $m \in \mathcal{M}$, and $p_\theta(\cdot \mid I, \tilde{T})$ the predicted distribution over the vocabulary. The DAPT loss is

$$\mathcal{L}_{\mathrm{DAPT}} = -\frac{1}{|\mathcal{M}|} \sum_{m \in \mathcal{M}} \log p_\theta\big(t_m \mid I, \tilde{T}\big).$$

We optimize for several epochs using the same ViLT backbone as in the student model. After cross-platform domain-adaptive pretraining on MMHS150K, we obtain a ViLT checkpoint that has been adapted to meme-like image–text distributions. This checkpoint is used to initialize the student model in the subsequent distillation-based training on the Hateful Memes dataset.
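The masking and loss computation described in this subsection can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the training code: the helper names `mask_tokens` and `mlm_loss`, the `[MASK]` placeholder string, and the rule of forcing at least one masked position are choices made for the example, and the model's predicted probabilities are passed in as plain numbers rather than produced by ViLT.

```python
import math
import random
from typing import List, Sequence, Tuple

MASK = "[MASK]"  # placeholder token string (assumption for this sketch)


def mask_tokens(tokens: Sequence[str], prob: float,
                rng: random.Random) -> Tuple[List[str], List[int]]:
    """Replace each token by MASK with probability `prob`.

    Returns the corrupted sequence and the list of masked positions.
    """
    corrupted = list(tokens)
    positions = []
    for i in range(len(tokens)):
        if rng.random() < prob:
            corrupted[i] = MASK
            positions.append(i)
    # Guarantee at least one target so the loss is defined (sketch-only choice).
    if not positions and tokens:
        i = rng.randrange(len(tokens))
        corrupted[i] = MASK
        positions.append(i)
    return corrupted, positions


def mlm_loss(target_probs: Sequence[float]) -> float:
    """Mean negative log-likelihood of the original tokens at masked positions."""
    return -sum(math.log(p) for p in target_probs) / len(target_probs)


rng = random.Random(0)
tokens = "when you finally see it".split()
corrupted, positions = mask_tokens(tokens, prob=0.15, rng=rng)
print(corrupted, positions)

# Suppose the model assigns these probabilities to the true tokens.
print(mlm_loss([0.5, 0.25]))
```

The sketch omits details that a full implementation usually handles, such as skipping special tokens and conditioning the predictions on image patches.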

3.3. Retrieval-Augmented Teacher and Risk-Aware Distillation

The student model is smaller and more parameter efficient than recent state-of-the-art multi-modal models. To further enhance its performance without increasing inference cost, we distill knowledge from a stronger teacher. As illustrated in Figure 3, teacher models and any retrieval infrastructure are used only offline to generate soft labels; at test time, only the student is used.

3.3.1. Teacher Model and Offline Soft Labels

We build a teacher ensemble on top of pretrained text and CLIP-L/14 vision-language encoders [5]. A text classifier is trained on meme captions and OCR text, and an alignment head is trained on CLIP image and text embeddings for Hateful Memes style inputs. At training time, the two teacher components produce logits, which are averaged to obtain a single teacher logit vector for each sample. After temperature scaling, we derive a soft label distribution and a scalar confidence score.

Concretely, let $z_i^{\mathrm{tea}}$ denote the ensembled teacher logits for meme $i$, and $\tau > 0$ a temperature hyperparameter. We define

$$p_i^{\mathrm{tea}} = \mathrm{softmax}\big(z_i^{\mathrm{tea}} / \tau\big)$$

and take the maximum softmax probability as a confidence score

$$c_i = \max_k \, p_{i,k}^{\mathrm{tea}}.$$

All pairs $(p_i^{\mathrm{tea}}, c_i)$ for training and development samples are precomputed and stored in JSONL files. This design decouples teacher computation from student training and avoids any online teacher or retrieval overhead.
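To make the offline export concrete, the sketch below averages two teacher logit vectors, applies a temperature-scaled softmax, and serializes the soft label with its confidence as one JSON line. The record fields (`id`, `soft_label`, `confidence`), the function names, and the example logits are hypothetical; the actual file schema is not specified in the text.

```python
import json
import math
from typing import Dict, List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def teacher_record(sample_id: str, text_logits: Sequence[float],
                   clip_logits: Sequence[float], temperature: float) -> Dict:
    """Average the two teacher heads, then derive soft label and confidence."""
    avg_logits = [(a + b) / 2.0 for a, b in zip(text_logits, clip_logits)]
    probs = softmax(avg_logits, temperature)
    return {"id": sample_id, "soft_label": probs, "confidence": max(probs)}


# Example logits are made up; index 0 = non-hateful, index 1 = hateful.
record = teacher_record("meme-0001", [0.2, 1.4], [-0.4, 2.0], temperature=2.0)
jsonl_line = json.dumps(record)
print(jsonl_line)
```

Writing one such line per sample yields a JSONL file that the student training loop can read without ever loading the teacher.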

3.3.2. Risk-Aware Confidence Gating

Teacher predictions are not uniformly reliable; low-confidence cases are likely to be ambiguous or out of distribution. We introduce a risk-aware gating mechanism based on the teacher’s confidence. For sample $i$ with teacher confidence $c_i$, we define a binary gate

$$g_i = \mathbb{1}\,[\,c_i > \gamma\,],$$

where $\gamma$ is a confidence threshold. Only samples with $g_i = 1$ participate in the distillation loss. This risk-aware gating reduces the negative impact of uncertain teacher predictions.

3.3.3. Logit-Level Distillation

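Let $z_i^{\mathrm{stu}}$ denote the student logits and $z_i^{\mathrm{tea}}$ the teacher logits for sample $i$. With temperature $\tau$, we define the temperature-scaled student and teacher distributions as

$$p_i^{\mathrm{stu}} = \mathrm{softmax}\big(z_i^{\mathrm{stu}} / \tau\big), \qquad p_i^{\mathrm{tea}} = \mathrm{softmax}\big(z_i^{\mathrm{tea}} / \tau\big).$$

The logit-level distillation loss is the gated KL divergence from teacher to student,

$$\mathcal{L}_{\mathrm{KD}} = \frac{\tau^2}{N} \sum_{i=1}^{N} g_i \, \mathrm{KL}\big(p_i^{\mathrm{tea}} \,\|\, p_i^{\mathrm{stu}}\big),$$

where $g_i \in \{0, 1\}$ is the confidence gate of sample $i$, $N$ is the number of training examples and the factor $\tau^2$ follows standard knowledge distillation practice to keep gradient magnitudes comparable across temperatures. In practice we also apply an epoch-dependent ramp-up factor to the distillation weight (see Section 3.4), so that the student first fits the ground truth labels and then increasingly aligns with the teacher on high-confidence examples.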
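A minimal sketch of the gated, temperature-scaled distillation loss follows. It assumes two-class logit vectors, a strict "confidence exceeds threshold" gate, and normalization by the total number of examples; the names `gated_kd_loss`, `temperature`, and `threshold` are illustrative rather than taken from the authors' implementation.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float) -> List[float]:
    """Numerically stable softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def gated_kd_loss(student_logits: Sequence[Sequence[float]],
                  teacher_logits: Sequence[Sequence[float]],
                  confidences: Sequence[float],
                  temperature: float, threshold: float) -> float:
    """Temperature^2-scaled KL(teacher || student), averaged over all examples.

    Examples whose teacher confidence does not exceed `threshold` contribute 0.
    """
    n = len(student_logits)
    total = 0.0
    for z_s, z_t, conf in zip(student_logits, teacher_logits, confidences):
        if conf <= threshold:  # gate closed: skip uncertain teacher prediction
            continue
        p_s = softmax(z_s, temperature)
        p_t = softmax(z_t, temperature)
        total += sum(pt * math.log(pt / ps) for pt, ps in zip(p_t, p_s) if pt > 0)
    return (temperature ** 2) * total / n


student = [[0.1, 0.3], [1.0, -1.0]]
teacher = [[-0.5, 1.5], [0.2, 0.1]]
conf = [0.88, 0.52]  # second teacher prediction is too uncertain to be used
print(gated_kd_loss(student, teacher, conf, temperature=2.0, threshold=0.8))
```

Only the first example contributes here, because the second falls below the threshold; raising the threshold further would zero out the distillation term entirely.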

3.4. Training Objective and Inference

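Let $\mathcal{D} = \{(I_i, T_i, y_i)\}_{i=1}^{N}$ be the Hateful Memes training set. We first compute a supervised loss to handle label imbalance. Let $w_{+}$ and $w_{-}$ be the positive and negative class weights. The supervised loss is

$$\mathcal{L}_{\mathrm{sup}} = -\frac{1}{N} \sum_{i=1}^{N} \Big[\, w_{+}\, y_i \log p_i + w_{-}\, (1 - y_i) \log (1 - p_i) \,\Big],$$

where $p_i$ is the student’s predicted probability for sample $i$.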

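The overall training objective combines the supervised loss $\mathcal{L}_{\mathrm{sup}}$ with the confidence-gated distillation loss $\mathcal{L}_{\mathrm{KD}}$. Let $\lambda$ be a global distillation weight and $\alpha(e) \in [0, 1]$ an epoch-dependent ramp-up factor at epoch $e$ (e.g., linearly increasing from 0 to 1 over a fixed number of warmup epochs). The total loss at epoch $e$ is

$$\mathcal{L}(e) = \mathcal{L}_{\mathrm{sup}} + \lambda\, \alpha(e)\, \mathcal{L}_{\mathrm{KD}}.$$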

This schedule encourages the student to first learn from ground truth labels and then gradually absorb the teacher’s soft label information on confident examples.
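The combination of a class-weighted supervised term with a ramped distillation term can be sketched as below. The linear ramp is the example given in the text; the function names, the clipping constant `eps`, and the numeric values are assumptions chosen for illustration.

```python
import math
from typing import Sequence


def weighted_bce(probs: Sequence[float], labels: Sequence[int],
                 w_pos: float, w_neg: float, eps: float = 1e-7) -> float:
    """Class-weighted binary cross-entropy averaged over the batch."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        total += -(w_pos * y * math.log(p) + w_neg * (1 - y) * math.log(1.0 - p))
    return total / len(probs)


def ramp_up(epoch: int, warmup_epochs: int) -> float:
    """Linear ramp from 0 to 1 over `warmup_epochs`, then constant at 1."""
    if warmup_epochs <= 0:
        return 1.0
    return min(1.0, max(0.0, epoch / warmup_epochs))


def total_loss(sup: float, kd: float, kd_weight: float,
               epoch: int, warmup_epochs: int) -> float:
    """Supervised loss plus ramped, weighted distillation loss."""
    return sup + kd_weight * ramp_up(epoch, warmup_epochs) * kd


sup = weighted_bce([0.8, 0.3, 0.6], [1, 0, 0], w_pos=2.0, w_neg=1.0)
for epoch in range(0, 7, 2):
    print(epoch, round(total_loss(sup, kd=0.4, kd_weight=0.5,
                                  epoch=epoch, warmup_epochs=4), 4))
```

At epoch 0 the objective reduces to the supervised term alone, and after the warmup the distillation term enters at its full weight.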

It is worth noting that label imbalance is a common challenge in cyberbullying and hate speech detection tasks. Beyond class-weighted supervised objectives, prior studies have explored a range of imbalance-aware learning strategies, particularly generative modeling approaches. Existing surveys indicate that, after transforming non-numerical social media content into numerical representations, generative models such as Boltzmann Machines, Deep Belief Networks, Deep Autoencoders, and Generative Adversarial Networks (GANs) have been investigated to synthesize additional training samples and alleviate skewed label distributions [26]. Among them, GAN-based augmentation methods [27] are commonly used to enrich minority class samples in scenarios with limited or highly imbalanced supervision.

In this work, we intentionally adopt a class-weighted supervised loss as a lightweight and stable mechanism to address label imbalance, in order to maintain compatibility with confidence-gated knowledge distillation and parameter-efficient fine-tuning. While more advanced imbalance handling techniques are not incorporated into the current training objective, they represent a complementary direction that can be integrated into our framework in future extensions, particularly for settings with more severe imbalance or scarce annotations.

At inference time, only the student model is used. Given a new meme $(I, T)$, the model processes it through the ViLT backbone and classifier to produce the hatefulness probability $p$. Retrieval, the teacher model, and all distillation-related computations are completely removed, so the deployment cost is identical to that of a single ViLT-B model fine-tuned with BitFit and LayerNorm updates.

4. Experiments and Results

In this section, we describe the experimental setup, results, and analysis of our proposed method. We first introduce the datasets, hardware and software environments, and evaluation metrics. Then, we present the experimental results and analyze the performance of our method compared to full parameter fine-tuning and prior work.

4.1. Experimental Setup

4.1.1. Datasets

To evaluate the proposed framework, we conduct experiments on one multi-modal benchmark and one text-only cross-dataset transfer pair.

Our primary evaluation is performed on the Hateful Memes dataset [1,2], a challenging multi-modal benchmark specifically designed for hate speech detection in internet memes. This dataset contains carefully curated image–text pairs where each instance consists of a visual meme and its corresponding textual content. The annotation scheme follows a binary classification paradigm, requiring models to determine whether each meme conveys hateful meaning through the combination of visual and textual modalities. The dataset’s inherent complexity stems from the nuanced interplay between images and text, where hateful meaning often emerges from their combination rather than from either modality in isolation.

To complement the multimodal evaluation and to isolate the effect of our deployment-aware training recipe, we report auxiliary text-only experiments on additional classification benchmarks, including IMDB movie reviews and TREC question classification. These unimodal experiments serve two purposes: (i) to assess the behavior of our parameter-efficient adaptation strategy (PEFT) and offline distillation when non-text modalities are unavailable, and (ii) to study cross-dataset transfer within the text-only pipeline. The IMDB dataset contains polarized movie reviews for sentiment analysis, while the TREC dataset consists of questions annotated with fine-grained question types. We report in-domain performance on each dataset and evaluate cross-dataset generalization by training on one dataset and testing on the other.

4.1.2. Domain-Adaptive Pretraining Setup

For domain-adaptive pretraining, we use masked language modeling with a masking probability of 0.15. Text is tokenized by the ViLT processor with a maximum length of 40 tokens, and images are resized to 256 pixels on the shorter side (as specified in our experimental configuration). We apply minimal corpus preprocessing beyond filtering pairs with missing or corrupted images, and rely on the standard ViLT preprocessing and tokenization pipeline for consistency.

4.1.3. Hardware and Software Environments

The experimental infrastructure is designed to support the computational demands of multi-modal deep learning. All experiments are implemented in PyTorch 2.0 with GPU acceleration via CUDA and cuDNN. For transformer model initialization and fine-tuning, we use the HuggingFace Transformers library [28], which provides pretrained vision–language and text encoders and standardized interfaces for multi-modal learning. The complete codebase is developed in Python 3.10, with dependency management and environment isolation handled through Conda to ensure experimental reproducibility.

4.2. Comparison Methods

Retrieval-guided contrastive learning (RGCL) [14] proposes to learn a hatefulness-aware embedding space on the Hateful Memes dataset by adding a retrieval-guided contrastive objective on top of a CLIP-style vision–language encoder. Concretely, the method dynamically retrieves pseudo gold positives (semantically similar, same class) and hard negatives (semantically similar, opposite class) during training and optimizes a contrastive loss alongside standard cross-entropy. At inference, the model can use a simple classifier or an optional kNN majority vote over the learned embedding space.

Visual program distillation with PaLI-X/PaLI-3 55B (VPD-55B) [29] introduces visual program distillation, where a large vision–language teacher (PaLI-X/PaLI-3, 55B parameters) generates structured rationales or programs that are distilled into task specialists.

Compared to RGCL and VPD-55B, our method targets parameter and deployment efficiency (Table 2): (i) we perform domain-adaptive pretraining (DAPT) to adapt a ViLT base model to meme style; (ii) we fine-tune with PEFT (BitFit + LayerNorm) so that <1% of parameters are trainable; and (iii) we use offline teacher KD with temperature scaling and confidence gating, so no retrieval or teacher is needed at test time.

Evaluation Metrics

Model performance is evaluated using standard classification metrics appropriate for noisy and potentially imbalanced datasets. On the Hateful Memes benchmark, we report AUROC (Area Under the Receiver Operating Characteristic Curve) and F1. AUROC measures the model’s ability to discriminate between positive and negative classes across all possible decision thresholds and is threshold-independent, making it relatively robust to label noise and class imbalance. In contrast, the F1 score, defined as the harmonic mean of precision and recall, depends on a specific decision threshold and is therefore sensitive to calibration and operating-point selection. We include F1 to reflect performance at a concrete operating point, particularly for minority-class detection, while emphasizing AUROC as the primary indicator of overall ranking quality.
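The contrast between the two metrics can be seen on a toy example. The sketch below computes AUROC from its pairwise-ranking definition and F1 at a chosen threshold using only the standard library; the scores and labels are invented for illustration, and in practice a library routine would normally be used instead.

```python
from typing import Sequence


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a random positive is scored above a random negative.

    Ties count as half a win. Requires at least one positive and one negative.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs both classes")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else (0.5 if p == n else 0.0)
    return wins / (len(pos) * len(neg))


def f1_at(scores: Sequence[float], labels: Sequence[int], threshold: float) -> float:
    """F1 of the positive class when predicting 1 for scores >= threshold."""
    tp = fp = fn = 0
    for s, y in zip(scores, labels):
        pred = 1 if s >= threshold else 0
        tp += pred == 1 and y == 1
        fp += pred == 1 and y == 0
        fn += pred == 0 and y == 1
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


scores = [0.9, 0.8, 0.3, 0.2]
labels = [1, 0, 1, 0]
print("AUROC:", auroc(scores, labels))
print("F1 @ 0.50:", f1_at(scores, labels, 0.50))
print("F1 @ 0.25:", f1_at(scores, labels, 0.25))
```

The ranking, and hence AUROC, is the same in both cases, while F1 changes with the operating point, which is the sensitivity to threshold selection discussed above.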

On IMDB (and TREC in the text-only experiments), we report accuracy and F1 to capture both overall correctness and threshold-dependent precision–recall trade-offs in unimodal sentiment and question classification settings.

4.3. Experimental Results and Analysis

We first compare full parameter fine-tuning and our parameter-efficient student on the Hateful Memes dev set under a unified model selection rule, and summarize the main configuration in Table 3. The mean of per-seed best scores for full parameter fine-tuning is AUC = 0.6945 and F1 = 0.5043. Our BitFit LayerNorm student with knowledge distillation, in the configuration reported in Table 3, achieves AUC = 0.6760 and F1 = 0.3978 on Hateful Memes while updating only 0.1129% of the weights. This shows that a ViLT base student with fewer than 1% trainable parameters can reach AUROC close to full parameter fine-tuning, with some loss in F1.

To better understand the effect of training settings and distillation, we further examine additional configurations. In one configuration with knowledge distillation, our student reaches AUC = 0.6658 with F1 = 0.4675; the variant without KD achieves AUC = 0.6668 with F1 = 0.4607. For completeness, another configuration attains F1 = 0.4142 with AUC = 0.6720. Across these variants, the model preserves approximately 95.9% of the full parameter AUC (0.6658/0.6945), with some loss in F1 compared to the full parameter baseline, reflecting the trade-off between ranking performance and thresholded decision quality under a much smaller number of trainable parameters (about 886× fewer).
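For reference, the ratios quoted in this subsection follow directly from the reported dev-set numbers and the 0.1129% trainable fraction:

$$\frac{0.6658}{0.6945} \approx 0.959, \qquad \frac{0.6760}{0.6945} \approx 0.973, \qquad \frac{1}{0.001129} \approx 886.$$

That is, the two student configurations retain roughly 95.9% and 97.3% of the full fine-tuning AUC, and the trainable parameter count is smaller by a factor of about 886.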

On IMDB, full parameter fine-tuning of the text classifier reaches accuracy = 0.8942 and F1 = 0.9001, whereas our parameter-efficient variant with BitFit LayerNorm and KD achieves accuracy = 0.8855 and F1 = 0.8874 while updating only 0.091% of parameters. Table 3 summarizes the main results. We observe a similar pattern to the multi-modal case: our method attains accuracy and F1 close to full fine-tuning while requiring orders of magnitude fewer trainable parameters.

Figure 4 shows dev set AUC and F1 curves for Hateful Memes. The full parameter baseline is averaged per epoch across multiple seeds, and our method is plotted for the BitFit-LN + KD configuration. Early stopping is enabled for all runs, with each curve ending at its early-stopped epoch.

We further analyze the effect of the confidence threshold on distillation coverage, defined as the fraction of samples whose teacher confidence exceeds the threshold and are therefore selected for knowledge distillation. As the threshold increases, the gating becomes stricter and the coverage decreases substantially on both splits. On the training set, coverage drops from 0.7305 at the most lenient threshold to 0.4586, 0.1759, and 0.0034 at successively stricter thresholds; on the dev set, it drops from 0.6800 to 0.3460, 0.0900, and 0.0060, respectively. These results highlight a strict–lenient trade-off: a larger threshold reduces the influence of low-confidence teacher predictions but also limits the amount of distilled supervision.
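Coverage as defined above is simple to compute from stored teacher confidences. The sketch below uses made-up confidence values and thresholds purely to show the monotone decrease; the function name `coverage` and all numbers in it are illustrative and unrelated to the reported results.

```python
from typing import Sequence


def coverage(confidences: Sequence[float], threshold: float) -> float:
    """Fraction of samples whose teacher confidence exceeds the threshold."""
    if not confidences:
        return 0.0
    selected = sum(1 for c in confidences if c > threshold)
    return selected / len(confidences)


# Hypothetical teacher confidences for five samples.
toy_confidences = [0.55, 0.62, 0.71, 0.83, 0.97]
toy_thresholds = [0.60, 0.80, 0.95]
toy_coverages = [coverage(toy_confidences, t) for t in toy_thresholds]
for t, cov in zip(toy_thresholds, toy_coverages):
    print(f"threshold={t:.2f} coverage={cov:.2f}")
```

Sweeping the threshold this way before training gives a quick view of how much distilled supervision each setting would leave.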

In conclusion, our method achieves AUROC close to full parameter fine-tuning on Hateful Memes while using roughly 886× fewer trainable parameters, with some loss in F1 that reflects the trade-off between capacity and efficiency. Reporting both AUC-focused and F1-focused configurations is useful for different deployment priorities.

5. Discussion

In this section, we discuss the performance and limitations of the proposed method, compare it with other state-of-the-art techniques, and analyze the key findings from the experiments. We also highlight the strengths and areas for improvement, along with potential challenges faced during the development.

5.1. Performance Comparison with State-of-the-Art Methods

Recent SOTA systems on Hateful Memes (HM) often pursue accuracy via either retrieval-augmented CLIP pipelines that require training- and test-time lookups (e.g., RGCL) [14] or very-large specialist models with tens of billions of parameters and program/CoT distillation (e.g., VPD-55B) [30]. Our approach occupies a different point on the accuracy–efficiency frontier: a ViLT-base student trained with parameter-efficient fine-tuning and offline KD atop domain-adaptive pretraining, with no auxiliary modules at inference. On the HM dev split, this student retains about 96% of the AUROC of full fine-tuning while using roughly 886× fewer trainable weights and introducing no test-time dependencies. DAPT adds an upfront adaptation cost that varies with corpus size, but it is a one-time procedure reusable across downstream targets and can therefore be amortized. Our primary efficiency gains are instead realized at deployment by avoiding online retrieval/ensembles and by reducing task-specific trainable parameters. In our runs, wall-clock training time was reduced by about 75%. Compared with retrieval-augmented SOTA [14], we avoid kNN/retrieval latency and brittle system coupling; compared with very-large specialists [30], we eliminate 55B-scale models and remain deployable on modest GPUs. Overall, our method delivers competitive accuracy with a dramatically smaller training footprint and self-contained inference (see Table 2).

5.2. Insights from the Experimental Results

The experiments highlight several aspects of the proposed framework.

First, on Hateful Memes our ViLT-based student preserves most of the AUROC obtained by full parameter fine-tuning while updating only a tiny fraction of the weights. As shown in Table 3, the full baseline reaches an AUROC of 0.6945, whereas our BitFit + LayerNorm + KD variant achieves 0.6760, i.e., about 97% of the baseline AUROC, with only 0.1129% trainable parameters. This confirms that domain-adaptive pretraining combined with parameter-efficient fine-tuning can retain most of the ranking ability of a fully tuned model even under strict capacity constraints.

Second, the F1 score on Hateful Memes exhibits a more noticeable drop (0.5043 vs. 0.3978). This reflects a trade-off between capacity and threshold-sensitive performance: AUROC measures the quality of the ranking across all thresholds, whereas F1 depends on a single operating point, which is more sensitive to label noise, class imbalance and small shifts in the score distribution. In our setting, we deliberately prioritize AUROC and deployment efficiency over maximizing F1, which is a reasonable design choice for noisy, weakly labeled memes where stable ranking is often more important than a particular threshold.

Third, the IMDB experiments indicate that the same BitFit + LayerNorm + KD recipe generalizes beyond multimodal memes to purely textual tasks. On IMDB, our parameter-efficient variant attains accuracy and F1 close to full fine-tuning while using only 0.091% trainable parameters (Table 3). This suggests that the combination of DAPT, PEFT and offline distillation forms a reasonably generic recipe for low-cost transfer, not just a dataset-specific trick for Hateful Memes.

Finally, the offline teacher design proves effective in practice. By precomputing temperature-scaled soft labels and gating on high-confidence predictions, we reduce the impact of noisy teacher outputs while completely removing retrieval and teacher calls at test time. This keeps the deployed system simple: inference is just a single forward pass of a compact ViLT-B model. The ablations indicate that DAPT contributes most consistently to AUROC improvements under domain shift, PEFT (BitFit + LayerNorm) achieves large parameter savings with modest performance loss, and confidence-gated offline KD provides an additional noise-robust supervision signal without introducing deployment-time dependencies.

5.3. Limitations of the Current Approach

Despite the promising results, several limitations remain:

Scalability and training cost: Although inference is lightweight and only requires a single ViLT-based student, the full training pipeline still involves several stages: domain-adaptive pretraining on MMHS150K, teacher training, offline soft label export, and student distillation. As datasets grow larger or more platforms are added, the cost and engineering complexity of this multi-stage process may become a bottleneck. Future work could explore more unified or incremental training schemes, and further reduce memory and compute via smaller backbones or quantization.

Data Imbalance: Real-world cyberbullying datasets often exhibit class imbalance and label noise. Further investigation into strategies such as class balancing, resampling, or synthetic data generation could improve model performance, especially for minority classes.

Generalization Across Domains: The current model was trained and evaluated on a specific set of datasets, and its generalization to new, unseen domains remains uncertain. Future work should explore further domain-adaptation techniques to improve the model’s robustness across domains, for example on other social media platforms or in other languages.

Explainability: Although the model performs well, its decision-making process remains relatively opaque. Future work could focus on improving model interpretability and explainability, which are crucial for trustworthiness in real-world applications of cyberbullying detection.

5.4. Training Dynamics and Practical Considerations

From a training perspective, the main challenge is balancing the supervised loss and the distillation signal. If the distillation weight is too high at the beginning of training, the student may overfit noisy or miscalibrated teacher predictions; if it is too low, the student underutilizes the teacher. In our implementation we warm up the distillation weight so that the model first fits the ground truth labels and only gradually relies more on the teacher. Empirically, this schedule yields smoother convergence and more stable dev set AUROC.
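A warm-up of this kind can be sketched as follows. The linear ramp, the additive form of the combined loss, the default of 1000 warm-up steps, and the maximum weight of 0.5 are illustrative assumptions rather than the exact schedule used in our experiments; `kd_weight` and `total_loss` are hypothetical helper names.

```python
def kd_weight(step, warmup_steps, max_weight):
    """Linearly ramp the distillation weight from 0 to max_weight (assumed schedule)."""
    if warmup_steps <= 0:
        return max_weight
    return max_weight * min(1.0, step / warmup_steps)


def total_loss(supervised_loss, distill_loss, step, warmup_steps=1000, max_weight=0.5):
    """Supervised loss plus the warmed-up distillation term (assumed additive form)."""
    return supervised_loss + kd_weight(step, warmup_steps, max_weight) * distill_loss


# The distillation term is absent at step 0 and reaches full weight after warm-up.
for step in (0, 500, 1000, 2000):
    print(step, kd_weight(step, warmup_steps=1000, max_weight=0.5))
```

With these values the weight is 0 at step 0, 0.25 halfway through warm-up, and 0.5 from step 1000 onward, so early updates are driven by the ground-truth labels alone.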

A second practical issue is threshold selection for F1. Because Hateful Memes is relatively small and annotated with noisy binary labels, the F1 score can vary noticeably across seeds and splits. We therefore rely primarily on AUROC for model selection and report F1 mainly as a complementary metric. In operational deployments, one would typically calibrate thresholds on a held-out validation set that reflects the target platform and policy.
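One simple way to calibrate such a threshold is to sweep the candidate values observed on a held-out validation set and keep the one with the highest F1. The sketch below assumes this F1-maximizing criterion, which is only one possible policy, and uses toy validation data; `f1_score` and `calibrate_threshold` are hypothetical helpers, not part of our released pipeline.

```python
def f1_score(labels, preds):
    """F1 of the positive class; returns 0.0 when there are no true positives."""
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0


def calibrate_threshold(val_labels, val_scores):
    """Return (threshold, f1) maximizing F1 over the observed validation scores.

    Ties are resolved in favor of the lowest threshold, since candidates are
    visited in ascending order and only strict improvements are accepted.
    """
    best_threshold, best_f1 = None, -1.0
    for threshold in sorted(set(val_scores)):
        preds = [1 if s >= threshold else 0 for s in val_scores]
        f1 = f1_score(val_labels, preds)
        if f1 > best_f1:
            best_threshold, best_f1 = threshold, f1
    return best_threshold, best_f1


# Toy validation split (illustrative values only).
val_labels = [0, 0, 1, 0, 1, 1]
val_scores = [0.05, 0.20, 0.35, 0.40, 0.45, 0.80]
threshold, f1 = calibrate_threshold(val_labels, val_scores)
print(threshold, round(f1, 4))
```

On this toy split the default threshold of 0.5 would flag only one of the three positives, whereas the calibrated threshold of 0.35 recovers all three at the cost of one false positive. In practice the selected threshold should be checked on a separate test split, because a threshold tuned on a small validation set can itself be unstable.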

6. Conclusions and Future Work

This paper presents a parameter-efficient, deployment-aware training framework for multimodal hateful content detection. The framework integrates three technical components: (i) domain-adaptive pretraining of a compact ViLT backbone on in-domain meme-like image-text corpora, (ii) parameter-efficient fine-tuning via BitFit + LayerNorm that updates less than 1% of parameters while keeping the inference computational graph unchanged, and (iii) noise-aware offline knowledge distillation from a strong teacher constructed from pretrained text encoders and CLIP-based image–text encoders, where temperature-scaled and confidence-gated soft targets are generated offline. During deployment, only the lightweight student model is required, with no dependency on the teacher model or retrieval mechanisms at inference time. Experimental results demonstrate a favorable accuracy–efficiency trade-off on Hateful Memes. In the text-only setting, the IMDB dataset is used as an auxiliary benchmark to validate the proposed training recipe.

Building upon this work, we will evaluate the framework on additional multimodal benchmarks (e.g., HarMeme or supervised MMHS150K) to further strengthen cross-platform multimodal generalization claims. We will also conduct a full performance sensitivity study over the confidence threshold to quantify the strict–lenient gating trade-off beyond the current coverage analysis. To better address severe class imbalance and label sparsity/noise in social media security settings, we will explore advanced imbalance-aware strategies. In addition, we will investigate stronger or domain-specific teachers and robustness to teacher noise to further reduce error propagation in nuanced cases. Finally, we will expand the framework to a wider variety of content formats and platforms, such as short-form videos with captions, and incorporate more fine-grained moderation labels with explainability mechanisms to enhance transparency and trustworthiness in sensitive moderation scenarios.