1. Introduction

New-energy plants and battery energy-storage stations are no longer isolated electrical assets. Photovoltaic or wind units, battery management systems, gateways, station historians, and supervisory control and data acquisition (SCADA) applications are now connected through software-defined service paths, remote maintenance channels, and protocol gateways. These connections improve observability and dispatch flexibility. They also enlarge the cyber–physical attack surface [1,2,3]. Cybersecurity has therefore become a core operational requirement rather than an auxiliary information-technology add-on. Remote maintenance sessions, identity-based access decisions, endpoint-trust updates, command-audit records, and networked protection services all interact with plant availability. A station-side defense policy must therefore coordinate protection strength with operational responsiveness. Similar cyber–physical dependencies are increasingly evident in mobile new-energy applications. For example, the sophisticated energy management strategies used to optimize power distribution in fuel cell vehicles [4,5] rely heavily on real-time data and interconnected control networks. This further highlights the need for adaptive and context-aware protection across modern energy systems.

The engineering community has already established strong guidance on industrial and operational-technology security. NIST guidance for OT environments emphasizes asset visibility, layered defense, and controlled access paths [6]. Zero-trust principles further encourage context-aware authorization instead of persistent trust assumptions [7]. Standards such as IEC 62443 and IEC 62351 specify security requirements for industrial components, conduits, zones, and power-system communications [8,9,10]. However, these standards do not by themselves answer a practical station-level question: how should protection intensity change when login context, device posture, and service condition vary over time? This paper focuses on that station-level orchestration problem: the objective is not only to identify risky sessions but also to decide whether the low-, medium-, or high-intensity service chain is sufficient for the current operational context.

This question has become more important as renewable generation and storage platforms adopt service-oriented architectures. Recent reviews show that cyber resilience in power-electronics-enabled systems, distributed energy resources, and battery energy-storage systems increasingly depends on communication quality, controller trust, and coordinated defense logic [11,12,13,14,15]. Recent spatio-temporal detection research also shows that topology-aware temporal models, such as particle-swarm-optimized attention temporal graph convolution for dummy data injection attacks in power systems, can improve attack recognition when grid topology and time-series dependencies are available [16]. Existing studies have clarified threats, standards, and detection opportunities, yet many still fall into one of two categories. The first focuses on surveys, standards, or zoning strategies [17,18,19]. The second concentrates on attack detection models. Both categories are valuable, but neither directly addresses the orchestration problem faced by a digital station platform: how should identity control, access protection, auditing, and response strength be combined so that security remains strong without overloading the control path?

This paper addresses that gap through a calibrated context-aware Security-as-a-Service orchestration method called SECaaS-CARO. The core idea is to model station protection as a dispatch problem over reusable service chains. Each session is scored using explicit operational indicators whose weights are calibrated from the training split under monotone risk-direction constraints, and the resulting score is mapped to a low-, medium-, or high-intensity protection chain. The paper therefore contributes an engineering method that remains interpretable, reproducible, and directly tied to deployment cost while avoiding the appearance of an ad hoc weighted checklist.

SECaaS-CARO is not intended to be only another classifier. Conventional risk-scoring methods mainly output a numerical risk level. Zero-trust methods mainly determine whether a request should be trusted or authorized. Adaptive security-policy methods often update rules, but they do not always quantify the cost of the executed protection chain. SECaaS-CARO combines these ideas at the station side. It jointly formulates service-chain orchestration, monotone risk calibration, validation-based threshold dispatch, and deployment-oriented utility optimization. The framework therefore connects context scoring with executable low-, medium-, and high-intensity protection strength. This is the operational decision required by new-energy and energy-storage stations.

The main contributions are as follows. First, a station-oriented four-layer architecture is formulated to couple physical assets, control services, security services, and adaptive orchestration. Second, a monotone calibrated nine-indicator risk model is defined to fuse heterogeneous station context into a single decision variable. Third, validation-based threshold tuning maps that score to service-chain intensity through an explicit deployment-oriented utility objective. Fourth, a stronger baseline suite, including logistic and random-forest learned baselines in addition to a static rule, is used for comparison on repeated resampled test streams. Fifth, a fully reproducible manuscript package is provided with the data tables used in the paper. Because the evaluation is based on semi-synthetic sessions and resampled test streams, the contribution is positioned as reproducible methodological validation rather than final production certification.

2. Related Work

Cybersecurity research for electrical infrastructure has long recognized that cyber events can propagate into physical consequences. Surveys of smart-grid and power-system security established the relevance of communication integrity, state-estimation attacks, and cyber–physical coupling [1,2]. Broader industrial-control and Industrial Internet of Things work emphasized that asset posture, trust, and operational workflow must be considered together with network traffic [17,18,20].

In the energy domain, the recent literature has become more specific. Hou et al. analyzed cyber resilience in power-electronics-enabled power systems and showed that renewable generation, storage, converters, and grid-side applications share distinct timing and control vulnerabilities [11]. Naseri et al. reviewed security issues in cloud battery management systems, while Kharlamova et al. focused on cyberattack detection methods for battery energy-storage systems [12,13]. Chen et al. provided a recent survey of distributed-energy-resource cybersecurity and mapped the field across vulnerability, detection, and mitigation layers [14]. These studies strongly motivate station-level protection research, but they are mainly review oriented and do not formulate explicit service-dispatch mechanisms.

Architecture-oriented studies for integrated smart-energy stations are closer to the target problem. Chen et al. proposed a layered cyber-security architecture for multi-station integrated smart-energy stations and emphasized zoning, service priority, and communication protection [19]. That line of work is valuable because it grounds cyber protection in realistic energy-station workflows. Nevertheless, most architecture papers still stop short of quantifying how adaptive protection changes latency, blocking strength, and overall deployment utility as station context shifts.

The spatio-temporal model above is included because it strengthens the detection side of power-system cyber defense. It is complementary to this study: topology-aware learning can provide richer event or anomaly inputs, whereas SECaaS-CARO focuses on how station-side security services should be orchestrated once heterogeneous risk indicators and security-service outputs are available.

The present study is positioned between those two strands. It borrows the layered engineering perspective of industrial and energy-station security research but evaluates a concrete dispatch mechanism rather than only describing architecture principles. It also differs from purely predictive attack-detection papers because the target output is not just a label. Instead, the output is the intensity of a protection chain that directly affects audit density, response aggressiveness, latency, and computational cost. This framing is well aligned with the control-oriented reality of digital stations, where cyber policy decisions and operational responsiveness are tightly coupled.

3. Materials and Methods

3.1. Problem Setting

The target scenario is a digital station platform supervising renewable generation assets and an associated battery energy-storage subsystem. Representative sessions include operator authentication, telemetry synchronization, remote maintenance, gateway-mediated command delivery, battery-management alarms, and command dispatch to converters or station controllers. Some sessions are normal, some are suspicious and deserve enhanced scrutiny, and some correspond to direct attack conditions such as malicious command injection or gateway misuse.

The engineering objective is not only to classify sessions as benign or malicious. In practice, the platform must decide how strongly to respond. Low-risk sessions may require only lightweight verification and basic audit. Medium-risk sessions may require denser logging and finer-grained authorization. High-risk sessions may justify session isolation or strong command gating. Accordingly, the decision variable of this study is the service-chain intensity selected for each station session.

3.2. SECaaS-CARO Architecture

Figure 1 presents the proposed architecture. In this figure, the solid dark-blue arrows indicate the main service/data flow, the curved dark-blue arrows indicate cross-layer coordination, and the dashed purple arrows indicate adaptive policy feedback. The physical asset layer contains renewable assets, the battery storage subsystem, and the station gateway or energy management system. The control platform layer contains data acquisition, SCADA or dispatch applications, and a service bus through which session events and logs are routed. The security service layer encapsulates identity and trust assessment, access and data protection, and audit or forensics functions. The adaptive decision layer fuses station context, computes a risk score, and dispatches one of three service chains.

This decomposition has two engineering advantages. First, it treats identity, access control, auditing, and response logic as explicit services rather than scattered mechanisms. Second, it isolates the adaptive decision plane from the execution plane. Thresholds and weights can therefore be recalibrated without redesigning the underlying service stack. The architecture is thus closer to an operational control loop than to a monolithic detector. The decoupling is architectural rather than statistical: the decision layer still consumes outputs from identity, access-control, audit, and service-health functions. In continuous operation, recalibration can therefore change service-chain density and subsequently alter the future indicator distribution. The experiments below evaluate a single-cycle train–validation–test assumption, and the Discussion explicitly states the boundary of this assumption.

3.3. Calibrated Context-Aware Risk Model

For each station session, nine normalized indicators are collected: device trust, vulnerability exposure, access anomaly, data sensitivity, service health, command deviation, login risk, network instability, and identity confidence.

Table 1 summarizes their meaning and operational origin. The indicators were selected to cover three station-side security dimensions that are usually observable in modern new-energy and energy-storage stations: identity and access behavior, device and vulnerability context, and service-chain/audit context. This selection keeps the model deployable because every indicator can be linked to a concrete monitoring or enforcement service rather than to an abstract statistical feature.

All nine indicators are normalized to [0, 1] before calibration. Device trust, service health, and identity confidence are availability-and-assurance indicators for which lower values imply higher security concern; they are therefore transformed as $x' = 1 - x$, where $x$ is the normalized indicator value. Vulnerability score, access anomaly, data sensitivity, command deviation, login risk, and network instability already increase with risk. In real stations, identity confidence, login risk, and access anomaly can be obtained from identity providers, access-control gateways, and login-audit records; device trust and vulnerability exposure can be obtained from endpoint-trust services, asset inventories, patch records, and vulnerability databases; command deviation and data sensitivity can be derived from command taxonomies, dispatch history, and asset registries; service health and network instability can be measured through runtime probes and communication telemetry. The exact vendor implementation may differ across sites, but each indicator corresponds to information that is typically available to the security-service layer or can be exported by adjacent operational systems.

To preserve interpretability while avoiding purely heuristic weighting, the nine indicators were first transformed into risk-aligned variables, so that larger values consistently implied greater concern. The calibration process is as follows. A logistic model is fitted on the training split using the standardized risk-aligned variables and the binary target in which normal sessions are negative and suspicious/attack sessions are positive. Let $\beta_j$ denote the fitted coefficient of the $j$th risk-aligned variable. For the interpretable dispatch score, only positive coefficients are retained, opposite-sign coefficients are clipped to zero, and the remaining values are normalized as

$$w_j = \frac{\max(\beta_j, 0)}{\sum_{k=1}^{9} \max(\beta_k, 0)}.$$

Device trust, service health, and identity confidence are first converted to $1 - x$, so that all retained terms have the same risk direction. Therefore, the monotone property is enforced by the deployed additive score, whose partial derivative with respect to each risk-aligned input is nonnegative, rather than by claiming that the unconstrained logistic model is a globally monotone classifier. Partial-dependence checks and counterfactual perturbations are reported in Section 5.4. The resulting calibrated score is

$$R = w_D (1 - D) + w_V V + w_A A + w_S S + w_H (1 - H) + w_C C + w_L L + w_N N + w_I (1 - I),$$

where $D$ is device trust, $V$ is vulnerability score, $A$ is access anomaly, $S$ is data sensitivity, $H$ is service health, $C$ is command deviation, $L$ is login risk, $N$ is network instability, and $I$ is identity confidence.

This calibration step is important for two reasons. First, it retains a transparent weighted-sum form that can be audited by engineers and mapped back to operational factors. Second, it anchors the weights to the observed training distribution rather than leaving them as arbitrary design constants. The highest weights are assigned to service health, device trust, identity confidence, command deviation, and login risk, which is consistent with the deployment logic of station security. The clipping of opposite-sign coefficients is a deliberate interpretability constraint rather than a claim that such coefficients are always meaningless. It prevents an increase in a risk-aligned indicator from decreasing the deployed risk score. However, an opposite-sign effect may also reflect a useful counter-intuitive signal in a complex operational environment, especially when variables are correlated, non-linear, or site dependent. For this reason, clipped coefficients should be inspected during site-specific recalibration. If the same opposite-sign pattern is repeatedly observed in real station logs, it should be tested with partial-dependence analysis, counterfactual perturbation, expert review, or additional interaction and non-linear terms instead of being automatically discarded.
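The clipping and normalization step can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the indicator names, the `EXAMPLE_COEFS` values, and the function names are hypothetical, and the sketch assumes the deployed score is applied to the [0, 1] risk-aligned indicators.

```python
from typing import Dict

# Indicators for which a LOWER raw value means HIGHER concern (per the text).
INVERTED = {"device_trust", "service_health", "identity_confidence"}

# HYPOTHETICAL logistic coefficients, used only to exercise the functions.
EXAMPLE_COEFS = {
    "device_trust": 0.9, "vulnerability": 0.3, "access_anomaly": 0.5,
    "data_sensitivity": -0.2, "service_health": 1.0, "command_deviation": 0.7,
    "login_risk": 0.6, "network_instability": 0.2, "identity_confidence": 0.8,
}

def risk_align(indicators: Dict[str, float]) -> Dict[str, float]:
    """Convert normalized indicators so that larger always means riskier."""
    return {k: (1.0 - v if k in INVERTED else v) for k, v in indicators.items()}

def clip_and_normalize(coefs: Dict[str, float]) -> Dict[str, float]:
    """Clip opposite-sign coefficients to zero, then scale weights to sum to 1."""
    clipped = {k: max(c, 0.0) for k, c in coefs.items()}
    total = sum(clipped.values())
    if total <= 0.0:
        raise ValueError("no positive coefficient left after clipping")
    return {k: c / total for k, c in clipped.items()}

def risk_score(indicators: Dict[str, float], weights: Dict[str, float]) -> float:
    """Additive score: nondecreasing in every risk-aligned input by construction."""
    aligned = risk_align(indicators)
    return sum(weights[k] * aligned[k] for k in weights)
```

Because every weight is nonnegative and every input is risk-aligned, raising any single risk-aligned indicator can never lower the score, which is the monotone property the paragraph above describes. In the hypothetical example, `data_sensitivity` receives weight zero because its coefficient is negative; that is exactly the kind of clipped coefficient the text recommends inspecting during site-specific recalibration.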

3.4. Service-Chain Dispatch

Figure 2 summarizes the decision flow. The risk score is mapped to three service-chain intensities. The low threshold and high threshold are not set manually. Instead, they are selected on the validation split by maximizing a deployment-oriented utility function that rewards both protection quality and operational efficiency. With $R$ denoting the calibrated risk score, the selected thresholds are $R < 0.42$ for low-intensity service, $0.42 \le R < 0.57$ for medium-intensity service, and $R \ge 0.57$ for high-intensity service. This validation-tuned operating point is important because station protection is a dispatch problem rather than a pure classification problem: a policy that is marginally better in F1 but consistently slower or heavier can still be inferior once service-chain cost is accounted for.
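The mapping from score to chain is a two-threshold lookup. The sketch below uses the 0.42/0.57 pair reported in Section 5.4; the function name and the handling of scores that fall exactly on a boundary are assumptions made for illustration.

```python
LOW_THRESHOLD = 0.42   # threshold pair reported in Section 5.4
HIGH_THRESHOLD = 0.57

def dispatch(score: float,
             low: float = LOW_THRESHOLD,
             high: float = HIGH_THRESHOLD) -> str:
    """Return the service-chain intensity for a calibrated risk score in [0, 1]."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must lie in [0, 1]")
    if score < low:
        return "low"       # lightweight verification and basic audit
    if score < high:       # ASSUMPTION: boundary scores go to the stronger chain
        return "medium"    # denser logging, finer-grained authorization
    return "high"          # session isolation or strong command gating
```

Keeping the thresholds as parameters reflects the architectural point made in Section 3.2: the decision plane can be recalibrated without touching the service chains it selects.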

The utility optimized during threshold search is

$$U = \lambda_B B - \lambda_F\,\mathrm{FAR} - \lambda_L L - \lambda_C C,$$

where $B$ is blocking success on attack sessions, $\mathrm{FAR}$ is the false-alarm rate on normal sessions, $L$ is mean latency, $C$ is mean CPU cost, and $\lambda_B$, $\lambda_F$, $\lambda_L$, and $\lambda_C$ are positive coefficients. In this objective, $L$ and $C$ denote latency and CPU cost, not the login-risk and command-deviation indicators of Section 3.3. This objective encodes the deployment view of the paper: a station-security mechanism should not be judged only by detection quality if it also changes control-path delay and service load. The coefficients are deployment-preference coefficients, not values fitted on the validation or test set. They encode the station-side preference that blocking dangerous sessions and controlling false alarms are more important than small differences in normalized engineering burden, while excessive latency and CPU cost should still be penalized. Blocking is rewarded because missed attack sessions can propagate into station operation; false alarms receive a larger penalty because unnecessary escalation can interrupt routine work; latency and CPU terms are smaller penalties because they represent normalized service-chain burdens. The same coefficients are fixed before test evaluation, coefficient sensitivity is reported in Section 5.4, and future deployments should retune these coefficients with site-specific cost models.
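A validation-side threshold search of this kind can be sketched as a small grid search. Everything numeric below is a placeholder: the coefficient values in `COEF`, the proxy costs in `CHAIN_COST`, and the simplification that any medium or high chain counts as a successful block are assumptions for illustration, not the settings used in the experiments.

```python
from itertools import product
from typing import Iterable, List, Tuple

# HYPOTHETICAL preference coefficients (blocking reward; FAR, latency, CPU penalties).
COEF = {"block": 0.40, "far": 0.55, "latency": 0.03, "cpu": 0.02}
# HYPOTHETICAL normalized proxy costs per chain: (latency, cpu).
CHAIN_COST = {"low": (0.1, 0.1), "medium": (0.4, 0.4), "high": (1.0, 1.0)}

def chain_for(score: float, low: float, high: float) -> str:
    return "low" if score < low else ("medium" if score < high else "high")

def _mean(values: Iterable[float]) -> float:
    values = list(values)
    return sum(values) / len(values) if values else 0.0

def utility(sessions: List[Tuple[float, str]], low: float, high: float) -> float:
    """sessions holds (risk_score, label) pairs, label in {normal, suspicious, attack}."""
    chains = [chain_for(score, low, high) for score, _ in sessions]
    labels = [label for _, label in sessions]
    # SIMPLIFICATION: an attack counts as blocked when it leaves the low chain.
    block = _mean(c != "low" for c, y in zip(chains, labels) if y == "attack")
    far = _mean(c != "low" for c, y in zip(chains, labels) if y == "normal")
    latency = _mean(CHAIN_COST[c][0] for c in chains)
    cpu = _mean(CHAIN_COST[c][1] for c in chains)
    return (COEF["block"] * block - COEF["far"] * far
            - COEF["latency"] * latency - COEF["cpu"] * cpu)

def search_thresholds(sessions: List[Tuple[float, str]],
                      grid: List[float]) -> Tuple[float, float, float]:
    """Return (best_utility, low, high) over all grid pairs with low < high."""
    best = None
    for low, high in product(grid, grid):
        if low >= high:
            continue
        u = utility(sessions, low, high)
        if best is None or u > best[0]:
            best = (u, low, high)
    if best is None:
        raise ValueError("grid needs at least two distinct values")
    return best
```

The search runs on the validation split only, so the selected pair can be frozen before any test stream is scored. With the placeholder costs, a pair that routes attacks to the medium chain beats one that routes them to the high chain whenever both block equally well, which is the protection-per-burden trade-off the utility is meant to capture.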

4. Experimental Design

4.1. Semi-Synthetic Dataset

A reproducible semi-synthetic dataset was constructed because station logs with sufficient security detail are rarely releasable. Three session groups were modeled: normal sessions, suspicious sessions, and direct attack sessions. The suspicious group represents sessions that should trigger enhanced protection even when they are not yet confirmed attacks. Attack sessions include malicious command injection, privilege abuse, gateway takeover, and battery-management manipulation.

The indicators in Table 1 were sampled from class-specific Beta distributions, and cross-feature dependencies were then injected to reflect basic station logic. Beta distributions were used because the indicators are bounded normalized variables in [0, 1]: normal sessions are concentrated in the low-risk region, suspicious sessions are shifted toward the middle range, and attack sessions are shifted toward the high-risk region after risk-direction alignment. The class-specific parameters are then modified by scenario-dependent offsets rather than by changing the indicator set itself. Cross-feature dependencies are injected after the marginal sampling step so that access anomaly depends partly on login risk and command deviation, device trust is correlated with identity confidence, and service health is degraded by network instability and vulnerability exposure. The four attack scenarios are represented by different offsets over the same nine indicators: malicious command sessions emphasize command deviation and data sensitivity; privilege-abuse sessions emphasize login risk, identity confidence, and access anomaly; gateway-takeover sessions emphasize device trust, network instability, and vulnerability exposure; and BMS-manipulation sessions combine command deviation, service-health degradation, and data sensitivity. The Supplementary Materials (Tables S1–S3) contain the generation script and the exported class-wise mean and covariance summaries used to reconstruct the semi-synthetic stream. This design does not claim to reproduce plant physics in full. Its purpose is to provide a structured session stream with explicit station context and reproducible overlap between classes. The 17,000 sessions should therefore be understood as a controlled validation scale, not as a claim that all realistic station operating conditions have been exhausted. The scale provides enough normal, suspicious, and attack sessions to support a non-overlapping train–validation–test split, validation-based threshold selection, and repeated 5000-session resampling from the held-out test partition. It is sufficient for testing whether the proposed calibration and threshold-dispatch procedure behaves consistently under controlled conditions, while larger site-specific logs are still required before production deployment.
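The two-step generation (marginal Beta sampling, then dependency injection) can be illustrated as follows. This is a sketch under stated assumptions and not the script in the Supplementary Materials: the Beta parameters in `CLASS_BETA`, the mixing weights, and the indicator names are hypothetical, and the scenario-dependent offsets are omitted for brevity.

```python
import random
from typing import Dict

INDICATORS = ["device_trust", "vulnerability", "access_anomaly", "data_sensitivity",
              "service_health", "command_deviation", "login_risk",
              "network_instability", "identity_confidence"]
INVERTED = {"device_trust", "service_health", "identity_confidence"}

# HYPOTHETICAL (alpha, beta) per class, expressed in risk-aligned space.
CLASS_BETA = {"normal": (2.0, 6.0), "suspicious": (4.0, 4.0), "attack": (6.0, 2.0)}

def sample_session(label: str, rng: random.Random) -> Dict[str, float]:
    """Sample one session; returned values use the raw (not risk-aligned) direction."""
    a, b = CLASS_BETA[label]
    # Step 1: independent marginals, larger value = more concern.
    risk = {name: rng.betavariate(a, b) for name in INDICATORS}
    # Step 2: inject dependencies as convex mixes (weights are assumptions).
    risk["access_anomaly"] = (0.6 * risk["access_anomaly"]
                              + 0.2 * risk["login_risk"]
                              + 0.2 * risk["command_deviation"])
    risk["device_trust"] = 0.7 * risk["device_trust"] + 0.3 * risk["identity_confidence"]
    risk["service_health"] = (0.6 * risk["service_health"]
                              + 0.2 * risk["network_instability"]
                              + 0.2 * risk["vulnerability"])
    # Step 3: undo the risk alignment for trust-like indicators.
    return {k: (1.0 - v if k in INVERTED else v) for k, v in risk.items()}
```

Convex mixing keeps every indicator inside [0, 1] without clipping, so the bounded support that motivated the Beta choice is preserved after the dependency step.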

Figure 3 and Table 2 summarize the class composition of the canonical train, validation, and test splits.

4.2. Compared Methods and Evaluation Protocol

Four compared methods were used. SECaaS-CARO is the proposed calibrated orchestration framework. Logistic-Fixed trains a supervised logistic regression model on the same indicators and uses a fixed service mapping with validation-fixed thresholds of 0.45 and 0.71. RandomForest-Fixed uses a tree ensemble with the same feature set and fixed thresholds of 0.43 and 0.71. Static-Rule uses a simplified manual score over access anomaly, login risk, command deviation, device trust, vulnerability score, service health, and identity confidence, with fixed thresholds of 0.38 and 0.50. The baseline suite was expanded to address three comparison levels requested by the reviewers. Logistic-Fixed and RandomForest-Fixed represent conventional supervised machine-learning risk policies with different model complexity; the logistic model uses the same normalized indicators and a maximum-iteration setting of 3000, while the random forest uses 320 trees, maximum depth 6, and a minimum leaf size of 10 to avoid an overly flexible comparator on the semi-synthetic split. Static-Rule represents a lightweight manually defined operational rule that is easier to deploy but less adaptive. The adaptive-threshold variants reported below test whether the observed gain is due only to validation-based threshold search. All fixed thresholds and adaptive thresholds are selected on the validation split, and no test-stream information is used for threshold selection or hyperparameter adjustment.

The main comparison was repeated on 10 independently resampled test streams of 5000 sessions each. In addition to F1 score, the evaluation reports false-alarm rate, attack blocking success, mean latency, normalized CPU cost, and the deployment utility defined above. Blocking success denotes simulated prevention through assignment to a sufficiently strong service chain for the corresponding attack scenario. It is not simply binary attack identification: an attack must be dispatched to a medium- or high-intensity chain that is defined as operationally sufficient for containing that scenario. Thus, the metric reflects simulated prevention through service-chain strength rather than only classification correctness. Scenario-wise analysis reports blocking success together with the fraction of attack sessions escalated to the high-intensity chain. This is more informative than a near-saturated attack-recall table because it distinguishes aggressive escalation from effective-yet-selective response. Additional analyses include ablation, threshold sensitivity, and robustness under attack-ratio shift. The 10 test streams are generated by independent stratified resampling with replacement from the canonical test split; the class proportions are fixed to isolate policy variability from class-prior variability. The result files in the reproducibility package retain per-resample metric values rather than only aggregate means and standard deviations. Latency and CPU values are simulated service-chain proxies assigned according to Table 3, not hardware-timed measurements from a production station. Specifically, low-, medium-, and high-intensity chains are assigned increasing proxy costs to represent lightweight verification, enhanced policy/audit processing, and strict response with dense auditing; future work should replace these proxy values with measured microservice traces or hardware-in-the-loop timing.
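The resampling protocol and the blocking-success metric can be sketched together. The `REQUIRED` table of minimum sufficient chains per scenario is hypothetical (the text states only that sufficiency is scenario-specific), and the session layout is an assumption made for the example.

```python
import random
from collections import defaultdict
from typing import Dict, List, Tuple

def stratified_resample(sessions: List[Dict], size: int,
                        rng: random.Random) -> List[Dict]:
    """Resample with replacement while keeping the source class proportions."""
    by_class = defaultdict(list)
    for session in sessions:
        by_class[session["label"]].append(session)
    out = []
    for group in by_class.values():
        # Per-class quota; rounding may shift the total by a few sessions.
        quota = round(size * len(group) / len(sessions))
        out.extend(rng.choices(group, k=quota))
    return out

RANK = {"low": 0, "medium": 1, "high": 2}
# HYPOTHETICAL minimum chain regarded as sufficient for each attack scenario.
REQUIRED = {"malicious_command": "high", "privilege_abuse": "medium",
            "gateway_takeover": "high", "bms_manipulation": "high"}

def blocking_success(attacks: List[Tuple[str, str]]) -> float:
    """attacks holds (scenario, dispatched_chain) pairs for attack sessions only."""
    if not attacks:
        return 0.0
    blocked = sum(RANK[chain] >= RANK[REQUIRED[scenario]]
                  for scenario, chain in attacks)
    return blocked / len(attacks)
```

Fixing the per-class quota is what isolates policy variability from class-prior variability across the repeated streams, and comparing chain rank against a scenario-specific requirement is what separates blocking success from plain attack recall.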

5. Results

5.1. Main Comparison

Table 4 reports the main comparison over 10 independent test resamples, and Figure 4 visualizes the principal protection metrics. Logistic-Fixed achieved the marginally highest raw F1 score, but SECaaS-CARO remained essentially tied in F1 while clearly improving blocking success and substantially reducing latency and CPU cost. Because the proposed method explicitly couples calibrated detection with service dispatch, it obtained the highest overall utility.

The practical implications of Table 4 are as follows. Compared with Logistic-Fixed, SECaaS-CARO cuts mean latency by 5.77 ms and CPU cost by roughly 24.6% while improving attack blocking success by 2.37 percentage points. Compared with RandomForest-Fixed, the proposed method improves both F1 and utility while remaining more lightweight. Static-Rule remains computationally cheap, but its simplified decision logic sharply reduces blocking success and F1. SECaaS-CARO therefore occupies the most balanced operating point for the station-oriented objective of this paper.

To separate the effect of adaptive threshold optimization from the risk-scoring model itself, the learned baselines were also re-evaluated with the same validation-utility threshold search used for SECaaS-CARO.

Table 5 shows that adaptive thresholding does not remove the utility gap: Logistic-Adaptive remains close in F1 but retains higher latency and CPU cost, while RandomForest-Adaptive remains lower in utility. Thus, the reported gain is not caused only by giving SECaaS-CARO a validation-tuned threshold.

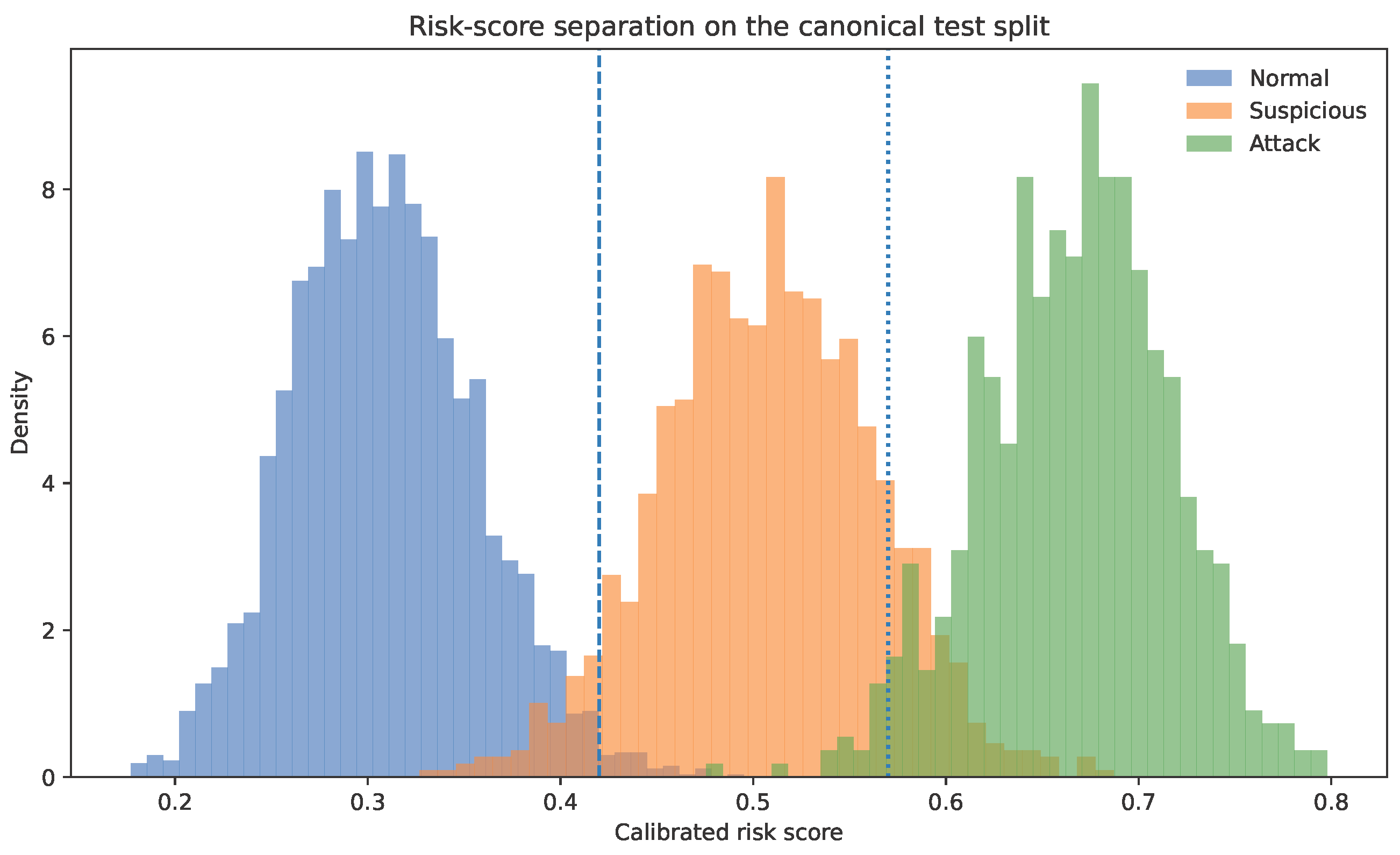

Figure 5 shows that the calibrated score separates the normal, suspicious, and attack groups in a structured way. Normal sessions concentrate in the low-risk region, suspicious sessions cluster around the medium-risk band, and direct attacks are shifted toward the high-risk band. This separation helps explain why the method can retain high blocking success without routing all positive sessions into the most expensive response chain.

Table 6 further reports attack-scenario behavior. SECaaS-CARO achieves the highest blocking success in all four direct-attack scenarios, reaching 0.984 for malicious command sessions and 0.957 for battery-management manipulation. At the same time, the high-intensity dispatch rate remains below 1.0, which indicates that the proposed method does not simply escalate every attack session as aggressively as the fixed learned baselines.

5.2. Ablation Study

Table 7 and Figure 6 show that SECaaS-CARO depends on the interaction of threshold calibration, identity fusion, and service context. Removing threshold tuning produces the largest utility reduction, because the dispatch boundaries become too coarse. Removing identity fusion or service context also degrades both F1 and utility, which confirms that the calibrated score is not driven by a single indicator family.

5.3. Sensitivity and Robustness

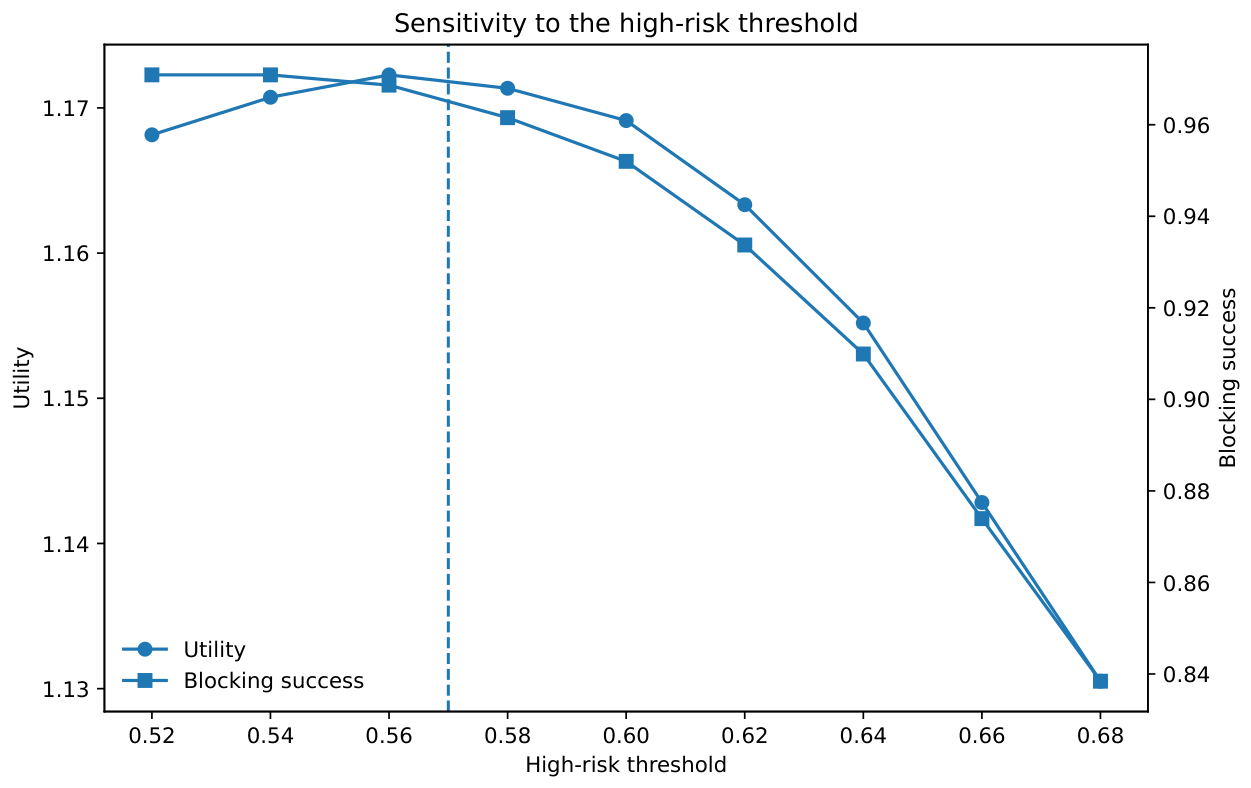

Figure 7 reports the sensitivity of SECaaS-CARO to the high-risk threshold. The selected value of 0.57 lies near the utility peak while maintaining strong blocking success. Lower thresholds increase blocking strength only slightly but also push more sessions into the high-intensity chain. Higher thresholds reduce enforcement strength enough to lower utility.

Table 8 and Figure 8 summarize robustness under attack-ratio shift. SECaaS-CARO remains consistently superior in utility and blocking success across the tested attack ratios. This indicates that the proposed orchestration is not overly tuned to a single class balance. The Static-Rule baseline shows a small utility increase when the attack ratio changes from 0.12 to 0.16. This behavior is caused by the interaction between a fixed rule policy and the class composition of the resampled stream. Because the utility function rewards successfully blocked attack sessions and penalizes false alarms, latency, and CPU cost, a higher attack ratio can increase the reward term when more attack sessions are assigned to sufficiently strong service chains. This does not mean that Static-Rule becomes adaptive. It only indicates that a fixed policy can appear slightly more useful under a particular stream composition when the reward for correct blocking outweighs the additional service-chain cost.

5.4. Reviewer-Requested Diagnostic Analyses

Additional diagnostic analyses address calibration, monotonicity, data-distribution sensitivity, service-chain false positives, and indicator importance. First, partial-dependence checks over the full normalized range confirmed that the deployed risk score is nondecreasing after each variable is converted to its risk-aligned direction. The corresponding plot is shown in Figure 9. The smallest adjacent partial-dependence increment over a 21-point grid was positive for all indicators, ranging from 0.0020 for data sensitivity to 0.0080 for service health. These checks verify the monotonicity of the deployed score while acknowledging that the preliminary logistic fit itself is only a calibration device.

Table 9 further reports counterfactual monotonicity checks on high-risk test sessions.
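A short worked check shows why a purely additive score gives constant partial-dependence increments. It assumes that the deployed score is exactly additive in the risk-aligned inputs and that the 21-point grid is uniform on [0, 1]; both are assumptions about the setup, and the symbols $R$, $w_j$, and $x_j$ are introduced here only for illustration. With grid spacing $\Delta = 1/20 = 0.05$, moving one risk-aligned input $x_j$ by one grid step while holding the others fixed changes the score by

$$R(\ldots, x_j + \Delta, \ldots) - R(\ldots, x_j, \ldots) = w_j \Delta = 0.05\, w_j \ge 0 .$$

Under those assumptions, the reported extremes of 0.0020 and 0.0080 would correspond to weights of $0.0020 / 0.05 = 0.04$ for data sensitivity and $0.0080 / 0.05 = 0.16$ for service health, and an increment of zero would indicate a clipped coefficient rather than a violation of monotonicity.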

Second, weight stability was tested by recalibrating under perturbed semi-synthetic settings, including attack-density shifts, additive indicator noise, and correlation-strength perturbations. The maximum absolute weight shift was 0.0165 across the tested settings, which is below the review threshold. The paper therefore avoids claiming universal weights and specifies a deployment rule: if site recalibration changes any weight by more than 0.03 or if input-distribution drift is detected, the weight vector and thresholds should be reviewed and updated using the most recent validation split.
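The deployment rule stated above reduces to a simple check. The sketch below uses the 0.03 review threshold from the text; the function names and the dictionary layout of the weight vectors are assumptions, and how drift is detected is left to the site.

```python
from typing import Dict

REVIEW_THRESHOLD = 0.03  # per-weight shift that triggers review, as stated in the text

def max_weight_shift(old: Dict[str, float], new: Dict[str, float]) -> float:
    """Largest absolute change of any single indicator weight."""
    return max(abs(new[name] - old[name]) for name in old)

def needs_review(old: Dict[str, float], new: Dict[str, float],
                 drift_detected: bool = False,
                 threshold: float = REVIEW_THRESHOLD) -> bool:
    """True when weights and thresholds should be reviewed and updated."""
    return drift_detected or max_weight_shift(old, new) > threshold
```

A maximum shift of 0.0165, as observed in the perturbation study, stays below the threshold and would not trigger a review on its own.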

Table 10 decomposes false-positive burden by service-chain strength. The global FAR in Table 4 is mainly caused by normal sessions entering the medium chain; normal-to-high escalation is zero in the canonical test split. This is operationally important because medium-chain false positives increase daily audit and authorization cost, while high-chain false positives would represent disruptive isolation or strict blocking.

Table 11 shows that the highest-weight variables have measurable effects when removed and the remaining weights are renormalized. Service health has the strongest removal effect, which is consistent with its calibrated weight. Command deviation has a larger utility impact than its rank alone suggests because it is closely tied to high-intensity blocking. These results support the calibrated ranking while also showing that weight magnitude should be interpreted as distribution-anchored importance rather than causal importance.
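The removal-and-renormalization step behind this kind of indicator ablation can be sketched as follows; the function name and the example weights in the usage note are hypothetical.

```python
from typing import Dict

def drop_and_renormalize(weights: Dict[str, float], removed: str) -> Dict[str, float]:
    """Remove one indicator and rescale the remaining weights to sum to 1."""
    kept = {name: w for name, w in weights.items() if name != removed}
    total = sum(kept.values())
    if total <= 0.0:
        raise ValueError("remaining weights must have a positive sum")
    return {name: w / total for name, w in kept.items()}
```

For example, dropping an indicator with weight 0.5 from the hypothetical vector `{"a": 0.5, "b": 0.3, "c": 0.2}` yields `{"b": 0.6, "c": 0.4}`. Renormalizing keeps the score on the same [0, 1] scale, so the validation-selected thresholds remain comparable across ablated variants.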

Finally, utility-coefficient sensitivity was evaluated by varying the blocking coefficient from 0.30 to 0.50 and the FAR coefficient from 0.45 to 0.65.

Table 12 shows that the selected threshold pair remained 0.42/0.57 under all tested preference settings. Larger site-specific cost changes should still be tuned with local cost models rather than using the coefficients here as universal constants.

6. Discussion

The revised experiments support four observations. First, station-oriented cyber protection should not be assessed only through raw classification metrics. Logistic-Fixed is marginally ahead in F1, but SECaaS-CARO delivers stronger blocking with lower latency and resource cost. This distinction matters in field platforms because every escalation changes the service chain and can delay the control path. The operational target is therefore the best protection-strength-per-burden trade-off under dispatch constraints. Second, the calibrated monotone score improves credibility. The weights are anchored to the training distribution and fixed before repeated test resampling, rather than being arbitrary constants. Third, the scenario-wise results show that selective escalation is meaningful. The proposed method does not push every direct attack into the highest service chain, yet it still achieves the strongest blocking outcome in every attack category. Fourth, the stronger baseline suite makes the engineering conclusion more convincing. SECaaS-CARO is better than a static rule and remains competitive with learned baselines while being operationally lighter.

This study is intentionally scoped as a reproducible engineering paper rather than a plant-specific field trial. The dataset is semi-synthetic and cannot replace noisy real station logs. The latency and CPU costs are normalized engineering proxies rather than hardware-timed measurements from a production deployment. These limitations are explicit design choices. The contribution is to provide a transparent orchestration method and a reproducible evaluation package that can be refined with site-specific logs, protocol traces, or hardware-in-the-loop validation. Accordingly, the current results should not be read as evidence that SECaaS-CARO is ready for direct production rollout at every new-energy or battery-storage station. They show that the calibrated score, validation-based thresholds, and low-/medium-/high-intensity service-chain dispatch are internally consistent under the stated semi-synthetic setting.

A transition to real-world validation should proceed in stages. First, station logs should be collected from authentication records, access-control gateways, device-trust services, vulnerability databases, command-audit systems, service-health monitors, and policy-enforcement modules. Second, these raw logs should be mapped to the nine indicators while handling missing fields, duplicated events, inconsistent timestamps, clock drift, packet loss, and noisy outliers. Third, the risk weights and service-chain thresholds should be recalibrated on site-specific training and validation partitions, preferably with temporal separation between calibration and testing. Fourth, the policy should be evaluated in offline replay or shadow mode before active enforcement. This staged procedure would allow false alarms, blocking success, latency, CPU cost, and service-chain density to be measured under realistic operational noise.

The current single-cycle evaluation is applicable when service-chain execution does not strongly change the next calibration distribution. It should be replaced by closed-loop validation when audit density or enforcement actions materially change subsequent logs. The present evaluation should therefore be interpreted as conceptual and reproducible engineering validation rather than conclusive production readiness.
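Two pieces of the staged procedure, removing duplicated events and separating calibration, validation, and test data in time, could be sketched as follows. This is a minimal illustration under stated assumptions: the event fields (`session_id`, `timestamp`), the cut-off dates, and both function names are hypothetical and do not come from a real station log schema.

```python
from datetime import datetime


def deduplicate(events):
    """Keep the first occurrence of each (session_id, timestamp) pair."""
    seen = set()
    unique = []
    for event in events:
        key = (event["session_id"], event["timestamp"])
        if key not in seen:
            seen.add(key)
            unique.append(event)
    return unique


def temporal_split(events, calib_end, valid_end):
    """Split by time so that calibration precedes validation and testing."""
    ordered = sorted(events, key=lambda event: event["timestamp"])
    calib = [e for e in ordered if e["timestamp"] < calib_end]
    valid = [e for e in ordered if calib_end <= e["timestamp"] < valid_end]
    test = [e for e in ordered if e["timestamp"] >= valid_end]
    return calib, valid, test


# Hypothetical events; the second entry is a duplicated log record.
events = [
    {"session_id": "s1", "timestamp": datetime(2025, 1, 5)},
    {"session_id": "s1", "timestamp": datetime(2025, 1, 5)},
    {"session_id": "s2", "timestamp": datetime(2025, 2, 10)},
    {"session_id": "s3", "timestamp": datetime(2025, 3, 20)},
]
calib, valid, test = temporal_split(
    deduplicate(events), datetime(2025, 2, 1), datetime(2025, 3, 1)
)
print(len(calib), len(valid), len(test))
```

Splitting on time rather than at random keeps later sessions out of the calibration data, which is the point of the temporal separation recommended above. Handling clock drift, missing fields, and outliers would need site-specific rules that this sketch does not attempt.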

Two extensions are especially important. One is to replace the current service-cost model with measured microservice traces from a real station platform. The other is to couple the event model with stronger physical constraints from battery, converter, or feeder states. Richer zero-trust telemetry from industrial identity platforms could also be integrated [7,21]. Even without those additions, the present results indicate that calibrated context-aware service orchestration is a credible middle ground between rigid fixed rules and heavier black-box supervision.

7. Conclusions

This paper presented SECaaS-CARO, a calibrated context-aware Security-as-a-Service orchestration framework for new-energy and energy-storage stations. The method organizes station protection into explicit services, computes a monotone nine-indicator risk score, and dispatches adaptive service chains through validation-tuned thresholds. In repeated experiments on a reproducible semi-synthetic station dataset, the proposed method delivered the highest blocking success (0.965) and the highest deployment utility (1.173) among the compared methods. Its F1 score (0.973) stayed within 0.001 of the best learned baseline (0.974), and its mean latency (21.28 ms) was lower than that of the learned baselines (27.06–28.19 ms). These findings suggest that service-oriented adaptive protection is a promising and lightweight engineering direction for station-level cybersecurity. Future work will connect the framework to public or site-specific industrial-control traces and perform closed-loop, hardware-timed validation before claiming production readiness.

Author Contributions

Conceptualization, H.X., B.F. and Q.T.; methodology, H.X., B.F., Q.L. and Q.T.; formal analysis, H.X. and B.F.; investigation, H.X., F.Y., Y.K. and Y.H.; writing—original draft preparation, H.X. and Q.L.; writing—review and editing, H.X., B.F., F.Y., Y.K., Y.H., Q.L. and Q.T.; supervision, Q.T. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the Science and Technology Project of State Grid Hubei Electric Power Co., Ltd., grant number B31532259621.

Data Availability Statement

The data presented in this study are available in the article/Supplementary Materials. Additional data supporting the reported results are available from the corresponding author upon reasonable request.

Acknowledgments

The authors would like to thank the State Grid Hubei Electric Power Research Institute and Northwest A&F University for their support.

Conflicts of Interest

Authors Haozhe Xiong, Bingyang Feng, Fangbin Yan, Yiqun Kang and Yuxuan Hu were employed by the company State Grid Hubei Electric Power Co., Ltd. The remaining authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.

References

1. Fang, X.; Misra, S.; Xue, G.; Yang, D. Smart Grid—The New and Improved Power Grid: A Survey. IEEE Commun. Surv. Tutor. 2012, 14, 944–980.
2. Sridhar, S.; Hahn, A.; Govindarasu, M. Cyber–Physical System Security for the Electric Power Grid. Proc. IEEE 2012, 100, 210–224.
3. Humayed, A.; Lin, J.; Li, F.; Luo, B. Cyber–Physical Systems Security—A Survey. IEEE Internet Things J. 2017, 4, 1802–1831.
4. Jia, C.; Liu, W.; Chau, K.T.; He, H.; Zhou, J.; Niu, S. Passenger-aware reinforcement learning for efficient and robust energy management of fuel cell buses. eTransportation 2026, 27, 100537.
5. Li, K.; Zhou, J.; Jia, C.; Yi, F.; Zhang, C. Energy sources durability energy management for fuel cell hybrid electric bus based on deep reinforcement learning considering future terrain information. Int. J. Hydrogen Energy 2024, 52, 821–833.
6. Stouffer, K.; Pillitteri, V.; Lightman, S.; Abrams, M.; Hahn, A. Guide to Operational Technology (OT) Security; NIST SP 800-82 Rev. 3; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2023.
7. Rose, S.; Borchert, O.; Mitchell, S.; Connelly, S. Zero Trust Architecture; NIST SP 800-207; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2020.
8. IEC 62443-3-3; Industrial Communication Networks—Network and System Security—Part 3-3: System Security Requirements and Security Levels. International Electrotechnical Commission (IEC): Geneva, Switzerland, 2013.
9. IEC 62443-4-2; Security for Industrial Automation and Control Systems—Part 4-2: Technical Security Requirements for IACS Components. International Electrotechnical Commission (IEC): Geneva, Switzerland, 2019.
10. IEC 62351-5:2023; Power Systems Management and Associated Information Exchange–Data and Communications Security–Part 5: Security for IEC 60870-5 and Derivatives. International Electrotechnical Commission (IEC): Geneva, Switzerland, 2023; ISBN 978-2-8322-6017-3.
11. Hou, J.; Hu, C.; Lei, S.; Hou, Y. Cyber Resilience of Power Electronics-Enabled Power Systems: A Review. Renew. Sustain. Energy Rev. 2024, 189, 114036.
12. Naseri, F.; Kazemi, Z.; Larsen, P.G.; Arefi, M.M.; Schaltz, E. Cyber-Physical Cloud Battery Management Systems: Review of Security Aspects. Batteries 2023, 9, 382.
13. Kharlamova, N.; Træholt, C.; Hashemi, S. Cyberattack Detection Methods for Battery Energy Storage Systems. J. Energy Storage 2023, 69, 107795.
14. Chen, J.; Yan, J.; Kemmeugne, A.; Kassouf, M.; Debbabi, M. Cybersecurity of Distributed Energy Resource Systems in the Smart Grid: A Survey. Appl. Energy 2025, 383, 125364.
15. Rouhani, S.H.; Su, C.-L.; Mobayen, S.; Razmjooy, N.; Elsisi, M. Cyber Resilience in Renewable Microgrids: A Review of Standards, Challenges, and Solutions. Energy 2024, 309, 133081.
16. Wang, X.; Geng, Y.; Luo, X.; Guan, X. Detection of Dummy Data Injection Attacks by Using Particle Swarm Optimization-Attention Temporal Graph Convolutional Network Model in Power System. Eng. Appl. Artif. Intell. 2026, 171, 114259.
17. Knowles, W.; Prince, D.; Hutchison, D.; Disso, J.-F.P.; Jones, K. A Survey of Cyber Security Management in Industrial Control Systems. Int. J. Crit. Infrastruct. Prot. 2015, 9, 52–80.
18. Volkova, A.; Niedermeier, M.; Basmadjian, R.; de Meer, H. Security Challenges in Control Network Protocols: A Survey. IEEE Commun. Surv. Tutor. 2019, 21, 619–639.
19. Chen, Y.; Li, J.; Lu, Q.; Lin, H.; Xia, Y.; Li, F. Cyber Security for Multi-Station Integrated Smart Energy Stations: Architecture and Solutions. Energies 2021, 14, 4287.
20. Sadeghi, A.-R.; Wachsmann, C.; Waidner, M. Security and Privacy Challenges in Industrial Internet of Things. In Proceedings of the 52nd Annual Design Automation Conference, San Francisco, CA, USA, 7–11 June 2015; pp. 1–6.
21. National Institute of Standards and Technology. The NIST Cybersecurity Framework (CSF) 2.0; Cybersecurity White Paper 29; NIST: Gaithersburg, MD, USA, 2024.

Figure 1. SECaaS-CARO architecture for station-oriented adaptive protection.

Figure 2. Decision workflow of SECaaS-CARO.

Figure 3. Class composition of the canonical train, validation, and test splits.

Figure 4. Main comparison of protection quality across the proposed method and baselines.

Figure 5. Risk-score separation on the canonical test split. The dashed lines indicate the selected low- and high-intensity thresholds.

Figure 6. Ablation study of the proposed framework.

Figure 7. Sensitivity of the proposed method to the high-risk threshold.

Figure 8. Utility under increasing attack-ratio shift.

Figure 9. Partial-dependence monotonicity check for the nine risk-aligned indicators.

Table 1. Indicators used in the SECaaS-CARO risk model.

| Indicator | Meaning | Risk Direction | Operational Source |
|---|---|---|---|
| Device trust | Terminal integrity and endpoint posture confidence | Higher value lowers risk | Endpoint inventory and trust service |
| Vulnerability score | Exposure of the target asset to known weaknesses | Higher value raises risk | Patch and vulnerability records |
| Access anomaly | Deviation from normal access timing, route, or frequency | Higher value raises risk | Session and access logs |
| Data sensitivity | Criticality of the command or data object being accessed | Higher value raises risk | Command taxonomy and asset registry |
| Service health | Health of the target service chain and its dependencies | Higher value lowers risk | Runtime health checks |
| Command deviation | Deviation of the requested control command from normal practice | Higher value raises risk | Dispatch history and asset model |
| Login risk | Suspicion level of the authentication context | Higher value raises risk | Identity provider and login audit |
| Network instability | Quality deterioration or instability around the path | Higher value raises risk | Network telemetry |
| Identity confidence | Confidence in the asserted identity and credential chain | Higher value lowers risk | Identity and trust service |

Table 2. Composition of the canonical train, validation, and test splits.

| Split | Normal | Suspicious | Attack | Positive Ratio |
|---|---|---|---|---|
| Train | 5760 | 2070 | 1170 | 0.36 |
| Validation | 1920 | 690 | 390 | 0.36 |
| Test | 3200 | 1150 | 650 | 0.36 |

Table 3. Policy mapping used in the service-chain simulation.

| Attribute | Low | Medium | High |
|---|---|---|---|
| Primary action | Lightweight verification | Enhanced policy chain | Strict response chain |
| Mean latency (ms) | 16.8 | 24.6 | 35.5 |
| CPU cost | 1.9 | 2.9 | 4.1 |
| Operational meaning | Basic credential validation and sparse audit | Fine-grained authorization and denser logging | Session isolation, strong response, and dense audit |

Table 4. Main comparison over 10 independently resampled 5000-session test streams. Values are mean ± standard deviation.

| Method | F1 | FAR | Blocking | Latency (ms) | CPU Cost | Utility |
|---|---|---|---|---|---|---|
| SECaaS-CARO | | | | | | |
| Logistic-Fixed | | | | | | |
| RandomForest-Fixed | | | | | | |
| Static-Rule | | | | | | |

Table 5. Component-separation comparison with adaptive-threshold learned baselines. Values are mean ± standard deviation.

| Method | Thresholds | F1 | Blocking | Latency (ms) | Utility |
|---|---|---|---|---|---|
| SECaaS-CARO | 0.42/0.57 | 0.973 ± 0.003 | 0.965 ± 0.002 | 21.28 ± 0.03 | 1.173 ± 0.004 |
| Logistic-Fixed | 0.45/0.71 | 0.974 ± 0.003 | 0.942 ± 0.001 | 27.06 ± 0.03 | 1.111 ± 0.004 |
| Logistic-Adaptive | 0.47/0.69 | 0.973 ± 0.003 | 0.942 ± 0.001 | 27.06 ± 0.03 | 1.111 ± 0.004 |
| RandomForest-Fixed | 0.43/0.71 | 0.957 ± 0.003 | 0.947 ± 0.001 | 28.15 ± 0.04 | 1.073 ± 0.004 |
| RandomForest-Adaptive | 0.43/0.69 | 0.957 ± 0.003 | 0.947 ± 0.001 | 28.19 ± 0.04 | 1.073 ± 0.004 |

Table 6. Scenario-wise attack behavior over 10 independent resamples. Values are mean ± standard deviation.

| Attack Scenario | Method | Blocking Success | High-Intensity Rate |
|---|---|---|---|
| Malicious Command | SECaaS-CARO | 0.984 ± 0.002 | 0.974 ± 0.009 |
| Malicious Command | Logistic-Fixed | 0.960 ± 0.000 | 1.000 ± 0.000 |
| Malicious Command | RandomForest-Fixed | 0.965 ± 0.000 | 1.000 ± 0.000 |
| Malicious Command | Static-Rule | 0.933 ± 0.002 | 0.970 ± 0.009 |
| Privilege Abuse | SECaaS-CARO | 0.963 ± 0.002 | 0.969 ± 0.009 |
| Privilege Abuse | Logistic-Fixed | 0.940 ± 0.000 | 1.000 ± 0.000 |
| Privilege Abuse | RandomForest-Fixed | 0.945 ± 0.000 | 1.000 ± 0.000 |
| Privilege Abuse | Static-Rule | 0.913 ± 0.002 | 0.968 ± 0.009 |
| Gateway Takeover | SECaaS-CARO | 0.943 ± 0.002 | 0.971 ± 0.010 |
| Gateway Takeover | Logistic-Fixed | 0.920 ± 0.000 | 1.000 ± 0.000 |
| Gateway Takeover | RandomForest-Fixed | 0.925 ± 0.000 | 1.000 ± 0.000 |
| Gateway Takeover | Static-Rule | 0.887 ± 0.003 | 0.946 ± 0.012 |
| BMS Manipulation | SECaaS-CARO | 0.957 ± 0.002 | 0.987 ± 0.010 |
| BMS Manipulation | Logistic-Fixed | 0.930 ± 0.000 | 1.000 ± 0.000 |
| BMS Manipulation | RandomForest-Fixed | 0.935 ± 0.000 | 1.000 ± 0.000 |
| BMS Manipulation | Static-Rule | 0.901 ± 0.002 | 0.959 ± 0.011 |

Table 7. Ablation results over 10 independent test resamples.

| Variant | F1 | Blocking | Latency (ms) | Utility |
|---|---|---|---|---|
| SECaaS-CARO | 0.973 ± 0.003 | 0.966 ± 0.002 | 21.28 ± 0.03 | 1.174 ± 0.004 |
| w/o threshold tuning | 0.973 ± 0.003 | 0.911 ± 0.006 | 20.64 ± 0.04 | 1.157 ± 0.005 |
| w/o identity fusion | 0.967 ± 0.004 | 0.964 ± 0.002 | 21.21 ± 0.02 | 1.166 ± 0.005 |
| w/o service context | 0.958 ± 0.003 | 0.962 ± 0.003 | 21.28 ± 0.03 | 1.154 ± 0.004 |

Table 8. Robustness under attack-ratio shift. Values are mean ± standard deviation over 10 resampled streams.

| Attack Ratio | Method | Utility | Blocking |
|---|---|---|---|
| 0.08 | SECaaS-CARO | 1.176 ± 0.003 | 0.966 ± 0.002 |
| 0.08 | Logistic-Fixed | 1.117 ± 0.003 | 0.941 ± 0.001 |
| 0.08 | RandomForest-Fixed | 1.079 ± 0.005 | 0.946 ± 0.001 |
| 0.08 | Static-Rule | 1.126 ± 0.004 | 0.912 ± 0.004 |
| 0.12 | SECaaS-CARO | 1.172 ± 0.002 | 0.965 ± 0.001 |
| 0.12 | Logistic-Fixed | 1.111 ± 0.002 | 0.942 ± 0.001 |
| 0.12 | RandomForest-Fixed | 1.072 ± 0.004 | 0.947 ± 0.001 |
| 0.12 | Static-Rule | 1.125 ± 0.004 | 0.912 ± 0.002 |
| 0.16 | SECaaS-CARO | 1.173 ± 0.003 | 0.965 ± 0.002 |
| 0.16 | Logistic-Fixed | 1.111 ± 0.003 | 0.942 ± 0.000 |
| 0.16 | RandomForest-Fixed | 1.077 ± 0.003 | 0.947 ± 0.000 |
| 0.16 | Static-Rule | 1.131 ± 0.007 | 0.912 ± 0.001 |
| 0.20 | SECaaS-CARO | 1.168 ± 0.004 | 0.965 ± 0.001 |
| 0.20 | Logistic-Fixed | 1.104 ± 0.003 | 0.942 ± 0.000 |
| 0.20 | RandomForest-Fixed | 1.071 ± 0.004 | 0.947 ± 0.000 |
| 0.20 | Static-Rule | 1.132 ± 0.002 | 0.912 ± 0.001 |

Table 9. Counterfactual monotonicity checks on high-risk test sessions.

| Risk-Aligned Indicator | Weight | Mean Score Change for +0.05 | Violation Rate |
|---|---|---|---|
| 1 − device trust | 0.158 | 0.0079 | 0.000 |
| Vulnerability score | 0.102 | 0.0051 | 0.000 |
| Access anomaly | 0.108 | 0.0054 | 0.000 |
| Data sensitivity | 0.040 | 0.0020 | 0.000 |
| 1 − service health | 0.161 | 0.0080 | 0.000 |
| Command deviation | 0.111 | 0.0055 | 0.000 |
| Login risk | 0.109 | 0.0054 | 0.000 |
| Network instability | 0.090 | 0.0045 | 0.000 |
| 1 − identity confidence | 0.121 | 0.0061 | 0.000 |
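
The counterfactual check in Table 9 can be reproduced in miniature. The sketch assumes that the monotone score is a plain weighted sum of risk-aligned indicators in [0, 1]; the tabulated score changes are close to weight × 0.05, which is what a linear score would produce. The dictionary keys, the clipping at 1.0, the half-open threshold intervals, and the example session are illustrative assumptions, not the authors' implementation.

```python
# Weights from Table 9; keys name the risk-aligned form of each indicator
# (for protective indicators the value is 1 minus the raw indicator).
WEIGHTS = {
    "device_trust_deficit": 0.158,
    "vulnerability_score": 0.102,
    "access_anomaly": 0.108,
    "data_sensitivity": 0.040,
    "service_health_deficit": 0.161,
    "command_deviation": 0.111,
    "login_risk": 0.109,
    "network_instability": 0.090,
    "identity_confidence_deficit": 0.121,
}
LOW_T, HIGH_T = 0.42, 0.57  # selected threshold pair reported in the paper


def risk_score(indicators):
    """Weighted sum of risk-aligned indicators (assumed linear form)."""
    return sum(WEIGHTS[name] * indicators[name] for name in WEIGHTS)


def dispatch(score):
    """Map a score to a service chain (boundary handling is an assumption)."""
    if score < LOW_T:
        return "low"
    if score < HIGH_T:
        return "medium"
    return "high"


def counterfactual_delta(indicators, name, step=0.05):
    """Score change when one risk-aligned indicator is raised by `step`."""
    bumped = dict(indicators)
    bumped[name] = min(1.0, indicators[name] + step)
    return risk_score(bumped) - risk_score(indicators)


# Hypothetical high-risk session with every risk-aligned indicator at 0.6.
session = {name: 0.6 for name in WEIGHTS}
delta = counterfactual_delta(session, "device_trust_deficit")
violations = sum(1 for name in WEIGHTS if counterfactual_delta(session, name) < 0)
print(dispatch(risk_score(session)), round(delta, 4), violations)
```

With non-negative weights, raising any risk-aligned indicator can never lower the score, which is consistent with the zero violation rates in Table 9. For device trust the change is 0.158 × 0.05 = 0.0079, matching the tabulated value.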

Table 10. Service-chain allocation by true session group on the canonical test split.

| True Group | Low Chain | Medium Chain | High Chain |
|---|---|---|---|
| Normal | 0.988 | 0.012 | 0.000 |
| Suspicious | 0.051 | 0.818 | 0.130 |
| Attack | 0.000 | 0.028 | 0.972 |
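
The allocation in Table 10 can be combined with the test composition in Table 2 and the per-chain costs in Table 3 to estimate the mean burden per session. The sketch below assumes that per-chain mean latency and CPU cost add linearly according to chain share; the variable and function names are hypothetical.

```python
# Test-split composition (Table 2) and chain allocation per group (Table 10).
COUNTS = {"normal": 3200, "suspicious": 1150, "attack": 650}
ALLOCATION = {  # fractions routed to the (low, medium, high) chains
    "normal": (0.988, 0.012, 0.000),
    "suspicious": (0.051, 0.818, 0.130),
    "attack": (0.000, 0.028, 0.972),
}
# Per-chain costs (Table 3), ordered low, medium, high.
LATENCY_MS = (16.8, 24.6, 35.5)
CPU_COST = (1.9, 2.9, 4.1)


def chain_shares(counts, allocation):
    """Overall share of sessions sent to each chain across all groups."""
    total = sum(counts.values())
    shares = [0.0, 0.0, 0.0]
    for group, n_sessions in counts.items():
        for i, fraction in enumerate(allocation[group]):
            shares[i] += n_sessions * fraction / total
    return shares


def expected_cost(shares, per_chain_cost):
    """Share-weighted mean cost per session (assumes linear mixing)."""
    return sum(s * c for s, c in zip(shares, per_chain_cost))


shares = chain_shares(COUNTS, ALLOCATION)
mean_latency = expected_cost(shares, LATENCY_MS)
mean_cpu = expected_cost(shares, CPU_COST)
print([round(s, 3) for s in shares], round(mean_latency, 2), round(mean_cpu, 2))
```

Under these assumptions, roughly 64% of test sessions stay on the low chain, and the implied mean latency is about 21.27 ms, close to the 21.28 ± 0.03 ms reported for SECaaS-CARO in Table 5.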

Table 11. Single-indicator removal for the highest-weight indicators.

| Removed Indicator | Weight | ΔF1 | ΔUtility |
|---|---|---|---|
| Service health | 0.161 | −0.060 | −0.055 |
| Device trust | 0.158 | −0.015 | −0.016 |
| Identity confidence | 0.121 | −0.005 | −0.006 |
| Command deviation | 0.111 | −0.014 | −0.030 |
| Login risk | 0.109 | −0.005 | −0.011 |

Table 12. Sensitivity of validation utility and selected thresholds to utility-coefficient changes.

| Coefficient Setting | Low Threshold | High Threshold | Validation Utility |
|---|---|---|---|
| Blocking coefficient = 0.30 | 0.42 | 0.57 | 1.080 |
| Blocking coefficient = 0.40 | 0.42 | 0.57 | 1.177 |
| Blocking coefficient = 0.50 | 0.42 | 0.57 | 1.273 |
| FAR coefficient = 0.45 | 0.42 | 0.57 | 1.178 |
| FAR coefficient = 0.55 | 0.42 | 0.57 | 1.177 |
| FAR coefficient = 0.65 | 0.42 | 0.57 | 1.176 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.