On the Use of an Extreme Learning Machine for GitHub Repository Popularity Prediction Based on Static Software Metrics

Abstract

1. Introduction

- The construction of a diverse and comprehensive dataset that integrates GitHub metrics with static software metrics collected from repositories belonging to different application domains;

- The development of an automated and modular data collection framework that utilizes the GitHub API and SourceMonitor CLI to ensure reproducibility and scalability, enabling the construction of diverse datasets across different application domains;

- The introduction and evaluation of the ELM method for GitHub repository popularity prediction, providing a novel methodological perspective;

- A systematic comparison of the proposed ELM-based approach with several baseline machine learning methods, including Linear Regression (LR), Support Vector Machine (SVM), Random Forest (RF), and Least Squares Boosting (LSBoost), to evaluate its relative predictive performance across different datasets;

- Ablation analyses and multi-model comparisons that systematically examine the individual and combined effects of GitHub-based and static software metrics.

2. Literature Review

3. Data Acquisition

3.1. Software Data Acquisition Tool

3.2. Dataset Properties

4. Proposed Methodology

4.1. Extreme Learning Machine

4.1.1. Gradient-Based Solutions

- First, the convergence speed is highly sensitive to the choice of the learning rate; a small learning rate leads to slow convergence, whereas a large learning rate may cause instability or divergence;

- Second, these methods are prone to getting trapped in local minima, which can prevent the model from reaching the global optimum;

- Third, an artificial neural network trained with the back-propagation learning process may be overtrained and therefore generalize poorly. Consequently, the procedure for minimizing the cost function must include appropriate stopping criteria, such as early stopping;

- Finally, the iterative nature of gradient-based optimization often leads to high computational cost, making these methods less suitable for large-scale or time-sensitive applications.
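To illustrate the first drawback, the toy sketch below (not part of the paper's method; the function name `gradient_descent` is hypothetical) runs plain gradient descent on f(w) = w², whose gradient is 2w, so every update multiplies w by (1 - 2η). A small learning rate converges slowly, a moderate one converges quickly, and a learning rate above 1 makes the iterates grow without bound.

```python
def gradient_descent(lr: float, w0: float = 1.0, steps: int = 50) -> float:
    """Minimize f(w) = w**2 with a fixed learning rate and return the final w."""
    w = w0
    for _ in range(steps):
        grad = 2.0 * w      # f'(w) = 2w
        w -= lr * grad      # equivalent to w <- w * (1 - 2 * lr)
    return w


# Compare a too-small, a moderate, and a too-large learning rate
for lr in (0.01, 0.4, 1.1):
    print(f"lr={lr}: |w| after 50 steps = {abs(gradient_descent(lr)):.3g}")
```

With η = 0.01 the iterate is still far from the minimum after 50 steps, with η = 0.4 it is essentially zero, and with η = 1.1 it diverges, which is the sensitivity described in the first item.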

4.1.2. Least Squares Norm

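- Step 1: Randomly assign the input weights $\mathbf{w}_i$ and hidden layer bias values $b_i$ for $i = 1, \ldots, \tilde{N}$;

- Step 2: Compute the hidden layer output matrix $\mathbf{H}$ using the activation function based on the assigned weights and biases;

- Step 3: Determine the output weights $\boldsymbol{\beta} = \mathbf{H}^{\dagger}\mathbf{T}$, in which $\mathbf{T} = [\mathbf{t}_1, \ldots, \mathbf{t}_N]^{T}$, and $\mathbf{H}^{\dagger}$ denotes the Moore–Penrose generalized inverse of matrix $\mathbf{H}$.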
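The three steps above can be sketched in Python with NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the class name `ELMRegressor` is hypothetical, the input weights and biases are assumed to be drawn from U(-1, 1), and the default of 40 sigmoid hidden neurons simply mirrors the configuration reported for the datasets in this paper. Each entry of the hidden layer output matrix is H[j, i] = g(w_i · x_j + b_i).

```python
import numpy as np


class ELMRegressor:
    """Hypothetical minimal ELM regressor: one hidden layer, sigmoid activation."""

    def __init__(self, n_hidden=40, seed=0):
        self.n_hidden = n_hidden
        self.rng = np.random.default_rng(seed)

    @staticmethod
    def _sigmoid(z):
        return 1.0 / (1.0 + np.exp(-z))

    def _hidden_output(self, X):
        # H[j, i] = g(w_i . x_j + b_i) for sample j and hidden neuron i
        return self._sigmoid(X @ self.W + self.b)

    def fit(self, X, T):
        X = np.asarray(X, dtype=float)
        T = np.asarray(T, dtype=float)
        # Step 1: random input weights w_i and biases b_i (assumed U(-1, 1)); never trained
        self.W = self.rng.uniform(-1.0, 1.0, size=(X.shape[1], self.n_hidden))
        self.b = self.rng.uniform(-1.0, 1.0, size=self.n_hidden)
        # Step 2: hidden layer output matrix H with shape (N, n_hidden)
        H = self._hidden_output(X)
        # Step 3: beta = H^dagger T using the Moore-Penrose pseudoinverse
        self.beta = np.linalg.pinv(H) @ T
        return self

    def predict(self, X):
        return self._hidden_output(np.asarray(X, dtype=float)) @ self.beta


# Usage on synthetic data (not the paper's datasets)
X = np.linspace(-3.0, 3.0, 200).reshape(-1, 1)
y = np.sin(X).ravel()
model = ELMRegressor(n_hidden=40, seed=0).fit(X, y)
pred = model.predict(X)
print("training RMSE:", np.sqrt(np.mean((pred - y) ** 2)))
```

Because only β is solved for, training is a single linear least-squares step with no learning rate or iterations, which is the contrast with the gradient-based drawbacks listed in Section 4.1.1.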

4.2. Data Preprocessing

4.3. Evaluation Metrics

5. Results and Discussion

5.1. Evaluation Scheme

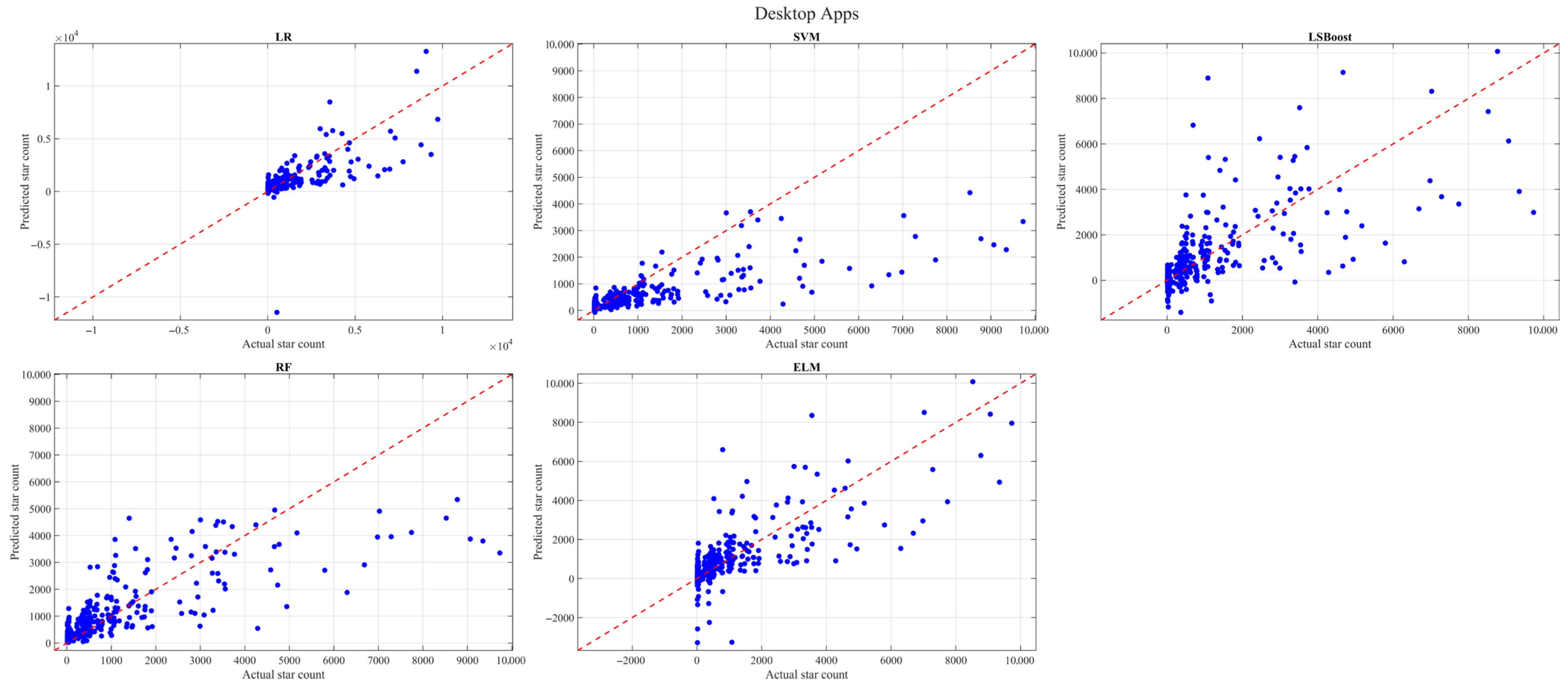

5.2. Results

5.3. Discussion

5.4. Limitations and Threats to Validity

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Baumgartner, N.; Iyenghar, P.; Schoemaker, T.; Pulvermüller, E. AI-driven Refactoring: A Pipeline for Identifying and Correcting Data Clumps in Git Repositories. Electronics 2024, 13, 1644.

- Pejić, N.; Radivojević, Z.; Cvetavnović, M. Analyzing the Impact of COVID-19 on GitHub Event Trends. Sustainability 2023, 15, 14622.

- Moreno Martínez, C.; Gallego Carracedo, J.; Sánchez Gallego, J. Characterizing Agile Software Development: Insights from a Data-driven Approach using Large-scale Public Repositories. Software 2025, 4, 13.

- Saini, M.; Verma, R.; Singh, A.; Chahal, K.K. Investigating Diversity and Impact of the Popularity Metrics for Ranking Software Packages. J. Softw. Evol. Process 2020, 32, e2265.

- Wang, T.; Wang, S.; Chen, T.H.P. Study the Correlation between the Readme File of GitHub Projects and their Popularity. J. Syst. Softw. 2023, 205, 111806.

- Khezemi, N.; Ejaz, S.; Moha, N.; Guéhéneuc, Y.G. A comparison of code quality metrics and best practices in non-IoT and IoT systems. Internet Things 2025, 34, 101803.

- Coelho, J.; Valente, M.T.; Milen, L.; Silva, L.L. Is this GitHub Project Maintained? Measuring the Level of Maintenance Activity of Open-source Projects. Inf. Softw. Technol. 2020, 122, 106274.

- Xie, Q.; Wang, J.; Kim, G.; Lee, S.; Song, M. A Sensitivity Analysis of Factors Influential to the Popularity of Shared Data in Data Repositories. J. Informetr. 2021, 15, 101142.

- Moid, M.A.; Siraj, A.; Ali, M.F.; Amoodi, A.O. Predicting Stars on Open-Source GitHub Projects. In Proceedings of the 2021 Smart Technologies, Communication and Robotics (STCR), Sathyamangalam, India, 9–10 October 2021; pp. 1–9.

- AlMarzouq, M.; AlZaidan, A.; AlDallal, J. Mining GitHub for Research and Education: Challenges and Opportunities. Int. J. Web Inf. Syst. 2020, 16, 451–473.

- Abedini, Y.; Heydarnoori, A. Can GitHub Issues Help in App Review Classifications? ACM Trans. Softw. Eng. Methodol. 2024, 33, 209.

- Ghodke, G.M.; Chavan, T. An Overview of Git. Int. J. Sci. Res. Mod. Sci. Technol. 2024, 3, 17–23.

- Kalliamvakou, E.; Gousios, G.; Blincoe, K.; Singer, L.; German, D.M.; Damian, D. An in-depth Study of the Promises and Perils of Mining GitHub. Empir. Softw. Eng. 2016, 21, 2035–2071.

- Dabic, O.; Aghajani, E.; Bavota, G. Sampling Projects in GitHub for MSR Studies. In Proceedings of the 2021 IEEE/ACM 18th International Conference on Mining Software Repositories (MSR), Madrid, Spain, 17–19 May 2021; pp. 560–564.

- Wang, J.; Zhang, X.; Chen, L.; Xie, X. Personalizing Label Prediction for GitHub Issues. Inf. Softw. Technol. 2022, 145, 106845.

- Moriconi, F.; Durieux, T.; Falleri, J.R.; Troncy, R.; Francillon, A. GHALogs: Large-scale Dataset of GitHub Actions Runs. In Proceedings of the 2025 IEEE/ACM 22nd International Conference on Mining Software Repositories (MSR), Ottawa, ON, Canada, 28–29 April 2025; pp. 669–673.

- Alqaradaghi, M.; Kozsik, T. Comprehensive Evaluation of Static Analysis Tools for Their Performance in Finding Vulnerabilities in Java Code. IEEE Access 2024, 12, 55824–55842.

- Ehrlinger, L.; Wöß, W. A Survey of Data Quality Measurement and Monitoring Tools. Front. Big Data 2022, 5, 850611.

- Schnoor, H.; Hasselbring, W. Comparing Static and Dynamic Weighted Software Coupling Metrics. Computers 2020, 9, 24.

- Pachouly, J.; Ahirrao, S.; Kotecha, K.; Selvachandran, G.; Abraham, A. A Systematic Literature Review on Software Defect Prediction using Artificial Intelligence: Datasets, Data Validation Methods, Approaches, and Tools. Eng. Appl. Artif. Intell. 2022, 111, 104773.

- Goyal, S. Static Code Metrics-based Deep Learning Architecture for Software Fault Prediction. Soft Comput. 2022, 26, 13765–13797.

- Borandag, E. Software Fault Prediction using an RNN-based Deep Learning Approach and Ensemble Machine Learning Techniques. Appl. Sci. 2023, 13, 1639.

- Nevendra, M.; Singh, P. A Survey of Software Defect Prediction based on Deep Learning. Arch. Comput. Methods Eng. 2022, 29, 5723–5748.

- Jorayeva, M.; Akbulut, A.; Catal, C.; Mishra, A. Machine Learning-based Software Defect Prediction for Mobile Applications: A Systematic Literature Review. Sensors 2022, 22, 2551.

- Khleel, N.A.A.; Nehéz, K. Software Defect Prediction using a Bidirectional LSTM Network Combined with Oversampling Techniques. Clust. Comput. 2024, 27, 3615–3638.

- Jo, S.; Kwon, R.; Kwon, G. Probabilistic Model Checking GitHub Repositories for Software Project Analysis. Appl. Sci. 2024, 14, 1260.

- Chowdhury, S.; Uddin, G.; Hemmati, H.; Holmes, R. Method-level Bug Prediction: Problems and Promises. ACM Trans. Softw. Eng. Methodol. 2024, 33, 98.

- Jász, J. The Effectiveness of Hidden Dependence Metrics in Bug Prediction. IEEE Access 2024, 12, 77214–77225.

- Wu, X.; Wang, L.; Zheng, Z.; Sang, B.; Zhang, J.; Tao, X. An Entropy-based Measure of Fork Diversity and its Correlations with Open Source Software Projects’ Received Contributions. Empir. Softw. Eng. 2025, 30, 111.

- Battulga, B.; Tsoodol, L.; Dovdon, E.; Bold, N.; Namsrai, O.E. Metric-based Defect Prediction from Class Diagram. Array 2025, 27, 100438.

- Kalyani, P.; Rao, C.P.; Goparaju, B.; Babu, K.K.; Kandimalla, P.C.R. BugPrioritizeAI for Multimodal Test Case Prioritisation using Bug Reports, Code Changes, and Test Metadata. Sci. Rep. 2026, 16, 1539.

- Tang, Y.; Du, Y.; Gao, J.B.; Li, A.; Yang, M.S. ISRLNN: A Software Defect Prediction Method Based on Instance Similarity Reverse Loss. J. Syst. Softw. 2026, 235, 112766.

- Guo, Y.; Bettaieb, S.; Casino, F. A Comprehensive Analysis on Software Vulnerability Detection Datasets: Trends, Challenges, and Road Ahead. Int. J. Inf. Secur. 2024, 23, 3311–3327.

- Tang, B.; Maruyama, K. PRCollector: Facilitating on-demand Collection of Pull Request Data from GitHub. IEEE Access 2025, 13, 150608–150622.

- Özçevik, Y.; Altay, O. MetricHunter: A Software Metric Dataset Generator Utilizing SourceMonitor upon Public GitHub Repositories. SoftwareX 2023, 23, 101499.

- Li, J.; Wen, Y.; Liu, J.; Zeng, B.; Mirjalili, S. GFTrans: An on-the-fly Static Analysis Framework for Code Performance Profiling. Front. Big Data 2026, 9, 1779935.

- Wang, J.; Lu, S.; Wang, S.H.; Zhang, Y.D. A Review on Extreme Learning Machine. Multimed. Tools Appl. 2022, 81, 41611–41660.

- Kaur, R.; Roul, R.K.; Batra, S. Multilayer Extreme Learning Machine: A Systematic Review. Multimed. Tools Appl. 2023, 82, 40269–40307.

- Altay, O.; Ulas, M.; Alyamac, K.E. DCS-ELM: A Novel Method for Extreme Learning Machine for Regression Problems and a New Approach for the SFRSCC. PeerJ Comput. Sci. 2021, 7, e411.

- Singh, D.; Singh, B. Investigating the Impact of Data Normalization on Classification Performance. Appl. Soft Comput. 2020, 97, 105524.

- Ulas, M.; Altay, O.; Gurgenc, T.; Özel, C. A New Approach for Prediction of the Wear Loss of PTA Surface Coatings using Artificial Neural Network and Basic, Kernel-Based, and Weighted Extreme Learning Machine. Friction 2020, 8, 1102–1116.

| Abbreviation | Meaning |
|---|---|
| AMC | Average method complexity |
| AVG_CC | Average McCabe’s complexity |
| CC | Cyclomatic complexity |
| CD | Comment density |
| CLOC | Comment lines of code |
| CMC | Class method complexity |
| DIT | Depth of the inheritance tree |
| LOC | Lines of code |
| LLOC | Logical lines of code |
| MAX_CC | Maximum McCabe’s complexity |
| NFi, NOF | Number of files |
| NOA | Number of attributes |
| NOC | Number of classes |
| NOI | Number of interfaces |
| NM, NOM | Number of methods |
| NV | Number of variables per class |
| WMC | Weighted methods per class |

| Reference | Year | Purpose | Methodology | Key Attributes |
|---|---|---|---|---|
| [7] | 2021 | Analyzing metadata factors driving dataset and software popularity | Artificial neural network-based popularity prediction combined with statistical analysis | GitHub metrics, including forks, stars, contributors, releases, and project size growth |
| [21] | 2022 | Software fault prediction | A deep learning architecture for software fault prediction | Static software metrics, including AVG_CC, AMC, DIT, LOC, MAX_CC, WMC |
| [24] | 2022 | Analyzing ML-based defect prediction in mobile applications | Systematic Literature Review (SLR) of 47 studies | Static software metrics, including CMC, DIT, NOC, NM, NV, WMC |
| [2] | 2023 | Analyzing the impact of COVID-19 on GitHub development trends | Time-series trend analysis and stationarity tests of GitHub events | GitHub metrics, including forks, issues, comments, commits, and pushes |
| [22] | 2023 | Comparing ML and DL for software fault prediction | ML and DL classifiers (including RNNs) on multiple software fault datasets | Static software metrics, including CBO, DIT, LOC, WMC, and historical change metrics |
| [25] | 2024 | Improving software defect prediction on imbalanced datasets | Bi-LSTM model combined with oversampling (SMOTE/random) on benchmark datasets | Static software metrics, including AMC, AVG_CC, CBO, LOC, MAX_CC, NOC |
| [26] | 2024 | Analyzing GitHub activity dynamics and influencing factors | Discrete-Time Markov Chains and model checking with probabilistic Computation Tree Logic | GitHub metrics, including repository states, pull requests, and branches |
| [27] | 2024 | Dataset construction and method-level bug prediction for practical use | Empirical evaluation and comparison on multiple datasets, including one constructed with improvement strategies | Static software metrics, including method-level CC, HCPL, LOC, NOM |
| [28] | 2024 | Improving method-level bug prediction with a dependency-aware approach | Machine learning (Random Forest) with a dependency tracking algorithm | Static software metrics, including method-level metrics and code dependencies (CC, CD, LOC, NL, HCPL, NUMPAR) |
| [6] | 2025 | Comparing software quality, identifying differences, and best practices | Dataset creation from GitHub, metric computation, and in-depth code examination | Static software metrics, including complexity, coupling, size, cohesion, and maintainability metrics |
| [29] | 2025 | Analyzing fork diversity (fork entropy) and its impact on public GitHub repository contributions | Empirical analysis, correlation study, ARMAX, and Transformer-based prediction models | GitHub metrics, including fork count, pull requests, bugs, and historical contribution data |
| [30] | 2025 | Predicting design-stage defects using ML/DL and software metrics | Dataset creation from class diagrams, ML/DL classification, cross-dataset evaluation | Static software metrics, including size, inheritance, and coupling metrics (DIT, Nesting, NOC, NOA) |
| [31] | 2026 | Improving test-case prioritization using multimodal and explainable AI | Deep learning-based multimodal framework that jointly uses bug reports, source code changes, and test metadata with SHAP explanations | GitHub metrics, including lines added, lines deleted, the number of files modified, and the entropy of change in a commit |
| [32] | 2026 | Improving defect prediction using deep learning with instance similarity | Image-based DL model with custom loss function (ISRL) | Static software metrics, including LOC, CC, and other project- or language-specific metrics |

| Attribute | Description | Acquisition Source |
|---|---|---|
| Star Count | The number of stars given by GitHub users/developers | GitHub |
| Fork Count | The number of forks of a repository | GitHub |
| Project Size | The size of the project in KB | GitHub |
| Lines | The total number of lines | SourceMonitor CLI |
| Statements | The number of statements | SourceMonitor CLI |
| Percent Comment Lines | The percentage of comment lines | SourceMonitor CLI |
| Percent Documentation Lines | The percentage of documentation lines | SourceMonitor CLI |
| Classes, Interfaces, Structs | The number of units, i.e., classes, interfaces, or structs, depending on the programming language | SourceMonitor CLI |
| Methods per Class | The number of methods per class | SourceMonitor CLI |
| Statements per Method | The number of statements per method | SourceMonitor CLI |
| Maximum Complexity | The maximum complexity measure of software units | SourceMonitor CLI |
| Average Complexity | The average complexity measure of software units | SourceMonitor CLI |
| Maximum Block Depth | The maximum block depth measure of software units | SourceMonitor CLI |
| Average Block Depth | The average block depth measure of software units | SourceMonitor CLI |
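The three GitHub-sourced attributes in the table above correspond to fields of the repository object returned by the GitHub REST API endpoint `GET /repos/{owner}/{repo}`: `stargazers_count`, `forks_count`, and `size` (reported in KB). The sketch below is only an assumption about how such a lookup might look; it is not the authors' collection framework, the helper names `fetch_repo` and `github_attributes` are hypothetical, and the SourceMonitor CLI metrics are not covered.

```python
import json
from urllib.request import Request, urlopen

# GitHub REST API endpoint for a single repository
GITHUB_REPO_URL = "https://api.github.com/repos/{owner}/{repo}"


def fetch_repo(owner, repo, token=None):
    """Hypothetical helper: download the repository JSON object (needs network access)."""
    req = Request(
        GITHUB_REPO_URL.format(owner=owner, repo=repo),
        headers={"Accept": "application/vnd.github+json"},
    )
    if token:
        # An optional personal access token raises the API rate limit
        req.add_header("Authorization", f"Bearer {token}")
    with urlopen(req) as resp:
        return json.load(resp)


def github_attributes(repo_json):
    """Hypothetical helper: map API fields onto the table's GitHub attributes."""
    return {
        "Star Count": repo_json["stargazers_count"],
        "Fork Count": repo_json["forks_count"],
        "Project Size": repo_json["size"],  # the API reports size in KB
    }


# Offline usage with a hand-written payload (values are illustrative only)
sample = {"name": "example", "stargazers_count": 65, "forks_count": 23, "size": 2344}
print(github_attributes(sample))
```

Keeping the network call separate from the field mapping makes the mapping testable offline and lets a collection pipeline cache raw API responses for reproducibility.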

| Attribute | Mean | Std. Dev. | Median | Min. | Max. |
|---|---|---|---|---|---|
| Star Count | 664.76 | 1878.88 | 65 | 12 | 30,352 |
| Fork Count | 164.60 | 673.46 | 23 | 0 | 25,082 |
| Project Size | 33,746.43 | 135,286.75 | 2344 | 4 | 2,567,842 |
| Lines | 39,032.07 | 269,359.40 | 4018 | 5 | 10,590,806 |
| Statements | 16,565.04 | 110,514.26 | 1895 | 2 | 3,937,322 |
| Percent Comment Lines | 2.43 | 4.18 | 0.2 | 0 | 66.5 |
| Percent Documentation Lines | 3.57 | 6.82 | 0 | 0 | 67.3 |
| Classes, Interfaces, Structs | 381.84 | 2382.76 | 56 | 0 | 98,094 |
| Methods per Class | 4.56 | 3.35 | 3.99 | 0 | 59.91 |
| Statements per Method | 5.22 | 5.65 | 4.48 | 0 | 226.5 |
| Maximum Complexity | 42.61 | 621.59 | 15 | 0 | 35,864 |
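Each row of the table above reports five summary statistics for one attribute. The sketch below shows how such a row could be computed with Python's standard `statistics` module; the helper name `summarize` is hypothetical, and the use of the sample standard deviation (n - 1 denominator) is an assumption, since the paper does not state which variant was used.

```python
import statistics


def summarize(values):
    """Return the five summary statistics reported per attribute."""
    return {
        "Mean": statistics.mean(values),
        # Sample standard deviation (n - 1 denominator); an assumption
        "Std. Dev.": statistics.stdev(values),
        "Median": statistics.median(values),
        "Min.": min(values),
        "Max.": max(values),
    }


# Hypothetical star counts, not taken from the dataset
stars = [12, 40, 65, 90, 5000]
print(summarize(stars))
```

As in the Star Count row (mean 664.76 versus median 65), a single very popular repository pulls the mean far above the median, which signals a strongly right-skewed distribution.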

| Dataset | Data Samples | Activation Function | Number of Neurons |
|---|---|---|---|
| CICD Apps | 220 | Sigmoid | 40 |
| Database Apps | 374 | Sigmoid | 40 |
| Desktop Apps | 466 | Sigmoid | 40 |
| Learning-based Apps | 160 | Sigmoid | 40 |
| Mobile Apps | 1159 | Sigmoid | 40 |
| Service Apps | 678 | Sigmoid | 40 |
| Web Apps | 1383 | Sigmoid | 40 |
| Total | 3377 | Sigmoid | 40 |

| CICD Apps | | | | Database Apps | | | |
|---|---|---|---|---|---|---|---|
| Method | RMSE | MAE | R² | Method | RMSE | MAE | R² |
| LR | 1104.9262 | 431.7647 | 0.8171 | LR | 886.3236 | 369.9072 | 0.8175 |
| SVM | 2542.2554 | 703.3277 | 0.0319 | SVM | 2031.2968 | 594.2901 | 0.0416 |
| LSBoost | 2422.6669 | 901.6331 | 0.1209 | LSBoost | 1593.3826 | 530.8455 | 0.4103 |
| RF | 2144.1646 | 589.5706 | 0.3114 | RF | 1494.5386 | 392.6650 | 0.4812 |
| ELM | 1497.9450 | 792.3538 | 0.6639 | ELM | 849.1388 | 428.4873 | 0.8325 |

| Desktop Apps | | | | Learning-based Apps | | | |
|---|---|---|---|---|---|---|---|
| Method | RMSE | MAE | R² | Method | RMSE | MAE | R² |
| LR | 1092.9208 | 518.4462 | 0.4808 | LR | 1564.6021 | 676.6005 | 0.4160 |
| SVM | 1123.7339 | 470.1709 | 0.4512 | SVM | 1927.8392 | 617.4400 | 0.1134 |
| LSBoost | 1180.9284 | 607.4464 | 0.3939 | LSBoost | 1507.1669 | 589.6497 | 0.4581 |
| RF | 911.1043 | 443.5975 | 0.6392 | RF | 1630.8948 | 507.0670 | 0.3655 |
| ELM | 984.6494 | 542.1970 | 0.5786 | ELM | 1374.4634 | 725.5094 | 0.5493 |

| Mobile Apps | | | | Service Apps | | | |
|---|---|---|---|---|---|---|---|
| Method | RMSE | MAE | R² | Method | RMSE | MAE | R² |
| LR | 1868.4418 | 421.5051 | 0 | LR | 883.1411 | 304.0469 | 0.7353 |
| SVM | 1453.7169 | 452.0446 | 0.1404 | SVM | 1588.9056 | 480.0818 | 0.1433 |
| LSBoost | 1037.3303 | 355.4495 | 0.5623 | LSBoost | 1359.2577 | 458.1832 | 0.3730 |
| RF | 929.4019 | 277.3370 | 0.6487 | RF | 1154.5136 | 321.6246 | 0.5477 |
| ELM | 1209.5501 | 381.7863 | 0.4049 | ELM | 672.8992 | 325.9593 | 0.8463 |

| Web Apps | | | | Total | | | |
|---|---|---|---|---|---|---|---|
| Method | RMSE | MAE | R² | Method | RMSE | MAE | R² |
| LR | 2317.6540 | 660.2378 | 0 | LR | 1712.5227 | 537.3132 | 0.1692 |
| SVM | 2174.7013 | 681.4602 | 0.1007 | SVM | 1731.1929 | 546.7153 | 0.1510 |
| LSBoost | 1794.0643 | 635.2489 | 0.3880 | LSBoost | 1435.2235 | 509.7410 | 0.4165 |
| RF | 1527.0361 | 450.8754 | 0.5566 | RF | 1165.5877 | 340.7816 | 0.6152 |
| ELM | 1513.4826 | 637.2216 | 0.5644 | ELM | 1245.7634 | 469.0849 | 0.5604 |
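The RMSE, MAE, and R² columns in the tables above are standard regression metrics. The plain-Python sketch below uses their textbook definitions; the function names are illustrative, and it is an assumption that the authors' evaluation computes them in exactly this way. This version does not clip R², which becomes negative whenever a model predicts worse than the mean of the targets.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error: penalizes large errors more heavily."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error, in the same unit as the target (here, stars)."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_t = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


y_true = [3.0, -0.5, 2.0, 7.0]
y_pred = [2.5, 0.0, 2.0, 8.0]
print(rmse(y_true, y_pred), mae(y_true, y_pred), r2(y_true, y_pred))
```

Because RMSE squares the residuals while MAE does not, a method can rank differently under the two metrics, as with ELM versus RF on several datasets above, where a few highly starred repositories dominate the squared error.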

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Borandağ, E.; Yücalar, F.; Özçevik, Y.; Altay, O. On the Use of an Extreme Learning Machine for GitHub Repository Popularity Prediction Based on Static Software Metrics. Electronics 2026, 15, 2095. https://doi.org/10.3390/electronics15102095

Borandağ E, Yücalar F, Özçevik Y, Altay O. On the Use of an Extreme Learning Machine for GitHub Repository Popularity Prediction Based on Static Software Metrics. Electronics. 2026; 15(10):2095. https://doi.org/10.3390/electronics15102095

Chicago/Turabian Style: Borandağ, Emin, Fatih Yücalar, Yusuf Özçevik, and Osman Altay. 2026. "On the Use of an Extreme Learning Machine for GitHub Repository Popularity Prediction Based on Static Software Metrics" Electronics 15, no. 10: 2095. https://doi.org/10.3390/electronics15102095

APA Style: Borandağ, E., Yücalar, F., Özçevik, Y., & Altay, O. (2026). On the Use of an Extreme Learning Machine for GitHub Repository Popularity Prediction Based on Static Software Metrics. Electronics, 15(10), 2095. https://doi.org/10.3390/electronics15102095