1. Introduction

Autonomous vehicles are highly complex systems used in various industries, such as transportation, agriculture, defense, logistics, and warehousing. These vehicles can operate without human control by relying on advanced technologies, including sensors, artificial intelligence, and complex control algorithms. Achieving autonomous operation involves a set of subtasks, such as environment perception, simultaneous localization and mapping (SLAM), collision avoidance, path planning, and motion control. Autonomous vehicles operate in sea, air, and land environments and are therefore classified based on their operating domain as unmanned underwater vehicles (UUVs), unmanned surface vehicles (USVs), unmanned aerial vehicles (UAVs), and unmanned ground vehicles (UGVs).

The authors in [1] reported on the growth of publications related to different types of autonomous vehicles between 2015 and 2024. Other recent survey papers on autonomous vehicles include [2], where the authors compare vision-, LiDAR-, and wireless-sensor-based methods applied to collaborative positioning in swarms of UAVs, UGVs, USVs, and UUVs.

Table 1 presents a comparative analysis of recent review papers on autonomous vehicles. The surveys were compared with respect to the types of autonomous vehicles, methods, and tasks. Most recent surveys focus on a single type of autonomous vehicle, such as UAVs [3, 4, 5], UUVs [6, 7, 8, 9], mobile robots [10, 11], or self-driving cars [12]. Review papers covering multiple types of vehicles are much less common [1, 2, 13, 14].

Another observation is that many surveys focus on a single task, such as path (trajectory) planning [4, 9, 13, 14, 17], sensor fusion [1], detection and classification [3], collaborative positioning [2], visual-based localization and mapping [8], or tracking control [7]. Surveys addressing multiple tasks are also less common [5, 10, 11, 16].

Many surveys also consider different methodological approaches, such as traditional methods (e.g., graph-based and sampling-based), bio-inspired techniques, and machine learning-based approaches [4, 6, 10, 11, 13, 14, 16]. Surveys focusing exclusively on machine learning-based solutions are relatively rare [3, 5, 15, 17].

This paper presents a survey of machine learning methods (2020–2026) applied to environment perception, SLAM, collision avoidance, path planning, and motion control in different types of autonomous vehicles, including UUVs, USVs, UAVs, and ground-based mobile robots.

The contributions of this paper to the literature on autonomous vehicles can be summarized as follows:

The surveyed literature covers four types of autonomous platforms: unmanned underwater vehicles, unmanned surface vehicles, unmanned aerial vehicles, and ground-based mobile robots.

The following tasks are considered in the literature review: environment perception, simultaneous localization and mapping, collision avoidance and path planning, and motion control.

The review focuses exclusively on machine learning-based methods.

The review covers studies published between 2020 and 2026.

The analysis also includes a tabular comparison, which provides information such as authors, year of publication, machine learning method, task, and verification method (simulation and/or real-world experiments).

2. Machine Learning for Unmanned Underwater Vehicles

Unmanned underwater vehicles can be divided into two types: autonomous underwater vehicles (AUVs) and remotely operated vehicles (ROVs) [18]. AUVs operate without real-time human control, executing preplanned missions and potentially adapting their behavior using onboard sensors and algorithms. They typically have limited communication during operation.

ROVs are piloted by a human operator in real time, typically via a tether that provides communication (often high-bandwidth) and sometimes power. This makes them well suited for precise inspection and manipulation tasks, but it limits their range and mobility compared with fully autonomous systems. These two types of UUVs are shown in Figure 1 and Figure 2.

Figure 3 presents the search results for scientific articles from multiple publishers’ databases published between 2020 and 2026. The following search query was used: “unmanned underwater vehicles” OR “UUV” AND “machine learning”. The results of the bibliometric analysis related to UUVs and ML are shown in Figure 4. The analysis was performed using VOSviewer (version 1.6.20) [19], which enables the creation of cluster maps based on authors’ keywords extracted from papers published between 2020 and 2026. The data were obtained from bibliographic files exported from the Web of Science.

Figure 1. Bluefin-21 AUV [20].

Figure 2. Defender ROV [21].

Figure 3. Number of publications on ML algorithms for UUVs (2020–2026).

Figure 4. Keyword co-occurrence clustering results in VOSviewer for “unmanned underwater vehicles” and “machine learning”.

2.1. Environment Perception for UUVs

In UUVs, environment perception is typically built as a processing pipeline that operates under strong environmental constraints such as currents, temperature, attenuation, turbidity, and multipath effects in water [22].

Limited onboard computational power and narrow communication channels further constrain signal processing, which must therefore be efficient [18]. Recent environment perception methods for UUVs increasingly rely on forward-looking sonar, with learning-based detection and segmentation improving robustness under low-visibility conditions. For instance, Cao et al. [23] combined sonar image detection with DRL-based avoidance, while Gao et al. [24] proposed unsupervised obstacle segmentation to reduce annotation requirements. Other works leverage oscillatory sonar scanning for 3D reconstruction to support obstacle avoidance in complex environments [25].

Environment perception is tightly coupled with collision avoidance and path planning, and many studies address these tasks simultaneously, for example, by combining sonar-based detection with avoidance or planning frameworks [23, 25, 26, 27].

2.2. Simultaneous Localization and Mapping for UUVs

This section reviews recent advances in deep learning (DL)-based underwater SLAM that address challenges encountered during underwater navigation. Conventional SLAM techniques often show limited performance in such conditions [28]. Recent surveys highlight key challenges in underwater SLAM, such as sensing degradation, illumination changes, and the need for multi-sensor integration [28]. For UUV-specific visual navigation and positioning, Qin et al. [6] provide a comprehensive survey discussing SLAM-related pipelines and sensing configurations for underwater environments.

According to the survey, there is no single dominant or widely used machine learning method for UUV SLAM; however, deep learning-based methods appear to be the most prominent ML family discussed, most often in combination with adaptive multi-sensor fusion and Kalman-filter-based localization frameworks [22]. Image recognition methods such as CNNs and YOLO are used in UUV SLAM primarily as front-end perception modules for feature extraction, object detection, and segmentation. However, in the current literature, they are more often applied to support specific SLAM components rather than serving as the basis of a complete end-to-end UUV SLAM framework.

2.3. Collision Avoidance and Path Planning for UUVs

Recent works increasingly apply ML, especially deep reinforcement learning (DRL), to local collision avoidance and real-time path planning for UUVs operating in cluttered and uncertain underwater environments. For example, Gao et al. [26] proposed a PPO–DWA planner that combines the Proximal Policy Optimization algorithm with a modified Dynamic Window Approach to satisfy kinematic constraints while using forward-looking sonar observations. A related approach based on the A3C algorithm was presented in [29], while in [30] the authors proposed Deep Deterministic Policy Gradient (DDPG) for collision avoidance tasks.
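To make the Dynamic Window Approach mentioned above more concrete, the following is a minimal sketch of a plain, textbook-style DWA step for a planar unicycle model. It is not the modified DWA of [26], and it does not show how PPO is coupled to it. All function names, cost weights, and default limits are assumptions chosen for illustration.

```python
import math


def dynamic_window(v, w, dt, v_max, w_max, a_max, alpha_max):
    """Return (v_lo, v_hi, w_lo, w_hi) reachable within one step of length dt."""
    return (
        max(0.0, v - a_max * dt),
        min(v_max, v + a_max * dt),
        max(-w_max, w - alpha_max * dt),
        min(w_max, w + alpha_max * dt),
    )


def rollout(x, y, yaw, v, w, dt, horizon):
    """Forward-simulate a unicycle model under constant (v, w)."""
    trajectory = []
    for _ in range(int(round(horizon / dt))):
        yaw += w * dt
        x += v * math.cos(yaw) * dt
        y += v * math.sin(yaw) * dt
        trajectory.append((x, y))
    return trajectory


def dwa_select(pose, v, w, goal, obstacles, safety_radius=0.5, samples=7,
               dt=0.1, horizon=2.0, v_max=1.0, w_max=1.0,
               a_max=1.0, alpha_max=1.0):
    """Pick the collision-free (v, w) with the lowest cost, or None if all collide."""
    v_lo, v_hi, w_lo, w_hi = dynamic_window(v, w, dt, v_max, w_max, a_max, alpha_max)
    best, best_cost = None, math.inf
    for i in range(samples):
        cand_v = v_lo + (v_hi - v_lo) * i / (samples - 1)
        for j in range(samples):
            cand_w = w_lo + (w_hi - w_lo) * j / (samples - 1)
            traj = rollout(*pose, cand_v, cand_w, dt, horizon)
            # Smallest distance between any trajectory point and any obstacle.
            clearance = min(
                (math.hypot(px - ox, py - oy)
                 for px, py in traj for ox, oy in obstacles),
                default=math.inf,
            )
            if clearance < safety_radius:
                continue  # trajectory enters an obstacle's safety zone
            end_x, end_y = traj[-1]
            cost = math.hypot(goal[0] - end_x, goal[1] - end_y)  # goal progress
            cost += 0.1 / clearance  # prefer larger clearance (illustrative weight)
            cost -= 0.05 * cand_v    # prefer faster motion (illustrative weight)
            if cost < best_cost:
                best, best_cost = (cand_v, cand_w), cost
    return best
```

The window restricts candidates to velocities reachable under the acceleration limits, which is how kinematic constraints enter the search. A fuller implementation would also reject velocities from which the vehicle cannot stop before reaching an obstacle.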

Learning in unknown environments is also a challenging task but can be effectively addressed through the integration of the Dubins Improved Hybrid A* (DIHA*) algorithm and the Fuzzy Heading Avoidance (FHA) algorithm. ML has also been applied to AUV obstacle avoidance using event-triggered reinforcement learning [31] and the Elephant Clan Update Optimization algorithm [32].

Figure 5 and Table 2 summarize recent collision avoidance and path planning methods for UUVs/AUVs.

2.4. Motion Control of UUVs

Motion control of UUVs refers to the set of algorithms that enable the vehicle to follow desired motions, i.e., regulate its position, velocity, and attitude by commanding actuators (thrusters, control fins, and ballast systems), while accounting for nonlinear hydrodynamics and environmental disturbances.

In practice, it includes tasks such as heading, depth, and attitude control, station keeping, and trajectory/path tracking, typically covering full six-degree-of-freedom (6-DOF) motion: surge, sway, heave, roll, pitch, and yaw.
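The six degrees of freedom listed above are often written in a compact vector form. The notation below follows a convention that is common in the marine control literature. It is not taken from the surveyed papers, and the symbols are assumptions introduced here for illustration.

```latex
% Pose in an Earth-fixed frame and velocities in the body-fixed frame
\boldsymbol{\eta} = [x,\; y,\; z,\; \phi,\; \theta,\; \psi]^{\top}, \qquad
\boldsymbol{\nu}  = [u,\; v,\; w,\; p,\; q,\; r]^{\top}

% Kinematics and dynamics
\dot{\boldsymbol{\eta}} = \mathbf{J}(\boldsymbol{\eta})\,\boldsymbol{\nu}, \qquad
\mathbf{M}\dot{\boldsymbol{\nu}} + \mathbf{C}(\boldsymbol{\nu})\boldsymbol{\nu}
 + \mathbf{D}(\boldsymbol{\nu})\boldsymbol{\nu} + \mathbf{g}(\boldsymbol{\eta})
 = \boldsymbol{\tau} + \boldsymbol{\tau}_{\mathrm{env}}
```

Here $u, v, w$ are the surge, sway, and heave velocities and $p, q, r$ are the roll, pitch, and yaw rates. $\mathbf{M}$ is the inertia matrix including added mass, $\mathbf{C}$ collects the Coriolis and centripetal terms, $\mathbf{D}$ is hydrodynamic damping, $\mathbf{g}$ contains the restoring forces, $\boldsymbol{\tau}$ is the actuator input, and $\boldsymbol{\tau}_{\mathrm{env}}$ represents environmental disturbances.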

Motion control is closely linked to collision avoidance and path planning, which is why many studies overlap between Section 2.3 and Section 2.4.

Additional papers on machine learning-based motion control for UUVs are presented in Figure 6 and Table 3. They include solutions based on advanced algorithms such as Multi-Agent Proximal Policy Optimization (MAPPO) and Weighted Generative Adversarial Imitation Learning (WGAIL), which can mitigate reward-design challenges in DRL-based controllers, as well as reinforcement learning methods enhanced with large language models (LLMs).

3. Machine Learning for Unmanned Surface Vehicles

Unmanned surface vehicles (USVs) can be regarded as autonomous marine robots. They are used for various marine tasks, such as environmental monitoring, harbor patrol, coastal and seabed mapping, offshore platform inspection, oceanographic and meteorological data collection, and search and rescue operations.

Figure 7 summarizes the results of a search in multiple publishers’ databases for papers on machine learning applied to USVs published between 2020 and 2026. The following search query was used: “unmanned surface vehicle” OR “USV” AND “machine learning”. The results of a bibliometric analysis related to USVs and ML are shown in Figure 8. The analysis was performed using VOSviewer [19], which enables the creation of cluster maps based on authors’ keywords extracted from papers published between 2020 and 2026. The data were obtained from bibliographic files exported from the Web of Science.

3.1. Environment Perception for USVs

Environment perception in USVs typically involves processing data from sensors such as cameras, LiDAR, radar, sonar, Global Navigation Satellite System (GNSS), and Inertial Measurement Units (IMUs). The aim of this task is to identify obstacles such as vessels and shorelines, as well as environmental conditions such as waves and visibility. These data are crucial for enabling safe and autonomous navigation of USVs and serve as input to algorithms performing functions such as collision avoidance, path planning, and autonomous decision-making. An example of a USV, HydroDron, with an environment perception system is shown in Figure 9. The paper [39] presents a survey of recent solutions and technologies applied to USVs, with special emphasis on learning-based methodologies and data-driven systems for guidance, navigation, and control.

Figure 10 and Table 4 present recent ML-based environment perception methods for USVs.

In [41], a dual-camera system applied on a USV was proposed for target detection and localization. In this approach, a convolutional neural network (CNN), YOLOv3, was used for target detection and extraction of regions of interest (ROIs). Feature extraction and matching were then performed within the ROIs instead of across the whole image. Targets were localized by applying the triangulation principle using matched points and calibrated camera parameters.
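The triangulation step can be illustrated with a minimal sketch for the simplest case: a rectified stereo pair under a pinhole camera model. The actual camera geometry and calibration procedure in [41] may differ. The function name and all parameters below are assumptions introduced for illustration.

```python
def triangulate_rectified(u_left, u_right, v, focal_px, baseline_m, cx, cy):
    """Recover a 3D point (in the left-camera frame) from one matched pixel pair.

    Assumes a rectified stereo pair: both cameras share the focal length
    focal_px (pixels) and principal point (cx, cy), and are separated by
    baseline_m (meters) along the image x-axis.
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("non-positive disparity: bad match or point at infinity")
    z = focal_px * baseline_m / disparity  # depth along the optical axis
    x = (u_left - cx) * z / focal_px       # lateral offset
    y = (v - cy) * z / focal_px            # vertical offset
    return x, y, z


# Example: a match inside a detected ROI, 20 px disparity, 0.5 m baseline.
point = triangulate_rectified(420.0, 400.0, 240.0, 800.0, 0.5, 320.0, 240.0)
```

Restricting feature matching to the detected ROIs, as described above, reduces the number of candidate pixel pairs that need to be passed to a routine like this one.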

The authors in [42] introduced an environment perception system for a USV based on sensor fusion of camera and LiDAR data. The system consists of five modules: a surface image denoising module (SIDM), a water reflection removal module (WRRM), a sea-sky detection module (SSDM), a surface target detection module (STDM), and an obstacle avoidance module (OAM). In the SSDM module, an SVM classifier was used to determine a hyperplane separating the sea and sky regions. In the STDM module, the YOLOv3 CNN was used for target detection, similarly to [41]. The environment perception system was tested in simulations and real-world experiments using the USV platform WAM-V-USV. Field tests were carried out on the Songhua River in China and in Hawaii, USA.

In [43], the authors also presented an approach based on camera data for target detection. A CNN was used in this approach to improve obstacle edge detection accuracy. It was integrated into a superpixel segmentation method called Simple Linear Iterative Clustering (SLIC). The method was validated in simulations using three publicly available maritime image datasets.

The authors in [44] proposed a cooperative USV–UAV system based on camera data for object detection and classification, as well as semantic segmentation of sea and air regions. The system utilized CNNs, specifically the YOLOX and PIDNet models. The approach was evaluated in field tests carried out in the Huanghai Sea near Yancheng, China, using a cooperative USV–UAV platform consisting of an unmanned catamaran and a quadrotor.

In [45], the authors introduced a visual perception method utilizing a lightweight CNN and the USV’s real-time heading angle. The model inputs include local environmental images and heading angle features.

In [46], the authors proposed a semantic segmentation method to classify image elements into three categories: navigable water surfaces, waterborne obstacles, and background regions. The authors developed a model called MarineSeg, composed of a CNN–Transformer encoder with a voting-based decoder.

The analysis of recent literature on ML-based environment perception for USVs indicates that most methods are based on camera data and the application of CNNs for target detection [41, 42, 43]. These deep learning techniques have significantly improved perception accuracy and robustness in complex and dynamic marine environments.

CNNs are commonly used for image-based tasks, including those involving USVs, due to their ability to effectively handle complex visual variability such as waves, reflections, and lighting conditions. Besides object detection, another task commonly addressed using ML-based visual perception is semantic segmentation of sea and sky regions [42, 44, 46]. These methods are typically evaluated in simulations, although in some cases they are also tested using real USV platforms [41, 42, 44].

Environment perception for USVs using machine learning methods remains an open research area. LiDAR- and radar-based perception using clustering algorithms, as well as point cloud processing using deep learning, are still under active development. Similarly, sensor fusion models that combine data from cameras, radar, and LiDAR using neural networks are also an ongoing area of research.

3.2. Simultaneous Localization and Mapping for USVs

SLAM for USVs is an important technology that enables marine vehicles to autonomously navigate and map unknown maritime environments. The goal of SLAM in USVs is to build a real-time map of the surroundings while simultaneously estimating the vehicle’s precise position within that map. Typical sensors used for this task include LiDAR, sonar, Global Positioning System (GPS), and cameras.

Machine learning methods can be integrated into SLAM algorithms for USVs to enhance perception, mapping accuracy, and adaptability in complex maritime environments. In particular, deep learning can improve feature extraction from sensor data, enabling more robust identification of landmarks and environmental structures.

However, an analysis of recent literature on SLAM for USVs reveals that the application of machine learning to this task remains limited. Conventional SLAM techniques, such as the Voxel Generalized Iterative Closest Point (VGICP) algorithm for LiDAR-based SLAM, are still actively developed and have been proposed in recent studies, e.g., in [47].

The paper [48] presents a survey of small object detection (SOD) methods developed for various types of autonomous vehicles, including USVs, and also considers ML-based approaches. SOD techniques can be integrated into SLAM systems to improve mapping and navigation accuracy.

A survey of collaborative SLAM methods for different types of unmanned vehicles, such as UAVs, UGVs, USVs, and UUVs, is presented in [2], considering various sensor modalities, including wireless, vision, and LiDAR. The study also addresses the use of deep learning techniques.

An overview of sensors used in autonomous vehicles, including cameras, LiDAR, radar, ultrasonic sensors, GPS/GNSS, IMU/Inertial Navigation System (INS), odometry sensors, and acoustic systems, is presented in [1].

In [49], the authors proposed a multi-sensor fusion approach for environmental perception during berthing navigation, which was tested on a USV in Lingshui Bay, Dalian, China. In [50], a LiDAR-based real-time moving object detection method for USVs was introduced. The approach was validated through experiments using a USV platform.

Many studies focus on individual components applicable to SLAM in USVs rather than providing complete end-to-end SLAM solutions. For instance, [51] presents a review of datasets and deep learning techniques for vision systems in USVs. In [52], the authors describe a visual SLAM approach in which the deep learning model YOLOv8n-seg is used to detect dynamic objects.

SLAM methods for USVs must be robust to external environmental factors that can cause distortion in point clouds. LiDAR-based SLAM approaches are also affected by poor laser reflectivity from water surfaces, resulting in sparse or missing point clouds and a lack of reliable features [47].

3.3. Collision Avoidance and Path Planning for USVs

In USVs, similarly to robot path planning, approaches can be classified into global (offline) path planning and local (online) path planning, the latter also referred to as collision avoidance. Global path planning is typically understood as the process of generating an optimal route that avoids known hazards such as shorelines, shallow waters, restricted zones, and high-risk traffic areas, often using nautical charts and environmental forecasts. Local path planning algorithms usually generate paths during navigation to handle dynamic and uncertain situations, such as encounters with manned vessels, other USVs, or unexpected obstacles detected by onboard sensors. This group of methods is also referred to as collision avoidance, as they focus on short-term maneuvers (e.g., adjusting speed or heading) to maintain safe separation and comply with maritime regulations (International Regulations for Preventing Collisions at Sea—COLREGs).

Figure 11 and Table 5 present recent collision avoidance and path planning methods for USVs. The analysis of this comparison indicates that most methods are based on different types of deep reinforcement learning (DRL), such as Deep Deterministic Policy Gradient (DDPG) and Proximal Policy Optimization (PPO). The second most represented group consists of approaches based on reinforcement learning (RL), such as Inverse Reinforcement Learning (IRL) and Multi-Agent Reinforcement Learning (MARL).

The COLREGs implementation was typically included in the reward function. In [54], an appropriate shape and size of the ship domain was applied to enforce COLREGs compliance of the solutions. All approaches were evaluated in simulations, and most algorithms were tested using scenarios with a limited number of target ships (TSs). The authors in [58, 60] used encounter situations defined in the Imazu problem.
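As a rough illustration of how COLREGs compliance can enter a reward function, the sketch below combines a goal-progress term, a penalty for entering a circular safety zone around a target ship, and a bonus or penalty for the maneuver direction in a give-way situation. It does not reproduce the reward of any surveyed paper. The term structure, the weights, and the simplification that a starboard turn is the compliant maneuver are all assumptions.

```python
def colregs_reward(prev_goal_dist, goal_dist, target_dist, safe_radius,
                   in_give_way_situation, turned_starboard,
                   w_goal=1.0, w_collision=10.0, w_rule=2.0):
    """Scalar step reward for a USV agent (illustrative weights only).

    prev_goal_dist, goal_dist : distance to the goal before and after the step
    target_dist               : current distance to the nearest target ship
    safe_radius               : radius of a circular safety zone (a simple
                                stand-in for a ship domain)
    in_give_way_situation     : True if own ship is the give-way vessel
    turned_starboard          : True if the last action altered course to starboard
    """
    # Reward progress toward the goal.
    reward = w_goal * (prev_goal_dist - goal_dist)

    # Penalize intrusion into the safety zone, growing as the distance shrinks.
    if target_dist < safe_radius:
        reward -= w_collision * (safe_radius - target_dist) / safe_radius

    # Simplified rule term: favor starboard alterations when giving way.
    if in_give_way_situation:
        reward += w_rule if turned_starboard else -w_rule

    return reward
```

A term of this kind only encourages rule-compliant behavior during training and does not guarantee it, which is consistent with the safety and compliance challenges listed in this section.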

The key challenges in the application of machine learning methods to collision avoidance and path planning for USVs can be summarized as follows:

ML-based models may not guarantee safety; therefore, they should be combined with explicit safety constraints;

ML-based models may not guarantee COLREGs-compliant solutions;

limited training datasets;

multiple-vessel interaction scenarios;

reward function design;

explainability requirements.

3.4. Motion Control of USVs

Motion control methods for an unmanned surface vehicle are used to ensure that the vessel follows a predefined path or a sequence of waypoints. Various advanced control strategies, such as PID control, model predictive control, and adaptive control, are employed to accomplish this task while accounting for environmental disturbances such as waves, wind, and currents. Motion control tasks for USVs can be divided into several categories, including station keeping, trajectory tracking (TT), path following (PF), waypoint navigation, dynamic positioning, and formation control.
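As a baseline for the learning-based controllers discussed in this section, the following minimal sketch shows a discrete-time PID heading controller of the kind mentioned above. The class name, the gains, and the interpretation of the output as a yaw-moment command are assumptions for illustration. Practical implementations usually add output saturation and integrator anti-windup.

```python
import math


def wrap_angle(angle):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (angle + math.pi) % (2.0 * math.pi) - math.pi


class HeadingPID:
    """Discrete PID controller acting on the wrapped heading error."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None  # no derivative term on the first call

    def step(self, desired_heading, measured_heading, dt):
        """Return a control command (e.g., a yaw moment) for one time step."""
        error = wrap_angle(desired_heading - measured_heading)
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = wrap_angle(error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


controller = HeadingPID(kp=2.0, ki=0.0, kd=0.0)
command = controller.step(desired_heading=0.1, measured_heading=-0.1, dt=0.1)
```

Wrapping the error keeps the controller from commanding a long turn when the desired and measured headings lie on opposite sides of the ±180° discontinuity.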

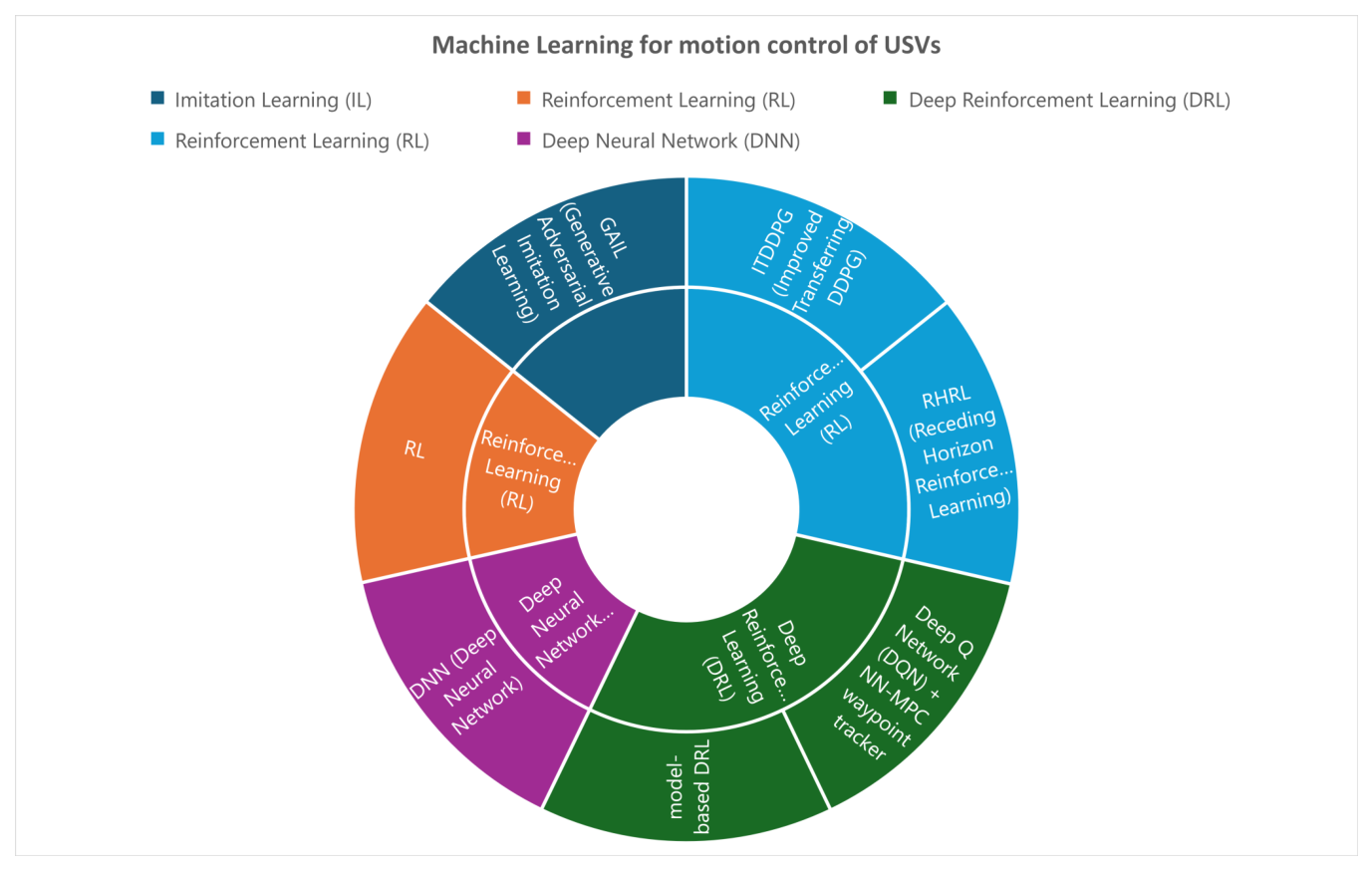

Figure 12 and Table 6 present recent machine learning-based motion control methods for USVs.

In [64], the authors proposed an approach based on Generative Adversarial Imitation Learning (GAIL) for steering a USV toward a goal position while avoiding obstacles. In this method, the reward function is derived from a set of demonstrations performed by a human expert.

A reinforcement learning-based control method for trajectory tracking of fully actuated surface vessels was introduced in [65]. The method’s efficiency was evaluated in simulations and sea trials using the ReVolt unmanned surface vehicle. Three different tracking tasks were considered: the four-corner DP test, straight-path tracking, and curved-path tracking.

The use of model-based deep reinforcement learning for motion control of an underactuated unmanned surface vehicle was presented in [66]. In this approach, a data-driven prediction model based on a deep neural network was developed using recorded input and output data. The stochastic gradient descent (SGD) method was used for training the neural network. Based on the learned prediction model, a model predictive control strategy was then applied for the USV’s path-following and trajectory-tracking tasks. The method was tested in simulations.

In [67], the authors applied the Deep Q-Network (DQN) along with Neural Network–Model Predictive Control (NN-MPC) for waypoint tracking. In this approach, initial training was carried out on the Turtlebot UGV, and afterward, the policy developed in the ground domain was transferred to the water domain and further trained using a USV. The method was validated in both simulations and field tests.

In [68], the authors proposed a receding-horizon reinforcement learning-based (RHRL) control method for the trajectory tracking task of a USV. The method was tested in simulations and compared with Lyapunov-based MPC (LMPC) and sliding mode control (SMC). The authors reported the following advantages of their approach: computational efficiency, lower sample complexity, and higher learning efficiency.

The authors in [69] introduced an Improved Transferring Deep Deterministic Policy Gradient (ITDDPG)-based path-following controller for a USV. The method was tested in both simulations and experiments. The authors concluded that their approach is characterized by the following advantages: faster convergence speed, superior tracking performance, and stronger robustness and generalization ability compared with traditional DDPG methods.

In [70], the authors proposed a DNN-based controller for real-time optimal USV control. The method was validated in simulations.

A survey focused on motion control methods for USVs, including literature prior to 2020, can be found in [71]. It covers tasks such as target tracking, trajectory tracking, path following, and cooperative formation control of USVs, as well as methods based on neural networks, fuzzy logic, reinforcement learning, and Adaptive Dynamic Programming (ADP).

Most recent machine learning-based control methods for USVs focus on trajectory tracking and path-following tasks and apply reinforcement learning [65, 68, 69] or deep reinforcement learning [66] methods. In some cases, hybrid approaches are used, such as in [67], where the authors combined DQN with NN-MPC. Most methods are evaluated in simulations, but some also include real-world experiments using a USV platform.

4. Machine Learning for Unmanned Aerial Vehicles

The main classification of flying drones is based on their size. This scale includes categories such as unmanned aerial vehicles (UAVs), micro air vehicles (MAVs), nano air vehicles (NAVs), pico air vehicles (PAVs), and smart dust (SD) [72]. Most of these platforms cannot use machine learning due to their very small size and the lack of space for appropriate sensors and computing units required for data processing and analysis. The types of flying drones capable of using ML are primarily UAVs and MAVs; however, UAVs are the most commonly used category due to the widespread adoption of drones in both civil and military applications [73]. An example of a UAV, eBee X, is shown in Figure 13.

Because of their wide range of applications and easy access to the environment, UAVs can be used for environmental perception, SLAM, collision avoidance, path planning, and motion control. The bird’s-eye view enables exploration of large areas, and the relatively low density of obstacles at certain altitudes facilitates measurements. The main limitations are the weight of the drone, which restricts the amount of equipment and sensors that can be carried, and the need for machine learning to optimize UAV performance.

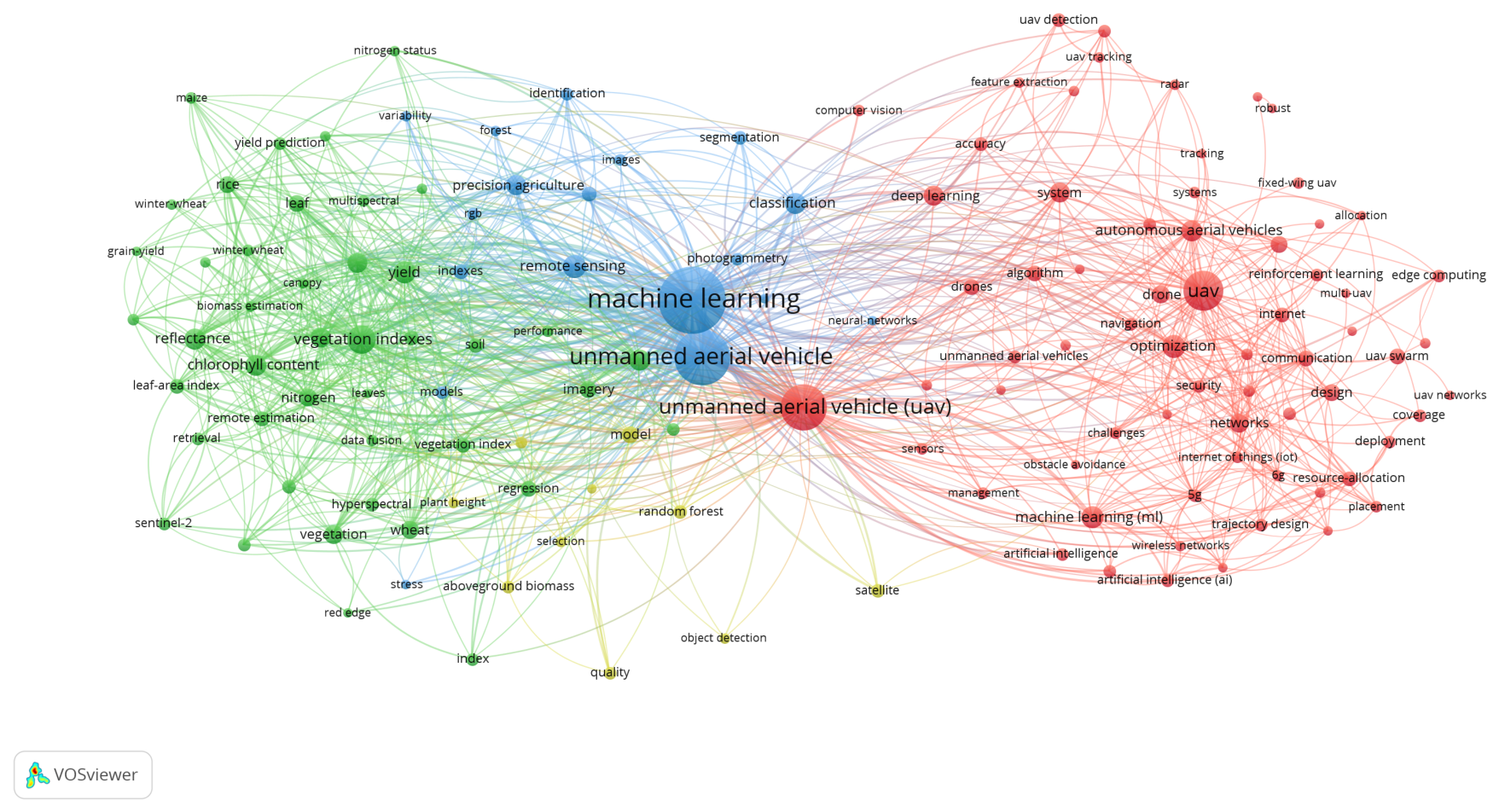

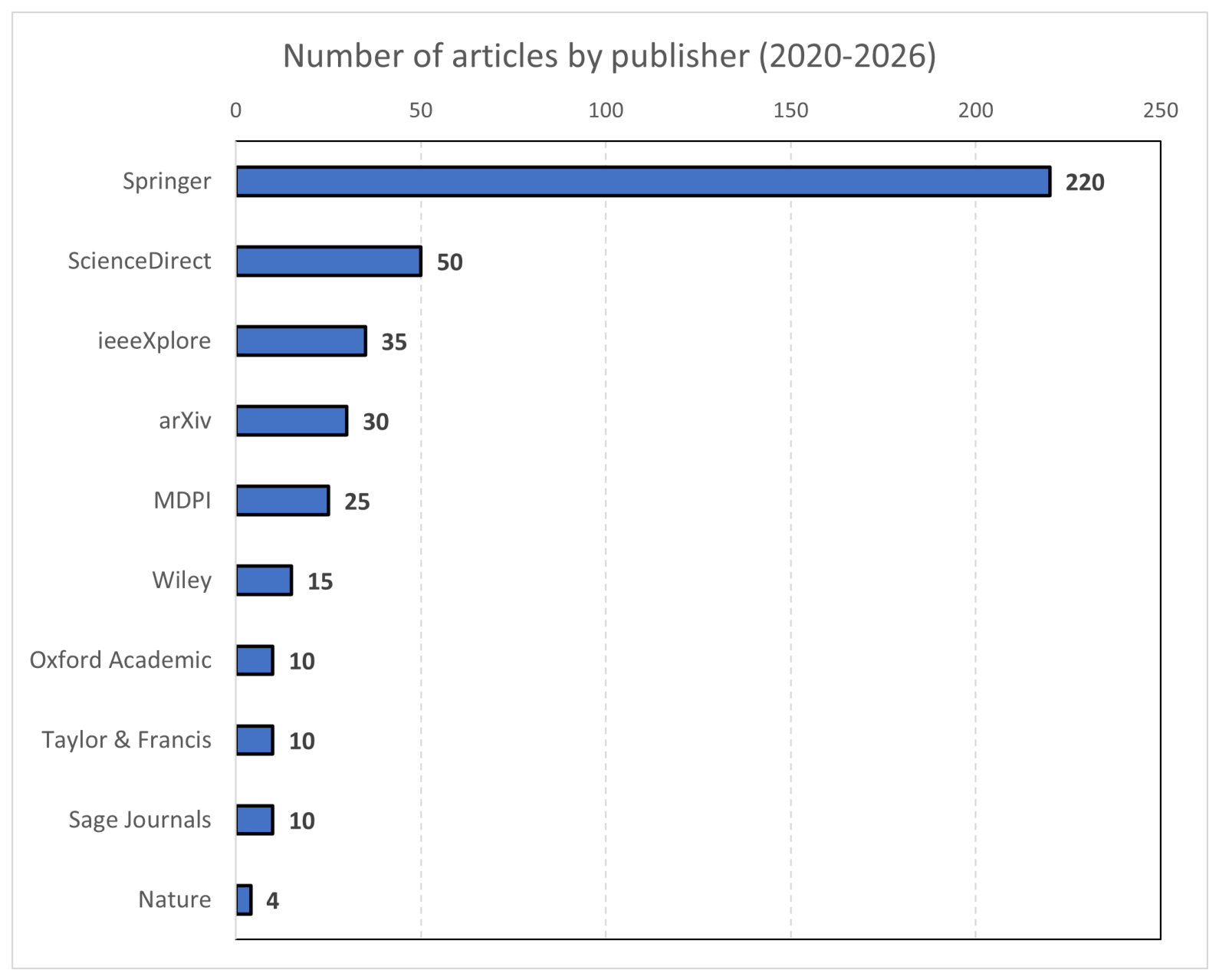

Figure 14 summarizes the results of a search in multiple publishers’ databases for papers on machine learning applied to UAVs published between 2020 and 2026. The following search query was used: “unmanned aerial vehicle” AND “machine learning”.

The results of a bibliometric analysis related to UAVs and machine learning are shown in Figure 15. The analysis was performed using VOSviewer [19], which enables the creation of cluster maps based on authors’ keywords extracted from papers published between 2020 and 2026. The data were obtained from bibliographic files exported from the Web of Science.

4.1. Environment Perception for UAVs

This section provides an overview of environmental perception, which uses machine learning to process raw spatial data. Interpreting this data is crucial, as it enables its use in other aspects of UAV operations. Environmental perception relies on a variety of sensors, including LiDAR, RGB cameras, radar, and ultrasonic sensors. Due to the diverse nature of the environmental data, machine learning is often used for its processing. Since the data formats vary widely and cover a broad range of applications, different algorithms and models are employed.

Detecting and recognizing objects in real time in military applications using RGB camera images is a challenging task that requires appropriate solutions. The approach proposed by the authors in [75] involves the use of the YOLOv3 algorithm, which belongs to the class of convolutional neural networks. This type of CNN enables fast real-time image processing because each frame is analyzed in a single forward pass of the network. Thanks to this design, the model’s processing time is reduced, allowing rapidly changing environmental data to be continuously analyzed. Given this real-time processing capability, a smartphone was used as a device for displaying the analysis results. A similar approach, this time applied to environmental perception for navigation, can be found in another study, which also utilizes the YOLOv3 machine learning algorithm [76].

Another issue is the processing of point clouds obtained via LiDAR. Compared with 2D images from RGB cameras, LiDAR data form a 3D point cloud, which requires a different approach. In the paper [77], the authors addressed the problem of segmenting and classifying the obtained data. The ITCD (Individual Tree Crown Delineation) algorithm, which belongs to the supervised learning category, was used for data segmentation. In subsequent work, CNNs were used for data classification.

The authors of the paper [78] addressed a similar problem. They demonstrated the use of the HDBSCAN (Hierarchical Density-Based Spatial Clustering of Applications with Noise) algorithm, which is an unsupervised learning method. This algorithm groups clusters of varying density, enabling efficient segmentation of unknown data.

The authors of the study [79] described their work on fused data from an RGB camera and LiDAR. Two CNN-based architectures (U-Net and DeepLabv3) were used for data classification. The aim was to compare the effectiveness of these algorithms with RF (Random Forest) and SVM (Support Vector Machine) methods. The study demonstrated that advanced neural networks are more effective when dealing with complex data.

The authors of another article [80] also addressed the problem of classifying fused LiDAR and RGB camera data. They reduced the dataset by considering only data points located above a certain height. As a result, they used an SVM algorithm and showed that it performs more effectively in data classification than a decision tree (DT), which is faster but less suitable for complex data.
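The height-based data reduction described above can be sketched in a few lines. The example assumes that each point is an (x, y, z) tuple whose z value is already expressed as height above ground. The threshold value and the function name are illustrative and are not taken from [80].

```python
def filter_above_height(points, min_height):
    """Keep only points whose height (z, assumed to be above ground) exceeds min_height."""
    return [point for point in points if point[2] > min_height]


# Hypothetical point cloud: (x, y, z) in meters.
cloud = [(0.0, 0.0, 0.2), (1.0, 2.0, 3.5), (4.0, 1.0, 1.9), (2.0, 2.0, 7.1)]
reduced = filter_above_height(cloud, min_height=2.0)
```

Discarding near-ground returns in this way shrinks the dataset before classification, which reduces the training and inference cost of the downstream classifier.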

Fused data from LiDAR and hyperspectral imaging require a complex processing approach. The authors of [81] conducted a series of simulations to identify a suitable algorithm for this problem. The Gradient Boosting Machine (GBM) proved to be the best of the tested methods, enabling effective feature extraction from complex data. The study demonstrated that supervised learning performs well with fused data.

Figure 16 and Table 7 present the latest machine learning-based environment perception methods for UAVs. It can be observed that all analyzed studies are based on real-world experiments, which reflects the complexity of the environments in which UAVs operate. Due to the large number of obstacles in three-dimensional space, simulation alone is often insufficient.

The main methods used for environmental perception are deep learning and supervised learning. These approaches are commonly employed because environmental perception is closely related to object detection and classification tasks, for which they are particularly effective. For the detection and classification of visual data, such as camera images, deep learning with CNN models is most commonly used [75, 76, 79]. In the case of classifying data not derived from cameras but from other sensors, supervised learning is more commonly used [77, 80, 81]. This is due to the need to define human-labeled classes in order to effectively analyze the environment.

4.2. Simultaneous Localization and Mapping for UAVs

This section discusses the use of machine learning in localization and mapping systems. For UAVs, localization and mapping present significant challenges due to the three-dimensional nature of the environment. Linear algorithms are insufficient; therefore, machine learning is used to enhance the navigation capabilities of UAVs.

Machine learning-based navigation often relies on different data than traditional navigation methods. The authors of [82] used camera-based (vision-based) data. Based on this type of data, reinforcement learning was applied using the Deep Q-Network (DQN) algorithm. This approach enables autonomous navigation in dynamic environments. The results of the algorithm can be used in later stages for collision avoidance and motion control.

The authors of [83] also used camera data for UAV navigation. The difference lies in the algorithm used: a CNN (specifically, the ResNet-18 model implemented in Python) was applied for navigation and terrain mapping. Visual and geometric features were extracted using convolutional layers, and the map can be dynamically updated during flight.

Another challenge is navigating enclosed spaces such as buildings and underground corridors. In such cases, machine learning can be used to assist with terrain mapping. The authors of [84] used a CNN (the ENet model) to extract human silhouettes from the data. This ensures that not all detected elements are treated as part of the terrain, which simplifies the mapping process.

Mapping a selected area using additional parameters posed a challenge for the authors of [85]. The goal was to map groundwater levels and soil moisture. Both tasks were performed using a Random Forest (RF) model, which predicts groundwater levels and soil moisture over large areas without the need for field measurements and thereby supports environmental monitoring and mapping.

Detecting specific objects during terrain mapping enables faster responses from UAVs. The authors of [86] described such a detection method. Machine learning was used to detect obstacles and determine their positions. A CNN-based algorithm (YOLO model) was used for this purpose. Detecting objects as obstacles enabled the subsequent application of collision-avoidance algorithms. This indicates that mapping and localization, when supported by machine learning, are crucial for UAV operations.

Another approach is mapless navigation, which uses LiDAR data without real-time mapping; positioning is performed dynamically instead. This approach was explored by the authors of [87]. Rather than generating a terrain map, the obtained data are used to react immediately to obstacles using two reinforcement learning models: TD3 (Twin Delayed Deep Deterministic Policy Gradient) and SAC (Soft Actor–Critic). The task of these models is to detect objects and determine their distance and angle relative to the UAV. With this information, the models can be used for collision avoidance by outputting flight speed and direction.

The next example of navigation involves using an RGB camera to locate objects in front of a UAV. The authors of [88] investigated the use of a CNN (the Faster R-CNN model) to detect objects and determine whether an obstacle is too close (a critical obstacle). A forest was used for testing, where trees served as obstacles. These tests provided realistic results regarding how accurately the CNN can recognize and localize obstacles.

Images can also be used to detect moving objects such as people, cars, and animals, which is essential for navigation in real-world conditions. The authors of [89] investigated a solution to this problem in dynamic environments. They used a Deep Q-Network, a type of reinforcement learning model. The distance to the target was used as the reward, where shorter distances yielded higher rewards. Conversely, the distance to detected objects was used as a penalty, where shorter distances resulted in higher penalties.
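
The reward structure described above can be illustrated with a minimal sketch. The function name, the safety radius, and the linear penalty shape are assumptions made for illustration only; the exact reward used in [89] is not reproduced here.

```python
import math


def shaped_reward(uav, target, obstacles, safe_radius=5.0, penalty_scale=1.0):
    """Hypothetical reward: higher when the UAV is closer to the target,
    lower when it is closer to any detected obstacle."""
    # Reward term: negative distance, so a shorter distance gives a higher reward.
    reward = -math.dist(uav, target)
    # Penalty term: grows linearly as an obstacle gets closer than safe_radius.
    for obstacle in obstacles:
        distance = math.dist(uav, obstacle)
        if distance < safe_radius:
            reward -= penalty_scale * (safe_radius - distance)
    return reward


# Same target distance, but the second call has a much closer obstacle.
r_far_obstacle = shaped_reward((0, 0, 0), (1, 0, 0), [(4, 0, 0)])
r_near_obstacle = shaped_reward((0, 0, 0), (1, 0, 0), [(1, 0, 0)])
```

In a DQN setting, a scalar of this kind would be returned by the environment at every step and used in the temporal-difference target.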

Figure 17 and Table 8 present the latest machine learning-based SLAM methods for UAVs. It is important to note that deep learning using CNNs dominates the field of UAV localization [83,84,86,88]. Due to the complexity of the environment, the use of cameras as a source of visual information is essential for SLAM systems to function properly. Camera images enable effective object localization through CNN-based detection. The mapping problem was addressed using supervised learning, with a Random Forest model predicting groundwater levels and soil moisture over the mapped area [85].

4.3. Collision Avoidance and Path Planning for UAVs

The previous sections summarized research on environmental perception and navigation. Many of the findings from this research have contributed to collision avoidance through object detection and localization. This section discusses research focused on collision avoidance and path planning for UAVs.

One machine learning approach is reinforcement learning, as proposed by the authors of [90]. Three algorithms were compared: Proximal Policy Optimization (PPO), Advantage Actor–Critic (A2C), and Soft Actor–Critic (SAC). The study addressed collision avoidance for a flying UAV using LiDAR data and was divided into two main stages with varying levels of environmental complexity. The first stage included three obstacles: one dynamic and two static. The second stage additionally accounted for the presence of other UAVs performing the same task. The entire study was conducted in a simulated environment, and the results demonstrate the effectiveness of the aforementioned algorithms for collision avoidance. PPO exhibited greater stability and better performance, while A2C demonstrated significantly higher processing speed. The SAC algorithm proved to be the least effective among those tested for this specific problem.

The authors of [91] explored a different approach to collision avoidance. Instead of using LiDAR data, they utilized camera images processed by artificial neural networks. A combination of two neural networks, a convolutional neural network (CNN) and a recurrent neural network (RNN), was used to analyze video frames. This hybrid neural network enabled object detection and the subsequent determination of an evasive trajectory. The experiment was conducted under real-world conditions; however, the model was only able to avoid a single recognizable object.

An example of a collision avoidance study that included both simulation and real-world experiments is described in [92]. The authors employed deep reinforcement learning using the Deep Deterministic Policy Gradient (DDPG) algorithm. The algorithm processed incoming data, adjusted the UAV’s flight speed, and adapted its trajectory to avoid obstacles.

For the path planning task, the authors of [93] proposed a reinforcement learning approach based on the Q-learning algorithm. The main objective of the study was to plan flight trajectories while accounting for both static and dynamic obstacles. The authors compared the Q-learning algorithm with other methods and demonstrated that, despite requiring longer training time, Q-learning produces the shortest paths among the evaluated algorithms.

The authors of [94] investigated cooperative trajectory planning for multiple UAVs. The main aspects considered include coordination among multiple vehicles, collision avoidance, and synchronization of arrival times at the destination. Reinforcement learning was used for this purpose, employing the Proximal Policy Optimization algorithm, which enabled the simultaneous coordination of multiple UAVs.

A key limitation of earlier approaches was the poor scalability of centralized swarm control and the risk of collisions in complex scenarios. This limitation was addressed by using reinforcement learning instead of classical optimization methods.

The authors of [95] investigated UAV path planning in a large and dynamic environment. To address the research problem, which is characterized by a vast space, incomplete knowledge of the environment, and dynamic obstacles, they employed deep reinforcement learning using the DQN algorithm. The results demonstrated that deep reinforcement learning performs well in collision avoidance and path planning within a vast environment containing moving objects.

Path planning is often based not only on collision avoidance but also on other factors. The authors of [96] conducted a study on a multi-criteria problem that additionally considered fuel consumption and safety. Reinforcement learning using the Q-learning algorithm was employed to solve this problem. This algorithm, combined with metaheuristics, enabled the selection of an optimal UAV trajectory under complex environmental conditions and specific constraints.

The combination of the Q-learning algorithm with metaheuristics also made it possible to address more complex challenges, such as path planning and collision avoidance in environments with multiple constraints, as well as swarm control. The authors of [97] investigated this problem and showed that the same Q-learning-based approach also enabled the coordination of multiple UAVs.

Figure 18 and Table 9 present the latest machine learning-based collision avoidance and path planning methods for UAVs. It can be observed that reinforcement learning and deep reinforcement learning are the most commonly used machine learning approaches. Among all algorithms, Q-learning was the most frequently used [93,96,97]. This algorithm is particularly well suited for path planning based on three-dimensional environmental data. This is because Q-learning assigns values to possible actions and, based on appropriately selected parameters, selects the action with the highest value. All studies on collision avoidance and path planning required a simulation component due to the risk of UAV damage resulting from failure to avoid obstacles.
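
The way Q-learning assigns values to actions and then selects the highest-valued one can be shown with a minimal tabular sketch. The six-action set for a three-dimensional grid, the state encoding as grid coordinates, and the values of the learning rate and discount factor are illustrative assumptions rather than settings taken from [93,96,97].

```python
import random
from collections import defaultdict

# Hypothetical action set for a UAV moving on a 3D grid.
ACTIONS = ["north", "south", "east", "west", "up", "down"]


def greedy_action(q, state):
    """Select the action with the highest stored value for this state."""
    return max(ACTIONS, key=lambda action: q[(state, action)])


def epsilon_greedy(q, state, epsilon, rng=random):
    """Explore with probability epsilon, otherwise act greedily."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    return greedy_action(q, state)


def q_update(q, state, action, reward, next_state, alpha=0.1, gamma=0.95):
    """Standard tabular Q-learning update."""
    best_next = max(q[(next_state, a)] for a in ACTIONS)
    td_target = reward + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])


# One update: climbing from cell (0, 0, 0) to (0, 0, 1) earned a reward of 1.
q_table = defaultdict(float)
q_update(q_table, (0, 0, 0), "up", 1.0, (0, 0, 1))
best = greedy_action(q_table, (0, 0, 0))
```

The learning rate `alpha`, the discount factor `gamma`, and the exploration rate `epsilon` are the kind of parameters that have to be selected appropriately for the planner to converge.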

4.4. Motion Control of UAVs

Controlling UAV motion requires considering many factors and operating in three-dimensional space. Previous sections have discussed aspects such as path planning and mapping. Based on this information, the UAV must be controlled appropriately to ensure optimal performance. Today, machine learning is often used for autonomous UAV control.

An important task in motion control is position control. Due to numerous disturbances and nonlinear dynamics, UAV control is challenging. To address this, the authors of [98] replaced the classical PID controller with a neural network trained, via supervised learning, on data generated by the PID controller. This approach improved the accuracy and stability of UAV positioning.
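
The idea of learning a controller from PID-generated data can be sketched as follows. This is a deliberately simplified illustration: a single linear neuron stands in for the neural network of [98], and the gains, the sampling of the error terms, and the training settings are hypothetical.

```python
import random

# Hypothetical gains of the "teacher" PID controller.
KP, KI, KD = 1.2, 0.4, 0.05


def pid_output(error, integral, derivative):
    """Teacher signal: the control value a PID controller would produce."""
    return KP * error + KI * integral + KD * derivative


def make_dataset(n_samples, seed=0):
    """Generate (features, target) pairs labeled by the PID controller."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_samples):
        features = (rng.uniform(-1, 1), rng.uniform(-1, 1), rng.uniform(-1, 1))
        data.append((features, pid_output(*features)))
    return data


def train_linear_neuron(data, learning_rate=0.1, epochs=50):
    """Supervised training of one linear neuron with stochastic gradient descent."""
    weights = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for features, target in data:
            prediction = sum(w * x for w, x in zip(weights, features))
            residual = target - prediction
            weights = [w + learning_rate * residual * x
                       for w, x in zip(weights, features)]
    return weights


learned_weights = train_linear_neuron(make_dataset(200))
```

Because the teacher here is linear in its inputs, the learned weights approach the PID gains; a multilayer network, as in the cited study, would be needed to go beyond imitating the original controller.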

The authors of [99] also investigated replacing the classical PID controller but instead used reinforcement learning with the DDPG algorithm. In this case, rather than learning from PID-generated data, the model was trained using a reward-and-penalty mechanism based on executed actions, which enabled the development of optimal control policies independent of classical PID behavior.

The promising results of the DDPG algorithm led the researchers to further develop it in [100]. They proposed the Robust DDPG algorithm, an improved version of the original method. The enhanced version offers more stable learning, incorporates noise into the state space, and improves exploration of control strategies.

The authors of [101] focused on comparing UAV motion control capabilities using reinforcement learning and deep reinforcement learning. They used DQN as the algorithm under study, and the results clearly demonstrate the effectiveness of this type of machine learning. This highlights the complexity of UAV control, where many factors influence vehicle performance.

Many UAVs are based on quadrotor configurations. Controlling these systems, which are uncommon in other types of unmanned vehicles, presents an additional challenge in UAV motion control. The authors of [102] addressed this issue by proposing deep reinforcement learning using the PPO algorithm. This enabled optimal motor control and achieved better results than a classical PID controller.

Swarm control is a highly challenging task, which the authors of [103] investigated using deep reinforcement learning. They employed the SAC algorithm to control motion as effectively as possible while avoiding collisions. The study showed that the DDPG and PPO algorithms perform worse in controlling multiple UAVs simultaneously.

Figure 19 and Table 10 present the latest machine learning-based motion control methods for UAVs. As with collision avoidance and path planning, most of the research is based on simulations. This is mainly due to the risk of damaging UAVs in the event of a failure in controlling their movement. The most commonly used approach is reinforcement learning, employing various algorithms [99,100,101,102,103].

Based on the observed research results, it can be concluded that controlling a robot in three-dimensional space with a large number of obstacles is highly challenging, and there is still a need to transfer such research into experimental validation in real-world environments. Another important aspect is swarm control, in which a group of UAVs is required to synchronize their operations. This field requires further development and research.

5. Machine Learning for Mobile Robots

This section covers the application of machine learning to ground-based mobile robots, which move using wheels, tracks, or legged locomotion. This field involves the integration of sensor data processing, distance estimation between objects, and actuator control, such as wheel motors or track drives. During the literature review, the application of machine learning in mobile robots was examined across several scientific databases, as illustrated in Figure 20.

The results of a bibliometric analysis related to ground mobile robots and machine learning are shown in Figure 21. The analysis was performed using VOSviewer [19], which enables the creation of cluster maps based on authors’ keywords extracted from papers published between 2020 and 2026. The data were obtained from bibliographic files exported from the Web of Science.

Subsequent sections of this part of the paper cover the application of machine learning methods for environment perception, SLAM, path planning, obstacle avoidance, and motion control. An example of a UGV, the Husky A300 from Clearpath Robotics (Kitchener, ON, Canada), is shown in Figure 22.

5.1. Environment Perception for Mobile Robots

In mobile robotics, processing sensor data to improve environmental perception is a primary area of interest for researchers, as it directly supports subsequent stages such as navigation and speed control, which are essential in autonomous systems. The overview of environment perception is organized according to the following sensors: LiDAR (Light Detection and Ranging), GPS, radar, RGB cameras, RFID, and thermal sensors. All publications described in this section are shown in Figure 23 and Table 11.

The problem of environment perception can be considered in two areas. The first concerns environmental detection in navigation using laser-based solutions. The second, addressed by the authors of [105], involves unstructured environments such as construction sites. The authors propose a neural network that integrates stereovision data for robust semantic segmentation of obstacles, improving perception in diverse environments. This approach enables real-time processing of sensor data in changing scenarios in autonomous mobile systems.

As the authors of [106] note, the results may help in selecting effective design approaches for controlling robotic devices, applying machine learning methods for pattern recognition and classification, and using computer technologies for designing control systems and simulating robotic devices.

In [107], the authors demonstrate the use of an improved neural network, specifically an evolving self-organizing incremental neural network (ESOINN), for environmental perception in both internal and external environments of a walking robot.

The authors of [108] combined PIR and ultrasonic sensors on a mobile social robot whose task is to locate people in the environment. The sensor data are analyzed using two machine learning algorithms: a supervised method (decision tree) and an unsupervised method (K-means). The results show that the accuracy of detecting a person in the tested area was 70%.

A combination of sensors for capturing RGB images and depth information was presented in [109]. The study evaluated two CNN-based networks using these data to determine the angular velocity of the robot. The application of supervised learning using a support vector machine was also explored for vehicles operating in a networked, dynamic environment. The method proved effective under challenging environmental conditions [110]. Finally, by combining multiple sensors supported by neural networks on a mobile robot (TurtleBot3 Waffle, ROBOTIS Co., Ltd., Seoul, Republic of Korea), the authors of [111] created a set of scenarios to train the robot to detect glass as part of the environment map using sensor fusion.

Table 11.

Recent ML-based environment perception methods for mobile robots.

| Method | Authors | Year | Task | Sim./Exp. |
|---|---|---|---|---|
| SL, NN | Barreto-Cubero et al. [111] | 2022 | LiDAR LDS-01, ultrasonic sensors HC-SR04, camera RealSense D435i, TurtleBot3 Waffle | Real exp. |
| SL, NN | Kondratenko et al. [106] | 2022 | Software, mobile robots | Sim. |
| SL, ESOINN NN | Xu et al. [107] | 2023 | Depth cameras (D435i and T265), hexapod robot | Real exp. |
| USL and SL, K-means and DT | Ciuffreda et al. [108] | 2023 | SRF10 ultrasonic and HC-SR501 PIR sensors, social mobile robot | Real exp. |
| SL, NN | Ziegler et al. [105] | 2025 | RGB-D, autonomous machine systems | Sim. and Real exp. |
| SL, CNN | Zain et al. [109] | 2025 | Intel RealSense D415, LiDAR, mobile robot inspired by TurtleBot2 | Real exp. |
| SL, SVM | Cui et al. [110] | 2025 | Connected network, mobile robots | Sim. |

Figure 23 and Table 11 present the latest environment perception methods for mobile robots. The comparison shows that most methods are based on supervised learning (SL) and that neural networks are the most commonly used models. Mobile robots are equipped with numerous sensors, including cameras that generate large amounts of image data, which require efficient processing, often in real time. Neural networks meet these requirements because they enable automatic feature learning and effective processing of large datasets. Furthermore, they are characterized by an end-to-end approach in which input sensor data are directly converted into output decisions. This property is particularly important when integrating a wide variety of sensors, which is a key aspect of environmental perception in mobile robots. Consequently, neural networks are often the most effective choice for such tasks.

5.2. Simultaneous Localization and Mapping for Mobile Robots

SLAM for mobile robots is used to gradually create a coherent map of an unknown environment while simultaneously localizing the robot within that map. All publications described in this section are shown in Figure 24 and Table 12.

Interesting review papers include [112,113], in which the authors claim that DRL policies in mobile robot navigation do not always ensure optimal performance and that the efficiency of DRL-based navigation is subject to fluctuations.

The paper [10] presents an overview of research on mobile robots. The review covers nearly 80 articles on classical methods and machine learning. It was demonstrated that the applied techniques improve the navigation of mobile robots in complex indoor environments. However, DRL still appears to be the most promising approach for achieving breakthroughs in navigation capabilities, as demonstrated in several application scenarios, such as indoor navigation, local obstacle avoidance, multi-robot navigation, and social navigation.

A SLAM-related approach was presented in [114], which discusses the application of different deep learning techniques. Five DL methods were investigated in this paper: convolutional neural networks for feature extraction and semantic understanding, recurrent neural networks for modeling temporal relationships, deep reinforcement learning for developing exploration strategies, graph neural networks (GNNs) for modeling spatial relationships, and attention mechanisms (AM) for selectively processing information.

A review of recent literature shows that SLAM and visual SLAM (VSLAM) capabilities can be extended through integration with learning-based algorithms for mobile robots. It is shown that combining deep learning with SLAM and VSLAM represents an innovative approach for autonomous robotic systems.

The authors of [115,116] presented a solution for mapless navigation control based on deep learning techniques. The proposed method utilizes data from LiDAR (Light Detection and Ranging) sensors and is based on imitation learning. The experimental results show that the mobile robot navigates safely in four unknown environments with an average success rate of 75%. The study was conducted for three simulation scenarios and one real-world scenario using reinforcement learning.

As the authors of [117] mention, both the robot’s trajectory and the map were estimated online in their approach, without the need for prior knowledge of the environment. The study focused on incorporating two types of deep learning agents: Deep Q-Networks and Double Deep Q-Networks. The mobile robot was trained to avoid collisions and navigate in an unknown environment.
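
The difference between the two agent types lies in how the learning target is computed, which can be summarized in a short sketch. The function names and the use of plain lists of action values are illustrative assumptions; the network architectures and hyperparameters of [117] are not reproduced.

```python
def dqn_target(reward, next_q_target, gamma=0.99, done=False):
    """DQN: the target network both selects and evaluates the next action."""
    if done:
        return reward
    return reward + gamma * max(next_q_target)


def double_dqn_target(reward, next_q_online, next_q_target, gamma=0.99, done=False):
    """Double DQN: the online network selects the next action,
    and the target network evaluates it, which reduces overestimation."""
    if done:
        return reward
    best_action = max(range(len(next_q_online)), key=next_q_online.__getitem__)
    return reward + gamma * next_q_target[best_action]


# Hypothetical action values for the next state (three discrete actions).
online_values = [1.0, 2.0, 0.5]
target_values = [3.0, 1.5, 0.2]

y_dqn = dqn_target(1.0, target_values, gamma=0.9)
y_ddqn = double_dqn_target(1.0, online_values, target_values, gamma=0.9)
```

In this example the Double DQN target is lower because the action preferred by the online network is not the one the target network values most.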

In [118], the authors proposed a navigation method for a mobile robot to improve its adaptability to the environment. In this approach, a reinforcement learning method was applied to control the robot. The authors used the DDPG algorithm to train the neural network and verified that this method performs better than approaches utilizing vision and laser sensors, improving the navigation success rate by 10%.

In [119], the authors proposed a random forest method for location recognition and navigation. The focus was on location recognition based on 3D point clouds, and the random forest model was trained using feature vectors. Numerous experiments were conducted on the KITTI dataset and in the outdoor campus environment of Southeast University, located in Nanjing, Jiangsu Province, referred to as SEU.

The authors of [120] combined the RTAB-Map method with deep CNN algorithms, improving target detection for the SROBO mobile robot.

In [121], the authors proposed an autonomous obstacle avoidance method combined with SLAM and based on deep reinforcement learning for a wheeled snake robot using multi-sensor data. The authors of [116] presented a deep learning-based corridor area classifier that utilizes 2D LiDAR data. The study shows that the maximum error is 77% for the modified algorithm, and that the robot’s speed increased by more than 5.6%. The route completion rate increased by 36.25% when deep learning was included, compared with SLAM-only localization.

Table 12.

Recent ML-based SLAM methods for mobile robots.

| Method | Authors | Year | Task | Sim./Exp. |
|---|---|---|---|---|
| DRL, GA3C | Surmann et al. [122] | 2020 | Navigation control, TurtleBot, NVIDIA Jetson TX1 | Sim. and Real exp. |
| DRL | Zhu et al. [112] | 2021 | Navigation control, mobile robot | Sim. |
| DRL | Nguyen et al. [116] | 2021 | Navigation control | Sim. and Real exp. |
| DRL, DQN, and DDQN | Lee et al. [117] | 2022 | Navigation (Gazebo) | Sim. and Real exp. |
| SL, RF | Zhou et al. [119] | 2022 | Mobile robot (experimental platform), LiDAR, IMU, GPS | Sim. and Real exp. |
| SL, Deep CNN | Sadeghi et al. [120] | 2022 | Mobile robot (SROBO), LiDAR, IMU, GPS, NVIDIA | Sim. and Real exp. |
| RL and NN, DDPG | Yan et al. [118] | 2023 | Mobile robot, Gazebo | Sim. |
| DRL, DNN, and PPO | Wong et al. [123] | 2024 | Mobile robot, LiDAR, camera, ORB-SLAM2 | Sim. |
| DRL, DQN | Liu et al. [121] | 2024 | Mobile snake robot (Gazebo, RViz), NVIDIA Jetson, LiDAR, IMU | Sim. and Real exp. |
| DRL | Xu et al. [124] | 2025 | Mobile robot with Intel RealSense D435i camera | Sim. |

Figure 24 and Table 12 show the latest SLAM methods for mobile robots. The analysis of this comparison reveals that most methods are based on DRL, including DQN, DDPG, deep neural networks (DNNs), and PPO. The DRL approach performs well in decision-making in dynamic and uncertain environments.

In classical SLAM, the robot estimates its position and builds a map, whereas in a DRL-based approach, it additionally learns strategies for exploring its surroundings. This means that the robot not only “sees” but also actively decides where to move in order to build a map more quickly and accurately.

A second advantage is the ability to learn an action policy based on experience. DRL enables the robot to optimize its movements through interaction with the environment, without the need to manually design rules. As a result, it can cope better with complex, previously unknown environments.

5.3. Collision Avoidance and Path Planning for Mobile Robots

In the case of mobile robots, key research topics include path planning and obstacle avoidance. Research often focuses on avoiding static obstacles, but the most interesting findings concern the avoidance of dynamic obstacles. All publications referred to in this section are shown in Figure 25 and Table 13.

One approach to avoiding dynamic obstacles was proposed by the authors of [125], who used reinforcement learning based on the SAC algorithm to train a multi-agent system in which each agent makes decisions independently without communicating with other robots.

The authors of [126] showed that deep reinforcement learning improves the performance of the STORM robot in avoiding obstacles in high-traffic environments and challenging scenarios.

In [127], the authors used the Rapidly-exploring Random Tree (RRT) method to address obstacle avoidance and path planning. GoogLeNet was used to classify obstacles. The RRT method generates a path from the starting point to the destination. In the experiments, the RRT approach was compared with a genetic algorithm (GA) and particle swarm optimization (PSO) in a static environment, as well as with an artificial potential field-based approach in a dynamic environment.
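
How an RRT grows a path from the start toward the destination can be illustrated with a minimal 2D sketch. The unit-square workspace, the circular obstacle, the 10% goal bias, and all parameter values are assumptions made for illustration; the GoogLeNet-based obstacle classification of [127] is not included, and only node positions (not the segments between them) are checked for collisions.

```python
import math
import random


def rrt(start, goal, is_free, step=0.05, goal_tol=0.05, max_iters=5000, seed=0):
    """Basic RRT in the unit square; returns a list of points or None."""
    rng = random.Random(seed)
    nodes = [start]
    parent = {0: None}
    for _ in range(max_iters):
        # Sample the goal occasionally (goal bias), otherwise a random point.
        sample = goal if rng.random() < 0.1 else (rng.random(), rng.random())
        nearest = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], sample))
        near = nodes[nearest]
        distance = math.dist(near, sample)
        if distance == 0.0:
            continue
        # Move from the nearest node toward the sample by at most one step.
        scale = min(step, distance) / distance
        new = (near[0] + scale * (sample[0] - near[0]),
               near[1] + scale * (sample[1] - near[1]))
        if not is_free(new):
            continue
        nodes.append(new)
        parent[len(nodes) - 1] = nearest
        if math.dist(new, goal) <= goal_tol:
            # Walk back through the parents to recover the path.
            path, index = [], len(nodes) - 1
            while index is not None:
                path.append(nodes[index])
                index = parent[index]
            return path[::-1]
    return None


def in_free_space(point):
    """Hypothetical static obstacle: a disc of radius 0.2 centred at (0.5, 0.5)."""
    return math.dist(point, (0.5, 0.5)) > 0.2


start_point, goal_point = (0.1, 0.1), (0.9, 0.9)
path = rrt(start_point, goal_point, in_free_space)
```

A practical planner would also check each new segment against the obstacles and smooth the resulting path.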

Path planning for mobile robots using machine learning has been further enhanced in [128], where the authors proposed a new algorithm by integrating the Intrinsic Curiosity Module (ICM) and Long Short-Term Memory (LSTM) into the PPO framework. The results show that the ICM provides intrinsic rewards in addition to external rewards, accelerating initial convergence. The actor–critic network was further improved by incorporating an LSTM-based architecture to better handle dynamic obstacles.
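
The way a curiosity module adds an intrinsic reward to the external one can be sketched as follows. In the standard ICM formulation, the intrinsic reward is proportional to the squared error of a forward model that predicts the features of the next state; the scaling factor `eta` and the example feature vectors below are hypothetical, and the specific settings of [128] are not reproduced.

```python
def intrinsic_reward(predicted_features, actual_features, eta=0.5):
    """Curiosity bonus: scaled squared prediction error of the forward model."""
    squared_error = sum((p - a) ** 2
                        for p, a in zip(predicted_features, actual_features))
    return 0.5 * eta * squared_error


def total_reward(extrinsic, predicted_features, actual_features, eta=0.5):
    """Reward passed to the policy update: external reward plus curiosity bonus."""
    return extrinsic + intrinsic_reward(predicted_features, actual_features, eta)


# A poorly predicted (novel) transition earns a bonus; a well-predicted one does not.
novel = total_reward(1.0, [1.0, 0.0], [0.0, 0.0])
familiar = total_reward(1.0, [0.0, 0.0], [0.0, 0.0])
```

Early in training the forward model is inaccurate almost everywhere, so the bonus encourages exploration, which is consistent with the faster initial convergence reported above.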

Table 13.

Recent ML-based collision avoidance and path planning methods for mobile robots.

| Method | Authors | Year | Task | Sim./Exp. |
|---|---|---|---|---|
| RL, RRT | Zhang et al. [127] | 2020 | Tracked trolley mobile robot | Sim. 2D |
| SL, CNN | Ran et al. [129] | 2021 | Mobile platform robot, NVIDIA and STM32, dynamic obstacle | Real exp. |
| RL, SAC, A3C, DWA, TEB | Choi et al. [125] | 2021 | Mobile platform SR7, GeForce GTX | Sim. and Real exp. |
| RL, DRL | Feng et al. [126] | 2021 | Mobile platform (STORM), Gazebo | Sim. and Real exp. |
| ML, ASGLDR (SGL, LR) | Das et al. [130] | 2022 | Mobile robot | Sim. and Real exp. |
| DRL, DQN | Wang et al. [131] | 2022 | Pioneer3-AT robot, NVIDIA GeForce, Gazebo | Sim. and Real exp. |
| RL, DDPG-DG | Deshpande et al. [132] | 2024 | Mobile robot (Gazebo) | Sim. and Real exp. |
| RL, QL | Xiao et al. [133] | 2024 | Mobile robot | Sim. |
| RL, DDPG | Zhang et al. [128] | 2024 | Mobile robot, TurtleBot3 Burger | Sim. and Real exp. |
| ML, ASGD-LARS | Thakur et al. [134] | 2025 | Autonomous mobile robot (AMR), MATLAB | Sim. and Real exp. |

In [130], the authors proposed a machine learning algorithm called Adaptive Stochastic Gradient Descent Linear Regression (ASGLDR), which enables static obstacle avoidance by modeling mobile robot motion as left or right turns.

A similar approach using the Adaptive Stochastic Gradient Descent with Least Angle Regression (ASGD-LARS) algorithm was introduced in [134]. This model was designed to classify the motion of an autonomous mobile robot (AMR) into three categories: no turn, left turn, and right turn. The proposed approach was implemented on a NodeMCU ESP8266 controller to enable real-time static obstacle avoidance.

The authors introduced a computation method based on the Euclidean distance from static obstacles and the speeds of individual robot wheels, allowing faster information processing on the NodeMCU controller and enabling rapid decision-making. Additionally, to ensure fast convergence, the weights were updated using the adaptive stochastic gradient descent optimization technique.

The work [132] presents a solution for planning and optimizing the route of a mobile robot in open terrain. The study applies a combination of DDPG and Differential Gradient (DG) methods for motion planning involving two mobile robots. The parameters required for decision-making were obtained from LiDAR, cameras, and proximity sensors.

Regarding algorithm improvement, the authors of [131] applied an enhanced DQN method, using collected data as training samples and combining environmental features and target point information as network inputs.

A trajectory tracking method based on optimized Q-learning (QL) was proposed in [133] for dynamic local environments. The authors applied the Observational Space Data Processing Method (OSDPM), in which raw LiDAR data are transformed into a reduced-dimensionality feature vector containing information about the robot’s surroundings.

Figure 25 and Table 13 show the latest collision avoidance and route planning methods for mobile robots. The analysis of this comparison reveals that most methods are based on various types of DRL. The second most common group comprises approaches based on RL.

In this section, which focuses on collision avoidance, reinforcement learning is particularly well suited to the problem, as it enables decision-making in dynamic and uncertain environments. RL/DRL methods allow for learning an optimal action strategy based on interactions with the environment through a reward function. This enables the robot to adaptively plan its motion trajectory while minimizing the risk of collision. Furthermore, this approach performs well in sequential decision-making problems and supports the integration of perception and control in real time.

5.4. Motion Control of Mobile Robots

Traditionally, robot motion control has relied on PID controllers. Nowadays, researchers increasingly apply machine learning to address this task. All publications referred to in this section are shown in Figure 26 and Table 14.

In [135], motion control was implemented using RL instead of a traditional control algorithm. The study involved computer simulations to learn angular velocity based on the error angle. The aim was to maintain a constant linear velocity at its maximum value during the robot’s trajectory outside the docking area. The authors used a Q-learning algorithm.

In some studies, authors combine classical methods with machine learning. The papers [136,137] present an innovative hybrid control strategy that combines a neural network-based kinematic controller with adaptive model reference control, in which controller parameters are determined online using neural networks.

The research described in [138] integrates a PID controller with machine learning based on the SAC algorithm, which enables system control in environments that change in real time. The authors developed a hierarchical structure consisting of an upper-level controller based on the SAC algorithm, one of the most competitive continuous control methods, and a lower-level incremental PID controller. The effectiveness of the SAC–PID control method was verified on several trajectories of varying difficulty, both in the Gazebo simulation environment and on a real Mecanum mobile robot.
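
The two-level structure can be sketched as follows, under the assumption that the upper level supplies the PID gains at every step (the text above does not spell out this interface). The lower level is a standard incremental PID. The function `gain_policy` is a hypothetical hand-written stand-in for a trained SAC actor, and the integrator plant is a toy model rather than a Mecanum robot.

```python
from dataclasses import dataclass

@dataclass
class IncrementalPID:
    """Incremental (velocity-form) PID: each step adds a correction to the last output."""
    u: float = 0.0    # last control output u[k-1]
    e1: float = 0.0   # error e[k-1]
    e2: float = 0.0   # error e[k-2]

    def update(self, error, kp, ki, kd):
        du = (kp * (error - self.e1)
              + ki * error
              + kd * (error - 2.0 * self.e1 + self.e2))
        self.u += du
        self.e2, self.e1 = self.e1, error
        return self.u

def gain_policy(error):
    """Hypothetical stand-in for a trained SAC actor: observation -> (kp, ki, kd)."""
    return (1.2, 0.02, 0.05) if abs(error) > 0.5 else (0.8, 0.01, 0.02)

def track_setpoint(setpoint=1.0, steps=400, dt=0.1):
    """Closed loop on a toy integrator plant x[k+1] = x[k] + dt * u[k]."""
    pid, x = IncrementalPID(), 0.0
    for _ in range(steps):
        error = setpoint - x
        kp, ki, kd = gain_policy(error)          # upper level selects the gains
        x += dt * pid.update(error, kp, ki, kd)  # lower level computes the command
    return setpoint - x                          # final tracking error
```

Because the incremental form accumulates changes in the output rather than recomputing it from scratch, a change of gains between steps does not cause a jump in the command, which suits a scheme where gains are retuned online.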

Similarly, the authors of [139] proposed a hybrid control strategy combining model-based control with a DL method based on an actor–critic (AC) framework. After training the model using the DDPG method, the action referred to as ACI (“acquired control input”) compensates for errors, resulting in improved control performance under conditions of speed and acceleration limitations, as well as system uncertainty.

Another approach to position control is the use of RL agents to control robot motion. These agents learn from experience and can improve decision-making compared with traditional control algorithms [140,141]. The reported results show that DDPG and DQN agents reach the destination in the shortest time.

Table 14. Recent ML-based motion control methods for mobile robots.

| Method | Authors | Year | Task | Sim./Exp. |
|---|---|---|---|---|
| RL, QL | Farias et al. [135] | 2020 | Position control, mobile robot | Sim. |
| DRL, DDPG | Gao et al. [139] | 2021 | Tracking control, mobile robot | Sim. |
| NN | Hassan et al. [136] | 2022 | Tracking control, wheeled mobile robot, STM32, MATLAB | Sim. |
| DRL, DQN, DDPG | Quiroga et al. [140] | 2022 | Position control, mobile robot Khepera IV | Sim. and real exp. |
| RL, SAC | Yu et al. [138] | 2022 | Automatic control, Mecanum mobile robot, Gazebo | Sim. and real exp. |
| DRL, SAC | Cao et al. [141] | 2024 | Path following, mobile robot, Raspberry Pi 4 | Sim. |
| NN | Ha et al. [137] | 2025 | Tracking control, wheeled mobile robot, STM32 | Sim. and real exp. |

Figure 26 and Table 14 show the latest motion control methods for mobile robots. The analysis of this comparison reveals that most methods are based on various types of DRL, including DDPG and DQN. The second most common group comprises approaches based on RL, such as Q-learning and SAC.

There is no definitive answer as to which method is best suited for motion control. However, DRL-based approaches, particularly algorithms such as DDPG and SAC, can be recommended. The motion control problem is continuous in nature and requires smooth control signals. Unlike tabular RL methods such as Q-learning, which require discretized states and actions, DRL algorithms such as DDPG and SAC operate directly in continuous state and action spaces and enable end-to-end learning of control strategies.

Furthermore, DRL demonstrates a high capacity to adapt to nonlinear robot dynamics and variable environmental conditions. In particular, the SAC algorithm is characterized by greater learning stability and improved exploration of the state space.

6. Discussion

For UUVs, future research should prioritize tightly integrated perception–planning–control pipelines that operate reliably under acoustic noise, low visibility, and limited communication bandwidth. Recent studies indicate the need to combine forward-looking sonar perception with real-time avoidance and planning strategies [23,24,26,27]. A promising direction is to move from loosely coupled modules toward end-to-end or hierarchically coordinated architectures that explicitly model uncertainty and safety constraints.

Another key direction is robust sim-to-real transfer for UUV autonomy, including SLAM under domain shift, data-efficient learning, and long-duration adaptation to changing underwater conditions. In the UUV SLAM-related literature, the most commonly used machine learning approach is deep learning, often combined with Kalman filtering. However, no single architecture—such as YOLO or a specific CNN variant—emerges as dominant; such models are more frequently applied in environmental perception.

Survey papers emphasize multi-sensor integration and deployment challenges [18,28], while recent control studies suggest that hybrid DRL frameworks and sim-to-real tuning can improve robustness in field operations [36,38]. In this context, future work should also emphasize standardized benchmarks, shared datasets, and reproducible sea-trial protocols to enable fair comparisons across methods.

Most ML-based methods for environment perception in USVs rely on cameras and CNNs [41,43,44,45]. For SLAM, by contrast, many recent studies employ conventional techniques instead of ML-based approaches [47]. SLAM for USVs remains challenging because external environmental factors can lead to sparse or missing point clouds. For motion control, most recent approaches are based on RL or DRL [66,67,69,70,71].

The key challenges associated with the application of machine learning (ML) methods to collision avoidance and path planning for USVs include the lack of inherent safety guarantees (thus requiring the incorporation of safety constraints), possible non-compliance with COLREGs, limited availability of training data, the complexity of multi-vessel interactions, challenges in reward function design, and the need for explainability.

For UAVs, a major challenge is operating in a three-dimensional environment, which the literature breaks down into several key problems: obstacle avoidance, terrain mapping based on various data sources, multidimensional and multi-criteria control, and swarm control. Different tasks are addressed using different machine learning methods. Deep reinforcement learning dominates motion control, while SLAM employs a more diverse set of methods depending on the specific task or the data being processed.

For mobile robots, convolutional neural networks are most frequently applied to environment perception, whereas motion control relies on neural networks and deep learning techniques, as well as on reinforcement learning with the DQN and DDPG algorithms.

Most approaches that apply machine learning methods to mobile robots are tested in simulation environments, while real-world demonstrations are less common.

Software environments commonly used in mobile robot research include MATLAB, ROS, virtual machines, Gazebo, and RViz. Raspberry Pi is a popular control platform, alongside NVIDIA computing platforms.

Table 15 presents a summary of ML-based approaches for the different autonomous vehicles and the four tasks covered in this review paper. As the table shows, CNNs are the most commonly used approach for environment perception across UUVs, USVs, UAVs, and mobile robots, owing to their ability to efficiently extract spatial features from images (edges, corners, textures). They are particularly effective for tasks such as object detection and semantic segmentation. However, vision transformers (ViTs) are regarded as a promising and competitive alternative for computer vision tasks. They model relationships within an image using self-attention mechanisms, which makes them well suited to challenging environments with clutter or varying lighting conditions.
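
The self-attention mechanism mentioned above can be sketched in a few lines of NumPy. This is a minimal single-head version of scaled dot-product attention over a sequence of patch embeddings, not a full ViT; the function name and the randomly initialized projection matrices in the usage note are assumptions for illustration.

```python
import numpy as np

def self_attention(x, wq, wk, wv):
    """Single-head scaled dot-product self-attention.

    x: (n_patches, d_model) patch embeddings; wq, wk, wv: (d_model, d_head) projections.
    Returns the attended features and the (n_patches, n_patches) attention weights.
    """
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(k.shape[-1])        # pairwise patch similarities
    scores -= scores.max(axis=-1, keepdims=True)   # subtract row max for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True) # softmax over the patches in each row
    return weights @ v, weights
```

For example, with 16 patches of dimension 32 and random 32 x 8 projections, the output has shape (16, 8) and each row of the weight matrix sums to 1. Every patch can attend to every other patch in one step, whereas a convolution only sees a local neighborhood.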

Simultaneous Localization and Mapping, which involves building a map of an environment while simultaneously estimating the vehicle’s position within that map, is a complex task that is relatively less developed in terms of the application of machine learning methods. Many studies apply ML only to certain components of SLAM solutions rather than providing a complete ML-based SLAM system.

As can also be seen in Table 15, environment perception and SLAM approaches using ML are more developed for UAVs and ground-based mobile robots. In contrast, solutions for UUVs and USVs are less mature, which may result from the demanding conditions of the marine environment. Reflections, waves, and changing lighting conditions degrade sensor data quality, while underwater environments further complicate perception due to light absorption and scattering.

Collision avoidance and path planning for all four types of autonomous vehicles are mainly based on RL or DRL approaches. For UAVs and mobile robots, these methods are tested in both simulations and real-world experiments, while for UUVs and USVs, validation is typically limited to simulations. Motion control, similarly to collision avoidance and path planning tasks, is commonly based on RL or DRL across all types of vehicles. This may result from the fact that the vehicle can learn a control policy directly from interactions with the environment, and an exact mathematical model of the vehicle’s dynamics is not required. This is an advantage compared with traditional methods such as PID or MPC.

Motion control methods for different vehicles are more often tested in both simulations and real-world experiments, but papers including real-world tests are less common for UAVs. Generally, as can be seen in Table 15, similar ML methods are applied across different operational domains; however, it also appears that the technology is more developed for UAVs and ground-based mobile robots compared with UUVs and USVs.

The survey indicates that the use of machine learning-based approaches in autonomous vehicles is growing rapidly. However, several challenges remain, leaving considerable scope for further improvement.

7. Conclusions

Autonomous vehicles are high-tech systems capable of functioning independently of human intervention by utilizing complex technologies such as sensors, artificial intelligence, and advanced control algorithms. This paper provides a comprehensive review of recent studies (2020–2026) on machine learning algorithms applied to autonomous platforms, including unmanned aerial vehicles, unmanned surface vehicles, unmanned underwater vehicles, and ground-based mobile robots.

The review addresses key tasks such as environment perception, simultaneous localization and mapping, collision avoidance, path planning, and motion control. Various machine learning approaches are examined, including supervised, semi-supervised, and unsupervised learning, as well as reinforcement learning and deep reinforcement learning.

The analyzed methods are evaluated in terms of performance, robustness, and suitability across different operational domains. Finally, the paper highlights major challenges and outlines promising future research directions aimed at improving the safety, autonomy, and reliability of autonomous vehicles.