1. Introduction

With the continuous deepening of global digital transformation and the increasing complexity of cyber threats, data security has gradually become a core element of national security and industrial competitiveness [1]. Against the backdrop of the widespread application of emerging technologies such as cloud computing, big data, and artificial intelligence, how to effectively ensure the confidentiality, integrity, and availability of data in open environments has become a key issue in the informatization strategies of various countries. Global practices in data security are continuously evolving, covering the entire data lifecycle, including collection, storage, transmission, usage, sharing, and destruction, with the goal of building a secure and reliable digital environment [2]. Data security is not only a critical measure to defend against cyber threats and prevent information leakage but also an essential infrastructure for the healthy development of the digital economy.

In recent years, the development of domestic operating systems in China has accelerated significantly, with overall capabilities transitioning from merely “usable” to increasingly “user-friendly.” A number of freely available system products offering an improved user experience have emerged [3]. As the core component of information systems, the operating system and its security are directly linked to the protection of national critical infrastructure and sensitive data. However, the rapid adoption of domestic operating systems has also introduced new cybersecurity challenges, because inherent vulnerabilities in operating systems can give rise to various security threats. The first is data privacy leakage [4,5,6]: according to Verizon’s 2020 Data Breach Investigations Report (DBIR), there were 157,525 network incidents, of which 3950 resulted in data breaches. The second is increasing cyber risk, for example through the dissemination of rumors and viruses; in August 2022, the Centre Hospitalier Sud Francilien (CHSF), a medium-to-large hospital near Paris, France, suffered a ransomware attack demanding 10 million USD. The third is attacks on critical information infrastructure: for instance, in January 2022, Belarusian railway critical infrastructure was attacked. Therefore, operating system hardening should support high integrity, reliability, availability, privacy, scalability, and confidentiality, while achieving the highest organizational objectives (benefits) of critical IT infrastructure at the lowest possible risk level [7].

At present, almost all aspects of our daily lives are recorded and stored in digital form [8], thereby constructing a vast and complex electronic world that encompasses multiple types of data, including business, finance, healthcare, multimedia, the Internet of Things (IoT), and social media [9]. The data lifecycle can be divided into three stages: data generation, data storage, and data processing [10]. Each stage has corresponding methods to ensure data security. In the data generation stage, actively generated data are protected from sensitive information leakage through access control. For instance, Di Francesco Maesa et al. [11] proposed an access control system supporting private attributes by leveraging blockchain, smart contracts, and zero-knowledge proofs. Passively generated data, on the other hand, are protected through obfuscation techniques. In the data storage stage, traditional approaches rely on file-level and database-level security solutions, which are relatively mature. However, as an emerging paradigm, cloud storage has raised significant data security concerns and has become a research hotspot. Li et al. [12] proposed a publicly verifiable public key encryption with equality test (PVPKEET) scheme, enabling users to verify whether encrypted replicas are securely stored in the cloud without decrypting the data. In the data processing stage, two primary requirements exist: first, preventing unauthorized disclosure when data may contain sensitive information; and second, extracting valuable information while ensuring privacy.

As cloud storage technologies continue to evolve, an increasing volume of data is being migrated to the cloud, and it is foreseeable that nearly all enterprises will transition to cloud-based infrastructures in the near future [13]. This trend has motivated researchers to integrate security auditing with various technical approaches, such as data encryption [14], access control mechanisms [15], and differential privacy [16], to verify data integrity in cloud storage environments. Within enterprises and organizations, artificial intelligence techniques are also being adopted for security monitoring and data leakage prevention, enabling real-time detection of anomalous access behaviors, unauthorized file transfers, and policy violations [17]. However, these approaches predominantly focus on technical or physical storage layers and largely overlook the impact of external environmental factors.

In fact, throughout the entire data lifecycle—generation, storage, and usage—human involvement in operational processes is unavoidable, and individuals often access and acquire data directly through visual perception. Compared with traditional network attacks or system intrusions, data leakage based on visual acquisition is characterized by high stealthiness, difficulty in forensic investigation, and limited preventive measures. Attackers may exfiltrate sensitive information without triggering conventional security mechanisms through methods such as covert screen recording, photographing displays, visual memorization, or remote screen capture. Therefore, implementing effective masking and auditing of sensitive information at the level of visual perception constitutes an indispensable complementary approach to ensuring data security.

Against the backdrop of data-driven digital transformation, the security protection of sensitive information and the establishment of trustworthy auditing mechanisms have become a focal point of concern in both academia and industry. To address the trust deficiencies and data leakage risks caused by the reliance of current cloud storage auditing on third-party auditors (TPAs), this study proposes a system-wide data security auditing scheme based on the Chinese Linux operating system. The proposed scheme constructs a localized and autonomously controllable auditing architecture to achieve the core objective of “raw data remaining within the domain, data being usable but not visible.” This effectively avoids the integrity verification challenges and potential leakage risks associated with data transmission in traditional cloud auditing models.

The main contributions of this paper are as follows:

A lightweight sensitive information protection and auditing framework tailored for Chinese Linux operating systems is proposed. By incorporating dynamic interception and real-time analysis modules, the framework enables precise masking of sensitive screen information and full-chain tracking of sensitive data violations. Compared with existing TPA-based cloud auditing solutions, this method strictly confines data interaction boundaries within a trusted execution environment through localized processing, thereby fundamentally eliminating the trust gap caused by cross-domain data transmission.

A multi-pattern matching acceleration engine based on a hash table–optimized Chinese Aho-Corasick (AC) automaton is designed. To address the performance bottlenecks of traditional regular expression matching in high-concurrency scenarios, a deterministic finite automaton (DFA) together with a failure pointer jumping mechanism is introduced, thereby constructing a rapid retrieval model tailored for sensitive feature libraries.

A scenario-oriented dynamic auditing mechanism is constructed. To accommodate the diversity of sensitive information characteristics across different application scenarios, a configurable sensitive word template library is built, thereby overcoming the generality limitations of traditional approaches. In addition, the method supports real-time statistics of sensitive word masking occurrences and generates timestamped audit reports to meet relevant data security compliance requirements.

The remainder of this paper is organized as follows:

Section 2 reviews related work;

Section 3 provides a detailed description of the proposed method;

Section 4 presents the effectiveness analysis and performance evaluation; and

Section 5 concludes the paper and discusses future research directions.

2. Related Work

In 2007, addressing the problem of remote data integrity verification, Ateniese et al. [18] were the first to propose a public auditing scheme for provable data possession (PDP). This scheme enables any public verifier to check data integrity without retrieving the data. In the same year, Juels and Kaliski [19] proposed the proof of retrievability (POR) model, in which sampling verification and error-correcting codes were employed to ensure the security of stored data. However, this scheme could only verify the integrity of static data. Later, Ateniese et al. [20] proposed another scheme based on symmetric key PDP for auditing dynamic data on cloud servers. This scheme supported dynamic modification and deletion operations but did not support insertion. Erway et al. [21] introduced authenticated skip lists in their dynamic provable data possession (DPDP) scheme. Wang et al. [22] proposed a new PDP scheme based on a rank-based Merkle hash tree (RMHT). By improving the original RMHT and using a non-leaf node sampling strategy, the scheme reduced the number of sampling nodes during the auditing phase and the number of update nodes during the update phase. Guo et al. [23] proposed an outsourced dynamic provable data possession (ODPDP) scheme built on DPDP, in which the integrity of all data block hashes is protected by a rank-based Merkle tree (RBMT) [24], while data block integrity is ensured through the combination of hashes and tags. This scheme allows multiple update operations to be executed and verified simultaneously while also supporting outsourced auditing. Yang et al. [25] designed a novel authenticated data structure, namely the numerical rank-based Merkle hash tree (NRMHT), which supports dynamic data operations. Rao et al. [26] proposed a batch-based dynamic outsourced auditing scheme in which neither the data owner nor the third-party auditor needs to store the Merkle hash tree, thus reducing the burden on the user side. Wang et al. [27] proposed a PDP protocol based on algebraic identities, which reduced the computational overhead of the verification algorithm. In addition, more schemes supporting dynamic updates have been proposed [28,29]. Some studies have also introduced auditing methods supporting privacy preservation [30,31], prevention of key leakage [32,33], multi-cloud storage [34,35], and data sharing [36,37]. All of these works are third-party auditing methods for cloud storage that focus on verifying the integrity of outsourced data.

Some scholars have also combined security auditing with blockchain to verify data integrity, proposing different auditing schemes. Nakamoto [38] introduced Bitcoin, which marked the birth of blockchain. Blockchain is a shared, tamper-resistant distributed ledger that records the state of all entities in a blockchain network. With the development of Web 3.0, such decentralized and trustworthy systems have been widely applied in various fields. In data integrity auditing schemes based on third-party auditing, replacing the third-party auditor with blockchain technology can effectively address the trust issues between data owners and cloud storage providers, thereby improving the security and transparency of data storage and management. Yue and Li [39] proposed a blockchain-based framework for peer-to-peer (P2P) cloud storage data integrity verification and developed a sampling strategy to enhance the efficiency of verification. Subsequently, Zhang et al. [40] introduced a blockchain-based time-encapsulated data auditing scheme, which allows users to simultaneously verify the integrity of outsourced data and timestamps in P2P outsourced storage systems. Zhang et al. [41] further proposed a blockchain-based delayed auditing scheme for public integrity verification, where the main idea is to require auditors to record each verification result on the blockchain. Chen et al. [42] proposed a blockchain-based dynamic PDP scheme for smart city data, which adopts authenticated data structures (ADS) to support data update operations. Miao et al. [43] proposed a blockchain-based shared data integrity auditing and deduplication scheme. This scheme employs an identity-based broadcast encryption deduplication protocol without a key server and implements key deduplication on the user side. By leveraging the characteristics of convergent encryption, they introduced a data integrity auditing protocol to enable authenticated deduplication on the cloud service provider side. Wang et al. [44] proposed a blockchain-based provable data possession scheme for private data, which achieves data integrity verification using RSA signatures. This scheme imposes no restrictions on file block sizes, making it suitable for large files. Later, Dhinakaran et al. [45] proposed a comprehensive framework that integrates quantum key distribution (QKD), lattice-based cryptography (CRYSTALS-Kyber), and zero-knowledge proofs (ZKP) to ensure data security in blockchain systems for cloud computing. The main goal of this framework is to enhance resistance against quantum threats through QKD, provide quantum attack resistance using CRYSTALS-Kyber, and strengthen data privacy protection and verification processes using ZKP technology. All of the above schemes are blockchain-based auditing approaches.

Some scholars have further combined security auditing with practical application scenarios such as healthcare. Li and Tang [46] proposed a blockchain-based auditing scheme for distributed cloud medical data, in which each hospital server can act as a blockchain node, participate in consensus, and maintain a distributed ledger to perform data integrity auditing. Mahender Kumar et al. [47] proposed an identity-based integrity auditing scheme that ensures the privacy of user-sensitive information stored in multi-cloud environments while providing protection against replacement attacks. In this scheme, users’ personal (sensitive) information is protected, while general information is distributed across multiple clouds, thereby preventing sensitive data from being exposed to other shared users. They applied this scheme to the integrity auditing of sensitive medical information in multi-cloud storage. Tian and Ye [48], under the context of Healthcare 4.0, proposed a new certificateless public auditing scheme for cloud medical data (CPAMD). This scheme eliminates the need for complex certificate management and key escrow, enables efficient batch auditing, and allows stakeholders to access medical data and interact effectively.

3. Scheme Design

3.1. Threat Model

To clearly delineate the security boundaries and protection scope of the proposed method, this section defines the system’s threat model, including protected assets, underlying assumptions, and the attacker’s capabilities and attack vectors.

- (1) Protected Assets

The primary assets protected by the system are highly sensitive textual data rendered on terminals of the Chinese Linux operating system. Such data may include, but are not limited to, state secrets, personal privacy information, medical records, or commercial confidential information. The goal is to ensure that these sensitive characters are identified and sanitized before being rendered into visible glyphs, while preserving the traceability of user operations.

- (2) Trust Assumptions

Trusted Computing Base: We assume that the operating system kernel and underlying hardware are trustworthy. If the kernel is compromised—for example, if an attacker obtains root privileges and loads a malicious kernel module—any security mechanism built upon the kernel will fail.

Integrity of the Auditing Module: It is assumed that the system-wide auditing module proposed in this work is neither bypassed nor tampered with.

- (3) Attacker Definition and Capabilities

This model primarily focuses on the following two types of attackers:

Insider threats. Over-privileged viewers: users who possess legitimate login credentials and pass identity authentication and file-system access control, yet encounter sensitive information beyond their “need-to-know” scope during business operations; Unintentional leakers: users who operate on sensitive data in public or uncontrolled environments and inadvertently expose it to bystanders.

Visual side-channel attackers. Shoulder surfing and photography: attackers who physically approach the screen or use imaging devices (e.g., mobile phones, pinhole cameras) to directly capture sensitive content displayed on the monitor. This represents the “last-mile” leakage risk that traditional encrypted transmission or storage encryption cannot mitigate; Screen-recording software: malicious user-space programs attempting to obtain sensitive content via screenshot or screen-recording APIs. Since the proposed solution intercepts at the low-level font-rendering layer (FreeType), sensitive content is sanitized before it enters the graphical buffer, thereby effectively defending against certain image-based capture attacks.

3.2. Overview and Background Knowledge

To address data security issues at the visual presentation layer, it is first necessary to clarify fundamental concepts in the operating system, such as characters, fonts, and glyphs, and to understand their interrelationships and roles in text processing and rendering workflows.

Character: A character is the basic unit of text and an element of a language, such as the letter “A,” the digit “1,” or the punctuation mark “!”.

Font: A font is a collection of character graphics with similar design and style, providing different appearances for characters. Fonts define the visual attributes of characters, including size, style (e.g., bold, italic), and strokes.

Glyph: A glyph is the actual graphical unit within a font used to render the appearance of a character. A single character can be represented by multiple glyphs. Glyphs are designed based on characters and can be described using curves and lines to form the shape of a character. Each glyph corresponds to different character styles and contextual forms.

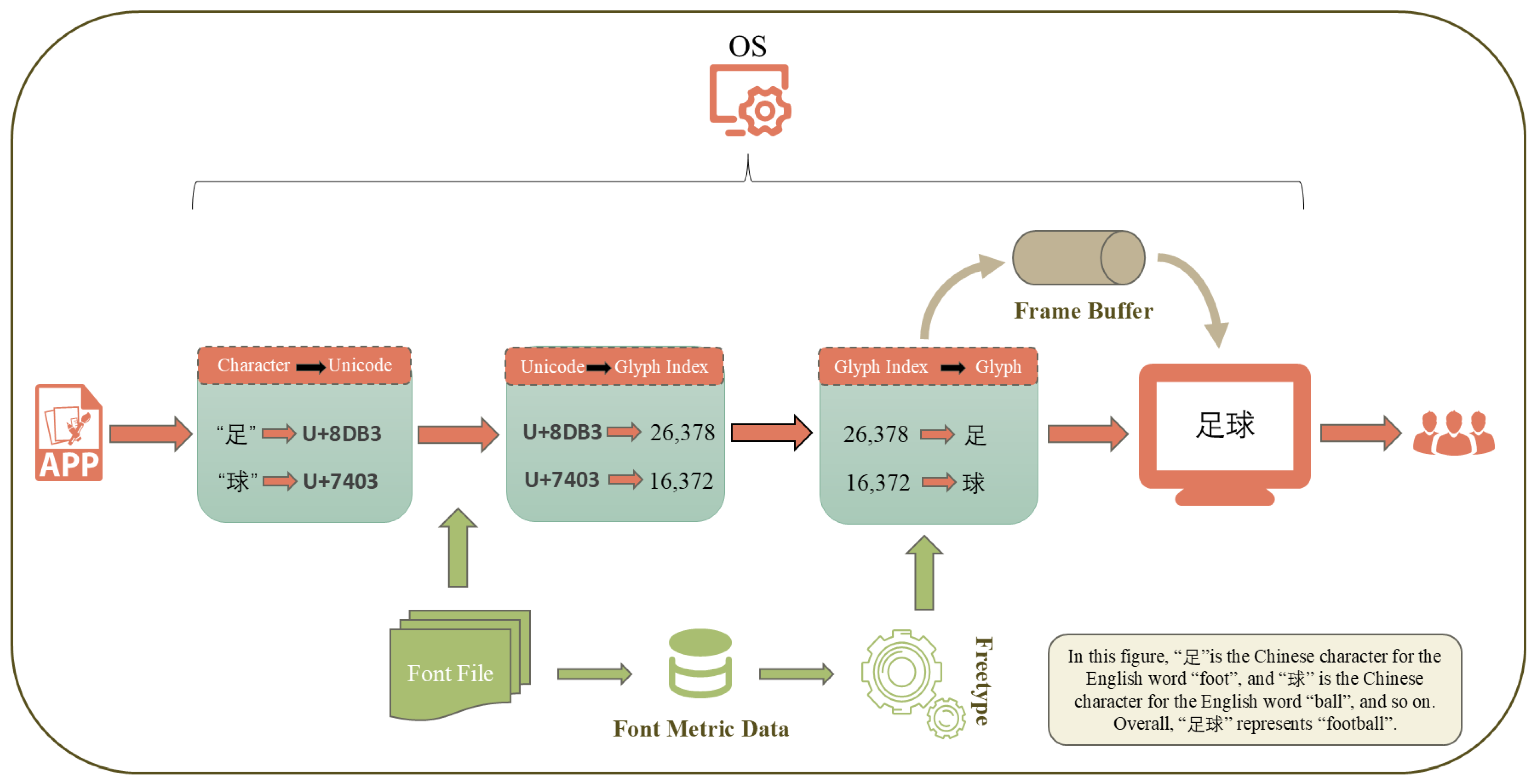

As shown in Figure 1, the Chinese text rendering process in the operating system converts abstract character information into visible images, representing a critical procedure within the OS graphics subsystem. This process involves the coordination of multiple subsystems, covering key stages such as character encoding parsing, font matching, text layout, glyph generation, image rasterization, and final graphic composition and display output, and it exhibits high complexity and system dependency. Initially, the operating system receives text input from applications or external devices and parses it into a unified Unicode sequence to ensure consistent character handling in multilingual environments. Subsequently, the text layout engine analyzes and arranges the character sequence, handling bidirectional text, ligature substitution, kerning adjustments, and other language- and layout-specific features, generating glyphs along with their corresponding geometric positioning information. Once glyph positioning is determined, the operating system performs font matching and loading, locating the requested font or selecting a substitute, and mapping Unicode characters to specific glyph indices using the glyph mapping tables in font files (e.g., TrueType or OpenType). The font engine (such as FreeType) then extracts contour information based on the glyph indices and combines it with font metrics to execute precise typesetting. Next, glyph contours are rasterized into bitmap data. Anti-aliasing and subpixel rendering techniques may be employed to enhance display clarity, ensuring that characters remain legible across different display densities. After rasterization, the generated bitmap glyphs are drawn into the target graphic context by the graphics composition engine. Layer blending and clipping are applied in conjunction with background, color, transparency, and other attributes. Finally, the rendered result is written to the frame buffer and transmitted to the display device via the operating system’s graphics output subsystem (e.g., X11), completing the presentation of text on the screen. This workflow not only ensures the integrity and accuracy of text information from character encoding to visual presentation but also plays a key role in performance optimization, font compatibility, and cross-platform consistency.

In summary, characters constitute the basic elements of text, fonts define the visual appearance and style of characters, and glyphs are the actual graphical representations within a font used to display characters on the screen. To prevent the leakage of sensitive information from the perspective of visual information processing, it is crucial to intervene in the presentation of glyphs on the computer screen. Specifically, this involves masking the glyphs corresponding to sensitive information during the rendering process, thereby enabling the auditing of sensitive content.

3.3. Confidentiality Mechanism: Character Interception and Masking

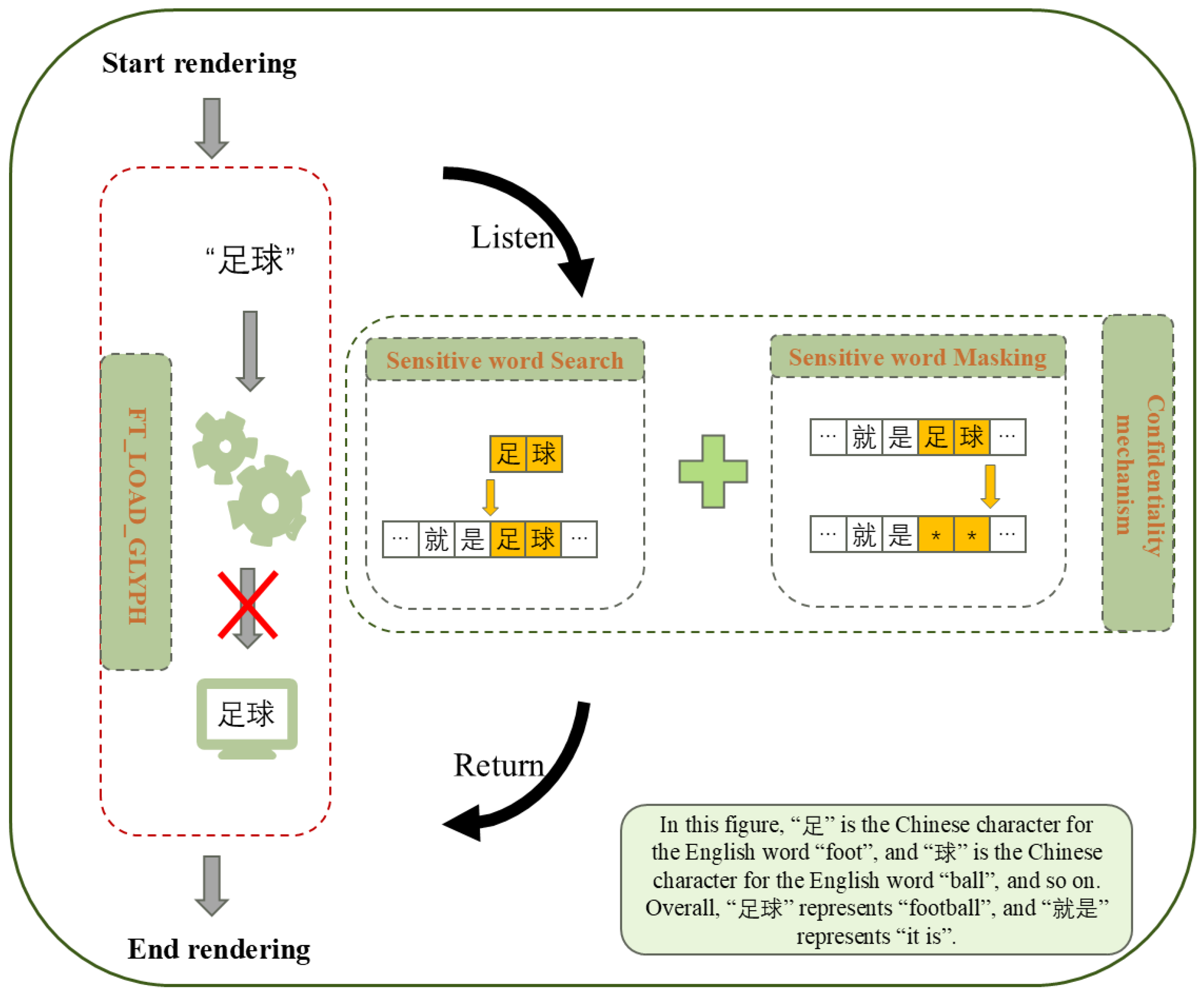

Based on the text rendering workflow illustrated in Figure 1, this paper proposes a mechanism that embeds a security control module into the text rendering engine, enabling real-time inspection and masking of terminal display information, thereby achieving confidentiality at the visual layer. Specifically, the mechanism integrates character-level interception and replacement functionality deeply within the rendering engine, allowing semantic-level identification, analysis, and processing of character information before it is fully rendered graphically. This design provides fine-grained security control over output content without interfering with the logic of upper-layer applications, ensuring minimal visibility of sensitive information along the client-side display path. The core architecture, as shown in Figure 2, is based on a system-level hook mechanism. By dynamically injecting specific interception logic, the mechanism effectively captures low-level system calls or function interfaces invoked by applications when submitting character or glyph data to the graphics subsystem.

The confidentiality mechanism is implemented through dynamic library injection, leveraging the LD_PRELOAD environment variable available on Chinese Linux operating systems. By configuring LD_PRELOAD, the compiled security auditing module (in the form of a shared object, .so) is forcibly loaded into the address space of the target application before any standard libraries. The injected module employs Procedure Linkage Table (PLT) hooking to intercept and override the core function FT_Load_Glyph in the widely used font rendering library libfreetype. As a powerful text rendering engine, FreeType is relied upon by most Chinese Linux operating systems to support multilingual text, complex typesetting, and internationalized text rendering, making it a widely representative component in practical applications.
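The injection step can be illustrated with a minimal launcher sketch. It only shows how a process environment could be prepared so that the dynamic loader picks up the auditing module first; the function name `preload_env` and the module path in the usage note are hypothetical and do not come from the paper.

```python
import os
from typing import Mapping, Optional


def preload_env(module_path: str,
                base_env: Optional[Mapping[str, str]] = None) -> dict:
    """Return a copy of the environment with module_path first in LD_PRELOAD.

    The dynamic loader accepts a list separated by spaces or colons, so
    existing entries are split on both and re-joined with colons.
    """
    env = dict(os.environ if base_env is None else base_env)
    existing = env.get("LD_PRELOAD", "").replace(":", " ").split()
    # Put the auditing module first and drop any duplicate of it.
    entries = [module_path] + [e for e in existing if e != module_path]
    env["LD_PRELOAD"] = ":".join(entries)
    return env
```

A target application could then be started with, for example, `subprocess.Popen(["some-app"], env=preload_env("/opt/audit/libaudit_hook.so"))`, where both the application name and the path are placeholders.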

By decoupling the logical processing layer from the visual presentation layer, the proposed mechanism achieves the security objective of “usable but not visible.” On the one hand, interception occurs at the FT_Load_Glyph font rendering stage, while complete and correct textual data are still preserved within the application. As a result, normal functionalities such as text editing, file saving, searching, spell checking, and data transmission remain unaffected. On the other hand, when text is rendered to the screen, the confidentiality mechanism intercepts the rendering process and replaces the glyph bitmaps corresponding to detected sensitive words with masking characters.

After the confidentiality mechanism successfully intercepts the FT_Load_Glyph function, it employs an efficient sensitive word matching algorithm to detect sensitive terms in the text in real time. Once a match is identified, the system immediately replaces the corresponding substring with predefined masking symbols, effectively concealing the original sensitive content at the visual layer. This process occurs before the characters are passed into the typesetting and rendering logic, ensuring that end users cannot perceive the presence of sensitive information in the graphical interface.
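The replacement step described above can be sketched in a few lines. This is an illustration only: it uses a plain substring search as a stand-in for the matching engine, and the function name `mask_sensitive` and the word-to-symbol mapping format are assumptions rather than the paper's interface.

```python
def mask_sensitive(text: str, terms: dict[str, str]) -> tuple[str, int]:
    """Hide every occurrence of each sensitive term.

    `terms` maps a sensitive word to a single masking symbol (assumed
    format). Returns the masked text and the number of occurrences hidden.
    """
    masked = list(text)
    hits = 0
    for term, symbol in terms.items():
        if not term or len(symbol) != 1:
            raise ValueError("terms must be non-empty with one-character symbols")
        start = text.find(term)
        while start != -1:
            # One symbol per original character keeps the text length stable.
            masked[start:start + len(term)] = symbol * len(term)
            hits += 1
            # Continue from the next position so overlapping hits are found.
            start = text.find(term, start + 1)
    return "".join(masked), hits
```

Because the search always runs on the original string and only the copy is overwritten, the application's own text is left untouched, which mirrors the separation between logical data and visual presentation.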

Because the interception layer operates beneath the graphical display protocols—directly at the rasterization-library level—the proposed method is inherently compatible with both the X11 and Wayland display architectures. For multi-process rendering scenarios, the module adopts a thread-safe design: the AC automaton is shared in memory as a read-only structure, while each thread maintains its own matching-state context. This design effectively eliminates lock contention and ensures stable performance under high-concurrency workloads. Consequently, the mechanism enhances the security granularity and response efficiency along the information output path while preserving system functionality and business logic consistency. It offers strong practical value and deployment flexibility, making it particularly suitable for terminal software systems and scenarios that require high levels of information display security and protection of sensitive data.

3.4. Audit Mechanism: Sensitive-Word Repository Management and Logging

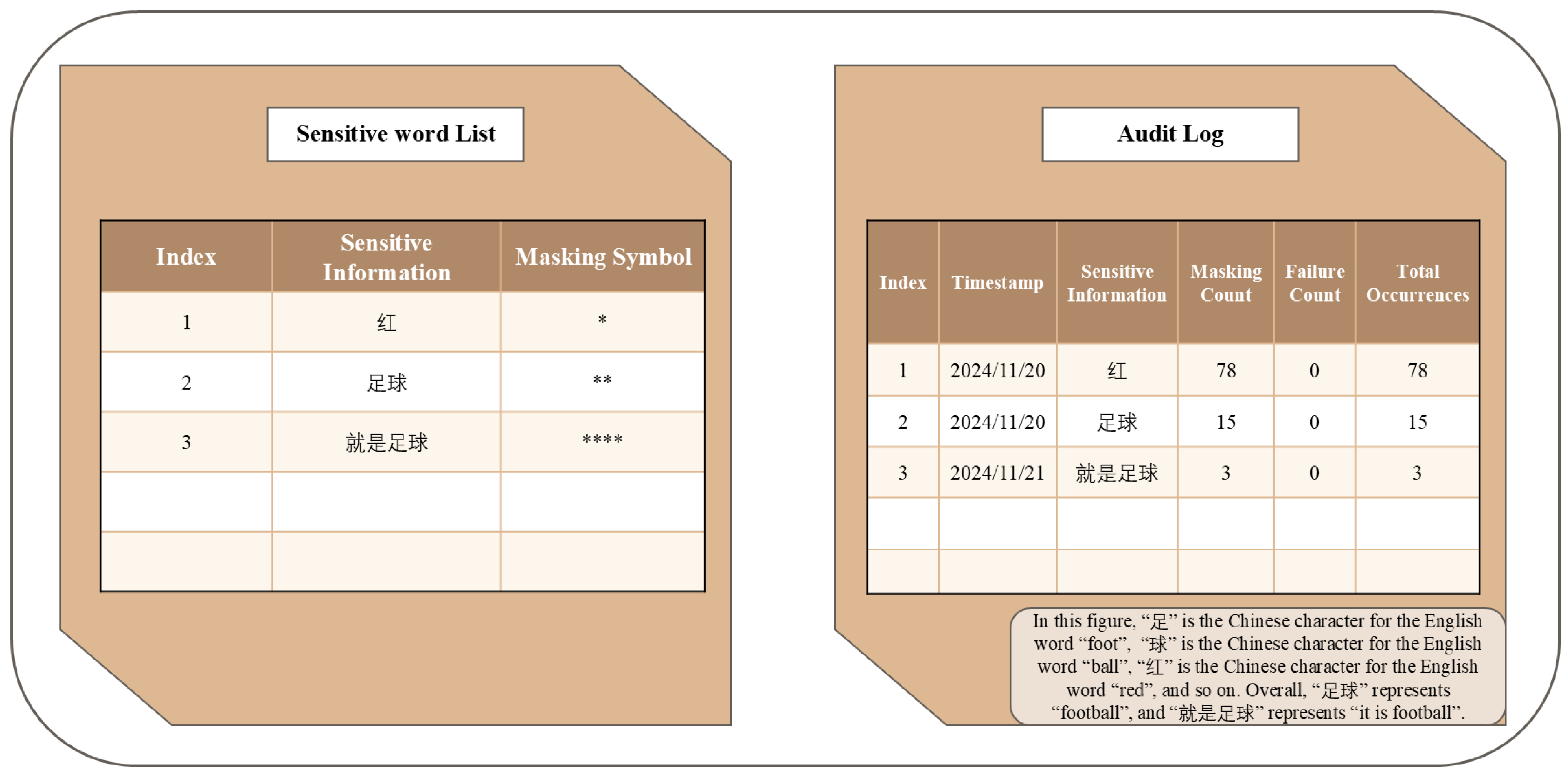

Building on the protection of sensitive information, achieving traceability and effective handling of sensitive content is a critical task for domestic operating systems in terms of content security and risk management. Therefore, the construction of a security auditing mechanism for sensitive information holds significant practical and operational value. The designed auditing mechanism is illustrated in Figure 3, showing the complementary relationship between the confidentiality mechanism and the auditing mechanism. The confidentiality mechanism relies on the sensitive word library maintained by the auditing mechanism to identify and protect sensitive information in text. Simultaneously, the auditing mechanism records the operation logs generated by the confidentiality mechanism, ensuring that each action can be traced and audited. This bidirectional dependency guarantees that the operating system can effectively prevent the leakage of sensitive information during text processing while providing clear operational records and audit trails.

The design of the auditing mechanism is developed along two core dimensions: the construction of the sensitive word library and the design of the sensitive information logs. The sensitive word library must be highly customizable to flexibly adapt to diverse application scenarios and complex business requirements. Typically, the sensitive word library is stored in a dictionary structure, with the specific organization illustrated in Figure 4. Given the significant variation in the definition of sensitive information across different application environments, sensitive words are highly domain-dependent. Therefore, the library should be dynamically extended and adjusted according to the content security requirements of specific scenarios to enhance its applicability, coverage, and recognition accuracy, thereby effectively supporting subsequent auditing and control operations. Once the auditing mechanism is loaded into the operating system memory, it reads the dictionary pairs in the sensitive word library, which include a series of sensitive word entries along with their corresponding masking symbol configurations. The dictionary pairs are then parsed into a set of sensitive words and a set of masking symbols. Based on the sensitive words, the auditing mechanism subsequently constructs an Aho-Corasick (AC) automaton.
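The loading step can be sketched as follows. The paper does not specify the on-disk format of the library, so this example assumes a JSON object that maps each sensitive word to its masking symbol; the function name `load_sensitive_library` and the default symbol are likewise hypothetical.

```python
import json


def load_sensitive_library(raw: str, default_symbol: str = "*"):
    """Split word -> symbol pairs into a word list and a symbol mapping.

    `raw` is assumed to be a JSON object such as {"word": "*"}; blank
    entries are skipped and missing symbols fall back to default_symbol.
    """
    pairs = json.loads(raw)
    words, symbols = [], {}
    for word, symbol in pairs.items():
        word = word.strip()
        if not word:
            continue  # ignore empty keys rather than matching everything
        words.append(word)
        symbols[word] = symbol or default_symbol
    return words, symbols
```

The word list is what would be handed to the automaton builder, while the symbol mapping is consulted only at replacement time.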

The design of the sensitive information log aims to comprehensively record and manage key operations and state changes during the filtering of sensitive information, serving as a crucial component for implementing content security auditing and traceability. The log system primarily stores multidimensional information related to sensitive word masking, covering the entire filtering process to ensure auditability, traceability, and controllability of sensitive information handling. Specifically, the sensitive information log should record the following details: the timestamp of masking operations, which allows reconstruction of the processing timeline for sensitive information; the cumulative count of masking actions, reflecting the frequency of sensitive content occurrences; the number and reasons for masking failures, which assist in identifying potential deficiencies or anomalies in the filtering mechanism; and the text forms after replacement, enabling accurate evaluation and visualization of the filtering effects. This approach effectively enhances transparency in sensitive word management, optimizes filtering strategies, and provides robust support for content security.
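A log record carrying the four items listed above might look like the following sketch. The field names, the JSON-lines serialization, and the class name `AuditLog` are illustrative assumptions; the paper only states which details are recorded.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AuditLog:
    masked_total: int = 0          # cumulative count of masking actions
    failed_total: int = 0          # cumulative count of masking failures
    entries: list = field(default_factory=list)

    def record(self, masked_text: str, hits: int,
               failure_reason: Optional[str] = None,
               when: Optional[datetime] = None) -> str:
        """Append one entry and return it as a JSON line."""
        when = when or datetime.now(timezone.utc)
        self.masked_total += hits
        if failure_reason is not None:
            self.failed_total += 1
        entry = {
            "timestamp": when.isoformat(),
            "masked_total": self.masked_total,
            "failed_total": self.failed_total,
            "failure_reason": failure_reason,
            "masked_text": masked_text,   # text form after replacement
        }
        self.entries.append(entry)
        return json.dumps(entry, ensure_ascii=False)
```

Storing only the text after replacement keeps the log itself free of the original sensitive content.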

To enable scenario-oriented dynamic auditing without disrupting system operation, a hot-reload mechanism is introduced. The system implements a file-monitoring thread based on inotify; when changes to the sensitive-word configuration file are detected, the auditing module atomically updates the corresponding AC automaton structure in memory. This allows new auditing policies to take effect immediately without requiring application or operating system restarts, thereby ensuring system continuity and real-time responsiveness.
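The core of such a hot reload is to build the new matcher completely and only then swap it in. The sketch below shows that pattern under simplifying assumptions: it compares the file's modification time instead of using inotify (which has no binding in the Python standard library), and the class name `HotReloadingLibrary` and the `build` callback are hypothetical.

```python
import os
import threading


class HotReloadingLibrary:
    """Rebuilds a matcher when the sensitive-word file changes."""

    def __init__(self, path, build):
        self._path = path
        self._build = build            # callable: file text -> matcher
        self._lock = threading.Lock()
        self._mtime = None
        self._matcher = None
        self.reload_if_changed()

    def reload_if_changed(self) -> bool:
        mtime = os.stat(self._path).st_mtime_ns
        if mtime == self._mtime:
            return False
        with open(self._path, encoding="utf-8") as fh:
            new_matcher = self._build(fh.read())  # built off to the side
        with self._lock:
            # Readers see either the old or the new matcher, never a mix.
            self._matcher, self._mtime = new_matcher, mtime
        return True

    def current(self):
        with self._lock:
            return self._matcher
```

In a deployment following the paper's description, the call to `reload_if_changed` would be triggered by the inotify-based monitoring thread rather than by polling.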

At the security control level, technical enforcement of role separation is applied. The sensitive-word repository and audit logs are stored in a protected directory with ownership assigned to root, which technically prevents ordinary users from tampering with auditing policies or deleting audit records. This design ensures the reliability and traceability of the auditing mechanism.

3.5. Hash Table-Based Chinese AC Automaton Character Matching Algorithm

When integrating the security auditing mechanism into the text rendering workflow, scenarios involving long text or high-frequency rendering requests can impose significant pressure on the system, as the detection and replacement of sensitive words may increase operating system resource usage, prolong application response times, and negatively affect user interaction fluidity and overall experience. To mitigate these issues and improve the efficiency of sensitive word recognition, this study introduces an Aho-Corasick (AC) automaton algorithm based on a deterministic finite automaton (DFA) within the confidentiality mechanism. The construction of this automaton is illustrated in

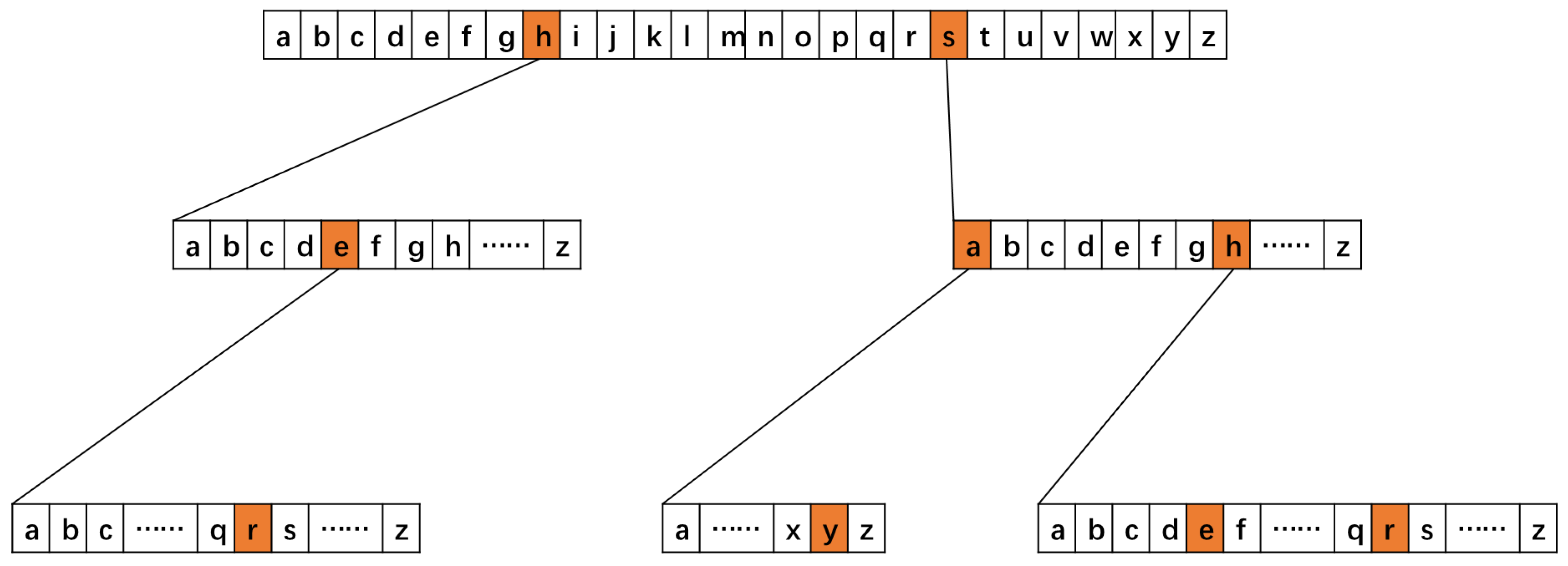

Figure 5. The AC automaton is a multi-pattern string matching algorithm with linear time complexity, capable of matching multiple keywords simultaneously in a single text scan, thereby significantly reducing the time overhead of sensitive word matching. By constructing an efficient keyword matching state machine, the confidentiality mechanism can rapidly identify and process sensitive information in long texts without adding extra computational complexity. This approach ensures real-time and accurate detection while minimizing the impact on system performance, thereby maintaining efficient text rendering and a stable user experience.
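For readers unfamiliar with the algorithm, a compact Aho-Corasick sketch is given here. It is a textbook formulation rather than the paper's implementation: children are stored in Python dictionaries keyed by Unicode character, and the class and method names are chosen for illustration.

```python
from collections import deque


class ACAutomaton:
    def __init__(self, patterns):
        self.children = [{}]   # per node: character -> child node id
        self.fail = [0]        # per node: failure link (root is 0)
        self.output = [[]]     # per node: patterns ending at this node
        for pattern in patterns:
            self._insert(pattern)
        self._build_failure_links()

    def _insert(self, pattern):
        node = 0
        for ch in pattern:
            nxt = self.children[node].get(ch)
            if nxt is None:
                nxt = len(self.children)
                self.children.append({})
                self.fail.append(0)
                self.output.append([])
                self.children[node][ch] = nxt
            node = nxt
        self.output[node].append(pattern)

    def _build_failure_links(self):
        # Breadth-first, so a node's failure target is finished before it.
        queue = deque(self.children[0].values())
        while queue:
            node = queue.popleft()
            for ch, child in self.children[node].items():
                f = self.fail[node]
                while f and ch not in self.children[f]:
                    f = self.fail[f]
                self.fail[child] = self.children[f].get(ch, 0)
                # Inherit patterns that end at the failure target.
                self.output[child] = self.output[child] + self.output[self.fail[child]]
                queue.append(child)

    def search(self, text):
        """Return (start_index, pattern) for every match in one pass."""
        node, hits = 0, []
        for i, ch in enumerate(text):
            while node and ch not in self.children[node]:
                node = self.fail[node]
            node = self.children[node].get(ch, 0)
            for pattern in self.output[node]:
                hits.append((i - len(pattern) + 1, pattern))
        return hits
```

The text is scanned once; on a mismatch the failure link jumps to the longest suffix that is still a prefix of some pattern, so no input character is re-read.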

In an English-language environment, the storage structure of the AC automaton is illustrated in Figure 6. Each node in the AC automaton stores only a single character and its associated state information, providing good storage efficiency. However, in the Chinese-language context, the character set is much larger; the number of commonly used Chinese characters exceeds 20,000, and the full Unicode character set contains over 60,000 characters. If a fixed-length array structure is still employed, allocating over 60,000 pointers for all possible child characters at each node, this would result in severe memory wastage. This issue is particularly pronounced in a Trie structure, which already contains numerous sparse nodes, and such a design would significantly increase memory overhead, severely constraining system performance and scalability.
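To give a sense of scale, the following back-of-the-envelope sketch compares the pointer storage of a fixed-array trie with a layout that stores only existing branches. The constants are assumptions chosen for illustration (8-byte pointers, 65,536 slots per node), and the sparse estimate deliberately ignores hash-table bookkeeping overhead, so the figures are indicative only.

```python
POINTER_BYTES = 8        # assumption: 64-bit pointers
ALPHABET_SIZE = 65_536   # assumption: one array slot per 16-bit code unit


def array_trie_bytes(num_nodes: int) -> int:
    """Pointer storage when every node owns a full-alphabet array."""
    return num_nodes * ALPHABET_SIZE * POINTER_BYTES


def sparse_trie_bytes(num_nodes: int) -> int:
    """Pointer storage when only existing branches are kept.

    Every non-root trie node is the child of exactly one parent, so the
    total number of stored child pointers is num_nodes - 1.
    """
    return max(num_nodes - 1, 0) * POINTER_BYTES


if __name__ == "__main__":
    nodes = 1_000
    print(array_trie_bytes(nodes))   # 524288000 bytes (about 500 MiB)
    print(sparse_trie_bytes(nodes))  # 7992 bytes
```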

To address the aforementioned issues, this study introduces a hash table as the child node storage structure in the design of AC automaton nodes. This approach leverages the space-saving capability and lookup performance of hash tables in sparse data access scenarios, effectively mitigating the resource wastage associated with traditional fixed-length array structures caused by sparse character encoding. Specifically, the child node set of each automaton node is maintained using a hash table constructed via the chaining method. The hash keys use the full UTF-8 encoding of characters, with each key representing a valid UTF-8 character, and the corresponding hash value pointing to the child node pointer. To improve query efficiency and reduce collision rates, the classical BKDR Hash function is employed, which is defined as follows:

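$$\mathrm{Hash}(s) = \sum_{i=1}^{n} s_i \cdot b^{\,n-i}$$

The symbol $s_i$ denotes the $i$-th byte in the string $s$, and $n$ represents the total length of the string. The constant $b$ is the seed multiplier (classical BKDR implementations use values such as 31, 131, or 1313), and in practice the sum is reduced modulo the machine word size or the number of hash buckets.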
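A minimal sketch of BKDR hashing over the UTF-8 bytes of a character is shown below. The seed value 131 and the `buckets` parameter are assumptions made for illustration; the text does not state which seed or table size the authors use.

```python
def bkdr_hash(key: bytes, seed: int = 131, buckets: int = 1 << 32) -> int:
    """BKDR hash of a byte string, reduced to the range [0, buckets)."""
    h = 0
    for byte in key:
        # Horner form of sum(s_i * seed**(n - i)), reduced at every step.
        h = (h * seed + byte) % buckets
    return h


if __name__ == "__main__":
    key = "球".encode("utf-8")          # a three-byte UTF-8 character
    print(bkdr_hash(key, buckets=64))   # bucket index in a 64-slot table
```

Evaluating the polynomial in Horner form keeps the running value bounded and needs only one multiplication per byte.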

Based on the above design, the structure of each automaton node can be formally defined as follows:

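$$\mathrm{Node} = (\mathit{children},\ \mathit{fail},\ \mathit{isEnd},\ \mathit{pattern})$$

Here, $\mathit{children}$ represents the hash-mapped set of child nodes for the current node, $\mathit{fail}$ denotes the failure pointer, $\mathit{isEnd}$ indicates whether the node corresponds to the end of a pattern string, and $\mathit{pattern}$ stores the complete pattern string text.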
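The node layout described above might look as follows in Python. This is a sketch under stated assumptions: the field names are illustrative, and Python's built-in `dict` stands in for the chained hash table (CPython dictionaries use open addressing rather than chaining, so only the interface matches, not the collision strategy).

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass(eq=False)
class Node:
    # Keys are the full UTF-8 encoding of one character, as in the text.
    children: Dict[bytes, "Node"] = field(default_factory=dict)
    fail: Optional["Node"] = None      # failure pointer, filled in later
    is_end: bool = False               # True if a pattern ends at this node
    pattern: Optional[str] = None      # the complete pattern text, if any


def insert(root: Node, word: str) -> None:
    """Insert one sensitive word, one UTF-8 character per trie level."""
    node = root
    for ch in word:
        node = node.children.setdefault(ch.encode("utf-8"), Node())
    node.is_end = True
    node.pattern = word
```

Only branches that actually occur are stored, so a node for a rarely extended prefix costs a near-empty dictionary rather than a full-alphabet array.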

This structure not only preserves the core characteristics of efficient pattern matching in the AC automaton but also fully leverages the sparse storage and constant-time access properties of hash tables. In multi-byte character environments such as Chinese, it demonstrates superior space utilization and operational efficiency, offering excellent scalability and practical adaptability.

To provide a clearer illustration of the algorithmic procedure, Algorithm 1 presents the detailed construction and matching workflow of the hash-based Chinese AC automaton. The entire process is divided into three phases:

- (1) Trie Construction (Phase 1): All sensitive terms are inserted into the Trie. Each node manages its outgoing transitions using a hash-table-based child-node structure.

- (2) Failure Link Construction (Phase 2): A breadth-first search (BFS) is performed over the Trie to establish failure links, ensuring efficient state recovery upon mismatches.

- (3) Online Matching (Phase 3): The input text stream is scanned sequentially. Matches are reported in real-time through state transitions facilitated by the failure links.

Algorithm 1: Hash-Table-Based AC Automaton for UTF-8 Chinese Characters

    Input:  W = {w1, w2, …, wk}  (sensitive-word set)
            T = t1, t2, …, tn    (input text)
    Output: M = list of matched sensitive-word occurrences

    Phase 1: Build Trie with Hash-Table Nodes
    Initialize root node r
    for each word w in W do
        node ← r
        for each UTF-8 character c in w do
            if c not in node.children then
                node.children[c] ← new Node()
            end if
            node ← node.children[c]
        end for
        node.output.add(w)
    end for

    Phase 2: Build Failure Pointers using BFS
    Initialize queue Q
    for each child u of r do
        u.fail ← r
        Q.enqueue(u)
    end for
    while Q is not empty do
        v ← Q.dequeue()
        for each (char c, node u) in v.children do
            f ← v.fail
            while f ≠ r and c not in f.children do
                f ← f.fail
            end while
            if c in f.children then
                u.fail ← f.children[c]
            else
                u.fail ← r
            end if
            u.output.addAll(u.fail.output)
            Q.enqueue(u)
        end for
    end while

    Phase 3: Online Matching
    M ← empty list
    v ← r
    for each UTF-8 character c in T do
        while v ≠ r and c not in v.children do
            v ← v.fail
        end while
        if c in v.children then
            v ← v.children[c]
        else
            v ← r
        end if
        if v.output is not empty then
            Append all matches in v.output to M
        end if
    end for
    return M
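The three phases of Algorithm 1 can be sketched in runnable form as follows. This is an illustrative transcription rather than the authors' code: Python's `dict` stands in for the hash-table child structure, characters are keyed as Unicode code points instead of raw UTF-8 byte sequences, and the function names `build` and `search` are hypothetical. Matches are reported as (start index, word) pairs.

```python
from collections import deque
from typing import Deque, Dict, Iterable, List, Tuple


class Node:
    __slots__ = ("children", "fail", "output")

    def __init__(self) -> None:
        self.children: Dict[str, "Node"] = {}   # hash-mapped child set
        self.fail: "Node" = self                # failure pointer
        self.output: List[str] = []             # patterns ending here


def build(words: Iterable[str]) -> Node:
    root = Node()
    # Phase 1: insert every word into the trie.
    for w in words:
        if not w:
            continue                            # ignore empty patterns
        node = root
        for c in w:
            node = node.children.setdefault(c, Node())
        node.output.append(w)
    # Phase 2: breadth-first construction of failure pointers.
    queue: Deque[Node] = deque()
    for u in root.children.values():
        u.fail = root
        queue.append(u)
    while queue:
        v = queue.popleft()
        for c, u in v.children.items():
            f = v.fail
            while f is not root and c not in f.children:
                f = f.fail
            u.fail = f.children.get(c, root)
            u.output.extend(u.fail.output)      # inherit suffix matches
            queue.append(u)
    return root


def search(root: Node, text: str) -> List[Tuple[int, str]]:
    # Phase 3: single left-to-right scan of the text.
    matches: List[Tuple[int, str]] = []
    v = root
    for i, c in enumerate(text):
        while v is not root and c not in v.children:
            v = v.fail
        v = v.children.get(c, root)
        for w in v.output:
            matches.append((i - len(w) + 1, w))
    return matches


if __name__ == "__main__":
    automaton = build(["足球", "就是足球", "球"])
    print(search(automaton, "这就是足球"))
```

Because each node's output list is merged with that of its failure target during the BFS, nested terms such as “足球” inside “就是足球” are reported without any extra traversal at match time.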

4. Experimental Analysis

4.1. Effectiveness Analysis

To verify the effectiveness of the proposed security auditing mechanism in practical systems, a set of functional tests based on typical input scenarios was designed and implemented. A formal model and theoretical foundation were constructed, and combined with the improved AC automaton algorithm and replacement strategies, the system’s capability for sensitive word detection and masking was systematically evaluated.

All experiments are conducted on a UOS-22.0 computer with 16 GB memory and an Intel(R) Core(TM) i5-13500 CPU @ 2.50 GHz. The security auditing mechanism is injected into the system as a dynamic link library (.so file), which intercepts and redirects the FreeType core function FT_Load_Glyph to perform real-time analysis and processing of character-level rendering requests.

4.1.1. Functional Verification

The test data consisted of a 100,000-character Chinese input text, encompassing 15 sets of controlled experiments. These experiments covered:

- (1) Single-character sensitive words (e.g., “红”, “土”, “早”);

- (2) Two-character sensitive words (e.g., “足球”, “美丽”, “商人”);

- (3) Compound phrases (e.g., “多余的”, “就是足球”, “一个小女孩”);

- (4) Benign text without sensitive content (e.g., “德国的球迷”, “那么美好”);

- (5) Mixed Chinese-English terms (e.g., “zu球”, “hong色”).

The control group represents rendering results with the security auditing module disabled, while the experimental group shows the results with the module enabled.

Table 1 presents the test results, and Table 2 displays the corresponding audit logs.

The experimental results show that the proposed security auditing scheme can reliably intercept and replace multiple categories of sensitive words, while maintaining correctness even under complex conditions such as repeated occurrences and nested terms. It introduces no interference to normal text rendering, thereby avoiding false replacements or degradation of readability. Meanwhile, the audit logs record all intercepted and replaced sensitive contents along with their occurrence frequencies, providing strong traceability. Since the scheme adopts a deterministic string-matching algorithm—the Aho-Corasick automaton—rather than a probabilistic model, both precision and recall can theoretically reach 100% within the scope of the predefined sensitive-word repository. Overall, the proposed mechanism demonstrates high stability and accuracy at the functional level, as well as strong practical value in terms of security assurance and auditability.

4.1.2. Formal Definition of Sensitive Information Masking

To rigorously describe the proposed sensitive information masking mechanism, this subsection presents a formal definition of the input representation, masking rules, and audit log generation process.

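Let the input text be a sequence of characters:

$$T = c_1 c_2 \cdots c_n$$

where $c_i$ denotes the $i$-th character.

Let the sensitive lexicon be defined as:

$$S = \{s_1, s_2, \ldots, s_m\}$$

where $s_j$ denotes the $j$-th sensitive term or sensitive pattern, and $m$ is the total number of entries in the sensitive lexicon.

Define a rendering function $\mathrm{Render}(\cdot)$ and a masking function $\mathrm{Mask}(\cdot)$. For any character $c_i$, the output rule is given by:

$$\mathrm{Out}(c_i) = \begin{cases} \mathrm{Mask}(c_i), & i \in \bigcup_{j=1}^{m} \mathrm{Pos}(s_j) \\ \mathrm{Render}(c_i), & \text{otherwise} \end{cases}$$

where $\mathrm{Pos}(s_j)$ denotes the set of character positions in $T$ that correspond to a matched occurrence of the sensitive term $s_j$. Characters belonging to any sensitive match are masked, while all other characters are rendered normally.

Meanwhile, an audit log is generated to record sensitive term detection:

$$\mathrm{Log} = \{(s_j, \tau_j, n_j) \mid n_j > 0\}$$

where $\tau_j$ denotes the detection timestamp and $n_j$ represents the number of occurrences of the sensitive term $s_j$.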
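The masking rule and the audit record described above can be sketched as follows. The function name `mask_and_log`, the `*` mask character, and the (term, timestamp, count) tuple layout are assumptions made for illustration; the input `matches` is taken to be a list of (start index, term) pairs produced by whatever matcher is in use.

```python
from collections import Counter
from datetime import datetime, timezone
from typing import Iterable, List, Tuple


def mask_and_log(
    text: str,
    matches: Iterable[Tuple[int, str]],
    mask_char: str = "*",
) -> Tuple[str, List[Tuple[str, str, int]]]:
    """Mask every matched position and return (output, audit log)."""
    masked_positions = set()
    counts: Counter = Counter()
    for start, term in matches:
        masked_positions.update(range(start, start + len(term)))
        counts[term] += 1
    # Characters inside any match are masked; all others pass through.
    output = "".join(
        mask_char if i in masked_positions else ch
        for i, ch in enumerate(text)
    )
    stamp = datetime.now(timezone.utc).isoformat()
    log = [(term, stamp, n) for term, n in sorted(counts.items())]
    return output, log


if __name__ == "__main__":
    out, log = mask_and_log("我爱足球和足球", [(2, "足球"), (5, "足球")])
    print(out)   # 我爱**和**
    print(log)   # one entry for 足球 with count 2
```

The log keeps only the term, a timestamp, and a count, so no surrounding text is retained.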

4.1.3. Sensitive Information Masking Model

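The model is first formally defined as follows:

Let the text string be $T = c_1 c_2 \cdots c_n$, where $c_i \in \Sigma$, and $\Sigma$ represents the set of UTF-8 encoded characters.

Let the set of sensitive words be $S = \{s_1, s_2, \ldots, s_m\}$.

Let the matching function be $\mathrm{Match}(T, S)$, which returns the positions in $T$ at which some $s_j \in S$ occurs.

Let the replacement function be $\mathrm{Replace}(T, \mathrm{Match}(T, S))$, which replaces all matched positions with the masking string $\mu$.

We define output security as follows:

Definition 1 (Output Security). The system output $T' = \mathrm{Replace}(T, \mathrm{Match}(T, S))$ is said to satisfy output security if, for any $s_j \in S$, it holds that $s_j \not\sqsubseteq T'$, i.e., $s_j$ does not occur as a substring of $T'$.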
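Definition 1 translates directly into a substring check. The sketch below uses a hypothetical helper name, `is_output_secure`, and also illustrates why the choice of masking string matters: replacing a match with the empty string can splice the surrounding characters into a fresh occurrence.

```python
from typing import Iterable


def is_output_secure(output: str, lexicon: Iterable[str]) -> bool:
    """True if no non-empty sensitive term occurs in the output."""
    return all(term not in output for term in lexicon if term)


if __name__ == "__main__":
    lexicon = {"ab"}
    text = "aabb"
    deleted = text.replace("ab", "")    # "ab": deletion recreates the term
    masked = text.replace("ab", "*")    # "a*b": the mask separates the rest
    print(is_output_secure(deleted, lexicon))  # False
    print(is_output_secure(masked, lexicon))   # True
```

This is only a post-hoc check over a finished string; it does not replace the argument that the matcher finds every occurrence in the first place.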

4.1.4. Correctness Theorem of Matching

We first present a theorem regarding the correctness of the matching function $\mathrm{Match}$.

Theorem 1 (Completeness). If the AC automaton is constructed from the pattern set $S$ and a linear scan is performed over the input string $T$, then the matching function $\mathrm{Match}(T, S)$ returns all positions at which patterns of $S$ occur in $T$, without any omissions or false matches.

Proof of Theorem 1. The AC automaton consists of a deterministic finite automaton (DFA) combined with a failure pointer mechanism. Its structure is built as a prefix tree based on the pattern set $S$, and each node is assigned a failure pointer to the state representing the longest proper suffix of its path that is also a prefix of some pattern. During the matching process, whenever the current character fails to match, the failure pointer guides the automaton to backtrack to the longest acceptable suffix state and continue matching, thereby preventing omissions. Each time a valid state is reached, all matched pattern strings can be accurately reported through the output set of that node. Therefore, the entire process covers all valid matches without matching non-pattern strings, satisfying the completeness requirement. □

4.1.5. Correctness Theorem of Replacement

Based on the completeness of matching, we further present a theorem guaranteeing the confidentiality of the replacement logic:

Theorem 2 (Confidentiality). If the matching function $\mathrm{Match}$ can enumerate all occurrences of sensitive words, and the replacement function $\mathrm{Replace}$ performs irreversible masking at each position, then for any $s_j \in S$, it holds that $s_j \not\sqsubseteq T'$, where $T'$ denotes the replaced output text.

Proof of Theorem 2. The completeness of the matching function (established in Theorem 1) ensures that all positions of pattern strings in the text are accurately identified. The replacement function replaces each matched interval with an irreversible string $\mu$, satisfying $\mu \notin S$, and $\mu$ contains no substring matching any $s_j \in S$. Therefore, no substring $s_j$ exists in the replaced text $T'$, and all sensitive words are completely removed from the output, satisfying the definition of output security. □

Through the above analysis, the effectiveness of the confidentiality mechanism is thus demonstrated.

4.2. Complexity Analysis

We analyzed the time and space complexity of the security auditing mechanism. The primary contributions to time and space complexity in the confidentiality mechanism arise from the construction of the AC automaton, the matching phase, and the sensitive word replacement phase. Reading the sensitive word dictionary and recording the audit logs mainly involve file I/O operations.

4.2.1. AC Automaton Construction Phase

The construction of the AC automaton involves two steps: first, the construction of the Trie tree, in which multiple pattern strings are inserted into the Trie to form the basic multi-pattern storage structure; second, the construction of failure pointers, which are established using breadth-first search (BFS) to ensure rapid backtracking upon a match failure. Let the pattern set be $P = \{p_1, p_2, \ldots, p_k\}$, where the total length of all patterns is $L$, i.e., $L = \sum_{i=1}^{k} |p_i|$.

For a traditional array-based AC automaton, each node allocates an array for the entire character set, and inserting each character has a time complexity of $O(1)$. Therefore, the overall time complexity for constructing the Trie tree is $O(L)$. However, in a Chinese-language environment, the character set is very large, and initializing the array at each node incurs significant overhead, resulting in wasted space within nodes. In contrast, for the hash table-based AC automaton, each node allocates hash table entries only for characters that actually appear. The average time complexity for inserting a character remains $O(1)$, so the average time complexity for constructing the Trie tree is still $O(L)$. Nevertheless, because nodes store only the existing branches, the construction process is more efficient in Chinese-language environments, and the space overhead is significantly reduced.

Time Complexity of Failure Pointer Construction: For both the traditional array-based AC automaton and the hash table-based AC automaton, the construction of failure pointers is performed using a BFS traversal of the Trie tree. Each node’s failure pointer is computed only once. Let the Trie tree contain $N$ nodes, and model the BFS process as traversing the node set $V$ (with $|V| = N$), with each node undergoing one failure pointer computation. Let $b$ denote the average number of backtracking steps per node. The total cost can then be expressed as $O(N \cdot (1 + b))$, where 1 accounts for visiting the node itself, and $b$ represents the number of backtracking operations. Since $N \le L + 1$, and the maximum depth of the Trie does not exceed the length of the longest pattern string, $b$ is bounded by the Trie depth and does not depend on $|\Sigma|$ (the size of the character set). In practice, each node triggers about one failure backtrack on average, so we can approximate $b \approx 1$. Therefore, the time complexity of this process remains $O(L)$.

Space Complexity of the Trie Tree: For the traditional array-based AC automaton, each node allocates an array for the entire character set. In a Chinese-language environment, this corresponds to the Unicode character set, resulting in a space complexity of $O(N \cdot |\Sigma|)$, where $N$ is the number of nodes and $|\Sigma|$ is the size of the character set. In contrast, for the hash table-based AC automaton, each node stores only the branches that actually appear. The space complexity is $O(N \cdot d)$, where $d$ is the average out-degree of a node, and $d \ll |\Sigma|$. Therefore, the hash table implementation significantly reduces memory usage.

In summary, during the construction phase, both methods have a theoretical time complexity of $O(L)$. However, in a Chinese-character environment, the hash table-based AC automaton shows clear advantages in terms of space utilization and construction efficiency.
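The quantities used in this analysis (node count, total pattern length, average out-degree) can be measured on a concrete word list. The sketch below builds a bare trie from nested dictionaries purely for counting; the function name `trie_stats` and the sample words are illustrative assumptions.

```python
from typing import Iterable, Tuple


def trie_stats(words: Iterable[str]) -> Tuple[int, int, float]:
    """Return (node count, total pattern length, average out-degree)."""
    words = list(words)
    root: dict = {}
    nodes = 1                                # the root itself
    for w in words:
        node = root
        for c in w:
            if c not in node:
                node[c] = {}
                nodes += 1                   # one new node per new branch
            node = node[c]
    total_len = sum(len(w) for w in words)
    # Every non-root node has exactly one incoming edge, so the number
    # of edges is nodes - 1 and the mean out-degree is below 1.
    avg_out_degree = (nodes - 1) / nodes
    return nodes, total_len, avg_out_degree


if __name__ == "__main__":
    n, total, d = trie_stats(["足球", "足迹", "美丽"])
    print(n, total, round(d, 3))   # 6 6 0.833
```

Shared prefixes (here “足”) are what make the node count fall below the total pattern length plus one.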

4.2.2. AC Automaton Matching and Replacement Phases

The core of the matching phase is to efficiently locate all pattern strings in the input text using the Trie tree and failure pointers. Let the text length be $n$. During matching, the automaton scans the text character by character from the beginning, attempting state transitions in the Trie tree. For both the traditional array-based AC automaton and the hash table-based AC automaton, in the ideal case, each character can directly follow a transition from the current state to the next state, yielding a time complexity of $O(1)$ per character. When a mismatch occurs, the automaton backtracks along the failure pointers to find the longest feasible suffix state and continues matching. Since each character undergoes at most one failure pointer backtrack on average, the amortized time complexity per character is $O(1)$, and the expected overall time complexity of the matching phase remains $O(n)$. In extreme cases, for example, when malicious input is constructed to trigger the deepest backtracking, a single character may experience up to $\ell_{\max}$ backtracks, where $\ell_{\max}$ is the length of the longest pattern string in the set. Consequently, a conservative worst-case bound is $O(n \cdot \ell_{\max})$. After a sensitive word is successfully matched, it is replaced using a direct substitution method, which has a time complexity of $O(1)$ per match.

In summary, in the matching and replacement phases, the additional time overhead for sensitive word replacement is usually negligible. Therefore, the expected overall time complexity is $O(n)$, while in extreme input cases, the worst-case bound may degrade to $O(n \cdot \ell_{\max})$.
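The amortized claim can be made concrete with the usual depth-based potential argument for Aho-Corasick matching. The symbols $d_t$ and $f_t$ below are introduced here for illustration and are not the authors' notation; the derivation counts failure-pointer steps only and assumes expected constant-time hash lookups.

```latex
% d_t : trie depth of the automaton state after reading t characters (d_0 = 0)
% f_t : number of failure-pointer steps taken while reading character t
\begin{aligned}
d_t &\le d_{t-1} - f_t + 1
  && \text{(each failure step lowers the depth by at least 1; one transition raises it by at most 1)} \\
\sum_{t=1}^{n} f_t &\le \sum_{t=1}^{n} \bigl(d_{t-1} - d_t + 1\bigr)
  = d_0 - d_n + n \le n
  && \text{(telescoping sum, with } d_0 = 0 \text{ and } d_n \ge 0\text{)}
\end{aligned}
```

Under this argument a single character may still cost as many failure steps as the length of the longest pattern, but such expensive steps cannot occur at every position, so the product of text length and longest pattern length is a safe but loose upper bound on the scan.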

4.3. Performance Analysis

This subsection presents a comparative evaluation of the proposed scheme across four experimental scenarios to demonstrate its feasibility and practicality while ensuring system security. The experiments are designed as follows:

- (1) Impact of Input Text Size: The first experiment examines the effect of varying Chinese text input sizes on application startup time, CPU utilization, and memory overhead, while keeping the sensitive word dictionary size constant. This comparison assesses the scheme’s impact on system performance relative to data volume.

- (2) Impact of Dictionary Size: The second experiment analyzes how different sensitive word counts affect the same performance metrics (startup time, CPU, and memory), with the input text size held fixed.

- (3) Combined Variable Analysis: The third experiment investigates startup time trends by simultaneously adjusting both the size of the sensitive word corpus and the volume of the input text.

- (4) Latency during Normal Browsing: The fourth experiment simulates a standard usage scenario to measure the average rendering latency per 1000 consecutive characters and evaluates performance overhead under multi-window concurrent conditions.

4.3.1. Performance Impact of Adding the Security Auditing Mechanism Under a Fixed Sensitive Word Scale

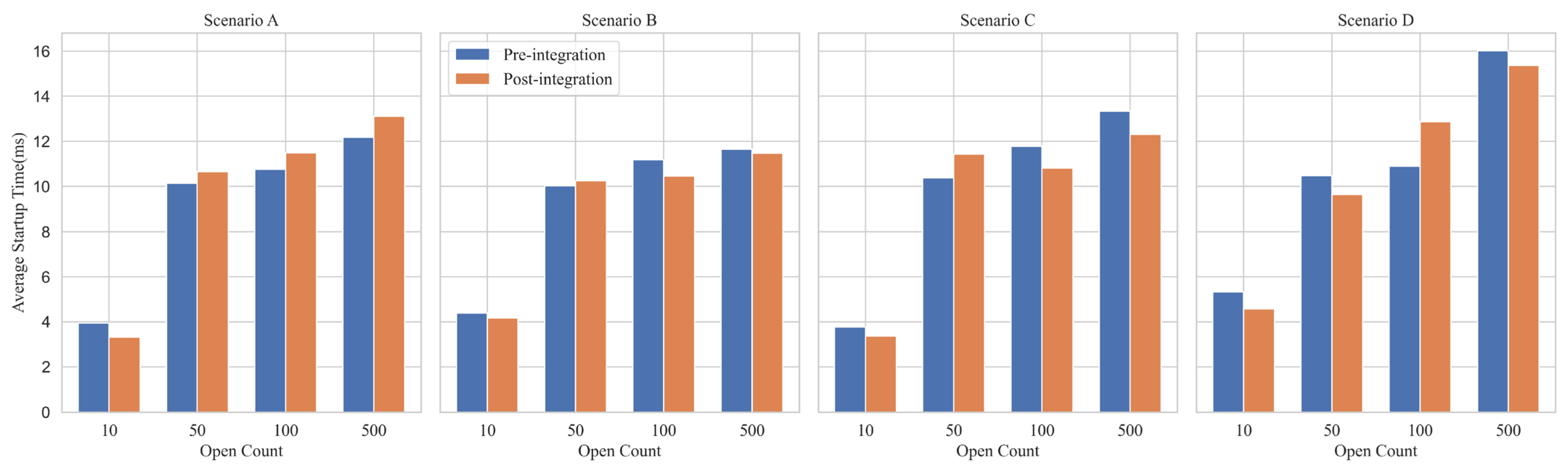

In Comparative Experiment I, four scenarios were designed to evaluate the impact of varying Chinese text scales on application startup time, system CPU usage, and memory overhead under the condition of a fixed sensitive word scale. The four scenarios, labeled A, B, C, and D, correspond to approximately 2000, 10,000, 50,000, and 100,000 Chinese characters of input text, respectively. To enhance the stability and reliability of the experimental results, each scenario was subjected to repeated startup tests of 10, 50, 100, and 500 iterations. The average values of these repeated tests were then calculated to eliminate the influence of random errors.

Figure 7 presents the impact of the security auditing mechanism on file-opening latency. In Scenario A, compared with the baseline without the auditing mechanism, the average startup time decreases by 0.628 ms at 10 repetitions. However, for 50, 100, and 500 repetitions, the average startup time increases by 0.52 ms, 0.721 ms, and 0.925 ms, respectively. At 500 repetitions, both configurations (with and without auditing) exhibit a standard deviation of approximately σ ≈ 2.15 ms, and their 95% confidence intervals overlap substantially.

In Scenario B, compared with the baseline, the auditing-enabled configuration reduces the average startup time by 0.219 ms at 10 repetitions. For 50 repetitions, the latency increases by 0.223 ms. For 100 and 500 repetitions, the latency decreases by 0.727 ms and 0.185 ms, respectively. At 500 repetitions, both configurations exhibit a standard deviation of approximately σ ≈ 1.98 ms, and the confidence interval width is significantly larger than the observed mean difference.

In Scenario C, compared with the baseline, the auditing mechanism reduces the startup time by 0.395 ms at 10 repetitions, increases it by 1.054 ms at 50 repetitions, and decreases it by 0.968 ms and 1.032 ms at 100 and 500 repetitions, respectively. At 500 repetitions, the standard deviation of both configurations is approximately σ ≈ 2.85 ms. Statistical analysis shows that the observed differences fall within one standard deviation (1σ) and therefore lack statistical significance.

In Scenario D, the auditing-enabled configuration reduces startup time by 0.748 ms and 0.837 ms at 10 and 50 repetitions, respectively; increases it by 1.968 ms at 100 repetitions; and decreases it by 0.640 ms at 500 repetitions. At 500 repetitions, both configurations have a standard deviation of approximately σ ≈ 2.30 ms, and the confidence intervals fully cover zero.

At 500 repetitions, the mean differences between the baseline and auditing-enabled configurations fall within approximately [−1.0 ms, +0.9 ms] across all four scenarios. Over all repetition counts, the differences alternate between positive and negative signs, and the largest observed difference is +1.968 ms (Scenario D at 100 repetitions), indicating no systematic performance bias. Furthermore, the absolute mean differences in all scenarios remain smaller than their corresponding standard deviations (|ΔMean| < σ), suggesting that the observed fluctuations are consistent with ordinary run-to-run noise. Based on these results, we conclude that the proposed security auditing mechanism introduces negligible overhead to the startup path, achieving the design goal of lightweight operation.
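The |ΔMean| < σ criterion can be checked directly against the 500-repetition figures quoted above. The sketch below only restates that criterion with the numbers from the text; it is not a significance test, and the names `RESULTS_500` and `within_one_sigma` are illustrative.

```python
from typing import Dict, Tuple

# (mean difference in ms, reported standard deviation in ms), taken from
# the 500-repetition results quoted in the text; positive = slower with
# auditing enabled.
RESULTS_500: Dict[str, Tuple[float, float]] = {
    "A": (+0.925, 2.15),
    "B": (-0.185, 1.98),
    "C": (-1.032, 2.85),
    "D": (-0.640, 2.30),
}


def within_one_sigma(results: Dict[str, Tuple[float, float]]) -> Dict[str, bool]:
    """Per scenario: is the absolute mean difference below sigma?"""
    return {name: abs(delta) < sigma for name, (delta, sigma) in results.items()}


if __name__ == "__main__":
    deltas = [delta for delta, _ in RESULTS_500.values()]
    print(within_one_sigma(RESULTS_500))   # True for A, B, C and D
    print(min(deltas), max(deltas))        # -1.032 0.925
```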

Figure 8 illustrates the impact of introducing the security auditing mechanism on overall system performance. In Scenario A, after adding the security auditing mechanism, the average CPU usage increased by only 0.77%, and the average memory overhead increased by 18.74 MB; in Scenario B, the average CPU usage increased by 0.35%, and the average memory overhead increased by 17.69 MB; in Scenario C, the average CPU usage increased by 0.39%, and the average memory overhead increased by 13.3 MB; in Scenario D, the average CPU usage increased by 0.2%, and the average memory overhead increased by 25.22 MB. In summary, introducing the security auditing mechanism had a relatively limited impact on system resource consumption across all scenarios.

4.3.2. Performance Impact of Adding the Security Auditing Mechanism Under a Fixed Chinese Text Input Size

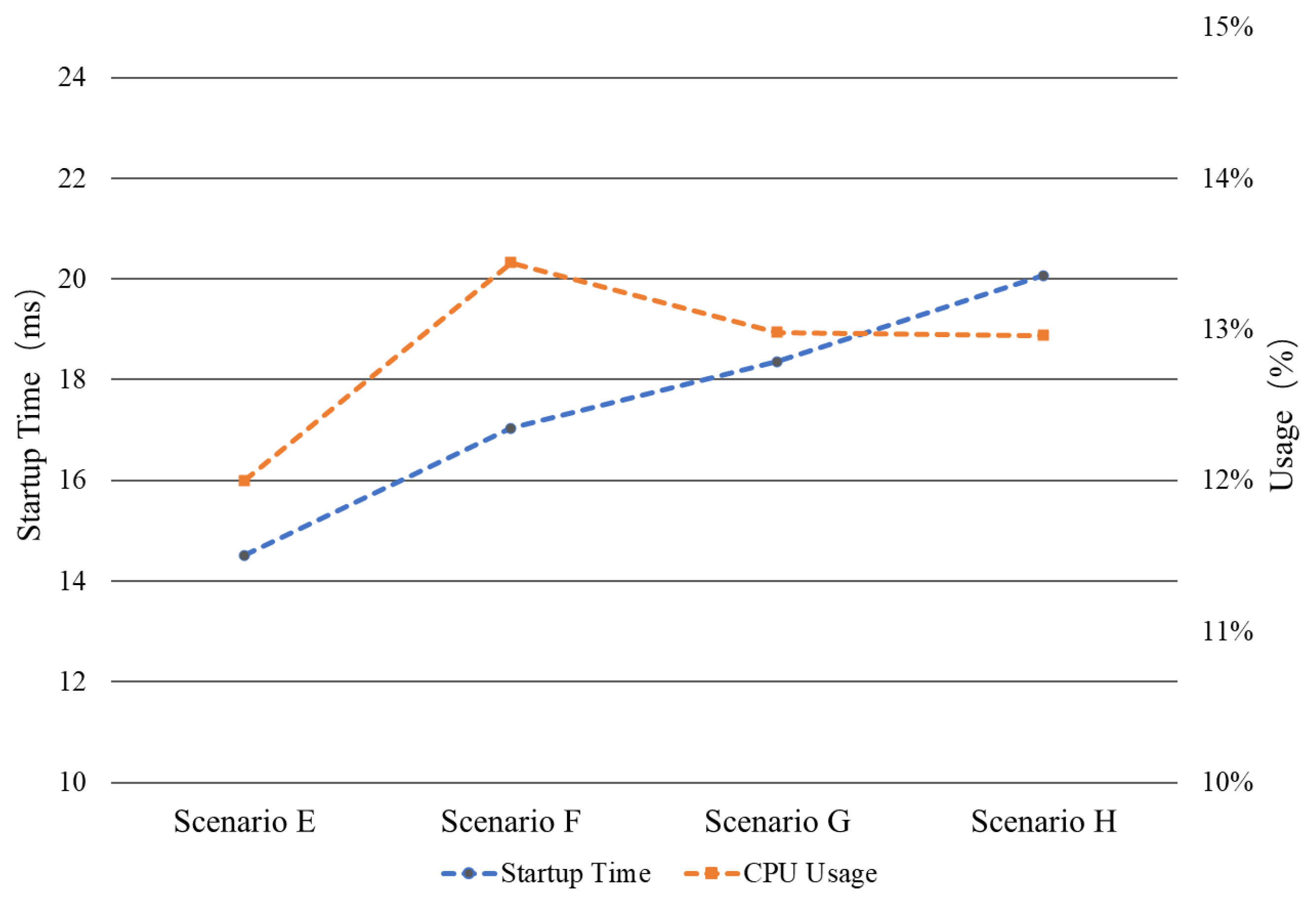

In Comparative Experiment II, four scenarios were set up using Chinese text input of the same size (10,000 characters) while progressively increasing the number of sensitive words, in order to evaluate the effect of sensitive word scale on application startup time and CPU usage. Scenarios E, F, G, and H corresponded to 10, 100, 500, and 1000 sensitive words, respectively. To improve the stability and reliability of the results, each scenario was repeated 500 times, and the average values of the collected data were analyzed. The experimental results are shown in Figure 9. The system CPU average usage remained relatively stable at approximately 13%. Meanwhile, the application’s average startup time exhibited a gradual increase with the growth of the sensitive word set. These results indicate that the security auditing mechanism maintains good stability when processing sensitive word sets of varying sizes, with minimal impact on system CPU load. Although the number of internal read and write operations within the auditing mechanism increases as the number of sensitive words grows, the associated performance overhead remains within an acceptable range.

4.3.3. Performance Impact of Adding the Security Auditing Mechanism Under Different Scenarios

In Comparative Experiment III, three scenarios were designed to evaluate the combined effect of text size and sensitive word count on application startup time in practical usage environments. Scenario I corresponds to an input text of 100,000 Chinese characters with 50 sensitive words, representing a large-text scenario with a relatively small sensitive-word set. Scenario J uses an input text of 10,000 characters with 500 sensitive words, representing a small-text but large–sensitive-word scenario. Scenario K combines an input text of 100,000 characters with 500 sensitive words, representing a composite scenario with both large text size and a large sensitive-word set.

To ensure the stability and reliability of the results, each scenario was tested with 500 repeated startups, and the results were averaged to minimize the impact of sporadic anomalies. The experimental results are shown in Figure 10. Compared with the baseline without the security mechanism, the average startup time increased by 1.043 ms and 0.811 ms in Scenarios I and J, respectively, after enabling the security auditing mechanism, while Scenario K showed a decrease of 1.073 ms in average startup time. The data in Figure 10 indicate that introducing the security auditing mechanism has a minimal impact on application startup time across different scenarios, with essentially no effect on practical operations. This result further supports the conclusion that the proposed scheme provides strong system security while maintaining stable performance and scenario adaptability.

4.3.4. Normal Text Rendering Latency Test

The previous experiments primarily evaluated the impact of the security auditing mechanism during the application startup phase. However, in real-world usage, users are more concerned with the smoothness of text browsing. To address this, we designed Comparison Experiment IV to simulate a typical “normal usage scenario” and assess the runtime overhead introduced by the auditing mechanism.

In this experiment, we measured the average rendering time for every 1000 continuously input characters and additionally simulated a multi-window concurrent rendering scenario. For both configurations—with and without the security auditing mechanism—we recorded the average rendering latency per 1000 characters, CPU load, and the average window-opening time under five concurrent windows.

The results are shown in Table 3. Compared with the baseline without the security auditing mechanism, the average rendering time per 1000 characters increased by 0.64 ms after enabling the mechanism, while CPU load increased by 0.15%. In the multi-window rendering scenario, enabling the mechanism introduced an additional 0.01 ms latency. These results indicate that the proposed security auditing mechanism remains highly efficient when processing continuous text streams and imposes no significant computational burden. The experiment demonstrates that the mechanism provides strong security guarantees while fully supporting multi-window and normal browsing workloads.

4.4. Comparison with Operating-System-Level Approaches

To highlight the uniqueness and advantages of the proposed scheme in data leakage prevention, this section compares our method with several mainstream Linux security mechanisms, including SELinux MLS, AppArmor, and eBPF. The comparison dimensions cover control granularity, protection mechanisms, and visual protection capability. The detailed comparison is presented in Table 4.

Traditional MAC mechanisms such as SELinux MLS and AppArmor primarily enforce access control policies that determine who can open a file, but they lack visibility control over what part of the content can be displayed. As a result, data visibility remains an “all-or-nothing” model. Although eBPF provides flexible programmability within the kernel, it is not well suited for handling complex user-space text rendering logic, and direct intervention in user-space data may introduce risks.

In contrast, the proposed method operates directly on the character rendering pipeline, enabling fine-grained, content-level “usable-but-invisible” control. It delivers higher stability and performance in large-scale Chinese text scenarios and effectively compensates for the lack of visual side-channel protection in traditional operating-system-level mechanisms. Therefore, it serves as a key enhancement to data leakage prevention in domestic Chinese Linux operating systems.

4.5. Security Analysis

Based on the threat model defined in Section 3.1 and the experimental results presented above, this section analyzes the security properties of the proposed auditing method. The analysis evaluates how the system defends against data leakage risks while ensuring operational integrity and availability.

- (1) Defense Against Visual Data Leakage: The core security feature of the proposed method is the interception of sensitive data at the rendering stage. By sanitizing sensitive characters before they are converted into visible glyphs, the system effectively mitigates “shoulder surfing” and unauthorized screen captures, ensuring that even if an attacker gains physical or visual access to the terminal, the specific content of state secrets or privacy data remains obscured.

- (2) Fine-Grained Content Control: Unlike traditional Access Control (DAC/MAC) mechanisms like SELinux, which operate at the file or process level, this scheme provides security at the semantic and character level. It can distinguish between sensitive and benign text within the same application window, allowing for precise blocking of confidential information without hindering legitimate application usage.

- (3) Robustness Against Obfuscation: The utilization of the improved AC automaton algorithm ensures that the system is resilient against common text obfuscation techniques. As demonstrated in the effectiveness analysis (Section 4.1), the system correctly identifies and masks sensitive words even in complex scenarios involving nested phrases, overlapping keywords, or repeated sequences, preventing attackers from bypassing filters through sentence restructuring.

- (4) Traceability and Non-Repudiation: The integration of a logging mechanism provides a strong audit trail. Every attempt to render sensitive information is recorded with timestamps and content details. This ensures non-repudiation, allowing security administrators to trace the source of potential leaks.

- (5) Resistance to Denial-of-Service (DoS) via Rendering: The performance analysis indicates a minimal overhead. This efficiency prevents the security mechanism itself from becoming a bottleneck. An attacker cannot easily exhaust system resources or freeze the desktop environment simply by flooding the terminal with text input, ensuring high system availability.

- (6) Prevention of False Positives: Security analysis confirms that the replacement strategy is strictly bounded by the sensitive keyword dictionary. Benign text adjacent to sensitive words remains unaffected (as shown in the functional tests). This ensures the integrity of normal business operations, preventing the “security audit mechanism” from acting as a denial-of-service vector for legitimate work.

- (7) Robust Handling of Multi-Byte Characters: The proposed AC automaton-based algorithm is specifically optimized for multi-byte character sets used in Chinese Linux operating systems. This ensures that sensitive information containing Chinese characters is accurately identified and masked, effectively addressing the complexity of character matching and segmentation that generic or non-localized auditing tools often fail to handle correctly.

- (8) Real-Time Interception Capability: The system operates synchronously with the text rendering pipeline. This real-time processing ensures there is no “time-of-check to time-of-use” gap where sensitive data could briefly flash on the screen before being masked, providing continuous protection.

- (9) Immunity to Application-Layer Evasion: Since the mechanism is embedded within the underlying OS rendering libraries, it functions independently of the upper-layer applications. A malicious or compromised text editor, browser, or chat client cannot bypass the auditing simply by ignoring standard API calls, as the rendering is handled by the OS.

- (10) Stability Under High Load: The formal model ensures that the state transitions in the detection algorithm are deterministic. Under high-load conditions with multiple windows rendering text simultaneously, the system maintains its security posture without race conditions that could lead to leakage, as evidenced by the negligible multi-window latency increase (+0.01 ms).

4.6. Ethical and Privacy Considerations

The proposed system-wide text rendering interception mechanism involves real-time analysis of terminal display content, which inevitably raises ethical concerns regarding user privacy and the boundaries of monitoring. To ensure responsible deployment and usage, the system design adheres to the following three principles.

First, purpose limitation and transparency. The sole intention of this technique is to prevent highly sensitive data—such as state secrets, commercial confidential information, or personally identifiable information (PII)—from being visually exposed in uncontrolled environments. It is not designed for comprehensive monitoring of user activities. In real-world deployments, organizations should follow transparency principles by clearly informing end users about the existence of the auditing mechanism, its monitoring scope, and the conditions under which it is activated, thus avoiding covert intrusion into user privacy.

Second, data minimization. The auditing mechanism enforces strict limitations on the granularity of collected information. The audit logs record only the triggered sensitive word entries, timestamps, and occurrence counts. They do not capture ordinary text, non-matching content, or any form of screen snapshots or contextual data. This design ensures that, even if audit logs are exposed, an attacker cannot reconstruct the user’s full operational context or private communication. Consequently, the system balances traceability with protection of user privacy.
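The data minimization principle can be sketched as a log record that has fields only for the three items listed above. This is a hedged illustration: the `AuditRecord` type, its field names, and the `build_audit_records` helper are assumptions made for this example and do not describe the actual log format of the system.

```python
from collections import Counter
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical audit entry: no surrounding text, no screenshots."""
    term: str       # the sensitive-word entry that was triggered
    timestamp: str  # ISO 8601 time of the detection
    count: int      # number of occurrences in this detection


def build_audit_records(
    matched_terms: Iterable[str],
    now: Optional[datetime] = None,
) -> List[AuditRecord]:
    """Aggregate matched terms into minimal records, discarding all context."""
    stamp = (now or datetime.now(timezone.utc)).isoformat()
    counts = Counter(matched_terms)
    return [
        AuditRecord(term=term, timestamp=stamp, count=count)
        for term, count in sorted(counts.items())
    ]


# Usage: only the matched terms are passed in, never the rendered text.
fixed_time = datetime(2024, 1, 1, tzinfo=timezone.utc)
records = build_audit_records(["secret", "secret", "密码"], now=fixed_time)
```

Since the helper receives only the list of matched terms, non-matching text cannot end up in the log by construction, which mirrors the design goal stated above.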

Third, access control and regulatory compliance. Since the configuration of the sensitive-word repository is managed by system administrators, there exists potential for privilege misuse. To mitigate this risk, we recommend role separation and auditing of administrative operations themselves. Moreover, the deployment of this technology must strictly comply with relevant data protection laws and regulations to ensure that the technical measures operate within legal boundaries.

5. Conclusions

Chinese Linux operating systems have achieved widespread deployment across multiple sectors in China. Nevertheless, they remain exposed to potential data leakage risks. To address this issue, this paper proposes a data leakage prevention security auditing mechanism suitable for general-purpose operating systems. By dynamically intercepting and analyzing application output at the visual information processing layer, the mechanism enables real-time monitoring and effective prevention of sensitive data leakage. Unlike traditional static policies or coarse-grained filtering methods, this scheme embeds auditing logic at the architectural level, offering strong transparency and extensibility. It achieves fine-grained security control without disrupting the normal user experience.

Furthermore, this paper introduces an improved Aho-Corasick (AC) automaton-based sensitive information recognition algorithm, which supports rapid matching of large-scale sensitive word sets and is suitable for multi-byte character sets such as Chinese. This enhancement significantly improves the detection efficiency and processing capability of the proposed scheme across diverse application scenarios.

Performance evaluations and comparative experiments conducted under multiple scenarios demonstrate that the proposed security auditing mechanism ensures data security while imposing minimal startup time overhead and maintaining strong operational performance and system compatibility. The experimental results indicate that the scheme meets practical security requirements and offers generality and customizability for multi-scenario deployment, which suggests good potential for practical application and wider adoption.