GMAFNet: Gated Mechanism Adaptive Fusion Network for 3D Semantic Segmentation of LiDAR Point Clouds

Abstract

1. Introduction

- We propose GMAFNet, a semantic segmentation network that fuses 2D image features with LiDAR point cloud features through a dynamic gated mechanism. The gating strengthens attention to important objects such as vehicles and pedestrians, reduces information redundancy during fusion, and improves overall segmentation performance.

- We adopt RepGhost as the 2D feature extraction network and PV-RCNN as the 3D feature extraction network to extract multi-scale features, providing richer and more accurate feature representations for the subsequent semantic segmentation task.

- Experiments on the SemanticKITTI and nuScenes datasets show that GMAFNet improves segmentation accuracy, robustness, and generalization compared with existing state-of-the-art methods, reaching 66.1% mIoU on SemanticKITTI and 77.8% mIoU on nuScenes.
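The gating idea in the first contribution can be illustrated with a generic gated fusion: a sigmoid gate computed from both modalities decides, channel by channel, how much of the image feature and how much of the LiDAR feature to keep. This is a minimal sketch under stated assumptions, not the GMAFNet fusion module: the image features are assumed to be already projected onto the LiDAR points, the gate is a single linear layer with hypothetical parameters `w` and `b`, and plain Python lists stand in for tensors.

```python
import math
import random


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def gated_fusion(f_img, f_pts, w, b):
    """Fuse one point's image feature and LiDAR feature with a learned gate.

    f_img, f_pts: length-C lists (the image feature is assumed to be already
    projected onto this LiDAR point).
    w: 2C x C weight matrix and b: length-C bias; both are hypothetical gate
    parameters that would be learned in practice.
    """
    concat = f_img + f_pts  # list concatenation, length 2C
    C = len(f_img)
    gate = [sigmoid(sum(concat[i] * w[i][c] for i in range(2 * C)) + b[c])
            for c in range(C)]  # each gate entry lies in (0, 1)
    # Per-channel convex combination: gate -> image branch, 1 - gate -> LiDAR branch
    fused = [g * a + (1.0 - g) * p for g, a, p in zip(gate, f_img, f_pts)]
    return fused, gate


random.seed(0)
C = 4
f_img = [random.gauss(0, 1) for _ in range(C)]
f_pts = [random.gauss(0, 1) for _ in range(C)]
w = [[random.gauss(0, 0.1) for _ in range(C)] for _ in range(2 * C)]
b = [0.0] * C
fused, gate = gated_fusion(f_img, f_pts, w, b)
print([round(v, 3) for v in fused])
```

Because the output is a per-channel convex combination, each fused value lies between the corresponding image and LiDAR values; with an all-zero gate the two modalities are simply averaged.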

2. Related Work

3. Method

3.1. Input Processing

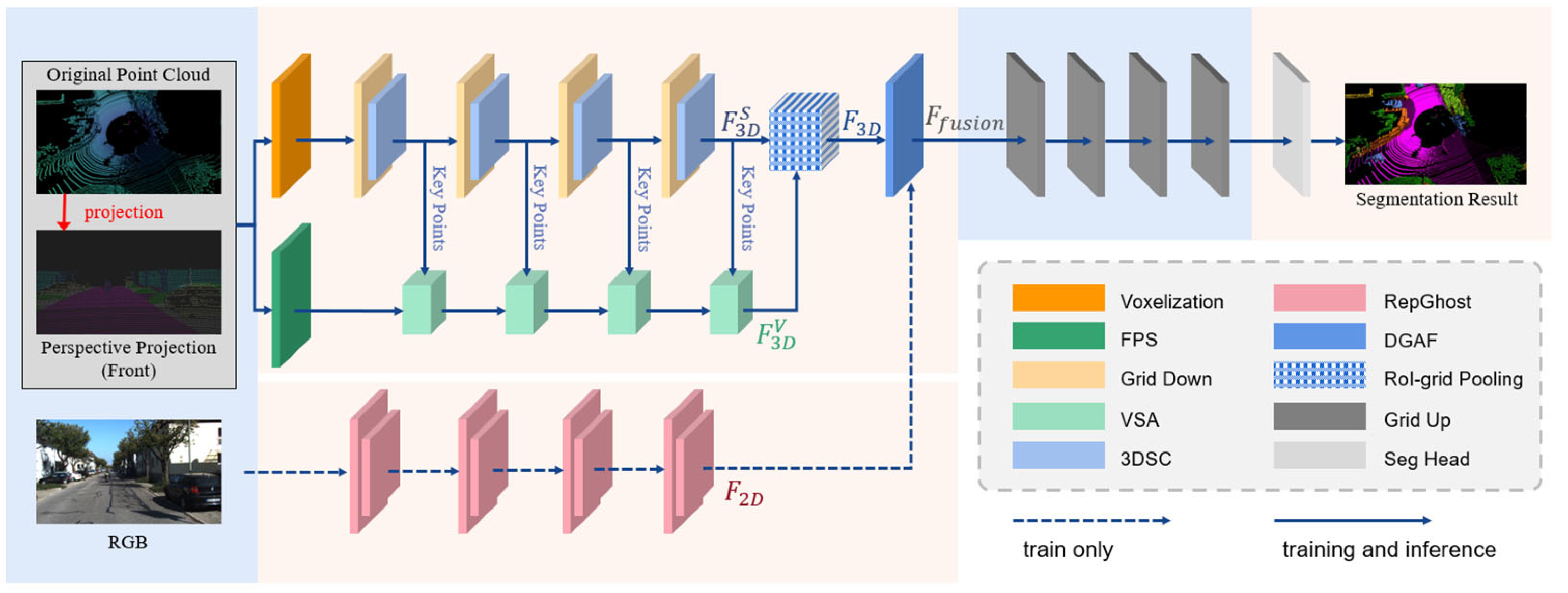

3.2. Network Overview

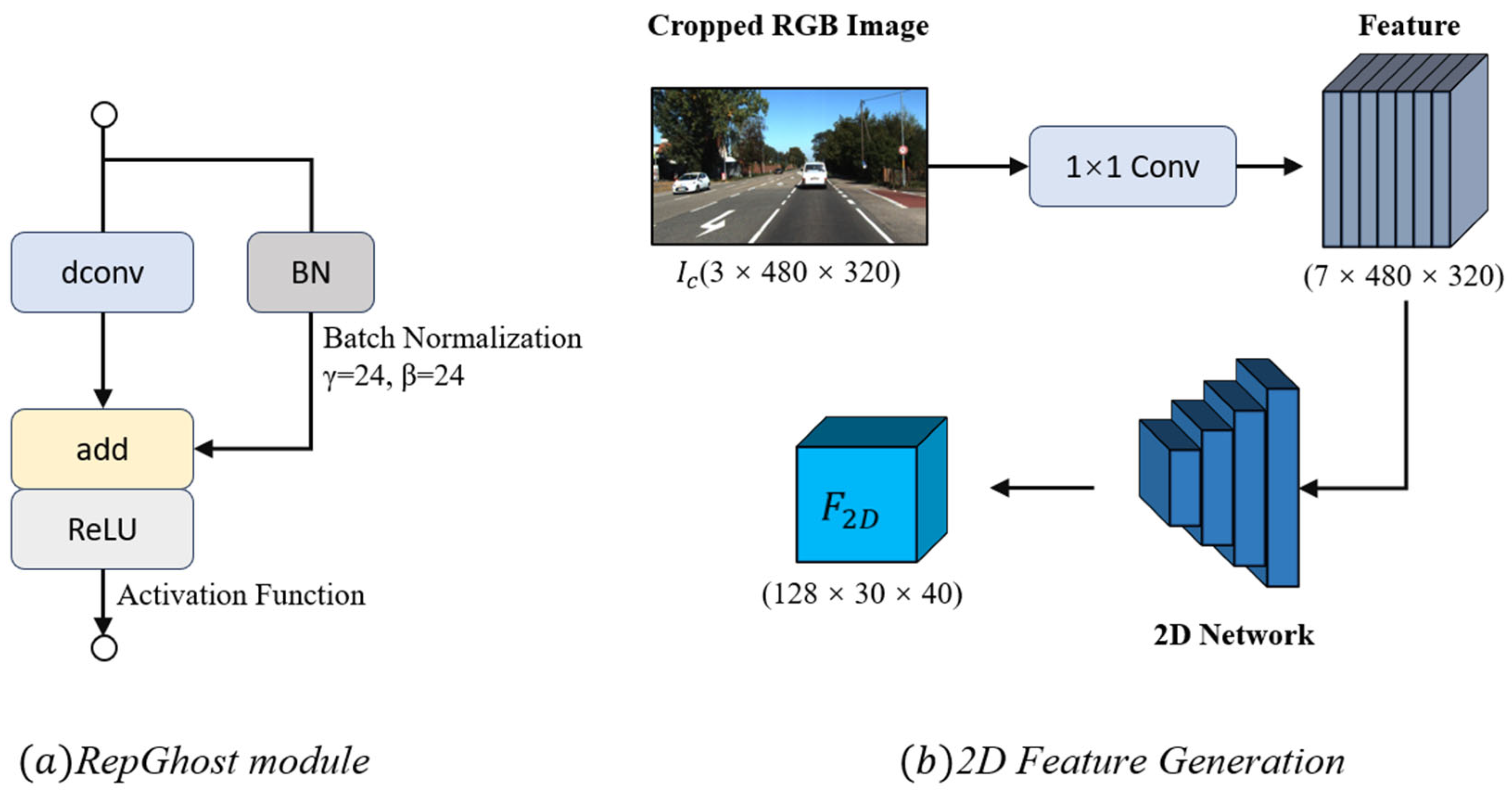

3.3. RepGhost Module
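RepGhost builds on structural re-parameterization: a multi-branch block used during training is folded into a single convolution for inference, so the extra branches add no inference cost. The sketch below is a simplified, hypothetical illustration of that folding step, assuming a 1×1 convolution (a plain C×C matrix) with batch normalization in parallel with a BN-only identity branch; it is not the RepGhost or GMAFNet code, and the helper names are illustrative.

```python
import math
import random

EPS = 1e-5  # BN epsilon (assumed value)


def bn_affine(gamma, beta, mean, var):
    """Express inference-time BN as a per-channel affine map: BN(y) = scale*y + shift."""
    scale = [g / math.sqrt(v + EPS) for g, v in zip(gamma, var)]
    shift = [bt - s * m for bt, s, m in zip(beta, scale, mean)]
    return scale, shift


def matvec(W, x):
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in W]


def train_time_block(x, W, bn_conv, bn_id):
    """Training-time form: BN(W x) + BN(x), a 1x1 conv branch plus a BN-only identity branch."""
    sa, ta = bn_affine(*bn_conv)
    sb, tb = bn_affine(*bn_id)
    y = matvec(W, x)
    return [sa[i] * y[i] + ta[i] + sb[i] * x[i] + tb[i] for i in range(len(x))]


def reparameterize(W, bn_conv, bn_id):
    """Fold both BNs and the identity branch into a single weight matrix and bias."""
    sa, ta = bn_affine(*bn_conv)
    sb, tb = bn_affine(*bn_id)
    C = len(W)
    W_fused = [[sa[i] * W[i][j] + (sb[i] if i == j else 0.0) for j in range(C)]
               for i in range(C)]
    b_fused = [ta[i] + tb[i] for i in range(C)]
    return W_fused, b_fused


random.seed(1)
C = 3


def rand_vec(lo, hi):
    return [random.uniform(lo, hi) for _ in range(C)]


W = [rand_vec(-1.0, 1.0) for _ in range(C)]
# BN parameters are (gamma, beta, running_mean, running_var)
bn_conv = (rand_vec(0.5, 1.5), rand_vec(-0.5, 0.5), rand_vec(-0.5, 0.5), rand_vec(0.5, 2.0))
bn_id = (rand_vec(0.5, 1.5), rand_vec(-0.5, 0.5), rand_vec(-0.5, 0.5), rand_vec(0.5, 2.0))
x = rand_vec(-1.0, 1.0)

W_fused, b_fused = reparameterize(W, bn_conv, bn_id)
y_train = train_time_block(x, W, bn_conv, bn_id)
y_infer = [v + bias for v, bias in zip(matvec(W_fused, x), b_fused)]
print(max(abs(a - b) for a, b in zip(y_train, y_infer)))
```

The two outputs agree up to floating-point error, which is the property that lets the extra branch be trained and then removed at inference time.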

3.4. PV-RCNN 3D Feature Extraction Framework

3.4.1. Voxel Set Abstraction Module
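In PV-RCNN, voxel set abstraction samples a small set of keypoints from the raw point cloud with farthest point sampling (FPS) and then aggregates the voxel features in a neighborhood around each keypoint. The sketch below illustrates only these two steps under simplifying assumptions: random 3D points carry generic per-point features standing in for the multi-scale voxel features, a single radius is used, and the learned shared MLP before pooling is omitted. It is not the GMAFNet or PV-RCNN code.

```python
import math
import random


def farthest_point_sampling(points, k):
    """Return k indices; each new index maximizes its distance to those already chosen."""
    selected = [0]  # start from the first point (a common, arbitrary choice)
    min_d = [math.dist(p, points[0]) for p in points]
    while len(selected) < k:
        idx = max(range(len(points)), key=lambda i: min_d[i])
        selected.append(idx)
        # distance from every point to its nearest selected keypoint
        min_d = [min(d, math.dist(p, points[idx])) for d, p in zip(min_d, points)]
    return selected


def ball_query_max_pool(center, points, feats, radius):
    """Channel-wise max over features of all points within `radius` of `center`.

    PointNet-style set abstraction would first pass each neighbor through a
    shared MLP (and use several radii); both are omitted here.
    """
    nbrs = [f for p, f in zip(points, feats) if math.dist(p, center) <= radius]
    if not nbrs:
        return [0.0] * len(feats[0])
    return [max(column) for column in zip(*nbrs)]


random.seed(2)
points = [(random.uniform(0, 10), random.uniform(0, 10), random.uniform(0, 2))
          for _ in range(200)]
feats = [[random.random() for _ in range(4)] for _ in points]  # stand-in features
keys = farthest_point_sampling(points, 8)
key_feats = [ball_query_max_pool(points[i], points, feats, radius=2.0) for i in keys]
print(keys, len(key_feats[0]))
```

FPS spreads the keypoints over the scene, so a few thousand keypoints can summarize a large point cloud without clustering in dense regions.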

3.4.2. RoI-Grid Pooling via Set Abstraction
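RoI-grid pooling in PV-RCNN starts by placing a uniform 6×6×6 grid of points inside each 3D proposal box; keypoint features within a radius of each grid point are then pooled with set abstraction. The sketch below covers only the grid generation, assuming a box parameterized by its center, size, and yaw about the vertical axis; the function name `roi_grid_points` is hypothetical, and the subsequent pooling step is omitted.

```python
import math


def roi_grid_points(box, grid_size=6):
    """Uniform grid_size^3 points inside a yaw-rotated 3D box.

    box = (cx, cy, cz, length, width, height, yaw); points sit at cell centers
    in the box frame and are then rotated about z and translated.
    """
    cx, cy, cz, l, w, h, yaw = box
    c, s = math.cos(yaw), math.sin(yaw)
    pts = []
    for i in range(grid_size):
        for j in range(grid_size):
            for k in range(grid_size):
                # local (box-frame) coordinates of the cell center
                x = ((i + 0.5) / grid_size - 0.5) * l
                y = ((j + 0.5) / grid_size - 0.5) * w
                z = ((k + 0.5) / grid_size - 0.5) * h
                # rotate about z by yaw, then translate to the box center
                pts.append((cx + c * x - s * y, cy + s * x + c * y, cz + z))
    return pts


box = (10.0, 5.0, 1.0, 4.0, 2.0, 1.5, math.pi / 6)
pts = roi_grid_points(box)
print(len(pts))  # 216
```

Using cell centers keeps every grid point strictly inside the box and makes the grid symmetric about the box center.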

3.5. Dynamic Gated Attention Fusion Module

4. Experimental Analysis

4.1. Datasets

4.2. Training and Evaluation

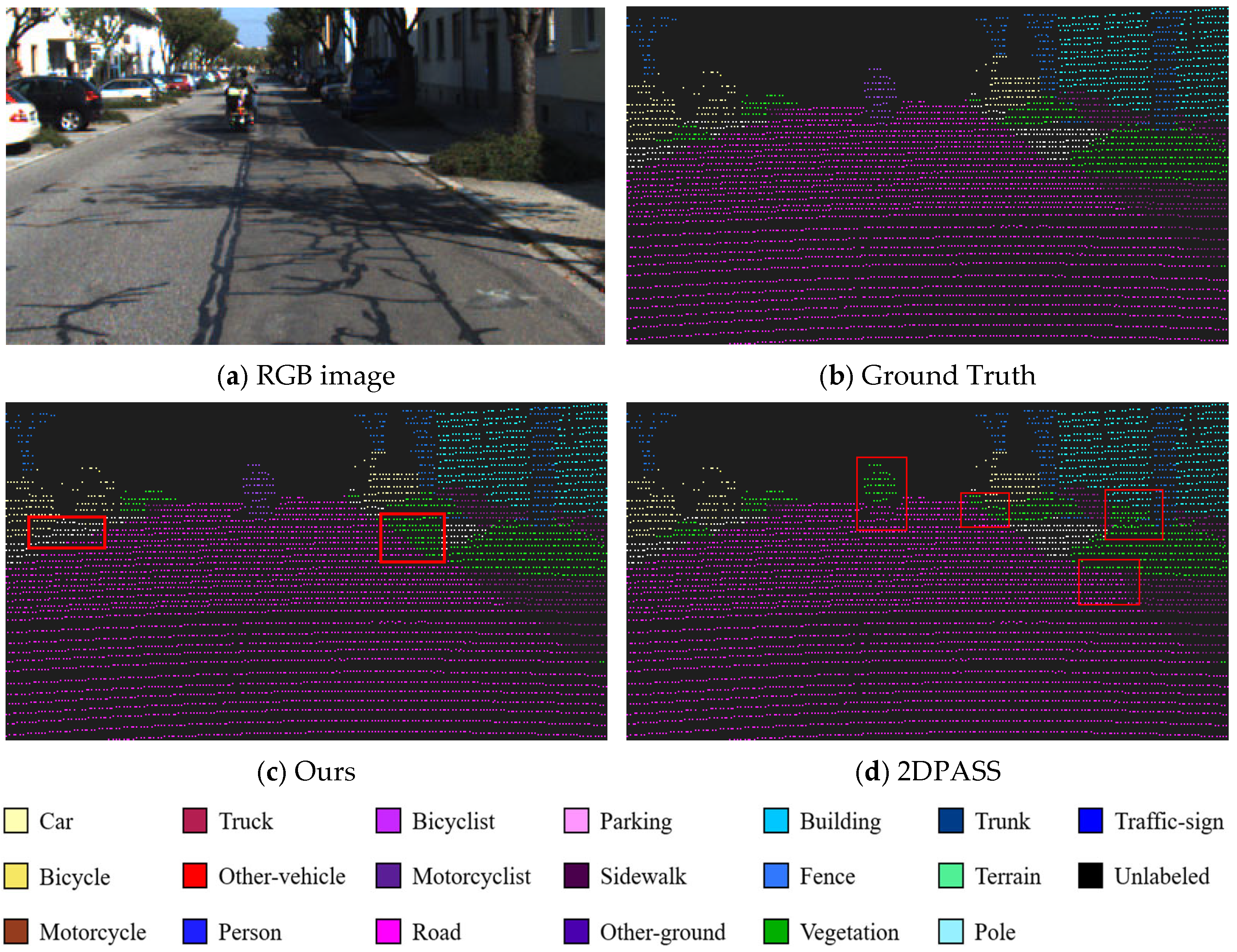

4.3. Comparative Results

4.4. Ablation Experiment

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Yan, X.; Gao, J.; Zheng, C.; Zheng, C.; Zhang, R.; Cui, S.; Li, Z. 2DPASS: 2D Priors Assisted Semantic Segmentation on LiDAR Point Clouds. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; Springer Nature: Cham, Switzerland, 2022; pp. 677–695.

- Cui, Y.; Chen, R.; Chu, W.; Chen, L.; Tian, D.; Li, Y.; Cao, D. Deep Learning for Image and Point Cloud Fusion in Autonomous Driving: A Review. IEEE Trans. Intell. Transp. Syst. 2022, 23, 722–739.

- Zhang, J.; Zhao, X.; Chen, Z.; Lu, Z. A Review of Deep Learning-Based Semantic Segmentation for Point Cloud. IEEE Access 2019, 7, 179118–179133.

- Rong, M.; Cui, H.; Shen, S. Efficient 3D Scene Semantic Segmentation via Active Learning on Rendered 2D Images. IEEE Trans. Image Process. 2023, 32, 3521–3535.

- Alokasi, H.; Ahmad, M.B. Deep Learning-Based Frameworks for Semantic Segmentation of Road Scenes. Electronics 2022, 11, 1884.

- Xu, X.; Liu, J.; Liu, H. Interactive Efficient Multi-Task Network for RGB-D Semantic Segmentation. Electronics 2023, 12, 3943.

- Kolhatkar, C.; Wagle, K. Review of SLAM Algorithms for Indoor Mobile Robot with LIDAR and RGB-D Camera Technology. In Innovations in Electrical and Electronic Engineering; Favorskaya, M.N., Mekhilef, S., Pandey, R.K., Singh, N., Eds.; Lecture Notes in Electrical Engineering; Springer: Singapore, 2021; Volume 661, pp. 397–409. ISBN 978-981-15-4691-4.

- Teso-Fz-Betoño, D.; Zulueta, E.; Sánchez-Chica, A.; Fernandez-Gamiz, U.; Saenz-Aguirre, A. Semantic Segmentation to Develop an Indoor Navigation System for an Autonomous Mobile Robot. Mathematics 2020, 8, 855.

- Xue, J.; Dai, Y.; Wang, Y.; Qu, A. Multiscale Feature Extraction Network for Real-Time Semantic Segmentation of Road Scenes on the Autonomous Robot. Int. J. Control Autom. Syst. 2023, 21, 1993–2003.

- Diab, A.; Kashef, R.; Shaker, A. Deep Learning for LiDAR Point Cloud Classification in Remote Sensing. Sensors 2022, 22, 7868.

- Liu, W.; Wang, H.; Qiao, Y.; Zhang, H.; Yang, J. DLAFNet: Direct LiDAR-Aerial Fusion Network for Semantic Segmentation of 2-D Aerial Image and 3-D LiDAR Point Cloud. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 18, 1864–1875.

- Hu, X.; Li, D. Research on a Single-Tree Point Cloud Segmentation Method Based on UAV Tilt Photography and Deep Learning Algorithm. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2020, 13, 4111–4120.

- Long, J.; Shelhamer, E.; Darrell, T. Fully Convolutional Networks for Semantic Segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; IEEE Computer Society: Silver Spring, MD, USA, 2015; pp. 3431–3440.

- Chen, L.-C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. DeepLab: Semantic Image Segmentation with Deep Convolutional Nets, Atrous Convolution, and Fully Connected CRFs. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 40, 834–848.

- Fang, K.; Xu, K.; Wu, Z.; Huang, T.; Yang, Y. Three-Dimensional Point Cloud Segmentation Algorithm Based on Depth Camera for Large Size Model Point Cloud Unsupervised Class Segmentation. Sensors 2023, 24, 112.

- Qi, C.R.; Su, H.; Mo, K.; Guibas, L.J. PointNet: Deep Learning on Point Sets for 3D Classification and Segmentation. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 77–85.

- Qi, C.R.; Yi, L.; Su, H.; Guibas, L.J. PointNet++: Deep Hierarchical Feature Learning on Point Sets in a Metric Space. In Proceedings of the 2017 Conference on Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Volume 30.

- El Madawi, K.; Rashed, H.; El Sallab, A.; Nasr, O.; Kamel, H.; Yogamani, S. RGB and LiDAR Fusion Based 3D Semantic Segmentation for Autonomous Driving. In Proceedings of the 2019 IEEE Intelligent Transportation Systems Conference (ITSC), Auckland, New Zealand, 27–30 October 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 7–12.

- Vora, S.; Lang, A.H.; Helou, B.; Beijbom, O. PointPainting: Sequential Fusion for 3D Object Detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; IEEE Computer Society: Washington, DC, USA, 2020; pp. 4604–4612.

- Meyer, G.P.; Charland, J.; Hegde, D.; Laddha, A.; Vallespi-Gonzalez, C. Sensor Fusion for Joint 3D Object Detection and Semantic Segmentation. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Long Beach, CA, USA, 16–17 June 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 1230–1237.

- Zhang, Z.; Liang, Z.; Zhang, M.; Zhao, X.; Li, H.; Yang, M.; Tan, W.; Pu, S. RangeLVDet: Boosting 3D Object Detection in LIDAR With Range Image and RGB Image. IEEE Sens. J. 2022, 22, 1391–1403.

- Song, H.; Cho, J.; Ha, J.; Park, J.; Jo, K. Panoptic-FusionNet: Camera-LiDAR Fusion-Based Point Cloud Panoptic Segmentation for Autonomous Driving. Expert Syst. Appl. 2024, 251, 123950.

- Duan, Y.; Meng, L.; Meng, Y.; Zhu, J.; Zhang, J.; Zhang, J.; Liu, X. MFSA-Net: Semantic Segmentation With Camera-LiDAR Cross-Attention Fusion Based on Fast Neighbor Feature Aggregation. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2024, 17, 19627–19639.

- Wu, Z.; Zhang, Y.; Lan, R.; Qiu, S.; Ran, S.; Liu, Y. APPFNet: Adaptive Point-Pixel Fusion Network for 3D Semantic Segmentation with Neighbor Feature Aggregation. Expert Syst. Appl. 2024, 251, 123990.

- Bi, Y.; Liu, P.; Zhang, T.; Shi, J.; Wang, C. Multi-Scale Sparse Convolution and Point Convolution Adaptive Fusion Point Cloud Semantic Segmentation Method. Sci. Rep. 2025, 15, 4372.

- Zhao, H.; Zhou, A. A Dual Projection Method for Semantic Segmentation of Large-Scale Point Clouds. Vis. Comput. 2025, 41, 9107–9126.

- Chen, C.; Guo, Z.; Zeng, H.; Xiong, P.; Dong, J. RepGhost: A Hardware-Efficient Ghost Module via Re-Parameterization. arXiv 2024, arXiv:2211.06088.

- Shi, S.; Guo, C.; Jiang, L.; Wang, Z.; Shi, J.; Wang, X.; Li, H. PV-RCNN: Point-Voxel Feature Set Abstraction for 3D Object Detection. Int. J. Comput. Vis. 2021, 131, 531–551.

- Behley, J.; Garbade, M.; Milioto, A.; Quenzel, J.; Behnke, S.; Stachniss, C.; Gall, J. SemanticKITTI: A Dataset for Semantic Scene Understanding of LiDAR Sequences. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 9296–9306.

- Caesar, H.; Bankiti, V.; Lang, A.H.; Vora, S.; Liong, V.E.; Xu, Q.; Krishnan, A.; Pan, Y.; Baldan, G.; Beijbom, O. nuScenes: A Multimodal Dataset for Autonomous Driving. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 11618–11628.

- Hu, Q.; Yang, B.; Xie, L.; Rosa, S.; Guo, Y.; Wang, Z.; Trigoni, N.; Markham, A. RandLA-Net: Efficient Semantic Segmentation of Large-Scale Point Clouds. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 11105–11114.

- Milioto, A.; Vizzo, I.; Behley, J.; Stachniss, C. RangeNet++: Fast and Accurate LiDAR Semantic Segmentation. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Macau, China, 3 November 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 4213–4220.

- Zhao, H.; Jiang, L.; Jia, J.; Torr, P.H.S.; Koltun, V. Point Transformer. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021.

- Zhang, Y.; Zhou, Z.; David, P.; Yue, X.; Xi, Z.; Gong, B.; Foroosh, H. PolarNet: An Improved Grid Representation for Online LiDAR Point Clouds Semantic Segmentation. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 9598–9607.

- Wu, X.; Lao, Y.; Jiang, L.; Liu, X.; Zhao, H. Point Transformer V2: Grouped Vector Attention and Partition-Based Pooling. arXiv 2022, arXiv:2210.05666.

- Zhuang, Z.; Li, R.; Jia, K.; Wang, Q.; Li, Y.; Tan, M. Perception-Aware Multi-Sensor Fusion for 3D LiDAR Semantic Segmentation. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 16260–16270.

- Xu, C.; Wu, B.; Wang, Z.; Zhan, W.; Vajda, P.; Keutzer, K.; Tomizuka, M. SqueezeSegV3: Spatially-Adaptive Convolution for Efficient Point-Cloud Segmentation. arXiv 2020, arXiv:2003.03653.

- Cortinhal, T.; Tzelepis, G.; Aksoy, E.E. SalsaNext: Fast, Uncertainty-Aware Semantic Segmentation of LiDAR Point Clouds for Autonomous Driving. In Proceedings of the 15th International Symposium on Visual Computing, San Diego, CA, USA, 5–7 October 2020.

- Tang, H.; Liu, Z.; Zhao, S.; Lin, Y.; Lin, J.; Wang, H.; Han, S. Searching Efficient 3D Architectures with Sparse Point-Voxel Convolution. In Proceedings of the European Conference on Computer Vision, Glasgow, UK, 23–28 August 2020; Springer Nature: Cham, Switzerland, 2020; pp. 685–702.

| Dataset | Split Details | Total Scans | Classes | Purpose |
|---|---|---|---|---|
| SemanticKITTI | 22 sequences | 43,551 | 19 | Training & testing |
| nuScenes | 1000 scenes | 40,062 | 16 | Generalization validation |

| Method | Car | Bicycle | Motorcycle | Truck | Other-Vehicle | Person | Bicyclist | Road | Parking | Sidewalk | Other-Ground | Building | Fence | Vegetation | Trunk | Terrain | Pole | Traffic-Sign | mIoU (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| RandLANet | 92 | 8 | 12.8 | 74.8 | 46.7 | 52.3 | 46 | 93.4 | 32.7 | 78.4 | 0.1 | 84 | 43.5 | 83.7 | 57.3 | 73.1 | 48 | 27.3 | 50 |
| RangeNet++ | 89.4 | 26.5 | 48.4 | 33.9 | 26.7 | 54.8 | 69.4 | 92.9 | 37 | 69.9 | 0 | 83.4 | 51 | 83.3 | 54 | 69.1 | 49.1 | 34 | 51.2 |
| PointTransformer | 94 | 0 | 31.1 | 73.8 | 43.5 | 52.7 | 43.2 | 94.9 | 31.6 | 75.2 | 0 | 84 | 41.5 | 82.7 | 54.3 | 69.1 | 46 | 29.3 | 49.8 |
| PolarNet | 90.9 | 41.1 | 48.1 | 54.8 | 51.7 | 67.5 | 54.3 | 94.3 | 43.5 | 78.5 | 0 | 80.3 | 52.9 | 83.5 | 55.4 | 71.1 | 47.8 | 32.8 | 55.2 |
| PTv2 | 94 | 37.1 | 32 | 74.1 | 43.9 | 53.1 | 44.2 | 93.9 | 36.6 | 77.2 | 0 | 85.1 | 45.5 | 86.7 | 52.3 | 71.6 | 46.1 | 31.1 | 52.9 |
| SqueezeSegV3 | 87.1 | 34.3 | 48.6 | 47.5 | 47.1 | 58.1 | 53.8 | 95.3 | 43.1 | 78.2 | 0.3 | 78.9 | 53.2 | 82.3 | 55.5 | 70.4 | 46.3 | 33.2 | 53.3 |
| PointPainting | 94.7 | 17.7 | 35 | 28.8 | 55 | 59.4 | 63.6 | 95.3 | 39.9 | 77.6 | 0.4 | 87.5 | 55.1 | 87.7 | 67 | 72.9 | 61.8 | 36.5 | 54.5 |
| RGBAL | 87.3 | 36.1 | 26.4 | 64.6 | 54.6 | 58.1 | 72.7 | 95.1 | 45.6 | 77.5 | 0.8 | 78.9 | 53.4 | 84.3 | 61.7 | 72.9 | 56.1 | 41.5 | 56.2 |
| SalsaNext | 90.5 | 44.6 | 49.6 | 86.3 | 54.6 | 74.0 | 81.4 | 93.4 | 40.6 | 69.1 | 0 | 84.6 | 53.0 | 83.6 | 64.3 | 64.2 | 54.4 | 39.8 | 59.4 |
| SPVNAS | 96.5 | 44.8 | 63.1 | 59.9 | 64.3 | 72.0 | 86.0 | 93.9 | 42.4 | 75.9 | 0 | 88.8 | 59.1 | 88.0 | 67.5 | 73.0 | 63.5 | 44.3 | 62.3 |
| PMF | 95.4 | 47.8 | 62.9 | 68.4 | 75.2 | 78.9 | 71.6 | 96.4 | 43.5 | 80.5 | 0.1 | 88.7 | 60.1 | 88.6 | 72.7 | 75.3 | 65.5 | 43 | 63.9 |
| 2DPASS | 97 | 49.1 | 65.2 | 67.2 | 74.3 | 79.5 | 73.4 | 97.2 | 45.3 | 79.5 | 0.9 | 88.6 | 61.2 | 89.6 | 72.9 | 74.3 | 65.4 | 43.1 | 64.4 |
| APPFNet | 97.2 | 51.9 | 75.2 | 69.2 | 73.1 | 79.3 | 84.7 | 95.2 | 43.3 | 75.6 | 1.6 | 87.9 | 60.1 | 88.9 | 70.5 | 73.5 | 69.1 | 45.2 | 65.8 |
| GMAFNet (Ours) | 95.4 | 52.6 | 78.2 | 67 | 62.8 | 82.5 | 90.1 | 96.4 | 42.7 | 77.4 | 1.1 | 86.2 | 60.3 | 85.7 | 71.3 | 72.6 | 65.5 | 54.8 | 66.1 |
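The per-class scores in the table above are intersection-over-union values, IoU = TP / (TP + FP + FN), and mIoU averages IoU over the evaluated classes. A minimal sketch computing both from a confusion matrix follows; the 3-class matrix is made-up toy data, the function names are illustrative, and the ignore label handled by benchmark evaluation scripts is left out.

```python
def per_class_iou(conf):
    """conf[t][p] = number of points with true class t predicted as class p."""
    n = len(conf)
    ious = []
    for c in range(n):
        tp = conf[c][c]
        fn = sum(conf[c]) - tp                       # true class c, predicted otherwise
        fp = sum(conf[t][c] for t in range(n)) - tp  # predicted c, truly another class
        denom = tp + fp + fn
        ious.append(tp / denom if denom else float("nan"))
    return ious


def mean_iou(ious):
    valid = [v for v in ious if v == v]  # drop NaN (class absent and never predicted)
    return sum(valid) / len(valid)


conf = [[50, 5, 0],
        [10, 30, 5],
        [0, 5, 45]]
ious = per_class_iou(conf)
print([round(v, 3) for v in ious], round(100 * mean_iou(ious), 1))
# [0.769, 0.545, 0.818] 71.1
```

Because every class counts equally in the mean, rare classes with low IoU pull mIoU down as much as common ones, which is why small-object columns such as Bicycle or Traffic-Sign matter for the final score.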

| Method | Bus | Car | Engineer-Vehicle | Motorcycle | Pedestrian | Traffic-Cone | Trailer | Truck | Driveable | Other-Flat | Sidewalk | Terrain | Construction | Vegetation | mIoU (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| RangeNet++ | 77.2 | 80.9 | 30.2 | 66.8 | 69.6 | 52.1 | 54.2 | 72.3 | 94.1 | 66.6 | 63.5 | 70.1 | 83.1 | 79.8 | 65.5 |
| PMF | 89.8 | 92.1 | 54 | 77.7 | 80.5 | 70.9 | 64.6 | 82.9 | 95.5 | 73.3 | 73.6 | 74.8 | 89.4 | 87.7 | 76.7 |
| 2DPASS | 91.3 | 93.8 | 51.3 | 78 | 78.9 | 64.9 | 62.1 | 84.4 | 96.8 | 71.6 | 76.4 | 75.4 | 90.5 | 87.4 | 76.5 |
| GMAFNet (Ours) | 96 | 93.7 | 58.1 | 83.9 | 81.1 | 60.4 | 73.6 | 88.9 | 96.5 | 71.9 | 75.4 | 75.1 | 88.6 | 87 | 77.8 |
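To see where GMAFNet's nuScenes gain over 2DPASS (77.8% vs. 76.5% mIoU) comes from, the per-class differences can be read off the table above. The short script below does only that arithmetic, with values copied from the table; the variable names are illustrative.

```python
# Per-class IoU (%) on nuScenes, copied from the table above.
classes = ["Bus", "Car", "Engineer-Vehicle", "Motorcycle", "Pedestrian",
           "Traffic-Cone", "Trailer", "Truck", "Driveable", "Other-Flat",
           "Sidewalk", "Terrain", "Construction", "Vegetation"]
twodpass = [91.3, 93.8, 51.3, 78, 78.9, 64.9, 62.1, 84.4, 96.8, 71.6, 76.4, 75.4, 90.5, 87.4]
gmafnet = [96, 93.7, 58.1, 83.9, 81.1, 60.4, 73.6, 88.9, 96.5, 71.9, 75.4, 75.1, 88.6, 87]

# Difference in IoU points, rounded to the table's one-decimal precision.
deltas = {c: round(g - t, 1) for c, g, t in zip(classes, gmafnet, twodpass)}
best = max(deltas, key=deltas.get)
worst = min(deltas, key=deltas.get)
improved = [c for c, d in deltas.items() if d > 0]
print(best, deltas[best], worst, deltas[worst], len(improved))
# Trailer 11.5 Traffic-Cone -4.5 7
```

On these figures, large gains on Trailer, Engineer-Vehicle, and Motorcycle are partly offset by a drop on Traffic-Cone.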

| Method | Params (M) | Inference Time (ms) | mIoU (%) | Training Time (h) |
|---|---|---|---|---|
| 2DPASS | 33.2 | 158 | 64.4 | 130 |
| GMAFNet (Ours) | 33.2 | 162 | 66.1 | 172 |
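The efficiency table can be summarized as relative overheads of GMAFNet over the 2DPASS baseline. The arithmetic below uses only the reported numbers; `rel_change_pct` is an illustrative helper.

```python
# Figures from the efficiency table above (2DPASS baseline vs. GMAFNet).
baseline = {"params_m": 33.2, "infer_ms": 158, "miou": 64.4, "train_h": 130}
gmafnet = {"params_m": 33.2, "infer_ms": 162, "miou": 66.1, "train_h": 172}


def rel_change_pct(new, old):
    """Relative change of `new` with respect to `old`, in percent."""
    return 100.0 * (new - old) / old


infer_pct = round(rel_change_pct(gmafnet["infer_ms"], baseline["infer_ms"]), 1)
train_pct = round(rel_change_pct(gmafnet["train_h"], baseline["train_h"]), 1)
miou_gain = round(gmafnet["miou"] - baseline["miou"], 1)  # absolute, in mIoU points
print(f"inference +{infer_pct}%, training +{train_pct}%, mIoU +{miou_gain}")
# inference +2.5%, training +32.3%, mIoU +1.7
```

Under these figures the parameter count is unchanged, inference is about 2.5% slower, and training takes roughly a third longer, in exchange for 1.7 additional mIoU points.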

| No. | RepGhost | VSA | RoI-Grid Pooling | DGAF | Training Time (h) |
|---|---|---|---|---|---|
| 1 | × | × | × | × | 130 |
| 2 | √ | × | × | × | 136 |
| 3 | √ | √ | × | × | 148 |
| 4 | √ | √ | √ | × | 158 |
| 5 | √ | √ | √ | √ | 172 |

| 2DPASS | RepGhost | VSA | RoI-Grid Pooling | DGAF | mIoU (%) |
|---|---|---|---|---|---|
| √ | × | × | × | × | 64.4 |
| √ | √ | × | × | × | 64.7 |
| √ | √ | √ | × | × | 65.2 |
| √ | √ | √ | √ | × | 65.4 |
| √ | √ | √ | √ | √ | 66.1 |
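Reading the two ablation tables together gives the marginal mIoU gain and training-time cost of each module. The sketch below assumes, as the identical row ordering suggests, that row k of the training-time table and row k of the mIoU table describe the same configuration, with row 1 being the 2DPASS baseline; the variable names are illustrative.

```python
# Rows 1-5 of the two ablation tables above; each row adds one module.
modules = ["RepGhost", "VSA", "RoI-Grid Pooling", "DGAF"]
miou = [64.4, 64.7, 65.2, 65.4, 66.1]  # mIoU (%)
train_h = [130, 136, 148, 158, 172]    # training time (h)

increments = {}
for name, m0, m1, t0, t1 in zip(modules, miou, miou[1:], train_h, train_h[1:]):
    d_miou = round(m1 - m0, 1)  # gain in mIoU points from adding this module
    d_h = t1 - t0               # extra training hours from adding this module
    increments[name] = (d_miou, d_h)
    print(f"{name}: +{d_miou} mIoU, +{d_h} h, {d_miou / d_h:.3f} mIoU per extra hour")
```

On these numbers, DGAF gives the largest single gain (+0.7 mIoU) at the largest training-time increase (+14 h), while RoI-grid pooling gives the smallest gain (+0.2 mIoU).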

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Kong, X.; Wu, W.; Wu, M.; Gui, Z.; Luo, Z.; Miao, C. GMAFNet: Gated Mechanism Adaptive Fusion Network for 3D Semantic Segmentation of LiDAR Point Clouds. Electronics 2025, 14, 4917. https://doi.org/10.3390/electronics14244917
