A Human-Adaptive Model for User Performance and Fatigue Evaluation during Gaze-Tracking Tasks

Abstract

1. Introduction

2. Overview of Related Works

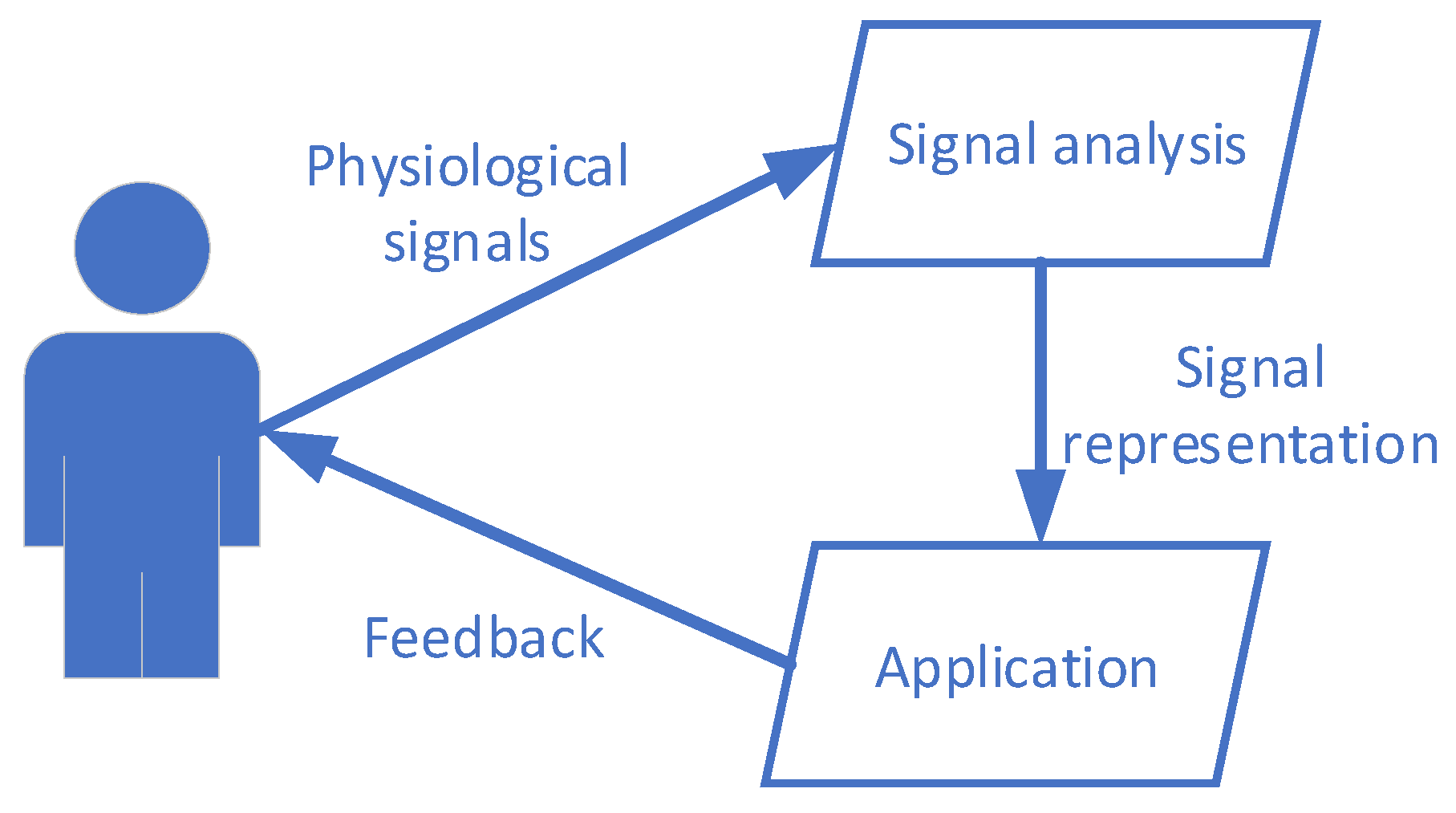

3. Foundation of Physiological Computing Systems

3.1. Biofeedback

3.2. Fatigue in HCI

3.3. Combination of Biocybernetic Loop and Performance Evaluation

4. Human-Assistive HCI Model

4.1. Structure of the Model

- The interaction layer establishes communication between the user and the system. It has two components: the input channel and feedback activity.

  - The input channel represents the input modality used to control the system.

  - Feedback activity represents the response of the system when fatigue effects appear.

- The intelligent layer is a central component of the model responsible for coordination of other components and decision-making processes.

- The performance evaluation procedure quantitatively assesses the performance of the user operating the system.

- The control layer represents application-specific actions to control the system.

- Interaction layer. This layer provides the means of communicating with and controlling the system. It is divided into two blocks: the input channel and feedback activity. The input channel captures the input modality used by the user. Feedback activity is a specific response of the system, triggered by the intelligent layer when it detects a decreased level of performance. The purpose of this activity is to help the user relax and recover from mental and/or physical fatigue. The feedback can be visual, auditory, tactile, or somatosensory.

- Intelligent layer. This layer is responsible for decision-making. Each time the user sends an input signal to the system, it must decide whether the signal should be converted to a control command or a recovery activity should be provided to the user. The signal features that represent fatigue depend on the type of input modality, and they are extracted in the intelligent layer. The extracted features are then sent to the performance evaluation procedure, which returns an estimate of the current performance level. Performance features can also be received from the control layer as application-specific metrics (e.g., accuracy of user control, input speed, information transfer rate). Based on this estimate, the layer decides whether the user should keep controlling the system or whether fatigue is too high and the recovery activity should be activated. The intelligent layer also classifies the signal to determine the specific control command of the application.

- Performance evaluation procedure. This procedure serves as a tool for quantitative assessment of user performance, which may depend on the fatigue and training of a specific user. It is application-specific and may range from sophisticated fatigue feature extraction and classification techniques to a threshold function that takes certain performance parameters as arguments. The output of this procedure is an estimate of the performance level. The initial performance model can be predefined and, if necessary, modified online.

- Control layer. The control layer determines specific actions which are used to control the application. The application area is wide; technically it encompasses almost any digital device that can receive at least one input modality of any human-suitable form and can provide at least one output modality of any human-suitable form.
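The per-input decision cycle of the intelligent layer described above can be sketched as follows. This is a minimal Python illustration, not the authors' implementation: the names `IntelligentLayer`, `Decision`, and `on_input`, the dictionary-based signal representation, and the single performance threshold are hypothetical assumptions. The feature extractor, performance evaluation procedure, and classifier are passed in as application-specific callables.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Decision:
    command: Optional[str]  # control command passed to the control layer, if any
    recovery: bool          # True when a recovery (feedback) activity should start


class IntelligentLayer:
    """Hypothetical sketch of the intelligent layer's per-input decision."""

    def __init__(self,
                 extract_features: Callable[[dict], dict],
                 evaluate_performance: Callable[[dict], float],
                 classify: Callable[[dict], str],
                 threshold: float) -> None:
        self.extract_features = extract_features          # modality-specific fatigue features
        self.evaluate_performance = evaluate_performance  # performance evaluation procedure
        self.classify = classify                          # signal -> control command
        self.threshold = threshold                        # assumed minimum acceptable performance

    def on_input(self, signal: dict, app_metrics: dict) -> Decision:
        # Combine fatigue features extracted from the input signal with
        # application-specific metrics reported by the control layer.
        features = {**self.extract_features(signal), **app_metrics}
        performance = self.evaluate_performance(features)
        if performance < self.threshold:
            # Performance too low: trigger the feedback (recovery) activity.
            return Decision(command=None, recovery=True)
        # Otherwise convert the signal into a control command.
        return Decision(command=self.classify(signal), recovery=False)


# Usage with toy callables (all values are illustrative).
layer = IntelligentLayer(
    extract_features=lambda s: {"fixation_ms": s["fixation_ms"]},
    evaluate_performance=lambda f: f["accuracy"],
    classify=lambda s: "LEFT" if s["x"] < 0.5 else "RIGHT",
    threshold=0.7,
)
print(layer.on_input({"x": 0.2, "fixation_ms": 250}, {"accuracy": 0.9}))
# Decision(command='LEFT', recovery=False)
print(layer.on_input({"x": 0.8, "fixation_ms": 420}, {"accuracy": 0.55}))
# Decision(command=None, recovery=True)
```

Keeping the three application-specific steps as injected callables mirrors the model's separation between the intelligent layer and the performance evaluation procedure: the decision logic stays the same while the modality-specific parts are swapped.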

4.2. Control in the Proposed Model

- Sensory feedback activity can be sensed by the user. The feedback can be visual, auditory, tactile, or somatosensory. The main purpose of any type of sensory feedback activity is to help the user regain performance. Typical examples of such feedback are a GUI change in response to an increased level of fatigue or the insertion of relaxing music during the control process.

- Hidden feedback activity cannot be directly sensed by the user. In this case, the user may notice an improvement in interface performance or other metrics but does not perceive the feedback activity itself. A typical example is the adjustment of control parameters (e.g., dwell time adjustments in gaze-tracking interfaces).

- In terms of how it is included in the control–feedback loop, feedback activity is either (i) interruptible or (ii) uninterruptible.

- Interruptible feedback activity interrupts the control process of the system. In this case, control of the system is disabled, and the user is instead stimulated by a relaxing activity.

- Uninterruptible feedback activity does not disable the control process; it runs concurrently with it. The adjustment of control parameters is also a good example of this kind of feedback.

- Classification of pre-processed data to determine control commands. This procedure is common in PCS. The complexity of the classification approach depends on the application. Physiological signal classification may require sophisticated pattern recognition methods (e.g., artificial neural networks or support vector machines (SVMs)). In some cases, feature extraction (e.g., PCA) must precede classification to reduce the dimensionality of the data. In simple solutions, input data can be transformed into control commands by applying a threshold function. Some interface types do not require classification at all (e.g., a gaze-tracking interface directly provides the point of gaze). Therefore, data classification is optional in this model.

- User performance feature extraction is an important process in HA-HCI. The extracted performance features are used as input arguments of the performance evaluation procedure; therefore, the intelligent layer and the performance evaluation procedure are strongly related. Since performance is usually affected by user fatigue and training, feature extraction searches the input signal for features related to user fatigue. To extract such features from input data, one may need to link a physiological measure to a specific fatigue state; Karran calls this process inference [55]. Another way to estimate performance features is to use predefined, application-specific performance metrics from the control layer. Metrics such as accuracy and input speed are common to many systems and are strongly related to fatigue, because they decrease in its presence. A combined approach, extracting fatigue features from both input data and performance metrics, may increase accuracy but is more complex.

- The decision of when to trigger feedback activity depends on the performance evaluation procedure, which returns a performance estimate to the intelligent layer. The estimate can be a numeric value or a predefined user state. When the estimate is a numeric value, a threshold or sigmoid function can be used to activate the trigger. When the indicator is a predefined user state, the intelligent layer should recognize this state and execute the necessary actions.
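The trigger step in the last item can be illustrated with two trigger functions applied to a numeric performance estimate built from accuracy and input speed. The sketch below rests on hypothetical choices that do not come from this study: the estimate (the mean of accuracy and input speed, each normalised by the user's own baseline), the threshold `theta`, the sigmoid `slope`, and the "selections per minute" unit.

```python
import math


def performance_estimate(accuracy: float, speed: float,
                         baseline_accuracy: float, baseline_speed: float) -> float:
    """Hypothetical estimate: mean of accuracy and input speed relative to
    the user's baseline (1.0 means baseline performance)."""
    return 0.5 * (accuracy / baseline_accuracy + speed / baseline_speed)


def threshold_trigger(p: float, theta: float) -> bool:
    """Binary trigger: feedback activity fires once p drops below theta."""
    return p < theta


def sigmoid_trigger(p: float, theta: float, slope: float = 10.0) -> float:
    """Soft trigger in (0, 1): equals 0.5 at p == theta and rises towards 1
    as p falls below theta; the caller activates feedback above some level."""
    return 1.0 / (1.0 + math.exp(slope * (p - theta)))


# Example: baseline of 90% accuracy at 12 selections/min; the user now
# reaches 72% accuracy at 9.6 selections/min (about 0.8 of baseline).
p = performance_estimate(0.72, 9.6, 0.90, 12.0)
print(round(p, 3))                               # 0.8
print(threshold_trigger(p, theta=0.85))          # True
print(round(sigmoid_trigger(p, theta=0.85), 3))  # 0.622
```

The threshold version switches abruptly, whereas the sigmoid version yields a graded activation that the intelligent layer could, for example, use to scale a hidden, uninterruptible adjustment before resorting to an interruptible one.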

4.3. Human Performance Modelling Using Impulse–Response Models

4.4. Gaze Performance Metrics

5. Design and Evaluation of PCS Application Based on HA-HCI Model

5.1. Architecture

- Multimodal interaction layer. It describes the means of communication and feedback. The user can use one of two input channels: (1) eye movements and (2) keyboard control. Eye movement control is implemented with a Tobii Eye Tracker 4C. The two input channels are used alternately, and switching between them is supervised by the intelligent layer. The input channel selector component switches the input channels and informs the user which input channel is currently active.

- Intelligent layer. It analyzes the input channel parameters and decides when to switch between input channels. Control using eye movements is more demanding and leads to more pronounced fatigue. However, it is the primary control mode of the presented game, so its prolonged use is of interest. The relation between eye movement parameters and fatigue is not yet well understood; therefore, it is the research focus of this study. Keyboard control is enabled when the eye movement parameters indicate fatigue; in effect, it gives the eyes a rest from eye-movement control. Keyboard control is terminated after a predefined period.

- DHO-based performance model. This model was chosen because it has demonstrated promising results in modelling the effects of training on physical performance capacity [67]. It is investigated further in the following sections.

- Eye-controlled game. The game is based on the well-known Pac-Man, a maze chase game. We implemented a version in which the player must move through the maze horizontally or vertically and collect pills. The player makes the desired eye movements by navigating the maze (Figure 6). Alternating vertical and horizontal eye movements are an important part of eye therapy; such movements have been reported to improve eyesight [80] and to help treat amblyopia [81] and eye movement disorders.
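The switching behaviour of the input channel selector described above can be sketched as a small state machine. This is a hypothetical illustration: the class name `InputChannelSelector`, the boolean fatigue flag assumed to come from the intelligent layer, and the 30 s keyboard period are assumptions, since the length of the keyboard period is not stated here.

```python
from enum import Enum


class Channel(Enum):
    GAZE = "eye movements"
    KEYBOARD = "keyboard"


class InputChannelSelector:
    """Hypothetical selector: gaze control is the primary channel; keyboard
    control replaces it when fatigue is indicated and ends after a fixed period."""

    def __init__(self, keyboard_period_s: float) -> None:
        self.keyboard_period_s = keyboard_period_s
        self.active = Channel.GAZE
        self._switched_at = 0.0

    def update(self, now_s: float, fatigue_detected: bool) -> Channel:
        if self.active is Channel.GAZE and fatigue_detected:
            # The intelligent layer reports fatigue: give the eyes a rest.
            self._switch(Channel.KEYBOARD, now_s)
        elif (self.active is Channel.KEYBOARD
              and now_s - self._switched_at >= self.keyboard_period_s):
            # The keyboard period has elapsed: return to gaze control.
            self._switch(Channel.GAZE, now_s)
        return self.active

    def _switch(self, channel: Channel, now_s: float) -> None:
        self.active = channel
        self._switched_at = now_s
        # Inform the user which input channel is currently active.
        print(f"[{now_s:.0f} s] Active input channel: {channel.value}")


selector = InputChannelSelector(keyboard_period_s=30.0)
selector.update(10.0, fatigue_detected=False)  # stays on gaze
selector.update(60.0, fatigue_detected=True)   # prints "[60 s] Active input channel: keyboard"
selector.update(75.0, fatigue_detected=False)  # still keyboard (15 s elapsed)
selector.update(90.0, fatigue_detected=False)  # prints "[90 s] Active input channel: eye movements"
```

While keyboard control is active, further fatigue reports are ignored until the period ends, which matches the description of keyboard control as a time-limited rest from eye-movement control.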

5.2. Subjects and Setup

6. Results

7. Discussion

7.1. Discussion on Performance in Assistive Systems

7.2. Limitations

7.3. Recommendations

- Analyze requirements for user performance introduced by the specific domain of application and the developed system.

- Analyze the communication modalities used by the system and any user-related effects on performance, such as those introduced by fatigue.

- Adopt the Banister or DHO model presented in this study for the HCI of the developed system. The choice of analytical performance model is not limited to the models presented here.

- Implement a biocybernetic feedback loop so that the HCI characteristics adapt in real time to the user's performance while working with the system.

- Evaluate the usability of the interface and test it with users in a real-world environment.
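The Banister model named in the recommendations is a fitness–fatigue impulse–response model. Each unit of load produces a positive (fitness) response and a negative (fatigue) response, both decaying exponentially with their own time constants. The Python sketch below evaluates the discrete form of this model. Treating, for example, gaze-control time per session as the load is an assumption made here for illustration, and all parameter values are hypothetical. In practice k1, k2, tau1, and tau2 would be fitted to measured performance. The DHO alternative is not shown.

```python
import math
from typing import List, Sequence


def banister_performance(loads: Sequence[float], p0: float,
                         k1: float, tau1: float,
                         k2: float, tau2: float) -> List[float]:
    """Discrete Banister fitness-fatigue model:
    p(t) = p0 + k1 * sum_{s<t} w(s) exp(-(t-s)/tau1)
              - k2 * sum_{s<t} w(s) exp(-(t-s)/tau2),
    evaluated for t = 0 .. len(loads) - 1, where w = loads."""
    performance = []
    for t in range(len(loads)):
        fitness = sum(loads[s] * math.exp(-(t - s) / tau1) for s in range(t))
        fatigue = sum(loads[s] * math.exp(-(t - s) / tau2) for s in range(t))
        performance.append(p0 + k1 * fitness - k2 * fatigue)
    return performance


# One load of 10 units at t = 0, then rest. Fatigue (k2 > k1) dominates at
# first but decays faster (tau2 < tau1), so performance dips, then rebounds
# above the initial level p0 = 100.
p = banister_performance([10.0] + [0.0] * 59, p0=100.0,
                         k1=1.0, tau1=40.0, k2=2.0, tau2=10.0)
print(round(p[1], 1), round(p[59], 1))  # 91.7 102.2
```

The dip-then-rebound shape is what makes this family of models attractive for deciding when a recovery activity should end, not only when it should start.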

7.4. Theoretical Implications

- Theoretical foundations: The study has helped to establish a theoretical foundation for understanding human fatigue recognition. It has identified key factors that influence fatigue, such as sleep deprivation, circadian rhythm disruption, and workload, and has shown how these factors can affect cognitive and physical performance. This study has also demonstrated that fatigue can have both subjective and objective components, with subjective experiences of fatigue often not correlating with objective performance measures.

- Multidisciplinary perspective: The study has drawn on insights from multiple disciplines, including psychology, neuroscience, physiology, and engineering. This multidisciplinary approach has helped to build a more comprehensive understanding of fatigue and has led to the development of more effective methods for detecting and measuring fatigue.

- Technology development: The study has contributed to the development of new technologies for detecting and monitoring fatigue. For example, wearable sensors and mobile apps have been developed that can track physiological indicators of fatigue, such as heart rate variability and skin conductance. These technologies have the potential to improve safety in high-risk industries, such as transportation and healthcare, by providing real-time feedback to workers and alerting them when they are at risk of fatigue-related errors.

7.5. Managerial and Practical Implications

- Occupational safety: The findings of the study have significant implications for occupational safety. Human fatigue is a critical factor in many workplace accidents and incidents. With an accurate and reliable model for recognizing human fatigue, managers can take proactive measures to prevent accidents and ensure the safety of workers.

- Workforce management: The study provides a valuable tool for managers to monitor employee fatigue levels and make informed decisions about scheduling, workload, and resource allocation. This can improve productivity, reduce absenteeism, and enhance employee well-being and job satisfaction.

- Training and education: The study highlights the importance of educating employees and managers about the risks of fatigue and about how to recognize and manage it. By providing training on this topic, organizations can promote a culture of safety and well-being.

- Human resources management: The study underscores the need for human resource managers to consider fatigue when designing job roles, selecting candidates, and managing performance. By taking fatigue into account, organizations can ensure that employees are appropriately matched to their roles and have the necessary support and resources to manage fatigue effectively.

- Healthcare: The study has implications for healthcare providers who diagnose and treat fatigue-related conditions. With a better understanding of the physiological and behavioral signs of fatigue, healthcare providers can develop more effective interventions to manage fatigue and its associated health risks.

8. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Jacucci, G.; Fairclough, S.; Solovey, E.T. Physiological Computing. Computer 2015, 48, 12–16.

- Allen, M. (Ed.) The SAGE Encyclopedia of Communication Research Methods; SAGE Publications, Inc.: Thousand Oaks, CA, USA, 2017.

- Kumari, A.; Edla, D.R. A Study on Brain–Computer Interface: Methods and Applications. SN Comput. Sci. 2023, 4, 98.

- Berman, J.; Hinson, R.; Lee, I.-C.; Huang, H. Harnessing Machine Learning and Physiological Knowledge for a Novel EMG-Based Neural-Machine Interface. IEEE Trans. Biomed. Eng. 2022, 1–12.

- Karaman, Ç.Ç.; Sezgin, T.M. Gaze-based predictive user interfaces: Visualizing user intentions in the presence of uncertainty. Int. J. Hum. Comput. Stud. 2018, 111, 78–91.

- Anitha, T.; Shanthi, N.; Sathiyasheelan, R.; Emayavaramban, G.; Rajendran, T. Brain-Computer Interface for Persons with Motor Disabilities—A Review. Open Biomed. Eng. J. 2019, 13, 127–133.

- Floreani, E.D.; Rowley, D.; Kelly, D.; Kinney-Lang, E.; Kirton, A. On the feasibility of simple brain-computer interface systems for enabling children with severe physical disabilities to explore independent movement. Front. Hum. Neurosci. 2022, 16, 1007199.

- Peters, B.; Eddy, B.; Galvin-McLaughlin, D.; Betz, G.; Oken, B.; Fried-Oken, M. A systematic review of research on augmentative and alternative communication brain-computer interface systems for individuals with disabilities. Front. Hum. Neurosci. 2022, 16, 952380.

- Ahn, M.; Lee, M.; Choi, J.; Jun, S.C. A Review of Brain-Computer Interface Games and an Opinion Survey from Researchers, Developers and Users. Sensors 2014, 14, 14601–14633.

- Antunes, J.; Santana, P. A Study on the Use of Eye Tracking to Adapt Gameplay and Procedural Content Generation in First-Person Shooter Games. Multimodal Technol. Interact. 2018, 2, 23.

- Kasprowski, P. Eye Tracking Hardware: Past to Present, and Beyond. In Eye Tracking: Background, Methods, and Applications; Springer: New York, NY, USA, 2022; pp. 31–48.

- Poole, A.; Ball, L.J. Eye tracking in HCI and usability research. In Encyclopedia of Human Computer Interaction; IGI Global: Hershey, PA, USA, 2006; pp. 211–219.

- Maskeliunas, R.; Damasevicius, R.; Martisius, I.; Vasiljevas, M. Consumer grade EEG devices: Are they usable for control tasks? PeerJ 2016, 4, e1746.

- Santhanaraj, K.K.; Ramya, M.M.; Dinakaran, D. A survey of assistive robots and systems for elderly care. J. Enabling Technol. 2021, 15, 66–72.

- Liu, J.; Chi, J.; Yang, H.; Yin, X. In the eye of the beholder: A survey of gaze tracking techniques. Pattern Recognit. 2022, 132, 108944.

- Frutos-Pascual, M.; Garcia-Zapirain, B. Assessing Visual Attention Using Eye Tracking Sensors in Intelligent Cognitive Therapies Based on Serious Games. Sensors 2015, 15, 11092–11117.

- Krishnan, S.; Amudha, J.; Tejwani, S. Intelligent-based decision support system for diagnosing glaucoma in primary eyecare centers using eye tracker. J. Intell. Fuzzy Syst. 2021, 41, 5235–5242.

- Grgič, R.G.; Crespi, S.A.; De’Sperati, C. Assessing Self-Awareness through Gaze Agency. PLoS ONE 2016, 11, e0164682.

- Johansen, S.A.; San Agustin, J.; Skovsgaard, H.; Hansen, J.P.; Tall, M. Low cost vs. high-end eye tracking for usability testing. In CHI ‘11 Extended Abstracts on Human Factors in Computing Systems (CHI EA ‘11); ACM: New York, NY, USA, 2011; pp. 1177–1182.

- Choi, D.Y.; Hahn, M.H.; Lee, K.C. A Comparison of Buying Decision Patterns by Product Involvement: An Eye-Tracking Approach. In Proceedings of the Intelligent Information and Database Systems: 4th Asian Conference, ACIIDS 2012, Kaohsiung, Taiwan, 19–21 March 2012; Volume 7198, pp. 37–46.

- Kamil, M.H.F.M.; Jaafar, A. Usability of package and label designs using eye tracking. In Proceedings of the 2011 IEEE Conference on Open System, Langkawi, Malaysia, 25–28 September 2011; pp. 316–321.

- Băiașu, A.-M.; Dumitrescu, C. Contributions to Driver Fatigue Detection Based on Eye-tracking. Int. J. Circuits Syst. Signal Process. 2021, 15, 1–7.

- Holmqvist, K.; Holsanova, J.; Barthelson, M.; Lundqvist, D. Reading or scanning? A study of newspaper and net paper reading. In The Mind’s Eye: Cognitive and Applied Aspects of Eye Movement Research; Hyönä, J.R., Deubel, H., Eds.; Elsevier: Amsterdam, The Netherlands, 2003; pp. 657–670.

- Ninassi, A.; Le Meur, O.; Le Callet, P.; Barba, D.; Tirel, A. Task Impact on the Visual Attention in Subjective Image Quality Assessment. In Proceedings of the 14th European Signal Processing Conference, Florence, Italy, 4–8 September 2006.

- Kaklauskas, A.; Vlasenko, A.; Raudonis, V.; Zavadskas, E.K.; Gudauskas, R.; Seniut, M.; Juozapaitis, I.; Jackute, L.; Kanapeckiene, S.; Rimkuviene, G. Kaklauskas: Student progress assessment with the help of an intelligent pupil analysis system. Eng. Appl. Artif. Intell. 2013, 26, 35–50.

- Vasiljevas, M.; Gedminas, T.; Ševčenko, A.; Jančiukas, M.; Blažauskas, T.; Damaševičius, R. Modelling eye fatigue in gaze spelling task. In Proceedings of the 12th International Conference on Intelligent Computer Communication and Processing (ICCP), Cluj-Napoca, Romania, 8–10 September 2016; pp. 95–102.

- Hooge, I.T.C.; Niehorster, D.C.; Nyström, M.; Andersson, R.; Hessels, R.S. Fixation classification: How to merge and select fixation candidates. Behav. Res. Methods 2022, 54, 2765–2776.

- Altemir, I.; Alejandre, A.; Fanlo-Zarazaga, A.; Ortín, M.; Pérez, T.; Masiá, B.; Pueyo, V. Evaluation of Fixational Behavior throughout Life. Brain Sci. 2022, 12, 19.

- Masedu, F.; Vagnetti, R.; Pino, M.C.; Valenti, M.; Mazza, M. Comparison of Visual Fixation Trajectories in Toddlers with Autism Spectrum Disorder and Typical Development: A Markov Chain Model. Brain Sci. 2021, 12, 10.

- Shah, S.M.; Sun, Z.; Zaman, K.; Hussain, A.; Shoaib, M.; Pei, L. A Driver Gaze Estimation Method Based on Deep Learning. Sensors 2022, 22, 3959.

- Yuan, G.; Wang, Y.; Yan, H.; Fu, X. Self-calibrated driver gaze estimation via gaze pattern learning. Knowl.-Based Syst. 2021, 235, 107630.

- Ledezma, A.; Zamora, V.; Sipele, Ó.; Sesmero, M.; Sanchis, A. Implementing a Gaze Tracking Algorithm for Improving Advanced Driver Assistance Systems. Electronics 2021, 10, 1480.

- Khan, M.Q.; Lee, S. Gaze and Eye Tracking: Techniques and Applications in ADAS. Sensors 2019, 19, 5540.

- Naqvi, R.A.; Arsalan, M.; Batchuluun, G.; Yoon, H.S.; Park, K.R. Deep Learning-Based Gaze Detection System for Automobile Drivers Using a NIR Camera Sensor. Sensors 2018, 18, 456.

- Lee, K.W.; Yoon, H.S.; Song, J.M.; Park, K.R. Convolutional Neural Network-Based Classification of Driver’s Emotion during Aggressive and Smooth Driving Using Multi-Modal Camera Sensors. Sensors 2018, 18, 957.

- Naqvi, R.A.; Arsalan, M.; Rehman, A.; Rehman, A.U.; Loh, W.-K.; Paul, A. Deep Learning-Based Drivers Emotion Classification System in Time Series Data for Remote Applications. Remote Sens. 2020, 12, 587.

- Pageaux, B.; Lepers, R. Fatigue Induced by Physical and Mental Exertion Increases Perception of Effort and Impairs Subsequent Endurance Performance. Front. Physiol. 2016, 7, 587.

- Banister, E.W.; Calvert, T.W.; Savage, M.V.; Bach, T. A systems model of training for athletic performance. Aust. J. Sports Med. 1975, 7, 57–61.

- Pershin, I.; Kholiavchenko, M.; Maksudov, B.; Mustafaev, T.; Ibragimova, D.; Ibragimov, B. Artificial Intelligence for the Analysis of Workload-Related Changes in Radiologists’ Gaze Patterns. IEEE J. Biomed. Health Inform. 2022, 26, 4541–4550.

- Li, F.; Chen, C.-H.; Lee, C.-H.; Feng, S. Artificial intelligence-enabled non-intrusive vigilance assessment approach to reducing traffic controller’s human errors. Knowl.-Based Syst. 2022, 239, 108047.

- Lin, H.-J.; Chou, L.-W.; Chang, K.-M.; Wang, J.-F.; Chen, S.-H.; Hendradi, R. Visual Fatigue Estimation by Eye Tracker with Regression Analysis. J. Sens. 2022, 2022, 7642777.

- Bafna-Rührer, T.; Bækgaard, P.; Hansen, J.P. Smooth-pursuit performance during eye-typing from memory indicates mental fatigue. J. Eye Mov. Res. 2022, 15.

- Tseng, V.W.-S.; Valliappan, N.; Ramachandran, V.; Choudhury, T.; Navalpakkam, V. Digital biomarker of mental fatigue. npj Digit. Med. 2021, 4, 47.

- Lohr, D.J.; Abdulin, E.; Komogortsev, O.V. Detecting the onset of eye fatigue in a live framework. In Proceedings of the Ninth Biennial ACM Symposium on Eye Tracking Research Applications, Charleston, SC, USA, 14–17 March 2016; pp. 315–316.

- Craye, C.; Rashwan, A.; Kamel, M.S.; Karray, F. A multi-modal driver fatigue and distraction assessment system. Int. J. Intell. Transp. Syst. Res. 2016, 14, 173–194.

- Sommer, D.; Golz, M. Evaluation of PERCLOS based current fatigue monitoring technologies. In Proceedings of the 2010 Annual International Conference of the IEEE Engineering in Medicine and Biology, Buenos Aires, Argentina, 31 August–4 September 2010; Volume 2010, pp. 4456–4459.

- Suzuki, Y.; Yamamoto, S.; Kobayashi, D. Fatigue sensation of Eye Gaze Tracking System users. In New Ergonomics Perspective: Selected papers of the 10th Pan-Pacific Conference on Ergonomics; CRC Press: Boca Raton, FL, USA, 2015; pp. 279–282.

- Allanson, J.; Fairclough, S.H. A research agenda for physiological computing. Interact. Comput. 2004, 16, 857–878.

- Dillon, A.; Kelly, M.; Robertson, I.H.; Robertson, D.A. Smartphone Applications Utilizing Biofeedback Can Aid Stress Reduction. Front. Psychol. 2016, 7, 832.

- Fairclough, S.H. Physiological Computing and Intelligent Adaptation. In Emotions and Affect in Human Factors and Human-Computer Interaction; Academic Press: Cambridge, MA, USA, 2017; pp. 539–556.

- Cambria, E. Affective Computing and Sentiment Analysis. In IEEE Intelligent Systems; IEEE: Piscataway, NJ, USA, 2016; Volume 31, pp. 102–107.

- Ewing, K.C.; Fairclough, S.H.; Gilleade, K. Evaluation of an Adaptive Game that Uses EEG Measures Validated during the Design Process as Inputs to a Biocybernetic Loop. Front. Hum. Neurosci. 2016, 10, 223.

- Labonte-Lemoyne, E.; Courtemanche, F.; Louis, V.; Fredette, M.; Sénécal, S.; Léger, P.-M. Dynamic Threshold Selection for a Biocybernetic Loop in an Adaptive Video Game Context. Front. Hum. Neurosci. 2018, 12, 282.

- Conrad, C.D.; Bliemel, M. Psychophysiological Measures of Cognitive Absorption and Cognitive Load in E-Learning Applications. In Proceedings of the 37th International Conference on Information Systems, Dublin, Ireland, 11–14 December 2016.

- Karran, A.J. Exploring the Biocybernetic loop: Classifying Psychophysiological Responses to Cultural Artefacts using Physiological Computing. Ph.D. Thesis, Liverpool John Moores University, Merseyside, UK, 2014.

- Ji, Q.; Lan, P.; Looney, C. A probabilistic framework for modeling and real-time monitoring human fatigue. IEEE Trans. Syst. Man Cybern. Part A Syst. Hum. 2006, 36, 862–875.

- Vicente, J.; Laguna, P.; Bartra, A.; Bailón, R. Drowsiness detection using heart rate variability. Med. Biol. Eng. Comput. 2016, 54, 927–937.

- Gehlot, A.; Singh, R.; Siwach, S.; Akram, S.V.; Alsubhi, K.; Singh, A.; Noya, I.D.; Choudhury, S. Real Time Monitoring of Muscle Fatigue with IoT and Wearable Devices. Comput. Mater. Contin. 2022, 72, 999–1015.

- Shi, S.; Cao, Z.; Li, H.; Du, C.; Wu, Q.; Li, Y. Recognition System of Human Fatigue State Based on Hip Gait Information in Gait Patterns. Electronics 2022, 11, 3514.

- Lalitharatne, T.D.; Hayashi, Y.; Teramoto, K.; Kiguchi, K. A study on effects of muscle fatigue on EMG-based control for human upper-limb power-assist. In Proceedings of the 2012 IEEE 6th International Conference on Information and Automation for Sustainability (ICIAfS), Beijing, China, 27–29 September 2012; pp. 124–128.

- Serbedzija, N.B.; Fairclough, S.H. Biocybernetic loop: From awareness to evolution. In Proceedings of the 2009 IEEE Congress on Evolutionary Computation, Trondheim, Norway, 18–21 May 2009; pp. 2063–2069.

- Muñoz, J.E.; Gouveia, E.R.; Cameirão, M.; Badia, S.B.I. The Biocybernetic Loop Engine: An Integrated Tool for Creating Physiologically Adaptive Videogames. In Proceedings of the 4th International Conference on Physiological Computing Systems, Madrid, Spain, 27–28 July 2017; pp. 45–54.

- Yu, Y.; Hao, Z.; Li, G.; Liu, Y.; Yang, R.; Liu, H. Optimal search mapping among sensors in heterogeneous smart homes. Math. Biosci. Eng. 2022, 20, 1960–1980.

- Duan, Z.; Song, P.; Yang, C.; Deng, L.; Jiang, Y.; Deng, F.; Jiang, X.; Chen, Y.; Yang, G.; Ma, Y.; et al. The impact of hyperglycaemic crisis episodes on long-term outcomes for inpatients presenting with acute organ injury: A prospective, multicentre follow-up study. Front. Endocrinol. 2022, 13, 1057089.

- Liu, Y.; Heidari, A.A.; Cai, Z.; Liang, G.; Chen, H.; Pan, Z.; Alsufyani, A.; Bourouis, S. Simulated annealing-based dynamic step shuffled frog leaping algorithm: Optimal performance design and feature selection. Neurocomputing 2022, 503, 325–362.

- Zhao, H.; Zhang, P.; Zhang, R.; Yao, R.; Deng, W. A novel performance trend prediction approach using ENBLS with GWO. Meas. Sci. Technol. 2022, 34, 025018.

- Morin, S.; Ahmaïdi, S.; Leprêtre, P.M. Relevance of Damped Harmonic Oscillation for Modeling the Training Effects on Daily Physical Performance Capacity in Team Sport. Int. J. Sports Physiol. Perform. 2016, 11, 965–972.

- Cornelissen, G. Cosinor-based rhythmometry. Theor. Biol. Med. Model. 2014, 11, 16.

- Kolossa, D.; Bin Azhar, M.; Rasche, C.; Endler, S.; Hanakam, F.; Ferrauti, A.; Pfeiffer, M. Performance Estimation using the Fitness-Fatigue Model with Kalman Filter Feedback. Int. J. Comput. Sci. Sport 2017, 16, 117–129.

- Busso, T.; Benoit, H.; Bonnefoy, R.; Feasson, L.; Lacour, J.R. Effects of training frequency on the dynamics of performance response to a single training bout. J. Appl. Physiol. 2002, 92, 572–580.

- Brousseau, B.; Rose, J.; Eizenman, M. Accurate Model-Based Point of Gaze Estimation on Mobile Devices. Vision 2018, 2, 35.

- Hunt, R.; Blackmore, T.; Mills, C.; Dicks, M. Evaluating the integration of eye-tracking and motion capture technologies: Quantifying the accuracy and precision of gaze measures. I-Perception 2022, 13.

- Mardanbegi, D.; Kurauchi, A.T.N.; Morimoto, C.H. An investigation of the distribution of gaze estimation errors in head mounted gaze trackers using polynomial functions. J. Eye Mov. Res. 2018, 11.

- Wyder, S.; Cattin, P.C. Eye tracker accuracy: Quantitative evaluation of the invisible eye center location. Int. J. Comput. Assist. Radiol. Surg. 2018, 13, 1651–1660.

- Miao, X.; Xue, C.; Li, X.; Yang, L. A Real-Time Fatigue Sensing and Enhanced Feedback System. Information 2022, 13, 230.

- Komogortsev, O.V.; Gobert, D.V.; Jayarathna, S.; Koh, D.; Gowda, S. Standardization of automated analyses of oculomotor fixation and saccadic behaviors. IEEE Trans. Biomed. Eng. 2010, 57, 2635–2645.

- Ehinger, B.V.; Groß, K.; Ibs, I.; König, P. A new comprehensive eye-tracking test battery concurrently evaluating the Pupil Labs glasses and the EyeLink 1000. PeerJ 2019, 7, e7086.

- Vasiljevas, M.; Damaševičius, R.; Połap, D.; Woźniak, M. Gamification of Eye Exercises for Evaluating Eye Fatigue. In Artificial Intelligence and Soft Computing; Lecture Notes in Computer Science; Springer: Cham, Switzerland, 2019; pp. 104–114.

- Vasiljevas, M. Human-assistive interface model of physiological computing systems. Ph.D. Thesis, Kaunas University of Technology, Kaunas, Lithuania, 2019.

- Brunyé, T.T.; Mahoney, C.R.; Augustyn, J.S.; Taylor, H.A. Horizontal saccadic eye movements enhance the retrieval of landmark shape and location information. Brain Cogn. 2009, 70, 279–288.

- Fronius, M.; Cirina, L.; Kuhli, C.; Cordey, A.; Ohrloff, C. Training the Adult Amblyopic Eye with “Perceptual Learning” after Vision Loss in the Non-Amblyopic Eye. Strabismus 2006, 14, 75–79.

- Madhusanka, B.; Ramadass, S.; Rajagopal, P.; Herath, H. Biofeedback method for human–computer interaction to improve elder caring: Eye-gaze tracking. In Predictive Modeling in Biomedical Data Mining and Analysis; Academic Press: Cambridge, MA, USA, 2022; pp. 137–156.

- Tabbal, J.; Mechref, K.; El-Falou, W. Brain Computer Interface for smart living environment. In Proceedings of the 9th Cairo International Biomedical Engineering Conference (CIBEC), Cairo, Egypt, 20–22 December 2018; pp. 61–64.

- Maskeliūnas, R.; Damaševičius, R.; Segal, S. A Review of Internet of Things Technologies for Ambient Assisted Living Environments. Future Internet 2019, 11, 259.

- Shneiderman, B.; Plaisant, C.; Cohen, M.; Jacobs, S.; Elmqvist, N.; Diakopoulos, N. Designing the User Interface: Strategies for Effective Human-Computer Interaction; Pearson: London, UK, 2016.

- Armada-Cortés, E.; Benítez-Muñoz, J.A.; Juan, A.F.S.; Sánchez-Sánchez, J. Evaluation of Neuromuscular Fatigue in a Repeat Sprint Ability, Countermovement Jump and Hamstring Test in Elite Female Soccer Players. Int. J. Environ. Res. Public Health 2022, 19, 15069.

- Lu, B. Intelligent control system of physical strength in sports based on independent component analysis. Neural Comput. Appl. 2023, 35, 4397–4408.

- Al Said, N.; Al-Said, K.M. Assessment of Acceptance and User Experience of Human-Computer Interaction with a Computer Interface. Int. J. Interact. Mob. Technol. 2020, 14, 107–125.

- Hu, H.; Liu, Y.; Yue, K. Evaluating 3D Visual Fatigue Induced by VR Headset Using EEG and Self-attention CNN. In Proceedings of the 2022 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW), Christchurch, New Zealand, 12–16 March 2022; pp. 784–785.

- Batmaz, A.U.; Stuerzlinger, W. The Effect of Pitch in Auditory Error Feedback for Fitts’ Tasks in Virtual Reality Training Systems. In Proceedings of the 2021 IEEE Virtual Reality and 3D User Interfaces (VR), Lisboa, Portugal, 27 March–1 April 2021; pp. 85–94.

- Gamonales, J.M.; Rojas-Valverde, D.; Muñoz-Jiménez, J.; Serrano-Moreno, W.; Ibáñez, S.J. Effectiveness of Nitrate Intake on Recovery from Exercise-Related Fatigue: A Systematic Review. Int. J. Environ. Res. Public Health 2022, 19, 12021.

- Rossiter, A.; Warrington, G.D.; Comyns, T.M. Effects of Long-Haul Travel on Recovery and Performance in Elite Athletes: A Systematic Review. J. Strength Cond. Res. 2022, 36, 3234–3245.

- Markovic, G.; Dizdar, D.; Jukic, I.; Cardinale, M. Reliability and Factorial Validity of Squat and Countermovement Jump Tests. J. Strength Cond. Res. 2004, 18, 551–555.

| Ref. | Strengths | Weaknesses |
|---|---|---|
| Băiașu and Dumitrescu [22] | Detects drowsiness | Only frontal face images are used; the images were not captured in a real-life setting |
| Pershin et al. [39] | Suggested an information gain metric blending reading time, speed, and coverage | The study was highly specific and used chest X-ray images |
| Li et al. [40] | Measured comprehension time as a proxy for vigilance | Used a simple reaction time test |
| Lin et al. [41] | Measured the accuracy of gaze fixation | Used only one minute of gaze data |
| Bafna-Rührer et al. [42] | Analyzed characteristics of smooth-pursuit eye movements | High level of false positives |
| Tseng et al. [43] | Used gaze fixation on a circular stimulus and measured accuracy | Used only a few minutes of gaze data |
| Lohr et al. [44] | Measured fatigue as the accuracy of fixation on a target | Assumes the user is not fatigued initially |
| Craye et al. [45] | Used gaze tracking as one of the inputs in a multimodal system | Only analyzes eye opening/closing |
| Sommer et al. [46] | Uses electro-oculography (EOG) | Low temporal resolution |
| Suzuki et al. [47] | Captures cognitive fatigue | Used only 10 min of gaze-tracking data |
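Three of the reviewed approaches (Lin et al. [41], Tseng et al. [43], Lohr et al. [44]) express fatigue through the accuracy of gaze fixation on a target. The Python sketch below is purely illustrative and is not taken from any of these studies. It assumes that gaze samples and the target are given as (x, y) coordinates in the same unit (for example, degrees of visual angle), defines accuracy as the mean Euclidean offset of the samples from the target, and reports each time window's offset relative to the first window, which is treated as the baseline. The function names `fixation_accuracy` and `relative_degradation` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

# Assumed representation: a gaze sample or target is an (x, y) pair in a common unit.
Point = Tuple[float, float]


def fixation_accuracy(gaze: Sequence[Point], target: Point) -> float:
    """Mean Euclidean offset of gaze samples from the target (lower is better)."""
    if not gaze:
        raise ValueError("need at least one gaze sample")
    tx, ty = target
    return sum(math.hypot(x - tx, y - ty) for x, y in gaze) / len(gaze)


def relative_degradation(windows: Sequence[Sequence[Point]], target: Point) -> List[float]:
    """Offset of each window divided by the offset of the first (baseline) window.

    Values above 1.0 mean fixation became less accurate than at baseline.
    """
    if not windows:
        raise ValueError("need at least one window")
    errors = [fixation_accuracy(w, target) for w in windows]
    baseline = errors[0]
    if baseline == 0:
        raise ValueError("baseline offset is zero; ratio is undefined")
    return [e / baseline for e in errors]


if __name__ == "__main__":
    # Two hypothetical windows: the offset doubles from the first to the second.
    windows = [[(1.0, 0.0), (-1.0, 0.0)], [(2.0, 0.0), (0.0, 2.0)]]
    print(relative_degradation(windows, (0.0, 0.0)))  # [1.0, 2.0]
```

Because the first window serves as the baseline, a user who is already fatigued at the start inflates the baseline offset and makes later degradation look smaller, which illustrates why the assumption noted for Lohr et al. [44] matters.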

| Criterion | Fatigue in Sports | Fatigue in HCI |
|---|---|---|
| Origins of fatigue | Mental/physical | Mental/physical |
| Temporal scale | Months/weeks/days | Hours/minutes |
| Detection methods | Physiological signals / Subjective tests / Objective (performance) tests / Analytical training-fatigue models | Physiological signals / Subjective tests / Performance-based approaches |
| Environmental conditions | High physical activity and considerable strain | Low physical activity and low or medium strain |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Vasiljevas, M.; Damaševičius, R.; Maskeliūnas, R. A Human-Adaptive Model for User Performance and Fatigue Evaluation during Gaze-Tracking Tasks. Electronics 2023, 12, 1130. https://doi.org/10.3390/electronics12051130

Vasiljevas M, Damaševičius R, Maskeliūnas R. A Human-Adaptive Model for User Performance and Fatigue Evaluation during Gaze-Tracking Tasks. Electronics. 2023; 12(5):1130. https://doi.org/10.3390/electronics12051130

Chicago/Turabian Style: Vasiljevas, Mindaugas, Robertas Damaševičius, and Rytis Maskeliūnas. 2023. "A Human-Adaptive Model for User Performance and Fatigue Evaluation during Gaze-Tracking Tasks" Electronics 12, no. 5: 1130. https://doi.org/10.3390/electronics12051130

APA Style: Vasiljevas, M., Damaševičius, R., & Maskeliūnas, R. (2023). A Human-Adaptive Model for User Performance and Fatigue Evaluation during Gaze-Tracking Tasks. Electronics, 12(5), 1130. https://doi.org/10.3390/electronics12051130