ML-Based Traffic Classification in an SDN-Enabled Cloud Environment

Abstract

1. Introduction

- Supervised learning includes the tasks of classification, regression, and ranking. It usually involves dealing with a prediction problem. Supervised classification consists of analyzing new data and assigning them to a predefined class according to their characteristics or attributes. The algorithms are based on decision trees, neural networks, the Bayes rule, and k-nearest neighbors. Moreover, an expert is employed to correctly label examples. The learning must then find or approximate the function that allows the assignment of the correct label to these examples. Linear discriminant analysis and the support vector machine (SVM) are typical examples.

- Unsupervised learning (clustering, segmentation) differs in that there are no predefined classes; the objective is to group records that appear to be similar into the same class. The problem is that of finding homogeneous groups in a population. Techniques such as aggregation around moving centers (k-means-type methods) or bottom-up hierarchical clustering are often used. The essential difficulty in this type of construction is validation. No expert is required: the algorithm must discover the structure of the data by itself. Clustering and Gaussian mixtures are examples of unsupervised learning algorithms.

2. Related Work

3. Traffic Data

3.1. Dataset

- Facebook: We opened the Facebook web page in a Chrome browser and simulated typical user behavior: posting something, liking a publication, having a chat conversation (sending and receiving messages), viewing the latest news feed, and then visiting a random page.

- YouTube: We opened YouTube in a Chrome browser and then chose a five-minute video to watch.

3.2. Features Selection

4. Methodology

- @relation <name>: Every ARFF file must start with this declaration on its first line, ideally with the name of the file (one cannot leave blank lines at the beginning). <name> will be a string, and if it contains spaces, it should be enclosed in quotes.

- @attribute <name> <data_type>: This section contains one line for each attribute in the dataset, giving its name and type. <name> is the name of the attribute; it must begin with a letter, and if it contains spaces, it must be enclosed in quotation marks. <data_type> is the type of data for this attribute and can be one of the following:

  - numeric, meaning that the attribute is a number.

  - integer, indicating that the attribute contains whole numbers.

  - string, indicating that the attribute represents text strings.

  - date [<date-format>], where <date-format> indicates the date format, which is of the type “yyyy-MM-dd’T’HH:mm:ss”.

  - <nominalspecification>, a data type defined by the user that can take only the indicated values. The values must be separated by commas.

- @data: This section contains the data themselves. Elements are separated by commas, and every line must have the same number of elements, equal to the number of @attribute declarations in the previous section. If a value is not available, a question mark (?) is written in its place, since this character represents a missing value in Weka. The decimal separator must be a period, and strings must be enclosed in single quotes.
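
The three ARFF sections described above can be illustrated with a minimal sketch that writes a small file in this layout. The helper `write_arff`, the attribute names, and the two flow records are hypothetical and chosen only for illustration; they are not the dataset used in this work.

```python
import io

def write_arff(relation, attributes, rows, out):
    """Write a minimal ARFF document to a text stream.

    attributes: list of (name, type) pairs, where type is a string such as
                "numeric" or a list of nominal values.
    rows:       list of tuples; None marks a missing value.
    """
    def quote(text):
        # Names that contain spaces must be enclosed in quotes.
        return f"'{text}'" if " " in text else text

    # @relation must be the first line of the file.
    out.write(f"@relation {quote(relation)}\n\n")
    for name, kind in attributes:
        if isinstance(kind, list):
            kind = "{" + ",".join(kind) + "}"  # nominal specification
        out.write(f"@attribute {quote(name)} {kind}\n")
    out.write("\n@data\n")
    for row in rows:
        if len(row) != len(attributes):
            raise ValueError("row length must match the attribute count")
        # A question mark stands for a missing value.
        out.write(",".join("?" if v is None else str(v) for v in row) + "\n")

# Hypothetical flow records: (duration in seconds, packet count, class label).
attributes = [
    ("flow_duration", "numeric"),
    ("packet_count", "numeric"),
    ("class", ["facebook", "youtube"]),
]
rows = [(12.5, 340, "facebook"), (301.2, None, "youtube")]

buffer = io.StringIO()
write_arff("traffic flows", attributes, rows, buffer)
arff_text = buffer.getvalue()
print(arff_text)
```

Writing to a text stream rather than directly to disk keeps the sketch easy to inspect; passing an open file object instead of `io.StringIO()` would produce a file that Weka can load.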

- Naive Bayes: Naive Bayes is a supervised classification and prediction technique that builds probabilistic models; it is based on Bayes’ theorem and on the assumption that the attributes are independent. It is supervised because it requires previously classified examples for its operation. In general terms, Bayes’ theorem expresses the probability that an event occurs when it is known that another event also occurs. Bayesian statistics are used to calculate estimates based on prior subjective knowledge. Implementations of this theorem adapt with use and allow data from various sources to be combined and expressed as a degree of probability.

- SVM: Support vector machines, also known as wide-margin separators, are a set of supervised learning techniques designed to solve problems of discrimination and regression. SVMs were developed in the 1990s based on the theoretical considerations of Vladimir Vapnik [31] concerning the development of a statistical theory of learning: the Vapnik–Chervonenkis theory. SVMs were quickly adopted for their ability to work with large data sizes, their low number of hyperparameters, their sound theoretical foundation, and their good results in practice. Wide-margin separators are classifiers that rely on two key ideas to deal with nonlinear discrimination problems and to reformulate classification problems as quadratic optimization problems. The first key idea is the notion of the maximum margin. The margin is the distance between a separation boundary and the nearest samples, which are called support vectors. The second key idea, which handles cases in which the data are not linearly separable, is to transform the representation space of the input data into a higher-dimensional (possibly infinite-dimensional) space in which a linear separator is likely to exist.

- Random Forest: This algorithm is a combination of predictor trees in which each tree depends on the values of a random vector that is sampled independently and with the same distribution for all trees. Random Forest makes use of an aggregation technique developed by Leo Breiman that improves the classification precision by incorporating randomness into the construction of each individual classifier. This randomization is introduced both in the construction of the tree and in the training samples. This classifier, which is simple to train and adjust, is often used.

- J48 tree (C4.5): This is one of the most widely used ML algorithms. It generates a decision tree from the data through partitions that are made recursively following a depth-first strategy. Before each data partition, the algorithm considers all of the possible tests that can divide the dataset and selects the test that produces the highest information gain. For each discrete attribute, a test with n outcomes is considered, where n is the number of possible values that the attribute can take. For each continuous attribute, a binary test is performed on each of the values that the attribute takes in the data.
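
The split selection described for the J48 tree can be sketched as follows. This is a simplified illustration that follows the description above (one binary test per observed value of a continuous attribute, scored by information gain); it is not Weka's J48 implementation, and the function names and toy data are hypothetical.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(labels).values())

def information_gain(labels, partitions):
    """Entropy reduction obtained by splitting `labels` into `partitions`."""
    total = len(labels)
    remainder = sum(len(p) / total * entropy(p) for p in partitions if p)
    return entropy(labels) - remainder

def best_binary_split(values, labels):
    """Try a threshold at each observed value of a continuous attribute and
    return (threshold, gain) for the test with the highest information gain."""
    best = (None, 0.0)
    # The largest value is skipped because it would leave one side empty.
    for threshold in sorted(set(values))[:-1]:
        left = [l for v, l in zip(values, labels) if v <= threshold]
        right = [l for v, l in zip(values, labels) if v > threshold]
        gain = information_gain(labels, [left, right])
        if gain > best[1]:
            best = (threshold, gain)
    return best

# Hypothetical toy data: mean packet size of a flow and its application.
sizes = [120, 150, 180, 900, 1100, 1300]
apps = ["facebook", "facebook", "facebook", "youtube", "youtube", "youtube"]
threshold, gain = best_binary_split(sizes, apps)
print(threshold, gain)
```

In this toy example, the threshold that separates the two applications perfectly removes all uncertainty about the class, so it is the one selected. A full tree builder would apply the same selection recursively to each resulting partition.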

5. Results and Discussion

- TP (true positive): the average number of samples from the positive class that the model correctly classified as positive.

- TN (true negative): the average number of samples from the negative class that the model correctly classified as negative.

- FP (false positive): the average number of samples that the model incorrectly classified as positive when, in fact, they were from the negative class.

- FN (false negative): the average number of samples that the model incorrectly classified as negative when, in fact, they were from the positive class.
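
As a minimal sketch of how these four counts lead to the precision and recall values reported in the results, the snippet below uses the standard definitions, precision = TP / (TP + FP) and recall = TP / (TP + FN). The label lists are hypothetical and serve only to illustrate the computation.

```python
def confusion_counts(actual, predicted, positive):
    """Count TP, TN, FP, and FN for one class treated as the positive class."""
    tp = tn = fp = fn = 0
    for a, p in zip(actual, predicted):
        if p == positive:
            if a == positive:
                tp += 1   # predicted positive, really positive
            else:
                fp += 1   # predicted positive, really negative
        else:
            if a == positive:
                fn += 1   # predicted negative, really positive
            else:
                tn += 1   # predicted negative, really negative
    return tp, tn, fp, fn

def precision(tp, fp):
    """Fraction of samples predicted as positive that are truly positive."""
    return tp / (tp + fp) if (tp + fp) else 0.0

def recall(tp, fn):
    """Fraction of truly positive samples that the model found."""
    return tp / (tp + fn) if (tp + fn) else 0.0

# Hypothetical ground truth and predictions for five flows.
actual = ["youtube", "youtube", "facebook", "facebook", "youtube"]
predicted = ["youtube", "facebook", "facebook", "youtube", "youtube"]

tp, tn, fp, fn = confusion_counts(actual, predicted, positive="youtube")
print(tp, tn, fp, fn)
print(precision(tp, fp), recall(tp, fn))
```

Treating each application in turn as the positive class yields one precision and one recall value per application.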

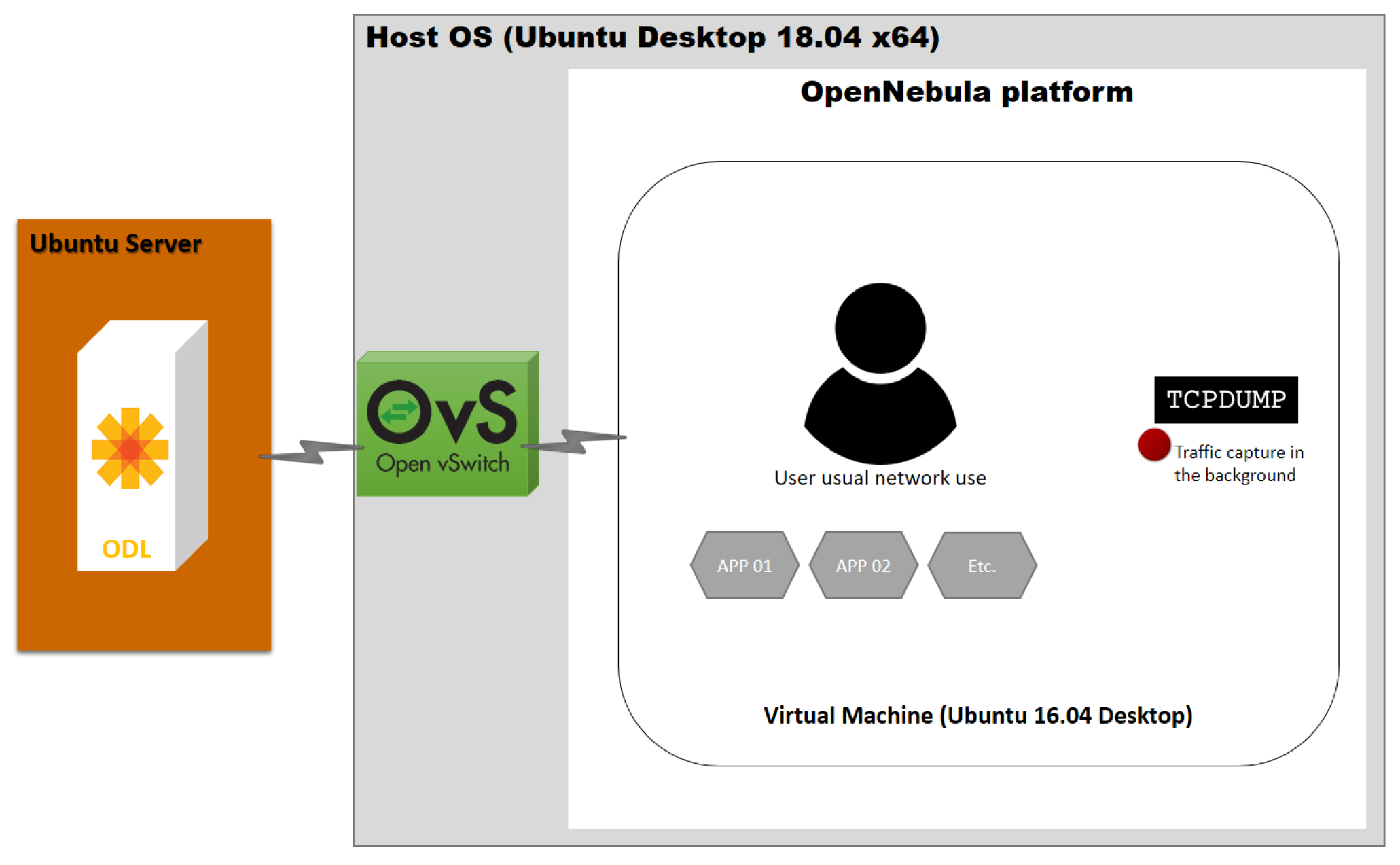

- First: A platform setup not previously discussed in the literature, combining two well-known platforms from the SDN and cloud fields (OpenDaylight and OpenNebula), was studied. As the integration of the cloud and an SDN is a great facilitator when it comes to moving towards automation, artificial intelligence and machine learning techniques were used to ensure that all digitization initiatives were integrated in a coherent way. The ultimate goal was to deliver the best end-user experience and quality via traffic classification mechanisms.

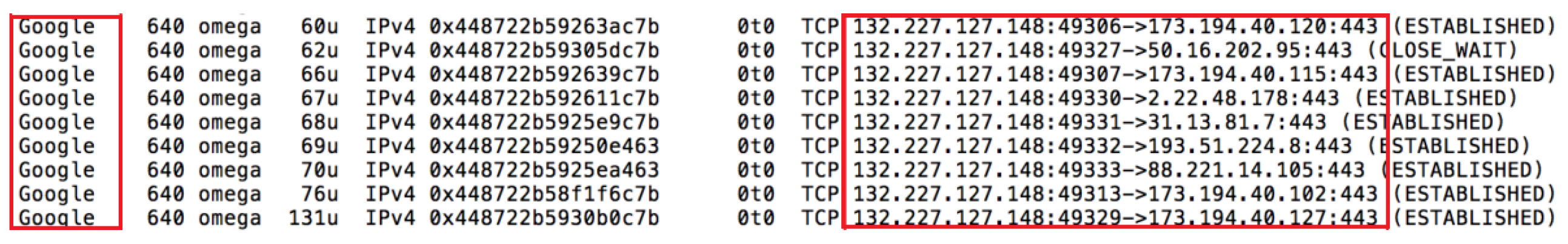

- Second: An accurate training dataset was created, in which we knew exactly which traffic was generated by each application, and it was then used to train our classifiers.

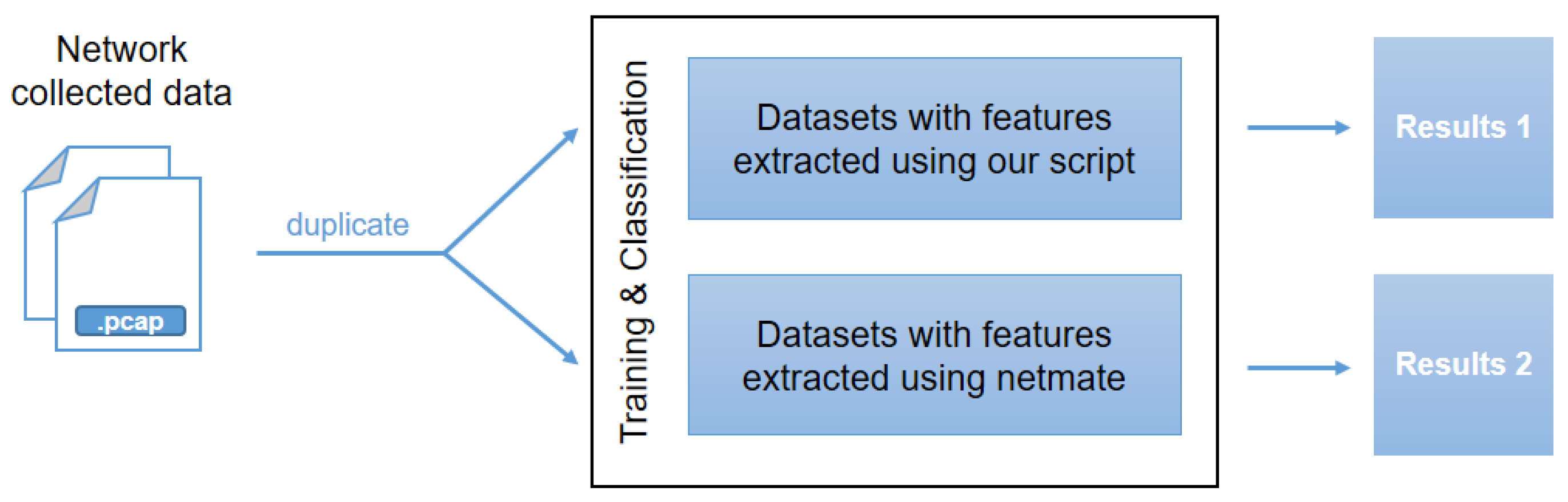

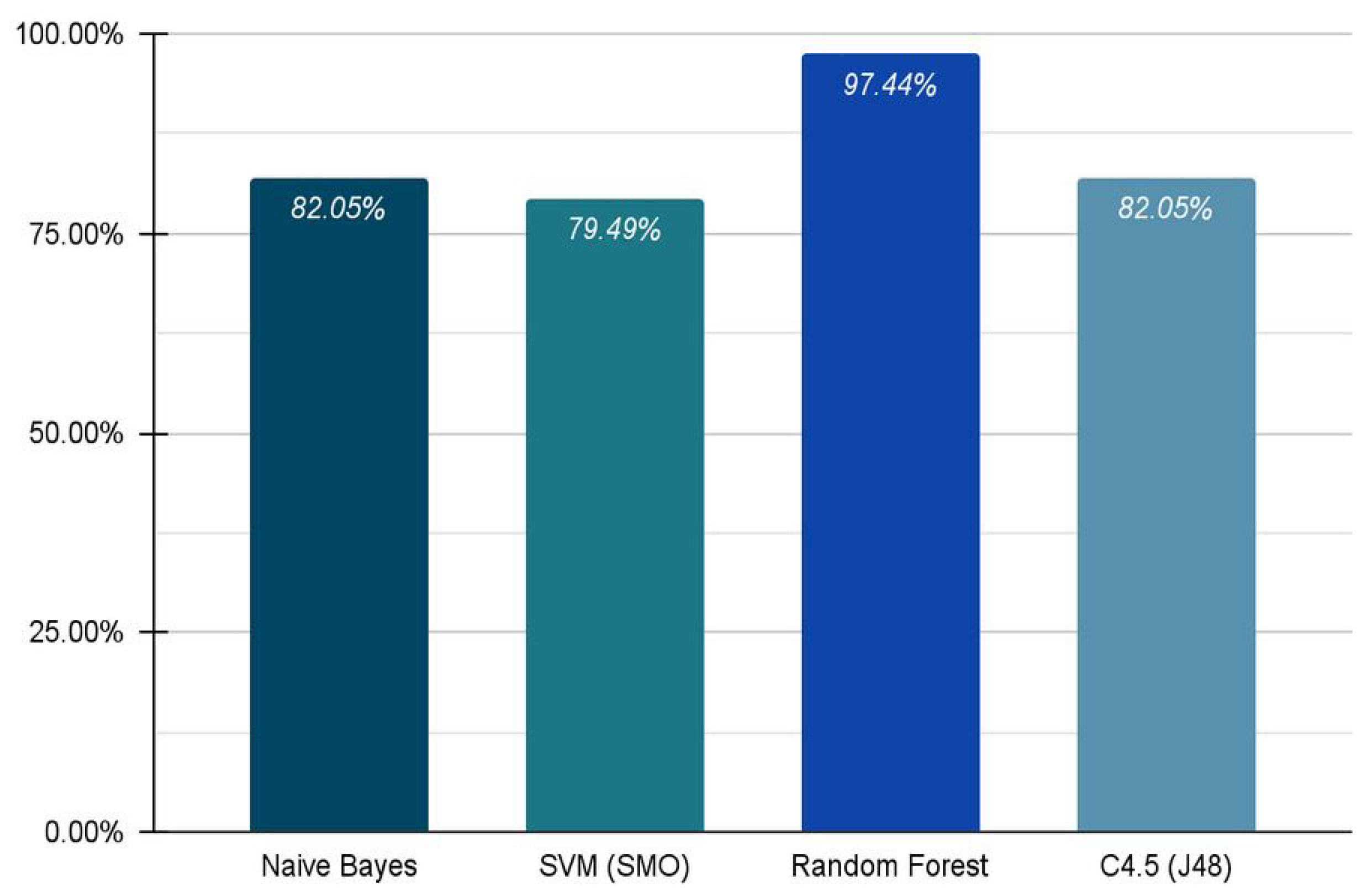

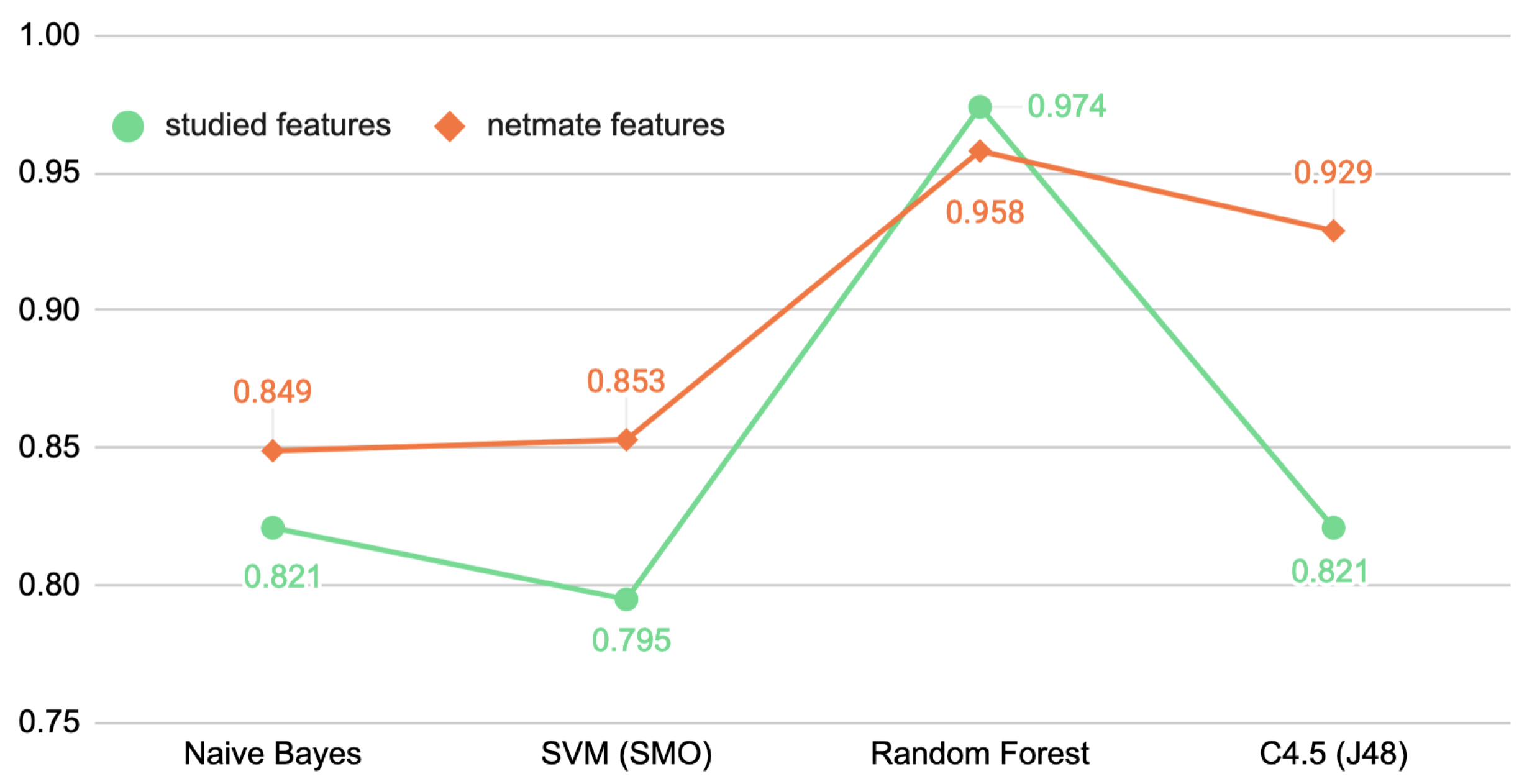

- Third: Four popular algorithms that are commonly used in related work were compared, and a further comparison based on two sets of features was also included. The increased performance observed for classifying application flows in our tests was therefore attributable to the set of features, as the whole configuration remained unchanged except for the feature set used in each iteration.

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ARFF | Attribute-Relation File Format |
| DPI | Deep Packet Inspection |
| ML | Machine Learning |
| MLP | Multilayer Perceptron |
| NARX | Nonlinear Autoregressive Exogenous Multilayer Perceptron |
| PCAP | Packet Capture |
| POP3 | Post Office Protocol 3 |
| QoS | Quality of Service |
| PCA | Principal Component Analysis |
| RBF | Radial Basis Function |
| SDN | Software-Defined Network |
| SMO | Sequential Minimal Optimization |
| SVM | Support Vector Machines |
| UI | User Interface |

References

- Zander, S.; Nguyen, T.; Armitage, G. Automated traffic classification and application identification using machine learning. In Proceedings of the IEEE Conference on Local Computer Networks 30th Anniversary (LCN’05), Sydney, NSW, Australia, 17 November 2005; pp. 250–257.

- Amaral, P.; Dinis, J.; Pinto, P.; Bernardo, L.; Tavares, J.; Mamede, H.S. Machine learning in software defined networks: Data collection and traffic classification. In Proceedings of the IEEE 24th International Conference on Network Protocols (ICNP), Singapore, 8–11 November 2016; pp. 1–5.

- Nguyen, T.T.; Armitage, G. A survey of techniques for internet traffic classification using machine learning. IEEE Commun. Surv. Tutor. 2008, 10, 56–76.

- Azab, A.; Khasawneh, M.; Alrabaee, S.; Raymond, C.K.K.; Sarsour, M. Network traffic classification: Techniques, datasets, and challenges. Digit. Commun. Netw. 2022.

- Jaiswal, R.C.; Lokhande, S.D. Machine learning based internet traffic recognition with statistical approach. In Proceedings of the 2013 Annual IEEE India Conference (INDICON), Mumbai, India, 13–15 December 2013; pp. 1–6.

- Yu, C.; Lan, J.; Xie, J.; Hu, Y. QoS-aware traffic classification architecture using machine learning and deep packet inspection in SDNs. Procedia Comput. Sci. 2018, 131, 1209–1216.

- Parsaei, M.R.; Sobouti, M.J.; Khayami, S.; Javidan, R. Network traffic classification using machine learning techniques over software defined networks. Int. J. Adv. Comput. Sci. Appl. (IJACSA) 2017, 8, 220–225.

- Eom, W.J.; Song, Y.J.; Park, C.H.; Kim, J.K.; Kim, G.H.; Cho, Y.Z. Network Traffic Classification Using Ensemble Learning in Software-Defined Networks. In Proceedings of the 2021 International Conference on Artificial Intelligence in Information and Communication (ICAIIC), Jeju Island, Korea, 13–16 April 2021; pp. 89–92.

- Raikar, M.M.; Meena, S.M.; Mulla, M.M.; Shetti, N.S.; Karanandi, M. Data traffic classification in software defined networks (SDN) using supervised-learning. Procedia Comput. Sci. 2020, 171, 2750–2759.

- Zhao, P.; Zhao, W.; Liu, Q. Research on SDN Enabled by Machine Learning: An Overview. In International Conference on 5G for Future Wireless Networks; Springer: Cham, Switzerland, 2020; pp. 190–203.

- Patil, S.; Raj, L.A. Classification of traffic over collaborative IoT and Cloud platforms using deep learning recurrent LSTM. Comput. Sci. 2021, 22.

- Javeed, D.; Gao, T.; Khan, M.T.; Ahmad, I. A Hybrid Deep Learning-Driven SDN Enabled Mechanism for Secure Communication in Internet of Things (IoT). Sensors 2021, 21, 4884.

- Oreski, D.; Androcec, D. Genetic algorithm and artificial neural network for network forensic analytics. In Proceedings of the 43rd International Convention on Information, Communication and Electronic Technology (MIPRO), Opatija, Croatia, 28 September–2 October 2020; pp. 1200–1205.

- Alzahrani, R.J.; Alzahrani, A. Survey of Traffic Classification Solution in IoT Networks. Int. J. Comput. Appl. 2021, 183, 37–45.

- Ganesan, E.; Hwang, I.-S.; Liem, A.T.; Ab-Rahman, M.S. SDN-Enabled FiWi-IoT Smart Environment Network Traffic Classification Using Supervised ML Models. Photonics 2021, 8, 201.

- Aslam, M.; Ye, D.; Tariq, A.; Asad, M.; Hanif, M.; Ndzi, D.; Chelloug, S.A.; Elaziz, M.A.; Al-Qaness, M.A.A.; Jilani, S.F. Adaptive Machine Learning Based Distributed Denial-of-Services. Sensors 2022, 22, 2697.

- Maheshwari, A.; Mehraj, B.; Khan, M.S.; Idrisi, M.S. An optimized weighted voting based ensemble model for DDoS attack detection and mitigation in SDN environment. Microprocess. Microsyst. 2022, 89, 104412.

- Mishra, A.; Gupta, N. Supervised Machine Learning Algorithms Based on Classification for Detection of Distributed Denial of Service Attacks in SDN-Enabled Cloud Computing; Springer: Singapore, 2022; Volume 370, pp. 165–174.

- Zafeiropoulos, A.; Fotopoulou, E.; Peuster, M.; Schneider, S.; Gouvas, P.; Behnke, D.; Müller, M.; Bök, P.B.; Trakadas, P.; Karkazis, P.; et al. Benchmarking and Profiling 5G Verticals’ Applications: An Industrial IoT Use Case. In Proceedings of the 6th IEEE Conference on Network Softwarization (NetSoft), Ghent, Belgium, 29 June–3 July 2020; pp. 310–318.

- Uzunidis, D.; Karkazis, P.; Roussou, C.; Patrikakis, C.; Leligou, H.C. Intelligent Performance Prediction: The Use Case of a Hadoop Cluster. Electronics 2021, 10, 2690.

- Troia, S.; Martinez, D.E.; Martín, I.; Zorello, L.M.M.; Maier, G.; Hernández, J.A.; de Dios, O.G.; Garrich, M.; Romero-Gázquez, J.L.; Moreno-Muro, F.J.; et al. Machine Learning-assisted Planning and Provisioning for SDN/NFV-enabled Metropolitan Networks. In Proceedings of the European Conference on Networks and Communications (EuCNC), Valencia, Spain, 18–21 June 2019; pp. 438–442.

- Belkadi, O.; Laaziz, Y.; Vulpe, A.; Halunga, S. An Integration of OpenDaylight and OpenNebula for Cloud Management Improvement using SDN. In Proceedings of the 27th Telecommunications Forum (TELFOR), Belgrade, Serbia, 26–27 November 2019; pp. 1–4.

- Tcpdump Tool. Available online: https://www.tcpdump.org/ (accessed on 18 July 2021).

- Tcptrace Tool. Available online: https://github.com/blitz/tcptrace (accessed on 18 July 2021).

- Carela-Español, V.; Bujlow, T.; Barlet-Ros, P. Is our ground-truth for traffic classification reliable? In International Conference on Passive and Active Network Measurement; Springer: Cham, Switzerland, 2014; pp. 98–108.

- Moore, A.; Zuev, D.; Crogan, M. Discriminators for Use in Flow-Based Classification. s.l.; Department of Computer Science, Queen Mary and Westfield College: London, UK, 2005.

- Das, A.K.; Pathak, P.H.; Chuah, C.N.; Mohapatra, P. Contextual localization through network traffic analysis. In Proceedings of the IEEE INFOCOM 2014 - IEEE Conference on Computer Communications, Toronto, ON, Canada, 27 April–2 May 2014; pp. 925–933.

- Kim, H.; Claffy, K.C.; Fomenkov, M.; Barman, D.; Faloutsos, M.; Lee, K. Internet traffic classification demystified: Myths, caveats, and the best practices. In Proceedings of the 2008 ACM CoNEXT Conference, Madrid, Spain, 9–12 December 2008; pp. 1–12.

- Basher, N.; Mahanti, A.; Mahanti, A.; Williamson, C.; Arlitt, M. A comparative analysis of web and peer-to-peer traffic. In Proceedings of the 17th International Conference on World Wide Web, Beijing, China, 21–25 April 2008; pp. 287–296.

- Moore, A.W.; Papagiannaki, K. Toward the accurate identification of network applications. In International Workshop on Passive and Active Network Measurement; Springer: Berlin/Heidelberg, Germany, 2005; pp. 41–54.

- Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297.

- Dupay, A.; Sengupta, S.; Wolfson, O.; Yemini, Y. NETMATE: A network management environment. IEEE Netw. 1991, 5, 35–40.

- Netmate Features. Available online: https://github.com/DanielArndt/netmate-flowcalc/blob/master/doc/user_manual.pdf (accessed on 20 September 2021).

- Platt, J. Sequential Minimal Optimization: A Fast Algorithm for Training Support Vector Machines; Microsoft: Redmond, WA, USA, 1998.

- LibSVM Algorithm Used in WEKA. Available online: https://waikato.github.io/weka-wiki/lib_svm (accessed on 20 September 2021).

- Chang, C.C.; Lin, C.J. LIBSVM: A library for support vector machines. ACM Trans. Intell. Syst. Technol. (TIST) 2011, 2, 1–27.

| Experiment | Duration | # of Packets | Bytes |
|---|---|---|---|
| Facebook | 5 m 12 s | 21,354 | 16.92 MB |
| YouTube | 5 m 14 s | 61,157 | 60.30 MB |
| Test data | 14 m 54 s | 179,190 | 153.61 MB |

| Category | Features |
|---|---|
| Ports | Client port, server port |
| Timing | statistics of packet inter-arrival time |
| | statistics of flow inter-arrival time |
| | FFT of packet inter-arrival time (frequency: 1 to 10) |
| | total transmit time (difference between the times of the first and last packet) |
| | time since last connection |
| | packets per second |
| | bytes per second |
| | DNS requests per second |
| | TTL |
| | flow duration |
| | idle time |
| Packet count | total number of packets |
| | number of packets per flow |
| | number of out-of-order packets |
| | truncated packets |
| | packets with PUSH bits |
| | packets with SYN bits |
| | packets with FIN bits |
| | total number of ACK packets seen |
| | pure ACK packets |
| | number of packets carrying SACK blocks |
| | number of packets carrying DSACK blocks |
| | number of duplicate ACK packets received |
| | number of all window probe packets seen |
| Packet size | number of unique bytes sent |
| | truncated bytes |
| | sum of a flow’s packet size |
| | effective bandwidth |
| | throughput |
| | total bytes of data sent |
| | size of packets with URG bit turned on |
| TCP | statistics of retransmission |
| | segment statistics |
| | request statistics |
| | bulk mode |
| | statistics of control bytes in the packet |
| Window level | statistics of window advertisements seen |
| | number of times a zero-receive window was advertised |
| | number of bytes sent in the initial window |
| | number of segments sent in the initial window |
| RTT | total number of RTTs |
| | RTT sample statistics |
| | Full-size RTT sample |
| | RTT value calculated from the TCP three-way handshake |
| Application | number of new flows per second |
| | number of active flows per second |

| Feature | Description |
|---|---|
| Flow level | |
| Active flows per second | The number of active flows appearing in one second |
| New flows per second | The number of new flows appearing in one second |
| Flow size | The size of a flow in bytes |
| Flow duration | The time between the first and last packets seen in a flow, in seconds |
| Flow inter-arrival time | Difference between the arrival times of two successive flows |
| Packet level | |
| Packet inter-arrival time | Difference between the arrival times of two successive packets |
| Packets per second | Number of packets appearing in one second |
| Bytes per second | Sum of bytes in one second |
| Number of packets | The count of packets in one flow |
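
Several of the features defined in the table above can be derived from nothing more than packet timestamps and sizes. The sketch below assumes that the packets of one flow are available as (timestamp in seconds, size in bytes) pairs; the function name, the dictionary keys, and the sample packets are hypothetical. The per-second rates are averaged over the flow duration here, which is a simplifying assumption rather than the exact procedure used in this work.

```python
from statistics import mean

def flow_features(packets):
    """Compute a few per-flow features from (timestamp_s, size_bytes) pairs."""
    packets = sorted(packets)                 # order packets by arrival time
    times = [t for t, _ in packets]
    sizes = [s for _, s in packets]
    duration = times[-1] - times[0]           # first to last packet, in seconds
    gaps = [b - a for a, b in zip(times, times[1:])]
    return {
        "number_of_packets": len(packets),
        "flow_size": sum(sizes),              # bytes
        "flow_duration": duration,
        "mean_packet_inter_arrival": mean(gaps) if gaps else 0.0,
        # Rates averaged over the whole flow (assumption, see lead-in).
        "packets_per_second": len(packets) / duration if duration else 0.0,
        "bytes_per_second": sum(sizes) / duration if duration else 0.0,
    }

# Hypothetical packets of a single flow.
packets = [(0.0, 100), (0.5, 1500), (1.0, 1500), (2.0, 900)]
features = flow_features(packets)
for name, value in features.items():
    print(name, value)
```

Flow-level features such as active or new flows per second would need the start and end times of all flows in the capture, so they are left out of this per-flow sketch.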

| Features | Definition | Count |
|---|---|---|
| Studied Features | Selected as in Section 3.2 | 9 |
| Netmate Features | Generated by the Netmate tool [33] | 44 |

| Algorithm | Facebook Precision (S_f) | Facebook Precision (N_f) | Facebook Recall (S_f) | Facebook Recall (N_f) | YouTube Precision (S_f) | YouTube Precision (N_f) | YouTube Recall (S_f) | YouTube Recall (N_f) |
|---|---|---|---|---|---|---|---|---|
| Naive Bayes | 0.8 | 0.781 | 0.667 | 0.758 | 0.545 | 0.542 | 0.542 | 0.650 |
| SVM (SMO) | 0.833 | 0.806 | 0.417 | 0.758 | 0.5 | 0.636 | 0.875 | 0.525 |
| Random Forest | 0.923 | 0.924 | 1 | 0.924 | 1 | 0.875 | 0.875 | 0.875 |
| C4.5 (J48) | 0.727 | 0.905 | 0.667 | 0.864 | 0.6 | 0.780 | 0.75 | 0.800 |


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Belkadi, O.; Vulpe, A.; Laaziz, Y.; Halunga, S. ML-Based Traffic Classification in an SDN-Enabled Cloud Environment. Electronics 2023, 12, 269. https://doi.org/10.3390/electronics12020269
