Error Level Analysis Technique for Identifying JPEG Block Unique Signature for Digital Forensic Analysis

Abstract

1. Introduction

2. Background and Related Literature

2.1. Existing Literature

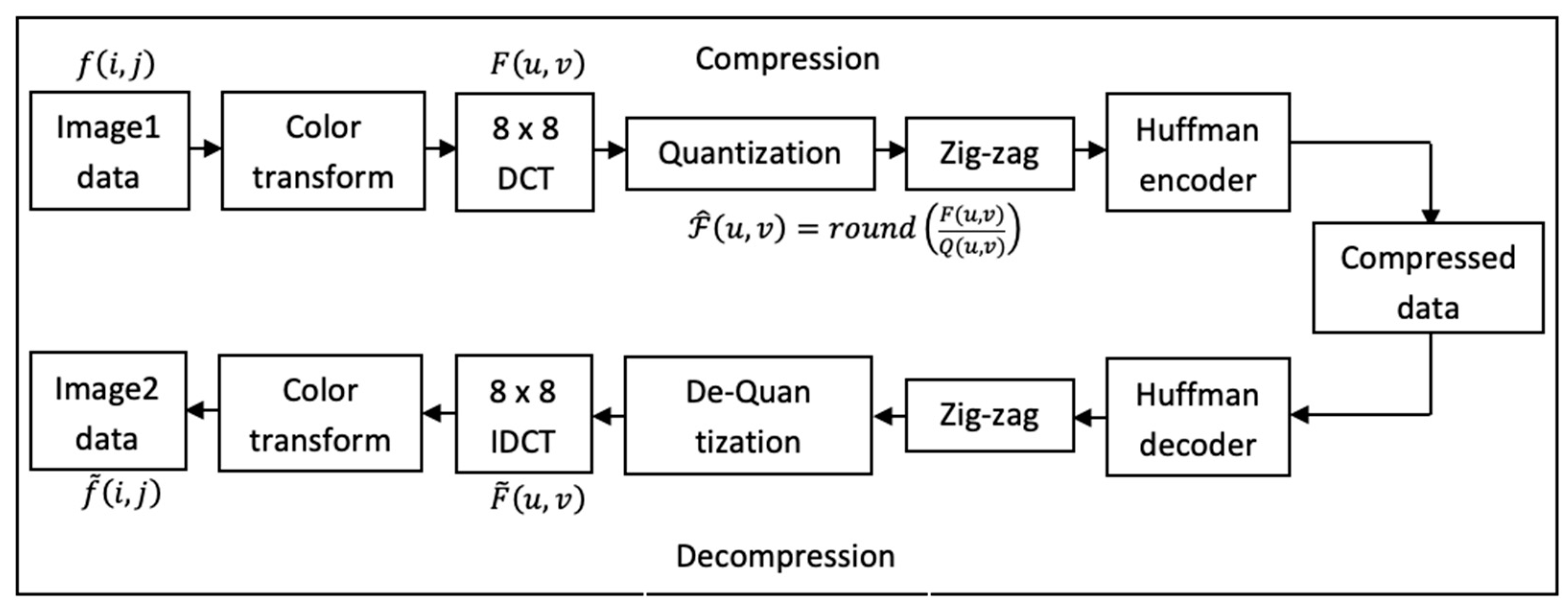

2.2. JPEG Compression

2.3. Error Level Analysis

- A JPEG is considered original if all of its 8 × 8 blocks show a similar error pattern. In that case, each 8 × 8 pixel block can be said to have reached its local minimum.

- A JPEG is considered manipulated if any 8 × 8 block shows a higher error pattern than the others, which indicates that the block is not at its local minimum.
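The two criteria above can be illustrated with a minimal sketch. It assumes the image has already been re-saved as a JPEG by some external tool and that both versions are available as equally sized 2-D lists of grayscale values. The error metric (mean absolute difference per 8 × 8 block), the function names, and the outlier rule (a multiple of the median error) are assumptions chosen for illustration and are not taken from the paper.

```python
from statistics import median

BLOCK = 8  # JPEG compression operates on 8 x 8 pixel blocks


def block_error_levels(original, resaved, block=BLOCK):
    """Return a grid with one mean absolute difference per block.

    `original` and `resaved` are equally sized 2-D lists of grayscale
    intensities; the JPEG re-save is assumed to have been done elsewhere.
    Partial blocks at the right and bottom edges are ignored.
    """
    height, width = len(original), len(original[0])
    levels = []
    for top in range(0, height - block + 1, block):
        row = []
        for left in range(0, width - block + 1, block):
            total = 0
            for y in range(top, top + block):
                for x in range(left, left + block):
                    total += abs(original[y][x] - resaved[y][x])
            row.append(total / (block * block))
        levels.append(row)
    return levels


def flag_outlier_blocks(levels, factor=3.0, floor=1.0):
    """List the (row, col) positions of blocks whose error level stands out.

    The rule (error > max(factor * median, floor)) is a hypothetical
    heuristic used only to illustrate "a higher error pattern".
    """
    flat = [value for row in levels for value in row]
    cutoff = max(factor * median(flat), floor)
    return [(r, c)
            for r, row in enumerate(levels)
            for c, value in enumerate(row)
            if value > cutoff]


if __name__ == "__main__":
    # Synthetic 16 x 16 example: every pixel differs by 1 after re-saving,
    # except the bottom-right block, which differs by 10 per pixel.
    original = [[100] * 16 for _ in range(16)]
    resaved = [[101] * 16 for _ in range(16)]
    for y in range(8, 16):
        for x in range(8, 16):
            resaved[y][x] = 110
    levels = block_error_levels(original, resaved)
    print(levels)                       # per-block error levels
    print(flag_outlier_blocks(levels))  # blocks that would warrant a closer look
```

In this toy input, three blocks share the same low error level and one block deviates strongly, so only that block is flagged. With real images the threshold would need to be tuned, since textured regions naturally produce higher error levels than flat ones.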

3. Proposed Algorithm

4. Results and Discussions

5. Comparison with Existing Techniques

- When the features extracted from images are subjected to ELA, image alterations can be identified with a lower degree of false positives, which can help to build a forensic hypothesis.

- The experiment conducted in this study shows that this approach is effective.

- Our approach uses a simple dataset, which in the context of this study removes the need for intensive training while still pointing out specific features. From a digital forensic perspective, this may save an investigator time.

6. Future Directions

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Basic Carving | Advanced Carving |
|---|---|

| Block 1 | Block 7 | Block 13 | Block 19 | Block 25 | Block 31 | Block 37 | Block 43 |
| Block 2 | Block 8 | Block 14 | Block 20 | Block 26 | Block 32 | Block 38 | Block 44 |
| Block 3 | Block 9 | Block 15 | Block 21 | Block 27 | Block 33 | Block 39 | Block 45 |
| Block 4 | Block 10 | Block 16 | Block 22 | Block 28 | Block 34 | Block 40 | Block 46 |
| Block 5 | Block 11 | Block 17 | Block 23 | Block 29 | Block 35 | Block 41 | Block 47 |
| Block 6 | Block 12 | Block 18 | Block 24 | Block 30 | Block 36 | Block 42 | Block 48 |

| Block | Re-Save | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 | 20 | 21 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 1st | 1.05 | 1.69 | 0.47 | 0.64 | 0.72 | 0.77 | 1.38 | 1.17 | 0.78 | 1.80 | 1.64 | 1.13 | 0.86 | 0.66 | 0.86 | 0.28 | 1.94 | 1.23 | 1.70 | 2.16 | 2.06 |
| | 2nd | 1.05 | 1.55 | 0.47 | 0.59 | 0.72 | 0.77 | 1.38 | 1.17 | 0.78 | 1.59 | 1.64 | 1.13 | 0.86 | 0.66 | 0.86 | 0.28 | 1.94 | 1.23 | 1.27 | 2.41 | 2.06 |
| | 3rd | 1.05 | 1.31 | 0.47 | 0.41 | 0.72 | 0.77 | 1.38 | 1.17 | 0.78 | 1.59 | 1.64 | 1.13 | 0.86 | 0.66 | 0.86 | 0.28 | 1.94 | 1.23 | 1.41 | 2.03 | 2.06 |
| 2 | 1st | 1.48 | 1.19 | 1.36 | 1.36 | 0.77 | 1.94 | 0.38 | 1.33 | 1.20 | 1.06 | 1.95 | 1.86 | 0.92 | 1.45 | 0.64 | 0.84 | 1.53 | 1.20 | 2.06 | 1.06 | 1.20 |
| | 2nd | 1.48 | 1.19 | 1.36 | 1.56 | 0.77 | 1.94 | 0.38 | 1.33 | 1.20 | 1.06 | 1.95 | 1.86 | 0.92 | 1.45 | 0.64 | 0.66 | 1.53 | 1.20 | 1.97 | 1.06 | 1.20 |
| | 3rd | 1.48 | 1.19 | 1.36 | 1.56 | 0.77 | 1.94 | 0.38 | 1.33 | 1.20 | 1.06 | 1.95 | 1.86 | 0.92 | 1.45 | 0.64 | 0.56 | 1.53 | 1.20 | 1.97 | 1.06 | 1.20 |
| 3 | 1st | 2.48 | 1.80 | 0.61 | 0.97 | 0.52 | 1.69 | 1.02 | 2.27 | 1.31 | 0.61 | 1.59 | 2.64 | 1.17 | 1.61 | 2.06 | 0.70 | 1.38 | 1.25 | 1.31 | 0.38 | 1.52 |
| | 2nd | 2.48 | 1.80 | 0.61 | 0.91 | 0.52 | 1.69 | 1.02 | 2.27 | 1.31 | 0.70 | 1.59 | 2.64 | 1.17 | 1.61 | 2.06 | 0.47 | 1.38 | 1.25 | 1.31 | 0.38 | 1.52 |
| | 3rd | 2.48 | 1.80 | 0.61 | 0.91 | 0.52 | 1.69 | 1.02 | 2.27 | 1.31 | 0.61 | 1.59 | 2.64 | 1.17 | 1.61 | 2.06 | 0.52 | 1.38 | 1.25 | 1.31 | 0.38 | 1.52 |
| 4 | 1st | 0.94 | 1.80 | 0.58 | 2.33 | 0.56 | 1.47 | 0.70 | 1.80 | 0.66 | 1.05 | 1.73 | 2.16 | 1.38 | 3.16 | 2.97 | 0.31 | 2.25 | 0.84 | 1.58 | 1.34 | 1.66 |
| | 2nd | 0.94 | 1.80 | 0.75 | 1.94 | 0.66 | 1.47 | 0.70 | 1.80 | 0.66 | 1.05 | 1.73 | 2.25 | 1.38 | 3.16 | 2.97 | 0.42 | 2.25 | 0.84 | 1.58 | 1.34 | 1.66 |
| | 3rd | 0.94 | 1.80 | 0.75 | 1.69 | 0.66 | 1.47 | 0.70 | 1.80 | 0.66 | 1.05 | 1.73 | 2.25 | 1.38 | 3.16 | 2.97 | 0.38 | 2.25 | 0.84 | 1.58 | 1.34 | 1.66 |
| 5 | 1st | 0.45 | 0.64 | 0.42 | 2.20 | 1.36 | 0.42 | 0.63 | 1.86 | 1.27 | 1.66 | 1.38 | 1.36 | 1.64 | 2.13 | 1.97 | 1.59 | 1.36 | 1.16 | 2.63 | 1.16 | 1.23 |
| | 2nd | 0.45 | 0.64 | 0.42 | 1.92 | 1.36 | 0.42 | 0.83 | 1.86 | 1.27 | 1.61 | 1.38 | 1.36 | 1.64 | 2.13 | 1.97 | 1.59 | 1.14 | 1.16 | 2.63 | 1.16 | 1.23 |
| | 3rd | 1.45 | 0.64 | 0.42 | 1.92 | 1.36 | 0.42 | 0.69 | 1.86 | 1.27 | 1.86 | 1.38 | 1.36 | 1.64 | 2.13 | 1.97 | 1.59 | 1.14 | 1.16 | 2.63 | 1.16 | 1.23 |
| 6 | 1st | 0.38 | 0.80 | 0.66 | 1.75 | 0.91 | 0.56 | 0.61 | 1.88 | 0.73 | 0.84 | 0.34 | 0.80 | 0.89 | 0.80 | 2.05 | 2.38 | 1.47 | 0.66 | 0.70 | 1.06 | 1.41 |
| | 2nd | 0.38 | 0.80 | 0.66 | 1.83 | 0.91 | 0.56 | 0.66 | 1.88 | 0.73 | 0.84 | 0.34 | 0.80 | 0.89 | 0.80 | 2.05 | 2.06 | 1.47 | 0.66 | 0.70 | 1.06 | 1.41 |
| | 3rd | 0.38 | 0.80 | 0.66 | 1.77 | 0.91 | 0.56 | 0.52 | 1.88 | 0.73 | 0.84 | 0.34 | 0.80 | 0.89 | 0.80 | 2.05 | 2.06 | 1.47 | 0.66 | 0.70 | 1.06 | 1.41 |
| 7 | 1st | 1.47 | 1.47 | 0.33 | 2.61 | 0.23 | 1.22 | 2.47 | 1.09 | 1.69 | 1.20 | 1.50 | 1.48 | 0.52 | 0.63 | 0.94 | 0.94 | 1.59 | 1.33 | 1.06 | 1.67 | 1.95 |
| | 2nd | 1.47 | 1.34 | 0.33 | 2.42 | 0.28 | 1.22 | 2.19 | 0.69 | 1.69 | 1.20 | 1.50 | 1.48 | 0.66 | 0.63 | 0.78 | 0.23 | 1.59 | 1.33 | 1.06 | 1.67 | 1.95 |
| | 3rd | 1.47 | 0.98 | 0.33 | 2.42 | 0.28 | 1.22 | 1.88 | 0.69 | 1.69 | 1.20 | 1.50 | 1.48 | 0.66 | 0.63 | 0.78 | 0.75 | 1.59 | 1.33 | 1.06 | 1.67 | 1.95 |
| 8 | 1st | 1.84 | 1.34 | 1.30 | 2.39 | 1.88 | 1.47 | 0.66 | 1.56 | 2.59 | 0.75 | 2.08 | 1.23 | 1.00 | 2.11 | 1.06 | 0.56 | 1.95 | 0.67 | 2.67 | 1.47 | 1.58 |
| | 2nd | 1.84 | 1.34 | 1.30 | 2.20 | 1.78 | 1.47 | 0.58 | 1.56 | 2.59 | 0.75 | 2.08 | 1.23 | 1.00 | 2.11 | 1.06 | 0.56 | 1.95 | 0.67 | 2.38 | 1.47 | 1.58 |
| | 3rd | 1.84 | 1.34 | 1.30 | 1.86 | 1.58 | 1.47 | 0.58 | 1.56 | 2.59 | 0.75 | 2.08 | 1.23 | 1.00 | 2.11 | 1.06 | 0.56 | 1.95 | 0.67 | 2.00 | 1.47 | 1.58 |
| 9 | 1st | 1.13 | 1.63 | 2.02 | 2.69 | 1.78 | 1.05 | 1.08 | 2.13 | 2.63 | 0.78 | 1.69 | 2.05 | 1.77 | 2.33 | 2.33 | 0.39 | 1.91 | 1.25 | 2.75 | 1.13 | 1.70 |
| | 2nd | 1.13 | 1.63 | 2.02 | 2.22 | 1.86 | 1.05 | 1.08 | 2.13 | 2.63 | 0.78 | 1.69 | 2.05 | 1.77 | 2.33 | 2.33 | 0.39 | 1.91 | 1.25 | 2.80 | 1.13 | 1.70 |
| | 3rd | 1.13 | 1.63 | 2.02 | 2.20 | 1.70 | 1.05 | 1.08 | 2.13 | 2.63 | 0.78 | 1.69 | 2.05 | 1.77 | 2.33 | 2.33 | 0.39 | 1.91 | 1.25 | 2.94 | 1.13 | 1.70 |
| 10 | 1st | 1.81 | 2.09 | 1.66 | 2.25 | 1.67 | 0.86 | 1.33 | 2.23 | 2.06 | 0.89 | 1.36 | 0.67 | 1.86 | 2.73 | 2.52 | 0.61 | 1.44 | 1.23 | 2.83 | 1.06 | 2.00 |
| | 2nd | 1.81 | 2.09 | 1.66 | 2.13 | 1.67 | 0.86 | 1.33 | 2.23 | 2.06 | 0.92 | 1.36 | 0.67 | 1.86 | 2.56 | 2.59 | 0.61 | 1.44 | 1.23 | 2.83 | 1.06 | 2.00 |
| | 3rd | 1.81 | 2.09 | 1.66 | 2.00 | 1.67 | 0.86 | 1.33 | 2.23 | 2.06 | 1.06 | 1.36 | 0.67 | 1.86 | 2.72 | 2.59 | 0.61 | 1.44 | 1.23 | 2.83 | 1.06 | 2.00 |
| 11 | 1st | 1.34 | 0.94 | 1.06 | 3.23 | 0.95 | 0.38 | 0.19 | 2.00 | 1.64 | 0.78 | 1.08 | 0.52 | 1.14 | 1.89 | 2.13 | 1.23 | 0.97 | 1.28 | 1.88 | 1.16 | 1.75 |
| | 2nd | 1.34 | 0.94 | 1.06 | 2.36 | 0.95 | 0.38 | 0.19 | 2.00 | 1.64 | 0.78 | 1.08 | 0.52 | 1.14 | 1.89 | 2.13 | 1.23 | 0.97 | 1.28 | 1.88 | 1.16 | 1.75 |
| | 3rd | 1.34 | 0.94 | 1.06 | 2.27 | 0.95 | 0.38 | 0.19 | 2.00 | 1.64 | 0.78 | 1.08 | 0.52 | 1.14 | 1.89 | 2.13 | 1.23 | 0.97 | 1.28 | 1.88 | 1.16 | 1.75 |
| 12 | 1st | 1.08 | 0.56 | 0.48 | 2.28 | 0.83 | 0.55 | 0.75 | 1.44 | 0.89 | 0.86 | 1.16 | 0.86 | 1.39 | 1.55 | 2.64 | 2.44 | 1.45 | 1.16 | 0.42 | 0.94 | 1.23 |
| | 2nd | 0.64 | 0.56 | 0.48 | 1.98 | 0.83 | 0.55 | 0.75 | 1.44 | 0.88 | 0.86 | 1.16 | 0.86 | 1.39 | 1.55 | 2.59 | 2.28 | 1.45 | 1.16 | 0.50 | 0.94 | 1.23 |
| | 3rd | 0.64 | 0.56 | 0.48 | 1.72 | 0.83 | 0.55 | 1.08 | 1.44 | 0.88 | 0.86 | 1.16 | 0.86 | 1.39 | 1.55 | 2.84 | 2.28 | 1.45 | 1.16 | 0.50 | 0.94 | 1.23 |
| 13 | 1st | 0.88 | 1.36 | 0.61 | 2.39 | 0.38 | 0.91 | 2.80 | 1.59 | 0.88 | 1.50 | 1.69 | 2.00 | 1.22 | 1.52 | 1.55 | 0.84 | 0.84 | 1.20 | 0.53 | 1.55 | 1.11 |
| | 2nd | 0.88 | 1.28 | 0.61 | 2.39 | 0.38 | 0.91 | 2.09 | 1.28 | 0.81 | 1.27 | 1.73 | 1.94 | 0.84 | 1.52 | 1.55 | 0.92 | 0.84 | 1.20 | 0.66 | 1.55 | 1.11 |
| | 3rd | 0.88 | 0.86 | 0.61 | 2.39 | 0.38 | 0.91 | 1.89 | 1.34 | 0.81 | 1.27 | 1.73 | 1.91 | 0.84 | 1.52 | 1.55 | 0.92 | 0.84 | 1.20 | 0.66 | 1.55 | 1.11 |
| 14 | 1st | 0.67 | 1.83 | 0.67 | 1.67 | 1.94 | 1.63 | 0.41 | 1.02 | 0.78 | 0.80 | 2.09 | 2.05 | 2.25 | 1.39 | 1.88 | 0.97 | 2.00 | 0.95 | 1.30 | 1.67 | 1.05 |
| | 2nd | 0.67 | 1.83 | 0.67 | 1.67 | 1.94 | 1.63 | 0.41 | 1.02 | 0.78 | 0.80 | 2.05 | 2.05 | 2.25 | 1.39 | 1.88 | 0.97 | 2.00 | 0.95 | 1.30 | 1.67 | 1.05 |
| | 3rd | 0.67 | 1.83 | 0.67 | 1.67 | 1.94 | 1.63 | 0.41 | 1.02 | 0.78 | 0.80 | 2.05 | 2.05 | 2.25 | 1.39 | 1.88 | 0.97 | 2.00 | 0.95 | 1.30 | 1.67 | 1.05 |
| 15 | 1st | 1.83 | 1.98 | 2.75 | 2.52 | 2.14 | 1.14 | 0.47 | 1.89 | 1.67 | 1.27 | 2.28 | 2.38 | 1.88 | 1.16 | 1.66 | 2.34 | 2.25 | 1.89 | 2.20 | 1.88 | 1.42 |
| | 2nd | 1.83 | 1.98 | 2.75 | 2.52 | 1.73 | 1.06 | 0.47 | 1.89 | 1.67 | 1.27 | 2.28 | 2.38 | 1.88 | 1.16 | 1.66 | 2.28 | 2.25 | 1.89 | 2.20 | 1.88 | 1.42 |
| | 3rd | 1.83 | 1.98 | 2.75 | 2.52 | 1.73 | 1.06 | 0.47 | 1.89 | 1.67 | 1.27 | 2.28 | 2.38 | 1.88 | 1.16 | 1.66 | 2.28 | 2.25 | 1.89 | 2.20 | 1.88 | 1.42 |
| 16 | 1st | 2.84 | 2.09 | 1.34 | 1.98 | 0.91 | 1.23 | 1.03 | 1.64 | 1.73 | 1.22 | 1.95 | 1.72 | 3.06 | 2.55 | 1.73 | 1.42 | 1.72 | 0.47 | 2.72 | 0.83 | 2.03 |
| | 2nd | 2.94 | 2.09 | 1.34 | 1.98 | 0.89 | 1.13 | 1.03 | 1.64 | 1.73 | 1.22 | 1.95 | 1.72 | 3.38 | 2.55 | 1.73 | 1.42 | 1.72 | 0.47 | 2.72 | 0.83 | 2.03 |
| | 3rd | 2.69 | 2.09 | 1.34 | 1.98 | 0.89 | 1.13 | 1.03 | 1.64 | 1.73 | 1.22 | 1.95 | 1.72 | 3.13 | 2.55 | 1.73 | 1.42 | 1.72 | 0.47 | 2.72 | 0.83 | 2.03 |
| 17 | 1st | 1.80 | 1.17 | 0.45 | 2.58 | 0.09 | 1.08 | 0.70 | 1.22 | 2.20 | 1.22 | 1.42 | 1.44 | 1.17 | 1.20 | 2.13 | 2.38 | 0.80 | 1.02 | 2.02 | 1.73 | 2.00 |
| | 2nd | 1.80 | 1.17 | 0.42 | 2.30 | 0.09 | 1.08 | 0.61 | 1.22 | 2.20 | 1.22 | 1.42 | 1.44 | 1.17 | 1.20 | 2.13 | 2.47 | 0.80 | 1.02 | 2.02 | 1.73 | 2.00 |
| | 3rd | 1.80 | 1.17 | 0.55 | 2.34 | 0.09 | 1.08 | 0.33 | 1.22 | 2.20 | 1.22 | 1.42 | 1.44 | 1.17 | 1.20 | 2.13 | 1.84 | 0.80 | 1.02 | 2.02 | 1.73 | 2.00 |
| 18 | 1st | 0.61 | 0.23 | 0.81 | 1.39 | 0.56 | 1.41 | 0.28 | 1.83 | 1.13 | 1.03 | 1.38 | 0.77 | 1.38 | 1.27 | 3.17 | 1.38 | 2.14 | 0.81 | 0.52 | 1.17 | 1.34 |
| | 2nd | 0.61 | 0.14 | 0.81 | 1.33 | 0.66 | 1.41 | 0.28 | 1.83 | 1.13 | 0.94 | 1.38 | 0.77 | 1.38 | 1.27 | 2.53 | 1.03 | 2.14 | 0.81 | 0.52 | 1.17 | 1.34 |
| | 3rd | 0.61 | 0.38 | 0.81 | 1.33 | 0.56 | 1.41 | 0.28 | 1.83 | 1.13 | 0.75 | 1.38 | 0.77 | 1.38 | 1.27 | 2.80 | 1.00 | 2.14 | 0.81 | 0.52 | 1.17 | 1.34 |
| 19 | 1st | 1.22 | 0.55 | 0.50 | 2.05 | 0.19 | 1.33 | 1.23 | 0.69 | 0.55 | 0.86 | 2.55 | 1.13 | 1.28 | 1.77 | 1.44 | 0.36 | 1.58 | 1.17 | 0.78 | 0.48 | 0.97 |
| | 2nd | 1.22 | 0.23 | 0.50 | 2.05 | 0.47 | 1.33 | 1.23 | 0.52 | 0.55 | 0.86 | 2.17 | 1.13 | 1.28 | 1.77 | 1.44 | 0.36 | 1.58 | 1.17 | 0.69 | 0.48 | 0.97 |
| | 3rd | 1.22 | 0.23 | 0.50 | 2.05 | 0.47 | 1.33 | 1.23 | 0.52 | 0.55 | 0.86 | 1.64 | 1.13 | 1.28 | 1.77 | 1.44 | 0.36 | 1.58 | 1.17 | 0.69 | 0.48 | 0.97 |
| 20 | 1st | 1.28 | 1.81 | 0.56 | 2.36 | 1.92 | 2.02 | 0.95 | 1.11 | 1.72 | 0.69 | 2.70 | 0.52 | 1.53 | 1.56 | 1.22 | 1.33 | 1.75 | 1.66 | 0.84 | 1.92 | 1.83 |
| | 2nd | 1.28 | 1.97 | 0.56 | 2.58 | 1.66 | 2.02 | 0.91 | 1.11 | 1.72 | 0.69 | 2.08 | 0.70 | 1.53 | 1.56 | 1.22 | 1.33 | 1.75 | 1.66 | 0.84 | 1.92 | 1.83 |
| | 3rd | 1.28 | 1.77 | 0.56 | 2.52 | 1.58 | 2.02 | 0.91 | 1.11 | 1.72 | 0.69 | 2.11 | 0.70 | 1.53 | 1.56 | 1.22 | 1.33 | 1.75 | 1.66 | 0.84 | 1.92 | 1.83 |
| 21 | 1st | 1.64 | 1.05 | 1.97 | 2.11 | 2.09 | 1.36 | 0.80 | 1.55 | 1.00 | 2.06 | 2.36 | 1.80 | 1.89 | 1.73 | 1.03 | 1.97 | 1.75 | 2.38 | 0.69 | 2.05 | 1.97 |
| | 2nd | 1.64 | 1.05 | 1.97 | 2.11 | 2.09 | 1.06 | 0.80 | 1.55 | 1.00 | 2.06 | 2.36 | 1.80 | 1.89 | 1.73 | 1.03 | 1.97 | 1.75 | 2.38 | 0.69 | 2.05 | 1.97 |
| | 3rd | 1.64 | 1.05 | 1.97 | 2.11 | 2.09 | 1.06 | 0.80 | 1.55 | 1.00 | 2.06 | 2.36 | 1.80 | 1.89 | 1.73 | 1.03 | 1.97 | 1.75 | 2.38 | 0.69 | 2.05 | 1.97 |
| 22 | 1st | 2.41 | 1.73 | 1.25 | 1.97 | 1.83 | 0.81 | 1.50 | 1.48 | 1.73 | 1.42 | 0.72 | 2.05 | 2.58 | 2.13 | 2.00 | 0.75 | 2.17 | 1.44 | 2.39 | 1.31 | 1.78 |
| | 2nd | 2.41 | 1.73 | 1.25 | 1.97 | 1.73 | 1.17 | 1.50 | 1.48 | 1.73 | 1.42 | 0.72 | 2.05 | 2.58 | 2.13 | 2.00 | 0.66 | 2.17 | 1.44 | 2.39 | 1.31 | 1.78 |
| | 3rd | 2.41 | 1.73 | 1.25 | 1.97 | 1.63 | 1.14 | 1.50 | 1.48 | 1.73 | 1.42 | 0.72 | 2.05 | 2.58 | 2.13 | 2.00 | 0.66 | 2.17 | 1.44 | 2.39 | 1.31 | 1.78 |
| 23 | 1st | 2.08 | 0.88 | 0.38 | 2.02 | 1.53 | 1.53 | 0.42 | 1.89 | 0.94 | 0.80 | 0.69 | 1.25 | 1.67 | 1.16 | 1.97 | 2.00 | 1.50 | 1.02 | 1.64 | 1.84 | 1.98 |
| | 2nd | 2.08 | 0.88 | 0.38 | 2.08 | 1.58 | 1.53 | 0.42 | 1.89 | 0.94 | 0.80 | 0.69 | 1.25 | 1.67 | 1.16 | 1.97 | 1.28 | 1.50 | 1.02 | 1.64 | 1.84 | 1.98 |
| | 3rd | 2.08 | 0.88 | 0.38 | 2.08 | 1.58 | 1.53 | 0.42 | 1.89 | 0.94 | 0.80 | 0.69 | 1.25 | 1.67 | 1.16 | 1.97 | 1.47 | 1.50 | 1.02 | 1.64 | 1.84 | 1.98 |
| 24 | 1st | 0.77 | 0.28 | 0.91 | 0.83 | 1.25 | 1.34 | 0.61 | 2.89 | 2.17 | 0.78 | 1.47 | 0.73 | 1.56 | 1.00 | 1.22 | 0.88 | 1.81 | 0.56 | 0.70 | 1.13 | 1.69 |
| | 2nd | 0.77 | 0.28 | 0.91 | 0.78 | 1.25 | 1.34 | 0.33 | 2.89 | 2.17 | 0.78 | 1.47 | 0.73 | 1.56 | 1.00 | 1.22 | 0.64 | 1.81 | 0.56 | 0.70 | 1.13 | 1.69 |
| | 3rd | 0.77 | 0.28 | 0.91 | 0.78 | 1.25 | 1.34 | 0.33 | 2.89 | 2.17 | 0.78 | 1.47 | 0.73 | 1.56 | 1.00 | 1.22 | 0.64 | 1.81 | 0.56 | 0.70 | 1.13 | 1.69 |
| 25 | 1st | 1.16 | 0.00 | 0.28 | 2.23 | 0.39 | 0.84 | 1.61 | 0.91 | 1.69 | 0.95 | 1.63 | 1.05 | 0.72 | 1.42 | 1.44 | 0.58 | 1.58 | 1.16 | 1.03 | 1.05 | 1.36 |
| | 2nd | 1.16 | 0.00 | 0.28 | 2.45 | 0.39 | 0.84 | 1.16 | 0.91 | 1.69 | 0.95 | 1.45 | 1.05 | 0.72 | 1.42 | 1.44 | 0.58 | 1.56 | 1.16 | 0.91 | 1.05 | 1.36 |
| | 3rd | 1.16 | 0.00 | 0.28 | 2.45 | 0.39 | 0.84 | 1.42 | 0.91 | 1.69 | 0.95 | 1.41 | 1.05 | 0.72 | 1.42 | 1.44 | 0.58 | 1.31 | 1.16 | 0.91 | 1.05 | 1.36 |
| 26 | 1st | 2.95 | 2.55 | 0.50 | 1.95 | 1.77 | 1.28 | 1.72 | 1.30 | 1.48 | 0.80 | 1.44 | 0.55 | 1.50 | 2.34 | 0.73 | 1.56 | 1.98 | 0.89 | 0.92 | 1.00 | 1.30 |
| | 2nd | 2.95 | 2.44 | 0.48 | 2.11 | 1.55 | 1.28 | 1.72 | 1.30 | 1.48 | 0.66 | 1.13 | 0.55 | 1.50 | 2.34 | 0.73 | 1.56 | 1.98 | 0.89 | 1.23 | 1.00 | 1.30 |
| | 3rd | 2.95 | 2.69 | 0.48 | 2.11 | 1.64 | 1.28 | 1.72 | 1.30 | 1.48 | 0.80 | 1.20 | 0.55 | 1.50 | 2.34 | 0.73 | 1.56 | 1.98 | 0.89 | 1.23 | 1.00 | 1.30 |
| 27 | 1st | 2.05 | 1.88 | 2.36 | 2.38 | 1.98 | 1.02 | 0.88 | 1.84 | 1.28 | 0.75 | 2.48 | 1.44 | 1.14 | 2.47 | 0.89 | 2.52 | 1.81 | 1.89 | 1.39 | 1.55 | 1.50 |
| | 2nd | 2.05 | 1.88 | 2.36 | 2.38 | 1.98 | 1.02 | 0.88 | 1.84 | 1.28 | 0.91 | 2.48 | 1.44 | 1.14 | 2.30 | 0.89 | 2.63 | 1.81 | 1.89 | 1.39 | 1.55 | 1.50 |
| | 3rd | 2.05 | 1.88 | 2.36 | 2.38 | 1.98 | 1.02 | 0.88 | 1.84 | 1.28 | 0.91 | 2.48 | 1.44 | 1.14 | 2.30 | 0.89 | 2.34 | 1.81 | 1.89 | 1.39 | 1.55 | 1.50 |
| 28 | 1st | 1.89 | 1.77 | 1.55 | 3.78 | 1.55 | 1.50 | 1.44 | 2.39 | 0.95 | 1.31 | 1.88 | 2.09 | 1.97 | 1.83 | 2.02 | 1.42 | 1.69 | 0.89 | 1.67 | 2.33 | 2.05 |
| | 2nd | 1.89 | 1.77 | 1.55 | 3.70 | 1.55 | 1.50 | 1.44 | 2.39 | 0.95 | 1.31 | 1.88 | 2.09 | 1.97 | 1.83 | 2.02 | 1.67 | 1.69 | 0.89 | 1.67 | 2.33 | 2.05 |
| | 3rd | 1.89 | 1.77 | 1.55 | 3.75 | 1.55 | 1.50 | 1.44 | 2.39 | 0.95 | 1.31 | 1.88 | 2.09 | 1.97 | 1.83 | 2.02 | 1.70 | 1.69 | 0.89 | 1.67 | 2.33 | 2.05 |
| 29 | 1st | 1.73 | 1.56 | 0.33 | 1.73 | 2.19 | 0.75 | 0.52 | 1.22 | 1.86 | 1.13 | 0.72 | 1.70 | 1.31 | 1.31 | 2.23 | 1.23 | 1.70 | 0.52 | 2.34 | 1.73 | 1.97 |
| | 2nd | 1.73 | 1.56 | 0.33 | 1.73 | 2.19 | 0.75 | 0.52 | 1.22 | 1.86 | 1.13 | 0.72 | 1.70 | 1.31 | 1.13 | 2.23 | 1.38 | 1.70 | 0.52 | 2.28 | 1.69 | 1.89 |
| | 3rd | 1.73 | 1.56 | 0.33 | 1.73 | 2.19 | 0.75 | 0.52 | 1.22 | 1.86 | 1.13 | 0.72 | 1.70 | 1.31 | 1.13 | 2.23 | 1.38 | 1.70 | 0.52 | 1.89 | 1.69 | 1.69 |
| 30 | 1st | 0.78 | 0.75 | 0.30 | 1.83 | 1.38 | 1.30 | 1.03 | 1.50 | 1.78 | 1.20 | 0.48 | 1.77 | 1.41 | 0.61 | 2.13 | 1.47 | 0.94 | 0.50 | 0.42 | 0.52 | 1.03 |
| | 2nd | 1.09 | 0.75 | 0.30 | 2.13 | 1.38 | 1.30 | 1.03 | 1.50 | 1.78 | 1.20 | 0.48 | 1.77 | 1.41 | 0.61 | 2.13 | 1.47 | 0.94 | 0.50 | 0.42 | 0.52 | 1.03 |
| | 3rd | 0.81 | 0.75 | 0.30 | 2.13 | 1.38 | 1.30 | 1.03 | 1.50 | 1.78 | 1.20 | 0.48 | 1.77 | 1.41 | 0.61 | 2.13 | 1.47 | 0.94 | 0.50 | 0.42 | 0.52 | 1.03 |
| 31 | 1st | 2.28 | 1.02 | 0.28 | 1.14 | 0.95 | 1.23 | 0.91 | 1.33 | 1.59 | 0.83 | 1.41 | 1.63 | 1.02 | 1.72 | 0.50 | 0.66 | 1.91 | 1.44 | 0.58 | 1.34 | 1.94 |
| | 2nd | 2.14 | 1.16 | 0.28 | 1.14 | 0.95 | 1.23 | 1.27 | 1.33 | 1.59 | 0.83 | 0.94 | 1.63 | 1.02 | 1.72 | 0.50 | 0.83 | 1.91 | 1.44 | 0.58 | 1.34 | 1.94 |
| | 3rd | 2.05 | 1.13 | 0.28 | 1.14 | 0.95 | 1.23 | 1.27 | 1.33 | 1.59 | 0.83 | 0.95 | 1.63 | 1.02 | 1.72 | 0.50 | 0.83 | 1.91 | 1.44 | 0.58 | 1.34 | 1.94 |
| 32 | 1st | 1.53 | 1.70 | 0.88 | 2.39 | 1.39 | 1.84 | 1.36 | 0.80 | 1.72 | 0.91 | 2.23 | 2.30 | 1.61 | 1.73 | 2.03 | 0.75 | 2.13 | 0.80 | 0.75 | 1.44 | 1.63 |
| | 2nd | 1.53 | 1.70 | 0.88 | 2.33 | 1.13 | 1.84 | 1.36 | 0.80 | 1.77 | 0.91 | 1.95 | 2.30 | 1.61 | 1.73 | 2.03 | 0.73 | 2.13 | 0.80 | 0.75 | 1.44 | 1.63 |
| | 3rd | 1.53 | 1.70 | 0.88 | 2.08 | 1.13 | 1.84 | 1.36 | 0.80 | 1.77 | 0.91 | 2.19 | 2.30 | 1.61 | 1.73 | 2.03 | 0.70 | 2.13 | 0.80 | 0.75 | 1.44 | 1.63 |
| 33 | 1st | 2.17 | 1.56 | 1.52 | 2.95 | 2.02 | 1.59 | 1.47 | 1.80 | 0.98 | 0.80 | 1.83 | 2.36 | 1.80 | 1.75 | 1.88 | 2.02 | 0.92 | 1.55 | 0.55 | 1.39 | 1.77 |
| | 2nd | 2.17 | 1.56 | 1.52 | 2.38 | 2.02 | 1.59 | 1.47 | 1.80 | 0.98 | 0.80 | 1.83 | 2.36 | 1.80 | 1.84 | 1.88 | 2.28 | 0.92 | 1.55 | 0.55 | 1.39 | 1.77 |
| | 3rd | 2.17 | 1.56 | 1.52 | 2.38 | 2.02 | 1.59 | 1.47 | 1.80 | 0.98 | 0.80 | 1.83 | 2.36 | 1.80 | 1.84 | 1.88 | 2.28 | 0.92 | 1.55 | 0.55 | 1.39 | 1.77 |
| 34 | 1st | 1.48 | 2.64 | 1.31 | 2.86 | 1.56 | 1.34 | 2.39 | 1.75 | 1.28 | 0.97 | 0.92 | 2.97 | 2.06 | 2.27 | 1.33 | 0.91 | 0.88 | 0.92 | 1.19 | 1.58 | 1.59 |
| | 2nd | 1.48 | 2.64 | 1.31 | 2.91 | 1.56 | 1.34 | 2.39 | 1.75 | 1.28 | 0.97 | 0.92 | 2.97 | 2.06 | 2.27 | 1.33 | 0.91 | 0.88 | 0.92 | 1.19 | 1.61 | 1.59 |
| | 3rd | 1.48 | 2.64 | 1.31 | 2.91 | 1.56 | 1.34 | 2.39 | 1.75 | 1.28 | 0.97 | 0.92 | 2.97 | 2.06 | 2.27 | 1.33 | 0.91 | 0.88 | 0.92 | 1.19 | 1.61 | 1.59 |
| 35 | 1st | 0.80 | 1.02 | 0.14 | 2.45 | 1.92 | 0.47 | 1.16 | 1.55 | 2.36 | 1.91 | 1.97 | 2.39 | 1.53 | 1.30 | 2.41 | 2.63 | 1.33 | 0.77 | 2.22 | 1.48 | 0.41 |
| | 2nd | 0.80 | 1.02 | 0.14 | 2.47 | 1.92 | 0.47 | 1.16 | 1.55 | 2.36 | 1.91 | 1.97 | 2.39 | 1.53 | 1.30 | 2.41 | 2.27 | 1.33 | 0.77 | 2.22 | 1.48 | 0.41 |
| | 3rd | 0.80 | 1.02 | 0.14 | 2.16 | 1.92 | 0.47 | 1.16 | 1.55 | 2.36 | 1.91 | 1.97 | 2.39 | 1.53 | 1.30 | 2.41 | 2.27 | 1.33 | 0.77 | 2.22 | 1.48 | 0.41 |
| 36 | 1st | 1.19 | 0.84 | 0.64 | 3.33 | 0.45 | 0.66 | 1.95 | 1.69 | 2.03 | 2.17 | 0.75 | 1.36 | 1.16 | 0.75 | 1.91 | 1.73 | 1.23 | 0.80 | 0.09 | 0.80 | 1.05 |
| | 2nd | 1.19 | 0.84 | 0.59 | 2.64 | 0.45 | 0.66 | 1.95 | 1.69 | 2.03 | 2.17 | 0.75 | 1.36 | 1.16 | 0.75 | 1.91 | 1.22 | 1.23 | 0.80 | 0.09 | 0.80 | 1.05 |
| | 3rd | 1.19 | 0.84 | 0.59 | 2.64 | 0.45 | 0.66 | 1.95 | 1.69 | 2.03 | 2.17 | 0.75 | 1.36 | 1.16 | 0.75 | 1.91 | 1.06 | 1.23 | 0.80 | 0.09 | 0.80 | 1.05 |
| 37 | 1st | 1.84 | 1.63 | 0.42 | 0.69 | 0.72 | 1.16 | 0.56 | 1.25 | 0.94 | 1.36 | 1.91 | 2.00 | 0.92 | 1.61 | 0.66 | 0.25 | 2.33 | 1.13 | 1.52 | 0.86 | 2.13 |
| | 2nd | 1.84 | 1.41 | 0.42 | 0.69 | 0.72 | 1.16 | 0.56 | 1.25 | 0.94 | 1.36 | 1.55 | 2.00 | 0.91 | 1.61 | 0.66 | 0.25 | 2.33 | 1.13 | 1.52 | 0.86 | 2.02 |
| | 3rd | 1.84 | 1.41 | 0.42 | 0.69 | 0.72 | 1.16 | 0.56 | 1.25 | 0.94 | 1.36 | 1.55 | 2.00 | 0.94 | 1.61 | 0.66 | 0.25 | 2.33 | 1.13 | 1.52 | 0.86 | 1.83 |
| 38 | 1st | 0.72 | 1.08 | 1.11 | 2.16 | 1.20 | 1.52 | 1.92 | 1.14 | 1.27 | 0.92 | 2.66 | 2.47 | 0.86 | 1.47 | 2.05 | 0.78 | 1.98 | 0.86 | 0.66 | 1.55 | 1.83 |
| | 2nd | 0.72 | 1.08 | 1.11 | 2.16 | 1.20 | 1.52 | 1.69 | 1.14 | 1.27 | 0.92 | 2.33 | 2.47 | 0.86 | 1.47 | 2.05 | 0.78 | 1.98 | 0.86 | 0.66 | 1.55 | 1.83 |
| | 3rd | 0.72 | 1.08 | 1.11 | 2.16 | 1.20 | 1.52 | 1.63 | 1.14 | 1.27 | 0.92 | 2.44 | 2.47 | 0.86 | 1.47 | 2.05 | 0.78 | 1.98 | 0.86 | 0.66 | 1.55 | 1.83 |
| 39 | 1st | 1.09 | 1.77 | 0.75 | 2.14 | 1.48 | 1.20 | 1.34 | 2.27 | 1.48 | 1.27 | 2.02 | 2.52 | 1.34 | 1.69 | 1.81 | 2.19 | 0.84 | 0.70 | 0.80 | 2.02 | 2.23 |
| | 2nd | 1.09 | 1.77 | 0.58 | 2.14 | 1.48 | 1.20 | 1.34 | 2.27 | 1.48 | 1.27 | 2.03 | 2.52 | 1.34 | 1.69 | 1.81 | 1.88 | 0.84 | 0.70 | 0.80 | 2.02 | 2.23 |
| | 3rd | 1.09 | 1.77 | 0.58 | 2.14 | 1.48 | 1.20 | 1.34 | 2.27 | 1.48 | 1.27 | 1.97 | 2.52 | 1.34 | 1.69 | 1.81 | 1.88 | 0.84 | 0.70 | 0.80 | 2.02 | 2.23 |
| 40 | 1st | 1.69 | 1.58 | 0.78 | 1.69 | 2.27 | 1.56 | 2.08 | 2.06 | 1.14 | 1.34 | 1.66 | 1.94 | 1.53 | 1.94 | 1.34 | 1.72 | 1.38 | 0.47 | 1.64 | 1.61 | 1.08 |
| | 2nd | 1.69 | 1.58 | 0.78 | 1.69 | 2.27 | 1.48 | 2.08 | 2.06 | 1.14 | 1.34 | 1.58 | 1.94 | 1.53 | 1.94 | 1.34 | 1.72 | 1.38 | 0.47 | 1.64 | 1.61 | 1.08 |
| | 3rd | 1.69 | 1.58 | 0.78 | 1.69 | 2.27 | 1.41 | 2.08 | 2.06 | 1.14 | 1.34 | 1.58 | 1.94 | 1.53 | 1.94 | 1.34 | 1.72 | 1.38 | 0.47 | 1.64 | 1.61 | 1.08 |
| 41 | 1st | 1.11 | 1.70 | 0.47 | 1.31 | 1.17 | 1.27 | 1.64 | 1.59 | 1.70 | 1.53 | 0.61 | 0.91 | 1.41 | 1.39 | 1.67 | 2.44 | 1.06 | 1.22 | 1.44 | 1.13 | 1.52 |

| 2nd | 1.11 | 1.70 | 0.47 | 1.31 | 1.17 | 1.27 | 1.64 | 1.59 | 1.70 | 1.53 | 0.61 | 0.91 | 1.41 | 1.39 | 1.67 | 2.28 | 0.94 | 1.22 | 1.44 | 1.13 | 1.47 | |

| 3rd | 1.11 | 1.70 | 0.47 | 1.31 | 1.17 | 1.27 | 1.64 | 1.59 | 1.70 | 1.53 | 0.61 | 0.91 | 1.41 | 1.39 | 1.67 | 2.16 | 0.94 | 1.22 | 1.44 | 1.13 | 1.56 | |

| 42 | 1st | 0.19 | 0.95 | 0.56 | 1.92 | 1.83 | 1.50 | 1.83 | 2.08 | 1.94 | 1.91 | 0.70 | 0.80 | 1.61 | 1.31 | 1.98 | 0.70 | 0.98 | 0.89 | 0.19 | 1.78 | 1.61 |

| 2nd | 0.19 | 0.95 | 0.56 | 1.91 | 1.83 | 1.50 | 1.83 | 2.08 | 1.94 | 1.91 | 0.70 | 0.80 | 1.61 | 1.31 | 1.98 | 0.70 | 0.98 | 0.89 | 1.08 | 1.78 | 1.61 | |

| 3rd | 0.19 | 0.95 | 0.56 | 1.91 | 1.83 | 1.50 | 1.83 | 2.08 | 1.94 | 1.91 | 0.70 | 0.80 | 1.61 | 1.31 | 1.98 | 0.70 | 0.98 | 0.89 | 1.22 | 1.78 | 1.61 | |

| 43 | 1st | 1.72 | 1.30 | 0.75 | 0.42 | 0.75 | 1.42 | 0.92 | 1.70 | 1.25 | 1.25 | 0.84 | 1.13 | 0.94 | 0.73 | 0.44 | 0.75 | 2.03 | 1.56 | 1.22 | 1.02 | 1.44 |

| 2nd | 1.84 | 1.14 | 0.75 | 0.42 | 0.75 | 1.42 | 0.75 | 1.70 | 1.25 | 1.25 | 0.84 | 1.08 | 0.94 | 0.73 | 0.44 | 0.77 | 2.03 | 1.56 | 1.22 | 1.02 | 1.44 | |

| 3rd | 1.58 | 1.14 | 0.75 | 0.42 | 0.75 | 1.42 | 0.75 | 1.70 | 1.25 | 1.25 | 0.84 | 1.08 | 0.94 | 0.73 | 0.44 | 0.77 | 2.03 | 1.56 | 1.22 | 1.02 | 1.44 | |

| 44 | 1st | 1.48 | 2.88 | 1.27 | 1.53 | 1.42 | 1.17 | 2.11 | 1.38 | 0.69 | 1.38 | 1.28 | 2.36 | 1.78 | 2.30 | 0.23 | 0.69 | 1.25 | 1.55 | 0.42 | 0.81 | 1.97 |

| 2nd | 1.48 | 2.30 | 1.27 | 1.53 | 1.42 | 1.17 | 1.80 | 1.38 | 0.69 | 1.38 | 1.28 | 2.36 | 1.78 | 2.30 | 0.23 | 0.69 | 1.25 | 1.55 | 0.42 | 0.81 | 1.97 | |

| 3rd | 1.48 | 2.42 | 1.27 | 1.53 | 1.42 | 1.17 | 1.73 | 1.38 | 0.69 | 1.38 | 1.28 | 2.36 | 1.78 | 2.30 | 0.23 | 0.69 | 1.25 | 1.55 | 0.42 | 0.81 | 1.97 | |

| 45 | 1st | 1.09 | 1.97 | 0.52 | 2.31 | 1.17 | 0.98 | 0.84 | 1.91 | 1.39 | 2.23 | 2.13 | 2.38 | 0.95 | 2.42 | 1.47 | 0.28 | 1.94 | 1.53 | 0.63 | 0.84 | 1.78 |

| 2nd | 1.09 | 1.97 | 0.52 | 2.11 | 1.17 | 0.98 | 0.84 | 1.91 | 1.39 | 2.23 | 2.13 | 2.38 | 0.95 | 2.42 | 1.47 | 0.28 | 1.94 | 1.53 | 0.64 | 0.84 | 1.78 | |

| 3rd | 1.09 | 1.97 | 0.52 | 2.17 | 1.17 | 0.98 | 0.84 | 1.91 | 1.39 | 2.23 | 2.13 | 2.38 | 0.95 | 2.42 | 1.47 | 0.28 | 1.94 | 1.53 | 0.67 | 0.84 | 1.78 | |

| 46 | 1st | 1.25 | 1.34 | 0.52 | 1.48 | 1.81 | 1.86 | 2.02 | 2.39 | 0.70 | 1.66 | 0.69 | 2.69 | 1.89 | 2.38 | 0.97 | 0.67 | 1.20 | 0.72 | 0.14 | 2.08 | 1.48 |

| 2nd | 1.25 | 1.34 | 0.52 | 1.48 | 1.81 | 1.63 | 2.02 | 2.39 | 0.70 | 1.66 | 0.69 | 2.69 | 1.89 | 2.56 | 0.97 | 0.67 | 1.20 | 0.72 | 0.14 | 1.86 | 1.48 | |

| 3rd | 1.25 | 1.34 | 0.52 | 1.48 | 1.81 | 1.92 | 2.02 | 2.39 | 0.70 | 1.66 | 0.69 | 2.69 | 1.89 | 2.31 | 0.97 | 0.67 | 1.20 | 0.72 | 0.14 | 1.81 | 1.48 | |

| 47 | 1st | 1.25 | 1.58 | 0.42 | 1.88 | 0.72 | 0.95 | 0.89 | 1.72 | 1.64 | 1.89 | 1.30 | 2.19 | 0.94 | 0.39 | 1.36 | 2.06 | 1.31 | 0.86 | 0.89 | 1.45 | 1.56 |

| 2nd | 1.25 | 1.58 | 0.28 | 1.83 | 0.72 | 1.06 | 0.89 | 1.72 | 1.64 | 1.89 | 1.30 | 2.19 | 0.94 | 0.39 | 1.45 | 2.00 | 1.22 | 0.86 | 1.22 | 1.45 | 1.56 | |

| 3rd | 1.25 | 1.58 | 0.28 | 2.27 | 0.72 | 0.94 | 0.89 | 1.72 | 1.64 | 1.89 | 1.30 | 2.19 | 0.94 | 0.39 | 1.45 | 2.17 | 1.22 | 0.86 | 1.03 | 1.45 | 1.56 | |

| 48 | 1st | 0.92 | 0.66 | 0.56 | 1.48 | 1.13 | 0.94 | 2.00 | 1.80 | 2.08 | 1.39 | 1.55 | 1.41 | 1.13 | 1.47 | 1.86 | 1.16 | 0.72 | 0.73 | 0.73 | 0.78 | 0.89 |

| 2nd | 0.92 | 0.66 | 0.56 | 1.59 | 1.13 | 0.94 | 1.89 | 1.80 | 2.08 | 1.39 | 1.55 | 1.41 | 0.73 | 1.47 | 1.86 | 0.92 | 0.72 | 0.73 | 0.73 | 0.78 | 0.89 | |

| 3rd | 0.92 | 0.66 | 0.56 | 1.66 | 1.13 | 0.94 | 1.86 | 1.80 | 2.08 | 1.39 | 1.55 | 1.41 | 0.73 | 1.47 | 1.86 | 0.92 | 0.72 | 0.73 | 0.73 | 0.78 | 0.89 | |

Observed ELA differential.

| Block | Re-Save | Img4 | Img13 | Img14 | Img15 |
|---|---|---|---|---|---|
| 1 | 1st | 0.97 | 0.81 | 1.16 | 0.52 |
| | 2nd | 0.75 | 0.81 | 1.16 | 0.52 |
| | 3rd | 0.75 | 0.81 | 1.16 | 0.52 |
| 2 | 1st | 1.20 | 1.03 | 1.64 | 1.47 |
| | 2nd | 1.28 | 1.03 | 1.64 | 1.47 |
| | 3rd | 1.28 | 1.03 | 1.64 | 1.47 |
| 3 | 1st | 1.23 | 0.91 | 1.28 | 1.84 |
| | 2nd | 1.23 | 0.91 | 1.28 | 1.84 |
| | 3rd | 1.23 | 0.91 | 1.28 | 1.84 |
| 4 | 1st | 1.47 | 1.36 | 1.94 | 1.30 |
| | 2nd | 1.23 | 1.36 | 1.67 | 1.30 |
| | 3rd | 1.23 | 1.36 | 1.67 | 1.30 |
| 5 | 1st | 1.94 | 0.98 | 1.55 | 1.77 |
| | 2nd | 1.77 | 0.98 | 1.55 | 1.77 |
| | 3rd | 1.77 | 0.98 | 1.55 | 1.77 |
| 6 | 1st | 1.38 | 0.86 | 0.63 | 1.67 |
| | 2nd | 1.38 | 0.86 | 0.63 | 1.67 |
| | 3rd | 1.38 | 0.86 | 0.63 | 1.67 |
| 7 | 1st | 2.08 | 1.02 | 0.98 | 1.00 |
| | 2nd | 2.08 | 1.02 | 0.98 | 0.75 |
| | 3rd | 2.08 | 1.02 | 0.98 | 0.75 |
| 8 | 1st | 1.61 | 1.22 | 1.67 | 1.30 |
| | 2nd | 1.36 | 1.22 | 1.67 | 1.30 |
| | 3rd | 1.36 | 1.22 | 1.67 | 1.30 |
| 9 | 1st | 1.58 | 1.22 | 1.22 | 1.17 |
| | 2nd | 1.58 | 1.22 | 1.22 | 1.17 |
| | 3rd | 1.58 | 1.22 | 1.22 | 1.17 |
| 10 | 1st | 2.16 | 1.55 | 2.41 | 1.27 |
| | 2nd | 2.16 | 1.55 | 2.41 | 1.27 |
| | 3rd | 2.16 | 1.55 | 2.41 | 1.27 |
| 11 | 1st | 2.92 | 0.95 | 1.73 | 1.66 |
| | 2nd | 2.55 | 0.95 | 1.73 | 1.66 |
| | 3rd | 2.42 | 0.95 | 1.73 | 1.66 |
| 12 | 1st | 1.78 | 1.50 | 1.36 | 2.41 |
| | 2nd | 1.78 | 1.50 | 1.36 | 2.19 |
| | 3rd | 1.78 | 1.50 | 1.36 | 2.19 |
| 13 | 1st | 1.42 | 0.84 | 1.80 | 1.61 |
| | 2nd | 1.42 | 0.84 | 1.80 | 1.61 |
| | 3rd | 1.42 | 0.84 | 1.80 | 1.61 |
| 14 | 1st | 1.45 | 1.77 | 1.25 | 1.86 |
| | 2nd | 1.45 | 1.77 | 1.25 | 1.86 |
| | 3rd | 1.45 | 1.77 | 1.25 | 1.86 |
| 15 | 1st | 1.36 | 1.80 | 1.33 | 1.80 |
| | 2nd | 1.36 | 1.80 | 1.33 | 1.80 |
| | 3rd | 1.36 | 1.80 | 1.33 | 1.80 |
| 16 | 1st | 1.95 | 2.16 | 1.30 | 1.22 |
| | 2nd | 1.95 | 2.36 | 1.30 | 1.22 |
| | 3rd | 1.95 | 2.36 | 1.30 | 1.22 |
| 17 | 1st | 1.64 | 0.91 | 1.31 | 1.95 |
| | 2nd | 1.64 | 0.91 | 1.31 | 1.95 |
| | 3rd | 1.64 | 0.91 | 1.31 | 1.95 |
| 18 | 1st | 1.70 | 1.59 | 1.28 | 2.06 |
| | 2nd | 1.70 | 1.59 | 1.28 | 2.06 |
| | 3rd | 1.70 | 1.59 | 1.28 | 2.06 |
| 19 | 1st | 1.25 | 1.23 | 1.28 | 1.34 |
| | 2nd | 1.25 | 1.23 | 1.28 | 1.34 |
| | 3rd | 1.25 | 1.23 | 1.28 | 1.34 |
| 20 | 1st | 2.52 | 1.44 | 1.83 | 1.08 |
| | 2nd | 1.86 | 1.44 | 1.83 | 1.08 |
| | 3rd | 1.70 | 1.44 | 1.83 | 1.08 |
| 21 | 1st | 1.44 | 1.84 | 1.20 | 1.16 |
| | 2nd | 1.44 | 1.84 | 1.20 | 1.16 |
| | 3rd | 1.44 | 1.84 | 1.20 | 1.16 |
| 22 | 1st | 1.02 | 1.55 | 1.64 | 1.53 |
| | 2nd | 1.02 | 1.55 | 1.64 | 1.53 |
| | 3rd | 1.02 | 1.55 | 1.64 | 1.53 |
| 23 | 1st | 2.13 | 1.83 | 1.61 | 1.45 |
| | 2nd | 2.13 | 1.83 | 1.61 | 1.45 |
| | 3rd | 2.13 | 1.83 | 1.61 | 1.45 |
| 24 | 1st | 0.94 | 1.03 | 0.78 | 0.88 |
| | 2nd | 0.94 | 1.03 | 0.78 | 0.88 |
| | 3rd | 0.94 | 1.03 | 0.78 | 0.88 |
| 25 | 1st | 1.67 | 0.75 | 0.47 | 1.28 |
| | 2nd | 1.67 | 0.75 | 0.47 | 1.28 |
| | 3rd | 1.67 | 0.75 | 0.47 | 1.28 |
| 26 | 1st | 1.73 | 1.02 | 1.30 | 1.06 |
| | 2nd | 1.73 | 1.02 | 1.30 | 1.06 |
| | 3rd | 1.73 | 1.02 | 1.30 | 1.06 |
| 27 | 1st | 1.83 | 0.81 | 1.91 | 0.98 |
| | 2nd | 1.83 | 0.81 | 1.91 | 0.98 |
| | 3rd | 1.83 | 0.81 | 1.91 | 0.98 |
| 28 | 1st | 2.59 | 2.17 | 1.25 | 1.36 |
| | 2nd | 2.59 | 2.17 | 1.25 | 1.36 |
| | 3rd | 2.59 | 2.17 | 1.25 | 1.36 |
| 29 | 1st | 1.61 | 0.98 | 1.02 | 1.50 |
| | 2nd | 1.61 | 0.98 | 1.02 | 1.50 |
| | 3rd | 1.61 | 0.98 | 1.02 | 1.50 |
| 30 | 1st | 1.31 | 1.13 | 0.78 | 1.30 |
| | 2nd | 1.36 | 1.13 | 0.78 | 1.30 |
| | 3rd | 1.36 | 1.13 | 0.78 | 1.30 |
| 31 | 1st | 0.78 | 0.88 | 1.17 | 0.38 |
| | 2nd | 0.78 | 0.88 | 1.17 | 0.38 |
| | 3rd | 0.78 | 0.88 | 1.17 | 0.38 |
| 32 | 1st | 2.16 | 1.55 | 1.20 | 2.09 |
| | 2nd | 1.77 | 1.55 | 1.20 | 2.09 |
| | 3rd | 1.77 | 1.55 | 1.20 | 2.09 |
| 33 | 1st | 2.27 | 1.73 | 1.75 | 1.20 |
| | 2nd | 2.22 | 1.73 | 1.75 | 1.20 |
| | 3rd | 2.22 | 1.73 | 1.75 | 1.20 |
| 34 | 1st | 2.09 | 1.00 | 1.39 | 1.73 |
| | 2nd | 2.09 | 1.00 | 1.39 | 1.73 |
| | 3rd | 2.09 | 1.00 | 1.39 | 1.73 |
| 35 | 1st | 2.11 | 1.30 | 1.22 | 1.47 |
| | 2nd | 2.16 | 1.30 | 1.22 | 1.47 |
| | 3rd | 1.92 | 1.30 | 1.22 | 1.47 |
| 36 | 1st | 1.61 | 1.58 | 0.83 | 2.19 |
| | 2nd | 1.61 | 1.58 | 0.83 | 2.19 |
| | 3rd | 1.61 | 1.58 | 0.83 | 2.19 |
| 37 | 1st | 0.89 | 1.09 | 1.53 | 0.33 |
| | 2nd | 0.89 | 1.05 | 1.53 | 0.33 |
| | 3rd | 0.89 | 0.95 | 1.53 | 0.33 |
| 38 | 1st | 2.11 | 1.39 | 1.13 | 1.06 |
| | 2nd | 2.11 | 1.39 | 1.13 | 1.06 |
| | 3rd | 2.11 | 1.39 | 1.13 | 1.06 |
| 39 | 1st | 1.63 | 0.92 | 1.56 | 1.56 |
| | 2nd | 1.63 | 0.92 | 1.56 | 1.56 |
| | 3rd | 1.63 | 0.92 | 1.56 | 1.56 |
| 40 | 1st | 1.44 | 1.02 | 1.36 | 1.44 |
| | 2nd | 1.44 | 1.02 | 1.36 | 1.44 |
| | 3rd | 1.44 | 1.02 | 1.36 | 1.44 |
| 41 | 1st | 1.30 | 1.38 | 1.27 | 1.31 |
| | 2nd | 1.30 | 1.38 | 1.27 | 1.31 |
| | 3rd | 1.30 | 1.38 | 1.27 | 1.31 |
| 42 | 1st | 1.22 | 1.39 | 1.36 | 1.19 |
| | 2nd | 1.22 | 1.39 | 1.36 | 1.19 |
| | 3rd | 1.22 | 1.39 | 1.36 | 1.19 |
| 43 | 1st | 0.84 | 0.95 | 0.52 | 0.61 |
| | 2nd | 0.84 | 0.95 | 0.52 | 0.61 |
| | 3rd | 0.84 | 0.95 | 0.52 | 0.61 |
| 44 | 1st | 0.92 | 1.77 | 1.67 | 0.47 |
| | 2nd | 0.92 | 1.77 | 1.67 | 0.47 |
| | 3rd | 0.92 | 1.77 | 1.67 | 0.47 |
| 45 | 1st | 1.89 | 0.34 | 1.50 | 1.56 |
| | 2nd | 1.58 | 0.34 | 1.50 | 1.56 |
| | 3rd | 1.28 | 0.34 | 1.50 | 1.56 |
| 46 | 1st | 1.17 | 1.36 | 1.30 | 1.08 |
| | 2nd | 1.17 | 1.36 | 1.30 | 1.08 |
| | 3rd | 1.17 | 1.36 | 1.30 | 1.08 |
| 47 | 1st | 1.98 | 1.22 | 0.64 | 0.92 |
| | 2nd | 1.72 | 1.22 | 0.64 | 0.92 |
| | 3rd | 1.80 | 1.22 | 0.64 | 0.92 |
| 48 | 1st | 1.33 | 0.70 | 1.05 | 1.47 |
| | 2nd | 1.33 | 0.70 | 1.05 | 1.47 |
| | 3rd | 1.33 | 0.70 | 1.05 | 1.47 |
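
The re-save columns in the table above can be read block by block: some values stay constant across the 1st, 2nd and 3rd re-save, some change once and then hold, and some keep changing. The following Python sketch shows one way to label that behaviour for a few Img4 blocks copied from the table. The function names, the three labels and the tolerance `tol` are assumptions made for illustration; this is not the authors' decision rule, only a reading aid for the tabulated values.

```python
from typing import Dict, Tuple

# ELA values for Img4 at the 1st, 2nd and 3rd re-save, copied from the
# table above (blocks 1, 3, 11 and 20 only).
IMG4_ELA: Dict[int, Tuple[float, float, float]] = {
    1: (0.97, 0.75, 0.75),
    3: (1.23, 1.23, 1.23),
    11: (2.92, 2.55, 2.42),
    20: (2.52, 1.86, 1.70),
}


def classify_block(values: Tuple[float, float, float], tol: float = 0.005) -> str:
    """Label how a block's ELA value behaves across three re-saves.

    The labels are hypothetical names chosen for this sketch:
      'stable'   - no change across the three re-saves
      'settled'  - changed after the 1st re-save, then held constant
      'drifting' - still changing between the 2nd and 3rd re-save
    `tol` is an assumed tolerance for values reported to two decimals.
    """
    first, second, third = values
    if abs(second - third) > tol:
        return "drifting"
    if abs(first - second) > tol:
        return "settled"
    return "stable"


def summarize(table: Dict[int, Tuple[float, float, float]]) -> Dict[int, str]:
    """Apply classify_block to every block in the table."""
    return {block: classify_block(vals) for block, vals in table.items()}


if __name__ == "__main__":
    for block, label in summarize(IMG4_ELA).items():
        print(f"block {block}: {label}")
```

Under these assumptions, a block labelled `stable` is consistent with a block that has already reached its local minimum, whereas `drifting` blocks are the ones an examiner might inspect more closely.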

| REF | Focus | Limitation |
|---|---|---|
| [52] | ELA for image forensics | Study is generalized |
| [53] | Image forensics using lossy compression using ELA | Cannot be applied to non-lossy formats, such as PNG, or where the color count drops below 256 |
| [54] | Photo forensics algorithm using ELA | Inclined only towards lossy compression techniques |
| [55] | ELA for semi-automatic wavelet soft-thresholding | Study is weakened by the noise produced |
| [56] | Forgery identification using forensic tools | Study is generalized and applies ELA, metadata analysis, and JPEG luminance |
| [57] | Face-swap image exposure on deep-learning-based ELA | The trained deep learning model cannot explicitly explain the principle of identification, as opposed to ELA |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Azhan, N.A.N.; Ikuesan, R.A.; Razak, S.A.; Kebande, V.R. Error Level Analysis Technique for Identifying JPEG Block Unique Signature for Digital Forensic Analysis. Electronics 2022, 11, 1468. https://doi.org/10.3390/electronics11091468
