Machine Learning for DDoS Attack Detection in Industry 4.0 CPPSs

Abstract

1. Introduction

- We propose an ML-based network anomaly detection system for detecting DDoS attacks in Industry 4.0 CPPSs.

- We analyze benign network traffic collected from a real-world, large-scale factory and real DDoS attack traffic from the DDoSDB database.

- We study the performance of 11 semi-supervised, unsupervised, and supervised ML algorithms for DDoS attack detection and discuss their merits for the proposed ML-based network anomaly detection system.
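The semi-supervised setting named in the last contribution can be illustrated with a minimal sketch: a profile is fitted on benign flows only, and any flow that deviates strongly from it is flagged. The univariate Gaussian z-score detector, the chosen feature, the sample values, and the threshold of 3 standard deviations are all illustrative assumptions and are not taken from the paper.

```python
from statistics import mean, stdev


def fit_benign_profile(values):
    """Estimate mean and sample standard deviation from benign-only samples."""
    return mean(values), stdev(values)


def is_anomalous(x, profile, z_threshold=3.0):
    """Flag x when it lies more than z_threshold standard deviations from the benign mean."""
    mu, sigma = profile
    if sigma == 0:
        # Degenerate profile: every benign sample was identical.
        return x != mu
    return abs(x - mu) / sigma > z_threshold


# Hypothetical benign values of one flow feature (for example, flow duration).
benign_durations = [1.0, 1.2, 0.9, 1.1, 1.05, 0.95]
profile = fit_benign_profile(benign_durations)

# One typical flow and one extreme flow.
flags = [is_anomalous(x, profile) for x in (1.0, 9.0)]
print(flags)
```

Training on benign data alone suits settings where labeled attack traffic is scarce, at the cost of a threshold that has to be tuned against the false positive rate.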

2. Related Work

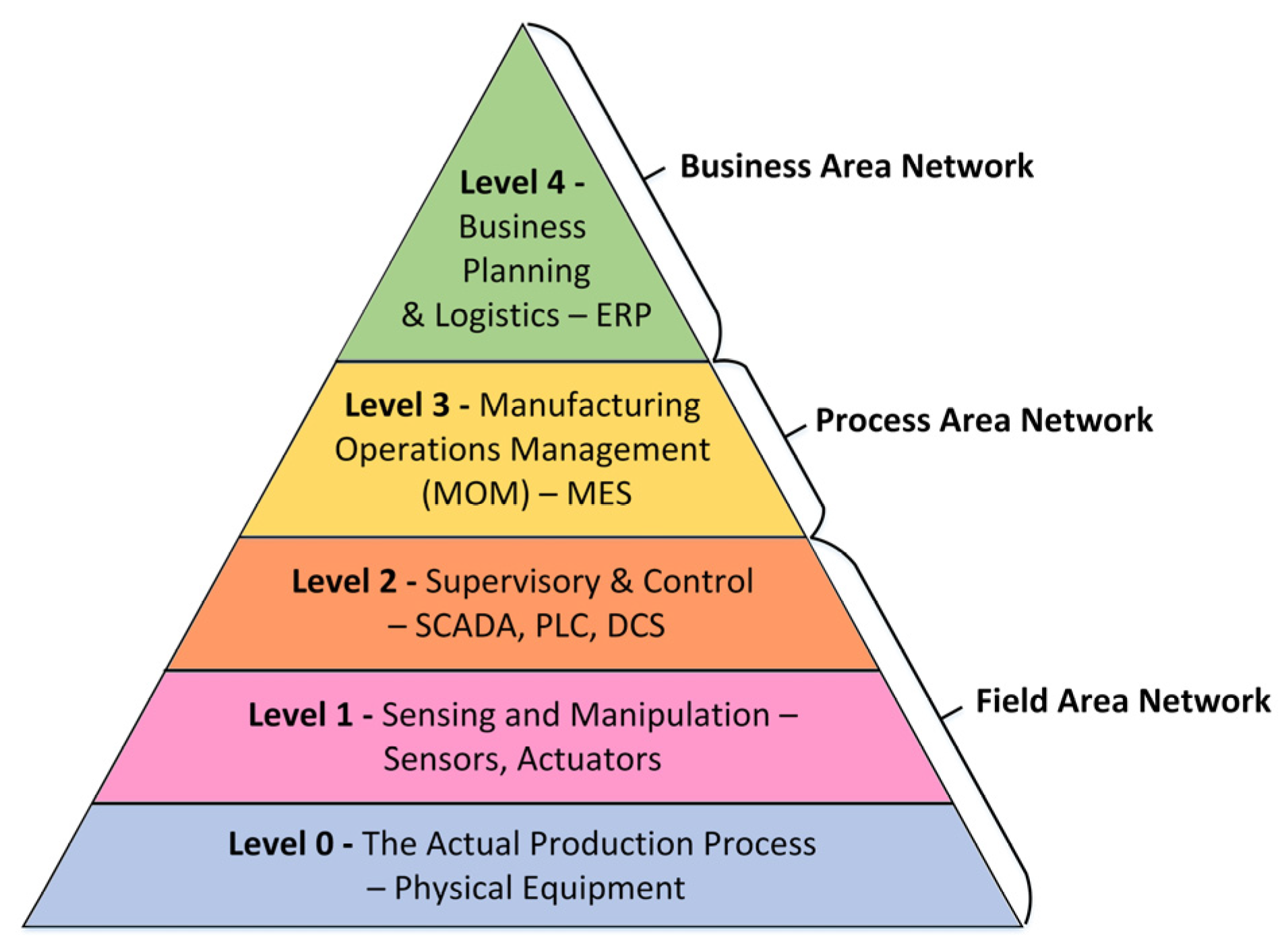

2.1. Industrial Control Systems

2.2. Intrusion Detection Systems

2.3. Intrusion Detection in Critical Infrastructures

2.4. Machine Learning for Intrusion Detection

3. Proposed DDoS Attack Detection System for Industry 4.0 CPPSs

3.1. Proposed IDS Architecture

3.2. Feature Extraction and Dataset Generation

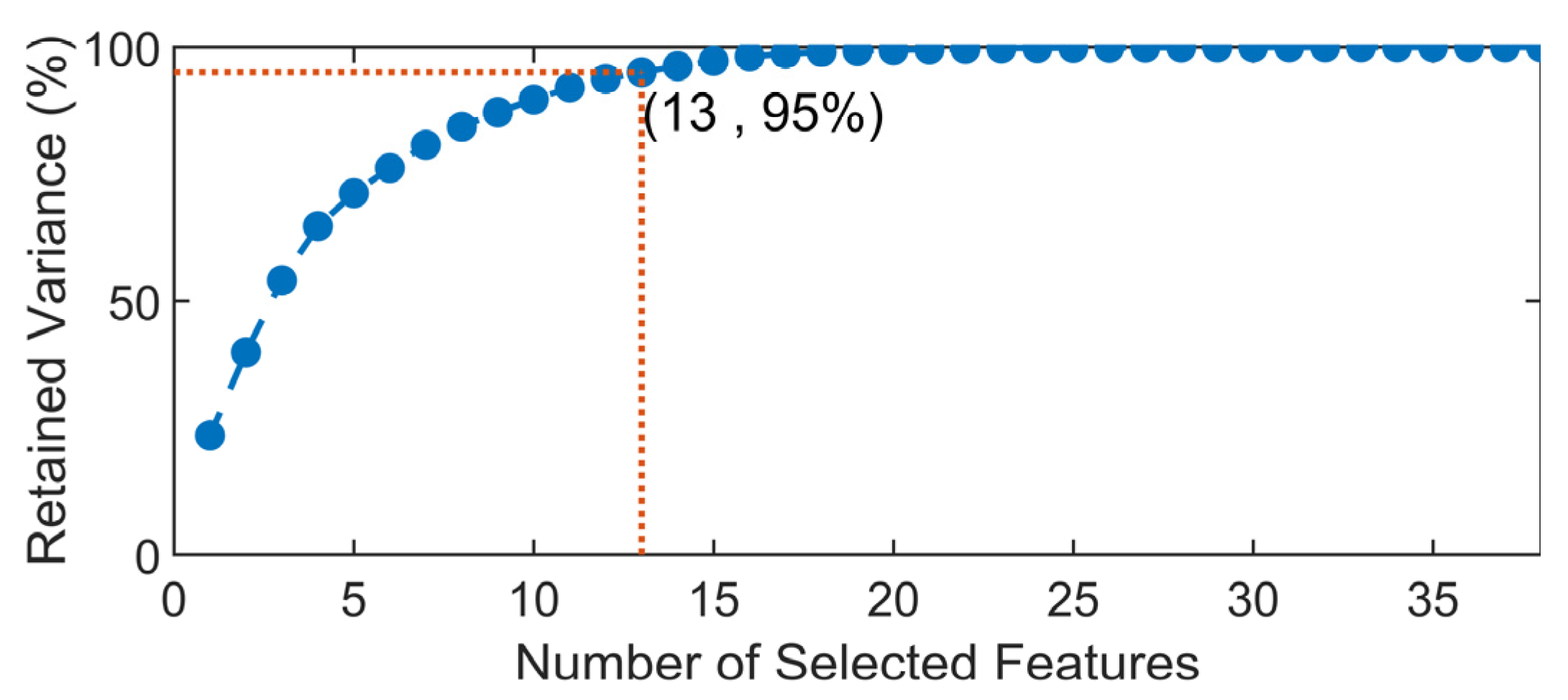

3.3. Pre-Analysis and Feature Selection

3.4. Applied Machine Learning Algorithms

3.5. Performance Evaluation Metrics

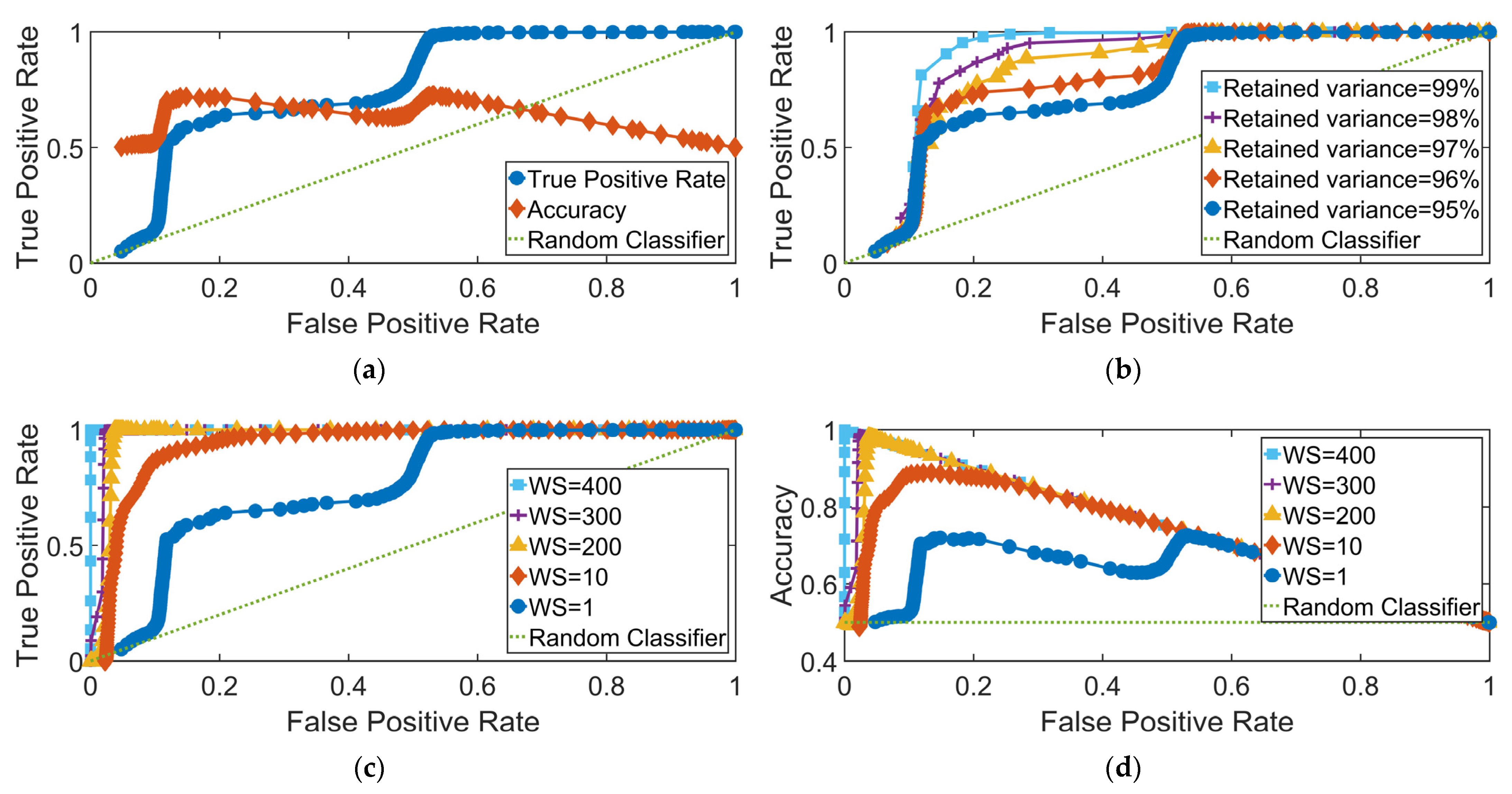

4. Performance Evaluation Results

4.1. Semi-Supervised Learning Algorithms

4.2. Unsupervised Learning Algorithms

4.3. Supervised Learning Algorithms

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Feature Index | Feature Description | Feature Index | Feature Description |
|---|---|---|---|
| 1 | Source IP address | 24 | Max. packet interarrival time in backward path |
| 2 | Source port number | 25 | Std. of packet interarrival time in backward path |
| 3 | Destination IP address | 26 | Flow (session) duration (μs) |
| 4 | Destination port number | 27 | Min. active time of the flow (before going idle) (μs) |
| 5 | Transport protocol (TCP/UDP) | 28 | Avg. active time of the flow (μs) |
| 6 | Total packets transmitted in forward direction | 29 | Max. active time of the flow (μs) |
| 7 | Total bytes transmitted in forward direction | 30 | Std. of active time of the flow (μs) |
| 8 | Total packets transmitted in backward direction | 31 | Min. idle time of the flow (before going active) (μs) |
| 9 | Total bytes transmitted in backward direction | 32 | Avg. idle time of the flow (μs) |
| 10 | Min. packet length in forward direction | 33 | Max. idle time of the flow (μs) |
| 11 | Avg. packet length in forward direction | 34 | Std. of idle time of the flow (μs) |
| 12 | Max. packet length in forward direction | 35 | Avg. number of packets in the forward sub flow |
| 13 | Std. of packet length in forward direction | 36 | Avg. number of bytes in the forward sub flow |
| 14 | Min. packet length in backward direction | 37 | Avg. number of packets in the backward sub flow |
| 15 | Avg. packet length in backward direction | 38 | Avg. number of bytes in the backward sub flow |
| 16 | Max. packet length in backward direction | 39 | Number of PSH flags sent forward (0 for UDP) |
| 17 | Std. of packet length in backward direction | 40 | Number of PSH flags sent backward (0 for UDP) |
| 18 | Min. packet interarrival time in forward direction | 41 | Number of URG flags sent forward (0 for UDP) |
| 19 | Avg. packet interarrival time in forward direction | 42 | Number of URG flags sent backward (0 for UDP) |
| 20 | Max. packet interarrival time in forward direction | 43 | Total bytes used for headers in forward path |
| 21 | Std. of packet interarrival time in forward path | 44 | Total bytes used for headers in backward path |
| 22 | Min. packet interarrival time in backward path | 45 | Time stamp |
| 23 | Avg. packet interarrival time in backward path | - | - |
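As an illustration of how the interarrival-time features (indices 18 to 25) could be derived for one direction of a flow, the sketch below computes the minimum, mean, maximum, and standard deviation of the gaps between consecutive packet timestamps. The function name, the microsecond unit, the sample timestamps, and the use of the population standard deviation are assumptions; the feature-extraction tool used for the dataset may define these statistics differently.

```python
from statistics import mean, pstdev


def interarrival_stats(timestamps_us):
    """Return (min, mean, max, std) of gaps between consecutive packets.

    timestamps_us: packet arrival times for ONE direction of a flow,
    assumed here to be in microseconds.
    """
    if len(timestamps_us) < 2:
        # A single packet has no interarrival gap; report zeros.
        return (0, 0.0, 0, 0.0)
    ordered = sorted(timestamps_us)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    return (min(gaps), mean(gaps), max(gaps), pstdev(gaps))


# Hypothetical forward-direction packet timestamps of one flow.
forward_ts = [0, 100, 300, 600]
stats = interarrival_stats(forward_ts)
print(stats)
```

Running the same function on the backward-direction timestamps would yield the backward counterparts of these four features.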

| True Label | Predicted: Benign | Predicted: Malicious |
|---|---|---|
| Benign | TN | FP |
| Malicious | FN | TP |
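The metrics reported in the result tables (TPR, FPR, precision, recall, F1, accuracy) follow from the four confusion-matrix cells by their standard definitions. The sketch below is a minimal illustration with hypothetical counts; the function name and the zero-division handling are assumptions.

```python
def detection_metrics(tp, fp, tn, fn):
    """Compute standard binary detection metrics, treating 'malicious' as the positive class."""
    tpr = tp / (tp + fn) if (tp + fn) else 0.0        # true positive rate (recall)
    fpr = fp / (fp + tn) if (fp + tn) else 0.0        # false positive rate
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    f1 = (2 * precision * tpr / (precision + tpr)) if (precision + tpr) else 0.0
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total if total else 0.0
    return {
        "TPR": tpr,
        "FPR": fpr,
        "Precision": precision,
        "Recall": tpr,
        "F1": f1,
        "Accuracy": accuracy,
    }


# Hypothetical counts, not taken from the paper's experiments.
m = detection_metrics(tp=90, fp=10, tn=90, fn=10)
print(m)
```

TPR and recall are the same quantity, which is why the two columns carry identical values in every result table.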

| Algorithm | TPR | FPR | Precision | Recall | F1 | Accuracy |
|---|---|---|---|---|---|---|
| K-Means | 0.822 | 0.78 | 0.51 | 0.822 | 0.63 | 0.52 |
| EM | 0.70 | 0.69 | 0.50 | 0.70 | 0.58 | 0.51 |

| Algorithm | TPR | FPR | Precision | Recall | F1 | Accuracy |
|---|---|---|---|---|---|---|
| K-Means | 0.90 | 0.0001 | 0.9999 | 0.90 | 0.95 | 0.95 |
| EM | 0.99995 | 0.09 | 0.91 | 0.99995 | 0.95 | 0.95 |
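As a quick consistency check, the F1 column of the table above can be recomputed from its precision and recall columns using F1 = 2PR / (P + R). The snippet below is a small worked example on the reported, rounded values; the variable names are illustrative.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from the table above.
rows = {
    "K-Means": (0.9999, 0.90, 0.95),
    "EM": (0.91, 0.99995, 0.95),
}

# Recompute F1 and round to the two decimals used in the table.
recomputed = {name: round(f1_score(p, r), 2) for name, (p, r, _) in rows.items()}
print(recomputed)
```

Both recomputed values round to 0.95, in agreement with the reported F1 scores: K-Means trades some recall for near-perfect precision, while EM does the opposite.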

| Algorithm | TPR | FPR | Precision | Recall | F1 | Accuracy |
|---|---|---|---|---|---|---|
| OneR | 0.987 | 0.013 | 0.987 | 0.987 | 0.987 | 0.987 |
| LR | 0.970 | 0.029 | 0.972 | 0.970 | 0.970 | 0.970 |
| NB | 0.958 | 0.042 | 0.958 | 0.958 | 0.958 | 0.958 |
| BN | 0.995 | 0.005 | 0.995 | 0.995 | 0.995 | 0.994 |
| K-NN | 0.999 | 0.001 | 0.999 | 0.999 | 0.999 | 0.999 |
| SVM | 0.971 | 0.028 | 0.973 | 0.971 | 0.971 | 0.971 |
| DT | 0.999 | 0.001 | 0.999 | 0.999 | 0.999 | 0.999 |
| RF | 0.999 | 0.001 | 0.999 | 0.999 | 0.999 | 0.999 |
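To make the simplest entry in the table above concrete, the sketch below shows the general idea behind OneR: build a one-feature rule for every feature and keep the feature whose rule makes the fewest training errors. This is a minimal illustration on categorical toy data, not the implementation evaluated in the paper; the feature values, labels, and function names are hypothetical, and real OneR implementations also discretize numeric features.

```python
from collections import Counter, defaultdict


def fit_one_r(rows, labels):
    """Pick the single feature whose value -> majority-label rule has the fewest training errors."""
    best = None
    for j in range(len(rows[0])):
        # Count labels per observed value of feature j.
        counts = defaultdict(Counter)
        for row, label in zip(rows, labels):
            counts[row[j]][label] += 1
        rule = {value: c.most_common(1)[0][0] for value, c in counts.items()}
        errors = sum(rule[row[j]] != label for row, label in zip(rows, labels))
        if best is None or errors < best[0]:
            best = (errors, j, rule)
    _, feature, rule = best
    # Fall back to the overall majority label for unseen feature values.
    default = Counter(labels).most_common(1)[0][0]
    return feature, rule, default


def predict_one_r(model, row):
    """Apply the learned one-feature rule to a single row."""
    feature, rule, default = model
    return rule.get(row[feature], default)


# Hypothetical flows described by (protocol, packet-rate bucket).
rows = [("TCP", "low"), ("TCP", "high"), ("UDP", "high"),
        ("UDP", "low"), ("TCP", "high"), ("UDP", "high")]
labels = ["benign", "malicious", "malicious", "benign", "malicious", "malicious"]

model = fit_one_r(rows, labels)
prediction = predict_one_r(model, ("UDP", "low"))
print(model[0], prediction)
```

On this toy data the rule is built on the second feature, because the packet-rate bucket separates the two classes without error while the protocol does not.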

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Saghezchi, F.B.; Mantas, G.; Violas, M.A.; de Oliveira Duarte, A.M.; Rodriguez, J. Machine Learning for DDoS Attack Detection in Industry 4.0 CPPSs. Electronics 2022, 11, 602. https://doi.org/10.3390/electronics11040602