1. Introduction

Students nowadays are, in the vast majority, part of the so-called digital native generation, which by definition [1] describes the generations of young people surrounded by digital technologies from birth. It is often mistakenly thought that their connection to digital technology also means that they are able to apply it (or use it) better than the older generations of the so-called digital immigrants [2]. However, their learning process differs significantly from that of the older generations, who listened carefully to the teacher presenting the material and made written notes. Instead, their learning is spontaneous (they use an Internet search engine for any question and browse graphic and video content instead of text), and in search of answers they most often actively exchange information through Internet forums and social networks [3]. In particular, their ability to efficiently collect and process more information simultaneously is impressive [4].

New teaching methodologies recognize the above circumstances and indicate the need for learning that takes place in a student-centered learning environment, in which the student learns based on experience without constant dependence on the teacher’s instructions [5]. In such a teaching environment, the teacher remains an important mediator who defines learning outcomes, aligns them with students’ needs, and helps students achieve the planned learning goals. The teacher also manages the learning process and continuously encourages students to be active participants in it. The importance of introducing a student-centered teaching model has been recognized as a significant indicator of quality in higher education, so the Standards and Guidelines for Quality Assurance in the European Higher Education Area (ESG) define the following standard: Institutions should ensure that programs are delivered in a way that encourages students to take an active role in creating the learning process, and that the assessment of students reflects this approach [6].

Some authors go a step further in designing student-centered learning, emphasizing that curricula should be designed to acquire “powerful knowledge”, which can be defined as specialized knowledge that serves a specific purpose [7]. That would mean that the student acquires powerful knowledge that is applicable in a natural business environment and that deals with issues of public importance [8] and has access to conversations and discussions about the values of society (social, cultural, political, economic, or technological) [9]. Such knowledge is powerful because it provides students with a good understanding of the natural and social world, allowing them to assess existing knowledge (outside of individual experiences and specific contexts) and create new knowledge.

In order to ensure that students are provided with powerful knowledge, it is necessary to develop research-based curricula [10]. The Inquiry-Based Learning methodology is applicable for that purpose. It considers research a critical component of modern study programs, with the ultimate goal of “promoting the acquisition of new knowledge, skills, and attitudes by studying issues and problems using research methods and standards” [11]. In line with such an approach, learning occurs through research led by students and instructors, who work on unsolved real-world problems. Furthermore, research-based learning is based on a student-centered approach that emphasizes the students’ responsibility in the learning process, not the teacher’s.

The development of information technology in the last decade has opened the door to digitalization in higher education, and this has significantly accelerated during the COVID-19 pandemic due to the need for online learning. The digitalization of education has brought higher education significantly closer to those target groups who previously could not afford it, suffered from transport exclusion, or were unable to attend stationary meetings due to professional or private obligations. A variety of technologies have been used, from web-based distance learning platforms (massive open online courses, MOOCs) to the latest virtual reality (VR) and augmented reality (AR) technologies. Ultimately, these technologies allow for a departure from the usual teaching environment, such as the classroom, and allow for broader (and faster) access to knowledge. Moreover, digital technologies can take education even further, in the direction of an approach that focuses on solving real business cases, whether students learn on digital platforms used in business or work in an environment that simulates real business scenarios. For example, AR technology can be beneficial for teaching [12,13,14] because it can simulate, at a significantly lower cost, a natural business environment that is particularly demanding in terms of conducting practical classes and exercises (e.g., costly machines and materials are needed or the work environment is hazardous).

When discussing the application of AR technology in higher education, it is important to follow the essential topics in the scientific literature. Therefore, the research from [15], which analyzes scientific production on augmented reality in higher education (ARHE), is of particular interest. According to that research, the most important topics in the scientific literature on ARHE from 2016 to 2019 were ‘virtual reality’ and ‘mobile’, and such platforms have been implemented in the ATOMIC project [16].

However, teaching on digital platforms brings additional challenges that became particularly noticeable during the COVID-19 pandemic, such as lack of physical interaction, lack of motivation, and lack of oral and social communication [17]. This is why it is crucial to avoid replicating traditional learning situations in which the same content is directed at large, passive groups of students. Students must learn about digital technologies, but it is by researching actual cases that they will acquire valuable and applicable knowledge.

Although AR technology can be an important training platform in higher education, it is necessary to consider the integration of the technology itself with the competencies of teachers. The research in [18] is significant in this respect: it indicates that the Technological Pedagogical Content Knowledge (TPACK) framework can greatly help different professionals develop training processes.

The ATOMIC project [16] highlighted the need to create an extended reality-based (XR-based) teaching environment that will enable students to meet the challenges of a natural business environment, such as planning and organizing, staffing and control, problem solving, critical thinking, creativity, and teamwork. The project was funded by the Erasmus+ program and involved the following participants: Lodz University of Technology (Poland), University of Tartu (Estonia), University of Aveiro (Portugal), Polytechnic of Šibenik (Croatia), and Nofer Institute of Occupational Medicine (Poland). Various scenarios were developed within the project, including scenarios for developing powerful knowledge in occupational safety, environmental protection, logistics, and transport, and were subsequently implemented on different XR platforms. However, the most significant activity was testing these platforms during the summer school held in September 2021, where students’ experiences as potential end-users were researched and analyzed through purpose-made questionnaires.

This paper presents an XR-based educational application that enables students to meet the challenges of a natural business environment with the following contributions to the XR usability studies:

Juxtaposing four different approaches (projector-based AR, mobile-based AR, HMD AR, and HMD VR) in the context of their usability and educational effect, establishing advantages and disadvantages of each approach.

Proposing a 34-question usability testing questionnaire divided into pre- and post-test sections that can be used in future XR usability studies.

The remainder of this document is organized as follows. Section 2 illustrates critical aspects of the XR technology used to realize use scenarios that are common in real business (ergonomy, fire handling, and logistical and environmental problems in transport) and for which the critical advantages of XR technology in the field of education are clearly visible. Section 3 describes the methodology of researching the experiences of summer school participants, who gave their opinion on the implemented and tested XR solutions, with a particular focus on their views on the advantages and disadvantages of learning on XR platforms compared to traditional classroom learning. Finally, we list some concluding remarks in Section 4.

2. Cases

2.1. Case 1: Ergonomy (Projector-Based AR)

The Ergonomy scenario, outlined within the ATOMIC project array of scenarios, consists of an exercise tailored to familiarize users with a small and simple set of ergonomy rules helpful in organizing their workplace. The main objective established for this scenario is to enable the user to easily arrange their work area according to work safety regulations and recommendations provided by health specialists. To do so, a shortlist of common workspace elements was selected and adapted from a longer list of possible objects (Figure 1), including a monitor, a keyboard, a small plant, a book, a mug, and a smartphone. The users first handle and arrange these objects as they usually do at their regular workplaces. The objects are then rearranged and fine-tuned according to ergonomy-related safety regulations and recommendations provided through the application.

The whole work area is designed for a desk measuring at least 120 cm × 80 cm, and its surface is detected by two cameras used as the input devices for the application. Each object is detected according to where it is placed on the desk. To establish a closer link with the user’s particular characteristics, the application also detects whether the user is left- or right-handed and the length of their forearm. The application is oblivious to the length of the user’s shirt sleeve. All measurements are based on the detection of the user’s left or right hand. All this information is applied to enable the user to better understand and assess their commonly used workspace layout against an ergonomically optimized one. Help is provided along the way through visual feedback, in the form of guiding arrows, showing where each object was initially placed and where it should be. Other feedback and help solutions were discussed and tested, but their implementation in the alpha version of the prototype was put on hold for further development and testing (i.e., color codes for position accuracy, percentage of, etc.). A time limit was also tested but abandoned, because it was believed that users would feel pressured by time and evaluated, inducing unnecessary stress.

The development of the Ergonomy scenario prototype stemmed from several brainstorming sessions involving various members of the ATOMIC team with very different backgrounds (i.e., Computer Engineering, Health, Communication, and UX). In these sessions, different technological approaches were researched and discussed, and although some were discarded, they were crucial in devising the structure of the alpha version of the prototype that was tested. The Ergonomy prototype may be understood in terms of two core technologies: augmented reality and computer vision. The augmented reality part relies on Vuforia [19], an augmented reality engine used with the Unity 3D engine [20]. Fundamentally, this means that when a VuMark [21], which is a visual marker, is placed within the camera’s field of view, Vuforia captures the image and searches for any information associated with it in an existing project database. Computer vision solutions, in turn, make extra-object visual artifacts such as QR codes unnecessary, thus maintaining the prototype’s aesthetic structure. Therefore, it was decided that the project would accommodate and combine both technologies, so that each makes up for any information not captured by the other. To implement the computer vision part, OpenCV [22], a computer vision library that enables real-time recognition and localization of objects, was used alongside a Python script developed exclusively for this prototype. When the camera captures an object, a custom detection model identifies it and saves its coordinates. When all the objects are identified, the information is sent through the User Datagram Protocol (UDP) to a Unity application responsible for generating the information that is projected onto the user’s desk.
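As a rough illustration of the hand-off described above, the following Python sketch serializes detected object coordinates and sends them to a listening application as a single UDP datagram. The JSON message layout, the function names, the loopback address, and port 5065 are assumptions made for this example; the wire format actually used by the prototype is not specified here.

```python
import json
import socket


def encode_detections(detections):
    """Serialize (label, x, y) tuples as compact JSON bytes.

    The message layout is hypothetical, chosen only for illustration.
    """
    payload = [{"label": label, "x": x, "y": y} for (label, x, y) in detections]
    return json.dumps(payload, separators=(",", ":")).encode("utf-8")


def send_detections(detections, host="127.0.0.1", port=5065):
    """Send all detections in one UDP datagram (host and port are assumed)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(encode_detections(detections), (host, port))
```

UDP is connectionless and does not guarantee delivery, so a sketch like this suits data that is refreshed continuously, where a lost datagram is simply superseded by the next one.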

By intertwining the above-mentioned technologies, it was possible to establish the prototype’s user experience flow. The moment the user initializes the prototype, the Unity application determines his or her handedness. To do so, the user only needs to place his or her preferred forearm on the top of the table, with the elbow at the table’s end closest to their body. As soon as OpenCV detects the forearm either on the left side (left-handed) or on the right side (right-handed) of the workplace, the information about the handedness and the length of the forearm is stored, and the user is presented with visual feedback confirming that this stage of the experience has ended. From this moment on, the user may start to place and distribute the objects on the table. This object layout is performed according to how the user typically organizes his or her workspace. An object such as a bottle or a cup may not be recognized, for the sheer fact that it appears as a circle from the camera’s perspective. In that case, the user can attach a VuMark to the object so that it is detected by Vuforia. When an object is correctly detected, its name and an arrow indicating its ideal position (calculated according to the handedness and the forearm length) are projected onto the user’s desk. In addition to the object’s information, a list containing all objects being detected at that time is also projected. This list is organized according to ratings such as “well-positioned”, “moderately positioned”, and “badly positioned”, so that the positional status of each detected object is presented quickly and succinctly. The user can then decide whether the object is in its correct position or should be moved. Besides information regarding the object’s position, the Unity application also detects and presents the object’s alignment so that it can be corrected quickly if needed.
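The rating step described above can be sketched as follows. This is a minimal illustration under stated assumptions: ideal positions are expressed as offsets in forearm-length units for a right-handed user and mirrored for a left-handed one, and the two distance thresholds (0.15 and 0.40 forearm lengths) are hypothetical values, not the ones used in the prototype.

```python
import math


def ideal_position(offset, handedness, forearm_cm):
    """Scale a (lateral, forward) offset by forearm length.

    The offset is given for a right-handed user; the lateral component
    is mirrored for a left-handed user (an assumption of this sketch).
    """
    lateral, forward = offset
    if handedness == "left":
        lateral = -lateral
    return (lateral * forearm_cm, forward * forearm_cm)


def rate_position(actual, ideal, forearm_cm, well=0.15, moderate=0.40):
    """Rate an object by its distance from the ideal spot, in forearm lengths."""
    error = math.dist(actual, ideal) / forearm_cm
    if error <= well:
        return "well-positioned"
    if error <= moderate:
        return "moderately positioned"
    return "badly positioned"
```

Normalizing the error by forearm length keeps the rating comparable between users with different reach, which is one plausible reason for measuring the forearm in the first place.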

The hardware used in the scenario consists of two low-cost off-the-shelf web cameras, a projector, and a laptop or desktop computer. The projector should be a short-throw portable model. The two cameras, one for Vuforia and one for OpenCV, should be high resolution (1080p or better) to provide a better detection ratio for the VuMarks and the objects. Given the graphic resources and performance needed to run OpenCV and the object detection system used in the prototype, You Only Look Once (YOLO) [23,24], along with Vuforia and Unity, the laptop or desktop computer requires a powerful NVIDIA graphics card.

2.2. Case 2: Fire (HMD-Based AR)

The Offire scenario developed within the ATOMIC Erasmus+ project is, at its core, a simulator in which the user is challenged to solve a fire-related emergency in an office environment. Through its use, the user should be able to acquire simple competencies beneficial for making the right decisions in a fire hazard. According to research on the subject, the response to a fire emergency can vary according to a multitude of correlated factors, such as the type of fire, its place of origin, the time since the outbreak, the morphology and physical layout of the room, the proximity of the air vents, and the temperature and humidity levels of the environment, among others. To build some theoretical and practical background on the subject, part of the ATOMIC team interviewed members of one of the fire departments located in Aveiro, Portugal, and carried out an extensive literature review on the topic. This literature review included a comparative analysis of other fire hazard simulators and training applications available on the market. The sum of this fire-related research enabled the team to build a solid understanding of issues such as types of fire, levels of danger, and forms of evacuation and safety, as well as additional vital factors that steer the behavior of any given person when facing a fire [25].

It is believed that being aware of these fire-related factors, knowing what to do in the case of a fire, knowing or quickly identifying the safety escape routes in the surrounding environment, and knowing or quickly identifying where the available firefighting equipment is located are all things that must not be neglected by whoever uses specific spaces such as offices, schools, or museums (e.g., employees, students, and visitors) [26].

The Offire scenario was developed with augmented reality technology using the Unity 3D engine, with the DreamWorld augmented reality glasses [27] and the NOLO Motion Tracking [28] device as its output and input devices, respectively (Figure 2). The three-dimensional contents were modeled using the open-source Blender software. The Offire scenario design includes a clear separation of its data layer from its logical layer, thus enabling the introduction of new challenges and experiences in future versions of the simulator.

The scenario features three different fire situations in an office environment. The three simulations are similar in terms of their functional principles but differ as to the objects available, their physical aspect, and their distribution within the environment. For example, Figure 3 illustrates one of the simulations available in the Offire scenario. It takes place in a real office and, due to its AR nature, combines natural objects with virtual ones.

The simulation reaches its end when the user accomplishes a set of corrective actions or, in a worst-case situation, does not perform any action for at least one minute, which is the time it takes the fire to become uncontrollable. The user is expected to be able to observe and assess a fire hazard situation and, according to the fire’s origin and through the use of the firefighting resources available (e.g., an extinguisher, a blanket, or a bucket of water), overcome the challenge and put out the fire. There is a time limit to successfully complete the task. The users are motivated to improve their results over time and according to the feedback received (time, errors, and feedback about what would happen in an actual situation).
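The end conditions described above can be summarized in a small state check. The sketch below is only an illustration: the class, the action names, and the error counter are hypothetical, and only the one-minute inactivity limit is taken from the text.

```python
from dataclasses import dataclass, field

INACTIVITY_LIMIT_S = 60.0  # one minute without any action, as stated in the text


@dataclass
class FireSimulation:
    """Hypothetical model of one simulation run."""
    required_actions: frozenset
    done: set = field(default_factory=set)
    last_action_time: float = 0.0
    errors: int = 0

    def act(self, action, now):
        """Register a user action at time `now` (seconds since start)."""
        self.last_action_time = now
        if action in self.required_actions:
            self.done.add(action)
        else:
            self.errors += 1  # counted for the feedback shown afterwards

    def status(self, now):
        """Return 'extinguished', 'uncontrollable', or 'running'."""
        if self.done >= self.required_actions:
            return "extinguished"
        if now - self.last_action_time >= INACTIVITY_LIMIT_S:
            return "uncontrollable"
        return "running"
```

Checking for success before checking for inactivity means that a run is not turned into a failure after the user has already completed every corrective action.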

Before the simulation starts, and in the case of a simpler simulation challenge, the following virtual equipment is set up and distributed in the actual physical room: table, chair, desktop computer, pencil holder, waste paper bin, plants, window, door, paper, heater, telephone, blanket, bottle, extinguisher, and cabinet. In this simulation, which resembles a small office, the fire starts in the waste paper bin. The extinguisher, in this case, is the only firefighting solution available. The user should check whether the doors and windows are closed, to avoid the spread of fire. If these instructions are not followed, the user may not be able to put out the fire, and the simulation ends with a negative outcome. According to the literature review, each simulation adds some other challenges to what needs to be accomplished and provides an interesting solution for fire safety training. Therefore, an AR simulator may be a valuable prevention and training tool in the context of office environments and may assist the creation of competencies in this particular context [29].

2.3. Case 3: Providing Constructive Criticism to an Employee (VR-Based Application)

Scenario #3 is a typical example of a branching scenario, which means that the presented content depends on the actions taken by the users and on the narrative route they choose. This particular scenario allows the users to take on the role of a manager of a small office team and confront difficult situations which require appropriate reactions and decisions. The main goal is to teach the users how to properly give valuable feedback to other team members or employees, how to handle inappropriate behavior, and how to encourage cooperation. In key moments of the simulation, they are presented with multiple choices which branch out the story into different outcomes (see Figure 4). Not all of the choices are effective or beneficial. However, this is a learning application, and the users are given direct feedback on the impact and results of their choices.

All dialogues are voiced by professional English speakers. Additionally, the key elements (questions and answers) are displayed on the menu (see Figure 5). To make the workplace environment more realistic, all employees were animated using MCO MOCAP ONLINE [30]. Their Office Life animation pack provides sitting, standing, and walking animations enriched by many different office activities, interactions, and conversations, e.g., talking on the phone or working on the computer.

The virtual environment was developed using the Unity game engine and the C# programming language in the form of two scenes (Figure 5). The first scene is a conference room with a few office workers. The user takes the role of a manager whose task is to react to the following situation: “Your employee has a long history of being late for work. Today he was about 30 min late, which resulted in a situation where all teams had to wait until he came to start the discussion on a new project”.

The second scene is the manager’s office. The user (as the manager) and the latecomer are sitting at the desk. The user can see that the employee, sitting opposite, is a little nervous (he cannot sit still in the armchair). The user must show great assertiveness by providing information on the sanctions that will be applied if the unwelcome behavior is repeated.

Time for each answer is limited according to the selected difficulty level (10 min for beginner, 6 min for intermediate, and 3 min for advanced level).
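To make the branching structure and the per-answer time limits concrete, the following sketch models the scenario as a small graph of nodes. The node identifiers, dialogue texts, choice labels, and feedback strings are hypothetical placeholders loosely based on the situation described above; only the three time limits come from the text.

```python
from dataclasses import dataclass, field

# Minutes allowed per answer for each difficulty level, as stated in the text.
TIME_LIMIT_MIN = {"beginner": 10, "intermediate": 6, "advanced": 3}


@dataclass
class Node:
    """One step of the story; `choices` maps a choice label to the next node id."""
    text: str
    feedback: str = ""
    choices: dict = field(default_factory=dict)


def time_limit_seconds(level):
    """Convert the per-answer limit of a difficulty level to seconds."""
    return TIME_LIMIT_MIN[level] * 60


def play(nodes, start, picks):
    """Follow a sequence of choices and return the ids of the visited nodes."""
    path = [start]
    current = start
    for pick in picks:
        current = nodes[current].choices[pick]
        path.append(current)
    return path


# Hypothetical content, for illustration only.
NODES = {
    "meeting": Node("The employee arrives about 30 min late.",
                    choices={"criticize_publicly": "public",
                             "talk_privately": "office"}),
    "public": Node("The team watches in silence.",
                   feedback="Public criticism tends to discourage cooperation."),
    "office": Node("You talk with the employee in your office.",
                   choices={"state_consequences": "agreed",
                            "let_it_go": "repeated"}),
    "agreed": Node("The employee commits to being on time.",
                   feedback="Clear expectations were communicated."),
    "repeated": Node("The employee is late again.",
                     feedback="The behavior was not addressed."),
}
```

Keeping the story content in a plain data structure, separate from the traversal logic, makes it straightforward to add new branches without touching the code that plays them.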

The target platform for the tool is Oculus Quest; however, it can be easily ported to other VR platforms.

2.4. Case 4: Package Design and Logistics Issues (Mobile-Based AR)

The next elaborated and tested scenario concerns package design and logistics issues. The scenario presents a complex view of package creation, as it has an impact on very diverse things, such as product promotion, cost of transportation, and the natural environment. What is more, important aspects such as circular economy, sustainability (sustainable development), and users’ needs (human-centered design approach) are included in the application. Aspects of the scenario or its content may be found on the current market. VR- and AR-based circular economy learning tools have been developed before, but as the subject is very wide and varied, so are the applications. Generally, these VR and AR solutions are focused on assisting and training the end consumer and not the product producer [31,32].

Developing the application for smartphones makes it easy to set up and removes the need for very specialized hardware. Due to the recent advancements with Google’s ARCore [33] and Apple’s ARKit [34], the application could be developed with full augmented reality support. This scenario was developed in Unity3D [20] with the use of the ARFoundation [35] toolkit. The ARFoundation toolkit was used to build the developed application into Android- and iOS-compatible packages by integrating ARCore and ARKit, respectively.

The scenario features several sub-tasks that the user must complete. These sub-tasks can be functionally divided into two categories:

package design considering your target group/users (elaboration of persona—a detailed customer profile, matching the product’s target group) and environmental issues (circular economy and sustainable development);

logistics issues (pallet loading and truck loading)—to optimize them (lean management and environmental aspects).

Out of these, the package design is the most inclusive and will form the bulk of the feedback to the user. The pallet and truck loading tasks are conceptually similar and have a lot of overlapping mechanics.

The package design task allows the user to select from lists of options to set the shape and material of the package (Figure 6). Future iterations will also allow the user to set the type of material in the package and see the consequences of the choice. The target audience may be described, and a representative of potential product users can be created, using a persona template. The persona template is thus a description with a set of guidelines that are later used in the final evaluation and scoring. The application user should consider the described target group when developing the product label and adjust the marketing campaign to it. The package design task itself does not contain any of the AR elements found in the rest of the scenario. The user is presented with a list of options and a confirmation button. Once an option is selected, the confirmation button can be pressed, which moves the task on to the next design step.

The second part of the scenario—logistics issues—is divided into two tasks: pallet loading and truck loading.

In the pallet loading task, the user sees an AR pallet on a detected flat surface, and their mission is to try to fit as many boxes onto the pallet as possible (Figure 7). In this scenario, the center of the screen becomes the user’s pointer, and the point where the ray cast from the pointer intersects a flat surface becomes the cursor. At the start of the scenario, the cursor is a transparent model of the pallet. The pallet can be placed on any detected horizontal flat surface, such as a floor or a table. This pallet, and the entire scenario, can be scaled from life-size down to 1:10 scale using a slider in the top right corner of the screen. The bottom of the screen houses three additional buttons: left, back, and right. The left and right buttons rotate the cursor counterclockwise and clockwise, respectively. The back button allows the user to exit the task and return to the main menu screen of the scenario. Tapping anywhere else on the screen counts as placement, and this is the main form of interaction with the scenario. After the pallet has been placed, the user’s cursor turns into a packaging box. The packaging boxes can only be placed on top of the pallet. If the user tries to place the box outside of the pallet area or only partially on the pallet, the transparent indicator turns red, and no package is placed. If the user tries to place the box so that it intersects another box, this instead deletes all of the intersecting boxes. This is currently the main way of deleting unwanted boxes in this scenario.
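The placement rules above can be sketched with axis-aligned rectangles seen from the top. This is a simplified illustration, not the application’s implementation: it ignores rotation, stacking, and scale, and the type and function names as well as the dimensions used in the usage note are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Axis-aligned footprint: corner (x, y), width w, and depth d, in meters."""
    x: float
    y: float
    w: float
    d: float

    def contains(self, other):
        """True if `other` lies entirely inside this rectangle."""
        return (self.x <= other.x and other.x + other.w <= self.x + self.w
                and self.y <= other.y and other.y + other.d <= self.y + self.d)

    def overlaps(self, other):
        """True if the interiors intersect (touching edges do not count)."""
        return (self.x < other.x + other.w and other.x < self.x + self.w
                and self.y < other.y + other.d and other.y < self.y + self.d)


def place_box(pallet, boxes, new_box):
    """Apply one tap and return (updated list of boxes, outcome)."""
    if not pallet.contains(new_box):
        return boxes, "rejected"  # red indicator, nothing is placed
    hits = [b for b in boxes if b.overlaps(new_box)]
    if hits:
        # Tapping onto existing boxes deletes them instead of placing a new one.
        return [b for b in boxes if b not in hits], "deleted"
    return boxes + [new_box], "placed"
```

For example, on a hypothetical 1.2 m × 0.8 m pallet, two 0.4 m boxes placed side by side are both accepted, while a third tap straddling them removes both.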

A further step in the scenario is truck loading. The user sets an AR truck onto a detected flat surface and then loads the truck’s bed with pallets (Figure 8). This scenario uses the same button and cursor layout as the previous one, with minor differences. The scene starts already scaled down to 1:6 and can be scaled down further to 1:60. Additionally, after the truck has been placed, the pallet placement does not follow the same method as the box placement in the pallet scenario. The truck bed is divided into six regions. If the cursor is inside any of the six regions, tapping the screen places a pallet in that region. The pallets are automatically placed and stacked in the section and do not require precise placement. The six regions are the only places where pallets can be placed, and tapping anywhere else has no effect. The last step of the scenario is the selection of transportation parameters: where the created product should be transported (measured by the distance in kilometers) and how (answered by selecting a type of vehicle). On this basis, the impact on the environment is estimated. The user learns about the carbon footprint and its possible consequences for the ecosystem. The aim of this part is to increase ecological awareness among students.
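The region-based pallet placement can be illustrated with a short sketch. The arrangement of the six regions as a grid of two columns by three rows, the bed dimensions in the usage note, and all names are assumptions; the text only states that there are six regions and that pallets stack automatically.

```python
def region_at(x, z, bed_width, bed_length, cols=2, rows=3):
    """Map a cursor position on the truck bed to a region index (0 to 5).

    Returns None when the cursor is outside the bed. The 2 x 3 grid
    layout is an assumption of this sketch.
    """
    if not (0 <= x < bed_width and 0 <= z < bed_length):
        return None
    col = int(x / bed_width * cols)
    row = int(z / bed_length * rows)
    return row * cols + col


def tap(stacks, x, z, bed_width, bed_length):
    """Handle one tap: stack a pallet in the hit region, or do nothing."""
    region = region_at(x, z, bed_width, bed_length)
    if region is not None:
        stacks[region] += 1
    return region
```

With a hypothetical 2.4 m × 6.0 m bed and `stacks = [0] * 6`, two taps near one corner raise the first stack to two pallets, while a tap beside the truck returns `None` and leaves every stack unchanged.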

3. Usability Testing

3.1. Usability Testing—Background

Mindful developers operate according to the principle “the customer is always right” [36], understanding that what matters is how the user, not the producer, perceives the product. The system designer should adapt their solutions to the level of knowledge, experience, cultural conditions, technical and technological capabilities, and ultimately the mindset of the final user [37]. Well-conducted product usability tests on representatives of the target group allow the creator to verify the design assumptions [38]. The realization that usability tests are absolutely necessary for the success of a project must be followed by a proper understanding of what they should consist of.

The main issue is overloading usability tests (with heuristic tests, usability evaluation, aesthetic tests, etc.), which can lead to a complete failure [39]. Respondents must not be occupied for too long. According to research, the upper time limit for a single engagement in filling out a survey is 10 min. After this time, the level of engagement drops dramatically, and with it the reliability of the survey. It is difficult to calculate how many questions this period corresponds to, as there are many factors to consider. Response time is determined by question length and complexity, level of abstraction, type of expected answer, etc. In general, research shows that users find it easier to cope with closed questions, especially when using the Likert scale [40], which is very popular among analysts. Unfortunately, well-designed open questions with significant cognitive value are not appreciated by respondents. They require a higher level of involvement and creativity, which in excess is very tiring [41]. Thus, it is recommended to place cumbersome questions at the end of the survey.

Complex usability tests should be modular: divided into smaller, thematically related fragments filled out before or after performing subsequent interaction tasks with the tested system. An essential aspect of conducting credible usability tests is to avoid instructing users on how to use the tested system, beyond stating its purpose. Furthermore, if usability tests are conducted in a stationary manner, the user can be observed during the whole cycle of performed tasks [42]. The observer should be as passive as possible, encouraging only immediate verbal comments from the user. Such records often become the only evidence of the usability shortcomings of the system [43], which is why many researchers record audio and video during usability tests or keep handwritten observation notes [44]. One has to be aware that this kind of approach requires additional formal approvals and consents.

Considering the above-mentioned good practices, we organized a 3-day event collecting feedback on the application created within the ATOMIC project that was tested by its final users (students). The protocol and results are presented in the following section.

3.2. Data Collection and Participants

The four prototypes were tested with students from the Lodz University of Technology, University of Tartu, University of Aveiro, and Polytechnic of Šibenik at the Trokut Incubator (Šibenik, Croatia) on 13–16 September 2021. The participants were recruited by institutional email and by leaflets and posters disseminated in the four partner universities. Twenty participants (five from each partner university) completed the experiment; none of the volunteers stopped the AR/VR experience. Each participant was randomly assigned to one of the four prototypes each day. The participants followed four different protocols depending on the prototype they were assigned to. They were instructed about the VR/AR equipment and trained on how to use the navigation and control system. Before the experiment, they were asked to fill out a questionnaire on their demographic characteristics, which are as follows: on average, participants were 23 years old (median = 22.5, std = 3.54, range = 18–30), and the group comprised eleven men (mean = 23.09, median = 23, std = 3.87, range = 18–30) and nine women (mean = 22.88, median = 22, std = 3.07, range = 20–30).
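As a quick consistency check on the reported ages, the overall mean can be recovered from the two subgroup means weighted by group size. The helper below is an illustrative sketch with a hypothetical function name; it uses only the figures given above.

```python
def pooled_mean(groups):
    """Combine (size, mean) pairs into the mean of the whole sample."""
    groups = list(groups)
    total = sum(n for n, _ in groups)
    return sum(n * mean for n, mean in groups) / total


# Eleven men (mean 23.09) and nine women (mean 22.88), as reported.
overall_age = pooled_mean([(11, 23.09), (9, 22.88)])
```

The result is approximately 22.996, which agrees with the reported overall average of 23 years.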

3.3. Assessment and Validation Procedure

To collect feedback from the participants, a dedicated mobile application was developed. The procedure for gathering the relevant information is presented in Figure 9. At the very beginning of the event, each tester filled in the part concerning socio-demographic data and their level of expertise in VR/AR (called the profile form).

Every testing session started with the pre-test, where testers rated on a 5-point scale their current mood, Q1 (from negative to positive), and how they felt in the following aspects: Q2 (tense–relaxed), Q3 (apathetic–energetic), Q4 (sedated–stimulated), Q5 (bored–interested), and Q6 (distracted–attentive). Just after the session, the user filled out a post-test. The post-test consisted of three main parts: (1) a mood questionnaire with the same questions as in the pre-test (Q1–Q6), (2) the user’s opinion about the tested scenario and application (Q7–Q19), and (3) UI usability testing (Q20–Q28). Additionally, after testing the cases with AR and VR goggles (case #2 and case #3), there were questions investigating the susceptibility to Visually Induced Motion Sickness. The second mood questionnaire was administered to check for any changes in the user’s physio-mental state. All questions concerning application usability, scenario testing, and Visually Induced Motion Sickness Susceptibility (VIMSS) are shown in Table 1. The VIMSS questionnaire investigated the frequency of nausea, headache, dizziness, fatigue, and eyestrain when using different visual devices, and it is commonly applied for this purpose [45,46].

3.4. Results and Discussion

Figure 10 presents the results of testers’ current mood on a 5-point scale (from negative to positive). The highest gain was obtained in case #3 (scenario based on VR HMD). The average increase in each category is around 30–35%. The worst results can be observed in case #2 (scenario based on AR goggles), with an increase of only 10%. Only one category (attention) shows an increase of 28%. In case #1 (projector-based AR environment), the level of stimulation and interest increased by more than 30%. In case #4 (mobile-based AR), there was a stable growth at the 20–25% level in each category.
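The text does not state how the percentage increases between the pre-test and post-test were computed. One plausible reading, shown here only as a sketch, is the relative change of the post-test mean with respect to the pre-test mean. The function name and the example values below are hypothetical and are not data from the study.

```python
def relative_change(pre_mean, post_mean):
    """Percentage change of the post-test mean relative to the pre-test mean."""
    if pre_mean == 0:
        raise ValueError("pre-test mean must be non-zero")
    return (post_mean - pre_mean) / pre_mean * 100.0


# Hypothetical mean ratings on the 5-point scale (NOT values from the study).
pre, post = 3.0, 3.9
print(f"mood change: {relative_change(pre, post):.1f}%")
```

If the authors instead reported differences in percentage points of the normalized scale, the same pre-test and post-test means would yield a different figure, so the interpretation should be confirmed against Figure 10.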

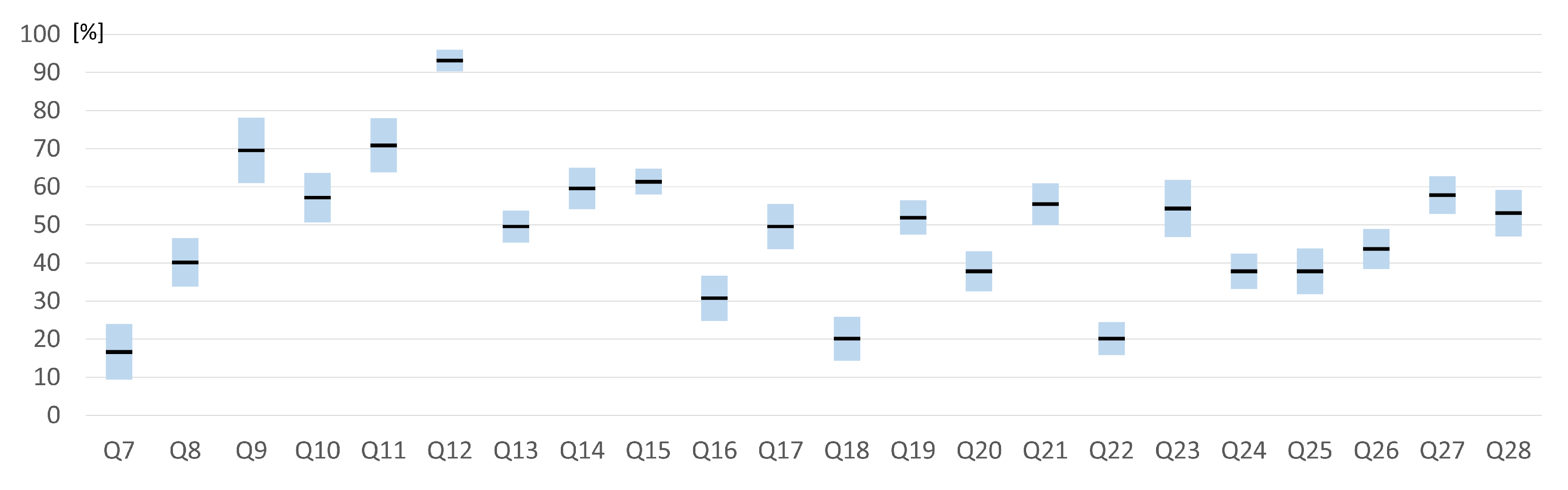

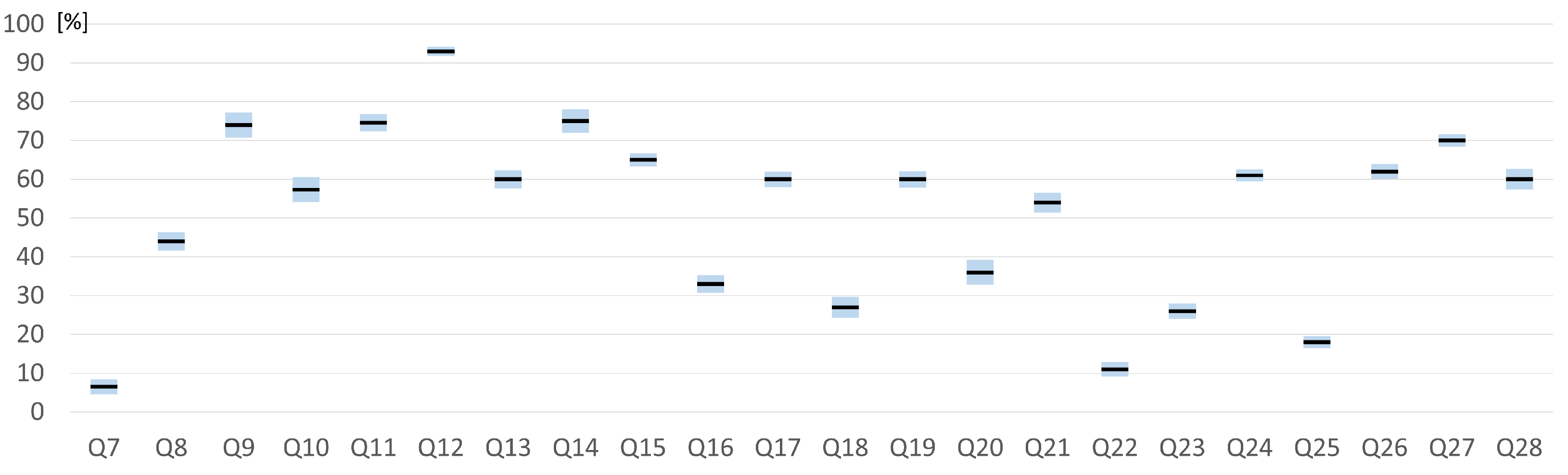

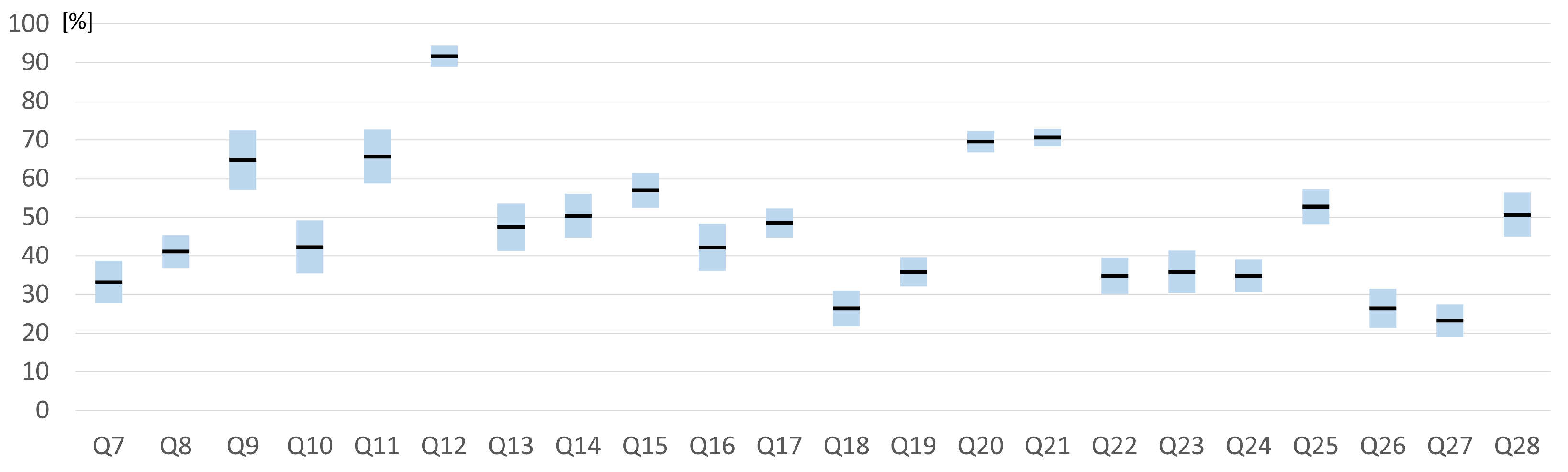

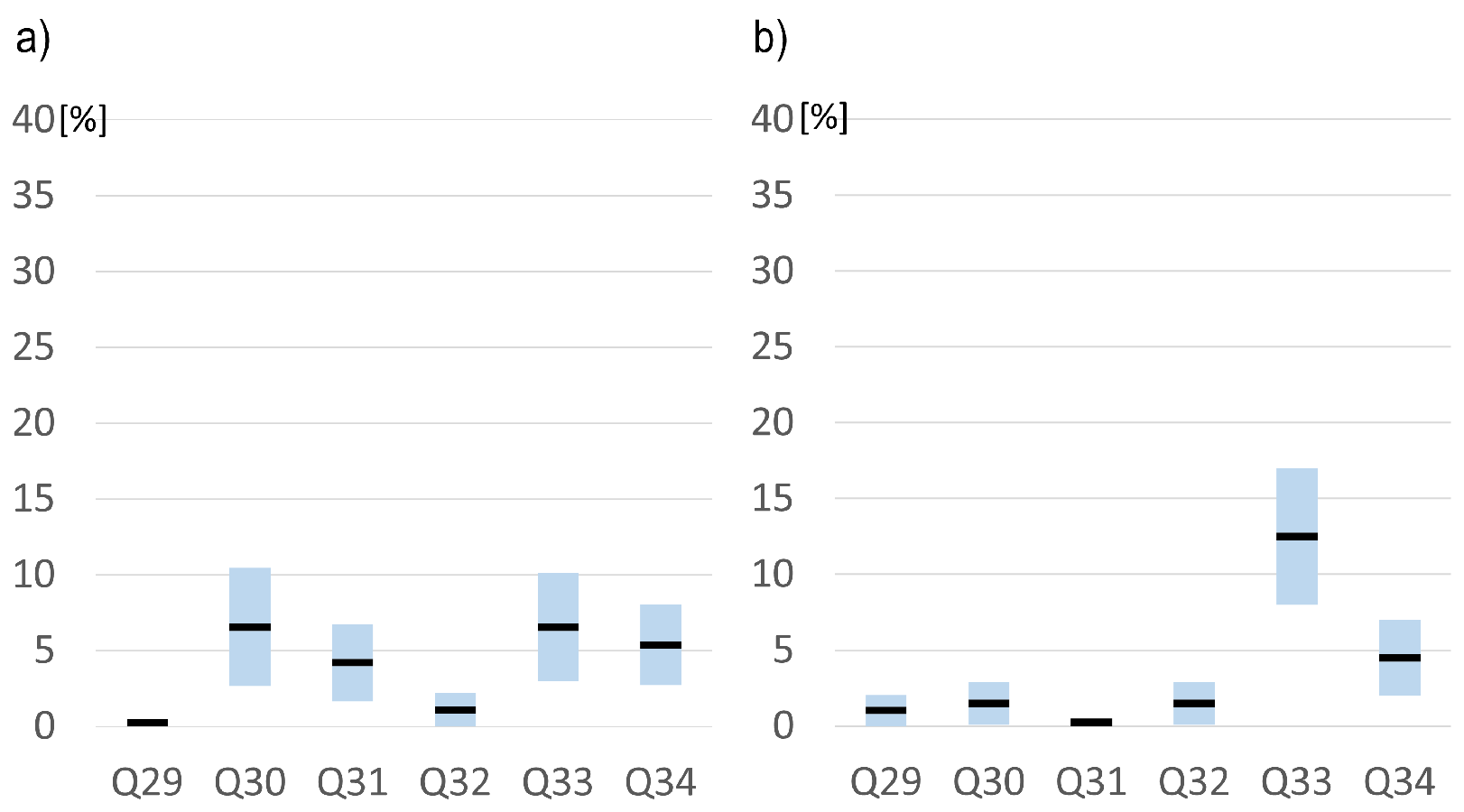

Figure 11, Figure 12, Figure 13 and Figure 14 present the average values of answers to subsequent questions from Table 1 for specific cases. All results were normalized (linear transformation) to a range of 0–100% to standardize the comparison.
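The linear transformation mentioned above can be sketched as a min-max rescaling. This is an illustration under the assumption that answers lie on a 1–5 scale, as in the mood questions; the actual scale endpoints for each question in Table 1 may differ, and the function name `normalize_likert` is hypothetical.

```python
def normalize_likert(score, low=1, high=5):
    """Linearly map a score from [low, high] onto the range 0-100 percent."""
    if not low <= score <= high:
        raise ValueError(f"score {score} is outside the scale [{low}, {high}]")
    return (score - low) / (high - low) * 100.0


# Scale endpoints and midpoint (assumed 1-5 scale).
for s in (1, 3, 5):
    print(s, "->", normalize_likert(s))

# Hypothetical average answer of 4.2 (NOT a value from the study).
print(f"4.2 -> {normalize_likert(4.2):.0f}%")
```

Under this mapping the lowest answer corresponds to 0%, the midpoint to 50%, and the highest answer to 100%, which makes questions with different scales directly comparable.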

The next eight questions (Q7–Q14) focused on the tasks themselves. None of the cases caused too much difficulty (Q7) among the respondents (difficulty level around 10–20% for cases #1, #2, and #3; only slightly above 30% for #4). The time to complete each case was found to be optimal, and the tasks, in general, were found to be very interesting (around 60–70%). Task resilience (the ability to substitute real-life situations with AR or VR) was rated at about 50–60%. According to respondents, realism is reflected worst in case #4 (mobile-based AR). This is most likely related to the small display, the narrow field of view, and the lack of stereoscopy. The participants strongly believed that better educational effects can be achieved with VR/AR technology than with traditional methods (paper–pencil exercises). The respondents also claimed that all the cases improved their knowledge and can be used as an additional tool for theoretical courses (more than 90%). The level of immersion was rated the best for case #3 (which is understandable, as VR provides total immersion), and the rest of the cases were rated similarly (about 40–50%). The students found that the technology for the task was chosen rather well (about 60–70%) though, again, case #4 was rated the worst. They complained about having to hold the mobile device throughout the task, which led to arm fatigue, and about the small elements on the mobile screen.

The second part of the questionnaire (Q15–Q19) focused on the technology used in each scenario. According to the respondents, the most exciting (above 60%) was case #3, while the most challenging was case #4. In general, all of them were pleasant to use. The level of tiredness was relatively minor (20–30%) for each case. The most informative was case #3; the least informative was, again, case #4.

The next part of the questionnaire (Q20–Q28) focused on usability testing. In general, the UI was assessed as quite complicated (Q20), especially in cases #2 and #3 (in both cases less than 40%), where the students had to deal with new technology (VR or AR goggles) that they do not use on a daily basis. They struggled with the fewest issues while using the mobile app (more than 70%), which was completely predictable, since this generation of students has been comfortable with smartphones from an early age. The next question (Q21) confirmed that they were able to quickly complete tasks and scenarios using mobiles (more than 70%). However, the VR-based application was rated as the least complicated (only 12%), in contrast to the mobile app (35%). Students did not need external support and information about the next steps when using the mobile and VR apps (Q23). Functionality integration (Q24), consistency (Q25), clarity of message (Q27), and the interface (Q26) were rated relatively highly except in the mobile application. Fixing mistakes (Q28) was rather easy and quick in all cases (more than 50%).

In general, cybersickness did not pose a problem for this particular group of students. They did not experience nausea, headache, dizziness, or fatigue. Only a few respondents (all with visual impairment such as myopia and astigmatism) identified the eye strain issue, mostly while using VR (see Figure 15).

4. Conclusions

This paper presents and concludes the ATOMIC project, the goal of which was to create an XR-based educational environment that enables students to meet the challenges of a natural business environment such as planning and organizing, staffing and control, problem solving, critical thinking, creativity, and teamwork. Various scenarios were developed within the project, but the most significant activity was the usability testing of these platforms by the prospective end-users (students) by means of questionnaires.

Usability testing of the applications is crucial for verifying design assumptions, finding possible issues with the flow of user experience, and assessing the overall level of understanding of the applied interfaces in the context of the scenarios. Based on our own experience, we confirm that usability testing is always a significant part of project realization. Direct contact with representatives of the target groups is particularly valuable. Observation of users’ behaviors as well as live feedback provide a rich source of knowledge on how to improve the system. The fact that none of the cases resulted in worsened mood or cybersickness is extremely promising. We also noticed that organizing brainstorming sessions in narrow user groups directly after usability testing brings about many spontaneous ideas that can provide valuable clues for project evaluation. Such involvement of representatives of the target user groups in the design process is highly beneficial, and such designs are better received because the users identify more with the project. The users feel relevant because they had a chance to influence and tailor the final shape of the system. One of the most significant changes we decided to implement was to replace the hardware platform for AR goggles-based experiences. We set off using a technologically older headset (DreamWorld) and we are positive that using the latest generation of AR technology (HoloLens 2) will result in a significant increase in user satisfaction.

The test results, observations, and user interviews did not reveal any critical errors in our approach. The stories, scenarios, and educational intentions used were highly appreciated. Additionally, the selection of applied technologies was considered relevant and correct in relation to the cognitive goals. At this point, we would like to emphasize that we are still in contact with the participants of the study and that they were informed about the corrections and remedial actions undertaken.

We are currently in the process of finalizing the project. We are sure that the conducted tests with the participation of the target users’ representatives have made the final product meet the expectations of both the authors and the users and that it is going to become an excellent educational tool.