1. Introduction

The COVID-19 pandemic has resulted in a worldwide catastrophe and has had a substantial impact on many lives across the world. The first instance of this deadly virus was reported in December 2019 in Wuhan, a city in China's Hubei province [1]. After its emergence, the virus quickly spread worldwide, impacting many nations across the globe. Reverse transcription-polymerase chain reaction (RT-PCR) is one of the most often utilized methods in the diagnosis of COVID-19. However, since PCR has a diagnostic sensitivity of about 60–70%, radiological imaging techniques including computed tomography (CT) and X-ray have been critical in the early detection of this disease [2]. Therefore, COVID-19 diagnosis from CT and X-ray images is an active and promising research domain with considerable room for improvement.

A few recent investigations have found alterations in X-ray and CT imaging scans in individuals with COVID-19 symptoms. For example, Zhao et al. [3] discovered dilatation and consolidation, as well as ground-glass opacities, in COVID-19 patients. The fast increase in the number of positive COVID-19 cases has heightened the need for researchers to use artificial intelligence (AI) alongside expert opinion to aid doctors in their work. Deep learning (DL) models have begun to gain traction in this respect. Due to a scarcity of radiologists in hospitals, AI-based diagnostic models may be useful in providing timely assistance to patients. Numerous research studies based on these approaches have been published in the literature; however, only the notable ones are mentioned here. Hemdan et al. [4] suggested seven convolutional neural network (CNN) models, including enhanced VGG19 and Google MobileNet, to diagnose COVID-19 from X-ray images. Wang et al. [5] distinguished COVID-19 images from those of normal and viral pneumonia patients with an accuracy of 92.4%. Similarly, Ioannis et al. [6] attained a class accuracy of 93.48% using 224 COVID-19 images. The Opconet, an optimized CNN, was proposed in [7] utilizing a total of 2700 images, giving an accuracy score of 92.8%. Apostolopoulos et al. [8] created a MobileNet CNN model utilizing extracted features. In [9], three different CNN models, namely Inception V3, ResNet50, and Inception-ResNet V2, were employed for classification. In [10], a transfer learning-based method was utilized to classify COVID and non-COVID chest X-ray images using four models, namely ResNet18, ResNet50, SqueezeNet, and DenseNet121.

Although all of the above-mentioned state-of-the-art approaches use CNNs, they do not take into consideration the spatial connections between image pixels when training the models. As a result, when the images are rotated or resized, or when data augmentation is applied because only small datasets are available, the generated CNN models fail to properly distinguish COVID-19 cases, viral pneumonia, and normal chest X-ray scans. Although some degree of inaccuracy in recognizing viral pneumonia cases is acceptable, the misclassification of COVID-19 patients as normal or viral pneumonia might mislead doctors, leading to a failure of early COVID-19 detection.

One promising way of establishing an efficient COVID-19 detection model based on DL is to generate a network with a proper architecture for each COVID-19 dataset. The no free lunch (NFL) theorem [11], which states that no universal method for tackling all real-world problems exists, has also proved valid in the DL domain [12]; consequently, a standard DL model cannot perform as well as models specifically tuned for COVID-19 diagnosis. The challenge of finding appropriate CNN and DL structures for each particular task is known in the literature as CNN (DL) hyperparameter tuning (optimization), and a good way to do it is by using an automated approach guided by metaheuristic optimizers [12,13,14,15,16,17,18,19,20]. Metaheuristics-driven CNN tuning has also been successfully applied to COVID-19 diagnostics [21,22,23,24].

However, CNN tuning via metaheuristics is extremely time consuming because every function evaluation requires a generated network to be trained on large datasets in order to measure the solution's quality (fitness). Additionally, the CNN training process with standard algorithms, e.g., gradient descent (GD) [25], conjugate gradient (CG) [26], Krylov subspace descent (KSD) [27], etc., is itself very slow, and it can take hours to obtain feedback. Taking into account that COVID-19 diagnostics is critical and that an efficient network needs to be established in almost real time, more approaches for early COVID-19 detection from X-ray and CT images are required.

With the goal of shortening training time while performing automated feature extraction, the research presented in this manuscript adapts a sequential, two-phase hybrid machine learning model for COVID-19 detection from X-ray images. In the first phase, a well-known simple architecture similar to the LeNet-5 CNN [28] is used as the feature extractor to reduce structural complexities within images. The second phase uses extreme gradient boosting (XGBoost) to perform classification, where the outputs from the flatten layer of the LeNet-5 structure are used as XGBoost inputs. In other words, the LeNet-5 fully connected (FC) layers are replaced with XGBoost to perform almost real-time classification. The LeNet structure is trained only once, which makes execution substantially shorter than in the case of CNN-tuned approaches.

However, according to the NFL, XGBoost, whose efficiency depends on many hyperparameters, also needs to be tuned for specific problems. Consequently, this study also proposes a metaheuristic to improve XGBoost performance for COVID-19 X-ray image classification. For the purpose of this study, a modified arithmetic optimization algorithm (AOA) [29], which represents a low-level hybrid between AOA and the sine cosine algorithm (SCA) [30], is developed and adapted for XGBoost optimization. The observed drawbacks of the basic AOA are analyzed, and a method that outscores the original approach is developed. This particular metaheuristic was chosen because it shows great potential in solving a variety of real-world challenges [31,32]; however, since it emerged relatively recently, it has not yet been investigated enough, and there is still considerable room for its improvement.

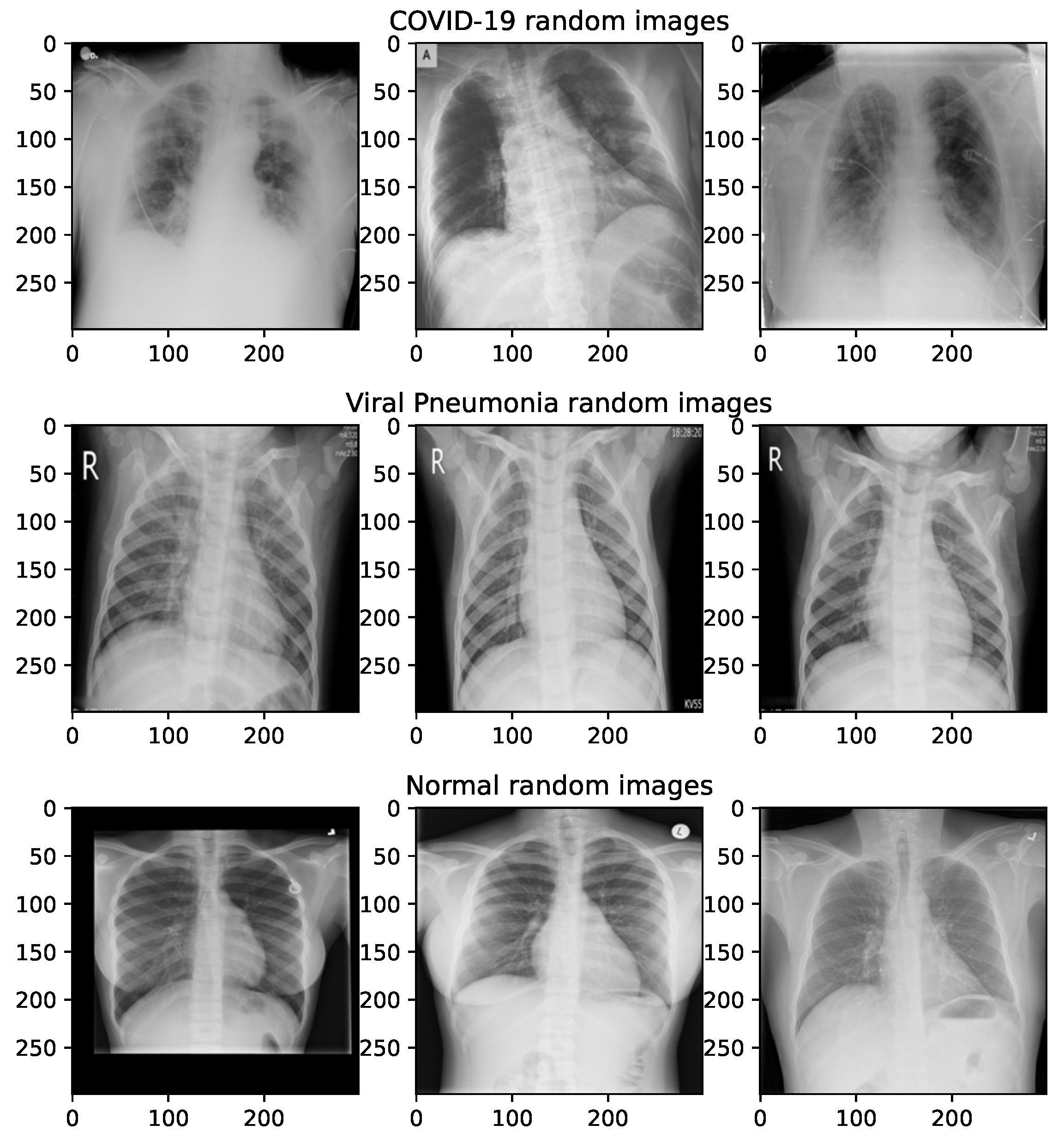

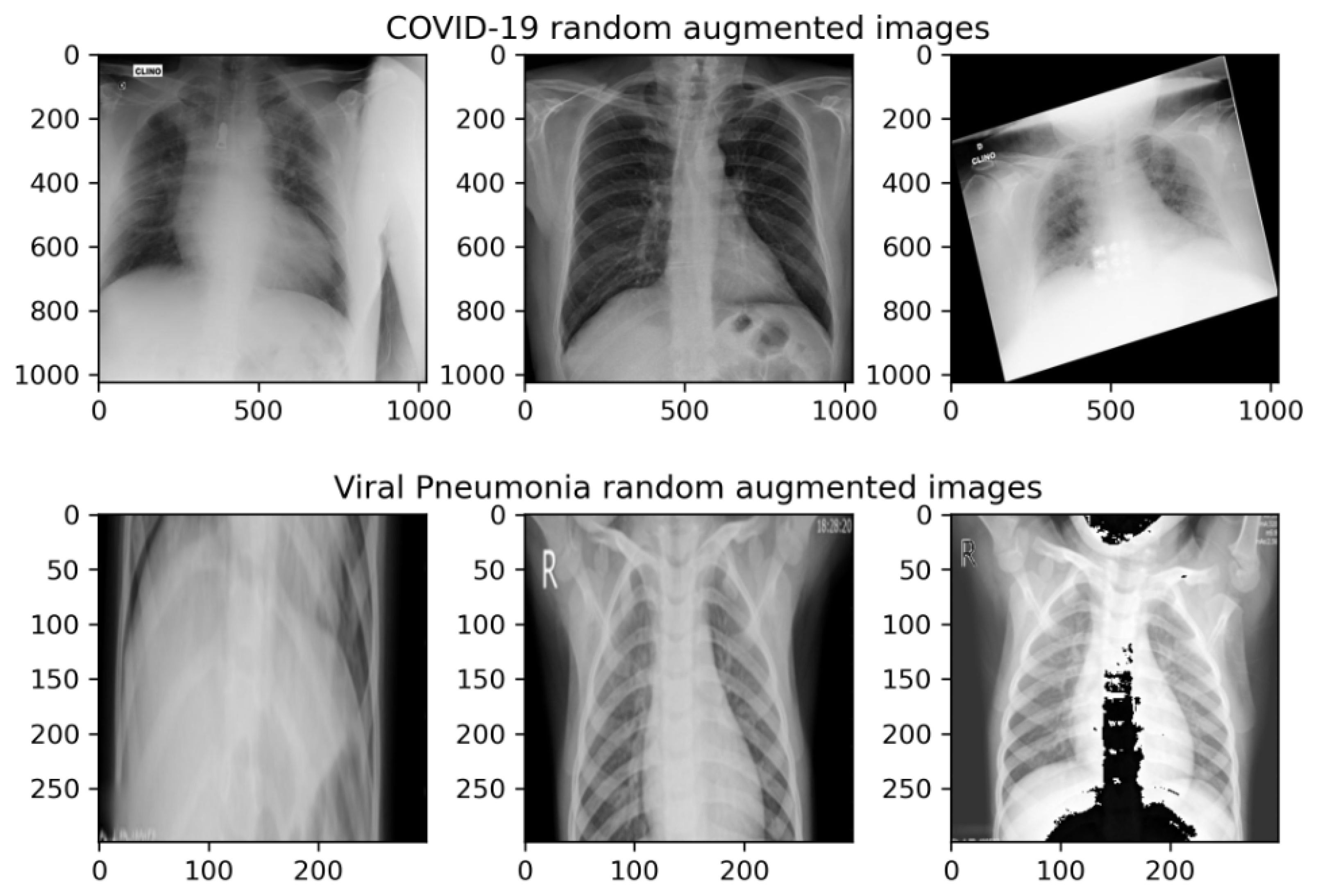

The proposed two-phase hybrid method for COVID-19 X-ray diagnosis is validated against the COVID-19 radiography database set of images, which was retrieved from the Kaggle repository [33,34]. The classification is performed against three classes, namely normal, COVID-19 and viral pneumonia. The viral pneumonia X-rays are also included because only subtle differences from COVID-19 X-ray images exist. However, since the source COVID-19 X-ray diagnosis dataset is imbalanced toward the normal class, and addressing imbalanced datasets is not the aim of the proposed research, the COVID-19 and viral pneumonia images are augmented, while the number of normal images taken from the original repository is reduced, so that each class finally contains 4000 observations.

The performance of the proposed methodology is compared with other standard DL methods as well as with XGBoost classifiers tuned with other well-known metaheuristics. Additionally, the proposed modified AOA, before being adopted for XGBoost tuning for COVID-19 classification, was first tested on challenging Congress on Evolutionary Computation 2017 (CEC2017) benchmark instances.

Considering the above, this manuscript proposes a method that is guided by two elemental research questions:

The possibility of designing a method for efficient COVID-19 diagnostics from X-ray images based on a simple CNN and an XGBoost classifier; and

The possibility of further improving the original AOA approach by performing low-level hybridization with the SCA metaheuristics.

Based on the experimental findings shown in Section 4 and Section 5, the contribution of the proposed study is four-fold:

A simple light-weight neural network has been generated that obtains a decent level of performance on the COVID-19 dataset and executes fast;

An enhanced version of AOA metaheuristics has been developed that specifically targets the observed and known limitations and drawbacks of the basic AOA implementation;

It was shown that the proposed metaheuristics is efficient in solving global optimization tasks with mixed real and integer parameter types; and

The proposed COVID-19 detection methodology from X-ray images that employs the light-weight network, XGBoost and enhanced AOA obtains satisfying performance within a reasonable amount of computational time.

The sections of the manuscript are outlined as follows: Section 2 provides a brief survey of the AI methods employed in this study with a focus on CNN applications. Section 3 explains the basic version of the AOA, points out its drawbacks and introduces the modified AOA implementation. Bound-constrained simulations of the proposed algorithm on a challenging CEC2017 benchmark set are given in Section 4. The experimental findings of the early COVID-19 diagnostics from X-ray images with the proposed methodology are provided in Section 5, while the final remarks, proposed future work and conclusions are given in Section 6.

3. Proposed Methodology

This section first gives a brief overview of the original AOA metaheuristics, followed by its observed drawbacks and the modified hybrid metaheuristics approach devised for the purpose of this study. Finally, the section concludes with a presentation of the two-phase sequential DL and XGBoost method, which is used for COVID-19 X-ray image categorization.

3.1. Arithmetic Optimization Algorithm

The arithmetic optimization algorithm (AOA) is a novel metaheuristic method, introduced by Abualigah et al. [29], which draws inspiration from the fundamental arithmetic operators of mathematics.

The optimization process of AOA is initialized with a randomly generated matrix $X$, in which a single solution component is represented as $x_{i,j}$, where $i$ indexes the solutions and $j$ indexes their positions (dimensions); this matrix represents the initial optimization space for solutions. The best-obtained solution is determined after each iteration and is considered a candidate for the best solution. The operations of subtraction, addition, division, and multiplication control the computation of the near-optimal solution areas. The search phase selection is calculated according to the Math Optimizer Accelerated (MOA) function, which is applied during both phases. The function value at the $t$-th iteration is denoted as $MOA(t)$, where $t$ is the current iteration, ranging from 1 to the maximum number of iterations $T$, and $Min$ and $Max$ represent the minimum and maximum accelerated function values, respectively.
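For reference, the MOA function can be written as shown below. This is a sketch of the form used in the original AOA formulation [29], transcribed into the iteration notation of this section ($t$, $T$); the symbol names $Min$ and $Max$ are assumed here, and the expression corresponds to what Algorithm 1 refers to as Equation (7).

```latex
\begin{equation}
MOA(t) = Min + t \times \left( \frac{Max - Min}{T} \right)
\end{equation}
```

Under this form, MOA grows linearly with $t$ and reaches $Max$ at $t = T$, so a comparison of a uniform random number against MOA selects the exploration phase less and less often as the run progresses.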

The search space is randomly explored with the use of the division (D) and multiplication (M) operators during the exploration phase. This mechanism is given by Equation (8). When the condition $r_1 > MOA$ is satisfied, the search is limited by the MOA for the current phase. The operator (M) is not applied until the first operator (D) finishes its task, conditioned by $r_2 < 0.5$ as the first rule of Equation (8). Otherwise, operator (D) is substituted by the (M) operator for the completion of the same task.

In Equation (8), $\epsilon$ is an arbitrarily small number, $\mu$ is the fixed control parameter, $x_i(t+1)$ is the $i$-th solution of the next iteration, $x_{i,j}(t)$ is the current location $j$ of the current iteration's $i$-th solution, and $best(x_j)$ is the current best solution's $j$-th position. As usual, the lower and upper boundaries of the $j$-th position are $LB_j$ and $UB_j$.
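As a reference point, the exploration rule can be sketched as follows. The form is that of the original AOA [29], rewritten with the iteration notation of this section; $r_2$ is assumed to be a uniform random number in [0, 1], and $\epsilon$, $\mu$, $LB_j$, $UB_j$ and $best(x_j)$ are assumed names for the small constant, the control parameter, the bounds and the best solution's $j$-th position described above. $MOP$ is the Math Optimizer Probability coefficient of AOA.

```latex
\begin{equation}
x_{i,j}(t+1) =
\begin{cases}
best(x_j) \div (MOP + \epsilon) \times \left( (UB_j - LB_j) \times \mu + LB_j \right), & r_2 < 0.5 \\
best(x_j) \times MOP \times \left( (UB_j - LB_j) \times \mu + LB_j \right), & \text{otherwise}
\end{cases}
\end{equation}
```

Both rules scale the best solution's component rather than add to it, which is why these two operators produce widely dispersed values and are assigned to exploration.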

The Math Optimizer Probability at the $t$-th iteration is denoted as $MOP(t)$, where $t$ is the current iteration, $T$ is the maximum number of iterations, and $\alpha$ is a fixed parameter that measures the accuracy of exploitation over the iterations.
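The MOP coefficient described above can be written as below. The expression follows the original AOA formulation [29], with $\alpha$ as an assumed name for the fixed parameter; it corresponds to what Algorithm 1 refers to as Equation (9).

```latex
\begin{equation}
MOP(t) = 1 - \frac{t^{1/\alpha}}{T^{1/\alpha}}
\end{equation}
```

Since $t \le T$, $MOP(t)$ decreases monotonically and reaches 0 at $t = T$, which shrinks the steps taken around the best solution toward the end of the run.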

The deep search of the search space for exploitation is afterwards performed by the search strategies that employ the addition (A) and subtraction (S) operators. This process is provided in Equation (10). The first rule of Equation (10) is conditioned by $r_3 < 0.5$, which links the operator (A) to the operator (S) in the same way as (M) is linked to (D) in the previous phase: operator (S) performs the task while the condition holds; otherwise, (S) is substituted by (A) to finish the task.
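For reference, the exploitation rule can be sketched in the same notation. This is the form given in the original AOA [29]; $r_3$ is assumed to be a uniform random number in [0, 1], and the remaining symbols ($best(x_j)$, $MOP$, $\mu$, $LB_j$, $UB_j$) are the assumed names used for the exploration rule.

```latex
\begin{equation}
x_{i,j}(t+1) =
\begin{cases}
best(x_j) - MOP \times \left( (UB_j - LB_j) \times \mu + LB_j \right), & r_3 < 0.5 \\
best(x_j) + MOP \times \left( (UB_j - LB_j) \times \mu + LB_j \right), & \text{otherwise}
\end{cases}
\end{equation}
```

Here the best solution's component is shifted additively by an amount proportional to $MOP$, so the new positions stay close to the best solution as $MOP$ shrinks.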

In conclusion, the near-optimal solution candidates tend to diverge when $r_1 > MOA$, while they gravitate toward near-optimal solutions when $r_1 < MOA$. To stimulate exploration and exploitation, the MOA parameter is increased incrementally from its minimum to its maximum value. Additionally, the computational complexity of AOA is discussed in [29].
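The update rules of this subsection can be combined into a single position update. The following Python sketch is illustrative only: it assumes the standard AOA rules from [29] (exploration when $r_1 > MOA$, with $r_2 < 0.5$ and $r_3 < 0.5$ selecting the operator), and the default values of `moa_min`, `moa_max`, `alpha`, `mu` and `eps`, as well as the final clamping to the bounds, are assumptions rather than settings reported in this study.

```python
import random


def moa(t, T, moa_min, moa_max):
    """Math Optimizer Accelerated value at iteration t (1 <= t <= T)."""
    return moa_min + t * (moa_max - moa_min) / T


def mop(t, T, alpha):
    """Math Optimizer Probability value at iteration t."""
    return 1.0 - (t ** (1.0 / alpha)) / (T ** (1.0 / alpha))


def aoa_update(best, lb, ub, t, T, moa_min=0.2, moa_max=1.0,
               alpha=5.0, mu=0.5, eps=1e-12, rng=random):
    """Build a new position around `best` with the four AOA operators.

    The default parameter values are illustrative assumptions.
    """
    moa_t = moa(t, T, moa_min, moa_max)
    mop_t = mop(t, T, alpha)
    new = []
    for j in range(len(best)):
        scale = (ub[j] - lb[j]) * mu + lb[j]
        r1, r2, r3 = rng.random(), rng.random(), rng.random()
        if r1 > moa_t:
            # Exploration phase: division or multiplication
            if r2 < 0.5:
                x = best[j] / (mop_t + eps) * scale
            else:
                x = best[j] * mop_t * scale
        else:
            # Exploitation phase: subtraction or addition
            if r3 < 0.5:
                x = best[j] - mop_t * scale
            else:
                x = best[j] + mop_t * scale
        # Assumption: out-of-range values are clamped to the bounds
        new.append(min(max(x, lb[j]), ub[j]))
    return new
```

A call such as `aoa_update(best, lb, ub, t=3, T=10)` returns one candidate position; in a full optimizer it would be invoked once per solution and iteration.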

3.2. Cons of Basic AOA and Introduced Modified Algorithm

The basic version of the AOA is regarded as a potent optimizer with a wide range of practical applications, but it still suffers from several known drawbacks in its original implementation. These flaws are, namely, insufficient exploitation power and an inadequate intensity of the exploration process. This is reflected in the fact that, in some cases, AOA is prone to dwelling in the proximity of local optima and to slow convergence [32,114,115], as can clearly be observed in the CEC2017 simulations presented in Section 4.

One of the root causes of these deficiencies is that the solutions' update procedure in the basic AOA is focused on the proximity of the single current global best solution. As discussed in [114,116], this results in an extremely selective search procedure, where other solutions depend on solitary centralized guidance to update their positions, with no guarantee of converging to the global optimum. Hence, it is necessary to improve the exploration capability of the basic AOA to escape local optima.

Due to the above-mentioned cons, during the search process, the original AOA converges too fast toward the current best solution, and the population diversity is disturbed. Since the AOA's efficiency depends to some extent on the generated pseudo-random numbers due to its stochastic nature, in some runs, when the current best individual in the initial population is close to optimum regions of the search domain, the AOA shows satisfying performance. However, when the algorithm is “unlucky” and the initial population is further away from the optimum, the whole population quickly converges toward sub-optimum regions, and the final results have lower quality.

Additionally, besides poor exploration, the AOA's intensification process can also be improved. As already noted, the search is conducted mostly in the neighborhood of the current best individual, and exploitation around other solutions from the population is not emphasized enough.

The enhanced AOA proposed in this manuscript addresses both observed drawbacks by improving exploration, exploitation and the balance between them in the original version. For that reason, the proposed method introduces a search procedure from another metaheuristic and an additional control parameter that enhances exploration and also establishes a better intensification–diversification trade-off.

The authors were inspired by the low-level hybridization methodology, employing the principles from SCA in the AOA. This process results in satisfactory performance from both phases of the metaheuristic solutions and a superior hybrid solution. The basic equations for position updating with the SCA are given in Equation (11), in which the current solution's position in the $i$-th dimension at the $t$-th iteration is $X_i^t$, $r_1$/$r_2$/$r_3$ are random numbers, the position of the destination point in the $i$-th dimension is $P_i$, and $|\cdot|$ denotes the absolute value.
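For reference, the SCA position update can be sketched as below. The form is the one from the original SCA formulation [30]; the switching random number $r_4$ is not mentioned in the description above and is included as an assumption based on that formulation. The random numbers $r_1$, $r_2$, $r_3$ here belong to SCA and are unrelated to the random numbers used in the AOA rules.

```latex
\begin{equation}
X_i^{t+1} =
\begin{cases}
X_i^{t} + r_1 \times \sin(r_2) \times \left| r_3 P_i^{t} - X_i^{t} \right|, & r_4 < 0.5 \\
X_i^{t} + r_1 \times \cos(r_2) \times \left| r_3 P_i^{t} - X_i^{t} \right|, & r_4 \geq 0.5
\end{cases}
\end{equation}
```

Unlike the AOA rules, this update moves a solution relative to its own current position $X_i^t$, which is what lets the hybrid search around solutions other than the current best.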

As stated above, after an extensive examination of the search equations of the AOA and SCA algorithms, it was determined that the AOA search equations are not sufficient for efficient exploitation, which to a large extent depends on the current best solution, and that a wider search space needs to be covered. Hence, this research merges the two algorithms and combines them with a quasi-reflection-based learning (QRL) procedure [117] in the following way. Every solution's life-cycle consists of two phases, in which the solution performs an AOA search (phase one) and an SCA search (phase two), which are controlled by the value of one additional control parameter.

Each solution is assigned a counter attribute, which is utilized to monitor the improvement of the solution. In the beginning, after the initial population is produced, all solutions start with an AOA search. In each iteration, if the solution was not improved, its counter is increased by 1. When the counter reaches the threshold value (the control parameter of the proposed hybrid algorithm), that particular solution continues the search by switching to the SCA search mechanism. Again, every time the solution is not improved, the counter is increased by 1. If the counter reaches a second, higher limit, that solution is removed from the population and replaced by its quasi-reflexive-opposite solution $X^{qr}$, which is generated by applying Equation (12) to each component $j$ of solution $X$.

In Equation (12), the random part of the expression generates a value drawn from the uniform distribution inside the specified interval, and $LB_j$ and $UB_j$ represent the lower and upper limits of the search space, respectively. This procedure is executed for each parameter of every solution $X$ within $D$ dimensions.
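A common way of writing the quasi-reflexive-opposite component, used here as an assumed form of Equation (12), is the following, where $x_j$ is the $j$-th component of solution $X$, $LB_j$ and $UB_j$ are assumed names for the lower and upper limits, and $rnd(a, b)$ denotes a uniform random draw between $a$ and $b$:

```latex
\begin{equation}
X_j^{qr} = rnd\left( \frac{LB_j + UB_j}{2},\; x_j \right)
\end{equation}
```

For example, with $LB_j = -100$, $UB_j = 100$ and $x_j = 60$, the new component would be drawn uniformly between 0 and 60.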

However, the replacement is not performed for the current best solution because, in practice, if a solution manages to maintain the best rank over the iterations, there is a great chance that it has hit the right part of the search space. If such a replacement occurred, the search process might diverge from the optimum region.

It must be noted that when replacing the solution with its opposite, additional evaluation is not performed. The logic behind utilizing the quasi-reflexive-opposite solutions is based on the fact that if the original solution did not improve for a long time, it was located far away from the optimum (or in one of the sub-optimum domains), and there is a reasonable chance that the opposite solution will fall significantly closer to the optimum. Discarding so-called exhausted solutions from the population ensures stable exploration during the whole search process in the run. The novel solution starts its life-cycle as described above, with its counter reset to 0, and by conducting the AOA search first.

The value of the threshold was determined empirically, and it is calculated from $T$, the maximal number of iterations, and $N$, the size of the population. Therefore, there is no need for the researcher to fine-tune this parameter.

For simplicity, the introduced AOA method is named hybrid AOA (HAOA), and its pseudo-code is provided in Algorithm 1. The introduced changes do not increase the complexity of the original AOA algorithm; hence, the complexity of the proposed HAOA is estimated to be the same as that of the original AOA. Moreover, the HAOA introduces just one additional control parameter (the threshold), which is determined automatically, as it depends on $T$ and $N$.

Algorithm 1: Hybrid arithmetic optimization algorithm.

    Initialize the parameters α and μ.
    Initialize solutions' positions randomly (i = 1, ..., N).
    Set the counter value of each solution to 0.
    Determine the threshold value from T and N.
    while t < T do
        Compute the fitness function for the given solutions.
        Find the best solution so far.
        Update MOA and MOP values using Equations (7) and (9), respectively.
        for i = 1 to N do
            if the counter of solution i is below the threshold then
                Execute AOA search
                for j = 1 to D do
                    Generate random numbers (r1, r2, r3) in interval [0, 1].
                    if r1 > MOA then
                        Exploration phase
                        if r2 < 0.5 then
                            Apply the division operator (D, “÷”)
                            Update the ith solutions' positions using the first rule in Equation (8).
                        else
                            Apply the multiplication operator (M, “×”)
                            Update the ith solutions' positions using the second rule in Equation (8).
                        end if
                    else
                        Exploitation phase
                        if r3 < 0.5 then
                            Apply the subtraction operator (S, “−”)
                            Update the ith solutions' positions using the first rule in Equation (10).
                        else
                            Apply the addition operator (A, “+”)
                            Update the ith solutions' positions using the second rule in Equation (10).
                        end if
                    end if
                end for
                Compare the old solution and updated solution and increment the counter if needed.
            else if the counter of solution i is below the second limit then
                Execute SCA search
                for j = 1 to D do
                    Update positions according to Equation (11).
                end for
                Compare the old solution and updated solution and increment the counter if needed.
            else
                if i is not the current best solution then
                    Remove solution from the population.
                    Replace with quasi-reflexive-opposite solution produced with Equation (12).
                    Reset the counter to value 0.
                end if
            end if
        end for
    end while
    Return the best solution.
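The counter-driven life-cycle of Algorithm 1 can be illustrated with a short Python sketch. It is not the authors' implementation: the search steps are passed in as callables (`aoa_step` and `sca_step` are hypothetical names), the second limit is assumed to be `2 * threshold`, a candidate is assumed to replace the old position only when it improves the fitness, and candidates are clamped to the bounds. Fitness is minimized.

```python
import random


def quasi_reflect(x, lb, ub, rng=random):
    """Draw each component uniformly between the interval centre and x[j]."""
    out = []
    for xj, lo, hi in zip(x, lb, ub):
        centre = (lo + hi) / 2.0
        out.append(rng.uniform(min(centre, xj), max(centre, xj)))
    return out


def haoa_lifecycle(fitness, population, lb, ub, aoa_step, sca_step,
                   threshold, iterations, rng=random):
    """Minimise `fitness` with the counter-driven life-cycle.

    aoa_step and sca_step are callables (x, best, t) -> candidate position.
    Assumptions: the second limit is 2 * threshold, and acceptance is greedy.
    """
    pop = [list(x) for x in population]
    fit = [fitness(x) for x in pop]
    trial = [0] * len(pop)  # per-solution non-improvement counter

    for t in range(1, iterations + 1):
        # Replaced solutions are evaluated here, not at replacement time.
        for i, x in enumerate(pop):
            if fit[i] is None:
                fit[i] = fitness(x)
        b = min(range(len(pop)), key=lambda i: fit[i])
        best = list(pop[b])

        for i in range(len(pop)):
            if trial[i] < threshold:
                step = aoa_step      # phase one: AOA search
            elif trial[i] < 2 * threshold:
                step = sca_step      # phase two: SCA search
            else:
                if i != b:           # the current best is never replaced
                    pop[i] = quasi_reflect(pop[i], lb, ub, rng)
                    fit[i] = None
                    trial[i] = 0
                continue
            cand = step(pop[i], best, t)
            cand = [min(max(v, lo), hi) for v, lo, hi in zip(cand, lb, ub)]
            f = fitness(cand)
            if f < fit[i]:
                pop[i], fit[i] = cand, f
            else:
                trial[i] += 1

    # Evaluate anything replaced in the last iteration before reporting.
    for i, x in enumerate(pop):
        if fit[i] is None:
            fit[i] = fitness(x)
    b = min(range(len(pop)), key=lambda i: fit[i])
    return pop[b], fit[b]
```

In use, `aoa_step` and `sca_step` would wrap the AOA and SCA position updates; passing them in keeps the life-cycle logic separate from the search equations.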

3.3. Deep Learning Approach for Image Classification

As already mentioned in Section 1, the proposed approach is executed in two phases, where the first phase performs feature extraction and the second phase employs XGBoost to perform classification.

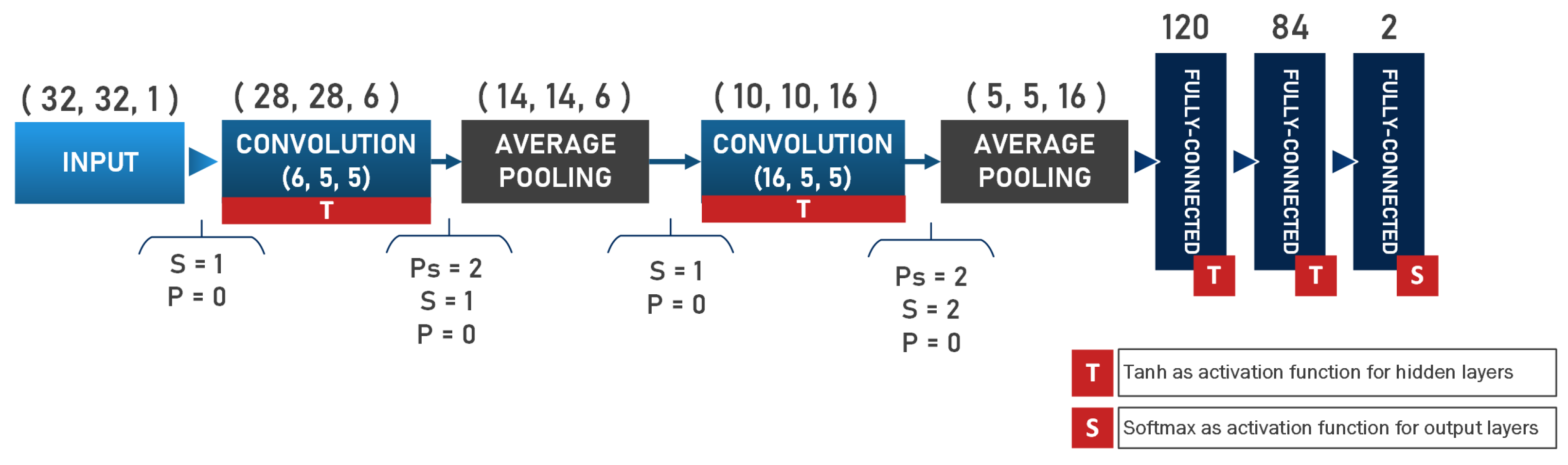

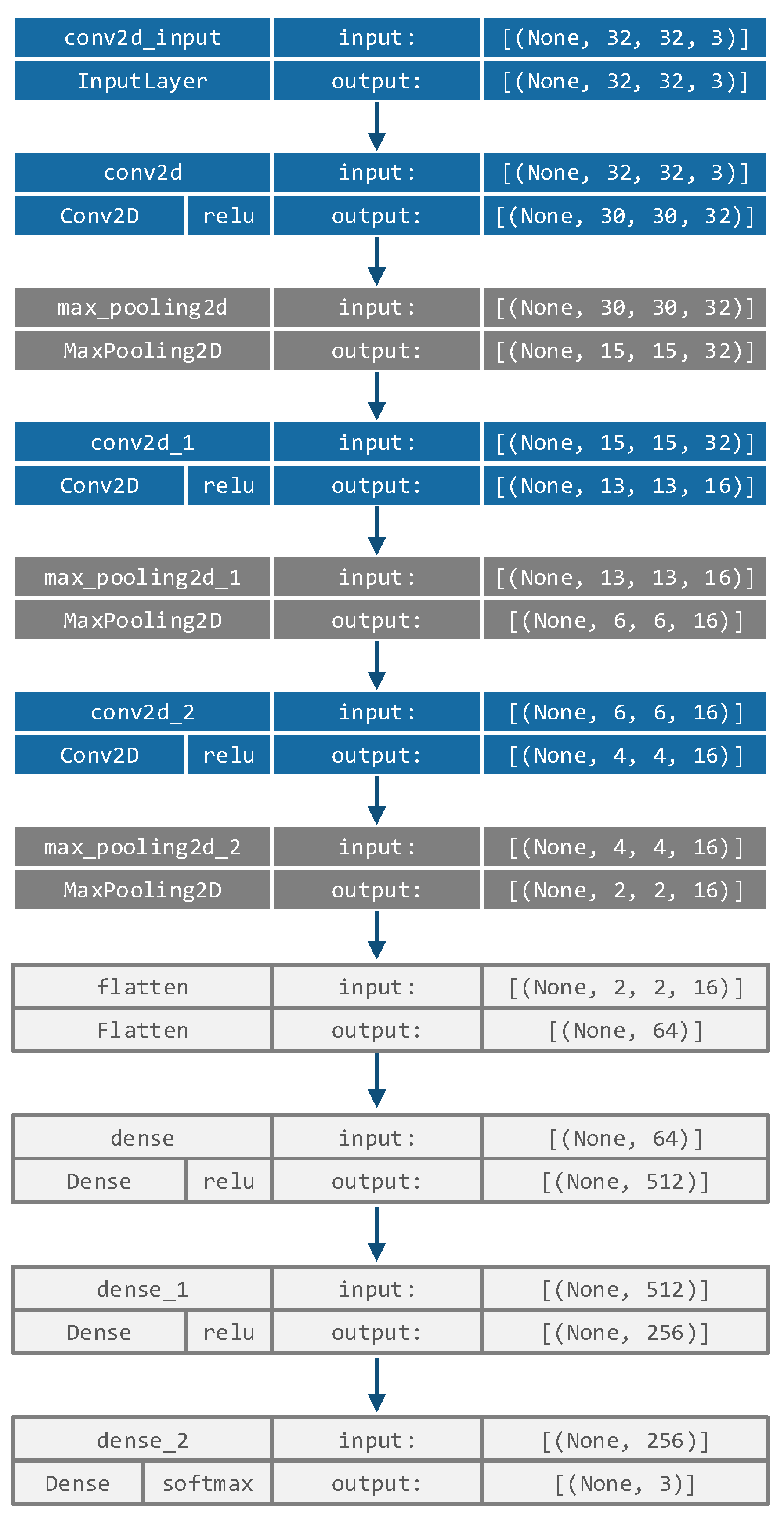

In the first phase of the proposed approach, a simple CNN architecture similar to LeNet-5 [28], which consists of 3 convolutional and 3 max pooling layers followed by 3 fully connected layers, is employed. This network structure was determined empirically with the goal of being as simple as possible (allowing easier training and fast execution) while achieving a decent level of performance on the COVID-19 dataset; it was obtained by performing hyperparameter optimization during the pre-research phase via a simple grid search. The hyperparameters that were tuned, each within a predefined range, included the number of convolutional layers (integer), the number of cells in convolutional layers (integer), the number of fully connected layers (integer) and the learning rate (continuous). The determined network structure is as follows: the first convolutional layer uses 32 filters with a 3 × 3 kernel size, while the second and third convolutional layers employ 16 filters with 3 × 3 kernels, which is followed by 3 dense layers. The complete CNN network structure is shown in Figure 2.

All images are resized to the same pixel size and used as the CNN input. The convolutional layers' weights are pre-trained on the COVID-19 dataset, as described in Section 5.1, with the Adam optimizer, a learning rate of 0.001, the sparse categorical cross-entropy loss function and a batch size of 32 over 100 epochs. The CNN uses a training set and a validation set, which is a 10% fraction of the training data, and an early stopping condition with respect to validation loss, with patience set to 10 epochs.

Due to the stochastic nature of the Adam optimizer, the whole training process is repeated 50 times, and the best-performing pre-trained model is used for the second phase. Training and validation loss for the best model during training is shown in Figure 3, where it can be seen that, due to the early stopping criterion, training terminated after only 60 epochs.

After determining the sub-optimal weights and biases of the used simple CNN in the first phase, in the second phase, all fully connected layers from the CNN are removed, and the outputs from CNN’s flatten layer are used as inputs for the XGBoost classifier. Therefore, all CNN’s fully connected layers are replaced with XGBoost, where XGBoost inputs represent features extracted by the convolutional and maxpooling layers of CNN.

However, as was also pointed out in Section 1, XGBoost should be optimized for every particular dataset. Therefore, the proposed HAOA is used for XGBoost tuning, where each HAOA solution is of length 6 ($D = 6$), with every solution's component representing one of the XGBoost hyperparameters.

The collection of XGBoost hyperparameters that were addressed and tuned in this research is provided below, together with their boundaries and variable types:

Learning rate (), limits: , category: continuous;

, limits: , category: continuous;

Subsample, limits: , category: continuous;

Colsample_bytree, limits: , category: continuous;

Max_depth, limits: , category: integer; and

, limits: , category: continuous.

The class count required by the softprob objective function ('num_class': self.no_classes) is also passed to XGBoost as a parameter. All other parameters are left at their default XGBoost values.
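To make the encoding concrete, the following Python sketch shows one way a 6-component HAOA solution could be decoded into an XGBoost parameter dictionary. It is a hedged illustration, not the authors' code: the function name `decode_solution` and the `EXAMPLE_BOUNDS` table are hypothetical, the limits are placeholders rather than the limits used in the study, and `min_child_weight` and `gamma` are assumed stand-ins for the two hyperparameters whose names are not given above. The keys themselves (`eta`, `subsample`, `colsample_bytree`, `max_depth`, `objective`, `num_class`) are standard XGBoost parameter names.

```python
def decode_solution(solution, bounds, num_classes):
    """Map a solution vector to an XGBoost parameter dict.

    bounds: dict name -> (low, high, kind), kind being "float" or "int".
    """
    names = list(bounds)
    if len(solution) != len(names):
        raise ValueError("solution length must match number of hyperparameters")
    params = {"objective": "multi:softprob", "num_class": num_classes}
    for value, name in zip(solution, names):
        lo, hi, kind = bounds[name]
        value = min(max(value, lo), hi)  # keep the value inside its limits
        params[name] = int(round(value)) if kind == "int" else float(value)
    return params


# Hypothetical limits for illustration only (assumptions, not from the study).
EXAMPLE_BOUNDS = {
    "eta": (0.1, 0.9, "float"),
    "min_child_weight": (0.0, 10.0, "float"),  # assumed parameter name
    "subsample": (0.01, 1.0, "float"),
    "colsample_bytree": (0.01, 1.0, "float"),
    "max_depth": (3, 10, "int"),
    "gamma": (0.0, 0.5, "float"),              # assumed parameter name
}
```

Rounding is applied only to the integer component, so the optimizer can treat every component as continuous while the classifier still receives a valid `max_depth`.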

Finally, the proposed hybrid approach is named after the models used, CNN-XGBoost-HAOA, and its flowchart is depicted in Figure 4.

4. CEC2017 Bound-Constrained Experiments

XGBoost tuning belongs to the group of NP-hard global optimization problems with mixed real-valued and integer parameters (see Section 3.3). However, to prove the robustness of the optimizer, it should first be tested on a larger set of global optimization benchmark instances before being validated against a practical problem such as XGBoost hyperparameter optimization.

Therefore, the HAOA was validated on exceedingly challenging global optimization benchmark functions from the CEC2017 testing suite [118] with 30 parameters. The total number of instances is 30, and they are divided into 4 groups: uni-modal functions (from $F1$ to $F3$), multi-modal functions (from $F4$ to $F10$), hybrid functions (from $F11$ to $F20$) and, finally, the most challenging composite functions (from $F21$ to $F30$). The composite benchmarks exhibit all characteristics of the previous 3 groups; in addition, they have been rotated and shifted.

The $F2$ instance was discarded from experimentation due to its unstable behavior, as pointed out in [119]. The full specification of the benchmark functions, including name, class, parameter search range and global optimum value, is shown in Table 1. More details, such as their visual representation, can be found in [118].

All simulations were performed with 30-dimensional CEC2017 instances ($D = 30$), and the obtained mean (average) and standard deviation (std) results over 50 separate runs are reported. These two metrics are the most representative due to the stochastic behavior of metaheuristics. A relatively extensive evaluation of metaheuristics performance for the CEC2017 benchmark suite is provided in [120], where the state-of-the-art improved Harris hawks optimization (IHHO) was introduced; therefore, an experimental setup similar to that in [120] was used in this study.

The research proposed in [120] validated all approaches in simulations with 30 individuals in the population ($N = 30$) and 500 iterations ($T = 500$) per run. However, some metaheuristics spend more fitness function evaluations (FFEs) in one run, and setting the termination condition in terms of iterations may not be the most objective strategy. Therefore, to compare the proposed HAOA with other methods without bias, and at the same time to be consistent with the above-mentioned study, this research uses 15,030 FFEs ($N + N \cdot T$) as the termination condition.

Additionally, most of the methods presented for validation purposes in [120] were also implemented in this study with the same control parameter settings. The proposed HAOA was compared with the following methods: basic AOA, SCA, cutting-edge IHHO [120], HHO [121], differential evolution (DE) [122], grasshopper optimization algorithm (GOA) [123], gray wolf optimization (GWO) [124], moth flame optimization (MFO) [125], multi-verse optimizer (MVO) [126], particle swarm optimization (PSO) [78] and whale optimization algorithm (WOA) [127].

Results for the CEC2017 simulations are displayed in Table 2. The text in bold emphasizes the best results for every performance indicator and instance. In the case of equal performance, all tied results are bolded. Although the experimentation in [128] was performed with $T$ as the termination condition, the results reported in this study are similar. However, due to the stochastic behavior of the optimizer, subtle differences exist.

The best mean results for 21 functions were achieved by the HAOA; the individual functions are shown in Table 2. The second-best approach proved to be the cutting-edge IHHO: in some tests, the IHHO showed better performance than HAOA, while in others, the results of HAOA and IHHO were tied. The HAOA and IHHO obtained the same mean indicator values in five tests, while the small number of cases in which the HAOA performed worse than the IHHO comprises two experiments. There are also some cases where other methods achieved the best results, e.g., one instance where MVO and PSO showed superior performance. Lastly, the HAOA tied DE on two instances.

Additionally, it is very important to observe that the original AOA never beat the HAOA. Moreover, there are instances where the HAOA tremendously outscored AOA, in some function tests even by more than 1000 times. Finally, it is also significant to compare HAOA and SCA, because the HAOA uses SCA search expressions. In all simulations, the HAOA outperformed SCA for both indicators. Accordingly, it can be concluded that the HAOA successfully managed to combine the advantages of the basic AOA and SCA methods as a low-level hybrid approach.

The significance of the differences between the results of the HAOA and every other method implemented in the CEC2017 simulations can be determined with a Friedman test [129,130], i.e., a two-way analysis of variance by ranks. This was performed because statistical evidence of an improvement is more thorough than a simple comparison of outcomes. Table 3 summarizes the results of the Friedman test over 29 CEC2017 instances for the 12 compared methods.

According to Table 3, the HAOA performs better than any of the other 11 algorithms considered in the comparative analysis. As expected, the second-best approach is IHHO, while the original AOA and SCA take ranks 6 and 11, respectively. Additionally, the calculated Friedman statistic is greater than the $\chi^2$ critical value with 11 degrees of freedom at the chosen significance level. The conclusion of this analysis is that the null hypothesis ($H_0$) can be rejected, implying that the HAOA achieved results that are significantly better than those of the other algorithms.
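The ranking step behind such a comparison can be illustrated with a short Python sketch. It computes the average rank of each method and the classical Friedman chi-square statistic (without a tie correction), which would then be compared with the $\chi^2$ critical value for $k - 1$ degrees of freedom, i.e., 11 for 12 methods. The function names and any input data are hypothetical, and this is not the authors' analysis script.

```python
def average_ranks(row):
    """Rank values in ascending order (1 = lowest); ties share the mean rank."""
    order = sorted(range(len(row)), key=lambda j: row[j])
    ranks = [0.0] * len(row)
    i = 0
    while i < len(order):
        k = i
        # Extend k over the run of values tied with position i
        while k + 1 < len(order) and row[order[k + 1]] == row[order[i]]:
            k += 1
        mean_rank = (i + k) / 2.0 + 1.0
        for m in range(i, k + 1):
            ranks[order[m]] = mean_rank
        i = k + 1
    return ranks


def friedman(results):
    """results[i][j]: result of method j on function i (lower is better).

    Returns (average rank per method, Friedman chi-square statistic).
    """
    n, k = len(results), len(results[0])
    rank_sums = [0.0] * k
    for row in results:
        for j, r in enumerate(average_ranks(row)):
            rank_sums[j] += r
    avg = [s / n for s in rank_sums]
    chi2 = 12.0 * n / (k * (k + 1)) * (
        sum(a * a for a in avg) - k * (k + 1) ** 2 / 4.0
    )
    return avg, chi2
```

With one row per benchmark function and one column per method, the first return value gives the average ranks used to order the methods, and the second is the statistic tested against the critical value.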

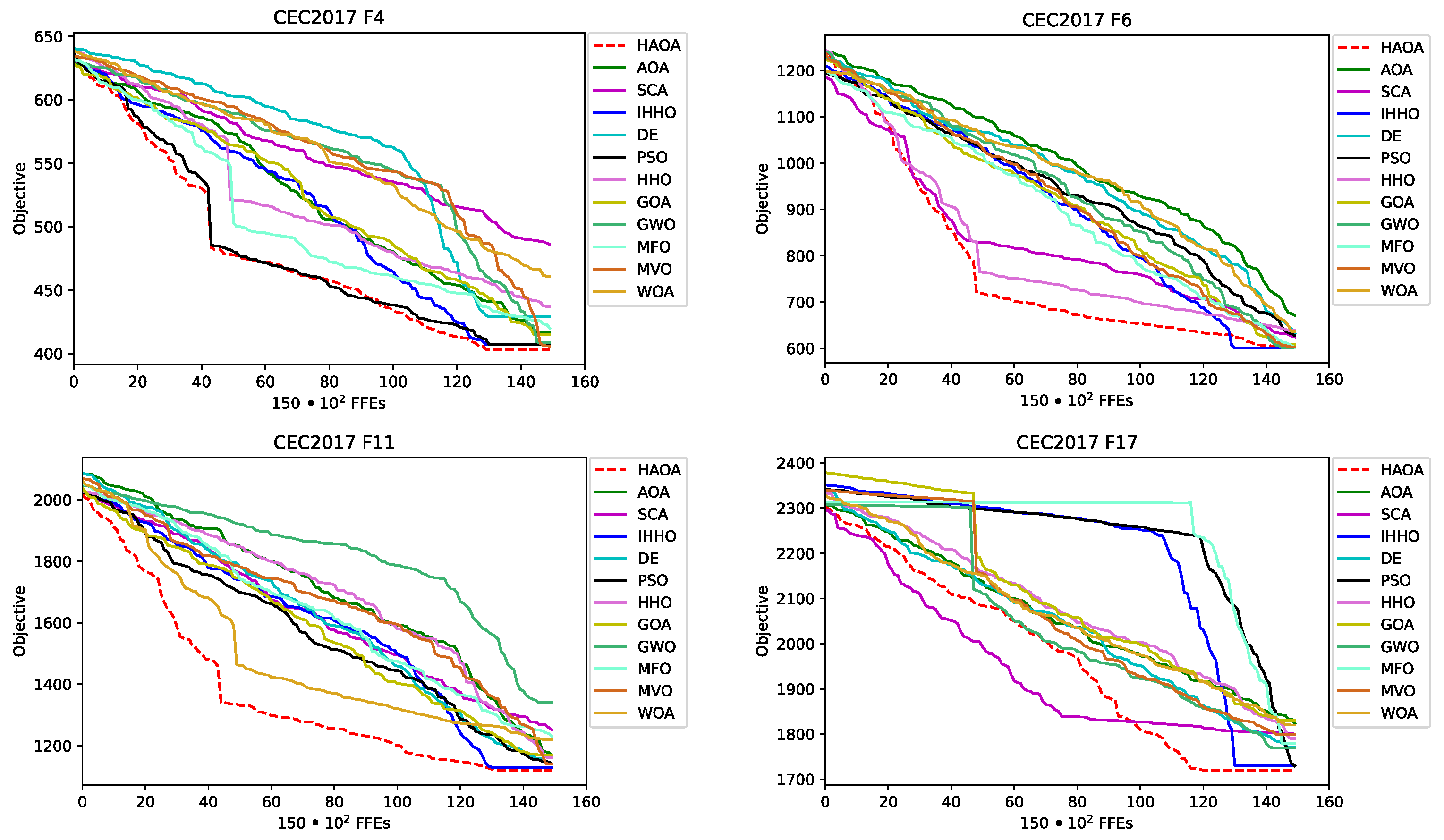

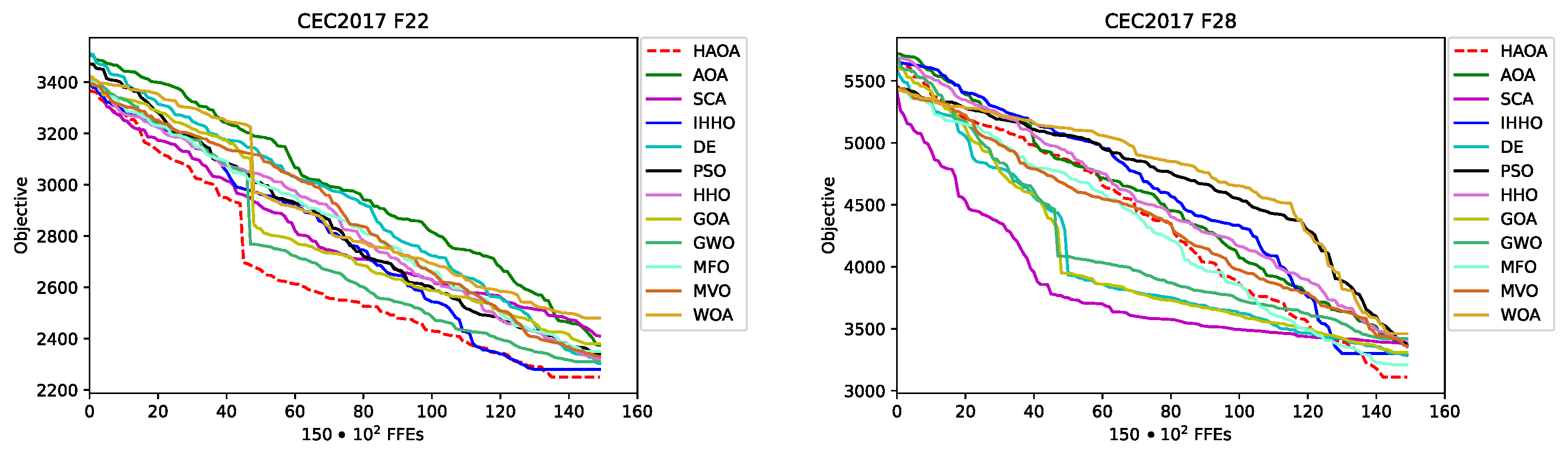

A visual comparison of the convergence speed of the proposed HAOA with AOA and SCA, as well as with the other three best-performing metaheuristics (IHHO, DE and PSO), on six sample instances is shown in Figure 5. From the convergence graphs of the sample functions, it can be observed that the HAOA on average converges faster than the other methods, which is particularly pronounced for three of the instances. It can also be seen that the quality of the results generated by HAOA is much higher than that of its base algorithms, AOA and SCA.

6. Conclusions

Fast diagnostics is crucial in modern medicine. The ongoing COVID-19 epidemic has shown how important it is to quickly determine whether or not a patient has been infected, and fast treatment is often the key factor in saving lives. This paper introduces a novel early diagnostics method to detect the disease from lung X-ray images. The proposed model utilizes a novel HAOA metaheuristics algorithm, which was created by hybridizing the AOA and SCA algorithms with the goal of overcoming the deficiencies of the basic variants. The solutions in the proposed hybrid algorithm start by performing an AOA search procedure, and if a solution does not improve over the iterations, it switches to the SCA search mechanism (controlled by the additional parameter). If the solution still does not improve, it is ultimately replaced by a quasi-reflexive-opposite solution, as defined by the QRL procedure.

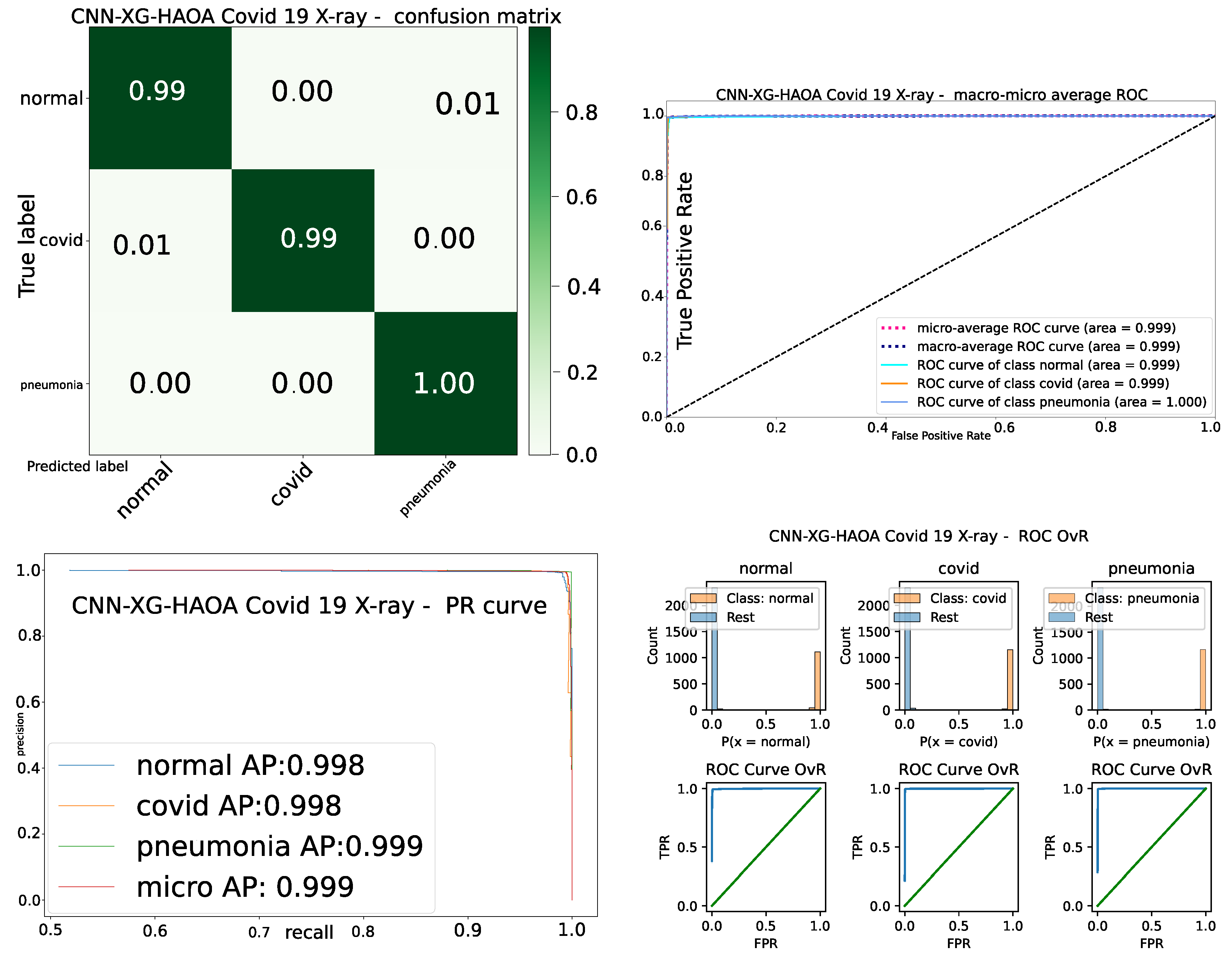

The HAOA algorithm was put to the test on a set of hard CEC2017 benchmark functions and compared with the basic AOA and SCA and with other cutting-edge metaheuristics algorithms. It can be concluded that the HAOA achieves a higher level of performance than the other eleven tested algorithms. After its superior performance on the benchmark functions was established, the algorithm was employed in the machine learning framework consisting of a simple CNN used for feature extraction and an XGBoost classifier, where HAOA was used to tune the XGBoost hyperparameters. The model was named CNN-XGBoost-HAOA, tested on a large COVID-19 X-ray images benchmark dataset, and compared with eight other metaheuristics algorithms used to evolve the XGBoost structure. The proposed CNN-XGBoost-HAOA obtained the highest accuracy, almost 99.4%, on this dataset, leaving behind all other observed models.

The contribution of the proposed research can be defined on three levels. First, a simple light-weight network was generated that is easy to train, operates fast and achieves decent performance on the COVID-19 dataset, where the XGBoost classifier was used instead of fully connected layers. Second, the AOA metaheuristics was improved and used in the model. Finally, the whole model has been adapted to the COVID-19 dataset. The limitations of the proposed work are closely bound to these three levels of contributions. First, it was possible to execute more detailed experiments with the hyperparameters of the simple neural network to begin with, and it was also possible to obtain another light structure that could have an even better level of performance; however, this was out of the scope of this work. Second, each metaheuristics algorithm can be modified in an infinite number of theoretically possible ways (minor modifications and/or hybridization), leading to the conclusion that, in theory, the level of improvement over the basic AOA could be even higher without increasing the complexity of the algorithm. It was also possible to include other XGBoost parameters in the tuning process, as there are many of them, but it was not possible to cover all of this in just one study. Finally, experiments were executed with just one dataset, which has been balanced. Experiments with imbalanced datasets were not executed, because addressing imbalanced datasets was not the goal of the presented study.

Based on these encouraging results, future work will be centered on gaining even more confidence in the suggested model by testing it further on additional real-life COVID-19 X-ray datasets before considering its practical implementation as part of a system that could be used in hospitals to help in early COVID-19 diagnostics.