Information and Communication Technologies Combined with Mixed Reality as Supporting Tools in Medical Education

Abstract

1. Introduction

2. Immersive Technologies and Learning

2.1. Immersive Technologies in Chemical Classes

2.2. Immersive Technologies in Medical Student Training

2.3. Immersive Technologies in Medical Chemistry Training

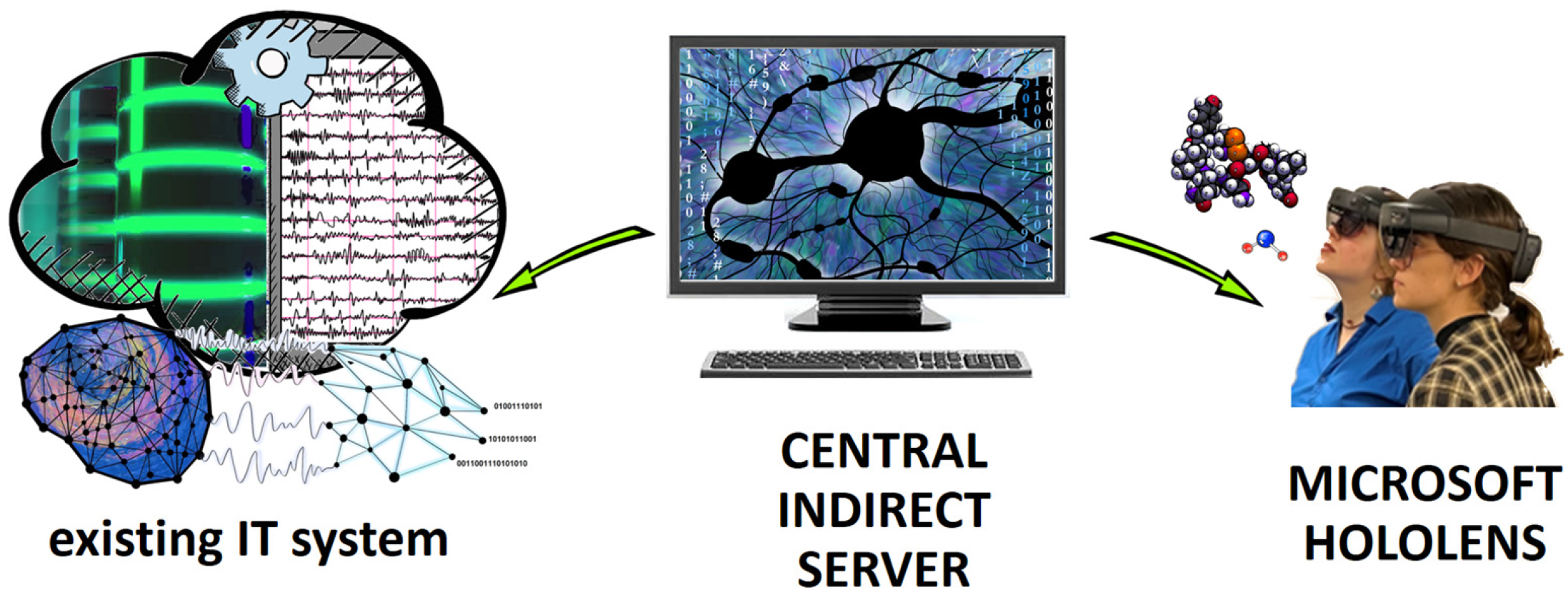

3. Materials and Methods

4. Results

5. Discussion and Conclusions

6. Future Plans

- Software for displaying any 3D chemical models;

- Software for displaying any computer-generated models of human anatomy elements;

- Software for science popularization;

- Courses/training built mainly on 3D models, animations, and user interaction, covering the chemical and biochemical changes affecting disease, disease pathophysiology, how these can be visualized in 3D, and disease diagnosis and treatment presented as an MR visualization.

Author Contributions

Funding

Conflicts of Interest

References

- Siriborvornratanakul, T. Enhancing User Experiences of Mobile-Based Augmented Reality via Spatial Augmented Reality: Designs and Architectures of Projector-Camera Devices. Adv. Multimed. 2018, 2018, 8194726.

- Coyne, L.; Merritt, T.A.; Parmentier, B.L.; Sharpton, R.A.; Takemoto, J.K. The Past, Present, and Future of Virtual Reality in Pharmacy Education. Am. J. Pharm. Educ. 2019, 83, 7456.

- Masood, T.; Egger, J. Augmented reality in support of Industry 4.0—Implementation challenges and success factors. Rob. Comput. Integr. Manuf. 2019, 58, 181–195.

- Siriborvornratanakul, T. Through the Realities of Augmented Reality. In HCI International 2019—Late Breaking Papers; Stephanidis, C., Ed.; Springer: Cham, Switzerland, 2019; p. 11786.

- Kichloo, A.; Albosta, M.; Dettloff, K.; Wani, F.; El-Amir, Z.; Singh, J.; Aljadah, M.; Chakinala, R.C.; Kanugula, A.K.; Solanki, S.; et al. Telemedicine, the current COVID-19 pandemic and the future: A narrative review and perspectives moving forward in the USA. Fam. Med. Community Health 2020, 8, e000530.

- Pregowska, A.; Masztalerz, K.; Garlińska, M.; Osial, M. A Worldwide Journey through Distance Education—From the Post Office to Virtual, Augmented and Mixed Realities, and Education during the COVID-19 Pandemic. Educ. Sci. 2021, 11, 118.

- Zhuang, W.; Xiao, Q. Facilitate active learning: The role of perceived benefits of using technology. J. Educ. Bus. 2018, 93, 88–96.

- Whitley, H.P. Active-learning diabetes simulation in an advanced pharmacy practice experience to develop patient empathy. Am. J. Pharm. Educ. 2012, 76, 203.

- Habig, S. Who can benefit from augmented reality in chemistry? Sex differences in solving stereochemistry problems using augmented reality. Br. J. Educ. Technol. 2020, 51, 629–644.

- Raja, M.; Lakshmi Priya, G.G. Using Virtual Reality and Augmented Reality with ICT Tools for Enhancing Quality in the Changing Academic Environment in COVID-19 Pandemic: An Empirical Study. In Technologies, Artificial Intelligence and the Future of Learning Post-COVID-19. Studies in Computational Intelligence; Hamdan, A., Hassanien, A.E., Mescon, T., Alareeni, B., Eds.; Springer: Cham, Switzerland, 2022; Volume 1019.

- Wu, T.C.; Ho, C.B. A scoping review of metaverse in emergency medicine. Australas. Emerg. Care 2022, 8, S2588-994X(22)00052-5.

- Jovanovic, M.; Guterman-Ram, G.; Marini, J.C. Osteogenesis Imperfecta: Mechanisms and Signaling Pathways Connecting Classical and Rare OI Types. Endocr. Rev. 2022, 43, 61–90.

- Munzer, B.W.; Khan, M.M.; Shipman, B.; Mahajan, P. Augmented reality in emergency medicine: A scoping review. J. Med. Internet Res. 2019, 21, e12368.

- Birlo, M.; Edwards, P.J.E.; Clarkson, M.; Stoyanov, D. Utility of optical see-through head mounted displays in augmented reality-assisted surgery: A systematic review. Med. Image Anal. 2022, 77, 102361.

- Gerup, J.; Soerensen, C.B.; Dieckmann, P. Augmented reality and mixed reality for healthcare education beyond surgery: An integrative review. Int. J. Med. Educ. 2020, 11, 1–18.

- Brun, H.; Bugge, R.A.B.; Suther, L.K.R.; Birkeland, S.; Kumar, R.; Pelanis, E.; Elle, O.J. Mixed reality holograms for heart surgery planning: First user experience in congenital heart disease. Eur. Heart J. Cardiovasc. Imaging 2019, 20, 883–888.

- Korayem, G.B.; Alshaya, O.A.; Kurdi, S.M.; Alnajjar, L.I.; Badr, A.F.; Alfahed, A.; Cluntun, A. Simulation-Based Education Implementation in Pharmacy Curriculum: A Review of the Current Status. Adv. Med. Educ. Pract. 2022, 13, 649–660.

- Nicolazzo, J.A.; Chuang, S.; Mak, V. Incorporation of MyDispense, a Virtual Pharmacy Simulation, into Extemporaneous Formulation Laboratories. Healthcare 2022, 10, 1489.

- Tarng, W.; Tseng, Y.-C.; Ou, K.-L. Application of Augmented Reality for Learning Material Structures and Chemical Equilibrium in High School Chemistry. Systems 2022, 10, 141.

- Moro, C.; Phelps, C.; Redmond, P.; Stromberga, Z. HoloLens and mobile augmented reality in medical and health science education: A randomised controlled trial. Br. J. Educ. Technol. 2021, 52, 680–694.

- Siriborvornratanakul, T. A Study of Virtual Reality Headsets and Physiological Extension Possibilities. In Proceedings of the Computational Science and Its Applications—ICCSA 2016, Beijing, China, 4–7 July 2016; Springer: Cham, Switzerland, 2016; Volume 9787.

- Trenfield, S.J.; Awad, A.; McCoubrey, L.E.; Elbadawi, M.; Goyanes, A.; Gaisford, S.; Basit, A.W. Advancing pharmacy and healthcare with virtual digital technologies. Adv. Drug Deliv. Rev. 2022, 182, 114098.

- Ingeson, M.; Blusi, M.; Nieves, J.C. Microsoft Hololens—A Mhealth Solution for Medication Adherence. In Proceedings of the International Workshop on Artificial Intelligence in Health, Stockholm, Sweden, 13–14 July 2018; Springer: Cham, Switzerland, 2018; pp. 99–115.

- Yang, S.; Pang, X.; He, X. A Novel Mobile Application for Medication Adherence Supervision Based on AR and OpenCV Designed for Elderly Patients. In Human Aspects of IT for the Aged Population. Supporting Everyday Life Activities, Proceedings of the 7th International Conference, ITAP 2021, Online, 24–29 July 2021; Springer: Cham, Switzerland, 2021; pp. 335–347.

- Karavasili, C.; Eleftheriadis, G.K.; Gioumouxouzis, C.; Andriotis, E.G.; Fatouros, D.G. Mucosal drug delivery and 3D printing technologies: A focus on special patient populations. Adv. Drug Deliv. Rev. 2021, 176, 113858.

- Eleftheriadis, G.K.; Kantarelis, E.; Monou, P.K.; Andriotis, E.G.; Bouropoulos, N.; Tzimtzimis, E.K.; Tzetzis, D.; Rantanen, J.; Fatouros, D.G. Automated digital design for 3D-printed individualized therapies. Int. J. Pharm. 2021, 599, 120437.

- Morimoto, T.; Kobayashi, T.; Hirata, H.; Otani, K.; Sugimoto, M.; Tsukamoto, M.; Yoshihara, T.; Ueno, M.; Mawatari, M. XR (Extended Reality: Virtual Reality, Augmented Reality, Mixed Reality) Technology in Spine Medicine: Status Quo and Quo Vadis. J. Clin. Med. 2022, 11, 470.

- Levy, J.B.; Kong, E.; Johnson, N.; Khetarpal, A.; Tomlinson, J.; Martin, G.F.; Tanna, A. The mixed reality medical ward round with the MS HoloLens 2: Innovation in reducing COVID-19 transmission and PPE usage. Future Healthc. J. 2021, 8, e127–e130.

- Hempel, D.; Sinnathurai, S.; Haunhorst, S.; Seibel, A.; Michels, G.; Heringer, F.; Recker, F.; Breitkreutz, R. Influence of case-based e-learning on students’ performance in point-of-care ultrasound courses: A randomized trial. Eur. J. Emerg. Med. 2016, 23, 298–304.

- Ferrer-Torregrosa, J.; Jiménez-Rodríguez, M.Á.; Torralba-Estelles, J.; Garzón-Farinós, F.; Pérez-Bermejo, M.; Fernández-Ehrling, N. Distance learning ects and flipped classroom in the anatomy learning: Comparative study of the use of augmented reality, video and notes. BMC Med. Educ. 2016, 16, 230.

- Duarte, M.L.; Santos, L.R.; Guimarães Júnior, J.B.; Peccin, M.S. Learning anatomy by virtual reality and augmented reality. A scope review. Morphologie 2020, 104, 254–266.

- Ghaednia, H.; Fourman, M.S.; Lans, A.; Detels, K.; Dijkstra, H.; Lloyd, S.; Sweeney, A.; Oosterhoff, J.H.; Schwab, J.H. Augmented and virtual reality in spine surgery, current applications and future potentials. Spine J. 2021, 21, 1617–1625.

- Pettersen, E.F.; Goddard, T.D.; Huang, C.C.; Couch, G.S.; Greenblatt, D.M.; Meng, E.C.; Ferrin, T.E. UCSF Chimera—A visualization system for exploratory research and analysis. J. Comput. Chem. 2004, 25, 1605–1612.

- Cortés Rodríguez, F.; Dal Peraro, M.; Abriata, L.A. Online tools to easily build virtual molecular models for display in augmented and virtual reality on the web. J. Mol. Graph. Model. 2022, 114, 108164.

- Cortés Rodríguez, F.; Frattini, G.; Krapp, L.F.; Martinez-Hung, H.; Moreno, D.M.; Roldán, M.; Salomón, J.; Stemkoski, L.; Traeger, S.; Dal Peraro, M. MoleculARweb: A web site for chemistry and structural biology education through interactive augmented reality out of the box in commodity devices. J. Chem. Educ. 2021, 98, 2243–2255.

- Cassidy, K.C.; Šefčík, J.; Raghav, Y.; Chang, A.; Durrant, J.D. ProteinVR: Web-based molecular visualization in virtual reality. PLoS Comput. Biol. 2020, 16, e1007747.

- Xu, K.; Liu, N.; Xu, J.; Guo, C.; Zhao, L.; Wang, H.W.; Zhang, Q.C. VRmol: An integrative web-based virtual reality system to explore macromolecular structure. Bioinformatics 2021, 37, 1029–1031.

- Tsang, W.Y.; Fan, P.; Raj, S.D.H.; Tan, Z.J.; Lee, I.Y.Y.; Boo, I.; Yap, K.Y.L. Development of a Three-Dimensional (3D) Virtual Reality Apprenticeship Program (VRx) for Training of Medication Safety Practices. Int. J. Digit. Health 2022, 2, 4.

- Sakshuwong, S.; Weir, H.; Raucci, U.; Martínez, T.J. Bringing chemical structures to life with augmented reality, machine learning, and quantum chemistry. J. Chem. Phys. 2022, 156, 204801.

- Macariu, C.; Iftene, A.; Gîfu, D. Learn Chemistry with Augmented Reality. Procedia Comput. Sci. 2020, 176, 2133–2142.

- Low, D.Y.S.; Poh, P.E.; Tang, S.Y. Assessing the impact of augmented reality application on students’ learning motivation in chemical engineering. Educ. Chem. Eng. 2022, 39, 31–43.

- De Micheli, A.J.; Valentin, T.; Grillo, F.; Kapur, M.; Schuerle, S.J. Mixed Reality for an Enhanced Laboratory Course on Microfluidics. J. Chem. Educ. 2022, 99, 1272–1279.

- Pan, Z.; Miao, J.; Cai, N.; Luo, T.; Zhang, M.; Pan, Z. Simulating the Dilution of Sulfuric Acid on Mixed Reality Platform. In Proceedings of the 2022 8th International Conference on Virtual Reality (ICVR), Nanjing, China, 26–28 May 2022; pp. 135–141.

- Kolecki, R.; Pregowska, A.; Dąbrowa, J.; Skuciński, J.; Pulanecki, T.; Walecki, P.; van Dam, P.M.; Dudek, D.; Richter, P.; Proniewska, K. Assessment of the utility of mixed reality in medical education. Transl. Res. Anat. 2022, 28, 100214.

- Boomgaard, A.; Fritz, K.A.; Isafiade, O.E.; Kotze, R.C.M.; Ekpo, O.; Smith, M.; Gessler, T.; Filton, K.J.; Cupido, C.C.; Aden, B.; et al. A Novel Immersive Anatomy Education System (Anat Hub): Redefining Blended Learning for the Musculoskeletal System. Appl. Sci. 2022, 12, 5694.

- Digital Trends. Available online: https://www.digitaltrends.com/virtual-reality/hololens-holoanatomy-award-jackson-hole-science-media-awards/ (accessed on 15 November 2022).

- Monterubbianesi, R.; Tosco, V.; Vitiello, F.; Orilisi, G.; Fraccastoro, F.; Putignano, A.; Orsini, G. Augmented, Virtual and Mixed Reality in Dentistry: A Narrative Review on the Existing Platforms and Future Challenges. Appl. Sci. 2022, 12, 877.

- Blanchard, J.; Koshal, S.; Morley, S.; McGurk, M. The use of mixed reality in dentistry. Br. Dent. J. 2022, 233, 261–265.

- Reymus, M.; Liebermann, A.; Diegritz, C. Virtual Reality: An Effective Tool for Teaching Root Canal Anatomy to Undergraduate Dental Students—A Preliminary Study. Int. Endod. J. 2020, 53, 1581–1587.

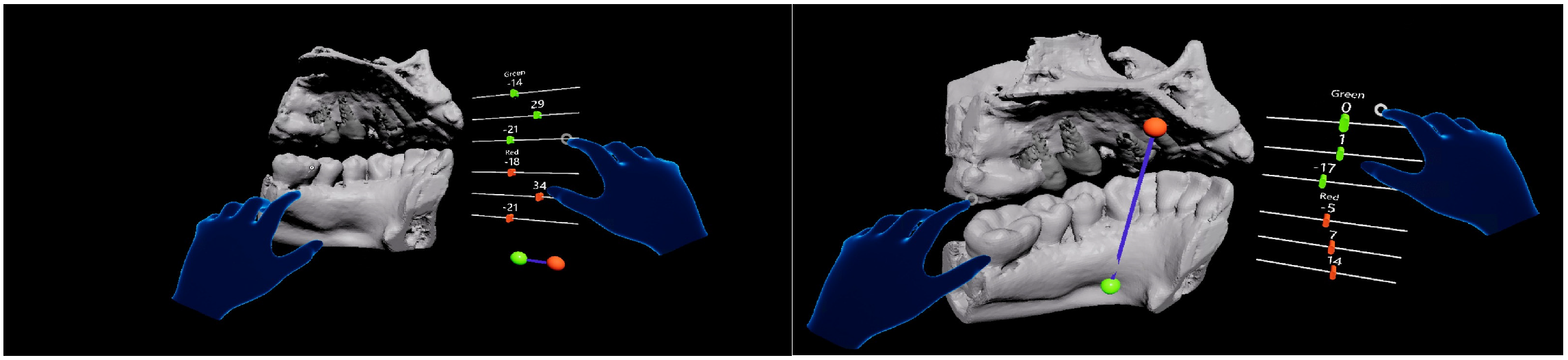

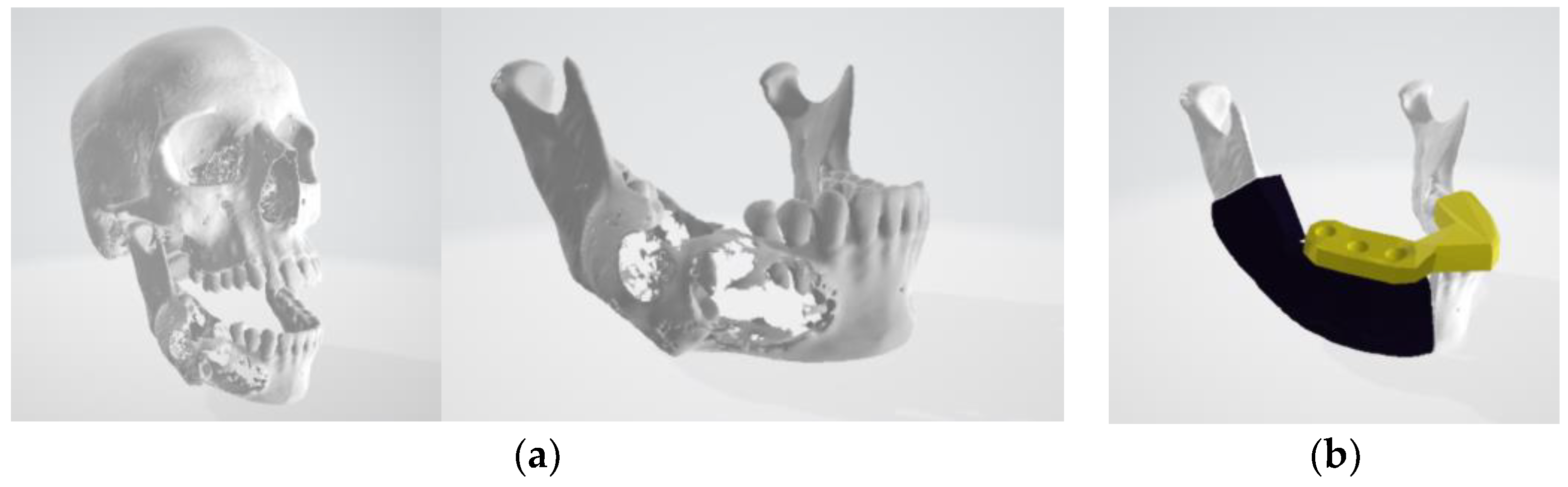

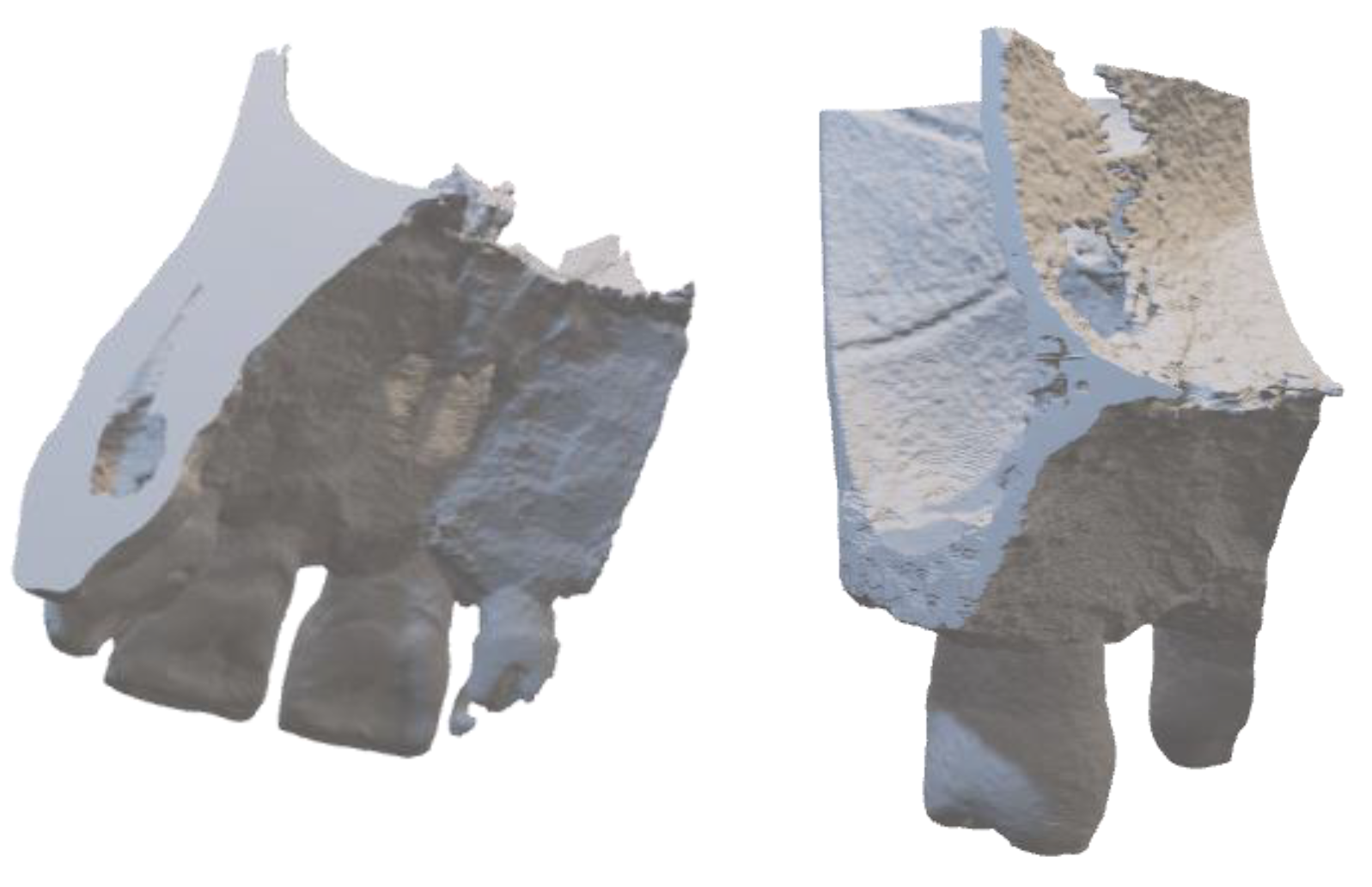

- Dolega-Dolegowski, D.; Proniewska, K.; Dolega-Dolegowska, M.; Pregowska, A.; Hajto-Bryk, J.; Trojak, M.; Chmiel, J.; Walecki, P.; Fudalej, P.S. Application of holography and augmented reality based technology to visualize the internal structure of the dental root—A proof of concept. Head Face Med. 2022, 18, 12.

- Kaylor, J.; Hooper, V.; Wilson, A.; Burkert, R.; Lyda, M.; Fletcher, K.; Bowers, E. Reliability Testing of Augmented Reality Glasses Technology: Establishing the Evidence Base for Telewound Care. J. Wound Ostomy Cont. Nurs. 2019, 46, 485–490.

- Papalois, Z.A.; Aydin, A.; Khan, A.; Mazaris, E.; Rathnasamy Muthusamy, A.S.; Dor, E.J.M.F.; Dasgupta, P.; Ahmed, K. HoloMentor: A Novel Mixed Reality Surgical Anatomy Curriculum for Robot-Assisted Radical Prostatectomy. Eur. Surg. Res. 2022, 63, 40–45.

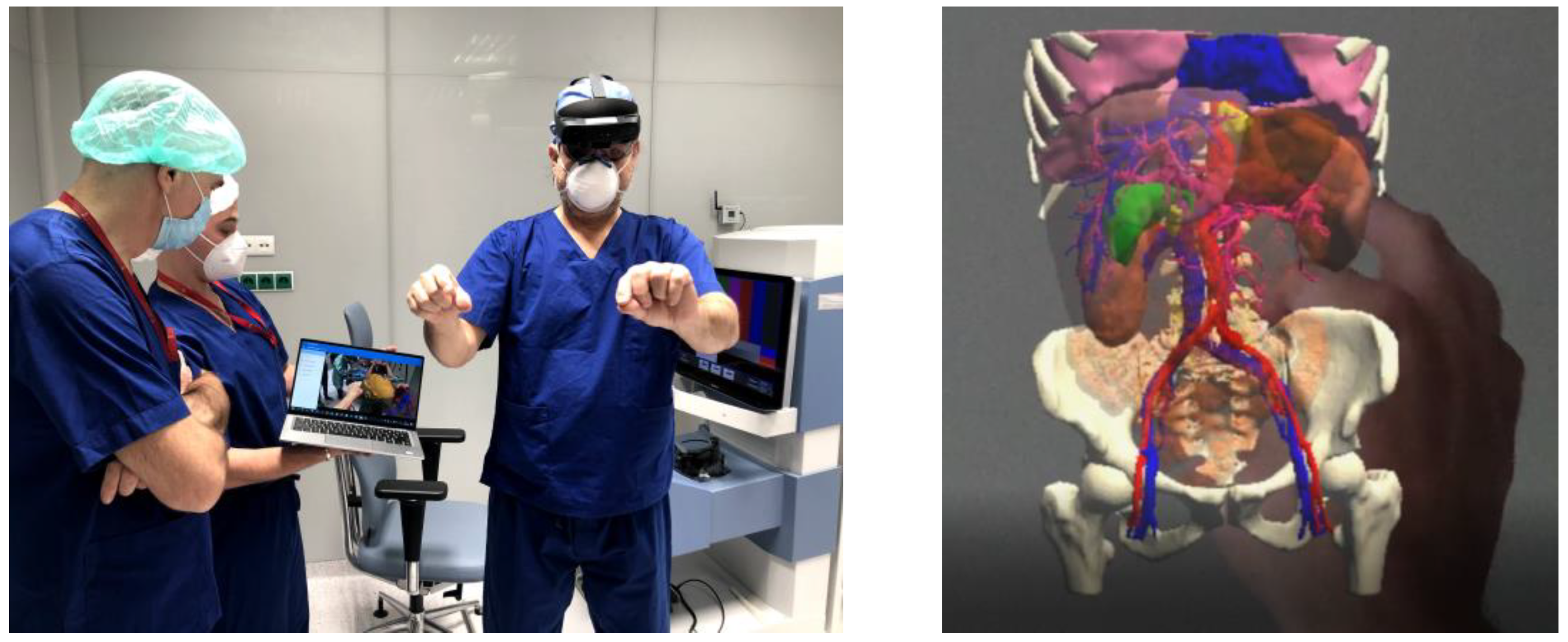

- Proniewska, K.; Khokhar, A.A.; Dudek, D. Advanced imaging in interventional cardiology: Mixed reality to optimize preprocedural planning and intraprocedural monitoring. Kardiol. Pol. 2021, 79, 331–335.

- Na, F.; Wang, L.; Wu, C.; Ding, Y. Contrast-enhanced ultrasound combined with augmented reality medical technology in the treatment of rabbit liver cancer with high-energy focused knife. Comput. Intell. 2022, 38, 121–138.

- Smith, C.; Friel, C.J. Development and use of augmented reality models to teach medicinal chemistry. Curr. Pharm. Teach. Learn. 2021, 13, 1010–1017.

- Nounou, M.I.; Eassa, H.A.; Orzechowski, K.; Eassa, H.A.; Edouard, J.; Stepak, N.; Khdeer, M.; Kalam, M.; Huynh, D.; Kwarteng, E.; et al. Mobile-Based Augmented Reality Application in Pharmacy Schools Implemented in Pharmaceutical Compounding Laboratories: Students’ Benefits and Reception. Pharmacy 2022, 10, 72.

- Roosan, D.; Chok, J.; Baskys, A.; Roosan, M.R. PGxKnow: A pharmacogenomics educational HoloLens application of augmented reality and artificial intelligence. Future Med. 2022, 23, 235–245.

- Hope, D.; Haywood, A.; Bernaitis, N. Current research: Incorporating vaccine administration in pharmacy curriculum: Preparing students for emerging roles. Aust. J. Pharm. 2014, 95, 1129–1160.

- Bushell, M.; Morrissey, H.; Nuffer, W.; Ellis, S.L.; Ball, P.A. Development and design of injection skills and vaccination training program targeted for Australian undergraduate pharmacy students. Curr. Pharm. Teach. Learn. 2015, 7, 771–779.

- Bushell, M.; Frost, J.; Deeks, L.; Kosari, S.; Hussain, Z.; Naunton, M. Evaluation of Vaccination Training in Pharmacy Curriculum: Preparing Students for Workforce Needs. Pharmacy 2020, 8, 151.

- Essel, D.; Thompson, J.; Chapman, S. The effect of an augmented reality educational tool on the motivation towards learning in pharmacy students: An evaluative survey. Int. J. Pharm. Pract. 2022, 30, i30–i31.

- Elford, A.; Gwee, C.; Veal, M.; Jani, R.; Sambell, R.; Kashef, S.; Love, P. Identification and Evaluation of Tools Utilised for Measuring Food Provision in Childcare Centres and Primary Schools: A Systematic Review. Int. J. Environ. Res. Public Health 2022, 19, 4096.

- Silva, R.d.O.S.; de Araújo, D.C.S.A.; dos Santos Menezes, P.W.; Zimmer Neves, E.R.; de Lyra, S.P., Jr. Digital pharmacists: The new wave in pharmacy practice and education. Int. J. Clin. Pharm. 2022, 44, 775–780.

- Slicer. Available online: https://www.slicer.org (accessed on 15 November 2022).

- Pinter, C.; Lasso, A.; Fichtinger, G. Polymorph segmentation representation for medical image computing. Comput. Methods Programs Biomed. 2019, 171, 19–26.

- Zhu, L.; Kolesov, I.; Gao, Y.; Kikinis, R.; Tannenbaum, A. An Effective Interactive Medical Image Segmentation Method Using Fast GrowCut. In Proceedings of the International Conference on Medical Image Computing and Computer Assisted Intervention (MICCAI), Interactive Medical Image Computing Workshop, Boston, MA, USA, 14–18 September 2014.

- Zukić, D.; Vicory, J.; McCormick, M.; Wisse, L.; Gerig, G.; Yushkevich, P.; Aylward, S. ND morphological contour interpolation. Insight J. 2016, 17, 1–27.

- Birt, J.; Stromberga, Z.; Cowling, M.; Moro, C. Mobile Mixed Reality for Experiential Learning and Simulation in Medical and Health Sciences Education. Information 2018, 9, 31.

- Gattullo, M.; Laviola, E.; Boccaccio, A.; Evangelista, A.; Fiorentino, M.; Manghisi, V.M.; Uva, A.E. Design of a Mixed Reality Application for STEM Distance Education Laboratories. Computers 2022, 11, 50.

- Zhang, Z.; Wu, Y.; Pan, Z.; Li, W.; Su, Z. A novel animation authoring framework for the virtual teacher performing experiment in mixed reality. Comput. Appl. Eng. Educ. 2022, 30, 550–563.

- Lee, T.H.; Munasinghe, V.; Li, Y.M.; Xu, J.; Lee, H.J.; Kim, S.J. GAN—Based Medical Image Registration for Augmented Reality Applications. In Proceedings of the 2022 IEEE 4th International Conference on Artificial Intelligence Circuits and Systems (AICAS), Incheon, Republic of Korea, 13–15 June 2022; pp. 279–282.

- Duan, X.; Kang, S.J.; Choi, J.I.; Kim, S.K. Mixed Reality System for Virtual Chemistry Lab. KSII Trans. Internet Inf. Syst. 2020, 14, 1673–1688.

- Yang, D.; Zhou, J.; Chen, R.; Song, Y.; Song, Z.; Zhang, X.; Wang, Q.; Wang, K.; Zhou, C.; Sun, J.; et al. Expert consensus on the metaverse in medicine. Clin. eHealth 2022, 5, 1–9.

- Pimentel, D.; Fauville, G.; Frazier, F.; McGivney, G.; Rosas, S.; Woolsey, E. An Introduction to Learning in the Metaverse. Meridian Treehouse. 2022. Available online: https://scholar.harvard.edu/files/mcgivney/files/introductionlearningmetaverse-april2022-meridiantreehouse.pdf (accessed on 15 November 2022).

- Gartner. Available online: https://www.gartner.com/en/newsroom/press-releases/2022-02-07-gartner-predicts-25-percent-of-people-will-spend-at-least-one-hour-per-day-in-the-metaverse-by-2026 (accessed on 15 November 2022).

- Sun, M.; Xie, L.; Liu, Y.; Li, K.; Jiang, B.; Lu, Y.; Yang, Y.; Yu, H.; Song, Y.; Bai, C.; et al. The metaverse in current digital medicine. Clin. eHealth 2022, 5, 52–57.

- Llobera, J.; Spanlang, B.; Ruffini, G.; Slater, M. Proxemics with multiple dynamic characters in an immersive virtual environment. ACM Trans. Appl. Percept. 2010, 8, 1–12.

- Plechatá, A.; Makransky, G.; Böhm, R. Can extended reality in the metaverse revolutionise health communication? NPJ Digit. Med. 2022, 5, 132.

- Xi, N.; Chen, J.; Gama, F.; Riar, M.; Hamari, J. The challenges of entering the metaverse: An experiment on the effect of extended reality on workload. Inf. Syst. Front. 2022, 1–22.

- Christopoulos, A.; Pellas, N.; Kurczaba, J.; Macredie, R. The effects of augmented reality-supported instruction in tertiary-level medical education. Br. J. Educ. Technol. 2022, 53, 307–325.

- Pickering, J.D.; Panagiotis, A.; Ntakakis, G.; Athanassiou, A.; Babatsikos, E.; Bamidis, P.D. Assessing the difference in learning gain between a mixed reality application and drawing screencasts in neuroanatomy. Anat. Sci. Educ. 2022, 15, 628–635.

- Hauze, S.W.; Hoyt, H.H.; Frazee, J.P.; Greiner, P.A.; Marshall, J.M. Enhancing Nursing Education through Affordable and Realistic Holographic Mixed Reality: The Virtual Standardized Patient for Clinical Simulation. In Biomedical Visualisation; Rea, P., Ed.; Advances in Experimental Medicine and Biology; Springer: Cham, Switzerland, 2019; Volume 1120.

- Albrecht, U.V.; Folta-Schoofs, K.; Behrends, M.; von Jan, U. Effects of mobile augmented reality learning compared to textbook learning on medical students: Randomized controlled pilot study. J. Med. Internet Res. 2013, 15, e182.

- Ho, S.; Liu, P.; Palombo, D.J.; Handy, T.C.; Krebs, C. The role of spatial ability in mixed reality learning with the HoloLens. Anat. Sci. Educ. 2022, 15, 1074–1085.

- John, B.; Kurian, J.C.; Fitzgerald, R.; Lian Goh, D. Students’ Learning Experience in a Mixed Reality Environment: Drivers and Barriers. Commun. Assoc. Inf. Syst. 2022, 50, 510–535.

| Reference | Type of Immersive Technology | Chemical Content | Medical Content | Medical Chemistry Content | Application Field |
|---|---|---|---|---|---|
| [79] | Metaverse, AR | No | No | No | Workload evaluation |
| [12] | Metaverse | No | No | No | Architecture lessons |
| [76] | Metaverse | No | Yes | No | Digital medicine |
| [73] | Metaverse | No | Yes | No | Medical education (a perspective) |
| [69] | AR | No | No | No | STEM laboratories |
| [15] | AR | No | Yes | No | Surgery education |
| [32] | AR | No | Yes | No | Surgery education |
| [33] | AR | Yes | No | No | Chemistry education |
| [35] | AR | Yes | No | No | Chemistry and biology content |
| [38] | AR | Yes | No | No | Materials structures and chemical equilibria |
| [55] | AR | No | No | Yes | Medical chemistry |
| [40] | AR | Yes | No | No | Chemistry lessons |
| [56] | AR | No | Yes | No | Pharmacy education |
| [36] | VR | Yes | No | No | Chemistry education, protein |
| [18] | VR | No | Yes | No | Pharmacy education |
| [49] | VR | No | Yes | No | Dental education |
| [48] | VR | No | Yes | No | Dental education |
| [81] | MR | No | Yes | No | Anatomy drawing screencasts |
| [82] | MR | No | Yes | No | Nursing education |
| [50] | MR | No | Yes | No | Dental education |
| [16] | MR | No | Yes | No | Surgery education |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Pregowska, A.; Osial, M.; Dolega-Dolegowski, D.; Kolecki, R.; Proniewska, K. Information and Communication Technologies Combined with Mixed Reality as Supporting Tools in Medical Education. Electronics 2022, 11, 3778. https://doi.org/10.3390/electronics11223778