Evaluation of the Predictive Ability and User Acceptance of Panoramix 2.0, an AI-Based E-Health Tool for the Detection of Cognitive Impairment

Abstract

1. Introduction

2. Materials and Methods

2.1. Selection and Description of Participants

2.2. Design of the Study

2.2.1. Procedure

2.2.2. Development and General Information Questionnaires

2.2.3. Traditional Neuropsychological Test

2.2.4. Digital Neuropsychological Test: Panoramix

2.3. Analytical Algorithms

- F1-score, the harmonic mean of precision and recall, as a measure of a test’s accuracy.

- Accuracy, the ratio of correct predictions to the total number of input samples.

- Precision, also called positive predictive value, to compute the fraction of relevant instances among the retrieved instances.

- Recall, also known as sensitivity, to compute the fraction of relevant instances that were retrieved.

- Specificity, or true negative rate, which refers to the probability of a negative test result given that the subject is truly negative.
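The five metrics listed above can all be derived from the four counts of a binary confusion matrix. The following is a minimal sketch under that assumption; the `ConfusionCounts` container, the function names, and the example counts are hypothetical illustrations and are not taken from the study data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Binary confusion-matrix counts (hypothetical container, not from the paper)."""
    tp: int  # positives correctly predicted as positive
    fp: int  # negatives wrongly predicted as positive
    tn: int  # negatives correctly predicted as negative
    fn: int  # positives wrongly predicted as negative


def _ratio(numerator: float, denominator: float) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def binary_metrics(c: ConfusionCounts) -> dict:
    """Compute accuracy, precision, recall, specificity and F1 from the counts."""
    precision = _ratio(c.tp, c.tp + c.fp)
    recall = _ratio(c.tp, c.tp + c.fn)
    return {
        "accuracy": _ratio(c.tp + c.tn, c.tp + c.fp + c.tn + c.fn),
        "precision": precision,
        "recall": recall,
        "specificity": _ratio(c.tn, c.tn + c.fp),
        # F1 is the harmonic mean of precision and recall.
        "f1": _ratio(2 * precision * recall, precision + recall),
    }


# Illustrative counts only (assumed for this example, not study results).
example = binary_metrics(ConfusionCounts(tp=16, fp=0, tn=10, fn=4))
for name, value in example.items():
    print(f"{name}: {value:.4f}")
```

With no false positives, precision and specificity are both 1.0 while recall drops with every missed positive case, which is why F1 falls below accuracy-independent measures such as specificity in this example.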

3. Results

3.1. General and Cognitive Participants’ Characteristics

- Educational level (i.e., years of education): 3.00 for people without cognitive impairment; 2.14 for the MCI group; and 2.83 mean years of education for people with AD.

- Exercise level (i.e., 5-point Likert scale: 1 (nothing) to 5 (a lot)): 3.50 for controls; 3.43 for the MCI group; and 4.50 for the AD group.

- Socialization level (i.e., 5-point Likert scale: 1 (no social interaction) to 5 (frequent social interaction)): 4.20 for HC subjects; 4.00 for participants with MCI; and 4.67 for people affected by AD.

- GDS (cut-off scores: 1 for HC; 2–3 for MCI; and >3 for AD): the average score was 1.00 for controls; 2.50 for subjects with MCI; and 3.67 for AD participants.
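The GDS cut-offs quoted in the last item can be written as a small lookup. This is only a sketch of that rule: the function name `gds_to_group` is hypothetical, and rejecting scores below 1 is an assumption about the scale's lower bound.

```python
def gds_to_group(gds: int) -> str:
    """Map a GDS score to a group using the cut-offs 1: HC, 2-3: MCI, >3: AD."""
    if gds < 1:
        # Assumption: the scale starts at 1, so lower values are treated as invalid.
        raise ValueError(f"GDS score must be at least 1, got {gds}")
    if gds == 1:
        return "HC"
    if gds <= 3:
        return "MCI"
    return "AD"


for score in (1, 2, 3, 4):
    print(score, gds_to_group(score))
```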

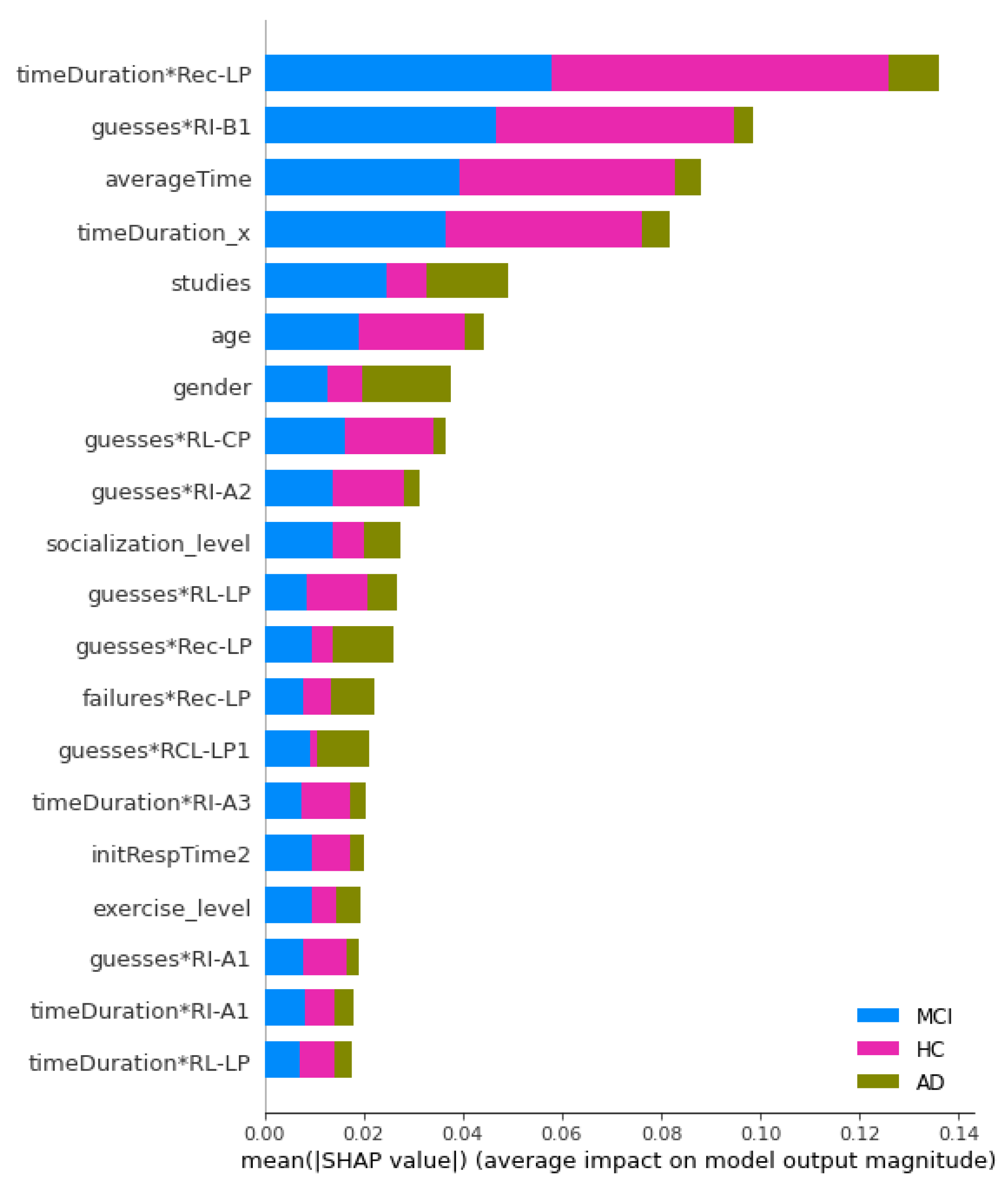

3.2. Prediction of Cognitive Impairment Using Machine Learning Classifiers

- F1-score: 1.0000 for SVM, ANN and KNN. The worst result obtained was 0.8510 in the case of ADB.

- Accuracy: a value greater than 0.9150 was obtained for all algorithms. The maximum value of 1.0000 was obtained for SVM, ANN and KNN.

- Recall or sensitivity: 1.0000 for SVM, ANN and KNN. Again, the worst result was obtained in the case of ADB (0.8000).

- Multi-layer perceptron classifier (activation = ‘relu’, hidden_layer_sizes = (50, 50), solver = ‘lbfgs’).

- Support Vector Machine (C = 10, random_state = 42).

- Random Forest Classifier (bootstrap = False, n_jobs = −1, random_state = 42).
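The hyperparameters listed above could be expressed as estimator objects along the following lines. This is a sketch that assumes scikit-learn's `MLPClassifier`, `SVC` and `RandomForestClassifier` are the implementations behind these names; the dictionary `classifiers` and its keys are hypothetical, and any parameter not listed above is left at the library default.

```python
from sklearn.ensemble import RandomForestClassifier
from sklearn.neural_network import MLPClassifier
from sklearn.svm import SVC

# Estimators configured with the hyperparameters quoted in the text.
# Assumption: these scikit-learn classes correspond to the algorithms named above.
classifiers = {
    "ANN": MLPClassifier(
        activation="relu",
        hidden_layer_sizes=(50, 50),
        solver="lbfgs",
    ),
    "SVM": SVC(C=10, random_state=42),
    "RF": RandomForestClassifier(bootstrap=False, n_jobs=-1, random_state=42),
}

for name, model in classifiers.items():
    print(name, type(model).__name__)
```

Each estimator exposes the usual `fit` and `predict` methods, so the same evaluation loop can be applied to all three.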

3.3. Participants’ Differences in User Experience of Cognitive Games

4. Discussion

5. Concluding Remarks

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Variables | | n (%) or Mean (SD) |
|---|---|---|
| Gender | Female | 22 (73%) |
| | Male | 8 (27%) |
| Age (65+ years) | n = 30 | 78.64 (±7.23) |
| Educational level | | 2.42 (±1.2) |
| Exercise level | | 3.57 (±0.99) |
| Socialization level | | 4.17 (±1.05) |
| Chronic treatment | | 0.70 (±0.36) |

| General subjects’ characteristics | HC (n = 10) | MCI (n = 14) | AD (n = 6) |
|---|---|---|---|
| | Mean (SD) | Mean (SD) | Mean (SD) |
| Age | 75.62 (±6.69) | 81.24 (±5.72) | 80.44 (±3.39) |
| Educational level | 3.00 (±1.13) | 2.14 (±0.66) | 2.83 (±0.98) |
| Exercise level | 3.50 (±0.71) | 3.43 (±0.65) | 4.50 (±0.55) |
| Socialization level | 4.20 (±0.64) | 4.00 (±0.00) | 4.67 (±0.52) |
| GDS | 1.00 (±0.00) | 2.50 (±0.52) | 3.67 (±0.52) |

| ML Algorithm | Accuracy | Sensitivity (Recall) ↓ | Specificity | Precision | F1-Score |
|---|---|---|---|---|---|
| SVM | 1.00 (±0.000) | 1.00 (±0.000) | 1.00 (±0.00) | 1.00 (±0.00) | 1.00 (±0.00) |
| ANN | 1.00 (±0.000) | 1.00 (±0.000) | 1.00 (±0.00) | 1.00 (±0.00) | 1.00 (±0.00) |
| KNN | 1.00 (±0.000) | 1.00 (±0.000) | 1.00 (±0.00) | 1.00 (±0.00) | 1.00 (±0.00) |
| LR | 0.98 (±0.027) | 0.96 (±0.027) | 1.00 (±0.00) | 1.00 (±0.00) | 0.98 (±0.027) |
| RF | 0.95 (±0.026) | 0.84 (±0.023) | 1.00 (±0.00) | 1.00 (±0.00) | 0.91 (±0.025) |
| ADB | 0.91 (±0.025) | 0.80 (±0.022) | 0.96 (±0.027) | 0.90 (±0.025) | 0.85 (±0.024) |

| ML Algorithm | Recall Weighted ↓ (Mean) | Recall Weighted (SD) | F1-Score Weighted (Mean) | F1-Score Weighted (SD) |
|---|---|---|---|---|
| ANN | 1.0000 | ±0.0000 | 1.0000 | ±0.0000 |
| SVM | 0.9889 | ±0.0222 | 0.9873 | ±0.0254 |
| RF | 0.9784 | ±0.0265 | 0.9764 | ±0.0291 |
| LR | 0.9784 | ±0.0265 | 0.9764 | ±0.0291 |
| KNN | 0.9673 | ±0.0268 | 0.9873 | ±0.0254 |
| ADB | 0.8234 | ±0.0660 | 0.8808 | ±0.0397 |
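The weighted recall and weighted F1-score in the table above are presumably per-class scores averaged with weights proportional to each class's share of the samples; that reading is an assumption, since the averaging scheme is not spelled out here. The sketch below illustrates it for three classes. The function name `weighted_scores` and the predictions are hypothetical; only the group sizes (10 HC, 14 MCI, 6 AD) come from the tables.

```python
from collections import Counter


def weighted_scores(y_true, y_pred):
    """Return (weighted recall, weighted F1), weighting each class by its support."""
    support = Counter(y_true)
    total = len(y_true)
    recall_w = 0.0
    f1_w = 0.0
    for label, count in support.items():
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        weight = count / total  # class share of all samples
        recall_w += weight * recall
        f1_w += weight * f1
    return recall_w, f1_w


# Group sizes follow the tables; the single misclassification is invented for illustration.
y_true = ["HC"] * 10 + ["MCI"] * 14 + ["AD"] * 6
y_pred = ["HC"] * 10 + ["MCI"] * 13 + ["AD"] + ["AD"] * 6

recall_w, f1_w = weighted_scores(y_true, y_pred)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"weighted recall = {recall_w:.4f}, weighted F1 = {f1_w:.4f}, accuracy = {accuracy:.4f}")
```

Under this weighting, weighted recall always equals overall accuracy, because each class's recall is multiplied back by its own sample count before dividing by the total.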

| Subjects’ perception | HC (n = 10) | MCI (n = 14) | AD (n = 6) |
|---|---|---|---|
| | Mean (SD) | Mean (SD) | Mean (SD) |
| P1. I liked this game very much | 4.50 (±0.02) | 4.13 (±0.78) | 3.99 (±0.48) |
| P2. I find this game useful to exercise my memory | 4.63 (±0.02) | 4.36 (±0.83) | 4.06 (±0.49) |
| P3. I found the instructions clear | 4.66 (±0.02) | 4.69 (±0.88) | 4.20 (±0.51) |
| P4. I find this game easy to play | 4.54 (±0.02) | 4.53 (±0.85) | 3.84 (±0.46) |
| P5. I find this game easy to control using my fingers | 4.62 (±0.02) | 4.63 (±0.87) | 4.19 (±0.51) |
| P6. This game is good for my memory, and I would keep using it | 4.61 (±0.02) | 4.28 (±0.81) | 4.23 (±0.51) |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Valladares-Rodríguez, S.; Fernández-Iglesias, M.J.; Anido-Rifón, L.E.; Pacheco-Lorenzo, M. Evaluation of the Predictive Ability and User Acceptance of Panoramix 2.0, an AI-Based E-Health Tool for the Detection of Cognitive Impairment. Electronics 2022, 11, 3424. https://doi.org/10.3390/electronics11213424
