1. Introduction

As soon as they arrived on the market, portable Virtual Reality (VR) systems, such as Oculus Quest, attracted a wide consumer base. In contrast, the popularity of mobile VR (i.e., VR experiences achieved through the use of smartphones) has started to wane over the last several years. Despite offering a practical alternative to dedicated VR equipment, which was previously known for being expensive and inaccessible, mobile VR was quickly overshadowed by the superior features of portable VR, coupled with its declining prices. This shift confirmed what is arguably one of VR’s strongest selling points—an unprecedented level of interactivity, fueled by the specialized equipment’s ability to track multiple body parts in six degrees of freedom (6DoF). However, increased mobility requirements imposed on the user present a complex challenge to hardware manufacturers, application developers, and researchers in fields dealing with the user’s experience with multimedia, such as User Experience (UX) and Quality of Experience (QoE).

Beyer and Möller [1] differentiate between three categories of user performance in multimodal interactive systems: perceptual effort, cognitive workload, and physical response effort. In broad terms, UX design is usually concerned with minimizing the effort/workload necessary to interact with a productivity-oriented system, aiming to provide the user with the smoothest possible experience. However, design principles that work for some types of applications may not be suitable for others. As discussed in [1], games are a very specific category of multimodal interactive systems, as their design relies on principles that go beyond, and often against, the purely utilitarian approach to system design. Thus, when discussing the optimization of the gaming user experience, one needs to consider the distinction between systems as “toys” and systems as “tools” [2].

“Tools” are described as systems that are utilized to accomplish an external goal. An ideal “tool” is designed for a specific, highly practical purpose, requires minimal effort on the user’s part, and yet functions in a perfectly reliable, efficient way. On the contrary, the quality of digital games, which fall under the “toy” category, is not judged through the lens of utility. Instead, the definition of “toys” as systems designed to be used “for their own sake” [2] is reminiscent of the concept of optimal experience, or flow, defined by Csikszentmihalyi [3] as: “the state in which people are so involved in an activity that nothing else seems to matter; the experience itself is so enjoyable that people will do it even at great cost, for the sheer sake of doing it”. When discussing the conditions necessary to enter the flow state during a certain activity, the author highlights the importance of being provided with clear goals, structured rules, and some sort of progress feedback. The task at hand should provide a fine balance between challenge and skill, anxiety and boredom, allowing the person to lose consciousness of the self and the passing of time.

As discussed by Brown and Cairns [4], the concept of flow is similar to the sensation of immersion, another aspect that is often mentioned in the context of what makes the process of gaming enjoyable. As the authors explain, unlike the state of flow, complete immersion is only a fleeting experience. Ermi and Mäyrä [5] highlight three different types of immersion as some of the key components of the gaming experience. Sensory immersion stems from the audiovisual aspects of the game surpassing the sensory stimuli coming from the real world, while narrative immersion, as the name suggests, stems from the story of the game and the extent to which the player is able to identify and empathise with its characters. The third type, challenge-based immersion, is the one that describes a flow-like state, as it occurs when there is a balance of challenge and skill. These challenges require the use of either motor or cognitive skills, but usually involve both to a certain extent.

Building on the definition of challenge-based immersion by Ermi and Mäyrä [5], we further focus on the role of movement and motor skills in the gaming experience. All games, including those on non-immersive platforms, require some level of physical involvement, particularly when the game demands high spatial precision or very quick reactions. Nevertheless, the role of movement is one of the key features that distinguish VR from non-VR games. The term gameplay gestalt refers to the user’s thoughts about the state of the game, as well as “the pattern of repetitive perceptual, cognitive, and motor operations” performed by the user, as permitted by the rules of the game [6]. Input device design for non-immersive platforms limits the mobility requirements imposed on the player, as required motor operations primarily pertain to fine motor movement. While actions such as sliding the finger across the surface of a larger touchscreen or moving a computer mouse require larger movements compared to pressing a button on a controller, these operations are still confined to only two degrees of freedom. In contrast, 6DoF tracking, which has become the industry standard for VR headsets and the accompanying controllers, significantly expands the array of options available to game developers concerned with leveraging the power of movement in designing compelling game mechanics.

While the medium of VR can be experienced using a wide range of input devices, locomotion, and interaction mechanics, in this paper we will focus on widely-used interaction methods that are manual, controller-based, and isomorphic, i.e., characterized by the one-to-one mapping between movements of the physical hand and the corresponding motion of the in-game virtual hand. In addition to observing interactions that use the virtual hand metaphor to interact with target objects in a direct (i.e., non-mediated) manner (e.g., picking up the ball with the virtual hand), we will also consider game mechanics that support tool-mediated interaction with target objects (e.g., using a hand-held ranged weapon to shoot a projectile at a target object). It is important to note that virtual reality is a multimodal medium, with audio and haptic modalities playing an important role in the formation of the user experience. However, for the sake of this paper, we will focus primarily on the visual aspect of the VR experience, while also addressing the role of position and movement as defining aspects of controller-based VR. Moreover, considering that the issue of locomotion in VR is a complex topic with its own set of specific challenges, such as cybersickness, we chose to narrow our focus to standing and room-scale games that do not employ specific in-game locomotion methods other than providing a direct mapping between user movement within the tracked physical space and their position within the virtual environment. While it is impossible to cover every type of object interaction that can be found in VR games, the repetition of certain gameplay gestalts across different games and genres is a common occurrence in the world of game development [6], allowing us to highlight and classify some of the more popular interaction mechanics based on shared characteristics.

Based on this classification, our goal is to propose a number of parameters that may influence the quality of interaction mechanics in VR games. Exploring and analyzing the impact of these parameters may lead to a greater understanding of user experience during gaming in VR, thus contributing toward further optimization of both single-player and networked experiences.

When attempting to evaluate the impact of different parameters (e.g., implementation of navigation or interaction techniques) on the gaming experience, an important aspect to consider is the issue of test material used in VR gaming studies. Testing a game with real users is an integral part of the creative process of making a game. However, unlike researchers employed by game developer firms, researchers from an academic background are not tied to a specific game, but are often looking to make broader conclusions regarding an entire genre, or a specific platform. To understand user experience in a realistic gaming situation, academic researchers often conduct their studies using commercial VR games as test material (e.g., [7,8,9,10]), as pre-existing and commercially available content provides several benefits in addition to the obvious advantage of using readily available material as opposed to the effort required to design and develop a custom application. For example, commercial games (particularly the ones with a high budget) have been carefully crafted by larger multidisciplinary teams over long periods of time to provide the highest possible levels of sensory and imaginative immersion. Rigorous quality testing ensures that games run smoothly and appear more polished compared to custom applications. Furthermore, applications developed specifically to be used as test material usually lack the narrative complexity and visual sophistication of commercial games. Lastly, using commercial content in user studies contributes toward a higher level of experimental realism. On the other hand, from the perspective of UX/QoE researchers, commercial games are essentially black boxes, with two major disadvantages. Firstly, they do not provide the level of flexibility required for the systematic evaluation of multiple scenarios with different parameter values. Secondly, while commercial games usually present the player with a basic evaluation of in-game performance (e.g., in the form of in-game deaths, points, rankings, etc.), these measures depend on game-specific algorithms, and very rarely provide a clear, consistent measure of performance aspects such as speed and accuracy.

Therefore, in this paper we also provide suggestions for designing and implementing specific test applications that enable flexible, yet systematic evaluation of parameters that may influence the quality of interaction mechanics. To illustrate our recommendations by example, we present the concept of an application designed specifically for the purpose of evaluating interaction mechanics. Many VR games are centered around the basic pick-and-place mechanics, i.e., mechanics that allow users to grasp and manipulate target objects without the use of external tools. Considering that this type of non-mediated interaction method serves as the foundation for tool-mediated mechanics, in addition to being the primary choice for many games, we will discuss it in more detail in the following sections. However, because non-mediated manual interactions with external objects are a fundamental and universal element of the human experience, by extension, they are emulated across a wide array of VR applications, regardless of their use-case. Thus, such interaction mechanics have already been addressed in multiple studies with different implementations of test applications (e.g., [11,12]). In addition to addressing existing guidelines and recommendations, our paper provides a novel approach to classifying and evaluating VR mechanics that are less researched and more gaming-specific, such as the use of melee weapons and similar tools for reaching target objects positioned within the users’ extended peripersonal space, as well as the use of ranged tools to access distant, unreachable target objects.

To summarize, the aim of this paper is to provide a blueprint for the classification of interaction mechanics that are commonly found across different VR games. Furthermore, our goal is to provide user experience researchers with a framework for the evaluation of such mechanics. As such, this paper provides the following novel scientific contributions:

a taxonomy of interaction mechanics for virtual reality games;

design principles and user requirements regarding the development of platforms for the evaluation of interaction mechanics quality (the INTERACT framework);

examples of configurable parameters that may influence the quality of interaction mechanics and measures to be used for the evaluation of their impact on the user experience;

an overview of a test platform for the evaluation of interaction mechanics quality, developed according to the guidelines and concepts presented in this paper.

The paper is structured as follows. Section 2 provides several definitions of game mechanics found across literature and describes the term interaction mechanics as used in this paper. Section 3 provides a taxonomy of interaction mechanics that are commonly used in virtual reality games, while Section 4 presents different factors related to interaction mechanics design and implementation that may influence user experience. The INTERACT framework, which offers guidelines for designing and implementing applications as tools in user research regarding VR interaction mechanics, is presented in Section 5, which also includes examples of configurable parameters and measures to be considered for this purpose. Finally, Section 6 provides an overview of a test platform for the evaluation of interaction mechanics quality, and is followed by our concluding remarks.

2. Definition of Game Mechanics

Multiple definitions of the term game mechanics can be found across literature. For example, Järvinen [13] describes game mechanics as means afforded by the game, to be used for interaction with game elements as the player attempts to reach the goal of the game, which is determined by its rules. Sicart [14] defines game mechanics as “methods invoked by agents, designed for interaction with the game state”. Both Järvinen [13] and Sicart [14] argue for the formalization of game mechanics as verbs (e.g., aiming, shooting, etc.).

On the other hand, Fabricatore [15] defines game mechanics as interactive subsystems that are based on rules, but only presented to the player as a black box that generates a certain output upon receiving a certain input. According to this definition, game mechanics are referred to as tools used to perform gameplay activities and described as state machines. As the player triggers different interactions with the mechanics, the state of the machine will change. The author gives an example of the door mechanics. Triggered by the appropriate interaction (unlock door) the door mechanics will change from the locked state to the unlocked state. In the work of Fabricatore [15], the verbs used in relation to game mechanics are referred to as either interactions (actions that trigger state change) or gameplay activities (activities carried out through the use of game mechanics), as opposed to game mechanics themselves, which highlights the difference between this approach and the approaches taken by Sicart [14] and Järvinen [13].
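The state-machine view of the door mechanics can be illustrated with a minimal sketch. It is not taken from Fabricatore [15]: the class and method names are hypothetical, and the reverse "lock door" interaction is an assumption added only so that the machine has more than one transition.

```python
from enum import Enum, auto


class DoorState(Enum):
    LOCKED = auto()
    UNLOCKED = auto()


class DoorMechanics:
    """Hypothetical door mechanics modelled as a two-state machine."""

    # (current state, interaction) -> next state.
    # "unlock door" follows the example in the text; "lock door" is assumed.
    TRANSITIONS = {
        (DoorState.LOCKED, "unlock door"): DoorState.UNLOCKED,
        (DoorState.UNLOCKED, "lock door"): DoorState.LOCKED,
    }

    def __init__(self):
        self.state = DoorState.LOCKED

    def trigger(self, interaction):
        """Apply an interaction; unrecognized ones leave the state unchanged."""
        key = (self.state, interaction)
        self.state = self.TRANSITIONS.get(key, self.state)
        return self.state


door = DoorMechanics()
door.trigger("unlock door")  # the door is now in the UNLOCKED state
```

From the player's perspective only the interaction (the input) and the resulting state (the output) are visible, which matches the black-box description above.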

Regardless of their differing definitions, all of the aforementioned authors make it a point to distinguish between different mechanics depending on their relevance and frequency of use within the observed game. Most notably, each of the source papers presents some version of a definition for the term core mechanics. Fabricatore [15] defines them as mechanics that are used to execute gameplay activities that most frequently occur within a certain game, and are essential for its successful completion. Järvinen [13] defines core game mechanics as those that are available throughout the entire game and notes that a game can have multiple core mechanics, but they have to be performed “one at a time” (i.e., during a turn or a certain game state), while Sicart [14] describes them as mechanics utilized by agents to reach a “systemically rewarded end-game state”.

In this paper, we refer to different game mechanics using verb-based names, but considering the existence of well-established monikers for certain mechanics that are colloquially expressed in root/imperative verb form (e.g., hack and slash, pick-and-place, etc.), unlike Järvinen [13] and Sicart [14], we will not be using the gerund form (e.g., shooting, slicing). Bearing in mind the extent to which games in virtual reality (more so than any other platform) are based on immersing the player in the midst of the action, our use of the term game mechanics predominantly addresses methods invoked by the player, which is consistent with the anthropocentric approach taken by Järvinen [13]. However, we remain cognizant of the fact that methods invoked by other agents (as highlighted by Sicart [14]) largely influence the way in which the player is able to utilize core game mechanics within a certain game or even a particular moment within that game. Thus, there is the need to further distinguish player-invoked mechanics from other mechanics available in the game.

As explained by LaViola et al. [16], interaction techniques are methods that allow the user to perform a certain task. The use of this term primarily refers to the user’s input and the mapping between that input and the system, but may also extend to those elements that provide feedback to the user as they interact with the system. While not exclusively related to the gaming use-case, the concept of interaction techniques as a mapping between user input and the system resembles a description of game mechanics as a link between behavioral and systemic elements of the game, as explained by Järvinen [13]. Borrowing from this definition, we introduce a new term, interaction mechanics, to refer specifically to game mechanics that are directly and solely controlled by player input (e.g., for a VR shooter game, the primary interaction mechanics can be described as expelling a bullet from a gun in a direction determined by the controller position, at a moment determined by a trigger press, with immediate visual, auditory, and haptic feedback provided to the player), as opposed to other methods present in the game but invoked by agents other than the player (e.g., enemy behaviour controlled by artificial intelligence).
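As a rough illustration of the shooter example, the sketch below treats the shot as a ray cast from the controller at the moment the trigger is pressed. The function name, the spherical target, and the instantaneous (hitscan) projectile are all assumptions made for brevity; an actual game would typically rely on its engine's physics queries.

```python
import math


def shoot_hits(trigger_pressed, origin, direction, center, radius):
    """Return True if a shot fired along the controller ray hits a sphere.

    origin/direction: controller position and pointing direction (3-tuples).
    center/radius: hypothetical spherical target.
    """
    if not trigger_pressed:
        return False  # the mechanics is only invoked on a trigger press

    norm = math.sqrt(sum(d * d for d in direction))
    if norm == 0:
        raise ValueError("direction must be a non-zero vector")
    unit = [d / norm for d in direction]

    to_center = [c - o for c, o in zip(center, origin)]
    dist_sq = sum(v * v for v in to_center)
    if dist_sq <= radius * radius:
        return True  # controller is already inside the target volume

    # Distance along the ray to the point closest to the target center.
    along = sum(a * b for a, b in zip(to_center, unit))
    if along < 0:
        return False  # target lies behind the controller

    closest_sq = dist_sq - along * along
    return closest_sq <= radius * radius
```

The two inputs controlled solely by the player, the controller pose and the timing of the trigger press, fully determine the outcome here, which is what separates this mechanics from behaviour invoked by other agents.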

Furthermore, we find it important to distinguish between different types of core interaction mechanics. Both Järvinen [13] and Sicart [14] elaborate on the concept of primary mechanics as core game mechanics that remain consistent throughout the game and are directly utilized to overcome challenges that contribute toward the end-game state, as opposed to other types of core mechanics, referred to as secondary mechanics [13] or submechanics [14], which play a supporting role to the primary mechanics, generally only indirectly contributing toward the end-game state. Järvinen [13] also introduces modifier mechanics, only available under certain conditions, giving the example of “applying strength” to a tennis hit as a modifier mechanics to the primary hit mechanics, considering that it has to be performed in a specific moment and a specific place. Järvinen [13] also lists moving into appropriate position and aiming as examples of secondary mechanics to the primary shoot mechanics. We will refrain from including this level of granularity into our analysis of VR game mechanics for the following reason: focusing solely on methods that fit this narrow definition of primary mechanics (e.g., to shoot or hit an object) is unlikely to provide information that is necessary to draw relevant conclusions regarding user experience. From the perspective of the player, the specific action of shooting or hitting is immediately preceded by more complex perceptual, cognitive, and motor operations that strongly contribute toward various aspects of user experience. For example, the perception of aspects such as challenge, competence, or exertion in the context of the shoot mechanics is formed based on the complexity of actions taken by the player in preparation for the eventual shoot. The player’s ability to notice and recognize the target, the complexity and speed of movements necessary to align the weapon with the target and press the trigger at precisely the right moment in time, followed by immediate feedback that signals that the intended action has been recognized by the system: these elements, along with their ultimate outcome, arguably contribute toward the formation of user experience far more than the isolated action of shooting. Therefore, to better fit the context of user experience evaluation, we will modify the classification provided by Järvinen [13]. Instead of considering such actions as secondary or modifier mechanics, and therefore separate from the primary mechanics, we will extend the definition of primary mechanics to implicitly include preparatory mechanics such as aiming, positioning, and applying force (if required) as its integral elements.

Interaction mechanics may also be categorized as proactive (i.e., instigated by the player; the moment of instigation is thus decided by the player) or reactive (i.e., performed by the player as a direct reaction to mechanics instigated by game entities other than the player; the player is thus expected to react in the moment determined by the actions of those entities). For example, in a combative action game, interaction mechanics that are usually performed as an attack (e.g., swinging a sword to hit an enemy) can be classified as proactive mechanics, while interaction mechanics generally performed in defense (e.g., raising a shield to protect against enemy attacks) can be classified as reactive mechanics. Another example of the distinction between proactive and reactive mechanics can be found in sports games, such as throwing (proactive) and catching (reactive) a ball. In this paper, we will mostly focus on proactive mechanics, and examine the interaction between the player and a particular target object, rather than addressing the complex interplay between the player and other entities of the game, which we consider to be out of scope for this paper.

3. Taxonomy of Interaction Mechanics Used in Commercial VR Games

In this section, we highlight several aspects to consider when attempting to classify primary interaction mechanics for virtual reality games. Our taxonomy is based on different characteristics of the virtual hand metaphor implementation, features of used tools and target objects, target object placement, as well as specific features of the task itself.

3.1. Interaction Fidelity

VR games can vary in terms of interaction fidelity, or the extent to which they emulate the actions from the physical world. As discussed in [17,18], interactions in 3D environments are often designed as either magical or literal. The literal approach to interaction design aims for a highly convincing reproduction of real-world interactions. On the contrary, the magical approach aims to improve the functionality of the interaction mechanics by providing the player with abilities that transcend the constraints of the physical world. Between the two extremes lies hypernaturalism, which combines the intuitive gestures of literalism with magical enhancements or “intelligent guidance” [19].

3.2. Symmetry and Synchrony

As discussed in [16,20], different tasks may require the use of one (i.e., unimanual task) or both (i.e., bimanual task) hands. In case of bimanual tasks, both hands may perform the same motions (i.e., bimanual symmetric task) or they can move in different ways (i.e., bimanual asymmetric task). Furthermore, tasks can be classified based on whether movements of both hands (symmetric or asymmetric) occur at the same time (i.e., bimanual synchronous task) or at different times (i.e., bimanual asynchronous task).
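The classification above can be sketched as a small decision procedure. The representation is an assumption made for illustration: each hand's movement is reduced to a motion label and a time interval, symmetry is judged by comparing labels, and synchrony by checking whether the two intervals overlap.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HandAction:
    motion: str   # illustrative label for the motion, e.g. "pull"
    start: float  # start time in seconds
    end: float    # end time in seconds


def classify_task(left: Optional[HandAction], right: Optional[HandAction]) -> str:
    """Label a task as unimanual or as one of the four bimanual variants."""
    if left is None and right is None:
        raise ValueError("at least one hand must perform an action")
    if left is None or right is None:
        return "unimanual"

    symmetry = "symmetric" if left.motion == right.motion else "asymmetric"
    # Assumed criterion: the movements are synchronous if they overlap in time.
    overlap = left.start < right.end and right.start < left.end
    synchrony = "synchronous" if overlap else "asynchronous"
    return f"bimanual {symmetry} {synchrony}"
```

For example, drawing a bow (one hand holds, the other pulls, at the same time) would be labeled a bimanual asymmetric synchronous task under these assumptions.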

3.3. Targets

In general, when the user interacts with the virtual environment using hand-held controllers tracked in 6DoF with a one-to-one mapping between the controller and the virtual hand, we consider this interaction as an interplay (either direct or achieved through the use of proxy objects) between the virtual hand of the user and the current target. Depending on the game, the target can take different forms. Moreover, the idea of what can be defined as a target may change from one moment to the next as the player works their way through different mechanics within a single game.

To illustrate the fluidity of our idea of an in-game target, we will call upon the concept of components, as introduced by Järvinen [13], who defines them as objects within the game that can be manipulated and owned by the player. According to the author, the concept of ownership is highly relevant for the classification of components: depending on the current owner (the player, i.e., the self, other players, or the system itself), a component may be considered a component-of-self, a component-of-others, or a component-of-system. The process of obtaining ownership of a component is often instigated by some type of player interaction, e.g., by invoking the pick up interaction mechanics. This mechanics requires the player to first perceive the component, and subsequently perform cognitive and motor operations that are necessary for the successful acquisition of the component. During this process, the component is perceived as a target. Once obtained, it is controlled by the player and becomes a component-of-self, and the player’s perception of what they consider to be the current target begins to shift. For example, in case of a cooking simulator, the player may be required to pick up a sandwich and place it on the plate. In the context of the pick up mechanics, the sandwich will be perceived by the player as a target object. Once obtained, the sandwich becomes a component-of-self. As the player progresses to the place mechanics, the plate becomes the target. The concept of components does not pertain only to static objects, but to in-game characters as well. For example, an action game may require the user to operate a weapon (the component-of-self), directing it in a way that causes damage to enemies controlled by other players (characters-of-others) or the system itself (characters-of-system). In doing so, the player considers the other characters to be targets.
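The shift of the current target in the cooking example can be sketched as follows. The data model is a deliberately simplified assumption (all names are hypothetical): each component records its owner, and the target of the next mechanics is derived from who owns the sandwich.

```python
from dataclasses import dataclass
from enum import Enum


class Owner(Enum):
    SELF = "component-of-self"
    OTHERS = "component-of-others"
    SYSTEM = "component-of-system"


@dataclass
class Component:
    name: str
    owner: Owner = Owner.SYSTEM  # components start out owned by the system


def pick_up(component: Component) -> Component:
    """Successful pick up: ownership passes to the player."""
    component.owner = Owner.SELF
    return component


def current_target(sandwich: Component, plate: Component) -> Component:
    """The sandwich is the target until it is owned; then the plate is."""
    return plate if sandwich.owner is Owner.SELF else sandwich


sandwich, plate = Component("sandwich"), Component("plate")
first = current_target(sandwich, plate)   # the sandwich (pick up mechanics)
pick_up(sandwich)
second = current_target(sandwich, plate)  # the plate (place mechanics)
```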

Static in-game objects and dynamic in-game characters are typical examples of what we refer to as explicit targets. Their saliency with regard to the surrounding environment serves as an indication of their role in some sort of in-game interaction and their boundaries are clearly defined by sensory cues given to the player (predominantly visual, but commonly aided by other modalities). While static objects and characters are generally embodied—they serve as independent entities and are often subject to physical forces—an explicit target does not necessarily need to take the form of a three-dimensional entity with physical properties, as long as the interactable area/volume is circumscribed and its boundaries are clearly indicated to the player. For example, to score points within a basketball game, a ball needs to make its way to an explicit target. By itself, a basketball hoop, a salient physical object, is not actually a target. Instead, it serves as an indication of the position as well as the limits of the actual target—an empty space encircled by it. However, depending on the game, certain interactions with the surrounding virtual environment are performed without an explicit target, although cognitive and motor operations necessary for aiming and positioning are still being performed. For games that rely on implicit targets, having to first determine the position/direction of an implicit target does not only serve as a necessary precursor to further action, but is usually considered as being one of the fundamental parts of the challenge. An example is given in tennis, where the strategy of each player relies on attempting to direct the ball away from the other player. 
Although the existence of boundaries (e.g., the height of the net, the dimensions of the field, the position of the other player) is common—determining what is allowed by the game, as well as what constitutes a successful attempt—what is considered as an implicit target ultimately comes down to the player and their strategy. In other words, the game may provide explicitly defined boundaries determining the “pool of possibilities” afforded to the player, but the exact placement of the implicitly defined target is familiar only to the player performing the action.

3.4. Mediation and Interaction Space

Depending on the type of task and the position of the target with regard to the user, performed interactions may be direct (i.e., non-mediated) or mediated through the use of some type of hand-held tool, an object that, once obtained, becomes a component-of-self, and can be utilized by the player to perform (or facilitate the performance of) a certain task. Considering that manual interactions in VR rely on the freedom of physical movement in the real world, the type of task and tool usage within the game will define the possible placement of target objects within the virtual environment, so as to accommodate the limitations imposed on the player based on the characteristics of their body and the boundaries of the tracked physical space. In VR games relying on the virtual hand metaphor with a one-to-one mapping, just like in the “real world”, the player can only interact with objects positioned within the immediate space that surrounds them, or the so-called peripersonal space. Everything beyond the player’s peripersonal space is therefore, quite literally, outside of their reach, belonging to what we call their extrapersonal space. However, there is a certain flexibility to the boundaries between peripersonal and extrapersonal space, as the representation of peripersonal space may extend to a bigger area, for example in case of tool use [21]. Since the focus of this paper lies elsewhere, we will refrain from delving deeper into the definition of peripersonal space. The term, as used in this paper, along with the concept of extending the singular peripersonal space beyond its initial boundaries via tool use (which may be considered an oversimplification [22,23]), only serves as an aid for the broad classification of different VR interaction mechanics.

Further focusing on the issue of non-mediated vs. tool-mediated interaction mechanics, we consider some types of handheld tools, commonly used in VR games (e.g., swords, clubs, bats), to serve as significant extensions of peripersonal space, enabling the user to interact with objects that are positioned in what can be described as near extrapersonal space. Thus, while non-mediated interactions only allow the player to act within the physical boundaries defined by the dimensions of the player’s body and the limitations of tracked physical space, tool-mediated interactions increase the interactable area by a small margin, based on the length of the tool in question. However, in cases in which the virtual environment covers a broader area compared to the tracked physical space, target objects positioned further from the player may still remain physically unreachable. Obviously, this is not necessarily an issue—in fact, positioning target objects somewhere in the distant extrapersonal space can almost be considered a prerequisite for the implementation of certain game mechanics. For example, the ever popular shooter genre is inherently defined by tool-mediated interactions with distant targets. Furthermore, a significant percentage of sports—which are commonly emulated in VR games—are based on repeated and rule-governed actions that can be described as throwing, shooting, or hitting objects toward a distant target or targets. Therefore, we consider tool use and target positioning to be interconnected, both serving as key features based on which we choose to categorize different game mechanics. For the sake of simplicity, in this paper we will refer to

peripersonal space (PPS) as the space in which the user is able to directly interact with objects without the use of external tools. We will refer to the space in which the user is able to physically interact with objects using handheld tools as

extended peripersonal space (EPPS). The remaining area, which spreads beyond the boundaries of extended peripersonal space and is therefore not directly reachable, will be referred to as

distant extrapersonal space (DEPS). A simplified illustration of these concepts is provided in

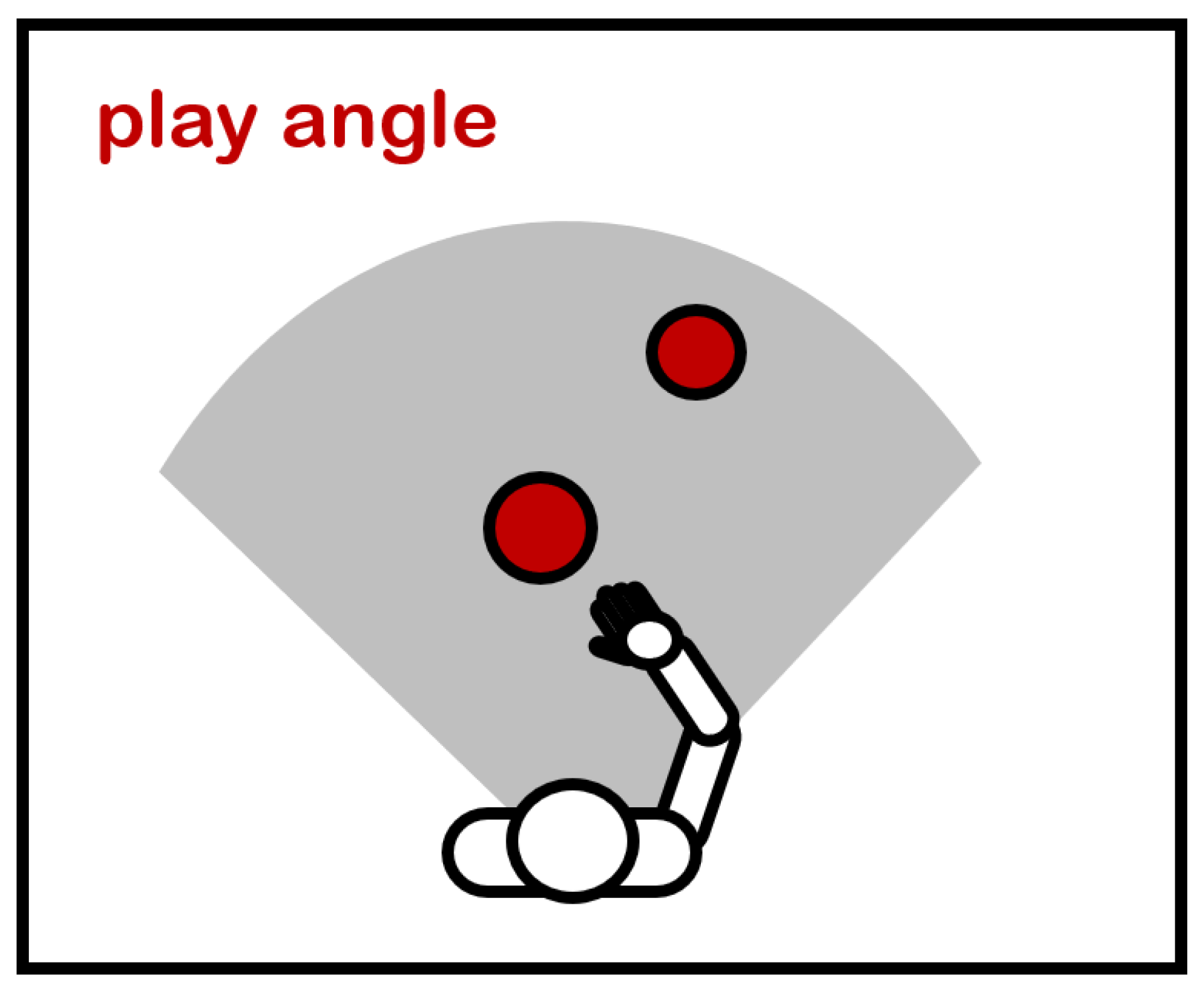

Figure 1).
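The three zones can be expressed as a simple distance test. The following is a minimal sketch, not part of the original work: it assumes distances are measured from a single body-fixed origin (e.g., the shoulder), that reach is a fixed radius, and that locomotion within the tracked space is ignored. The names `Zone` and `classify_zone` are hypothetical.

```python
from enum import Enum


class Zone(Enum):
    PPS = "peripersonal space"
    EPPS = "extended peripersonal space"
    DEPS = "distant extrapersonal space"


def classify_zone(target_distance_m: float,
                  arm_reach_m: float,
                  tool_length_m: float = 0.0) -> Zone:
    """Classify a target by its distance from an assumed body-fixed origin."""
    if target_distance_m <= arm_reach_m:
        # Reachable directly, without a tool.
        return Zone.PPS
    if target_distance_m <= arm_reach_m + tool_length_m:
        # Reachable only with the handheld tool.
        return Zone.EPPS
    # Not physically reachable; requires a projectile or similar.
    return Zone.DEPS
```

For instance, with an assumed arm reach of 0.7 m and a 0.9 m sword, a target 1.2 m away falls into EPPS, while the same target without the sword falls into DEPS.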

To better describe the notion of tools as extensions of peripersonal space, it is useful to refer to the concept of the body as a kinetic chain, comprised of rigid segments connected together with joints. Physical movements at the core of various sports activities are usually performed with the objective of optimizing acceleration and speed at the terminal segments of the kinetic chain [

24,

25] (e.g., foot in case of soccer, hand in case of volleyball). Hand-held controllers used with commercial VR equipment provide the system with an approximation of relevant terminal segments (i.e., hands) at any given moment. By providing the player with a hand-held tool, the virtual representation of terminal segments of the kinetic chain is being extended, either by modifying the size and shape of the original terminal segment, or by adding a new terminal segment, connected to the original terminal segment with a new joint. A sword attached at the end of a controller, fixed at a certain angle, but moving together with the controller as a single, rigid entity, connected to the rest of the chain by the wrist, serves as an example of the former approach. The latter approach is taken by Fletcher [

26], who argues for the inclusion of an additional spring joint to separate the VR sword or other type of tool from the virtual representation of the controller, with the goal of presenting the player with a more convincing implementation of force feedback. While the implementation of interaction mechanics mediated by the use of swords, bats, and other types of rigid tools may include a single added joint, including a flexible tool (e.g., a chain, a flail) may even add multiple links to the original terminal segment of the kinetic chain. In addition to the fact that a hand-held tool extends the interactable space around the player to the extent afforded by its length, its dimensional and other physical properties determine its inertia and velocity profile in the context of the in-game physics system, contributing to the overall result and potentially affecting the overall perceived realism, utility and enjoyability of the game mechanics. Examples of different interaction mechanics and tools as extensions of peripersonal space are presented in

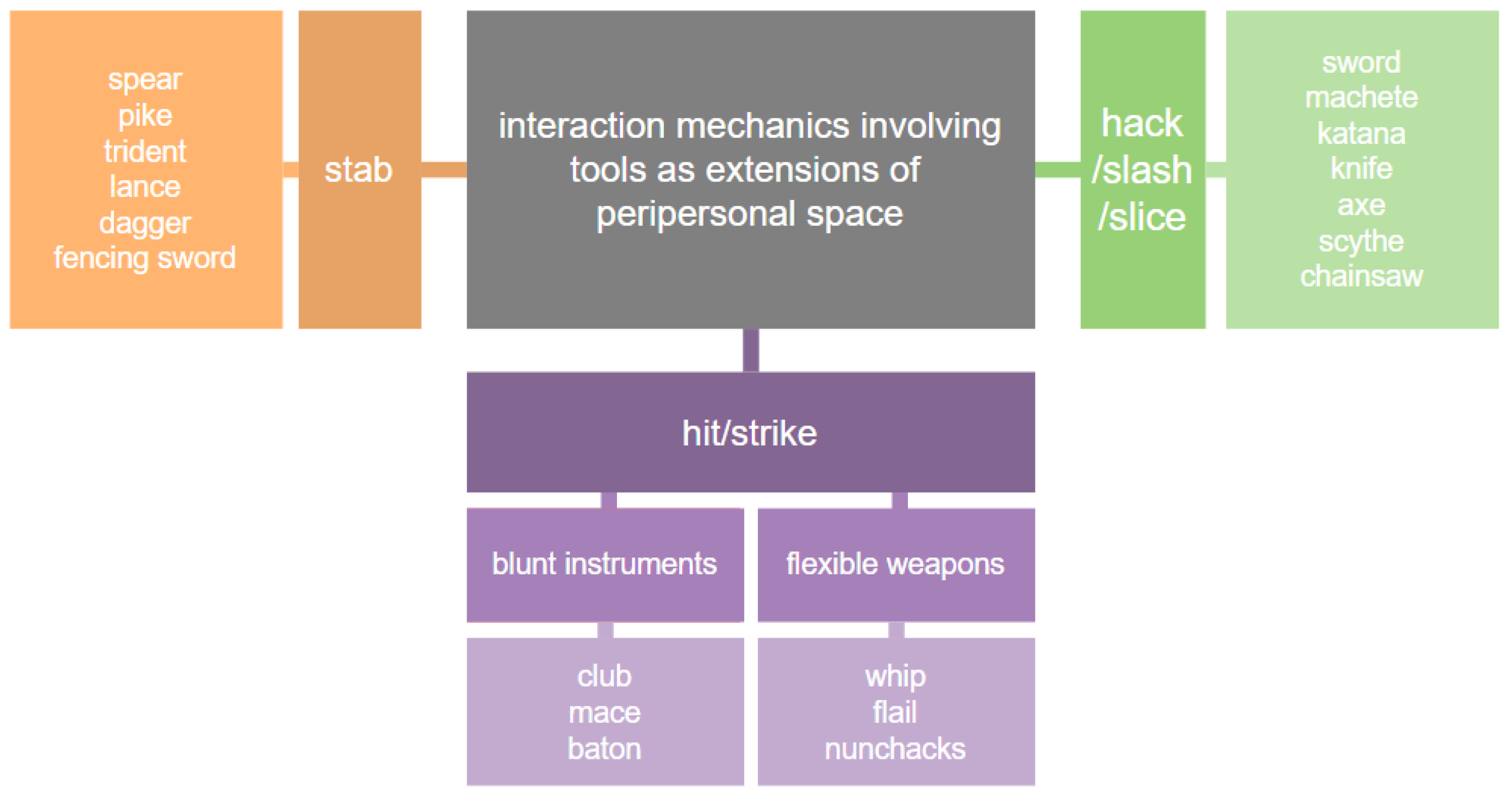

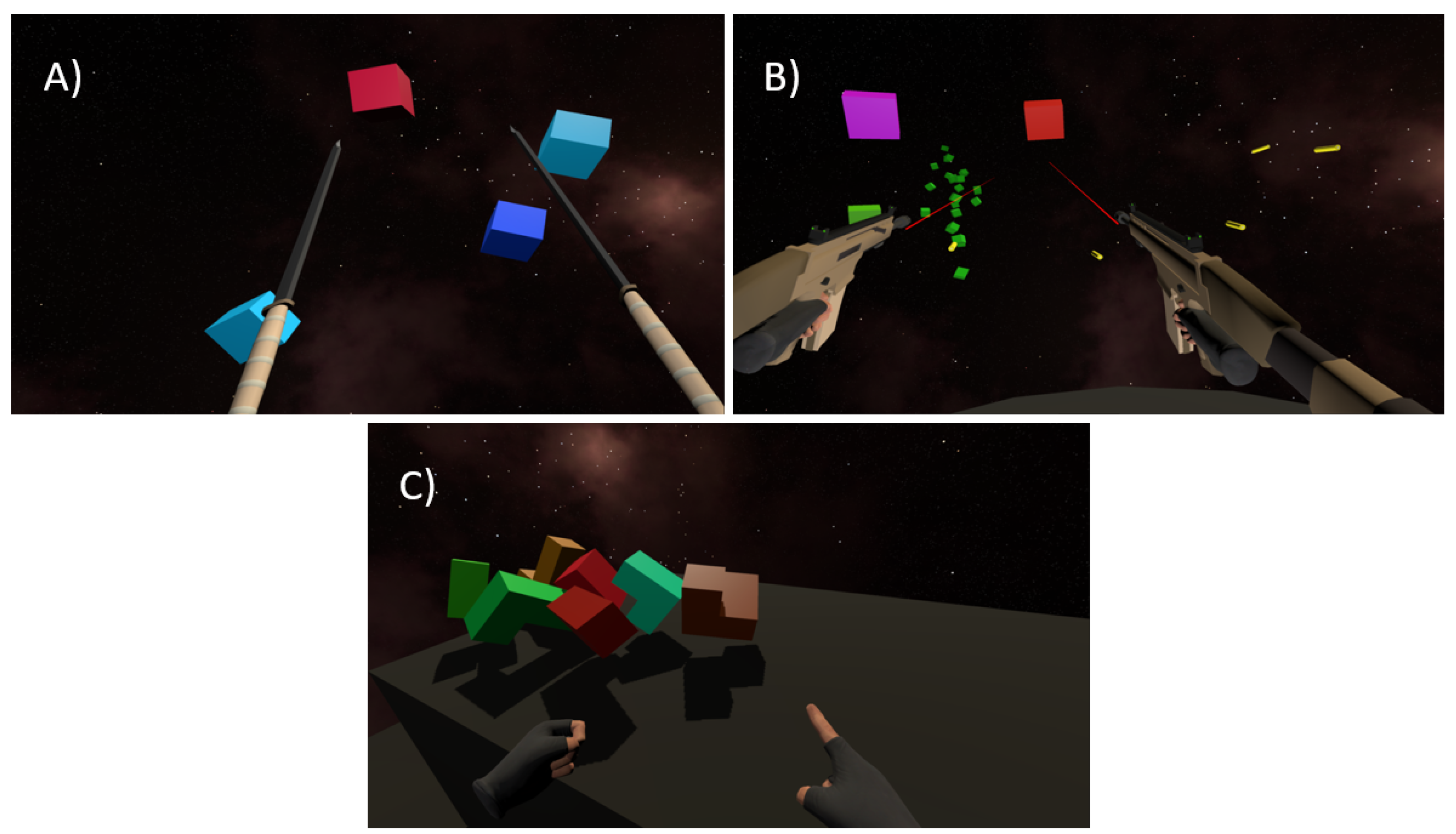

Figure 2.

Whether talking about sports equipment or weapons, there is a common thread among tool-based interaction mechanics that involve targets primarily positioned in the distant extrapersonal space—their design usually relies on the implementation of projectile motion. A projectile is an object that has initially been propelled (launched) by a certain force, and is subsequently continuing to move along a certain path (i.e., the ballistic trajectory) under the influence of gravity and other external forces. While projectiles are very common in games, the gaming use case does not call for a high level of realism, meaning that projectile motion may be calculated based on simplified ballistic flight equations that often disregard the influence of forces such as air resistance and cross wind. A projectile does not necessarily need to be launched using a tool such as a gun or a bat—it may also be hand-thrown. Whether we choose to label this category of mechanics as tool-mediated is up for debate, as a projectile may be considered a tool in itself and often serves as an intermediary object that provides the means for the player to impact the target, but for the sake of simplicity we will refer to such cases as projectile-based non-mediated interaction mechanics, as to separate this type of mechanics from those that rely on a hand-held tool to propel the projectile. Examples of different projectile-based interaction mechanics are presented in

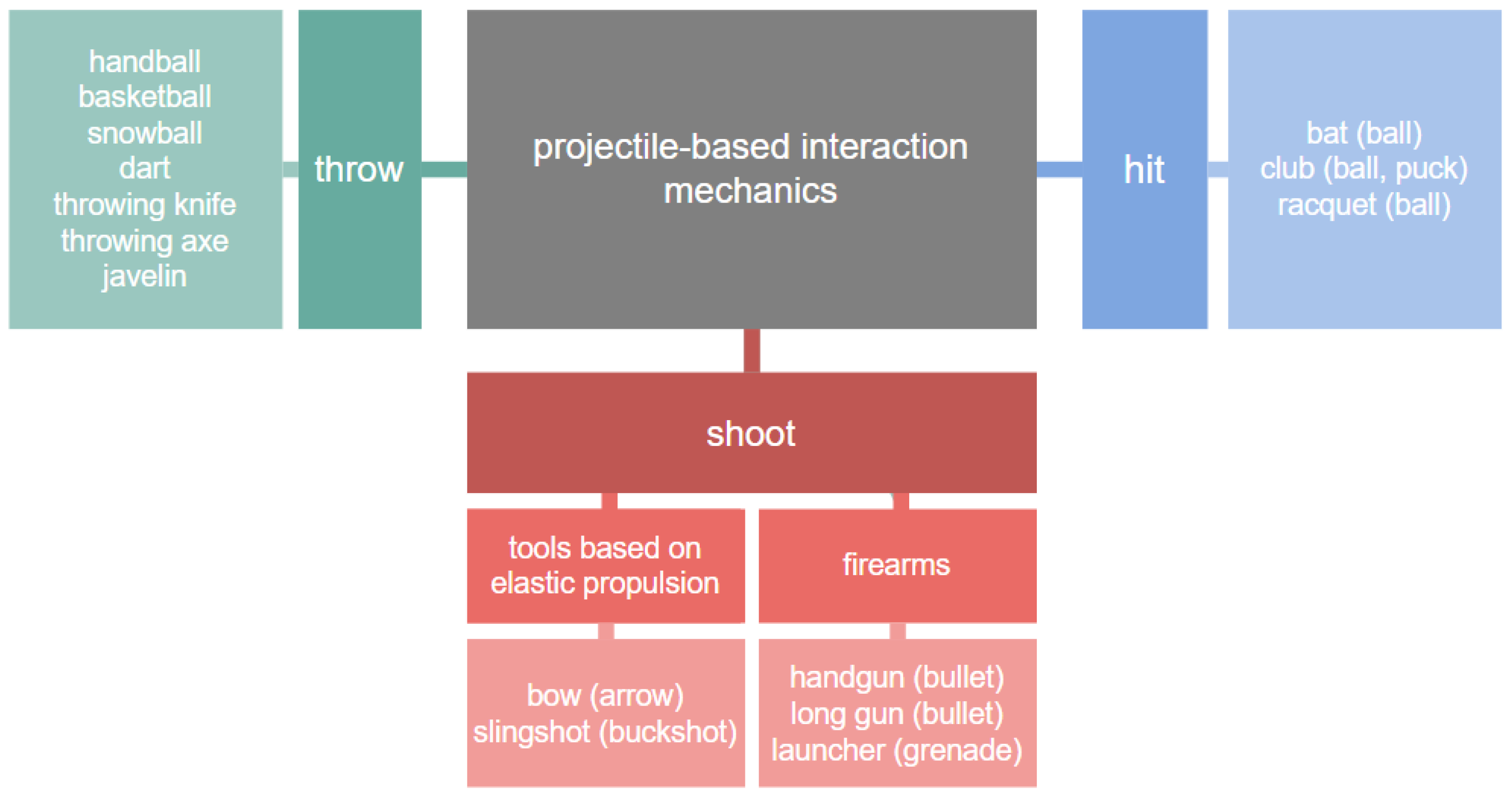

Figure 3.
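To illustrate what simplified ballistic flight equations look like in practice, the sketch below evaluates drag-free projectile motion under constant gravity. It is only an illustration under stated assumptions: the y axis is taken as vertical, gravity is Earth-like, and air resistance and wind are ignored. The function names are hypothetical, and a game engine's built-in physics would normally perform this integration itself.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (assumed Earth-like game world)


def position_at(t, p0, v0, g=G):
    """Drag-free projectile position at time t; y is the vertical axis."""
    x0, y0, z0 = p0
    vx, vy, vz = v0
    # Horizontal motion is uniform; vertical motion is uniformly accelerated.
    return (x0 + vx * t, y0 + vy * t - 0.5 * g * t * t, z0 + vz * t)


def flight_time_to_ground(y0, vy, g=G):
    """Time until a projectile launched from height y0 >= 0 reaches height 0."""
    # Positive root of y0 + vy*t - 0.5*g*t^2 = 0.
    return (vy + math.sqrt(vy * vy + 2.0 * g * y0)) / g
```

Multiplying the horizontal speed by the value returned by `flight_time_to_ground` gives the horizontal distance covered before landing.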

Depending on the game’s genre and its core mechanics, as well as the particular tool (or lack thereof) used for propelling the projectile, there may be significant differences in the extent to which the actions performed by the user can impact its trajectory, as mechanisms responsible for projectile propulsion vary significantly. With firearms, the angle of propulsion will depend on the position and orientation of the user’s controller, but the magnitude of the muzzle velocity (i.e., the speed of a projectile as it exits the barrel) will remain constant, as determined by the implementation of the particular weapon of choice. With mechanisms based on elastic propulsion (e.g., a bow), the initial velocity of the projectile depends on the draw weight, i.e., the amount of force exerted on the bowstring (or the elastic band in case of a slingshot) as it gets pulled back in preparation for the projectile release. In the context of elastic propulsion weapons as implemented in controller-based virtual reality applications, at the moment of projectile propulsion, one of the virtual hands will likely be positioned on the handle of the bow or slingshot, while the other sits at the furthest point of the drawn bowstring or elastic band. The distance between the controllers determines the draw length, which is proportional to draw weight, and therefore serves as the basis for the calculation of the initial speed of the projectile following release. In cases where the projectile is being propelled by a manual throw (e.g., throwing a ball or a hand-thrown weapon) or as a result of a collision with a hand-held object (e.g., tennis racquet, baseball bat), its initial velocity will be determined by the velocity of the controller. It is important to note that the velocity of such projectiles depends on their distance from the rotational anchor (e.g., the wrist of the player) at the moment of propulsion. With hand-thrown objects, it is advisable to make sure that the object that is about to be propelled is snapped to the controller’s center of gravity, as opposed to an arbitrary point on the controller [27], which provides the right radius necessary for an intuitive throw. If the projectile is propelled by a collision with a hand-held tool, the distance along the tool to the point of impact must be included when calculating the overall tangential velocity relative to the controller velocity.
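The two dependencies described above, draw length for elastic propulsion and distance from the rotational anchor for throws and hits, can be sketched as follows. This is an illustrative sketch under explicit assumptions, not the implementation used by any particular game: the bow is modelled as an ideal linear spring whose stored energy is fully transferred to the projectile, and the throwing hand or tool is treated as a rigid body rotating about a pivot. All function and parameter names are hypothetical.

```python
import math


def elastic_launch_speed(draw_length_m, stiffness_n_per_m, projectile_mass_kg):
    """Ideal-spring estimate: 0.5*k*x^2 of stored energy becomes 0.5*m*v^2."""
    return draw_length_m * math.sqrt(stiffness_n_per_m / projectile_mass_kg)


def cross(a, b):
    """Cross product of two 3D vectors given as tuples."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def release_velocity(pivot_velocity, angular_velocity, offset_from_pivot):
    """Velocity of a point on a rigid body: v = v_pivot + omega x r."""
    tangential = cross(angular_velocity, offset_from_pivot)
    return tuple(v + w for v, w in zip(pivot_velocity, tangential))
```

With a purely rotational swing of 10 rad/s, a contact point 0.5 m from the pivot moves at 5 m/s, whereas a point 1.0 m away moves at 10 m/s. This is why both the snapping point of a thrown object and the impact point along a tool affect the resulting launch velocity.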

When it comes to mechanics involving tools as extensions of peripersonal space versus projectile-based interaction mechanics, certain types of tools cannot be easily categorized as belonging to one or the other. For example, some types of firearms may be equipped with a bayonet, allowing for different types of strategies depending on the distance between the player and the enemy. A tennis racquet serves a dual purpose: it is used to interact with an explicit target within the extended peripersonal space (i.e., to hit a tennis ball once it draws near), but also to launch a projectile (the ball) toward a distant implicit target. The goal of our taxonomy was not to divide interaction mechanics into separate categories (each mechanic will likely belong to several) but to aid in understanding various aspects of each mechanic.

3.5. Comprehensive Taxonomy of VR Interaction Mechanics

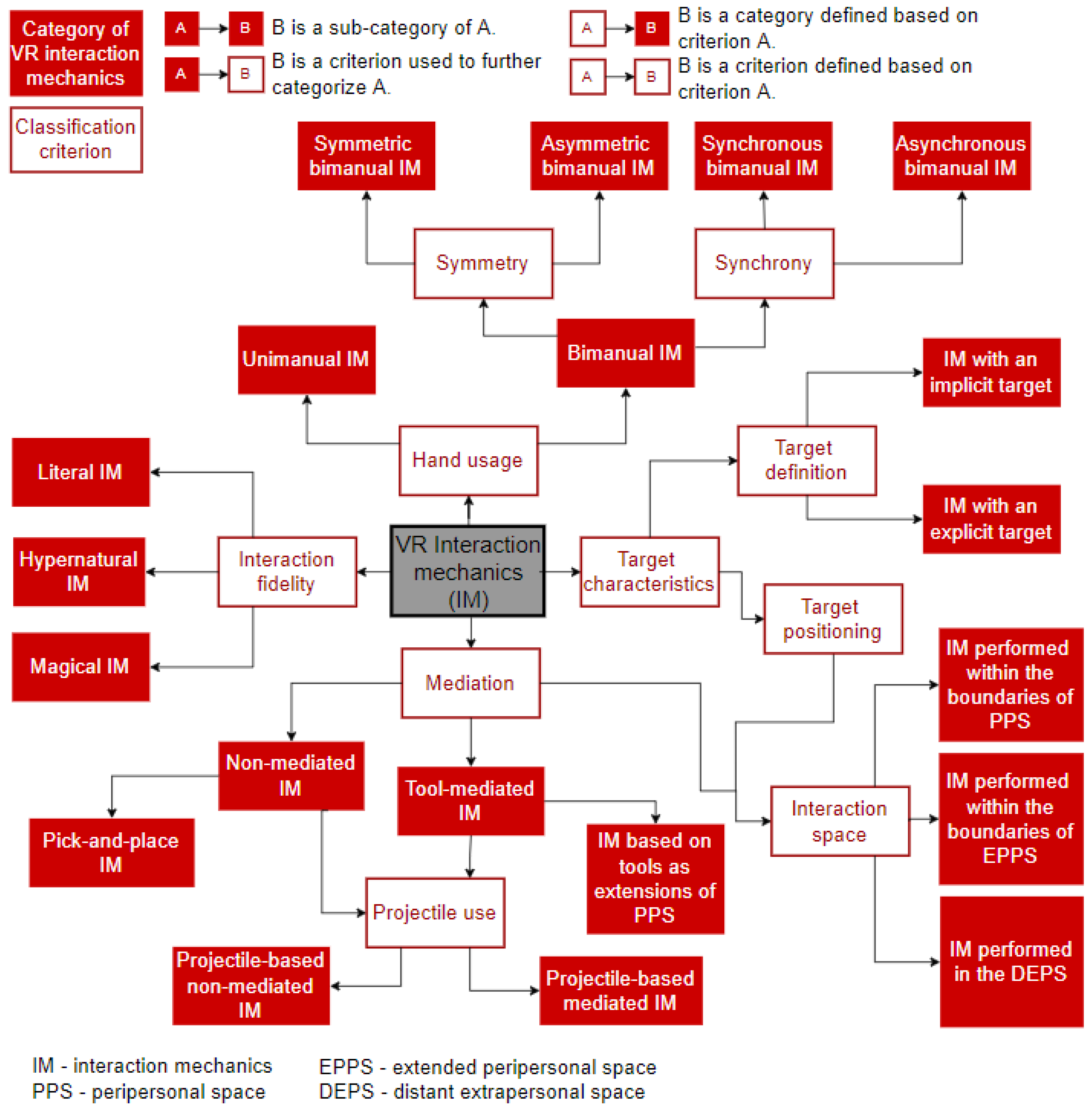

Figure 4 presents a comprehensive overview of the interaction mechanics categorization discussed throughout this section, highlighting different categories of VR interaction mechanics, as well as the criteria used for their classification. After presenting the taxonomy, we further focus on dissecting each mechanic with the goal of defining the parameters that contribute to its quality. Thus, the following section outlines and defines specific factors related to the implementation of interaction mechanics that are relevant to consider in the context of UX design.

4. Considerations Regarding the Implementation of Interaction Mechanics—Factors That May Influence the User Experience

The process of performing core interaction mechanics (including the preparatory mechanics) consists of multiple stages (Figure 5), even in the case of simple mechanics that do not require excessive strategizing or complex movements. After first perceiving the target (often preceded by active searching), the player chooses their subsequent action. In most cases, this includes multiple cognitive and motor operations necessary to aim, track, and interact with the target in some way, e.g., by touching it, picking it up, shooting at it, etc. Following the preparatory stages such as perceiving the target, as well as aiming and tracking, the player instigates the process of interacting with the target. The resulting contact with the target can be categorized as either instant (e.g., touching the target with a sword) or delayed (e.g., shooting an arrow and waiting for it to reach its destination), as well as discrete (e.g., a bullet hits and immediately destroys the target object) or continuous (e.g., slicing through a large object).

During this process, the player adapts their behaviour depending on various conditions provided by the game in relation to themselves and the means afforded to their in-game character. The player’s action is a combined reaction to the state of the environment, target characteristics and positioning with respect to the player in the particular moment in time, and features of currently available tools. This reaction also depends on the fixed (e.g., size, fitness) and current (e.g., fatigue, mood) characteristics of the player combined with their personal tastes and preferences. A concise overview of various factors to be considered for the implementation and evaluation of interaction mechanics is presented below.

4.1. Perceiving a Target

The way in which users allocate visual attention across the virtual environment can be described by the SEEV (salience, effort, expectancy, value) model [28,29], which we explain in the remainder of this paragraph. The area of interest (AOI) refers to the “physical location” in which the user can find specific information related to a certain task. Salience pertains to the physical characteristics of the AOI (e.g., color, size) that make it stand out from its environment. As the user’s attention shifts between different AOIs, the process of scanning their surroundings requires a certain level of effort. The SEEV model also includes expectancy as one of its factors, as the user is more likely to scan locations in which they expect certain changes to occur, and they base this expectation on the frequency of changes that occurred so far. The last factor of the SEEV model refers to the value of a certain AOI in the context of the overall task. The SEEV model, which is concerned with scanning the visual environment, serves as a basis for the NSEEV model [30], which describes the characteristics of a discrete event that have an impact on whether it is actually going to be noticed, such as the event’s eccentricity (i.e., the offset between the fixation location and the event), its expectancy, and its salience.

The effort required to successfully shift between AOIs will differ based on their offset, as explained in [31]. If both areas fall within 20 degrees of visual angle (i.e., within the so-called eye-field), the necessary effort will be considerably lower compared to a situation in which head rotation is required (i.e., within the head-field, up to 90 degrees of visual angle). Likewise, an even greater angle will demand more effort, necessitating full-body rotation. A wider angle requires considerably more energy, especially with regular switching between the AOIs; thus, people are more likely to experience fatigue and discomfort, or even resist putting in the necessary effort.
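The angular thresholds above lend themselves to a simple classification that a test application could log for each pair of AOIs. The following sketch assumes that each AOI is represented by a 3D direction vector from the player’s head and that the 20 and 90 degree boundaries are applied as hard cut-offs. The function names and the returned labels are hypothetical.

```python
import math


def angular_offset_deg(dir_a, dir_b):
    """Angle in degrees between two non-zero 3D direction vectors."""
    dot = sum(a * b for a, b in zip(dir_a, dir_b))
    norm = math.hypot(*dir_a) * math.hypot(*dir_b)
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


def shift_effort_class(offset_deg):
    """Map an angular offset between two AOIs to the movement it requires."""
    if offset_deg <= 20.0:
        return "eye-field"      # eye movement alone is sufficient
    if offset_deg <= 90.0:
        return "head-field"     # head rotation is required
    return "body rotation"      # full-body rotation is required
```

Counting how often a level forces shifts of each class gives a rough indication of the scanning effort imposed on the player.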

As players scan the virtual environment, they are essentially performing visual search, using their eyes to scan the environment with the goal of finding a particular target, or targets. There are multiple factors affecting this process, such as the number of targets, distinguishing features of the target, the presence of non-targets, along with their overall number and their heterogeneity with respect to each other, and the dispersion of elements across the environment, which defines the necessary visual scanning distance [31].

4.2. Interacting with a Target

The term tracking task can be used to describe a task that requires manually steering a controller through the virtual environment with the goal of reaching a target with an adequate level of precision [31]. Fitts’ Law [32] describes the relationship between movement time, distance traveled, and target size for stationary targets. Initially referring to one-dimensional tasks, the original Fitts’ Law has been extended to three dimensions in works such as [33], and, more recently, [34], highlighting the impact of direction/angle of target placement with respect to the user. The difficulty of tracking a target is further increased in case of a moving target, especially in case of fast, unpredictable movement across multiple axes [31].
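For reference, the original one-dimensional formulation of Fitts’ Law expresses movement time as MT = a + b · log2(2D/W), where D is the distance to the target and W is its width. The sketch below evaluates this relationship. The default coefficients `a` and `b` are placeholder assumptions; in practice they are fitted empirically for a given device and user population, and the three-dimensional extensions cited above add further terms that are not reproduced here.

```python
import math


def index_of_difficulty(distance, width):
    """Fitts' index of difficulty in bits (original formulation)."""
    return math.log2(2.0 * distance / width)


def movement_time(distance, width, a=0.1, b=0.15):
    """Predicted movement time in seconds; a and b are placeholder values."""
    return a + b * index_of_difficulty(distance, width)
```

Halving the target width or doubling its distance each adds one bit to the index of difficulty, and therefore `b` seconds to the predicted movement time.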

Different target locations were shown to influence player comfort in different ways. Depending on target location, the task of following and manually interacting with targets requires different levels of muscle activity, and may even lead to neck and shoulder discomfort, with targets placed at eye height, or up to 15 degrees below eye height, appearing to be the most comfortable [35].

In their testbed for studying object manipulation methods in virtual reality, Poupyrev et al. [36] focused on pick and place tasks involving primarily stationary objects. The authors listed several parameters that are of interest for this type of task. Parameters that were considered to be relevant for the process of target selection included the number of objects, their size and their distance from the user, direction to the target with respect to the user, and occlusion of the target object, but the authors also mention target dynamics, density of objects surrounding the target, and target object bounding volume. In addition to the aforementioned parameters pertaining to target selection, Poupyrev et al. [36] listed parameters related to the task of positioning objects, such as the initial object distance and direction with respect to the user, as well as the distance and direction to its terminal position, required precision, visibility, dynamics, and occlusion of the terminal position. Furthermore, the authors list parameters related to the task of orienting objects, such as initial and final orientation of the object, its distance and direction with respect to the user, as well as the required precision of orientation.

4.3. Tools and Tool Usage

Physical properties of handheld tools—such as their dimensions—may prove to be a highly relevant factor, especially when it comes to interaction mechanics that utilize tools as extensions of peripersonal space. Aside from the fact that an increase in tool length enables interaction with targets further outside of the player’s reach, an increase in tangential velocity achieved for distal parts of longer tools may be relevant in the context of certain game mechanics, affecting the perceived utility and ease of use of the tool, and potentially impacting the challenge, as well as the outcome of the game. Furthermore, impaired accessibility of distant targets that comes as a result of using shorter tools may impose greater mobility/physical activity requirements on the player, which may also affect their overall experience of the game. With projectile-based interaction methods, an increase in projectile size facilitates hitting the target. Furthermore, a higher initial velocity of a projectile will result in a longer point-blank range, meaning that the player will have to elevate their weapon to compensate for the parabolic trajectory of the projectile only in cases of long distance targets. In the physical world, one may observe differences in trajectory and the eventual damage inflicted on the target in case of fired projectiles of different shapes and weights, an effect that may be simulated in a game scenario.
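The relationship between initial velocity and point-blank range can be made concrete with a small calculation. The sketch below assumes a drag-free projectile fired level, and defines point-blank range as the distance at which the vertical drop reaches a tolerated amount (e.g., the target’s radius). This definition is a simplification chosen for illustration, and the function name is hypothetical.

```python
import math


def point_blank_range(launch_speed, tolerated_drop, g=9.81):
    """Distance at which a level, drag-free shot has dropped by tolerated_drop.

    Derived from drop = 0.5 * g * (d / v)^2, solved for d.
    """
    return launch_speed * math.sqrt(2.0 * tolerated_drop / g)
```

Under these assumptions the range grows linearly with launch speed, so doubling the projectile’s initial velocity doubles the distance over which the player does not need to compensate for the drop.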

The use of aiming assistance may significantly improve the precision of the player, potentially contributing to the increase of their perceived level of competence. The task of selecting a remote target object in a VR game may be performed through linear or parabolic pointing, with research indicating that the use of a linear ray-cast pointer may lead to better performance [37]. Furthermore, although the inherent precision of VR controllers does not necessitate their inclusion, game developers dealing with projectile-based interaction mechanics may still choose to incorporate some of the aiming assistance mechanisms, such as those that have already been evaluated in 3D games research [38]. For example, bullet magnetism has already been considered as a method of improving player performance of projectile-based VR interaction mechanics (both throw and shoot) [39]. While the aforementioned methods rely on the modification of the interaction in a way that can only be achieved within an artificial virtual environment (hypernaturalism), projectile-based interactions may also benefit from aiming assistance methods that are commonly used with existing weapons in the physical world, e.g., sighting devices such as telescopic or laser sights (literalism).
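As an illustration of how a hypernatural aiming aid such as bullet magnetism might work, the sketch below redirects a shot toward the target closest to the aim direction, provided that it lies within a small angular cone. This is one possible interpretation written for illustration only, not the method evaluated in the cited work. The cone half-angle, the function names, and the choice of fully snapping to the target (rather than partially bending the trajectory) are all assumptions.

```python
import math


def normalize(v):
    """Return the unit vector of a non-zero 3D vector."""
    length = math.hypot(*v)
    return tuple(c / length for c in v)


def magnetized_direction(origin, aim_dir, targets, max_angle_deg=5.0):
    """Return the launch direction after applying a simple aim-assist cone.

    Assumes no target coincides with the origin.
    """
    aim = normalize(aim_dir)
    best_dir, best_angle = aim, max_angle_deg
    for target in targets:
        to_target = normalize(tuple(t - o for t, o in zip(target, origin)))
        cos_angle = sum(a * b for a, b in zip(aim, to_target))
        angle = math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))
        # Keep the target with the smallest angular error inside the cone.
        if angle <= best_angle:
            best_dir, best_angle = to_target, angle
    return best_dir
```

If no target falls inside the cone, the original (normalized) aim direction is returned unchanged, so the assistance only affects near misses.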

In addition to aiming aids, there are additional visual traits of weapon design that may influence user experience and performance. For example, a visual gunfire effect (a so-called muzzle flash) may appear as a reaction to a trigger press, along with a sound effect, which signals to the player that a bullet has been fired. However, because a bullet travels at a very high speed, and is thus difficult to follow, developers may choose to omit its in-game visual representation, only signalling its subsequent effect on the in-game entity that has been shot (e.g., an enemy crumbles to the floor, a wooden crate smashes to pieces). We describe such projectiles as having an invisible ballistic trajectory. In other cases, the player is able to keep an eye on the projectile from the moment it exits the barrel to the moment it reaches its destination, which allows them to form an impression of its velocity and its susceptibility to in-game physical forces, and grow more aware of their own shooting precision. Note that the trajectory of a travelling projectile can be made more obvious by incorporating an accompanying projectile trail effect (e.g., a vapor trail) that serves as its visual extension.

4.4. Player-Related Factors

It is important to note that preferences regarding interaction mechanics may vary between subjects as people differ in terms of dimensions (e.g., height, weight, arm length), visual acuity and color perception, disability, fitness and energy level, strength, motor skills, reaction speed, level of experience with virtual reality and familiarity with different activities that may affect their performance for a particular game (e.g., the skills of a real-life tennis player are likely to be useful in a VR sports game). Moreover, as hand-held controllers only track the movement of terminal segments of the kinetic chain, other segments are free to move in whichever way the player decides, which means that a vast array of movements and body positions will be afforded by the game as long as the movement of the terminal segment is successful at performing the task at hand. In other words, a game does not care if the player chooses to perform an underhand or an overhand throw, or whether they choose to keep their arms outstretched or bend their elbows as they shoot at the enemy. Likewise, the player may choose to kneel, crouch or bend at the waist in order to reach a low target or avoid an incoming projectile. These alternative approaches to performing a task may lead to different levels of discomfort or success.

7. Conclusions and Future Work

In order to provide users with the best experience, UX design typically focuses on decreasing the workload necessary to interact with a productivity-oriented system. However, when it comes to gaming, its entertainment value is dependent on the system providing a certain level of challenge to the player. In addition to their workload requirements, games are characterized by the broad range of mechanics used to reach their goal. Thus, the actions performed by the player as they interact with the game will usually differ significantly from the actions performed by the user of a non-game application, even if they are using the same platform and identical IO devices.

While multiple sources have addressed different aspects of user experience pertaining to interactions in virtual reality, research on gaming-specific VR design is limited. Although it is impossible to cover the entire spectrum of VR games within a single paper, our goal was to highlight the diversity of VR gaming, as we focus on classifying and describing the most common examples of what we refer to as interaction mechanics.

Therefore, in this paper we have presented the following novel scientific contributions. Firstly, we have described a set of criteria we used to classify interaction mechanics, and presented our taxonomy. We presented the INTERACT framework, developed to provide a conceptual foundation for designing a test application as a tool for user research, highlighting the importance of customization, automation, and repeatability. Furthermore, we highlighted multiple target-related, task-related, and tool-related parameters—related to the implementation of game mechanics—that may contribute toward the formation of user experience, and addressed several possible measures that could be considered. Finally, to provide an example for the use of our framework, we provided a concise overview of our concept of a test application for the evaluation of user experience with three different types of mechanics: pick and place, shoot, and slice.

In our future work, we plan to improve our application concept by including additional parameters and metrics (performance-based, but physiological could be added if supported by the chosen hardware configuration), and introduce these changes into the existing prototype implementation. Further changes to the existing concept/implementation could be made based on data collected in user studies we plan to conduct in the future, as we hope that their results could help us fine-tune the existing performance-based measures and better define the parameter spaces provided by the application, as well as shed light on certain parameters that should be added to the application.