Roboethics: Fundamental Concepts and Future Prospects

Abstract

1. Introduction

- 2004: First Roboethics International Symposium (Sanremo, Italy).

- 2005: IEEE Robotics and Automation Society Roboethics Workshop: ICRA 2005 (Barcelona, Spain).

- 2006: Roboethics Minisymposium: IEEE BioRob 2006—Biomedical Robotics and Biomechatronics Conference (Pisa, Italy).

- 2006: ETHICBOTS European Project International Workshop on Ethics of Human Interaction with Robotic, Bionic, and AI Systems Concepts and Policies (Naples, October 2006).

- 2007: ICRA: IEEE R&A International Conference: Workshop on Roboethics: IEEE Robotics and Automation Society Technical Committee (RAS TC) on Roboethics (Rome, Italy).

- 2007: ICAIL 2007: International Conference on Artificial Intelligence and Law (Palo Alto, USA, 4–6 June 2007).

- 2007: CEPE 2007: International Symposium on Computer Ethics Philosophical Enquiry (Topic Roboethics) (San Diego, USA, 12–14 July 2007).

- 2009: ICRA: IEEE R&A International Conference on Robotics and Automation: Workshop on Roboethics: IEEE RAS TC on Roboethics (Kobe, Japan, 2009).

- 2012: We Robot, University of Miami, FL, USA.

- 2013: International Workshop on Robot Ethics, University of Sheffield (February 2013).

- 2016: AAAI/Stanford Spring Symposium on Ethical and Moral Considerations in Non-Human Agents.

- 2016: International Research Conference on Robophilosophy (Main Topic Roboethics), Aarhus University (17–21 October 2016).

- 2018: International Conference on Robophilosophy: Envisioning Robots and Society (Main Topic Roboethics) (Vienna University, 14–17 February 2018).

“Confident of the future development of robot technology and of the numerous contributions that robots will make to Humankind, this World Robot Declaration is Expectations for next-generation robots: (a) next-generation robots will be partners that co-exist with human beings; (b) next-generation robots will assist human beings both physically and psychologically; (c) next-generation robots will contribute to the realization of a safe and peaceful society”.

- Section 2 analyzes the essential question: What is roboethics?

- Section 3 presents roboethics methodologies, starting with a brief review of ethics branches and theories.

- Section 4 outlines the roboethics branches, namely: medical roboethics, assistive roboethics, sociorobot ethics, war roboethics, autonomous car ethics, and cyborg ethics.

- Section 5 discusses some prospects for the future of robotics and roboethics.

- Section 6 gives the conclusions.

2. What Is Roboethics?

“Roboethics is an applied ethics whose objective is to develop scientific/cultural/technical tools that can be shared by different social groups and beliefs. These tools aim to promote and encourage the development of robotics for the advancement of human society and individuals, and to help preventing its misuse against humankind”.

- Level 1: Roboethics—This level intrinsically refers to philosophical issues, humanities, and social sciences.

- Level 2: Robot Ethics—This level refers mainly to science and technology.

- Level 3: Robot’s Ethics—This level mostly concerns science fiction, but it opens a wide spectrum of future contributions in the robot’s ethics field.

- “How might humans act ethically through, or with, robots?

- How can we design robots to act ethically? Or, can robots be truly moral agents?

- How can we explain the ethical relationships between human and robots?”

- “Is it ethical to create artificial moral agents and ethical robots?

- Is it unethical not to design mental/intelligent robots that possess ethical reasoning abilities?

- Is it ethical to make robotic nurses or soldiers?

- What is the proper treatment of robots by humans, and how should robots treat people?

- Should robots have rights?

- Should moral/ethical robots have new legal status?”

- Robots are mere machines (albeit, very useful and sophisticated machines).

- Robots raise intrinsic ethical concerns along different human and technological dimensions.

- Robots can be conceived as moral agents, not necessarily possessing free will, mental states, emotions, or responsibility.

- Robots can be regarded as moral patients, i.e., beings deserving of at least some moral consideration.

- The ethical theory or theories adopted.

- The code of ethics embedded into the robot (machine ethics).

- The subjective morality resulting from the autonomous selection of ethical action(s) by a robot equipped with a conscience.

- Not interested in roboethics: These scholars say that the work of robot designers is purely technical and does not imply an ethical or social responsibility for them.

- Interested in short-term robot ethical issues: This view is advocated by those who adopt some social or ethical responsibility, by considering ethical behavior in terms of good or bad, and short-term impact.

- Interested in long-term robot ethical issues: Robotics scientists advocating this view express their robotic ethical concern in terms of global, long-term impact and aspects.

- Is ethics applied to robots an issue for the individual scholar or practitioner, the user, or a third party?

- What is the role that robots could have in our future life?

- How much could ethics be embedded into robots?

- How ethical is it to program robots to follow ethical codes?

- Which type of ethical codes are correct for robots?

- If a robot causes harm, is it responsible for this outcome or not? If not, who or what is responsible?

- Who is responsible for actions performed by human-robot hybrid beings?

- Is the need to embed autonomy in a robot contradictory to the need to embed ethics in it?

- What types of robots, if any, should not be designed? Why?

- How do robots determine what is the correct description of an action?

- If there are multiple rules, how do robots deal with conflicting rules?

- Are there any risks to creating emotional bonds with robots?

3. Roboethics Methodologies

3.1. Ethics Branches

- Meta-ethics. The study of concepts, judgements, and moral reasoning (i.e., what is the nature of morality in general, and what justifies moral judgements? What does right mean?).

- Normative (prescriptive) ethics. The elaboration of norms prescribing what is right or wrong, what must be done or what must not (What makes an action morally acceptable? Or what are the requirements for a human to live well? How should we act? What ought to be the case?).

- Applied ethics. The ethics branch which examines how ethics theories can be applied to specific problems/applications of actual life (technological, environmental, biological, professional, public sector, business ethics, etc., and how people take ethical knowledge and put it into practice). Applied ethics is actually contrasted with theoretical ethics.

- Descriptive ethics. The empirical study of people’s moral beliefs, and the question: What is the case?

3.2. Ethics Theories

- Virtue theory (Aristotle). The theory grounded on the notion of virtue, which is specified as what character a person needs to live well. This means that in virtue ethics the moral evaluation focuses on the inherent character of a person rather than on specific actions.

- Deontological theory (Kant). The theory that focuses on the principles upon which the actions are based, rather than on the results of actions. In other words, moral evaluation is carried out on the actions according to imperative norms and duties. Therefore, to act rightly one must be motivated by proper universal deontological principles that treat everyone with respect (“respect for persons theory”).

- Utilitarian theory (Mill). A theory belonging to consequentialist ethics, which is “teleological”, aiming at some final outcome and evaluating the morality of actions toward this desired outcome. Actually, utilitarianism measures morality based on the optimization of “net expected utility” for all persons that are affected by an action or decision. The fundamental principle of utilitarianism says: “Actions are moral to the extent that they are oriented towards promoting the best long-term interests (greatest good) for every one concerned”. The issue here is what the concept of greatest good means. The Aristotelian meaning of greatest good is well-being (pleasure or happiness).

3.3. Roboethics Methodologies

- Top-down roboethics methodology. In this methodology, the rules of the desired ethical behavior of the robot are programmed and embodied in the robot system. The ethical rules can be formulated according to the deontological or the utilitarian theory or other ethics theories. The question here is: which theory is the most appropriate in each case? Top-down methodology in ethics originated in several areas, including philosophy, religion, and literature. In control and automation systems design, the top-down approach means analyzing or decomposing a task into simpler sub-tasks that can be hierarchically arranged and performed to achieve a desired output or product. In the ethical sense, following the top-down methodology means selecting an antecedently specified ethical theory and obtaining its implications for particular situations. In practice, robots should combine both meanings of the top-down concept (control systems meaning and ethical systems meaning).

- “Law 1: A robot may not injure a human being or, through inaction allow a human being to come to harm.

- Law 2: A robot must obey orders it receives from human beings except when such orders conflict with Law 1.

- Law 3: A robot must protect its own existence as long as such protection does not conflict with Laws 1 and 2.”

- “Law 0: No robot may harm humanity or through inaction allow humanity to come to harm.”
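The strict priority among Laws 0 to 3 can be read as a lexicographic ordering over candidate actions. The following is a minimal sketch of that reading, not an implementation proposed by the cited sources: the `Outcome` record, its boolean flags, and the `choose` function are hypothetical names, and the sketch assumes that the consequences of each action (including harm allowed through inaction) have already been predicted, which is the hard part in practice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """Predicted effects of one candidate action (hypothetical model)."""
    name: str
    harms_humanity: bool = False   # Law 0 (includes harm allowed through inaction)
    harms_human: bool = False      # Law 1 (includes harm allowed through inaction)
    disobeys_order: bool = False   # Law 2
    endangers_robot: bool = False  # Law 3


def violation_key(outcome: Outcome) -> tuple:
    """Order violations by law priority: Law 0 first, Law 3 last."""
    # Tuples compare lexicographically and False < True, so a violation of a
    # higher-priority law outweighs any violations of lower-priority laws.
    return (
        outcome.harms_humanity,
        outcome.harms_human,
        outcome.disobeys_order,
        outcome.endangers_robot,
    )


def choose(candidates: list[Outcome]) -> Outcome:
    """Pick the candidate that is least bad under the law ordering."""
    return min(candidates, key=violation_key)


obey = Outcome("obey an order to push a person", harms_human=True)
refuse = Outcome("refuse the order", disobeys_order=True)
print(choose([obey, refuse]).name)  # refuse the order
```

Because the ordering is strict, no number of lower-priority violations can outweigh a single higher-priority one. That is the property the laws state, and also one reason such rule sets are criticized as too rigid.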

- “Robots only take permissible actions.

- All relevant actions that are obligatory for robots are actually performed by them, subject to ties and conflicts among available actions.

- All permissible (or obligatory or forbidden) actions can be proved by the robot (and in some cases, associated systems, e.g., oversight systems) to be permissible (or obligatory or forbidden), and all such proofs can be explained in ordinary English”.

- “Describe every situation in the world.

- Produce alternative actions.

- Predict the situation(s) which would be the outcome of taking an action given the present situation.

- Evaluate a situation in terms of its goodness or utility.”
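The four requirements above map onto a simple expected-utility loop: enumerate the alternative actions, predict the situations each may lead to, evaluate those situations, and pick the action with the highest expected utility. The sketch below is illustrative only. The function names, the toy care scenario, and all probabilities and utility values are assumptions made for the example, and the first requirement (describing every situation in the world) is reduced here to a plain string label.

```python
from typing import Callable

# A prediction is a list of (probability, resulting_situation) pairs.
Prediction = list[tuple[float, str]]


def expected_utility(prediction: Prediction, utility: Callable[[str], float]) -> float:
    """Probability-weighted sum of the utilities of the predicted situations."""
    return sum(p * utility(s) for p, s in prediction)


def best_action(
    situation: str,
    actions: Callable[[str], list[str]],
    predict: Callable[[str, str], Prediction],
    utility: Callable[[str], float],
) -> str:
    """Return the alternative action with the highest expected utility."""
    return max(
        actions(situation),
        key=lambda a: expected_utility(predict(situation, a), utility),
    )


# Toy scenario with made-up numbers (assumptions, not data from the source).
def toy_actions(situation: str) -> list[str]:
    return ["call_nurse", "wait"]


def toy_predict(situation: str, action: str) -> Prediction:
    if action == "call_nurse":
        return [(0.9, "helped"), (0.1, "unattended")]
    return [(0.3, "recovers"), (0.7, "unattended")]


def toy_utility(situation: str) -> float:
    return {"helped": 10.0, "recovers": 5.0, "unattended": -20.0}[situation]


print(best_action("patient_fallen", toy_actions, toy_predict, toy_utility))  # call_nurse
```

The difficulty the list points at lies outside this loop: producing a faithful `predict` model and an agreed `utility` function is where the ethical and engineering problems concentrate.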

- Bottom-up roboethics methodology. This methodology assumes that the robots possess adequate computational and artificial intelligence capabilities to adapt themselves to different contexts so as to be capable of learning, starting from perception of the world, then planning actions based on sensory data, and finally executing the action [26]. In this methodology, the use of any prior knowledge is only for the purpose of specifying the task to be performed, and not for specifying a control architecture or implementation technique. A detailed discussion of bottom-up and top-down roboethics approaches is provided in Reference [26]. Actually, for a robot to be an ethical learning robot both top-down and bottom-up approaches are needed (i.e., the robot should follow a suitable hybrid approach). Typically, the robot builds its morality through developmental learning similar to the way children develop their conscience. Full discussions of top-down and bottom-up roboethics methodologies can be found in References [20,21].
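One way to picture the hybrid approach mentioned above is a learner whose choices are filtered by fixed rules: the forbidden set plays the top-down role, and scores updated from feedback play the bottom-up role. This is a deliberately small sketch under stated assumptions. The `HybridAgent` class, its method names, the action labels, and the moving-average update are all hypothetical, and the sketch assumes at least one action is always permitted.

```python
class HybridAgent:
    """Top-down rules constrain what a bottom-up learner may choose."""

    def __init__(self, actions, forbidden, learning_rate=0.5):
        self.forbidden = set(forbidden)           # top-down: fixed prohibitions
        self.scores = {a: 0.0 for a in actions}   # bottom-up: learned preferences
        self.learning_rate = learning_rate

    def permitted(self):
        """Actions that pass the top-down filter, in their original order."""
        return [a for a in self.scores if a not in self.forbidden]

    def act(self):
        """Choose the permitted action with the highest learned score."""
        return max(self.permitted(), key=self.scores.get)

    def learn(self, action, feedback):
        """Move the score of `action` toward the feedback (moving average)."""
        old = self.scores[action]
        self.scores[action] = old + self.learning_rate * (feedback - old)


agent = HybridAgent(actions=["deceive", "remind", "wait"], forbidden={"deceive"})
agent.learn("deceive", 1.0)  # high feedback, but the rule still blocks it
agent.learn("remind", 0.6)
print(agent.act())  # remind
```

The design choice shown is that learning can reorder only the permitted actions. No amount of positive feedback promotes an action that a top-down rule forbids.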

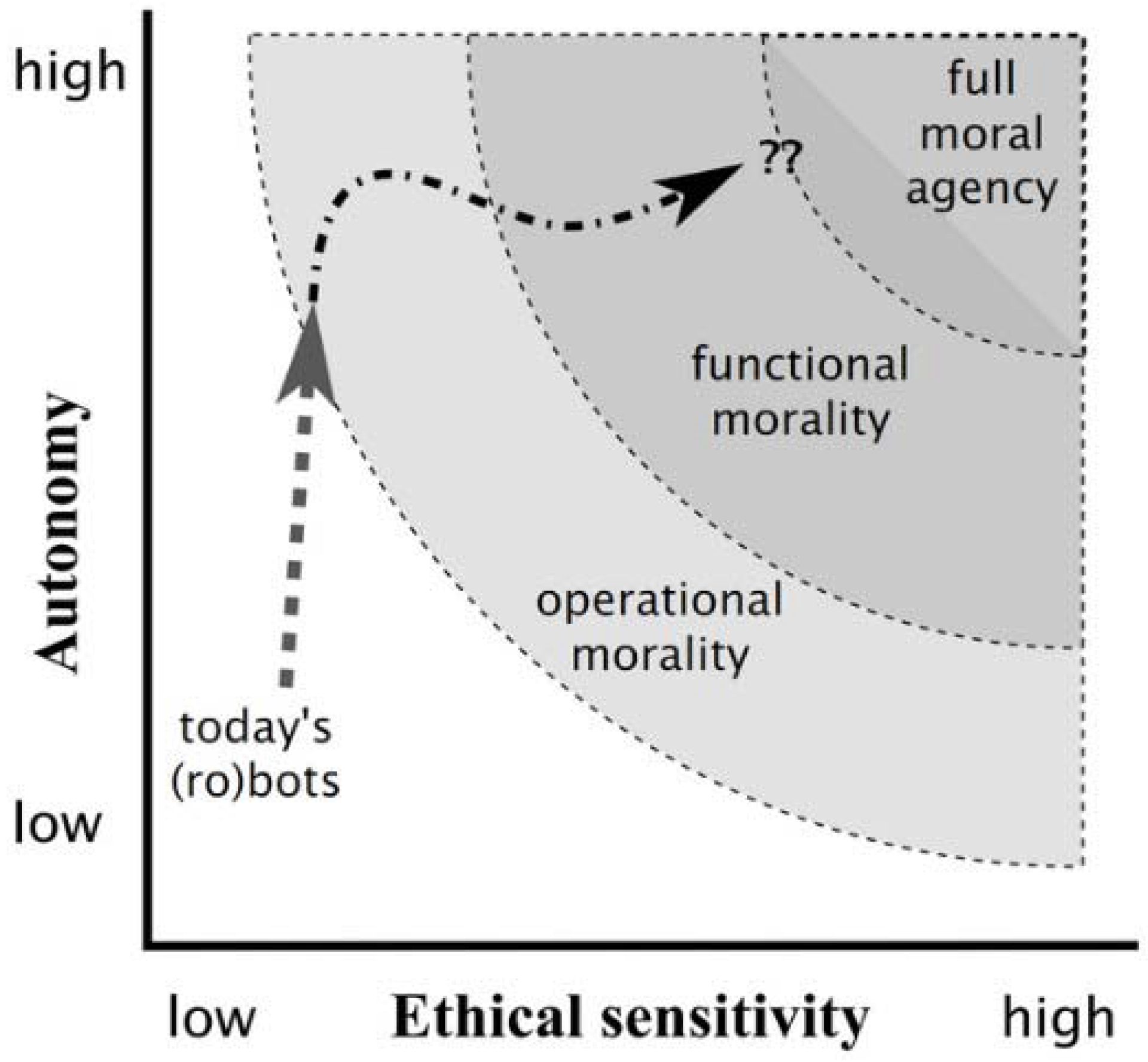

- Operational morality (moral responsibility lies entirely in the robot designer and user).

- Functional morality (the robot has the ability to make moral judgments without top-down instructions from humans, and the robot designers can no longer predict the robot’s actions and their consequences).

- Full morality (the robot is so intelligent that it fully autonomously chooses its actions, thereby being fully responsible for them).

4. Roboethics Branches

- Medical roboethics.

- Assistive roboethics.

- Sociorobot ethics.

- War roboethics.

- Autonomous car ethics.

- Cyborg ethics.

4.1. Medical Roboethics

- “Autonomy: The patients have the right to accept or refuse a treatment.

- Beneficence: The doctor should act in the best interest of the patient.

- Non-maleficence: The doctor/practitioner should aim “first not to do harm”.

- Justice: The distribution of scarce health resources and the decision of who gets what treatment should be just.

- Truthfulness: The patient should not be lied to and has the right to know the whole truth.

- Dignity: The patient has the right to dignity”.

4.2. Assistive Roboethics

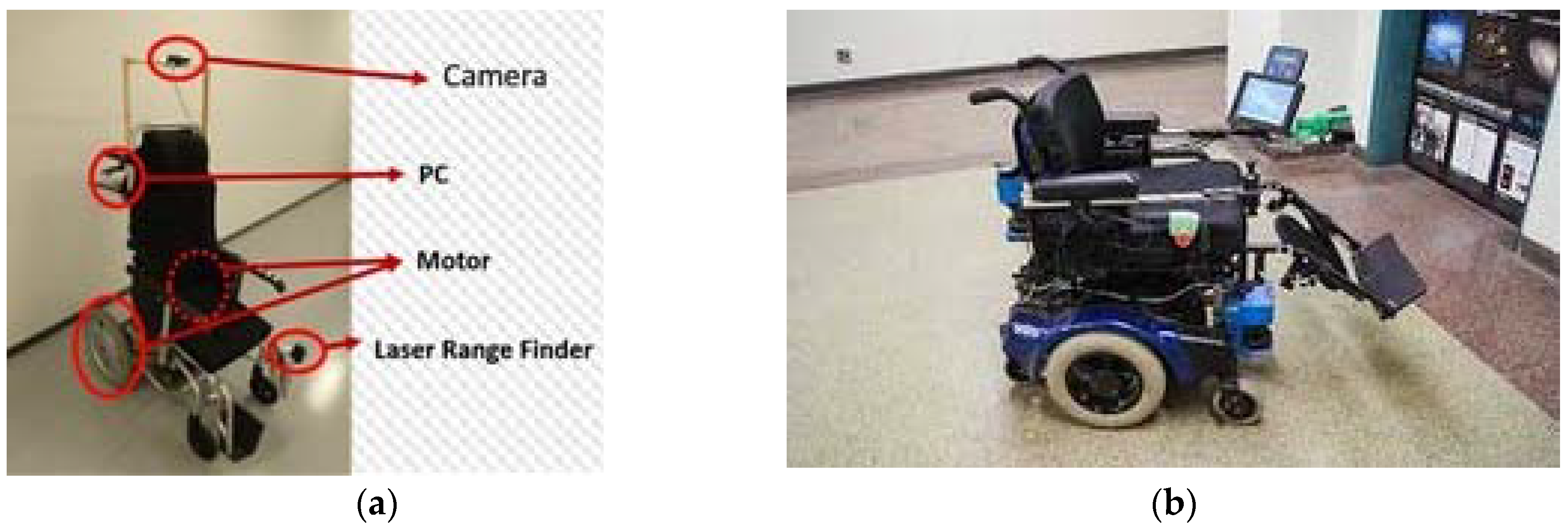

- Assistive robots/devices for people with impaired lower limbs (wheelchairs, walkers).

- Assistive robots/devices for people with impaired upper limbs and hands.

- Rehabilitation robots/devices for upper limbs or lower limbs.

- Orthotic devices.

- Prosthetic devices.

- Select and propose the most appropriate device which is economically affordable by the PwSN.

- Consider assistive technology that can help the user do things that he/she finds difficult to do.

- Ensure that the chosen assistive device is not used for activities that a person is capable of doing for him/herself (which will probably make the problem worse).

- Use assistive solutions that respect the freedom and privacy of the person.

- Ensure the users’ safety, which is of the greatest importance.

- Level 1: Select the proper device—Users should be provided the proper assistive/rehabilitation devices and services, otherwise the non-maleficence ethical principle is violated. The principles of justice, beneficence, and autonomy should also be followed at this level.

- Level 2: Competence of therapists—Effective co-operation between therapists in order to plan the best therapy program. Here again the principles of justice, autonomy, beneficence, and non-maleficence should be respected.

- Level 3: Effectiveness and efficiency of assistive devices—Use should be made of effective, reliable, and cost-effective devices. The principles of beneficence, non-maleficence, etc. should be respected here. Of highest priority at this level is the justice ethical rule.

- Level 4: Societal resources and legislation—Societal, agency, and user resources should be appropriately exploited in order to achieve the best available technologies. Best-practice rehabilitation interventions should be followed for all aspects.

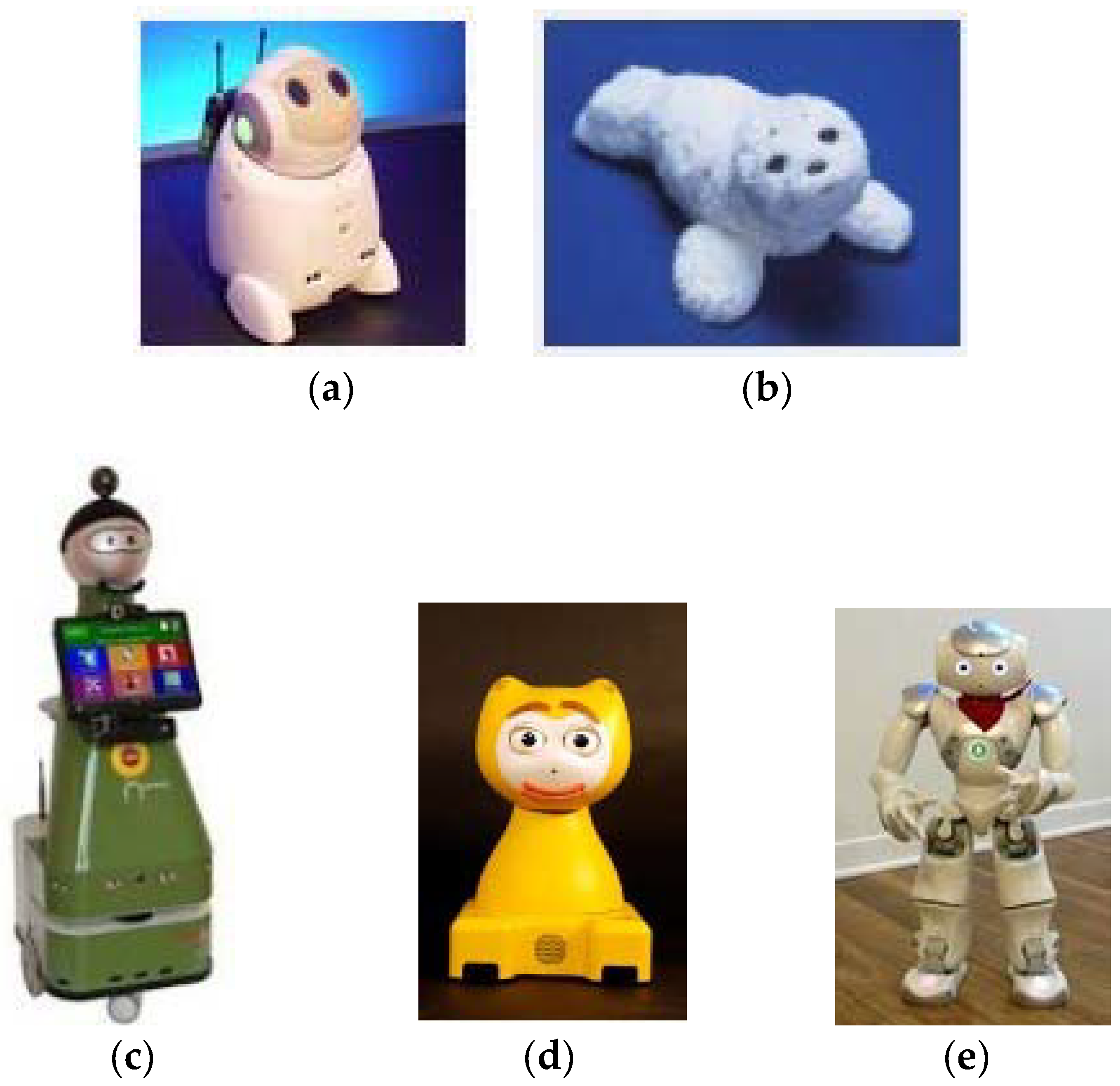

4.3. Sociorobot Ethics

- Comprehend and interact with its environment.

- Exhibit social behavior (for assisting PwSN, the elderly, and children needing mental/socialization help).

- Direct its focus of attention and communication on the user (so as to help him/her achieve specific goals).

- “Express and/or perceive emotions.

- Communicate with high-level dialogue.

- Recognize other agents and learn their models.

- Establish and/or sustain social connections.

- Use natural patterns (gestures, gaze, etc.).

- Present distinctive personality and character.

- Develop and/or learn social competence.”

- AIBO: a robotic dog (dogbot) able to interact with humans and play with a ball (SONY) [43].

- KISMET: a human-like robotic head able to express emotions (MIT) [44].

- KASPAR: a humanoid robot torso that can function as mediator of human interaction with autistic children [41].

- QRIO: a small entertainment humanoid (SONY) [45].

- “Attachment: The ethical issue here arises when a user is emotionally attached to the robot. For example, in dementia/autistic persons, the robot’s absence when it is removed for repair may produce distress and/or loss of therapeutic benefits.

- Deception: This effect can be created by the use of robots in assistive settings (robot companions, teachers, or coaches), or when the robot mimics the behavior of pets.

- Awareness: This issue concerns both users and caregivers, since they both need to be accurately informed of the risks and hazards associated with the use of robots.

- Robot authority: A sociorobot that acts as a therapist is given some authority to exert influence on the patient. Thus, the ethical issue here is who controls the type, the level, and the duration of interaction. If a patient wants to stop an exercise due to fatigue or pain, a human therapist would accept this, but a robot might not. The robot should also possess this capability.

- Autonomy: A mentally healthy person has the right to make informed decisions about his/her treatment. If he/she has cognition problems, this autonomy right is passed to the person who is legally and ethically responsible for the patient’s therapy.

- Privacy: Securing privacy during robot-aided interaction and care is a primary requirement in all cases.

- Justice and responsibility: It is of primary ethical importance to observe the standard issues of the “fair distribution of scarce resources” and “responsibility assignment”.

- Human-human relation (HHR): HHR is a very important ethical issue that has to be addressed when using assistive and socialized robots. The robots are used as a means of addition or enhancement of the therapy given by caregivers, not as a replacement of them.”

4.4. War Roboethics

- A state or period of fighting between countries or groups.

- A state of usually open and declared armed hostile conflict between states or nations.

- A period of such armed conflict.

- Realism (war is an inevitable process taking place in the anarchical world system).

- Pacifism or anti-warism (rejects war in favor of peace).

- Just war (just war theory specifies the conditions for judging if it is just to go to war, and conditions for how the war should be conducted).

- “Jus ad bellum specifies the conditions under which the use of military force must be justified. The jus ad bellum requirements that have to be fulfilled for a resort to war to be justified are: (i) just cause; (ii) right intention; (iii) legitimate authority and declaration; (iv) last resort; (v) proportionality; (vi) chance of success.

- Jus in bello refers to justice in war, i.e., to conducting a war in an ethical manner. According to international war law, a war should be conducted obeying all international laws for weapons prohibition (e.g., biological or chemical weapons), and for benevolent quarantine for prisoners of war (POWs).

- Jus post bellum refers to justice at war termination. Its purpose is to regulate the termination of wars and to facilitate the return to peace. Actually, no global law exists for jus post bellum. The return to peace should obey the general moral laws of human rights to life and liberty.”

- Discrimination: It is immoral to kill civilians, i.e., non-combatants. Weapons (non-prohibited) may be used only against those who are engaged in doing harm.

- Proportionality: Soldiers are entitled to use only force proportional to the goal sought.

- Benevolent treatment of POWs: Captive enemy soldiers are “no longer engaged in harm”, and so they are to be provided with benevolent (not malevolent) quarantine away from battle zones, and they should be exchanged for one’s own POWs after the end of war.

- Controlled weapons: Soldiers are allowed to use controlled weapons and methods which are not evil in themselves.

- No retaliation: Retaliation occurs when a state A violates jus in bello in its war with state B, and state B responds with its own violation of jus in bello in order to force A to obey the rules. Such retaliation is not permitted.

- Firing decision: At present, the firing decision still lies with the human operator. However, the separation margin between human firing and autonomous firing in the battlefield is continuously decreasing.

- Discrimination: The ability to distinguish lawful from unlawful targets by robots varies enormously from one system to another, and present-day robots are still far from having visual capabilities that may faithfully discriminate between lawful and unlawful targets, even in close-contact encounters. The distinction between lawful and unlawful targets is not a purely technical issue, but it is considerably complicated by the lack of a clear definition of what counts as a civilian. The 1949 Geneva Convention states that a civilian can be defined by common sense, and the 1977 Protocol defines a civilian as any person who is not an active combatant (fighter).

- Responsibility: The assignment of responsibility in case of failure (harm) is both an ethical and legislative issue in all robotic applications (medical, assistive, socialization, war robots). Yet this issue is much more critical in the case of war robots that are designed to kill humans with a view to saving other humans. The question is to whom blame and punishment should be assigned for improper fighting and unauthorized harm caused (intentionally or unintentionally) by an autonomous robot: to the designer, the robot manufacturer, the robot controller/supervisor, the military commander, a state prime minister/president, or the robot itself? This question is very complicated and needs to be discussed more deeply when the robot is given a higher degree of autonomy [49].

- Proportionality: The proportionality rule requires that even if a weapon meets the test of distinction, any weapon must also undergo an evaluation that sets the anticipated military advantage to be gained against the predicted civilian harm (civilian persons or objects). In other words, the harm to civilians must not be excessive relative to the expected military gain. Proportionality is a fundamental requirement of just war theory and should be respected by the design and programming of any autonomous robotic weapon.

- Inability to program war laws (Programming the laws of war is a very difficult and challenging task for the present and the future).

- Taking humans out of the firing loop (It is wrong per se to remove humans from the firing loop).

- Lower barriers to war (The removal of human soldiers from the risk and the reduction of harm to civilians through more accurate autonomous war robots diminishes the disincentive to resort to war).

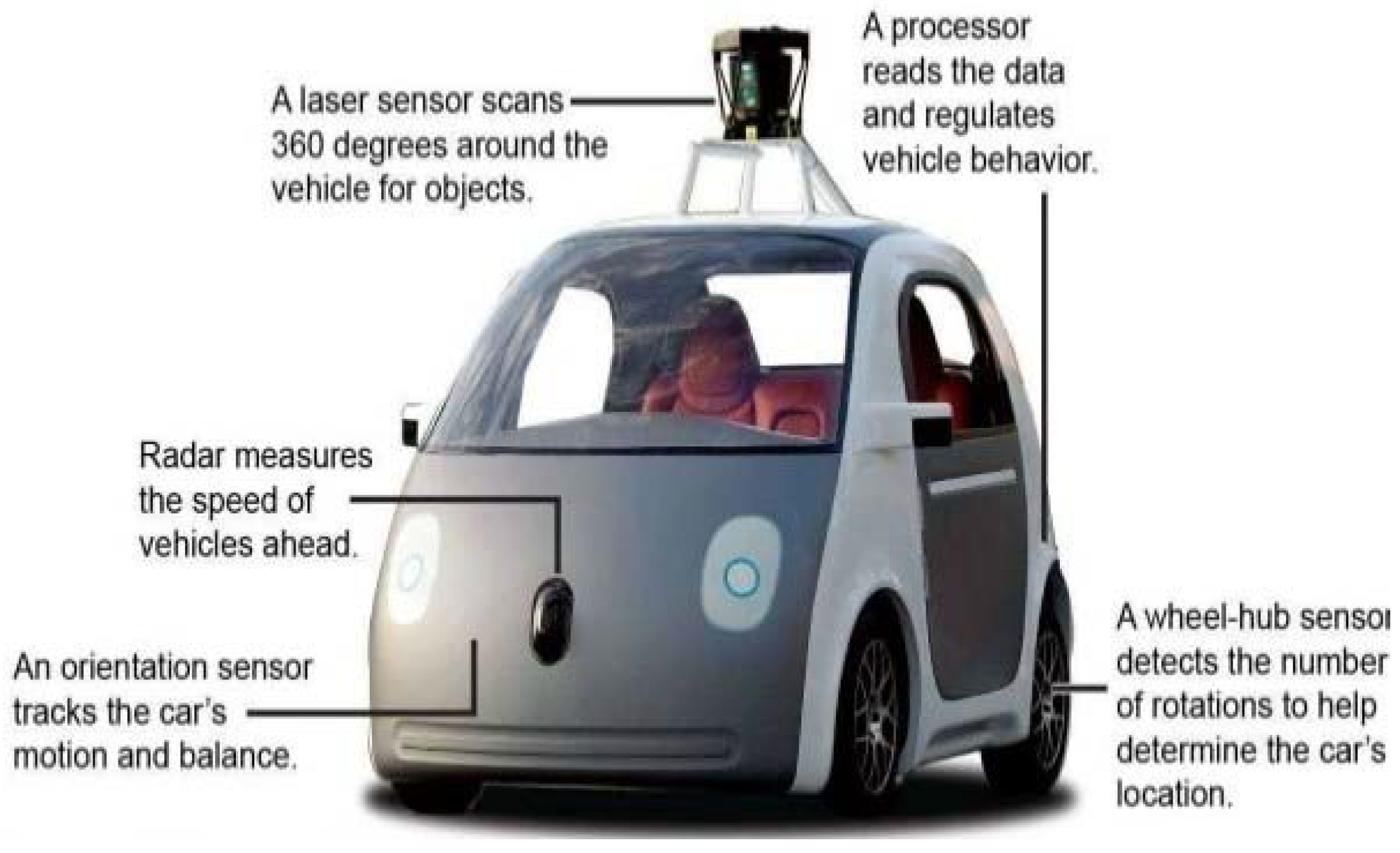

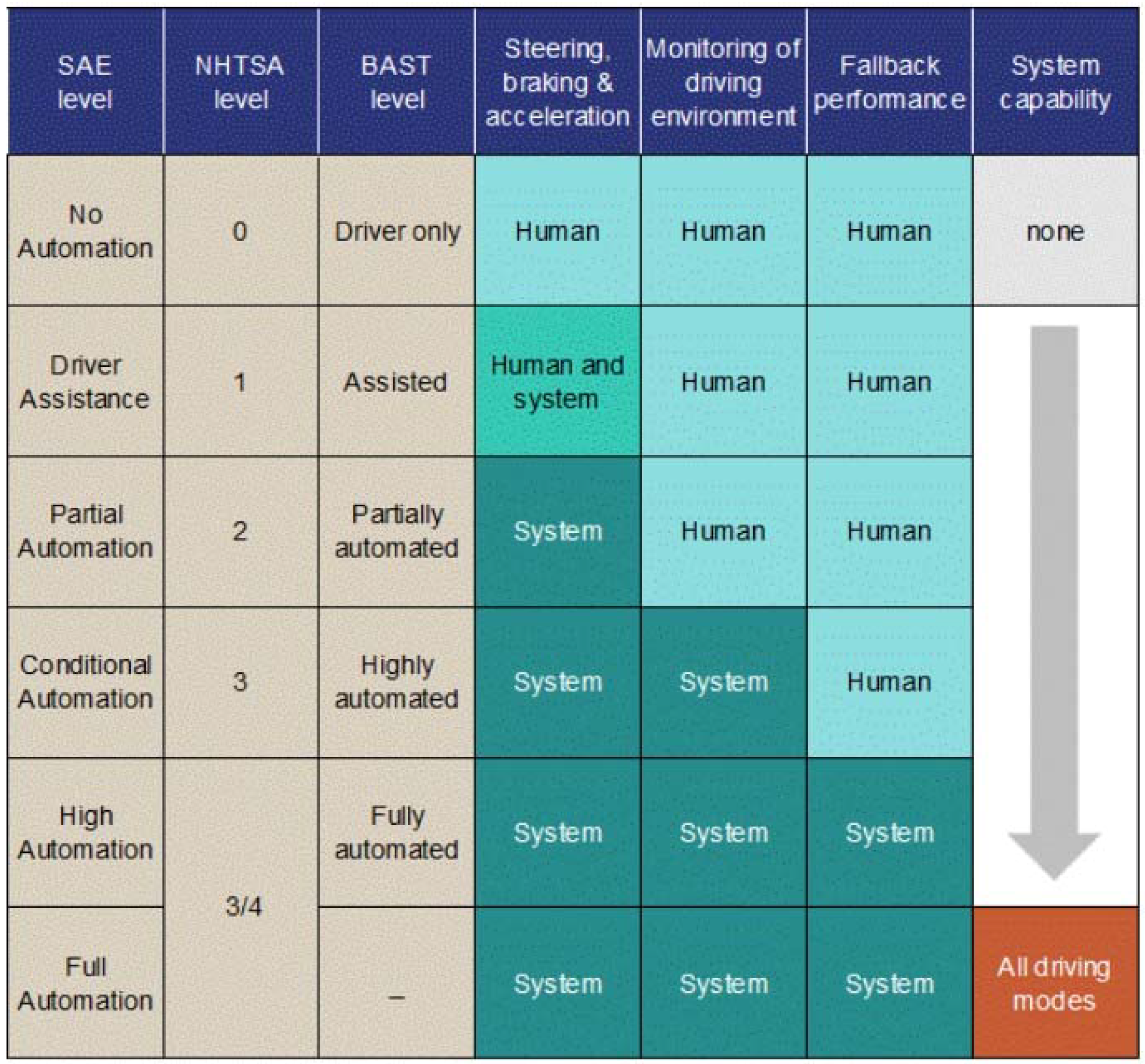

4.5. Autonomous Car Ethics

- “Identify six levels of driving automation from ‘no automation’ to ‘full automation’.

- Base definitions and levels on functional aspects of technology.

- Describe categorical distinction for step-wise progression through the levels.

- Are consistent with current industry practice.

- Eliminate confusion and are useful across numerous disciplines (engineering, legal, media, and public discourse).

- Educate a wide community by clarifying for each level what role (if any) drivers have in performing the dynamic driving task while a driving automation system is engaged.”

- “Dynamic driving tasks (i.e., operational aspects of automatic driving, such as steering, braking, accelerating, monitoring the vehicle and the road, and tactical aspects such as responding to events, determining when to change lanes, turn, etc.).

- Driving mode (i.e., a form of driving scenario with appropriate dynamic driving task requirements, such as expressway merging, high-speed cruising, low-speed traffic jam, closed-campus operations, etc.).

- Request to intervene (i.e., notification by the automatic driving system to a human driver that he should promptly begin or resume performance of the dynamic driving task).”
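The six-level scale and the "request to intervene" term quoted above can be captured in a small lookup. The level names below follow the SAE J3016 taxonomy as commonly summarized. The helper functions and their names are assumptions added for illustration, and the role split they encode (the human monitors the driving environment up to level 2, and the human remains the fallback up to level 3) is a simplified reading of that taxonomy rather than a normative statement of it.

```python
from enum import IntEnum


class DrivingAutomationLevel(IntEnum):
    """Six levels from 'no automation' to 'full automation'."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def human_monitors_environment(level: DrivingAutomationLevel) -> bool:
    """At levels 0-2 the human driver monitors the driving environment."""
    return level <= DrivingAutomationLevel.PARTIAL_AUTOMATION


def human_is_fallback(level: DrivingAutomationLevel) -> bool:
    """At levels 0-3 the human is the fallback for the dynamic driving task."""
    return level <= DrivingAutomationLevel.CONDITIONAL_AUTOMATION


def must_answer_request_to_intervene(level: DrivingAutomationLevel) -> bool:
    """Level 3 is where the system drives but the human must take over on request."""
    return human_is_fallback(level) and not human_monitors_environment(level)


for level in DrivingAutomationLevel:
    print(level.value, level.name, must_answer_request_to_intervene(level))
```

Level 3 is the ethically delicate case in this reading: responsibility passes between system and human at the moment of the request to intervene, so the timing and clarity of that notification matter.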

4.6. Cyborg Ethics

- “People with replaced parts of their body (hips, elbows, knees, wrists, arteries, etc.) can now be classified as cyborgs.

- Brain implants based on a neuromorphic model of the brain and the nervous system help reverse the most devastating symptoms of Parkinson’s disease.”

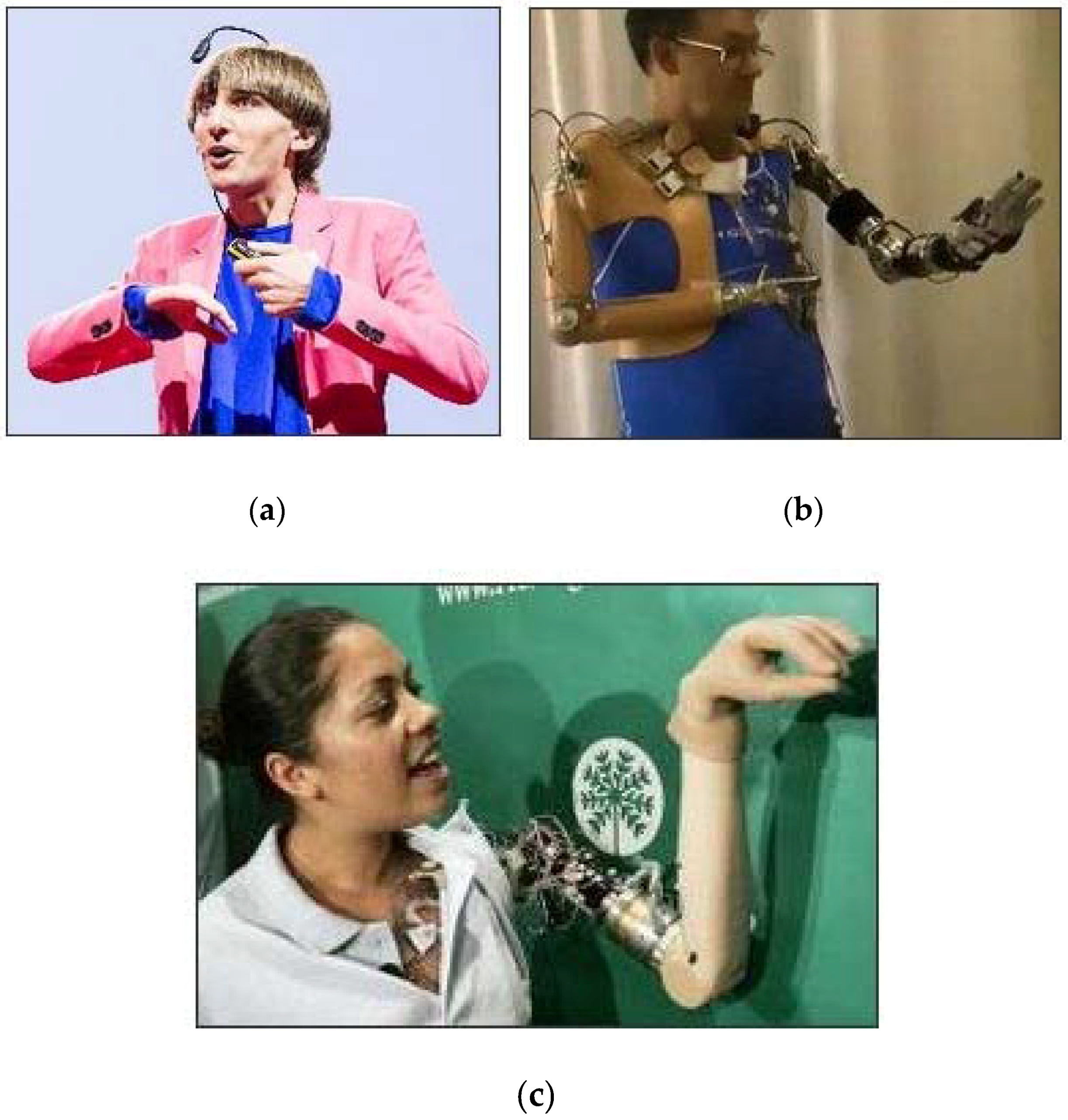

- “Cyborgs do not heal body damage normally, but, instead, body parts are replaced. Replacing broken limbs and damaged armor plating can be expensive and time-consuming.

- The artist Neil Harbisson, born with achromatopsia (able to see only black and white), is equipped with an antenna implanted into his head. With this eyeborg (electronic eye), he became able to render perceived colors as sounds on the musical scale.

- Jesse Sullivan suffered a life-threatening accident: he was electrocuted so severely that both of his arms needed to be amputated. He was fitted with a bionic limb connected through a nerve-muscle grafting. He then became able to control his limb with his mind, and also able to feel temperature as well as how much pressure his grip applies.

- Claudia Mitchell is the first woman to have a bionic arm after a motorcycle accident in which she lost her left arm completely.”

5. Future Prospects of Robotics and Roboethics

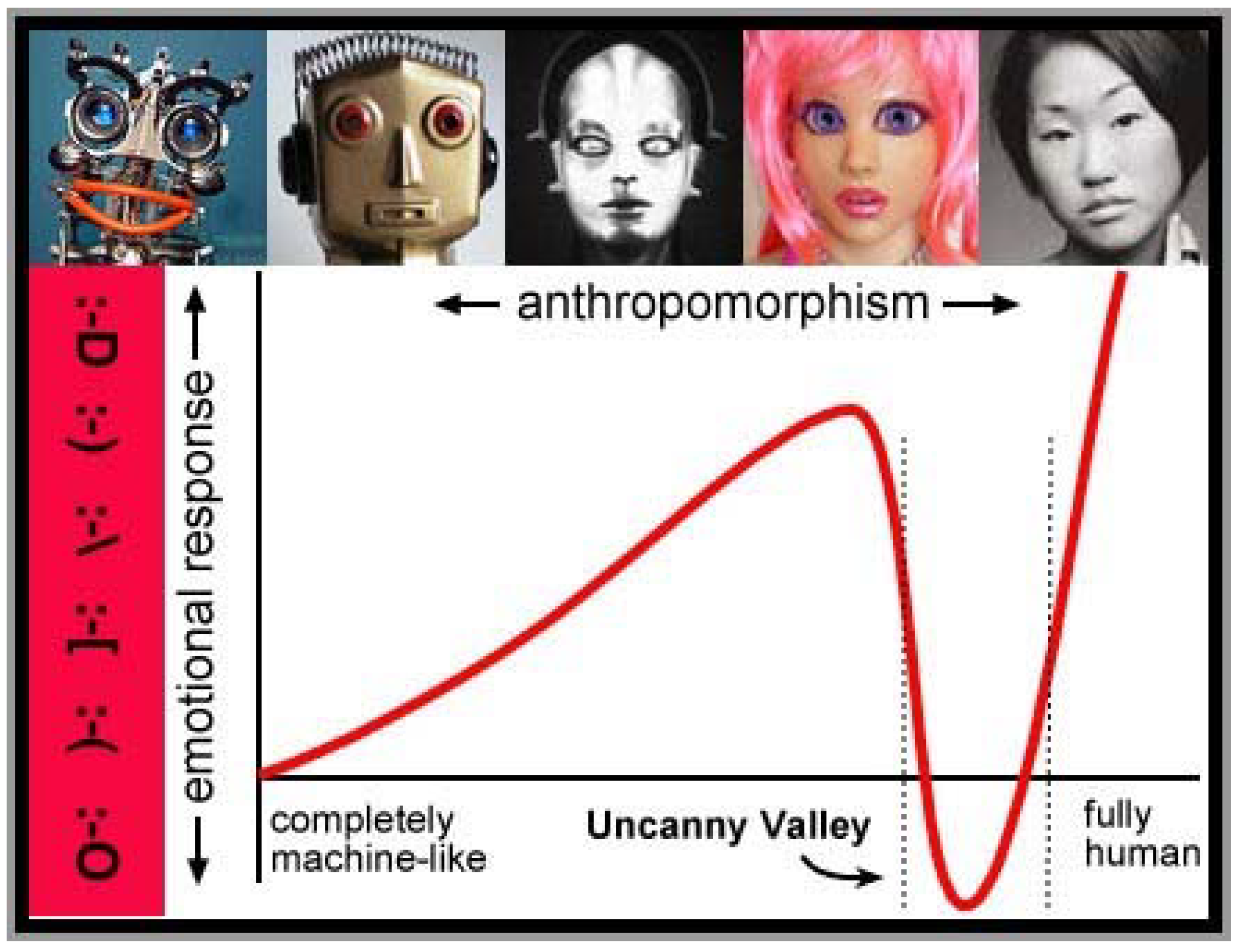

- How similar to humans should robots become?

- What are the possible effects of future technological progress of robotics on humans and society?

- How to best design future intelligent/autonomous robots?

- “Embedding values into autonomous intelligent systems.

- Methodologies to guide ethical research and design.

- Safety and beneficence of general AI and superintelligence.

- Reframing autonomous weapons systems.

- Economics and humanitarian issues.

- Personal data and individual access control.”

- Ray Jarvis (Monash University, Australia): “I think that we would recognize machine rights if we were looking at it from a human point of view. I think that humans, naturally, would be empathetic to a machine that had self-awareness. If the machine had the capacity to feel pain, if it had a psychological awareness that it was a slave, then we would want to extend rights to the machine. The question is how far should you go? To what do you extend rights?”

- Simon Longstaff (St. James Ethics Center, Australia): “It depends on how you define the conditions for personhood. Some use preferences as criteria, saying that a severely disabled baby, unable to make preferences, shouldn’t enjoy human rights yet higher forms of animal life, capable of making preferences, are eligible for rights. […] Machines would never have to contend with transcending instinct and desire, which is what humans have to do. I imagine a hungry lion on a veldt about to spring on a gazelle. The lion as far we know doesn’t think, “Well I am hungry, but the gazelle is beautiful and has children to feed.” It acts on instinct. Altruism is what makes us human, and I don’t know that you can program for altruism.”

- Jo Bell (Animal Liberation): “Asimov’s Robot series grappled with this sort of (rights) question. As we have incorporated other races and people-women, the disabled, into the category of those who can feel and think, then I think if we had machines of that kind, then we would have to extend some sort of rights to them.”

- “AI has the same problems as other conventional artifacts.

- It is wrong to exploit people’s ignorance and make them think AI is human.

- Robots will never be your friends.”

- “Human culture is already a superintelligent machine turning the planet into apes, cows, and paper clips.

- Big data + better models = ever-improving prediction, even about individuals.”

- Assuring that humans will be able to control future robots.

- Preventing the illegal use of future robots.

- Protecting data obtained by robots.

- Establishing clear traceability and identification of robots.

- Is it ethical to turn over all of our difficult and highly sensitive decisions to machines and robots?

- Is it ethical to outsource all of our autonomy to machines and robots that are able to make good decisions?

- What are the existential and ethical risks of developing superintelligent machines/robots?

6. Conclusions

Conflicts of Interest

References

- Sabanovic, S. Robots in society, society in robots. Int. J. Soc. Robots 2010, 24, 439–450. [Google Scholar] [CrossRef]

- Veruggio, G. The birth of roboethics. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA 2005): Workshop on Robot Ethics, Barcelona, Spain, 18 April 2005; pp. 1–4. [Google Scholar]

- Lin, P.; Abney, K.; Bekey, G.A. Robot Ethics: The Ethical and Social Implications of Robotics; MIT Press: Cambridge, MA, USA, 2011. [Google Scholar]

- Capurro, R.; Nagenborg, M. Ethics and Robotics; IOS Press: Amsterdam, The Netherlands, 2009. [Google Scholar]

- Tzafestas, S.G. Roboethics: A Navigating Overview; Springer: Berlin, Germany; Dordrecht, The Netherlands, 2015. [Google Scholar]

- Dekoulis, G. Robotics: Legal, Ethical, and Socioeconomic Impacts; InTech: Rijeka, Croatia, 2017. [Google Scholar]

- Jha, U.C. Killer Robots: Lethal Autonomous Weapon Systems Legal, Ethical, and Moral Challenges; Vij Books India Pvt: New Delhi, India, 2016. [Google Scholar]

- Gunkel, D.J.K. The Machine Question: Critical Perspectives on AI, Robots, and Ethics; MIT Press: Cambridge, MA, USA, 2012. [Google Scholar]

- Dekker, M.; Guttman, M. Robo-and-Information Ethics: Some Fundamentals; LIT Verlag: Muenster, Germany, 2012. [Google Scholar]

- Anderson, M.; Anderson, S.L. Machine Ethics; Cambridge University Press: Cambridge, UK, 2011. [Google Scholar]

- Veruggio, G.; Solis, J.; Van der Loos, M. Roboethics: Ethics Applied to Robotics. IEEE Robot. Autom. Mag. 2001, 18, 21–22. [Google Scholar] [CrossRef]

- Capurro, R. Ethics in Robotics. Available online: http://www.i-r-i-e.net/inhalt/006/006_full.pdf (accessed on 10 June 2018).

- Lin, P.; Abney, K.; Jenkins, R. Robot Ethics 2.0: From Autonomous Cars to Artificial Intelligence; Oxford University Press: Oxford, UK, 2018. [Google Scholar]

- Veruggio, G. Roboethics Roadmap. In Proceedings of the EURON Roboethics Atelier, Genoa, Italy, 27 Feberuary–3 March 2006. [Google Scholar]

- Arkin, R. Governing Lethal Behavior of Autonomous Robots; Chapman and Hall: New York, NY, USA, 2009. [Google Scholar]

- Moore, R.K. AI Ethics: Artificial Intelligence, Robots, and Society; CPSR: Seattle, WA, USA, 2015; Available online: www.cs.bath.ac.uk/~jjb/web/ai.html (accessed on 10 June 2018).

- Asaro, P.M. What should we want from a robot ethics? IRIE Int. Rev. Inf. Ethics 2006, 6, 9–16. [Google Scholar]

- Tzafestas, S.G. Systems, Cybernetics, Control, and Automation: Ontological, Epistemological, Societal, and Ethical Issues; River Publishers: Gistrup, Denmark, 2017. [Google Scholar]

- Verrugio, P.M.; Operto, F. Roboethics: A bottom -up interdisciplinary discourse in the field of applied ethics in robotics. IRIE Int. Rev. Inf. Ethics 2006, 6, 2–8. [Google Scholar]

- Wallach, W.; Allen, C. Moral Machines: Teaching Robots Right from Wrong; Oxford University Press: Oxford, UK, 2009. [Google Scholar]

- Wallach, W.; Allen, C.; Smit, I. Machine morality: Bottom-up and top-down approaches for modeling moral faculties. J. AI Soc. 2008, 22, 565–582. [Google Scholar] [CrossRef]

- Asimov, I. Runaround: Astounding Science Fiction (March 1942); Republished in Robot Visions: New York, NY, USA, 1991. [Google Scholar]

- Gert, B. Morality; Oxford University Press: Oxford, UK, 1988. [Google Scholar]

- Gips, J. Toward the ethical robot. In Android Epistemology; Ford, K., Glymour, C., Mayer, P., Eds.; MIT Press: Cambridge, MA, USA, 1992. [Google Scholar]

- Bringsjord, S. Ethical robots: The future can heed us. AI Soc. 2008, 22, 539–550. [Google Scholar] [CrossRef]

- Dekker, M. Can humans be replaced by autonomous robots? Ethical reflections in the framework of an interdisciplinary technology assessment. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA’07), Rome, Italy, 10–14 April 2007. [Google Scholar]

- Pence, G.E. Classic Cases in Medical Ethics; McGraw-Hill: New York, NY, USA, 2000. [Google Scholar]

- Mappes, G.E.; DeGrazia, T.M.D. Biomedical Ethics; McGraw-Hill: New York, NY, USA, 2006. [Google Scholar]

- North, M. The Hippocratic Oath (translation). National Library of Medicine, Greek Medicine. Available online: www.nlm.nih.gov/hmd/greek/greek_oath.html (accessed on 10 June 2018).

- Paola, I.A.; Walker, R.; Nixon, L. Medical Ethics and Humanities; Jones & Bartlett Publisher: Sudbary, MA, USA, 2009. [Google Scholar]

- AMA. Medical Ethics. 1995. Available online: https://www.ama.assn.org and https://www.ama.assn.org/delivering-care/ama-code-medical-ethics (accessed on 10 June 2018).

- Beabout, G.R.; Wennemann, D.J. Applied Professional Ethics; University Press of America: Milburn, NJ, USA, 1993. [Google Scholar]

- Rowan, J.R.; Zinaich, S., Jr. Ethics for the Professions; Cengage Learning: Boston, MA, USA, 2002. [Google Scholar]

- Dickens, B.M.; Cook, R.J. Legal and ethical issues in telemedicine and robotics. Int. J. Gynecol. Obstet. 2006, 94, 73–78. [Google Scholar] [CrossRef] [PubMed]

- World Health Organization. International Classification of Functioning, Disability, and Health; World Health Organization: Geneva, Switzerland, 2001. [Google Scholar]

- Tanaka, H.; Yoshikawa, M.; Oyama, E.; Wakita, Y.; Matsumoto, Y. Development of assistive robots using international classification of functioning, disability, and health (ICF). J. Robot. 2013, 2013, 608191. [Google Scholar] [CrossRef]

- Tanaka, H.; Wakita, Y.; Matsumoto, Y. Needs analysis and benefit description of robotic arms for daily support. In Proceedings of the RO-MAN’15: 24th IEEE International Symposium on Robot and Human Interactive Communication, Kobe, Japan, 31 August–4 September 2015. [Google Scholar]

- RESNA Code of Ethics. Available online: http://resna.org/certification/RESNA_Code_of_Ethics.pdf (accessed on 10 June 2018).

- Ethics Resources. Available online: www.crccertification.com/pages/crc_ccrc_code_of_ethics/10.php (accessed on 10 June 2018).

- Tzafestas, S.G. Sociorobot World: A Guided Tour for All; Springer: Berlin, Germany, 2016. [Google Scholar]

- Fong, T.; Nourbakhsh, I.; Dautenhahn, K. A survey of socially interactive robots. Robot. Auton. Syst. 2003, 42, 143–166. [Google Scholar]

- Darling, K. Extending legal protections in social robots: The effect of anthropomorphism, empathy, and violent behavior towards robots. In Robot Law; Calo, M.R., Froomkin, M., Kerr, I., Eds.; Edward Elgar Publishing: Brookfield, VT, USA, 2016. [Google Scholar]

- Melson, G.F.; Kahn, P.H., Jr.; Beck, A.; Friedman, B. Robotic pets in human lives: Implications for the human-animal bond and for human relationships with personified technologies. J. Soc. Issues 2009, 65, 545–567. [Google Scholar] [CrossRef]

- Breazeal, C. Designing Sociable Robots; MIT Press: Cambridge, MA, USA, 2002. [Google Scholar]

- Sawada, T.; Takagi, T.; Fujita, M. Behavior selection and motion modulation in emotionally grounded architecture for QRIO SDR-4XIII. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS’2004), Sendai, Japan, 28 September–2 October 2004; pp. 2514–2519. [Google Scholar]

- Asaro, P. How Just Could a Robot War Be; IOS Press: Amsterdam, The Netherlands, 2008. [Google Scholar]

- Walzer, M. Just and Unjust Wars: A Moral Argument with Historical Illustrations; Basic Books: New York, NY, USA, 2000. [Google Scholar]

- Coates, A.J. The Ethics of War; Manchester University Press: Manchester, UK, 1997. [Google Scholar]

- Asaro, P.M. Robots and responsibility from a legal perspective. In Proceedings of the 2007 IEEE International Conference on Robotics and Automation: Workshop on Roboethics, Rome, Italy, 10–14 April 2007. [Google Scholar]

- Human Rights Watch. HRW-IHRC, Losing Humanity: The Case against Killer Robots; Human Rights Watch: New York, NY, USA, 2012; Available online: www.hrw.org (accessed on 10 June 2018).

- Marcus, G. Moral Machines. Available online: www.newyorker.com/news_desk/moral_machines (accessed on 24 November 2012).

- Self-Driving Cars. Absolutely Everything You Need to Know. Available online: http://recombu.com/cars/article/self-driving-cars-everything-you-need-to-know (accessed on 10 June 2018).

- Notes on Autonomous Cars: Lesswrong. 2013. Available online: http://lesswrong.com/lw/gfv/notes_on_autonomous_cars (accessed on 10 June 2018).

- Lynch, W. Implants: Reconstructing the Human Body; Van Nostrand Reinhold: New York, NY, USA, 1982. [Google Scholar]

- Clynes, M.; Kline, S. Cyborgs and Space. Astronautics 1995, 29–33. Available online: http://www.tantrik-astrologer.in/book/linked/2290.pdf (accessed on 10 June 2018).

- Warwick, K. A Study of Cyborgs. Royal Academy of Engineering. Available online: www.ingenia.org.uk/Ingenia/Articles/217 (accessed on 10 June 2018).

- Warwick, K. Homo Technologicus: Threat or Opportunity? Philosophies 2016, 1, 199. [Google Scholar] [CrossRef]

- Seven Real Life Human Cyborgs. Available online: www.mnn.com/leaderboard/stories/7-real-life-human-cyborgs (accessed on 10 June 2018).

- Warwick, K. Cyborg moral, cyborg values, cyborg ethics. Ethics Inf. Technol. 2003, 5, 131–137. [Google Scholar] [CrossRef]

- Palese, E. Robots and cyborgs: To be or to have a body? Poiesis Prax. 2012, 8, 191–196. [Google Scholar] [CrossRef] [PubMed]

- Moravec, H. Robot: Mere Machine to Transcendent Mind; Oxford University Press: Oxford, UK, 1998. [Google Scholar]

- Torresen, J. A review of future and ethical perspectives of robotics and AI. Front. Robot. AI 2018. [Google Scholar] [CrossRef]

- MacDorman, K.F. Androids as an experimental apparatus: Why is there an uncanny valley and can we exploit it? In Proceedings of the CogSci 2005 Workshop: Toward Social Mechanisms of Android Science, Stresa, Italy, 25–26 July 2005; pp. 106–118. [Google Scholar]

- IEEE Standards Association. IEEE Ethically Aligned Design; IEEE Standards Association: Piscataway, NJ, USA, 2016; Available online: http://standards.ieee.org/develop/indconn/ec/ead_v1.pdf (accessed on 10 June 2018).

- Coeckelbergh, M. Robot Rights? Towards a social-relational justification of moral consideration. Ethics Inf. Technol. 2010, 12, 209–221. [Google Scholar] [CrossRef]

- ORI: Open Roboethics Institute. Should Robots Make Life/Death Decisions? In Proceedings of the UN Discussion on Lethal Autonomous Weapons, UN Palais des Nations, Geneva, Switzerland, 13–17 April 2015. [Google Scholar]

- Sullins, J.P. Robots, love, and sex: The ethics of building a love machine. IEEE Trans. Affect. Comput. 2012, 3, 389–409. [Google Scholar] [CrossRef]

- Cheok, A.D.; Ricart, C.P.; Edirisinghe, C. Special Issue “Love and Sex with Robots”. Available online: https://www.mdpi.com/journal/mti/special_issues/robots (accessed on 10 June 2018).

- Levy, D. Love and Sex with Robots: The Evolution of Human-Robot Relationships; Harper Perennial: London, UK, 2008. [Google Scholar]

- Bostrom, N. Ethical issues in advanced artificial intelligence. In Cognitive, Emotive and Ethical Aspects of Decision Making in Humans and Artificial Intelligence; Lasker, G.E., Marreiros, G., Wallach, W., Smit, I., Eds.; International Institute for Advanced Studies in Systems Research and Cybernetics: Tecumseh, ON, Canada, 2003; Volume 2, pp. 12–17. [Google Scholar]

- Barfield, W.; Williams, A. Cyborgs and enhancement technology. Philosophies 2017, 2, 4. [Google Scholar] [CrossRef]

© 2018 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Tzafestas, S.G. Roboethics: Fundamental Concepts and Future Prospects. Information 2018, 9, 148. https://doi.org/10.3390/info9060148
