When Robots Get Bored and Invent Team Sports: A More Suitable Test than the Turing Test?

Abstract

1. Introduction

“… the real issue involved here is strong AI (artificial intelligence that exceeds human intelligence). The standard reason for emphasizing robotics in this formulation is that intelligence needs an embodiment, a physical presence to affect the world. I disagree with the emphasis on physical presence, however, for I believe that the central concern is intelligence. Intelligence will inherently find a way to influence the world, including creating its own means for embodiment and physical manipulation.”

2. Defining Human-Level Intelligence

- Morphological intelligence—“the physical behavior that emerges from the interaction of the body, its control systems and the environment”.

- Swarm intelligence—collective behavior is distributed and decentralized.

- Individual intelligence—“the ability to both respond (instinctively) to stimuli and, optionally, learn new—or adapt existing—behaviours through a process of trial and error”.

- Social intelligence—“the kind of intelligence that allows animals or robots to learn from each other”.

3. The Embodiment of Artificial Intelligence

- Situatedness—robots are located in the world.

- Embodiment—robots have bodies in which they directly experience the world.

- Intelligence—the source of intelligence derives largely from the physical coupling between the robot and the world.

- Emergence—robot intelligence emerges from interactions among its system components, and with the world.

4. The Transition from Team-Like Behavior to Bounded Competition and the Invention of Team Sport

5. The Origins of Team Sport

6. The Emergence of Human Collective Behavior

7. Necessary Conditions for the Emergence of Team Sport

- The intrinsic capacity of humanoid robots to compete and cooperate for resources.

- Sufficient periods of leisure time during which robots engage in simulated or artificial resource gathering activities that represent a form of proto-team sport, leading to an eventual transition to actual team sport.

- Heterogeneous robot energetic capacities.

7.1. The Capacity of Humanoid Robots to Compete and Cooperate for Resources

7.2. Leisure Time as a Necessary Condition for the Emergence of Robot Team Sports

7.3. The Heterogeneity Requirement and the Energetic Threshold for the Emergence of Team Sport

8. The Status of Robo-Soccer

9. Conclusions

Acknowledgments

Conflicts of Interest

References

- Kurzweil, R. The Singularity is Near: When Humans Transcend Biology; Penguin Group: New York, NY, USA, 2005. [Google Scholar]

- Turing, A.M. Computing machinery and intelligence. Mind 1950, 49, 433–460. [Google Scholar] [CrossRef]

- You, J. Beyond the Turing Test. Science 2015, 347, 116. [Google Scholar] [CrossRef] [PubMed]

- Grosz, B. What question would Turing pose today? AI Mag. 2012, 33, 73–81. [Google Scholar] [CrossRef]

- Ortiz, C. Why we need a physically embodied Turing test and what it might look like. AI Mag. 2016, 37, 55–62. [Google Scholar] [CrossRef]

- Moravec, M. Mind Children: The Future of Robot and Human Intelligence; Harvard University Press: Cambridge, MA, USA, 2005. [Google Scholar]

- Minsky, M. The Society of Mind; Simon & Schuster: New York, NY, USA, 1986. [Google Scholar]

- Pfeifer, R.; Bongard, J. How the Body Shapes the Way We Think—A New View of Intelligence. In A Bradford Book; The MIT Press: Cambridge, MA, USA; London, UK, 2007. [Google Scholar]

- Gershenson, C.; Trianni, V.; Werfel, J.; Sayama, H. Self-Organization and Artificial Life: A Review. In Proceedings of the 2018 Conference on Artificial Life, Tokyo, Japan, 23–27 July 2018. (submitted). [Google Scholar]

- Kitano, H.; Asada, M. The RoboCup humanoid challenge as the millennium challenge for advanced robotics. Adv. Robot. 1998, 13, 723–736. [Google Scholar] [CrossRef]

- Nolfi, S.; Floreano, D. Evolutionary Robotics: The Biology, Intelligence, and Technology of Self-Organizing Machines; MIT Press: Cambridge, MA, USA, 2000. [Google Scholar]

- Winfield, A. How intelligent is your intelligent robot? arXiv, 2017; arXiv:1712.08878. [Google Scholar]

- Do Animals Have a Sense of Competition? 2018. Available online: https://gizmodo.com/do-animals-have-a-sense-of-competition-1823122780 (accessed on 29 April 2018).

- Legg, S.; Hutter, M. A collection of definitions of intelligence. Front. Artif. Intell. Appl. 2007, 157, 17. [Google Scholar]

- Brooks, R.A.; Stein, L.A. Building brains for bodies. Auton. Robots 1994, 1, 7–25. [Google Scholar] [CrossRef]

- Clark, A. Supersizing the Mind: Embodiment, Action, and Cognitive Extension; Oxford University Press: Oxford, UK, 2008. [Google Scholar]

- Braga, A.; Logan, R.K. The Emperor of Strong AI Has No Clothes: Limits to Artificial Intelligence. Information 2017, 8, 156. [Google Scholar] [CrossRef]

- Cariani, P.A. On the Design of Devices with Emergent Semantic Functions. Ph.D. Thesis, State University of New York at Binghamton, New York, NY, USA, 1989. [Google Scholar]

- Hristovski, R. Constraints-induced emergence of functional novelty in complex neurobiological systems: A basis for creativity in sport. Nonlinear Dyn. Psychol. Life Sci. 2011, 15, 175–206. [Google Scholar]

- Brown, D.E. Human Universals; Temple University Press: Philadelphia, PA, USA, 1991. [Google Scholar]

- Glazier, P.S. Towards a grand unified theory of sports performance. Hum. Mov. Sci. 2017, 56, 184–189. [Google Scholar] [CrossRef] [PubMed]

- Araújo, D.; Davids, K. Team synergies in sport: Theory and measures. Front. Psychol. 2016, 7, 1449. [Google Scholar] [CrossRef] [PubMed]

- Balagué, N.; Torrents, C.; Hristovski, R.; Kelso, J.A. Sport science integration: An evolutionary synthesis. Eur. J. Sport Sci. 2017, 17, 51–62. [Google Scholar] [CrossRef] [PubMed]

- Scambler, G. Sport and Society: History, Power and Culture; Open University Press, McGraw-Hill Education: Berkshire, UK, 2005. [Google Scholar]

- Lombardo, M.P. On the evolution of sport. Evolut. Psychol. 2012, 10. [Google Scholar] [CrossRef]

- Sipes, R.G. War, sports and aggression: An empirical test of two rival theories. Am. Anthropol. 1973, 75, 64–86. [Google Scholar] [CrossRef]

- Trianni, V.; Dorigo, M. Self-organisation and communication in groups of simulated and physical robots. Biol. Cybern. 2006, 95, 213–231. [Google Scholar] [CrossRef] [PubMed]

- Gross, R.; Dorigo, M. Towards group transport by swarms of robots. Int. J. Bio-Inspired Comput. 2009, 1, 1–3. [Google Scholar] [CrossRef]

- Sperati, V.; Trianni, V.; Nolfi, S. Self-organised path formation in a swarm of robots. Swarm Intell. 2011, 5, 97–119. [Google Scholar] [CrossRef]

- Krieger, M.J.; Billeter, J.B.; Keller, L. Ant-like task allocation and recruitment in cooperative robots. Nature 2000, 406, 992. [Google Scholar] [CrossRef] [PubMed]

- Zedadra, O.; Seridi, H.; Jouandeau, N.; Fortino, G. Energy expenditure in multi-agent foraging: An empirical analysis. In Proceedings of the Federated Conference on Computer Science and Information Systems (FedCSIS) IEEE, Łódź, Poland, 13–16 September 2015; pp. 1773–1778. [Google Scholar]

- Liu, W.; Winfield, A.F. Modeling and optimization of adaptive foraging in swarm robotic systems. Int. J. Robot. Res. 2010, 29, 1743–1760. [Google Scholar] [CrossRef]

- Ducatelle, F.; Di Caro, G.A.; Pinciroli, C.; Mondada, F.; Gambardella, L. Communication assisted navigation in robotic swarms: Self-organization and cooperation. In Proceedings of the 2011 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), San Francisco, CA, USA, 25–30 September 2011; pp. 4981–4988. [Google Scholar]

- Nouyan, S.; Groß, R.; Bonani, M.; Mondada, F.; Dorigo, M. Teamwork in self-organized robot colonies. IEEE Trans. Evolut. Comput. 2009, 13, 695–711. [Google Scholar] [CrossRef]

- Ducatelle, F.; Di Caro, G.A.; Pinciroli, C.; Gambardella, L.M. Self-organized cooperation between robotic swarms. Swarm Intell. 2011, 5, 73. [Google Scholar] [CrossRef]

- Dorigo, M.; Floreano, D.; Gambardella, L.M.; Mondada, F.; Nolfi, S.; Baaboura, T.; Birattari, M.; Bonani, M.; Brambilla, M.; Brutschy, A.; et al. Swarmanoid: A novel concept for the study of heterogeneous robotic swarms. IEEE Robot. Autom. Mag. 2013, 20, 60–71. [Google Scholar] [CrossRef]

- Bayındır, L. A review of swarm robotics tasks. Neurocomputing 2016, 172, 292–321. [Google Scholar] [CrossRef]

- Mohan, Y.; Ponnambalam, S. An Extensive Review of Research in Swarm Robotics. In Proceedings of the World Congress on Nature & Biologically Inspired Computing, Coimbatore, India, 9–11 December 2009. [Google Scholar]

- Grosz, B.J. A multi-agent systems Turing challenge. In Proceedings of the 2013 International Conference on Autonomous Agents and Multi-Agent Systems, St. Paul, MN, USA, 6–10 May 2013. [Google Scholar]

- Nowak, M.A. Five rules for the evolution of cooperation. Science 2006, 314, 1560–1563. [Google Scholar] [CrossRef] [PubMed]

- Downward, P.; Riordan, J. Social interactions and the demand for sport: An economic analysis. Contemp. Econ. Policy 2007, 25, 518–537. [Google Scholar] [CrossRef]

- Ruseski, J.E.; Humphreys, B.R.; Hallmann, K.; Breuer, C. Family structure, time constraints, and sport participation. Eur. Rev. Aging Phys. Act. 2011, 8, 57–66. [Google Scholar] [CrossRef]

- Eberth, B.; Smith, M.D. Modelling the participation decision and duration of sporting activity in Scotland. Econ. Model. 2010, 27, 822–834. [Google Scholar] [CrossRef] [PubMed]

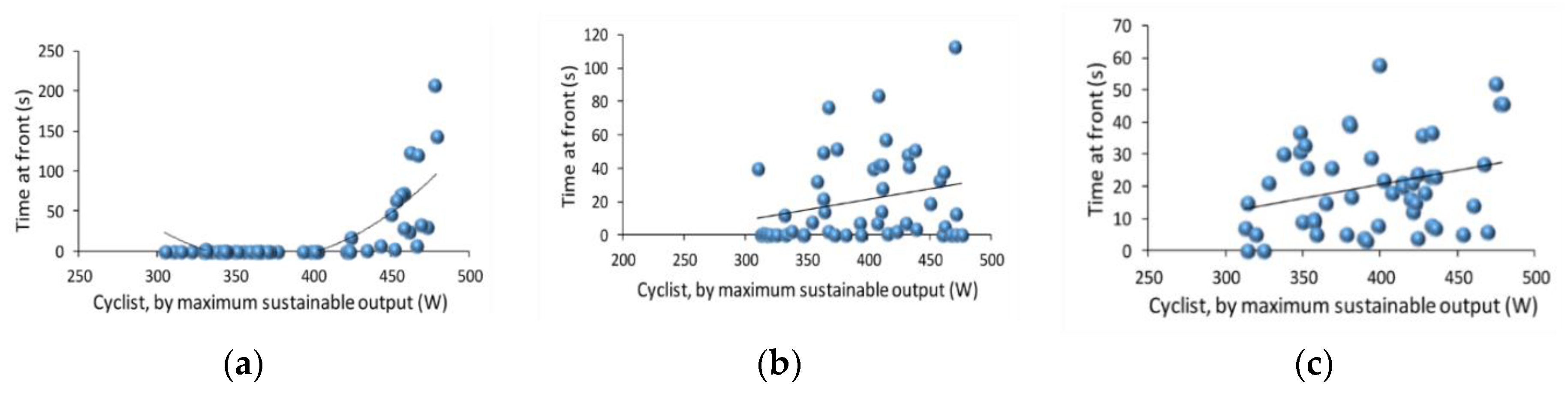

- Trenchard, H. The peloton superorganism and protocooperative behavior. Appl. Math. Comput. 2015, 270, 179–192. [Google Scholar] [CrossRef][Green Version]

- Trenchard, H.; Ratamero, E.; Richardson, A.; Perc, M. A deceleration model for bicycle peloton dynamics and group sorting. Appl. Math. Comput. 2015, 251, 24–34. [Google Scholar] [CrossRef]

- Szwaykowska, K.; Romero, L.M.; Schwartz, I.B. Collective motions of heterogeneous swarms. IEEE Trans. Autom. Sci. Eng. 2015, 12, 810–818. [Google Scholar] [CrossRef]

- Gomes, J.; Mariano, P.; Christensen, A.L. Challenges in cooperative coevolution of physically heterogeneous robot teams. Nat. Comput. 2016, 1–18. [Google Scholar] [CrossRef]

- Yang, J.; Liu, Y.; Wu, Z.; Yao, M. The evolution of cooperative behaviours in physically heterogeneous multi-robot systems. Int. J. Adv. Robot. Syst. 2012, 9, 253. [Google Scholar] [CrossRef]

- Ranjbar-Sahraeia, B.; Alersa, S.; Stankováa, K.; Tuylsab, K.; Weissa, G. Toward Soft Heterogeneity in Robotic Swarms. In Proceedings of the 25th Benelux Conference on Artificial Intelligence (BNAIC), Delft, The Netherlands, 7–8 November 2013; pp. 384–385. [Google Scholar]

- Orejan, J. Football/Soccer: History and Tactics; McFarland: Glasgow, UK, 2011. [Google Scholar]

- Osawa, E.; Kitano, H.; Asada, M.; Kuniyoshi, Y.; Noda, I. RoboCup: The robot world cup initiative. In Proceedings of the Second International Conference on Multi-Agent Systems (ICMAS-1996), Kyoto, Japan, 9–13 December 1996; AAAI Press: Menlo Park, CA, USA, 1996. [Google Scholar]

- Sahota, M.K.; Mackworth, A.K. Can situated robots play soccer? In Proceedings of the 10th Biennial Conference of the Canadian Society for Computational Studies of Intelligence, Banff, AB, Canada, 16–20 May 1994; pp. 249–254. [Google Scholar]

- Gerndt, R.; Seifert, D.; Baltes, J.H.; Sadeghnejad, S.; Behnke, S. Humanoid robots in soccer: Robots versus humans in RoboCup 2050. IEEE Robot. Autom. Mag. 2015, 22, 147–154. [Google Scholar] [CrossRef]

- World Championship 2017 SPL Finals B-Human vs. Nao-Team HTWK. 2017. Available online: https://www.youtube.com/watch?v=4uYN_3gL4_Y Robo Soccer (accessed on 5 April 2018).

- RoboCup 2017. Available online: https://www.youtube.com/watch?time_continue=24395&v=BUxqFlrvkQk (accessed on 5 April 2018).

- Snášel, V.; Svatoň, V.; Martinovič, J.; Abraham, A. Optimization of Rules Selection for Robot Soccer Strategies. Int. J. Adv. Robot. Syst. 2014, 11, 13. [Google Scholar] [CrossRef]

- Kurzweil, R. The Age of Spiritual Machines; Viking: New York, NY, USA, 1999. [Google Scholar]

© 2018 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Trenchard, H. When Robots Get Bored and Invent Team Sports: A More Suitable Test than the Turing Test? Information 2018, 9, 118. https://doi.org/10.3390/info9050118
