Leveraging Multimodal Information for Web Front-End Development Instruction: Analyzing Effects on Cognitive Behavior, Interaction, and Persistent Learning

Abstract

1. Introduction

2. Related Research

2.1. Theoretical Foundations of Multimodal Learning and Behavior

2.1.1. Embodied Cognition: How Sensory–Motor Integration Shapes Cognitive Behavior

2.1.2. Cognitive Load Theory: Optimizing Information Processing for Sustained Attention

2.1.3. Self-Determination Theory: Motivational Drivers of Persistent and Social Behavior

2.2. Multimodal Learning in Technical Education: Behavioral Gaps

2.2.1. Progress in STEM and General Programming Education

2.2.2. Underexplored Frontiers in Web Front-End Education

2.3. Conceptual Framework: How Multimodal Input Shapes Learning Behaviors

3. Research Design and Methods

3.1. Study Design

3.2. Participants

3.2.1. Inclusion and Exclusion Criteria

- (1) Inclusion: No prior formal training in web front-end development (verified via a baseline technical proficiency test), access to a computer with internet, and ability to commit to the 16-week study period.

- (2) Exclusion: Diagnosed sensory or cognitive disorders (e.g., visual agnosia, dyslexia) that could affect engagement with multimodal stimuli; concurrent enrollment in other web front-end-related courses, to avoid confounding learning effects.

3.2.2. Sample Characteristics

3.3. Intervention Procedures

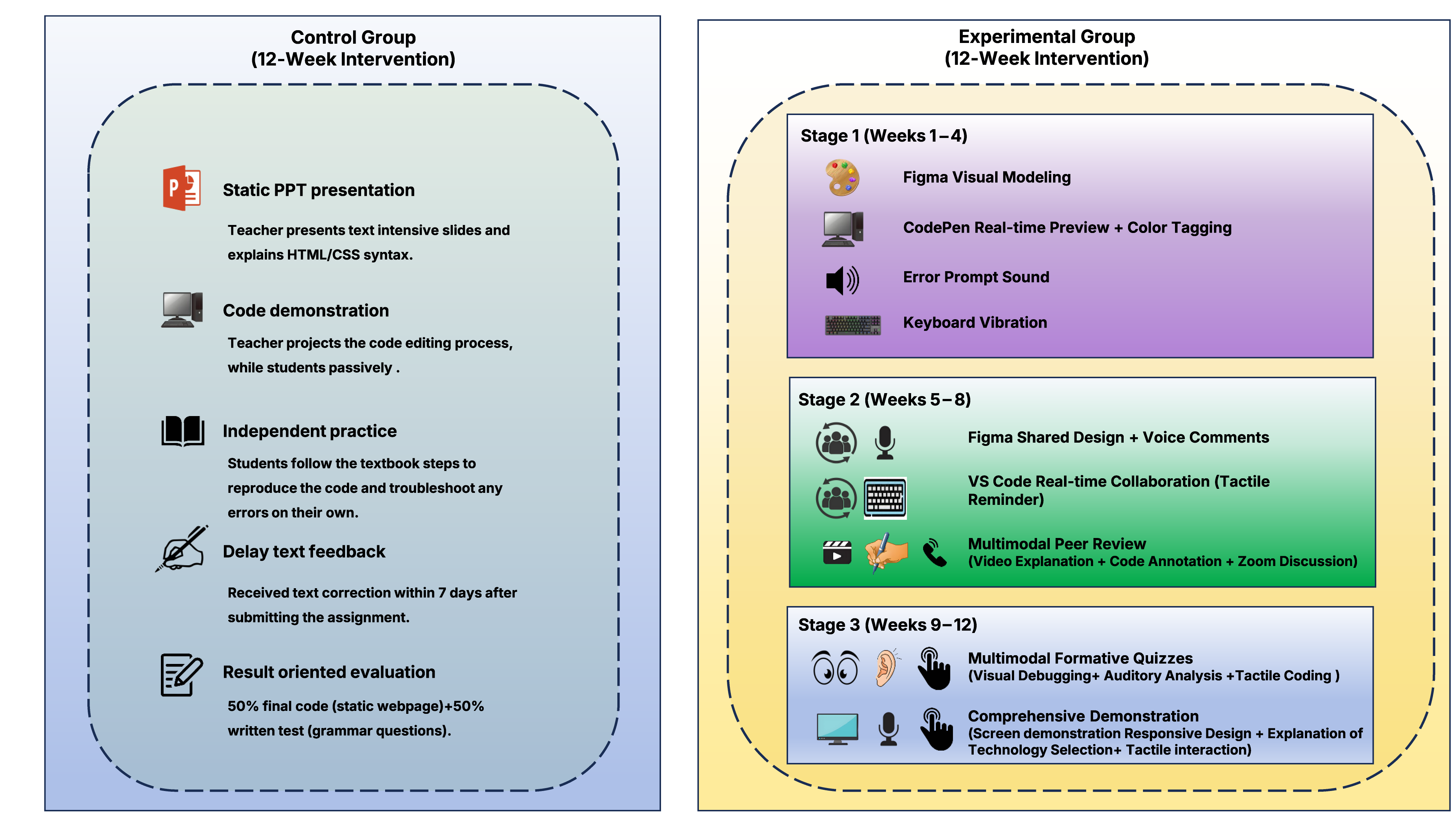

3.3.1. Control Group: Traditional Web Front-End Teaching

3.3.2. Experimental Group: Multimodal Web Front-End Teaching

- Phase 1: Multimodal Input (Weeks 1–4)

- Phase 2: Multimodal Collaboration (Weeks 5–8)

- Phase 3: Multimodal Assessment (Weeks 9–12)

- (1) Formative quizzes

  - Visual debugging: Identifying layout flaws in screenshots (e.g., “Why is the text overlapping on mobile?”) and dragging and dropping CSS fixes (e.g., overflow: hidden).

  - Auditory analysis: Listening to a code narration (“I wrote flex-direction: column-reverse. What will the layout look like?”) and selecting the correct visual outcome from options.

  - Haptic coding: Writing JavaScript functions with real-time feedback: vibrations for syntax errors and “pings” for valid logic, with a progress bar filling up as the code neared completion.

- (2) Final project presentation

  - Visual demos: Screen-sharing to highlight responsive design (e.g., “Watch how the grid reflows from 3 columns on desktop to 1 on mobile”).

  - Auditory explanations: Narrating technical choices (e.g., “We used position: sticky for the header so it stays visible when scrolling; here is why that improves the user experience”).

  - Haptic interaction: Demonstrating functionality (e.g., clicking a “filter” button to sort portfolio items) using the haptic mouse, with vibrations confirming successful interactions.
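The haptic coding quiz above maps each code-check outcome to a sensory cue. A minimal JavaScript sketch of such a mapping follows. The function names (`cueFor`, `progressPercent`, `playCue`), the result shape, and the vibration pattern are assumptions made for illustration and are not the study's actual tooling. `navigator.vibrate` is the standard browser Vibration API, and the sketch calls it only where it is available.

```javascript
// Hypothetical mapping from a code-check result to a feedback cue.
// The result shape { syntaxOk, logicOk } is an assumption for this sketch.
function cueFor(result) {
  if (!result.syntaxOk) {
    // Syntax error: haptic cue (milliseconds: vibrate, pause, vibrate).
    return { type: "vibrate", pattern: [200, 100, 200] };
  }
  if (result.logicOk) {
    // Valid logic: short auditory "ping" (playback is left to the host page).
    return { type: "ping" };
  }
  // Syntax is fine but the logic is not yet valid: no cue.
  return { type: "none" };
}

// Progress bar value: share of passed checks as a whole percentage.
function progressPercent(passedChecks, totalChecks) {
  if (totalChecks <= 0) return 0;
  return Math.round((passedChecks / totalChecks) * 100);
}

// Trigger a vibration cue if the environment supports the Vibration API.
// Returns true only when a vibration was actually requested.
function playCue(cue) {
  const canVibrate =
    typeof navigator !== "undefined" && typeof navigator.vibrate === "function";
  if (cue.type === "vibrate" && canVibrate) {
    navigator.vibrate(cue.pattern);
    return true;
  }
  return false;
}
```

Keeping `cueFor` free of browser calls means the mapping can be checked without a device, while `playCue` degrades to a no-op on hardware without vibration support.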

3.4. Measures

3.4.1. Cognitive Behavior Measures

- (1) Cognitive Load

- (2) Attention Duration

  - On-task: Engaged in coding, following instructor demonstrations, taking notes, asking task-related questions, or collaborating with peers on technical problems.

  - Distracted: Looking at non-course materials (e.g., social media), talking about non-technical topics, or passively staring at the screen without interaction.

- (3) Problem-Solving Accuracy

  - HTML: Missing closing tags (e.g., a <div> without its corresponding </div>) and improper use of semantic elements.

  - CSS: Invalid property values (e.g., flex-direction: horizontal) and incorrect selectors (e.g., targeting a class with ‘#’ instead of ‘.’).

  - JavaScript: Undefined variables and logic errors in event handlers (e.g., a button click failing to trigger a function).
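The HTML and CSS error categories listed above can be illustrated with a few small checks. This is a sketch under stated assumptions, not the scoring procedure used in the study: the helper names (`isValidFlexDirection`, `unclosedDivCount`, `usesIdForClass`) are hypothetical, and the tag count is a deliberately naive regular-expression tally rather than a real HTML parser.

```javascript
// Keyword values of the CSS flex-direction property.
// CSS-wide keywords such as "inherit" are not handled in this sketch.
const FLEX_DIRECTIONS = new Set(["row", "row-reverse", "column", "column-reverse"]);

function isValidFlexDirection(value) {
  return FLEX_DIRECTIONS.has(value.trim().toLowerCase());
}

// Count <div> opening tags that have no matching </div>.
// Naive by design: comments, scripts, and tags inside attribute strings are not excluded.
function unclosedDivCount(html) {
  const opens = (html.match(/<div\b[^>]*>/gi) || []).length;
  const closes = (html.match(/<\/div\s*>/gi) || []).length;
  return Math.max(0, opens - closes);
}

// Flag an ID selector ("#name") used where the markup only defines a class of that name.
function usesIdForClass(selector, classNames) {
  return selector.startsWith("#") && classNames.includes(selector.slice(1));
}
```

For example, `isValidFlexDirection("horizontal")` is false because `horizontal` is not a `flex-direction` keyword, and `usesIdForClass("#card", ["card"])` flags the selector that should have been `.card`.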

3.4.2. Interactive Behavior Measures

- (1) Peer Collaboration Frequency

  - Technical discussions: Verbal or chat-based exchanges about code logic (e.g., “How do we make this div responsive on mobile?”).

  - Idea contributions: Proposing solutions or design choices (e.g., “Let us use grid-template-areas instead of flexbox for this layout”).

  - Code reviews: Providing feedback on peers’ work (e.g., “Your JavaScript function is missing a return statement” or “This CSS selector could be more specific”).

- (2) Teacher–Student Interaction

  - The type of interaction (question vs. feedback request).

  - The complexity of the question (basic: e.g., “What is the syntax for a media query?”; advanced: e.g., “Why does position: fixed behave differently in Safari?”).

  - The instructor’s response (e.g., verbal explanation, code demonstration).

- (3) Feedback Utilization

3.4.3. Persistent Behavior Measures

- (1) Post-Class Practice Time

- (2) Skill Extension

- (3) Intrinsic Motivation

3.4.4. Qualitative Measures

- (1) Semi-Structured Interviews

- (2) Learning Logs

4. Results

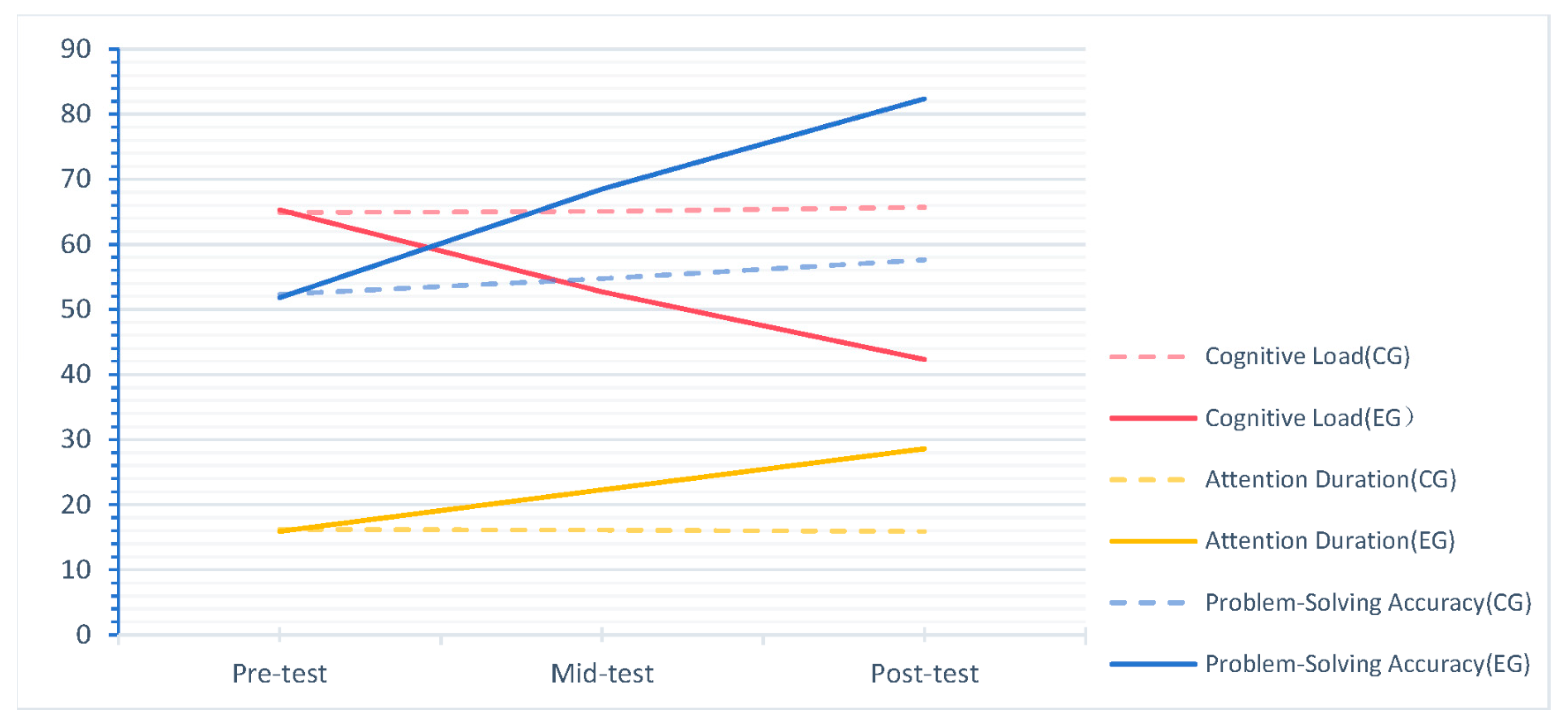

4.1. Cognitive Behavior Outcomes

4.1.1. Cognitive Load

4.1.2. Attention Duration

4.1.3. Problem-Solving Accuracy

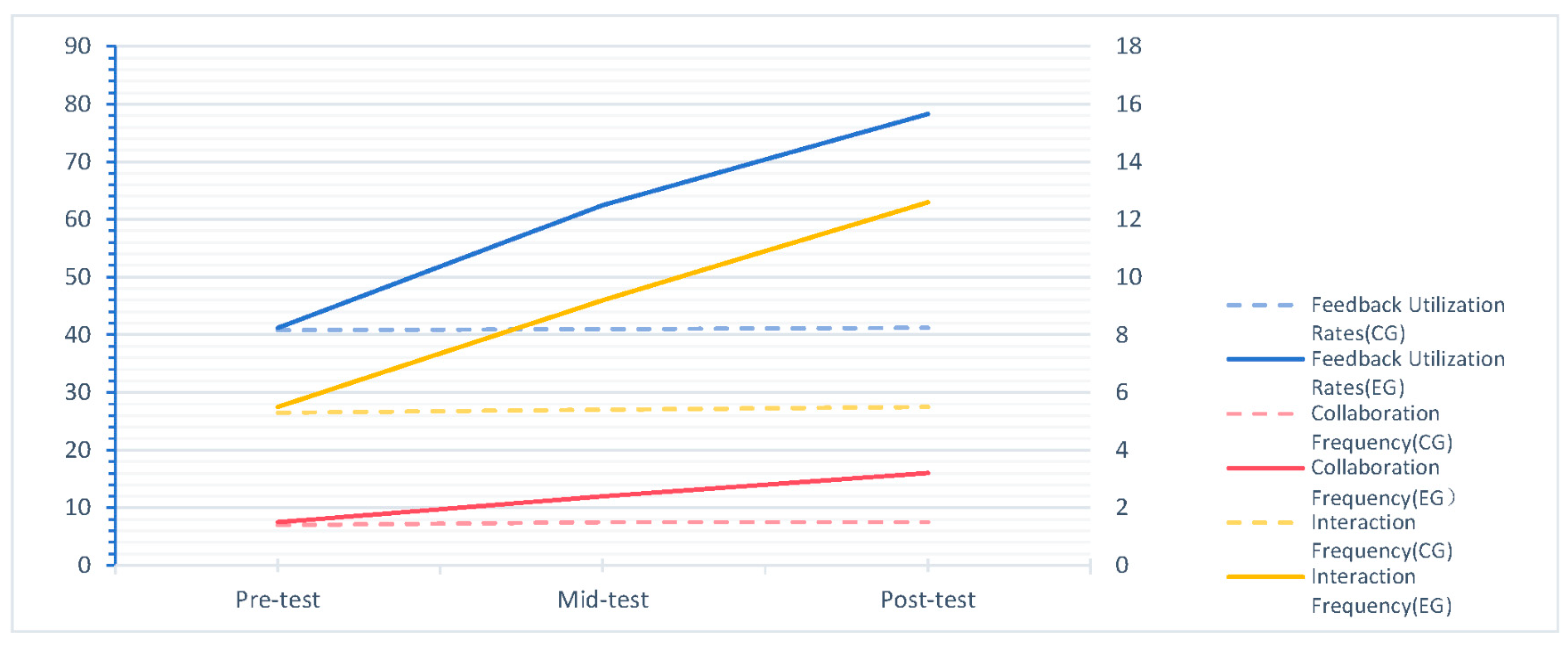

4.2. Interactive Behavior Outcomes

4.2.1. Peer Collaboration Frequency

4.2.2. Teacher–Student Interaction

4.2.3. Feedback Utilization

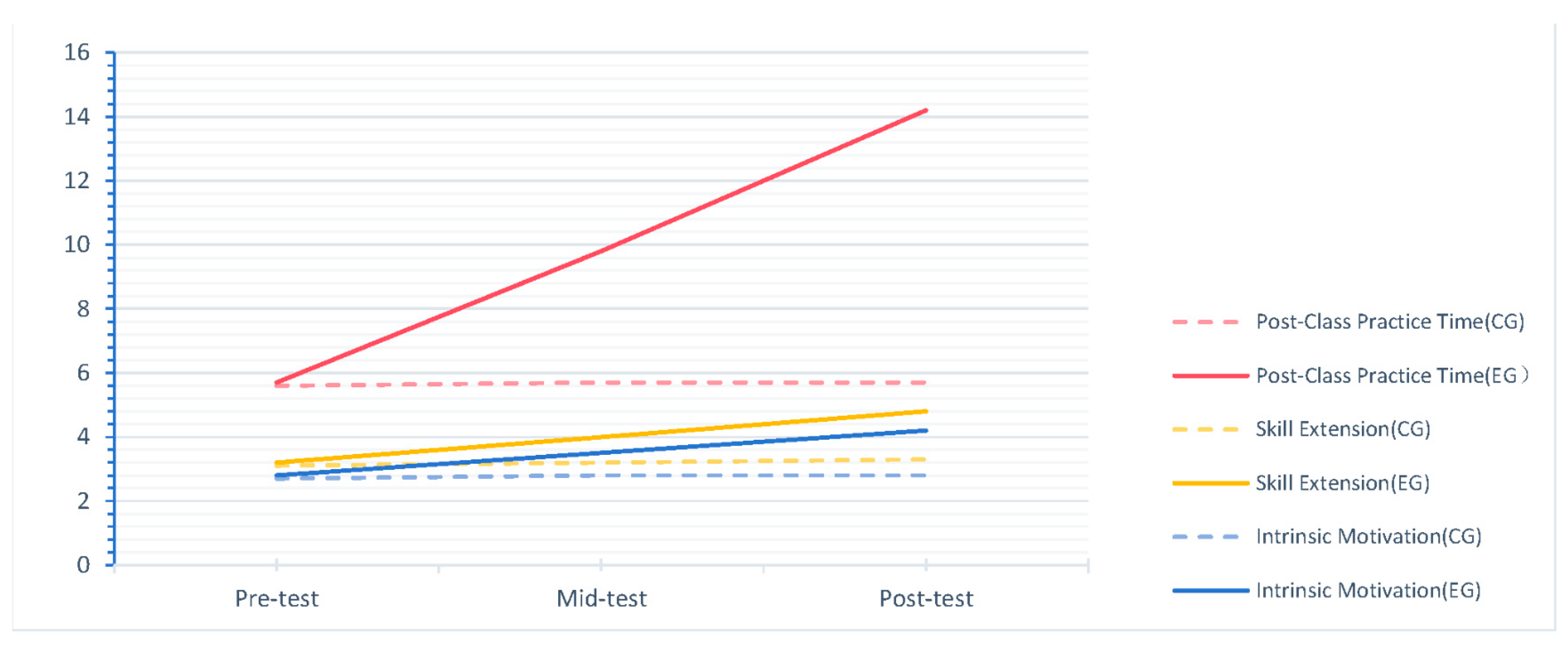

4.3. Persistent Behavior Outcomes

4.3.1. Post-Class Practice Time

4.3.2. Skill Extension

4.3.3. Intrinsic Motivation

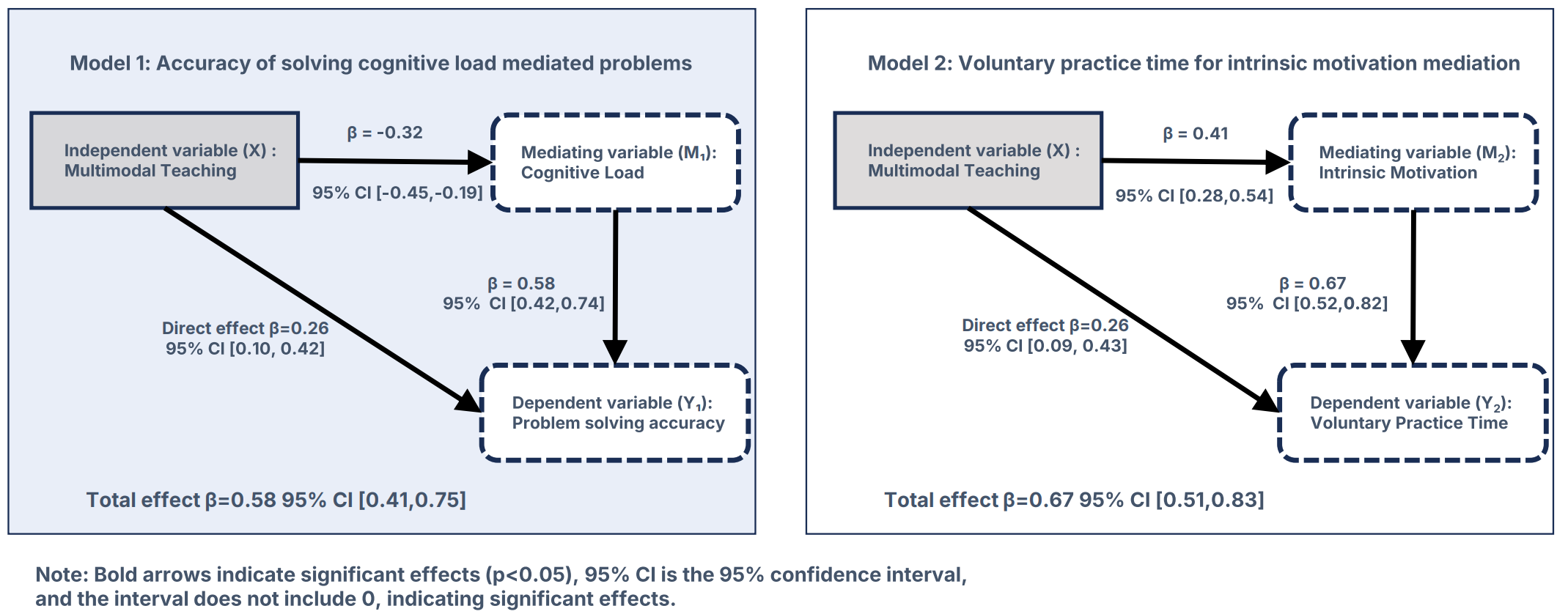

4.4. Mediation Analyses

4.4.1. Cognitive Load as a Mediator of Problem-Solving Accuracy

4.4.2. Intrinsic Motivation as a Mediator of Post-Class Practice Time

4.5. Qualitative Findings

4.5.1. Embodied Memory Enhances Problem-Solving

4.5.2. Multimodal Feedback Reduces Frustration

4.5.3. Collaboration Feels “More Natural” with Mixed Modalities

4.5.4. Autonomy and Competence Drive Persistence

4.5.5. Single Sensory Effect Analysis

5. Discussion

5.1. Multimodal Learning Shapes Cognitive Behaviors Through Embodied Cognition and Reduced Load

5.2. Multimodal Collaboration Fulfills Relatedness Needs, Enhancing Interactive Behaviors

5.3. Persistent Behaviors Are Driven by Autonomy and Competence Needs

5.4. Practical Implications

- (1) For educators: Design multimodal sequences that pair abstract concepts with sensory inputs (e.g., JavaScript promises with timeline visuals and countdown sounds). Prioritize real-time, multi-sensory feedback to reduce frustration and cognitive load. Structure collaborative tasks to leverage complementary modalities (e.g., shared whiteboards and voice chat).

- (2) For curriculum designers: Replace static materials (e.g., textbooks) with interactive, multimodal resources (e.g., video tutorials with embedded code editors). Align assessments with behavioral processes (e.g., evaluating how students strategically use modalities) rather than focusing only on outcomes.

- (3) For tool developers: Integrate low-cost, multimodal features into coding platforms (e.g., browser-based vibration APIs for error detection, customizable sound cues). Support modality customization to accommodate diverse needs (e.g., visual alternatives for deaf learners).

5.5. Limitations and Future Directions

- (1) Modality specificity: We did not isolate effects of individual modality combinations (e.g., visual and haptic vs. auditory and haptic). Factorial designs could identify optimal pairings for specific tasks (e.g., CSS layout vs. JavaScript logic).

- (2) Long-term retention: Follow-up was limited to 2 weeks. Longer tracking (e.g., 6 months) is needed to assess whether multimodal-induced behaviors persist in professional settings.

- (3) Technology access: Multimodal tools require devices with sensory capabilities, which may be inaccessible in resource-limited contexts. Future work should develop low-cost alternatives (e.g., text-to-speech for auditory feedback).
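To make the factorial design suggested in the first limitation concrete, the sketch below enumerates every on/off combination of three modalities. It illustrates the general idea of a full 2^k factorial layout only. The function name `factorialConditions` and the modality labels are assumptions, and nothing here describes a design the authors ran.

```javascript
// Enumerate every on/off combination of the given factors (a full 2^k factorial design).
function factorialConditions(factors) {
  return factors.reduce(
    (conditions, factor) =>
      conditions.flatMap((c) => [
        { ...c, [factor]: false },
        { ...c, [factor]: true },
      ]),
    [{}]
  );
}

// Three modalities give 2^3 = 8 conditions, from all off to all on.
const conditions = factorialConditions(["visual", "auditory", "haptic"]);

// Conditions with exactly two active modalities,
// e.g. visual + haptic versus auditory + haptic.
const pairings = conditions.filter(
  (c) => Object.values(c).filter(Boolean).length === 2
);
```

Crossing each condition with task type (e.g., CSS layout vs. JavaScript logic) would then give the cells needed to compare pairings per task.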

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Characteristic | Control Group | Experimental Group |
|---|---|---|
| Gender | Male: 32; Female: 28 | Male: 32; Female: 28 |
| Mean age | 19.2 ± 0.8 years | 19.1 ± 0.7 years |
| Major | Computer Science: 42; Information Technology: 18 | Computer Science: 42; Information Technology: 18 |
| Prior programming experience | 12.3 ± 4.1 h/week (self-reported, including basic Python or C#) | 11.9 ± 3.8 h/week (self-reported, including basic Python or C#) |
| Learning styles (VARK questionnaire) | Visual: 42%; Auditory: 28%; Kinesthetic: 30% | Visual: 40%; Auditory: 30%; Kinesthetic: 30% |
| Baseline technical proficiency (pre-test score, 0–100) | 56.2 ± 8.7 | 55.8 ± 9.1 |

| Time Point | Control Group (M ± SD) | Experimental Group (M ± SD) | Group Comparison Statistics |
|---|---|---|---|
| Pre-test | 64.9 ± 9.2 | 65.3 ± 8.9 | t(120) = 0.25, p = 0.80 |
| Mid-test (Week 6) | 65.1 ± 9.5 | 52.7 ± 8.1 | t(120) = 7.21, p < 0.001 |
| Post-test (Week 12) | 65.7 ± 10.2 | 42.3 ± 8.7 | t(120) = 12.83, p < 0.001 |

| Time Point | Control Group (M ± SD, min) | Experimental Group (M ± SD, min) | Group Comparison Statistics |
|---|---|---|---|
| Pre-test | 16.2 ± 4.3 | 15.9 ± 4.1 | t(120) = 0.41, p = 0.68 |
| Mid-test | 16.1 ± 4.2 | 22.3 ± 4.8 | t(120) = 7.59, p < 0.001 |
| Post-test | 15.9 ± 4.0 | 28.6 ± 5.3 | t(120) = 14.21, p < 0.001 |

| Time Point | Control Group (M ± SD) | Experimental Group (M ± SD) | Group Comparison Statistics |
|---|---|---|---|
| Pre-test | 52.3 ± 9.7 | 51.8 ± 10.2 | t(120) = 0.26, p = 0.79 |
| Mid-test | 54.7 ± 10.1 | 68.5 ± 8.9 | t(120) = 7.83, p < 0.001 |
| Post-test | 57.6 ± 11.3 | 82.4 ± 9.1 | t(120) = 12.05, p < 0.001 |

| Time Point | Control Group (M ± SD) | Experimental Group (M ± SD) | Group Comparison Statistics |
|---|---|---|---|
| Pre-test | 1.4 ± 0.7 | 1.5 ± 0.6 | t(120) = 0.87, p = 0.38 |
| Mid-test | 1.5 ± 0.6 | 2.4 ± 0.7 | t(120) = 8.02, p < 0.001 |
| Post-test | 1.5 ± 0.6 | 3.2 ± 0.8 | t(120) = 13.17, p < 0.001 |

| Time Point | Control Group (M ± SD) | Experimental Group (M ± SD) | Group Comparison Statistics |
|---|---|---|---|
| Pre-test | 5.3 ± 2.4 | 5.5 ± 2.2 | t(120) = 0.51, p = 0.61 |
| Mid-test | 5.4 ± 2.3 | 9.2 ± 2.8 | t(120) = 8.76, p < 0.001 |
| Post-test | 5.5 ± 2.2 | 12.6 ± 3.1 | t(120) = 14.03, p < 0.001 |

| Time Point | Control Group (M ± SD) | Experimental Group (M ± SD) | Group Comparison Statistics |
|---|---|---|---|
| Pre-test | 40.8 ± 12.3 | 41.2 ± 11.9 | t(120) = 0.18, p = 0.86 |
| Mid-test | 41.0 ± 12.1 | 62.5 ± 10.7 | t(120) = 10.15, p < 0.001 |
| Post-test | 41.2 ± 11.8 | 78.3 ± 10.4 | t(120) = 16.39, p < 0.001 |

| Time Point | Control Group (M ± SD) | Experimental Group (M ± SD) | Group Comparison Statistics |
|---|---|---|---|
| Pre-test | 5.6 ± 2.2 | 5.7 ± 2.1 | t(120) = 0.26, p = 0.79 |
| Mid-test | 5.7 ± 2.3 | 9.8 ± 2.7 | t(120) = 9.24, p < 0.001 |
| Post-test | 5.7 ± 2.2 | 14.2 ± 3.2 | t(120) = 16.72, p < 0.001 |

| Time Point | Control Group (M ± SD) | Experimental Group (M ± SD) | Group Comparison Statistics |
|---|---|---|---|
| Pre-test | 3.1 ± 0.9 | 3.2 ± 0.8 | t(118) = 0.52, p = 0.60 |
| Mid-test | 3.2 ± 0.8 | 4.0 ± 0.7 | t(118) = 6.83, p < 0.001 |
| Post-test | 3.3 ± 0.9 | 4.8 ± 0.6 | t(118) = 10.72, p < 0.001 |

| Time Point | Control Group (M ± SD) | Experimental Group (M ± SD) | Group Comparison Statistics |
|---|---|---|---|
| Pre-test | 2.7 ± 0.8 | 2.8 ± 0.7 | t(120) = 0.68, p = 0.50 |
| Mid-test | 2.8 ± 0.7 | 3.5 ± 0.6 | t(120) = 7.91, p < 0.001 |
| Post-test | 2.8 ± 0.8 | 4.2 ± 0.6 | t(120) = 12.56, p < 0.001 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Lu, M.; Hu, Z. Leveraging Multimodal Information for Web Front-End Development Instruction: Analyzing Effects on Cognitive Behavior, Interaction, and Persistent Learning. Information 2025, 16, 734. https://doi.org/10.3390/info16090734
